Abstract

In the dementia field, a number of applications are being developed aimed at boosting functional abilities. There is an interesting gap as to how utilising serious games can further the knowledge on the potential relationship between hearing and cognitive health in mid-life. The aim of this study was to evaluate the auditory-cognitive training application HELIX, against outcome measures for speech-in-noise, cognitive tasks, communication confidence, quality of life, and usability. A randomised-controlled trial was completed for 43 participants with subjective hearing loss and/or cognitive impairment, over a play period of 4 weeks and a follow-up period of another 4 weeks. Outcome measures included a new online implementation of the Digit-Triplet-Test, a battery of online cognitive tests, and quality of life questionnaires. Paired semi-structured interviews and usability measures were completed to assess HELIX’s impact on quality of life and usability. An improvement in the performance of the Digit-Triplet-Test, measured 4 and 8 weeks after the baseline, was found within the training group; however, this improvement was not significant between the training and control groups. No significant improvements were found in any other outcome measures. Thematic analysis suggested HELIX prompted the realisation of difficulties and actions required, improved listening, and positive behaviour change. Employing a participatory design approach has ensured HELIX is relevant and useful for participants who may be at risk of developing age-related hearing loss and cognitive decline. Although an improvement in the Digit-Triplet-Test was seen, it is not possible to conclude whether this was as a result of playing HELIX.

Introduction

The World Health Organization (WHO) has classified dementia as a public health priority, with statistics suggesting approximately 50 million people live with a diagnosed dementia. With no treatment or approach proven to cure or prevent dementia, the focus is instead on reducing risk, improving early diagnosis and intervention (WHO, 2019). Although throughout the ageing process developing dementia becomes a greater risk, it is not an inescapable diagnosis for all. A significant library of research has been published which has suggested a possible relationship between potentially modifiable risk factors and developing cognitive decline, which could impair functioning to an extent where a dementia diagnosis is reached. There is potential to harness these potentially modifiable risk factors to delay or slow cognitive decline through early intervention (Livingston et al., 2020; WHO, 2019).

Although these more recent findings concur with previous evidence from Lin et al. (2011), suggesting that hearing loss can increase the risk of developing dementia in later life by up to five times, the causality in this relationship is still unknown. Although this potential relationship between cognitive decline and hearing loss has attracted a high level of attention in recent years, these findings should be interpreted with caution. The management of hearing loss should not be leveraged as a preventive action for developing dementia and should be treated as its own long-term health condition, as highlighted recently by Dawes and Munro (2024). Here, the authors discuss the results of the Aging and Cognitive Health Evaluation in Elders (ACHIEVE) trial (Lin et al., 2023). This was a highly anticipated and high-quality randomised-controlled trial (RCT), that found a hearing intervention (hearing aids supplemented with counselling and assistive listening devices) had no impact on reducing cognitive impairment in the sample population compared with an active control in receipt of health education and chronic disease prevention. Furthermore, there is some debate in the field about the legitimacy of a secondary analysis of a subsample.

Moreover, a recent systematic review and meta-analysis investigating whether hearing aid use could reduce the likelihood of developing dementia was retracted due to an error in the data reporting (Yeo et al., 2023). Other studies have found that the use of hearing aids may be associated with fewer depressive symptoms and better health-related quality of life but has no impact on slowing the decline in cognitive function that occurs through ageing (Atef et al., 2023).

It is clear from the research and potential generalisability of findings that the focus cannot solely be placed on device-based interventions, given that hearing aid adoption in the United Kingdom is still at approximately half of the population that could benefit (Anovum, 2022).

As highlighted by Dawes and Munro (2024), “non-device based interventions” may have an effect on cognitive performance, but only if the assumption holds that the relationship between hearing loss and risk of cognitive decline operates via a psychosocial pathway. It would seem prudent to further investigate how maximising levels of social interaction may reduce the risk of social withdrawal, which could in turn reduce depression and social isolation, improve cortical stimulation, and ultimately reduce cognitive impairment (Dawes et al., 2015; Goodwin et al., 2024; Pichora-Fuller et al., 2015).

One potential route to do this is through a realistic, accessible, and low barrier to entry training intervention. Home-based auditory training has been shown to be time- and cost-effective (Van Wilderode et al., 2023). Examples range from speech to sound sources localisation training (Steadman et al., 2019). There is a low barrier to entry, as programmes can be accessed online or through downloading an application to a smart device. There is also high adoption of smartphones in age groups that could benefit from an application. In the United Kingdom in 2023, smartphone ownership was 86% for 55- to 64-year-olds and 80% in those 65 years plus (Statista, 2023). There is also an upward trend in using smartphones for activities other than the traditional means, such as phone calls and messaging. A recent survey by The Office of Communications (the UK’s government-approved communication regulator) found that 38% of adults play online games on mobile devices; this is a significant increase since 2009, where the figure was only 6% (Ofcom, 2022; Statista, 2023). These factors provide an ideal opportunity to offer auditory and cognitive training on smartphones through the lens of gaming, such as a serious game (Henshaw et al., 2015).

A serious game is developed for a purpose other than entertainment and can be used to train or educate the player. Multiple games have been produced to train diminishing cognition through healthy ageing. NeuroRacer (Anguera et al., 2013) was a 3D driving game played by older adults over a 4-week period, who demonstrated improvements in working memory and sustained attention. Interestingly, these two functions were not primarily targeted by this particular game and are therefore deemed off-task, as opposed to the on-task functions that the training targets directly. The authors suggested a transfer of benefit occurred outside of the on-task performance. This improvement was sustained over a subsequent 6-month follow-up due to, as the authors suggest, the custom nature of the game and the fact that it was played outside of a traditional laboratory environment. Building on this work, Talaei-Khoei and Daniel (2018) found that even the perception of a transfer of benefit is important in older adults. One key finding from their study was that if a cognitive training game was seen to be empowering rather than supportive, it could provide longer-term benefits. Participants felt that by playing a cognitive game using intellectual exercise, they could transfer this learning to sustain function through daily tasks in their real lives, such as reading, having conversations, and remembering to take medication.

Within the realms of auditory and cognitive training, there are a number of studies investigating the role that Speech-in-Noise (SiN) plays in cognition, albeit with mixed results. Research has focused on the interactions between SiN perception and cognitive performance that could signal how interventions can be designed to maximise the efficacy of future training programmes.

Dryden et al. (2017) conducted a systematic review assessing the association between SiN perception and cognitive ability for normal to moderate hearing-impaired subjects and whether this varied by type of outcome measure used. The review suggested cognitive performance and SiN perception shared an association, although this did depend on the type of cognition being assessed, auditory stimuli, and masker. Interestingly, the review focussed on “preclinical, unaided listeners,” as it was suggested that any device intervention could affect the interaction between the cognitive effort and how the degraded signals in the SiN tests were processed.

One type of cognition that has been assessed is working memory. There has been debate in the field regarding the role working memory might play in SiN perception, independent of the stimuli and masker types, but especially for tests that use sentences as targets and a complex masker (Akeroyd, 2008; Rönnberg et al., 2013). The Ease of Language Understanding Model (ELU) (Rönnberg et al., 2019) suggests that humans possess a stored “arsenal” of phonological representations, so when there is a mismatch between this arsenal and the auditory stimulation, reliance on working memory increases to correct the mismatch. This is especially relevant in SiN perception as the mismatch may be due to background noise. It follows that those who possess a better working memory can be more adept at ironing out the mismatch, granting improved SiN perception (Henshaw et al., 2022; Zekveld et al., 2012). Again, caution should be exercised regarding the impact of working memory for all listeners. Füllgrabe and Rosen (2016) found that in normal hearing listeners under the age of 40, there was no evidence to suggest that working memory was a strong predictor of SiN ability.

Ferguson et al. (2019) reported significant off-task training improvements in working memory, as well as divided attention and self-reported hearing. This was for a phoneme discrimination training task, which was sustained for a 4-week follow-up period and included reported on-task learning. Other studies have also demonstrated that untrained measures of cognition, speech perception, and self-reported hearing efficacy have been improved with a degree of auditory training (Anderson et al., 2013; Ferguson et al., 2014; Saunders et al., 2016).

In a recent blinded RCT by Henshaw et al. (2022), the Cogmed Working Memory Training programme was evaluated with adult hearing aid users aged 50–75. This focused on whether training working memory processes (on-task) could improve outcomes for adult hearing aid users in tasks with affinity for the training tasks and for abilities that were untrained (off-task). Although the experimental group demonstrated statistically significant improvements in the trained working memory task and self-reported hearing ability, there was no evidence to suggest that this on-task learning could be generalised to untrained measures for either cognitive or SiN ability. Henshaw et al. (2022) nevertheless suggested that targeting executive functions in a combined auditory-cognitive training approach could yield the best results. The authors emphasised that the training stimuli should hold relevance to real-life listening situations where users are challenged, to maintain motivation to continue, and that the nature of the training should be designed by its target user groups. This suggestion parallels the findings of Frost et al. (2020), whereby facilitators and barriers to an auditory-training programme were elicited from a participatory design process with relevant stakeholders, including service users, clinicians, and researchers. From an iterative design approach, six main themes were identified when designing an auditory-cognitive training application for users in mid-life with self-reported concerns about their hearing and/or their memory. These were (1) congruence with hobbies, (2) life gets in the way, (3) motivational challenge, (4) accessibility, (5) addictive competition, and (6) realism.

The themes demonstrated a mixture of facilitators and barriers. For instance, participants felt that the game needed to be an extension of an enjoyable hobby to garner and maintain interest. Stakeholders preferred a design aesthetic that mirrored real life, such as scenarios they would often frequent, as opposed to more gamified fantasy settings. Ensuring the game was accessible in terms of its appearance and challenging by using multiple scenarios were also facilitators. Sharing scores to add in a competitive challenge among peers and family was deemed important for some to help overcome a potential barrier of life’s other chores and responsibilities getting in the way of finding time to play.

There is scope to further investigate how working memory may interact with SiN performance, and an argument that embedding this training within a serious game, instead of a traditional training programme, could affect how users translate their learning within the game to their daily lives, providing easy-access benefits to on- and off-task learning.

HELIX has therefore been developed as a serious game, to reap the benefits of transposing a traditional computer-based training programme into a game-based play format. It provides an auditory-cognitive training application that targets parts of executive functions via working memory and is aimed at those on the periphery of age-acquired hearing loss and/or cognitive impairment. By primarily targeting this group of the population, i.e., those that are not using hearing interventions such as hearing aids, HELIX has the potential to provide a non–device-based intervention earlier in the ageing process that can be viewed as empowering rather than supportive. Using realistic scenarios that are relevant to daily listening situations also provides users with the opportunity to build confidence in executing daily tasks in these simulated scenarios. In this study, HELIX was evaluated.

Aims and objectives

The principal research question was how can prolonged training through a serious game affect speech perception in noise and cognitive performance in a mid-life population at risk of age-related hearing loss and mild cognitive impairment. The aim was to test the beta-version of the HELIX application in a sample population.

To achieve this aim, the primary objective was to evaluate the use of HELIX on SiN performance, with additional objectives:

To evaluate the use of HELIX on a battery of cognitive tests.

To evaluate the use of HELIX on communication confidence.

To measure the impact of HELIX on quality of life through self-report.

To measure the usability of HELIX through self-report.

Materials and methods

This study has been reported in accordance with the CONSORT statement (Schulz et al., 2010) and the COREQ checklist (Tong et al., 2007). The study was approved by the London—Bromley Research Ethics Committee (reference number 19/LO/1662).

Participants

Participants were recruited from a variety of sources including NHS Audiology and Cognitive disorder clinics, Join Dementia Research which is funded by the Department of Health and Social Care and delivered in partnership by the National Institute for Health Research (NIHR), Alzheimer Scotland, Alzheimer’s Research UK and the Alzheimer’s Society, and social media across England. All participants gave written informed consent and were provided with a £25 Amazon voucher on completion of the study.

Participants were required to meet five inclusion criteria: (i) 40–75 years old, (ii) report mild subjective concerns regarding their hearing and/or their memory, (iii) not using hearing amplification, (iv) not have a formal medical dementia diagnosis, and (v) be able to use an iPad or iPhone without the need for assistance. To supplement the self-declaration of criterion (ii), screening tests were carried out to confirm participants did not have a suspected moderate-severe hearing or cognitive impairment. Data collection was completed between May and November in 2022. Due to the recovery of health services following the COVID-19 lockdowns, it was not possible to complete face-to-face hearing tests (Pure-Tone Audiometry); the entirety of this study was therefore digitalised to reduce footfall in hospitals and reduce risk for participants. SHOEBOX online (Thai-Van et al., 2023) was used as a hearing screening measure for participants to complete via a personalised link. Participants were required to score between 70 and 100 indicating normal hearing or between 30 and 70 indicating a mild-to-moderate hearing loss. A score of 30 or less would indicate a significant loss, and participants would therefore be excluded and referred on for management. This hearing screen could be completed on any device with any headphones. This online screen was chosen as it was utilised by NHS Audiology services during the pandemic to screen and reassess hearing. The Addenbrooke’s Cognitive Examination III (ACE-III) Remote Administration – UK Version A (Hsieh et al., 2013) was used to screen for cognitive impairment and was completed via a video call between the lead researcher and participants, using the participants’ preferred platform. Participants were required to score at least 82/100, indicating normal or mildly impaired cognition, as a score of less than 82 would be indicative of more severe cognitive impairment requiring management.

A sample size calculation was used for a two-sided significance of .05, a power of 80%, and the effect size of 0.89. This calculation required 21 participants per group, 42 in total. This calculation was based on a previous paper, which used a similar design methodology and the same primary outcome measure, which was the Digit-Triplet-Test (DTT) (Ferguson et al., 2014).
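The reported group size can be approximately reproduced with a standard formula. The sketch below assumes a two-sided, two-sample t-test design and uses the normal approximation with a common small-sample correction term; the source does not state which software or formula was used, so the function name and the choice of approximation are illustrative only.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate per-group sample size for a two-sided, two-sample t-test.

    Assumption: equal group sizes and a standardised effect size (Cohen's d).
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for two-sided alpha
    z_beta = NormalDist().inv_cdf(power)           # quantile matching the target power
    n_normal = 2 * (z_alpha + z_beta) ** 2 / effect_size ** 2
    # Add z_alpha^2 / 4 to correct the normal approximation for small samples.
    return ceil(n_normal + z_alpha ** 2 / 4)


n = n_per_group(0.89)
print(n, 2 * n)
```

Under these assumptions, an effect size of 0.89 gives 21 participants per group and 42 in total, in line with the figures reported above.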

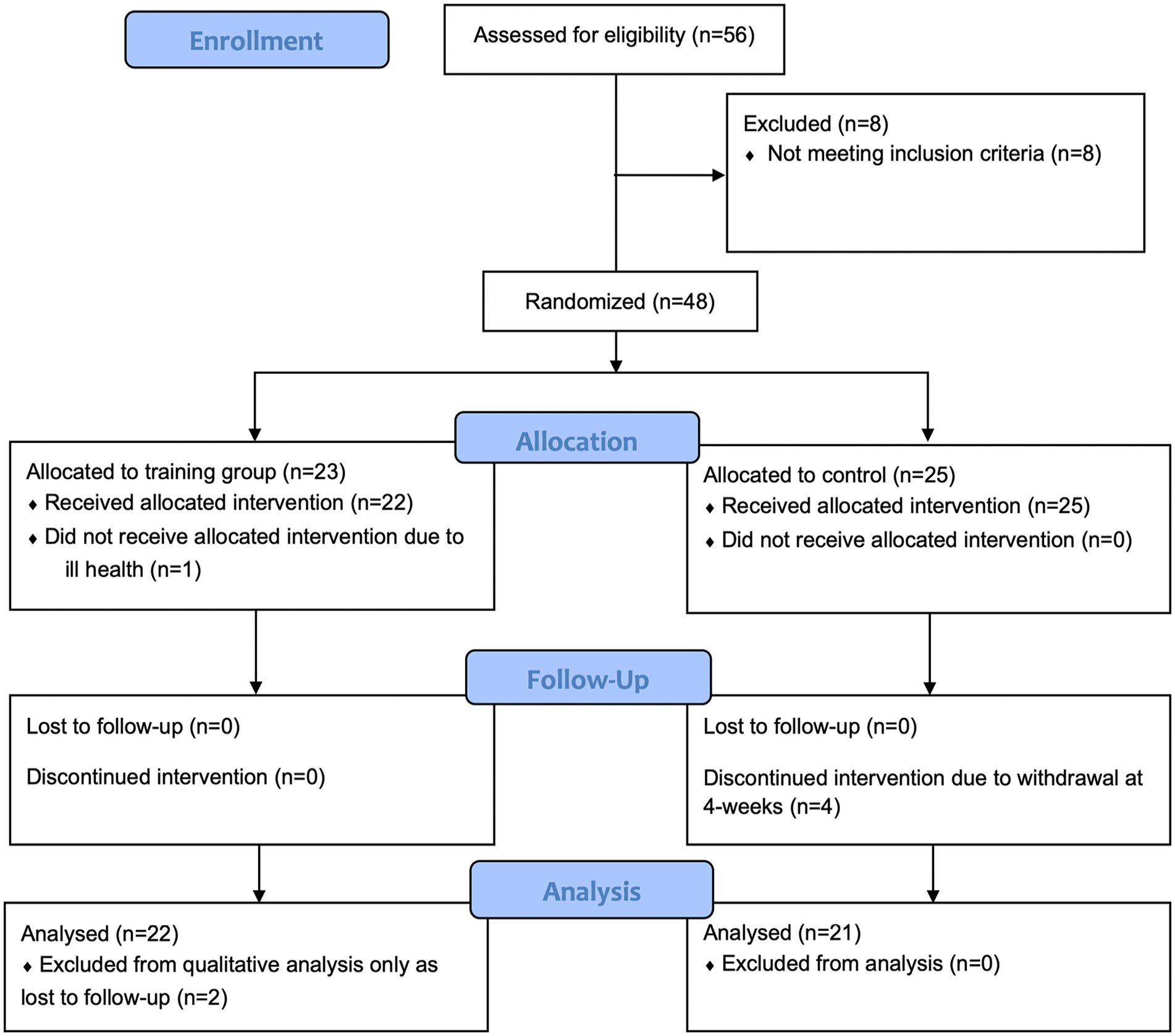

A total of 56 participants met inclusion criteria; of those 48 completed and passed both screening measures. A further 5 participants dropped out across the course of the study, resulting in 43 participants who completed (28 females:15 males; aged 40–74; M = 60.16 years old, SD = 8.10) (see Figure 1).

Figure 1. CONSORT flow diagram.

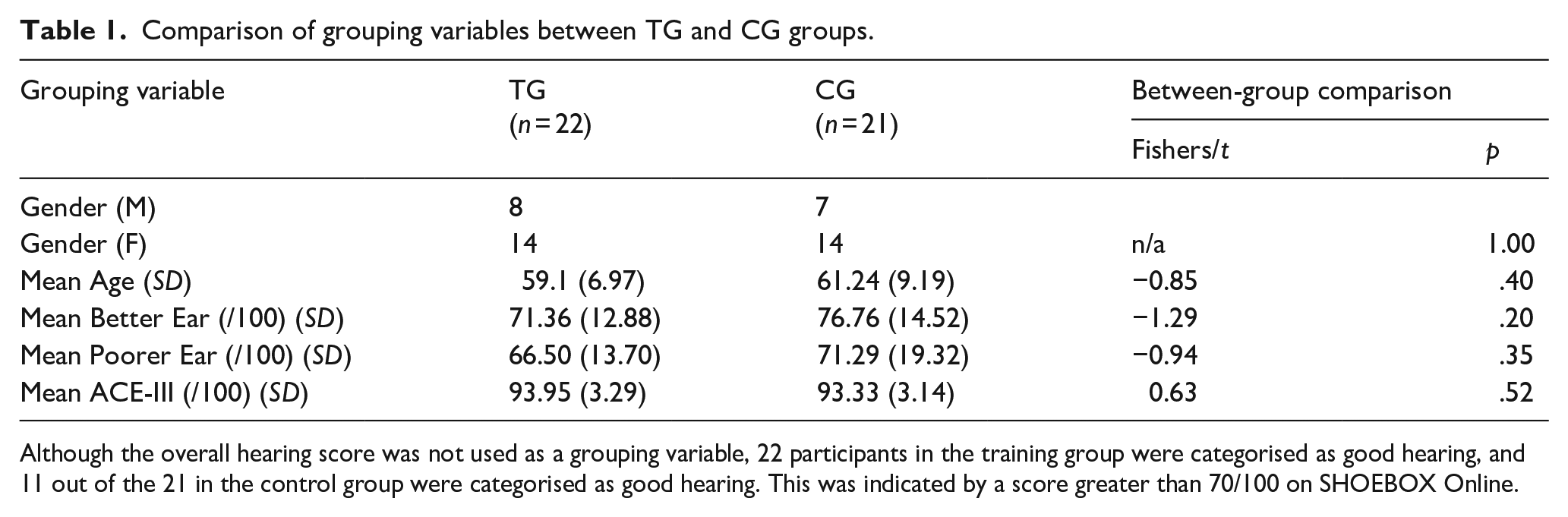

Participants were allocated to either the training group (TG) or a passive control group (CG) using the minimisation method, as used in a similar study by Ferguson et al. (2014). Similar grouping variables were used in this current study, which were better ear hearing scores on SHOEBOX Online (better ear between 70 and 100; poorer ear between 30 and 69) and gender. Age was also used as a grouping variable; the protected age grouping variable was younger (40–57):older (58–75). A summary can be found in Table 1.

Table 1. Comparison of grouping variables between TG and CG groups.

Although the overall hearing score was not used as a grouping variable, 22 participants in the training group were categorised as good hearing, and 11 out of the 21 in the control group were categorised as good hearing. This was indicated by a score greater than 70/100 on SHOEBOX Online.
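To illustrate how allocation by minimisation works in principle, the sketch below implements a plain, equally weighted version: each new participant is placed in the arm that currently holds the fewest participants sharing their factor levels, with ties broken at random. The factor names, the equal weighting, and the tie-breaking rule are assumptions made for illustration; the source does not describe the exact procedure beyond the grouping variables.

```python
import random

# Hypothetical labels for the three grouping variables named in the text.
FACTORS = ("hearing", "gender", "age_band")


def minimise(participant, allocated, arms=("TG", "CG"), rng=None):
    """Return the arm that minimises imbalance for this participant.

    participant: dict mapping each factor to the participant's level.
    allocated: list of (participant_dict, arm) pairs already assigned.
    """
    rng = rng or random.Random(0)
    scores = {}
    for arm in arms:
        members = [p for p, a in allocated if a == arm]
        # Count existing members of this arm who share each factor level.
        scores[arm] = sum(
            sum(1 for m in members if m[f] == participant[f]) for f in FACTORS
        )
    best = min(scores.values())
    candidates = [arm for arm in arms if scores[arm] == best]
    return rng.choice(candidates)  # random choice only matters when arms tie


def allocate_all(participants, seed=0):
    """Allocate participants one at a time, in order of enrolment."""
    rng = random.Random(seed)
    allocated = []
    for p in participants:
        allocated.append((p, minimise(p, allocated, rng=rng)))
    return allocated
```

For example, if the training group already contains one younger female participant with a good better-ear score, a new older male participant with a good better-ear score would be assigned to the control group, because that arm has fewer matching factor levels.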

Study design

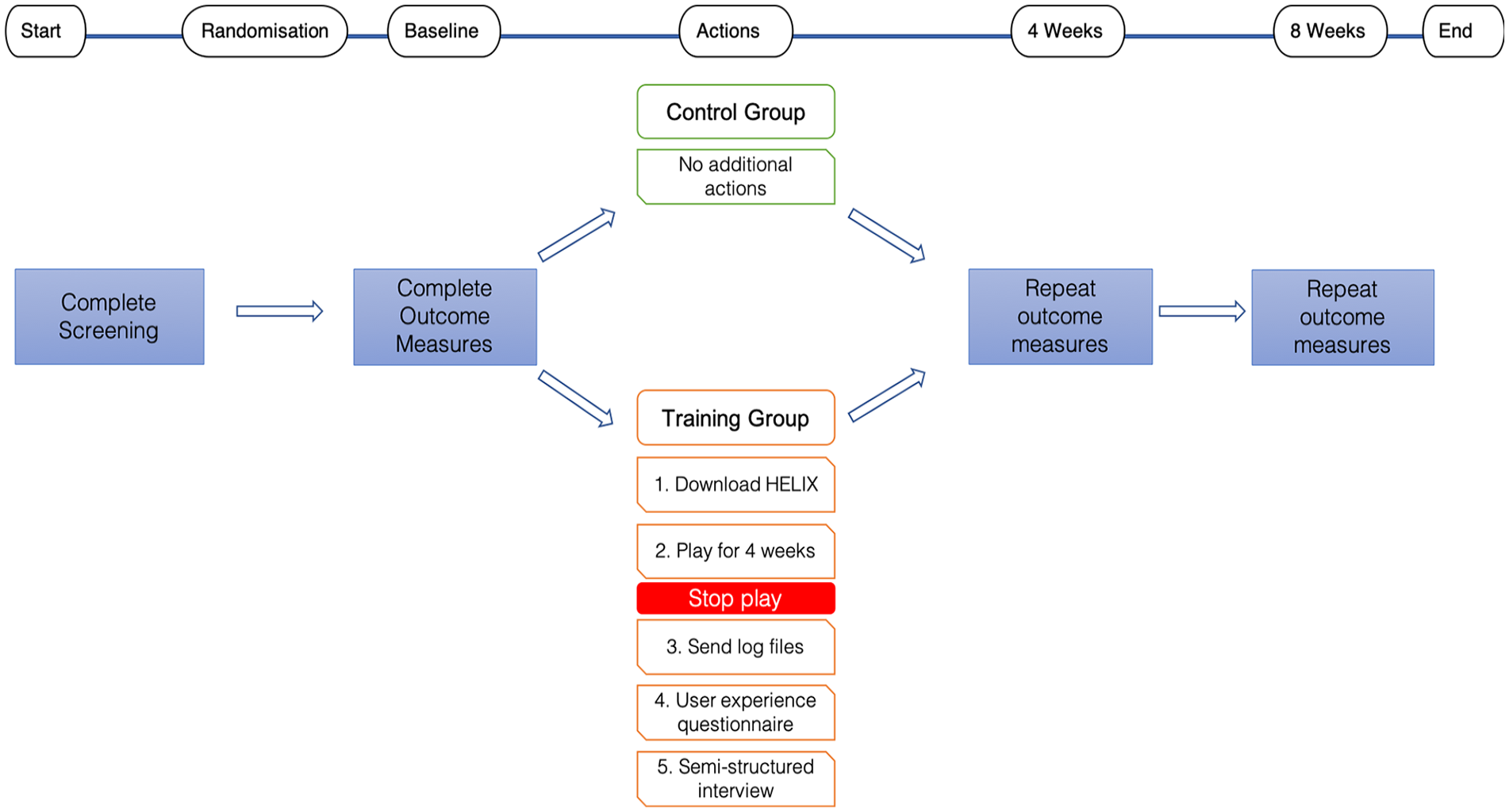

Participants completed a set of baseline outcome measures to assess a range of performance and self-report. Following randomisation, the TG was asked to download the application HELIX onto their own iPad or iPhone using TestFlight. Written instructions on how to download the test application were provided with easy-to-follow steps, screenshots, and invitation link. Once downloaded, participants were asked to set the URL to access the content and were given the option to enable notifications to remind them to play daily.

If participants did not have access to an iOS device or headphones, a loan device was provided for the duration of the study with the application installed and notifications enabled.

A second set of written instructions was provided explaining how the application should be used; this was for a minimum of 10 min per day, for 6 days per week for 4 weeks. This was the same weekly playtime used in a similar study (Ferguson et al., 2014), in which participants recorded high compliance and there were no dropouts during the training period; it was therefore assumed that using a similar design in a similar participant pool would yield adequate levels of participation and usable data. Participants were asked to use headphones when using the app; however, it was not possible to enforce this as the app was intended to blend into daily routines and participants were using the app independently. After completing their 4 weeks of play, the TG was required to download and send their data log files of the gameplay to the researcher. A user experience questionnaire and paired semi-structured interviews were also completed for the TG to conduct an evaluation of the application and obtain qualitative data to aid in answering the secondary objectives. Outcome measures were repeated by all participants after 4 weeks, to assess any initial changes, and after 8 weeks, to assess any longer-term changes without application play. An overview of the study design can be found in Figure 2.

Figure 2. Overview of study design showing data collection and action points for all participants.

Application design

Procedure

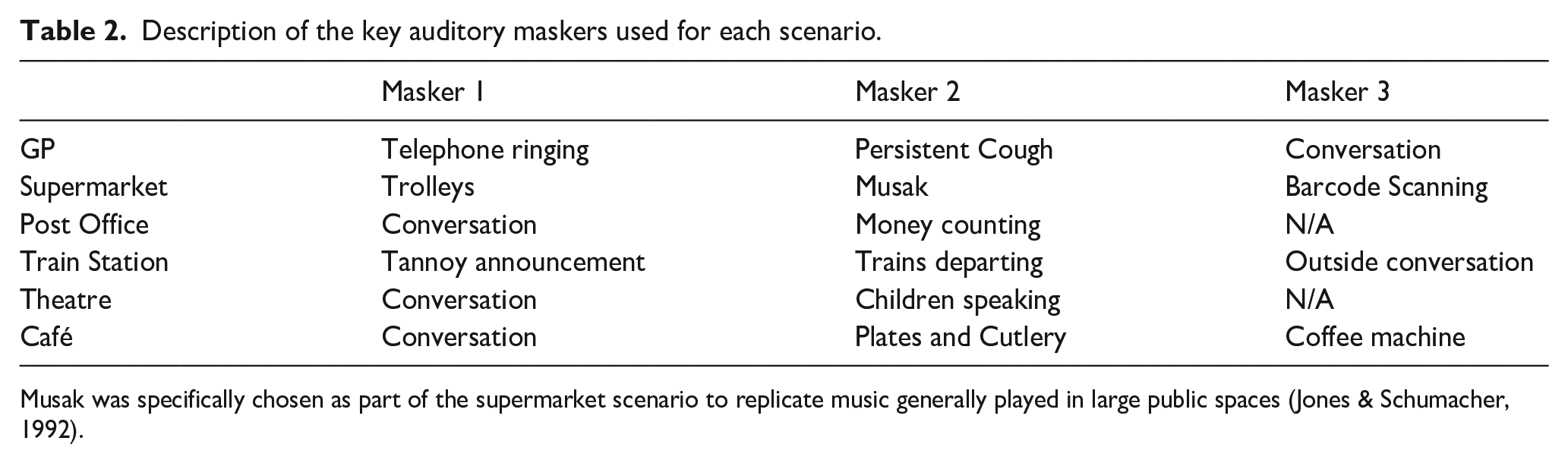

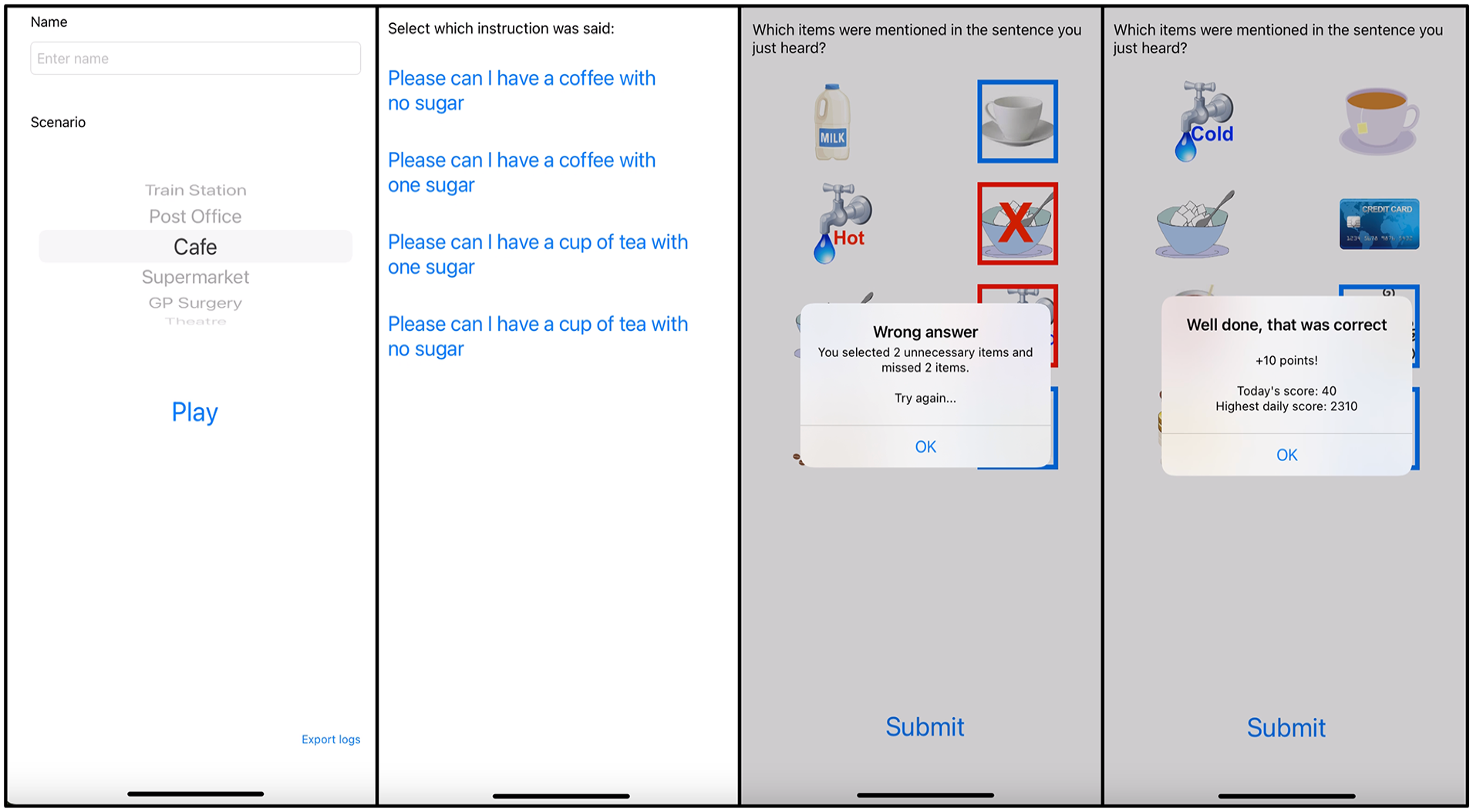

HELIX was designed to provide auditory-cognitive training through two tasks, the first being an auditory task and the second being a recall task targeting working and immediate memory. Each task will be described separately in the order the participants completed them. In each scenario, users were first presented with an auditory task. This required them to listen to an instruction (stimulus) and correctly identify it from a multiple choice of four similarly worded text options used as distractors. The auditory instruction would be presented within a masker produced to replicate the environment in each scenario. The masker for each level was designed to specifically mirror typical content for that scenario to maintain auditory realism, rather than using a multitalker babble for each scenario. For each scenario, 2–3 main auditory maskers were identified. Free-to-use audio files for each masker were downloaded from open-access sound effects websites. Each masker was then uploaded into the 3D Tune-In Toolkit (Cuevas-Rodríguez et al., 2019) for spatialisation, which was performed in the binaural domain and was therefore specific to headphone playback. A 30-s virtual soundscape was created for each specific scenario. The target stimulus was also spatialised using the same method. A summary of the maskers is included in Table 2. An incorrect choice would trigger the replaying of the stimulus at a signal-to-noise ratio (SNR) that was 2 dB more favourable, with the same multiple-choice options until the correct answer was chosen, although the order of the text options varied each time. This ensured the stimulus was audible enough to understand the instruction before moving to the cognitive task.

Table 2. Description of the key auditory maskers used for each scenario.

Muzak was specifically chosen as part of the supermarket scenario to replicate the music generally played in large public spaces (Jones & Schumacher, 1992).

The second task was a recall task, targeting working and immediate memory. Participants were required to select images that represented the key words in the auditory instruction from the previous task. If all key words were chosen correctly, the user would move on to the next level at a less favourable SNR. If incorrect, the user would receive feedback on how many images were correct, how many were incorrect, and how many more or fewer were needed. The user would be given three attempts before being asked to move to the next level at the same SNR. Figure 3 shows the screenshots demonstrating the tasks and interface.
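The SNR rules described for the two tasks can be summarised as a small sketch. The 2 dB step after an incorrect auditory choice and the limit of three recall attempts come from the description above; the size of the step towards a less favourable SNR after a correct recall is not stated, so `RECALL_STEP_DB` is a hypothetical placeholder, as are the function names.

```python
AUDITORY_STEP_DB = 2.0     # stated in the text: +2 dB after each incorrect choice
RECALL_STEP_DB = 2.0       # assumption: the step size is not given in the text
MAX_RECALL_ATTEMPTS = 3    # stated in the text


def snr_after_auditory_task(snr_db: float, wrong_choices: int) -> float:
    """SNR at which the stimulus was finally identified.

    Each incorrect multiple-choice answer replays the stimulus at a more
    favourable (higher) SNR.
    """
    return snr_db + AUDITORY_STEP_DB * wrong_choices


def next_level_snr(snr_db: float, recall_attempts: list) -> float:
    """SNR for the next level, given one boolean per recall attempt.

    A fully correct recall within the allowed attempts makes the next level
    harder (lower SNR); otherwise the next level keeps the same SNR.
    """
    if any(recall_attempts[:MAX_RECALL_ATTEMPTS]):
        return snr_db - RECALL_STEP_DB
    return snr_db
```

Under these assumptions, a player who needs two replays at a starting SNR of 0 dB hears the stimulus at +4 dB, and then moves to the next level at +2 dB after a correct recall or stays at +4 dB after three failed attempts.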

Figure 3. Examples of screenshots including the homescreen with scenario choice, multiple choice for auditory task, example of feedback with encouragement to retry, and positive endorsement with scoring on correct answer triggering progress to the next level.

Scenarios

Users had 6 scenarios available, with 10 levels per scenario, giving a total of 60 levels. Participants were asked to play each scenario at least once across the 4-week play period. Following this, they could choose which scenarios to play and how many levels to complete, as long as this satisfied the criterion of 10 min per day. The scenarios were chosen based on the participatory design process (Frost et al., 2020) and the literature, as they represented environments that the users would regularly frequent in their daily lives and that would provide a challenging auditory soundscape while also requiring cognitive resources, thus providing a situation that would require speech recognition, understanding, and cognitive recall. The scenarios chosen were as follows:

Train Station.

Post Office.

Café.

Supermarket.

GP Surgery.

Theatre.

In each of the scenarios, the 10 levels were organised to increase in difficulty as the scenario progressed: Levels 1–3 (easy-1), 4–6 (medium-2), and 7–10 (hard-3).

For example, in the train station scenario, the easy tasks consisted of three key words or phrases: (Easy) The

The distractors for the key words were simple, such as Bristol Temple Meads, Bristol, 14:15 and Platform 15.

For medium tasks, the difficulty increased by introducing a fourth keyword or phrase, therefore adding to the cognitive load of the task.

(Medium) We’re sorry to announce that the

For hard tasks, the number of key words or phrases remained at 4, but they became more complex, with multiple numbers or timestamps that could be easily misheard and misremembered, relying on greater cognitive resources to achieve the correct answer.

(Hard) Your seat reservation is for

Users would not be able to proceed to the cognitive task until the auditory task was correctly completed.

The coding of the application was completed using JavaScript Object Notation (JSON).

All audio files for the stimuli were recorded by the researcher, a female speaker, using a Blue Snowball USB microphone and Audacity v2.3.2. All recordings were completed in the same session to ensure consistency. Signal levels were modified where needed to ensure that all stimuli were reproduced at the same Root Mean Square (RMS) level and calibrated with the background masker tracks.
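As a minimal sketch of the level-matching step, the code below scales a signal to a target RMS level and mixes speech with a masker at a chosen SNR. It operates on plain lists of samples and is not the authors' processing chain, which used Audacity and the 3D Tune-In Toolkit; the function names and the sample representation are assumptions for illustration.

```python
import math


def rms(samples):
    """Root mean square level of a sequence of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def scale_to_rms(samples, target_rms):
    """Apply a single gain so the signal has the target RMS level.

    Assumes the input is not pure silence (its RMS must be non-zero).
    """
    gain = target_rms / rms(samples)
    return [s * gain for s in samples]


def mix_at_snr(speech, masker, snr_db):
    """Scale the masker so that 20*log10(rms(speech)/rms(masker)) equals
    snr_db, then add it to the speech sample by sample."""
    masker_rms = rms(speech) / (10 ** (snr_db / 20))
    scaled_masker = scale_to_rms(masker, masker_rms)
    return [s + m for s, m in zip(speech, scaled_masker)]
```

Scaling every stimulus to the same RMS before mixing means that a given SNR value corresponds to the same speech-to-masker level difference across all recordings.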

The application underwent a short pilot, where minimal changes to some of the images were made, and the instructions for download were edited for added clarity.

Outcome measures

Measures were chosen based on their reliability, validity, and ability to be delivered online or remotely without impeding their effectiveness.

DTT

Speech recognition in noise was assessed using a newly developed version of the DTT, based on the parameters used in Cullington and Aidi (2017). This version of the DTT was designed to give an SNR output score in dB and to make the results freely accessible online. Other versions of the DTT are currently available online, but they generally output only overall estimates of performance, recommending whether or not to visit an audiologist for further assessment; none of them outputs an accurate log of the results data, which our custom version does. As an overall score is also produced, this can be compared over multiple attempts. The DTT was implemented into the online platform using recordings made with support from the NIHR Manchester Biomedical Research Centre (Moore et al., 2019). A secondary version of the DTT which does not store results and is available for public use can be accessed via axdesign.co.uk/tools-and-devices/digit-triplet-test.

Cognitive tests

Cognitive performance was measured online via a customised battery of tests from a cognitive assessment platform (Cognitron), to remotely assess cognitive performance. A platform was set up specifically for this study, with particular tests chosen to measure and monitor performance in domains that HELIX targeted and may produce a transfer of benefit. The list below details the name of the test and related domain:

Prospective memory word list (immediate memory).

Target detection and Selective attention (attention).

2D manipulations (planning, manipulation, and information processing).

Blocks and Tower of London (planning and reasoning).

Spatial span (working memory).

Choice reaction time (attention and information processing).

Verbal analogies (verbal reasoning).

Self-report

For this study, the 12-item Communication Confidence Profile (CCP) was chosen to assess participants’ confidence in effective communication via a range of communication skills (Sweetow & Sabes, 2010). A second self-report measure was used to assess quality of life. The WHO Quality Of Life Age (WHOQOL-AGE) questionnaire was chosen as a short 13-item questionnaire designed as a specific tool to assess quality of life in an ageing population (Caballero et al., 2013). Links to both the DTT and Cognitron test battery platforms were accessible alongside the self-report questionnaires through a Google Form, sent to each participant at each timepoint.

In addition, eight paired 60-min semi-structured interviews (DeJonckheere & Vaughn, 2019) were conducted with the TG via Zoom by the lead author (E.F., female), who had previous experience in conducting interviews. Only audio was recorded. The purpose was to explore the impact of using HELIX on quality of life and to elicit more detailed feedback on the usability of HELIX. Due to technical issues joining the Zoom call or scheduling conflicts, three participants completed their interviews alone. Two participants did not complete interviews. Paired semi-structured interviews were used with a topic guide, which is included in the supplementary files. Field notes were made by the lead author during the interviews. Audio recordings were transcribed in full, stored, and coded in Nvivo (Version 14). Coding was completed by two female authors (E.F., A.M.).

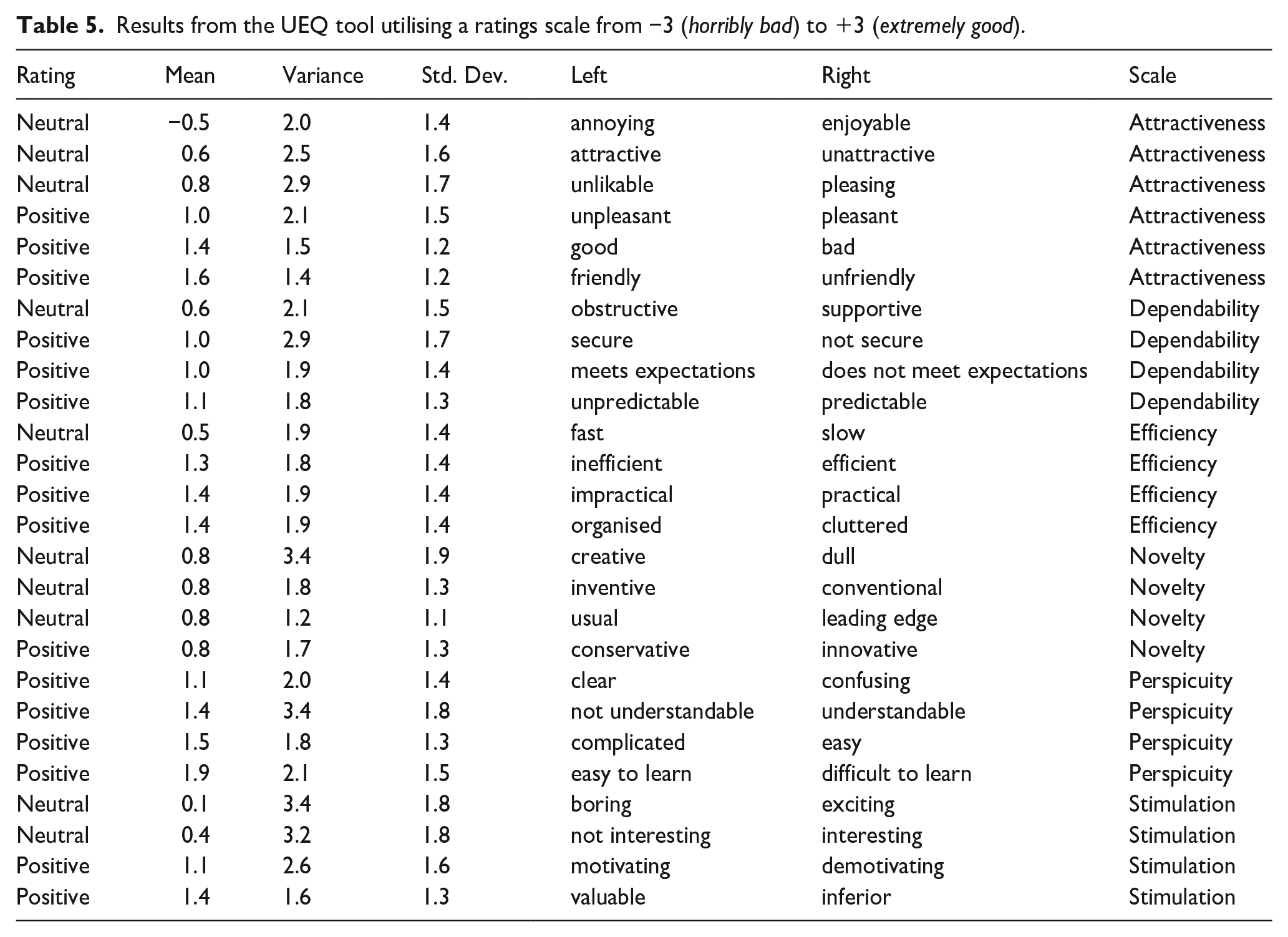

Finally, the TG completed the User Experience Questionnaire (UEQ; Schrepp et al., 2014). This was primarily used to assess usability and experience to provide data that may be used to improve the application in the future. Second, it was used to identify any possible trends that may account for the data obtained in any of the other outcome measures, e.g., a lack of stimulation, efficiency, or originality. The original version of the UEQ was used, comprising 26 questions on a 7-point Likert-type scale. Data were collected for 17 TG participants.

Results

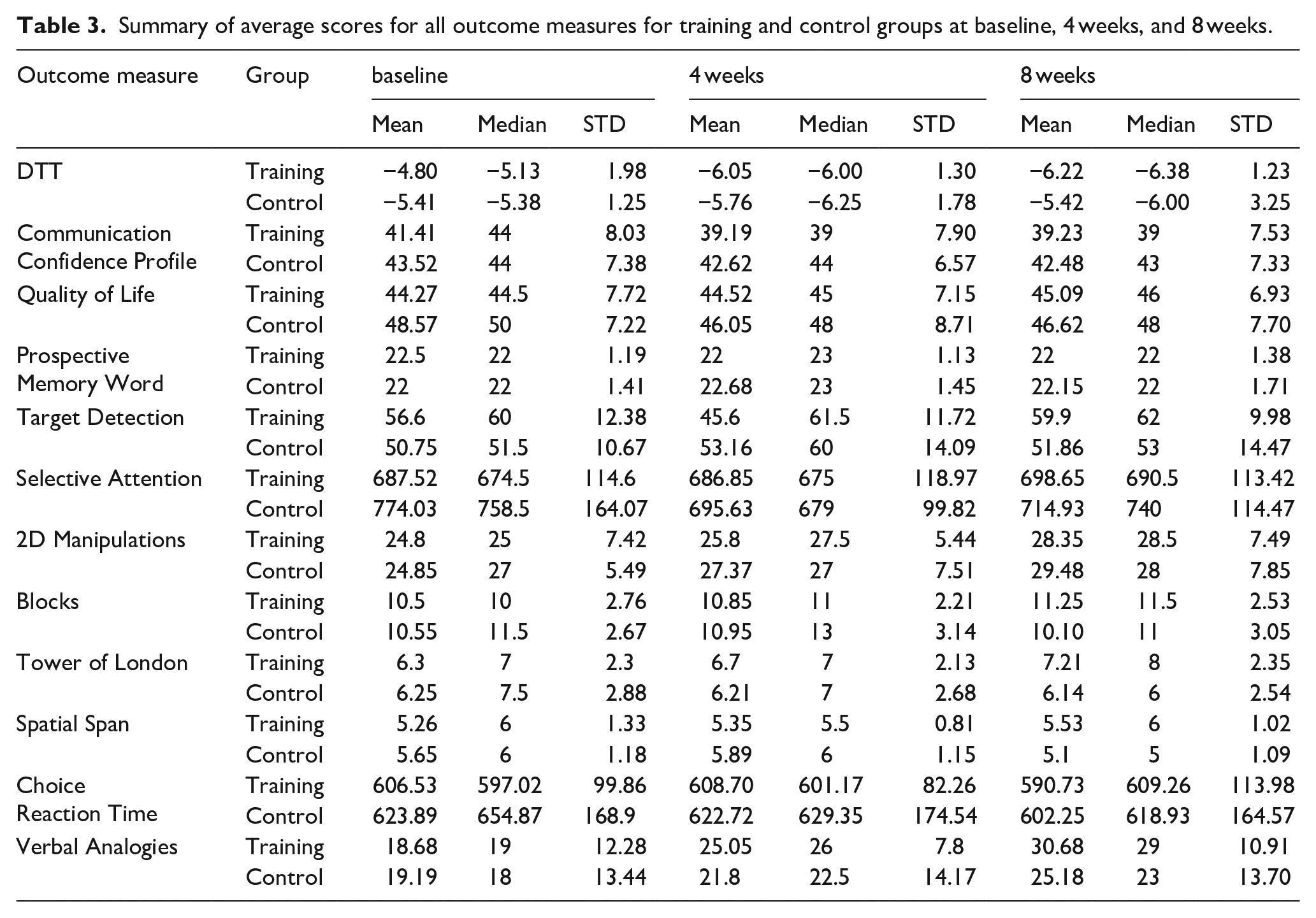

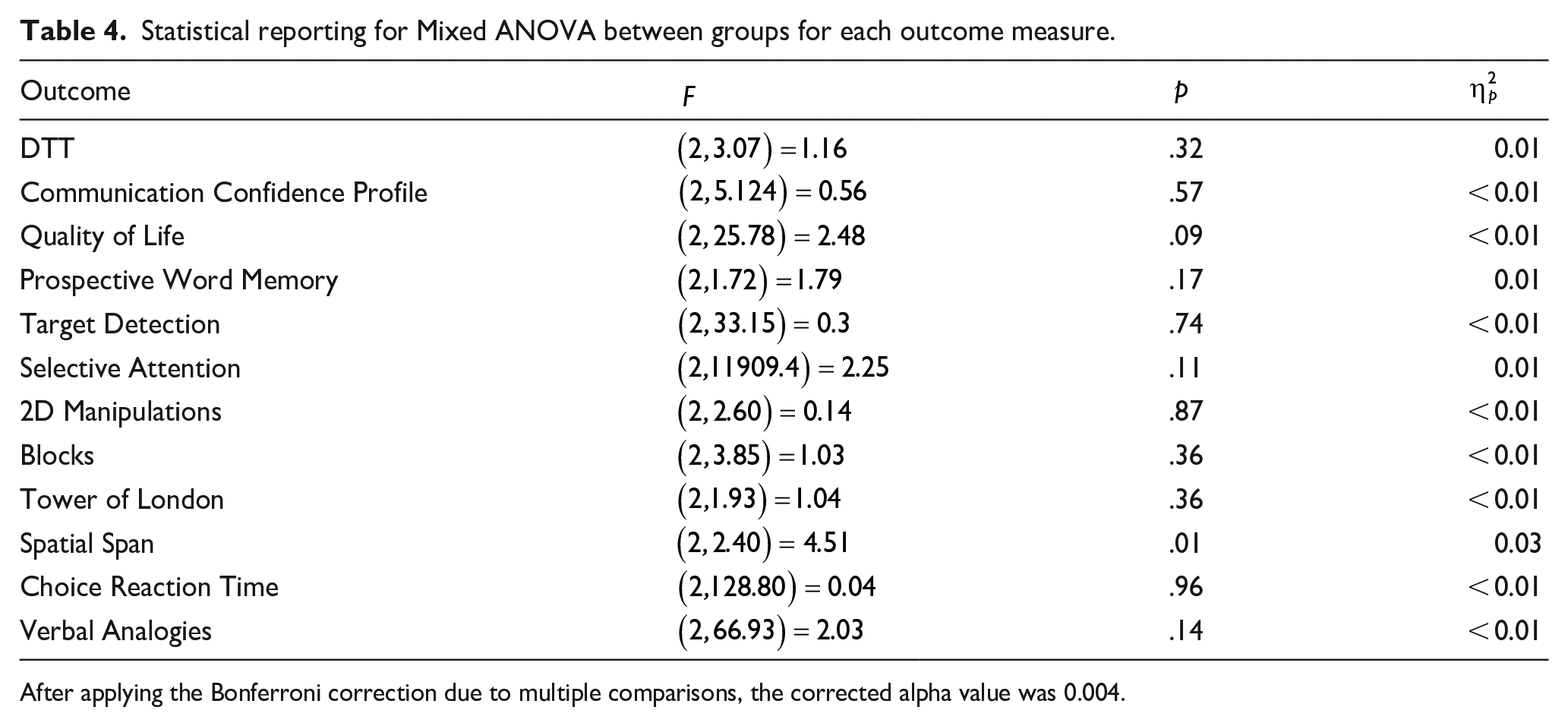

Data analysis was carried out using MATLAB R2023a. Descriptive statistics for all outcome measures can be found in Table 3. Mixed Analysis of Variance (ANOVA) between groups was completed for all outcome measures; statistics can be found in Table 4.

Table 3. Summary of average scores for all outcome measures for training and control groups at baseline, 4 weeks, and 8 weeks.

Table 4. Statistical reporting for Mixed ANOVA between groups for each outcome measure.

After applying the Bonferroni correction due to multiple comparisons, the corrected alpha value was 0.004.
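The Bonferroni correction divides the family-wise alpha by the number of comparisons. The sketch below assumes a family-wise alpha of .05; the number of comparisons is not stated in the text, so the value of 12 used here is purely illustrative, chosen because .05/12 rounds to the reported .004.

```python
def bonferroni_alpha(alpha: float, n_comparisons: int) -> float:
    """Per-comparison significance threshold under the Bonferroni correction."""
    return alpha / n_comparisons


def is_significant(p_value: float, alpha: float, n_comparisons: int) -> bool:
    """True if p_value falls below the Bonferroni-corrected threshold."""
    return p_value < bonferroni_alpha(alpha, n_comparisons)


# Assumption: 12 comparisons (not stated in the source).
corrected = bonferroni_alpha(0.05, 12)
print(round(corrected, 3))
```

Against a threshold of this size, an effect must be considerably stronger than p < .05 to be reported as significant, which should be kept in mind when reading the between-group results.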

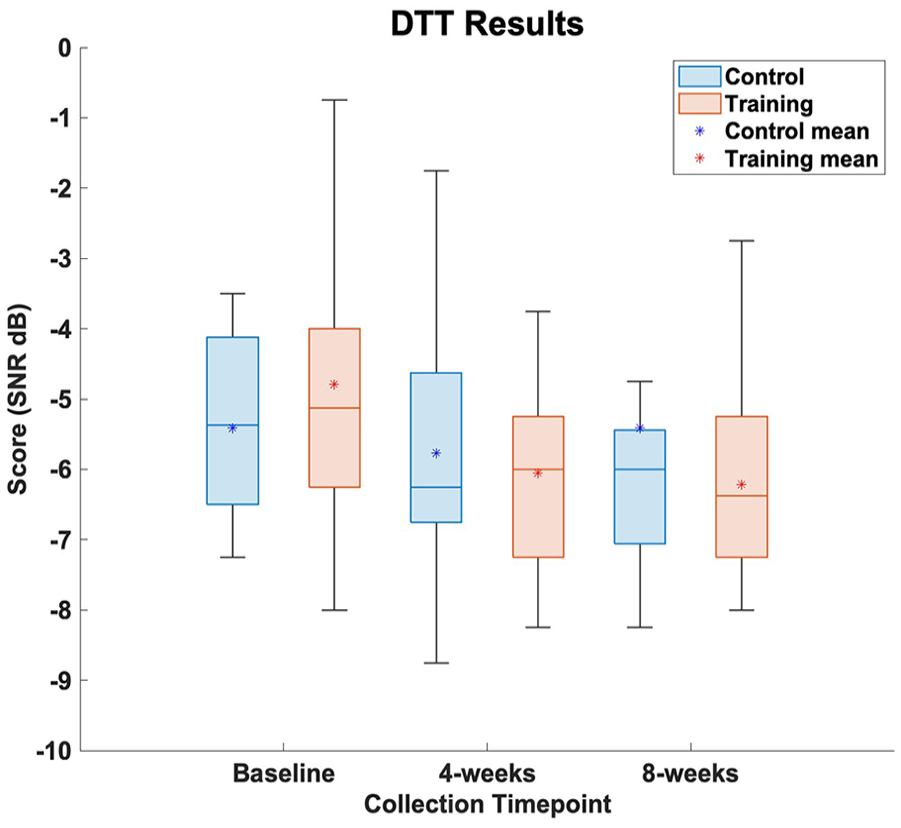

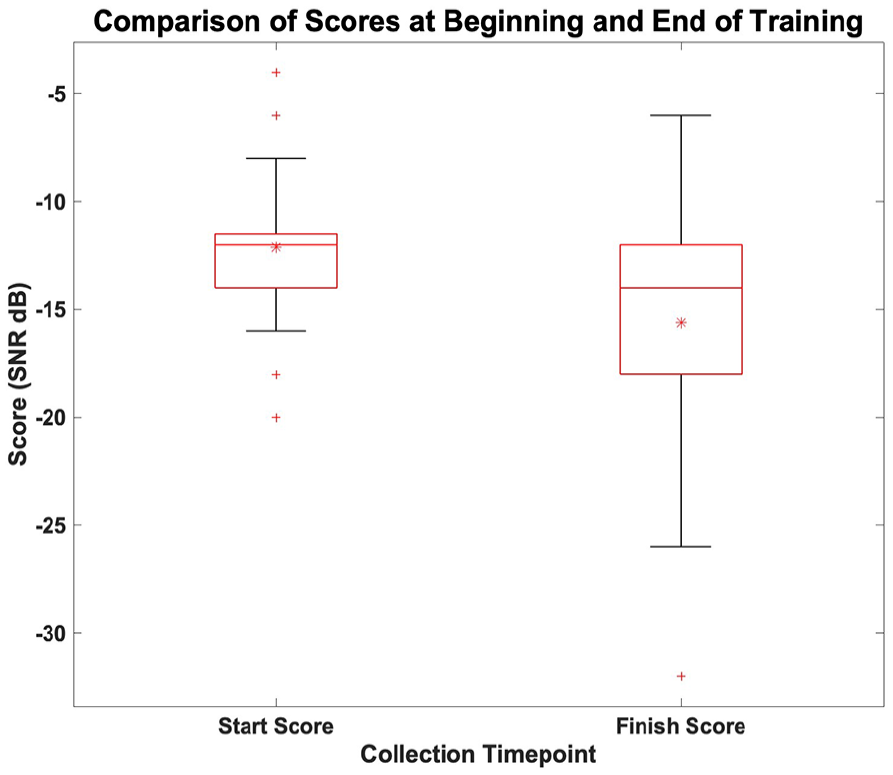

DTT

Repeated measures ANOVA within groups was completed for the TG to assess the impact of using HELIX across the three data collection points—Baseline, 4 weeks (after 4 weeks of regular use), and 8 weeks (following a 4-week period of not using the app). A significant effect of time was found [F(2,40) = 6.11, p < .01,

Paired boxplots demonstrating total DTT scores for both groups at each collection timepoint.

A mixed ANOVA was completed between groups. No significant effects between group and timepoint were found [F(2, 3.058) = 1.14, p = .3238,

Cognitive tests

Statistical analysis showed there were no significant improvements after using HELIX in the cognitive tests.

Self-report measures

There were no significant differences found between groups in either the Communication Confidence Profile or the Quality of Life measures.

User experience

The UEQ was analysed using the UEQ Data Analysis tool. The questionnaire measured the use of the application across six domains: Attractiveness, Perspicuity, Efficiency, Dependability, Stimulation, and Novelty by asking users to rate between two categories, e.g., annoying or enjoyable. The analysis tool rates the scale from −3 (horribly bad) to +3 (extremely good). Overall, the scores can be interpreted as follows: less than −0.8 as negative, −0.8 to +0.8 as neutral, and greater than 0.8 as positive. Positive scores were received for attractiveness, perspicuity, efficiency, and dependability, whereas stimulation and novelty received neutral scores. The highest scores reflect the application’s perspicuity, with more positive evaluations towards understandable, easy to learn, easy, and clear. The lowest scores were recorded for stimulation, with more neutral scores given for boring/exciting and not interesting/interesting. There was a more positive score received in this scale, however, for the pairing inferior/valuable. Mean values per item are listed in Table 5.

Results from the UEQ tool utilising a ratings scale from −3 (horribly bad) to +3 (extremely good).
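The interpretation bands described for the UEQ scale means can be expressed as a small helper. This is a sketch of the thresholds quoted in the text only (below −0.8 negative, −0.8 to +0.8 neutral, above +0.8 positive); it is not the UEQ Data Analysis tool itself. The function name and the example values are hypothetical and are not the study's results.

```python
def interpret_ueq_mean(mean_score: float) -> str:
    """Map a UEQ scale mean (range -3 to +3) to an interpretation band."""
    if not -3.0 <= mean_score <= 3.0:
        raise ValueError("UEQ scale means lie between -3 and +3")
    if mean_score < -0.8:
        return "negative"
    if mean_score > 0.8:
        return "positive"
    return "neutral"  # -0.8 to +0.8 inclusive


# Placeholder values for illustration only; these are NOT study data.
example_means = {"hypothetical scale A": 1.5, "hypothetical scale B": 0.4}
for scale, mean in example_means.items():
    print(f"{scale}: {mean:+.2f} -> {interpret_ueq_mean(mean)}")
```

Treating the boundary values of exactly −0.8 and +0.8 as neutral follows the wording of the text ("−0.8 to +0.8 as neutral").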

Data logs

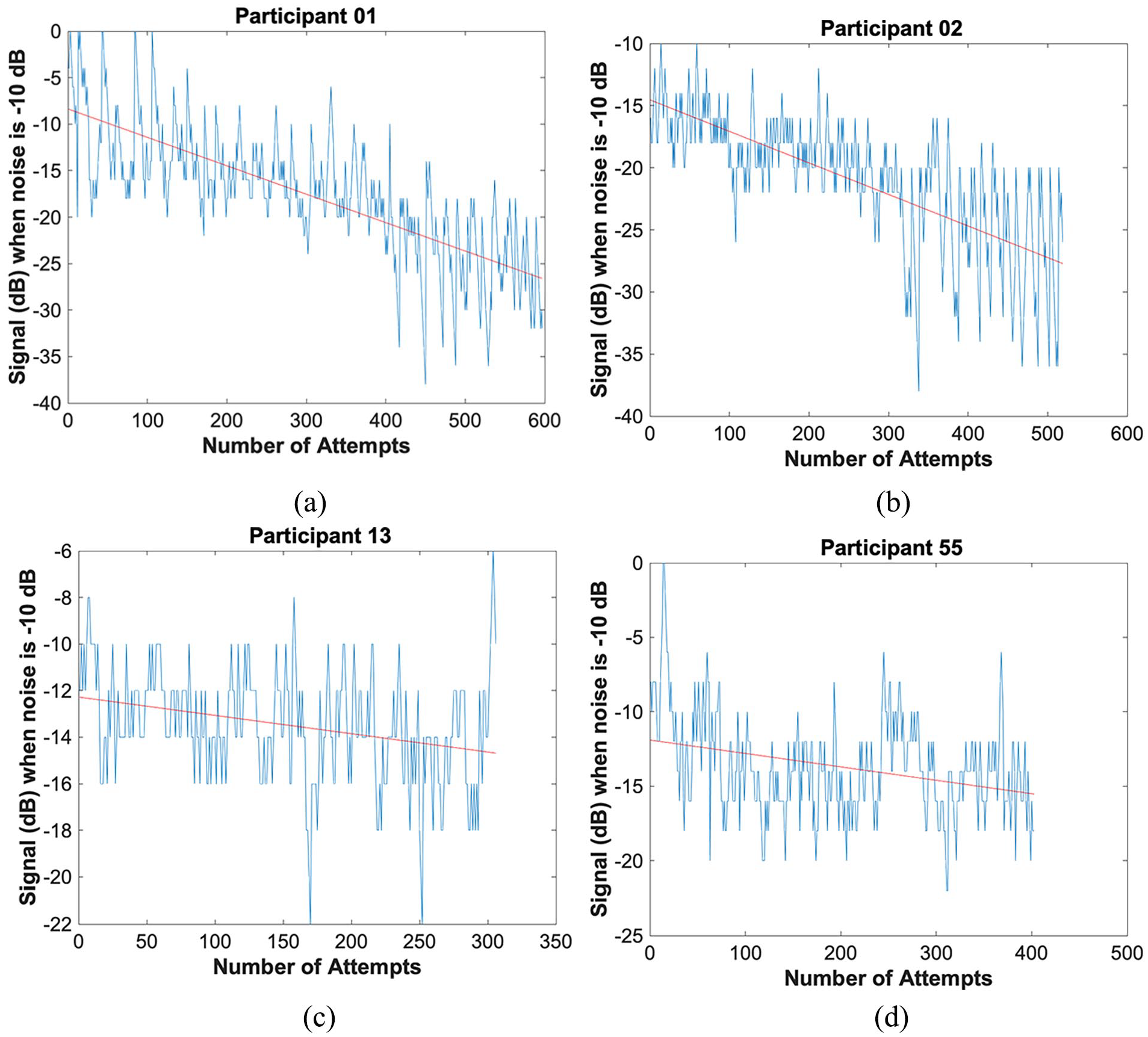

In addition to data logs, a t-test was carried out to analyse performance at the start and the end of the play period. The result from the start (M = −12.10, SD = 4.07) and end (M = −15.62, SD = 5.68) demonstrated an improvement in performance [t(40) = 2.31, p < .05]. A demonstration of change from start to finish for all participants is shown in Figure 5, and a selection of the logs is included in Figure 6.

Boxplots demonstrating the SNR at the beginning of the 4-week training period and the end, * indicates the mean values, and + indicates the outliers.

Plots displaying the signal amplitude compared with the noise signal of −10 dB for all attempts during the 4-week period for a selection of participants. A line of best fit has been added to highlight change over the play period. (a) Data logs for Participant 01; (b) data logs for Participant 02; (c) data logs for Participant 13; and (d) data logs for Participant 55.
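The start-versus-end comparison reported in this subsection can be checked against its own summary statistics. The sketch below is a consistency check rather than the authors' analysis: it assumes 21 scores at each time point (inferred from the reported 40 degrees of freedom, not stated in the text) and treats the two sets of scores as independent samples of equal size. A paired test would need per-participant differences, which are not reported. The function name is hypothetical.

```python
import math


def t_from_summary(mean_a: float, sd_a: float,
                   mean_b: float, sd_b: float, n: int) -> float:
    """t statistic for two equal-sized samples treated as independent.

    With equal group sizes, the pooled-variance and Welch statistics
    coincide: t = (mean_a - mean_b) / sqrt((sd_a^2 + sd_b^2) / n).
    """
    standard_error = math.sqrt((sd_a ** 2 + sd_b ** 2) / n)
    return (mean_a - mean_b) / standard_error


# Assumption: 21 scores per time point, so df = 2 * 21 - 2 = 40.
N_PER_TIMEPOINT = 21
df = 2 * N_PER_TIMEPOINT - 2

# Reported SNR values: start M = -12.10, SD = 4.07; end M = -15.62, SD = 5.68.
t_stat = t_from_summary(-12.10, 4.07, -15.62, 5.68, N_PER_TIMEPOINT)
print(f"t({df}) = {t_stat:.2f}")  # prints: t(40) = 2.31
```

Under these assumptions the result matches the reported t(40) = 2.31. A lower (more negative) SNR at the end of the play period corresponds to the improvement described in the text.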

Qualitative analysis

A thematic analysis approach (Braun & Clarke, 2006) was used to establish themes based on the effectiveness of HELIX on quality of life and its general usability.

Three themes emerged around quality of life, which were (1) immersive scenarios prompted realisation of difficulties and actions required, (2) gameplay fostered improved listening and recall self-efficacy, and (3) positive behaviour change. Three themes on usability were (4) repetition obstructed engagement, (5) easy to incorporate into daily life, and (6) improved scoring system required to be motivational.

Immersive scenarios prompted realisation of difficulties and actions required

Participants reported that not only did HELIX highlight their existing issues with their hearing and memory, but it also made them realise the difficulties they encountered in their daily lives. There was a consensus that because the immersive scenarios used were realistic and relatable, this prompted them to reflect more on how they were performing in their real lives, such as at work or with their families, in terms of their hearing more so than memory:

You can see with your own eyes how much you struggle so it’s not just a subjective thing that is happening when you are in a Cafe or you are out and about with your friends. (P3)

One of the things I started doing in the app was guessing, I wasn’t actually hearing it properly and then I realised I was doing that a lot in spoken conversation. (P9)

Not only did it cause self-reflection over their challenges with their hearing and memory, but it also prompted action:

I should be getting an Audiology test and I would accept that I probably need hearing aids of some description. (P11)

I think it is definitely something, on the way to get some help. (P56)

I’m going to go and get a hearing test. (P40)

Gameplay fostered improved listening and recall self-efficacy

The repetition within HELIX provided players with increased confidence as they found across the 4-week play period that they were able to remember the keywords in the auditory stimuli. This familiarity with the scenarios and relationships between the stimuli and response options allowed players to leverage the memory of past experiences, contextual cues, and deductive reasoning to then formulate informed guesses or assumptions, especially when the comprehension of the key words became harder. Players were then able to complete more content within their 10-min playtime which gave a sense of improvement and achievement:

Yes, it changed my confidence, as time went on I found I nearly completed three levels, because I became quicker as the time went on because I could remember the questions. (P1)

Specifically, participants commented that their listening skills improved from playing HELIX, as it highlighted how important concentration was in listening to and recalling information. Participants found that when they actively concentrated, they yielded better results in the game, again boosting confidence in their own abilities to replicate this in the real world, especially in unfamiliar environments:

I’d probably feel a little bit more confident maybe, because they would probably be realistic in what you would expect to hear at a post office or a train station, being pre-warned is a little bit more information which makes me feel a bit more confident. (P8)

You’re pre-empting things, you’re listening better, you actually are sort of really more focused on it because you are breaking down that noise barrier and actually listening to it. (P9)

Positive behaviour change

Despite the app being basic but functional in its design, participants commented on how they could transfer their learning and improved listening self-efficacy into the real world in challenging listening situations, both socially and in the workplace. Using HELIX provided a space where participants were able to take time to learn about and reflect on their listening behaviours. This included implementing different behavioural strategies, such as removing distractions, or physical changes, such as purchasing different headphones to improve clarity by reducing noise. Using HELIX also aided in fostering awareness about difficulties in hearing in background noise that require different communication tactics, such as asking speakers to adjust their conversational tone when in a noisy environment:

Funnily enough, I had that in reality last night, my daughter was trying to talk to me and whilst we were waiting for the cinema to start and the trailers were quite loud and I was thinking I can’t do both of these, I need to choose one or the other. (P30)

I need to have peace and quiet . . . I’m going to look at getting better earphones for work . . . Also the other thing is to be a bit bolder in coming back to people and saying either can you say that again or just saying actually I’ve even said from the outset I can’t hear very well in this noisy thing, can you slow down . . . I suppose I’m not ashamed of the fact that I need people to accommodate me too. (P26)

Repetition obstructed engagement

Despite reporting initial enjoyment from using HELIX, participants found that as time went on the limited content became too repetitive. Although some participants reported that the repetitive nature actually made the tasks easier, which boosted their confidence, the majority felt it was detrimental:

I quite enjoyed it at the start, but then I got a little bit tired after about two weeks, so other than that it was fine. (P13)

Repeat those things regularly so to be honest after four weeks I was pretty bored with it. (P26)

The repetitive nature, mainly due to the limit of 60 tasks, was felt to reduce motivation and engagement, and to undermine the intended nature of the tasks:

Once you knew the answers after a couple of weeks it was pointless. (P2)

I remember this and then it’s like you got a bit complacent until the end, I know this so I’m not listening as well. (P12)

If I couldn’t hear it properly I could remember what the answer was and then I felt I was cheating, because I was sort of remembering it rather than hearing it. (P30)

Easy to incorporate into daily life

Participants positively identified that the allocated 10-min playtime was easy to build in around their normal daytime activities, regardless of whether they were in work or retired. It was reported that this was the right amount of time to maintain concentration and focus while still feeling a sense of achievement:

I just did it when I got home after I’d had something to eat so it was in the evening so it wasn’t any trouble at all. (P1)

Probably for me, spot on. I think 10 minutes is not a lot of time, I think if it was any shorter I don’t think you’d learn very much from it and I think if it’s any longer people would lose interest in it. (P2)

I thought I’m sitting here let’s plough on and see how it goes, but equally I don’t work, I do a lot of other stuff, but I don’t work so I imagine that if I was still working I would prefer the 10 minute bursts. (P23)

In some cases, participants felt that HELIX was a daily task that they would like to continue with in the future:

I’d possibly be buying it, but I’d definitely be downloading it if it was free. (P56)

Improved scoring system required to be motivational

As is common in training games, HELIX provided a daily and best score; however, participants felt that this was either unclear or irrelevant to their needs:

I stopped looking at the score after a while because I couldn’t see how the score related to what was going on. (P22)

The concept of using a score was welcomed by participants, but they felt it needed to be clearer as to what the score meant, how the score could be changed, and how their score may relate to that of their peers, effectively benchmarking them against a norm. It was also important for the score to be clearly visible to players:

I thought the scoring card when I first saw it I thought oh yeah that’s good because that made me want to kind of achieve a certain level, then I just thought well actually it doesn’t matter does it. (P26)

It would be interesting to know how you’re doing in relation to other people of your similar age. (P23)

Quite nice if there was a way of like a daily you know statistics or analysis to keep track . . . one day that you’re really tired that you’ve had a really bad day and your score goes down just to really you know start to make an association as for why I’m not listening well today. (P3)

Discussion

Main findings

The purpose of this study was to evaluate the auditory-cognitive training application HELIX across a range of auditory and cognitive tasks, in addition to self-reported communication confidence, quality of life, and overall usability of the app within a population in mid-life with self-reported concerns about their hearing and/or memory.

The primary objective was to evaluate SiN performance using a newly developed online version of the DTT. Although the TG demonstrated an improvement in performance over time that was not seen in the CG, statistical analysis showed that this improvement was not significant between the TG and CG. It is, therefore, not possible to say for certain whether using HELIX resulted in this improvement in the DTT in the TG.

On-task learning within the app was observed; participants demonstrated a statistically significant improvement in their SNR scores across the 4-week play period, which concurs with the large body of research in the field. However, similarly to Henshaw et al. (2022), off-task learning was not detected in this current study, unlike other studies in auditory training (Anderson et al., 2013; Ferguson et al., 2014; Saunders et al., 2016), which saw improvements following training.

One of the secondary objectives was to evaluate cognitive performance via a battery of online cognitive tests. Although HELIX primarily focused on training working and immediate memory, other cognitive domains were assessed to analyse any potential transfer of benefits.

Unlike previous studies evaluating auditory and cognitive training (Ferguson et al., 2019), there were no significant improvements in any of the cognitive assessments following use of HELIX. This may be because the cognitive task was not challenging or targeted enough. Although participants reported that they found the auditory tasks challenging, the cognitive task appeared to elicit more frustration, either because of having to choose the images or because it was too easy. This was also reflected in the UEQ, where the lowest scores were received for stimulation and novelty. It is also possible that, because both the TG and CG had a concentration of high scores in the cognitive screening tool (ACE-III), indicating near-normal cognition, there was less room to improve cognitive performance (a ceiling effect). The TG and CG scored on average 93.95 and 93.33, respectively, out of 100, whereas the scores for the hearing screen were lower, suggesting the sample had a higher level of hearing impairment compared with cognitive impairment. In the interviews, participants felt they became more aware of their hearing difficulties due to the immersive scenarios and that improvements in the gameplay gave them more confidence in their ability to distinguish the speech stimuli in noise.

The final quantitative objective was to evaluate communication confidence and quality of life; both of these were conducted through questionnaires using closed questions. No significant improvements in either of these measures were reported by the TG; however, the findings from the qualitative analysis lend support to participants feeling they had in fact improved in their listening capabilities and made positive behaviour changes in their lives. This difference in findings is likely due to the semi-structured interviews allowing a greater exploration and in-depth look at the thoughts and experiences of the participants (Harris & Brown, 2019). Utilising both methods of data collection was important given the lack of mixed-methods studies in this field.

Usability

Usability was measured using the UEQ tool. Participants rated their experiences against a number of questions across six scales. Perspicuity and efficiency scored well, with highlights being that the application was easy to learn, understandable, easy, efficient, practical, organised, and friendly. This lends support to previous research that an auditory-cognitive training application is a low-barrier entry intervention that can be easily accessed by a population in mid-life (Henshaw et al., 2015; Van Wilderode et al., 2023). One theme from the interviews was that a short period of daily play on HELIX was easy to incorporate into normal routines. This is a positive finding given that one of the potential barriers identified in the participatory design phase was that the demands of daily living may get in the way of using HELIX (Frost et al., 2020). From the evaluation in this current study, however, this was not deemed to be the case. Participants were instructed to play each scenario at least once, but were then given autonomy to decide which scenarios to play for the remainder of the 4-week play period. This may have been a factor in maintaining motivation, as Henshaw et al. (2015) suggest that providing a free choice in which users can select tasks that hold relevance for them can essentially act as an extrinsic motivator for use.

Lower scores were received for the app being exciting, interesting, and enjoyable, with the latter skewed negatively towards being annoying. Similarly, in the interviews, the repetitive nature of the application received negative feedback as it was perceived to be boring. A number of participants did, however, feel that the repetitive nature improved their confidence in recall, thereby reducing the difficulty and actually acting as a motivator. Given that HELIX was iteratively designed with stakeholders to ensure it was relevant, it is unusual that these items scored low. The authors do acknowledge that the application was of very simple and basic design, initially developed as exploratory research; the elementary design may have been the reason behind the lower scores for excitement and interest. Future investment in the aesthetic design of the app may improve these elements of usability. Despite the rudimentary design of the application, the qualitative results suggest it was still able to promote transferable, positive listening behaviours.

Thematic analysis

Six themes emerged from the interviews regarding effects on quality of life and usability. Participants felt they became more aware of their hearing difficulties due to the immersive scenarios and that improvements in the gameplay gave them more confidence in their ability to distinguish the speech stimuli in noise both in the game and in real-world scenarios. The authors suggest that this was because participants found that playing the application made them think more about their listening habits in realistic environments, i.e., when they were actively listening and concentrating and how to maximise their concentration to perform better in noisy situations. This draws parallels with the findings from Talaei-Khoei and Daniel (2018) and suggests that participants did perceive a transfer of benefits.

Although there were no significant findings in the quantitative measures, this should not detract from the positive qualitative findings and merits of using a participatory design process (Frost et al., 2020; Henshaw et al., 2015). Utilising a participatory design approach informed key elements of the game design, such as using multiple scenarios relevant to the daily lives of the intended user group. This kept HELIX simple, realistic, and ultimately useful. As suggested by Henshaw et al. (2022), using training stimuli that hold relevance to everyday listening situations ensures that users maintain a high level of engagement and motivation to continue. It is important to employ a mixed methodology, which is currently lacking in the field, to capture new insight into how auditory training can be used over and above quantitative measures (Talaei-Khoei & Daniel, 2018).

Limitations

The authors recognise that an opportunity sampling method was employed during an unprecedented lockdown due to the COVID-19 pandemic. Participants actively volunteered to join this study because they had self-reported concerns about their hearing and/or memory. This can be extremely emotive, especially when they have witnessed relatives’ quality of life affected by hearing loss or dementia. This recruitment was at a time when socialisation was undergoing severe restrictions. The sample, therefore, may have been more motivated than the general population to participate and to improve their hearing and memory, which may be a reason why there was a high compliance in using the application and incentive to improve. A passive “do-nothing” control was used in this study design. On reflection, the authors acknowledge that using an active control, as utilised by Lai et al. (2023), may have controlled for any non-specific learning effects in the study design. It could be suggested that asking an active control to train on an alternative task, not related to auditory-cognitive training, could give greater confidence when attributing any changes in the TG to using HELIX.

The outcome measures used in this current study were largely dictated by what was deliverable and credible during the COVID-19 pandemic. This resulted in all measures being completed online. As previously discussed in the literature (Ferguson et al., 2014), the DTT may not be complex enough to detect changes in SiN. The Quick Speech-In-Noise (QuickSIN) has been shown to successfully detect improved SiN listening, following a Listening and Communication Enhancement (LACE) programme (Lai et al., 2023). It would have been preferable to use QuickSIN in a clinical setting as a primary outcome measure, but this was not possible due to imposed restrictions.

Less positive usability scores are likely due to the basic task set-up and aesthetic. Future investment in the aesthetic design of the app may improve these elements of usability. It should be recognised that despite the rudimentary design of the application, participants still felt play promoted transferable, positive listening behaviours. Although the level of repetition in this current study was at times deemed an annoyance by users, it also acted as a reference point and reassurance for some. Any future designs of similar serious games should aim to include an element of repetition with scope to unlock newer content to maintain challenge.

Further development

There is scope to develop HELIX further, building on the common recommendations of the TG to improve usability and engagement. The main recommendation from the participants was to introduce more variety in content to counteract the negative effects of repeating the same scenarios. Further scenarios suggested were watching television; asking for directions or using a Sat-Nav; pub or live music; and conversational settings with families or friends. There was also a need for varying speakers in the audio stimuli, such as male and children’s voices. Recommendations were also made about the accessibility of HELIX. This version was produced for iOS, so Android users required a loan device. For some participants, the images were too small or unclear, so there is scope to improve the visual aspect of the tasks. The authors concur that the recommendations would likely encourage continuous play and embed this application into daily routines for maximum impact.

Supplemental Material


Supplemental material, sj-docx-1-qjp-10.1177_17470218251324168 for HEaring and LIstening eXperience (HELIX): Evaluation of a co-designed serious game for auditory-cognitive training by Emily Frost, Paresh Malhotra, Talya Porat, Katarina Poole, Aarya Menon and Lorenzo Picinali in Quarterly Journal of Experimental Psychology

Footnotes

Acknowledgements

The authors thank all the participants for contributing their time and efforts for this project.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The project was funded by the Dyson School of Design Engineering, Imperial College London as part of the lead author’s PhD project.

Supplementary Material

The supplementary material is available at: qjep.sagepub.com.

References
