Abstract

The ability to focus on task-relevant information while ignoring distractors is essential in many everyday life situations. How profound and moderate visual deprivation affect engagement with a demanding memory task (top-down control) while task-irrelevant perceptual information has to be ignored (bottom-up) is not thoroughly understood. In this experiment, 17 blind individuals, 17 visually impaired individuals and 17 sighted controls were asked to recall the sequence of eight auditorily presented digits. Following digit presentation, participants heard auditory distractor streams consisting of the repeated presentation of the same syllables (steady-state sounds) or of different syllables (changing-state sounds), spoken in different emotional prosodies (happy, fearful, angry, and neutral). Blind individuals not only showed overall superior serial recall performance but also displayed sustained memory retention for items presented more recently in the sequence (specifically at the fifth to the eighth digit positions) compared with sighted and visually impaired individuals. Furthermore, blind individuals showed a weaker serial position effect compared with visually impaired and sighted individuals. Emotional prosody also impacted serial recall differently in blind, visually impaired and sighted participants: Sighted and visually impaired participants exhibited improved serial recall when steady-state sounds carried a fearful or angry prosody. By contrast, in the steady-state condition, emotional prosody had no effect on serial recall performance in blind individuals. These findings may be linked to the enhanced ability of blind individuals to flexibly apply a combination of strategies, such as association and grouping.

Introduction

Aristotle was one of the first philosophers to suggest that visual sensory deprivation could enhance memory capacity, as blind individuals have to rely on auditory, tactile and olfactory modalities to process sensory information (Esser, 1961). In support of this assumption, several empirical studies have shown improved auditory memory functions in blind individuals (Amedi et al., 2003; Hull & Mason, 1995; Kattner et al., 2023; Occelli et al., 2017; Pasqualotto et al., 2013; Raz et al., 2007; Röder & Rösler, 2003; Röder et al., 2001; Swanson & Luxenberg, 2009; Withagen et al., 2013), but other studies have reported impaired performance in blind individuals (Rönnberg & Nilsson, 1987; Setti et al., 2018; Swanson & Luxenberg, 2009). These mixed findings have been summarised under two umbrella terms: “hypercompensation,” the superior performance of blind individuals compared with sighted controls in cognitive and perceptual tasks (Lewald, 2002), and the “perceptual deficiency hypothesis,” the inferior performance of blind compared with sighted individuals (Axelrod, 1959).

Cognitive performance in blind individuals

Consistent with the “hypercompensation account,” enhanced memory performance in blind compared with sighted individuals has been found across various types of stimuli, such as auditory digits (Arcos et al., 2022; Hull & Mason, 1995; Kattner et al., 2023), letters (Arcos et al., 2022), words (Amedi et al., 2003; Occelli et al., 2017; Pasqualotto et al., 2013; Rokem & Ahissar, 2009), auditory sentences (Röder et al., 2001), environmental sounds (Röder & Rösler, 2003), and human vocalisations (Bull et al., 1983). Röder and coauthors (2001) observed superior memory performance in congenitally blind individuals compared with sighted controls when presenting auditory sentences including semantically appropriate or inappropriate words (encoding phase). In the subsequent recognition phase, either the words from the encoding phase or new words were presented. Congenitally blind individuals discriminated old versus new words more accurately than sighted control participants. The superior memory performance observed in congenitally blind individuals has been linked to their more efficient encoding of physical properties rather than to a heightened ability to recognise semantic content (Röder & Rösler, 2003).

According to a more recent study, the higher memory performance in congenitally blind individuals diminishes when testing auditory recognition after a one-year retention interval (Cornell Kärnekull et al., 2018), suggesting that visual deprivation may not improve the consolidation of auditory episodic memory.

The studies outlined above pose the important question of whether enhanced memory functions in blind individuals are related to higher and later-stage cognitive processes, or to their superior encoding of perceptual sensory auditory information. Rokem and Ahissar (2009) suggested that improved auditory memory performance in blind listeners might be related to their enhanced auditory perceptual skills and their enhanced use of auditory information. They observed that when controlling for the ability to recognise syllables, blind individuals did not outperform sighted individuals in the cognitive memory task.

In line with the perceptual deficiency hypothesis, Setti et al. (2018) observed lower performance in blind compared with sighted individuals on an auditory version of the Corsi block task, which involved recalling the spatial sequence of semantic sounds (e.g., bird sounds) or non-semantic sounds (e.g., pure tones) presented from 25 different spatial positions. Blindfolded sighted participants outperformed blind individuals, particularly in the semantic sounds condition. The authors suggested that blind individuals have difficulties combining spatial and semantic information, whereas sighted participants might use visual imagination to support the association of sounds with locations. In addition, the high spatial memory load (14 sounds presented from 25 locations) might impact memory performance in blind individuals (see also Beni & Cornoldi, 1988).

Alternatively, it could be speculated that blind individuals exhibit a reduced Hebb repetition effect in the semantic condition. The Hebb repetition effect, identified by Hebb (1961), is a key paradigm for studying sequence learning. In serial recall tasks, where items must be remembered in a specific order, repeating one particular sequence every fourth trial leads to significant memory improvement beyond the effects of practice alone (e.g., Horton et al., 2008). This enhancement in performance occurs irrespective of whether participants are aware of the repetition (e.g., McKelvie, 1987). However, the Hebb repetition effect seems to be stimulus-specific: Auditory sequences marked by bursts of white noise did not produce a Hebb repetition effect in sighted individuals (Lafond et al., 2010). This suggests that when auditory cues do not support the grouping of sounds into a coherent sequence, such as with the semantic sounds for blind individuals in the Corsi block task, the Hebb effect might diminish (Setti et al., 2018).

According to the cross-calibration theory, the most precise (dominant) sense for a specific perceptual task is used to fine-tune the other senses (Burr & Gori, 2012). For space representation, the visual modality (dominant modality for space perception) might be used to calibrate the auditory modality (Gori et al., 2019). Recent research has revealed that in cases where visual calibration of space is not feasible, like in the case of blindness, there is a noticeable impairment in perceiving auditory space (Amadeo et al., 2019; Campus et al., 2019; Gori et al., 2019). Other contributing factors might be related to the age at onset of blindness, different causes of blindness, duration of blindness or the absence of an appropriate control group (see also Röder et al., 2001).

Cognitive performance in visually impaired individuals

In contrast to memory performance in individuals with profound vision loss, such as blind individuals, memory performance in visually impaired individuals is less well documented (Cattaneo et al., 2008; Vecchi et al., 2006). Research showed that visually impaired individuals who suffered from blurred vision performed as well as sighted control participants in various spatial memory tasks (Vecchi et al., 2006). In these tasks, matrices consisting of wooden cubes were presented, and some target cubes within those matrices were covered with sandpaper so that they could be tactually distinguished from the remaining cubes (tactile checkerboards). Participants were asked to tactually explore and memorise the target cubes for 10 s and to indicate them on a blank paper after the exploration phase. Visually impaired and sighted controls did not differ in performance, whereas congenitally blind individuals performed significantly worse than visually impaired individuals. This was observed especially for those individuals who were visually deprived in one eye from birth, suggesting that binocular visual input might be necessary for building mental representations. Other studies indicated that sighted participants outperformed visually impaired individuals in a tactile and visual spatial working memory task (Cattaneo et al., 2008). It has been suggested that a supramodal representation of space is impaired by deficits in sensory processing (for instance vision). Consequently, a visual deficit can impact higher-level cognitive processes, even when the material is presented in other modalities (see Cattaneo et al., 2008 for further discussions).

To summarise, as in blind individuals, the variability in task performance of visually impaired individuals outlined above depends on several factors, such as the exact paradigm, stimulus modality, task and participant sample, the kind of visual impairment, and the duration and onset of the visual impairment.

Auditory distraction

Everyday life activities, such as memorising specific information, are usually accompanied by irrelevant background sound. According to the duplex-mechanism theory, auditory information can elicit distraction either by interfering specifically with the processes involved in the given task (i.e., interference-by-process) or by diverting attentional resources from the focal task to the irrelevant information (i.e., attentional capture; Hughes, 2014; Hughes et al., 2005; 2007).

Some studies provided evidence for the idea that emotional prosody of irrelevant distractors can capture attention. Emotional prosody is characterised by modulations of the intensity, the intonation (pitch height), and the speed (rhythm) of the acoustic signal to convey the meaning of the message without changing the verbal content (Kattner & Ellermeier, 2018). Given these changes in the physical acoustical properties, emotional prosody might elicit greater interference in a serial recall task than neutral irrelevant speech. Kattner and Ellermeier (2018) observed that irrelevant speech was more disruptive in a serial recall task when it was spoken with an angry prosody, compared with neutral or happy prosody. Such a finding might indicate that the more pronounced acoustical modulations in the angry speech (e.g., higher fluctuation strength) produce more interference with serial-order processing (Kattner & Ellermeier, 2018). This would be in line with the assumption that auditory distraction depends on the intensity of acoustical modulations (e.g., frequency or amplitude) or the number of changes in the task-irrelevant sound (Jones & Macken, 1993; Tremblay & Jones, 1998). In a follow-up experiment, the authors showed that the enhanced disruptive effect of the angry speech (compared with neutral and happy speech) was indeed specific to the serial recall task and was not observed in the presumably non-serial missing-item task. This indicates that the extra disruptive effect of emotional prosody may not be due to attentional capture, but due to process-specific interference of the additional acoustical fluctuations in angry speech with serial-order processing of the to-be-remembered information.
Of note, Kattner and Ellermeier (2018) demonstrated that the interference with serial recall occurred due to the acoustic properties of the sounds (e.g., enhanced fluctuation strength in angry speech, compared with neutral speech), but not via changing the semantic content, which suggests that “precategorically encoded changing-state sound” interferes with serial short-term memory.

In a more recent study (Kattner et al., 2023), changing-state and steady-state syllables were articulated in four different emotional prosodies (happy, neutral, fearful, and angry). In this task, blind, visually impaired and sighted individuals were instructed to recall the sequence of auditorily presented numbers. Following the presentation of each number sequence, we presented one of two auditory distractor conditions:

Steady-state sounds (same syllables, such as baba baba baba).

Changing-state sounds (different syllables, such as baba, dede, . . .).

The syllables, which participants were instructed to ignore, were spoken either in a happy, angry, fearful, or neutral prosody. Besides an enhanced serial recall performance, the results also demonstrated that the changing-state effect (difference between serial recall performances following steady state vs changing state) was less affected by prosody in blind participants compared with the other groups.

Mechanisms to reduce distraction

The ability to focus on task-relevant information while ignoring irrelevant distractors is a fundamental process while navigating through the environment (Wöstmann et al., 2022). One mechanism which has been suggested to guide these processes is top-down control (Corbetta & Shulman, 2002). For instance, the ability to ignore distractions might be explained not only by the distracting material itself, as outlined in the previous paragraph, but also by expectations, internal models and representations that optimise performance for any goal-driven task, as well as strategies for achieving these goals (e.g., Desimone & Duncan, 1995; Engle, 2002; Hughes, 2014; MacLeod et al., 2003). For example, studies have shown that a warning sound prior to any deviation in the frequency of sounds eliminates deviation effects, documented as delays in response times to the duration of the sounds (Horváth & Bendixen, 2012; Horváth et al., 2011; Sussman et al., 2003; Wetzel & Schröger, 2007; Wetzel et al., 2009). Similarly, warning cues and foreknowledge were found to attenuate auditory distraction by deviant sounds and intelligible irrelevant speech in a serial recall task (e.g., Hughes et al., 2013; Hughes & Marsh, 2020; Kattner et al., 2022; Röer et al., 2015), whereas the changing-state effect seems to be unaffected by these factors (Hughes et al., 2013). Other studies have demonstrated that the application of specific strategies in a serial recall task can improve memory performance (Perham et al., 2007). For example, categorised recall, which involves grouping the items to be memorised (different items related to a train journey) into different categories (such as stations and times), was more resilient to background noise compared with the instruction to memorise the items in a specific order (serial recall).

Emotional processing in blind individuals

Given the enhanced perceptual sensory discrimination performance of blind individuals (Kujala et al., 1995, 1997) it might be argued that they are quicker at identifying emotional prosodies than sighted controls (Klinge et al., 2010). Klinge et al. (2010) reported that congenitally blind individuals showed higher amygdala activation compared with sighted controls, especially in response to fearful and angry voices. Blind individuals were also faster at detecting emotional prosody and the first vowel of words, with enhanced crossmodal activation in visual areas. However, other studies, such as Gamond et al. (2017), did not find superior prosody discrimination skills in early blind individuals. In a dichotic listening experiment, blind participants were not faster than sighted controls in detecting specific emotions and were overall slower. Another study found no difference in auditory emotion and authenticity perception between blind and sighted individuals, though blind individuals showed earlier neural signal modulations (Sarzedas et al., 2024). These discrepancies may be due to differences in participant groups, such as the age at onset of blindness and the degree of vision loss (residual light perception).

The tasks in these experiments required conscious judgement of emotions, but it has been argued that emotion processing does not require attention (Grandjean et al., 2005). Differences between blind and sighted individuals might be more pronounced when task-irrelevant emotional features need to be ignored during difficult tasks (see Topalidis et al., 2020). Given the enhanced memory performance in blind participants, they may be better at ignoring auditory distractors, especially those involving threat or fear. Therefore, we asked participants to memorise the serial order of numbers while ignoring irrelevant distractors spoken in different emotional prosodies.

Purpose of the study

The primary objective of this research is to investigate in more detail how visual deprivation impacts the ability to retain auditory information in memory across different digit positions (1–8) while ignoring task-irrelevant auditory distractors. Thus, we aim to investigate whether blind individuals exhibit superior “sustained” memory performance across different digit positions compared with sighted or visually impaired individuals. This potential advantage might be related to their enhanced ability to flexibly apply different strategies. Furthermore, we aim to investigate the impact of bottom-up features, such as emotional prosody presented in changing-state and steady-state sounds, on serial recall in visually impaired, blind and sighted individuals. It might be argued that blind individuals are better able to shield serial recall performance from the distracting impact of emotional prosody (see also Topalidis et al., 2020). Therefore, we thoroughly reanalysed the data set reported in Kattner et al. (2023). Our investigation focused on determining whether improved serial recall performance is associated with specific digit positions in blind individuals and whether the increased recall efficiency is linked to varying memory strategies influenced by the degree of visual deprivation. For instance, improved serial recall across all digit positions, and thus a reduced serial position effect, would demonstrate better application of different strategies to ignore distractors. We hypothesise that blind individuals, given their everyday life experiences, might be trained to apply a wider range of different strategies (e.g., crossmodal strategies such as auditory-tactile) to recall the eight different digits, compared with visually impaired and sighted individuals.

Method

Participants

Based on the data set reported in Kattner et al. (2023), we compared age-matched groups of blind, visually impaired and sighted individuals and we included 17 individuals in each group: n = 17 blind individuals (age range: 21–67 years, M = 43 years, SD = 13, seven males, 10 females, see Table 1), n = 17 visually impaired individuals (age range: 27–67 years, M = 45 years, SD = 11, seven males, 10 females, see Table 2), and n = 17 sighted controls (age range: 21–67 years, M = 42 years, SD = 14, eight males, nine females, see Table 3). The group size of 17 was determined by the number of blind participants (see Kattner et al., 2023). Similar sample sizes have been reported in other studies investigating tactile acuity in blind individuals (Alary et al., 2009), and this group size exceeds the number of participants reported in some previous memory experiments (Occelli et al., 2017; Pasqualotto et al., 2013; Röder et al., 2001). A sensitivity analysis run with G*Power (within-between interaction analysis of variance [ANOVA]), including eight measurements (eight positions) and the between-subject factor Group (three groups), revealed that an effect size of at least η2 = .022 (f = 0.15; small to medium) can be detected with a statistical power of 0.8 in our data (Faul et al., 2007, 2009). This indicates that the sample is sufficiently sensitive to detect small-to-medium effects, according to the guidelines by Faul et al. (2007, 2009). The groups did not significantly differ in age (all ps > .3). Participants classified as visually impaired reported being able to perceive light and contrast, whereas blind participants had no more than residual light perception and did not indicate any contrast perception.
The blind individuals consisted of n = 11 congenitally blind individuals, whose onset of blindness started from birth, n = 1 early blind individual, whose onset of blindness started within the first year of life (5 and 7 months of age) and n = 5 late blind individuals whose onset of blindness started after the age of 11 years.
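
The two effect sizes reported for the sensitivity analysis can be cross-checked with the standard conversion between Cohen's f and eta squared. The worked step below is an illustrative check only and is not part of the original analysis:

```latex
\eta^2 = \frac{f^2}{1 + f^2} = \frac{0.15^2}{1 + 0.15^2} = \frac{0.0225}{1.0225} \approx 0.022
```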

Table 1. Demographics, light perception, reason of blindness and onset of blindness in blind individuals.

Table 2. Demographics, light perception, reason of visual impairment and onset of visual impairment in visually impaired individuals.

Table 3. Demographics of sighted individuals.

Thirteen visually impaired individuals reported having been visually impaired from birth, one reported onset of visual impairment during infancy, and three reported late-onset visual impairment (after the age of 17 years).

The sample was not matched based on IQ, but we asked participants to indicate their highest educational degree: In the sighted group, nine participants reported a university degree; five participants reported a high school degree, one participant did not finish the high school degree and two participants did not report the educational degrees. In the visually impaired group, 12 participants reported a university degree, three participants had a high school degree, two participants did not indicate their degree. In the blind group, 11 participants had a university degree, four participants reported a high school degree, two participants did not report their degrees. Even though we cannot rule out the possibility that IQ has an impact on task-performance, we do not expect any IQ-related differences between groups given similar educational background.

The study was approved by the Ethics Committee of the University of Lincoln and the University of Darmstadt Ethics Committee (ethics approval code: 2020-3415). All experimental procedures adhered to the Declaration of Helsinki (2013). The data and experimental materials can be found on OSF (https://osf.io/savke/ and https://osf.io/cv9md/).

Apparatus/materials

Auditory distractor stimuli

The auditory material was developed for a previous study (Gädeke et al., 2013). Three different two-syllable German pseudo-words (“baba,” “dede” and “nono”), spoken by one actress, were included in the task-irrelevant auditory distractor streams. These pseudo-words were articulated in four different emotional prosodies: fearful, happy, neutral and angry. To create changing-state and steady-state distractor conditions, each pseudo-word was either repeated nine times in the steady-state condition (e.g., dede, dede, dede, dede) or the pseudo-words were randomly selected to create the changing-state condition (e.g., dede, baba, nono, dede, . . .). The pseudo-words were presented at a rate of one item per second, resulting in 9 s sequences. The duration of each pseudo-word ranged between 400 and 751 ms (neutral: 516 ms, 558 ms, 651 ms; happy: 402 ms, 704 ms, 588 ms; fearful: 442 ms, 568 ms, 487 ms; angry: 400 ms, 433 ms, 751 ms, for baba, dede, nono, respectively, see also Kattner et al., 2023).

Pre-recorded spoken digits

The main task required participants to memorise a sequence of pre-recorded spoken digits. To record this material, a male actor (either English or German native speaker) articulated the digits 1, 2, 3, and 4.

Experimental design

Each type of auditory sequence was presented either in a neutral, happy, angry, or fearful prosody, resulting in a 2 (state of sound) × 4 (prosody) + 1 (quiet) experimental design. There were six trials of each experimental condition (quiet, steady-state neutral, steady-state fearful, steady-state happy, steady-state angry, changing-state neutral, changing-state fearful, changing-state happy, changing-state angry). The resulting 54 trials were presented in random order.
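
The design arithmetic above (nine conditions, six trials each, 54 trials in random order) can be sketched as follows. This is a minimal illustration only: the function name, the condition labels and the seeding are assumptions, and the experiment itself was run in PsyToolkit, not with this code.

```python
import random
from itertools import product

STATES = ["steady-state", "changing-state"]
PROSODIES = ["neutral", "happy", "angry", "fearful"]
TRIALS_PER_CONDITION = 6


def build_trial_list(seed=None):
    """Return a shuffled list of condition labels (hypothetical helper)."""
    # 2 (state) x 4 (prosody) sound conditions plus 1 quiet condition
    conditions = ["quiet"] + [f"{s} {p}" for s, p in product(STATES, PROSODIES)]
    trials = conditions * TRIALS_PER_CONDITION
    random.Random(seed).shuffle(trials)
    return trials


trials = build_trial_list(seed=1)
print(len(set(trials)), len(trials))  # 9 54
```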

Procedure

Headphone screening test

The experiment started with a headphone screening test for control purposes in this online experiment setting to make sure that participants were using headphones rather than loudspeakers (Woods et al., 2017). At the beginning, pink noise was presented, and participants were asked to adjust the volume to a “comfortable” level. Then participants completed a 3-alternative forced-choice level discrimination task, in which three 200-Hz tones were presented successively (1,000-ms tone duration, 200-ms inter-stimulus intervals). The level of one tone was 6 dB lower than the other two tones. Crucially, one of the two “louder” tones had the phase reversed between left and right channels, which reduces the sound pressure level in the air, thus allowing detection of the low-intensity tone only when headphones are used (Woods et al., 2017). Participants were asked to indicate which of the three tones was softer than the other two by pressing the respective digit key (1, 2, 3) on their keyboard. A brief rising-pitch or falling-pitch whistling sound (296 and 307 ms) was presented as feedback after each response, indicating that the response was correct or incorrect, respectively. The headphone test was passed if the correct tone was detected in five or more trials of a six-trial block. The next block started if fewer trials were correct, and the test was terminated after the fifth block with fewer than five correct responses. If the test was not passed within five blocks, a message was shown on the screen telling the participant that the audio system was not sufficient to start the main task.
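
The pass rule described above (at least five correct responses in a six-trial block, with at most five blocks) can be expressed as a short check. This is a sketch under the stated rule only; the function and parameter names are hypothetical and do not come from the study materials.

```python
def passes_headphone_screening(block_scores, pass_mark=5, max_blocks=5):
    """Return True if any of the first `max_blocks` blocks reaches the pass mark.

    block_scores: number of correct responses (0-6) per six-trial block,
    in the order the blocks were completed.
    """
    for score in block_scores[:max_blocks]:
        if score >= pass_mark:
            return True  # test passed, no further blocks are needed
    return False  # test terminated without a passing block


print(passes_headphone_screening([3, 4, 5]))
```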

Procedure

Participants could access the study via a link on PsyToolkit (Stoet, 2010, 2017). They were given an overview of the experiment, and those who agreed to continue were asked to provide informed consent for their participation in the study. After providing consent, participants answered demographic questions about their age and gender. Participants who identified as visually impaired were additionally asked about the cause of impairment, the age at which the visual impairment/blindness began and the ability to perceive light and contrast. All participants were then instructed to put on a blindfold and headphones to continue. Participants then began with the headphone screening test. During this test, they were asked to determine which of the three tones was softer than the other two by pressing the corresponding digit key (1, 2, or 3) on their keyboard. After each response, a brief whistling sound with a rising pitch (296 ms) indicated a correct answer, while a falling pitch (307 ms) indicated an incorrect answer.

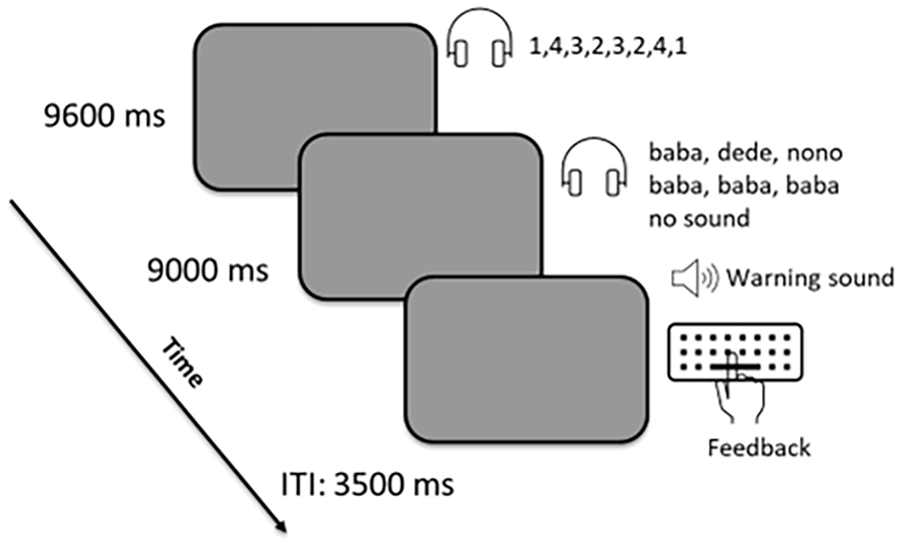

After the headphone screening test, the instructions for the serial recall task were presented as html text, which could be read by a screen reader. A screen reader is an assistive technology that converts text and other visual information on a screen into speech (or braille), enabling visually impaired users to interact with digital devices. All participants using a screen reader were asked to turn it off before starting the experiment to avoid any interference. In the serial recall task, participants were asked to memorise sequences of spoken digits in serial order while different types of irrelevant sound were presented. At the beginning of the task, an audio message reminded participants to put on their blindfolds and begin the task by pressing the space bar. During each trial, participants heard a random sequence of eight spoken digits, selected with replacement from the digits 1 through 4. These digits were presented binaurally at a rate of one digit every 1,200 ms (see Figure 1). After the eighth digit, either irrelevant sound or silence was presented for 9,000 ms (the retention interval). The irrelevant sound consisted of sequences of nine spoken pseudo-words, presented at a rate of 1,000 ms per pseudo-word. On steady-state trials, a single pseudo-word was chosen randomly and repeated nine times, and on changing-state trials, nine pseudo-words were drawn with replacement from the stimulus set (“baba,” “dede,” “nono”). To rule out the possibility of an enhanced ability to identify speech under conditions of partial acoustic masking in blind individuals, digits and irrelevant sounds were not presented simultaneously during the encoding phase (for instance, Kattner & Ellermeier, 2014). Furthermore, some studies report that distracting sounds during the retention interval are as disruptive as sounds that are presented during digit presentation or during both phases (e.g., Macken et al., 1999; Röer et al., 2014).
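
The composition of a single trial described above can be sketched as follows. This is a minimal illustration under the stated sampling rules; the function name and state labels are assumptions, and audio playback and prosody selection are left out.

```python
import random

DIGITS = [1, 2, 3, 4]
PSEUDO_WORDS = ["baba", "dede", "nono"]
N_DIGITS = 8        # presented at one digit every 1,200 ms
N_DISTRACTORS = 9   # one pseudo-word per 1,000 ms, i.e. a 9,000 ms retention interval


def make_trial(state, rng):
    """Return (digits, distractors) for one trial (hypothetical helper)."""
    # Eight digits drawn with replacement from 1-4
    digits = [rng.choice(DIGITS) for _ in range(N_DIGITS)]
    if state == "quiet":
        distractors = []
    elif state == "steady-state":
        # One randomly chosen pseudo-word, repeated nine times
        distractors = [rng.choice(PSEUDO_WORDS)] * N_DISTRACTORS
    elif state == "changing-state":
        # Nine pseudo-words drawn with replacement from the stimulus set
        distractors = [rng.choice(PSEUDO_WORDS) for _ in range(N_DISTRACTORS)]
    else:
        raise ValueError(f"unknown state: {state}")
    return digits, distractors


digits, distractors = make_trial("changing-state", random.Random(7))
print(digits, distractors)
```

Because the changing-state sequence is drawn with replacement, immediate repetitions of a pseudo-word can occur by chance in this sketch.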

Figure 1. Experimental design. Participants were asked to memorise eight digits (drawn with replacement from the set 1–4). This was followed either by a period of silence (9,000 ms) or by irrelevant speech, such as (baba, baba, baba) spoken in four different emotional prosodies. A warning sound directed participants to indicate the order of the eight digits. Feedback was provided after participants indicated their response (see also Kattner et al., 2023).

After the retention interval, a 350-Hz tone was presented prompting participants to now recall the sequence of digits in the presented order using the digit keys (1–4) on their keyboard. After the eight digits had been entered, auditory feedback was presented in the form of 0–8 rising whistling sounds (296 ms each), with the number of whistles corresponding to the number of correct digits. The next trial started automatically after an inter-trial interval of 3,500 ms. Participants could take five short breaks during the task (after the trials 8, 17, 26, 35, and 44). An audio message notified participants about the breaks and directed them to continue by pressing the space bar.
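
The feedback rule above (one whistle per correct digit) implies a per-trial score between 0 and 8. The sketch below assumes a strict serial-position criterion, meaning a digit counts as correct only if it is entered at the position where it was presented. That criterion and the function name are assumptions, as the text does not spell out the scoring rule.

```python
def score_trial(presented, recalled):
    """Return per-position accuracy (1 = correct, 0 = incorrect).

    Assumes strict serial-position scoring (an assumption, see lead-in).
    """
    if len(presented) != len(recalled):
        raise ValueError("presented and recalled sequences must have equal length")
    return [int(p == r) for p, r in zip(presented, recalled)]


presented = [1, 3, 2, 4, 4, 1, 2, 3]
recalled = [1, 3, 4, 2, 4, 1, 3, 3]
per_position = score_trial(presented, recalled)
print(per_position, sum(per_position))  # [1, 1, 0, 0, 1, 1, 0, 1] 5
```

Averaging the per-position values across trials of a condition gives the serial position curve analysed with the Position factor.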

Following the main task, participants were also asked to indicate the main strategies used to memorise the order of the digits. Finally, they were debriefed and thanked for their participation. All participants who needed assistance during the task could contact the researchers.

Data analyses

To investigate the impact of prosody (bottom-up process) on serial recall, we conducted an ANOVA including the factors Position (1–8), Prosody (happy, angry, fearful, neutral), State (steady state versus changing state) and Group (blind, visually impaired and sighted individuals). In these analyses, we further analysed main and interaction effects including the Group factor separately for changing-state and steady-state conditions as well as any interactions with Position and Prosody.

Huynh-Feldt-corrected values are reported when Mauchly’s test of sphericity was significant (p < .05). Effect sizes (partial eta squared and Cohen’s d) are reported. To account for multiple comparisons, we calculated an adjusted p-value by multiplying the p-value by the number of comparisons (either N = 7 or N = 8).
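
Multiplying each p-value by the number of comparisons corresponds to a Bonferroni-type adjustment. A minimal sketch is shown below; capping the adjusted value at 1 is a common convention assumed here rather than something stated in the text, and the input p-values are made up for illustration.

```python
def bonferroni_adjust(p_values):
    """Multiply each p-value by the number of comparisons.

    The cap at 1.0 is an assumption (standard practice), not taken from the paper.
    """
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


# Hypothetical p-values for eight position-wise comparisons
raw = [0.004, 0.020, 0.300, 0.001, 0.049, 0.600, 0.010, 0.150]
print(bonferroni_adjust(raw))
```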

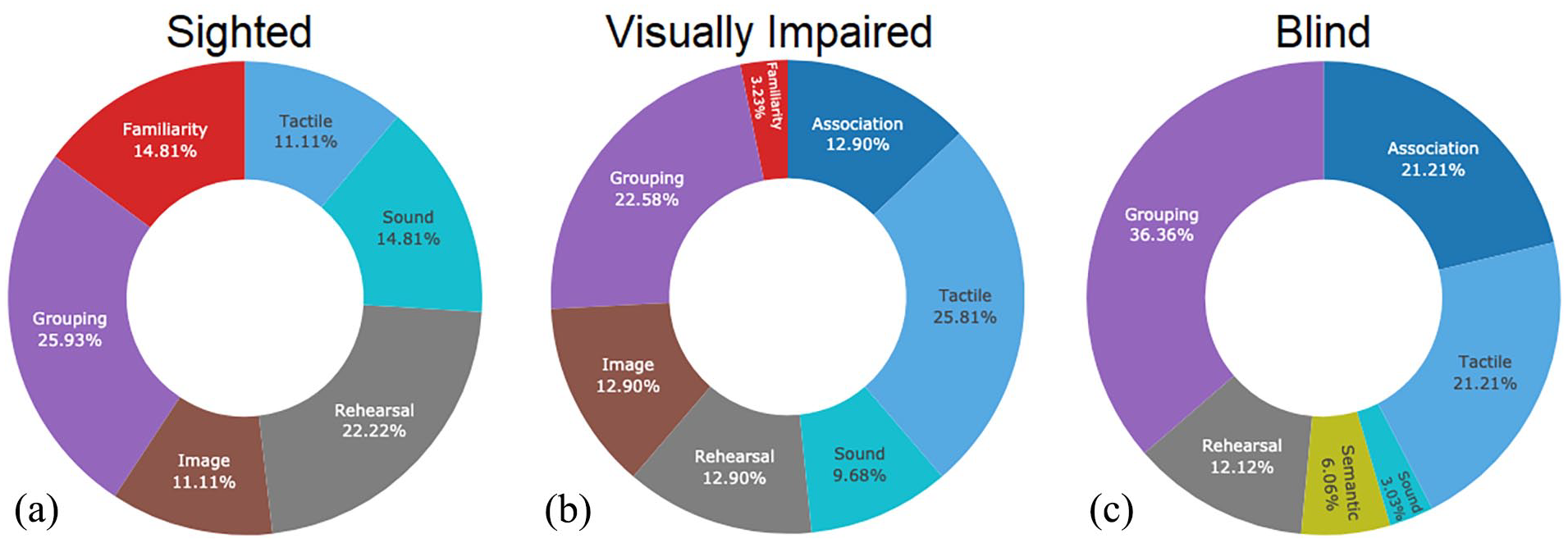

Furthermore, we plotted the distribution of the serial recall strategies separately for blind, visually impaired and sighted controls. Two authors categorised the verbal responses of each participant into the following strategies: “rehearsal” (such as silently repeating the items), “grouping” (remembering the digits in groups), “association” (other things that could relate to the digits), “imagery” (visual image based on the meaning of numbers), “sound” (thought about the way the numbers sounded), “tactile” (Braille, piano playing), “familiar” (words that seemed recent or familiar) and “concentrate” (concentrated on numbers) (see also Morrison et al., 2016 for similar categories except the tactile category). Please note that we removed the “imagery” strategy from the coding responses in the blind group (given the blind participants in our sample).

For each group, we divided the sum of responses in each strategy by the total number of responses across all categories within each group. Chi-square tests were applied to compare the frequencies of specific strategy use between the groups.
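As an illustration of these two steps, the sketch below computes strategy proportions within a group and a chi-square statistic for a 2 × 2 table with Yates' continuity correction (the Results report the tests "with Continuity Correction"). The function names and all counts are hypothetical placeholders chosen for illustration; they are not taken from the study's data files.

```python
def strategy_proportions(counts):
    """Divide the number of responses per strategy by the group's total responses."""
    total = sum(counts.values())
    return {strategy: n / total for strategy, n in counts.items()}

def yates_chi_square(a, b, c, d):
    """Chi-square (df = 1) for the 2x2 table [[a, b], [c, d]] with continuity correction.

    Assumes every row and column total is non-zero.
    """
    n = a + b + c + d
    row1, row2 = a + b, c + d
    col1, col2 = a + c, b + d
    # Yates' correction subtracts n/2 from |ad - bc| (floored at zero).
    diff = max(0.0, abs(a * d - b * c) - n / 2)
    return n * diff ** 2 / (row1 * row2 * col1 * col2)

# Hypothetical response counts for one group (illustration only).
counts = {"grouping": 12, "association": 7, "tactile": 7, "other": 7}
proportions = strategy_proportions(counts)

# Hypothetical 2x2 table: rows = two groups of 14 participants,
# columns = used the strategy / did not use the strategy.
chi2 = yates_chi_square(7, 7, 0, 14)
print(round(proportions["grouping"] * 100, 2), round(chi2, 3))
```

The continuity correction is typically applied to 2 × 2 tables with small cell counts, as is the case with group sizes of 14 to 16 respondents.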

We also calculated one-tailed t-tests for independent samples to investigate whether blind individuals, who employed specific strategies more frequently than sighted or visually impaired individuals, had superior memory performance compared with the other groups (e.g., blind individuals who used different strategies, visually impaired or sighted individuals).

To further investigate group differences related to the recency effects, we also calculated absolute, normative and relative recency measures according to Nicholls and Jones (2002, p. 5) and Maidment et al. (2013). The absolute recency measure relies exclusively on recall of the last item of a list and might therefore be influenced by the general performance level in a given condition or group. Consequently, in experimental settings where significant differences in overall performance are expected, it fails to offer an accurate assessment of recency by itself (Maidment et al., 2013). Therefore, other recency measures are relevant. An alternative is to calculate normative recency effects, which relate the serial recall performance at the final position to the average performance across all other positions. However, this measure can be affected by other factors. For instance, in our experiment, there are frequently interactions between serial position and various experimental manipulations (such as prosody). These interactions are often attributed to minimal or non-existent effects of these manipulations on early serial positions, likely because recall performance is at or near its maximum at the beginning of the list. Therefore, another recency measure to consider is the difference between serial recall at the preterminal and the terminal position (relative recency effect).

We used t-tests for independent samples to compare those measurements between groups. The absolute recency effect was defined as the proportion of correctly recalled items at the last serial position 8. The normative recency effect has been defined as the recall accuracy at the last position (8) relative to the average accuracy of all positions (1–8). The relative recency effect has been defined as proportion correct at the last position (8) minus the proportion correct at the second to last position (7).
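The three definitions above can be written out as a short sketch. The function name and the example accuracy curve are hypothetical; the normative measure is computed as a ratio, following the description in the Results ("dividing the proportion of correct responses at the final position (8) by the proportion of correct responses across all serial positions").

```python
def recency_measures(accuracy):
    """Compute recency measures from proportion correct per serial position.

    accuracy: list of proportions, index 0 = position 1, last index = final position.
    """
    last, second_last = accuracy[-1], accuracy[-2]
    mean_all = sum(accuracy) / len(accuracy)
    return {
        "absolute": last,                # accuracy at the final position
        "normative": last / mean_all,    # final position relative to the mean of all positions
        "relative": last - second_last,  # final minus preterminal position
    }

# Hypothetical serial position curve for one participant (positions 1-8).
curve = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.6]
measures = recency_measures(curve)
print(measures)
```

For this made-up curve the mean accuracy equals the accuracy at position 8, so the normative measure is about 1 even though the relative measure (0.3) indicates a clear upturn at the end of the list; this is one way the measures can diverge.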

Results

The impact of emotional distractors of steady-state and changing-state sounds on serial recall across digit positions 1–8 in blind, visually impaired and sighted controls

To investigate how bottom-up features such as emotional prosody impact serial recall performance in blind, visually impaired, and sighted individuals after changing-state and steady-state sounds have been presented, an ANOVA was conducted including the factors Prosody (happy, angry, fearful, neutral), State (changing state vs steady state), Position (1–8) and Group (sighted, blind and visually impaired individuals). The Position factor was included to investigate sustained serial recall performance in blind individuals, whereas the Prosody factor allows us to investigate perceptual effects on serial recall. ANOVA results are reported in Table 4.

ANOVA results including the factors Prosody, State, Position and Group.

* = p < .05; ** = p < .001.

Serial recall performance across digits positions 1–8 while ignoring the different prosodies of changing-state and steady-state sounds

The interaction between Position, Prosody, State and Group was not significant, F(42, 1,008) = .489, p = .995; ηp2 = .020, suggesting that different degrees of visual impairment do not impact the interaction between top-down (position) and bottom-up factors (prosody). Instead, the interaction between Position, Prosody and State was significant, F(21, 1,008) = 2.463, p < .001, ηp2 = .049.

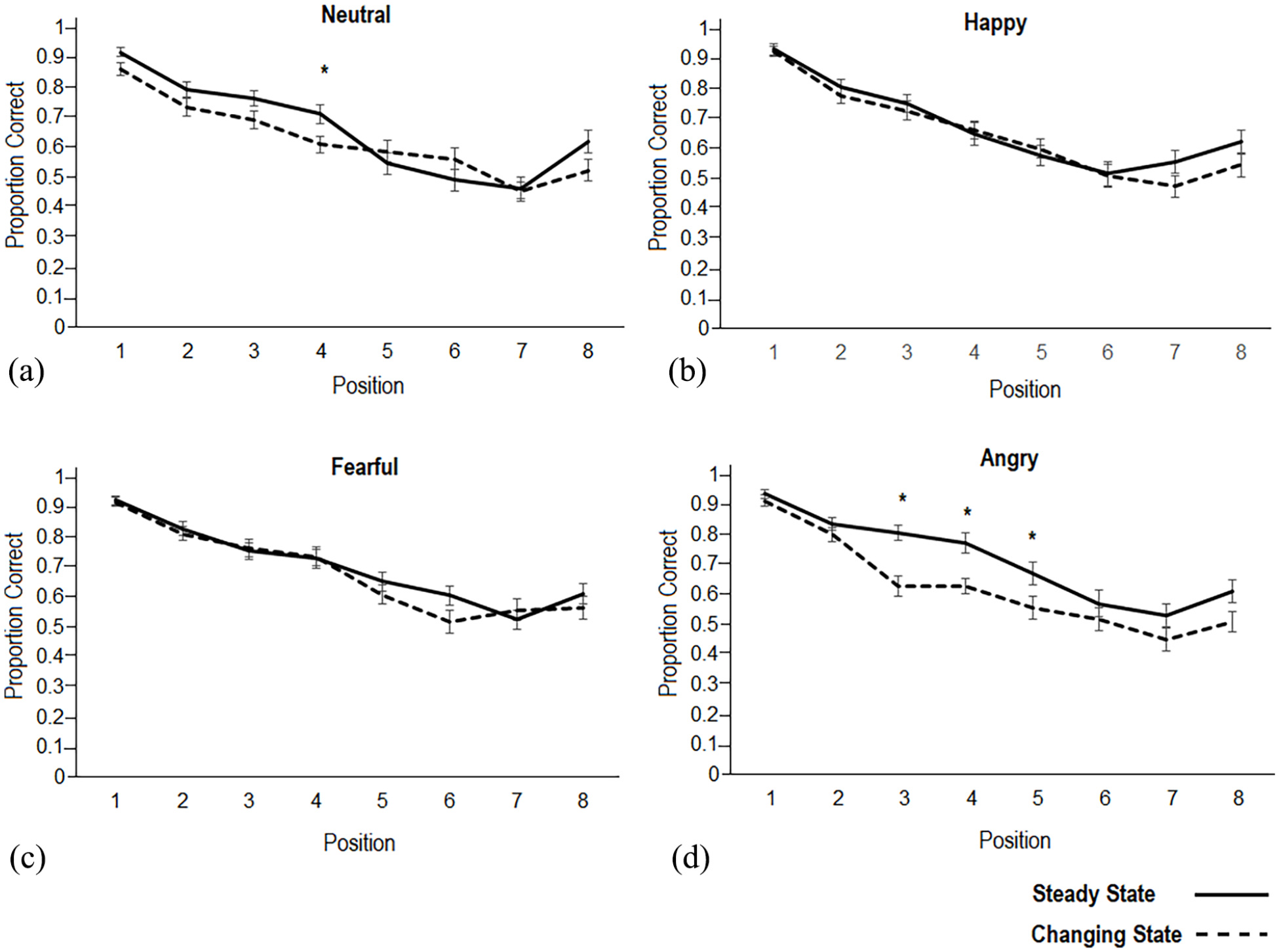

This interaction demonstrated that differences between steady-state and changing-state were especially observed for Positions 3–5 for the angry prosody with higher serial recall in the steady state compared with the changing state as well as at Position 4 for the neutral prosody (see Figure 2d; paired samples t-tests angry: position 3: t(50) = 4.654, p < .001; Cohen’s d = .652; position 4: t(50) = 4.415, p < .001; Cohen’s d = .618; position 5: t(50) = 3.125, p = .003; Cohen’s d = .438; neutral: position 4: t(50) = 2.79, p = .007; Cohen’s d = .392).

(a-d). Average proportion of correctly recalled digits in the serial recall task, categorised by prosody type (neutral (a), happy (b), fearful (c), angry (d)) and digit position (1–8). p-values < .05 after the correction of multiple comparisons (p-value multiplied with N = 8 comparisons) are indicated with an asterisk. Solid line: steady state; dashed line: changing state. Error bars indicate standard errors of the mean.

Group differences in serial recall as a function of digit position

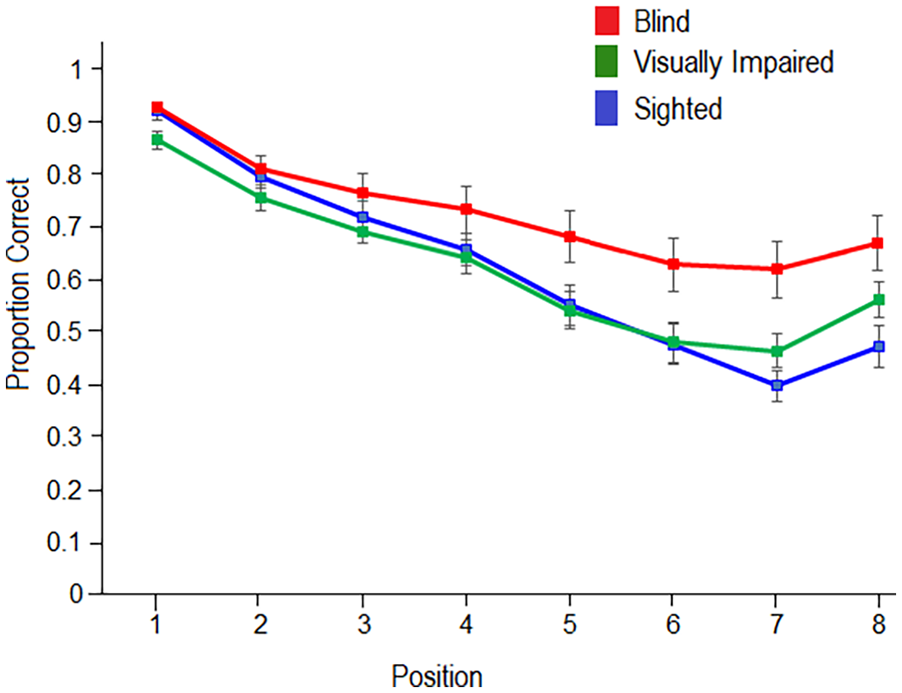

The 4 × 2 × 8 × 3 ANOVA (Prosody × State × Position × Group) indicated a significant interaction between Position and Group, F(14, 336) = 3.77, p < .001, ηp2 = .136 (see Figure 3). Blind individuals outperformed sighted controls at digit positions 5–8 (position 5: t(32) = 2.06, p = .048, Cohen’s d = .706; position 6: t(32) = 2.36, p = .025, Cohen’s d = .809; position 7: t(32) = 3.63, p < .001, Cohen’s d = 1.24; position 8: t(32) = 3.008, p = .005, Cohen’s d = 1.032). Furthermore, blind individuals outperformed visually impaired individuals at positions 1 and 5–7 (position 1: t(32) = 2.968, p = .006, Cohen’s d = 1.018; position 5: t(32) = 2.66, p = .030, Cohen’s d = .777; position 6: t(32) = 2.326, p = .026, Cohen’s d = .798; position 7: t(32) = 2.486, p = .018, Cohen’s d = .853). Sighted individuals showed higher serial recall performance than visually impaired individuals at position 1, t(32) = 2.308, p = .028, Cohen’s d = .792; all other comparisons were not significant (ps > .05).

Average proportion of correctly recalled digits in the serial recall task as a function of digit position in sighted controls, visually impaired individuals, and blind individuals. Sighted individuals show a more gradual decrease in auditory memory performance compared with blind individuals. Group differences between blind and sighted controls in the correct recall of digits at positions 7 and 8 are significant after adjusting for multiple comparisons (with a correction factor of N = 8 comparisons). Error bars indicate standard error of the mean.

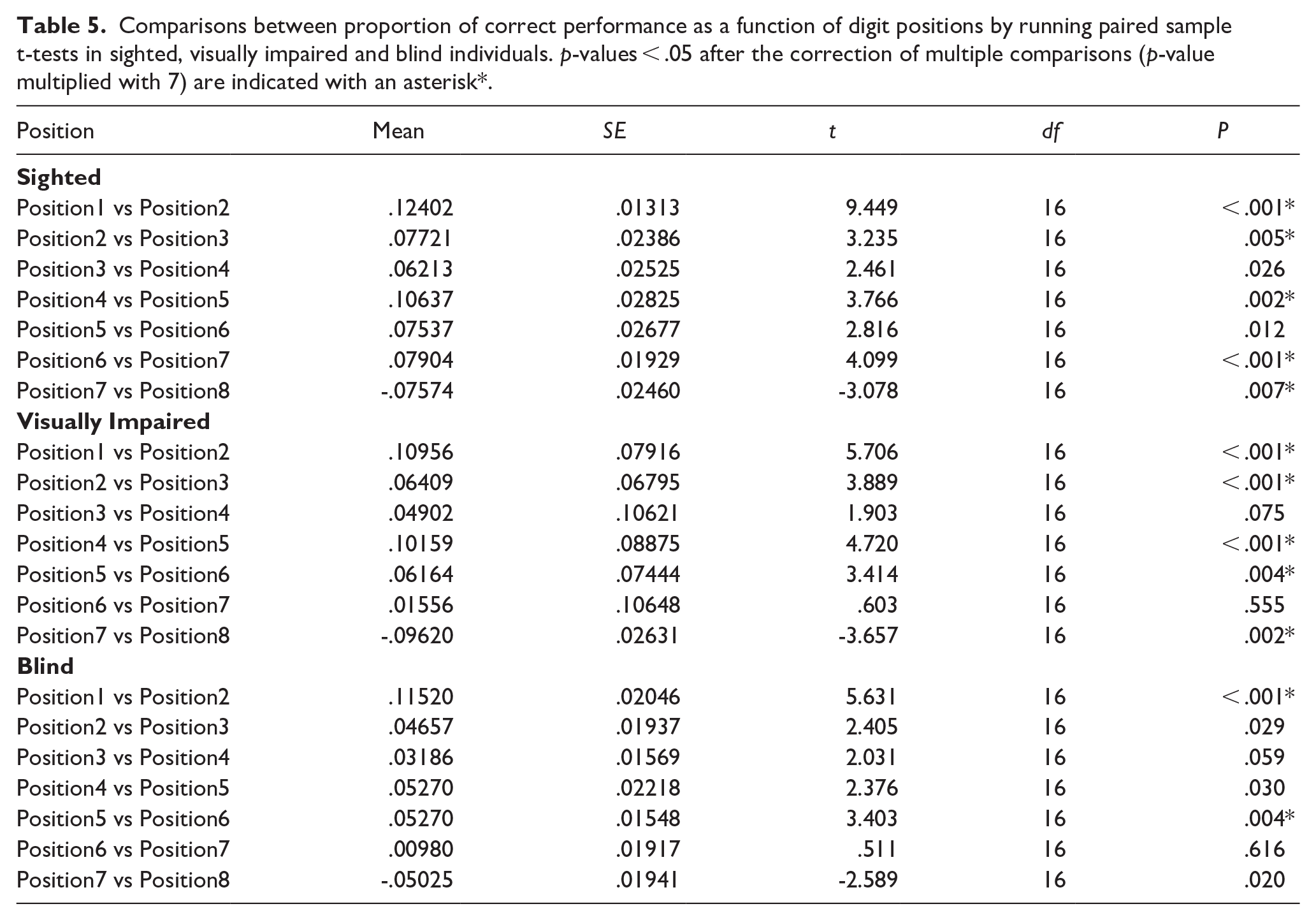

Sighted individuals showed a stronger serial position effect than visually impaired and blind individuals, that is, a stronger gradual decline in serial recall performance (Table 5). As shown in Figure 3, sighted individuals showed a steeper decline in serial recall from positions 1 to 7 and significantly higher serial recall at position 8 compared with position 7 (recency effect; see supplementary material for an overview of statistical results). Blind individuals showed a performance decline especially between positions 1 and 2 and between positions 5 and 6. The difference between positions 7 and 8 observed in sighted and visually impaired individuals was less pronounced in blind participants. Visually impaired individuals showed a pattern similar to that of sighted individuals (see Table 5, Figure 3).

Comparisons between proportion of correct performance as a function of digit positions by running paired sample t-tests in sighted, visually impaired and blind individuals. p-values < .05 after the correction of multiple comparisons (p-value multiplied with 7) are indicated with an asterisk*.

Furthermore, the main effect of Group was significant, F(2, 48) = 4.308, p = .019, ηp2 = .152, indicating that blind individuals (M = .733, SE = .029) outperformed visually impaired individuals (M = .629, SE = .029) and sighted controls (M = .628, SE = .029; blind versus sighted: t(32) = 2.351, p = .025, Cohen’s d = .806; blind versus visually impaired: t(32) = 2.364, p = .024, Cohen’s d = .811; sighted versus visually impaired: t(32) = .041, p = .968, Cohen’s d = .014).
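The effect sizes in this paragraph can be related to the test statistics with two standard conversions, sketched below. The article does not state how the effect sizes were computed, so this is an assumption: partial eta squared is recovered from F and its degrees of freedom, and Cohen's d from an independent-samples t value with group sizes of 17 each. The function names are hypothetical.

```python
import math

def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared from an F statistic and its degrees of freedom."""
    return f_value * df_effect / (f_value * df_effect + df_error)

def cohens_d_from_t(t_value, n1, n2):
    """Cohen's d (pooled SD) from an independent-samples t statistic."""
    return t_value * math.sqrt(1 / n1 + 1 / n2)

# Main effect of Group: F = 4.308 with 2 and 48 degrees of freedom.
eta = partial_eta_squared(4.308, 2, 48)

# Blind versus sighted: t(32) = 2.351 with 17 participants per group.
d = cohens_d_from_t(2.351, 17, 17)

print(round(eta, 3), round(d, 3))
```

Under these assumptions the conversions give values that round to the reported ηp2 = .152 and Cohen's d = .806.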

The impact of emotional prosody on serial recall after steady-state and changing-state sounds

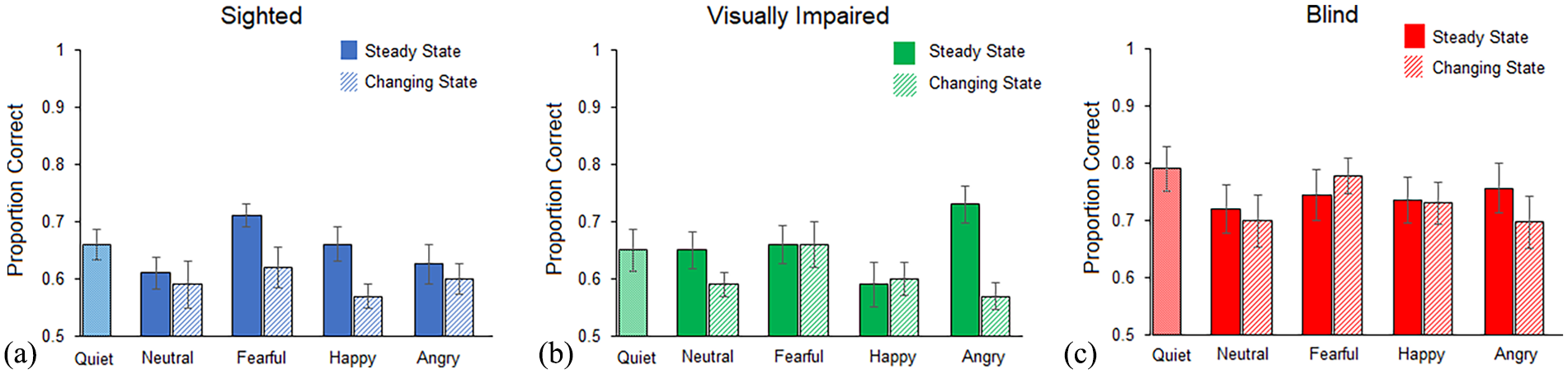

The three-way interaction between the factors Prosody, State, and Group was significant, F(6,144) = 4.085, p < .001, ηp2 = .145, see Figure 4.

(a-c). Average proportion of correctly recalled digits in the serial recall task as a function of prosody and sound in sighted controls, visually impaired individuals, and blind individuals. Error bars indicate standard error of the mean.

To investigate whether emotional prosody impacts steady state differently in blind compared with visually impaired and sighted controls, we ran subordinate ANOVAs separately for steady state and changing state. The ANOVA revealed an interaction between Group and Prosody for the steady-state condition, F(6,144) = 4.15, p < .001, ηp2 = .147, and a main effect of Prosody, F(3,144) = 4.091, p = .008; ηp2 = .147. While sighted and visually impaired individuals showed a main effect of Prosody (sighted: F(3,48) = 4.328, p = .009; ηp2 = .213; visually impaired individuals: F(3,48) = 6.653, p = .001; ηp2 = .294), blind individuals did not show any significant modulation by emotional prosody in the steady-state condition (main effect of Prosody, F(3,48) = .509, p = .678, see Figure 4). As shown in Figure 4, in sighted individuals, serial recall performance was higher in the fearful condition compared with the angry or neutral conditions (t-tests: fearful versus neutral: t(16) = 3.712, p = .002; fearful versus angry: t(16) = 3.095, p = .007). For visually impaired individuals, serial recall was heightened in the angry condition compared with the fearful, neutral and happy conditions (t-tests: fearful versus angry: t(16) = 2.514, p = .023; angry versus neutral: t(16) = 3.487, p = .003; angry versus happy: t(16) = 3.54, p = .003).

However, the interaction between Group and Prosody was absent for the changing state, F(6,144) = .969, p = .449; ηp2 = .039.

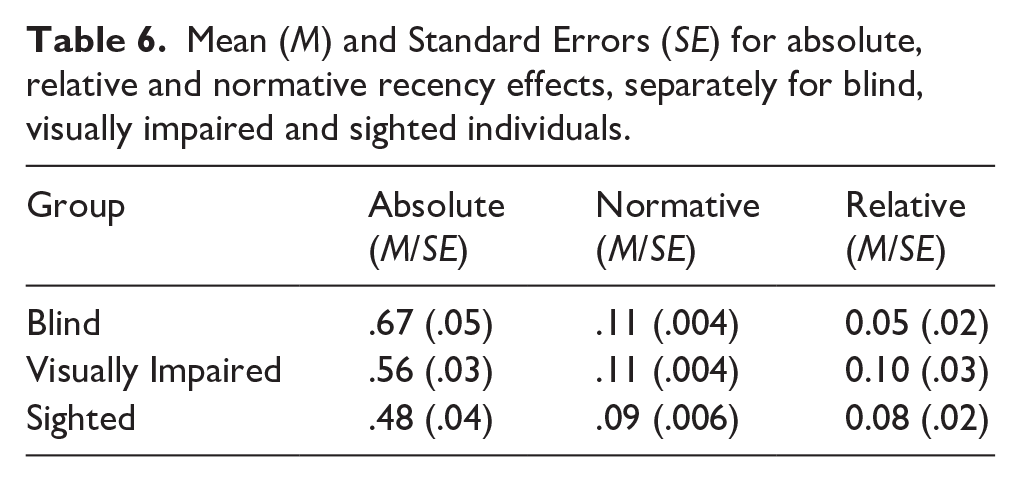

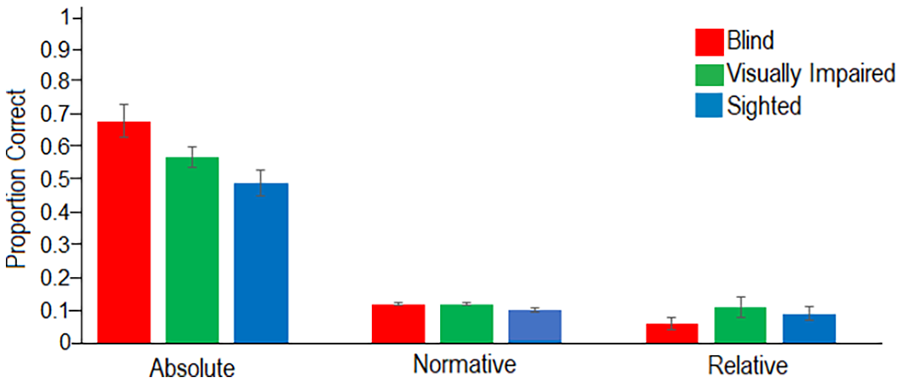

Absolute, normative and relative recency effects

To further investigate group differences in recency effects, we calculated absolute, normative, and relative recency effects separately for congenitally blind, visually impaired, and sighted controls (see also Nicholls & Jones, 2002, p. 5, and Maidment et al., 2013). To determine the absolute recency effect, we included the proportion of correctly recalled items at position 8. The normative measure was calculated by dividing the proportion of correct responses at the final position (8) by the proportion of correct responses across all serial positions. The relative measure is defined as the difference between the proportion of correct recalls at the final position (8) and the second to last position (7). Descriptive statistics of the different measures have been reported in Table 6.

Mean (M) and Standard Errors (SE) for absolute, relative and normative recency effects, separately for blind, visually impaired and sighted individuals.

Blind individuals showed a significantly higher absolute recency effect compared with sighted individuals, t(32) = 3.008, p = .003; Cohen’s d = 1.032. The comparisons between sighted and visually impaired and between blind and visually impaired individuals were not significant (sighted versus visually impaired: t(32) = -1.68, p = .103; Cohen’s d = -.576; blind versus visually impaired: t(32) = 1.762, p = .088; Cohen’s d = .604).

The normative recency effect was significantly higher in blind individuals compared with sighted controls, t(32) = 2.7, p = .011; Cohen’s d = .926. Moreover, visually impaired individuals showed a higher normative recency effect compared with sighted controls, t(32) = 2.245, p = .032; Cohen’s d = .770. The normative recency effect did not differ between blind and visually impaired individuals, t(32) = .229, p = .820; Cohen’s d = .079; see Figure 5.

Absolute, relative and normative recency effects separately for blind, visually impaired and sighted controls. Error bars indicate standard error of the mean.

There were no significant differences in the relative recency effects between the different groups (blind versus sighted: t(32) = -.81, p = .422; Cohen’s d = -.279; blind versus visually impaired: t(32) = -1.406, p = .169; Cohen’s d = -.482; sighted versus visually impaired: t(32) = -.57, p = .574; Cohen’s d = -.195; see Figure 5).

Serial recall strategies used by blind, visually impaired and sighted individuals

After participants completed the serial recall task, they were asked to indicate the strategies they used to memorise the serial order of the presented digits. As this task was optional, we recorded and categorised the answers of a subsample of participants (N = 44): N = 14 blind individuals, N = 16 visually impaired individuals and N = 14 sighted individuals. The answers were categorised by two authors according to the following strategies (see also Morrison et al., 2016): “rehearsal” (such as silently repeating the item), “grouping” (remembering the digits in groups), “association” (other categories that could relate to the digits), “image” (visual image based on the meaning of numbers), “sound” (thinking about the way the numbers sounded, rehearsal aloud), “tactile” (Braille, piano playing), “familiar” (digits presented in familiar sequences) and “semantic” (semantic relations) (see Figure 6). Sighted individuals mainly used grouping (25.9%) and rehearsal (22.2%), followed by repeating the digits aloud (Sound, 14.8%), using the keyboard (Tactile, 11.11%) and images (11.11%) (see Figure 6). Blind individuals reported more associations to other categories (Association, 21.1%) than sighted individuals (blind versus sighted: χ2(1) = 6.857, p = .009, N = 28; with continuity correction). For instance, blind individuals mentioned “rhythmic structuring of the number sequence, playing piano, mathematical connections, telephone number, as well as word associations such as 12 apostles, 31st December, 32 byte system.” Besides forming associations (21.21%; e.g., piano playing), blind individuals also used grouping (36.36%) and tactile strategies such as “braille” and “fingering in piano playing” (21.21%).
Similar to blind individuals, visually impaired individuals used associations during serial recall (12.9%); the frequency of this strategy did not differ between blind and visually impaired individuals (χ2(1) = 1.077, p = .299, N = 30; with continuity correction) or between visually impaired and sighted individuals (χ2(1) = 2.165, p = .141, N = 30; with continuity correction).

Serial recall strategies in Sighted individuals (a), Visually Impaired Individuals (b), and Blind (c). The pie charts have been generated based on the total number of responses in each group. Purple: Grouping, red: Familiarity; dark blue: Association; light blue: Tactile; cyan: Sound, grey: Rehearsal, brown: Image.

Serial recall performance as a function of different strategies

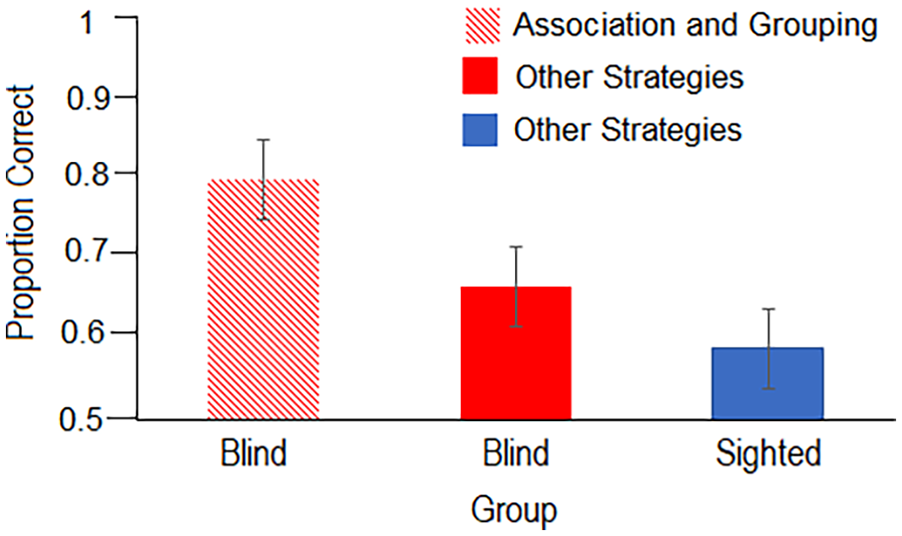

To investigate whether specific serial recall strategies are associated with better serial recall performance, we categorised blind individuals into two groups. Those who applied the “Associations and Grouping” strategies were assigned to one group (N = 6), while the remaining participants, who either used Association or Grouping and/or any other strategies, formed the second group (N = 8). The “Associations and Grouping” strategies were chosen as a selection criterion because blind individuals used these strategies more often than the sighted controls, and it is hypothesised that this specific strategy combination may lead to improved serial recall performance.

To assess serial recall performance and compare the different groups, we conducted an independent samples t-test (one-tailed) between the blind individuals who used the “Associations and Grouping” strategy and those who used other strategies or one of these strategies.

The one-sided independent samples t-test revealed that blind participants who used the “Associations and Grouping” strategies (M = 0.8; SE = 0.05) outperformed the blind individuals who used other strategies (M = 0.66; SE = 0.05; t(12) = 1.88, p = .042, Cohen’s d = 1.017; one-tailed; see Figure 7). The two groups did not differ significantly in age, suggesting that age might not explain the performance differences between these groups, t(12) = .396, p = .699; Cohen’s d = .214; blind group with Association-Grouping strategies: Mean age = 44 years, SD = 12; blind group using different strategies: Mean age = 42 years; SD = 11.

Proportion of correct serial recall performance as a function of serial recall strategies in Blind individuals using Association and Grouping (red pattern), in Blind individuals using either Association or Grouping or any remaining strategy (red bar), and in age-matched sighted individuals (blue). Please note that sighted individuals did not use the combination between Association and Grouping. Error bars represent standard errors of the mean.

In addition, we compared blind individuals who applied the “Associations and Grouping” strategies with their age-matched sighted controls. The blind group who used the Associations and Grouping strategies (M = .8; SE = .05) outperformed the age-matched sighted controls (M = .59; SE = .05) in the serial recall task, t(10) = 3.006, p = .007, one-sided; Cohen’s d = 1.735.

We also compared the blind group who used other strategies (M = 0.66; SE = 0.05) with their matched sighted control group (M = 0.65, SE = .02). We observed that there was no significant difference between those groups, t(12) = .230, p = .411, one-sided; Cohen’s d = .115.

Discussion

The main aim of the current experiment was to investigate top-down and bottom-up effects in a serial recall task in blind, visually impaired and sighted controls. Top-down attentional control functions were tracked by investigating the sustained serial recall performance across digits presented at positions 1–8 and the gradual decline of serial recall with increasing digits. More specifically, the use of various recall strategies might be required to keep digits 1–8 in working memory while ignoring the distracting impact of sounds (Hughes, 2014). Bottom-up effects were investigated by analysing the impact of emotional prosody implemented in auditory distractors of changing-state and steady-state syllables on serial recall performance.

Primacy and recency effects in blind, visually impaired, and sighted controls

All participants showed the typical primacy effect, in which the first digits in the list were recalled better than subsequent digits (Demaree et al., 2004). These results might be explained by the fact that participants used rehearsal strategies to memorise the first items in the sequence. Furthermore, participants showed better performance at position 8 compared with position 7. We further investigated those recency effects at the final positions: Blind participants outperformed sighted controls when digits were presented at position 8 (absolute recency effect), but there were no significant differences in the absolute recency effect between blind and visually impaired individuals or between sighted and visually impaired individuals. When relating the recall accuracy for the digit in the last position (8) to recall accuracy across all positions (1–8), blind and visually impaired individuals showed higher scores compared with sighted controls, possibly indicating that group differences might be explained by the usage of different strategies in the blind throughout the serial recall task. When calculating the difference in recall accuracy between the terminal and preterminal position, we did not find any group differences, indicating that blind listeners do not differ from visually impaired or sighted listeners in this recency measure. This could also indicate that the increased difference between groups for more recent items is likely an inevitable result of better performance overall rather than a specific increase for more recent items. As in previous studies, blind individuals outperformed sighted and visually impaired individuals in memory performance (Amedi et al., 2003; Hull & Mason, 1995; Kattner et al., 2023; Occelli et al., 2017; Pasqualotto et al., 2013; Röder et al., 2001; Swanson & Luxenberg, 2009; Withagen et al., 2013).
The increased serial recall performance in blind individuals might be related to the different strategies they used compared with sighted individuals to recall the items. Of note, blind individuals associated the serial order of the digits with various other domains, such as mathematics, semantics, and braille, which might have contributed to their enhanced serial recall performance compared with sighted individuals. Indeed, blind individuals who applied the “Grouping” and “Associations” strategies showed higher serial recall performance compared with blind individuals who did not apply these strategies together and with age-matched sighted controls. This is in line with previous studies which suggest that the application of strategies can improve working memory performance (Perham et al., 2007). Other studies argue that blind individuals train serial memory in everyday life activities, because it is essential for creating a mental map of the environment (Raz et al., 2007). While sighted individuals can process a visual scene at a glance, blind individuals need to construct a scene serially from separate auditory inputs at each location along the route (Millar, 1994), which corresponds to the requirements of a serial recall task. For instance, Raz and coauthors (2007) mentioned that “blind individuals tend to code spatial information (especially of large spaces) in the form of a local, sequential representation based on routes” (p. 1132). This has been described as “a natural consequence that the path traveled by a blind person cannot be apprehended at glance (e.g., from a mountaintop) but rather must be constructed serially out of segmented inputs from each location along the path” (p. 1132, see also Raz et al., 2007).
Corresponding to the nature of the current auditory recall task, information needs to be encoded in a serial manner, by recalling the exact order of each auditory stimulus, which might resemble everyday activities of blind individuals, such as route finding.

Enhanced top-down attentional control in blind individuals

Furthermore, blind individuals showed more sustained serial recall performance (especially for the last digits presented in the sequence) compared with sighted controls and visually impaired individuals. This is shown by a less gradual decline of serial recall performance as a function of digit position. Different theoretical accounts might explain these findings: Blind individuals might select and use different strategies more efficiently to focus on task-related items and ignore distractors (see also Collignon & De Volder, 2009; see Collignon et al., 2006, and Kujala et al., 1997, for enhanced divided attention abilities). This effective strategy use might be modulated by top-down control. In line with this argument, several studies observed enhanced functional connectivity between frontal areas and visual cortical areas, which might be essential in the regulation of top-down attentional control and in reducing distractor interference (e.g., Abboud & Cohen, 2019; Bedny et al., 2010; Burton et al., 2014; Deen et al., 2015; Kanjlia et al., 2021; Murphy et al., 2016; Wang et al., 2014). In addition, Stevens and coauthors (2007) observed that preparatory functional brain activity in response to an auditory cue, extracted from the medial dorsal region of the occipital cortex in blind individuals, predicted their task performance. It might be speculated that early visual deprivation elicits reorganisations of brain networks including the fronto-parietal network, as well as the connectivity to sensory brain areas and between sensory brain areas, such as auditory and occipital areas (Bavelier & Neville, 2002).

Blind individuals’ serial short-term memory was also not affected by the emotional prosody in irrelevant speech, especially in the steady-state condition, suggesting that they may be capable of filtering out irrelevant auditory information, regardless of the nature and salience of the information (even angry stimuli). These findings are in line with a previous study in which blind individuals and sighted controls were asked to detect rare deviant syllables presented at a specific loudspeaker (left or right side) as fast and as accurately as possible while ignoring other rare distractor syllables presented from the other speaker or more frequently presented syllables from both loudspeakers (Topalidis et al., 2020). Blind individuals were faster in detecting deviant syllables at the attended loudspeaker while ignoring irrelevant syllables at the same speaker and the other (unattended) speaker. Moreover, in contrast to sighted individuals, blind individuals were not captured by a specific prosody, but they showed an enlarged event-related potential (ERP) amplitude in response to attended compared with unattended syllables across all emotions, which has been interpreted as an emotional “general” auditory spatial selective attention effect.

Those findings also correspond to Klinge et al. (2010a), who asked blind and sighted participants either to discriminate the emotional prosody of the syllable or to distinguish the first vowel of each stimulus. The authors observed not only better performance in blind individuals but also higher activation to all emotional stimuli in visual brain areas, whereas emotion-specific brain activation was observed in the amygdala to fearful and angry stimuli. Thus, in our experiment, the attention of blind individuals does not seem to be captured by a specific salient emotion, in contrast to sighted and visually impaired individuals. Furthermore, our findings are in line with the “hypercompensation” account and correspond to previous research showing improved memory performance in blind individuals (Kattner & Ellermeier, 2014; Röder et al., 2001; Röder & Rösler, 2003). In contrast to previous experiments, we observed this effect in the context of an online experiment, suggesting that the effect of enhanced memory performance in blind individuals can be replicated across different experimental settings, even when they are less controlled than a lab environment.

In contrast to blind individuals, visually impaired individuals showed enhanced performance in the angry prosody, with better performance during angry steady-state sound compared with the other emotional prosodies. By contrast, previous studies revealed that speech articulated in an angry prosody produced more interference with serial recall than neutral or happy-prosody speech (Kattner & Ellermeier, 2018). It is possible that the angry intonations of speech used in the present study may have different psychoacoustic properties (e.g., in terms of fluctuation strength) than the previously used speech samples with an angry prosody. Nevertheless, it is still puzzling why angry prosody should increase the steady-state effect in visually impaired individuals. It could be speculated that the experience of visual impairment may bias the auditory-attentional system towards active and potentially approaching emotional signals, whereas passive negative emotions may be filtered more readily (i.e., threat vs fear). Further studies are needed to replicate the angry/fearful prosody effect and its possible relation to visual experiences.

The interaction between bottom-up and top-down features in serial recall

Our results indicated that changing-state effects (differences between steady state and changing state) were especially observed in the angry prosody for positions 3–5 in all participants. Changing-state effects were also observed in the neutral prosody. However, for the neutral prosody those changing-state effects were less pronounced than in the angry prosody. Indeed, several studies have shown stronger attentional capture effects in the angry prosody condition (Ceravolo et al., 2016). This parallels the concept of emotional attention, where emotional cues in auditory stimuli are often preferentially processed, especially when signalling potential danger (Vuilleumier, 2005).

Constraints on generality

One limitation of the current study is that we did not consider the duration and onset of blindness in our analysis, given the limited sample size. It might be argued that, in contrast to congenitally and early blind individuals, late blind individuals could be distracted more by emotional background syllables. Therefore, future experiments need larger samples in the specific groups to further investigate how the onset of blindness explains distractibility.

Due to the online nature of the study, not all variables can be controlled as well as in a lab environment, such as the volume of the material, background noise and whether sighted individuals wore a blindfold as instructed. Thus, future experiments are needed to control for the effect of the blindfold. However, we would suggest that if they had not worn the blindfold, sighted participants would have performed better in the memory task because they could have used visual assistance, such as writing down the numbers to be recalled. Yet sighted individuals performed worse than blind individuals, which is in line with many other studies showing worse performance in blindfolded sighted individuals compared with blind individuals in memory tasks (Röder & Rösler, 2003; Rokem & Ahissar, 2009), and it also suggests that sighted participants may have complied with the instructions and did not use visual assistance. Furthermore, recent studies have documented that the crucial effects of auditory distraction (changing-state effect and deviation effect) can be studied quite reliably in an online setting (Elliott et al., 2022; Kattner & Bryce, 2021), and other online studies in older adults have been successfully performed and have been proposed as an alternative to lab-based studies (Haas et al., 2021). Follow-up studies are needed to test the effects of the current study in a lab environment. Memory strategies were investigated using self-report after the main experiment. Future experiments might further investigate the impact of these different strategies in blind individuals by applying more objective measurements which do not depend on participants’ memory.

Conclusion

The present results show that blind individuals’ serial short-term memory is distracted less by emotional irrelevant speech compared with visually impaired and sighted individuals. This is shown by two main results: (1) Blind individuals show more sustained serial recall performance across digit positions 1–8, which might point to their enhanced top-down attentional control as well as the various strategies applied in serial recall. (2) Blind individuals’ perceptual-cognitive system allows efficient filtering of irrelevant auditory information, thus reducing attentional capture by irrelevant sound. We show these results in an online setting, suggesting that online experiments might create a new valid experimental pathway for investigating compensatory neural plastic changes in blind individuals.

Supplemental Material

sj-docx-1-qjp-10.1177_17470218241300115 – Supplemental material for Enhanced auditory serial recall of recently presented auditory digits following auditory distractor presentation in blind individuals

Supplemental material, sj-docx-1-qjp-10.1177_17470218241300115 for Enhanced auditory serial recall of recently presented auditory digits following auditory distractor presentation in blind individuals by Julia Föcker, Leyu Huang, Alliza L Caling, Marieke Fischer, Andreas Ihle, Timothy Hodgson and Florian Kattner in Quarterly Journal of Experimental Psychology

Footnotes

Acknowledgements

We would like to thank all participants who took part in this experiment as well as all organisations which distributed the online link to this experiment. We would like to thank Sarah Cremona for participant recruitment.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplementary Material

The supplementary material is available at: qjep.sagepub.com
