Abstract

Frequently, problems can be solved in more than one way. In modern computerised environments, more ways than ever exist. Naturally, human problem solvers do not always opt for the best-performing strategy available. One underlying reason might be the inability to continuously and correctly monitor each strategy’s performance. Here, we supported some of our participants’ monitoring ability by providing written feedback regarding their speed and accuracy. Specifically, participants engaged in an object comparison task, which they were asked to solve with one of two strategies: an internal strategy (mental rotation) or an extended strategy (manual rotation). After receiving no feedback (30 participants), trialwise feedback (30 participants), or blockwise feedback (30 participants) in these no-choice trials, all participants were asked to estimate their performance with both strategies and were then allowed to freely choose between strategies in choice trials. Results indicated that written feedback improves explicit performance estimates. However, results also indicated that such increased awareness does not guarantee improved strategy choice and that attending to written feedback might interfere with more adaptive ways of informing the choice. Thus, we advise against prematurely implementing written feedback: while it might support adaptive strategy choice in certain environments, it did not do so in the present setup. We encourage further research that improves our understanding of how we monitor the performance of different cognitive strategies. Such understanding will help create interventions that support human problem solvers in making better choices in the future.

Keywords

Significance statement

In an increasingly technologised world, humans can support or replace internal thought with external tools (e.g., writing a shopping list to support memory and using the search function to support visual search) more than ever. Consequently, it is more important than ever to help humans find cognitive strategies that work well for them, with or without a tool. Here, we provided some of our participants with written performance feedback regarding the speed and accuracy of two different strategies. Interestingly, written feedback did not help participants mix strategies in a way that improved performance. On the contrary, we provide evidence that written feedback might even have harmed performance. We conclude that written performance feedback can be more harmful than beneficial and should only be employed when warranted.

Internal and extended strategies

In modern tech-infused environments, human performers can frequently decide whether to (1) rely exclusively on their mental capacities without using any external aids or (2) reach out into the environment to solve the problem at hand. Here, we refer to the former as relying on internal strategies (iS) and to the latter as relying on extended strategies (eS; see Clark & Chalmers, 1998). Mental arithmetic is an example of the former, and using a calculator instead is an example of the latter.

Note that, in terms of theoretical framing, we prefer the term “using extended strategies” over what others, as well as ourselves, previously referred to as “cognitive offloading.” We acknowledge that both terms can be used interchangeably for the most part but decided on the former because cognitive offloading was originally defined as a behaviour purposefully exhibited to decrease internal demand (Risko & Gilbert, 2016), whereas “using extended strategies” is more liberal regarding the underlying reasons. Furthermore, we think that the choice between two internal strategies has much in common with the choice between an internal and an extended strategy. Such similarities might be more difficult to discuss if we called the former choice “strategy choice” but the latter “cognitive offloading.”

Monitoring the performance of internal and extended strategies

Human performers have the means to estimate their performance with an internal strategy (for a review, see Ullsperger et al., 2014). But monitoring can similarly apply to external aids like computers or fellow humans (e.g., Pavone et al., 2016; Pfister et al., 2020; van Schie et al., 2004; Weis & Wiese, 2019c). For example, when navigating with a map or smartphone, or when retrieving information from the internet, human performers gauge whether the navigation or information search was quick and yielded appropriate results. Monitoring the performance of both internal and extended strategies is essential when deciding which strategy to use for a given problem.

That humans are able to monitor the performance of extended strategies is supported by studies in which the performance or reliability of an eS was altered. Results indicate that participants adaptively adjust how frequently they use an eS based on such manipulations (e.g., Gray et al., 2006; Grinschgl et al., 2020; Storm et al., 2017; Walsh & Anderson, 2009; Weis & Wiese, 2019c). For example, in one study, participants needed to move a mouse cursor over a button to perform an eS. The size of the button was manipulated between experiments. Crucially, participants adaptively decreased their eS use frequency with decreasing button size, which reflects the increased time costs of executing more precise movements (Gray et al., 2006).

While such results suggest that people are usually able to monitor cognitive strategies, other results point towards faulty monitoring (e.g., Dunn et al., 2018; Gilbert et al., 2020; Risko & Dunn, 2015; Touron, 2015). For example, in a previous study, participants were asked to remember sets of two to ten letters for later report, either by storing them in memory or by “storing” them on a piece of paper with a pen. Participants’ performance was abysmal when trying to remember 10 letters by memory. Nevertheless, participants used memory instead of pen and paper for the sets of 10 letters more than 10% of the time, which nearly always led to wrong answers (Risko & Dunn, 2015).

What is the origin of such a maladaptive choice? Inaccurate beliefs about a strategy’s performance are a likely contributor. In the same study, an independent sample of participants largely overestimated their performance when asked how well they thought they could remember 10 letters by memory, which could explain the maladaptive choice. Similar influences of performance-related beliefs on strategy choice, which can be independent of actual behaviour, have been reported frequently (e.g., Boldt & Gilbert, 2019; Gilbert, 2015; Touron, 2015; Weis & Wiese, 2019c, 2020). But note that maladaptive choice can exist in some individuals even with well-calibrated performance beliefs (Gilbert et al., 2020), for example, due to some sort of technology aversion or mental challenge seeking (e.g., Weis & Kunde, 2024).

Written feedback (knowledge of result) and cognitive strategy choice

How could such a maladaptive choice be prevented? We conjecture that the availability and granularity of written performance feedback (often denoted knowledge of result) for relevant strategies, such as the objective duration and accuracy of completing a task, could recalibrate inaccurate beliefs. Thus, when written feedback is available, future strategy choices could rely on the information provided in the written feedback. When no feedback is available, the choice would need to be based on other sources, for example, based on the results of resource-demanding (Corallo et al., 2008) internal time monitoring (reviewed in Allan, 1979; Mauk & Buonomano, 2004).

Conceptually, we endorse the notion that written feedback and the results of unconscious internal monitoring belong to distinct yet interconnected processes: explicit and implicit metacognition (Frith & Frith, 2022). The beauty of explicit metacognition is that it allows us to harness the knowledge that other humans—or machines—have acquired, which typically is realised via language. In the case of written feedback in the present study, the idea is that knowledge has been acquired and is then communicated by a machine. Similarly, an experimenter could also inform the participant that a specific strategy is unreliable (Weis & Wiese, 2019c) or especially well-suited for a given task (Weis & Wiese, 2022).

Implicit metacognition, on the other hand, does not rely on conscious processing in the first place, although the results of unconscious monitoring can eventually penetrate consciousness (compare Figure 1 in Frith & Frith, 2022). For example, humans can monitor their own (e.g., Ullsperger et al., 2014) or other agents’ (e.g., Pavone et al., 2016; van Schie et al., 2004) performance and the results can then be used for further processing. The point here is not that the results of implicit monitoring need to stay unconscious but that the information sampling is unconscious, which is different from digesting language-based information.

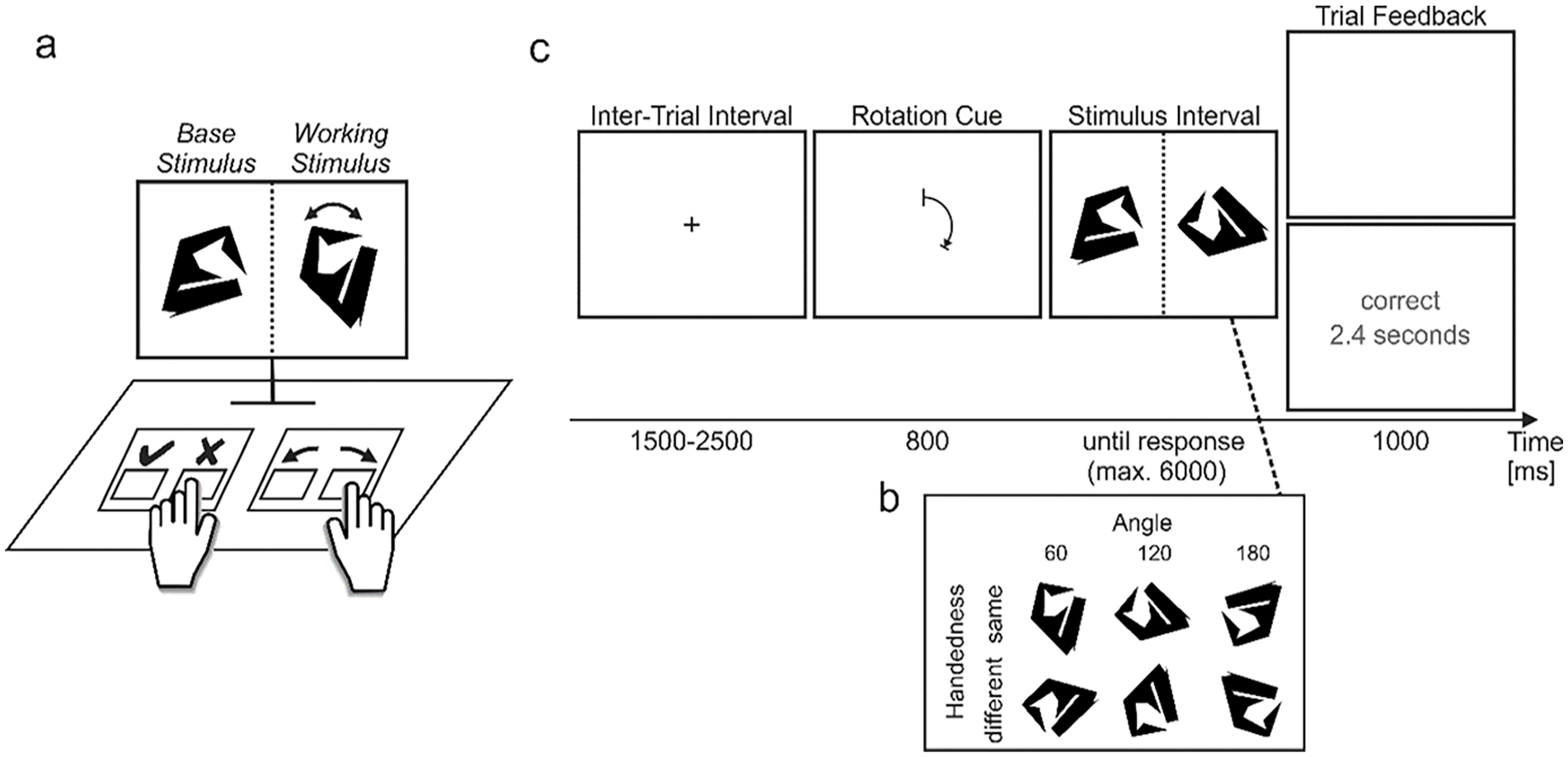

Extended rotation paradigm.

Skill acquisition research has shown that written feedback is frequently beneficial (cf. Salmoni et al., 1984). For example, written feedback can augment performance differences that might otherwise go unnoticed when relying on implicit metacognition alone. Thus, written feedback is thought to allow performers to select those instances of executing a skill that come with superior performance more accurately than unsupported self-observation does. However, written feedback’s benefits need to be weighed against its downsides. Because written feedback itself has to be processed and mental processing resources are limited, conflicts with concurrent cognitive processing are likely to emerge. Written feedback may also shift the motivation to perform a task from intrinsic to extrinsic (Deci et al., 1999). Moreover, providing written feedback after a response can deteriorate implicit metacognition, possibly because processing the feedback interferes with the processes that inform or constitute implicit monitoring (Swinnen et al., 1990). We conclude that written feedback has the potential to improve choice but should be implemented with care.

In that vein, Touron and Hertzog (2014) suggested that presenting accuracy feedback in a memory task can de-bias older performers’ choices between internal and extended strategies, because older adults are susceptible to eS overuse due to overly pessimistic beliefs about their memory performance. And indeed, older performers downregulated eS use when receiving trialwise accuracy feedback. Surprisingly, however, providing trialwise speed feedback in the same study did not downregulate eS use. This is surprising because older performers are known to have difficulties in monitoring response times (Craik & Hay, 1999), and the feedback should have revealed that eS use is substantially slower than iS use. In a related study, speed feedback was additionally provided blockwise (Hertzog et al., 2007). In that study, participants adaptively downregulated eS use in the feedback vs. no-feedback condition, which suggests that blockwise speed feedback is more digestible than its trialwise equivalent. Building on this line of research, the present study was designed as a starting point to further investigate the role of written feedback when deciding between an internal and an extended strategy to solve a problem.

Current study

To test the ramifications of written feedback, we engaged participants in an object-matching task that could be solved by relying on internal means (mental object rotation; iS) or extended means (manual object rotation; eS). The key manipulation concerned the availability of written performance feedback regarding both of these means, which was either not provided, provided trialwise (i.e., presented after each trial), or provided blockwise (i.e., summarised across the 48 trials of each block). The following hypotheses were evaluated:

Several studies have suggested an overuse of extended in comparison to internal strategies (e.g., Gilbert et al., 2020; Touron, 2015; Virgo et al., 2017). Such a bias could be partly explained by a monitoring system that is calibrated for monitoring internal rather than extended strategies. If monitoring were exclusively based on the outcome, e.g., speed and accuracy as witnessed by the eye, performance estimates should be equally accurate for both types of strategy. In contrast, if the processing of an iS itself provided cues to the monitoring system that the outsourced process of an eS cannot provide, differences should emerge. Such internal cues have been suggested as a possible reason for inaccurate monitoring of an extended memory strategy (Dunn et al., 2018). Accordingly, we hypothesise that:

Method

Participants

A total of 90 participants (mean age 23.9 years; age range 18–40; 1 diverse, 67 female, 22 male), that is, 30 per group after exclusions, were analysed for this manuscript. Participants were recruited from the university’s participant pool and were reimbursed at an hourly rate of €10. We stopped data collection before reaching the preregistered sample size of 70 participants per group, which had been determined by an a priori power analysis in G*Power (version 3.1.9.2; Faul et al., 2007). We decided to stop because, in an interim analysis that was not preregistered and was conducted to ensure adequate data quality without technical errors, we observed that our results substantially deviated from our hypotheses. Data quality seemed fine, however, and after the additional analyses reported below, we found that the restricted sample of 90 participants was sufficient to conclusively reject our main hypothesis.

The original power estimation was based on the least powerful test, i.e., the one-tailed independent t-test needed to follow up on the ANOVAs (α = .05, 1 − β = .9, d = .5). We set d to .5 in the power calculations since the effect of feedback type on strategy use in an earlier study was of similar magnitude (d = .5 in Touron & Hertzog, 2014). However, in the current sample, participants chose the more accurate strategy less frequently in both the trialwise and blockwise feedback conditions than in the no-feedback condition, MΔ(no, trial) = −22.0%, MΔ(no, block) = −12.5%. Effect sizes were negative, d(no, trial) = −.67, d(no, block) = −.39, and the associated bootstrapped 95% confidence intervals¹ excluded the effect size of .5 that was used for the power calculations, CId(no, trial) = [−1.3, −.1], CId(no, block) = [−.9, .1]. Since the present sample already provided evidence that feedback was not associated with better strategy choice regarding accuracy, we perceived no benefit in collecting the full sample.²
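The preregistered target of 70 participants per group can be approximately reproduced with a closed-form calculation. The sketch below is not G*Power's procedure, which works with the noncentral t distribution; it assumes a normal approximation with Guenther's small-sample correction, n = 2(z₁₋α + z₁₋β)²/d² + z₁₋α²/4 per group, and the function name `n_per_group` is our own.

```python
import math
from statistics import NormalDist


def n_per_group(d: float, alpha: float, power: float, one_tailed: bool = True) -> int:
    """Approximate per-group n for an independent two-sample t-test.

    Normal approximation plus Guenther's correction term z_alpha**2 / 4.
    This is an approximation, not G*Power's exact noncentral-t computation.
    """
    z = NormalDist().inv_cdf  # standard normal quantile function
    z_alpha = z(1 - alpha) if one_tailed else z(1 - alpha / 2)
    z_beta = z(power)
    n = 2 * (z_alpha + z_beta) ** 2 / d ** 2 + z_alpha ** 2 / 4
    return math.ceil(n)  # round up to whole participants


# Preregistered settings: one-tailed, alpha = .05, 1 - beta = .9, d = .5
print(n_per_group(d=0.5, alpha=0.05, power=0.9))
```

With these settings the approximation yields about 69.2, which rounds up to 70 per group; for other designs, an exact noncentral-t calculation remains preferable.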

From the initial sample of 110 participants, participants were excluded if they (a) fell outside 2.5 standard deviations around the group reaction time mean (0 participants), (b) were below 75% accuracy in the internal blocks (i.e., Blocks 1 and 3 or 2 and 4, depending on the counterbalancing condition; 15 participants), or (c) did not rotate in at least 90% of the trials during the extended blocks (i.e., Blocks 1 and 3 or 2 and 4, depending on the counterbalancing condition; 5 additional participants). Thus, a total of 20 participants were excluded based on these preregistered criteria. Criteria similar to (a) and (b) have been used in an earlier study (Weis & Wiese, 2019c). Criterion (c) was necessary to ensure that participants followed instructions and, consequently, that the performance estimates for the eS were valid.
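The three preregistered exclusion criteria can be applied in a fixed order, which is why criterion (c) counts only additional participants. The sketch below assumes per-participant summary values with hypothetical field names (`mean_rt`, `internal_accuracy`, `extended_rotation_rate`) and computes the criterion (a) reference statistics within each feedback group; both the data layout and the use of the sample SD are our assumptions.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class Participant:
    pid: str
    group: str                     # hypothetical: "no", "trialwise", or "blockwise"
    mean_rt: float                 # mean RT in ms (hypothetical summary field)
    internal_accuracy: float       # proportion correct in internal sub-blocks
    extended_rotation_rate: float  # proportion of extended trials with a rotation


def exclusion_reason(p, group_stats):
    """Return the first criterion a participant violates, or None."""
    m, sd = group_stats[p.group]
    if abs(p.mean_rt - m) > 2.5 * sd:
        return "a"  # RT outside group mean +/- 2.5 SD
    if p.internal_accuracy < 0.75:
        return "b"  # below 75% accuracy in internal blocks
    if p.extended_rotation_rate < 0.90:
        return "c"  # rotated in fewer than 90% of extended trials
    return None


def apply_exclusions(participants):
    """Split participants into kept ones and (pid, criterion) exclusions."""
    rts_by_group = {}
    for p in participants:
        rts_by_group.setdefault(p.group, []).append(p.mean_rt)
    group_stats = {g: (mean(rts), stdev(rts)) for g, rts in rts_by_group.items()}
    kept, excluded = [], []
    for p in participants:
        reason = exclusion_reason(p, group_stats)
        if reason is None:
            kept.append(p)
        else:
            excluded.append((p.pid, reason))
    return kept, excluded
```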

Apparatus

The experiment was presented on a computer screen (BENQ XL2411P 24-inch monitor set to a resolution of 1920 × 1080 pixels with a refresh rate of 100 Hz) positioned about 75 cm in front of participants. The experiment was programmed in and run with MATLAB version R2016a (The Mathworks, Inc., Natick, MA, United States) and the Psychophysics Toolbox (Brainard, 1997; Pelli, 1997). Participants responded using a USB-connected standard keyboard and mouse. The stimulus rotation (see section Methods: Procedure and Task) was updated at a frequency of about 35 Hz.

Procedure and task

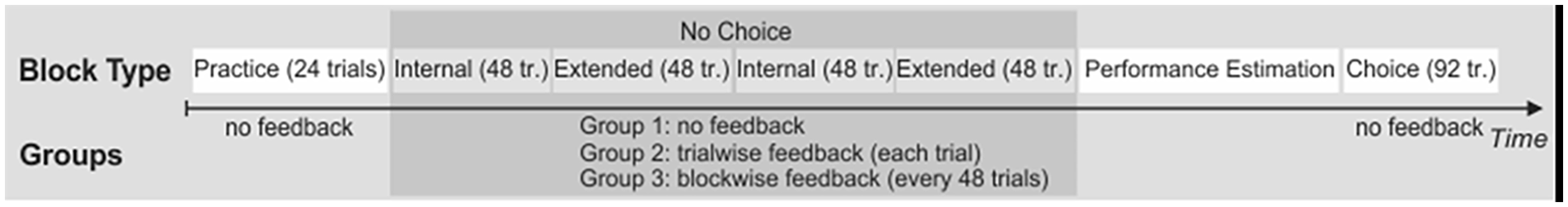

After being welcomed and providing informed consent, participants were asked to engage in the Extended Rotation Paradigm (Figure 1). Participants were asked to answer as quickly and accurately as possible. Specifically, participants first engaged in a practice block, followed by a no-choice block, a performance estimation block, and a choice block (Figure 2). Except for the performance estimation block, all blocks consisted of trials of the Extended Rotation Paradigm.

Procedure.

The practice block ensured that participants fully grasped the task at hand. Participants did not receive performance feedback during the practice block. However, participants were allowed to advance to the no-choice block only if they made at most one error in the last 16 trials of the practice block (8 extended and 8 internal); accordingly, they were informed whether these last 16 trials as a whole met this criterion. If there were two or more errors, participants were asked to repeat the practice block.
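The advancement rule for the practice block reduces to a check over the last 16 practice trials. A minimal sketch, assuming errors are logged per trial in presentation order as booleans (`True` marks an error); the function name `passed_practice` is hypothetical.

```python
from typing import Sequence


def passed_practice(errors: Sequence[bool], window: int = 16, max_errors: int = 1) -> bool:
    """True if at most `max_errors` errors occurred in the last `window` trials."""
    if len(errors) < window:
        return False  # not enough trials to evaluate the criterion
    return sum(errors[-window:]) <= max_errors
```

In this sketch, a participant who fails the check would repeat the practice block, and the check would be rerun on the repeated block's trials.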

Subsequently, the no-choice block allowed us to measure objective performance profiles of both internal and extended strategies. To that end, the no-choice block was subdivided into internal and extended sub-blocks. In these sub-blocks, participants were asked to rely either only on their mental abilities (internal sub-block) or on the keyboard (extended sub-block) to rotate the working stimulus. During the internal sub-blocks, pressing a rotation key (compare Figure 1) did not rotate the working stimulus. Internal and extended sub-blocks alternated, the type of the first sub-block (internal or extended) was counterbalanced, and four sub-blocks of 48 trials each, instead of two sub-blocks of 96 trials, were used to lessen sequential effects.
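The alternating, counterbalanced sub-block structure can be generated programmatically. This sketch assumes that counterbalancing is assigned by participant number parity (odd numbers start with the internal sub-block), which is our illustration rather than the study's documented assignment rule; the function and field names are hypothetical.

```python
def no_choice_schedule(participant_number: int, n_blocks: int = 4, trials_per_block: int = 48):
    """Return one dict per no-choice sub-block, alternating internal/extended."""
    if participant_number % 2 == 1:
        first, second = "internal", "extended"
    else:
        first, second = "extended", "internal"
    schedule = []
    for b in range(n_blocks):
        strategy = first if b % 2 == 0 else second
        schedule.append({
            "block": b + 1,
            "strategy": strategy,
            "n_trials": trials_per_block,
            # Rotation keys only move the working stimulus in extended sub-blocks.
            "rotation_keys_active": strategy == "extended",
        })
    return schedule
```

The internal sub-blocks then fall on Blocks 1 and 3 or on Blocks 2 and 4, matching the block numbering used for the exclusion criteria.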

This experiment’s main manipulation, the feedback manipulation, was entirely realised during the no-choice block. One group of participants received no feedback at all throughout the no-choice block (no feedback), one group received feedback after each trial (trialwise feedback), and one group received summarised feedback about the performance of the last 48 trials at the end of each block (blockwise feedback: “Correct Answers: XX % [new line] Mean Response Time: X.X seconds”). Note that no feedback was given throughout practice and choice blocks.
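The blockwise feedback message can be assembled from the trials of one sub-block. A minimal sketch, assuming accuracy is reported as a whole-number percentage and RT as the mean over all trials of the block in seconds; the rounding rules and the function name `blockwise_feedback` are our assumptions.

```python
def blockwise_feedback(correct: list[bool], rts_s: list[float]) -> str:
    """Format summary feedback for one sub-block (e.g., its 48 trials)."""
    if not correct or len(correct) != len(rts_s):
        raise ValueError("need one accuracy value and one RT per trial")
    pct_correct = 100 * sum(correct) / len(correct)
    mean_rt = sum(rts_s) / len(rts_s)  # all trials, including incorrect ones
    return (f"Correct Answers: {pct_correct:.0f} %\n"
            f"Mean Response Time: {mean_rt:.1f} seconds")
```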

Subsequently, the performance estimation block allowed us to measure the subjective performance of both internal and extended strategies. Specifically, participants were asked to provide an accuracy and speed estimate for both internal and extended no-choice trials (e.g., “Please estimate how frequently you answered correctly when exclusively rotating with your “inner eye” and without the keyboard.”; answers ranged from 60% to 100% on a visual analogue scale). Note that block feedback could potentially be rehearsed and then used for the explicit performance estimates. Also, the given performance estimates themselves could be rehearsed and used in the subsequent choice block. To avoid such rehearsals, participants had to correctly re-type an alphanumerical string consisting of 12 elements right before and right after the performance estimation block.

Subsequently, the choice block allowed us to measure how participants choose between internal and extended strategies. To this end, participants were instructed to freely choose between mental and manual keyboard-based rotation (i.e., between iS and eS). Note that in the present study, feedback was only provided during the no-choice block because a former study suggested that, when no-choice blocks exist, monitoring during choice trials might not be the prime cause of adaptive strategy selection (Weis & Wiese, 2019a).

The experimental session concluded with questionnaires about demographic data and consciously accessible considerations contributing to voluntary choice beyond individual performance differences (e.g., “With which strategy do you think your answers were more accurate?”), and the German Need for Cognition Scale (NCS; Bless et al., 1994; Cacioppo & Petty, 1982). These measures were collected to support exploratory analyses if necessary.

Stimuli

For the Extended Rotation Paradigm (see section Methods: Procedure and Task), 48 base stimuli with 16 edges were created based on a procedure described by Attneave and Arnoult (1956) and realised using a MATLAB-based script provided by Collin and McMullen (2002). We decided to use rather complex stimuli with many (i.e., 16) edges and not to vary the number of edges between stimuli because a high similarity between complex stimuli was associated with a steep angle-RT slope (Folk & Luce, 1987). Given that this slope is seen as an indicator of the thought process needed for rotation, complex and similar stimuli likely increase the signal-to-noise ratio in the present study. An example base stimulus, as well as all possible manipulations of the associated working stimulus as shown at the beginning of the stimulus interval, is presented in Figure 1b.

Analyses

Data cleaning

All trials were used for analysis. We purposefully did not filter out any trials based on RT since even large RTs had been included in the trialwise as well as—more importantly—the blockwise feedback that participants received throughout the study. However, we also ran exploratory analyses without trials in which RTs deviated more than 2 SDs from the individual mean and found no substantial deviations from the results reported here.
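The exploratory robustness check drops trials whose RT deviates by more than 2 SDs from the participant's own mean. A minimal sketch, assuming the mean and sample SD are computed once per participant over all of that participant's trials (not separately per strategy), which the text does not specify.

```python
from statistics import mean, stdev


def trim_rts(rts: list[float], n_sd: float = 2.0) -> list[float]:
    """Keep RTs within n_sd sample SDs of this participant's mean RT."""
    m, sd = mean(rts), stdev(rts)
    return [rt for rt in rts if abs(rt - m) <= n_sd * sd]
```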

Hypotheses testing

H1 and H4 were investigated using two mixed ANOVAs with the three-level between-participants factor feedback (no, trialwise, blockwise) and the two-level within-participants factor strategy (internal, extended) as independent variables (IVs). One ANOVA was carried out with the absolute accuracy estimation error, $\mathrm{err}_{\mathrm{acc}} = |\mathrm{accuracy}_{\mathrm{estimate}} - \mathrm{accuracy}_{\mathrm{actual}}|$, and one with the absolute RT estimation error, $\mathrm{err}_{\mathrm{RT}} = |\mathrm{RT}_{\mathrm{estimate}} - \mathrm{RT}_{\mathrm{actual}}|$, as dependent variables (DVs). Estimates were taken from the performance estimation block and actual performances from the no-choice block (cf. Figure 2). The mixed ANOVAs were followed up by t-tests where indicated by the hypotheses.
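Both dependent variables are computed per participant and strategy. The sketch below assumes no-choice trials stored as hypothetical `(strategy, correct, rt_ms)` tuples and estimates stored as `(accuracy, RT)` pairs from the performance estimation block; the function name is our own.

```python
def estimation_errors(no_choice_trials, estimates):
    """Absolute accuracy and RT estimation errors per strategy.

    no_choice_trials: iterable of (strategy, correct, rt_ms) tuples.
    estimates: dict mapping strategy -> (estimated_accuracy, estimated_rt_ms).
    """
    errors = {}
    for strategy, (est_acc, est_rt) in estimates.items():
        trials = [t for t in no_choice_trials if t[0] == strategy]
        actual_acc = sum(t[1] for t in trials) / len(trials)  # proportion correct
        actual_rt = sum(t[2] for t in trials) / len(trials)   # mean RT in ms
        errors[strategy] = {
            "err_acc": abs(est_acc - actual_acc),
            "err_rt": abs(est_rt - actual_rt),
        }
    return errors
```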

H2 was investigated with two one-way ANOVAs with feedback as IV and accuracy or RT during the choice block (cf. Figure 2), respectively, as DVs. H3 was investigated with two analogous ANOVAs whose DV was the percentage of choice trials in which participants used the strategy that had outperformed the other strategy during the no-choice block (in terms of speed and accuracy, respectively). Whenever a rotation key was pressed during a trial, we took this as an indicator of an eS trial; accordingly, when no rotation key was pressed, we took this as an indicator of an iS trial. ANOVAs were followed up by independent t-tests where indicated by the hypotheses.
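The trial classification and the H3 dependent variable can be sketched as follows. We assume hypothetical trial records: no-choice trials carry their instructed `strategy`, `rt`, and `correct`; choice trials carry the number of rotation key presses. The better strategy is taken from no-choice means (lower mean RT for speed, higher proportion correct for accuracy); ties are resolved toward the internal strategy here, an arbitrary choice of ours.

```python
STRATEGIES = ("internal", "extended")


def classify_trial(rotation_key_presses: int) -> str:
    """Any rotation key press marks an eS trial; otherwise the trial counts as iS."""
    return "extended" if rotation_key_presses > 0 else "internal"


def mean_by_strategy(trials, key):
    """Mean of `key` per strategy over no-choice trials."""
    means = {}
    for s in STRATEGIES:
        values = [t[key] for t in trials if t["strategy"] == s]
        means[s] = sum(values) / len(values)
    return means


def pct_better_strategy_chosen(no_choice, choice, criterion):
    """Percentage of choice trials using the strategy that won in the no-choice block."""
    if criterion == "speed":
        means = mean_by_strategy(no_choice, "rt")
        best = min(STRATEGIES, key=means.get)  # faster = lower mean RT
    elif criterion == "accuracy":
        means = mean_by_strategy(no_choice, "correct")
        best = max(STRATEGIES, key=means.get)  # higher proportion correct
    else:
        raise ValueError("criterion must be 'speed' or 'accuracy'")
    chosen = [classify_trial(t["rotation_key_presses"]) for t in choice]
    return 100 * sum(c == best for c in chosen) / len(chosen)
```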

All effect size intervals were bias-corrected and accelerated and were implemented via bootstrapping 2000 samples in R with the bootES function of the bootES package (version 1.2.3). To provide the readers with an option to gauge the evidence for the alternative over the null hypotheses, we complemented t-tests with Bayes Factors that were computed in R with the ttestBF function of the BayesFactor package (version 0.9.12-4.7) and medium priors (Morey & Rouder, 2011). To improve readability, we only discuss the results of the Bayes factor analyses when conflicting with a frequentist interpretation.
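To illustrate what a bias-corrected and accelerated (BCa) interval involves, the sketch below computes one for Cohen's d in plain Python. It is not the bootES implementation: we assume d with a pooled SD, stratified resampling within each group, and a jackknife over all observations for the acceleration constant (one common variant); the function names and the fixed `seed` are our own, and resamples are assumed to be non-degenerate (not all values identical).

```python
import random
from statistics import NormalDist, mean, stdev


def cohens_d(x, y):
    """Cohen's d for two independent samples, using the pooled SD."""
    nx, ny = len(x), len(y)
    pooled_var = ((nx - 1) * stdev(x) ** 2 + (ny - 1) * stdev(y) ** 2) / (nx + ny - 2)
    return (mean(x) - mean(y)) / pooled_var ** 0.5


def bca_ci_d(x, y, n_boot=2000, level=0.95, seed=1):
    """Bias-corrected and accelerated (BCa) bootstrap CI for Cohen's d."""
    x, y = list(x), list(y)
    rng = random.Random(seed)
    nd = NormalDist()
    d_hat = cohens_d(x, y)

    # Resample each group separately (stratified bootstrap) and sort the estimates.
    boots = sorted(
        cohens_d(rng.choices(x, k=len(x)), rng.choices(y, k=len(y)))
        for _ in range(n_boot)
    )

    # Bias correction z0: share of bootstrap estimates below the observed d.
    share_below = sum(b < d_hat for b in boots) / n_boot
    share_below = min(max(share_below, 0.5 / n_boot), 1 - 0.5 / n_boot)
    z0 = nd.inv_cdf(share_below)

    # Acceleration a: skewness of leave-one-out (jackknife) estimates.
    jack = [cohens_d(x[:i] + x[i + 1:], y) for i in range(len(x))]
    jack += [cohens_d(x, y[:i] + y[i + 1:]) for i in range(len(y))]
    jack_mean = mean(jack)
    num = sum((jack_mean - v) ** 3 for v in jack)
    den = 6 * sum((jack_mean - v) ** 2 for v in jack) ** 1.5
    a = num / den if den > 0 else 0.0

    def adjusted_quantile(q):
        # Shift and stretch the nominal quantile by z0 and a.
        zq = nd.inv_cdf(q)
        return nd.cdf(z0 + (z0 + zq) / (1 - a * (z0 + zq)))

    def pick(q):
        # Map a quantile to an index in the sorted bootstrap estimates.
        return boots[min(n_boot - 1, max(0, int(q * n_boot)))]

    alpha = (1 - level) / 2
    return d_hat, (pick(adjusted_quantile(alpha)), pick(adjusted_quantile(1 - alpha)))
```

Compared with a plain percentile interval, the two correction terms shift the chosen percentiles when the bootstrap distribution is biased or skewed relative to the observed d.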

Results

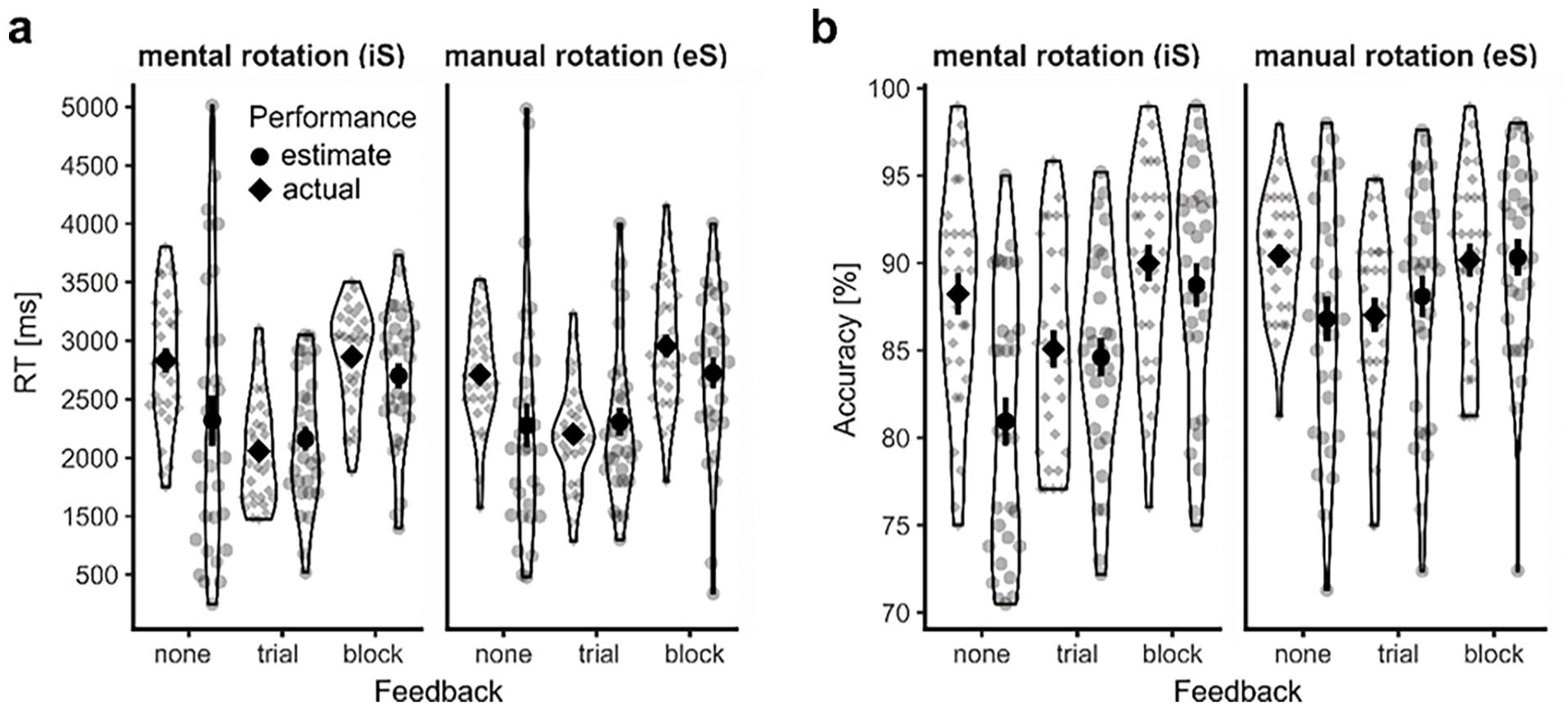

For reasons of data transparency, all relevant averaged raw data, including individual means, can be inspected in Figure 3. Note that at the group level, with all 90 participants, mental and manual rotation had highly similar performance profiles (manual rotation: MRT = 2,623 ms, Maccuracy = 89.2%; mental rotation: MRT = 2,584 ms, Maccuracy = 87.7%). Also note that participants employed manual rotation in a meaningful manner: if manual rotation was employed in the same-handedness condition, the end location of the working stimulus usually closely matched the base stimulus (Figure S2).

Raw data for actual performance (no choice block) and performance estimates.

Hypotheses-driven results

Feedback improved performance estimates in comparison to no feedback (H1 is confirmed)

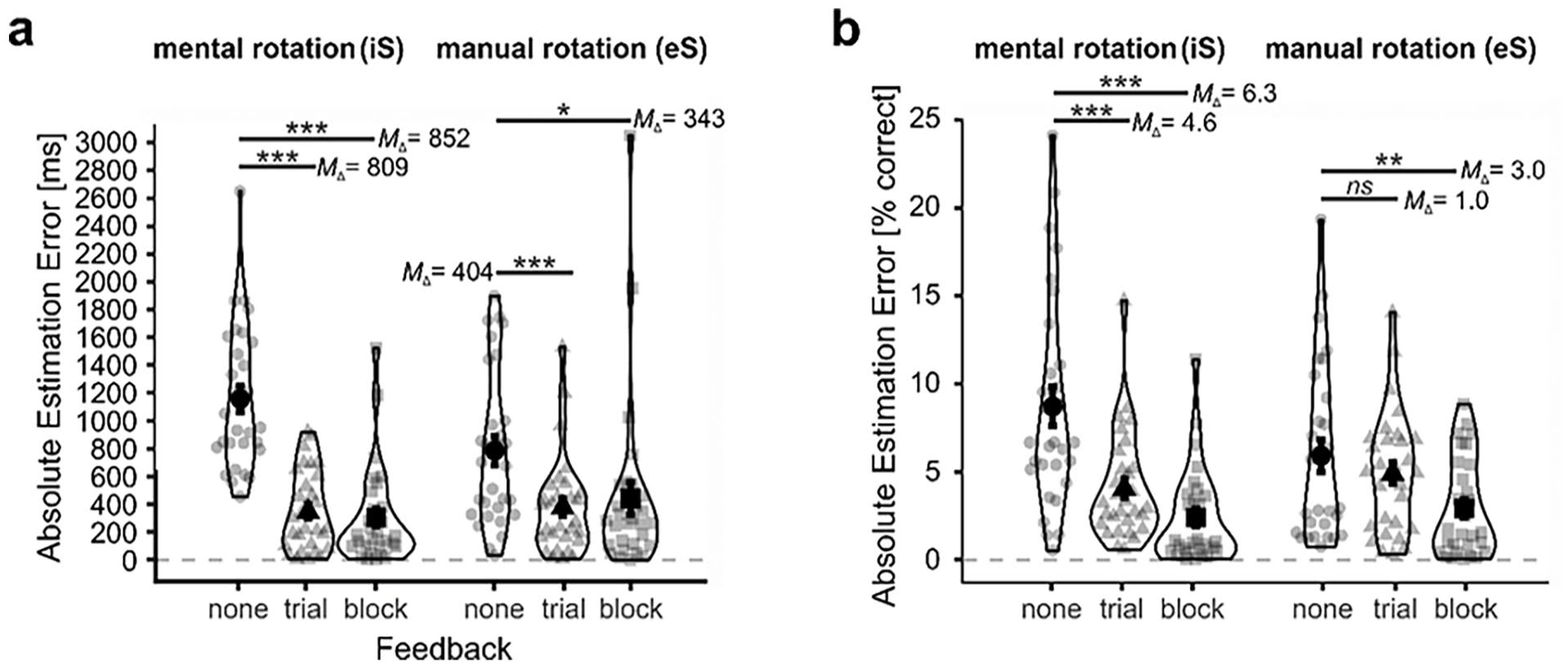

There was an interaction between feedback and strategy on absolute RT estimation errors, F(2, 87) = 7.7, p < .001, ηG² = .05, as well as on absolute accuracy estimation errors, F(2, 87) = 6.4, p = .003, ηG² = .04; Figure 4. Specifically, trialwise feedback improved absolute RT estimates for mental rotation, MΔ = −809 ms, t(58) = −7.6, p < .0001, dCohen = −2.0, 95% CI d = [−2.4, −1.4], BF10 = 23,494,263, as well as for manual rotation, MΔ = −404 ms, t(58) = −3.4, p = .001, dCohen = −.9, 95% CI d = [−1.4, −.3], BF10 = 23.8. Trialwise feedback also improved absolute accuracy estimates for mental rotation, MΔ = −.046, t(58) = −3.8, p < .001, dCohen = −1.0, 95% CI d = [−1.4, −.4], BF10 = 65.0, but not for manual rotation, MΔ = −.010, t(58) = −.9, p = .38, dCohen = −.23, 95% CI d = [−.7, .3], BF10 = .4. Blockwise feedback improved absolute RT estimates for mental rotation, MΔ = −852 ms, t(58) = −7.6, p < .0001, dCohen = −2.0, 95% CI d = [−2.6, −1.3], BF10 = 21,465,593, and possibly also for manual rotation, MΔ = −343 ms, t(58) = −2.3, p = .028, dCohen = −.6, 95% CI d = [−1.2, .1], BF10 = 2.1, although the latter result is ambiguous given the low Bayes factor and the confidence interval of the effect size, which includes zero. Blockwise feedback also improved absolute accuracy estimates for both mental rotation, MΔ = −.063, t(58) = −5.2, p < .0001, dCohen = −1.4, 95% CI d = [−1.8, −.9], BF10 = 6,072.8, and manual rotation, MΔ = −.030, t(58) = −2.9, p = .005, dCohen = −.7, 95% CI d = [−1.2, −.3], BF10 = 7.7.

Estimation errors.

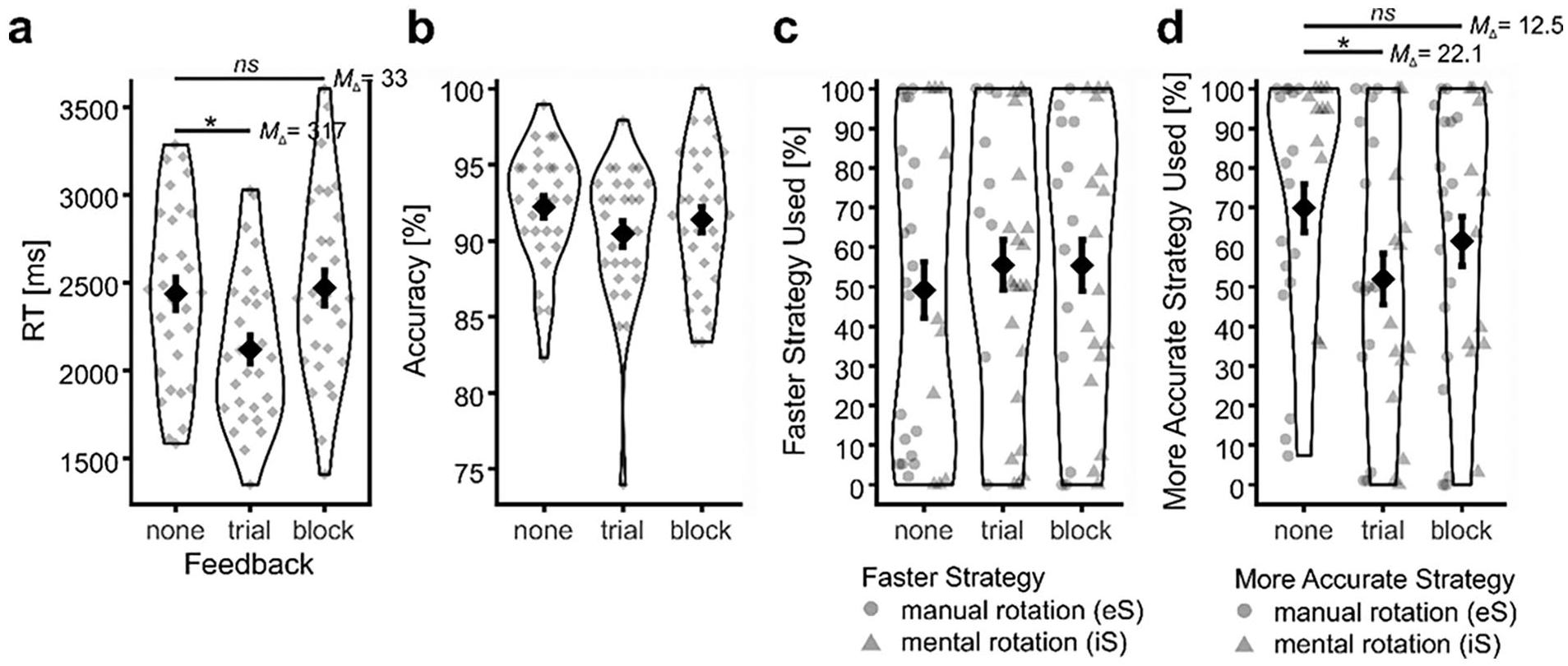

Feedback did not improve free choice performance (H2 is rejected)

RT in the choice block was influenced by feedback, F(2, 87) = 4.6, p = .013, ηG² = .10; Figure 5a. Interestingly, the RT effect during choice trials was driven by fast responses of the trialwise feedback group, Mtrialwise = 2,120 ms, Mno feedback = 2,438 ms, t(58) = 2.6, p = .012, dCohen = −.7, 95% CI d = [−1.2, −.1], BF10 = 4.2, and not by the blockwise feedback group, Mblockwise = 2,471 ms, Mno feedback = 2,438 ms, t(58) = −.2, p = .806, dCohen = .06, 95% CI d = [−.4, .6], BF10 = .3. However, since the trialwise feedback group already exhibited particularly fast responses during no-choice trials (Figure 3a), trialwise feedback seems to speed up responses in general rather than improve strategy selection in the choice block. This global speeding of responses under trialwise speed feedback replicates an earlier observation (Touron & Hertzog, 2014). Accuracy in the choice block was not influenced by feedback, F(2, 87) = 1.2, p = .296, ηG² = .03; Figure 5b. In sum, we conclude that there is no evidence for a beneficial effect of feedback on choice performance.

Performance and strategy choice during choice block.

Feedback does not adaptively impact eS use frequency (H3 is rejected)

Feedback did not influence our participants’ propensity to choose the faster strategy, F(2, 87) = .3, p = .744, ηG² = .01; Figure 5c. However, feedback did influence our participants’ propensity to choose the more accurate strategy, F(2, 85) = 3.2, p = .046, ηG² = .07; Figure 5d. Unexpectedly, participants without feedback chose the more accurate strategy more, rather than less, frequently than participants with feedback. Post hoc t-tests suggest that choice was less adaptive mostly in the trialwise feedback group relative to the no-feedback group (Mno feedback =

Without feedback, eS performance is estimated more accurately than iS performance (H4 is rejected)

Our participants’ ability to evaluate their performance with different strategies was influenced both by feedback and by strategy (see results for H1). On closer inspection, eS performance was estimated more precisely than iS performance when no feedback was given. This was true for RT, MΔ = −372 ms, t(29) = −3.2, p = .004, dCohen = −.6, 95% CI d = [−.9, −.2], BF10 = 10.9, and for accuracy, MΔ = −.03, t(29) = −2.7, p = .013, dCohen = −.5, 95% CI d = [−.9, 0], BF10 = 3.6. Once any type of feedback was given, the estimation errors for iS and eS were more comparable (all p > .2; all BF10 < .5 but > .2).

Exploratory results

So far, results indicated that feedback substantially improved absolute performance estimates. However, results also indicated that these improved estimates were not associated with improved choice. Why?

Descriptively, optimal strategies are chosen even without feedback

It could be that optimal strategies hardly exist because whenever one strategy is faster, the other is more accurate. If that were the case, feedback could not help participants make more adaptive choices. An exploratory look at the data indeed confirms that for most participants (62 out of 90) there was no unequivocally optimal strategy, meaning that neither strategy was better regarding both speed and accuracy during no-choice trials. However, for the remaining 28 participants, one strategy was unequivocally optimal with respect to performance during no-choice trials. Notably, most of these participants in the no-feedback condition descriptively preferred the optimal strategy (10 out of 12 participants). Thus, optimal strategies existed for a sizable number of participants, and optimal strategies were frequently chosen even without feedback.³ Individual actual and estimated performance data and associated eS use proportions can be inspected in Figure S1.
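The split into participants with and without an unequivocally optimal strategy, and the descriptive preference check, can be sketched as below. We assume per-participant no-choice means and an eS use proportion from the choice block, and we assume that preferring a strategy means using it in more than half of the choice trials; strict inequalities mean that ties yield no optimal strategy. All names are hypothetical.

```python
from typing import Optional


def optimal_strategy(rt_internal: float, acc_internal: float,
                     rt_extended: float, acc_extended: float) -> Optional[str]:
    """Return the strategy that was both faster and more accurate, else None."""
    if rt_internal < rt_extended and acc_internal > acc_extended:
        return "internal"
    if rt_extended < rt_internal and acc_extended > acc_internal:
        return "extended"
    return None  # speed-accuracy trade-off (or tie): no unequivocal winner


def prefers_optimal(optimal: str, es_use_proportion: float) -> bool:
    """Descriptive preference: the optimal strategy was used in > 50% of choice trials."""
    if optimal == "extended":
        return es_use_proportion > 0.5
    return es_use_proportion < 0.5
```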

Without feedback, actual and estimated performance differences were unrelated

Surprisingly, actual and estimated performance differences between internal and extended strategies (i.e., ΔActual Performance(internal, extended) and ΔEstimated Performance(internal, extended), calculated for each participant) during no-choice trials were unrelated in the no-feedback group regarding accuracy, rPearson(28) = .14, p = .469, CI95% = [−.24, .47], as well as RT, rPearson(28) = −.06, p = .759, CI95% = [−.41, .31]. In contrast, actual and estimated performance differences during no-choice trials were substantially related in the trialwise feedback group regarding accuracy, rPearson(28) = .55, p = .002, CI95% = [.24, .76], as well as RT, rPearson(28) = .48, p = .008, CI95% = [.14, .72]. Similar results were obtained for the blockwise feedback group regarding accuracy, rPearson(28) = .83, p < .0001, CI95% = [.66, .91], as well as RT, rPearson(28) = .38, p = .039, CI95% = [.02, .65]. In sum, these exploratory analyses suggest that written feedback could be necessary to create accurate explicit representations of performance differences between the available strategies; see Figure S3 for the raw data underlying the correlations. That being said, we acknowledge that sample sizes are small and that these findings should be interpreted with care (Schönbrodt & Perugini, 2013).

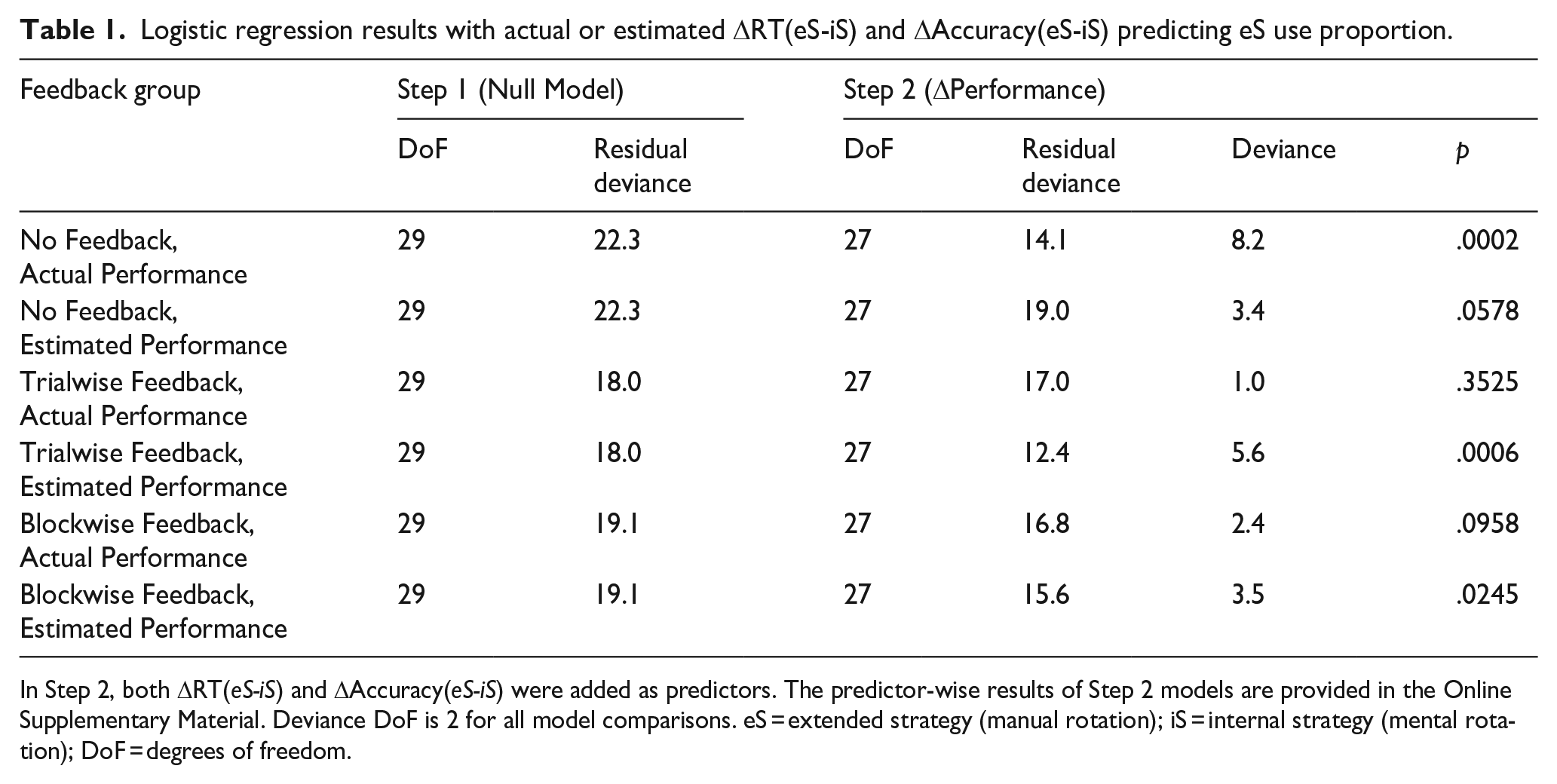

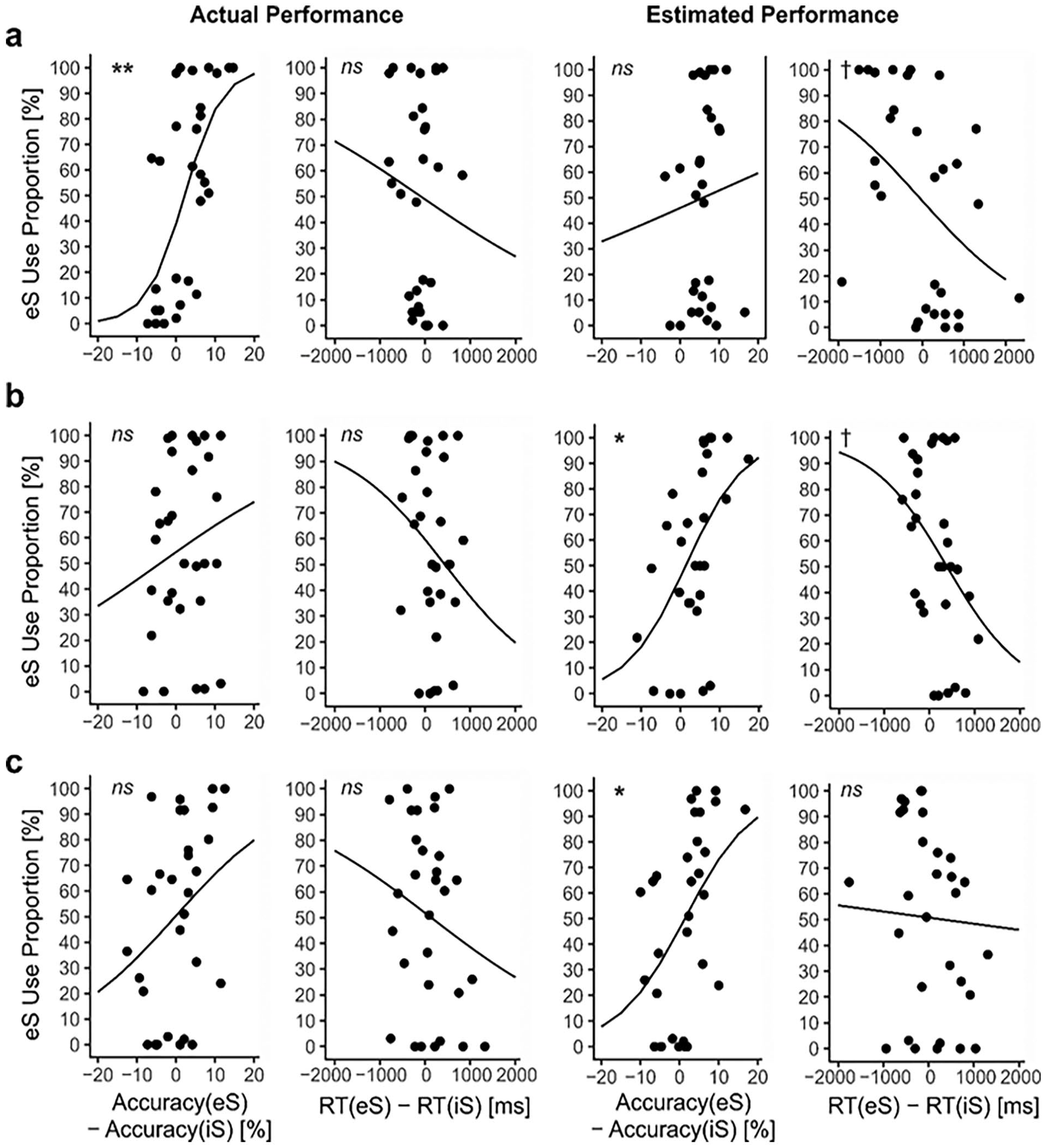

Only without feedback, actual performance differences predicted eS use proportion

Given that actual and estimated performance differences between internal and extended strategies were not correlated for the no feedback group, we wanted to gain an understanding of how much both measures influenced eS use proportion. To this end, we conducted two logistic regressions with eS Use Proportion as DV. For the first regression, we entered standardised actual performance differences throughout the no-choice block—i.e., ΔRT(extended-internal) and ΔAccuracy(extended-internal)—as predictors. For the second regression, we analogously entered the standardised estimated performance differences that participants estimated right after the no-choice block as predictors. We then tested the reduction of residual deviance against the null model for both models. Based on a .05 alpha level, results indicate that only actual (p = .0002) but not estimated performance (p = .0578) predicted eS use proportion; compare Table 1. Interestingly, the reverse was true for both conditions with feedback. Predicted eS use proportions for both accuracy and RT for each model are depicted in Figure 6. The associated model results can be inspected in Table S1. In sum, the no-feedback group predominantly used their actual performance in the no-choice block for strategy choice. However, ironically, both feedback groups relied little on actual performance. Instead, both feedback groups seemed to prefer relying on noisy performance estimates.
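The model comparison summarised in Table 1 tests the reduction in residual deviance against the null model on 2 degrees of freedom. The sketch below shows only that comparison step for aggregated binomial data (eS trials out of choice trials per participant); the fitted probabilities would come from a logistic regression fitted elsewhere, which we do not reproduce, and all function names are hypothetical. For 2 degrees of freedom, the chi-square survival function is exactly exp(−x/2), so no statistics library is needed.

```python
import math


def binomial_deviance(successes, trials, fitted_p):
    """Residual deviance of a binomial model for aggregated counts.

    successes: eS trials per participant; trials: choice trials per participant;
    fitted_p: model-predicted eS use probability per participant (0 < p < 1).
    """
    dev = 0.0
    for y, n, p in zip(successes, trials, fitted_p):
        if y > 0:
            dev += y * math.log(y / (n * p))
        if y < n:
            dev += (n - y) * math.log((n - y) / (n * (1 - p)))
    return 2 * dev


def null_deviance(successes, trials):
    """Deviance of the intercept-only model (one common eS use probability)."""
    p0 = sum(successes) / sum(trials)
    return binomial_deviance(successes, trials, [p0] * len(trials))


def deviance_test_df2(null_dev, model_dev):
    """Chi-square test of the deviance reduction on 2 df: p = exp(-delta / 2)."""
    delta = null_dev - model_dev
    return delta, math.exp(-delta / 2)
```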

Logistic regression results with actual or estimated ΔRT(eS-iS) and ΔAccuracy(eS-iS) predicting eS use proportion.

In Step 2, both ΔRT(eS-iS) and ΔAccuracy(eS-iS) were added as predictors. The predictor-wise results of the Step 2 models are provided in the Online Supplementary Material. The deviance test has 2 DoF for all model comparisons. eS = extended strategy (manual rotation); iS = internal strategy (mental rotation); DoF = degrees of freedom.

Logistic regression estimated marginal means.

Discussion

When asked how well they had performed with the mental and the manual rotation strategy, respectively, participants who did not receive written performance feedback estimated their performance poorly compared with participants who did. Although the extent to which participants needed feedback to estimate their performance accurately was somewhat surprising to us, the substantial impact of feedback was not. What did surprise us considerably, however, is that despite the metacognitive help that feedback clearly provided, it did not help participants make more adaptive strategy choices. On the contrary, our exploratory analyses suggest that feedback was ultimately more confusing than supportive. Only participants without feedback based their strategy choice on the actual performance differences between the mental and manual strategies. Participants who received either trialwise or blockwise feedback did not. Instead, the strategy choice of participants with feedback was associated with their estimated performance, which was likely so noisy that it provided comparably poor guidance.

Against the backdrop of the present findings, we discuss two questions. First, what is the mechanism behind adaptive choice (i.e., choice guided by the actual performance of the strategies) in participants without feedback? Second, why is this adaptive choice absent in participants who received either trialwise or blockwise feedback?

What is the mechanism behind adaptive choice for participants without written feedback?

When designing the experiment, we expected that participants would monitor their performance with both mental and manual rotation and eventually integrate the monitoring results into a consciously accessible representation. Such consciously accessible representations could then inform deliberate strategy choices. However, the present data contradict this scenario. They suggest that, when no feedback was given, participants neither created valid, consciously accessible performance estimates nor used their invalid estimates to inform strategy choice. We infer that explicit metacognition was largely irrelevant for the no-feedback group, meaning that no consciously accessible performance representations were created to mediate the choice process. It is conceivable that explicit representations other than performance estimates informed our participants’ choices, which we, however, deem somewhat unlikely given the importance of performance for conscious strategy choice (e.g., Gilbert, 2015; Weis & Kunde, 2024).

Instead, the present data suggest that implicit metacognition was at work (for reviews, see Cary & Reder, 2002; Frith & Frith, 2022; Koriat, 2007). In other words, we suggest that important parts of the monitoring process were carried out without creating explicit performance representations. The process might be analogous to the one that participants employed when solving arithmetic problems in a previous study: The frequency of arithmetic problems that allowed a quick internal strategy (the parity-check strategy; see Note 4) altered how frequently participants used that strategy. Crucially, when asked, participants in that study had no explicit representation of the existence of such a strategy (Lemaire & Reder, 1999). As a conceptual note, we want to add that it is challenging to disambiguate what we and others (see Frith & Frith, 2022) call implicit metacognition from lower-level processes like associative learning or episodic retrieval. Adaptive behaviour can emerge without an active “performance monitoring network” (Ullsperger et al., 2014), and we cannot rule out a substantial impact of such lower-level processes. Taken together, our results support the notion that problem solvers need not become aware of the reasons that influenced their strategy choice and that this unawareness does not necessarily harm, and might even benefit, performance.

Why is feedback associated with less adaptive strategy choice?

Since we provided participants with correct performance feedback, it was counterintuitive to find no relationship between the actual performance differences between the available strategies during the no-choice block and subsequent strategy choice. Because we did find such a relationship for participants without written feedback, we conclude that providing performance feedback must have corrupted strategy choice, either (1) indirectly, by interfering with implicit monitoring or with low-level processes like associative learning (see Note 5), or (2) more directly, by changing decision criteria.

Regarding the former, it is possible that the mere presence of written feedback focuses cognitive processing on the feedback, which might interfere with other, more beneficial processes. Such interference has been found, for example, with immediate as compared to delayed feedback in a motor skill task (Swinnen et al., 1990) or after error commission as compared to correct responding in a perceptual task (Buzzell et al., 2017). However, if such interference were the main reason for the missing correlation between actual performance differences and choice in the present study, blockwise feedback should have been superior to trialwise feedback, because participants encountered potentially interfering feedback far less often in the former than in the latter condition. Yet, since performance differences between mental and manual rotation during the no-choice block did not predict subsequent choice even in the blockwise feedback condition, interference seems an unlikely explanation.

Instead, our data suggest that, when written feedback was provided, choice was based less on information stemming from implicit metacognition or lower-level processes and more on explicit metacognition. Thus, our data support the notion that the decision criteria for strategy choice shifted when feedback was provided: The decision was based more on explicit representations of performance and less on implicit signals. Such a change in decision criteria might be analogous to a decreased reliance on what has been termed an implicit “inner” monitoring loop and an increased reliance on an “outer” monitoring loop. Inner and outer loops have been proposed as the basis of error detection in typists (Logan & Crump, 2010). Naturally, errors occur during typing. In that study, however, these errors were sometimes corrected before they appeared on the screen. At other times, errors appeared on the screen although the typing was correct. The study showed that inner and outer loops operate on different kinds of feedback. The inner loop relies on the implicit processing of one’s own typing signals, so that a typing error leads to a slow-down in subsequent typing irrespective of what is displayed on the screen. The outer loop relies on visual signals and affects explicit error processing: Participants explicitly took the blame for inserted errors and took credit for corrected errors.

That inner and outer loops exist and can work independently is in line with both the present data and an earlier study by Engeler and Gilbert (2020). In that study, participants in an experimental group predicted their performance before each forced trial and received feedback on their actual performance after each forced trial, for both an internal and an extended strategy. As in the present study, the intervention substantially improved how accurately participants estimated their performance with both strategies (see Note 6). However, in subsequent choice trials, participants in a control group without predictions and feedback made descriptively more, rather than less, adaptive choices between the internal and the extended strategy, again as in the present study.

It is an open question whether monetary incentives might render feedback more helpful. However, given that previous research found that monetary incentives could not remedy maladaptive choices between an internal and an extended strategy (Gilbert et al., 2020), it is unclear why this should change once feedback is provided.

Finally, we deem it possible that the presence of feedback frees mental resources. The awareness that feedback will be provided, either trialwise or blockwise, could lead to a top-down downregulation of implicit monitoring in the spirit of effort minimisation (see Delgado et al., 2005). In other words, we speculate that feedback might free cognitive resources that are usually devoted to implicit monitoring, such that feedback might not help performance but might still have a positive impact. Clearly, further research is required to address this speculation.

Conclusion and outlook

To solve problems efficiently, a well-informed choice between internal and extended strategies is essential. Here, we showed that written feedback can indeed improve participants’ awareness of strategy-specific performance. However, we also showed that increased awareness does not guarantee improved strategy choice and that written feedback might even interfere with more adaptive implicit mechanisms. Thus, we advise against implementing immediate written performance feedback without good reason. While explicit metacognition might indeed be a “human superpower” (Frith & Frith, 2022, p. 1023), it was not that powerful in the present setup. In that respect, providing specific strategy advice right before a specific problem (Gilbert et al., 2020) or general strategy advice preceding several problems of the same class (Weis & Kunde, 2023) can be more helpful than feedback. A better understanding of the interplay between explicit and implicit metacognition is desirable to create interventions that help human problem solvers make better cognitive strategy choices in the future.

Supplemental Material

sj-docx-1-qjp-10.1177_17470218241282659 – Supplemental material for When feedback backfires: Knowledge of results can impair cognitive strategy choice

Supplemental material, sj-docx-1-qjp-10.1177_17470218241282659 for When feedback backfires: Knowledge of results can impair cognitive strategy choice by Patrick P Weis and Wilfried Kunde in Quarterly Journal of Experimental Psychology

Footnotes

Author Contribution

This research was conceptualised by PPW, designed by PPW and WK, experimental code was written by PPW, and data was collected, curated, and analysed by PPW. Results were validated and visualised by PPW. The original draft was written by PPW and reviewed and edited by WK. Funding was acquired by PPW. The project was administered and supervised by PPW.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This research was funded by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) – 463896411.

Ethics statement

Informed consent was obtained from all individual participants included in the study. This research complied with the tenets of the Declaration of Helsinki and was approved by the Ethics Committee at a European university.


Data availability

Data, analysis, and stimulus materials for both experiments are available in an online repository [https://osf.io/4gfqc]. The study design was preregistered.

Supplementary material

The Supplementary Material is available at: qjep.sagepub.com

Notes

References
