Abstract

Many accounts of instruction-based learning assume that initial declarative representations are transformed into executable procedural ones, so as to enable instruction implementation. We tested the hypothesis that declarative-procedural transformation should be bound to a specific response modality and not transferable across different modalities. In Experiment 1, novel stimulus-response instructions had to be implemented either verbally or manually either once or three times. Modality-specific procedural encoding was probed via a subsequent implicit priming test. This involved the same stimuli but required a response that could be either compatible or incompatible with the originally instructed response using either the same or a different response modality. We found that procedural encoding was modality-specific as indicated by a stronger repetition-dependent increase of the compatibility effect when response modality was unchanged. Explicit test performance, serving as a marker of declarative encoding, was independent of modality transition and it was uncorrelated with implicit test performance. Unexpectedly, the implicit priming test also revealed a small yet significant transfer to the response modality that was previously not overtly implemented, likely reflecting covert response “simulation”. To examine if covertly simulated responding occurs even when instruction implementation is omitted altogether, we conducted Experiment 2. Subjects merely viewed novel stimulus-response instructions prior to testing. Again, we found evidence for procedural encoding of the non-implemented instructions. Moreover, a direct comparison of both experiments revealed higher test scores (both implicit and explicit) for previously non-implemented instructions than for previously implemented instructions. This calls for theoretical reconciliation with diverging previous study results.

Introduction

In contrast to more laborious trial-and-error learning, if directly instructed, people can rapidly acquire novel tasks, enabling high-performance accuracy from the outset (Cole et al., 2013; Collins & Cockburn, 2020; Liu et al., 2015; Mohr et al., 2018; Ruge, Karcz, et al., 2018; Wolfensteller & Ruge, 2012). An often-made assumption is that instruction-based learning necessarily requires a translation of task information, which is conveyed in an abstract format by “the sender” into actual concrete behavior by “the receiver” (De Houwer et al., 2017). Accordingly, many accounts of instruction-based learning assume that newly instructed tasks are first encoded in a “task model” (Duncan et al., 2008) on a symbolic or declarative level of representation (Bhandari & Duncan, 2014; Cole et al., 2011; Hartstra et al., 2011; Monsell & Graham, 2021; Ruge & Wolfensteller, 2010). Actual behavioural implementation of novel instructions then depends on the transformation into a pragmatic or procedural type of representation (Gonzalez-Garcia et al., 2021; Liefooghe & De Houwer, 2018; Monsell & Graham, 2021; Oberauer, 2010; Ruge et al., 2019; Ruge & Wolfensteller, 2010; Theeuwes et al., 2018). In line with this, neuropsychological reports suggest that frontal cortex damage can be associated with dissociations between “knowing and doing” in the sense that (typically manual) implementation of novel task instructions is impaired, whereas (declarative) verbal report of these instructions remains intact (Duncan et al., 1996; Luria, 1962, 1973; Milner, 1963).

Although the notion of declarative-procedural transformation underlies many accounts of instruction-based learning, a comprehensive characterisation of its basic nature is still lacking. To help fill this gap, the present study set out to test the assumption that procedural task representations formed via declarative-procedural transformation should be bound to a specific response modality. This research question was partly driven by questions arising from a previous study approach which examined the relevance of overt instruction implementation for shaping procedural task representations. Hence, before turning to a more detailed description of our present study rationale (section “The present study rationale”), we first elaborate on these earlier results and their potential implications and limitations.

Previous studies

The relevance of overt instruction implementation can be examined by directly comparing behavioural effects following novel instructions that had never been implemented before with novel instructions that had been successfully implemented at least once (Jargow et al., 2022; Pfeuffer et al., 2017, 2018). The specific study design was based on a prime–probe setup where prime trials involved novel stimulus-response rules which could be either merely instructed or immediately implemented. Instruction learning success was subsequently probed by quantifying how strongly the “prime task” would interact with the implementation of a “probe task” involving the same stimulus set but requiring either a different response according to another task rule (incompatible trials) or the same response as before (compatible trials). A first important observation was that immediately implemented prime task instructions compared with merely instructed prime task instructions resulted in a significantly larger priming effect (i.e., compatibility effect) in the subsequent probe task (Pfeuffer et al., 2017). Yet, despite this benefit of prior immediate instruction implementation this study also showed that mere instruction was sufficient to induce a small yet statistically significant priming effect. This latter finding is in line with a variety of previous studies showing priming-like aftereffects following merely instructed tasks (e.g., Braem et al., 2017; Liefooghe & De Houwer, 2018; Meiran et al., 2015; Wenke et al., 2009). Observations like this might commonly suggest a potential role of covert or “simulated” instruction implementation even when the instructions are not overtly executed (cf., Liefooghe & De Houwer, 2018; Liefooghe et al., 2021; Palenciano et al., 2021; Ruge & Wolfensteller, 2010; Theeuwes et al., 2018). 
A preliminary conclusion is therefore that the learning processes following mere instruction and following immediate instruction implementation are qualitatively similar. They differ primarily in quantitative terms, namely in the efficacy of the declarative-procedural transformation process engaged during overt versus covert instruction implementation.

A more complicated picture emerged in a follow-up study by Pfeuffer et al. (2018). The authors examined the same type of priming effects and this time additionally included a manipulation of instruction repetition (once versus four times). The authors observed a highly interesting dissociation, namely, that only the repetition of immediately implemented instructions significantly amplified subsequent priming effects (see also Moutsopoulou et al., 2015). This contrasted with the repetition of merely instructed prime trials, which failed to induce a significant repetition benefit beyond the “baseline” priming effect already established after a single merely instructed prime trial. Despite this clear-cut empirical dissociation between merely instructed prime trials (repetition benefit absent) and immediately implemented prime trial instructions (repetition benefit present), a straightforward interpretation remains elusive. At least two decisively different explanations are equally consistent with this finding.

First, it may reflect qualitatively different types of instruction encoding proceeding at different rates of learning. In this scenario, mere instruction might exclusively involve declarative encoding, which reaches its peak levels rapidly, possibly after just one repetition (termed “one-shot learning”). By contrast, immediate instruction implementation might involve declarative-procedural transformation processes leading to a gradual strengthening of procedural representations over multiple implementation trials. Clearly, this first account stands against our earlier conclusion stating only quantitative encoding differences between the two prime trial conditions. Specifically, we had concluded that both merely instructed as well as immediately implemented prime trials commonly rely on the very same fundamental type of declarative-procedural transformation during covert and overt implementation, respectively.

An alternative second account, however, can reconcile the notion of a shared declarative-procedural transformation process with the absence of a repetition benefit for mere instruction trials. Crucially, the lack of external feedback during mere instruction trials regarding one’s engagement in covert implementation might result in a procedural encoding process that is generally less reliable and less enduring across repetitions compared with immediately implemented prime trials. Specifically, on the first instruction encounter, sheer novelty might capture attention and trigger covert instruction implementation, which might be sufficient to induce a weak but significant priming effect later on. During subsequent mere instruction trials, however, attention and task commitment might rapidly fade which could explain why the priming effect is not further boosted.

The present study rationale

The current study aimed to enable more clear-cut conclusions regarding the involvement of declarative and procedural encoding processes while at the same time eliminating potential confounds due to differences in attention and task commitment between different instruction conditions. To this end, we employed a new “procedural transfer” approach. Our rationale can be readily exemplified by Experiment 1 of this article, which was based on a similar prime–probe design as in Pfeuffer et al. (2018). Crucially, the mere instruction condition was replaced by an additional instruction implementation condition using a second response modality, in this case, overt verbal responding. Hence, prime trial instructions were always immediately implemented (either verbally or manually) and probe trial response modality could be either the same (e.g., prime verbal—probe verbal) or different (e.g., prime verbal—probe manual). This design enabled us to assess the relevance of (repeated) declarative-procedural transformation processes which should be indicated by a response-modality-specific amplification of the priming effect. A procedural rule representation (as a result of successful declarative-procedural transformation) should entail concrete (motoric) information about the way a response has to be made (e.g., the stimulus “apple” requires a right index finger button press), whereas this is not necessarily the case for declarative representations (e.g., house—right). Therefore, procedural rule representation should be inherently modality-specific. For instance, if probe trials required manual implementation, priming effects should be larger following manually implemented prime trials than following verbally implemented prime trials.

An additional important goal of the present study was to assess the impact of different instruction conditions not only by means of a probe trial priming test (i.e., a more “implicit” test) but also by means of a more explicit probe trial test where correct performance necessarily depended on prime trial instruction retrieval (Braem et al., 2019; Ruge, Karcz, et al., 2018). In the implicit test, a correct probe trial response only depended on the current probe trial task instruction which might be unintentionally affected by the still lingering but currently irrelevant prime trial task instruction. By contrast, the explicit test was constructed such that a correct probe trial response depended exclusively on the prime trial task instruction which needed to be retrieved from memory. Interestingly, a recent well-powered study showed that more implicit tests vs. more explicit tests of instruction memory were only weakly correlated (Braem et al., 2019). This, in turn, suggests that the two test types might—for the most part—capture distinct mnemonic subcomponents. This is also consistent with previous study examples from the classical conditioning domain demonstrating that the effects of mere instruction vs. implementation (or experience) can depend on the type of test or dependent variable being assessed (Olsson & Phelps, 2004; Sevenster et al., 2012). In the current study, we thus reasoned that the explicit test would be a more direct test of modality-independent declarative task representations than the implicit priming test. Derived from this, our assumption was that explicit test performance, and especially so explicit test accuracy, should be independent of modality-specific declarative-procedural transformation processes. Hence, even if the prime instruction (e.g., apple—left) was implemented verbally (or manually), this instruction should be remembered equally well also when probe response modality changes from verbal to manual (or from manual to verbal).

We conducted two experiments, the first particularly designed to examine the impact of prime–probe response modality transition as a means to further characterise the hypothesised involvement of declarative-procedural transformation processes. Experiment 2 employed merely instructed prime trials as in the work of Pfeuffer et al. (2018) while still employing the two different response modalities in probe trials. This experiment was particularly designed to enable a direct comparison with the present Experiment 1 under comparable overall context conditions.

Experiment 1

Experiment 1 aimed at probing the involvement of declarative-procedural transformation processes in instruction-based learning. As outlined in the general Introduction, we assumed that declarative-procedural transformation should be specific to a certain response modality. Accordingly, our first hypothesis was that novel stimulus-response instructions that were implemented either manually or verbally in prime trials would affect subsequent probe trial performance more strongly when probe trial response modality was the same as during prime trials. In the following, we call this experimental factor “prime–probe modality transition.” Second, we assumed that the formation of procedural representations (through declarative-procedural transformation) would proceed incrementally. Hence, our second and most crucial hypothesis was that the prime–probe modality transition effect should be stronger following three vs. one prime trial(s) per individual S–R instruction.

Importantly, we employed two randomly intermixed types of prime–probe blocks involving different types of probe trials which we assumed to be differentially sensitive to declarative and procedural representations, respectively. One type entailed the explicit recall of the previously instructed S–R associations from the prime trial phase. It was assumed to reflect instruction encoding on the declarative level and hence to be independent of the particular prime–probe response modality combination. We called this type of block “explicit test.” In contrast, the other block type included compatible and incompatible trials with respect to the previously instructed prime trials. It was used to assess the prime-to-probe compatibility effect and was expected to be affected by prime–probe modality transition. Accordingly, we predicted that the compatibility effect should increase with an increasing number of implemented prime trial instructions, but more so when the response modality was the same in prime and probe trials. We called this kind of block “implicit test.”

Participants

Eighty human subjects participated in this study (52 female, 28 male; mean age: 23.4 years, range 18–34 years). All participants were right-handed and had normal or corrected-to-normal vision. All subjects gave written informed consent in accordance with the Declaration of Helsinki and were paid €8 per hour or received course credit.

Materials and methods

The experiment was programmed and executed with the Presentation® software version 19. The experiment comprised a total of 48 prime–probe blocks for each subject. Each block comprised a unique set of four different visually presented stimuli. For half of the blocks, stimuli were written disyllabic words taken from a previous instruction-based learning protocol (Ruge et al., 2019; Ruge, Legler, et al., 2018). For the other half of the blocks, stimuli were abstract line drawings taken from another previous instruction-based learning protocol (Ruge, Karcz, et al., 2018; Ruge & Wolfensteller, 2010). In all the reported analyses, the different stimulus types were lumped together. Stimulus sets were randomly drawn for each participant. Each block started with a prime trial phase followed by a probe trial phase (Figure 1). During the prime trial phase, each stimulus was presented either once or three times (i.e., a total of 4 or 12 prime trials). During the probe trial phase, each stimulus was presented once (i.e., a total of four probe trials). The probe trial phase started after all prime trials had been completed. During all prime trials, the correct response (left, middle, and right of the right hand) was instructed via one of three shapes surrounding the stimulus, a triangle, a square, and a regular pentagon, respectively. The assignment of these response cues to responses was counterbalanced across participants and was constant across the entire experiment for a particular participant. To examine the prime–probe transition regarding response modality, the executed responses could be either manual (index finger, middle finger, or ring finger of the right hand) or verbal (overtly uttered words “left,” “middle,” and “right”). The Presentation software provided an algorithm that automatically classified the verbal responses according to the three options (“left,” “middle,” and “right”). 
This enabled us to evaluate verbal response accuracy online and display accuracy feedback accordingly. Note that unlike Pfeuffer et al. (2017, 2018) but similar to the vast majority of other instruction-based learning paradigms, the task instructions exclusively involved stimulus-response assignments (e.g., table—right index finger), whereas an additional level of stimulus classification (e.g., table—manmade—right index finger) was omitted in the present study.

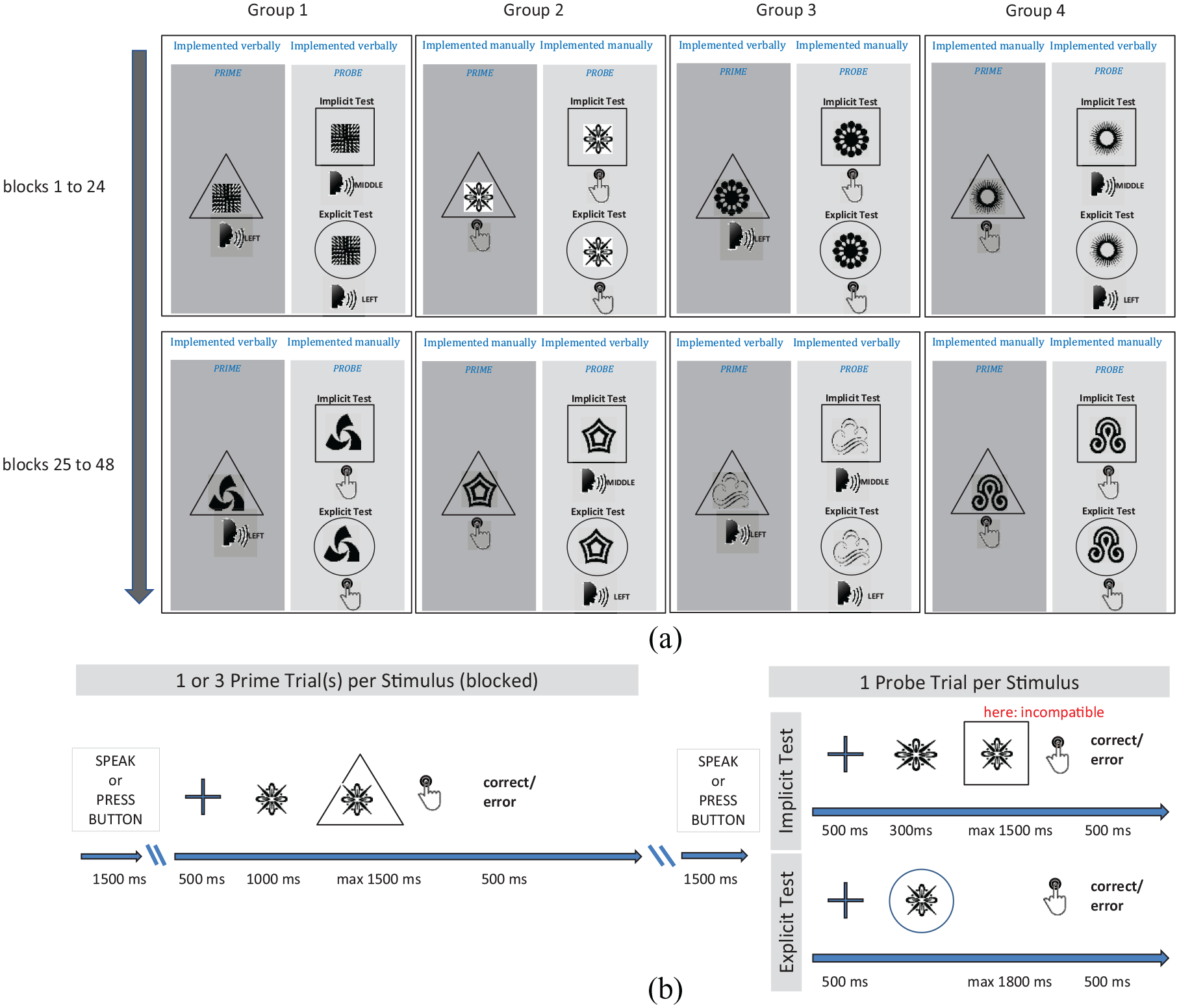

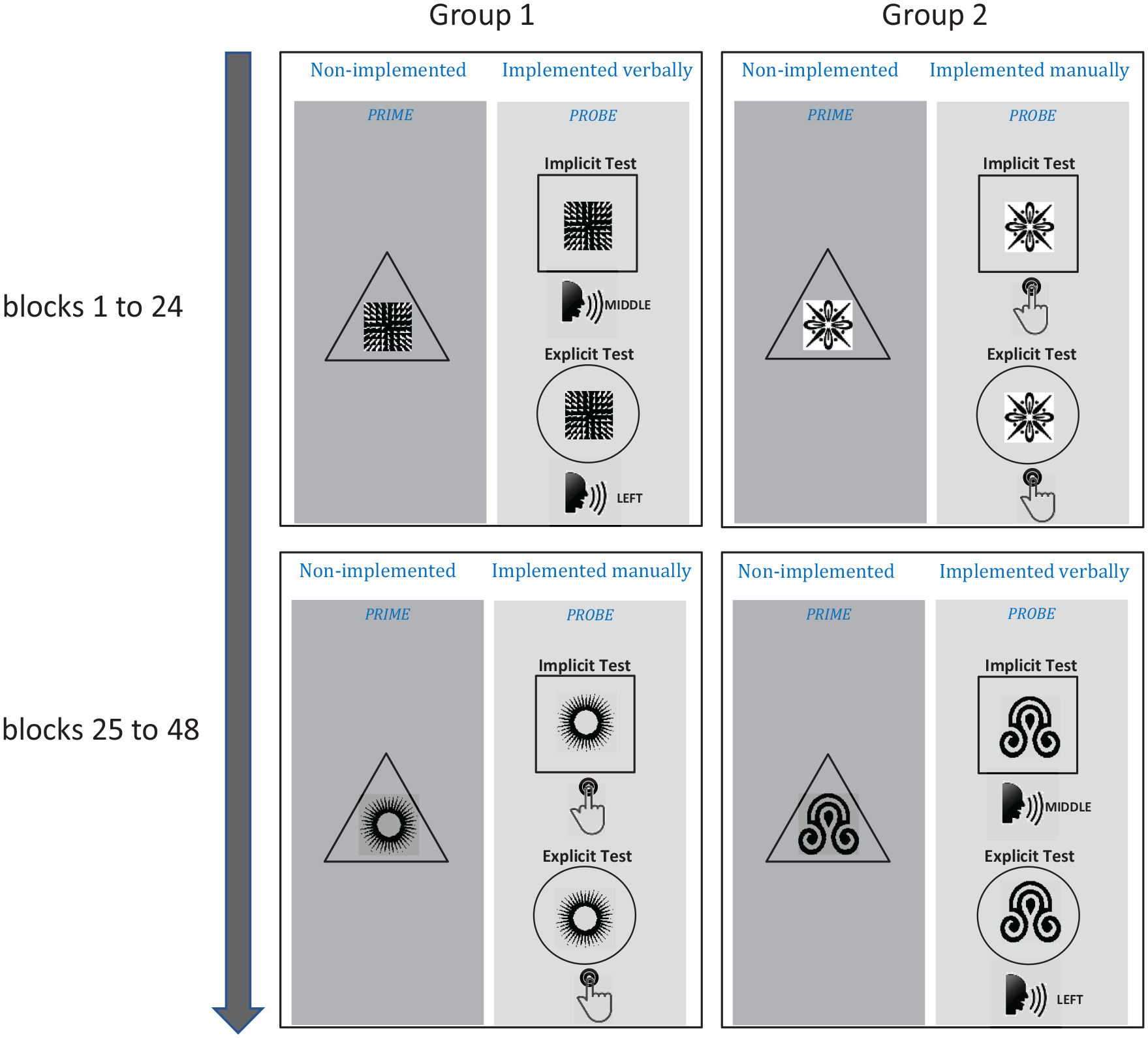

Figure 1. Experimental design of Experiment 1. (a) Illustration of exemplary trials for different combinations of prime and probe trial types as a function of response modality (verbal or manual) in four different groups of subjects. In each group, the transition from prime to probe modality changed after learning Block 24. In each learning block, a unique set of four different stimuli (either abstract visual shapes or written words) was used. The required response (left, middle, right) was indicated by a geometrical shape (triangle, square, regular pentagon). Probe trials could be either an implicit priming test or an explicit recall test. In the implicit test, the same response cues were used as in the prime phase and indicated responses that could be either compatible or incompatible with the instructed prime trial response. In the explicit test, a circle indicated that the originally instructed prime trial response should again be used. (b) Within-trial timing of events for one exemplary prime trial and one exemplary probe trial (here: incompatible implicit test trial). The prime trial phase comprised either one or three repetitions of each of the four stimuli (i.e., 4 or 12 prime trials). The probe trial phase comprised one repetition of each of the four stimuli.

Although prime trials were of the same type for all 48 prime–probe blocks (only the stimuli differed), the probe trials could be either “implicit” test trials or “explicit” test trials randomly drawn for each prime–probe block (24 blocks each). Implicit probe trials were similar to prime trials, again comprising a response cue (triangle, square, or regular pentagon) denoting the now correct response for a given stimulus. The instructed probe trial response could be either the same as the prime trial response for that stimulus (compatible) or different from the prime trial response for that stimulus (incompatible).

In explicit probe trials, each stimulus was surrounded by a circle indicating that the original prime trial response needed to be retrieved from memory and executed.

Prime trials started with a 500 ms fixation cross presented in the centre of the screen, followed by a stimulus displayed centrally for 1,000 ms before the response cue was added to the stimulus and displayed for another max. 1,500 ms or until response execution. The response was followed by feedback, which was displayed centrally for 500 ms and indicated correct, erroneous, too slow responding, or using the wrong response modality (manual/verbal).

Implicit probe trial timing was identical to prime trial timing except that the interval between stimulus onset and cue onset was only 300 ms (instead of 1,000 ms). Explicit probe trial timing was identical to implicit probe trial timing except that the memory retrieval cue (circle) was displayed simultaneously with the stimulus.

Note that the compatibility effect evaluated in the implicit priming test could in principle reflect two different associative components involving the block-specific novel stimuli and the three response cues used throughout the entire experiment (triangle, square, and regular pentagon). Let us assume, for instance, the prime trial combination of S1 (table), RC1 (triangle), and R1 (index finger) together with the incompatible probe trial combination of S1 (table), RC2 (square), and R2 (middle finger). Then, probe performance could be impaired for two reasons. First, RC2 activates a representation of R2 whereas S1 (if prime trial learning was successful) directly activates a representation of R1. This causes a response conflict between R1 and R2. Second, probe trial S1 might additionally also activate a representation of RC1 (from the prime trial) which, in turn, might also activate a representation of R1 (via RC1–R1 association). Hence, in principle, the compatibility effect could be induced via [S-RC]–R associations and/or directly via S–R associations. Yet, direct S–R activation seems more plausible given that the subjects’ prime trial task was to produce a response and not to memorise a stimulus–stimulus (S-RC) association. Moreover, subjects could not anticipate whether the probe phase would comprise an implicit test or an explicit test. As the explicit test did not include RCs and therefore requires the retrieval of prime trial S–R associations, this again encourages the formation of direct S–R associations during the prime phase and its re-activation during the probe phase.

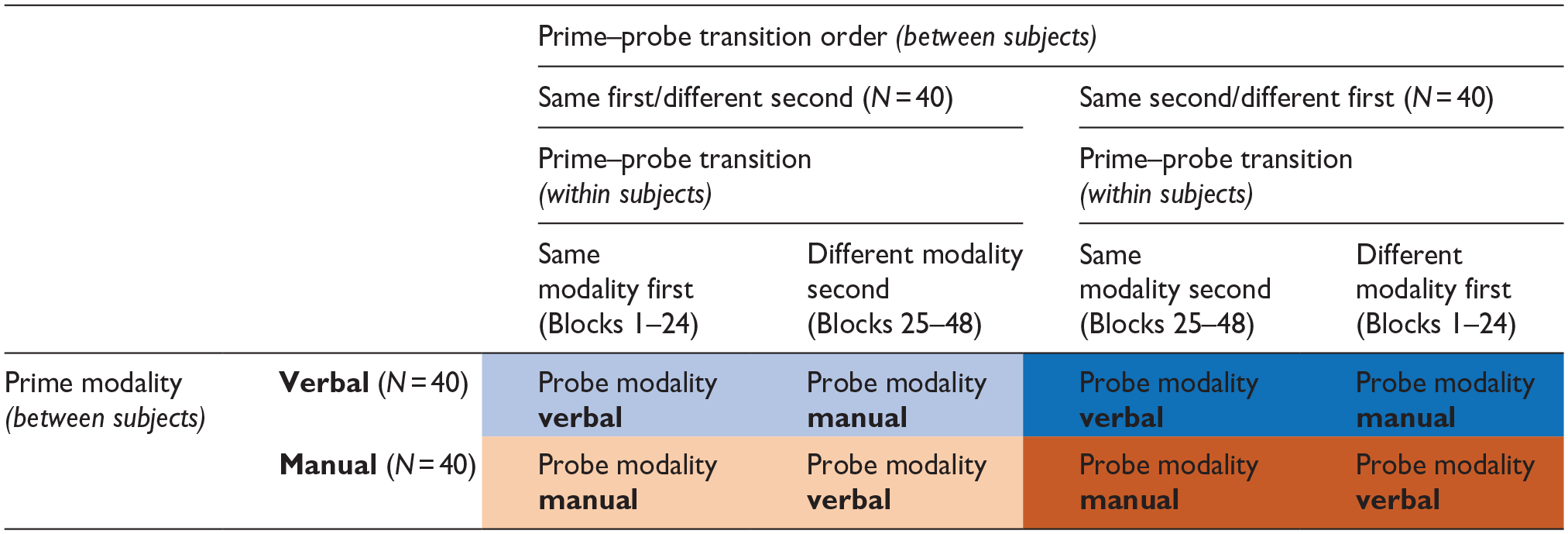

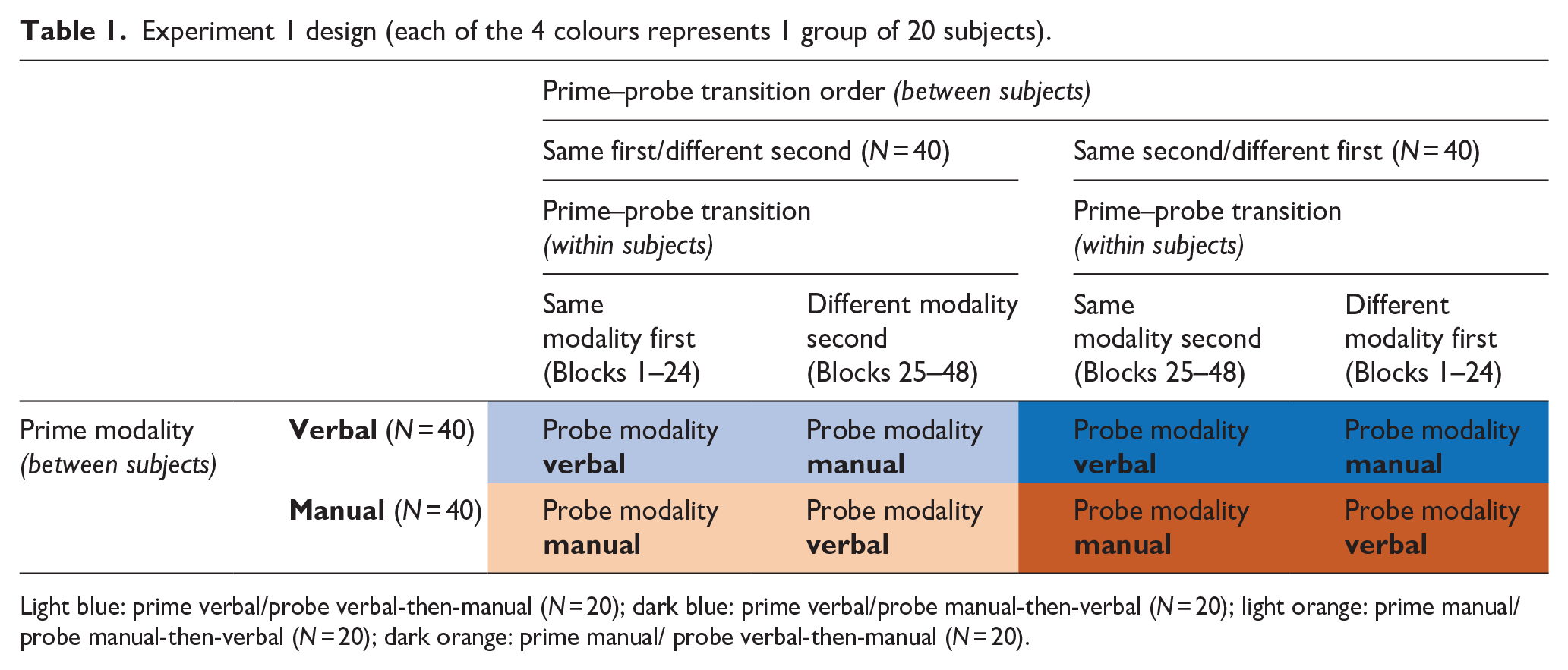

To examine the impact of prime–probe response modality transition, half of the prime–probe blocks (i.e., 24) involved the same response modality in both prime and probe trials (i.e., manual–manual or verbal–verbal), whereas the other half involved different response modalities (i.e., manual–verbal or verbal–manual). Before the start of a new prime trial phase as well as before the start of a new probe trial phase, a general instruction regarding response modality was displayed to remind subjects to respond either verbally or manually (see Figure 1). To not confuse the participants, the different prime–probe combinations regarding response modality (manual–manual; manual–verbal; verbal–verbal; verbal–manual) were blocked within subjects and the order of blocked modality combinations was counterbalanced across different groups of participants (each N = 20). Specifically, for each group of participants, prime–probe blocks 1–24 consistently realised only 1 of the 4 possible modality transition conditions (e.g., prime: manual; probe: manual) and then switched to another modality transition condition for the remaining blocks 25–48 (e.g., prime: manual; probe: verbal). Prime trial modality remained the same for all 48 blocks within each group. This scheme resulted in four groups, including (i) “prime:manual; probe:manual-then-verbal,” (ii) “prime:manual; probe:verbal-then-manual,” (iii) “prime:verbal; probe:verbal-then-manual,” and (iv) “prime:verbal; probe:manual-then-verbal.” Importantly, this counterbalancing scheme (see Table 1) ensured that each prime–probe modality transition condition (same modality, different modality) was realised equally often in the first and second half of the experiment, thereby precluding potential biases due to order effects.
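
The counterbalancing scheme described above can be sketched in a few lines. This is only an illustrative reconstruction: the group labels follow the text, whereas the function and variable names are hypothetical and the original experiment was run in Presentation, not Python.

```python
# Hypothetical reconstruction of the group-wise block schedule (names are illustrative).
# Each group has a fixed prime modality and a probe modality that switches after block 24.
GROUPS = {
    "i": ("manual", ("manual", "verbal")),    # prime manual; probe manual-then-verbal
    "ii": ("manual", ("verbal", "manual")),   # prime manual; probe verbal-then-manual
    "iii": ("verbal", ("verbal", "manual")),  # prime verbal; probe verbal-then-manual
    "iv": ("verbal", ("manual", "verbal")),   # prime verbal; probe manual-then-verbal
}


def block_schedule(group, n_blocks=48):
    """Return one dict per prime-probe block for the given group."""
    prime, (first_probe, second_probe) = GROUPS[group]
    half = n_blocks // 2
    schedule = []
    for block in range(1, n_blocks + 1):
        probe = first_probe if block <= half else second_probe
        transition = "same" if probe == prime else "different"
        schedule.append(
            {"block": block, "prime": prime, "probe": probe, "transition": transition}
        )
    return schedule


def transition_counts_by_half(n_blocks=48):
    """Count how often each transition condition occurs in each half, summed over groups."""
    half = n_blocks // 2
    counts = {(h, t): 0 for h in ("first", "second") for t in ("same", "different")}
    for group in GROUPS:
        for entry in block_schedule(group, n_blocks):
            h = "first" if entry["block"] <= half else "second"
            counts[(h, entry["transition"])] += 1
    return counts
```

Summed over the four groups, each transition condition occupies the same number of blocks in the first and second half of the session, which is the property the scheme is meant to guarantee.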

Table 1. Experiment 1 design (each of the four colours represents one group of 20 subjects).

Light blue: prime verbal/probe verbal-then-manual (N = 20); dark blue: prime verbal/probe manual-then-verbal (N = 20); light orange: prime manual/probe manual-then-verbal (N = 20); dark orange: prime manual/ probe verbal-then-manual (N = 20).

To familiarise themselves with the task procedure, subjects had to practise at least four prime–probe blocks before the start of the 1st and the 25th prime–probe block of the main experiment. This included two implicit and two explicit probe trial phases in random order. Different from the main experiment, a particular stimulus was repeated until correct responding in prime and probe trials. Subjects were told about this before the start of each practice phase. If the proportion of errors exceeded 75% in the last two explicit probe blocks, two blocks were added.

Before the start of the experiment, participants were instructed to respond as quickly and as accurately as possible and only after the presentation of the response cue (implicit test condition) or the memory recall cue (explicit test condition). Also, participants were told that implicit and explicit probe trial blocks were randomly intermixed. They were reminded that it was therefore important to always try to thoroughly memorise the instructed prime trial responses to be prepared for a potentially upcoming explicit probe test.

Data analysis

Mean response times (RTs) and mean error rates were analysed for both prime trials and probe trials. Mean RTs were computed for correct trials exceeding a minimum RT of 200 ms relative to the onset of the response cue. Regarding probe RTs, trials were only included when the corresponding prime trial for a specific stimulus had been correctly implemented as well. Error rates were computed as the proportion of error trials (wrong responses) relative to the number of all trials excluding those where participants used the wrong response modality or failed to respond at all.
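
As a minimal sketch of these inclusion rules, the following function computes mean RT and error rate from a list of trials. The trial format, the outcome labels, and the function name are assumptions made for illustration; in particular, how "too slow" responses were scored is not specified here, so they are treated as missing responses.

```python
def summarise_trials(trials, min_rt_ms=200):
    """Compute (mean correct RT, error rate) under the stated inclusion rules.

    Each trial is a dict with (hypothetical) keys:
      'rt'            - RT in ms relative to response cue onset (None if no response)
      'outcome'       - 'correct', 'error', 'wrong_modality', or 'no_response'
      'prime_correct' - optional; for probe trials, whether the corresponding
                        prime trial(s) for that stimulus were correct
    """
    # Error rate: wrong responses relative to all trials, excluding
    # wrong-modality trials and trials without any response.
    valid = [t for t in trials if t["outcome"] in ("correct", "error")]
    n_errors = sum(1 for t in valid if t["outcome"] == "error")
    error_rate = n_errors / len(valid) if valid else float("nan")

    # Mean RT: correct trials above the minimum RT; probe trials count only
    # if the corresponding prime trial was implemented correctly.
    rts = [
        t["rt"]
        for t in valid
        if t["outcome"] == "correct"
        and t["rt"] > min_rt_ms
        and t.get("prime_correct", True)
    ]
    mean_rt = sum(rts) / len(rts) if rts else float("nan")
    return mean_rt, error_rate
```

For prime trials the `prime_correct` key can simply be omitted, in which case only the accuracy and minimum-RT criteria apply.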

For the analysis of prime and probe trial performance, the mean RTs and mean error rates were submitted to separate repeated measures analyses of variance (ANOVAs) including the within-subjects factor “prime number” (i.e., number of prime trials per stimulus; 1 or 3), the within-subjects factor “prime–probe modality transition” (same modality or different modalities), and the between-subjects factor “prime modality” (manual or verbal). Note that with this factorisation of the different conditions, the main effect of probe modality (manual vs. verbal) is captured by the interaction effect of prime modality × prime–probe modality transition.
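
The note on factorisation can be checked with a simple contrast-coding exercise. The ±1 codes below are an illustrative assumption rather than the coding used by any particular ANOVA routine; the point is only that the product of the two codes identifies probe modality.

```python
from itertools import product

# Illustrative +1/-1 contrast codes (an assumption made for this sketch).
PRIME = {"manual": +1, "verbal": -1}
TRANSITION = {"same": +1, "different": -1}


def probe_modality(prime, transition):
    """Derive probe modality from prime modality and prime-probe transition."""
    if transition == "same":
        return prime
    return "verbal" if prime == "manual" else "manual"


def interaction_code(prime, transition):
    """Product of the two main-effect codes, i.e. the interaction contrast."""
    return PRIME[prime] * TRANSITION[transition]


# One row per design cell: (prime, transition, probe modality, interaction code).
rows = [
    (p, t, probe_modality(p, t), interaction_code(p, t))
    for p, t in product(PRIME, TRANSITION)
]
for row in rows:
    print(row)
```

In every cell the interaction code is +1 when the probe is manual and -1 when it is verbal, so the interaction contrast and the probe modality contrast coincide.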

The analysis of implicit probe test trials included “S-R compatibility” (compatible or incompatible) as an additional within-subjects factor which measured the “implicit priming effect.” As important aspects of the implicit test results turned out to be heavily affected by modality-specific speed–accuracy trade-offs, we decided to perform the main analysis based on the Linear Integrated Speed-Accuracy Score (LISAS). LISAS represents a weighted sum of correct RTs and error rates such that a higher RT value can be compensated by a lower error rate value and thereby yields more easily interpretable results (Bakun Emesh et al., 2022; Vandierendonck, 2017, 2021).
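
For illustration, LISAS as commonly defined following Vandierendonck (2017) is, for condition j, the mean correct RT plus the proportion of errors weighted by the ratio of the participant's overall RT standard deviation to the overall error standard deviation. The sketch below implements this definition for a single participant. The data layout, the function name, and the use of the population standard deviation are assumptions, and the exact computation used for the reported analyses may differ in such details.

```python
from statistics import mean, pstdev


def lisas(trials_by_condition):
    """LISAS per condition for one participant.

    trials_by_condition maps a condition label to a list of
    (rt_ms, is_correct) tuples. Layout and names are hypothetical.
    """
    all_trials = [t for trials in trials_by_condition.values() for t in trials]

    # Participant-level standard deviations across all conditions.
    s_rt = pstdev([rt for rt, ok in all_trials if ok])           # correct RTs only
    s_pe = pstdev([0.0 if ok else 1.0 for _, ok in all_trials])  # binary error indicator

    # Without any errors the score reduces to the mean correct RT.
    weight = s_rt / s_pe if s_pe > 0 else 0.0

    scores = {}
    for condition, trials in trials_by_condition.items():
        rt_j = mean(rt for rt, ok in trials if ok)            # mean correct RT
        pe_j = mean(0.0 if ok else 1.0 for _, ok in trials)   # proportion of errors
        scores[condition] = rt_j + weight * pe_j
    return scores
```

The implicit priming effect would then be the difference `scores["incompatible"] - scores["compatible"]`, so that slower and more error-prone incompatible trials both push the effect in the same direction.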

Furthermore, to increase interpretability, especially of non-significant null-hypothesis testing results, we performed complementary Bayesian analyses using Bayesian ANOVA and Bayesian correlation within the JASP software, version 0.18.1 (JASP-Team, 2023). For the Bayesian ANOVAs, we included the maximal set of random effects (van den Bergh et al., 2023).

For correlations, conclusions were based on BF10 (i.e., Bayes factor denoting the change in the odds favouring H1 over H0 given the data). For ANOVAs, conclusions were based on BFincl denoting the change in the odds favouring the model given the data achieved by including models with a certain effect (main effects or interactions averaged across matched models).

For the interpretation of Bayes factors, we used a popular heuristic (Schonbrodt & Wagenmakers, 2018). Evidence in favour of the null hypothesis (or against the inclusion of a certain effect in the ANOVA model) would be considered only “anecdotal” for 1/3 ⩽ BF < 1, “moderate” for 1/10 ⩽ BF < 1/3, “strong” for 1/30 ⩽ BF < 1/10, “very strong” for 1/100 ⩽ BF < 1/30, and “extreme” for BF < 1/100. Vice versa, evidence in favour of the alternative hypothesis (or for the inclusion of a certain effect in the ANOVA model) would be considered only “anecdotal” for 3 ⩾ BF > 1, “moderate” for 10 ⩾ BF > 3, “strong” for 30 ⩾ BF > 10, “very strong” for 100 ⩾ BF > 30, and “extreme” for BF > 100.
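
The verbal labels above amount to a simple lookup, sketched here as a helper function. The function name and return format are hypothetical, and the handling of a Bayes factor of exactly 1 is an assumption because the heuristic leaves that case undefined.

```python
# Upper bounds of the evidence categories for BF > 1; the bounds for BF < 1
# are the reciprocals, as in the heuristic described above.
THRESHOLDS = [(3, "anecdotal"), (10, "moderate"), (30, "strong"), (100, "very strong")]


def evidence_label(bf):
    """Return (favoured hypothesis, verbal label) for a Bayes factor (BF10 or BFincl)."""
    if bf <= 0:
        raise ValueError("Bayes factors must be positive")
    if bf == 1:
        # Assumption: exactly 1 favours neither hypothesis.
        return ("neither", "none")
    favoured = "H1" if bf > 1 else "H0"
    for bound, label in THRESHOLDS:
        within = bf <= bound if bf > 1 else bf >= 1 / bound
        if within:
            return (favoured, label)
    return (favoured, "extreme")
```

For example, `evidence_label(0.170)` returns `("H0", "moderate")` and `evidence_label(2.714)` returns `("H1", "anecdotal")`.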

Results

Prime trials

Although the primary hypotheses targeted probe trial performance, we nevertheless also examined how some of the key experimental manipulations affected prime trial RTs and error rate. We therefore examined whether prime trial RTs and error rates would reflect the benefits of repeated S–R implementation (main effect number of prime trials), and we examined potential differences between prime response modalities (verbal vs. manual). We also tested whether prime trial performance would be affected by prime–probe modality transition (i.e., whether subjects expected the same or different response modality in the upcoming probe trials). It was important to show that prime trial performance would not be affected by prime–probe modality transition. This was indeed the case (see below). Thereby we could ensure that probe trial performance (our primary analysis target) is not confounded with altered prime trial processing in anticipation of upcoming probe trial response modality.

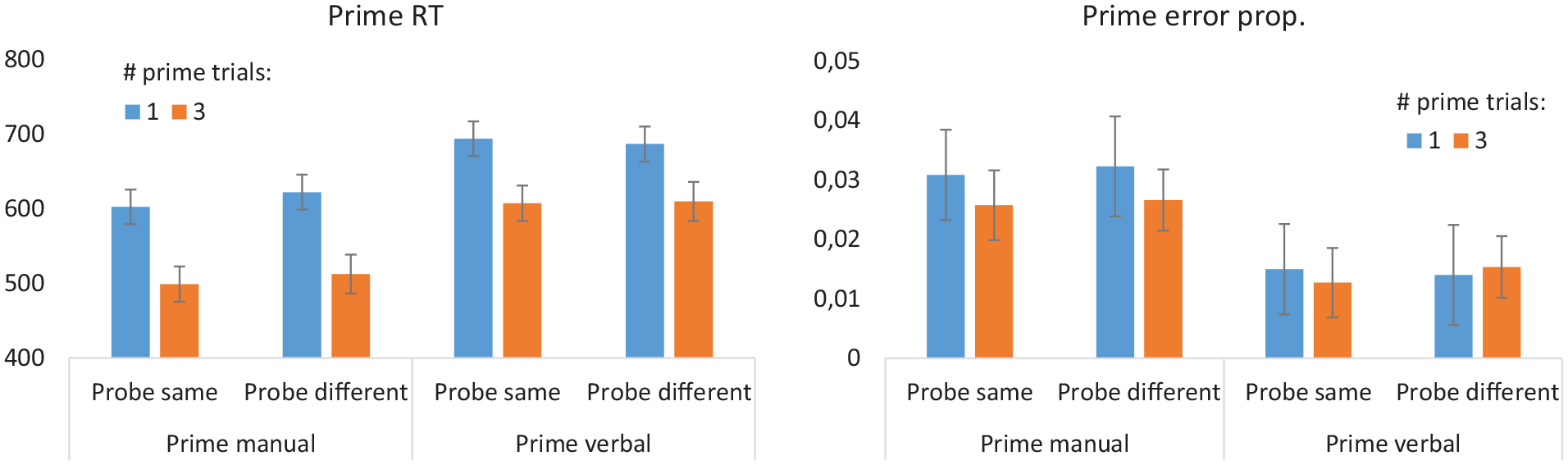

These results are depicted in Figure 2.

Figure 2. Prime trial results for Experiment 1. Error bars denote 95% confidence intervals. Note that the distinction between “Probe same” and “Probe different” refers to the upcoming probe trial modality.

Response times

Generally, a greater number of prime trials per stimulus (3 vs. 1) significantly reduced RTs with extreme evidence in favour of the H1, F(1, 78) = 306.7, p(F) < .001, ηp² = 0.80, BFincl = 1.158 × 10²⁵. This reduction was significantly stronger for manual than verbal prime trials with anecdotal evidence in favour of the H1, F(1, 78) = 5.5, p(F) < .022, ηp² = 0.065, BFincl = 2.714. Most importantly, there was neither a significant interaction between prime–probe modality transition and prime number with moderate evidence in favour of the H0, F(1, 78) = .104, p(F) > .748, ηp² < 0.001, BFincl = 0.170, nor between prime–probe modality transition, prime number, and prime modality with anecdotal evidence in favour of the H0, F(1, 78) = 2.194, p(F) > .143, ηp² < 0.027, BFincl = 0.604. Generally, verbal RTs were significantly slower than manual RTs with extreme evidence in favour of the H1, F(1, 78) = 35.3, p(F) < .001, ηp² = 0.312, BFincl = 78.168 × 10³.

Error rate

Mean error rate was generally very low (<3.1%), indicating that the pre-trained response cues (triangle, square, pentagon) were well memorised. Error rate was significantly smaller for verbal responses than manual responses with extreme evidence in favour of the H1, F(1, 78) = 18.35, p(F) < .001, ηp² = 0.19, BFincl = 397.478. As for RTs, there was neither a significant interaction between prime–probe modality transition and prime number with moderate evidence in favour of the H0, F(1, 78) = 0.137, p(F) > .712, ηp² < 0.002, BFincl = 0.197, nor between prime–probe modality transition, prime number, and prime modality with moderate evidence in favour of the H0, F(1, 78) = .258, p(F) > .613, ηp² < 0.003, BFincl = 0.268.

The combination of reduced error rate and prolonged RT for verbal responses compared with manual responses hints at a modality-related speed-accuracy trade-off, with more accurate but slower verbal responding and faster but less accurate manual responding. This seems to be a general pattern, which also re-appears in subsequent analyses of probe responses.

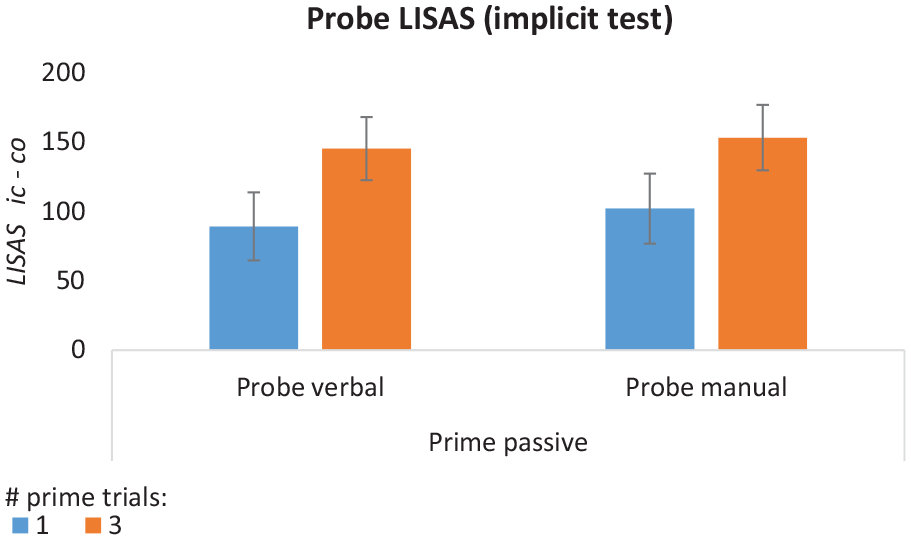

Probe trials—implicit test

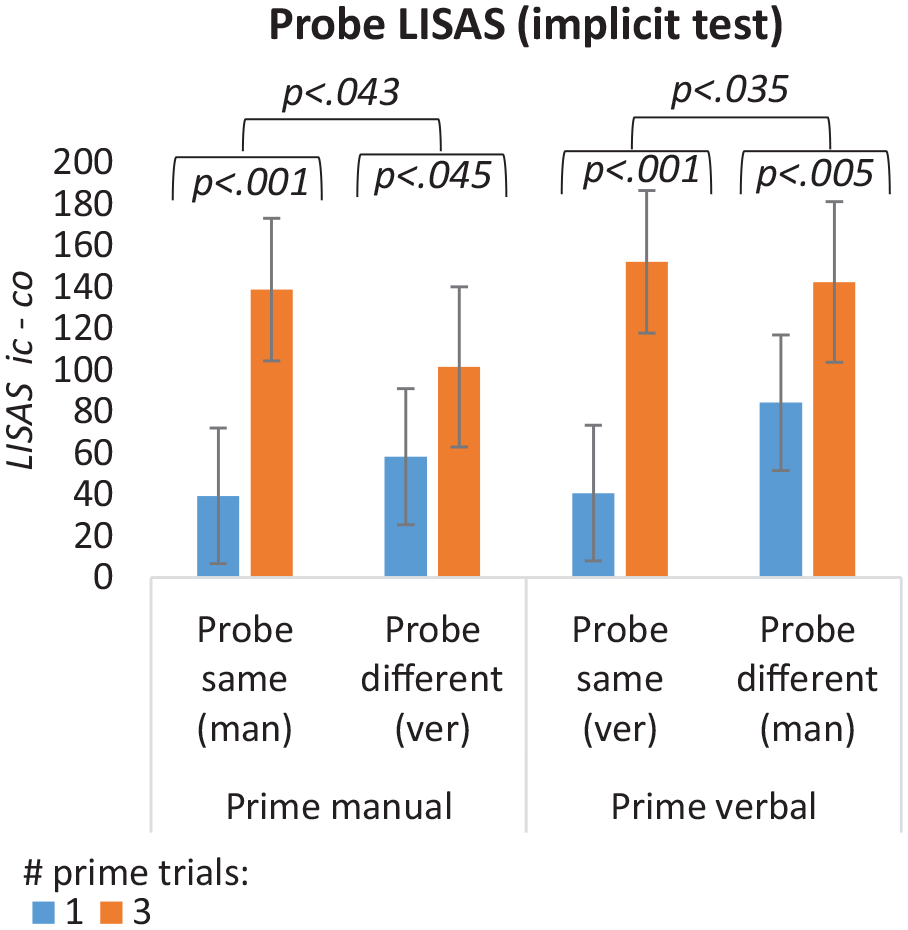

The report of implicit probe trial results focused on compatibility-related findings. The key hypothesis of modality-specific declarative-procedural transformation was tested by assessing whether the expected prime-repetition-dependent increase of the compatibility effect was further modulated by prime–probe modality transition (i.e., three-way interaction compatibility × number of prime instances × prime–probe modality transition). When analysing mean RT and error rate, we found that this three-way interaction was further modulated by prime trial modality (see Supplementary Materials Section 1). Crucially, RT and error rate were affected antagonistically, likely reflecting modality-specific differences in speed-accuracy trade-off. This phenomenon was already visible on a very general level in prime trial performance (see Figure 2). To obtain more easily interpretable results, we therefore decided to focus on the LISAS in the main text. These results are visualised in Figure 3. The complementary results for RT and error rate are shown in Supplementary Figure 1.

Implicit priming test results for Experiment 1. Values represent the LISAS difference between incompatible and compatible probe trial responses. Error bars represent 95% confidence interval.

Linear Integrated Speed-Accuracy Score

The LISAS was higher for incompatible than compatible probe trials with extreme evidence in favour of H1, F1,78 = 183.67, p(F) < .001, ηp2 = 0.702, BFincl = 7.885×10^18, and the size of this compatibility effect was significantly larger for three vs. one prime instance with extreme evidence in favour of H1, F1,78 = 45.60, p(F) < .001, ηp2 = 0.369, BFincl = 2.818×10^6. This effect was driven by a combination of a LISAS decrease in compatible trials paralleled by a LISAS increase in incompatible trials (see Supplementary Figure 2). The two-way interaction between compatibility and prime–probe modality transition failed to reach significance with moderate evidence in favour of H0, F1,78 = .093, p(F) = .762, ηp2 = 0.001, BFincl = 0.164.
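For readers unfamiliar with the score, the sketch below computes a LISAS per condition for a single participant using the commonly cited definition, LISAS_j = RT_j + PE_j × (S_RT / S_PE), where RT_j is the mean correct RT in condition j, PE_j the proportion of errors in condition j, and S_RT and S_PE the participant's overall standard deviations of correct RTs and of errors. The formula is not restated in this section, so treat it, the use of population standard deviations, and the trial data format as assumptions.

```python
from statistics import mean, pstdev


def lisas_by_condition(trials):
    """Compute LISAS per condition for one participant.

    trials: list of dicts with keys 'condition', 'rt' (ms), 'correct' (bool).
    Assumes every condition contains at least one correct trial.
    """
    correct_rts = [t["rt"] for t in trials if t["correct"]]
    errors = [0 if t["correct"] else 1 for t in trials]
    s_rt = pstdev(correct_rts)  # overall SD of correct RTs
    s_pe = pstdev(errors)       # overall SD of the 0/1 error indicator
    scores = {}
    for cond in sorted({t["condition"] for t in trials}):
        cond_trials = [t for t in trials if t["condition"] == cond]
        rt_j = mean(t["rt"] for t in cond_trials if t["correct"])
        pe_j = mean(0 if t["correct"] else 1 for t in cond_trials)
        # Without any errors the penalty term is undefined; fall back to mean RT.
        penalty = pe_j * s_rt / s_pe if s_pe > 0 else 0.0
        scores[cond] = rt_j + penalty
    return scores


# Made-up trials for illustration only.
example = [
    {"condition": "compatible", "rt": 400, "correct": True},
    {"condition": "compatible", "rt": 500, "correct": True},
    {"condition": "incompatible", "rt": 600, "correct": True},
    {"condition": "incompatible", "rt": 700, "correct": False},
]
scores = lisas_by_condition(example)
print(scores["incompatible"] - scores["compatible"])  # compatibility effect
```

The error penalty is expressed in RT units, which is why a single score can absorb opposing shifts in speed and accuracy.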

Most importantly, the key hypothesis of modality-specific declarative-procedural transformation was tested by assessing whether the prime-repetition-dependent increase of the compatibility effect was further modulated by prime–probe modality transition (i.e., three-way interaction compatibility × number of prime instances × prime–probe modality transition). This predicted 3-way interaction effect was significant with moderate evidence in favour of H1, F1,78 = 6.54, p(F) = .012, ηp2 = 0.077, BFincl = 7.082, and was not further qualified by prime modality with moderate evidence in favour of H0, F1,78 = 0.004, p(F) < .949, ηp2 < 0.001, BFincl = 0.242. These latter two findings suggest that the LISAS successfully compensated for the modality-specific speed-accuracy trade-offs at play when mean RTs and error rates were assessed separately (see Supplementary Materials Section 1). Hence, as summarised in Figure 3, the LISAS-based results reveal a consistent prime–probe modality transition effect irrespective of the particular prime trial modality.
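The three-way interaction can be read as a difference of differences: the compatibility effect (incompatible minus compatible) is computed for one and three prime instances, the repetition-dependent increase is the difference between the two, and the interaction contrast compares that increase between same-modality and different-modality blocks. The sketch below spells this out on cell means; the function names and all numbers are hypothetical and serve only to illustrate the arithmetic, not the ANOVA itself.

```python
def compatibility_effect(cells, prime_number):
    """Incompatible minus compatible score for a given number of prime instances."""
    return cells[("incompatible", prime_number)] - cells[("compatible", prime_number)]


def repetition_increase(cells):
    """Growth of the compatibility effect from one to three prime instances."""
    return compatibility_effect(cells, 3) - compatibility_effect(cells, 1)


def three_way_contrast(same_cells, different_cells):
    """Repetition-dependent increase in same-modality minus different-modality blocks."""
    return repetition_increase(same_cells) - repetition_increase(different_cells)


# Made-up LISAS cell means (ms) for illustration only.
same = {("compatible", 1): 700, ("incompatible", 1): 740,
        ("compatible", 3): 680, ("incompatible", 3): 780}
different = {("compatible", 1): 700, ("incompatible", 1): 740,
             ("compatible", 3): 690, ("incompatible", 3): 750}

print(repetition_increase(same))            # 60
print(repetition_increase(different))       # 20
print(three_way_contrast(same, different))  # 40
```

A positive contrast corresponds to the predicted pattern of a stronger repetition-dependent increase when response modality is unchanged.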

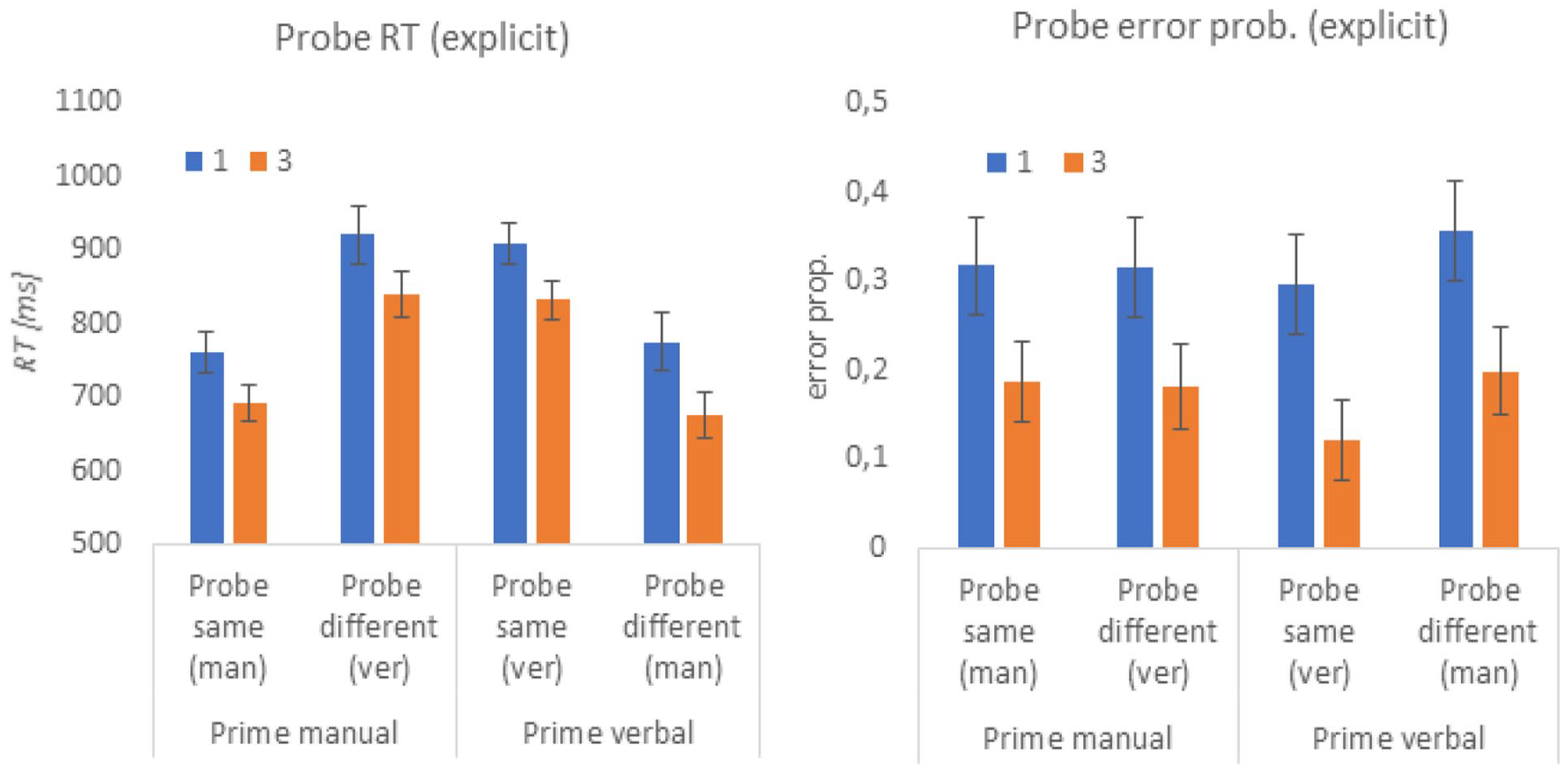

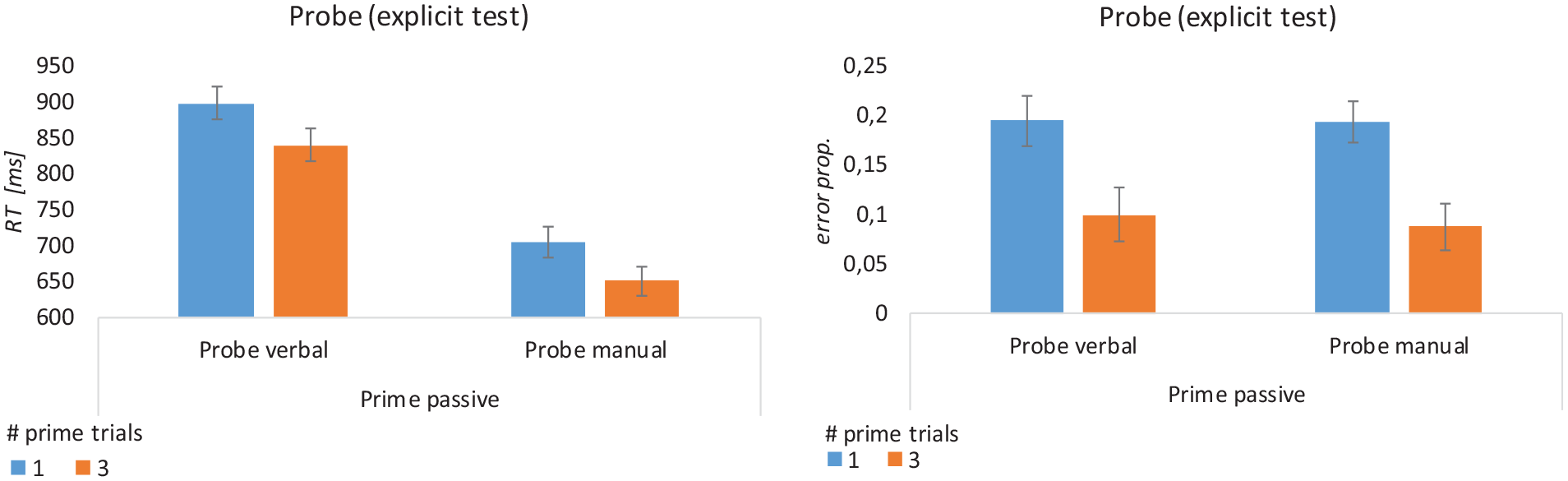

Probe trials—explicit test

These results are visualised in Figure 4.

Explicit test results for Experiment 1. Error bars represent 95% confidence interval.

Response time

Mimicking the prime trial results, mean RTs were generally slower for verbal probe responses than for manual probe responses as indicated by a significant interaction of prime–probe modality transition × prime modality with extreme evidence in favour of the H1, F1,78 = 136.39, p(F) < .001, ηp2 = 0.64, BFincl = 9.404×10^15. Neither prime trial modality alone, F1,78 = .113, p(F) = .738, ηp2 = 0.001, BFincl = 0.151, nor prime–probe modality transition alone, F1,78 = .118, p(F) = .732, ηp2 = 0.002, BFincl = 0.190, significantly influenced probe RTs with moderate evidence in favour of the H0.

Mean probe RTs decreased significantly following 3 prime instances per S–R link compared with 1 prime instance with extreme evidence in favour of the H1, F1,78 = 163.35, p(F) < .001, ηp2 = 0.68, BFincl = 9.612×10^15. The interaction prime number × prime modality did not reach significance with moderate evidence in favour of the H0, F1,78 = .877, p(F) = .352, ηp2 = 0.011, BFincl = 0.154. Most importantly, and strikingly different from the implicit test results, the RT decrease from 1 to 3 prime trials was significantly modulated neither by prime–probe modality transition (interaction prime number × modality transition), F1,78 = 1.364, p(F) = .246, ηp2 = 0.017, BFincl = 0.233, nor by modality transition × prime modality (interaction prime number × modality transition × prime modality), F1,78 = .085, p(F) = .772, ηp2 = 0.001, BFincl = 0.129, both with moderate evidence in favour of the H0.

Error rate

We observed slightly smaller error rates for verbal probe responses than for manual probe responses as indicated by a significant interaction of modality transition × prime modality with anecdotal evidence in favour of the H1, F1,78 = 6.06, p(F) < .016, ηp2 = 0.072, BFincl = 2.731. Prime trial modality alone did not significantly influence probe error rates with moderate evidence in favour of the H0, F1,78 = .045, p(F) = .932, ηp2 = 0.001, BFincl = 0.204. Prime–probe modality transition alone significantly affected error rates yet with only anecdotal evidence in favour of H0, F1,78 = 4.892, p(F) = .030, ηp2 = 0.059, BFincl = 0.912, indicating slightly lower error rates when probe modality was the same as the prime modality.

As for mean RTs, probe error rates also decreased following 3 prime instances per S–R link compared with 1 prime instance with extreme evidence in favour of the H1, F1,78 = 205.55, p(F) < .001, ηp2 = 0.73, BFincl = 1.453×10^20. The interaction prime number × prime modality did not reach significance with anecdotal evidence in favour of the H0, F1,78 = 2.636, p(F) = .108, ηp2 = 0.033, BFincl = 0.354. Most importantly and as for RTs, this error rate decrease was neither significantly modulated by prime–probe modality transition, F1,78 = .15, p(F) = .695, ηp2 = 0.002, BFincl = 0.096, nor by modality transition × prime modality, F1,78 = .352, p(F) = .555, ηp2 = 0.004, BFincl = 0.154, with strong and moderate evidence in favour of the H0, respectively.

For consistency with the implicit test analysis, we also computed an ANOVA based on the LISAS. Not surprisingly, given the converging result patterns for RTs and error rate, the original results were confirmed. Hence, for the sake of brevity, these results are not reported.

Probe trial correlation between implicit and explicit test scores

The motivation to employ both implicit and explicit probe trial blocks was based on the assumption that each test would measure a distinct aspect of prime trial instruction encoding. Whereas the implicit test score was assumed to be more indicative of procedural encoding, the explicit test score was assumed to be more indicative of declarative encoding. Indeed, this was confirmed by the differential result patterns regarding the influence of prime–probe modality transition. To further substantiate this claim, we additionally computed the correlation between the implicit test scores (i.e., the compatibility effect) and the explicit test scores. Correlations were computed specifically regarding the difference between probe trial performance following 1 vs. 3 prime trials for all combinations of RT and error rate and separately for the same prime–probe modality blocks and different prime–probe modality blocks. No significant correlations were found, with moderate evidence in favour of the H0, all abs(r) < .145, p(r) > .200, BF10 < 0.313.
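The correlational logic can be sketched as follows: for each subject, a difference score (performance after three prime trials minus performance after one) is computed for the implicit and for the explicit test, and the two sets of difference scores are then correlated across subjects. The helper names and the data are hypothetical, and the sketch covers only the Pearson coefficient, not the accompanying Bayes factor.

```python
from math import sqrt


def difference_scores(after_one, after_three):
    """Per-subject change from one to three prime trials."""
    if len(after_one) != len(after_three):
        raise ValueError("both lists must contain one value per subject")
    return [b - a for a, b in zip(after_one, after_three)]


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Made-up scores for four subjects, for illustration only.
implicit_change = difference_scores([40, 35, 50, 20], [90, 60, 70, 65])
explicit_change = difference_scores([0.30, 0.28, 0.35, 0.25], [0.20, 0.22, 0.21, 0.18])
print(pearson_r(implicit_change, explicit_change))
```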

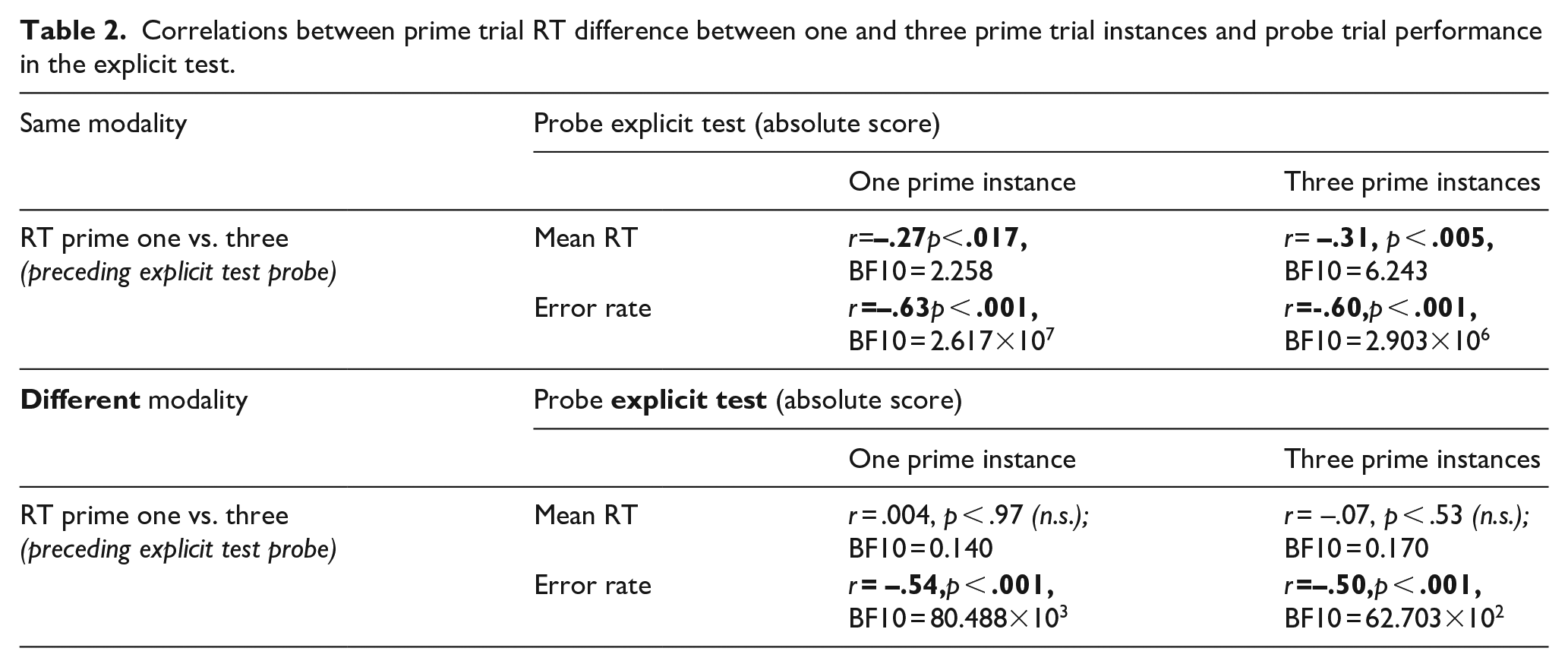

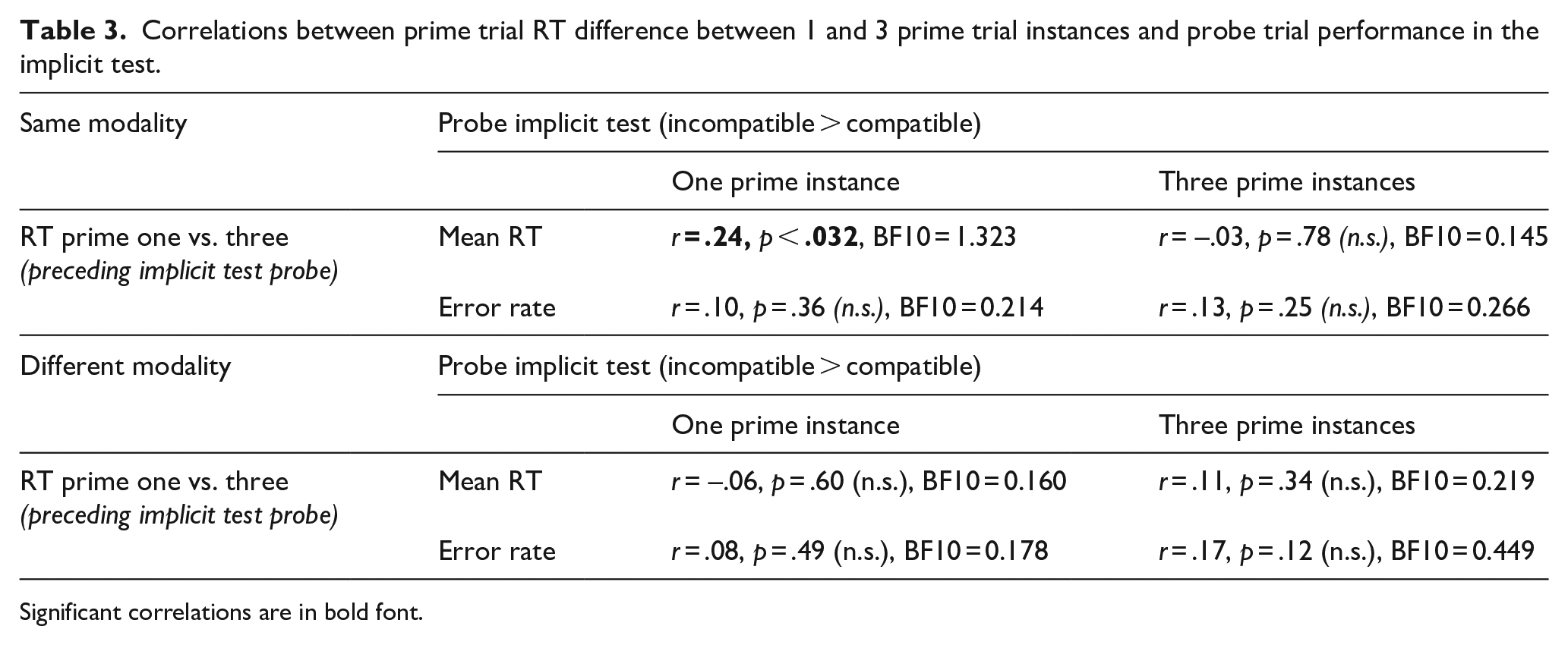

Prime × probe correlation

A final analysis followed up on previous results reported by Ruge, Karcz, et al. (2018). This earlier study showed that larger prime trial RT differences between one implemented prime instance and multiple implemented prime instances predicted smaller explicit test error rates specifically when subjects knew in advance that they would need to retrieve the instructions for correct task performance later on. Accordingly, we interpreted prime RTs to serve as an online marker of instruction encoding. The present study design enables us (i) to try to replicate these previous findings and (ii) to examine if this relationship is specific for the explicit test. If confirmed, we could conclude more specifically than before that prime trial RTs reflect instruction encoding on the declarative level.

Indeed, the original finding could be replicated by the present study specifically for explicit probe trial error rates (Table 2). Notably, if prime and probe modalities were different, the negative prime–probe correlation was exclusively found for error rates. Also note that overall, only the error rate correlations would survive correction for multiple testing (across all 16 tests including explicit and implicit tests). In contrast to the explicit test score, we found that the implicit test score (i.e., the size of the compatibility effect) could not be consistently predicted based on prime RTs (Table 3).
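The text does not name the correction procedure used across the 16 tests. As an illustration only, the sketch below assumes a Bonferroni correction, under which each p-value is compared against α divided by the number of tests; the function name, test labels, and p-values are hypothetical.

```python
def bonferroni_survivors(p_values, alpha=0.05, n_tests=None):
    """Return the tests whose p-value stays below alpha / n_tests.

    p_values: dict mapping a test label to its uncorrected p-value.
    n_tests: size of the test family; defaults to the number of p-values given.
    """
    m = n_tests if n_tests is not None else len(p_values)
    if m < 1:
        raise ValueError("need at least one test")
    threshold = alpha / m
    return {name: p for name, p in p_values.items() if p < threshold}


# Hypothetical p-values; with 16 tests the per-test threshold is 0.05 / 16 = 0.003125.
example_p = {"explicit error rate, same modality": 0.001,
             "explicit RT, same modality": 0.020}
print(bonferroni_survivors(example_p, n_tests=16))
```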

Correlations between prime trial RT difference between one and three prime trial instances and probe trial performance in the explicit test.

Correlations between prime trial RT difference between 1 and 3 prime trial instances and probe trial performance in the implicit test.

Significant correlations are in bold font.

Discussion

Regarding implicit test performance, we expected a repetition-dependent amplification of the compatibility effect especially when the probe response modality was the same as the prime response modality. This was based on the assumption that procedural encoding would require actual response implementation, which was the case exclusively for the currently valid prime trial response modality. Consistent with our hypothesis, the repetition-dependent amplification of the compatibility effect was significantly more pronounced when prime and probe trial modalities were the same than when they were different. This clearly shows that response modality matters for procedural instruction encoding.

Contrasting with the implicit test results, explicit test performance showed the expected benefit from repeated prime trial instruction implementation irrespective of prime–probe modality transition. This finding is consistent with our assumption that explicit test performance is mediated via modality-independent declarative code, which, in turn, is detached from modality-specific procedural code. Importantly, this does not at all contradict the conclusion from the implicit test results that modality-specific declarative-procedural transformation had in fact taken place during overt prime implementation. But clearly, the resulting modality-specific procedural code was not picked up by the explicit test. In turn, this re-emphasises our assumption that the explicit test serves as a valid marker of declarative instruction encoding. That implicit and explicit tests really tap into different aspects of instruction encoding was further supported by the absence of significant correlations between both test scores. Similarly, prime trial RTs were selectively predictive of explicit test performance, suggesting that prime trial RTs can be specifically interpreted as an online marker of declarative instruction encoding.

Besides these hypothesis-consistent results, we unexpectedly found that repeated prime trial implementation resulted in a small but significant increase of the compatibility effect even when prime and probe trial modalities were different. As elaborated next, we can think of two potential explanations, but only the second one seems sufficiently plausible to us.

First, the assumption that modality-independent declarative representations would not influence the size of the compatibility effect may be flawed. To start with, the explicit test provided clear evidence for improved modality-independent declarative encoding through repeated prime trial implementation. Specifically, explicit test accuracy as a proxy for declarative encoding increased moderately by roughly 10 percentage points from around 70% (1 repetition) to around 80% (3 repetitions). However, it remains doubtful if this increase is sufficiently large to explain the increased compatibility effect solely based on only moderately strengthened declarative representations when prime and probe response modalities are different. Caution is warranted even more so given that implicit and explicit test scores were generally uncorrelated.

Second, the assumption that procedural code is formed exclusively for the response modality that is actually implemented during the prime trial phase might be flawed. Hence, in the present study context, the currently invalid (i.e., not to-be-executed) prime response modality might have been implemented covertly. Specifically, it could well be that overt manual responding was accompanied by inner speech and overt verbal responding was accompanied by covert manual response simulation. Such covert response simulation might have resulted in procedural encoding also for the currently invalid modality, which, in turn, might have resulted in a small but significant repetition-dependent amplification effect. But how can this scenario be reconciled with seemingly inconsistent results reported by Pfeuffer et al. (2018)? They found that repeated mere instruction (i.e., no overt prime trial response implementation at all) failed to amplify the baseline compatibility effect induced by one single mere instruction trial, whereas repeated overt prime trial implementation did. In the “Introduction” section, we had speculated that the commitment to covertly implement the task during mere instruction trials might fade drastically over repeated instructions owing to a lack of external feedback regarding one’s own task engagement. This could explain why the compatibility effect was not further boosted by repeated mere instruction trials in the work of Pfeuffer et al. (2018). This might be different in the present study context due to the presence of external performance feedback. Specifically, in our experiment, overt prime trial instruction implementation using the currently valid response modality might have encouraged covert response simulation of the currently irrelevant response modality and might have sustained it over repeated prime trial instructions.

To further explore this, we conducted a second experiment that employed a mere instruction condition as in Pfeuffer et al. (2018) which was now embedded within the same overall experimental setting as our Experiment 1. This allows for a clean comparison between implemented (Experiment 1) and non-implemented prime trial (Experiment 2) conditions in a between-subjects design. Thereby, it will be possible to clarify if the discrepancy between the present Experiment 1 and Pfeuffer et al. (2018) can be generally explained by the notion that merely instructed prime trial conditions discourage repeated covert response simulation.

Experiment 2

Experiment 2 was designed to clarify the discrepancy between the results of the present Experiment 1 and Pfeuffer et al. (2018) regarding the increased size of the compatibility effect following repeatedly implemented prime trial instructions in a different response modality (Experiment 1) and the absence of such an amplification effect following repeated mere instruction trials in Pfeuffer et al. (2018). To this end, our Experiment 2 was optimised with regard to a maximally clean comparison with Experiment 1. This implies a number of differences with respect to the Pfeuffer et al. (2018) design which will be discussed further below in the light of our actual results, such as rule complexity and within-subject vs. between-subjects comparison.

Participants

Data from 104 human subjects were collected and analysed (70 female, 34 male; mean age: 22.2 years, range 18–35 years). All participants were right-handed and had normal or corrected-to-normal vision. All subjects gave written informed consent in accordance with the Declaration of Helsinki and were paid €8 per hour or received course credit.

Materials and methods

Experiment 2 (see Figure 5) was identical to Experiment 1, except for the following changes regarding prime trials (probe trials remained unchanged). During all prime trials, response execution was not allowed and the fixation cross was displayed for 1,000 ms (instead of 500 ms). If an overt response was correctly not executed in prime trials, no feedback was displayed. In case of any overt response, feedback was displayed for 500 ms indicating that responding was not allowed (this was the case in less than 2% of all prime trials on average).

Experimental design of Experiment 2. The overall structure was similar to Experiment 1 (see Figure 1). Different from Experiment 1, the prime trial phase did not require an overt response (non-implemented “mere instruction” trials).

Different from Experiment 1, before the start of a new prime trial phase, a general “memorise” instruction was displayed to remind subjects to memorise the instructed response for each stimulus but to withhold overt responding. As in Experiment 1, before the start of a new probe trial phase, a general instruction regarding response modality was displayed to remind subjects to now respond either verbally or manually (see Figure 1).

As in Experiment 1, probe trial response modality was blocked and realised in two consecutive halves of the experiment. As only probe trials required an overt response, two groups of subjects (instead of four) were sufficient to counterbalance the order of response modality. In the “manual-verbal” group of subjects (N = 52) prime–probe blocks 1–24 required manual probe trial responses, whereas prime–probe blocks 25–48 required verbal probe trial responses. In the “verbal-manual” group of subjects (N = 52) prime–probe blocks 1–24 required verbal probe trial responses, whereas prime–probe blocks 25–48 required manual probe trial responses.
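The counterbalancing scheme can be summarised as a simple mapping from block number and group to probe response modality. The sketch below is illustrative; the function name and the group labels as strings are assumptions, whereas the block ranges follow the description above.

```python
VALID_GROUPS = ("manual-verbal", "verbal-manual")


def probe_modality(block, group):
    """Return the probe response modality for a prime-probe block (1-48) in Experiment 2."""
    if group not in VALID_GROUPS:
        raise ValueError(f"unknown group: {group!r}")
    if not 1 <= block <= 48:
        raise ValueError("block must be between 1 and 48")
    first_half, second_half = group.split("-")
    # Blocks 1-24 use the first modality, blocks 25-48 the second.
    return first_half if block <= 24 else second_half


print(probe_modality(1, "manual-verbal"))   # manual
print(probe_modality(25, "manual-verbal"))  # verbal
print(probe_modality(48, "verbal-manual"))  # manual
```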

As in Experiment 1, to familiarise themselves with the task procedure, subjects had to practice at least 4 prime–probe blocks before the start of the 1st and the 25th prime–probe blocks of the actual experiment. This included two implicit and two explicit probe trial phases in random order. Different from the actual experiment, a particular stimulus was repeated until the response was correct. Subjects were told about this before the start of each practice phase. If the number of errors exceeded 75% in the last 2 explicit probe blocks, 2 additional blocks were added.

Before the start of the experiment, participants were instructed to respond as quickly and as accurately as possible and only after the presentation of the response cue or the memory recall cue (in the case of the explicit test condition). Also, participants were reminded that probe trials could require “memory recall” for correct responding in the explicit test condition (as indicated by the circle). They were reminded that it was therefore important to always try to thoroughly memorise the instructed prime trial responses. Different from Experiment 1, subjects were reminded not to respond in prime trials.

Data analysis

Mean RTs and mean error rates were analysed for probe trials. Mean RTs were computed for correct trials exceeding a minimum RT of 200 ms relative to the onset of the response cue. Probe trials were only included when subjects had correctly refrained from responding to the corresponding prime trial for a specific stimulus. Error rates were computed as the proportion of error trials (wrong responses) relative to all trials, excluding those where participants used the wrong response modality or failed to respond at all.
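The inclusion rules above can be sketched as a small summary function. This is a minimal illustration under stated assumptions: the trial format, the outcome labels, and the function name are hypothetical; the prime-trial inclusion criterion is applied to both RTs and error rates; and the 200 ms criterion is applied to RTs only, since the text does not say whether it also affects the error rate.

```python
from statistics import mean

MIN_RT_MS = 200  # minimum RT relative to response cue onset


def summarise_probe_trials(trials):
    """Return (mean RT, error rate) for one subject's probe trials.

    Each trial is a dict with keys:
      'rt'             -- response time in ms (None if no response was given)
      'outcome'        -- 'correct', 'wrong', 'wrong_modality', or 'miss'
      'prime_withheld' -- True if no overt response was made in the prime trial
    """
    # Keep only probe trials whose prime trial was correctly not responded to.
    eligible = [t for t in trials if t["prime_withheld"]]
    # Wrong-modality responses and misses are excluded from the error rate.
    scored = [t for t in eligible if t["outcome"] in ("correct", "wrong")]
    rts = [t["rt"] for t in scored
           if t["outcome"] == "correct" and t["rt"] > MIN_RT_MS]
    mean_rt = mean(rts) if rts else None
    error_rate = (sum(t["outcome"] == "wrong" for t in scored) / len(scored)
                  if scored else None)
    return mean_rt, error_rate


# Made-up trials for illustration only.
demo = [
    {"rt": 500, "outcome": "correct", "prime_withheld": True},
    {"rt": 150, "outcome": "correct", "prime_withheld": True},   # too fast for the RT mean
    {"rt": 600, "outcome": "wrong", "prime_withheld": True},
    {"rt": None, "outcome": "miss", "prime_withheld": True},     # excluded from error rate
    {"rt": 400, "outcome": "correct", "prime_withheld": False},  # prime trial violated
]
print(summarise_probe_trials(demo))
```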

For the analysis of explicit test performance, the mean RTs and mean error rates were submitted to separate repeated measures ANOVAs including the within-subjects factor “prime number” (i.e., number of prime trials per stimulus; one or three), and the within-subjects factor “probe modality” (verbal or manual).

The analysis of implicit probe test trials included the within-subjects factor “prime number” (i.e., number of prime trials per stimulus; one or three), and the within-subjects factor “probe modality” (verbal or manual), as well as “S-R compatibility” (compatible or incompatible) as an additional within-subjects factor. For the sake of consistency with Experiment 1 and to reduce complexity, we decided to continue using LISAS for the main analyses of the implicit test results in Experiment 2 and also for the comparison of implicit test results in Experiment 1 vs. Experiment 2. The implicit test results for RT and error rate are reported in Supplementary Materials Section 2 (Experiment 2) and Supplementary Materials Section 3 (Experiment 1 vs. Experiment 2).

Results

Implicit test

Linear Integrated Speed-Accuracy Score

The LISAS was higher for incompatible than compatible probe trials with extreme evidence in favour of H1, F1,103 = 281.130, p(F) < .001, ηp2 = 0.732, BFincl = 1.013×10^28. The size of the compatibility effect was significantly modulated by prime number with extreme evidence in favour of H1, F1,103 = 24.294, p(F) < .001, ηp2 = 0.191, BFincl = 3.195×10^3, indicating a stronger compatibility effect following 3 prime instances vs. 1 prime instance (Figure 6). Compatibility was not significantly modulated by response modality with moderate evidence in favour of H0, F1,103 = .469, p(F) = .495, ηp2 < 0.001, BFincl = 0.202. The 3-way interaction compatibility × prime number × probe modality failed to reach significance with moderate evidence in favour of H0, F1,103 = 0.061, p(F) < .805, ηp2 < 0.001, BFincl = 0.105.

Implicit priming test results of Experiment 2. Values represent the LISAS difference between incompatible and compatible probe trial responses. Error bars represent 95% confidence interval.

Explicit test

Response time

Mean probe RTs were significantly faster after 3 vs. 1 prime instances per S–R link with extreme evidence in favour of H1, F1,103 = 89.77, p(F) < .001, ηp2 = 0.466, BFincl = 1.065×10^12, and also showed the typical pattern of being significantly faster for manual responses than verbal responses with extreme evidence in favour of H1, F1,103 = 220.47, p(F) < .001, ηp2 = 0.682, BFincl = 1.377×10^24. The interaction prime number × probe modality was not significant with moderate evidence in favour of H0, F1,103 = .173, p(F) = .678, ηp2 = 0.002, BFincl = 0.153. See Figure 7 for an illustration of these findings.

Explicit test results of Experiment 2. Error bars represent 95% confidence interval.

Error rate

Mean error rates were lower after 3 vs. 1 prime instance per S–R link with extreme evidence in favour of H1, F1,103 = 131.52, p(F) < .001, ηp2 = 0.561, BFincl = 9.033×10^16. No other effect was significant with moderate evidence in favour of H0, all F1,103 < .523, p(F) < .523, ηp2 < 0.005, BFincl < .199. See Figure 7 for an illustration of these findings.

Probe trial correlation between implicit and explicit test scores

As for Experiment 1, we computed the correlations between the implicit test scores and the explicit test scores (for all combinations of RT and error rate) regarding the difference between probe trial performance following one vs. three prime trials. This was done separately for verbal and manual probe trial response modality blocks. As in Experiment 1, no significant correlations were found, all with moderate evidence in favour of the H0, all abs(r) < .129, p(r) > .192, BF10 < 0.284.

Discussion (Experiment 2)

The overall pattern of results is clear-cut. An increasing number of merely instructed prime trials increased both implicit and explicit test scores, and the size of these effects was large. This is contrary to the results reported by Pfeuffer et al. (2018) where repeated merely instructed prime trials did not further increase a small “baseline” compatibility effect, which was already established after a single merely instructed prime trial. Although the original finding arguably suggested the absence of declarative-procedural transformation processes for repeatedly presented mere instruction trials, the opposite seems to be true for the present study. This discrepancy is most likely due to differences in the general experimental setup between our Experiment 2 and Pfeuffer et al. (2018). First and foremost, this includes task complexity, which was lower in the present experiment. Specifically, by omitting an additional layer of stimulus classification (e.g., table–manmade–right index finger) on top of the four simple S–R rules to be memorised (e.g., table–right index finger), our Experiment 2 might have encouraged covertly simulated response implementation involving declarative-procedural transformation even without overt responding (cf., Liefooghe & De Houwer, 2018; Liefooghe et al., 2021; Palenciano et al., 2021; Ruge & Wolfensteller, 2010). In line with this, a recent study suggested a more reliable compatibility effect in a similar setup as in Pfeuffer et al. (2017) without the additional layer of stimulus classification (Jargow et al., 2022).

Other factors might have contributed as well. First, different from Pfeuffer et al. (2018), implicit test blocks were intermixed with explicit test blocks and the upcoming test type was unknown during the prime trial phase. This might have encouraged subjects to more effectively make use of repeated prime trial instructions to optimise S–R encoding for successful explicit test performance. As a side effect, this could have also boosted the size of the compatibility-based implicit test score. Second, different from Pfeuffer et al. (2018), where merely instructed prime trial blocks were intermixed with immediately implemented prime trial blocks, our Experiment 2 exclusively employed merely instructed prime trial blocks, which in turn might have encouraged an optimised encoding strategy for this prime type. Finally, instructions were presented differently in Pfeuffer et al. (2018) where the correct response was instructed orally (e.g., the orally presented spoken word “left” as a concrete response cue). This compares to the visually presented abstract response cues employed in the present study, which might also have contributed to differences in encoding strategy.

Comparison between Experiment 1 and Experiment 2

In this final analysis, we wanted to directly quantify potential differences in probe trial performance in implicit and explicit test blocks between immediately implemented (Experiment 1) and merely instructed (Experiment 2) prime trial instructions. As elaborated on in the General Introduction, this direct comparison remains inconclusive due to several possible processing differences between the two conditions. On the one hand, this includes differences that are truly due to more effective declarative-procedural transformation through immediate implementation as compared with mere instruction. On the other hand, this includes differences that are rather due to confounding factors such as differences in attention towards task-related features or differences in general task commitment, which, in turn, might lead to differences in encoding quality of the instructed task.

Previous studies have revealed a rather complex and partly contradictory pattern of results. The implicit action priming score (i.e., compatibility effect) following implemented prime trials was typically larger than following merely instructed prime trials using within-subject designs (Jargow et al., 2022; Pfeuffer et al., 2017, 2018). However, Jargow et al. (2022) showed that this implementation advantage was largely reduced when an additional stimulus classification layer was omitted (as in our study design). Moreover, studies by Pfeuffer et al. (2017) and Jargow et al. (2022) included an explicit recall test after the completion of the implicit test. Participants had to explicitly recall the originally instructed correct classification or correct action for one exemplary stimulus at the end of each implicit probe phase (note the difference to the present study where implicit and explicit tests were conducted in separate learning blocks and hence applied to distinct instruction sets). In the work of Pfeuffer et al. (2017), different from the implementation-related benefit which was reflected by increased action priming scores, classification recall accuracy was actually lower (64.1%) following implemented prime trials than following merely instructed prime trials (70.4%) and the same was true for action recall accuracy (59.1% vs. 68.3%). A similar pattern was reported by Jargow et al. (2022). These explicit test results actually speak against any account that predicts a benefit of prime trial implementation. Instead, they suggest that declarative encoding (as assessed by the explicit test) might benefit from the absence of concurrent task implementation.

In the light of this rather complex and partly contradictory results pattern, we reasoned that a between-subjects comparison of implemented vs. merely instructed prime trials could potentially yield more conclusive results.

Data analysis (Experiment 1 vs. Experiment 2)

To cleanly compare the two experiments, this analysis was based exclusively on the first halves of each experiment (i.e., Blocks 1–24; see Table 1). This was necessary as probe response modality changed in the middle of the experimental session for both experiments, which might have differentially affected performance in the second half of the experiment depending on whether prime trials were implemented (Experiment 1) or not (Experiment 2).

Mean RTs, mean error rates, as well as LISAS were analysed for probe trials in the same way as they were for each experiment separately. As a reminder, mean RTs were computed for correct trials exceeding a minimum RT of 200 ms relative to the onset of the response cue. Probe trial RTs from Experiment 1 were only included when the corresponding prime trial for a specific stimulus had been correctly implemented as well. Probe trial RTs from Experiment 2 were only included when subjects had correctly refrained from responding to the corresponding prime trial for a specific stimulus. Error rates were computed as the proportion of error trials (wrong responses) relative to all trials, excluding those where participants used the wrong response modality or failed to respond at all.

As for the experiment-specific analyses reported earlier, the main analysis was based on repeated measures ANOVAs, now additionally including the between-subjects factor “prime–probe transition” with three levels: Experiment 1 “same response modality,” Experiment 1 “different response modalities,” and Experiment 2 “passive-active.” The condition label “Experiment 1 same modality” refers to the group of subjects (N = 40) where response modality was the same for prime trials and probe trials. Accordingly, the condition label “Experiment 1 different modalities” refers to the other group of subjects (N = 40) where response modality was different for prime trials and probe trials. The condition label “passive-active” refers to the group of subjects who participated in Experiment 2 and who were required to refrain from responding during the prime trial phase (“passive” mere instruction trials) and only responded during the probe trial phase.

To address potential statistical distortions due to unequal sample sizes in the different groups (Experiment 1 “same response modality”: N = 40; Experiment 1 “different response modalities”: N = 40; Experiment 2: N = 104), we performed complementary analyses using the “bwtrim” function of the WRS2 R-package, which computes robust statistics for mixed designs involving one within-subjects factor and one between-subjects factor based on trimmed means (Mair & Wilcox, 2020). These analyses yielded qualitatively similar results and are reported in Supplementary Material Section 4.
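To make the notion of a trimmed mean concrete, the following sketch shows only the basic estimator on which such robust tests are built. It is not a reimplementation of “bwtrim,” and the trimming proportion of 0.2 is an assumption, since the trimming level used is not stated here.

```python
import math


def trimmed_mean(values, trim=0.2):
    """Mean after dropping floor(trim * n) values from each tail.

    `trim` is the proportion removed per tail (assumed 0.2 for illustration).
    """
    if not 0 <= trim < 0.5:
        raise ValueError("trim must be in [0, 0.5)")
    ordered = sorted(values)
    cut = math.floor(trim * len(ordered))
    kept = ordered[cut:len(ordered) - cut]
    return sum(kept) / len(kept)


# A single extreme score barely moves the trimmed mean,
# whereas it would dominate the ordinary mean.
example_scores = [1, 2, 3, 4, 100]
robust_centre = trimmed_mean(example_scores)
```

Because extreme observations in the tails are discarded before averaging, group comparisons based on trimmed means are less sensitive to outliers and heavy-tailed distributions than those based on ordinary means.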

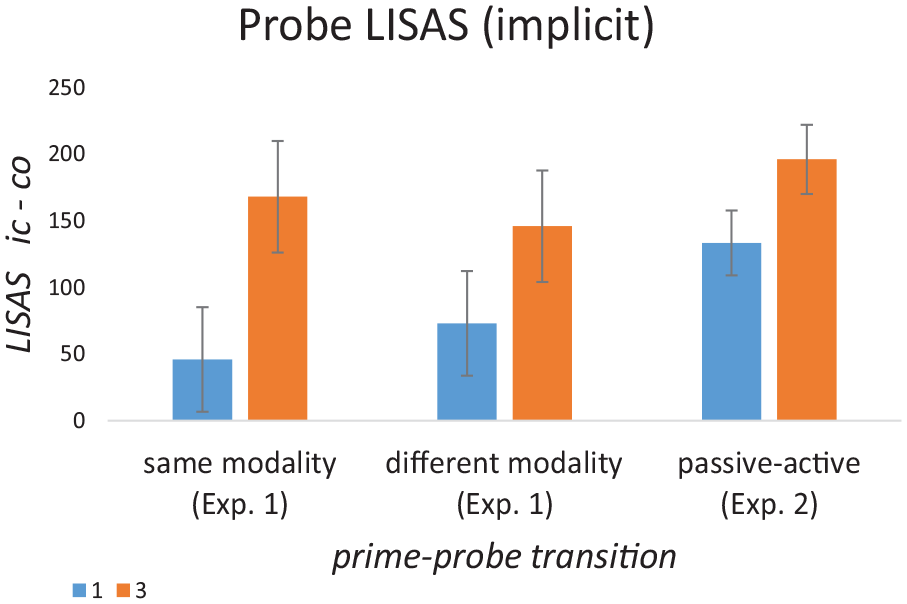

Results (Experiment 1 vs. Experiment 2)

Probe trials—implicit test

Consistent with the separate reports of Experiment 1 and Experiment 2 results, the main analysis is based on LISAS. These results are visualised in Figure 8. The complementary analysis of RT and error rate is reported in Supplementary Material Section 3. For the analysis of probe trial performance, LISAS were submitted to a repeated measures ANOVA including the within-subjects factors “compatibility” (compatible vs. incompatible) and “prime number” (i.e., number of prime trials per stimulus; one or three) as well as the between-subjects factor “probe modality” (manual or verbal). To capture differences between Experiment 1 and Experiment 2, we included the between-subjects factor “prime–probe transition” with three levels: Experiment 1 “same response modality,” Experiment 1 “different response modalities,” and Experiment 2 “passive-active.”

Implicit test results for the comparison between Experiment 1 and Experiment 2. Values represent the LISAS difference between incompatible and compatible probe trial responses. Error bars represent 95% confidence interval.

Linear Integrated Speed-Accuracy Score

Generally, and consistent with the results for each experiment analysed separately, LISAS were significantly higher for incompatible than for compatible probe trials with extreme evidence in favour of H1, F(1, 178) = 228.562, p(F) < .001, ηp² = 0.562, BFincl = 6.225 × 10³⁸, and the size of this compatibility effect was significantly larger for three prime instances than for one, with extreme evidence in favour of H1, F(1, 178) = 44.718, p(F) < .001, ηp² = 0.201, BFincl = 6.850 × 10⁸. There was a significant 2-way interaction between compatibility and prime–probe transition with strong evidence in favour of H1, F(2, 178) = 6.774, p(F) = .001, ηp² = 0.071, BFincl = 24.452. Follow-up ANOVAs indicated that this interaction was due to a significantly stronger compatibility effect for Experiment 2 compared with both Experiment 1 “same modality,” with strong evidence in favour of H1, F(1, 140) = 8.027, p(F) = .005, ηp² = 0.055, BFincl = 10.714, and Experiment 1 “different modality,” with moderate evidence in favour of H1, F(1, 140) = 7.834, p(F) = .006, ηp² = 0.053, BFincl = 8.189. Note that this effect is the opposite of previously reported results showing a weaker compatibility effect following merely instructed prime trials (Pfeuffer et al., 2017, 2018).
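As a reading aid, the reported effect sizes can be related to the F statistics through the standard identity for partial eta squared, which follows from its definition as SS_effect / (SS_effect + SS_error). The sketch below is offered only as a consistency check under that identity. The function name is hypothetical and the snippet is not part of the original analysis.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Recover partial eta squared from an F statistic and its degrees of freedom.

    Uses the identity eta_p^2 = (F * df_effect) / (F * df_effect + df_error).
    """
    numerator = f_value * df_effect
    return numerator / (numerator + df_error)


# Main effect of compatibility: F = 228.562 on 1 and 178 degrees of freedom.
compatibility_effect_size = partial_eta_squared(228.562, 1, 178)

# Compatibility x prime-probe transition: F = 6.774 on 2 and 178 degrees of freedom.
interaction_effect_size = partial_eta_squared(6.774, 2, 178)
```

Rounded to three decimals, these two calls reproduce the values of 0.562 and 0.071 reported for the main effect of compatibility and for its interaction with prime–probe transition.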

No other interaction effect involving compatibility reached significance. More specifically, this was the case for the 2-way interaction between compatibility and probe modality, F(1, 178) = 0.227, p(F) = .634, ηp² = 0.001, BFincl = 0.162, the 3-way interaction compatibility × prime–probe transition × probe modality, F(2, 178) = 0.772, p(F) = .463, ηp² = 0.009, BFincl = 0.029, and the 3-way interaction compatibility × prime number × probe modality, F(1, 178) = 0.045, p(F) = .832, ηp² < 0.001, BFincl = 0.129. Likewise, both the 3-way interaction compatibility × prime number × prime–probe transition, F(2, 178) = 2.067, p(F) = .130, ηp² = 0.023, BFincl = 0.588, and the four-way interaction compatibility × prime number × prime–probe transition × probe modality, F(2, 178) = 0.974, p(F) = .380, ηp² = 0.011, BFincl = 0.007, failed to reach significance.
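The verbal evidence labels attached to the inclusion Bayes factors (e.g., “moderate,” “strong,” “extreme”) can be illustrated with a small classifier. The cutoffs used below (3, 10, 30, 100) follow a commonly used Jeffreys-type scheme and are an assumption, as this section does not state the exact thresholds applied. The function name is hypothetical.

```python
def bf_evidence_label(bf_incl):
    """Map an inclusion Bayes factor to a verbal evidence category.

    Values above 1 favour H1; values below 1 favour H0 and are
    classified by their reciprocal. Cutoffs are assumed, not taken
    from the source.
    """
    if bf_incl <= 0:
        raise ValueError("Bayes factor must be positive")
    if bf_incl == 1:
        return "no evidence either way"
    favoured = "H1" if bf_incl > 1 else "H0"
    strength = bf_incl if bf_incl > 1 else 1 / bf_incl
    cutoffs = [(100, "extreme"), (30, "very strong"), (10, "strong"), (3, "moderate")]
    for cutoff, label in cutoffs:
        if strength > cutoff:
            return f"{label} evidence for {favoured}"
    return f"anecdotal evidence for {favoured}"


# Labels for two of the follow-up comparisons reported in the text.
same_modality_label = bf_evidence_label(10.714)
different_modality_label = bf_evidence_label(8.189)
```

Under these assumed cutoffs, Bayes factors well below 1, such as those of the non-significant interactions, count as evidence for the null hypothesis rather than as a mere absence of evidence.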

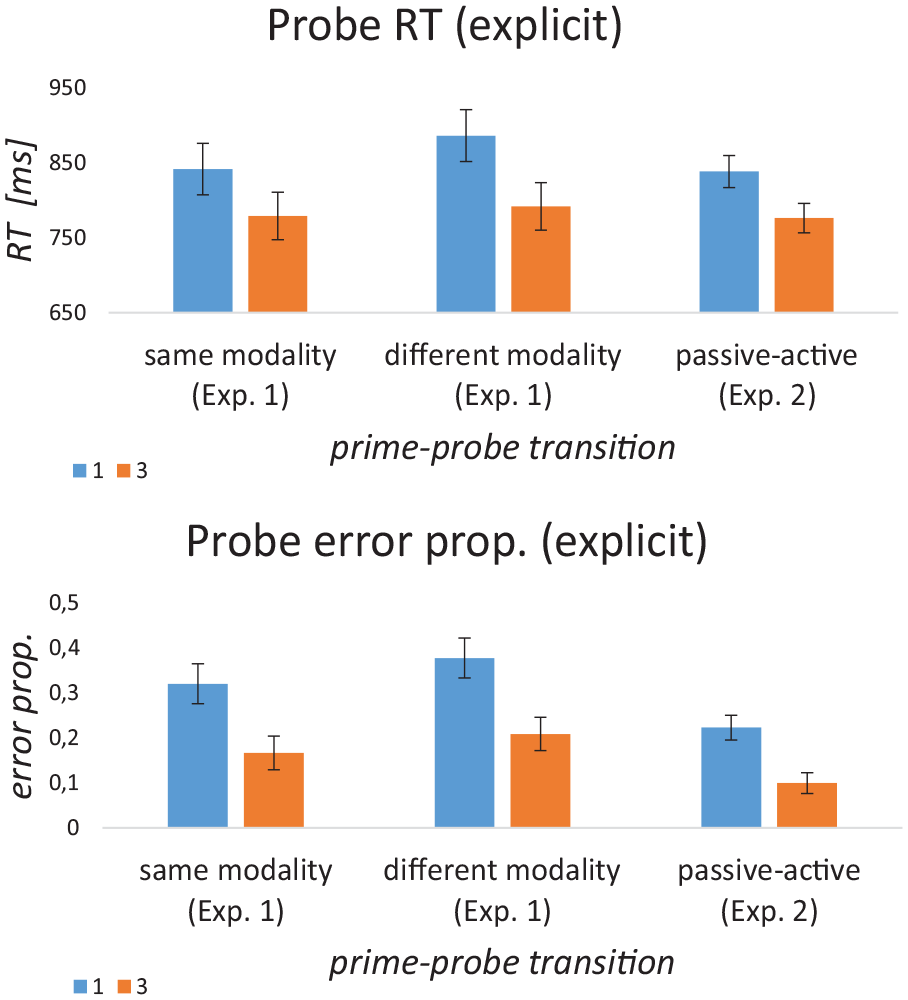

Probe trials—explicit test

For the analysis of explicit test probe trial performance, the mean RTs and mean error rates were submitted to separate repeated measures ANOVAs including the within-subjects factor “prime number” (i.e., number of prime trials per stimulus; one or three) and the between-subjects factors “probe modality” (manual or verbal) and “prime–probe transition” (Experiment 1 “same,” Experiment 1 “different,” Experiment 2 “passive-active”) to capture differences between Experiment 1 and Experiment 2. Results are visualised in Figure 9.

Explicit test results for the comparison between Experiment 1 and Experiment 2. Error bars represent 95% confidence interval.

Response time

None of the effects involving the factor “prime–probe transition,” which captures differences between the three prime trial conditions across the two experiments, reached significance, all p(F) > .115, BFincl < 0.544. The only significant findings were the typical effects of number of prime trials with extreme evidence in favour of H1, F(1, 178) = 105.94, p(F) < .001, ηp² = 0.373, BFincl = 3.825 × 10¹⁷, indicating shorter RTs for three prime trials vs. one prime trial, and probe modality, F(1, 178) = 118.15, p(F) < .001, ηp² = 0.399, BFincl = 9.547 × 10²², indicating shorter RTs for manual vs. verbal responses.

Error rate

Unlike the mean RT results, error rates showed a significant main effect of prime–probe transition with extreme evidence in favour of H1, F(2, 178) = 20.83, p(F) < .001, ηp² = 0.190, BFincl = 1.374 × 10⁶, indicating a significantly lower mean error rate following merely instructed prime trials (Experiment 2) compared with implemented prime trial instructions in both Experiment 1 “same,” F(1, 140) = 15.57, p(F) < .001, ηp² = 0.100, BFincl = 158.649, and Experiment 1 “different,” F(1, 140) = 41.38, p(F) < .001, ηp² = 0.228, BFincl = 3.782 × 10⁶. No other effect involving prime–probe transition reached significance, all p(F) > .053, BFincl < 1.270.

In addition, there was a significant main effect of number of prime trials with extreme evidence in favour of H1, F(1, 178) = 224.22, p(F) < .001, ηp² = 0.557, BFincl = 5.415 × 10³¹, indicating a significantly lower error rate for three prime trials vs. one prime trial, and a main effect of probe modality, F(1, 178) = 4.77, p(F) = .030, ηp² = 0.026, BFincl = 0.422, indicating a lower error rate for verbal vs. manual responses.

Discussion (Experiment 1 vs. Experiment 2)

These results suggest that merely instructed prime trials (Experiment 2) compared with immediately implemented instructions (Experiment 1) yield better S–R rule encoding during prime trials as indicated by higher scores in both implicit and explicit tests. Importantly, these higher test scores were reflected by an increased compatibility effect (implicit test) and by a reduced overall error rate in the explicit test. These general performance differences between experiments were not further modulated by the number of prime trials.

Figures 8 (implicit) and 9 (explicit) show that, relative to Experiment 1, Experiment 2 performance already benefitted from an encoding boost in the first prime trial, with no further gain from prime trials 2 and 3. In turn, this suggests that concurrently encoding and implementing the instruction is disadvantageous. For implicit test results, this finding refutes our original hypothesis derived from Pfeuffer et al. (2017, 2018), who observed the opposite result: an increased compatibility effect following implemented prime trials relative to non-implemented, merely instructed prime trials. One (but not the only) factor behind this reversed result might be the reduced task complexity in our experiments, which omitted the additional classification layer. In line with this idea, Jargow et al. (2022) showed that the originally reported implementation-related advantage was largely reduced (though not reversed) when the additional stimulus classification layer was omitted. However, this cannot explain why the original implementation advantage turned into a disadvantage in our study. An additional factor in favour of the merely instructed prime trial condition in our Experiment 2 could be that mere instruction blocks were not intermixed with immediately implemented instruction blocks. This might have bolstered an optimised encoding strategy for mere instruction trials.

In contrast to the implicit test results, the improved explicit test scores following merely instructed prime trials are in line with previous study results by Pfeuffer et al. (2017) and Jargow et al. (2022). Together, this suggests that declarative encoding benefits from mere instruction without implementation under a variety of study contexts. Importantly, however, this finding does not generalise to an entire class of previous studies demonstrating mnemonic benefits for memory items in the context of “self-performed” or “enacted” task instructions, both long-term (Engelkamp, 1998; Nilsson, 2000) and short-term (Allen et al., 2023; Allen & Waterman, 2015; Jaroslawska et al., 2016). This striking inconsistency between study types might have multiple reasons. One obvious difference between our study design and typical “enactment” designs is that the latter usually involve more embodied tasks requiring real or imagined interaction with real-world objects. Indeed, it has been reported that the benefit of “enactment” disappeared in a simple computer-based mouse-clicking task compared with the typical setup involving interaction with a real-world environment (Yang, 2011). Another important aspect could be the type of memory test performed. In our study, the explicit test required the implementation of the newly instructed prime trial task. By contrast, typical “enactment” designs do not test how well the instructed task is implemented later on, but rather how well certain elements of the instruction are recalled (e.g., for an instruction like “put the pen into the envelope” the test would be “where was the pen put?”).

General conclusions

The rationale of this study was that instruction-based declarative-procedural transformation and the ensuing procedural representations should be specific for the employed response modality. Experiment 1 revealed that procedural encoding strength was exclusively indicated by the implicit test. More precisely, we found that response modality transition from prime to probe trials affected the repetition-dependent increase of the compatibility effect. The explicit test was not affected by modality transition, and in turn, might serve as an index of declarative instruction encoding strength.

The differential sensitivity of implicit and explicit test scores to response modality transition, and hence the seemingly different scopes of both tests, was further supported by two dissociations between the tests. First, implicit and explicit test scores were generally uncorrelated in both experiments. Second, repetition-dependent decrease in prime trial RTs as a previously proposed online measure of general instruction encoding effort (Ruge, Karcz, et al., 2018) was exclusively predictive of explicit test scores.

Notably, however, repeated prime trial implementation resulted in an increased compatibility effect not only when prime and probe trial modality was the same, but unexpectedly also when it was different (though significantly less pronounced). We argued this to reflect covert instruction implementation in the currently irrelevant prime trial response modality. This, in turn, might have resulted in procedural, yet relatively weaker, representations for this modality compared with the currently relevant (i.e., instructed) one.

Contrary to previous findings (Pfeuffer et al., 2018), Experiment 2 showed that procedural representations were also formed and strengthened through repetition in the absence of any overt response implementation. This suggests that procedural encoding through covert response “simulation” occurs preferentially under optimal context conditions. In the present study, “optimal context” might have been established by using relatively simple S–R instructions and by employing mere instruction trials in all blocks of Experiment 2 (i.e., not intermixed with other types of instruction). Similarly, an optimal context might have been established in Experiment 1, by employing long stretches of blocks constantly involving prime trial implementation in one modality (e.g., verbal) and probe trial implementation in the other (e.g., manual).

Finally, we rather unexpectedly found that both implicit and explicit test scores were higher (i.e., reflecting stronger instruction encoding) following mere instruction (Experiment 2) vs. immediately implemented instruction (Experiment 1). This finding is clearly inconsistent with a multitude of studies showing mnemonic benefits for “self-performed” or “enacted” task instructions, both long-term (Engelkamp, 1998; Nilsson, 2000) and short-term (Allen et al., 2023; Allen & Waterman, 2015; Jaroslawska et al., 2016). Possible explanations might be related to differences in “embodiment” (Yang, 2011) or the type of memory test employed (implementation of the newly instructed prime trial task as in our study vs. recall of certain elements of the instruction, e.g., for an instruction like “put the pen into the envelope” the test would be “where was the pen put?”). Clearly, this discrepancy needs further investigation.

Supplemental Material

sj-docx-1-qjp-10.1177_17470218241238164 – Supplemental material for Characterising the declarative-procedural transformation in instruction-based learning

Supplemental material, sj-docx-1-qjp-10.1177_17470218241238164 for Characterising the declarative-procedural transformation in instruction-based learning by Hannes Ruge, Janine Jargow, Eva Sinning, Sofia Fregni and Alexander Willy Baumann in Quarterly Journal of Experimental Psychology

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the German Research Foundation (DFG, SFB 940 project A2).

Supplementary Material

The supplementary material is available at qjep.sagepub.com.

References
