Abstract

Social cues, such as eye gaze and pointing fingers, can increase the prioritisation of specific locations for cognitive processing. A previous study using a manual reaching task showed that, although both gaze and pointing cues altered target prioritisation (reaction times [RTs]), only pointing cues affected action execution (trajectory deviations). These differential effects of gaze and pointing cues on action execution could be because the gaze cue was conveyed through a disembodied head; hence, the model lacked the potential for a body part (i.e., hands) to interact with the target. In the present study, the image of a male gaze model, whose gaze direction coincided with two potential target locations, was centrally presented. The model either had his arms and hands extended underneath the potential target locations, indicating the potential to act on the targets (Experiment 1), or had his arms crossed in front of his chest, indicating the absence of potential to act (Experiment 2). Participants reached to a target that followed a nonpredictive gaze cue at one of three stimulus onset asynchronies. RTs and reach trajectories of the movements to cued and uncued targets were analysed. RTs showed a facilitation effect for both experiments, whereas trajectory analysis revealed facilitatory and inhibitory effects, but only in Experiment 1 when the model could potentially act on the targets. The results of this study suggested that when the gaze model had the potential to interact with the cued target location, the model’s gaze affected not only target prioritisation but also movement execution.

The gregarious nature of human existence permeates through every social interaction. Such nature is not only manifested in verbal communication but, more intriguingly, via nonverbal means: A simple look in the eyes could reveal a wealth of information regarding a person’s intention (Driver et al., 1999; Kendon, 1967) and modulate another person’s attention (Capozzi & Ristic, 2018; Dalmaso et al., 2020; Frischen, Bayliss, & Tipper, 2007). Moreover, these characteristics are not restricted to eye gaze because other social cues, such as finger-pointing, also exhibit similar effects (Ariga & Watanabe, 2009). One of the widely studied phenomena in social cueing is the orienting of attention. Granted that attention is a loaded term (Hommel et al., 2019; Posner & Boies, 1971), orienting of attention generally refers to “the alignment of some internal mechanisms with an external sensory input source that results in the preferential processing of that input” (Frischen, Bayliss, & Tipper, 2007, p. 701; also see McKay et al., 2021). In the context of visual perception, this phenomenon corresponds to when the observer orients to or prioritises certain visual cues in their visual field. The evaluation of orienting of attention has commonly relied on various implicit measures, such as changes in response accuracy and reaction time (RTs; Frischen, Bayliss, & Tipper, 2007). The present study examines the effect of visual cueing on the observers’ attention and action planning and execution in an upper-limb reaching task.

The orienting of attention is commonly assessed through the spatial-cueing paradigm, in which participants are instructed to respond to the appearance of a target at one of two potential locations with a key press. This paradigm commonly entails a nonpredictive cue (e.g., a peripherally presented blinking light, a centrally presented arrow, or a shift in a centrally presented model’s eye gaze) being presented prior to the target at or directed towards one of the potential target locations. The key feature of the stimuli is that the cue is nonpredictive (e.g., the cue may appear on the right, but the target could randomly appear on the left or right) such that there is no top-down advantage for the observer to orient attention based on the cue. Results of these studies, however, have shown that this nonpredictive cue can affect the processing of the visual target, which is commonly reflected through differences in participants’ RTs to the target as a function of the relative locations of the cue and the target. Cued targets are those that are presented at a location consistent with the cue (i.e., the same location associated with the cue), whereas uncued targets are those that are presented at a location that is not consistent with the cue (i.e., a different location from the cue).

Generally, two types of effects are expected depending on the temporal separation between the cue and target onset (stimulus onset asynchrony, or SOA). First, RTs could be shorter for the cued targets than for the uncued targets at short SOAs (e.g., <200 ms) when there is little time difference between the onset of the cue and the onset of the target. This facilitation effect is thought to occur because the cue led to the short-term prioritisation of the cued location, increasing the efficiency with which the target is processed relative to targets at other uncued locations. Second, RTs for the cued targets are actually longer than for the uncued targets when there is a longer time (e.g., >300 ms) between the onset of the cue and the onset of the target. These longer RTs are thought to emerge because, as time elapses and no target appears, the short-term prioritisation coding decreases and is replaced by an inhibitory coding activated at the location of the cue. This inhibitory coding subsequently hinders or decreases the efficiency of processing of a target that then appears at the location relative to other uncued locations. In a nonsocial context, this inhibitory aftereffect has been termed inhibition of return (IOR; Okamoto-Barth & Kawai, 2006; Posner & Cohen, 1984; Posner et al., 1985).

For centrally presented gaze cues, the facilitation effects are typically observed at shorter SOAs, with peak facilitation effects appearing between 100 and 300 ms. Interestingly, these facilitation effects persist at longer SOAs and are still present at SOAs between 700 and 1000 ms (Friesen & Kingstone, 1998; Frischen, Bayliss, & Tipper, 2007). Moreover, despite the pronounced facilitation effect, RT-based IOR is rarely observed with centrally presented gaze cues without sophisticated experimental manipulations (Frischen & Tipper, 2004; Frischen, Smilek, et al., 2007). Therefore, given these different patterns of RTs, the relationship between mechanisms activated by social gaze cues and peripheral and central cues remains unclear.

Most existing research on the orienting of attention in social cueing uses tasks requiring discrete button presses and, as such, has only been able to examine RTs and/or response accuracy in choice tasks. Deviating from this tradition, Yoxon et al. (2019) used an upper-limb reaching task to examine the facilitatory and inhibitory effects of gaze cues on attention and action execution. Upper-limb reaching movements were employed because the characteristics of these movements can provide additional insight into how the central nervous system represents the excitation or inhibition of responses generated by the cue during response selection and decision-making (Howard & Tipper, 1997; Neyedli & Welsh, 2012; Welsh & Elliott, 2004; for reviews, see Gallivan et al., 2018; Song & Nakayama, 2009). Adapting the classical spatial-cueing paradigm, Yoxon et al. presented two potential target locations flanking an image of either a model’s disembodied head (Experiments 1 and 2) or a disembodied pointed finger (Experiment 3). The centrally presented cueing model provided a nonpredictive gaze or pointing cue to one of the potential target locations and the target was presented following one of several SOAs (from 100 to 2400 ms). Participants were asked to ignore the cue and use their index finger to rapidly reach to and touch the target. The authors evaluated the effects of the cue and SOAs on RTs (measured as the time interval from the onset of the target to the movement initiation) and the initial movement angle (IMA) of the reaching movement (calculated as the absolute angle between the principal axis [an imaginary central line from the home position to the midpoint between the two target locations] and the movement trajectory at 20% of the reach). While RTs may reflect location prioritisation, IMAs reflect action planning.
If the gaze cue exerts a facilitation effect on action planning (i.e., the cue activates a response that would lead the participant to interact with the cue), then IMAs should be smaller when moving to an uncued target than when moving to a cued target because the cue may have activated a response to the cued location that would interfere or combine with the subsequent response to the target, leading to a more central response trajectory. If the gaze cue leads to the activation of an inhibitory mechanism on the response to the cue, then IMAs should be larger on uncued than cued target trials because this inhibitory mechanism might reduce the representation of the response to the cue to below baseline levels, leading to a more peripheral response trajectory away from the location of the cue. Such patterns of trajectory deviations have been shown in rapid aiming responses following peripheral sudden onset cues (see Neyedli & Welsh, 2012; Welsh et al., 2013).

In Experiment 1 of Yoxon et al. (2019), the centrally presented model head remained fixated on the target until the end of the participant’s reaching movement. In contrast, in Experiment 2, the gaze cue only lasted for 150 ms before the eyes of the model returned to a neutral gaze direction. In both Experiments 1 and 2, RTs revealed a facilitation effect consistent with previous gaze cueing literature—a persistent facilitation effect without the emergence of an inhibition effect, even at long SOAs (Friesen & Kingstone, 1998; Friesen et al., 2004; Frischen & Tipper, 2004; Frischen, Bayliss, & Tipper, 2007). Interestingly, despite the facilitation effects in RT, there were no differences in IMA between movements to cued or uncued targets. These findings suggest that the gaze cue only affects attention, but not action planning. In Experiment 3, Yoxon and colleagues presented a pointing finger that remained directed towards one of the target locations throughout the SOA period (similar to the gaze cues in Experiment 1). The data revealed a facilitation effect in both RTs and IMAs, suggesting that the pointing cue also affects action planning. Based on the overall pattern of results, the authors reasoned that eye gaze and finger-pointing cues are processed differently and that the hand cues may have a more prominent role or direct influence on the salience of objects and locations for motor control.

The differences in the patterns of trajectory deviations between the eye gaze and pointing cues could be attributed to the compatibility between the cue and the effector involved in the task (Welsh & Pratt, 2008; Welsh & Zbinden, 2009; Yoxon et al., 2019). In the case of Yoxon et al. (2019), the finger-pointing cue is similar to the hand used in the manual aiming task, allowing the cue to become salient to the attention/action system that underlies the aiming movement, which consequently affected the movement planning and execution. Taken a step further, this conjecture implies that if the model which provides the cue manifests the potential to interact with the target locations through the same effectors as the participant uses in the response, the salience of the cue to the underlying attention/action system should remain. If this is the case, then a similar facilitation effect in the effector-based measurement (trajectory) as in the attention-based measurement (RT) should emerge. In a more concrete sense, predictions based on this reasoning could be that the gaze cue should elicit a similar facilitation effect in trajectories as the pointing cue only when the gaze cue model has the potential to interact with the target with the hands. Borrowed from the nomenclature of Gibson’s affordance theory (Gibson, 1986), this potential is referred to as act-ability, or the ability to act on an object.

The present study examines the effect of act-ability of the gaze cue model on attention and movement planning and execution in a manual reaching task. In Experiment 1, a male’s upper body was presented with his arms extending outwards with the hands placed below the potential target locations. If the model’s potential to interact with the target creates the conditions to enable his gaze cue to affect the action system, then eye gaze cues in this condition should lead to a facilitation effect on not only the participants’ RTs, but also their reach trajectories. In Experiment 2, the same model was presented but his arms were crossed in front of his chest, removing his potential to interact with the target. If act-ability is the key feature that leads to activation of the motor system by the gaze cues, then there should be a facilitation effect in movement trajectories in Experiment 1 when the hands of the model are near the targets, but not in the movement trajectories in Experiment 2 when the arms of the model are crossed. If the mere presence of a body and the arms of a model is sufficient to lead to motor system activation by the gaze cues (i.e., regardless of the model’s act-ability), then facilitation effects in RTs and trajectories should be observed in both Experiments 1 and 2. The finding of trajectory deviations in Experiment 2 might suggest that the absence of trajectory deviations in Experiments 1 and 2 of Yoxon et al. (2019) may have been the result of the eye gaze cue being presented in a disembodied head.

Experiment 1

Methods

Participants

Twenty adults (13 females and 7 males), aged between 19 and 46, participated in this experiment. All participants were right-handed with normal or corrected-to-normal vision. Participants provided full and informed consent. All procedures were approved and were consistent with the standards of the University of Toronto Research Ethics Board. Based on the effect size reported in Yoxon et al. (2019) (Experiment 3, IMA,

Stimuli and apparatus

The stimuli were presented on an Acer GD235HZ 24-inch monitor with a 1920 × 1080 resolution and 60 Hz refresh rate. The monitor was slanted at approximately 20° from the table facing the participant to ensure comfort during the experiment. The experiment was implemented in MATLAB (the Mathworks Inc.) using the Psychtoolbox-3 (Brainard, 1997; Kleiner et al., 2007; Pelli, 1997). The experimental setup was similar to that in Yoxon et al. (2019). For each trial, a home position (a blue circle with a 1.5 cm diameter) would appear 1 cm above the bottom edge of the screen, along with two unfilled blue squares (2 cm per side) as placeholders for the target. The blue squares were 28 cm horizontally from each other and 25 cm diagonally from the home position. An image of a young adult male was used as the cue model, placed between the two target placeholders. The male extended his arms out with hands opened and facing upwards, placed directly beneath the two placeholders as if he was ready to catch or grab them (Figure 1 top). Every object was displayed against a light grey background. During each trial, the movement of participants’ right index finger was tracked using an opto-electric motion tracking system (Optotrak, Northern Digital Inc., Waterloo, Ontario, Canada) with an infrared-emitting diode (IRED) that records three-dimensional (3D) coordinates at a 250 Hz sampling frequency.

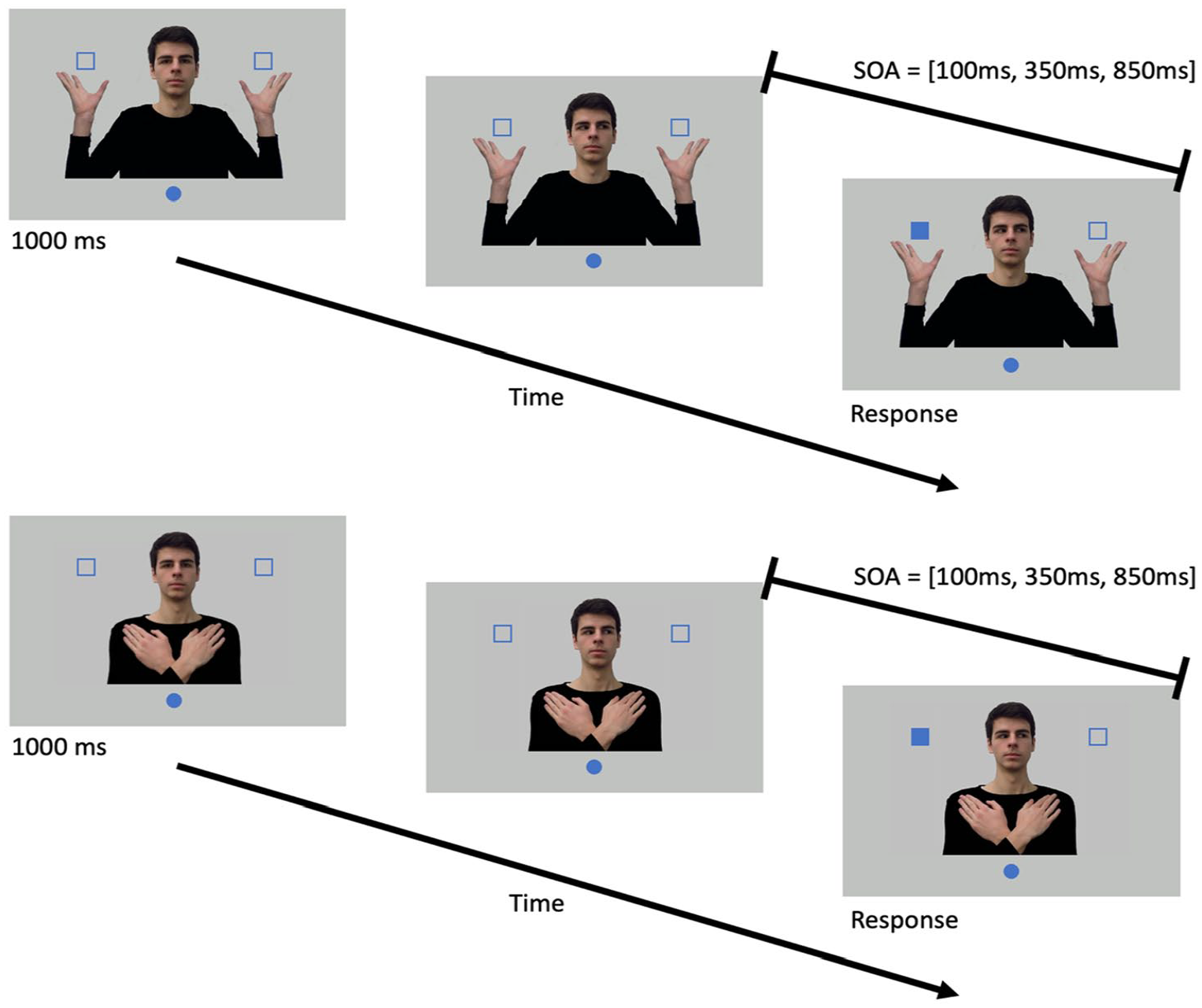

A schematic illustration of the experimental setup and timeline for a single trial in Experiments 1 (top) and 2 (bottom). Participants put their right index finger on the blue circle (home position) at the beginning of each trial. After 1,000 ms, the model would shift his gaze to one of the potential target locations. Following one of the stimulus onset asynchronies (SOA), one of the squares would turn solid, indicating that it was the target, and participants needed to reach to it as quickly as they could.

Procedure and design

After providing their full informed consent, participants were guided into a testing room and sat comfortably in front of the table with the slanted monitor. The experimenter would attach the IRED onto the participants’ right index finger. Prior to the experiment, participants were instructed to perform a screen calibration procedure, where they would sequentially reach to each corner of the screen. The end positions of each reach were recorded to derive the 3D orientation of the screen, measured in the same reference frame as the subsequent aiming movements. During data analysis, each participant’s screen calibration data were used to transform their respective reaching trajectories (see Data analysis for details).
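The screen calibration step can be illustrated with a short sketch. This is not the authors' implementation. It assumes that a plane is fitted to the four recorded corner positions, that y is the vertical axis (so that movement lies mainly along x and z once the screen is levelled), and that the corner coordinates below are hypothetical values for a screen tilted by 20°. The function names `fit_plane_normal` and `rotation_onto` are illustrative.

```python
import numpy as np

UP = np.array([0.0, 1.0, 0.0])  # assumption: y is the vertical axis


def fit_plane_normal(points):
    """Unit normal of the least-squares plane through an (n, 3) array."""
    centred = points - points.mean(axis=0)
    # The right singular vector with the smallest singular value is
    # perpendicular to the best-fitting plane.
    _, _, vt = np.linalg.svd(centred)
    normal = vt[-1]
    # Orient the normal upwards so the rotation below is well defined.
    return normal if normal[1] >= 0 else -normal


def rotation_onto(a, b):
    """Rotation matrix mapping unit vector a onto unit vector b
    (Rodrigues' formula). Assumes a and b are not antiparallel."""
    v = np.cross(a, b)
    s = np.linalg.norm(v)
    c = float(np.dot(a, b))
    if s < 1e-12:  # already aligned
        return np.eye(3)
    k = np.array([[0.0, -v[2], v[1]],
                  [v[2], 0.0, -v[0]],
                  [-v[1], v[0], 0.0]])
    return np.eye(3) + k + k @ k * ((1.0 - c) / s**2)


# Hypothetical corner positions (cm) of a screen tilted 20 deg about x.
theta = np.deg2rad(20.0)
flat = np.array([[0, 0, 0], [52, 0, 0], [52, 0, 29], [0, 0, 29]], float)
tilt = np.array([[1, 0, 0],
                 [0, np.cos(theta), -np.sin(theta)],
                 [0, np.sin(theta), np.cos(theta)]])
corners = flat @ tilt.T

normal = fit_plane_normal(corners)
tilt_deg = np.degrees(np.arccos(np.clip(normal @ UP, -1.0, 1.0)))
R = rotation_onto(normal, UP)

# The same transform would be applied to every sample of a reach trajectory.
levelled = (corners - corners.mean(axis=0)) @ R.T
```

After the rotation, the vertical coordinate of every corner is zero, so any trajectory expressed in the same reference frame can be analysed in the plane of the screen.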

Figure 1 (top) shows the timeline of a single trial. At the start of a trial, participants were presented with an image of the model with the eyes directed towards the participant. The participants placed their right index finger on the home position. After 1000 ms, the model’s gaze direction shifted to the left or right, towards the location of one of the target placeholders, and remained there for the rest of the trial. After a variable SOA (100, 350, or 850 ms), one of the unfilled squares turned solid (the target). Participants were instructed to reach to the solid target square as quickly as they could. The model’s gaze direction and the target location were independent of each other. Participants were informed of this nonpredictive gaze cue and were instructed to fixate on the male model prior to the target onset. Positions of the participants’ index finger were recorded using Optotrak for 1,500 ms starting from the moment the target was presented. Participants were instructed to hold their finger at the target location until the 1,500 ms data collection window was completed.

Given the two target locations (left and right) and two gaze directions (left and right), the target could either be cued (the target location and gaze direction were the same) or uncued (the target location and gaze direction were opposite). Combined with three SOAs (100, 350, and 850 ms), there were 12 unique trial types (2 target locations × 2 gaze directions × 3 SOAs), which were treated as a block. Trials within each block were presented in a random order. Each block was repeated 16 times, resulting in 192 trials. The first block was used as training and was not included in the analysis. The entire experiment took about 45 min to complete.
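The design arithmetic can be checked with a minimal sketch of the trial list. The experiment itself was run in MATLAB with Psychtoolbox-3; the Python below only illustrates the factorial structure, and the names `make_block` and `make_session` as well as the random seed are hypothetical.

```python
import itertools
import random

TARGETS = ("left", "right")
GAZES = ("left", "right")
SOAS_MS = (100, 350, 850)
N_BLOCKS = 16


def make_block(rng):
    """One block: every target x gaze x SOA combination once, shuffled."""
    trials = [
        {"target": t, "gaze": g, "soa_ms": s, "cued": t == g}
        for t, g, s in itertools.product(TARGETS, GAZES, SOAS_MS)
    ]
    rng.shuffle(trials)
    return trials


def make_session(seed=0):
    """Full session of N_BLOCKS independently shuffled blocks."""
    rng = random.Random(seed)  # seed is arbitrary, for reproducibility only
    return [make_block(rng) for _ in range(N_BLOCKS)]


session = make_session()
n_trials = sum(len(block) for block in session)        # 16 x 12 trials
n_analysed = sum(len(block) for block in session[1:])  # first block is training
```

Because gaze direction and target location are fully crossed within each block, exactly half of the trials in every block are cued, which is what makes the cue nonpredictive.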

Data analysis

Data analysis was performed using a custom Python movement analysis package and was divided into the following steps.

Spatial Calibration

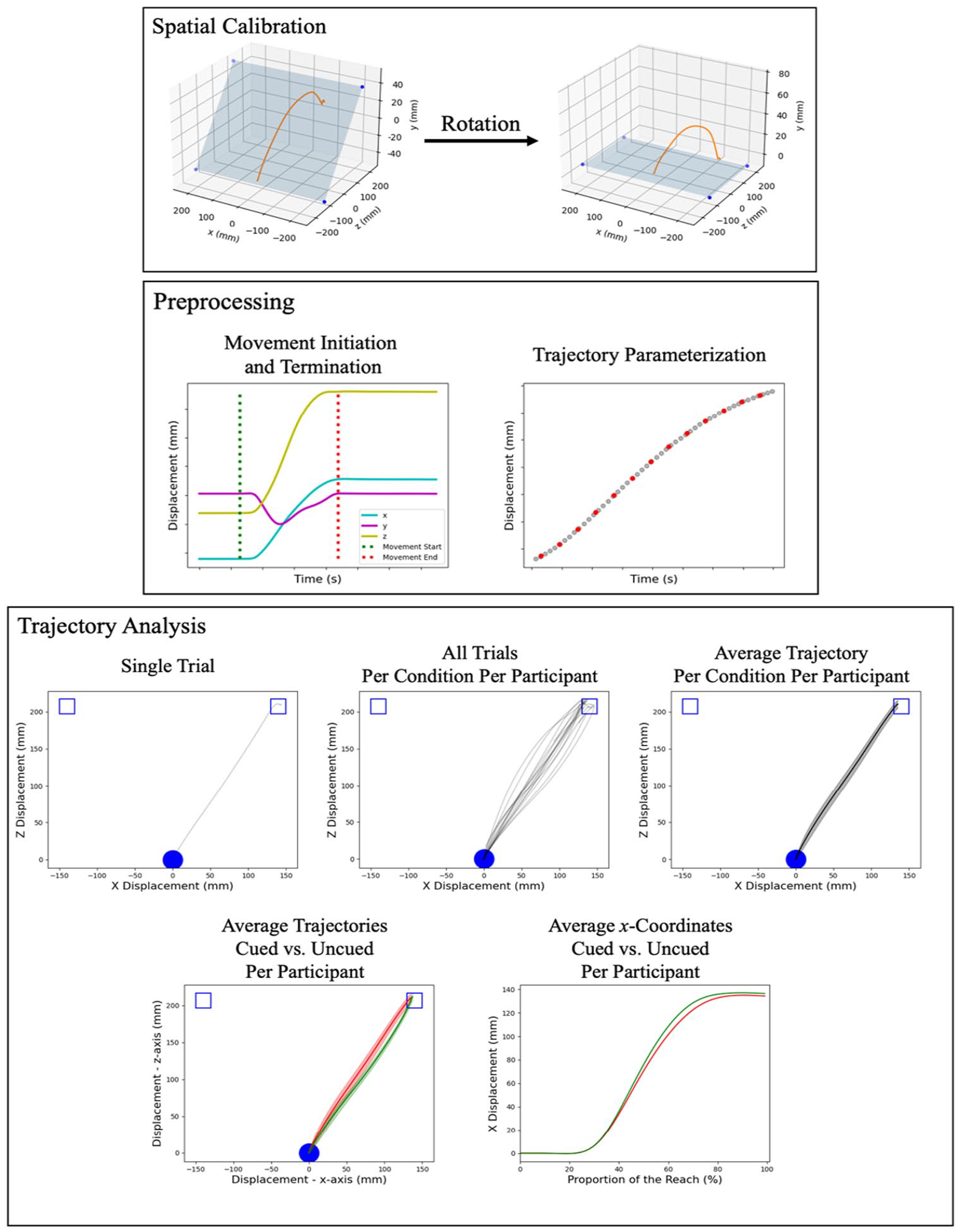

Given the screen surface was at an approximately 20° angle, each trajectory was first rotated back to the transverse plane (Figure 2

Where

Data Analysis Procedure.

Preprocessing

Missing data due to marker occlusion from each trajectory were replaced using linear interpolation with the interp1d function from SciPy (Virtanen et al., 2020). The locations of the missing data were recorded for visual inspection in a subsequent step. Then, a second-order low-pass Butterworth filter (250 Hz sampling frequency, 10 Hz cutoff frequency) was applied to each trajectory dimension. Velocity along each axis was calculated using a central difference method and was smoothed using the same Butterworth filter. Subsequently, the Pythagorean of the two primary movement axes, x and z, was computed to identify the movement onset and termination time (Figure 2

After identifying the movement segment, trials with missing data were visually inspected to ensure that (1) the missing data occurred outside the movement segment, and (2) there were no more than 15 consecutive missing data points (equivalent to 60 ms) within the movement. Trials with more than 15 consecutive missing data points within the movement segment were discarded to ensure that the linear interpolation did not introduce artefacts to the trajectory. A total of 34 trials, or 0.94% of the entire data set, were discarded.
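The preprocessing steps described above can be sketched as follows. This is a minimal illustration rather than the custom analysis package used in the study. It assumes that the filter is applied forwards and backwards (`filtfilt`, zero phase), that gaps at the edges of a recording are extrapolated, and that the "Pythagorean" refers to the resultant of the x and z velocities. The synthetic reach and the helper names are hypothetical.

```python
import numpy as np
from scipy.interpolate import interp1d
from scipy.signal import butter, filtfilt

FS = 250.0     # Hz, sampling frequency stated in the text
CUTOFF = 10.0  # Hz, low-pass cutoff stated in the text
MAX_GAP = 15   # samples; 15 / 250 Hz = 60 ms


def fill_gaps(t, x):
    """Replace NaN samples by linear interpolation; also return the mask."""
    missing = np.isnan(x)
    f = interp1d(t[~missing], x[~missing], kind="linear",
                 fill_value="extrapolate")  # assumption: extrapolate at edges
    filled = x.copy()
    filled[missing] = f(t[missing])
    return filled, missing


def longest_gap(missing):
    """Length of the longest run of consecutive missing samples."""
    longest = run = 0
    for m in missing:
        run = run + 1 if m else 0
        longest = max(longest, run)
    return longest


def lowpass(x, fs=FS, cutoff=CUTOFF, order=2):
    """Second-order Butterworth low-pass, applied with zero phase lag."""
    b, a = butter(order, cutoff, btype="low", fs=fs)
    return filtfilt(b, a, x)


def velocity(x, fs=FS):
    """Central-difference velocity (one-sided at the two end samples)."""
    return np.gradient(x, 1.0 / fs)


def resultant_speed(x, z):
    """Filtered position -> filtered velocity -> resultant of x and z."""
    vx = lowpass(velocity(lowpass(x)))
    vz = lowpass(velocity(lowpass(z)))
    return np.hypot(vx, vz)


# Hypothetical 1.5 s recording of a smooth 0.5 s reach (positions in cm).
t = np.arange(375) / FS
tau = np.clip((t - 0.3) / 0.5, 0.0, 1.0)
profile = 10 * tau**3 - 15 * tau**4 + 6 * tau**5
x_true, z = 14.0 * profile, 20.0 * profile
x_raw = x_true.copy()
x_raw[120:125] = np.nan  # simulated 5-sample marker occlusion

x, missing = fill_gaps(t, x_raw)
usable = longest_gap(missing) <= MAX_GAP
speed = resultant_speed(x, z)
```

In practice the gap check would be restricted to the samples between movement onset and termination, since the exclusion rule applies only to missing data within the movement segment.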

One of the key challenges to statistically compare reach trajectories between conditions is normalisation. As Gallivan and Chapman (2014) reasoned, normalisation based on temporal re-sampling (i.e., re-sampling an equal amount of points within evenly spaced fractions of the total MT) may introduce artefacts in the results as the temporal aspect of the movement may covary with experimental manipulation. To address this issue, each dimension of each trajectory was parameterized using a third-order B-spline (Figure 2

Trajectory Analysis

To extract useful information from the fitted trajectories, trajectories were compiled and averaged for each unique combination of participant, target location, and cue location (Figure 2

Statistical analysis

Repeated measures analysis of variance (ANOVA) was conducted on MT and RT with two within-subject factors, SOA (3 levels: 100, 350, and 850 ms) and target (two levels: cued, uncued) using R’s ez package (Lawrence, 2016). Greenhouse-Geisser corrections were applied to factors that did not satisfy the sphericity assumption and are indicated by the decimal values in the reported degrees of freedom. For significant effects, post hoc simple contrasts with Tukey’s corrections were calculated to determine the source of the effect. Another repeated measures ANOVA was conducted on trajectory areas with SOA (3 levels: 100, 350, and 850 ms) and trajectory segments (5 levels: 0%–20%, . . ., 80%–100%) as two within-subject factors. Because the comparison between the trajectory areas with 0 would indicate any facilitatory and/or inhibitory effect, a series of one-sample t-tests comparing each segment’s area with 0 was also conducted and their corresponding 95% confidence intervals (CIs) are reported.
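The one-sample comparison against 0 can be illustrated with a brief sketch. The ANOVAs in the study were run with R's ez package; the Python below only shows how a one-sample t-test and its 95% CI relate to each other. The area values are hypothetical and are not data from the study, and the sign convention (which direction counts as facilitation) is not assumed here.

```python
import numpy as np
from scipy import stats


def one_sample_summary(values, popmean=0.0, confidence=0.95):
    """One-sample t-test against popmean with a two-sided CI of the mean."""
    values = np.asarray(values, dtype=float)
    n = values.size
    mean = values.mean()
    sem = values.std(ddof=1) / np.sqrt(n)
    t_stat, p_value = stats.ttest_1samp(values, popmean)
    # Critical t value for a two-sided interval with n - 1 degrees of freedom.
    half_width = stats.t.ppf(0.5 + confidence / 2.0, df=n - 1) * sem
    return {
        "mean": mean,
        "t": float(t_stat),
        "df": n - 1,
        "p": float(p_value),
        "ci": (mean - half_width, mean + half_width),
    }


# Hypothetical per-participant areas for one SOA and one trajectory segment.
areas = [1.0, 2.0, 3.0, 4.0, 5.0]
result = one_sample_summary(areas)
```

A segment differs significantly from 0 at the 5% level exactly when its 95% CI excludes 0, which is why reporting the CIs alongside the t-tests conveys both the direction and the precision of the effect.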

Results

Reaction time

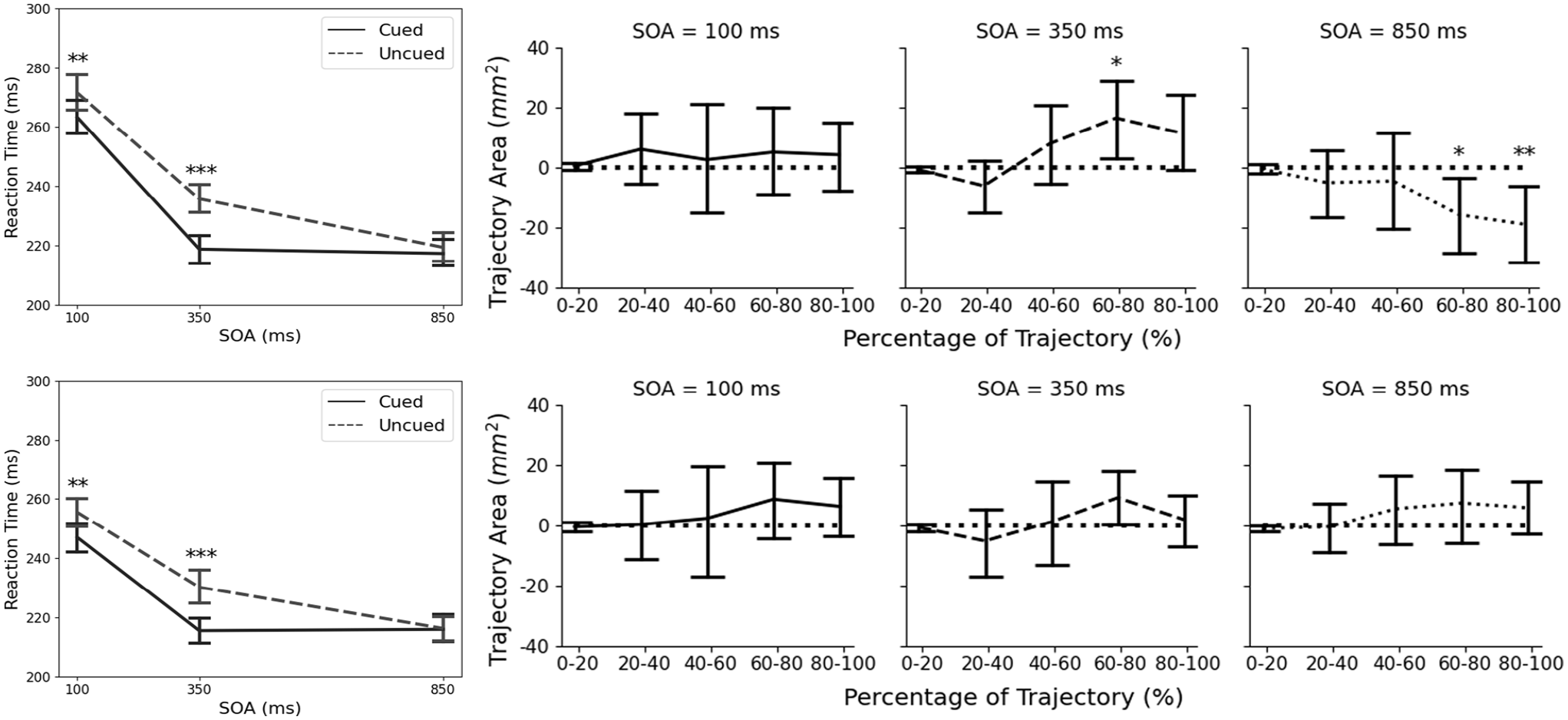

A repeated measures ANOVA showed that there was a significant effect of SOA,

Reaction time (left; asterisks indicate significant difference between the cued and uncued targets at a specific SOA) and area between the average cued and uncued trajectories (right; asterisks indicate significant difference from 0) for Experiments 1 and 2. Error bars represent the 95% CIs. *

Movement time

There were no significant effects of SOA,

Trajectory area

ANOVA did not show any significant main effects, SOA:

Discussion

This experiment revealed two main findings. First, RTs were shorter for the cued targets than the uncued targets at short SOAs (100 and 350 ms), but not at long SOAs (850 ms). This finding in RT is consistent with results from previous studies (Friesen & Kingstone, 1998; Frischen, Bayliss, & Tipper, 2007), both in terms of the timing (emerges as early as 100 ms SOA for centrally presented gaze cues; Frischen et al., 2007) and magnitude (between 10 and 20 ms of RT difference). This finding is slightly different from what was reported in Experiment 1 of Yoxon et al. (2019), where they did not find the modulating effect of SOAs on RTs (i.e., the interaction between target and SOA was not statistically significant). Using the same task, the only difference between the Yoxon et al. setup and that of the current experiment is that Yoxon et al. only showed a person’s disembodied head instead of his entire upper body with upper limbs. This difference potentially indicates that, in the context of goal-directed actions, social gaze cues would elicit facilitation effects and such effects would diminish as SOA increased. Critically, the emergence of such effects is contingent upon whether the gaze cue model also has a body and the potential to interact with the target in the same way that the participants might interact with it, that is, act-ability.

Second, and more interestingly, although the social gaze cue did not affect the temporal characteristics of the movement (MT), trajectory analysis showed that the gaze cue did affect the movement’s spatial characteristics. A facilitatory effect was observed at 350 ms SOA (with trajectories deviating towards the location of the cue on uncued target trials), and an inhibitory effect was observed at 850 ms during the second half of the reach (with trajectories deviating away from the location of the cue on uncued target trials). As Welsh and Weeks (2010) suggested, deviations between the cued and uncued trials during the initial portion of the movement reflect an effect of gaze cue on action planning, whereas deviations during the later portion of the movement reflect an effect on action execution and motor control. Yoxon et al. (2019) only examined the spatial characteristics of the movement at exactly 20% of the movement, while the current study looked at segments throughout the entire trajectory. This more thorough approach revealed that the social gaze cue had a facilitatory effect on movement execution when the SOA was short (350 ms), but the effect turned inhibitory when the SOA was long (850 ms). The crossover from facilitation to inhibition occurred between the 350 and 850 ms SOAs, which is consistent with previous findings on the IOR (see Klein (2000) for a review). More critically, the inhibitory effect only manifested in movement execution, but not in movement planning (indicated by a lack of effect during the initial portion of the trajectory) or attention (indicated by a lack of effect in RT). In sum, the results of the present study indicate that gaze cues may impact action planning if the model that presents the social gaze cues appears able to interact with the potential target locations.

Experiment 2

Experiment 1 showed that introducing act-ability, or the potential to interact with the targets, to the gaze cue model elicits activation of the motor system with varying degrees of facilitation effects in RT as a function of SOA, as well as facilitatory and inhibitory effects in movement execution (trajectories) across different SOAs. Unlike the disembodied head used in Yoxon et al. (2019), however, the model in Experiment 1 had a visible torso and upper limbs and held a pose suggesting that he was prepared to interact with the targets. The effect of act-ability could therefore be confounded with the mere presence of the model’s torso and upper limbs. In other words, the effects in Experiment 1 could be attributed to the presence of the model’s upper body (as opposed to a disembodied head) instead of his potential to interact with the targets (the pose of his arms). In Experiment 2, the same model was used, but with his arms crossed in front of his chest, which kept the arms visible while removing the model’s perceived ability to interact with the targets. If the results from Experiment 1 were attributable to act-ability, the facilitatory and inhibitory effects in the trajectory analysis should disappear in the current experiment. Alternatively, if they were attributable to the presence of the upper body, then results from the two experiments should be comparable. In either case, the effects of the cue and SOA on RTs were still expected.

Methods

Participants

Twenty adults (13 females and 7 males), aged between 18 and 34, participated in this study. All participants were right-hand dominant with normal or corrected-to-normal vision and none had participated in Experiment 1. They all provided full and informed consent. All procedures were approved and were consistent with the standards of the University of Toronto Research Ethics Board. Based on the effect size reported in Experiment 1 (

Stimuli and apparatus

The stimuli and apparatus for Experiment 2 were identical to those of Experiment 1, except the same young adult male model had his arms crossed in front of his chest (Figure 1 bottom).

Procedure and design

The procedure and design for Experiment 2 were identical to those of Experiment 1, where there were 16 blocks of 12 trials (2 target locations × 2 gaze directions × 3 SOAs), for a total of 192 trials, with the first 12 trials used as practice and not included in the subsequent data analysis.

Data analysis

The analysis protocols for Experiment 2 were identical to those of Experiment 1. Seventy-nine (79) trials (2.19% of the total trials) were removed due to the marker’s loss of tracking and another 14 trials (0.39%) were removed because their RTs were smaller than 100 ms or greater than 1000 ms, or their MT was greater than 1000 ms.
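The exclusion rule and the reported percentages can be checked with a small sketch. The denominator of 3,600 trials is an inference (20 participants × 180 analysed trials each, after dropping the 12 practice trials); it is used here because it reproduces the reported percentages. The example trials and the function names are hypothetical.

```python
RT_MIN_MS = 100
RT_MAX_MS = 1000
MT_MAX_MS = 1000


def keep_trial(rt_ms, mt_ms):
    """True if a trial passes the RT and MT criteria stated in the text."""
    return RT_MIN_MS <= rt_ms <= RT_MAX_MS and mt_ms <= MT_MAX_MS


def percent(count, total):
    """Percentage rounded to two decimals, as reported in the text."""
    return round(100 * count / total, 2)


N_PARTICIPANTS = 20
ANALYSED_TRIALS_EACH = 192 - 12  # first block of 12 trials is practice
TOTAL_TRIALS = N_PARTICIPANTS * ANALYSED_TRIALS_EACH

# Hypothetical (RT, MT) pairs in ms: anticipation, valid, slow RT, slow MT.
trials = [(85, 400), (250, 450), (1200, 500), (300, 1100)]
kept = [trial for trial in trials if keep_trial(*trial)]

tracking_loss_pct = percent(79, TOTAL_TRIALS)  # trials lost to marker dropout
timing_pct = percent(14, TOTAL_TRIALS)         # trials failing RT/MT criteria
```

Under this assumed denominator, 79 and 14 excluded trials correspond to 2.19% and 0.39% of the data set, matching the values given above.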

Results

Reaction time

A repeated-measures ANOVA showed a significant main effect of SOA, F(1.56, 30.28) =

Movement time

ANOVA showed that there was a significant main effect of SOA, F(1.98,37.63) = 4.81,

Trajectory area

Initially, Grubbs’ two-sided test for outliers with 95% CIs showed that there were 10 outlier segments (out of 600; or 1.67%), which were removed from the analysis. The ANOVA did not show any significant main effects, SOA:

Discussion

Participants in Experiment 2 were presented with a gaze model with his arms crossed in front of his chest, eliminating his potential to interact with the potential target (i.e., act-ability). Two main findings were reported. First, RT analysis revealed a facilitation effect of the gaze cue on participants’ attention during a manual reaching task. Specifically, RTs for the cued target were shorter than those for the uncued target when the SOA was relatively short, at 100 and 350 ms, and this difference disappeared at the longer SOA, at 850 ms. This finding is congruent with what was reported in Experiment 1, suggesting the importance of the torso and upper limbs in eliciting the facilitation effect. Second, trajectory area analysis did not show any significant effects for any SOA. This finding is consistent with the earlier prediction that the social gaze cue does not affect motor execution when the cueing model does not have the potential or ability to interact with the target.

General discussion

The current study investigated the underlying mechanisms of social cueing on movement execution. Following the approach of an earlier study (Yoxon et al., 2019), the present study used an upper-limb reaching task to evaluate the facilitatory and inhibitory effects of a nonpredictive gaze cue on attention and motor control. Unlike Yoxon et al., who presented the social gaze cue via a disembodied head, the current study presented participants with the entire upper body of a gaze cue model that either had the potential to interact with the target (Experiment 1) or did not (Experiment 2). Both temporal (RT and MT) and spatial (trajectory area) characteristics of the movement were evaluated. For the temporal characteristics, both experiments showed a modulating effect of the SOA on RTs for the cued and uncued targets, where RTs for the cued targets were shorter than for the uncued targets when the SOA was relatively short (100 and 350 ms). RTs on cued and uncued target trials were not different at a longer SOA (850 ms). Analysis of the spatial characteristics of the movement revealed something more intriguing: a facilitation effect at the 350 ms SOA and an inhibitory effect at the 850 ms SOA that emerged at around the middle-to-end portion of the movement. This pattern emerged in Experiment 1 when the hands of the model were near the targets, but not in Experiment 2 when the hands of the model were not near the targets. This contrast implies that social gaze cues may have a context-dependent influence on movement execution, where the act-ability of the model may produce gaze cues that lead to motor system activation.

Recall that Yoxon et al. (2019) found differing effects of gaze cues (from a disembodied head) and finger-pointing cues on movement planning and execution. Specifically, although gaze and finger-pointing cues led to changes in RTs, only finger-pointing cues affected reach trajectories. The current study provided the gaze model with the potential to interact with the target. Doing so mitigated the discrepancy between the head-only and finger-only stimuli and produced similar results in the reach trajectory as those reported in the finger-only experiment of Yoxon et al. This overall set of findings implies that a gaze cue that is made more socially- or action-relevant (via the presence of implied action) may be crucial in enabling the gaze cue’s effect on motor execution and control. Consistent with this idea, Chen et al. (2020) compared the cueing effect of a pointing finger with that of a pointing foot. Whereas the hand cue elicited the facilitation effect, the foot cue did not. In a social setting, directional cues are normally conveyed through hands, not feet. Therefore, the effect of directional social cues on attention and movement execution should also be contingent upon the social relevance of the cue itself: The addition of the gaze cue model’s torso and upper limbs, especially when the hands have the potential to interact with the target, also contributed to the enhanced social relevance of the cue.

The intricate interaction among motor planning and execution, social perception, and attention could potentially be related to the interaction between different visual pathways. In addition to the ventral (perception) and dorsal (action) pathways that emerge from early visual centres, Pitcher and Ungerleider (2021) suggested the existence of a third visual pathway dedicated to the dynamic aspects of social perception (relatedly, see Stephenson et al. (2021) for a review of the neural substrates that contribute to the shared-attention system). In terms of connectivity, this new pathway is hypothesised to start at the early visual cortex (V1) and project to the middle temporal area (V5/MT) before ending at the superior temporal sulcus (STS). The human STS has been shown to respond to various types of stimuli that are social in nature, such as biological motion (Thompson et al., 2005), human voice (Kriegstein & Giraud, 2004), language (Wilson et al., 2018), and, more relevantly, eye gaze (Engell & Haxby, 2007; Pelphrey et al., 2004). These findings suggest that the STS may play a role in establishing the gaze cueing effect. Furthermore, the involvement of the motion-selective area V5/MT is also crucial for the present discussion. Because the majority of the cells in V5/MT are directionally selective (DS), the area is considered to be specialised for visual motion (see Zeki (2015) for a review). Gilaie-Dotan (2016) suggested that V5/MT, along with the medial superior temporal (MST) area, utilises non-hierarchical connections to propagate relevant visual information to other brain areas, including those of the dorsal pathway that are responsible for visually guided reaching (Whitney et al., 2007).

Combining the knowledge of the STS and the hypothesised third, dynamic social pathway with that of V5/MT, the implications of the results from the current study become apparent. As the current study revealed, gaze cues could indeed affect movement execution. The mediating effect of the temporal offset between the gaze cue and target onsets on movement execution is consistent with the implied relationship between the dorsal and the dynamic social pathways. The common information processing component, V5/MT, could have contributed to the relationship between gaze cues (dynamic social pathway) and movement execution (dorsal pathway). Because the movement deviations due to the gaze cue occur in the later portion of the reach, it is likely that the information processed through the dynamic social pathway is projected to the dorsal pathway. The motor system, therefore, utilises both the direct input from V5/MT and the input from the dynamic social pathway. Because of the neural processing delay, the influence of the dynamic social pathway may not emerge until the later stage of action execution.

A potential limitation of the current study is the between-subject design for Experiments 1 and 2. This design was adopted to avoid any carry-over effects that may occur in a within-subject design: presenting participants with the same gaze model with and without act-ability in the same session may produce unwanted bias in either condition and, more critically, could lead participants to intuit that the model without act-ability (in the arms-crossed condition) could potentially act on the object. Furthermore, key predictions for the present study focused on the presence (Experiment 1) or absence (Experiment 2) of trajectory deviations, rather than on potential relative differences in the magnitude of any trajectory deviations. Given the design and critical predictions, RT and trajectory comparisons were performed between conditions (cued vs. uncued trials) within the same experiment. Such within-experiment comparisons are sufficient to reveal the presence or absence of the facilitatory and inhibitory effects in target prioritisation and action planning and execution. Future studies may consider adopting a within-subject design to provide an alternative approach to testing the hypotheses.

Finally, the results from the current study are consistent with calls for a shift in the methodology used to investigate the spatial cueing effect (e.g., Gallivan et al., 2018; Song & Nakayama, 2009). As mentioned in the Introduction, orienting of attention has been commonly studied using the spatial-cueing paradigm, which involves measurements such as RT using tasks such as button pressing (e.g., Posner & Cohen, 1984) or eye tracking (e.g., Rafal et al., 1989). However, in the context of social cueing under a more naturalistic setting, gaze cues tend to be associated with action execution. Because of the potential link between social perception and motor control, adopting an action-based evaluation method could yield more insights into the effects of social gaze cues from a functional perspective. In conclusion, the present study established a connection between social gaze cues and movement execution: giving the gaze cue model the potential to interact with the target enabled the social gaze cue to influence movement execution.

Acknowledgements

We would like to thank Goran Perkic for being the gaze cue model and Jacob Burgess for assisting with part of the data collection for this study. We would also like to thank Luis Jiménez and another reviewer for their thoughtful comments during the review process.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.