Abstract

The regularity of musical beat makes it a powerful stimulus promoting movement synchrony among people. Synchrony can increase interpersonal trust, affiliation, and cooperation. Musical pieces can be classified according to the quality of groove; the higher the groove, the more it induces the desire to move. We investigated questions related to collective music-listening among 33 participants in an experiment conducted in a naturalistic yet acoustically controlled setting of a research concert hall with motion tracking. First, does higher groove music induce (1) movement with more energy and (2) higher interpersonal movement coordination? Second, does visual social information manipulated by having eyes open or eyes closed also affect energy and coordination? Participants listened to pieces from four categories formed by crossing groove (high, low) with tempo (higher, lower). Their upper body movement was recorded via head markers. Self-reported ratings of grooviness, emotional valence, emotional intensity, and familiarity were collected after each song. A biomechanically motivated measure of movement energy increased with high-groove songs and was positively correlated with grooviness ratings, confirming the theoretically implied but less tested motor response to groove. Participants’ ratings of emotional valence and emotional intensity correlated positively with movement energy, suggesting that movement energy relates to emotional engagement with music. Movement energy was higher in eyes-open trials, suggesting that seeing each other enhanced participants’ responses, consistent with social facilitation or contagion. Furthermore, interpersonal coordination was higher both for the high-groove and eyes-open conditions, indicating that the social situation of collective music listening affects how music is experienced.

Introduction

Music is a universal human phenomenon that through history and across cultures is typically experienced in a social setting (Freeman, 2000). Even a seemingly passive exercise such as listening to a concert can be understood as a collective activity because the intentional joint attention to the music is a form of joint action (Fiebich & Gallagher, 2013). Indeed, listeners’ strongest musical experiences are often reported to take place during live concerts (Lamont, 2011). The power of shared musical experiences likely resides partly in the positive feedback between individuals’ excitement and the excitement of the crowd as a whole. This excitement is often expressed in rhythmic body movements, leading to the question of whether being able to see the body movements of others enhances movement energy and movement coordination among participants. Here, we investigate responses to music during collective listening, asking whether body sway and interpersonal synchrony across individuals are enhanced by (1) rhythmic characteristics of the music and (2) seeing the other members of the audience co-participating in the act of listening to the same concert, as well as whether these factors affect subjective experience.

There is a large literature on the ability of individuals to entrain their movements to sequences of regularly presented sounds and to the beat that can be perceptually extracted from complex music (Jantzen & Kelso, 2007; Levitin et al., 2018; London, 2012; Patel & Iversen, 2014; Repp & Su, 2013; Trainor & Marsh-Rollo, 2019). While many non-human animals execute rhythmic movements, few readily synchronise their movements to auditory rhythms, and this propensity of humans to move to auditory beats appears to be related to language and musical processing (Patel & Iversen, 2014). Indeed, the motor system is intimately connected with auditory rhythm perception, such that simply listening to a rhythm engages motor regions of the brain (Fujioka et al., 2012; Grahn & Brett, 2007). The human response to rhythms is very flexible, encompassing tempos from about 200 to 2,000 ms between beat onsets (Merchant et al., 2015). Furthermore, people extract the grouping or metrical structure from music in that they can tap or move different effectors on every beat, twice per beat, or on beat groupings, such as on every second beat as in a march or on every third beat as in a waltz (Toiviainen et al., 2010). Rhythmic entrainment is also evident in neural oscillations that phase align with presented auditory beats (Calderone et al., 2014; Chang et al., 2016, 2019; Fujioka & Ross, 2017; Fujioka et al., 2012; Iversen & Balasubramaniam, 2016; Large & Snyder, 2009). The importance of rhythm for humans can be seen in that poor perception of and/or entrainment to beats has been linked to all of the major developmental disorders, including dyslexia, autism, stuttering, attention deficits, and developmental coordination disorder (Trainor et al., 2018).

How individuals move in response to music with different characteristics has been less studied (but see Naveda & Leman, 2010; Toiviainen et al., 2010). However, the concept of groove, defined as the extent to which a piece makes you want to move (Janata et al., 2012), has been explored. High-groove music has been shown to increase the desire, readiness, and propensity to move (Janata et al., 2012; Madison, 2006; Stupacher et al., 2013). The evidence as to whether it increases the actual amount and/or energy of movement remains inconclusive (Hove et al., 2020; Hurley et al., 2014; Leman et al., 2017; Leow et al., 2014; Witek et al., 2017). Movement energy, defined more broadly as vigour, has emerged as a window on the interaction between reward prediction and motor control (Shadmehr et al., 2019). Depending on the nature of the task, vigour can correspond to quantities such as the speed with which one reaches to grasp a more or less desirable object or the energy with which one is walking towards a goal (Shadmehr & Ahmed, 2020). Given the oscillatory nature of body sway and musical rhythm, we applied a measure for the energy of an oscillator to determine whether physical responses to music are more energetic for high- compared to low-groove music. Furthermore, we addressed whether these responses are affected by the social context. Does the presence of fellow audience members lead to an enhanced response?

While individuals’ motor timing with respect to external pacing signals is a widely investigated topic, fewer studies have examined the coordination dynamics between two people engaged in tasks such as swinging a pendulum or rocking in chairs, in which spontaneous synchronisation often occurs (Demos et al., 2012; Richardson et al., 2007; R. C. Schmidt et al., 1990; R. C. Schmidt & O’Brien, 1997). These studies indicate that seeing the other person and the presence of an auditory pacing signal enhance interpersonal synchrony. Larger groups create the possibility for more complicated interactions where synchronisation depends not only on overall coupling and individuals’ intrinsic dynamics but also on group topology as a pattern of connectivity (Alderisio et al., 2017). Spontaneous motor synchronisation between conspecifics is also seen in non-human species that live in social groups (Couzin, 2018; Ravignani, 2018; Ravignani et al., 2014). Arguably, one of the benefits of such collective behaviour is to integrate information across the group and quickly influence group decisions (Couzin, 2018; Miller et al., 2013). But there are further reasons why we expect social settings to enhance individuals’ responses to music, namely joint or shared attention, humans’ affinity for spontaneous coordinated group action, and the social nature of the human brain.

In humans, social or joint attention is common. We have the ability to “tune to the same frequency” as others in a social setting and activate our collective attention to relevant information (Steinmetz & Pfattheicher, 2017). For example, the mere presence of observers with whom participants also share common contextual information homogenises their direction of visual attention (Richardson et al., 2007). Mere presence even has a role in solo tasks in that individuals’ performance is enhanced due to the presence of blindfolded observers who contribute no information (reviewed in Steinmetz & Pfattheicher, 2017). Joint attention is a critical developmental milestone that infants need to master in order to socialise and learn from others (Tomasello, 2014). Joint attention often leads to joint action, such as during applause, which can be understood as a fast social contagion (Mann et al., 2013) that can spontaneously give rise to rhythmic patterns among participants (Néda et al., 2000). Another example is musicians playing together without a conductor, a situation that involves joint attention, joint intention, and synchronisation (e.g., Chang et al., 2017, 2019; Palmer & Zamm, 2017; Volpe et al., 2016). In group “silent disco” dancing, where participants listen to their individual music over headphones, memory is enhanced for individuals who move in sync with each other (Woolhouse et al., 2016). Interpersonal movement synchrony increases in particular during the most intense moments of songs (Solberg & Jensenius, 2019), with more familiar songs (Ellamil et al., 2016), and in the presence of coupling between auditory, visual, and haptic modalities (Chauvigné et al., 2019).

A number of studies have shown that interpersonal synchronous movement can have profound social consequences. Synchronised movement encourages thinking about the partners’ mental states, so-called mentalizing (Baimel et al., 2018). A meta-analysis showed that even after taking some failed replications into account, synchronous behaviour positively impacts prosocial behaviour, bonding, and cognition (Mogan et al., 2017). After two people move together in synchrony, they report liking each other more and trusting each other more compared to after asynchronous movement (Hove & Risen, 2009; Valdesolo et al., 2010). Prosocial effects of interaction in musical contexts are enhanced if the movement is in synchrony with the musical beat (Stupacher et al., 2017). When playing a game involving the choice between cooperation and competition, participants will cooperate more with those with whom they moved in synchrony (Wiltermuth & Heath, 2009), and a dyad is more likely to be perceived as forming a social unit if it is moving in synchrony (Lakens, 2010). Such prosocial effects of synchronous movement are seen even in infancy (Cirelli et al., 2014, 2016; Cirelli, Trehub, & Trainor, 2018; Cirelli, Wan, et al., 2018; Trainor & Cirelli, 2015; Tunçgenç et al., 2015). Furthermore, some human activities are not merely enhanced by the group but acquire their meaning only through group activities (De Jaegher & Di Paolo, 2007), such as when chanting protest songs with others in a coordinated manner and so endowing the crowd with agency (Cummins, 2018). Finally, humans’ social capability for joint social attention and coordinated action may also be critical for our technological and cultural achievements (Barrett et al., 2010; Tomasello, 1999, 2014) facilitated by the fact that large parts of the human cortex respond to social stimuli (Frith & Frith, 2001; Schilbach et al., 2013).

Among the human activities discussed so far, music is one that is intrinsically social (Brown & Knox, 2017; Lamont, 2011). Yet, it has been difficult to investigate while achieving both ecological validity and experimental control (D’Ausilio et al., 2015). We conducted the present study in the LIVELab at the McMaster Institute for Music and the Mind, which combines a fully functioning concert venue with technology enabling control of the acoustics and motion capture of the entire audience. Joint attention and movement synchronisation are likely important parts of the audience concert experience, but research to date has not attempted to separate the contributions of moving in sync with the music and moving in sync with other audience members. Indeed, simply measuring movement responses at a concert confounds these two contributions. Furthermore, effects of rhythmic qualities of the music on audiences’ movement energy and interpersonal synchronisation have also been little explored, although one previous study found that fans of a performing rock group moved faster than neutral listeners in a concert situation (Swarbrick et al., 2019). Here, we manipulated the groove of the music and attempted to separate effects of moving in sync to the music from visual social cues to movement by manipulating whether audience members’ eyes were open or closed. We hypothesised that movement energy and interpersonal movement synchronisation among participants would be greater for high- than low-groove music and when visual social cues were present.

Method

Participants

Thirty-four (median age = 26, SD = 7.5, females = 20) healthy adults with no known hearing or movement impairments volunteered to take part in the experiment. They were attendees at a local conference dedicated to the topic of music cognition, hence they were not naive to the broad topic of the experiment. Data from 33 (N = 33) participants were analysed because one participant was seated outside the range of reliable motion capture. All attendees provided informed consent and the protocol was in agreement with the Declaration of Helsinki and was approved by the McMaster University Research Ethics Board. There were no explicit selection criteria and sample size was determined before data analysis on the basis of theoretical constraints, namely, our desire for larger group sizes, and practical constraints, namely, the cost and complexity of conducting the experiment.

Apparatus

The study was conducted in a laboratory and live performance space (LIVELab, Large Interactive Virtual Environment) equipped with active acoustics and motion tracking capacity covering the seating area. The auditorium’s sound system (Meyer Sound, Inc., CA) was used to deliver the acoustic stimuli. Participants were seated in concert hall chairs, 58 cm wide, arranged in rows spaced 120 cm apart.

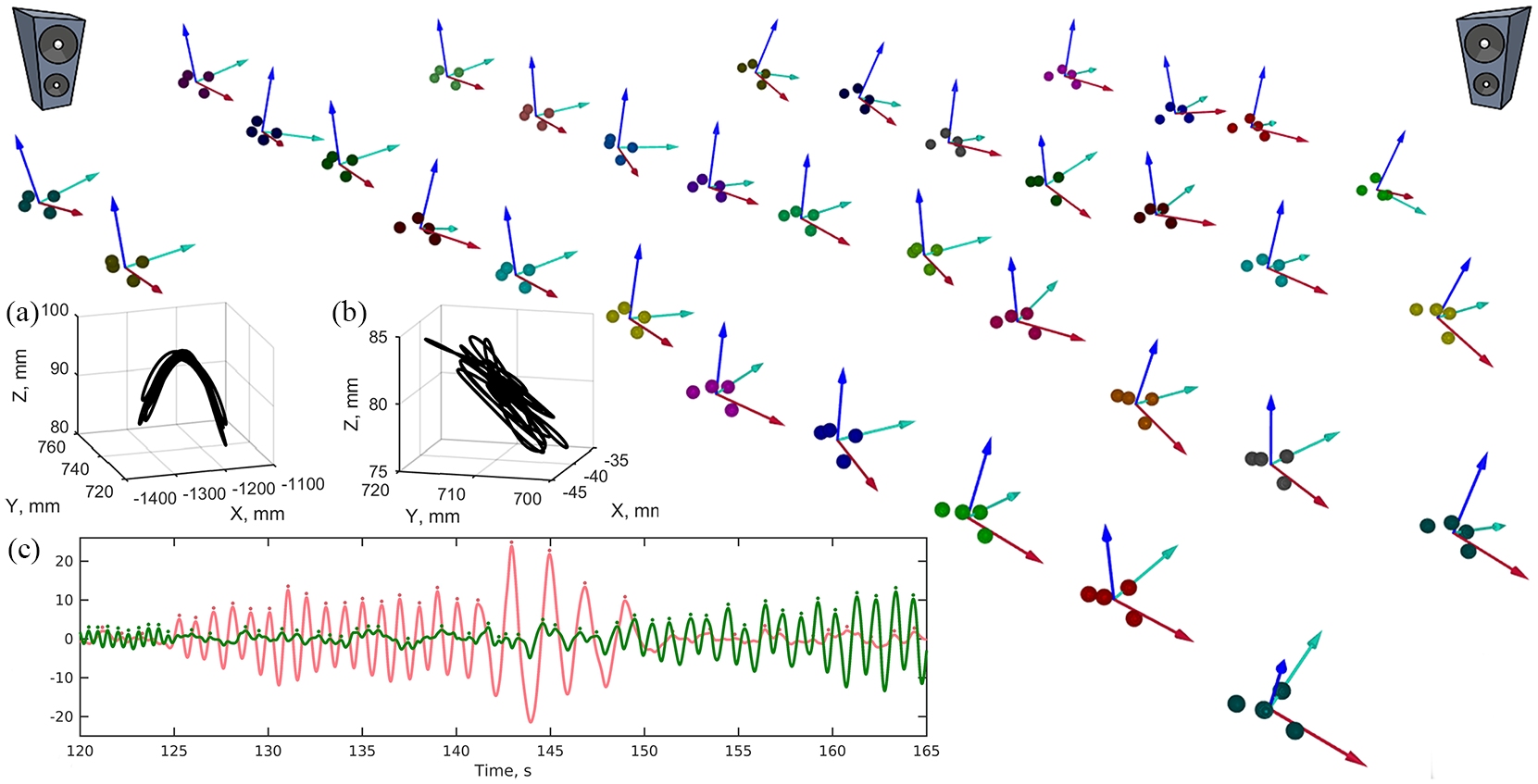

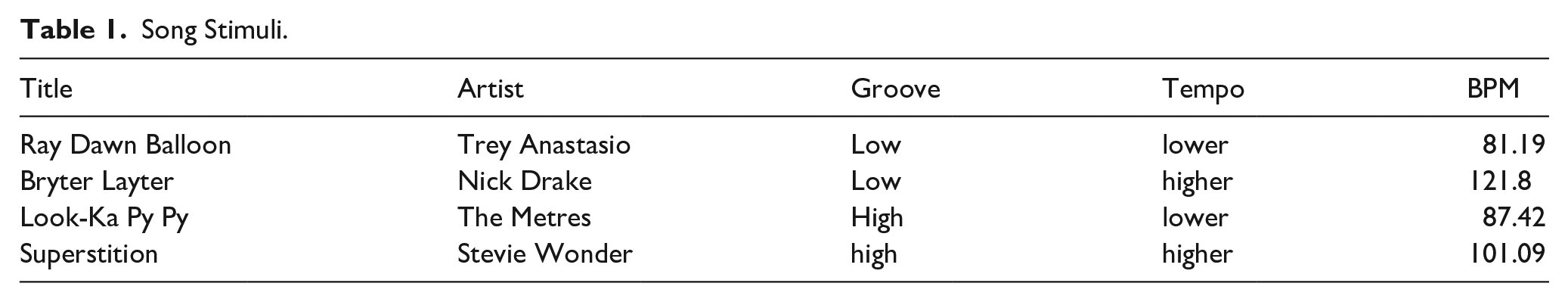

Head movements were recorded at a sampling rate of 100 Hz with a motion capture array of 24 infrared cameras and passive reflective markers (Qualisys Inc., Gothenburg, Sweden). Each participant wore a stiff felt cap with an elastic under-chin strap and four reflective markers placed on the top and on the lateral and frontal extremities near the edge. The four markers defined a rigid body with position and orientation in the coordinate system of the hall (see Figure 1).

The stimuli were four songs selected from among the lowest and highest ranking songs in a reference corpus on groove (Janata et al., 2012) and with lower/higher tempo. Average song tempos were extracted by marking the locations of the beats along the course of the song using the BeatRoot beat-finding software (Dixon, 2007) and then examining each song to make manual corrections if necessary.

Seating arrangement and motion capture in the concert hall. The locations of participants’ hats are shown as four-point rigid bodies; arrows represent head orientations. The audience faces the upper right corner. Insets show two representative modes of head movement, (a) side-to-side and (b) front-back. The 10 s sample trajectories are shown in the coordinate system of the room, where X is aligned with the medio-lateral dimension of the seats, Y with the antero-posterior, and Z with the vertical. Inset (c) shows movement in the first two principal axes from a selected time interval from one participant. Cycle-by-cycle analysis was performed by parsing the continuous movement using peak detection, as shown by the dots. The amplitude, period, and energy of these cycles were considered for further analysis. The licensing information for the speaker image within this Figure can be found here: https://www.pngitem.com/middle/JwwTT_speaker-clipart-hd-png-download/

Design

In addition to manipulations of stimulus groove and stimulus tempo, the presence of visual social cues to others’ movements was manipulated by asking participants to close their eyes on half of the trials. Specifically, using a within-subjects design, the factors of stimulus groove (high, low), stimulus tempo (higher, lower), and visual social cues (eyes open, eyes closed) were crossed for a total of eight conditions, presented in random order. Because all participants were tested as a group, they all experienced the same stimulus order; this single order of trials is a limitation of the design. To minimise carryover effects, participants heard 5 s of random notes before each stimulus was presented. One trial was recorded in each condition: each of the four songs was presented twice, once with eyes open and once with eyes closed.
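The crossing of the three factors can be written out explicitly. The following is a minimal sketch, not the authors' randomisation procedure; the seed and the variable names are hypothetical and serve only to make the example reproducible.

```python
from itertools import product
import random

# Factor levels as described in the Design section.
grooves = ["high", "low"]
tempos = ["higher", "lower"]
visual_cues = ["eyes open", "eyes closed"]

# Full 2 x 2 x 2 crossing: one trial per condition.
conditions = list(product(grooves, tempos, visual_cues))

# A single random order shared by the whole group (hypothetical seed).
rng = random.Random(0)
trial_order = conditions[:]
rng.shuffle(trial_order)

for i, (groove, tempo, cues) in enumerate(trial_order, start=1):
    print(f"trial {i}: {groove} groove, {tempo} tempo, {cues}")
```

Because the group is tested together, `trial_order` is drawn once and applies to every participant, which is why order effects cannot be separated from condition effects in this design.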

Task

Participants’ task was to listen to the stimuli and report their subjective experiences after each song. They were aware that their head movements were being recorded, but no specific instructions were given as to whether or how they should move to the music.

After each trial, each participant independently gave a grooviness rating, familiarity rating, emotional valence rating, and emotional intensity rating of the presented song. The directions for participants were as follows: “Grooviness is defined as the aspect of music that induces a pleasant sense of wanting to move along with the music” (extremely weak to extremely strong), “How familiar are you with this song?” (not familiar to very familiar), “How sad or happy did you feel while you were listening to the song?” (very sad to very happy), “How intense was this emotion?” (not intense to very intense).

Procedure

After hearing the instructions, signing informed consent forms, and filling out the questionnaires, participants performed the sequence of eight trials. A short pause was given after each trial for participants to rate each song on grooviness, familiarity, emotional valence, and emotional intensity. At the beginning of each trial, the experimenter instructed participants to keep their eyes open or closed. The experimenter and two assistants monitored the audience to make sure that participants complied with the eyes closed/eyes open instructions. However, it was not possible to check post hoc to verify that all participants fully complied. The first 5 s of each trial consisted of random notes to reduce carryover from trial to trial, followed by the 3-min song excerpt.

Movement analysis

Preprocessing

The movements of end effectors usually follow stereotypical trajectories and are constrained to a dynamic space with reduced dimensionality at any given time, but across repetitions this space can change (Wolpert et al., 2013). Principal Component Analysis (PCA), effectively a dimensionality reduction method when applied to multivariate movement recordings, extracts the relevant dimensions of movement and shows how they are aligned with the recording coordinate system (Daffertshofer et al., 2004). After applying PCA to the three-dimensional time series of head movements, separately for each participant and each trial, we found that on average the first two eigenvectors, here called dimensions of movement or principal components PC1 and PC2, accounted for 98.92% (SD = 1.09%) of the signal (see Figure 1c and the planar movement in Figure 1a and b). The eigenvectors were approximately aligned with the chairs’ medio-lateral and anterior-posterior dimensions. The average angle between each eigenvector and the corresponding axis of the frame of reference was 19.39° (SD = 11.87°). Across the 33 participants by 8 trials, the first eigenvector aligned with the x-axis in 60.66% of trials, the y-axis in 38.6%, and the vertical in 0.74%, indicating that participants’ movements were mostly constrained to the medio-lateral and/or anterior-posterior planes, and that the principal axis could switch between these planes across participants or across trials for the same participant. A band-pass filter (second-order, zero-phase) between 0.2 and 4 Hz was applied to the PC1 and PC2 time series to eliminate measurement noise, irrelevant movements at faster time-scales, and positional drift. (Separately, we confirmed that there was no activity in the higher frequency range; see Supplementary Figure S1.) Subsequent analyses were based on these two components. In particular, each of the 33 individual 3D trajectories recorded in each trial was reduced to two one-dimensional time series, PC1 and PC2. Hence, from here on PC1 and PC2 refer to two continuous time series of the movements of an individual participant in a single trial.
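The preprocessing steps described above can be sketched as follows. This is an illustrative reconstruction under stated assumptions, not the authors' code: the function names are hypothetical, the trajectory is synthetic, and the filter is assumed to be a Butterworth design run forwards and backwards (the source specifies only "second-order, zero-phase").

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

def principal_components(xyz):
    """Project an (n_samples, 3) head trajectory onto its principal axes."""
    centred = xyz - xyz.mean(axis=0)
    # Eigen-decomposition of the 3 x 3 covariance matrix.
    eigvals, eigvecs = np.linalg.eigh(np.cov(centred, rowvar=False))
    order = np.argsort(eigvals)[::-1]            # largest variance first
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    scores = centred @ eigvecs                   # columns: PC1, PC2, PC3
    explained = eigvals / eigvals.sum()          # proportion of variance
    return scores, eigvecs, explained

def bandpass(x, fs=100.0, low=0.2, high=4.0, order=2):
    """Zero-phase band-pass filter (Butterworth design is an assumption)."""
    sos = butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, x)

# Synthetic trajectory: large side-to-side sway, smaller front-back sway.
fs = 100.0
t = np.arange(0, 60, 1 / fs)
xyz = np.column_stack([
    2.0 * np.sin(2 * np.pi * 0.5 * t),
    0.5 * np.sin(2 * np.pi * 1.0 * t),
    0.01 * np.random.default_rng(0).standard_normal(t.size),
])
scores, axes, explained = principal_components(xyz)
pc1 = bandpass(scores[:, 0], fs)
pc2 = bandpass(scores[:, 1], fs)
```

In this toy case the first eigenvector lies along the x-axis and the first two components carry nearly all of the variance, mirroring the pattern reported for the real recordings.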

Cycle-by-cycle analysis

A major challenge in the analyses was the unconstrained nature of the task arising from the naturalistic design. When movement is elicited by music, different metric levels are distributed to different parts of the body in what seems to be a biomechanically constrained fashion; for example, gross-motor movement of the upper torso responds to long periods of two or four beats (Toiviainen et al., 2010). In the present case, movement often switched between the two principal axes, meaning that for different sections of a trial either the first or second axis represented the axis of primary movement, see Figure 1c. Different participants switched at different moments in each trial. Furthermore, sometimes movement periodicity (tempo) doubled or halved to match different metric levels of the stimulus beat (e.g., quarter note level; half note level) implying a sort of non-stationarity that is a problem for many potential time series analysis methods. Occasional large amplitude events were seen when participants shifted in their chairs.

An artefact rejection procedure was devised to eliminate extraneous events. Each raw time series was parsed into a discrete sequence of movement cycles defined by successive peaks in the trajectory, see Figure 1c. Peaks were detected initially based on velocity zero-crossings. Subsequently, amplitude (peak magnitude relative to the subsequent valley) and duration (minimum time from one peak to the next) were considered. Cycles with periods or amplitudes that exceeded the trial median by four standard deviations were removed and the respective part of the raw time series was replaced with zeros.
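A minimal sketch of this kind of cycle parsing and rejection is given below. It assumes that `scipy.signal.find_peaks` is an acceptable stand-in for velocity zero-crossing detection and omits the minimum-duration criterion and the zeroing of the raw series; the function names and the synthetic signal are hypothetical.

```python
import numpy as np
from scipy.signal import find_peaks

def parse_cycles(x, fs=100.0):
    """Split a trajectory into cycles delimited by successive peaks."""
    peaks, _ = find_peaks(x)
    valleys, _ = find_peaks(-x)
    periods, amplitudes = [], []
    for start, stop in zip(peaks[:-1], peaks[1:]):
        between = valleys[(valleys > start) & (valleys < stop)]
        if between.size == 0:
            continue
        valley = between[np.argmin(x[between])]
        periods.append((stop - start) / fs)        # seconds per cycle
        amplitudes.append(x[start] - x[valley])    # peak relative to valley
    return np.array(periods), np.array(amplitudes)

def within_limit(values, n_sd=4.0):
    """True for values that do not exceed the median by n_sd standard deviations."""
    return values <= np.median(values) + n_sd * np.std(values)

# Synthetic sway at 0.5 Hz with one large postural shift added at 30 s.
fs = 100.0
t = np.arange(0, 60, 1 / fs)
x = np.sin(2 * np.pi * 0.5 * t)
x[3000:3100] += 10.0 * np.hanning(100)

periods, amplitudes = parse_cycles(x, fs)
keep = within_limit(periods) & within_limit(amplitudes)
clean_periods, clean_amplitudes = periods[keep], amplitudes[keep]
```

Here the single oversized cycle is flagged by its amplitude while the regular 2 s cycles are retained.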

We applied Hartigans’ dip test of unimodality (α = .05; Hartigan & Hartigan, 1985) to the series of cycle parameters separately for each trial and participant. On average across participants, the unimodality null hypothesis was rejected for cycle amplitudes in 5% (SD = 3%) of trials and for cycle periods in 37% (SD = 12%) of trials, suggesting that periodicity possessed unsuitable statistical properties to be treated as a dependent variable.

Measures of movement

To characterise the movement of each participant in each trial we used four measures based either on the continuous movement trajectories or cycle-by-cycle parameters.

Tempo alignment

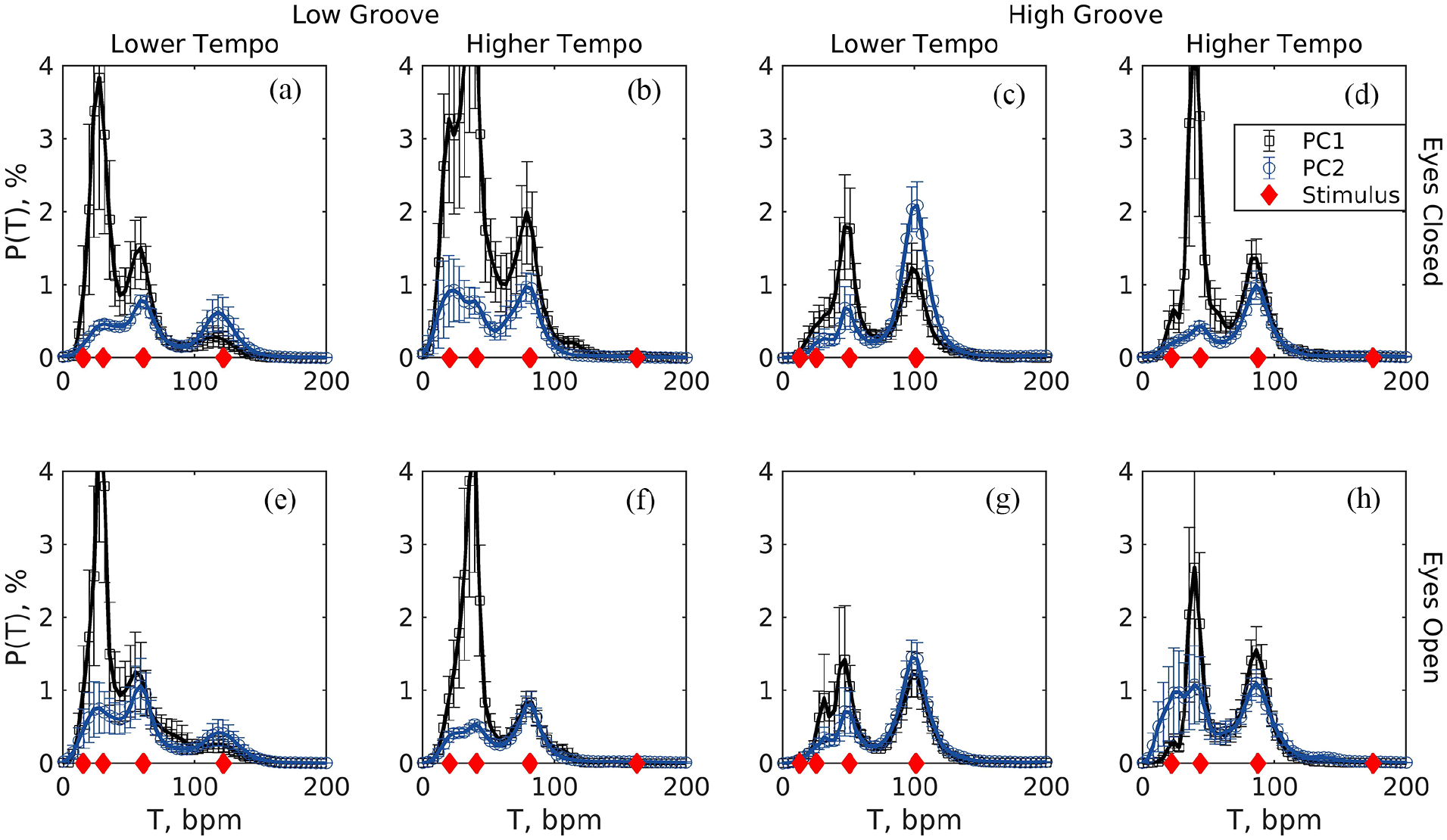

The degree to which participants’ movements matched the tempos of the stimuli was measured in terms of the absolute difference between the mode of movement tempo and the beat tempo at the closest metrical level. This is required because different participants can attend to different metric levels in the present conditions of free movement and complex musical stimuli, and it is necessary to verify that participants’ movements contained a periodicity corresponding to one of the metric levels of the stimulus (Burger et al., 2014). On a trial-by-trial basis, we took the cycle periods (see Cycle-by-cycle analysis), converted them to tempos, and localised up to four peaks in a probability density function estimated with an adaptive kernel-density method (Botev et al., 2010), see Figure 2.
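The logic of matching the movement tempo to the closest metrical level can be sketched as follows. Several simplifications are assumptions of this sketch rather than the authors' procedure: a fixed-bandwidth Gaussian kernel density (`scipy.stats.gaussian_kde`) replaces the adaptive estimator of Botev et al. (2010), only the single highest density peak is used, the difference is expressed relative to the matched tempo, and the four metric levels are taken to be 0.25, 0.5, 1, and 2 times the beat tempo.

```python
import numpy as np
from scipy.stats import gaussian_kde

def tempo_alignment(periods_s, beat_bpm, levels=(0.25, 0.5, 1.0, 2.0)):
    """Relative difference between modal movement tempo and nearest metric level."""
    tempos = 60.0 / np.asarray(periods_s)            # cycles per minute
    grid = np.linspace(tempos.min(), tempos.max(), 512)
    density = gaussian_kde(tempos)(grid)             # fixed-bandwidth stand-in
    mode = grid[np.argmax(density)]
    candidates = beat_bpm * np.asarray(levels)       # assumed metric levels
    nearest = candidates[np.argmin(np.abs(candidates - mode))]
    return abs(mode - nearest) / nearest, mode, nearest

# Hypothetical participant swaying about once per second to a 120 bpm song.
rng = np.random.default_rng(1)
periods = rng.normal(1.0, 0.03, size=150)
difference, mode, level = tempo_alignment(periods, beat_bpm=120.0)
```

In this example the sway is matched to the half-tempo level (60 cycles per minute), so the difference is small even though the participant does not move on every beat.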

Probability densities of cycle tempos in the first and second axes of movement (PC1 and PC2) averaged (SE) across participants in each of the eight trials (low/high groove × low/high tempo × eyes open/closed). (a) Low groove, lower tempo, eyes closed; (b) Low groove, higher tempo, eyes closed; (c) High groove, lower tempo, eyes closed; (d) High groove, higher tempo, eyes closed; (e) Low groove, lower tempo, eyes open; (f) Low groove, higher tempo, eyes open; (g) High groove, lower tempo, eyes open; (h) High groove, higher tempo, eyes open. Probability densities were estimated individually from each participant’s series of movement cycles using an adaptive kernel-density estimation method. Diamonds (red) along the x-axis show the stimuli tempos at four metric levels.

Amplitude

Movement amplitude describes the half-distance back-and-forth or side-to-side (i.e., in PC1 and PC2) of participants’ head trajectories. As stated, movement tended to fall either in the one or the other dimension and switch between them, hence, for a measure of movement amplitude we first took the median of the peak amplitudes of movement cycles separately in PC1 and PC2 and then averaged the two parameters.

Energy

The intensity or energy of physical movement to music has been measured in terms of movement speed (Leow et al., 2014), vigour (Atkinson et al., 2004; Swarbrick et al., 2019), or empowerment (Buhmann et al., 2016; Leman et al., 2017). For an oscillatory process, its amplitude, frequency, and other kinematic variables can describe its geometry, but they do not have direct physical or physiological meaning; increasing the energy of an oscillating physical system can increase its amplitude, its frequency, or both, depending on constraints. Furthermore, amplitude and frequency can be distributed bimodally even within the same trial as participants switch the metrical level to which they attend, that is, they can double the frequency and reduce the amplitude (see Figure 1c). We described the energy of oscillatory pendulum-like body sway by applying a method that follows biomechanical arguments and sums the velocity-dependent kinetic energy and amplitude-dependent potential energy.
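The authors' exact expression is not reproduced in this text. As a generic illustration only, the energy per unit mass of a simple harmonic oscillator sums a kinetic term, ½v², and a potential term, ½ω²x², where ω = 2π divided by the cycle period. The sketch below assumes this textbook form, and the function name and test signal are hypothetical.

```python
import numpy as np

def oscillator_energy(x, fs, period_s):
    """Energy per unit mass of a harmonic oscillator (textbook form, an assumption)."""
    omega = 2.0 * np.pi / period_s           # angular frequency of the cycle
    velocity = np.gradient(x, 1.0 / fs)      # numerical derivative of position
    kinetic = 0.5 * velocity ** 2            # velocity-dependent term
    potential = 0.5 * (omega * x) ** 2       # displacement-dependent term
    return kinetic + potential

# Sinusoidal sway with amplitude A = 2 and period 2 s.
fs = 100.0
t = np.arange(0, 60, 1 / fs)
sway = 2.0 * np.sin(2 * np.pi * 0.5 * t)
energy = oscillator_energy(sway, fs, period_s=2.0)
mean_energy = float(np.mean(energy))
expected = 0.5 * 2.0 ** 2 * np.pi ** 2       # 0.5 * A^2 * omega^2
```

For a pure sinusoid the two terms trade off so that the sum stays at ½A²ω², which illustrates why energy is less sensitive than amplitude or frequency alone to a participant halving the amplitude while doubling the frequency.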

Dimensionality

This measure captures the complexity of the movement by reflecting the relative extent to which both front-to-back and side-to-side movements were present in individual participants. As stated above, movement was confined to the axes of the first two principal components. The contribution of the second component relative to the first varied across individuals and trials, with most of the movement being in one dimension in some cases, but more distributed across the two dimensions in others. For this reason, we analysed as a dependent variable the percent variance of the original data explained by the second principal component divided by the same quantity for the first component.
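The ratio described above can be computed directly from the eigenvalues of the covariance matrix. This is a minimal sketch with a hypothetical function name and a synthetic trajectory, not the authors' implementation.

```python
import numpy as np

def dimensionality(xyz):
    """Percent variance explained by PC2 divided by that explained by PC1."""
    centred = xyz - xyz.mean(axis=0)
    eigvals = np.linalg.eigvalsh(np.cov(centred, rowvar=False))
    eigvals = np.sort(eigvals)[::-1]                 # PC1 first
    explained = 100.0 * eigvals / eigvals.sum()      # percent variance
    return explained[1] / explained[0]

# Synthetic sway: amplitude 2 side-to-side, amplitude 0.5 front-back.
t = np.arange(0, 60, 0.01)
xyz = np.column_stack([
    2.0 * np.sin(2 * np.pi * 0.5 * t),
    0.5 * np.sin(2 * np.pi * 1.0 * t),
    np.zeros_like(t),
])
ratio = dimensionality(xyz)
```

Values near 0 indicate movement along a single axis, whereas values approaching 1 indicate movement distributed evenly across both axes; in this example the variances are 2 and 0.125, giving a ratio of about 0.06.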

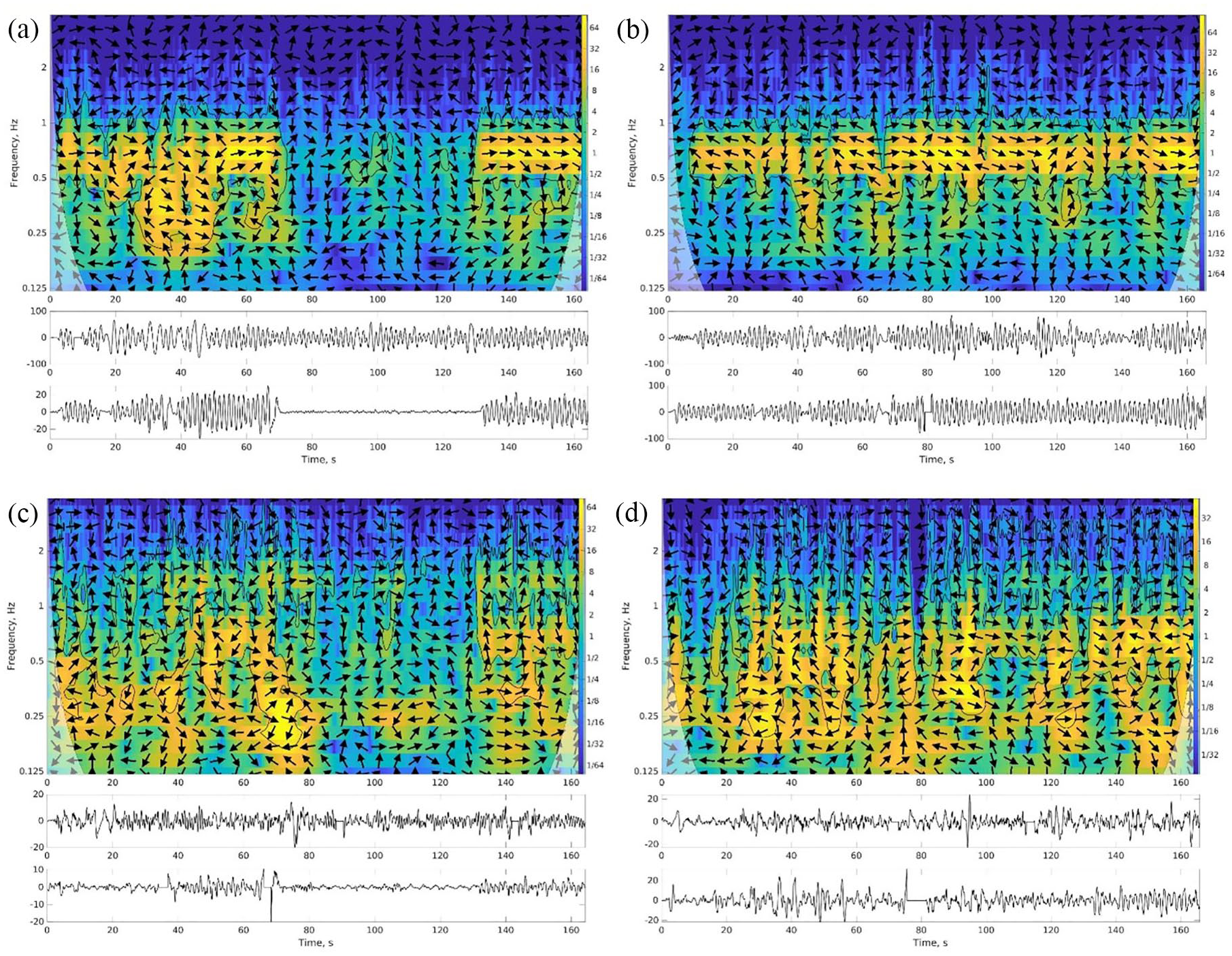

Interpersonal coordination

Interpersonal coordination between pairs of participants was measured with the cross wavelet transform (Grinsted et al., 2004). This gives a measure of how much the oscillations of each pair of participants match in frequency and amplitude. The method takes pairs of variables (movements of participants in this case) that fluctuate in time and returns their common power or so-called common features, matching oscillations across time and frequency (tempo of movement) bins. It has localisation in frequency because it analyses the signals in separate frequency bands, similar to spectral analysis, and also localisation in time (see Figure 3). The cross wavelet transform can account for nested rhythmic structure (Washburn et al., 2014) and is sensitive to the shifting patterns of coordination present even within short trials of postural tasks (Harrison et al., 2021). The toolbox from Grinsted et al. (2004) was used to analyse movement coordination separately in each of the two pre-processed dimensions (axes) of movement (i.e., PC1 and PC2), after filtering, artefact removal, and principal component analysis. The phases in the cross wavelet transform were not retained for further analysis. For the sake of data reduction, power was averaged across time and frequency bins of the cross wavelet transform, and then between the two axes of movement, resulting in a single measurement C for a given trial and participant pairing. (Treating each of the axes separately, not shown here, did not lead to qualitatively different results.) C was log-transformed to improve normality. Consistently across all trials and pairings, power exceeded the critical value for statistical significance (the bright regions in Figure 3), which was expected given that all participants were responding to a common stimulus.
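The computation of C can be illustrated with a self-contained sketch. It does not reproduce the Grinsted et al. (2004) toolbox: the Morlet transform is implemented here with a plain FFT, the signals are not normalised, the frequency grid is arbitrary, and no significance testing is performed. All function names are hypothetical.

```python
import numpy as np

def morlet_cwt(x, fs, freqs, w0=6.0):
    """Continuous wavelet transform with an analytic Morlet wavelet via FFT."""
    n = x.size
    x_hat = np.fft.fft(x - x.mean())
    omega = 2.0 * np.pi * np.fft.fftfreq(n, d=1.0 / fs)   # rad/s
    # Scales chosen so each wavelet's frequency response peaks at the target.
    scales = w0 / (2.0 * np.pi * np.asarray(freqs))
    out = np.empty((len(scales), n), dtype=complex)
    for i, s in enumerate(scales):
        psi_hat = np.pi ** -0.25 * np.exp(-0.5 * (s * omega - w0) ** 2) * (omega > 0)
        out[i] = np.fft.ifft(x_hat * psi_hat * np.sqrt(2.0 * np.pi * s * fs))
    return out

def coordination(x, y, fs, freqs):
    """Log of cross wavelet power averaged over time and frequency bins."""
    cross = morlet_cwt(x, fs, freqs) * np.conj(morlet_cwt(y, fs, freqs))
    return np.log(np.abs(cross).mean())       # phase is discarded

fs = 100.0
t = np.arange(0, 60, 1 / fs)
freqs = np.geomspace(0.2, 4.0, 30)
a = np.sin(2 * np.pi * 0.5 * t)
b = np.sin(2 * np.pi * 0.5 * t + 0.8)         # same tempo, shifted phase
c = np.sin(2 * np.pi * 1.5 * t)               # different tempo
c_same = coordination(a, b, fs, freqs)
c_diff = coordination(a, c, fs, freqs)
```

Two signals sharing a tempo yield higher common power than two signals at different tempos, regardless of their phase relation. This matches the use of C as a measure of matching oscillations rather than of phase locking.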

The cross wavelet transform of two variables fluctuating in time (lower insets) has high power for matching oscillations localised in time and frequency (coded as brightness). Shown here is a sample pair of participants. Left panels (a, c) are eyes closed trials and right panels (b, d) are eyes open trials with the same stimulus. Top panels (a, b) show the largest dimension of movement; bottom panels (c, d) show the second largest. Arrows indicate phase (right for in-phase, left for anti-phase).

Stimulus ratings

At the end of each stimulus presentation, participants rated the musical piece through self-report on four items: groove, familiarity, emotional valence, and emotional intensity. The groove question rated “the aspect of the music that induces a pleasant sense of wanting to move along with the music” on a scale from 0 (extremely weak) to 10 (extremely strong), following Sioros et al. (2014). Familiarity, valence (from sad to happy; L. A. Schmidt & Trainor, 2001), and intensity were rated on a six-point scale (0–5).

Statistical analyses

Linear mixed effects models (Bates et al., 2014; Singer & Willett, 2003) were used as an alternative to repeated-measures ANOVAs because the non-independence of observations in the eyes-open condition could potentially lead to under-estimated standard errors. The coefficients of the significant effects and interactions are reported in the text, with significance determined using the Satterthwaite method. The details and model-selection procedure (term-by-term model expansion with likelihood ratio tests at each step) are reported in the Supplementary Materials.
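The likelihood ratio test applied at each step of model expansion compares the log-likelihoods of two nested models fitted by maximum likelihood. The sketch below shows only that comparison, under the usual chi-square approximation; the function name and the log-likelihood values are hypothetical, and the model fitting itself is not shown.

```python
from scipy.stats import chi2

def likelihood_ratio_test(loglik_reduced, loglik_full, extra_params):
    """Compare nested models; extra_params is the number of added terms."""
    statistic = 2.0 * (loglik_full - loglik_reduced)
    p_value = chi2.sf(statistic, df=extra_params)   # upper-tail probability
    return statistic, p_value

# Hypothetical example: adding one fixed effect raises the log-likelihood by 5.
statistic, p_value = likelihood_ratio_test(-100.0, -95.0, extra_params=1)
print(f"chi-square = {statistic:.2f}, p = {p_value:.4f}")
```

A term is retained when the expanded model fits significantly better than the reduced one; otherwise the simpler model is kept.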

Results

Effects of stimulus groove, stimulus tempo, and visual social cues on individual movement parameters

Tempo alignment

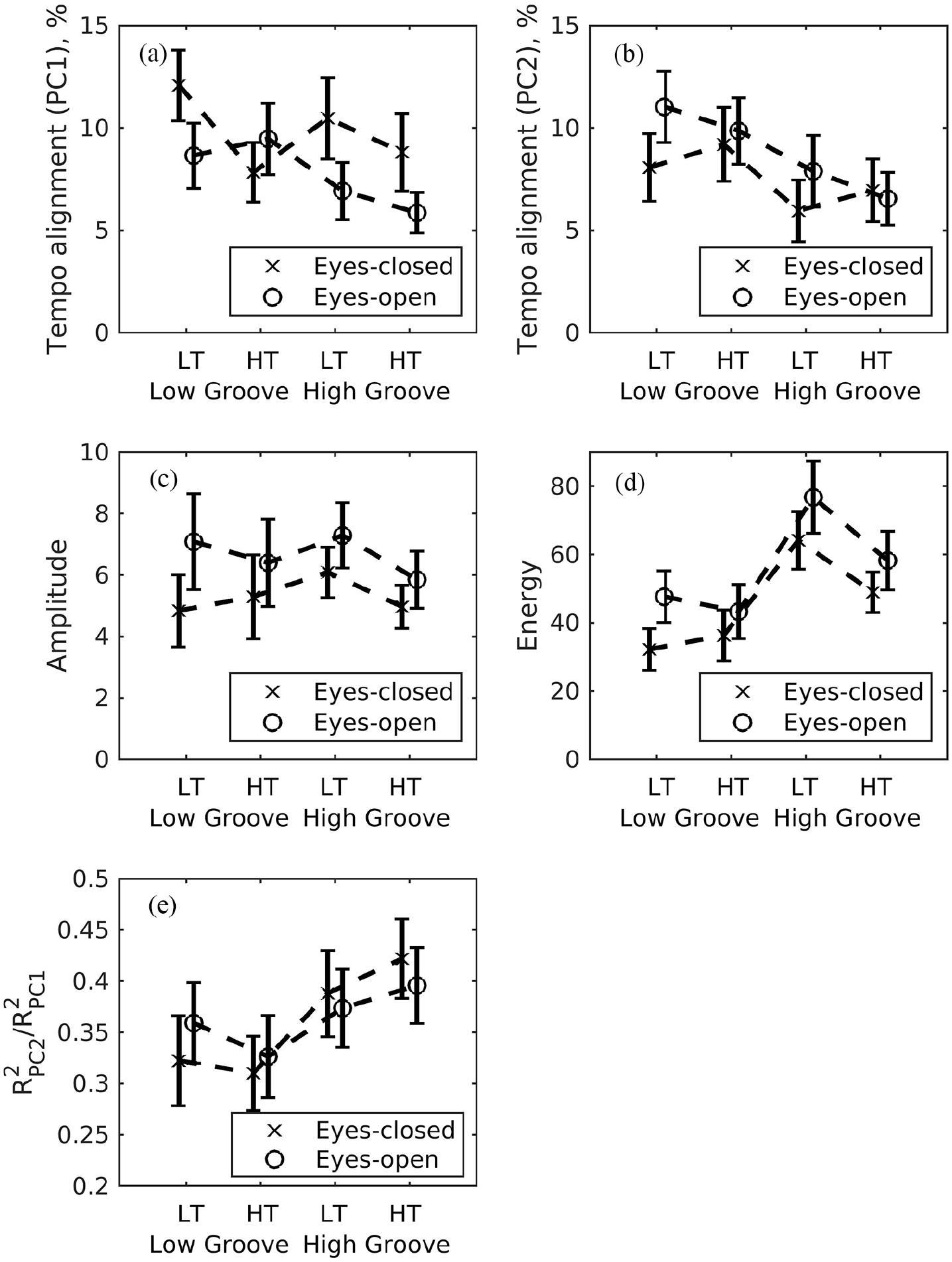

The percent difference between movement tempo and stimulus tempo changed little across conditions in the primary axis of movement (PC1) but tended to be smaller for high-groove stimuli, see Figure 4a. The selected model was the null model with an intercept as the trivial significant effect (β = 0.083, SE = 0.006, t = 13.83). In the second dimension of movement, the model consisted of a significant effect of groove (β = –0.029, SE = 0.010, t = –2.85), indicating that the subtler movements exhibited lower tempo difference (better tempo-matching) with higher groove (see Figure 4b).

Movement parameter means (SE) across visual social cues, stimulus groove, and stimulus tempo. LT = lower tempo; HT = higher tempo. (a and b) Tempo alignment in PC1 and PC2. (c) Amplitudes averaged across PC1 and PC2. (d) Energy averaged across PC1 and PC2. (e) Dimensionality as the ratio of amount of variance in PC2 relative to PC1.

Amplitude

There was a significant effect of visual social cues (β = 0.093, SE = 0.010, t = 8.91), indicating that movement amplitude was larger in eyes-open than eyes-closed trials (see Figure 4c).

Energy

The energy of body sway was larger with high- than low-groove stimuli (main effect of stimulus groove: β = 31.51, SE = 5.31, t = 5.94). There was also an interaction between stimulus groove and stimulus tempo (β = –18.87, SE = 7.51, t = –2.51), indicating that the increased movement energy for high-groove stimuli was more pronounced in slower than faster tempo trials (see Figure 4d and Supplementary Figure S2). In addition, there was a main effect of visual social cues (β = 14.87, SE = 5.31, t = 4.08), with higher movement energy for eyes-open than eyes-closed conditions.

Dimensionality

Relatively more movement in the second axis (as represented by PC2) is an indication of a higher movement dimensionality. The relative amount of movement in the second axis was significantly greater for high- than low-groove conditions (main effect of stimulus groove: β = 0.065, SE = 0.025, t = 2.60; see Figure 4e).
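A minimal sketch of such a ratio for two-dimensional sway data follows. It assumes that movement has been reduced to two horizontal coordinates per sample and that dimensionality is the variance along PC2 divided by the variance along PC1; the function name and the synthetic data are hypothetical.

```python
import math

def dimensionality_ratio(xs, ys):
    """Variance along PC2 divided by variance along PC1 for 2-D movement data."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    # Entries of the 2x2 sample covariance matrix [[a, b], [b, c]].
    a = sum((x - mx) ** 2 for x in xs) / (n - 1)
    c = sum((y - my) ** 2 for y in ys) / (n - 1)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (n - 1)
    # Closed-form eigenvalues of a symmetric 2x2 matrix.
    centre = (a + c) / 2.0
    radius = math.sqrt(((a - c) / 2.0) ** 2 + b ** 2)
    pc1_var, pc2_var = centre + radius, centre - radius
    return pc2_var / pc1_var

# Hypothetical sway: large side-to-side motion, smaller front-to-back motion.
t = [i / 50.0 for i in range(500)]
side = [3.0 * math.sin(2 * math.pi * 1.0 * s) for s in t]
front = [1.5 * math.cos(2 * math.pi * 1.0 * s) for s in t]
ratio = dimensionality_ratio(side, front)  # close to (1.5 / 3.0) ** 2 = 0.25
```

A ratio near 0 corresponds to sway along a single line, whereas a ratio near 1 corresponds to movement spread equally over both axes.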

Ratings of musical groove, familiarity, emotional valence, and emotional intensity

As expected, high-groove stimuli were rated as being higher in groove. In addition to the main effect of stimulus groove (β = 5.76, SE = 0.39, t = 14.77), there was an interaction between stimulus groove and stimulus tempo (β = –2.03, SE = 0.55, t = –3.69), indicating that participants rated the high-groove fast-tempo stimuli as lower on groove than the high-groove slow-tempo stimuli. The marginal means (SD) for rated groove in low-groove lower-tempo, low-groove higher-tempo, high-groove lower-tempo, and high-groove higher-tempo stimuli across eyes-closed and eyes-open trials were 3.16 (1.80), 4.18 (2.19), 8.82 (1.28), and 7.59 (2.17), respectively.

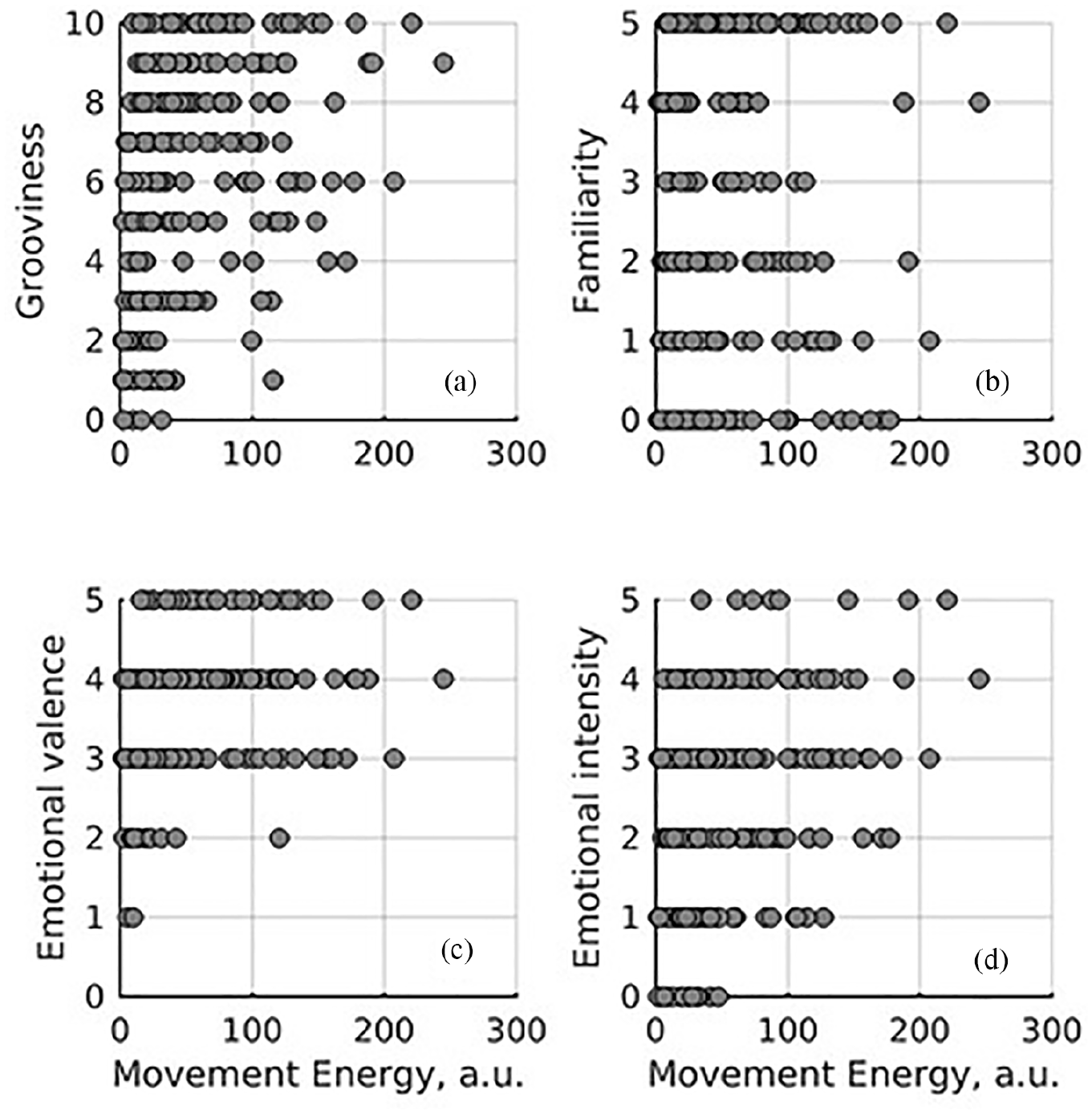

Of particular interest was the possible association between music ratings and movement energy (see Figure 5). Following the same linear modelling approach, we consecutively added ratings of groove, familiarity, emotional valence, and emotional intensity as predictors of energy. Groove (β = 2.46, SE = 0.71, t = 3.48), valence (β = 5.19, SE = 2.50, t = 2.08), and intensity (β = 4.26, SE = 1.79, t = 2.38) were positively associated with energy. That familiarity was not associated with energy, at least in this study, suggests that perception of stimulus features (i.e., groove, valence, intensity) affects movement energy more than whether the person had heard the music previously.

Figure 5. Association between movement energy and self-reported song ratings. Each point is a participant.

Interpersonal coordination

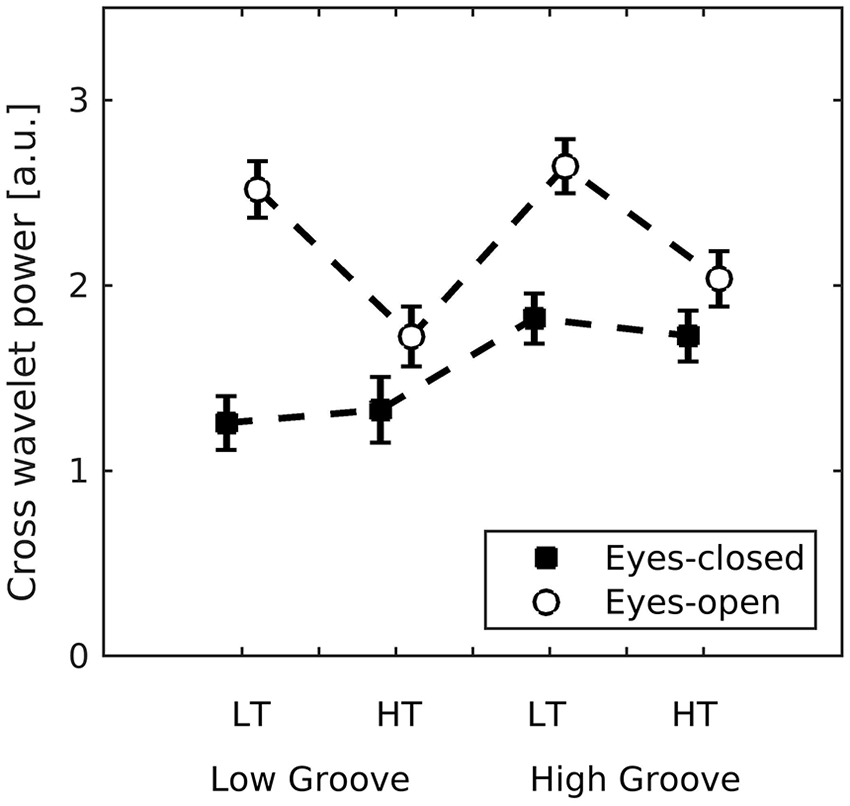

The mean power of the cross wavelet transform (C) served as the metric of interpersonal coordination. C was larger when visual cues were available and with high-groove stimuli (main effects of visual social cues [β = 1.32, SE = 0.11, t = 12.48] and stimulus groove [β = 0.60, SE = 0.11, t = 5.63]; see Figure 6). There was no main effect of stimulus tempo (p = .33). There was an interaction between visual social cues and stimulus groove (β = –0.45, SE = 0.05, t = –8.52), indicating a stronger effect of visual cues for low- than high-groove stimuli. A second interaction between visual social cues and stimulus tempo (β = –0.89, SE = 0.05, t = –16.77) indicated a stronger effect of visual social cues for slow- than fast-tempo stimuli. A third interaction between groove and tempo (β = –0.17, SE = 0.05, t = –3.22) indicated a stronger effect of high groove in slow-tempo trials. Finally, a three-way interaction between visual social cues, high groove, and fast tempo (β = 0.36, SE = 0.07, t = 4.84) indicated that C was higher for high-groove, fast-tempo trials in the eyes-open condition than for high-groove, fast-tempo trials in the eyes-closed condition. In sum, interpersonal synchronisation was higher when visual social cues were present (eyes open) and for high-groove stimuli, but the effect of visual social cues was strongest when stimulus groove was low, and these effects were moderated by stimulus tempo.

Figure 6. Mean (SE) interpersonal coordination (C) measured as the average power of the cross wavelet transform. This method returns high power for coinciding oscillations localised in time and frequency (see Figure 3). It was applied to each pair of participants and averaged across time, frequency, and the first and second dimensions of movement, and then log-transformed.

This measure of coordination was highly correlated with movement energy (r[262] = .79, p < .0001), which is not surprising given that the cross-wavelet transform reveals common power in the time-frequency domain.
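The logic of the coordination metric can be sketched for a single pair and a single movement dimension as follows. This is a simplified illustration, not the analysis pipeline of the study: the complex Morlet wavelet with w0 = 6, the direct-summation transform, the frequency grid, and all function names are assumptions, and the signals are synthetic.

```python
import cmath
import math

def morlet_cwt(signal, freqs_hz, sample_rate_hz, w0=6.0):
    """Continuous wavelet transform with a complex Morlet wavelet (direct sum)."""
    dt = 1.0 / sample_rate_hz
    n = len(signal)
    rows = []
    for f in freqs_hz:
        scale = w0 / (2.0 * math.pi * f)  # scale whose centre frequency is about f
        norm = math.sqrt(dt / scale) * math.pi ** -0.25
        row = []
        for i in range(n):
            acc = 0j
            for k in range(n):
                eta = (k - i) * dt / scale
                if abs(eta) > 4.0:  # wavelet is negligible beyond 4 scale units
                    continue
                # Conjugated wavelet: Gaussian envelope times exp(-i * w0 * eta).
                acc += signal[k] * cmath.exp(-1j * w0 * eta - 0.5 * eta * eta)
            row.append(norm * acc)
        rows.append(row)
    return rows

def log_mean_cross_wavelet_power(x, y, freqs_hz, sample_rate_hz):
    """Log of cross-wavelet power |Wx * conj(Wy)| averaged over time and frequency."""
    wx = morlet_cwt(x, freqs_hz, sample_rate_hz)
    wy = morlet_cwt(y, freqs_hz, sample_rate_hz)
    powers = [abs(a * b.conjugate())
              for row_x, row_y in zip(wx, wy)
              for a, b in zip(row_x, row_y)]
    return math.log(sum(powers) / len(powers))

# Synthetic example: two listeners swaying at 1 Hz (with a phase lag) versus
# one listener swaying at 1 Hz and another at 3 Hz.
fs = 20.0
t = [i / fs for i in range(200)]
a = [math.sin(2 * math.pi * 1.0 * s) for s in t]
b = [math.sin(2 * math.pi * 1.0 * s + 0.5) for s in t]
c = [math.sin(2 * math.pi * 3.0 * s) for s in t]
freqs = [0.5, 1.0, 2.0]
c_same = log_mean_cross_wavelet_power(a, b, freqs, fs)
c_diff = log_mean_cross_wavelet_power(a, c, freqs, fs)
```

In this sketch, the pair sharing a sway frequency yields the larger value even with a phase lag, because cross-wavelet power reflects power that the two signals have in common at the same times and frequencies. The same property explains why the metric scales with movement energy.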

Association between interpersonal coordination and interpersonal similarity of stimulus ratings

We examined whether the coordination between pairs of participants predicted how well their rated responses to the musical stimuli matched. We took the absolute difference in each rating within pairs of participants who were within 3 m of each other. C was not associated with interpersonal matching of groove, familiarity, valence, or intensity ratings, as shown by equivalence tests with Fisher’s z transformation (Goertzen & Cribbie, 2010; Lakens, 2017), which rejected the null hypothesis that the given correlation falls outside of the equivalence bounds (all p’s < .001).
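The reasoning behind such an equivalence test can be sketched as two one-sided tests on the Fisher-transformed correlation. The sketch below is a generic illustration under a normal approximation; the function names, the equivalence bound of .30, and the example correlation and sample size are hypothetical and are not the values used in the study.

```python
import math

def normal_cdf(z):
    """Cumulative distribution function of the standard normal."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def equivalence_test_r(r, n, bound):
    """Two one-sided tests (TOST) for a correlation using Fisher's z.

    Null hypothesis: the true correlation lies outside (-bound, +bound).
    Returns the larger of the two one-sided p-values; a small value
    supports equivalence (a negligible association).
    """
    se = 1.0 / math.sqrt(n - 3)
    z_r = math.atanh(r)
    # Test against the lower bound: is r significantly above -bound?
    p_lower = 1.0 - normal_cdf((z_r - math.atanh(-bound)) / se)
    # Test against the upper bound: is r significantly below +bound?
    p_upper = normal_cdf((z_r - math.atanh(bound)) / se)
    return max(p_lower, p_upper)

# Hypothetical inputs: r = .02 across 200 pairs, equivalence bounds of +/- .30.
p_equiv = equivalence_test_r(r=0.02, n=200, bound=0.30)
```

Unlike a conventional test, a small p-value here supports the absence of a meaningful association, because the null hypothesis is that the correlation is at least as large as the bound.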

Discussion

In an ecologically realistic context, we used motion capture to measure the body sway movements of an audience while we manipulated the groove and tempo of musical stimuli and the presence or absence of visual social cues in the form of eyes-open and eyes-closed conditions. We measured (1) characteristics of free movement, (2) interpersonal coordination, motivated by the embodied nature of musical groove and collective experience, and (3) subjective experience reflected in ratings of the groove, emotional valence, emotional intensity, and familiarity of each musical excerpt. In this naturalistic situation, neither body movements nor the multiple metric levels of each musical stimulus were strictly constrained as in a typical laboratory experiment. The first and second principal dimensions of motion corresponded roughly to side-to-side and front-to-back movements and captured 98% of the variance, although which movement direction was primary varied across individuals. For movement vigour we used a biomechanically based measure of oscillator energy (Dotov & Frank, 2011; Frank, 2010). We found that movement energy and interpersonal synchrony both increased with high-groove stimuli and in the presence of visual social cues, and that ratings of groove, emotional valence, and emotional intensity correlated with movement energy. Together, these findings indicate that characteristics of the music and the social context both influence audience experiences and behaviours.

Initial analyses of movement revealed that the secondary axis of movement (represented by PC2) explained a greater proportion of the movement variance when groove was high compared to low, indicating that high-groove music engendered more complex movement patterns. Furthermore, particularly for the secondary axis of body sway (PC2), movements were most closely aligned to the stimulus tempo for high-groove stimuli. These findings suggest that higher groove leads to higher movement complexity and better tempo matching, particularly for secondary movements. Previous studies have indicated that different elements of the rhythm, such as those involving more or less syncopation, could be expressed in different body parts such as torso versus hands (Witek et al., 2014). It remains an interesting question for future research to investigate how interpersonal synchrony during collective music listening is affected by different aspects of the rhythm, such as amount of syncopation, and how interpersonal synchrony of different parts of the body relate to the tempos of different rhythmic metrical levels.

With respect to movement energy, which combines movement amplitude and speed to give a biomechanically-relevant indication of movement vigour, the analyses revealed that energy was higher for high- than low-groove music, particularly for slower tempos, indicating that perceptual characteristics of the music influence how people move. Movement energy was also higher in eyes-open compared to eyes-closed conditions, indicating that the presence of cues to other audience members’ movements increased the energy of people’s movements. This is consistent with a previous study showing that participants danced more in a small group of four than individually (De Bruyn et al., 2009) and it is consistent with the notion of social facilitation of movement (Steinmetz & Pfattheicher, 2017). We cannot rule out that participants decreased their movements in the eyes-closed condition because they were afraid of bumping into something. However, this is unlikely as they were seated and in wide armchairs that each had individual armrests, and previous work shows that reducing or eliminating visual feedback leads to the increase, not decrease as in our case, of several dynamic aspects of postural sway (Edwards, 1946; Kinsella-Shaw et al., 2006). With respect to ratings, when comparing embodied and subjective responses, higher movement energy was associated with higher ratings of groove and more positive ratings of emotional valence and higher ratings of emotional intensity, confirming the idea that subjective music experiences are related to body movement.

Interpersonal coordination (or matching) of body sway as measured by the cross-wavelet power was higher for high-groove music and when visual social cues were present (eyes-open condition). These factors interacted, such that the increase of interpersonal coordination when visual social cues were present was stronger for low- than high-groove music, and these effects were modulated by the tempo of the music, such that the effects of groove and visual social cues were larger at slower tempos. This suggests that visual social cues are particularly powerful in conditions where synchrony to the music might be more challenging, that is, when groove is lower and tempo is slower. In general, however, increased groove and the presence of visual social cues enhanced the coordination as predicted. The manipulation of eyes open versus eyes closed here was critical for showing the effects of being in an audience. Because of the presence of common drive where each individual can synchronise to the music itself, measures of coordination can be high even without actual interpersonal interaction. The increase in interpersonal coordination from eyes closed, where individuals can only synchronise with the music, to eyes open, where individuals can both synchronise to the music and with each other, implies the role of interpersonal interaction. Previous studies have shown that synchronous movement between people leads to increased social affiliation, liking, trust and cooperation (Cirelli et al., 2014, 2016; Cirelli, Trehub, & Trainor, 2018; Cirelli, Wan, et al., 2018; Hove & Risen, 2009; Mogan et al., 2017; Trainor & Cirelli, 2015; Tunçgenç et al., 2015; Valdesolo et al., 2010). It is therefore likely that the interpersonal synchrony experienced at concerts is in part responsible for people’s often intense enjoyment of the concert experience (Lamont, 2011). Indeed, musical events are among those human activities that can draw people together and induce powerful spontaneous social effects (Brown & Knox, 2017; Burland & Pitts, 2014).

Interestingly, unlike in the case of movement energy, interpersonal movement coordination was not associated with how much participants matched in movement energy or in ratings of stimulus groove, familiarity, emotional valence, and emotional intensity. Thus, when two people match closely in their movements, it does not necessarily mean they have similar subjective experiences of the music. It is possible that synchronous behaviour in pairs is too narrow a construct to account for the rich and flexible forms of social interactions that underlie unconstrained collective behaviour in the real world. Synchronisation is a very specific phenomenon within the diversity of possible forms of dynamic coordination (Pikovsky et al., 2003). Given the free and collective form of the present study, we cannot rule out the possibility that there were effects beyond simple synchronisation as well as group-to-person interactions in addition to person-to-person interactions. Much research has focused on synchrony as it is relatively easy to define, measure and control in an experimental setting. In controlled behavioural lab studies, synchronisation can happen spontaneously and without instruction if, for example, the two participants are facing each other and performing an identical movement (Oullier et al., 2008). When participants are exposed to an artificial adaptive stimulus, stimulus interactivity increases spontaneous entrainment above and beyond predictability (Dotov et al., 2019; Nakata & Trainor, 2015). Interestingly, spontaneous synchronisation is not necessary or can even be detrimental for optimal performance depending on the task space (Cuijpers et al., 2015; Lahnakoski et al., 2020; Wallot et al., 2016), again suggesting that additional forms of coordinated interactions are important. Perhaps future research should consider “generalized synchronization” (Friston & Frith, 2015) or coordination (Amazeen et al., 1995) as the theoretical basis of interpersonal interaction. Coordination theory can also accommodate more complicated dynamic forms of interaction such as causal mutual influence at a delay between musicians (Chang et al., 2017, 2019) or complex alignment (Zapata-Fonseca et al., 2016). Thus, to fully understand interpersonal experiences in an audience situation, future studies will need to examine different types of dynamic coordination among people experiencing music in a group as well as across diverse situations.

Consistent with this interpretation is our finding that synchronisation with the musical stimuli was good but not perfect, with an average tempo difference between the music and participants’ movements of about 5%–10%. It is beyond the scope of this study to investigate whether this was due to limitations in participants’ abilities for full-body sensorimotor synchronisation as they were seated, or because they tended to sway not only to the stimulus tempo but also to interact with each other, or whether their movements reflected responses to higher level phrasing and emotional expression in addition to synchronisation with the beat (Chang et al., 2017, 2019). This latter possibility is intriguing in that it suggests that the movements of audience members might reflect several processes, including synchronising to the beat of the music, synchronising with each other, and expressing reactions to other aspects of the music such as phrasing and emotional content.

Other important considerations regarding when different forms of coordinated behaviour may emerge include intentionality and group size. Collective intentionality can assume various forms (Zahavi & Satne, 2015). We-intentionality, when we pursue activities and common goals as a group, might be more basic than joint intention (Satne & Salice, 2020), with the latter more likely to give rise to more synchronous movements. It is also informative to consider coordination behaviours in other species. Various collective coordination processes among groups of conspecifics, loosely referred to as synchronisation, have been studied in various species and in some cases their adaptive value has been determined (Couzin, 2018; Ravignani, 2018; Ravignani et al., 2014). Yet, pure synchrony appears to be a relatively rare adaptation occurring in only a few species such as humans, rather than as a universal form of inter-individual coordination within a group. It should be clear why this is the case if one considers that the environmental constraints on group activities often give advantage to non-synchronous movement. Hunting, harvesting, preparing food, and building a shelter would be less efficient if group members mirrored each other’s movements. In flocking birds, pure synchrony could be harmful because it would make it difficult for the cloud to break and disperse when under attack by a predator (Couzin, 2018).

In humans, however, synchronous movement appears to play an important role. Synchrony with others increases feelings of affiliation and cooperation, even in the infancy period (Cirelli et al., 2014, 2016; Cirelli, Wan, et al., 2018; Hove & Risen, 2009; Mogan et al., 2017; Trainor & Cirelli, 2015; Valdesolo et al., 2010). Because of its temporal regularity, music is an ideal stimulus for promoting synchronous movement. Indeed, music is found at all social gatherings where people come together for a common purpose or to experience a common emotion, including weddings, funerals, parties, political rallies, and military exercises. Indeed, it has been proposed that the ability of music to strengthen social bonds within a group may have been an evolutionary adaptation that increased survival at the group level (Huron, 2001; Trainor, 2015).

Musical pieces vary in how much they make us want to move, that is, in groove, but past research has only examined this in individuals. This research shows that listeners are quite consistent in their ratings of groove across musical pieces and that pieces rated high in groove engender more movement than those rated as low (Janata et al., 2012; Madison, 2006; Stupacher et al., 2013). In the present study we addressed to what extent the experience of groove is grounded in body movement energy and, importantly, to what extent it is enhanced in social settings due to interpersonal interactions. Indeed, we confirmed that there is a movement energy response to groove and that the response is enhanced when in a group of similarly moving people. Not only did higher groove pieces induce greater movement energy in audience members, but also interpersonal synchrony was greater with higher groove. Lower groove pieces benefitted particularly from visual social cues in that movement synchrony increased to a greater extent with eyes open than closed for low- than for high-groove pieces.

The present study is among the first to combine controlled manipulation of conditions, rigorous measurement and analysis of movement, and an ecologically valid music listening situation involving a group of over 30 audience members. At the same time, there were several limitations to this study. Because all participants were tested at the same time, all received the same stimulus order. Although we did incorporate 5 s of random notes between trials to help erase memory of the previous trial, we were not able to assess potential order effects. Although unlikely given our setup, we cannot rule out that in the eyes-closed condition participants decreased movement to avoid bumping into anything. We attempted to separate the groove and tempo of the musical stimuli by including a higher and lower tempo version of each, but the actual tempo difference between lower and higher tempo songs was larger for low- than high-groove stimuli (see Table 1). Furthermore, although the tempos of the songs in our stimulus set had a fairly large range (81–101 BPM), the set did not include songs with very slow or very fast tempos. Our main interest was in effects of groove, so it remains for future research to investigate effects of tempo in a more rigorous manner.

Table 1. Song stimuli.

Conclusion

People seek out concerts where they can experience music with others, and collective musical experiences are ubiquitous across human societies. Combining an ecologically valid audience experience with controlled experimental manipulations, precise measurement of movement and biologically-motivated measures of movement energy, we found that audience members moved with higher energy when the music was higher in groove, and when they could see other audience members. Furthermore, higher energy movement was associated with higher subjective ratings of groove and emotional valence and emotional intensity. Importantly, interpersonal synchrony was also higher for higher groove music and when the audience could see each other, confirming that audience members were not moving in sync simply due to entraining to a common musical stimulus. Together these results show that collective behaviour and characteristics of the music influence individual physical, interpersonal, and subjective responses to the music.

Supplemental Material

Supplemental material, sj-pdf-1-qjp-10.1177_1747021821991793 for Collective music listening: Movement energy is enhanced by groove and visual social cues by Dobromir Dotov, Daniel Bosnyak and Laurel J Trainor in Quarterly Journal of Experimental Psychology

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by grants to LJT from the Social Science and Humanities Research Council of Canada, the Natural Sciences and Engineering Research Council of Canada, the Canadian Institutes of Health Research, and the Canadian Institute for Advanced Research.
