Abstract

In the past decade there has been a lot of attention to the quality of the evidence in experimental psychology and in other social and medical sciences. Some have described the current climate as a ‘crisis of confidence’. We focus on a specific question: how can we increase the quality of the data in psychology and cognitive neuroscience laboratories? The challenges of the field are related to many different issues, but we believe that increasing the quality of the data collection process and the quality of the data per se will be a significant step in the right direction. We suggest that the adoption of quality control systems that parallel the methods used in industrial laboratories might be a way to improve the quality of data. We recommend that administrators incentivize the use of quality systems in academic laboratories.

In the past decade, it has become apparent that researchers (especially in social and behavioral sciences) need to focus their attention on the quality of the empirical evidence they produce. Some have described the current climate as a ‘crisis of confidence’ (Nosek and Lakens, 2014; Pashler and Wagenmakers, 2012; Rouder et al., 2016). In a seminal article that has been cited more than 4000 times, Ioannidis (2005) claimed that ‘most published research findings are false’. Since then, news of data fabrication (e.g. Callaway, 2011), the publication of paranormal phenomena in a flagship journal (Bem, 2011), and a lackluster reproducibility rate (Open Science Collaboration, 2012) have called into question the credibility of academic publishing and the scientific endeavor. Media outlets have eagerly reported, and perhaps overstated, the extent of the problem, declaring that ‘science is broken’ (Gobry, 2016; or that ‘science is not broken’: Aschwanden, 2015).

The reasons for the current climate in the behavioral sciences are diverse and range from the dominant statistical methods to the incentive systems in academia (see Levelt et al., 2012, particularly Section 5, for an interesting take on the intersection of the incentive system and the dominant research methodologies). The proposal presented here does not address cases of outright fraud; instead, we try to reach the well-intentioned researcher who attempts to produce the best possible scientific work. In particular, we want to focus on a specific question: how can we increase the quality of the data in psychology and cognitive neuroscience laboratories? Our assumption is that behavioral and social scientists are not less ethical than scientists from other disciplines, but we suspect that the noisiness of the data obtained from human behavior is a contributor to these fields’ problems. It is a research ethics imperative to reduce the sources of noise in our data by implementing data quality systems.

Academic laboratories in the behavioral sciences do not have external controls (e.g. they are never audited by regulatory or licensing entities), and scientists seldom get trained in quality systems. Industrial laboratories have a very different culture, in which quality systems are widely used. High quality standards are an imperative for industrial activities such as pharmaceutical production, as there are external forces like governmental regulations and market preferences that cannot be ignored. Even a small pharmaceutical plant employs scientists who deal with regulatory agencies at the local and federal level (see Lawrence and Woodcock, 2015, for a description of a new program of quality assessment within the Food and Drug Administration).

Of particular interest to us are the tasks performed by two related but distinct areas within quality systems in industrial settings: quality assurance (QA) and quality control (QC). Both ensure the quality of an output (a service, a product, data, etc.). QA relates to the planned and systematic activities implemented in a system to ensure that specifications are met: it oversees the process and adopts the methodology for testing and decision-making. On the other hand, QC relates to the activities and methodologies used to assess the quality attributes of a service or a product: it is in charge of the specific actions and tests that are used for determination of conformance to specification (American Society for Quality, 2016).

Although highly interrelated, industrial QA and QC have different foci. This can be illustrated with the following example. Imagine that a hypothetical pharmaceutical company (NoMoreCough Labs) produces a cough syrup. During manufacturing, a sample of the product is taken to the QC lab according to a schedule determined by the QA department. QC performs a battery of tests following a set methodology that compares the product’s features (e.g. pH, viscosity, potency) against specifications approved by QA. The results are provided to QA to determine a course of action. If the results of the tests performed by QC are within the specification limits set forth by QA, manufacturing can continue; if any test yields a non-conforming result, QA determines which actions to take. Ideally, there will be protocols to be followed for different non-compliant results to protect patient safety and assure the final quality of the product. In order to comply with the federal regulations from the FDA (Food and Drug Administration, 2015), NoMoreCough Labs has to take action: they either reject the failing product, or make approved adjustments to bring the product into specifications and re-test.
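The division of labor in the NoMoreCough Labs example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the attribute names, the numeric limits, and the functions `qc_test` and `qa_decision` are hypothetical and are not taken from any real specification or regulation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Spec:
    """QA-approved acceptance range for one product attribute."""
    low: float
    high: float


# Hypothetical specification limits; real limits would be set by the QA department.
SPECS = {
    "pH": Spec(4.0, 5.0),
    "viscosity_cP": Spec(80.0, 120.0),
    "potency_pct": Spec(95.0, 105.0),
}


def qc_test(sample: dict, specs: dict) -> dict:
    """QC step: report, per attribute, whether the measurement conforms."""
    return {name: spec.low <= sample[name] <= spec.high
            for name, spec in specs.items()}


def qa_decision(results: dict) -> str:
    """QA step: choose a course of action from the QC results."""
    failing = sorted(name for name, ok in results.items() if not ok)
    if not failing:
        return "continue manufacturing"
    return "reject or adjust and re-test: " + ", ".join(failing)


if __name__ == "__main__":
    sample = {"pH": 5.6, "viscosity_cP": 100.0, "potency_pct": 99.0}
    print(qa_decision(qc_test(sample, SPECS)))
```

The point of the separation is that `qc_test` only measures and compares, whereas `qa_decision` owns the specification and the response to a non-conforming result.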

Quality systems in behavioral laboratories

In our experience, academic research groups in the cognitive and psychological sciences develop mostly informal quality policies that are rarely articulated explicitly. It is not uncommon for junior graduate students to ‘mess up’ a few times before they start to adopt their own quality habits. In this environment, the development of formal, explicit, and enforceable quality policies would be beneficial for everyone involved, and these benefits would quickly outweigh the costs of developing and enforcing these systems. Some of the benefits include minimizing the waste of resources on failed studies, facilitating the adoption of open science practices, and improving the signal-to-noise ratio in the data.

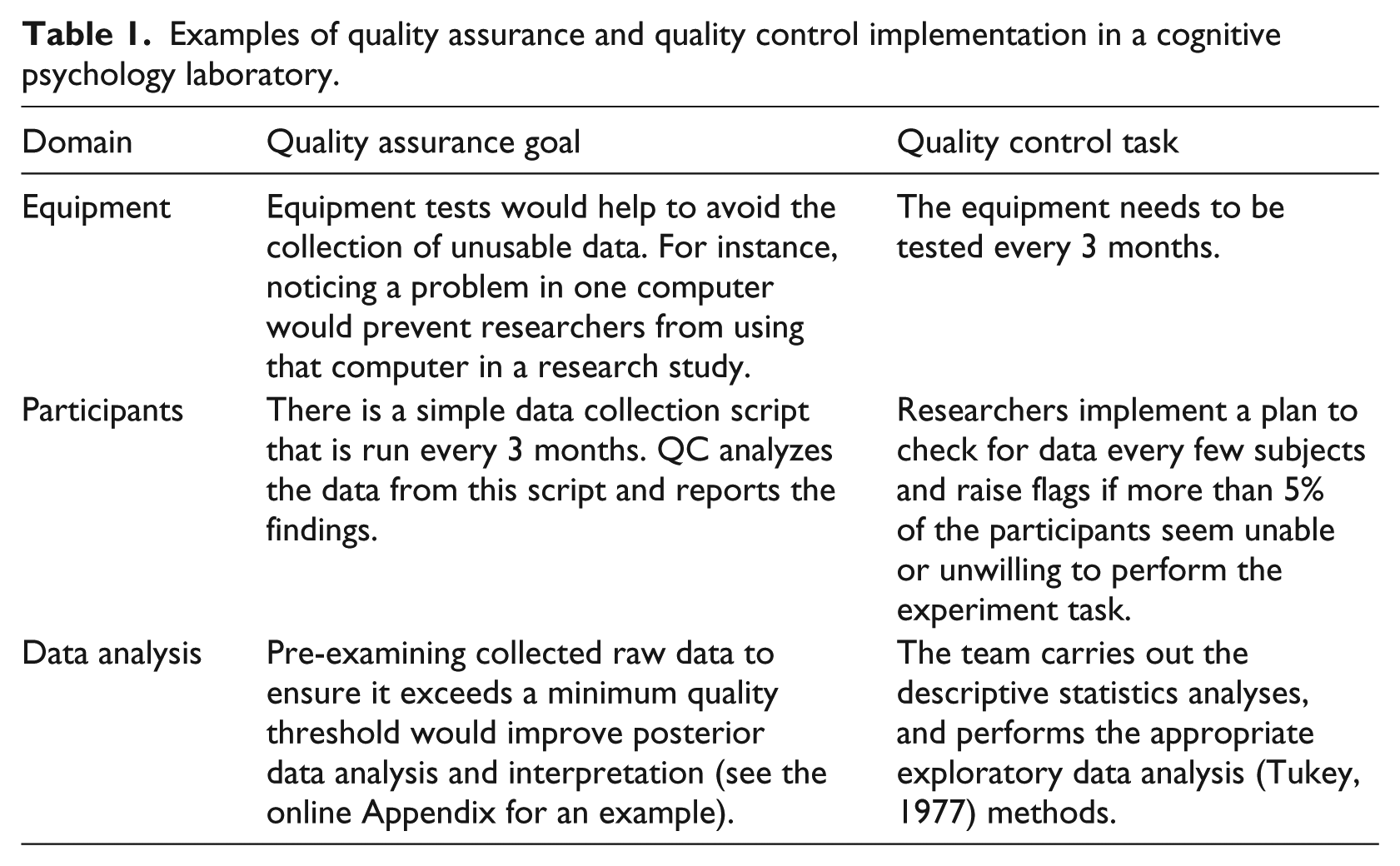

We acknowledge that the quality system needs of a group that does survey-based studies might be different from the needs of a group that collects neurophysiological data; nonetheless, we present in Table 1 some examples of the division of labor between QA and QC processes within the context of a cognitive neuroscience laboratory that collects latency (response times), human performance, eye-movement, and EEG data.

Table 1. Examples of quality assurance and quality control implementation in a cognitive psychology laboratory.

Although the examples in the table are related mostly to cognitive science, we hope that different research groups can benefit from the philosophy set forth by this proposal, and we hope that they make their methods public.

Implementation

The quality systems method should be an intrinsic part of the research cycle, and as such it should benefit from the creativity of the scientific community. We suggest two possible mechanisms to implement the QA/QC systems: an in-house system and a buddy system (see Buddy system subsection).

In-house QA/QC

Academic laboratories are small operations compared to pharmaceutical plants. There is usually a main researcher, sometimes a couple of postdoctoral fellows, a handful of graduate students, and undergraduates who often are part of the team for short periods of time. We suggest that QA should be the responsibility of senior members of the team, as this process is strategic, preplanned, and has a long-term time frame. These QA processes include, but are not limited to, setting up guidance for data collection and criteria for acceptable data. The main goal of the QA process, as we propose it, is to avoid generation of unusable data that could have been prevented by following the predetermined guidelines. The QC processes, on the other hand, might be the responsibility of the more junior members of the team. These processes involve the performance of the activities prescribed by the QA guidelines. These activities have a limited timeline and should be part of the standard procedure for anyone who has contact with experimental data or its collection. In the online Appendix, we present an example of our data quality system for a recent experiment on the effect of cell phone use in a perceptual discrimination task.
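To make the in-house split concrete, the following minimal sketch shows how a QA plan written by senior members could be applied as a routine QC check by whoever collects a session of response-time data. It is not the data quality system from our Appendix: the class `QAPlan`, the function `qc_check`, and every threshold are hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class QAPlan:
    """Acceptance criteria fixed before data collection (hypothetical values)."""
    min_rt_ms: float = 200.0
    max_rt_ms: float = 3000.0
    min_accuracy: float = 0.70
    max_excluded_fraction: float = 0.20


Trial = Tuple[float, bool]  # (response time in ms, response correct?)


def qc_check(trials: List[Trial], plan: QAPlan) -> dict:
    """QC step: apply the QA plan as written to one participant's session."""
    if not trials:
        return {"usable": False, "reasons": ["no trials recorded"]}
    in_range = [t for t in trials if plan.min_rt_ms <= t[0] <= plan.max_rt_ms]
    excluded = 1 - len(in_range) / len(trials)
    accuracy = sum(correct for _, correct in trials) / len(trials)
    reasons = []
    if excluded > plan.max_excluded_fraction:
        reasons.append("too many out-of-range response times")
    if accuracy < plan.min_accuracy:
        reasons.append("accuracy below criterion")
    return {"usable": not reasons, "excluded_fraction": excluded,
            "accuracy": accuracy, "reasons": reasons}


if __name__ == "__main__":
    session = [(450.0, True)] * 8 + [(150.0, True), (520.0, False)]
    print(qc_check(session, QAPlan()))
```

Because the criteria live in one object that is set in advance, junior members running `qc_check` do not make ad hoc decisions about which data are acceptable; they only report what the plan prescribes.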

Buddy system

This idea was introduced by Morey and Morey (2015) in the context of open science. Perhaps a more stringent quality system would be to have an external group perform a quality verification audit. A laboratory could be audited by a buddy laboratory from either the same or a different institution. The buddy laboratory (i.e. the auditor) would perform QC procedures according to the QA plan in order to verify the findings. Ideally, research could have verification ‘badges’ the same way that some of the open science initiatives provide some form of certification for the different levels of openness.

Conclusion

One of the contributors to the current crisis is an emphasis on volume of production, in particular by junior faculty. We suggest that the establishment of quality systems (along with openness – see, for example, Miguel et al., 2014) should be incentivized by administrators and granting agencies. Some structures are already in place to achieve this goal. For example, the National Science Foundation requires a ‘data plan’, which is essentially a data stewardship plan. A description of the quality systems associated with these data could easily be requested as a condition for future funding.

Although future work should examine the impact of these proposals in order to validate them empirically, we want to emphasize that quality systems are not to be thought of as yet another bureaucratic hurdle that the researcher has to overcome. Instead, they are an investment that benefits both the science and the scientist by reducing the noise in data collection, improving the inferential process, and facilitating the transition into a fully open science paradigm. Publication bias has been described as a ‘file drawer problem’ (Rosenthal, 1979), but it can also be described as a ‘dirty underwear drawer problem’. Our idiosyncratic data stewardship systems are not often a subject of our pride. The quality systems described here should contribute to our overall data hygiene.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.