Abstract

Experimental psychologists investigating eyewitness memory have periodically gathered their thoughts on a variety of eyewitness memory phenomena. Courts and other stakeholders of eyewitness research rely on the expert opinions reflected in these surveys to make informed decisions. However, the last survey of this sort was published more than 20 years ago, and the science of eyewitness memory has developed since that time. Stakeholders need a current database of expert opinions to make informed decisions. In this article, we provide that update. We surveyed 76 scientists for their opinions on eyewitness memory phenomena. We compared these current expert opinions to expert opinions from the past several decades. We found that experts today share many of the same opinions as experts in the past and have more nuanced thoughts about two issues. Experts in the past endorsed the idea that confidence is weakly related to accuracy, but experts today acknowledge the potential diagnostic value of initial confidence collected from a properly administered lineup. In addition, experts in the past may have favored sequential over simultaneous lineup presentation, but experts today are divided on this issue. We believe this new survey will prove useful to the court and to other stakeholders of eyewitness research.

Experimental psychologists investigating eyewitness memory have periodically published surveys that reflect widely held scientific opinion about various eyewitness memory phenomena (e.g., Kassin et al., 1989, 2001). These surveys aimed to inform the court (i.e., judges and jurors) and other stakeholders of eyewitness research about generally accepted scientific opinion within the field. Kassin et al. (2001) published the last document of this set approximately 2 decades ago, and the time has come for a much-needed update. In the first part of this article, we briefly review the events that led to the development of these surveys as well as the expert scientific opinion reflected in them. In the second part, we present results from a survey of experimental psychologists knowledgeable about eyewitness memory. We show that expert opinion has become more nuanced over time in two cases and has remained virtually unchanged in all other cases.

We believe these results are newsworthy and will have broad appeal to stakeholders of eyewitness research, including policymakers and police chiefs as well as judges and juries. However, those in the criminal-legal system are not the only ones who should find this information useful. The educated public, worldwide, has taken a vested interest in what eyewitness memory experts think (e.g., Brewin et al., 2019, 2020). We believe this new database of current expert opinion will be informative to them. We also hope this information will prove useful to experimental psychologists. It reveals current areas of agreement and contention among scientists, and it may therefore serve as a signpost of areas of research that need further study.

Surveys Informing the Court

We begin by discussing the standards that govern the admissibility of scientific evidence in courtrooms in the United States. For many years, judges in the United States relied on the Frye test to make admissibility decisions about eyewitness expert testimony (e.g., United States v. Amaral, 1973). This test requires expert opinion to be “generally accepted” within the relevant scientific community for judges to admit that opinion in court (Frye v. United States, 1923). Judges sometimes considered expert opinion on eyewitness memory inadmissible because, in their view, the phenomena or principles discussed by experts did not reach general acceptance within the field (e.g., United States v. Fosher, 1979).

Kassin et al. (1989) put that idea to the test and surveyed 63 experts for their opinions on 21 memory phenomena that were of frequent concern in court. Kassin et al. found that experts generally accepted nine of the 21 phenomena. That is, at least 80% of experts believed the following statements were reliable enough to testify in court: (a) An eyewitness’s perception and memory for an event may be affected by his or her attitudes and expectations, (b) an eyewitness’s confidence is not a good predictor of his or her identification accuracy, (c) the less time an eyewitness has to observe an event, the less well he or she will remember it, (d) the rate of memory loss for an event is greatest right after the event and then levels off over time, (e) police instructions can affect an eyewitness’s willingness to make an identification and/or the likelihood that he or she will identify a particular person, (f) an eyewitness’s testimony about an event often reflects not only what the witness actually saw but also information he or she obtained later on, (g) eyewitnesses sometimes identify as a culprit someone they have seen in another situation or context, (h) the use of a one-person showup instead of a full lineup increases the risk of misidentification, and (i) an eyewitness’s testimony about an event can be affected by how the questions put to that witness are worded.

In 1993, the U.S. Supreme Court changed the standards for admissibility of scientific evidence (Daubert v. Merrell Dow Pharmaceuticals, Inc., 1993). In Daubert, the Supreme Court placed admissibility decisions squarely on the shoulders of judges, making them the primary “gatekeepers” that shield juries from junk science and false expertise. This was a burdensome change in some ways because judges typically lack the knowledge and training needed to evaluate the merit of expert testimony (Chin & Crozier, 2018). In its opinion, the Supreme Court acknowledged this issue and gave guidelines to help judges in their gatekeeping role. One aspect of this structured assessment involves an inquiry about whether the broader scientific community supports the theories, methods, or findings an expert discusses in their testimony.

Motivated by the Daubert ruling, Kassin et al. (2001) revised their prior survey. They kept 17 of the 21 statements from the 1989 survey and added 13 statements on new and important developments in the field. Kassin et al. found many striking consistencies in expert opinion between the 1989 and 2001 surveys. Recall that, in the 1989 survey, at least 80% of experts considered nine phenomena reliable enough to testify in court. In the 2001 survey, at least 80% of experts considered eight of those nine phenomena reliable enough to testify in court. In addition, at least 80% of experts considered several of the new statements reliable enough to testify in court, including the following statements: (a) Witnesses are more likely to misidentify someone by making a relative judgment when presented with a simultaneous (as opposed to sequential) lineup; (b) exposure to mug shots of a suspect increases the likelihood that the witness will later choose that suspect in a lineup; (c) young children are more vulnerable than adults to interviewer suggestion, peer pressures, and other social influences; and (d) the presence of a weapon impairs an eyewitness’s ability to accurately identify the perpetrator’s face. Since Kassin et al. published these findings in 2001, expert surveys have focused exclusively on the impact of stress on memory performance (Akhtar et al., 2018; Magnussen et al., 2010; Marr et al., 2021) or have compared the Kassin et al. survey results to lay opinions (e.g., Read & Desmarais, 2009).

The State of the Science

The science of eyewitness memory has developed in some important respects since Kassin et al. (2001) conducted their survey approximately 2 decades ago. The most notable developments that took place in the field throughout this time came as a result of the landmark report published by a National Academy of Sciences (NAS) committee commissioned to assess the current state of eyewitness identification research (National Research Council, 2014). This committee of leading scientists and scholars recommended a variety of new research directions, including new statistical tools to evaluate eyewitness identification procedures and closer collaborations between scientists and practitioners. Scientists have taken these recommendations seriously. For example, scientists have recently introduced several statistical tools for evaluating eyewitness identification procedures (e.g., Fitzgerald et al., 2023; Kellen & McAdoo, 2022; Lee & Penrod, 2019; Mickes, 2015; Mickes et al., 2012; Seale-Carlisle, Colloff, et al., 2019; A. M. Smith et al., 2019; Starns et al., 2023). Several close collaborations between scientists and law enforcement have also taken place (e.g., Horry et al., 2014; W. Wells, 2014; G. L. Wells et al., 2015; Wixted et al., 2016).

Some of these new statistical tools and collaborations helped call into question some long-standing conclusions within the field. Consider how experts have discussed the strength of the confidence–accuracy relationship in recent years compared with how experts discussed this relationship decades ago. Experts have long considered eyewitness confidence and identification accuracy to be weakly related (e.g., Kassin et al., 1989, 2001). This conclusion was understandable because the point-biserial correlation coefficient used to measure the relationship was often found to be low (e.g., Sporer et al., 1995; G. L. Wells & Murray, 1984). However, some experts have expressed a new appreciation for a strong confidence–accuracy relationship in recent years based in part on work that has abandoned the point-biserial correlation coefficient and has instead measured the relationship using calibration analysis (e.g., Brewer & Wells, 2006; Juslin et al., 1996) or confidence–accuracy characteristic (CAC) analysis (Mickes, 2015). For example, Wixted and Wells (2017) reanalyzed decades of laboratory data using CAC analysis and found that confidence is predictive of suspect identification accuracy when confidence is collected immediately after an identification from an initial, uncontaminated, and properly administered lineup. The Wixted and Wells article seems to have had a notable impact on the field. It has received more than 500 citations since its publication in 2017, according to Google Scholar (last checked August 2023). Moreover, leading—and often competing—scientists have strongly encouraged law-enforcement agencies to collect initial confidence information partly on the basis of those findings (G. L. Wells et al., 2020). 
This recommendation points to a more nuanced understanding of the confidence–accuracy relationship and suggests to us that many experts today believe that an eyewitness’s initial confidence is a good predictor of identification accuracy if the eyewitness’s memory is uncontaminated and if the lineup is properly administered.
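To make the contrast with a single correlation coefficient concrete, the following is a minimal sketch of the calculation behind a CAC-style analysis in the sense of Mickes (2015): suspect-identification accuracy is computed separately at each confidence level as correct suspect identifications divided by all suspect identifications. The counts, the three confidence bins, and the function name `cac_accuracy` are hypothetical and were invented for illustration; they are not data or code from any study cited here.

```python
from typing import Dict, Tuple


def cac_accuracy(counts: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Return suspect-ID accuracy for each confidence bin.

    counts maps a confidence bin to a pair:
    (correct suspect IDs, incorrect suspect IDs).
    Bins with no suspect IDs are skipped because accuracy is undefined there.
    """
    accuracy = {}
    for level, (correct, incorrect) in counts.items():
        total = correct + incorrect
        if total > 0:
            accuracy[level] = correct / total
    return accuracy


# Hypothetical counts, invented for illustration only.
hypothetical_counts = {
    "low": (30, 20),
    "medium": (60, 15),
    "high": (95, 5),
}

for level, value in cac_accuracy(hypothetical_counts).items():
    print(f"{level}: {value:.2f}")
```

With these invented counts, accuracy rises from .60 at low confidence to .95 at high confidence. A CAC-style analysis reports this kind of per-level pattern rather than one summary coefficient.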

The more nuanced thinking about the confidence–accuracy relationship evident among some eyewitness researchers may not be the only update in thinking that has occurred within the last 2 decades. Experts may have updated their thinking about other phenomena as well. For example, experts have had more nuanced discussions than before about the impact of alcohol and other drugs on eyewitness memory (e.g., Jores et al., 2019; Kloft et al., 2021), and experts have also scrutinized the effects of stress on eyewitness memory more so than in the past (e.g., Marr et al., 2021). We do not comprehensively review the literature to identify each area of research that may have undergone a change in thinking because that is beyond the scope of this article. Might these developments in the research reflect new widespread opinions about eyewitness memory phenomena? If so, then this presents a significant problem to the criminal-legal system. If judges are relying on outdated notions about eyewitness memory research when making decisions, they may inadvertently and consistently admit flawed results. They may be poor gatekeepers of eyewitness expert testimony and might misinform the jury when instructing them about factors that affect eyewitness memory. We need a new survey of expert opinions to address this problem.

The Current Study

This article provides a much-needed update to the set of expert surveys aimed at informing the court. The primary aim of this update is to provide a database of current expert opinions on popular eyewitness memory phenomena. We do not aim to comment on the validity of those opinions or champion one area of research or statistical technique over another. That is not the focus of this article. We are a diverse team of researchers with a diverse set of views, and we only seek to measure current expert opinions. We believe this database of current expert opinions will help judges assess the reliability of expert testimony and help them permit expert testimony that more accurately reflects the opinions of the scientific community. However, judges are not the only ones who should find this information useful. We believe the current database of expert opinion will appeal to educators and other consumers of eyewitness research. We also hope this information will prove useful to researchers as it reveals contentious areas that ought to be investigated further.

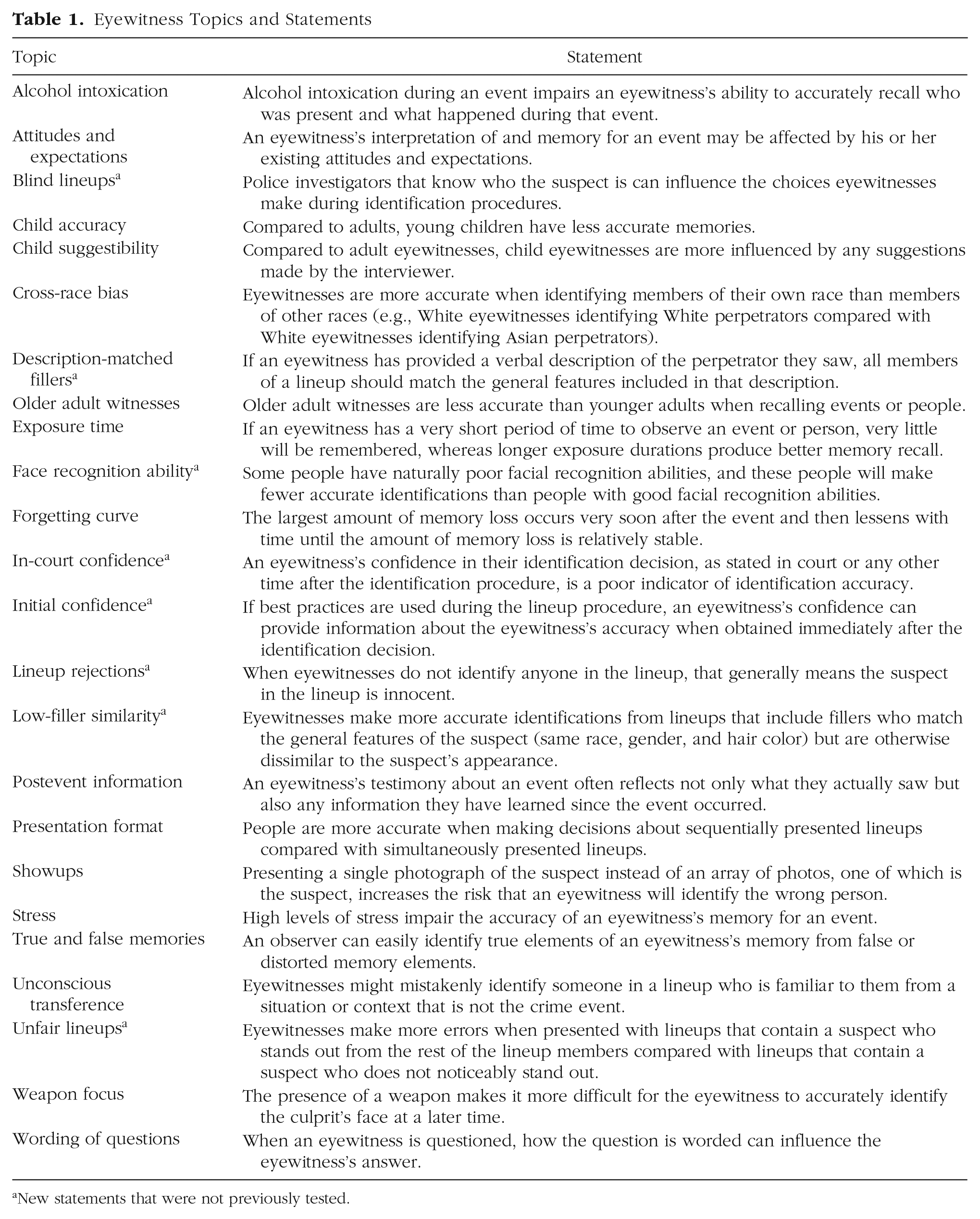

We decided to use the Kassin et al. (2001) survey as the foundation for this update. We felt this was a sensible choice for a variety of reasons. First, the Kassin et al. survey asked about many eyewitness memory phenomena that were of interest to courtrooms in the past (e.g., Benton et al., 2006; Magnussen et al., 2010; Wise & Safer, 2004) and remain of interest now. Second, the Kassin et al. survey has had an impact on appellate judges (e.g., Benn v. United States, 2009; People v. LeGrand, 2002; People v. Williams, 2006), and we hoped to replicate that success with our survey. Last, we aimed to compare consistency in expert opinions over time (or lack thereof), and sticking with the Kassin et al. survey allowed us to do that. We selected statements from the Kassin et al. survey that covered a broad range of popular eyewitness memory phenomena. Some of the statements we selected were about testing conditions, including identification and interview procedures, whereas other statements were about encoding conditions, including alcohol intoxication and exposure time. We also added several new statements that focused on new areas of research in the field or on areas of research we felt were overlooked. Table 1 shows each statement in our survey and indicates which statements we took from Kassin et al. and which statements we added. We preregistered this selection process and all our analyses here: https://osf.io/5j9n8.

Table 1. Eyewitness Topics and Statements

New statements that were not previously tested.

Method

The experts

We recruited scientists and scholars who investigate eyewitness memory. We started the recruitment process by compiling a list of experts from a large database of memory-related articles published in scientific, peer-reviewed journals over the last 15 years (Yaffe et al., 2020). For each relevant publication, we stored the first and last author’s name in a list that contained 210 people in total. We then contacted everyone on this list and directly solicited their participation. We recruited 34 experts through this method. We also recruited 37 experts via the Twitter account of the Society for Applied Research in Memory and Cognition (SARMAC) and five experts via the listserv of the American Psychology–Law Society (AP-LS). We recruited 76 experts in total.

Of the 76 experts who completed our survey, 75 worked as academic researchers, and the remaining expert worked as a nonacademic researcher. Regarding the specific area of psychological research, 48 experts specialized in cognitive psychology, 12 experts specialized in social psychology, 10 experts specialized in both cognitive and social psychology, three experts specialized in developmental psychology, and three experts specialized in a combination of cognitive, social, developmental, and neuropsychology. We surveyed experts from December 2020 to June 2021.

The questionnaire

The survey contains 24 statements in total, 16 of which come from the Kassin et al. (2001) survey. Several of these 16 statements were also featured in the survey conducted by Kassin et al. (1989). We chose these 16 statements because they cover a broad range of popular eyewitness memory phenomena relevant to different time points in an investigation (e.g., when the crime occurred and when police tested memory). We slightly modified the language for some of these 16 statements to improve their clarity. For some statements, we specified the memory test (e.g., interview, lineup, or showup). For other statements, we updated the vocabulary and context to improve understanding. For example, Kassin et al. (2001) used the statement “It is possible to reliably differentiate between true and false memories” (p. 408). Here, we updated this statement to make clear who is attempting to distinguish between true and false memories. The statement now reads, “An observer can easily identify true elements of an eyewitness’s memory from false or distorted memory elements.”

This survey also features eight new statements that were not originally included in the prior surveys. These new statements reflect either emerging areas of research (e.g., face recognition ability) or areas of research that have gained more attention within recent years (e.g., lineup rejections). Last, this survey features two statements about the confidence–accuracy relationship rather than one. One statement is concerned with the confidence–accuracy relationship for an in-court identification (or any time after the initial identification procedure), whereas the other statement is concerned with the confidence–accuracy relationship for identifications made during the initial identification procedure. Research suggests that this is a crucial distinction (e.g., G. L. Wells & Bradfield, 1998; Wixted & Wells, 2017).

We also updated the response options slightly to fit sensibly with our statements. In our survey, experts responded to statements using a 7-point Likert scale (1 = strongly disagree, 4 = neither agree nor disagree, 7 = strongly agree). For each statement, experts could also select a “Don’t know” option. Last, Kassin et al. (2001) directly asked eyewitness experts whether they considered a phenomenon reliable enough to testify about in court. We opted not to do this after considering the time needed to complete our survey.

The procedure

Experts began the survey by consenting to take part and answering questions about their expertise (e.g., their profession, specialty, h-index). Experts then responded to 10 statements about general views about the direction of the field and collaborations with the criminal-legal system, but we do not report these results here because they are outside the scope of this article. Experts then responded to the 24 eyewitness memory statements, answered two open-ended questions about the eyewitness memory field, and were finally debriefed. The survey took approximately 20 min to complete. We did not ask for any identifying information throughout the survey.

Results and Discussion

The data set for this survey can be found here: https://osf.io/8zpy6. We begin this section by discussing the general profile of the experts who completed our survey followed by a descriptive analysis of their opinions.

The experts

We collected a variety of quantitative measures from our respondents that highlight their expertise. In Appendix A, we discuss these quantitative measures in finer detail. Briefly, our respondents had worked in their profession for 16 years on average (SD = 11), had published 26 articles on average (SD = 37) on the topic of human memory or eyewitness memory, and had an average h-index of 18 (SD = 17). In sum, the experts in our sample were highly experienced and knowledgeable about research on eyewitness memory. The analyses in Appendix A show that this is true for those we solicited directly via email and for those we solicited indirectly via the SARMAC Twitter account.

Opinions on eyewitness memory phenomena

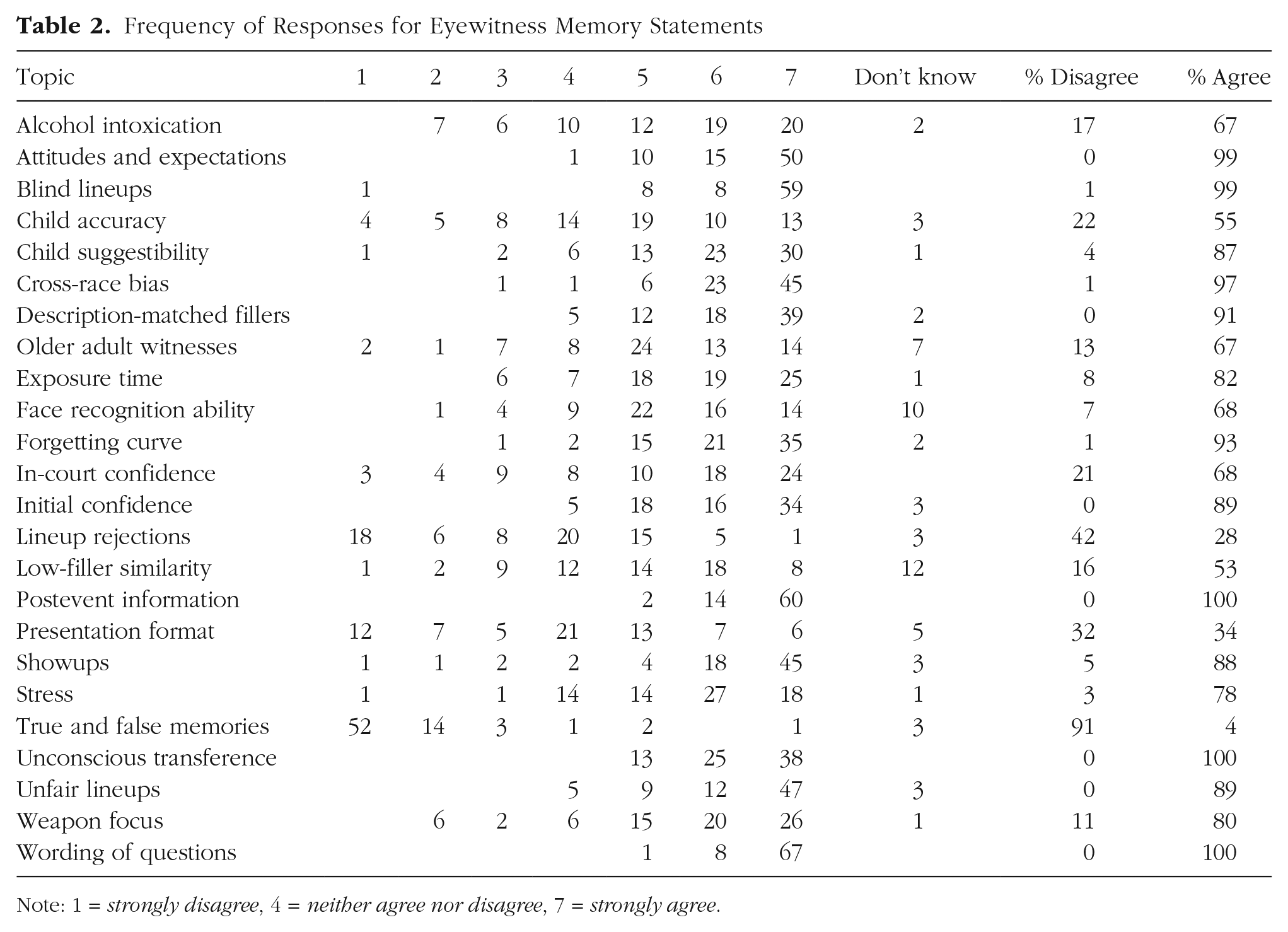

Table 2 contains the raw counts of responses for each statement and shows the proportion of experts who agreed (by responding 5, 6, or 7) or disagreed (by responding 1, 2, or 3) with each statement. We discuss the results for each statement in turn.

Table 2. Frequency of Responses for Eyewitness Memory Statements

Note: 1 = strongly disagree, 4 = neither agree nor disagree, 7 = strongly agree.
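The agreement and disagreement proportions described above can be sketched as follows. This is a minimal illustration, not the analysis script used for this survey: the function names `summarize` and `reaches_consensus`, the `"DK"` code for “Don’t know,” the choice to keep “Don’t know” responses in the denominator by default, and the example responses are all assumptions made for the sketch. The 80% threshold mirrors the criterion discussed for the Kassin et al. (1989, 2001) surveys.

```python
from collections import Counter
from typing import Dict, Iterable, Union

# A response is 1-7 on the Likert scale, or "DK" for "Don't know" (assumed code).
Response = Union[int, str]


def summarize(responses: Iterable[Response],
              include_dont_know: bool = True) -> Dict[str, float]:
    """Return the proportions of agree (5-7), disagree (1-3), and neutral (4)."""
    counts = Counter(responses)
    agree = counts[5] + counts[6] + counts[7]
    disagree = counts[1] + counts[2] + counts[3]
    neutral = counts[4]
    denominator = agree + disagree + neutral
    if include_dont_know:
        # Assumption: "Don't know" responses count toward the total.
        denominator += counts["DK"]
    if denominator == 0:
        raise ValueError("no responses to summarize")
    return {
        "agree": agree / denominator,
        "disagree": disagree / denominator,
        "neutral": neutral / denominator,
    }


def reaches_consensus(proportion_agree: float, threshold: float = 0.80) -> bool:
    """Check a proportion against an agreement threshold (80% by default)."""
    return proportion_agree >= threshold


# Hypothetical responses from 10 respondents, invented for illustration only.
hypothetical = [7, 7, 6, 6, 5, 5, 5, 4, 2, "DK"]
summary = summarize(hypothetical)
print(summary["agree"], reaches_consensus(summary["agree"]))
```

Passing `include_dont_know=False` drops “Don’t know” responses from the denominator instead, which changes the proportions whenever any respondent selected that option.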

Comparisons to prior surveys

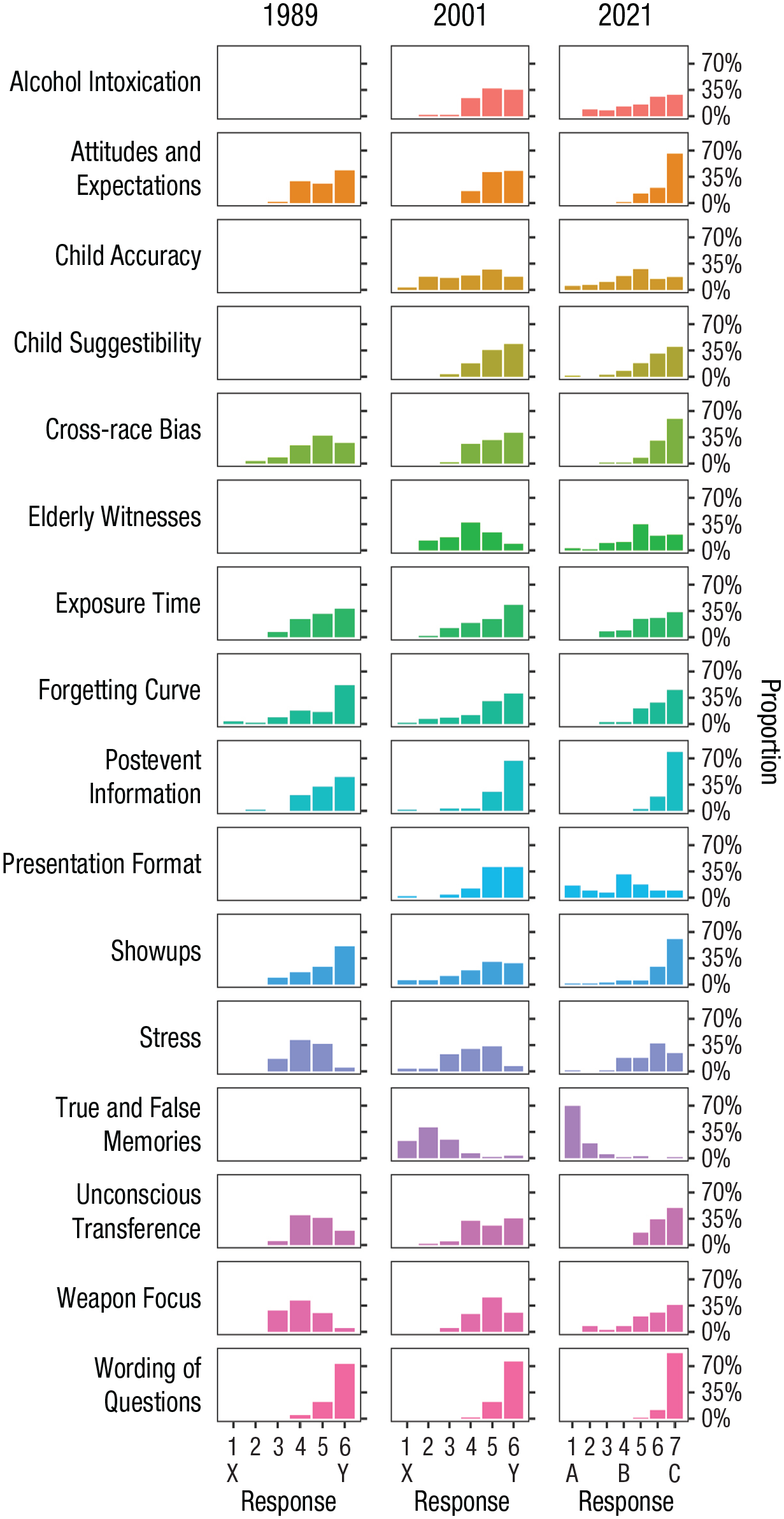

We first compared the responses for the 16 statements that were either similar to, or the same as, the statements in the prior surveys (Kassin et al., 1989, 2001). Figure 1 shows the histograms for these shared statements. The raw response frequencies used to create this figure are shown in Appendix B. In general, Figure 1 shows a remarkable consistency in expert opinion across the surveys carried out at various times throughout the last several decades. For example, responses are highly consistent across surveys for statements about alcohol intoxication, attitudes and expectations, child accuracy, child suggestibility, cross-race bias, exposure time, forgetting curve, postevent information, showups, unconscious transference, stress, true and false memories, weapon focus, and wording of questions.

Figure 1. Responses to statements shared across Kassin et al. (1989, 2001) and this survey. On the x-axis, the letters “X” and “Y” mean “the opposite is likely true” and “very reliable,” respectively. The letters “A,” “B,” and “C” mean “strongly disagree,” “neither agree nor disagree,” and “strongly agree,” respectively. “Don’t know” decisions are not shown here and are not included in the proportion calculations. Blank cells indicate that expert responses on that topic were not collected at that time. The cross-race bias responses in Kassin et al. (1989) refer to the statement about a White eyewitness and Black perpetrator.

Having said that, experts today generally seem to be in greater agreement about these issues than the experts who took part in the prior surveys. That is, many of these topics show a shift in responding over time toward the extreme ends of the rating scale. This increase in consensus may reflect the additional scientific evidence on these phenomena. For example, Kassin et al. (1989) questioned experts on the weapon-focus effect, which at the time was an emerging area of research (Loftus et al., 1987). Unsurprisingly, only three experts at that time considered the weapon-focus effect “very reliable.” However, several meta-analyses of the weapon-focus effect now exist (e.g., Fawcett et al., 2013; N. M. Steblay, 1992), and 80% of our respondents agreed that seeing a weapon during a crime can later impact identification performance. Experts today are also in greater agreement than experts in prior surveys about the impact high levels of stress can have on identification performance. That opinion replicates recent surveys investigating the stress-memory relationship. For example, Marr et al. (2021) found that a large majority of eyewitness memory experts and fundamental memory researchers considered very high levels of stress to be disruptive to encoding processes. Much like the weapon-focus literature, the stress-memory literature has grown significantly over the years (e.g., Davis et al., 2019; Deffenbacher et al., 2004; Shields et al., 2017; Vogel & Schwabe, 2016). Thus, the greater agreement in opinions we observe today may reflect the maturation of these research areas.

The only topic in Figure 1 that might show a change in thinking is presentation format. In 2001, 40 of 63 experts (63%) considered the following statement either “generally reliable” or “very reliable”: “Witnesses are more likely to misidentify someone by making a relative judgement when presented with a simultaneous (as opposed to sequential) lineup.” In 2021, only 26 of 76 experts (34%) agreed with this similar statement: “People are more accurate when making decisions about sequentially presented lineups compared with simultaneously presented lineups.” In fact, 32% of experts disagreed with this statement, 28% neither agreed nor disagreed, and the remaining 6% of experts responded “Don’t know.” This may show more nuanced opinion about which lineup presentation is best. We expected mixed responding and less than 80% agreement given the heated debate on this issue (e.g., Dobolyi & Dodson, 2013; R. C. Lindsay & Wells, 1985; Mickes et al., 2012; Seale-Carlisle, Wetmore, et al., 2019; N. K. Steblay et al., 2011). However, this might be surprising to triers of fact (i.e., judges and jurors) if these research developments have not yet been communicated to them. It is also important to keep in mind that we modified the statement about presentation format, which may have clouded this comparison between past and current expert opinion. We discuss our rationale for doing so in the Limitations section, but we want to remind readers of this fact here.

Most of the statements shown in Figure 1 achieved at least 80% agreement. Indeed, at least 80% of experts agreed on the statements for the following topics: wording of questions (100%), postevent information (100%), unconscious transference (100%), attitudes and expectations (99%), cross-race bias (97%), forgetting curve (93%), true and false memories (91%), showups (88%), child suggestibility (87%), exposure time (82%), and weapon focus (80%). The statement about stress fell just below that mark, as only 78% of experts agreed. Experts had mixed opinions about the remaining statements. Specifically, 67% of experts agreed that alcohol intoxication during an event impairs memory for that event, 67% of experts agreed that older adult witnesses are less accurate at recalling events or people compared with younger adults, and 55% of experts agreed that young children have less accurate memories compared with adults.

Statements not previously tested

Another purpose of updating the 21-year-old survey is to collect current expert opinions on a variety of new and interesting areas of research. Within the last 20 years, various lines of research have come into vogue. One example is the research investigating the relationship between face recognition ability and eyewitness identification performance (e.g., Andersen et al., 2014; Bindemann et al., 2012; Grabman & Dodson, 2020; Morgan et al., 2007), some of which shows that face recognition ability may modify the strength of the confidence–accuracy relationship (Grabman et al., 2019). Other examples include research on the diagnostic value of lineup rejections (e.g., Brewer & Wells, 2006; Clark et al., 2008; R. C. L. Lindsay et al., 2013; Sauerland & Sporer, 2009; Wixted & Wells, 2017; Yilmaz et al., 2022), unfair lineups compared with fair lineups (e.g., Carlson et al., 2019; Colloff et al., 2016, 2017; Key et al., 2017; A. M. Smith et al., 2017; Wetmore et al., 2015), and the confidence–accuracy relationship (e.g., Brewer & Wells, 2006; Mickes, 2015; Sauer et al., 2019; Wixted & Wells, 2017).

We included eight new statements in our survey to capitalize on these new and important research developments. These statements cover the following topics: double-blind lineups, unfair lineups, face recognition ability, lineup rejections, initial confidence, in-court confidence, low filler similarity, and description-matched lineups. Although Kassin et al. (2001) investigated description-matched lineups, lineup fairness, and the confidence–accuracy relationship, we updated these statements substantially in this survey. In Kassin et al., the statements about description-matched lineups and lineup fairness conflate the extent to which the fillers resemble the suspect and the degree to which the fillers share general features with the suspect (e.g., age, gender, ethnicity, and hair color). In our updated statements, we took care to make this distinction clear because fillers can share general features with the suspect, such as age, gender, ethnicity, and hair color, but still look dissimilar to the suspect (Colloff et al., 2021; Luus & Wells, 1991).

We observed mixed responding and less than 80% agreement for the statements about face recognition ability (68%), low filler similarity (53%), and lineup rejections (28%). These areas of research are relatively new or have been investigated somewhat sparingly over the years, which might explain the mixed responding for these statements. A large majority of experts agreed on the statements about double-blind lineups (99%) and unfair lineups (89%). That is, nearly every respondent endorsed the benefits of double-blind lineups compared with nonblind lineups, and a large majority of respondents agreed that lineups are detrimental to performance if they contain a suspect who noticeably stands out. This is a reassuring result that replicates the views expressed in the scientific review article produced by G. L. Wells et al. (2020). We refer readers to that document for a nuanced discussion of several recommended policies for gathering eyewitness memory evidence.

Regarding the confidence–accuracy relationship, eyewitness experts have largely considered confidence to be a poor predictor of accuracy for many decades (e.g., Sporer et al., 1995; G. L. Wells & Murray, 1984). However, research conducted within the last 20 years has pointed to a new way of thinking about confidence and accuracy (e.g., Brewer & Wells, 2006; Wixted & Wells, 2017). Indeed, 68% of experts agreed with this statement: “An eyewitness’s confidence in their identification decision, as stated in court or any other time after the identification procedure, is a poor indicator of accuracy.” However, 89% of experts agreed with this statement: “If best practices are used during the lineup procedure, an eyewitness’s confidence can provide information about the eyewitness’s accuracy when obtained immediately after the identification decision.” This pattern of responses shows a sensitivity to the time when confidence is collected, the practices used to collect it, and the negative impact of contaminating influences. If confidence is collected immediately after an identification decision and if best practices are used, a large majority of experts (89%) now agree that confidence can be informative of accuracy. If confidence is instead collected at a later point in time, such as in court, most experts (68%) believe it to be a poor indicator of accuracy. This suggests that experts now have a more nuanced appreciation of how confidence can be informative of accuracy if collected properly. This is an update to the thinking about the confidence–accuracy relationship, and it is important for fact finders to know.

Individual differences

We deliberately chose to recruit experts who specialized in a variety of subfields within psychology. Most of our experts specialized in cognitive psychology (63%). The remaining 37% of experts specialized in social psychology (16%), developmental psychology (4%), cognitive and social psychology (13%), or a combination of cognitive, social, developmental, and neuropsychology (4%). Do our cognitive psychologists hold radically different opinions than our other experts?

We explored this question in Appendix C. Figure C1 shows expert opinions for each statement plotted as a series of histograms. In this figure, we ordered the histograms alphabetically by topic. Moreover, we show the distribution of responses for experts who specialized only in cognitive psychology (blue) and for experts who specialized in other subfields of psychology (orange). The distribution of responses is extremely similar between the two groups of experts. Perhaps the only controversial topic between them is presentation format. Our cognitive psychologists often disagreed with this statement, whereas our remaining group of experts often selected “neither agree nor disagree.” This suggests that our group of cognitive psychologists might have held a stronger preference for simultaneous lineup presentation, whereas our other group of experts either held a stronger preference for sequential lineup presentation or did not prefer one presentation format over the other.

Perhaps there are notable differences in expert opinion between junior and senior experimental psychologists. In Appendix C we also compare junior experts to senior experts in three ways. We performed median splits on the number of years experts worked in their profession (Fig. C2), number of articles experts published on the topic of human memory or eyewitness memory (Fig. C3), and experts’ h-index (Fig. C4). Although the difference is slight, our junior experts seem to be less opinionated on the statements about lineup rejections and presentation format than our senior experts. That is, our junior experts more often selected “neither agree nor disagree” for these two statements than our senior experts. This seems true regardless of which measure we used to perform the median split but is most pronounced in Figure C2, which compares experts based on the number of years worked in their profession. Aside from this small but noticeable difference, the distribution of responses stays largely the same for our junior and senior experts.
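To make the median-split comparison concrete, the following is a minimal sketch in Python. The data are hypothetical, the function names `median_split` and `midpoint_share` are ours for illustration, and the choice to place experts who fall exactly at the median in the junior group is an assumption of the sketch rather than a description of the procedure used in the survey.

```python
from statistics import median


def median_split(values):
    """Split respondent indices into junior (<= median) and senior (> median).

    Assumption of this sketch: respondents exactly at the median are
    placed in the junior group.
    """
    cut = median(values)
    junior = [i for i, v in enumerate(values) if v <= cut]
    senior = [i for i, v in enumerate(values) if v > cut]
    return cut, junior, senior


def midpoint_share(responses, members, midpoint=4):
    """Proportion of a group that chose the scale midpoint.

    On the 7-point scale, 4 = "neither agree nor disagree".
    Returns None for an empty group.
    """
    picked = [responses[i] for i in members]
    if not picked:
        return None
    return sum(r == midpoint for r in picked) / len(picked)


# Hypothetical data: years worked and responses to one statement.
years_worked = [3, 7, 13, 13, 20, 35]
responses = [4, 4, 2, 4, 1, 6]

cut, junior, senior = median_split(years_worked)
print(cut, junior, senior)
print(midpoint_share(responses, junior), midpoint_share(responses, senior))
```

The same split could be repeated with the number of publications or the h-index in place of years worked, which mirrors the three comparisons described above.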

New developments and future issues

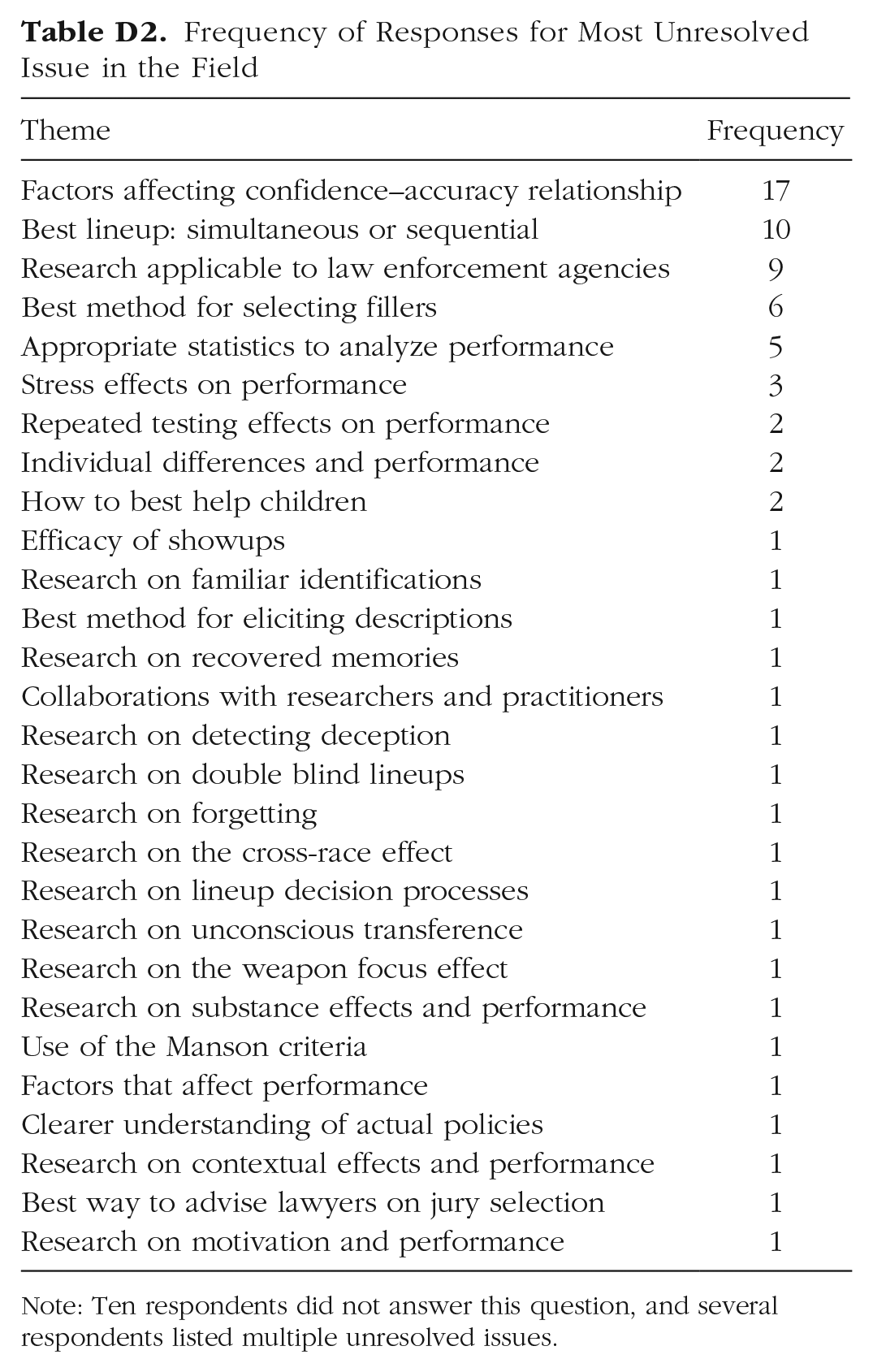

In addition to the 24 statements on eyewitness memory phenomena, we asked two open-ended questions at the end of the survey. The first question asked experts to indicate the most significant advancement in the last 5 years, which focused on research conducted since the publication of the NAS report (National Research Council, 2014). The second question asked experts to indicate the most unresolved issue in the field. We identified 27 unique themes from their responses to the first question and 30 unique themes from their responses to the second question. The full list of themes and the number of times they were mentioned is shown in Appendix D. Here, we focus on the most frequently discussed themes.

The most significant advancement in the last 5 years that experts most often mentioned is the realization that confidence is informative of accuracy if collected immediately after the first identification attempt from a properly administered lineup. Fifteen experts mentioned this advancement in total. Next, 14 experts touched on improved methods and analyses to evaluate eyewitness identification procedures. Of these respondents, half favored signal-detection analytical methods, including formal signal-detection computational modeling and receiver operating characteristic analysis (e.g., Gronlund et al., 2014; Kaesler et al., 2020; Mickes et al., 2012; Wixted et al., 2018). The other half favored deviation from perfect performance and other utility-based approaches (e.g., Lampinen et al., 2019; A. M. Smith et al., 2019; A. Smith et al., 2020). Eight experts discussed the importance of double-blind lineups and the improved understanding of identification procedures. Three experts mentioned the significance of G. L. Wells et al. (2020), which provided up-to-date guidelines for conducting eyewitness identification procedures. Last, two mentioned the important work carried out by the Innocence Project (Scheck et al., 2000), which is a nonprofit legal organization committed to exonerating individuals who have been wrongfully convicted.

The second question asked experts to indicate the most unresolved issue in the field. Seventeen experts discussed the need to identify factors that modify the strength of the confidence–accuracy relationship. These experts often acknowledged the diagnostic value of initial, properly collected confidence but voiced concerns that there may be factors that weaken its diagnostic value. Ten experts mentioned the debate about the best presentation format: simultaneous or sequential lineups. Nine experts voiced concerns about the applicability of eyewitness memory research to actual policies governing the collection of eyewitness evidence. Six experts asked about the best method for selecting fillers for a lineup, and five experts asked about the best statistical methods for evaluating identification procedures.

Limitations

We made a deliberate choice to slightly modify the statements from the Kassin et al. (2001) survey because we felt these modifications were necessary. Take, for example, the statement about presentation format that originally included language about absolute and relative decision theory (G. L. Wells, 1984). We removed any mention of absolute and relative decision theory from this statement so that it strictly asks about lineup performance untethered from theory. In other statements, we felt it necessary to specify the memory test in question or to make certain aspects of the statement clearer. Although slight, these modifications may have contributed to some of the changes in opinion we observed over time. Moreover, it is possible that we did not achieve the standard of clarity we aimed for. For example, when we introduced language about an observer in the statement about true and false memories, we did not make clear who the observer was in this situation, which, in hindsight, we should have done. The new statements we added may also have suffered from a lack of clarity to some extent. For example, the statement about filler similarity did not clearly define what we mean when we say “dissimilar to the suspect’s appearance.” The statement about courtroom confidence did not clearly indicate whether confidence changed in the time between the initial identification procedure and the in-court identification. That was an oversight. The statement about lineup rejections may have been difficult to answer as well because eyewitnesses can reject the lineup for a multitude of reasons, such as when they have no memory for the perpetrator or when they are overly cautious in making a selection. In addition, respondents may have felt restricted when responding to statements. Perhaps we should have added an “it depends” button to the response options or a text box for each statement to allow experts to provide more nuanced opinions in their own words.

It is also worth discussing our sampling approach. We believe expertise on eyewitness memory has broadened outside of the AP-LS member roster, which is why we recruited experts from other psychology and law societies such as SARMAC. To recruit this broader sample, we directly emailed known experts in the field and advertised the survey on the SARMAC Twitter account. It is possible that the respondents who took part via Twitter were not the experts they claimed to be. We explored this issue further by comparing scientific achievements (Fig. A1) and expert opinion between these two groups of respondents (Fig. C5). On the basis of these analyses, we believe all our respondents were experts in the field, but readers should keep this limitation in mind. Last, 34 of the 210 experts (16%) we emailed took part in the survey. We can only speculate as to why only 16% of those we emailed ended up taking part. Despite our relatively low response rate for those we emailed, our sample size (76 experts) was larger than those of the prior surveys. Kassin et al. (1989) had 63 experts (response rate of 53%), and Kassin et al. (2001) had 64 experts (response rate of 34%).

Conclusion

Experts have periodically gathered their opinions on various memory-related phenomena to help judges in their gatekeeping role (Kassin et al., 1989, 2001). This study provides a much-needed update to these prior surveys and creates a database of current expert opinions on classic and contemporary eyewitness memory phenomena. We found many consistencies in expert opinions over time, which highlights the notable stability within the field. We also found that experts today are likely to endorse the view that initial confidence collected from a properly administered lineup procedure can be informative of accuracy and that confidence expressed later in court may not be as informative. This appreciation for initial confidence has changed policy. For example, the U.S. Department of Justice recently updated its department-wide policy to encourage the collection of initial confidence. In a press release announcing this update, Deputy Attorney General Sally Yates said that “a growing body of research has highlighted the importance of documenting a witness’s self-reported confidence at the moment of the initial identification, in part because such confidence is often a more reliable predictor of eyewitness accuracy than a witness’s confidence at the time of trial” (Yates, 2017, para. 3). In addition, we found that experts today are divided in opinion about the best lineup presentation format. We believe these results will help judges in their role as gatekeepers of expert testimony and highlight areas of research that require further inquiry. We recommend updating this database periodically as the science of eyewitness memory continues to evolve and progress.

Appendix A

We collected a variety of quantitative measures from our respondents that highlight their expertise. Figure A1 shows a box plot of these quantitative measures. Figure A1a shows that many of our respondents had worked in their profession for several years. A large majority (87%) of our respondents have worked in their profession for at least years, and 71% of them have worked in their profession for at least 10 years. The median number of years worked was 13. Figure A1b shows that our respondents published many scientific articles on the topic of human memory or eyewitness memory. All our respondents published at least one scientific article on this topic, 86% of them published at least five scientific articles on the topic, and 24% of them published at least 30 scientific articles on the topic. The median number of articles published on the topic is 13. Two respondents published approximately 200 articles each on the topic. Figure A1c shows that our respondents’ scientific publications were highly impactful, as determined by their h-index. The h-index is a metric of performance that considers both the number of scientific publications and the number of times those publications are cited (Hirsch, 2005). To have an h-index equal to 20, for example, a scientist needs to have published at least 20 articles that have each been cited at least 20 times. The h-index has limitations of course (Haustein & Larivière, 2015; Kelly & Jennions, 2006; Purvis, 2006), but this measure can serve as an indicator of scientific achievement (Hirsch, 2007). Figure A1c shows that nearly one fourth of our respondents achieved an h-index equal to or greater than 20 (24%), and more than half achieved an h-index equal to or greater than 10 (57%). The median h-index is 13.
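As an illustration of how the h-index is defined, the following minimal Python sketch computes it from a list of citation counts. The function name `h_index` and the citation counts are hypothetical and serve only as an example; they are not data from our respondents.

```python
def h_index(citations):
    """Return the largest h such that at least h articles have >= h citations each."""
    ranked = sorted(citations, reverse=True)  # most-cited article first
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank  # the top `rank` articles all have at least `rank` citations
        else:
            break
    return h


# Hypothetical citation counts for six articles.
print(h_index([25, 8, 5, 3, 3, 1]))  # prints 3

# Twenty articles cited 20 times each give an h-index of 20.
print(h_index([20] * 20))  # prints 20
```

In the first example, three articles have at least three citations each, but there are not four articles with at least four citations, so the h-index is 3.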

We also separated these measures for those respondents solicited directly via email, including the five respondents we emailed via the AP-LS listserv (pink) and those solicited indirectly via the SARMAC Twitter account (blue). The box plots between these two groups of respondents are nearly identical. For example, in Figure A1d, the median number of years worked is 14 for those we emailed and 12 for those we notified via the SARMAC Twitter account, which is not a significant difference, χ²(df = 2, N = 76) = 0.013, p = .909. Moreover, in Figure A1e, the median number of publications on the topic of human memory or eyewitness memory is 16 for those we emailed and 13 for those we notified via the SARMAC Twitter account, which is not a significant difference either, χ²(df = 2, N = 74) = 0.0513, p = .821. Last, Figure A1f shows the median h-index is 13 for both groups of respondents. In sum, the experts in our sample were highly experienced and knowledgeable about research on eyewitness memory. That is true for those we solicited directly via email and for those we solicited indirectly via the SARMAC Twitter account.
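For readers who wish to check how a chi-square statistic maps onto a p value, the following minimal Python sketch uses only the standard library. It assumes a chi-square distribution with one degree of freedom, as would apply to a median test comparing two groups in a 2 × 2 table; that degree of freedom, and the function name `chi2_p_value_df1`, are assumptions of the sketch.

```python
import math


def chi2_p_value_df1(statistic):
    """Upper-tail p value for a chi-square statistic with 1 degree of freedom.

    Assumption of this sketch: df = 1. In that case the chi-square variable
    is the square of a standard normal Z, so
    P(X > x) = P(|Z| > sqrt(x)) = erfc(sqrt(x / 2)).
    """
    if statistic < 0:
        raise ValueError("a chi-square statistic cannot be negative")
    return math.erfc(math.sqrt(statistic / 2.0))


# Statistics reported for years worked and for number of publications.
for stat in (0.013, 0.0513):
    print(stat, round(chi2_p_value_df1(stat), 3))
```

Under this one-degree-of-freedom assumption, the two statistics correspond to p values that round to .909 and .821, the values reported above.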

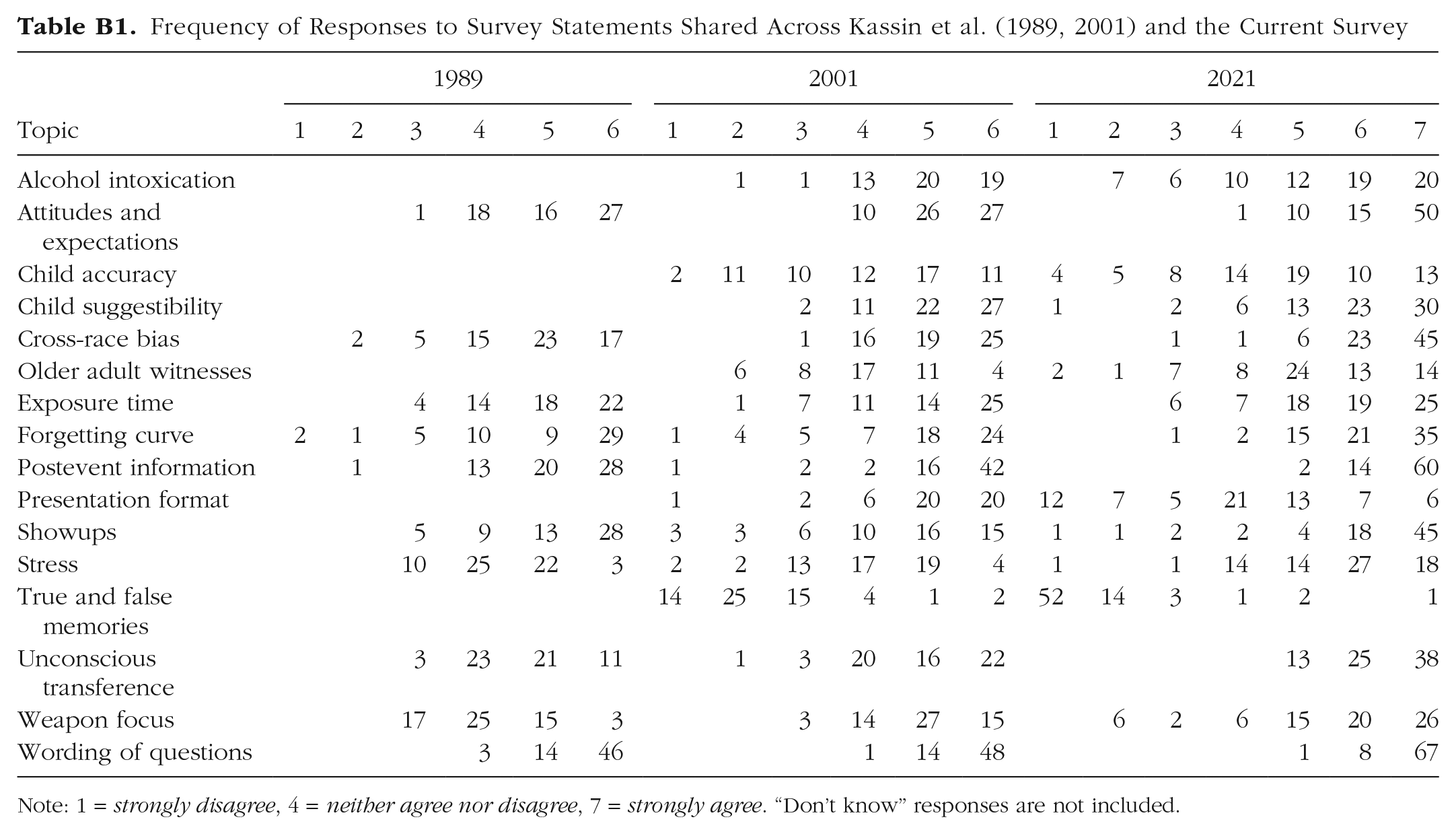

Appendix B

Frequency of Responses to Survey Statements Shared Across Kassin et al. (1989, 2001) and the Current Survey

| Topic | 1989 | 2001 | 2021 | ||||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 1 | 2 | 3 | 4 | 5 | 6 | 1 | 2 | 3 | 4 | 5 | 6 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | |

| Alcohol intoxication | 1 | 1 | 13 | 20 | 19 | 7 | 6 | 10 | 12 | 19 | 20 | ||||||||

| Attitudes and expectations | 1 | 18 | 16 | 27 | 10 | 26 | 27 | 1 | 10 | 15 | 50 | ||||||||

| Child accuracy | 2 | 11 | 10 | 12 | 17 | 11 | 4 | 5 | 8 | 14 | 19 | 10 | 13 | ||||||

| Child suggestibility | 2 | 11 | 22 | 27 | 1 | 2 | 6 | 13 | 23 | 30 | |||||||||

| Cross-race bias | 2 | 5 | 15 | 23 | 17 | 1 | 16 | 19 | 25 | 1 | 1 | 6 | 23 | 45 | |||||

| Older adult witnesses | 6 | 8 | 17 | 11 | 4 | 2 | 1 | 7 | 8 | 24 | 13 | 14 | |||||||

| Exposure time | 4 | 14 | 18 | 22 | 1 | 7 | 11 | 14 | 25 | 6 | 7 | 18 | 19 | 25 | |||||

| Forgetting curve | 2 | 1 | 5 | 10 | 9 | 29 | 1 | 4 | 5 | 7 | 18 | 24 | 1 | 2 | 15 | 21 | 35 | ||

| Postevent information | 1 | 13 | 20 | 28 | 1 | 2 | 2 | 16 | 42 | 2 | 14 | 60 | |||||||

| Presentation format | 1 | 2 | 6 | 20 | 20 | 12 | 7 | 5 | 21 | 13 | 7 | 6 | |||||||

| Showups | 5 | 9 | 13 | 28 | 3 | 3 | 6 | 10 | 16 | 15 | 1 | 1 | 2 | 2 | 4 | 18 | 45 | ||

| Stress | 10 | 25 | 22 | 3 | 2 | 2 | 13 | 17 | 19 | 4 | 1 | 1 | 14 | 14 | 27 | 18 | |||

| True and false memories | 14 | 25 | 15 | 4 | 1 | 2 | 52 | 14 | 3 | 1 | 2 | 1 | |||||||

| Unconscious transference | 3 | 23 | 21 | 11 | 1 | 3 | 20 | 16 | 22 | 13 | 25 | 38 | |||||||

| Weapon focus | 17 | 25 | 15 | 3 | 3 | 14 | 27 | 15 | 6 | 2 | 6 | 15 | 20 | 26 | |||||

| Wording of questions | 3 | 14 | 46 | 1 | 14 | 48 | 1 | 8 | 67 | ||||||||||

Note: 1 = strongly disagree, 4 = neither agree nor disagree, 7 = strongly agree. “Don’t know” responses are not included.

Appendix C

Appendix D

Frequency of Responses for Most Unresolved Issue in the Field

| Theme | Frequency |
|---|---|
| Factors affecting confidence–accuracy relationship | 17 |
| Best lineup: simultaneous or sequential | 10 |
| Research applicable to law enforcement agencies | 9 |
| Best method for selecting fillers | 6 |
| Appropriate statistics to analyze performance | 5 |
| Stress effects on performance | 3 |
| Repeated testing effects on performance | 2 |
| Individual differences and performance | 2 |
| How to best help children | 2 |
| Efficacy of showups | 1 |
| Research on familiar identifications | 1 |
| Best method for eliciting descriptions | 1 |
| Research on recovered memories | 1 |
| Collaborations with researchers and practitioners | 1 |
| Research on detecting deception | 1 |
| Research on double blind lineups | 1 |
| Research on forgetting | 1 |
| Research on the cross-race effect | 1 |
| Research on lineup decision processes | 1 |
| Research on unconscious transference | 1 |
| Research on the weapon focus effect | 1 |
| Research on substance effects and performance | 1 |
| Use of the Manson criteria | 1 |
| Factors that affect performance | 1 |
| Clearer understanding of actual policies | 1 |
| Research on contextual effects and performance | 1 |
| Best way to advise lawyers on jury selection | 1 |
| Research on motivation and performance | 1 |

Note: Ten respondents did not answer this question, and several respondents listed multiple unresolved issues.

Acknowledgements

Adele Quigley-McBride is currently affiliated with the Department of Psychology, Simon Fraser University. We thank Gurpreet Reen for comments on an earlier version of this manuscript.

Transparency

Action Editor: Daniel Schacter

Editor: Interim Editorial Panel