Abstract

Drawing on the philosophy of psychological explanation, we suggest that psychological science, by focusing on effects, may lose sight of its primary explananda: psychological capacities. We revisit Marr’s levels-of-analysis framework, which has been remarkably productive and useful for cognitive psychological explanation. We discuss ways in which Marr’s framework may be extended to other areas of psychology, such as social, developmental, and evolutionary psychology, bringing new benefits to these fields. We then show how theoretical analyses can endow a theory with minimal plausibility even before contact with empirical data: We call this the


A substantial proportion of research effort in experimental psychology isn’t expended directly in the explanation business; it is expended in the business of discovering and confirming effects.

Psychological science has a preoccupation with “effects.” However, effects are explananda (things to be explained), not explanations. The Stroop effect, for instance, does not explain why naming the color of the word “red” written in green takes longer than naming the color of a green patch. That just

Primary explananda are key phenomena defining a field of study. They are derived from observations that span far beyond, and often even precede, the testing of effects in the lab. Cognitive psychology’s primary explananda are the cognitive capacities that humans and other animals possess. These capacities include, in addition to those already mentioned, those for learning, language, perception, concept formation, decision-making, planning, problem-solving, reasoning, and so on.


Only in the manner in which we postulate that such capacities are exercised do our explanations of capacities come to imply effects. An example is given by Cummins (2000): Consider two multipliers, M1 and M2. M1 uses the standard partial products algorithm. . . . M2 uses successive addition. Both systems have the capacity to multiply. . . . But M2 also exhibits the “linearity effect”: computation is, roughly, a linear function of the size of the multiplier. It takes twice as long to compute 24 ×

This example illustrates two points. First, many of the effects studied in our labs are by-products of how capacities are exercised. They may be used to test different explanations of how a system works: For example, by giving a person different pairs of numerals and by measuring response times, one can test whether the person’s timing profile fits M1 or M2, or any different M′. Second, candidate explanations of capacities (multiplication) come in the form of different algorithms (e.g., partial products or repeated addition) computing a particular function (i.e., the product of two numbers). Such algorithms are not devised to explain effects; rather, they are posited as a priori candidate procedures for realizing the target capacity.
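To make the contrast concrete, the two multipliers can be sketched in a few lines of Python. This is an illustration under our own assumptions rather than Cummins's specification: the function names are hypothetical, processing time is approximated by counting elementary steps (one single-digit multiplication for M1, one addition for M2), and inputs are restricted to non-negative integers.

```python
def multiply_partial_products(multiplier: int, multiplicand: int) -> tuple[int, int]:
    """M1 (sketch): partial products, one step per single-digit multiplication."""
    total, steps = 0, 0
    for i, d1 in enumerate(reversed(str(multiplier))):
        for j, d2 in enumerate(reversed(str(multiplicand))):
            # Multiply two digits and shift by their combined place value.
            total += int(d1) * int(d2) * 10 ** (i + j)
            steps += 1
    return total, steps


def multiply_successive_addition(multiplier: int, multiplicand: int) -> tuple[int, int]:
    """M2 (sketch): add the multiplicand to a running total, `multiplier` times."""
    total, steps = 0, 0
    for _ in range(multiplier):
        total += multiplicand
        steps += 1
    return total, steps


if __name__ == "__main__":
    # Both procedures compute the same function; only their step profiles differ.
    for multiplier in (12, 24):
        print(multiplier,
              multiply_partial_products(multiplier, 13),
              multiply_successive_addition(multiplier, 13))
```

Under these assumptions, doubling the multiplier from 12 to 24 doubles the step count of M2 but leaves that of M1 unchanged, because M1's step count depends only on the number of digits. Both procedures return the same product, which is why the effect is informative about the algorithm and not about the capacity itself.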

Although effects are usually discovered empirically through intricate experiments, capacities (primary explananda) do not need to be discovered in the same way (Cummins, 2000). Just as we knew that apples fall straight from the trees (rather than move upward or sideways) before we had an explanation in terms of Newton’s theory of gravity, so too do we already know that humans can learn languages, interpret complex visual and social scenes, and navigate dynamic, uncertain, culturally complex social worlds. These capacities are so complex to explain computationally or mechanistically that we do not know yet how to emulate them in artificial systems at human levels of sophistication. The priority should be the discovery not of experimentally constructed effects but of plausible explanations of real-world capacities. Such explanations may then provide a theoretical vantage point from which to also explain known effects (secondary explananda) and perhaps to guide inquiry into the discovery of new informative ones.

This approach is not the one psychological science has been pursuing in recent decades; nor is it what the contemporary methodological-reform movement in psychological science has been recommending. Methodological reform so far seems to follow the tradition of focusing on establishing statistical effects, and, arguably, the reform has even been entrenching this bias. The reform movement has aimed primarily at improving methods for determining which statistical effects are replicable (cf. debates on preregistration; Nosek et al., 2019; Szollosi et al., 2020), and there has been relatively little concern for improving methods for generating and formalizing scientific explanations (for notable exceptions, see Guest & Martin, 2021; Muthukrishna & Henrich, 2019; Smaldino, 2019; van Rooij, 2019). But if we are already “overwhelmed with things to explain, and somewhat underwhelmed by things to explain them with” (Cummins, 2000, p. 120), why do psychological scientists expend so much energy hunting for more and more effects? We see two reasons besides tradition and habit.

One is that psychological scientists may believe in the need to build large collections of robust, replicable, uncontested effects before even thinking about starting to build theories. The hope is that, by collecting many reliable effects, the empirical foundations are laid on which to build theories of mind and behavior. As reasonable as this seems, without a prior theoretical framework to guide the way, collected effects are unlikely to add up and contribute to the growth of knowledge (Anderson, 1990; Cummins, 2000; Newell, 1973). An analogy may serve to bring the point home. In a sense, trying to build theories on collections of effects is much like trying to write novels by collecting sentences from randomly generated letter strings. Indeed, each novel ultimately consists of strings of letters, and theories should ultimately be compatible with effects. Still, the majority of the (infinitely possible) effects are irrelevant for the aims of theory building, just as the majority of (infinitely possible) sentences are irrelevant for writing a novel.


Moreover, many of the

Another reason, not incompatible with the first, may be that psychological scientists are unsure how to even start to construct theories if those theories are not somehow based on effects. After all, building theories of capacities is a daunting task. The space of possible theories is, prima facie, at least as large as the space of effects: For any finite set of (naturalistic or controlled) observations about capacities, there exist (in principle) infinitely many theories consistent with those observations. However, we argue that theories may be built by following a

This article aims to make accessible ideas for doing exactly this. We present an approach for building theories of capacities that draws on a framework that has been highly successful for this purpose in cognitive science: Marr’s levels of analysis.

What Are Theories of Capacities?

A capacity is a dispositional property of a system at one of its levels of organization: For example, single neurons have capacities (firing, exciting, inhibiting) and so do minds and brains (vision, learning, reasoning) and groups of people (coordination, competition, polarization). A capacity is a more or less reliable ability (or disposition or tendency) to transform some initial state (or “input”) into a resulting state (“output”).

Marr (1982/2010) proposed that, to explain a system’s capacities, we should answer three kinds of questions: (a) What is the nature of the function defining the capacity (the input-output mapping)? (b) What is the process by which the function is computed (the algorithms computing or approximating the mapping)? (c) How is that process physically realized (e.g., the machinery running the algorithms)? Marr called these computational-level theory, algorithmic-level theory, and implementational-level theory, respectively. Marr’s scheme has occasionally been criticized (e.g., McClamrock, 1991) and variously adjusted (e.g., Anderson, 1990; Griffiths et al., 2015; Horgan & Tienson, 1996; Newell, 1982; Poggio, 2012; Pylyshyn, 1984), but its gist has been widely adopted in cognitive science and cognitive neuroscience, where it has supported critical analysis of research practices and theory building (see Baggio et al., 2012a, 2015, 2016; Isaac et al., 2014; Krakauer et al., 2017). We also see much untapped potential for it in areas of psychology outside of cognitive science.

Following Marr’s views, we propose the adoption of a top-down strategy for building theories of capacities, starting at the computational level. A top-down or function-first approach (Griffiths et al., 2010) has several benefits. First, a function-first approach is useful if the goal is to “reverse engineer” a system (Dennett, 1994; Zednik & Jäkel, 2014, 2016). As Marr stated, “an algorithm is . . . understood more readily by understanding the nature of the problem being solved than by examining the mechanism . . . in which it is embodied” (Marr, 1982/2010, p. 27; see also Marr, 1977).

Knowing a functional target (“what” a system does) may facilitate the generation of algorithmic- and implementational-level hypotheses (i.e., how the system “works” computing that function). Reconsider, for instance, the multiplication example from above: By first expressing the function characterizing the capacity to multiply (

Psychological theories of capacities should generally be (a) mathematically specified and (b) independent of the details of implementation. The strategy is to try to precisely produce theories of capacities meeting these two requirements, unless evidence is available that this is impossible, for example, that the capacity cannot be modeled in terms of functions mapping inputs to outputs (Gigerenzer, 2020; Marr, 1977). A computational-level theory of a capacity is a specification of input states,

Marr’s computational-level theory has often been applied to information-processing capacities as studied by cognitive psychologists. However, Marr’s framework can be extended beyond its traditional domains. First, to the extent that

Second, and this is a less conventional and less explored idea, the framework can also be applied to noncognitive or nonindividual capacities of relevance to social, developmental, and evolutionary psychology and more. Preliminary explorations into computational-level analyses of noncognitive or nonindividual capacities can be found in work by Krafft and Griffiths (2018) on distributed social processes, Huskey et al. (2020) on communication processes, Rich et al. (2020) on natural and/or cultural-evolution processes, and van Rooij (2012) on self-organized processes.

Later in this article we spell out our approach to theory building using examples. To encourage readers to envisage applications of the approach to their own domains of expertise and to more complex phenomena than those we can cover here, we provide a stepwise account of what is involved in constructing theories of psychological capacities in general. Following Marr’s (1982/2010) successful cash register example, we foresee that more abstract illustrations demonstrating general principles can encourage a wider and more creative adoption of these ideas.

First Steps: Building Theories of Capacities

We have proposed that theories of capacities may be formulated at Marr’s computational level. A computational-level theory specifies a capacity as a property-satisfying computation

A first thought may be to derive

Abduction is sensitive to background knowledge. We cannot determine which hypotheses are good (verisimilar) by considering only locally obtained data (e.g., data for a toy scenario in a laboratory task). We should interpret any data in the context of our larger “web of beliefs,” which may contain anything we know or believe about the world, including scientific or commonsense knowledge. One does not posit a function

What are the first steps in the process of theory building? Theorists often start with an initial intuitive verbal theory (e.g., that decisions are based on maximizing utilities, that people tend toward internally coherent beliefs, that meaning in language has a systematic structure). The concepts used in this informal theory should then be formally defined (e.g., utilities are numbers, beliefs are propositions, and meanings of linguistic expressions can be formalized in terms of functions and arguments; numbers, propositions, functions, and arguments are all well-defined mathematical concepts). The aim of formalization is to cast initial ideas using mathematical expressions (again, of any kind, not just quantitative) so that one ends up with a well-defined function

Let us illustrate the first steps of theory construction with an example—

Box 1. Possible Objections

One could object that computational-level theorizing is possible only for

To counter the first objection, we note that

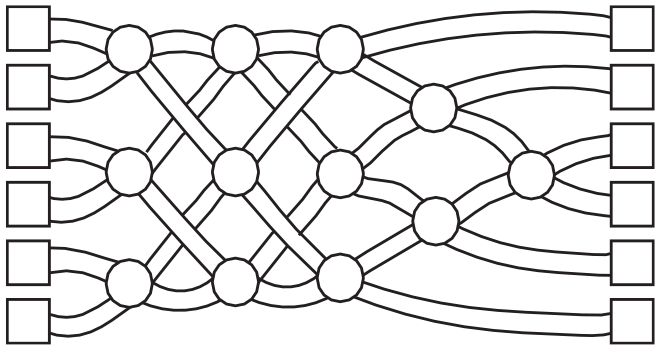

A sorting network. Imagine a maze structured this way; each of six people, walking from left to right, enters a square on the left. Every time two people meet at any node (circle) they compare their height. The shorter of the two turns left next, and the taller turns right. At the end of the maze, people end up sorted by height. This holds regardless of which six people enter the maze and of what order they enter the maze. Hence, the maze (combined with the subcapacity of people for making pairwise comparisons) has the capacity for sorting people by height. Adapted from https://www.csunplugged.org, under a CC BY-SA 4.0 license.
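The idea that a fixed arrangement of pairwise comparisons has the capacity to sort can be checked directly. The sketch below is an assumption-laden illustration: it does not reproduce the layout in the figure but uses a different six-lane network (odd-even transposition, with hypothetical names `LAYERS` and `run_network`), and it models "the shorter turns left, the taller turns right" as the smaller value moving to the lower-numbered lane.

```python
from itertools import permutations

# Assumed layout: odd-even transposition network for six lanes.
# Each pair (i, j) is a node where the occupants of lanes i and j meet.
LAYERS = [
    [(0, 1), (2, 3), (4, 5)],
    [(1, 2), (3, 4)],
] * 3  # six alternating layers suffice for six lanes


def run_network(heights):
    """Walk six heights through the network and return the final lane order."""
    lanes = list(heights)
    for layer in LAYERS:
        for low, high in layer:
            # Pairwise comparison: the shorter person takes the lower lane.
            if lanes[low] > lanes[high]:
                lanes[low], lanes[high] = lanes[high], lanes[low]
    return lanes


if __name__ == "__main__":
    print(run_network([170, 160, 180, 150, 175, 165]))
    # Exhaustive check over every possible entry order of six distinct heights.
    print(all(run_network(p) == sorted(p) for p in permutations(range(6))))
```

The exhaustive check over all 720 entry orders mirrors the claim that the outcome holds regardless of who enters and in what order: the capacity belongs to the structure of the network together with the subcapacity for pairwise comparison.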

We note that a system may produce outputs in which the target property comes only in degrees: For example, the network in the figure in this box may not always produce a perfect sorting by height if the people entering the maze do not meet at every node; even then, (a) the outputs tend to show a greater degree of ordering than is expected by chance, and (b) under relevant idealizations (i.e., people meet at every node) the system can still produce a complete, correct ordering: Together this illustrates the system’s

Functions, so conceived, may describe capacities at any level of organization: We see no reason to reserve computational-level explanations for the levels of organization traditionally considered in cognitive science. Even within cognitive psychology, the computational level may be (and has been) applied at different levels of organization, from various neural levels (e.g., feature detection) to persons or groups (e.g., communication). A Marr-style analysis applies regardless of the level of organization at which the primary explananda are situated; hence, it need not be limited to the domain of cognitive psychology.

To counter the second objection, we note that computational-level theories are usually considered to be normative (e.g., rational or optimal, in a realist or instrumentalist “as if” sense; Chater & Oaksford, 2000; Chater et al., 2003; Danks, 2008, 2013; van Rooij et al., 2018), but that is not formally required. A computational-level analysis is a mathematical object, a function

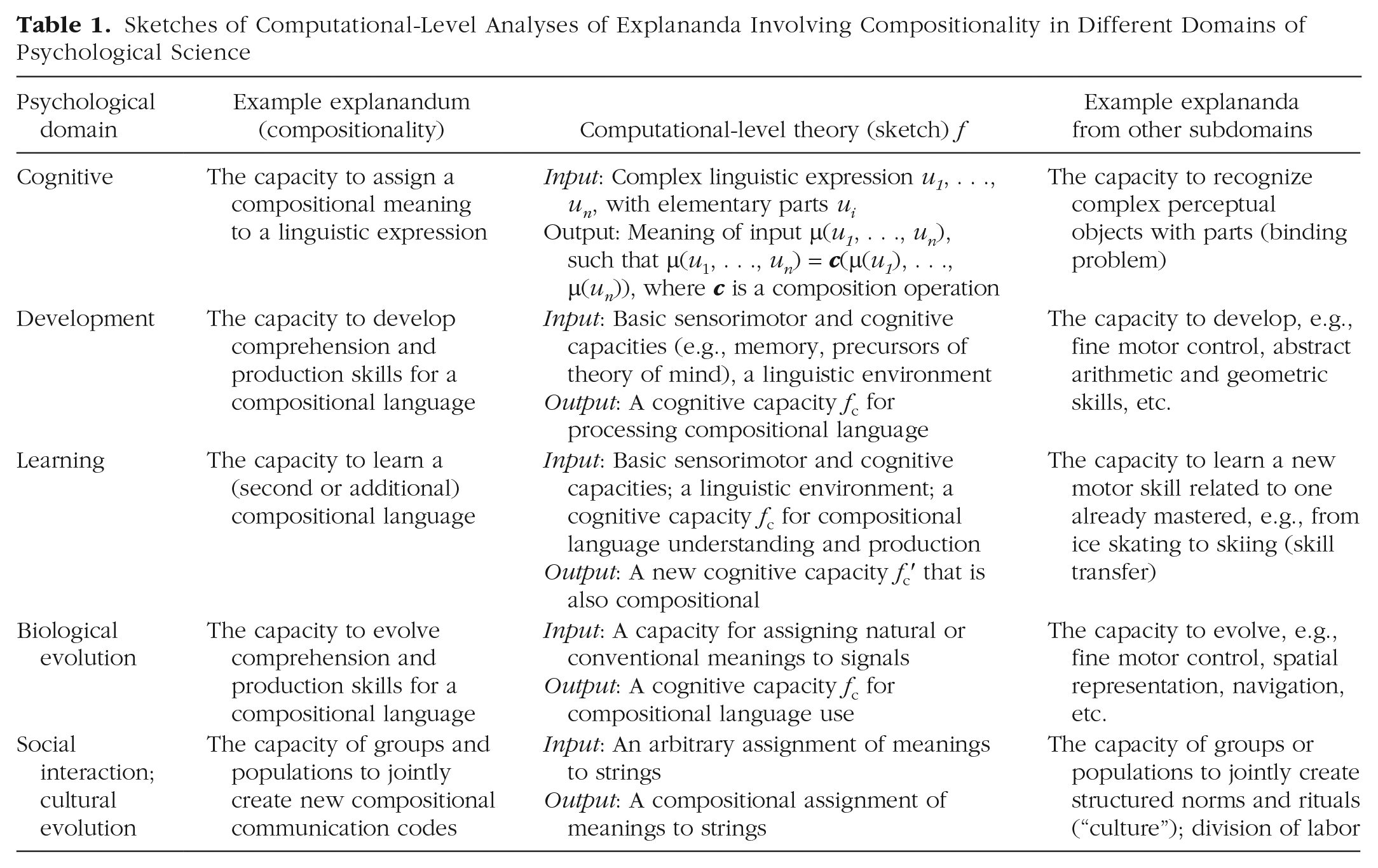

Compositionality is a useful example in this context because it holds across cognitive and noncognitive domains and has important social, cultural, and evolutionary ramifications (Table 1), as may be expected from a core property of the human-language capacity. Compositionality is therefore used here to illustrate the applicability of Marr’s framework across areas of cognitive, developmental, social, cultural, and evolutionary psychology. For example, cognitive psychologists may be interested in explaining a person’s capacity to assign compositional meaning to a given linguistic expression, just as a vision scientist may be interested in explaining how perceptual representations of visual objects arise from representations of features or parts (the “binding problem”; Table 1, row 1). In all cases covered in Table 1, a “sketch” of a computational theory can be provided as a first step in theory building. A sketch requires that the capacity of interest, the explanandum, is identified with sufficient precision to allow the specification of the inputs, or initial states, and the outputs, or resulting states, of the function

Table 1. Sketches of Computational-Level Analyses of Explananda Involving Compositionality in Different Domains of Psychological Science

Further Steps: Assessing Theories in the Theoretical Cycle

Once an initial characterization of

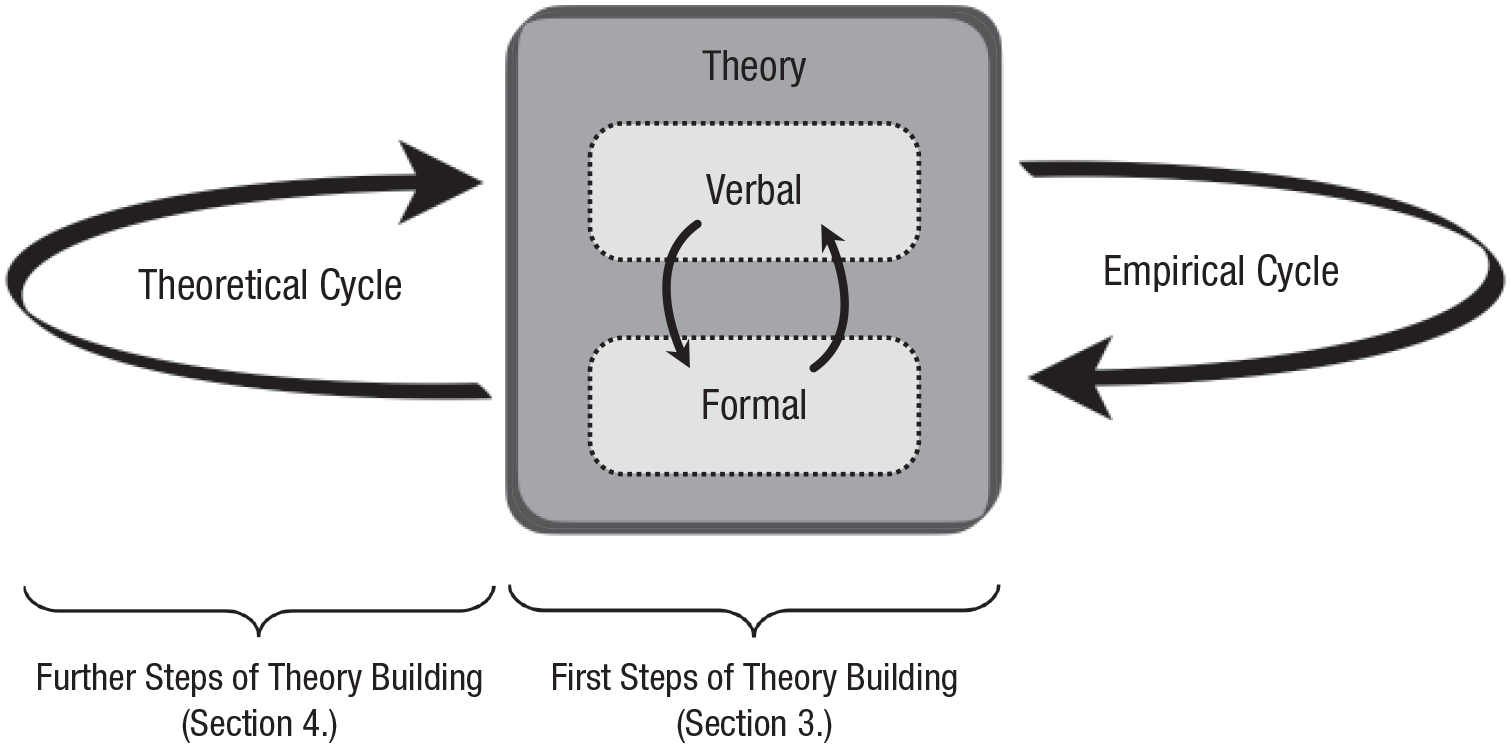

The empirical cycle is familiar to most psychological scientists: The received view is that our science progresses by postulating explanatory hypotheses, empirically testing their predictions (including, but not limited to, effects), and revising and refining the hypotheses in the process. Explanatory hypotheses often remain verbal in psychological research. The first steps of (formal) theory building include making such verbal theories formally explicit. In the process of formalization, the verbal theory may be revised and refined. Theory building does not need to proceed with empirical testing right away. Instead, theories can be subjected to rigorous theoretical tests in what we refer to as the theoretical cycle. This theoretical cycle is aimed at endowing the (revised) theory with greater a priori plausibility (verisimilitude) before assessing the theory’s empirical adequacy in the empirical cycle.

The hypothetical example below from the domain of action planning appears simple, but as we demonstrate later it is actually quite complex. One can think of an organism foraging as engaging in Foraging

Some arbitrary choices were made here that might matter for the theory’s explanatory value or verisimilitude. For example, we could have formalized the notion of “good trade-off” by defining it as (a) maximizing the amount of food collected given an upper bound on the cost of travel, (b) minimizing the amount of travel given a lower bound on the amount of food collected, or (c) maximizing the difference between the total amount of food collected and the cost of travel. We could also have added free parameters, weighing differentially the importance of maximizing the amount of food and of minimizing the cost of travel.
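That such formalization choices matter can be seen in a toy instance. The sketch below is purely illustrative and rests on our own assumptions: three hypothetical sites on a line (`SITES`), a forager starting at `HOME`, travel cost measured as distance walked, and a route defined as any ordered selection of distinct sites. It implements options (a), (b), and (c) by brute-force enumeration.

```python
from itertools import permutations

# Hypothetical toy instance: site -> (position on a line, amount of food).
SITES = {"A": (1, 2), "B": (4, 5), "C": (-3, 4)}
HOME = 0  # assumed starting position of the forager


def all_routes(sites):
    """Yield every ordered selection of distinct sites, including the empty route."""
    for k in range(len(sites) + 1):
        yield from permutations(sites, k)


def food(route):
    return sum(SITES[s][1] for s in route)


def travel_cost(route):
    cost, here = 0, HOME
    for s in route:
        cost += abs(SITES[s][0] - here)
        here = SITES[s][0]
    return cost


def best_a(budget):
    """(a) Maximize food subject to an upper bound on travel cost."""
    return max((r for r in all_routes(SITES) if travel_cost(r) <= budget), key=food)


def best_b(target):
    """(b) Minimize travel cost subject to a lower bound on food."""
    return min((r for r in all_routes(SITES) if food(r) >= target), key=travel_cost)


def best_c():
    """(c) Maximize the difference between food collected and travel cost."""
    return max(all_routes(SITES), key=lambda r: food(r) - travel_cost(r))


if __name__ == "__main__":
    print(best_a(budget=10), best_b(target=4), best_c())
```

With a budget of 10 and a food target of 4 (both arbitrary values), the three formalizations select three different routes in this instance, so they are not interchangeable descriptions of a "good trade-off." Note also that even three sites already yield 16 candidate routes, and the number of ordered selections grows factorially with the number of sites, so exhaustive enumeration is feasible only for very small instances.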

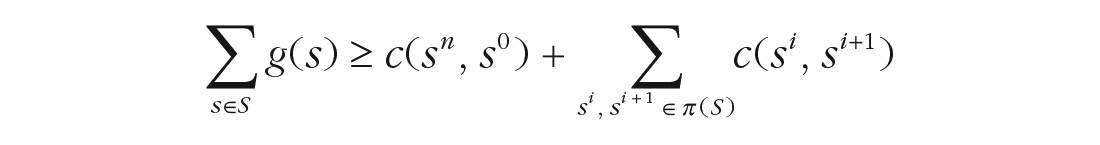

In the theoretical cycle, one explores the assumptions and consequences of a given set of formalization choices, thereby assessing whether a computational-level theory is making unrealistic assumptions or otherwise contradicts prior or background knowledge. As an example we use a theoretical constraint called

In relation to tractability, the foraging

The intractability of an

Tractability/intractability analyses apply widely, not just to simple examples such as the ones above. The approach has been used to assess constraints that render tractable/intractable computational accounts for various capacities relevant for psychological science that span across domains and levels (Table 1), such as coherence-based belief updating (van Rooij et al., 2019), action understanding and theory of mind (Blokpoel et al., 2013; van de Pol et al., 2018; Zeppi & Blokpoel, 2017), analogical processing (van Rooij et al., 2008; Veale & Keane, 1997), problem-solving (Wareham, 2017; Wareham et al., 2011), decision-making (Bossaerts & Murawski, 2017; Bossaerts et al., 2019), neural-network learning (Judd, 1990), compositionality of language (Pagin, 2003; Pagin & Westerståhl, 2010), evolution, learning or development of heuristics for decision-making (Otworowska et al., 2018; Rich et al., 2019), and evolution of cognitive architectures generally (Rich et al., 2020). This existing research (for an overview, see Compendium C in van Rooij et al., 2019) shows that tractability is a widespread concern for theories of capacities relevant for psychological science and moreover that the techniques of tractability analysis can be fruitfully applied across psychological domains.

Building on other mathematical frameworks, and depending on the psychological domain of interest (Table 1) and on one’s background assumptions, computational-level theories can also be assessed for other theoretical constraints, such as computability, physical realizability, learnability, developability, evolvability, and so on. For instance, reconsider foraging. We discussed foraging above at only the cognitive level (Table 1, row 1), but one can also ask how a foraging capacity can be learned, developed, or evolved biologically and/or socially (Table 1, rows 2–5). In some cases, these theoretical constraints can again be assessed by analyses analogous to the general form of the tractability analysis illustrated above (for learnability, see, e.g., Chater et al., 2015; Clark & Lappin, 2013; Judd, 1990; for evolvability, see Kaznatcheev, 2019; Valiant, 2009; for learnability, developability, and evolvability, see Otworowska et al., 2018; Rich et al., 2020). On the one hand, such analyses are all similar in spirit, as they assess the in-principle possibility of the existence of a computational process that yields the output states from initial states as characterized by the computational-level theory. On the other hand, they may involve additional constraints that are specific to the real-world physical implementation of the computational process under study. For instance, a learning algorithm running on the brain’s wetware needs to meet physical implementation constraints specific to neuronal processes (e.g., Lillicrap et al., 2020; Martin, 2020), evolutionary algorithms realized by Darwinian natural selection are constrained to involve biological information that can be passed on genetically across generations (Barton & Partridge, 2000), and cultural evolution is constrained to involve the social transmission of information across generations and through bottlenecks (Henrich & Boyd, 2001; Kirby, 2001; Mesoudi, 2016; Woensdregt et al., 2020). Hence, brain processes and biological and cultural evolution are all amenable to computational analyses but may have their own characteristic physical realization constraints.

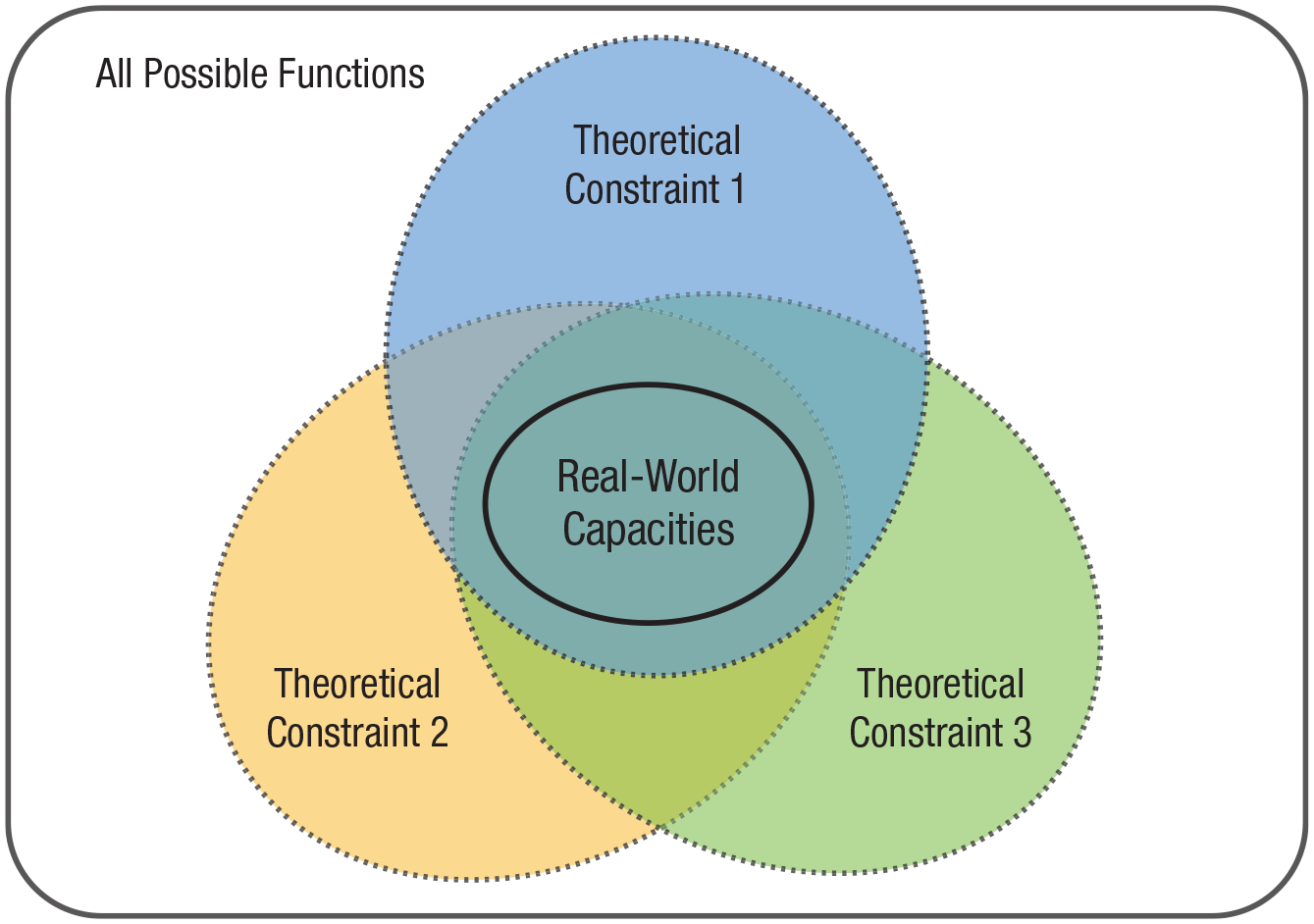

By combining different theoretical constraints one can narrow down the space of possible functions to those describing real-world capacities (Fig. 2). The theoretical cycle thus contributes to early theory validation and advances knowledge even before putting theories to an empirical test. In practice, it serves as a timely safeguard system: It allows one to detect logical or conceptual errors as soon as possible (e.g., intractability of

Fig. 2. The universe of all possible functions (indicated by the rectangle) contains infinitely many possible functions. By applying several constraints jointly (e.g., tractability, learnability, evolvability), psychological scientists can reduce the subset of candidate functions to only those plausibly describing real-world capacities.

What Effects Can Do for Theories of Capacities

We have argued that the primary aim of psychological theory is to explain capacities. But what is the role of effects in this endeavor? How are explanations of capacities (primary explananda) and explanations of effects (secondary explananda) related? Our position, in a nutshell, is that inquiry into effects should be pursued in the context of explanatory multilevel theories of capacities and in close continuity with theory development.


From this perspective, the main goal of empirical research, including experimentation (e.g., testing for effects) and computational modeling or simulation, is to further narrow down the space of possible functions

Consider again the multipliers example from Cummins (2000). Multiplication is tractable, and the partial products (M1) and successive addition (M2) algorithms meet minimal constraints of learnability and physical realizability. M1 and M2 are plausible algorithmic-level accounts of the human capacity for multiplication. But depending on which algorithm is used on a particular occasion, performance (e.g., the time it takes for one to multiply two numbers) might show either the linearity effect predicted by M2 or the step-function profile predicted by M1. Note that both M1 and M2 explain the capacity for multiplication. It is not the computational-level analysis that predicts different effects (the

Another dimension in the soft taxonomy of effects suggested by our approach pertains to the degree to which effects are relevant for understanding a psychological capacity. Some effects may well be implied by the theory at some level of analysis but may reflect more or less directly, or not at all, how a capacity is realized and exercised. For example, a brain at work may dissipate more heat than the same brain at rest; the chassis of an old vacuum-tube computer may vibrate when it is performing its calculations. These effects (heat or vibration) can tell us something about resource use and physical constraints in these machines, but they do not illuminate how the brain or the computer processes information. These may sound like extreme examples, but the continuum between clearly irrelevant effects such as heat or vibration and the kind of effects studied by experimental psychologists is not partitioned for us in advance: The effects collected by psychological scientists cannot just be assumed to be all equally relevant for understanding capacities across levels of analysis. We may more prudently suppose that they sit on a continuum of relevance or informativeness in relation to a capacity’s theory: Some can evince how the capacity is realized or exercised, but others are in the same relation to the target capacity as heat is to brain function.

There are no steadfast rules that dictate how or where to position specific effects on that continuum, but one may envisage some diagnostic tests. Consider again classical effects, such as the Stroop effect, the McGurk effect, primacy and recency effects, visual illusions, priming, and so on. In each case, one may ask whether the effect reveals a

This brings us to a further point. Tests of effects can contribute to theories of capacities to the extent that the information they provide bears on the structure of the system, whether it is the form of the

Conclusion

Several recent proposals have emphasized the need to strengthen or improve theoretical practices in psychology (Cooper & Guest, 2014; Fried, 2020; Guest & Martin, 2021; Muthukrishna & Henrich, 2019; Smaldino, 2019; Szollosi & Donkin, 2021), whereas others have focused on clarifying the complex relationship between theory and data in scientific inference (Devezer et al., 2019, 2020; Kellen, 2019; MacEachern & Van Zandt, 2019; Navarro, 2019; Szollosi et al., 2020). Our proposal fits within this landscape but aims at making a distinctive contribution through the idea of the theoretical cycle: Before theories are even put to an empirical test, they can be assessed for their plausibility on formal, computational grounds. This requires that there is something to assess formally; that is, it requires a computational-level analysis of the capacity of interest. In a theoretical cycle, one iteratively addresses questions concerning, for example, the tractability, learnability, physical realizability, and so on, of the capacity as formalized in the theory. However, the theoretical cycle and the empirical cycle will always need to be interlaced: Abduced theories can then both be explanatory and meet plausibility constraints (i.e., have minimal verisimilitude) on testing; conversely, relevant effects can be leveraged to understand capacities better. The net result is that empirical tests are informative and can play a direct role in further improving our theories.


Acknowledgements

We thank Mark Blokpoel, Marieke Woensdregt, Gillian Ramchand, Alexander Clark, Eirini Zormpa, Johannes Algermissen, and participants of ReproducibiliTea Nijmegen for useful discussions. We are grateful to Travis Proulx and two anonymous reviewers for their constructive comments that helped us improve an earlier version of the manuscript. Parts of this article are based on ![]() .
