Abstract

Is self-serving lying intuitive? Or does honesty come naturally? Many experiments have manipulated reliance on intuition in behavioral-dishonesty tasks, with mixed results. We present two meta-analyses, both of which show evidential value, testing whether an intuitive mind-set affects the proportion of liars (k = 73; n = 12,711) and the magnitude of lying (k = 50; n = 6,473). The results indicate that when dishonesty harms abstract others, promoting intuition causes more people to lie, log odds ratio = 0.38, p = .0004, and leads people to lie more, Hedges’s g = 0.26, p < .0001. However, when dishonesty inflicts harm on concrete others, promoting intuition has no significant effect on dishonesty (ps ≥ .63). We propose one potential explanation: The intuitive appeal of prosociality may cancel out the intuitive selfish appeal of dishonesty, suggesting that the social consequences of lying could be a promising key to the riddle of intuition’s role in honesty. We discuss limitations such as the unbalanced distribution of studies using concrete versus abstract victims and the large overall interstudy heterogeneity.

Keywords

You pay for your $3 cappuccino with a $5 bill. The sleepy cashier mistakenly assumes you have paid with a $20 bill and gives you $17 in change. The person behind you is already eager to order, so time is of the essence. Deciding quickly, do you take the money? Or do you return the undue amount? Almost daily, people face similar temptations to bend ethical rules to serve their self-interest. For example, people may decide to free-ride on public transport or exaggerate the costs of a business trip. When making those decisions, people are often distracted, stressed, or under pressure and thus do not take time to deliberate. Faced with the temptation to lie for profit, what is people’s basic inclination: honesty or dishonesty?

Dual-process models provide a useful framework for answering this question. These models postulate that human decision making results from the interplay of an intuitive System 1 that is fast and inflexible and a deliberate System 2 that is slow and flexible (Kahneman, 2011). In recent years, the dual-process perspective has gained popularity in the study of self-serving dishonesty, that is, accruing benefits to the self while violating accepted standards or rules (Shu, Gino, & Bazerman, 2011, p. 330). Results about the extent to which honesty is intuitive are mixed: Whereas some studies find that people’s intuitive response in tempting situations is to lie selfishly, others find honesty to be intuitive. This is the puzzle we seek to solve.

Intuitive Honesty?

People hold the truth in mind, and to modify it they need to exert cognitive effort and craft a lie. This is the logic underlying the prominent cognitive theory that regards truth telling as the more automatic, dominant response and lying as a complex cognitive function that imposes greater demand on cognitive skills (Vrij, Fisher, Mann, & Leal, 2006). Indeed, people react faster when instructed to tell the truth than when instructed to lie (for meta-analyses, see Suchotzki, Verschuere, Van Bockstaele, Ben-Shakhar, & Crombez, 2017; Verschuere, Köbis, Bereby-Meyer, Rand, & Shalvi, 2018); and when instructed to lie, people exhibit heightened activity in the control regions of the brain (Spence et al., 2001). Lying, accordingly, requires cognitive capacity. Consistent with this view, people tend to lie less for their own profit when distracted by a demanding memory task compared with a less demanding task (van’t Veer, Stel, & Van Beest, 2014). Furthermore, people are less likely to send deceptive messages to their counterparts when acting under time pressure compared with no time pressure (Capraro, 2017). These complementary lines of work point to the following: Honesty is intuitive.

Intuitive Dishonesty?

When people are tired, under time pressure, or doing many things at once (compared with being energized and focused), they are more prone to cave to various temptations, even if those require lying. Being honest and resisting unethical temptations requires self-control (Gino, Schweitzer, Mead, & Ariely, 2011; Tabatabaeian, Dale, & Duran, 2015). This logic underlies various lines of recent work. For example, correlational studies have revealed that impulsivity, the tendency to decide intuitively, is positively associated with academic cheating (Anderman, Cupp, & Lane, 2009) and that when people are drained of the cognitive resources required for deliberation, they are more likely to engage in workplace deviance (Christian & Ellis, 2011) and unethical behavior (Barnes, Schaubroeck, Huth, & Ghumman, 2011). Experimental work has similarly revealed that restraining participants’ deliberate thinking through cognitive load (e.g., Welsh & Ordonez, 2014), time pressure (Shalvi, Eldar, & Bereby-Meyer, 2012), mental or physical depletion (e.g., Kouchaki & Smith, 2014), priming of intuition concepts (e.g., Zhong, 2011), or conducting experiments in a native language (vs. a foreign language; Bereby-Meyer et al., 2018) increases self-serving dishonesty. Together, these findings suggest the following: Dishonesty is intuitive.

Social Harm Moderates Intuitive Honesty and Dishonesty: Evidence from Two Meta-Analyses

Taken together, the question of people’s intuitive inclinations in tempting situations in which they can profit from lying remains open. Although a large amount of data is available, the results are mixed. To provide an aggregated overview of the existing evidence, we conducted meta-analyses of experiments on intuitive honesty and dishonesty. In addition to evaluating whether the aggregated evidence supports the intuitive-honesty or the intuitive-dishonesty hypothesis, we tested a potential moderator that may explain the expected heterogeneity in results.

Our core moderator of interest is whether the negative externalities of dishonesty hurt a concrete other (e.g., another participant) or an abstract, vaguer entity (e.g., the experimental budget). Previous theories have stressed the importance of the social element of unethical behavior, outlining that abstract victims and cobeneficiaries of unethical behavior alleviate guilt (Köbis, van Prooijen, Righetti, & Van Lange, 2016). Empirical support stems from studies indicating that people tend to lie when lying benefits in-group members (Cohen, Gunia, Kim-Jun, & Murnighan, 2009; Weisel & Shalvi, 2015; Wiltermuth, 2011) yet are reluctant to do so when lying harms concrete others (Pitesa, Thau, & Pillutla, 2013; Yam & Reynolds, 2016). Furthermore, a substantial body of work on the social heuristics hypothesis (Bear & Rand, 2016; Rand, Greene, & Nowak, 2012) suggests that intuition favors cooperation over interpersonal selfishness in economic games (for a meta-analysis, see Rand, 2016). Applying this theoretical framework to dishonesty suggests that when lying harms a concrete victim, the intuitive urge to be prosocial may be invoked, which may in turn cancel out (or even overpower) the intuitive appeal of self-serving lies.

Directly testing the social-harm account of intuitive honesty and dishonesty in a series of experiments, Pitesa and colleagues (2013) found intuitive honesty when harm was inflicted on another participant but intuitive dishonesty when people’s lies hurt the research budget. This moderating role of social harm in determining the direction in which intuition affects dishonesty also fits squarely with the social heuristics hypothesis, which proposes an intuitive inclination to cooperate in many social dilemmas (for a meta-analysis, see Rand, 2016).

Method

Search for studies

First, we searched Web of Science, PsycINFO, and Google Scholar without any restrictions on publication year. We combined the keywords within each of the following brackets with the Boolean operator “OR” and searched for combinations of terms from the first and second brackets: [“deprivation” OR “depletion” OR “cognitive load” OR “intuition” OR “priming” OR “time pressure”] and [“cheating” OR “lying” OR “deception” OR “dishonesty” OR “unethical behavior”].
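The two-bracket search can be sketched as a small query builder. This is a minimal illustration only. It assumes that terms within a bracket are joined with OR and that the two brackets are joined with AND. The helper names `or_group` and `build_query` are hypothetical, and each database's own query syntax may differ.

```python
# Minimal sketch of the two-bracket Boolean search string (hypothetical helper names).
MANIPULATION_TERMS = ["deprivation", "depletion", "cognitive load",
                      "intuition", "priming", "time pressure"]
DISHONESTY_TERMS = ["cheating", "lying", "deception",
                    "dishonesty", "unethical behavior"]


def or_group(terms):
    """Quote every term and join the terms of one bracket with OR."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(first, second):
    """Join the two OR-groups; using AND between the brackets is an assumption."""
    return f"{or_group(first)} AND {or_group(second)}"


print(build_query(MANIPULATION_TERMS, DISHONESTY_TERMS))
```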

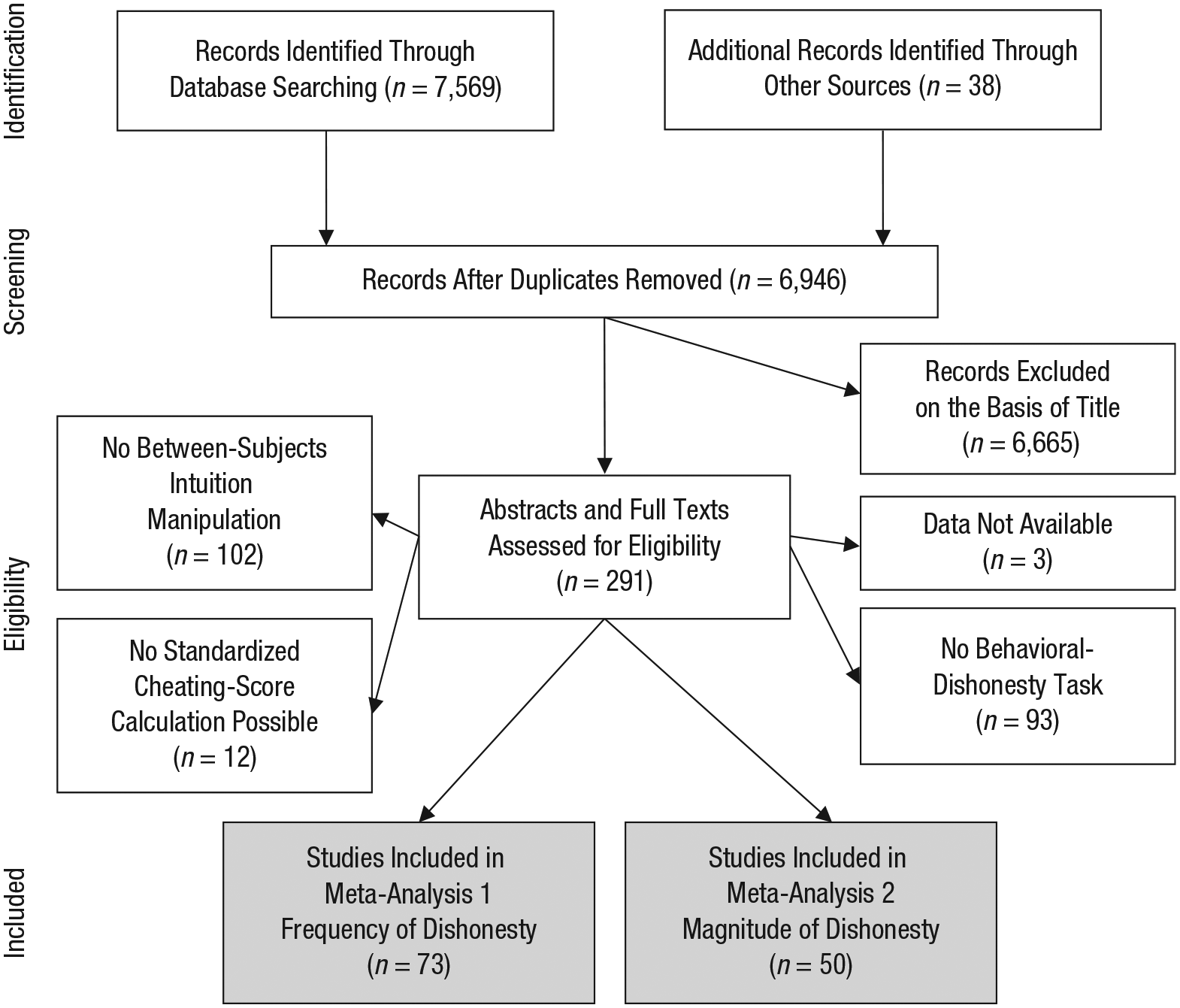

Second, following the mass-solicitation approach used in other meta-analyses (see Balliet, Wu, & De Dreu, 2014), we disseminated a call for published and unpublished work via various associations and mailing lists. The Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) flow diagram (see Fig. 1) provides more details about the identification and selection procedure. After a first round of identifying relevant studies and asking authors to send us their work (which identified 44 relevant studies), we conducted a second call for papers and literature search (preregistered; see https://osf.io/8wtcy/), which identified an additional 22 relevant studies. During the revision of the manuscript, we issued another call and online search (preregistered; see https://osf.io/bdvmx/), yielding an extra five studies.

Fig. 1. Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) flow diagram illustrating the identification, screening-eligibility, and inclusion stages of study selection for both meta-analyses.

Inclusion criteria

Studies were included if they fulfilled two criteria. First, to enable causal inferences about the link between intuition and honesty/dishonesty, we included only experiments and excluded studies with a correlational design (e.g., Anderman et al., 2009). To maximize comparability across study designs and to avoid potential learning effects, we further excluded within-subject manipulations of intuition (e.g., Foerster, Pfister, Schmidts, Dignath, & Kunde, 2013). Second, studies had to measure dishonesty as the dependent variable with a behavioral task in which participants stood to gain from dishonesty (financially or otherwise).

Inducing an intuitive mind-set

We focused on experiments that compared a manipulation of intuition with a control condition. To increase comparability, we did not include studies that compared, for example, a control condition with a deliberation condition (such as Wang, Zhong, & Murnighan, 2014). In line with previous researchers who have studied intuitive decision making (Rand, 2016; Verschuere et al., 2018), we classified existing methods of intuition manipulation into five categories:

Time-pressure manipulations (k = 14) require participants to speed up their responses. A short deadline to respond is typically compared with a long deadline or none at all (Shalvi et al., 2012). Limiting the response-time window induces a reliance on intuition because it impairs the participants’ ability to reflect (Rand, 2016; see also Evans, Dillon, & Rand, 2015).

Cognitive-load manipulations (k = 7) require participants to perform a concurrent cognitive task while completing a behavioral-dishonesty task (van’t Veer et al., 2014). The concurrent task is either easy (e.g., memorizing a three-digit number) or difficult (e.g., memorizing a seven-digit number). Previous research shows that the difficult memorization task limits the cognitive resources available to people and thus induces an intuitive mind-set (Gilbert, Pelham, & Krull, 1988; Greene, Morelli, Lowenberg, Nystrom, & Cohen, 2008).

Depletion manipulations (k = 30) either require participants to fulfill a taxing task prior to the behavioral task or deplete their cognitive resources physically—for example, by depriving them of sleep or food (Christian & Ellis, 2011)—which impairs the self-control abilities needed for deliberate decision making (for recent meta-analyses on ego depletion, see Carter, Kofler, Forster, & McCullough, 2015; Hagger, Wood, Stiff, & Chatzisarantis, 2010).

Induction manipulations (k = 11) prime participants to decide intuitively. This can either be an instruction to recall a past situation in which they relied on their intuition (Cappelen, Sørensen, & Tungodden, 2013) or can consist of priming emotions that are associated with intuitive decision making (Kouchaki & Desai, 2015).

Foreign-language manipulations (k = 11) were included because recent evidence suggests that completing a study in one’s native language (vs. in a foreign language) can induce an intuitive mind-set (Bereby-Meyer et al., 2018; Geipel, Hadjichristidis, & Surian, 2016).

Measuring dishonesty

We focused on behavioral measures of self-serving dishonesty as an outcome measure, thus excluding studies that used hypothetical scenarios or studies that relied on self-reported dishonesty. Instead, we included only studies in which participants faced the unethical temptation to pursue their self-interest by lying. Multiple methods have been developed to capture such dishonest behavior, and we clustered them into four categories:

Performance-enhancement tasks (k = 33) allow participants to inflate their test score. For example, participants can claim to have solved more matrix puzzles than they actually did—and get paid according to the number they report (Mazar, Amir, & Ariely, 2008).

Stochastic tasks (k = 18) let participants privately observe the outcome of a random device, such as a die roll. They then get paid according to the number they report having seen and can thus lie to increase their financial rewards (Fischbacher & Föllmi-Heusi, 2013).

Sender-receiver games (k = 13) let a first player send either an honest or a deceptive message to a second player, who then decides whether to follow the recommendation, which influences both parties’ outcomes (e.g., Gneezy, 2005).

Several other tasks (k = 9) were included, such as perceptual tasks that allow participants to make self-serving mistakes (Kouchaki & Smith, 2014) or tasks in which participants had the opportunity to report they were overpaid by the experimenter (Chiou, Wu, & Cheng, 2017).

More liars or more lying?

Intuition manipulations can affect dishonesty in two ways: by changing how many people lie or by changing how much people lie. To test both possible pathways, we standardized the behavior in the dishonesty tasks into two outcome measures. First, to test whether an intuitive mind-set leads more people to lie, Meta-Analysis 1 uses the percentage of liars in the intuition and control conditions as an outcome measure. If it was not possible to observe directly whether an individual was dishonest, as in the standard die-rolling task (Fischbacher & Föllmi-Heusi, 2013), we estimated the proportion of liars using the algorithm put forth by Garbarino, Slonim, and Villeval (2016). In brief, the algorithm compares the reported proportion of favorable outcomes with the proportion expected if everyone reported honestly. In the Supplemental Material available online, we provide a full description of the Garbarino et al. (2016) algorithm as well as analyses using an alternative algorithm put forth by Moshagen and Hilbig (2017) that yields similar results.
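The basic logic of inferring the share of liars from aggregate reports can be illustrated with a simple point estimator. This is not the Garbarino et al. (2016) algorithm; it is a minimal sketch that conveys only the comparison of reported and expected frequencies. It assumes that every liar reports the favorable outcome and every honest participant reports truthfully. The reported share r then satisfies r = λ + (1 − λ)p, where p is the probability of a favorable outcome under honesty and λ is the share of liars. The function name and example numbers are hypothetical.

```python
def estimate_liar_share(share_reporting_win, p_win_if_honest):
    """Simplified point estimate of the share of liars in a group.

    Assumes liars always report the favorable outcome and honest participants
    report truthfully, so r = lam + (1 - lam) * p, which gives
    lam = (r - p) / (1 - p). The estimate is clipped to [0, 1].
    """
    if not 0.0 <= p_win_if_honest < 1.0:
        raise ValueError("p_win_if_honest must lie in [0, 1)")
    lam = (share_reporting_win - p_win_if_honest) / (1.0 - p_win_if_honest)
    return min(1.0, max(0.0, lam))


# Hypothetical coin-flip task: 75% report the paying side, 50% expected if honest.
print(estimate_liar_share(0.75, 0.5))  # 0.5
```

Clipping at zero reflects that groups reporting fewer favorable outcomes than chance predicts provide no evidence of lying under this simple model.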

Second, to test whether people lie more, Meta-Analysis 2 compares the magnitude of dishonesty (from fair play to maximal possible lying) in intuition and control conditions. We thus included only behavioral-dishonesty tasks that allowed the calculation of a standardized dishonesty score, which in turn allowed a comparison across dishonesty paradigms (see Abeler, Nosenzo, & Raymond, in press). The standardization of dishonesty scores uses a score of 1 to indicate that participants were dishonest in the most self-serving way possible, whereas a score of 0 indicates that participants were fully honest. For example, in the matrix paradigm that entails five unsolvable matrices (e.g., Yam, Chen, & Reynolds, 2014), claiming to have solved four correctly yields a standardized lying score of 0.8. Hence, Meta-Analysis 2 included only dishonesty tasks with a continuous outcome measure and a well-defined maximum performance score that could be obtained dishonestly. Together, these measures provide a comprehensive overview of the most current methods for studying intuitive honesty/dishonesty. Using this procedure, we identified 73 studies (30 of which were unpublished when we conducted the meta-analyses) with 12,711 participants for Meta-Analysis 1 and 50 studies (22 unpublished) with 6,473 participants for Meta-Analysis 2.
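The standardization can be written as a one-line rescaling. This is a sketch under an assumption. It generalizes the matrix example above to the form (reported − honest) / (maximum − honest). For tasks in which individual lies are unobservable, such as die rolls, using the group's mean report and the expected honest mean is also an assumption here. The function name is hypothetical.

```python
def standardized_lying_score(reported, honest, maximum):
    """Rescale a report so that 0 = fully honest and 1 = maximal self-serving lie.

    reported: the (possibly inflated) reported outcome
    honest:   the outcome an honest report would yield
    maximum:  the best outcome obtainable by lying
    """
    if maximum == honest:
        raise ValueError("no room to lie: the maximum equals the honest outcome")
    return (reported - honest) / (maximum - honest)


# Matrix example from the text: 5 unsolvable matrices, 4 claimed as solved.
print(standardized_lying_score(4, 0, 5))  # 0.8
```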

Coding procedure

The assessment of eligibility and the ensuing coding were performed by two independent coders. One author (N. C. Köbis) extracted and coded the data from all included studies, and a second, blind coder independently coded the extracted data. Disagreements between coders were resolved by consensus after consultation with at least one of the other authors.

Moderators

In addition to standard demographic information such as the percentage of female participants, age, location, and type of sample (students or general population), we coded the characteristics of the intuition manipulations and dishonesty paradigms—details and results are reported in the Supplemental Material. We further coded the key proposed moderator social harm to indicate whether the victim of participants’ dishonesty was abstract (e.g., the researcher’s budget; k = 57) or concrete (e.g., another participant; k = 16). To conduct the latter analysis, we split two studies (Experiments 2 and 3 from Pitesa et al., 2013) into separate independent samples per their experimental manipulation of the victim of dishonesty.

Analysis

Using the metafor package (Viechtbauer, 2010) for the R software environment (Version 3.5.1; R Core Team, 2018), we estimated the overall effect of the first meta-analysis with a random-effects logistic regression model. We applied a treatment-arm continuity correction to cells containing small counts or zeros. The dependent variable was the log-transformed odds ratio of dishonesty in the intuition condition compared with the control condition. In line with common recommendations, the second meta-analysis used a random-effects model. Its dependent variable was the bias-corrected standardized mean difference (Hedges’s g) in standardized lying scores between the intuition and control conditions (for further details, see Supplemental Material). To test the expected moderation effect of social harm, we conducted mixed-effects meta-regression analyses as well as random-effects subgroup analyses. In both meta-analyses, we estimated the between-study variance (τ²) with the restricted maximum-likelihood (REML) estimator.
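To make the random-effects model concrete, the following sketch pools effect sizes with known sampling variances and estimates τ² by REML. It uses fixed-point iteration on the REML score equation and truncates τ² at zero. This is a minimal illustration, not metafor's implementation. It covers only the standard random-effects model, such as the one used for Hedges's g, and not the random-effects logistic regression model used for the first meta-analysis. The function name is hypothetical.

```python
import math
from statistics import NormalDist


def reml_random_effects(y, v, tol=1e-10, max_iter=1000):
    """Pool effect sizes y with sampling variances v under a random-effects model.

    tau^2 is estimated by REML via fixed-point iteration on the REML score
    equation; estimates below zero are truncated at zero.
    """
    tau2 = 0.0
    for _ in range(max_iter):
        w = [1.0 / (vi + tau2) for vi in v]
        sw = sum(w)
        mu = sum(wi * yi for wi, yi in zip(w, y)) / sw
        sw2 = sum(wi ** 2 for wi in w)
        update = sum(wi ** 2 * ((yi - mu) ** 2 - vi)
                     for wi, yi, vi in zip(w, y, v)) / sw2 + 1.0 / sw
        update = max(0.0, update)
        converged = abs(update - tau2) < tol
        tau2 = update
        if converged:
            break
    # Final inverse-variance weights that include the between-study variance
    w = [1.0 / (vi + tau2) for vi in v]
    sw = sum(w)
    mu = sum(wi * yi for wi, yi in zip(w, y)) / sw
    se = math.sqrt(1.0 / sw)
    z = mu / se
    p = 2.0 * (1.0 - NormalDist().cdf(abs(z)))
    return {"mu": mu, "se": se, "tau2": tau2,
            "ci": (mu - 1.96 * se, mu + 1.96 * se), "z": z, "p": p}


# Hypothetical effect sizes and sampling variances
print(reml_random_effects([0.0, 1.0, 2.0, 3.0], [0.1] * 4))
```

In the equal-variance example, the REML estimate reduces to the sample variance of the effects minus the common sampling variance (5/3 − 0.1). This gives a quick way to check the iteration.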

Publication bias and questionable research practices

Given the large proportion of unpublished studies in the sample (41.1%), we tested for publication bias within our sample by evaluating whether the distribution of significant findings differs across published and unpublished studies. Furthermore, we conducted cumulative meta-analyses using the most accurate study as a starting point (Ioannidis & Lau, 2001) as well as p-curve analyses using p values of the main effects included in the meta-analyses (Simonsohn, Simmons, & Nelson, 2015) to assess evidential value. The large interstudy heterogeneity in our sample undermines the reliability of standard procedures to test for publication bias, such as funnel-plot asymmetry, trim and fill, precision-effect test (PET), precision effect estimate with standard error (PEESE), and Egger’s regression (see Carter, Schönbrodt, Gervais, & Hilgard, 2019; Sterne et al., 2011). We report the results of these standard procedures in the Supplemental Material.

Meta-Analysis 1: Frequency of Dishonesty

Results

Intuitive dishonesty and social harm

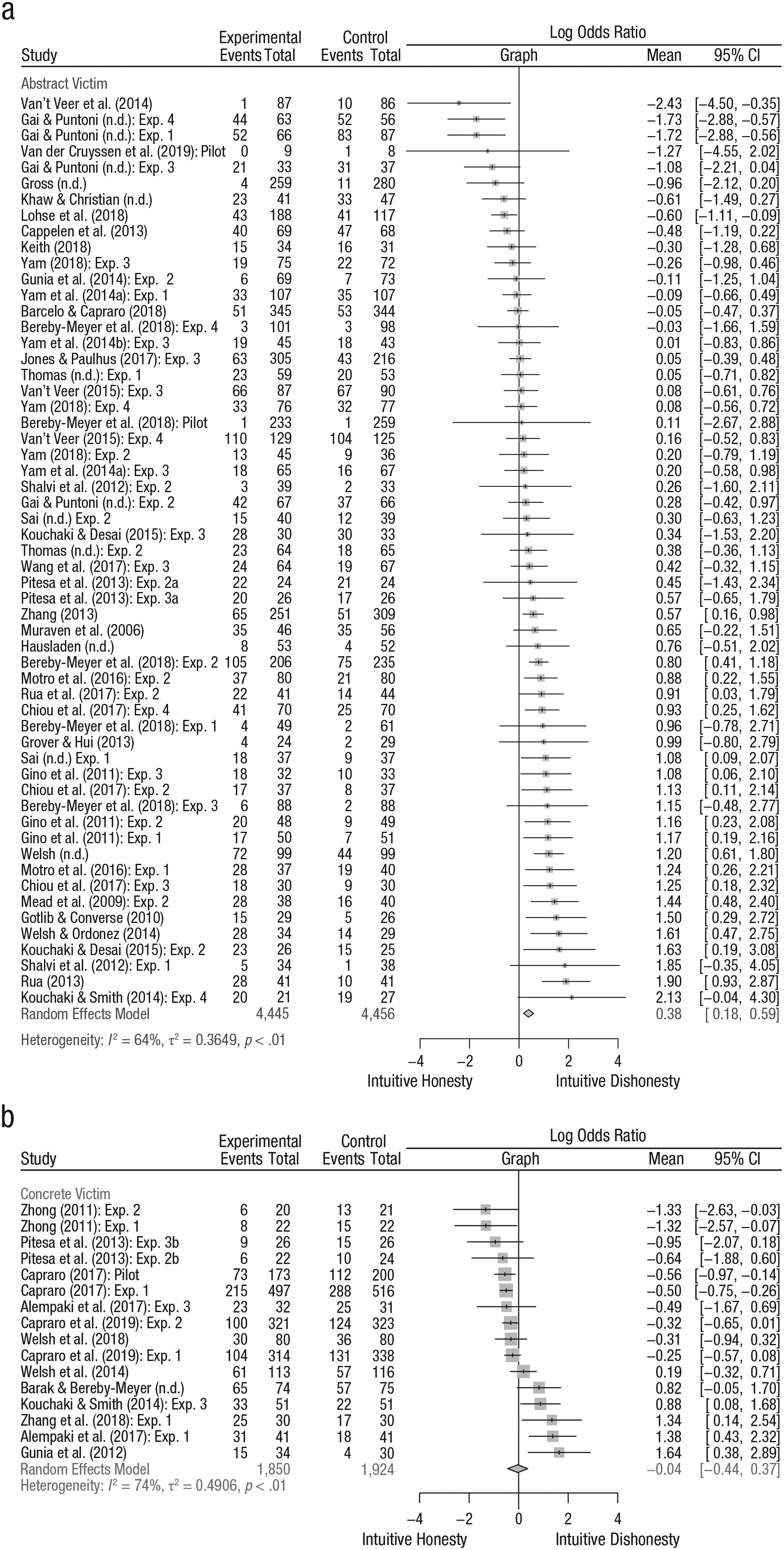

Across all 73 studies, the overall estimate of a random-effects logistic regression model reveals a significant intuitive-dishonesty effect, log odds ratio (OR) = 0.28, 95% confidence interval (CI) = [0.093, 0.473], Z = 2.92, p = .0035. However, because studies with abstract victims (k = 57) far outnumber studies with concrete victims (k = 16), the overall odds ratio is not a useful summary estimate of the data. We therefore tested the social-harm moderation effect using a mixed-effects meta-regression model. This model revealed a marginally significant moderation effect, Z = −1.94, p = .052, 95% CI = [−0.854, 0.003], with remaining heterogeneity of τ² = 0.39 (SE = 0.10). A random-effects subgroup analysis revealed an intuitive-dishonesty effect for studies in which dishonesty affects an abstract victim, log OR = 0.38, 95% credibility interval (CrI) = [−0.86, 1.63], Z = 3.64, p = .0004. The credibility interval indicates the range within which approximately 95% of the true effects are expected to fall. However, for studies in which dishonesty affects a concrete victim, no significant effect appears, log OR = −0.04, 95% CrI = [−1.32, 1.24], Z = −0.21, p = .861 (see Fig. 2). Taken together, the odds of dishonesty are 46% higher in the intuition condition than in the control condition when the victim of dishonesty is abstract but nearly identical across conditions when the victim is concrete.

Fig. 2. Forest plot of the estimated effects of the first meta-analysis for the subgroups of (a) abstract victims and (b) concrete victims. In the graph column, the vertical line inside the gray box represents the mean value, the size of the gray box represents the study’s weight in the meta-analysis, and the horizontal lines represent the 95% confidence interval. The diamond at the bottom represents the overall effect and its 95% confidence interval.
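The percentages and credibility intervals reported for Meta-Analysis 1 follow from two short formulas. Exponentiating a log odds ratio gives the relative change in the odds. A credibility (prediction) interval widens the confidence interval by the between-study variance. The sketch assumes the common form μ ± 1.96·sqrt(τ² + SE²). It also assumes that SE can be recovered as log OR / Z and that the residual τ² = 0.39 applies to the abstract-victim subgroup. Under those assumptions it gives approximately [−0.86, 1.62], close to the reported [−0.86, 1.63]. The function names are hypothetical.

```python
import math


def odds_change_percent(log_or):
    """Percentage change in the odds implied by a log odds ratio."""
    return (math.exp(log_or) - 1.0) * 100.0


def credibility_interval(mu, se, tau2, z=1.96):
    """Approximate 95% credibility (prediction) interval for the true effects."""
    half_width = z * math.sqrt(tau2 + se ** 2)
    return mu - half_width, mu + half_width


print(round(odds_change_percent(0.38), 1))   # 46.2
print(round(odds_change_percent(-0.04), 1))  # -3.9
# Assumptions: SE recovered as 0.38 / 3.64 and tau^2 taken as the residual 0.39.
print(credibility_interval(0.38, 0.38 / 3.64, 0.39))
```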

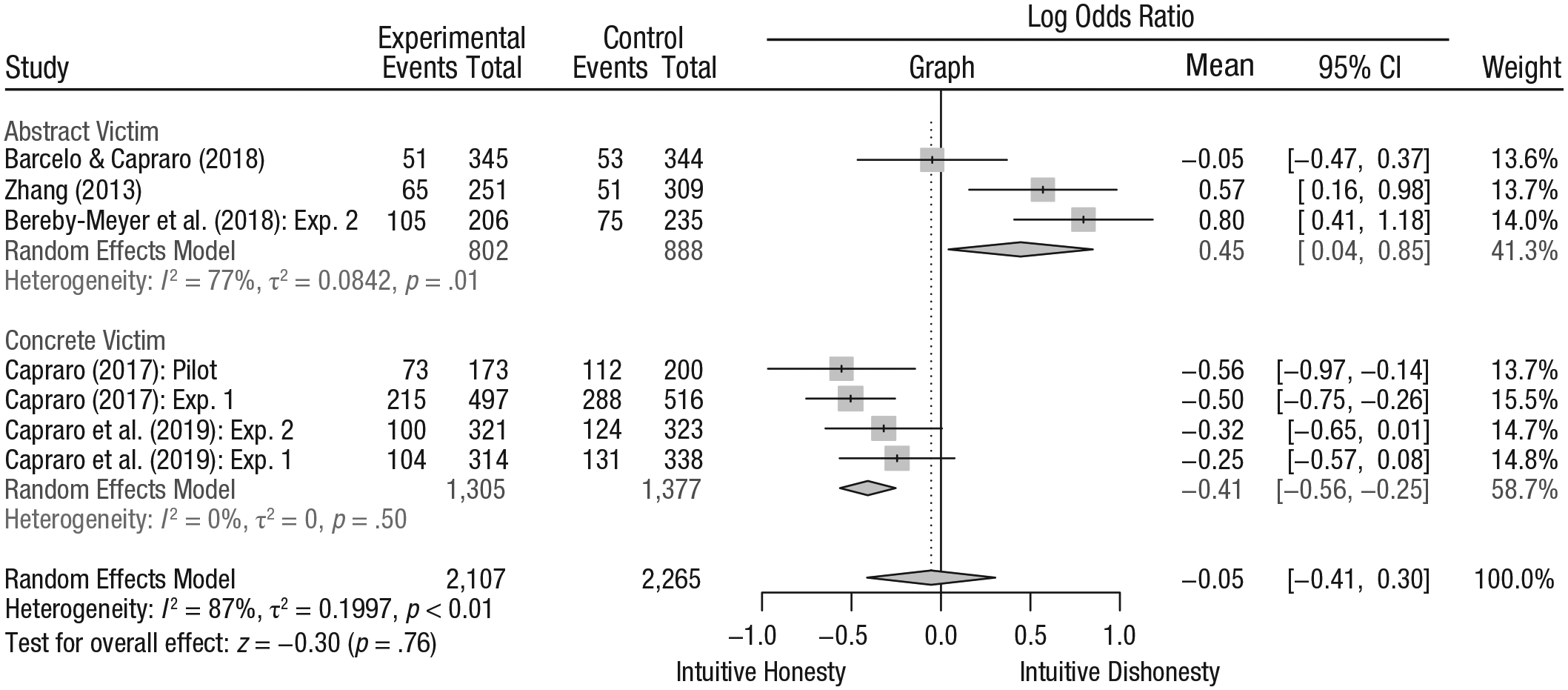

Because of the uneven distribution of studies using concrete and abstract victims, we also conducted a Top10 analysis (Stanley, Jarrell, & Doucouliagos, 2010). This analysis restricts the sample to the 10% of studies with the smallest standard errors, a method that often provides a more accurate estimate of the overall effect than relying on the entire sample (see Nuijten, Van Assen, Veldkamp, & Wicherts, 2015). Running the meta-analysis on only this top decile of studies (k = 7, n = 4,372; 34% of all participants) reveals a nonsignificant main effect of intuition, log OR = −0.05, 95% CrI = [−1.08, 0.98], Z = −0.26, p = .78. It also reveals a full crossover moderation effect, Z = −4.02, p < .0001: an intuitive-dishonesty effect for abstract victims, log OR = 0.45, 95% CrI = [−0.06, 0.96], Z = 2.68, p = .007, and an intuitive-honesty effect for concrete victims, log OR = −0.41, 95% CrI = [−0.87, 0.07], Z = −3.09, p = .002 (see also Fig. 3).

Fig. 3. Forest plot of the most precise 10% of estimated effects of the first meta-analysis for the subgroups of abstract victims and concrete victims. In the graph column, the vertical line inside the gray box represents the mean value, and the horizontal lines represent the 95% confidence interval. The dotted vertical line represents the overall estimated effect. The diamond at the bottom represents the overall effect and its 95% confidence interval.
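The Top10 selection step itself is simple: sort studies by standard error and keep the most precise decile. This sketch assumes that k / 10 is rounded down, with a minimum of one study. With 73 studies this yields the seven studies reported above. The function name and the example data are hypothetical.

```python
import math


def top10_subset(studies):
    """Keep the 10% of studies with the smallest standard errors.

    studies: list of (label, effect, se) tuples.
    Rounding k / 10 down, with a floor of one study, is an assumption here.
    """
    n_keep = max(1, math.floor(len(studies) / 10))
    return sorted(studies, key=lambda s: s[2])[:n_keep]


# Hypothetical data: 73 studies with increasing standard errors.
hypothetical = [(f"study_{i}", 0.0, 0.05 + 0.01 * i) for i in range(73)]
print(len(top10_subset(hypothetical)))  # 7
```

The selected subset would then be passed to the same random-effects model as the full sample.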

Heterogeneity

Overall estimates of heterogeneity indicate that the effect sizes differ significantly across studies, Q(72) = 258.38, p < .001. The between-study variance is estimated to be τ² = 0.42. The proportion of total variability attributable to true heterogeneity is estimated to be I² = 72.1%, 95% CI = [64.8%, 77.9%]. These estimates suggest that approximately 70% of the observed variance in the effect sizes is due to real differences between studies. According to Higgins, Thompson, Deeks, and Altman (2003), this represents a medium-to-large degree of heterogeneity.
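I² can be computed from Cochran's Q and its degrees of freedom (k − 1) as I² = (Q − df) / Q. Applied to the Q statistics reported for the two meta-analyses, this formula gives 72.1% and 67.4%. This is one common formula, shown here as an illustrative sketch; the function names are hypothetical.

```python
def cochran_q(y, v):
    """Cochran's Q: inverse-variance weighted squared deviations from the fixed-effect mean."""
    w = [1.0 / vi for vi in v]
    mu = sum(wi * yi for wi, yi in zip(w, y)) / sum(w)
    return sum(wi * (yi - mu) ** 2 for wi, yi in zip(w, y))


def i_squared(q, df):
    """Higgins-Thompson I^2 in percent from Q and its degrees of freedom."""
    if q <= 0:
        return 0.0
    return max(0.0, (q - df) / q) * 100.0


print(round(i_squared(258.38, 72), 1))  # 72.1 (Meta-Analysis 1)
print(round(i_squared(150.32, 49), 1))  # 67.4 (Meta-Analysis 2)
```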

Additional analyses

We conducted several analyses using alternative meta-analytical techniques to account for interstudy heterogeneity, such as the Hartung-Knapp adjustment, Peto odds ratios, and arcsine meta-analyses. We also used a different correction method for small or zero cell counts, following the standard approach of adding an increment of 0.5 to cells with zero counts. In addition, we applied different criteria for classifying liars and entirely different estimates of lying. These analyses yield qualitatively similar results and are reported in detail in the Supplemental Material. Additional analyses testing the other moderators outlined above (see Method section) are also described in the Supplemental Material.
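A per-study log odds ratio with the 0.5 increment can be sketched as follows. This illustrates the alternative zero-cell correction mentioned above, not the treatment-arm correction used in the main analysis. The sketch assumes the usual convention of adding 0.5 to all four cells of a table that contains a zero; adding it only to the zero cell would be another reading. The function name and counts are hypothetical.

```python
import math


def log_odds_ratio(liars_int, n_int, liars_ctl, n_ctl, increment=0.5):
    """Log odds ratio of lying (intuition vs. control) and its sampling variance.

    If any of the four cells is zero, `increment` is added to every cell
    (the conventional 0.5 correction); otherwise counts are used as-is.
    """
    cells = [liars_int, n_int - liars_int, liars_ctl, n_ctl - liars_ctl]
    if 0 in cells:
        cells = [c + increment for c in cells]
    a, b, c, d = cells
    log_or = math.log((a * d) / (b * c))
    variance = 1 / a + 1 / b + 1 / c + 1 / d
    return log_or, variance


# Hypothetical counts: 30 of 50 liars under intuition, 20 of 50 in the control.
print(log_odds_ratio(30, 50, 20, 50))
```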

Publication bias and questionable research practices

The large proportion of unpublished studies included in the meta-analysis (41.1%) allowed us to test whether significant findings are more likely to be published than nonsignificant findings. A Fisher’s exact test comparing the distribution of significant and nonsignificant findings across unpublished and published studies revealed no significant difference (OR = 1.72, p = .33). This indicates no evidence of bias among the included studies. However, these results must be interpreted with caution because it remains unknown whether the published and unpublished samples are (similarly) unbiased (Kepes, Banks, McDaniel, & Whetzel, 2012).
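The comparison can be sketched as a 2 × 2 table (significant vs. nonsignificant findings by published vs. unpublished studies) analyzed with Fisher's exact test. The counts in the example are hypothetical, because the cell counts are not reported here. Note that R's `fisher.test` reports a conditional maximum-likelihood odds ratio, which generally differs from the simple cross-product ratio computed in this sketch. The function names are hypothetical.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact test for the 2 x 2 table [[a, b], [c, d]].

    Sums the hypergeometric probabilities of every table with the same
    margins that is no more likely than the observed table.
    """
    row1, row2, col1 = a + b, c + d, a + c
    total = comb(row1 + row2, col1)

    def prob(x):
        # Probability of x in the top-left cell given fixed margins
        return comb(row1, x) * comb(row2, col1 - x) / total

    p_obs = prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    # Small relative tolerance so that ties with the observed table count
    p = sum(prob(x) for x in range(lo, hi + 1) if prob(x) <= p_obs * (1 + 1e-7))
    return min(1.0, p)


def sample_odds_ratio(a, b, c, d):
    """Cross-product odds ratio (a * d) / (b * c)."""
    return (a * d) / (b * c)


# Hypothetical table: rows = significant / nonsignificant, columns = published / unpublished
print(fisher_exact_two_sided(3, 1, 1, 3), sample_odds_ratio(3, 1, 1, 3))
```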

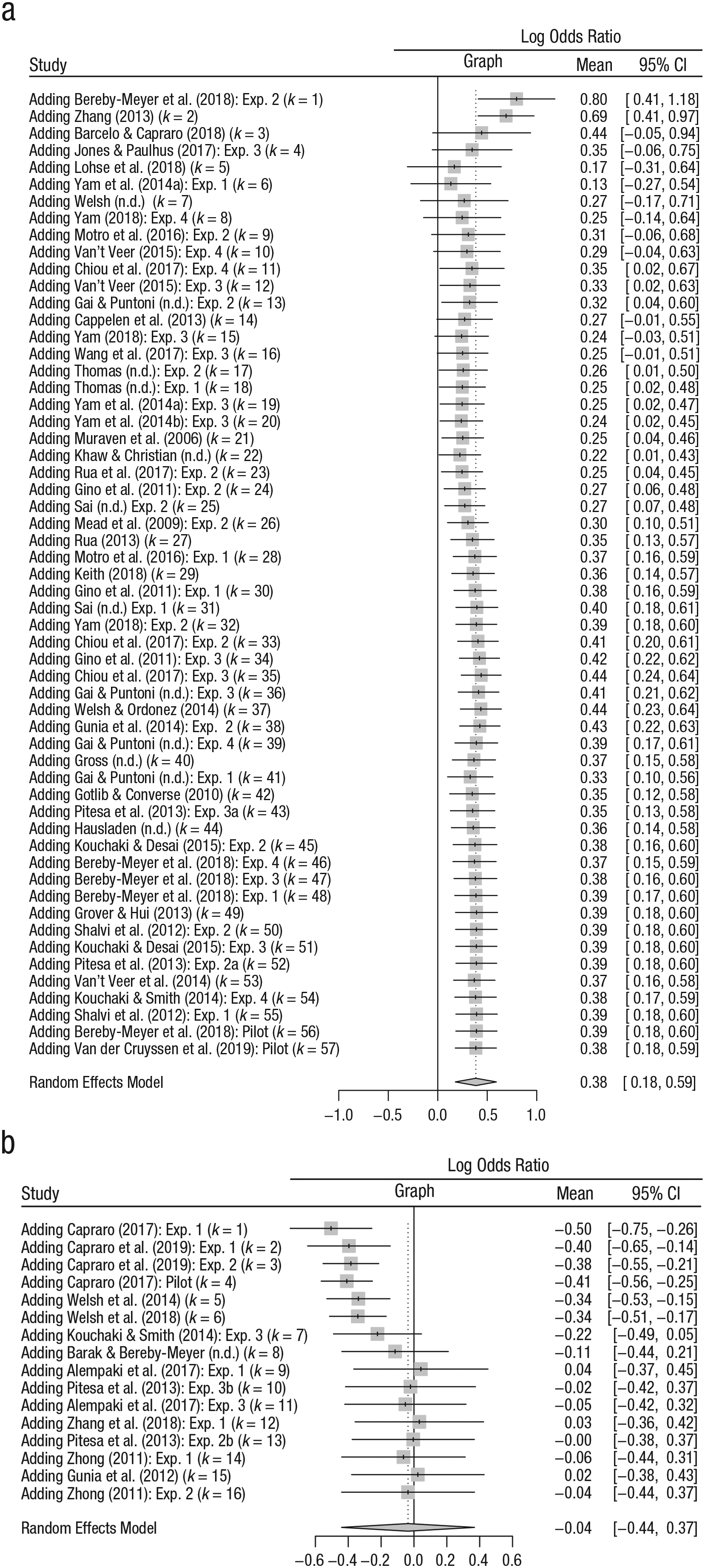

Next, we conducted cumulative meta-analyses for both social-harm conditions. The cumulative meta-analysis technique first calculates the effect for the most precise study (i.e., the study with the smallest standard error). It then adds the remaining studies one at a time, recalculating the overall estimate after each addition using a random-effects weighting scheme. One indication of publication bias is the suppression of small studies with small effect sizes. This becomes visible if the overall estimate swiftly drifts toward a larger effect as smaller studies are added.

For studies using an abstract victim, the effect with the most precise estimate, log OR = 0.80, exceeds the overall estimate, log OR = 0.38. Contrary to the pattern indicative of publication bias, smaller studies reduce rather than inflate the overall effect. Moreover, the direction of the effect points toward intuitive dishonesty for all studies (see Fig. 4a). For studies with a concrete victim, the most precise study indicates an intuitive-honesty effect, log OR = −0.50. With the inclusion of smaller studies, the overall estimate moves toward a null effect, log OR = −0.04. This shift toward nonsignificance suggests that smaller, less precise studies sway the overall estimate toward a null effect (see Fig. 4b). Hence, the results of both cumulative meta-analyses contradict the pattern expected if publication bias had produced small-study effects.

Fig. 4. Cumulative forest plots for studies using (a) abstract victims and (b) concrete victims. The most accurate effect was chosen as a first study. The outcome measure is the log-transformed odds ratio of liars in the intuition and control conditions. In the graph column, the vertical line inside the gray box represents the mean value, and the horizontal lines represent the 95% confidence interval. The dotted vertical line represents the overall estimated effect. The diamond at the bottom represents the overall effect and its 95% confidence interval.
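The cumulative procedure described above can be sketched in a few lines. To stay short, this sketch uses the DerSimonian-Laird estimator for τ² instead of REML. It assumes that studies are added in order of decreasing precision. Both choices, the function names, and the example data are assumptions for illustration.

```python
import math


def dersimonian_laird(y, v):
    """Random-effects mean and SE using the DerSimonian-Laird tau^2 estimator."""
    w = [1.0 / vi for vi in v]
    sw = sum(w)
    mu_fixed = sum(wi * yi for wi, yi in zip(w, y)) / sw
    tau2 = 0.0
    if len(y) > 1:
        q = sum(wi * (yi - mu_fixed) ** 2 for wi, yi in zip(w, y))
        c = sw - sum(wi ** 2 for wi in w) / sw
        tau2 = max(0.0, (q - (len(y) - 1)) / c)
    w_re = [1.0 / (vi + tau2) for vi in v]
    mu = sum(wi * yi for wi, yi in zip(w_re, y)) / sum(w_re)
    return mu, math.sqrt(1.0 / sum(w_re))


def cumulative_by_precision(y, v):
    """Start with the most precise study, then add studies in order of
    decreasing precision, re-estimating the pooled effect at each step."""
    order = sorted(range(len(y)), key=lambda i: v[i])
    steps = []
    for j in range(1, len(order) + 1):
        idx = order[:j]
        steps.append(dersimonian_laird([y[i] for i in idx], [v[i] for i in idx]))
    return steps


# Hypothetical effects and variances; a drift toward larger estimates as
# imprecise studies enter would be the warning sign described in the text.
for mu, se in cumulative_by_precision([0.8, 0.2, 0.5], [0.01, 0.2, 0.1]):
    print(round(mu, 3), round(se, 3))
```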

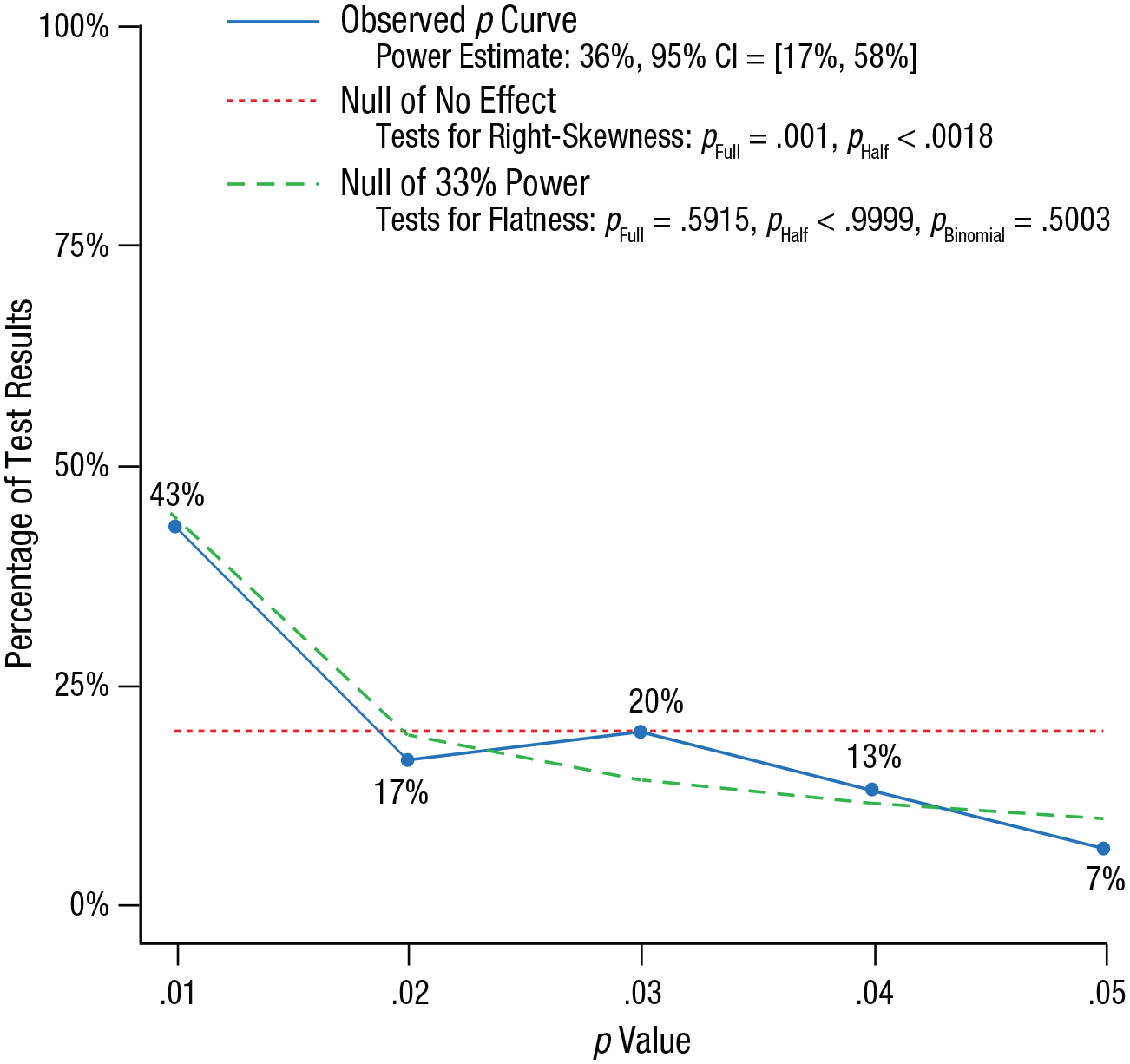

To assess whether the effect sizes included in the meta-analysis have evidential value, we conducted a p-curve analysis (Simonsohn et al., 2015). The distribution of p values derived from the Z values of all 73 studies in the first meta-analysis was significantly right-skewed (full curve: Z = −3.89, p < .0001; half curve: Z = −2.91, p = .002), which suggests evidential value (see also Fig. 5). Note that most Z values were computed from our recalculations of the original data rather than taken from the test statistics reported in the original manuscripts. The reason is that only a small proportion of studies (32.8%) hypothesized an intuitive (dis)honesty main effect and tested it by comparing the percentage of liars in the intuition condition with that in the control condition (e.g., with χ² statistics). Thus, the analysis is useful for confirming the evidential value of the data but not for assessing questionable research practices. Other common procedures for assessing publication bias are undermined by the large interstudy heterogeneity; we therefore report and discuss them in the Supplemental Material.

Fig. 5. Observed p curve for all studies in the first meta-analysis. The observed p curve includes 30 statistically significant (p < .05) results, of which 21 were p < .025. Forty-three additional results were entered but excluded from the p curve because they were p > .05. The blue line shows the observed p curve, the dashed red line shows the uniform distribution of the p values, and the green line plots the right-skewed distribution for a power level of 33%.
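The right-skew tests behind the full and half p curves can be sketched as follows. Each significant p value is converted to a pp value, its probability under a uniform null (p / .05 for the full curve, p / .025 for the half curve). The pp values are transformed to normal quantiles and combined with Stouffer's method. This is a simplified sketch of the approach in Simonsohn et al. (2015), not the p-curve app. It omits the test against 33% power and any check on the direction of effects. The function names and example Z values are hypothetical.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def two_sided_p(z):
    """Two-sided p value for a Z statistic."""
    return 2.0 * (1.0 - _STD_NORMAL.cdf(abs(z)))


def stouffer(pp_values):
    """Stouffer's Z over pp values; strongly negative values indicate right skew."""
    if not pp_values:
        return None
    return sum(_STD_NORMAL.inv_cdf(pp) for pp in pp_values) / len(pp_values) ** 0.5


def pcurve_right_skew(p_values):
    """Right-skew tests for the full (p < .05) and half (p < .025) p curves.

    Under the null of no effect, significant p values are uniform, so p / .05
    (full) and p / .025 (half) are uniform on (0, 1).
    """
    full = [p / 0.05 for p in p_values if 0.0 < p < 0.05]
    half = [p / 0.025 for p in p_values if 0.0 < p < 0.025]
    return stouffer(full), stouffer(half)


# Hypothetical Z values converted to two-sided p values
print(pcurve_right_skew([two_sided_p(z) for z in (3.1, 2.4, 1.2, 4.0)]))
```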

Discussion

Drawing on 73 original studies that experimentally manipulated intuition and behaviorally assessed dishonesty, the results reveal an intuitive-dishonesty effect when harm was inflicted on abstract others; for these tasks, an intuitive mind-set heightened the chances of dishonesty. Yet this intuitive-dishonesty effect was not present when lying caused harm to a concretely identifiable other person. It is generally safe to assume that nonsignificant findings were less likely to be published and thus less likely to enter the meta-analysis. Even so, there is little evidence that our results are artifacts of such publication biases. Contrary to the pattern expected under publication bias, cumulative meta-analyses suggest that small studies reduce the intuitive-dishonesty effect for abstract victims while potentially masking an intuitive-honesty effect for concrete victims. For studies with abstract victims of dishonesty, the overall effect remains significant with the inclusion of smaller studies, which underlines the robustness of the intuitive-dishonesty effect. The risk that publication bias invalidates the findings is further reduced by the fact that a large proportion of the studies is unpublished and that significant findings are evenly distributed across publication status. Finally, a p-curve analysis drawing on all 73 effect sizes confirms that the overall sample contains evidential value.

Meta-Analysis 2: Magnitude of Dishonesty

When assessing dishonesty, a high average level of dishonesty can result either from a few liars lying a lot or from many liars lying just a bit. The second meta-analysis aimed to test the extent to which people lie and whether intuition leads to larger lies compared with a control setting. Thus, we compared the magnitude of lying for a standardized lying score between the intuition and control conditions.
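For a single study, Hedges's g can be computed from the mean standardized lying scores in the two conditions. This sketch uses the pooled standard deviation, Hedges's approximate small-sample correction J = 1 − 3 / (4df − 1), and a common large-sample variance formula. The exact correction and variance formula used in the original analyses may differ slightly. The function name and the example numbers are hypothetical.

```python
import math


def hedges_g(mean_int, sd_int, n_int, mean_ctl, sd_ctl, n_ctl):
    """Bias-corrected standardized mean difference (intuition minus control).

    Uses the pooled SD, Hedges's approximate correction J = 1 - 3 / (4 df - 1),
    and the large-sample variance 1/n1 + 1/n2 + g^2 / (2 (n1 + n2)).
    """
    df = n_int + n_ctl - 2
    sd_pooled = math.sqrt(((n_int - 1) * sd_int ** 2 + (n_ctl - 1) * sd_ctl ** 2) / df)
    d = (mean_int - mean_ctl) / sd_pooled
    j = 1.0 - 3.0 / (4.0 * df - 1.0)
    g = j * d
    variance = 1.0 / n_int + 1.0 / n_ctl + g ** 2 / (2.0 * (n_int + n_ctl))
    return g, variance


# Hypothetical standardized lying scores: intuition 0.5 (SD 0.4), control 0.3 (SD 0.4), n = 50 each
print(hedges_g(0.5, 0.4, 50, 0.3, 0.4, 50))
```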

Results

Intuitive honesty/dishonesty and social harm

The aggregate result of the 50 experiments included in the second meta-analysis confirms and extends the results of the first meta-analysis. The overall estimate suggests an intuitive-dishonesty effect, g = 0.23, 95% CI = [0.137, 0.328], Z = 4.764, p < .0001. However, as in the first meta-analysis, studies using abstract victims (k = 45) and concrete victims (k = 5) were unevenly distributed. Because the overall estimate might therefore be skewed, we conducted mixed-effects meta-regression models, which revealed a moderation effect of social harm, Z = −1.987, p = .047. The residual heterogeneity was τ² = 0.073 (SE = 0.02).

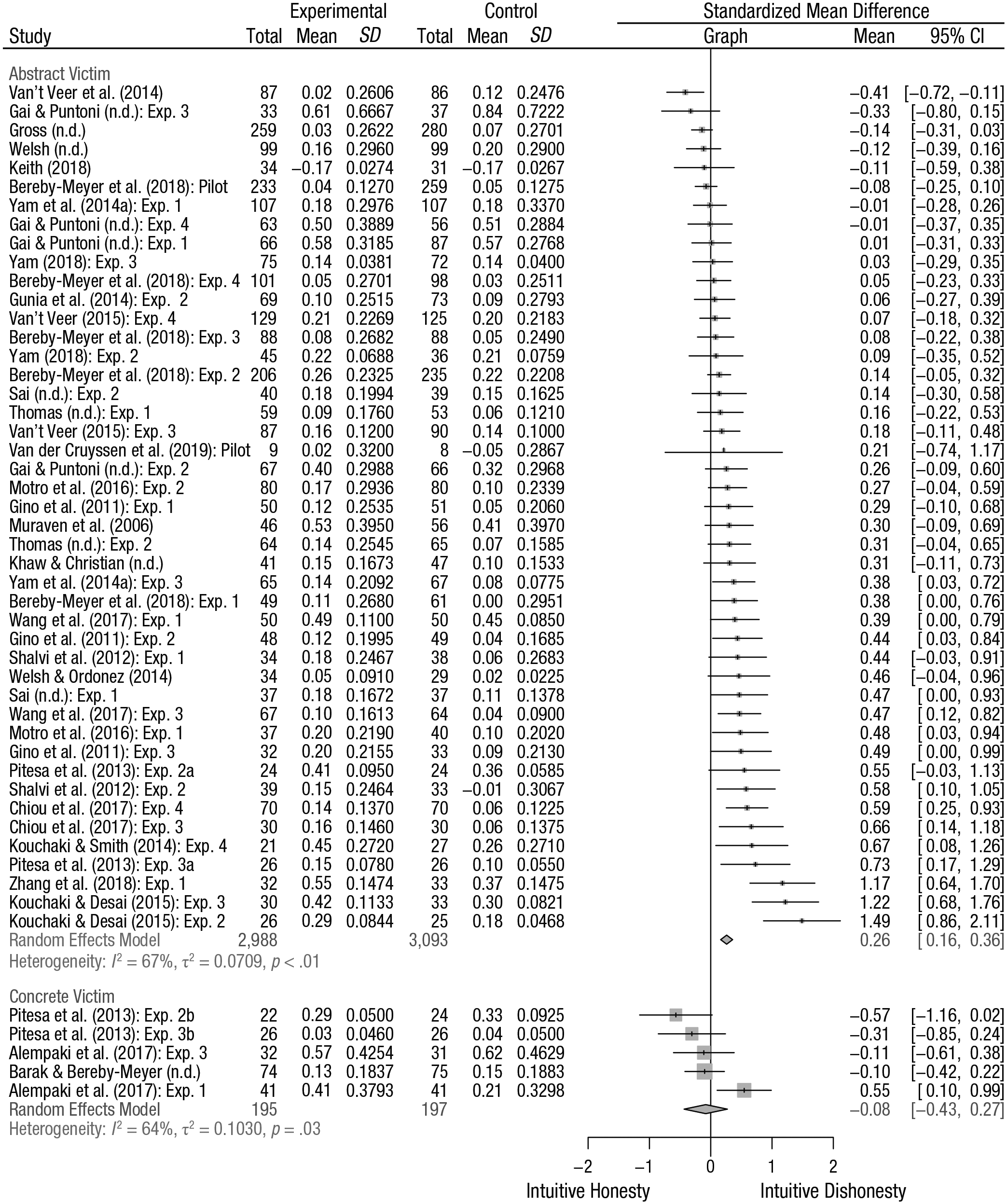

The random-effects subgroup analysis shows an intuitive-dishonesty effect when an abstract victim is harmed by dishonesty, g = 0.261, 95% CrI = [−0.280, 0.802], Z = 5.186, p < .0001, but no such effect when a concrete victim is harmed by dishonesty, g = −0.076, 95% CrI = [−0.696, 0.544], Z = −0.470, p = .63. Overall, we therefore conclude that an intuitive mind-set increases the magnitude of lying for tasks with abstract victims, yet this intuitive-dishonesty effect disappears when a concrete victim is harmed by dishonesty (see also Fig. 6). For the second meta-analysis, the Top10 analysis cannot provide useful information about a moderation effect because no study using a concrete victim is among the most precise decile of estimates. We report the Top10 analysis for the second meta-analysis in the Supplemental Material.

Fig. 6. Forest plot showing the overall estimated effect for the second meta-analysis using a random-effects model and the effects estimated for the subgroups using a mixed-effects model. The outcome variable is the bias-corrected standardized mean difference of lying between the intuition and control conditions. In the graph column, the vertical line inside the gray box represents the mean value, the size of the gray box represents the study’s weight in the meta-analysis, and the horizontal lines represent the 95% confidence interval. The diamond at the bottom represents the overall effect and its 95% confidence interval.

Heterogeneity

Again, heterogeneity statistics reveal substantial variation in the effect-size distribution, Q(49) = 150.32, p < .0001, with the between-study variance estimated at τ² = 0.06. Overall, 67.4% of the variability in the effect sizes reflects true heterogeneity rather than sampling error, I² = 67.4%, 95% CI = [56.3, 75.7].
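As a quick check on the reported value, I² can be computed from Cochran's Q and its degrees of freedom as I² = (Q − df)/Q, truncated at zero. Plugging in Q(49) = 150.32 reproduces the reported 67.4%; the confidence interval for I² requires additional machinery that this minimal sketch does not attempt.

```python
def i_squared(q, df):
    """I^2: share of total variability in effect sizes beyond sampling error."""
    if q <= 0:
        return 0.0
    return max(0.0, (q - df) / q)


# Q(49) = 150.32 as reported for the second meta-analysis
print(f"I^2 = {100 * i_squared(150.32, 49):.1f}%")  # prints I^2 = 67.4%
```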

Publication bias and questionable research practices

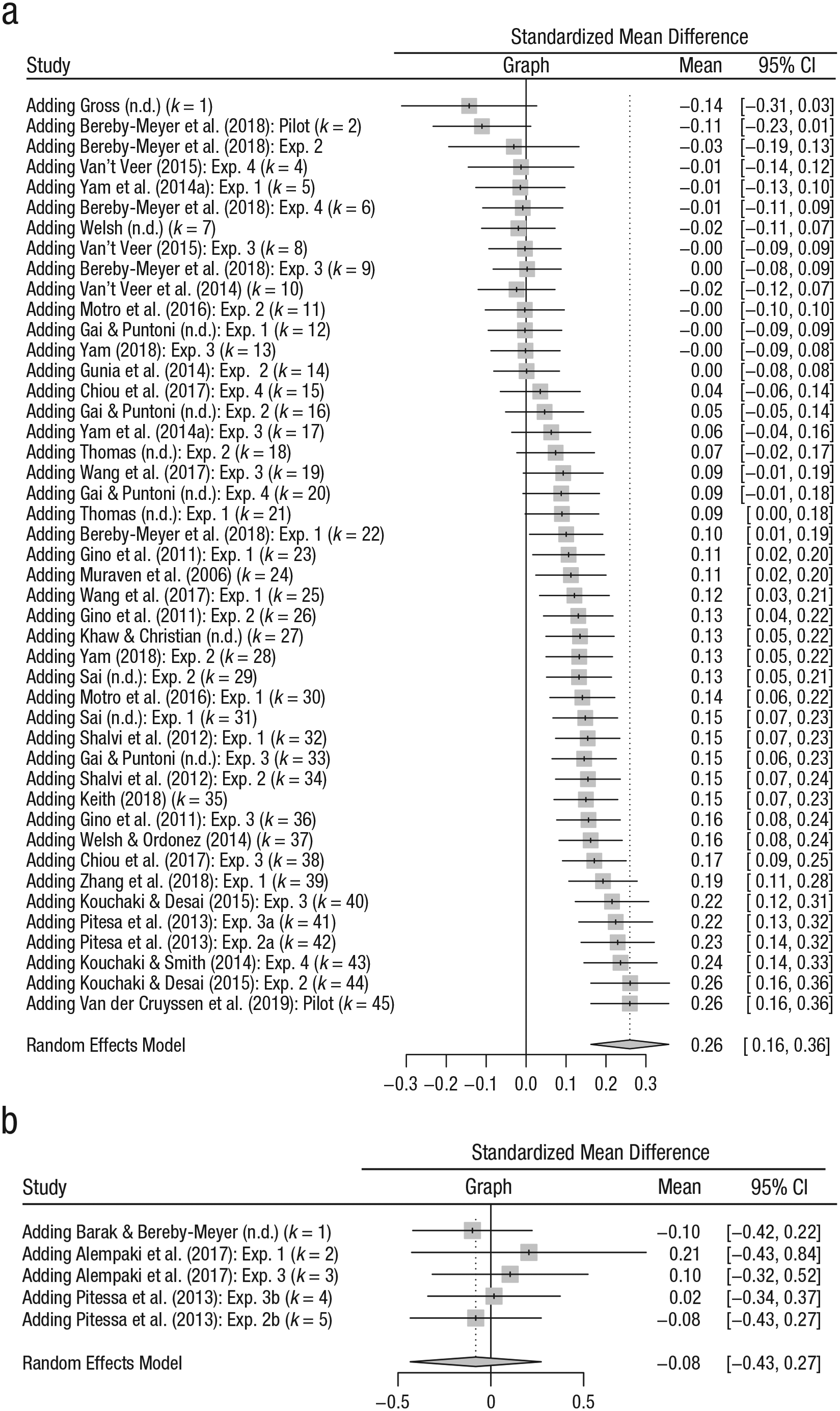

A Fisher’s exact test comparing the distribution of significant and nonsignificant results across published and unpublished studies reveals that significant findings are unevenly distributed between the two (OR = 5.30, p = .016): The odds that a significant study is published are 5.3 times the odds that a nonsignificant study is published. This pattern may stem from publication bias. We also conducted two separate cumulative meta-analyses, one for studies using an abstract victim and one for studies using a concrete victim, adding studies in order of decreasing precision. For studies using an abstract victim, the initial estimated effect (Hedges’s g = −0.14) differs substantially from the overall estimated effect (Hedges’s g = 0.26). Although the most precise study points toward intuitive honesty, adding smaller studies steadily moves the overall effect toward intuitive dishonesty (see Fig. 7a). For studies using a concrete victim, both the initial estimate (Hedges’s g = −0.10) and the overall estimate (Hedges’s g = 0.08) suggest a null effect (Fig. 7b). Taken together, the pattern for studies with an abstract victim suggests a small-study effect (i.e., smaller studies sometimes show different, often larger, treatment effects than larger ones), one potential cause of which is publication bias. For studies with concrete victims, there is little indication of small-study effects.
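The publication test can be sketched as Fisher's exact test on a 2 × 2 table of publication status by significance. The cell counts behind OR = 5.30 are not given in this section, so the example uses a toy table, and the function name is hypothetical. The sketch reports the sample odds ratio ad/bc; some software (e.g., R's `fisher.test`) reports a conditional maximum-likelihood estimate instead, so the two can differ.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact test for the 2x2 table [[a, b], [c, d]].

    Rows: published / unpublished; columns: significant / nonsignificant.
    The p value sums the probabilities of all tables with the same margins
    that are no more likely than the observed table.
    """
    row1, col1, n = a + b, a + c, a + b + c + d
    denom = comb(n, col1)

    def prob(x):
        # Hypergeometric probability that the top-left cell equals x
        return comb(row1, x) * comb(n - row1, col1 - x) / denom

    p_obs = prob(a)
    lo, hi = max(0, col1 - (n - row1)), min(row1, col1)
    # Small relative tolerance so ties are not lost to floating-point error
    p = sum(prob(x) for x in range(lo, hi + 1) if prob(x) <= p_obs * (1 + 1e-7))
    sample_or = (a * d) / (b * c) if b * c else float("inf")
    return sample_or, min(1.0, p)


# Toy table (hypothetical counts, not the study data)
odds_ratio, p = fisher_exact_two_sided(3, 1, 1, 3)
print(odds_ratio, round(p, 4))  # 9.0 0.4857
```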

Fig. 7. Cumulative forest plot for the second meta-analysis using a random-effects model, for studies using abstract victims (a) and studies using concrete victims (b). In the graph column, the vertical line inside the gray box represents the point estimate, the size of the gray box represents the study’s weight in the meta-analysis, and the horizontal lines represent the 95% confidence interval. The dotted vertical line represents the overall estimated effect. The diamond at the bottom represents the overall effect and its 95% confidence interval.
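A cumulative meta-analysis ordered by precision can be sketched by sorting studies from most to least precise and re-pooling after each addition; a steady drift of the pooled estimate as imprecise studies enter is the small-study signature described above. This minimal sketch swaps in the DerSimonian-Laird estimator of τ², which may differ from the estimator used in the reported analyses; the function names and data are hypothetical.

```python
def dl_pool(yi, vi):
    """DerSimonian-Laird random-effects estimate for one set of studies."""
    k = len(yi)
    w = [1.0 / v for v in vi]
    sw = sum(w)
    if k > 1:
        fixed = sum(wt * y for wt, y in zip(w, yi)) / sw
        q = sum(wt * (y - fixed) ** 2 for wt, y in zip(w, yi))  # Cochran's Q
        c = sw - sum(wt ** 2 for wt in w) / sw
        tau2 = max(0.0, (q - (k - 1)) / c)
    else:
        tau2 = 0.0  # a single study carries no information about tau^2
    w_re = [1.0 / (v + tau2) for v in vi]
    return sum(wt * y for wt, y in zip(w_re, yi)) / sum(w_re)


def cumulative_by_precision(yi, vi):
    """Re-pool after adding studies one at a time, smallest variance first."""
    order = sorted(range(len(yi)), key=lambda i: vi[i])
    estimates = []
    for step in range(1, len(order) + 1):
        included = order[:step]
        estimates.append(dl_pool([yi[i] for i in included], [vi[i] for i in included]))
    return estimates


# Hypothetical studies (toy values, not the study data)
yi = [0.60, -0.10, 0.30, 0.45, 0.05]
vi = [0.12, 0.005, 0.05, 0.08, 0.02]
for step, g in enumerate(cumulative_by_precision(yi, vi), start=1):
    print(f"after {step} studies: g = {g:.3f}")
```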

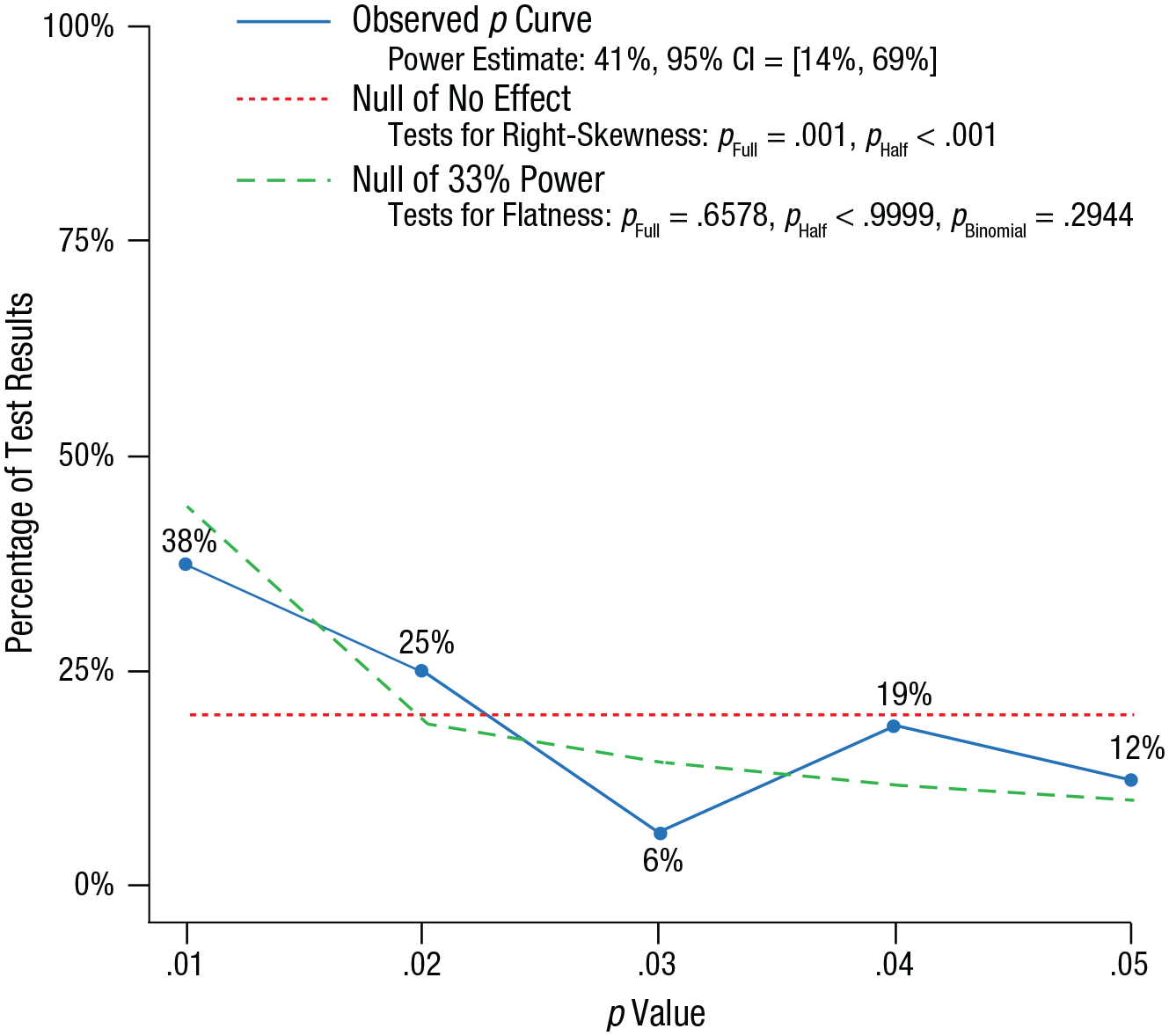

To assess the evidential value of the effect sizes in the sample, we again conducted a p-curve analysis by entering all 50 Z scores. Both the full curve (Z = −3.08, p = .001) and the half curve (Z = −4.00, p < .0001) are significant, which suggests that the obtained effects have evidential value (see Fig. 8). As before, we did not conduct a p-curve analysis of the test statistics reported in the original manuscripts because only 14% of the studies qualified. Taken together, the facts that most of the effects included in the meta-analysis (86.1%) are based on our own calculations from the original data and that the p curve is significantly right-skewed suggest that the findings have evidential value.

Fig. 8. Observed p curve for all studies included in the second meta-analysis. The observed p curve includes 16 statistically significant (p < .05) results, of which 10 are p < .025. Thirty-four additional results were entered but excluded from the p curve because they were p > .05. The blue line shows the observed p curve, the dashed red line shows the uniform distribution of p values expected under no effect, and the green line shows the right-skewed distribution expected under 33% power.
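The full- and half-curve tests follow the Stouffer approach used for p-curve's right-skew tests: each significant two-sided p value is rescaled to a pp value (p/.05 for the full curve; p/.025 for the half curve, keeping only p < .025), converted to a Z score, and combined; a clearly negative combined Z indicates right skew. This is a minimal sketch under stated assumptions: two-sided p values are derived from the entered Z scores, every significant result is treated as pointing in the hypothesized direction, and the function name and Z scores are hypothetical. The published p-curve tool also runs further tests (e.g., the 33%-power test) that are not shown.

```python
import math
from statistics import NormalDist


def pcurve_stouffer(z_scores, cutoff=0.05):
    """Stouffer right-skew test on results with two-sided p < cutoff.

    cutoff=0.05 gives the full-curve test, cutoff=0.025 the half-curve test.
    Assumes all significant results are in the hypothesized direction.
    Returns (combined Z, number of results used).
    """
    nd = NormalDist()
    # Two-sided p value for each Z score; clamp so huge |Z| never yields p == 0
    pvals = [max(math.erfc(abs(z) / math.sqrt(2)), 1e-300) for z in z_scores]
    # Under no true effect, p / cutoff is uniform on (0, 1) among kept results
    zs = [nd.inv_cdf(p / cutoff) for p in pvals if p < cutoff]
    if not zs:
        return float("nan"), 0
    return sum(zs) / math.sqrt(len(zs)), len(zs)


# Hypothetical Z scores (not the study data); nonsignificant ones are ignored
z_scores = [2.1, 2.6, 3.3, 1.2, -0.4, 0.8, 2.9]
for label, cutoff in (("full", 0.05), ("half", 0.025)):
    z, k = pcurve_stouffer(z_scores, cutoff)
    print(f"{label} curve: Z = {z:.2f} from {k} results")
```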

Discussion

The results of the second meta-analysis corroborate those of the first: The analysis of 50 studies supports the moderating role of social harm. We found an intuitive-dishonesty effect when dishonesty harmed abstract others; compared with participants in a control condition, those who adopted an intuitive mind-set lied to a greater extent in these setups. People intuitively engage in more dishonesty when no concrete victim is harmed by it, yet no such effect emerges when a concrete victim suffers. That said, the result of the moderation analysis must be interpreted with caution because few studies used a concrete victim (see Kepes et al., 2012). We found a higher proportion of significant findings among published studies, which indicates that nonsignificant effects are less likely to be published. Cumulative meta-analyses reveal that small, imprecise studies shift the pooled estimate more strongly for studies with abstract victims than for those with concrete victims. However, the large proportion of unpublished studies in our sample reduces the risk that the file-drawer problem invalidates the findings. Finally, a p-curve analysis again indicates that the effect sizes in the sample have evidential value.

General Discussion

The current research set out to solve a puzzle: Are people intuitively honest or dishonest? We conducted two meta-analyses to gain a more definitive answer than a single experiment can provide (Lakens, Hilgard, & Staaks, 2016). Confirming previous theorizing (Bereby-Meyer & Shalvi, 2015; Verschuere & Shalvi, 2014), we found an intuitive-dishonesty effect in anonymous settings in which punishment is not a threat and dishonesty harms an abstract victim. In these settings, self-interest leads more people to lie (Meta-Analysis 1) and people to lie more (Meta-Analysis 2). In addition to self-interest, a second force influences whether people intuitively resist or give in to self-serving lies: the social heuristic to do no harm (Baron, 1996). In settings in which dishonesty harms concrete others, we did not observe an intuitive-dishonesty effect.

When facing ethical dilemmas between dishonestly maximizing self-interest and following normative rules of conduct, people often seek to maintain a positive (self- and public) image and restrain their self-interest to a level that allows them to both feel and appear to be honest (Abeler et al., in press). Adding to this literature, we present the first meta-analyses on the interplay of dual-process models and behavioral ethics. Our results suggest that “thinking fast” amplifies the force of self-interest leading to ethical rule violations, as long as those violations do not directly harm others.

As a first look at the contextual factors behind the intuitive-dishonesty effect, our moderation analyses offer suggestive evidence that the relationship between intuition and dishonesty is shaped by social harm. In accordance with previous theorizing, particularly the social heuristics hypothesis (Bear & Rand, 2016; Rand, 2016), our data are in line with the idea that salient consequences for others have a substantial impact on people’s intuitive decisions. In particular, prior work has shown that cooperating with others is an intuitive inclination in many social-dilemma-type situations (e.g., Halali, Bereby-Meyer, & Meiran, 2014; Rand, 2016). Our results contribute to this stream of literature, suggesting that the automatic tendency to cooperate might cancel out the selfish pull of dishonesty when people know that lying comes at a cost to a concrete other.

Because the meta-analyses draw on laboratory research, the question arises of what these results can tell us about intuitive dishonesty outside the lab. Recent empirical evidence underlines the external validity of lying in economic games as a proxy for real-life dishonesty: Lying in a controlled laboratory context correlates with a variety of ethical rule violations outside the lab, ranging from academic fraud (Cohn & Maréchal, 2017) and fare dodging (Dai, Galeotti, & Villeval, 2017) to deceptive market practices (Kröll & Rustagi, 2016). People frequently encounter such situations in daily life and often decide quickly without much thought. As in the experiments included in the current meta-analyses, these temptations mostly entail relatively small (financial) incentives. Although each individual act might seem mundane and merely harm vague entities such as “the bus company” when fare dodging or “society as a whole” when fudging a tax payment, the aggregate costs are immense (Gino, 2015). Our results provide the first aggregated evidence that deciding intuitively might lead to more self-serving dishonesty when those who suffer from it are vague and difficult to identify with.

Another line of work to which our results relate is the identified-victim effect (Jenni & Loewenstein, 1997; Kogut & Ritov, 2005), which suggests that people act more prosocially toward identified than toward unidentified others. In our meta-analyses, we found evidence that the type of victim, concrete or vague, moderates the effect of intuition on self-serving dishonesty. This finding opens up various avenues for future work. In many tasks included in the meta-analyses, lying that hurts a concrete victim is also a strategic choice, so social harm is confounded with strategic deception. The few studies in the meta-analyses that disentangled the social consequences from strategic deception indicate that social harm fully moderates the effect of intuition (Experiments 2 and 3 from Pitesa et al., 2013). To further test the moderating role of social harm in the link between intuition and dishonesty, we particularly encourage preregistered studies that experimentally manipulate concrete versus abstract victims. In this way, future research can help overcome the uneven distribution of studies in the current meta-analyses.

Moreover, social factors such as the relationship between the person benefiting and the person suffering from lying likely matter. Previous research has shown that people willingly lie to favor their own in-group (Shalvi & De Dreu, 2014). Does highlighting social-identity features of the liar and the victim lead people to engage in intuitive dishonesty when doing so harms an out-group member but not an in-group member? Conversely, might a concrete representation of the victim of seemingly “victimless crimes” such as corruption curb the intuitive tendency to break (ethical) rules (Köbis et al., 2016)?

Limitations worth noting are the low number of preregistered studies included in both meta-analyses, the unbalanced distribution of studies across the key moderator of social harm, and the large methodological heterogeneity in both intuition manipulations and dishonesty tasks. These limitations reduce the power of the moderation and publication-bias analyses. Whether any statistical method can reliably detect publication bias, and if so which one, remains contested (Carter et al., 2019). It is therefore unknown whether the strategic nonpublication of empirical results undermines the accuracy of the aggregated estimates. Although we found mixed evidence for small-study effects in the cumulative meta-analyses, the fact that large proportions of both meta-analyses draw on unpublished studies (> 40%) somewhat reduces the concern about publication bias. In addition, a large proportion of effect sizes stems from recalculations based on original data (> 65%), and the p curves of the effect sizes in both meta-analyses suggest evidential value.

Conclusion

Understanding whether honesty is intuitive requires a closer look at the cognitive, motivational, and situational factors that shape decisions. In the current age of distraction, people frequently decide without much thought (Williams, 2018). Not surprisingly, a large body of behavioral studies has used experimental manipulations to trigger an intuitive mind-set and then given people the chance to pursue their self-interest through dishonest means. Our two meta-analyses provide the first aggregated evidence from this literature and suggest that people’s intuitive response is to lie selfishly, but only when no concrete other is harmed.

Supplemental Material


Supplemental material, Kobis_Supplemental_Material for Intuitive Honesty Versus Dishonesty: Meta-Analytic Evidence by Nils C. Köbis, Bruno Verschuere, Yoella Bereby-Meyer, David Rand and Shaul Shalvi in Perspectives on Psychological Science

Footnotes

Acknowledgements

We thank Isabel van der Vegt and Raoul Grasman for their assistance with the statistical analysis.

Action Editor

Laura A. King served as action editor for this article.

Author Contributions

S. Shalvi, Y. Bereby-Meyer, B. Verschuere, and D. Rand developed the study concept. N. C. Köbis collected and analyzed the data and drafted the manuscript. All authors contributed to the study design, provided critical revisions, and approved the final version of the manuscript for submission.

Declaration of Conflicting Interests

The author(s) declared that there were no conflicts of interest with respect to the authorship or the publication of this article.

Funding

This research was funded by European Research Council Grant ERC-StG-637915 and Israel Science Foundation Grant 1813/16.

References
