Abstract

The development of competencies known as 21st-century skills is garnering increasing attention as a means of improving teacher instructional quality. However, a key challenge in bringing about desired improvements lies in the lack of context-specific understanding of teaching practices and meaningful ways of supporting teacher professional development. This paper focuses on the need to measure the social quality of teaching processes in a contextualized manner. We do so by highlighting the efforts made to develop and measure teacher practices and classroom processes using the Teacher Instructional Practices and Processes System© (TIPPS) in three different contexts: Uganda (secondary), India (primary), and Ghana (pre-school). By examining how such a tool can be used for teacher feedback, reflective practice, and continuous improvement, the hope is to pave the way toward enhanced 21st-century teacher skills and, in turn, 21st-century learners.

Keywords

Classroom instructional quality (and its relationship to learning outcomes) can serve as a critical lever for educational change. However, there is much still to be learned about what actually goes on inside classrooms, particularly in low- and middle-income countries (LMICs). Though an abundance of observational instruments now exist, most have not undergone rigorous methodological development, and even fewer have been used across different contexts, cultures, and interventions (Bruns, 2011; Crouch, 2008). Many of these observational instruments have taken the form of checklists or time on task measures, which have traditionally been more popular for their cost-effectiveness and ease of use for intervention studies. Nevertheless, a recent comparative study of observational instruments by Bruns et al. (2016) states that time on task measures are too coarse to be used for teacher feedback or performance evaluation. Furthermore, time on task measures are unable to distinguish key aspects of the 21st-century classroom environment such as student engagement, the effective use of instructional strategies, or the emotional factors that support child development (Seidman et al., 2018). Thus, it follows that there is a need to turn away from checklists and time on task measures.

Global interest in how teaching practices and classroom processes affect student learning outcomes and their psychosocial development is growing – and with good reason. Instructional quality has proven to be more strongly associated with child learning than structural aspects of schools in both Western (Pianta et al., 2009) and developing countries (Chavan and Yoshikawa, 2013; Patrinos et al., 2013; Yoshikawa and Kabay, 2015). However, the breadth of skills required for quality student learning, and concomitantly quality teaching, calls for essential competencies and skills beyond literacy and numeracy, otherwise known as 21st-century skills.

The 21st-century skillset is generally understood to encompass a range of competencies, including critical thinking, problem solving, creativity, meta-cognition, communication, digital and technological literacy, civic responsibility, and global awareness (for a review of frameworks, see Dede, 2010). Nowhere is the development of such competencies more important than in developing country contexts, where a substantial lack of improvement in learning outcomes suggests that the task of improving instructional quality is urgent. A challenge in bringing about the desired improvements lies in the lack of context-specific understanding of teaching practices as well as meaningful ways of supporting teachers in their professional development (Seidman et al., 2018; UNESCO, 2016; Wolf et al., 2018). In other words, how can we improve teachers’ 21st-century skills to help produce 21st-century learners?

Feedback of performance has been demonstrated to be a powerful tool in improving practice in a wide array of arenas from individual behavior to organizational performance (Butler and Winne, 1995). In recent years, there has been ample demonstration of the power of feedback in teaching and other human services (Allen et al., 2011; Becker et al., 2013; Cappella et al., 2012; Glisson et al., 2006; Smith and Akiva, 2008). This paper focuses on: (a) the need to measure the social quality of teaching processes in a contextualized manner; (b) the efforts that we have made to develop and measure, with reliability and concurrent validity, teacher practices and classroom processes in secondary, primary, and pre-school classrooms in Uganda, India, and Ghana, respectively, with the Teacher Instructional Practices and Processes System© (TIPPS; Seidman et al., 2013); (c) how these tools can be fed back to teachers and trainees to facilitate reflective practice and continuous improvement; and (d) how such professional supports can lead to enhanced 21st-century teacher skills and, in turn, 21st-century learners.

The social processes (or how) of quality teaching

Classroom observations are being increasingly used in LMICs to improve education quality through information about current teacher/classroom practices or measuring change in practices over time (UNESCO, 2016). Yet in order to fully understand how we can best help teachers, we need to take a step back and learn to regard teachers as learners and to ensure that the learning we want to see in our children is taking place with our teachers. Learning as an active process is rooted in the educational philosophy of social constructivism (Vygotsky, 1962), which established the belief that knowledge itself is situated within a social context; an individual’s ability to learn is regarded as a series of social processes that are inextricably shaped and influenced by his or her context. Though the perspective is rooted in and remains a predominantly Western ideology, it has taken hold in many countries around the world, and constructivist beliefs for education remain widely relevant for teachers across the globe (OECD, 2009). Nevertheless, constructivist perspectives should not be assumed to be ubiquitous in education. For example, in cultures where verbal exchange is not the primary means through which knowledge is conveyed (see Treviño, 2006), we must be mindful of how such cultural variation and nuances affect ways of learning. Differences in sociocultural practices could dictate how children (or in this case teachers) may better learn through practices such as observation, listening, or sharing responsibilities rather than verbalization or actions (Rogoff, 2003; Treviño, 2006).

Social constructivism puts greater emphasis on context and also highlights the important role of culture and how knowledge derived from social processes also exists within cultures (McMahon, 1997; Schunk, 2000). Culture becomes a great influence on not only what patterns of social processes can emerge within a context but also how they emerge (Rogoff, 2003). This perspective calls us to think more carefully, not only about social processes (e.g. classroom interactions) and the knowledge that is generated through those processes, but also about how highly dependent those processes are on the cultures and context in which they reside. For example, a study of a teacher in-service program in South Africa (Brodie et al., 2002) calls attention to how situational constraints, particularly in low-resource contexts, can heavily hinder the ways in which teachers can develop alternative practices that are more learner-centered; the authors draw a critical distinction between the “form” (i.e. techniques such as questioning or group work) versus the “substance” (i.e. content such as engaging with learners’ ideas and interests) of learner-centered teaching. Based upon this work and her own in Tanzania, Vavrus (2009) set forth the notion of a contingent constructivist pedagogy, which considers the pedagogical spectrum between formalism and constructivism and calls for the adaptation of pedagogy to the material conditions, local traditions, and the cultural politics of a context. Such considerations as the ones outlined here have large implications for how social processes could best be measured.

Additionally, the notion of teachers as learners calls us to define what it is we feel that teachers need to know. An increasingly globalized and complex world has propelled a movement toward a vast array of skills that fall under the label of “21st century.” Most frameworks focus on various types of higher-order skills such as complex thinking, communication, collaboration, and creativity (also known commonly as the 4Cs) (e.g. Dede, 2010; Saavedra and Opfer, 2012; Soulé and Warrick, 2015). These skills are increasingly being recognized as the gold standard for student abilities, as well as requirements to meet the demands for success in work and life (Binkley et al., 2012). Yet the practice of delivering knowledge to students via a transmission process (e.g. lecture, dictation) remains dominant in large portions of the world (OECD, 2009). Therefore, if what students are to learn needs to go beyond rote, then there needs to be a concomitant shift in teacher pedagogy to match. Twenty-first-century teachers need to know not only how to use a practice but also when to use a practice to accomplish their goals with students in varying contexts (Darling-Hammond, 2006). This requires teachers to have a deeper knowledge of how to address a diverse array of learners and more refined diagnostic abilities to inform their decisions (Darling-Hammond, 2006). The ability to communicate in such a complex environment requires constant information flow and adjustment (Levy and Murnane, 2004), and a skilled teacher should be adroit at regulating the flow of classroom discussion as it ebbs and flows (Dede, 2010).

Social settings frameworks have historically emphasized the importance of looking at teachers as facilitators of an individual’s learning experience (Cohen et al., 2003; Pianta and Hamre, 2009; Tseng and Seidman, 2007). Learning and development rest within the daily interactions and experiences that take place in the classroom (Seidman and Tseng, 2011; Tseng and Seidman, 2007; Wolf et al., 2018) in addition to being a product of the culture where the processes reside (Stigler et al., 2000). In any given classroom, the core processes and practices are working concurrently. However, from the perspective of classroom observations, there is a need to be able to make clear distinctions between concurrent behaviors because doing so will better enable us to discern how these behaviors relate to key dimensions of the classroom environment that support student learning. The focus is placed on processes and practices in the classroom, thereby shifting the singular focus from what is being taught to how something is being taught. This is no easy task, but this strategy is both conceptually and programmatically aligned to support rigorous evaluation.

How we can measure quality teaching processes

Quantitative approaches to assessing classrooms have the ability to be more systematic and can broadly be broken down into two major categories: checklists and rating/categorization scales. Checklists typically require the classroom observer to mark the presence or absence of the item in the classroom. They are very low inference methods and can catalogue a range of desired constructs from artifacts in the classroom to the practices of the teacher. Rating or categorization scales are often higher inference methods, capable of focusing on the quality of specific behaviors as well as on their frequency of occurrence in the classroom (Waxman et al., 2004). As assessments of quality, rating or categorization scales have much greater potential to be fed back to teachers to change their practices.

Instruments to assess teacher practices and classroom processes used in LMICs have mainly focused on measuring student use of their time in the classroom, primarily due to the framework put forth by the Global Campaign for Education (2002) that set the learner in the center of all education quality endeavors. In the past, however, instruments such as the Stallings (Stallings, 1978) were based on Carroll’s (1963) model of school-based learning that focused on the importance of a learner’s time engaged in learning as well as his or her learning rate. Though this modality could still provide a general picture of classroom alignment with policy and expectations, specific behaviors run the risk of being underreported when using a snapshot method unless intervals are quite frequent (UNESCO, 2016).

At one time, time on task was the prevailing method behind classroom observation, but more recent findings question this mode of measurement. Even as they support it, Benavot and Gad (2004: 293) note that, “researchers disagree over the magnitude of this [time engaged in learning and learning rate] relationship, the relative importance of various intervening factors, and the nature of the socio-economic contexts in which the relationship is more or less salient.” Therefore, though defined as systematic observation instruments, time and frequency measurement instruments, such as the Stallings Snapshot, are far too narrow in scope to meet the need of measuring the quality use of classroom time. Furthermore, time on task measures cannot capture teacher competencies around “soft skills” such as emotional support, nor can they differentiate levels (i.e. quality) of instruction (Bruns et al., 2016).

Additionally, many internationally used instruments are intervention-specific. The main drawback to tailoring measures to evaluate the specific facets of an intervention is that the measure becomes insensitive to experimental contrast, and use and comparison of data in other evaluations and contexts are not possible. Only a few instruments – primarily the various iterations of the Stallings Observation System (SOS) – have been used across different contexts, cultures, and interventions (Bruns, 2011; Crouch, 2008). The issue is further compounded by the fact that most instruments in use internationally do not provide a clear conceptual framework that serves as a foundation for instrument validity. The development of a high-quality instrument for LMICs would require further psychometric development. Furthermore, while professional development and pedagogy need to be based on research and existing best practices, it is also of the highest importance that the research that informs it continues to be localized, adapted, and refined to the day-to-day realities of the teacher’s context (Burns and Lawrie, 2015; Vavrus, 2002).

Behavioral observation

Generally speaking, there is relatively little knowledge from LMICs about what happens in classrooms. A review of classroom research in developing countries supported by the World Bank (Venäläinen, 2008) continues to echo the recommendations of Schaffer et al. (1994) and goes on to outline the need for improved instruments and methodologies to gauge classroom quality (e.g. student engagement, effective instructional strategies) and other elements of a classroom that cannot be gleaned from the purview of a “snapshot.” Similarly, the need for greater quality teacher professional development has elicited a call for “a focus on the how of teaching” (Burns and Lawrie, 2015: 43), including a focus on more structured, facilitated opportunities for teachers to learn, a better understanding of the contexts in which they work, and the use of improved data to determine what really works (Burns and Lawrie, 2015).

Westbrook et al. (2013) suggest that one way to improve the knowledge gap on classrooms is through systematic behavioral observations to record teaching practices. Evidence from Western countries suggests that teachers and school leaders trust classroom observations more than other value-added measures (Harris and Herrington, 2015). This is perhaps due to the fact that what they see in classrooms directly relates to what teachers do in practice and is a more concrete basis for information that teachers need to improve (Harris and Herrington, 2015).

A body of knowledge on effective pedagogical practices and classroom processes has begun to emerge (Seidman, 2012). An existing Western research base (e.g. Danielson, 2011; Kane and Staiger, 2012; Mashburn et al., 2014; Pianta, 2011) and emerging international research base (e.g. Araujo et al., 2016; Hu et al., 2016; Leyva et al., 2015) are beginning to rigorously assess the educational quality in the classroom. In some cases, classroom process quality has even been successfully linked to student learning outcomes (Allen et al., 2013; Leyva et al., 2015; Pianta et al., 2008a; Wolf et al., 2018).

Contextualization

As the value of understanding process quality in classrooms has become recognized more broadly, behavioral observation instruments are now being used more regularly to evaluate classrooms – particularly the Classroom Assessment Scoring System (CLASS; Pianta et al., 2008b). CLASS research now has a global base in both developed countries (Bell et al., 2012; Gettinger et al., 2011; Pianta and Hamre, 2010; Tayler et al., 2013) and increasingly in LMIC contexts (Araujo et al., 2016; Hu et al., 2016; Leyva et al., 2015). An empirical base for the CLASS across all these various contexts has established a three (domain) factor structure of Emotional Support, Classroom Organization, and Instructional Support (Hamre et al., 2013).

However, it is unclear whether or not the cross-country similarities are the result of the tool (Pastori and Pagani, 2017) and a de facto predefinition of quality that has been defined by the tool that is measuring it (Vandenbroeck and Peeters, 2014). Some critical questions have been raised about whether standards-based instruments can be applied out of the context from which they originated without serious consideration for their cultural consistency and ecological validity (Pastori and Pagani, 2017). Considerations need to be made for underlying cultural complexities and the fact that values may manifest or be implemented differently across locales, though some may be similar or common (Pastori and Pagani, 2017; Rogoff, 2003).

Some emerging empirical evidence now suggests some psychometric inconsistencies with the CLASS three-factor structure outside of the US (see Pakarinen et al., 2010 and von Suchodoletz et al., 2014). Furthermore, in low resource contexts such as sub-Saharan Africa (SSA), the CLASS tool appears never to have been used. Wolf et al. (2018) suggest that based on some piloting exercises of the CLASS for an Early Childhood Education study in Ghana, the tool was not feasible due to the cost implications and potential need for significant adaptations. Much like what was suggested by Pastori and Pagani (2017), measuring classroom quality in a context such as SSA may require a contextually developed and anchored tool (e.g. allowing for incorporation of culturally specific examples) that is designed with the intent of adaptability and ease of use.

Teacher Instructional Practices and Processes System (TIPPS)

In any given classroom, the core processes and practices are working concurrently. However, clear distinctions need to be made between concurrent behaviors – isolating specific practices and processes can better enable us to discern how particular behaviors in the classroom environment serve to support student learning. Looking at processes and practices in the classroom shifts the focus from exclusively what is being taught to how something is also being taught. In other words, both “form” and “substance” of teaching (Brodie et al., 2002) are being considered to determine quality of classroom processes. Assessment of classroom process quality also necessitates a standard, in the form of a specific, conceptually based lens with which observers can view the classroom. Through such a lens, observers can be trained to observe more objectively, spot biases, and minimize subjectivity through clearly defined dimensional guides for rating.

The TIPPS is a classroom observation instrument designed with such considerations in mind and specifically to assess process quality in LMIC contexts. Moreover, TIPPS was developed to look at teaching processes in a granular, nuanced, and culturally relevant manner so that information could be fed back to teachers to improve their performance as well as student academic and social-emotional outcomes. Classroom observers are trained to connect what they observe, using concrete behavioral evidence, to indicators under each dimension of the tool. Observers are guided through common observational biases (e.g. sympathy scoring or appearance bias) so that they can better recognize them should such tendencies arise.

The nature and format of the instrument are meant to ease the process and reduce the cost of administration. We strove to keep the dimensions aligned with general classroom characteristics (rather than subject-specific) as well as to be succinct, focusing on only several key domains of classroom practices and processes. The tool includes items around classroom management, personal learning support, and cognitive activation of students (Praetorius et al., 2014) as well as items that address the importance of a teacher’s efforts to stimulate student interests (Patall et al., 2010) and promote inclusion, for example, gender parity (Stromquist, 2007), in the classroom.

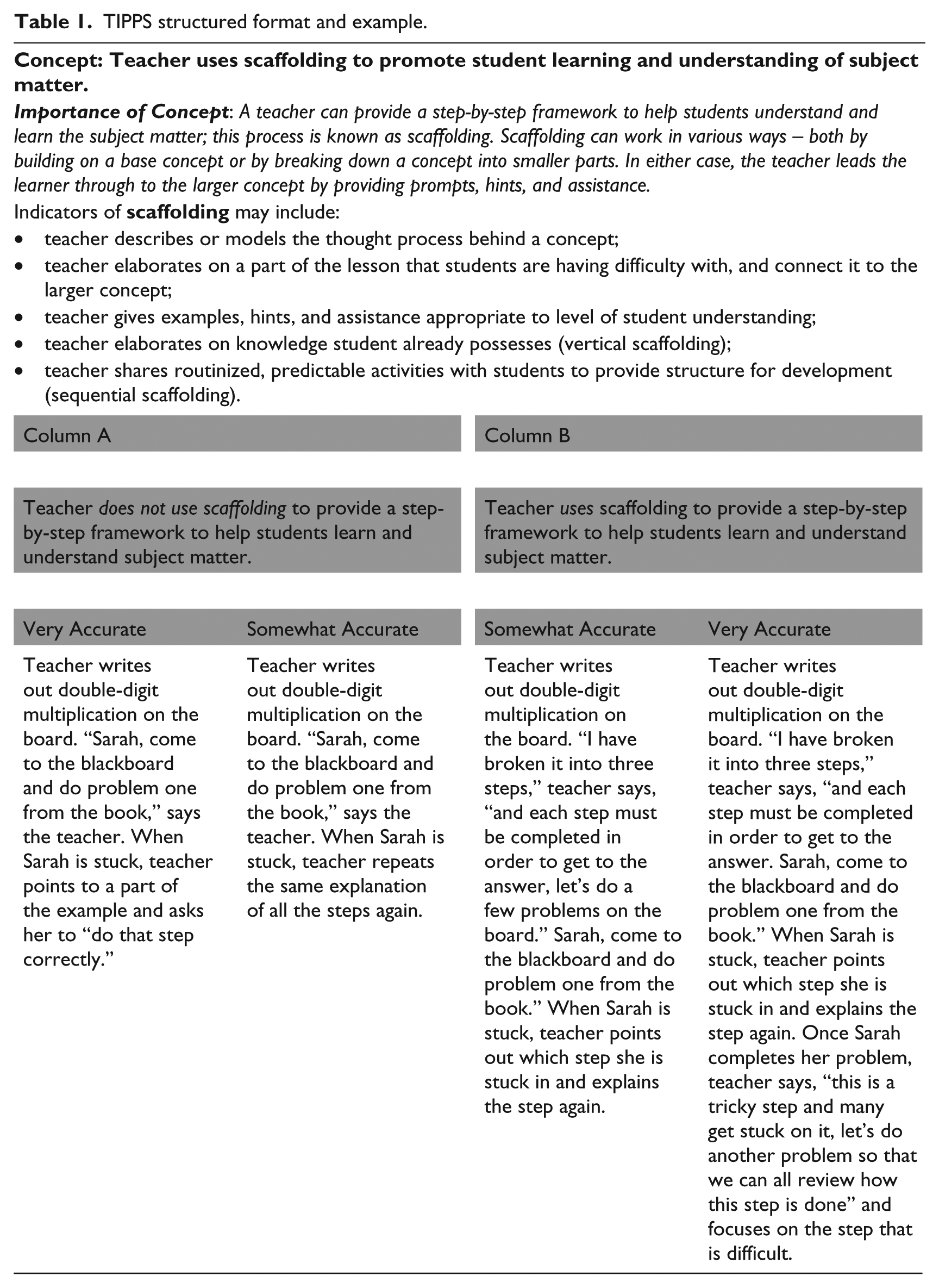

In Table 1, a sample item from the training manual – scaffolding – is presented. First the concept/dimension is defined and next indicators are noted. The instrument’s “structured alternative format” was adapted from Susan Harter’s Perceived Competence Scale for children (1982) with the goal of facilitating more objective and reliable ratings (Seidman et al., 2018). Conceptually and visually, an observer is presented with a dimension and is required to make a dichotomous decision of whether, based upon observed behaviors, that particular dimension is absent or present in the classroom (Column A or Column B). Next, the observer is asked to make a second dichotomous rating, determining if the first dichotomous rating is “somewhat accurate” or “very accurate.”

TIPPS structured format and example.
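The two-step decision described above can be sketched in code. The following is a minimal illustration only: the class and function names are hypothetical, and the 1 to 4 ordinal coding is an assumption made for the example rather than a scoring rule stated in the TIPPS manual. The only details taken from the text are that the first decision is absent (Column A) versus present (Column B) and that the second decision is “somewhat accurate” versus “very accurate.”

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StructuredRating:
    """One observer's rating of a single dimension (hypothetical container)."""
    present: bool        # first decision: Column B (present) vs Column A (absent)
    very_accurate: bool  # second decision: "very accurate" vs "somewhat accurate"


def to_ordinal_score(rating: StructuredRating) -> int:
    """Collapse the two dichotomous decisions into one ordinal score.

    ASSUMED coding, for illustration only:
      1 = absent, very accurate      2 = absent, somewhat accurate
      3 = present, somewhat accurate 4 = present, very accurate
    """
    if rating.present:
        return 4 if rating.very_accurate else 3
    return 1 if rating.very_accurate else 2


# Example: the observer judges the dimension to be present, but only
# "somewhat accurate" as a description of what was seen.
example = StructuredRating(present=True, very_accurate=False)
print(to_ordinal_score(example))
```

Under this assumed coding, two simple judgments yield a four-level scale without requiring the observer to pick a point on a continuum directly, which is the practical appeal of a structured alternative format.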

Finally, we felt an instrument that was meant to capture social processes needed to also allow for the integration of culturally unique processes (Hughes et al., 1993; Vavrus, 2009). The manifestations of “common” or genotypic processes would vary in each unique context (i.e. expressed phenotypically). Essentially, the way in which we structured the tool – particularly the concrete examples for each dimension – would allow for contextual-uniqueness or specificity.

Preliminary development of the instrument has taken place across multiple LMICs (Democratic Republic of the Congo, India, Tanzania, and Uganda) and at different developmental levels (primary, secondary, and early childhood) in a series of iterative endeavors. Dimensions could be added or deleted as appropriate to the context. In the following section, we present empirical data on the reliability and validity of the TIPPS in three different levels of schooling (secondary, primary, and early childhood) and countries (Uganda, India, and Ghana).

TIPPS secondary (Uganda)

Initial reliability and validity of the TIPPS instrument were established in Uganda (Seidman et al., 2018). In this first empirical study, data were collected from 197 secondary schools (i.e. 737 classrooms), across various regions of the country. Pairs of locally recruited observers were trained to observe live classrooms using the initial version of TIPPS and were asked to match their observations to a manual that outlines 18 behavioral indicators known as “TIPPS dimensions.” These dimensions were constructed to typify a core set of teacher practices and classroom processes, based upon a review of the most commonly used classroom observation instruments and pedagogical literature. The commonalities we found could be a result of educational reforms in many developing countries that have long been driven by notions of modernity (Inkeles, 1975). However, the overlap in core domains is more likely due to the similarity in the conceptual understanding of “teacher quality” (UNESCO, 2006), especially based on the type of professional development that teachers often receive, driven largely by Western constructivist beliefs for education across the globe (OECD, 2009).

In spite of the theoretical and conceptual relevance of the dimensions, initial on-the-ground training in Uganda on TIPPS was met with some difficulties. Local enumerators were finding some of the terminology unfamiliar and difficult to internalize. To remedy this issue, the tool required some revisions based on qualitative work. Unfamiliar concepts were discussed to gauge whether such concepts did in fact exist within the Ugandan context; if they did exist, the appropriate wording was decided upon as a group to find the most relevant language in Ugandan English. The aforementioned process was critical to the contextualization of the instrument, and this process has been repeated for all subsequent versions since.

Classroom-level TIPPS dimensions were to be matched with 8th grade biology, English, and mathematics achievement scores. The results were promising in terms of the instrument’s coherence, rater-reliability, and concurrent validity (Seidman et al., 2018). Thirteen dimensions revealed sufficient variance and good to high levels of rater-reliability (range, median). Factor analysis revealed a three-factor structure: “Instructional Strategies,” which includes student-centered learning such as the encouragement of student questions and ideas; “Sensitive & Connected Teaching,” which refers to a teacher’s ability to connect lessons to everyday life and their sensitivity in responding to students’ needs; and “Deeper Learning,” which characterizes a teacher’s ability to break down concepts to help facilitate student learning.

The factors revealed significant and intriguing subject-specific associations with biology, English, and mathematics scores, leading us to consider the possibility of a differential specificity hypothesis: student performance in an academic discipline could be differentially related to the successful enactment of particular practices within that discipline (Seidman et al., 2018). For example, a teacher’s ability to connect what students are learning to everyday life manifested a trend toward better performance in biology only. Additionally, individual items of the tool also had significant, meaningful associations to learning outcomes. For example, in English, a teacher’s use of specific feedback related directly to student performance in English.

TIPPS primary (India)

We developed and piloted a primary classroom version of the TIPPS as a precursor to developing a feedback loop for Kaivalya Education Foundation (KEF) to improve practice for teachers. All of the original dimensions from the TIPPS secondary version were mutually deemed relevant by New York University and KEF teams at the primary level. However, the concrete examples for each dimension were adapted to be more culturally and developmentally appropriate for primary classrooms. KEF’s design team was tasked with vetting the examples as well as translating the manual into Hindi. A full translation, back-translation protocol was undertaken, and in the end, a side-by-side English-Hindi manual was produced.

Data were collected from 256 classrooms throughout Rajasthan, India. Of these 256 classrooms, 148 were sampled to reflect variation by (a) region of the country, (b) rural/urban/tribal, (c) number of years of KEF programming received by school/principal, and (d) number of students in the classroom. Observers were locally recruited KEF staff (project managers from different KEF teams across India), who were trained to observe video-taped classrooms using the TIPPS. Low inter-rater reliability did not permit higher-order analyses. Nevertheless, the study did provide important learnings for the development and implementation of the tool (see Jayaram et al., in press).

TIPPS ECE (Ghana)

Unlike the primary version of TIPPS, adaptation for an early childhood version required more than translation and a few adjustments in examples. Instruction in ECE classrooms required different teacher competencies and instructional foci. For example, a key principle of learning in ECE that was absent for primary and secondary was play, which provides young children the opportunity to acquire physical, cognitive, and social skills (Britto and Limlingan, 2012). Thus, the development of the ECE version of the TIPPS focused on theoretical constructs and key quality indicators in the classroom that support early child development, including the use of structured free-play in the classroom, ECE-focused instructional practices, social-emotional support, classroom management and environment (Wolf et al., 2018).

Reasoning and problem solving are among the most commonly included elements for the otherwise variedly defined term “critical thinking” (Lai, 2011), and research on young children’s thinking shows that it is possible for early childhood educators to promote children’s critical thinking skills through their classroom practice (Whittaker, 2014). Nevertheless, the teaching practices toward critical thinking need to manifest in a developmentally appropriate manner in order to facilitate effective learning.

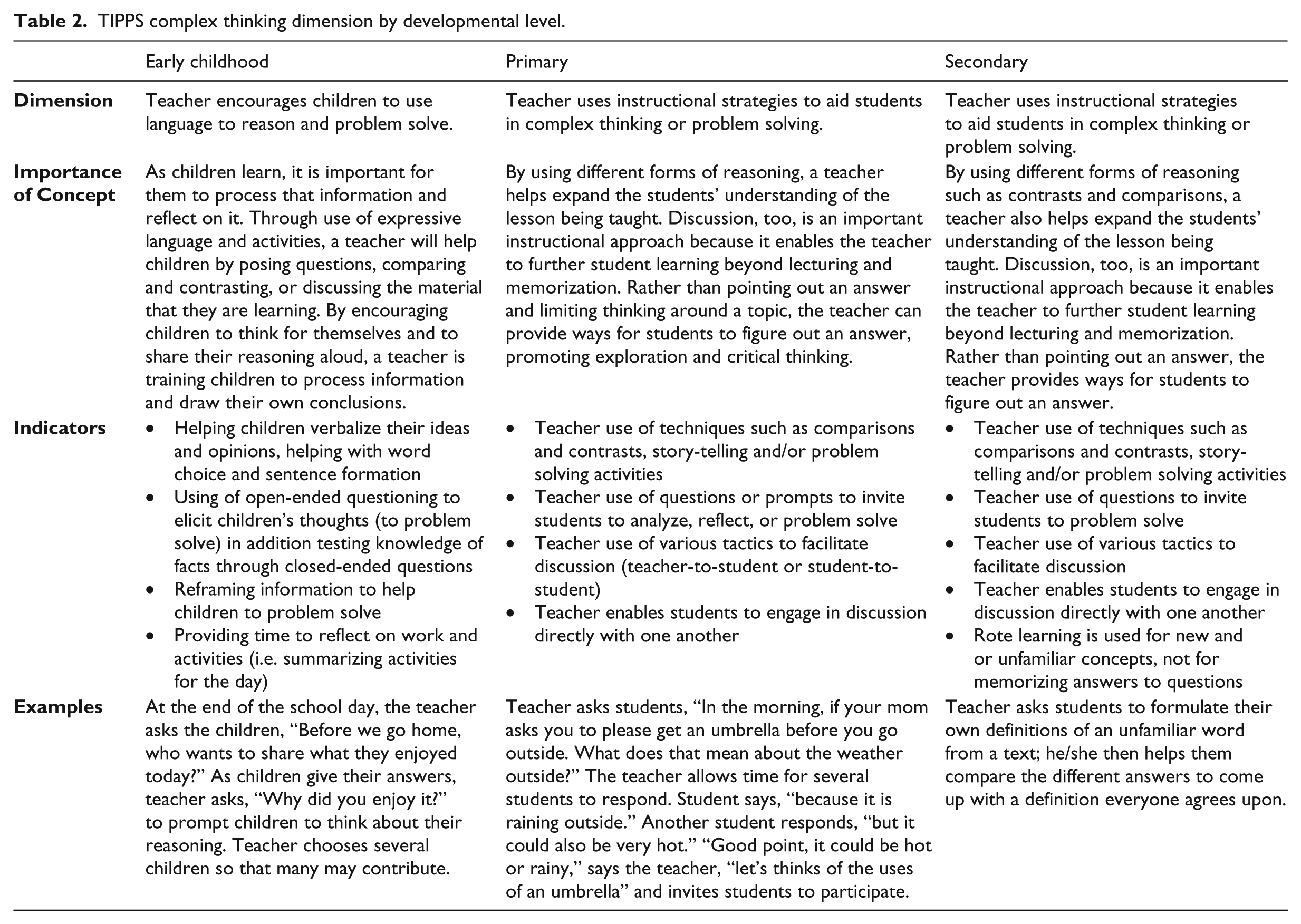

Table 2 provides a comparative example of how a dimension (critical thinking) was adapted and made developmentally appropriate for the ECE classroom. The dimension is largely similar for primary and secondary. However, the wording of the dimension, while still maintaining an emphasis on reasoning and problem solving, is slightly altered. This is because in the case of an early childhood classroom, a teacher may need to do more to scaffold and lead a child through their reflection and thinking processes than an older student who may be able to reflect and evaluate with less prompting and more independence. Therefore, the dimension intentionally specifies “language,” and the importance of concept emphasizes the practice of encouraging students to think aloud. Consequently, the indicators also focus far less on the concept of discussion, which is developmentally more appropriate for older children in primary and secondary, and the concrete examples serve to ground the theoretical list of processes surrounding the item. Again, the concepts of form (critical thinking) and substance (how the child is engaged in critical thinking) play critical roles in the construction of the TIPPS dimension.

TIPPS complex thinking dimension by developmental level.

In this empirical study, data were collected from 240 primary schools (i.e. 317 kindergarten classrooms) in Ghana, from six districts in the Greater Accra Region. A set of locally recruited observers were trained to observe classrooms using the TIPPS. Factor analysis revealed a distinct, but different three-factor structure: Facilitating Deeper Learning (FDL), which includes instructional support strategies used to encourage learning such as scaffolding and providing high-quality feedback; Supporting Student Expression (SSE), which includes considering student ideas and interests, as well as the development of higher-order thinking skills such as reasoning and problem solving, connecting concepts to students’ lives outside of the classroom, and language modeling; and Emotional Support and Behavior Management (ESBM), which includes concepts related to both student emotional support (e.g. sensitivity and responsiveness, tone of voice) and positive behavior management strategies (e.g. providing a consistent routine) (Wolf et al., 2018).

Two factors, Supporting Student Expression and Emotional Support and Behavior Management, predicted classroom end-of-school-year academic outcomes. One factor, Supporting Student Expression, also predicted classroom end-of-school-year social-emotional outcomes. The findings reveal that the TIPPS was successful in identifying some critical elements of process quality in Ghanaian pre-primary classrooms. Furthermore, a teacher’s ability to support student expression during instruction is significant for improved classroom outcomes (Wolf et al., 2018).

Summary

TIPPS observers have been reliably trained and calibrated, with a median AC1 statistic (Gwet, 2002) of .86 in Uganda and an intraclass correlation coefficient (ICC) of 71.1% (variance shared across raters) in Ghana. While Ghanaian observers were trained to use the Early Childhood version of TIPPS, Ugandan observers were trained to use the Secondary version of TIPPS, which overlaps with the primary version of TIPPS utilized in India. The training process itself was similar across all developmental levels and included concept familiarity, bias awareness, and practice to hone observers’ behavioral observation techniques. Rater reliability in multiple contexts is a testament to the fact that the TIPPS has been able to capture some common dimensions of classroom quality across contexts (as inherent to the context) or, at the very least, that the instrument has appropriately expressed dimensions of classroom quality in a culturally appropriate manner (as external but learned constructs to the context). Yet in the case of India, we see that content and linguistic adaptation of the tool was not enough, as described below.
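For readers less familiar with the agreement statistic reported for Uganda, the following is a minimal sketch of how Gwet's AC1 can be computed for a single item scored by two raters. It is an illustration only and not the analysis code used in the studies described here: the function name `gwet_ac1`, the four-point rating scale, and the ratings themselves are all hypothetical. The sketch uses the standard two-rater form, in which chance agreement is the sum of pi_k(1 - pi_k) over the categories divided by the number of categories minus one, where pi_k is the average proportion of ratings the two raters assign to category k.

```python
from collections import Counter


def gwet_ac1(ratings_a, ratings_b, categories):
    """Gwet's AC1 for two raters scoring the same set of observations.

    ratings_a, ratings_b: equal-length sequences of category labels.
    categories: all categories on the scale (at least two), including
    any that happen not to appear in the ratings.
    """
    if len(ratings_a) != len(ratings_b) or len(ratings_a) == 0:
        raise ValueError("Both raters must score the same non-empty set.")
    if len(categories) < 2:
        raise ValueError("AC1 needs at least two rating categories.")

    n = len(ratings_a)
    q = len(categories)

    # Observed agreement: share of observations with identical ratings.
    p_a = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n

    # Chance agreement, based on the average share of ratings per category.
    counts_a = Counter(ratings_a)
    counts_b = Counter(ratings_b)
    p_e = 0.0
    for category in categories:
        pi_k = (counts_a[category] + counts_b[category]) / (2 * n)
        p_e += pi_k * (1 - pi_k)
    p_e /= q - 1

    return (p_a - p_e) / (1 - p_e)


if __name__ == "__main__":
    # Hypothetical ratings of one item across eight classroom videos,
    # on an assumed four-point scale (not actual TIPPS data).
    scale = [1, 2, 3, 4]
    rater_1 = [1, 2, 2, 3, 4, 4, 3, 2]
    rater_2 = [1, 2, 3, 3, 4, 4, 3, 1]
    print(round(gwet_ac1(rater_1, rater_2, scale), 3))
```

A summary figure such as a median AC1 would then be obtained by computing the statistic item by item and taking the median across items.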

With the exception of three of the 19 concepts, the Indian observer cohort had low inter-rater reliability. We attribute the difference in rater reliability to the fact that the Indian cohort of observers had a great deal of expertise in a particular curriculum and pedagogy. An individual’s quality of thinking around a particular topic is inextricably linked to the context in which that thinking must be done (Bailin et al., 1999). That context includes not only background knowledge but also dispositions and situations (Bailin et al., 1999; Halpern, 2013; Han and Brown, 2013). Therefore, knowledge and experience in a given area of study or practice can be a significant determinant of the ability to think critically in that area (Bailin et al., 1999) and even a potential impediment to critical thinking (Nosich, 2012).

The pedagogical expertise of KEF staff often emerged as a barrier to enhancing objectivity in their classroom observations. Their adherence to “practices that work” as well as to “supporting teachers to do better” was counterproductive to our efforts to sharpen their observational lens and apply TIPPS as an effective heuristic for objective observation; they could not focus on “what was happening” and instead focused on trying to “fix what is happening” in the classroom. Knowledge of the curriculum being taught in the classroom also hindered observer objectivity, as some trainees pointed out that the teacher in the practice video was not teaching the curriculum as instructed. A final counterproductive factor was observers’ familiarity with the teachers being observed. During practice videos, many observers indicated that they were familiar with the teachers being observed and made assumptions about the classroom that were not supported by the classroom footage they were observing. While these well-intentioned critiques indicate the observers’ high level of commitment to KEF and to their profession, as well as their determination to make a difference, they did not make for objective observation.

These initial studies provide insights into the previously unknown mechanisms of interventions and everyday practice. However, the studies also underscore what still needs to be done to fully realize the goals of an observational tool to assess pedagogical practices and classroom processes in LMICs that (a) is easy to use; (b) has cross-cultural and developmental reach; and (c) has potential as a feedback tool to improve teacher and student performance. Having methods that have some equivalence across countries could dramatically reduce the costs of evaluation and be the catalyst for more regular and rigorous cross-national analyses. An instrument that allows for the integration of culturally unique processes is especially desirable, particularly for its crucial contributions to quality teacher feedback. Although how to achieve this has yet to be fully ascertained, it may require further ethnographic approaches and contingent constructivist pedagogy as described by Frances Vavrus (2009), not only for the form and substance of the tool itself but also, as we have learned through experience, for the training process. We will continue to gather more information on the cultural equivalence of behavioral observation methods and persist in refining the various versions of TIPPS based on the data we have thus far. With knowledge in hand from a tool that has been contextualized in several cultural milieus and developmental contexts, we now turn to how it can be used, via feedback and reflective practice, to improve 21st-century teaching skills.

Developing 21st-century teacher skills

The education delivery system has a substantial impact on the way in which 21st-century skills develop in learners. Pedagogy, curriculum, school rules and climate, assessments, and benchmarking skill acquisition are all key factors in the way 21st-century skills develop and are monitored. Nevertheless, the classroom is the primary environment where the aforementioned factors culminate to bring about knowledge acquisition and skills development. Furthermore, the classroom is the space where learners observe the modeling of these skills by their teachers and can practice the skills themselves. Therefore, it is equally important to prepare and train teachers in not only the acquisition of 21st-century skills but also the dissemination of these skills. Measuring the classroom processes and teacher practices that enable and support the development of 21st-century skills in the classroom can serve as an important first step.

The role of feedback and reflective practice

We posit feedback to the teacher on his/her own teaching performance as a key deliverable of observation instruments. Thus, our focus shifts from earlier efforts that utilized classroom observation as a means for understanding and highlighting facets of teaching quality to that of identifying key contributors to teacher feedback in a cycle of continuous professional development (Arbour et al., 2015; Yoshikawa et al., 2015). However, for teachers to improve their practices, the manner in which professional feedback occurs needs to change. Sustained, meaningful changes in classroom processes require feedback that is designed for the continuous improvement and ongoing support of teachers. There is promising evidence to endorse coaching and feedback interventions, with an emphasis on actual performance or practices, as effective means to alter setting-level regularities (Seidman, 2012). Moreover, the tools used to measure complex social settings such as the classroom continue to require further development and contextualization as well as validation. For classroom assessment in particular, an effective tool would need to be sufficiently granular and nuanced (Pianta, 2011).

Critically, classroom observations must not focus on what training the teacher may have received but rather on what the teacher does in class (Burns and Lawrie, 2015), and they must do so in a culturally appropriate, contextualized manner. Therefore, receiving low-stakes, high-support feedback, such as from observation, allows the teacher to reflect on his or her in-class performance and empowers him/her to make changes. In addition, an observation instrument must deliver on two core aspirations – clarity and granularity. The need is to identify both the strong and the weak elements of the classroom in a manner that is both comprehensible and actionable for the teacher. Generic feedback is unhelpful for improving the core practices of the teacher because it does not provide actionable information. Granular feedback allows the teacher to reflect on past performance and supports action for future change; this degree of specificity has proven to be the most valuable information to feed back to teachers (see Allen et al., 2011; Jones et al., 2013; Rivers et al., 2013).

Individualized support for the teacher is also necessary for a successful educational intervention or professional development system. An observational instrument can become a key component in that effort by tying the teacher’s own growth and improvement to student learning and development. Feeding back information on social regularities in a supportive and empowering context stimulates reflective practice and lasting and effective behavior change (Seidman, 2012). Long-term, in-school integration of classroom observations, rather than one-off or short-term use, is a key factor in an instrument’s utility for teachers. Teachers can chart their own progress, not through data to which they cannot relate but through tangible evidence derived from live or video classroom observation, coaching, and feedback on performance by an experienced mentor. Teachers are empowered to be active participants in their professional development as well as in the refinement of their pedagogy to best serve the students (e.g. 4Rs: Brown et al., 2010; Jones et al., 2011; My Teaching Partner: Allen et al., 2011; RULER: Rivers et al., 2013) – two aspects currently underserved in SSA’s teacher education and continuing professional development programs (Hardman et al., 2011).

Mentoring and reflective practice are not new concepts in LMICs, and reflective practice, in particular, emphasizes the ability to recognize and monitor one’s own thinking, understanding, and knowledge about teaching (Parsons and Stephenson, 2005). Here too, constructivist ideologies undergird and reinforce the importance of the dynamic, active process of learning for teachers. Throughout this process, it is fundamental that information gained from the acts of monitoring and recognition be mobilized for deep rather than rote thinking (Rodgers, 2002). Cursory practices, such as feedback from behavior checklists, should be eschewed in favor of reflection that involves critical thinking and the rigorous analysis of information. This level of depth is often not easily achieved through intervention, and reflection that is forced may not cultivate true reflective practice because such exercises can lack critical thinking and trigger social desirability bias (Hobbs, 2007; Orland-Barak, 2005).

As pointed out previously, true reflective practice can be a powerful medium to effect behavior change. But oftentimes, teachers need assistance, guidance, and/or support structures in order to improve their practice. This type of teacher support frequently comes in the form of teacher mentorship and coaching, which is generally understood to be intensive, ongoing, responsive, and collaborative job support (Ackland, 1991). Western education research suggests that teachers who are provided with specific feedback and opportunities to practice these changes in the classroom are able to increase the effectiveness of their teaching (Allen et al., 2011; Jones et al., 2013; Rivers et al., 2013). However, there are worthy examples from LMIC contexts as well.

The Kaivalya Education Foundation has pioneered education leadership and behavior change management in India that works to foster meaning, learning, joy, and pride in every stakeholder in the system by focusing on aspects of self-motivation and engagement. Their work with teachers over the last decade has revealed several critical insights – more specifically that teachers want to access tangible support to improve their practice, broaden their perspectives of education, and further develop their ability to learn new topics. Teachers have also expressed struggles with their limited capacity for self-reflective practice and an inability to advance themselves or support the development of others.

To support teachers, KEF deploys Gandhi Fellows, community-embedded education improvement workers, for one-on-one engagement in each school. The Fellows’ training has included implementation and use of the TIPPS, generating tangible metrics and insights about teachers’ instructional practices and classroom processes (Jarayam et al., in press). A Fellow uses these data to provide teacher feedback, focusing on areas of strength and areas for improvement. The combination of a Fellow’s support and TIPPS feedback has been used to empower teachers to self-assess their abilities in a low-stakes environment. Simultaneously, through KEF’s Principal Leadership Development Program (PLDP), a Fellow works with the principal of the school to envision how the TIPPS can be used to devise an improvement plan for the school. The principal’s coaching and mentoring support becomes an integral, structural part of the school organization.

Conclusion

As competencies such as self-awareness, collaboration, and critical thinking continue to be emphasized as key competencies for sustainable development (see Rieckmann, 2017), it is likely that teacher training interventions based on Western constructivist beliefs will continue to pervade the education development landscape in LMICs. Nevertheless, as we have contended here, there is still a great need to proceed with greater consideration for the respective contexts in which teachers are evaluated and receive their professional development. In order to create 21st-century learners, we must focus on teachers’ 21st-century skills and re-conceptualize how we can evaluate and train teachers. To achieve this, we have invoked constructivist understandings of what goes on in classrooms and, in particular, teachers’ practices. Beyond common dimensions of practices, we sought to discover and construct dimensions that were expressed in contextually and culturally meaningful ways. In this vein, we were able to identify reliable dimensions with concurrent validity that are capable of being fed back to teachers.

Even so, the process of developing TIPPS was not without its challenges as we navigated various developmental and cultural contexts. Some issues, such as translation and language adjustment for content validity, are perhaps simpler and more easily solved. Yet translating the dimensions of the tool for observers and practitioners is a bare minimum first step. Many of the greater challenges have been in getting observers and practitioners to understand how what they assert they understand on paper actually manifests as actions in the classroom. For example, what does it look like for a teacher to incorporate student interests into a lesson? Is it simply allowing a student to answer questions or give examples? Or is it something deeper than that? An observer, practitioner, or even a teacher who has never experienced this in a classroom themselves will likely have a very difficult time identifying the behavioral manifestations of such a dimension, let alone employing the practice.

As was mentioned earlier, the classroom is the space where learners observe the modeling of skills by their teachers. If teachers do not know how to identify teaching practices, they certainly will not know how to model them. This is a critical issue not only for observational training but also for feedback and professional development of teachers and a fundamental reason why identifying culturally relevant manifestations of teaching practices is necessary. Having observers, practitioners, and/or teachers study classrooms from their own cultures (whether through live observation or videos) has been supremely important for successful training, rater reliability, and overall relevance of the tool.

In addition, we are still attempting to determine whether certain dimensions of the tool are relevant in various contexts at all. For example, observing inequitable treatment of students (or favoritism) in the classroom has been generally difficult (i.e. invariant) in a few contexts thus far. We have yet to determine whether this is due to the particular contexts or because such dynamics are much more nuanced than can be discerned by an independent third party. A dimension such as cooperative learning has also yet to be observed with much frequency, yet its importance to the learning process (as emphasized by many interventions) seems to merit its continued presence in the tool for now.

In spite of the challenges we have outlined, we feel that, with the right tool in hand to support the process, the key to improving teacher practices lies in granular and clear feedback. This feedback needs to be provided on a frequent and regular basis to foster self-reflection and continuous improvement. Teaching skills such as critical thinking requires that teachers be educated in a manner that is reflective of that process – through professional development that engages ongoing reflection and continuous learning (Han and Brown, 2013). Only with successful accomplishment of such 21st-century teaching skills will we be able to enhance the 21st-century learning of students in LMICs.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The projects described in this manuscript were supported in part by grants from the Economic and Social Research Council (ESRC) [grant number ES/M004740/1]; the UBS Optimus Foundation, World Bank Strategic Impact Evaluation Fund (SIEF), and NYU Abu Dhabi Research Institute; and a contract from the Kaivalya Education Foundation.