Abstract

This article aims to provide a practical overview of the various methods of covariate adjustment in randomized clinical trials, leading to recommendations for future practice. Topics covered are baseline adjustment for a quantitative outcome (analysis of covariance), consequences of covariate adjustment for different types of outcome (quantitative, binary, time-to-event), surveys of covariate adjustment as done in published trials, regulatory guidance on covariate adjustment, how big the gain in statistical power is (a simulation study), some pertinent examples in cardiovascular trials, center-adjusted analyses (are they worthwhile?), a brief mention of some alternative approaches to covariate adjustment, and other uses of covariates (e.g. risk models). We conclude that modest gains in statistical power are achieved by adjustment for covariates that influence prognosis.

Keywords

Introduction

It is well known that the primary endpoint in a randomized trial, whether a quantitative, binary, time-to-event, or other outcome, will usually be influenced by a patient’s characteristics recorded at baseline. For instance, the chance of an event occurring in an individual patient will depend on their risk profile. Hence, when estimating the effect of randomized treatments on the primary endpoint, it is often thought appropriate to undertake covariate-adjusted analyses, the objectives being a potential increase in the strength of evidence for a treatment difference (i.e. a gain in statistical power) and a more valid estimate of the treatment effect and its uncertainty.

The main aim of this article is to provide a practical overview for applied statisticians and clinicians of the various methods of covariate adjustment, paying attention to (1) the different types of outcome data, (2) the appropriate choice of covariates, (3) guidance from regulators, (4) surveys of current practice in published trials, (5) simulation studies into the gains in statistical power, (6) topical examples in cardiovascular trials, and (7) some alternative techniques.

In addition, brief mention is made of other uses of covariate data, especially models for assessing how treatment effect can depend on patient risk.

Baseline adjustment for a quantitative outcome: analysis of covariance

For a quantitative outcome recorded both at baseline and at a fixed time post-randomization, it has long been recognized that analysis of covariance provides the most efficient estimate of the treatment effect.1,2 Yet some trials still fail to use it in their pre-defined primary analysis.

For instance, the SYMPLICITY HTN-3 trial of renal denervation versus sham control in resistant hypertension randomly assigned 535 patients in a 2:1 ratio. For the primary endpoint, change in office systolic blood pressure (SBP) at 6 months, the mean treatment difference was −4.07 mm Hg (95% confidence interval (CI) = −8.62 to +0.48 mm Hg; P = 0.08). The conclusion that “this blinded trial did not show a significant reduction …” came as a great surprise given the very positive findings of previous non-blinded trials. 3

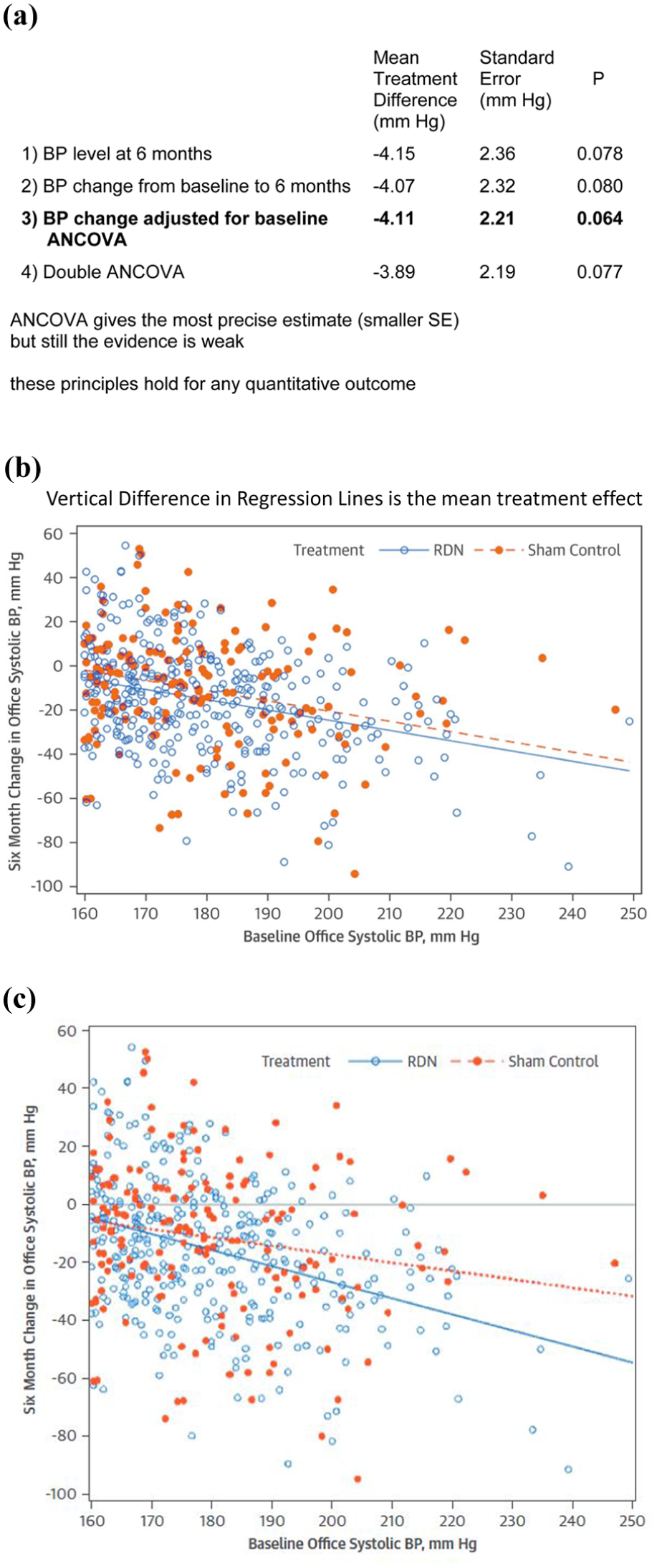

Figure 1(a) shows a revealing set of subsequent analyses. 4 Worst of all is (1) an analysis of SBP level at 6 months (i.e. ignoring the baseline value). Next comes (2), the published analysis of change at 6 months. Better than (2) is (3), the SBP change adjusted for baseline using analysis of covariance (ANCOVA). Better still is (4), a double ANCOVA that adjusts for two baseline values: one at randomization and the other at initial screening a few days earlier. The increasing efficiency of analysis going from (1) to (4) is reflected in the progressively smaller standard error of the estimated mean treatment difference.

Figure 1. Results from the SYMPLICITY HTN-3 trial of renal denervation versus sham control. (a) Alternative analyses for a quantitative outcome. 4 (b) Scatter plot of 6-month change by baseline value. (c) Potential interactions are a challenge: here interaction P = 0.053.
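The efficiency ordering of analyses (1) to (3) in Figure 1(a) follows from a standard large-sample approximation. Assuming, as a simplification not stated in the source, that baseline and 6-month SBP share a common variance σ² with correlation ρ, and ignoring the small contribution of chance baseline imbalance, the variance of the estimated treatment difference with n₁ and n₀ patients per group is approximately:

$$
\begin{aligned}
\operatorname{Var}(\hat{\Delta}_{\text{final}}) &\approx \sigma^2\left(\frac{1}{n_1}+\frac{1}{n_0}\right),\\
\operatorname{Var}(\hat{\Delta}_{\text{change}}) &\approx 2(1-\rho)\,\sigma^2\left(\frac{1}{n_1}+\frac{1}{n_0}\right),\\
\operatorname{Var}(\hat{\Delta}_{\text{ANCOVA}}) &\approx (1-\rho^2)\,\sigma^2\left(\frac{1}{n_1}+\frac{1}{n_0}\right).
\end{aligned}
$$

Since 1 − ρ² ≤ 2(1 − ρ) reduces to 1 + ρ ≤ 2, which always holds, ANCOVA is never less efficient than either alternative in large samples, whereas the change score beats the final value only when ρ > 0.5.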

Note that analyses (1) and (2) are two-sample t-tests, whereas (3) and (4) are linear regression models: both contain randomized treatment (binary) and baseline SBP (continuous) as covariates, and (4) adds an extra covariate for SBP at screening. Also note how the estimated mean treatment difference varies. Analysis (1) had the largest estimate, artificially enhanced because, by chance, mean baseline SBP was slightly lower in the renal denervation group. Analysis (2) over-corrects for this baseline imbalance, whereas analysis (3), ANCOVA, gives the most valid estimate.
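The three single-baseline analyses can be sketched in plain Python. This is a minimal illustration, not the trial's analysis code: the simulated data, the helper names (`t_test_diff`, `ancova_diff`, `simulate`), the SD of 15 mm Hg, the correlation of 0.7 and the true effect of −4 mm Hg are all hypothetical assumptions, and the 357:178 split is simply an approximately 2:1 allocation of 535 patients. ANCOVA fits a common baseline slope, and its estimate is the raw difference corrected by slope × baseline imbalance, which is why chance imbalance pushes analyses (1) and (2) in opposite directions.

```python
import math
import random
import statistics


def t_test_diff(y1, y0):
    """Mean difference (group 1 minus group 0) with pooled-variance SE."""
    n1, n0 = len(y1), len(y0)
    pooled = ((n1 - 1) * statistics.variance(y1)
              + (n0 - 1) * statistics.variance(y0)) / (n1 + n0 - 2)
    diff = statistics.fmean(y1) - statistics.fmean(y0)
    return diff, math.sqrt(pooled * (1 / n1 + 1 / n0))


def ancova_diff(x1, y1, x0, y0):
    """OLS of y on treatment and baseline x with a common slope (ANCOVA)."""
    n1, n0 = len(y1), len(y0)
    mx1, mx0 = statistics.fmean(x1), statistics.fmean(x0)
    my1, my0 = statistics.fmean(y1), statistics.fmean(y0)
    # Pooled within-group sums of squares and cross-products
    sxx = sum((x - mx1) ** 2 for x in x1) + sum((x - mx0) ** 2 for x in x0)
    syy = sum((y - my1) ** 2 for y in y1) + sum((y - my0) ** 2 for y in y0)
    sxy = (sum((x - mx1) * (y - my1) for x, y in zip(x1, y1))
           + sum((x - mx0) * (y - my0) for x, y in zip(x0, y0)))
    slope = sxy / sxx
    # Raw difference corrected for the chance baseline imbalance
    diff = (my1 - my0) - slope * (mx1 - mx0)
    resid_var = (syy - slope * sxy) / (n1 + n0 - 3)
    se = math.sqrt(resid_var * (1 / n1 + 1 / n0 + (mx1 - mx0) ** 2 / sxx))
    return diff, se


def simulate(n1=357, n0=178, effect=-4.0, mean=180.0, sd=15.0, rho=0.7, seed=1):
    """Hypothetical bivariate-normal baseline (x) and 6-month (y) SBP."""
    rng = random.Random(seed)

    def arm(n, shift):
        xs, ys = [], []
        for _ in range(n):
            x = rng.gauss(mean, sd)
            e = rng.gauss(0.0, sd * math.sqrt(1 - rho ** 2))
            xs.append(x)
            ys.append(mean + rho * (x - mean) + e + shift)
        return xs, ys

    return arm(n1, effect), arm(n0, 0.0)


(x1, y1), (x0, y0) = simulate()
final = t_test_diff(y1, y0)                                   # analysis (1)
change = t_test_diff([b - a for a, b in zip(x1, y1)],
                     [b - a for a, b in zip(x0, y0)])         # analysis (2)
adjusted = ancova_diff(x1, y1, x0, y0)                        # analysis (3)
for name, (d, se) in [("final", final), ("change", change), ("ANCOVA", adjusted)]:
    print(f"{name:>7}: difference {d:6.2f} mm Hg, SE {se:.2f}")
```

Because ANCOVA chooses the baseline slope by least squares, its residual sum of squares can never exceed that of the change score (slope fixed at 1) or the final value (slope fixed at 0).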

Figure 1(b) shows each patient’s 6-month change in SBP by baseline value, together with the two regression lines by treatment group; the vertical distance between them is the estimated treatment effect, −4.11 mm Hg. It is striking how small this is compared with the great between-patient variability in blood pressure change. Such displays of individual patient data are to be encouraged.

Figure 1(c) shows what happens when a potential interaction is explored by fitting a separate slope for each treatment group. Here, interaction P = 0.053 suggests that the treatment effect may be greater for patients with a higher baseline SBP. In general, covariate adjustment assumes no true interaction between treatment and covariates. Issues of effect modifiers and subgroup analysis lie beyond the scope of this series of articles.

Covariate adjustment for different types of outcome

The properties of covariate adjustment are most readily understood for a quantitative outcome. The most commonly accepted approach is a multivariable linear regression model, the simplest case being ANCOVA as in Figure 1. If the covariates are associated with the outcome, then the treatment effect estimate will be more precise, that is, it will have a smaller standard error. If the covariates are also balanced between treatment groups, the estimate itself changes little, but the P-value for the treatment effect should become smaller. If a key covariate has a chance imbalance between treatments, this will affect both the magnitude of the treatment effect and its P-value, the consequence depending on the direction of that imbalance. Given that one does not know in advance what these chance imbalances will turn out to be, such covariate adjustment will, on average, result in greater strength of evidence (a smaller P-value) for the treatment effect. That is, in the presence of one or more covariates that are strongly associated with the outcome, covariate adjustment will enhance statistical power.

For a binary or time-to-event outcome, one usually fits a logistic model or a proportional hazards model to evaluate simultaneously the impact of treatment and covariates. The same principles hold true on average for achieving a smaller P-value and enhanced statistical power, but the specific mechanism is different.5,6 If the fitted covariates are associated with the outcome, the effect estimate (e.g. odds ratio, hazard ratio) is expected to move further away from the null. However, there is no gain in precision: the 95% CI will tend to widen slightly. We will illustrate these points later with four examples.
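For a binary outcome this mechanism is the non-collapsibility of the odds ratio. A minimal sketch, assuming a hypothetical logistic model with a single binary prognostic covariate that is independent of treatment (as randomization ensures in expectation): even with perfect balance, the marginal (unadjusted) odds ratio implied by the model lies closer to 1 than the conditional (adjusted) one. The function name and all parameter values are illustrative.

```python
import math


def expit(v):
    return 1.0 / (1.0 + math.exp(-v))


def marginal_odds_ratio(intercept, log_or_treat, log_or_cov, prev_cov):
    """Unadjusted OR implied by logit P(Y=1) = a + b*T + c*Z, with
    Z ~ Bernoulli(prev_cov) independent of treatment T."""
    def risk(t):
        base = intercept + log_or_treat * t
        # Average the conditional risks over the covariate distribution
        return (1 - prev_cov) * expit(base) + prev_cov * expit(base + log_or_cov)

    p1, p0 = risk(1), risk(0)
    return (p1 / (1 - p1)) / (p0 / (1 - p0))


conditional = 0.70
for cov_or in (1.0, 2.0, 7.4):
    m = marginal_odds_ratio(-2.0, math.log(conditional), math.log(cov_or), 0.5)
    print(f"covariate OR {cov_or}: conditional {conditional}, marginal {m:.3f}")
```

When the covariate has no prognostic effect (OR 1.0) the two coincide; the stronger the covariate, the further the unadjusted odds ratio is pulled toward 1, which is why adjustment tends to move the estimate away from the null.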

Surveys of covariate adjustment in published trials

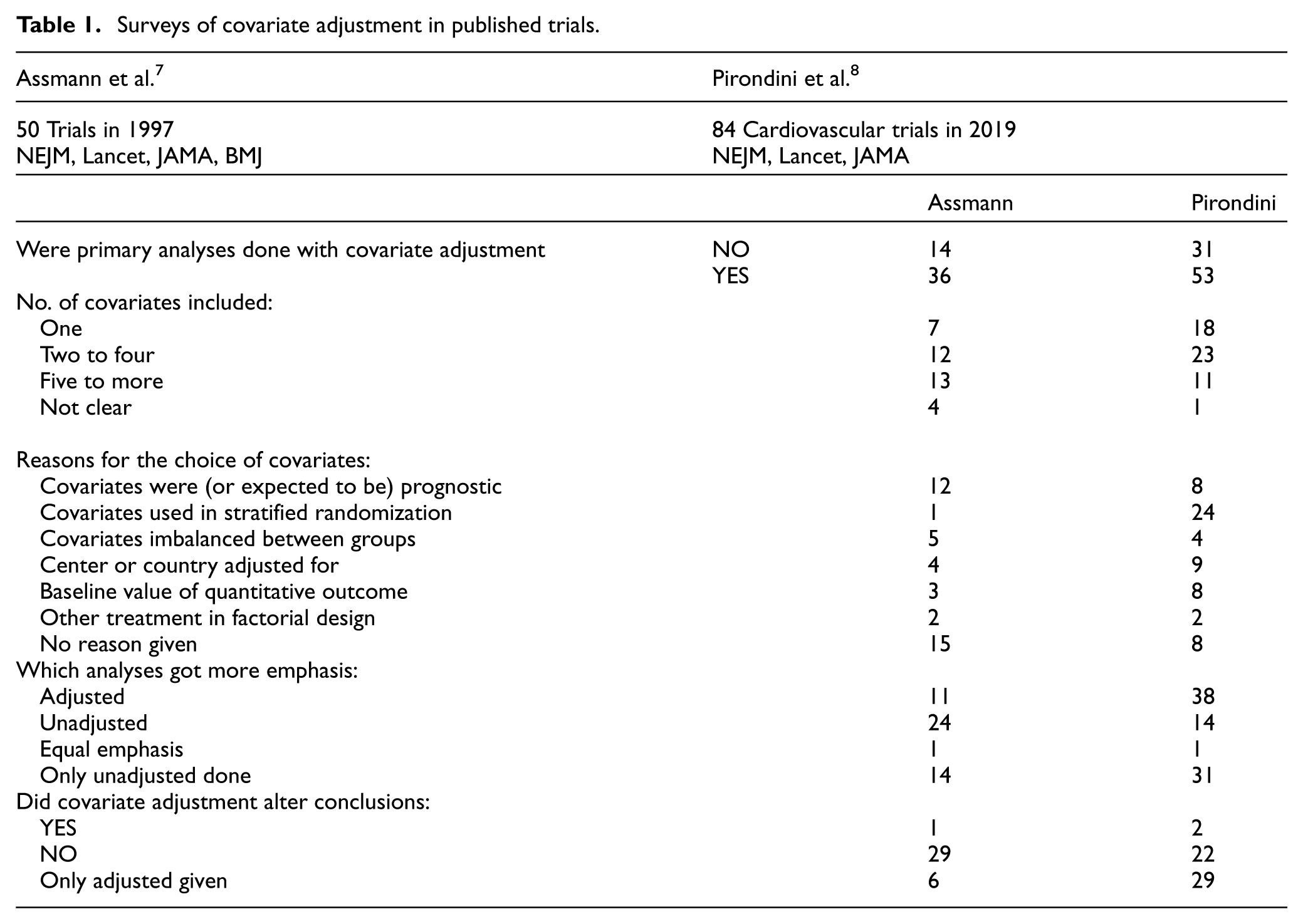

Table 1 documents the main findings from two surveys of the use of covariate adjustment in trials published in major general medical journals: Assmann et al. 7 assessed 50 trial reports in 1997, whereas Pirondini et al. 8 assessed 84 reports of cardiovascular trials in 2019. Although we report both, we concentrate on the latter since it reflects more recent practice. For most trials (N = 53/84), some form of covariate-adjusted analysis was performed for the primary endpoint, and it usually received more emphasis as the pre-defined primary analysis (N = 38). Among trials presenting both unadjusted and adjusted analyses, the adjusted analysis altered the conclusions in only two.

Table 1. Surveys of covariate adjustment in published trials.

Table 1 shows a great diversity of reasons for the choice of covariates: most commonly factors used in the stratified randomization (N = 24), center or country (N = 9), covariates expected to be prognostic (N = 8), and the baseline value of a quantitative outcome (N = 8). The latter two reasons seem particularly appropriate. The number of covariates for adjustment varied markedly.

Regulatory guidance on covariate adjustment

The Food and Drug Administration’s (FDA) recent guidance document 9 provides valuable general advice to trialists, which I summarize as follows:

Unadjusted analysis is acceptable.

Adjustment may lead to more powerful hypothesis testing.

One should pre-specify detailed procedures before unblinding.

One should adjust for variables related to prognosis.

One should adjust for variables used in stratified randomization.

The number of covariates should be small relative to sample size.

One should adjust for the baseline value of a quantitative outcome.

Avoid post hoc variable selection (e.g. adding unbalanced covariates, dropping non-significant predictors, adding significant ones).

How big is the gain in statistical power: a simulation study

There are general claims of enhanced statistical efficiency from covariate adjustment but little quantitative evidence in support. If the covariates are not related to the outcome, then no gain can be expected; thus, adjusting for the variables used in stratified randomization achieves little if those variables were poorly chosen and are not prognostic. So a key matter is the strength of prognostic influence of the chosen covariates.

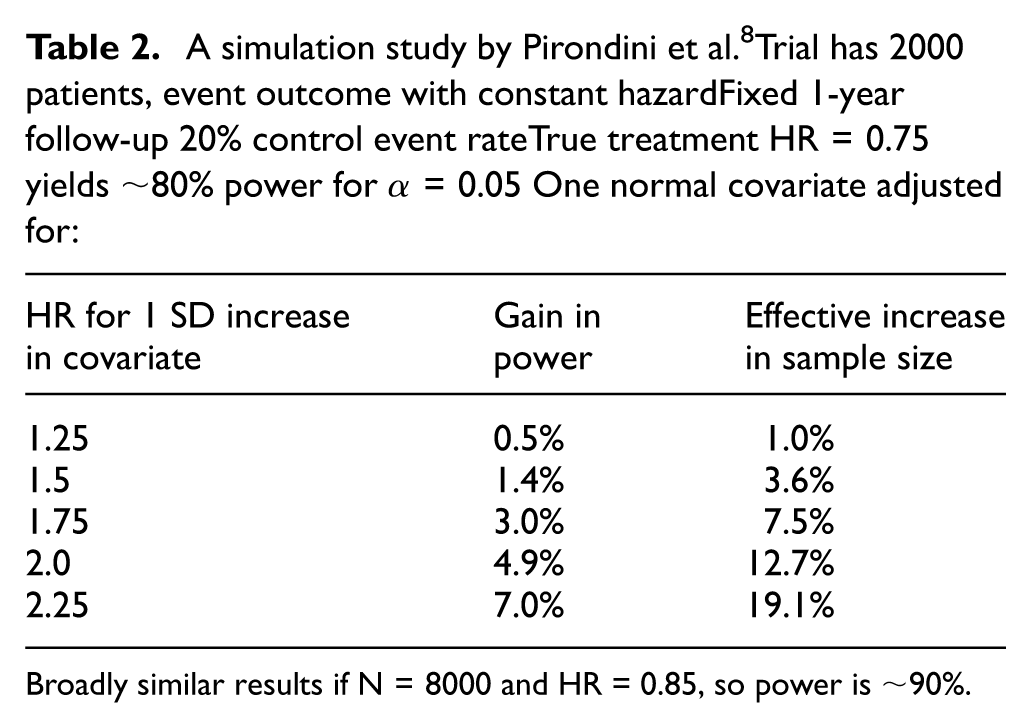

Pirondini et al. 8 undertook a simulation study based on a two-arm trial with an event outcome having constant hazard over a fixed 1-year follow-up. Their results were from 10,000 trial simulations under two scenarios: a true treatment hazard ratio of 0.75 (or 0.85) with a sample size of 2000 (or 8000) patients, giving power of around 80% (or 90%) for a two-sided alpha = 0.05 (see Table 2 for the former). Each simulation includes one quantitative covariate, assumed to be normally distributed, whose prognostic relevance is expressed as the hazard ratio for a 1 standard deviation increase in the covariate.

Table 2. A simulation study by Pirondini et al. 8 Trial of 2000 patients with an event outcome of constant hazard; fixed 1-year follow-up; 20% control event rate; true treatment HR = 0.75 yields ∼80% power for α = 0.05; one normal covariate adjusted for.

Broadly similar results were obtained with N = 8000 and HR = 0.85, for which power is ∼90%.
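As a rough cross-check of the stated power (not the method used by Pirondini et al., who simulated), Schoenfeld's approximation for a log-rank or Cox test with 1:1 allocation gives power ≈ Φ(|log HR| × √(d/4) − z₁₋α/₂), where d is the expected number of events. A sketch, assuming constant hazards, 1:1 allocation and a 20% 1-year control event rate in both scenarios (the rate is listed in Table 2 only for the first); the function names are hypothetical.

```python
import math
from statistics import NormalDist


def expected_events(n_total, control_risk_1yr, hr):
    """Expected events over 1 year with 1:1 allocation and constant hazards."""
    control_hazard = -math.log(1 - control_risk_1yr)
    treated_risk = 1 - math.exp(-hr * control_hazard)
    return n_total / 2 * (control_risk_1yr + treated_risk)


def schoenfeld_power(events, hr, alpha=0.05):
    """Approximate two-sided power of a log-rank/Cox test, 1:1 allocation."""
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    return NormalDist().cdf(abs(math.log(hr)) * math.sqrt(events / 4) - z_crit)


for n, hr in [(2000, 0.75), (8000, 0.85)]:
    d = expected_events(n, 0.20, hr)
    print(f"N={n}, HR={hr}: ~{d:.0f} events, power ~{schoenfeld_power(d, hr):.2f}")
```

Under these assumptions the approximation gives roughly 77% and 88% power, in the same region as the ∼80% and ∼90% quoted from the simulations.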

For both scenarios, there is only a modest gain in statistical power if the covariate is only weakly related to prognosis, but this gain becomes marked if the covariate is strongly prognostic (see second column of Table 2). This gain can then be converted to an effective increase in sample size (see third column of Table 2). For instance, if the covariate doubles the hazard for each 1 standard deviation increase in its value, then for our two scenarios (a smaller trial with a bigger true treatment effect and a bigger trial with a smaller true treatment effect), the increase in effective sample size was 12.7% and 13.3%, respectively. The challenge in any specific trial is to have the insight to pre-define covariates that collectively provide a sufficiently strong prognostic model to achieve such efficiency gains. Unfortunately, prior knowledge of the key prognostic factors is often lacking. Also, the form of covariate adjustment needs to be correctly specified; for example, are linear assumptions appropriate?
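One common way to translate between a power gain and an effective sample-size increase uses the normal approximation in which the required sample size is proportional to (z₁₋α/₂ + z_power)². The sketch below assumes that relationship; whether Pirondini et al. used exactly this conversion is not stated here, and the function names are hypothetical.

```python
from statistics import NormalDist

_Z = NormalDist()


def effective_size_ratio(power_unadj, power_adj, alpha=0.05):
    """Sample-size multiplier an unadjusted analysis would need to match
    the adjusted analysis's power (normal approximation)."""
    z_crit = _Z.inv_cdf(1 - alpha / 2)
    return ((z_crit + _Z.inv_cdf(power_adj))
            / (z_crit + _Z.inv_cdf(power_unadj))) ** 2


def power_after_gain(power_unadj, size_gain, alpha=0.05):
    """Power implied by an effective sample-size increase (e.g. 0.127 for 12.7%)."""
    z_crit = _Z.inv_cdf(1 - alpha / 2)
    z = (1 + size_gain) ** 0.5 * (z_crit + _Z.inv_cdf(power_unadj)) - z_crit
    return _Z.cdf(z)


print(round(power_after_gain(0.80, 0.127), 3))
```

Under this approximation, starting from 80% power, a 12.7% effective increase in sample size corresponds to about 84.5% power, which illustrates why such gains, though worthwhile, are modest.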

Examples of covariate adjustment in cardiovascular trials

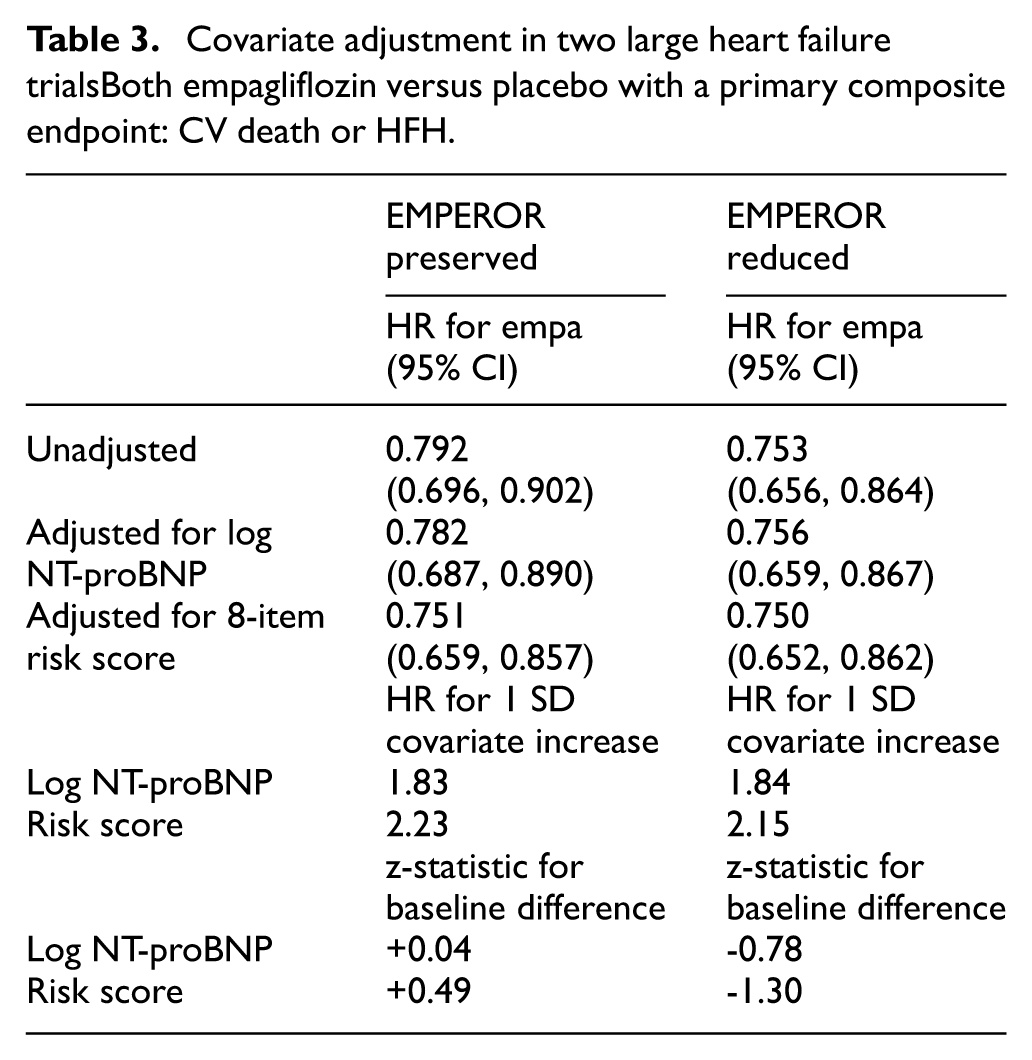

The survey in Table 1 found just two trials in which the conclusions were potentially affected by covariate adjustment. We now briefly summarize both.

Table 3. Covariate adjustment in two large heart failure trials. Both compared empagliflozin versus placebo with a primary composite endpoint of CV death or heart failure hospitalization (HFH).

The SYNTAX trial of percutaneous coronary intervention (PCI) versus coronary artery bypass surgery (CABG) in 1800 patients with three-vessel or left main disease focused on survival over 10 years of follow-up in its most recent report. 10 The primary unadjusted analysis had 20% and 24% mortality in the PCI and CABG groups, yielding a hazard ratio of 1.19 with 95% CI = 0.99 to 1.43, P = 0.066. A post hoc sensitivity analysis identified eight baseline predictors of survival (e.g. age), and the consequent multivariable proportional hazards model yielded a covariate-adjusted hazard ratio of 1.23 with 95% CI = 1.02 to 1.48, P = 0.028.

As one might expect, covariate adjustment slightly enhanced the treatment effect, but the adjusted analysis was deemed exploratory and did not influence the conclusion published in The Lancet: “at 10 years, no significant difference existed in all-cause death.”

Note that the word “significant” is, sadly, used as a binary decision tool, as in so many trial conclusions in major medical journals. A more nuanced interpretation, such as “the findings are inconclusive though there is weak evidence to suggest that PCI may have a slightly poorer 10 year survival compared to CABG”, would perhaps have been wiser.

The EXTEND trial of intravenous alteplase versus placebo in 225 patients with ischemic stroke had a binary primary outcome: modified Rankin score 0 or 1 after 90 days. 11 In an unadjusted analysis, this outcome was achieved in 40/113 (35.4%) and 33/112 (29.5%) of alteplase and placebo patients, respectively (P = 0.35). But two baseline factors of known prognostic relevance, age and National Institutes of Health Stroke Scale (NIHSS) score, were unbalanced between treatment groups, with mean age 73.7 versus 71.0 years and median NIHSS score 12.0 versus 10.0; that is, by chance, lower (more favorable) values tended to occur in the placebo group.

Modified Poisson regression models with robust error estimation 12 were fitted with and without adjustment for age and NIHSS score. Without adjustment, the risk ratio was 1.20 with 95% CI = 0.82 to 1.76, P = 0.35, whereas with adjustment, the risk ratio became 1.44 with 95% CI = 1.01 to 2.06, P = 0.04. The conclusion was “Use of alteplase resulted in more patients with no or minor neurological deficits.” There are several points to note here. First, the credibility of the covariate-adjusted model depends on it having been pre-defined as the primary analysis, with both covariates chosen in advance and a precise specification of how they were fitted (were they both linear terms?). Second, in my experience, it is unusual for covariate adjustment to have such a big impact on the estimated treatment effect. Third, it might be wiser to interpret these findings with more caution: the trial is relatively small, and its borderline P-value indicates only modest evidence of treatment efficacy, and only after covariate adjustment. Finally, the modified Rankin scale is an ordinal outcome scored from 0 to 6, so analyses that use its ordinal nature may be more appropriate than a binary cut-off.
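With treatment as the only covariate, the modified Poisson model with a robust (sandwich) variance, without small-sample corrections, reduces to the familiar Wald interval for a log risk ratio, with SE = √(1/a − 1/n₁ + 1/c − 1/n₀). A short sketch reproducing the unadjusted EXTEND figures from the counts above (the covariate-adjusted model needs individual patient data and is not reproduced); the function name is hypothetical.

```python
import math
from statistics import NormalDist


def risk_ratio_wald(a, n1, c, n0, level=0.95):
    """Risk ratio (group 1 vs group 0) with a Wald CI on the log scale."""
    rr = (a / n1) / (c / n0)
    se = math.sqrt(1 / a - 1 / n1 + 1 / c - 1 / n0)
    z = NormalDist().inv_cdf(0.5 + level / 2)
    p = 2 * (1 - NormalDist().cdf(abs(math.log(rr)) / se))
    return rr, rr * math.exp(-z * se), rr * math.exp(z * se), p


rr, lo, hi, p = risk_ratio_wald(40, 113, 33, 112)   # alteplase vs placebo
print(f"RR {rr:.2f} (95% CI {lo:.2f} to {hi:.2f}), P = {p:.2f}")
```

This gives 1.20 (0.82 to 1.76), matching the reported unadjusted interval; the Wald P-value here is about 0.34, close to the reported 0.35.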

In understanding the properties of covariate adjustment, it is of value to explore trials in a clinical field where the factors influencing patient prognosis are well known. Thus, we present in Table 3 findings from two similarly designed trials in heart failure of empagliflozin versus placebo, EMPEROR Preserved 13 and EMPEROR Reduced, 14 which randomized 5988 and 3730 patients with preserved and reduced ejection fraction, respectively. Over a median follow-up of 26.2 and 16 months, the composite primary outcome of cardiovascular death or heart failure hospitalization occurred in 926 and 823 patients, respectively.

Both trials showed strong evidence of a treatment benefit in the primary unadjusted analyses, but it is relevant to see whether effect sizes could be enhanced further by covariate adjustment. Let us first look at the results for EMPEROR Preserved (see left side of Table 3). NT-proBNP is a biomarker well known to be associated with the prognosis of heart failure. It has a skewed distribution, so its log transform is the covariate used for adjustment. The hazard ratio per 1 standard deviation increase in log NT-proBNP is a substantial 1.83, so, not surprisingly, the hazard ratio for the treatment effect is reduced slightly: 0.782 compared with the unadjusted 0.792. The extent of baseline imbalance between treatment groups for such a quantitative covariate is best summarized by a z-statistic: in this case, z = 0.04 indicates good balance, with mean log NT-proBNP very slightly higher in the empagliflozin group.
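A sketch of such an imbalance z-statistic, assuming (the source does not give the formula) a standard two-sample statistic with unpooled variances, applied on the log scale. The NT-proBNP values and the function name are made up for illustration. Here z is used descriptively, to gauge how far adjustment may shift the estimate, not as a significance test.

```python
import math
import statistics


def imbalance_z(treated, control):
    """z-statistic for a baseline mean difference (treated minus control)."""
    diff = statistics.fmean(treated) - statistics.fmean(control)
    se = math.sqrt(statistics.variance(treated) / len(treated)
                   + statistics.variance(control) / len(control))
    return diff / se


# Hypothetical NT-proBNP values (pg/mL), analysed on the log scale
empa = [math.log(v) for v in (900, 1500, 420, 2600, 760)]
placebo = [math.log(v) for v in (1100, 640, 1900, 830, 500)]
print(f"z = {imbalance_z(empa, placebo):+.2f}")
```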

EMPEROR Preserved developed a risk score including an additional seven covariates. The risk score has an even stronger link to the outcome (hazard ratio 2.23 per 1 SD increase), and hence, adjustment for it pulls the hazard ratio further away from the null: 0.751 compared with the unadjusted 0.792. This effect is partly aided by the slight imbalance in the baseline risk score: z = +0.49 means that the empagliflozin group had a slightly “unfair” disadvantage in baseline risk profile, which is then corrected for.

Repeating this exercise for the EMPEROR Reduced trial 14 with its own somewhat different eight-variable risk score produced rather different results (see right side of Table 3). The three models (unadjusted, adjusted for log NT-proBNP, adjusted for the risk score) showed very similar hazard ratios: 0.753, 0.756, and 0.750, respectively. While the prognostic strengths of log NT-proBNP and the risk score were similar in both trials, EMPEROR Reduced had baseline imbalances in the opposite direction, z = −0.78 and z = −1.30, respectively, meaning that the empagliflozin group had a slightly better average risk profile. Thus, the competing forces of (1) adjusting for varying individual patient risk and (2) correcting for a slightly favorable baseline imbalance effectively canceled one another out. Note that for both trials, covariate adjustment made almost no difference to the width of the hazard ratio’s confidence interval.

These two trials, as summarized in Table 3, indicate that there is no guarantee that covariate adjustment will enhance a treatment effect in every instance even with strongly prognostic covariates. Adjusting for baseline imbalances also plays a role and that “could swing either way” in any specific trial’s analysis. Nevertheless, the underlying average effect is favorable (e.g. hazard ratios will tend to pull away from the null), as illustrated by the simulation study in Table 2.

Center-adjusted analyses: are they worthwhile?

In our surveys of published trials (Table 1), a minority did perform center- (or country-) adjusted analyses, perhaps encouraged by randomization being stratified by center.7,8 With many centers, this is usually best done by treating center as a random effect. Compared with adjusting for patient factors that relate to patient outcome, adjusting for center usually has a negligible impact on the results. The reasons are that treatments are usually well balanced across centers, and there is often little detectable between-center heterogeneity in patient outcomes.

One problem is that trials often have some centers that recruited very few patients, and therefore, a pre-defined strategy may be needed to combine small centers into larger units. For instance, the LIDO trial 15 in severe low-output heart failure had 203 patients recruited from 26 centers in 10 countries. For analysis, these were combined into 11 “centers” of adequate size, some spanning three countries, for example, France, the Netherlands, and the United Kingdom. The end result called into question the concept of “center”, and, perhaps not surprisingly, center-adjusted results were compatible with the unadjusted ones.

Overall, center adjustment might be deemed a harmless sensitivity analysis, but usually other forms of covariate adjustment should get a higher priority.

Alternative approaches to covariate adjustment

Other articles in this issue will present in detail some alternative approaches to covariate adjustment, so my introductory comments on this diversity will be brief and reflect my personal opinion.

Williamson et al. 16 advocate a strategy based on the inverse probability of treatment weighting, which has been shown to increase the precision of the treatment effect estimate. It is a challenge to decipher why this approach achieves its claimed success, since the required propensity score is based on random covariate differences between treatment groups. Each covariate’s impact on prognosis is not directly involved.
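To make the mechanics concrete, here is a minimal IPTW sketch for the simplest case: one categorical covariate, with the propensity score estimated as the observed proportion treated in each covariate category, and normalized (Hájek) weights. The data and function name are hypothetical. The estimated propensity captures the chance covariate imbalance; in this saturated case the IPTW estimate coincides exactly with direct standardization over the covariate.

```python
from collections import defaultdict


def iptw_difference(records):
    """Hajek-weighted IPTW mean difference, treated minus control.

    records: iterable of (treated: bool, stratum, outcome) tuples. The
    propensity score is the observed share treated within each stratum.
    """
    records = list(records)
    counts = defaultdict(lambda: [0, 0])          # stratum -> [n_total, n_treated]
    for treated, stratum, _ in records:
        counts[stratum][0] += 1
        counts[stratum][1] += int(treated)

    sums = {True: [0.0, 0.0], False: [0.0, 0.0]}  # arm -> [sum w*y, sum w]
    for treated, stratum, y in records:
        n_total, n_treated = counts[stratum]
        e = n_treated / n_total                   # estimated propensity score
        w = 1 / e if treated else 1 / (1 - e)     # inverse probability weight
        sums[treated][0] += w * y
        sums[treated][1] += w
    return sums[True][0] / sums[True][1] - sums[False][0] / sums[False][1]


data = [(True, 0, 0), (True, 0, 1), (False, 0, 0), (False, 0, 0),
        (True, 1, 1), (True, 1, 1), (True, 1, 0), (False, 1, 1)]
print(iptw_difference(data))
```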

Van Lancker et al. 17 promote the use of marginal estimands. They specifically advocate the standardization approach since it can improve efficiency by leveraging covariate information and is robust to model misspecification. But Harrell 18 argues against the use of marginal estimates since they only apply to group decision-making; that is, they do not provide estimates relevant to the specific patient.
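A minimal sketch of standardization (g-computation) for a binary outcome, assuming the simplest saturated outcome model, namely stratum-specific risks for one categorical covariate: each arm's risks are averaged over the covariate distribution of the whole trial to give marginal risks. In practice a regression model with several covariates would replace the stratum-specific risks; the data and function name here are hypothetical.

```python
from collections import Counter, defaultdict


def standardized_risks(records):
    """Marginal risks per arm, standardizing stratum-specific risks to the
    covariate distribution of the whole trial.

    records: iterable of (treated: bool, stratum, outcome 0/1) tuples.
    Assumes every stratum contains patients from both arms.
    """
    records = list(records)
    n = len(records)
    stratum_sizes = Counter(stratum for _, stratum, _ in records)
    events = defaultdict(lambda: [0, 0])          # (arm, stratum) -> [events, n]
    for treated, stratum, y in records:
        events[(treated, stratum)][0] += y
        events[(treated, stratum)][1] += 1

    def marginal(arm):
        return sum(size / n * events[(arm, s)][0] / events[(arm, s)][1]
                   for s, size in stratum_sizes.items())

    return marginal(True), marginal(False)


data = [(True, 0, 0), (True, 0, 1), (False, 0, 0), (False, 0, 0),
        (True, 1, 1), (True, 1, 1), (True, 1, 0), (False, 1, 1)]
p1, p0 = standardized_risks(data)
print(f"marginal risk ratio {p1 / p0:.3f}, risk difference {p1 - p0:.3f}")
```

The output is a population-averaged (marginal) contrast, which is precisely the feature Harrell objects to: it answers a question about the trial population as a whole rather than about an individual patient.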

Morris et al. 19 provide a practical guide to covariate adjustment covering a variety of alternative approaches: conventional direct adjustment, standardization, and inverse probability of treatment weighting. They conclude that no single method is always best, since the choice depends on the trial context. Their perspective focuses on the pre-defined statistical analysis plan (SAP) requiring advance specification of any chosen covariate adjustment strategy.

My own judgment is that conventional covariate adjustment is well understood and easier to explain to non-statisticians. From the consequent prognostic model containing randomized treatment and key covariates, one can readily explore the extent to which each covariate contributes to the primary outcome. In addition, the degree of imbalance between the treatment groups in a covariate will help determine its influence on the change in treatment effect in going from the unadjusted to the adjusted analysis. These insights into what drives the value of covariate adjustment are less apparent in some alternative approaches.

Other uses of covariates in clinical trials

One routine use of baseline covariates is for baseline treatment comparisons, in which the trial report’s Table 1 commonly displays descriptive statistics of all relevant baseline variables by treatment group. One objective is to demonstrate the baseline similarity of groups that is achieved by randomization. The temptation to include P-values for each baseline comparison should be resisted, since by definition, any apparent differences are due to chance.

Stratified randomization, based on a few baseline covariates formed into strata, is common practice. Minimization techniques can facilitate the inclusion of more such baseline variables, with the goal of achieving more extensive balance. What matters is to balance on factors that strongly influence patient prognosis, and these are not necessarily known in advance. Thus, in practice, stratifying the randomization is often of limited value, and it is more important to undertake covariate-adjusted analyses.
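Minimization can be sketched as follows. This is a simplified two-arm Pocock–Simon-style scheme, assuming equal factor weights, imbalance measured as the sum over factors of |count in A − count in B| at the new patient's levels, and assignment to the less-imbalancing arm with probability 0.8 (ties broken at random). The factor names, levels and probability are hypothetical choices, not a recommendation.

```python
import random
from collections import defaultdict


def minimize(patients, p_best=0.8, seed=2024):
    """Assign each patient (a dict of factor -> level) to arm 'A' or 'B'."""
    rng = random.Random(seed)
    counts = defaultdict(lambda: {"A": 0, "B": 0})   # (factor, level) -> arm counts
    arms = []
    for patient in patients:
        keys = list(patient.items())

        def imbalance_if(arm):
            # Total marginal imbalance at this patient's levels after assignment
            total = 0
            for key in keys:
                a = counts[key]["A"] + (arm == "A")
                b = counts[key]["B"] + (arm == "B")
                total += abs(a - b)
            return total

        score_a, score_b = imbalance_if("A"), imbalance_if("B")
        if score_a == score_b:
            arm = rng.choice("AB")
        else:
            best, other = ("A", "B") if score_a < score_b else ("B", "A")
            arm = best if rng.random() < p_best else other
        for key in keys:
            counts[key][arm] += 1
        arms.append(arm)
    return arms, counts


rng = random.Random(7)
cohort = [{"sex": rng.choice(["F", "M"]), "age": rng.choice(["<65", "65-74", "75+"])}
          for _ in range(200)]
arms, counts = minimize(cohort)
for (factor, level), c in sorted(counts.items()):
    print(f"{factor}={level}: A={c['A']} B={c['B']}")
```

Note that minimization balances only the factors it is given; if those factors are weakly prognostic, the balance achieved matters little for the analysis.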

Subgroup analyses are commonly undertaken, preferably based on a limited set of pre-defined baseline covariates of key interest. The consequence is often to demonstrate a consistency of treatment effect across patient subgroups. The situation becomes more challenging when one or more patient factors appear to be effect modifiers backed up by statistically significant interactions. Such exploratory subgroup findings lack statistical power, and any post hoc subgroups with more (or less) effect than the overall result are prone to be exaggerations of any true signal and may well be false claims.
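The appropriate check for effect modification is a test of interaction rather than a comparison of separate subgroup P-values. A minimal sketch for two subgroups, assuming independent subgroup estimates on the log hazard ratio scale; the numbers and function name are hypothetical.

```python
import math
from statistics import NormalDist


def interaction_test(hr_1, se_log_1, hr_2, se_log_2):
    """Ratio of subgroup hazard ratios and two-sided interaction P-value."""
    diff = math.log(hr_1) - math.log(hr_2)
    se = math.sqrt(se_log_1 ** 2 + se_log_2 ** 2)
    p = 2 * (1 - NormalDist().cdf(abs(diff) / se))
    return math.exp(diff), p


# Hypothetical: HR 0.70 in one subgroup, 0.90 in the other
ratio, p = interaction_test(0.70, 0.12, 0.90, 0.11)
print(f"ratio of HRs {ratio:.2f}, interaction P = {p:.2f}")
```

In this hypothetical example the first subgroup alone would look "significant" (z ≈ 3) and the second would not, yet the interaction P-value is only about 0.12, weak evidence that the treatment effect truly differs.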

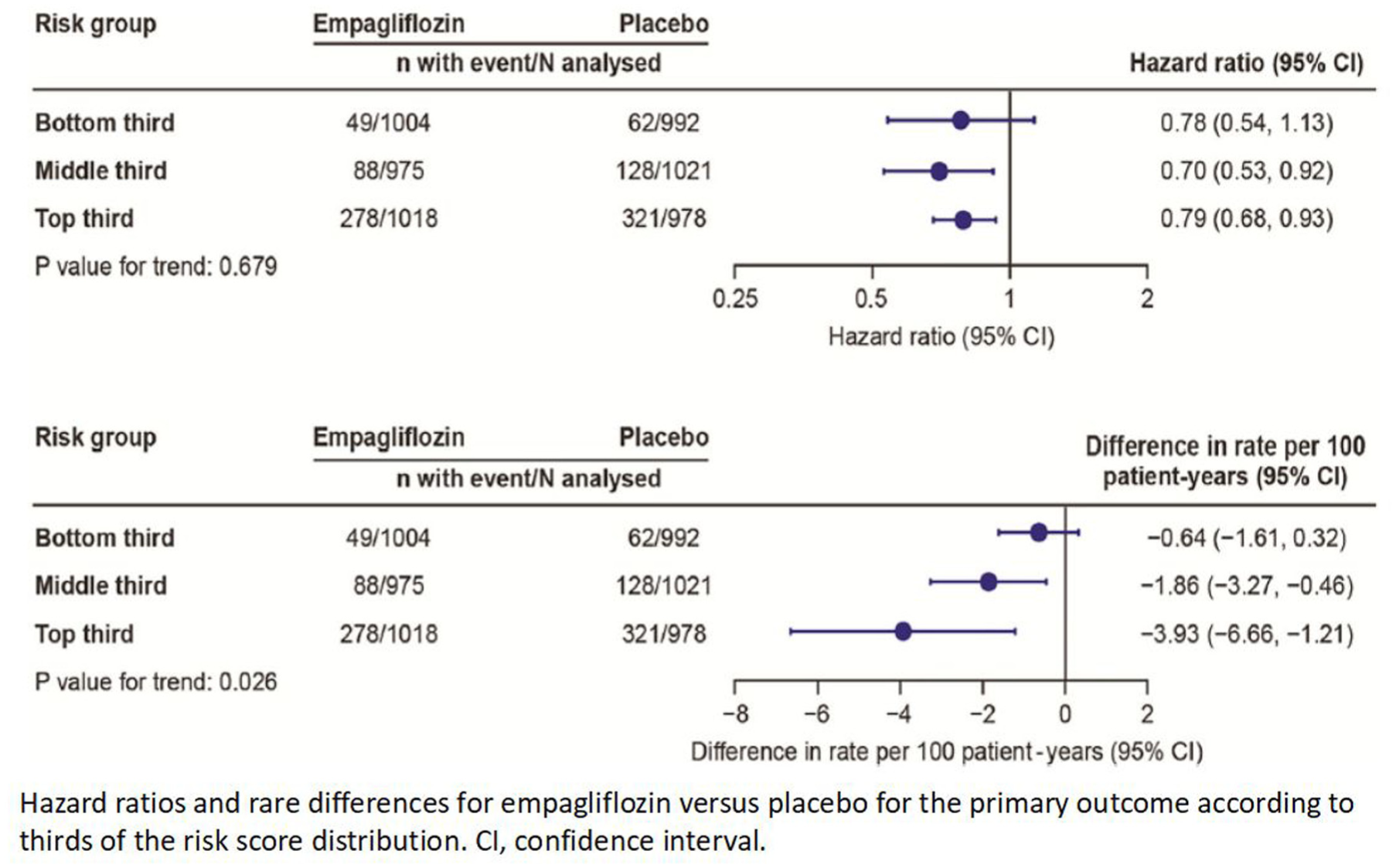

The development of risk models can be useful in order to see if the treatment effect varies by patient risk. Ideally, such a risk model should be obtained from an external database of patients similar to those in the trial, but that is often an unrealistic goal. However, if the trial is large, then its database can be used to generate a statistically robust risk model. The EMPEROR Preserved trial of empagliflozin versus placebo is one such example: 13 for the composite primary outcome of time to cardiovascular (CV) death or heart failure hospitalization, multivariable proportional hazards models were used to identify eight baseline variables that were each strong independent predictors. Forming these into a risk score facilitated categorizing individual patients into three equal-sized risk groups, in each of which the treatment effect can then be estimated. Figure 2 shows the consequent results on both relative and absolute scales. First, the hazard ratio for the treatment effect is consistent across risk groups, with no evidence of interaction. In contrast, on the absolute scale (the difference in primary event rates per 100 patient years), the treatment effect is strongly influenced by patient risk. For low-risk patients, the incidence rate is low, and so the treatment benefit appears small (0.04 events per 100 patient years) and uncertain: its 95% CI includes no effect. For high-risk patients, the estimated absolute benefit is substantial (3.93 events per 100 patient years).

Figure 2. Should trials concentrate on higher-risk patients? For example, the EMPEROR Preserved risk model. 13 High-risk patients contribute more events and the greatest benefits.
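The absolute-scale comparison can be sketched as a difference in Poisson event rates per 100 patient-years with a Wald interval. This is a simplified illustration with hypothetical event counts and follow-up, not the EMPEROR Preserved analysis; both hypothetical risk groups share the same rate ratio of 0.8, and the function name is an assumption.

```python
import math
from statistics import NormalDist


def rate_benefit_per_100py(events_control, py_control, events_treated, py_treated,
                           level=0.95):
    """Absolute benefit (control rate minus treated rate) per 100 patient-years,
    with a Wald CI assuming Poisson event counts."""
    r_c = events_control / py_control
    r_t = events_treated / py_treated
    se = math.sqrt(events_control / py_control ** 2 + events_treated / py_treated ** 2)
    z = NormalDist().inv_cdf(0.5 + level / 2)
    benefit = 100 * (r_c - r_t)
    return benefit, benefit - 100 * z * se, benefit + 100 * z * se


# Hypothetical risk groups sharing the same rate ratio (0.8)
for label, (ec, pyc, et, pyt) in {"low": (20, 2000, 16, 2000),
                                  "high": (300, 2000, 240, 2000)}.items():
    b, lo, hi = rate_benefit_per_100py(ec, pyc, et, pyt)
    print(f"{label}-risk: benefit {b:.2f} per 100 patient-years (95% CI {lo:.2f} to {hi:.2f})")
```

With an identical relative effect, the absolute benefit is 0.2 versus 3.0 events per 100 patient-years, and only the high-risk interval excludes zero, mirroring the pattern described for Figure 2.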

This example illustrates a general phenomenon: while a treatment benefit may appear to apply to all eligible patients, for an event outcome, it is plausible that the absolute benefit (which is what matters to patients) depends markedly on the patient’s risk profile: for low-risk patients, the small effect may question whether treatment is worthwhile, whereas for high-risk patients, the treatment benefit may be more marked than the overall result would imply. A wider use of risk models in large trials of pivotal importance is to be encouraged.

An extension of this principle may apply to treatments that have a benefit–risk trade-off. For instance, the efficacy of anti-platelet agents (preventing ischemic events) needs to be carefully balanced against their harm (causing major bleeding events). A trial of vorapaxar versus placebo in post-myocardial infarction patients showed overall that the efficacy outweighed the harm. But the use of risk models for both ischemic and bleeding outcomes facilitated the assessment of an individualized benefit–risk trade-off. 20 This enabled identification of a subset of patients for whom the bleeding risk exceeded the ischemic benefit, and in whom the treatment is best avoided.
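A minimal sketch of an individualized benefit–risk comparison, assuming hypothetically that the treatment's relative effects on ischemic and bleeding events apply uniformly to each patient's predicted risks from two separate risk models. The relative risks, predicted risks and function name are illustrative, not estimates from the vorapaxar trial.

```python
def net_benefit(ischemic_risk, bleeding_risk, rr_ischemic=0.8, rr_bleeding=1.5):
    """Ischemic events prevented minus extra bleeds caused, per 100 patients."""
    prevented = 100 * ischemic_risk * (1 - rr_ischemic)
    caused = 100 * bleeding_risk * (rr_bleeding - 1)
    return prevented - caused


# Hypothetical predicted risks from two separate risk models
patients = {"high ischemic, low bleeding": (0.15, 0.02),
            "low ischemic, high bleeding": (0.05, 0.06)}
for label, (isch, bleed) in patients.items():
    print(f"{label}: net benefit {net_benefit(isch, bleed):+.1f} per 100 patients")
```

The first hypothetical patient profile gains about 2 net events avoided per 100 treated; for the second, extra bleeds outweigh ischemic events prevented, the kind of subset in which treatment is best avoided.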

Conclusion

There is a sound case for making covariate adjustment standard practice for any clinical trial’s primary analysis. The key is to ensure that the relevant covariates and the precise statistical method of adjustment are pre-defined. Priority should be given to covariates that are known (or thought likely) to influence patient prognosis, as measured by the chosen primary outcome. However, covariate adjustment can realistically achieve only a modest gain in statistical power. In any specific trial, the adjusted analysis may (or may not) yield stronger evidence of a treatment effect, depending on the presence and direction of any treatment group imbalances in the chosen covariates of prognostic relevance.

Footnotes

Declaration of conflicting interests

The author declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: The author disclosures are research funding from AstraZeneca and trial committees with Abiomed, Boehringer Ingelheim, CSL Behring, Fractyl, Idorsia, Janssen, Medtronic, Novartis, and Occlutech and consultancy with Amgen, Boston Scientific, Cardiol, CVRx, Edwards, Faraday, JenaValve, Lilly, and Merril Life.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.