Abstract

Background:

Designing trials to reduce treatment duration is important in several therapeutic areas, including tuberculosis and bacterial infections. We recently proposed a new randomised trial design to overcome some of the limitations of standard two-arm non-inferiority trials. This DURATIONS design involves randomising patients to a number of duration arms and modelling the so-called ‘duration-response curve’. This article investigates the operating characteristics (type-1 and type-2 errors) of different statistical methods of drawing inference from the estimated curve.

Methods:

Our first estimation target is the shortest duration non-inferior to the control (maximum) duration within a specific risk difference margin. We compare different methods of estimating this quantity, including using model confidence bands, the delta method and bootstrap. We then explore the generalisability of results to estimation targets which focus on absolute event rates, risk ratio and gradient of the curve.

Results:

We show through simulations that, in most scenarios and for most of the estimation targets, using the bootstrap to estimate variability around the target duration leads to good results for DURATIONS design-appropriate quantities analogous to power and type-1 error. Using model confidence bands is not recommended, while the delta method leads to inflated type-1 error in some scenarios, particularly when the optimal duration is very close to one of the randomised durations.

Conclusions:

Using the bootstrap to estimate the optimal duration in a DURATIONS design has good operating characteristics in a wide range of scenarios and can be used with confidence by researchers wishing to design a DURATIONS trial to reduce treatment duration. Uncertainty around several different targets can be estimated with this bootstrap approach.

Introduction

In several therapeutic areas, it is important to identify the optimal duration of treatment, defined as the shortest duration providing an acceptable efficacy. For example, reducing antibiotic treatment duration has been suggested as a way of combatting antimicrobial resistance, 1 but this has to be done while maintaining high cure rates. Furthermore, shorter treatment durations often increase adherence, reduce side effects and are more cost-effective, provided they do not lead to an increased risk of relapse.

We recently proposed the DURATIONS randomised trial design 2 as an improvement over standard non-inferiority trials 3 for identifying the shortest acceptable treatment duration. 4 Its main attraction is that it does not involve selection of a single shorter duration to test against a control, which is often chosen based on very limited prior evidence; instead, patients are randomised to multiple durations, enabling the relationship between duration and response to be directly estimated using pre-specified flexible regression models. In a wide range of examples, randomising 500 patients to 5–7 durations enables the underlying duration-response curve to be estimated within 5% average absolute error in 95% of simulations. 2

The DURATIONS design moves away from a binary hypothesis testing paradigm so that the trial outcome is not a treatment difference between two fixed durations, but rather the whole estimated duration-response curve. In applications, we wish to use this curve to inform decision-making, and hence it is essential to understand the properties of various ways of drawing inference from the curve. This article, therefore, first defines DURATIONS design quantities analogous to power and type-1 error and then compares different strategies of inference for these quantities through extensive simulations.

Methods

Suppose there is a treatment that is known to be highly effective compared to placebo, and that is usually prescribed as standard-of-care to patients with a particular disease or condition for a fixed course duration; examples include antibiotics for bacterial infections and direct acting antivirals against Hepatitis C. In addition, suppose the recommended treatment course is

Now, suppose we want to design a trial to identify the shortest effective duration and assume that the primary outcome of the trial is cure, a binary variable equal to 1 if the patient recovers from their condition or 0 otherwise. Using a DURATIONS randomised trial design, 2 we randomise

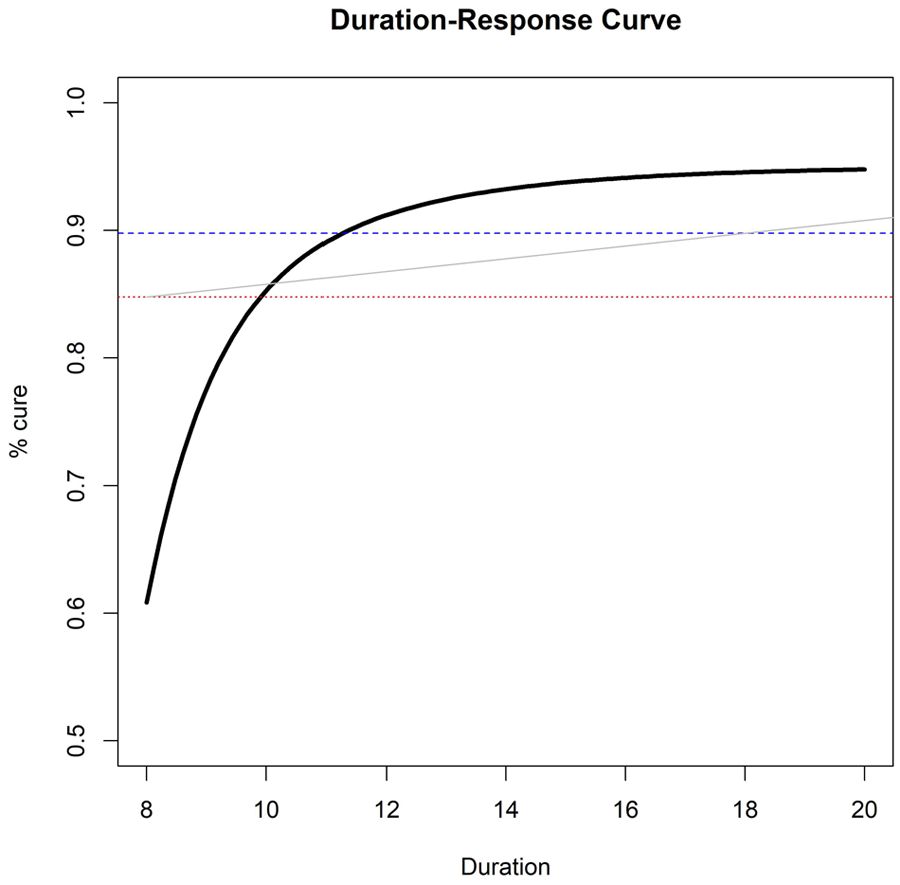

Example of estimated duration-response curve (solid, black), drawn against three possible non-inferiority margins or ‘acceptability frontiers’ (dotted, red = 10% fixed risk difference; dashed, blue = 5% fixed risk difference; solid, grey = duration-specific acceptability frontier).

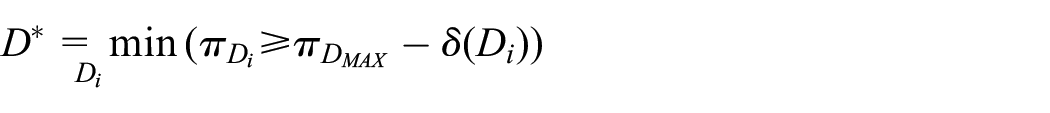

When provided with this curve, how should clinicians and policy-makers choose what is the ‘optimal’ duration to prescribe? A simple choice is to target the duration leading to at most a fixed loss of efficacy (risk difference

In this section, we propose different ways of estimating such a

Model confidence bands

The simplest approach is to extrapolate

The first and most naïve method of controlling type-1 error uses the lower bound of the pointwise confidence bands around the curve, looking for the duration

With

Delta method CI

To compare two specific points on the duration-response curve, we need to estimate a confidence interval around the difference in outcome between the two points. In our application, we want to compare each shorter duration

Bootstrap CI

Alternatively, rather than relying on these approximations, given the observed dataset with

Bootstrap duration CI

Another way of using bootstrapping is to directly estimate a confidence interval around

Theoretical comparison of methods

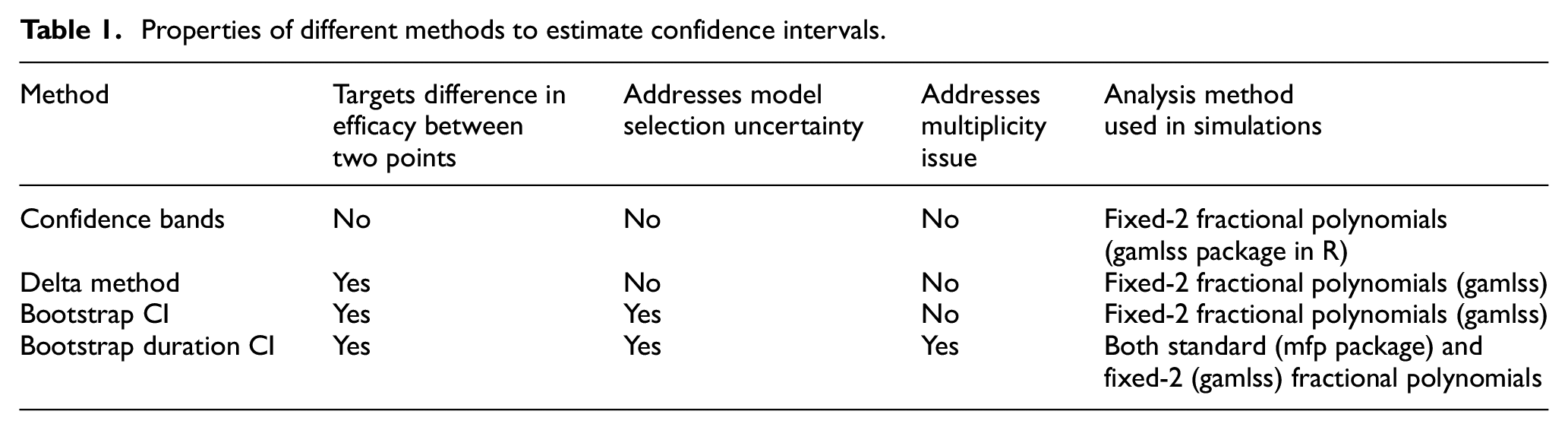

Table 1 provides an overview of the properties of different methods. The attractiveness of the confidence bands method comes from its simplicity, as it is probably the method most researchers would naturally use to estimate

Properties of different methods to estimate confidence intervals.

While the delta method addresses this problem, it is affected by at least one other issue, at least when using fractional polynomials 5 as the flexible regression method: specifically, that model selection variability should be taken into account. 6 The two bootstrap methods are theoretically appealing strategies to solve this problem, as repeating the fractional polynomial selection step for each bootstrap sample is one approach to address model selection variability.
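To illustrate why repeating the selection step inside each bootstrap sample matters, the following is a minimal sketch under stated assumptions, not the authors' implementation. It swaps the paper's logistic fractional polynomial model for a much simpler stand-in: a degree-1 fractional polynomial fitted by least squares to the binary outcomes. The arm durations, the true cure curve, the seed and the function names `fit_fp1` and `bootstrap_selected_powers` are all hypothetical.

```python
import math
import random

# Conventional fractional polynomial power set; power 0 denotes log(duration).
POWERS = (-2, -1, -0.5, 0, 0.5, 1, 2, 3)


def transform(duration, power):
    return math.log(duration) if power == 0 else duration ** power


def fit_fp1(durations, outcomes):
    """Select one power by least squares (simplified stand-in, not the paper's model).

    Returns (power, intercept, slope) of the best-fitting candidate.
    """
    n = len(durations)
    y_mean = sum(outcomes) / n
    best = (float("inf"), 1, y_mean, 0.0)  # fallback: flat curve
    for power in POWERS:
        z = [transform(d, power) for d in durations]
        z_mean = sum(z) / n
        szz = sum((zi - z_mean) ** 2 for zi in z)
        if szz < 1e-12:  # resample contains a single duration: nothing to fit
            continue
        slope = sum((zi - z_mean) * (yi - y_mean) for zi, yi in zip(z, outcomes)) / szz
        intercept = y_mean - slope * z_mean
        rss = sum((yi - intercept - slope * zi) ** 2 for zi, yi in zip(z, outcomes))
        if rss < best[0]:
            best = (rss, power, intercept, slope)
    return best[1:]


def bootstrap_selected_powers(durations, outcomes, n_boot, rng):
    """Resample patients with replacement and repeat the selection step each time."""
    n = len(durations)
    selected = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        power, _, _ = fit_fp1([durations[i] for i in idx], [outcomes[i] for i in idx])
        selected.append(power)
    return selected


# Hypothetical trial: 500 patients over 7 arms, with a made-up true cure curve.
rng = random.Random(2024)
arms = [8, 10, 12, 14, 16, 18, 20]
durations = [arms[i % len(arms)] for i in range(500)]
outcomes = [1 if rng.random() < 0.95 - 2.0 / d else 0 for d in durations]

selected = bootstrap_selected_powers(durations, outcomes, n_boot=100, rng=rng)
counts = {p: selected.count(p) for p in POWERS}
print(counts)  # how often each power is chosen across resamples
```

If the selected power changes from one resample to the next, a variance estimate that conditions on a single selected model ignores that source of variability, whereas the bootstrap distribution carries it through.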

With the delta method and the bootstrap CI method, we estimate multiple confidence intervals around the curve and compare each upper bound against the maximum tolerable risk difference. Hence, we may theoretically run into a multiplicity issue. However, our repeated tests are performed to solve an equation, rather than to formally compare different duration arms, making this less problematic. Bauer et al. 7 discussed a similar version of this serial gate-keeping strategy, showing that it has strong control over type 1 error.
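One way to read the sequential comparison described above is as a scan from the longest duration downwards that stops at the first duration whose upper bound exceeds the margin. The sketch below encodes that reading; the scan order, the stopping rule, the function name and the numerical values are illustrative assumptions rather than details taken from the paper.

```python
def shortest_acceptable_duration(upper_bounds, control, margin):
    """Scan durations from longest to shortest and stop at the first failure.

    upper_bounds maps each shorter duration to the upper confidence bound of
    the risk difference (control cure rate minus cure rate at that duration).
    """
    recommended = control  # the control duration is acceptable by construction
    for duration in sorted(upper_bounds, reverse=True):
        if upper_bounds[duration] > margin:
            break  # gate closes: shorter durations are not examined
        recommended = duration
    return recommended


# Hypothetical upper bounds for a trial with a 20-day control and a 10% margin.
bounds = {18: 0.03, 16: 0.05, 14: 0.08, 12: 0.12, 10: 0.09}
print(shortest_acceptable_duration(bounds, control=20, margin=0.10))
```

In this made-up example the scan stops at 12 days, so 14 days is recommended even though the bound at 10 days happens to fall below the margin; a shorter duration is never accepted after a longer one has failed.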

When the model used to estimate the duration-response curve is correct, we expect the bootstrap duration CI method to estimate a confidence interval for

Alternative objectives

Thus far, we assumed that the trial objective was to find the minimum duration

Fixed rate

Instead of considering a fixed difference in cure rate compared to control, one might simply want to identify the duration

This may be reasonable when there is good prior information on the expected cure rate

Fixed risk ratio

Analogously to a standard non-inferiority trial, the margin

For example, if we wanted to preserve 90% of the effect of treatment at

The acceptability frontier

While non-inferiority trials naturally have a single non-inferiority margin

where

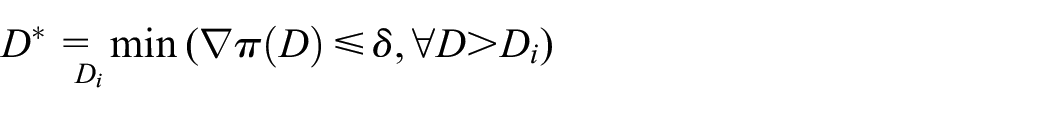

Maximum acceptable gradient

Instead of targeting a specific cure rate, or difference in cure rate, investigators might be interested in the gradient of the duration-response curve. If we expect the curve to asymptote after a certain duration

For example, in the scenarios above, we found that

Simulations

We compared the methods presented above in a simulation study designed using the recently proposed ‘

Aims

The main aim is to compare different strategies of drawing inference from the duration-response curve. Specific questions are: are bootstrap methods necessary, or does the simpler delta method suffice? Is the fixed-2 fractional polynomial analysis preferable? How problematic is the multiplicity issue with the bootstrap CI method?

Data-generating mechanisms

The DURATIONS design aims to be resilient to the true underlying duration-response relationship. Consequently, we generate data under sixteen different scenarios that are listed and plotted in the additional material online, including the eight scenarios in Quartagno et al. (2018). These reflect a wide range of possible duration-response relationships, including both those generated by fractional polynomials and those generated from sigmoid functions (which are not strictly within the fractional polynomial paradigm). Across scenarios, the optimal duration’s proximity to the nearest integer varies, to explore the effect this may have on results. For comparisons, we re-scale the

Estimation targets

We initially assume the estimation target of interest is the duration

Methods

We compare the four different methods of estimating

Performance measures

The choice of performance measures is not straightforward. Unlike most randomised trial designs, our DURATIONS randomised design does not define a null hypothesis to test. This is because the longest treatment duration we randomise patients to is already known to be (usually highly) effective, and our aim is only to find the most appropriate shorter duration. Under very simple assumptions, that is, that the duration-response curve is continuous and monotonic, we know that either there is an integer duration

Given this, and having defined our estimation target, we define three main performance measures of interest:

1. Type-1 Error: in this context, we define type-1 error as the probability that our trial ends up recommending a duration

2. Optimal Power: there are several ways to define power compared to standard hypothesis testing. We define

3. Acceptable Power: designing a trial to reach high levels of Optimal Power might be difficult, but one might be interested in simply finding an effective duration that is shorter than the recommended one, even if it is not necessarily the

In addition, we measure performance by considering the distribution of all the recommended durations

Different estimation targets

We performed additional simulations to explore the operating characteristics of the bootstrap duration CI method with each of the different estimation targets. We analysed the same 1000 datasets generated for the 16 scenarios in our base case simulations, with

Results

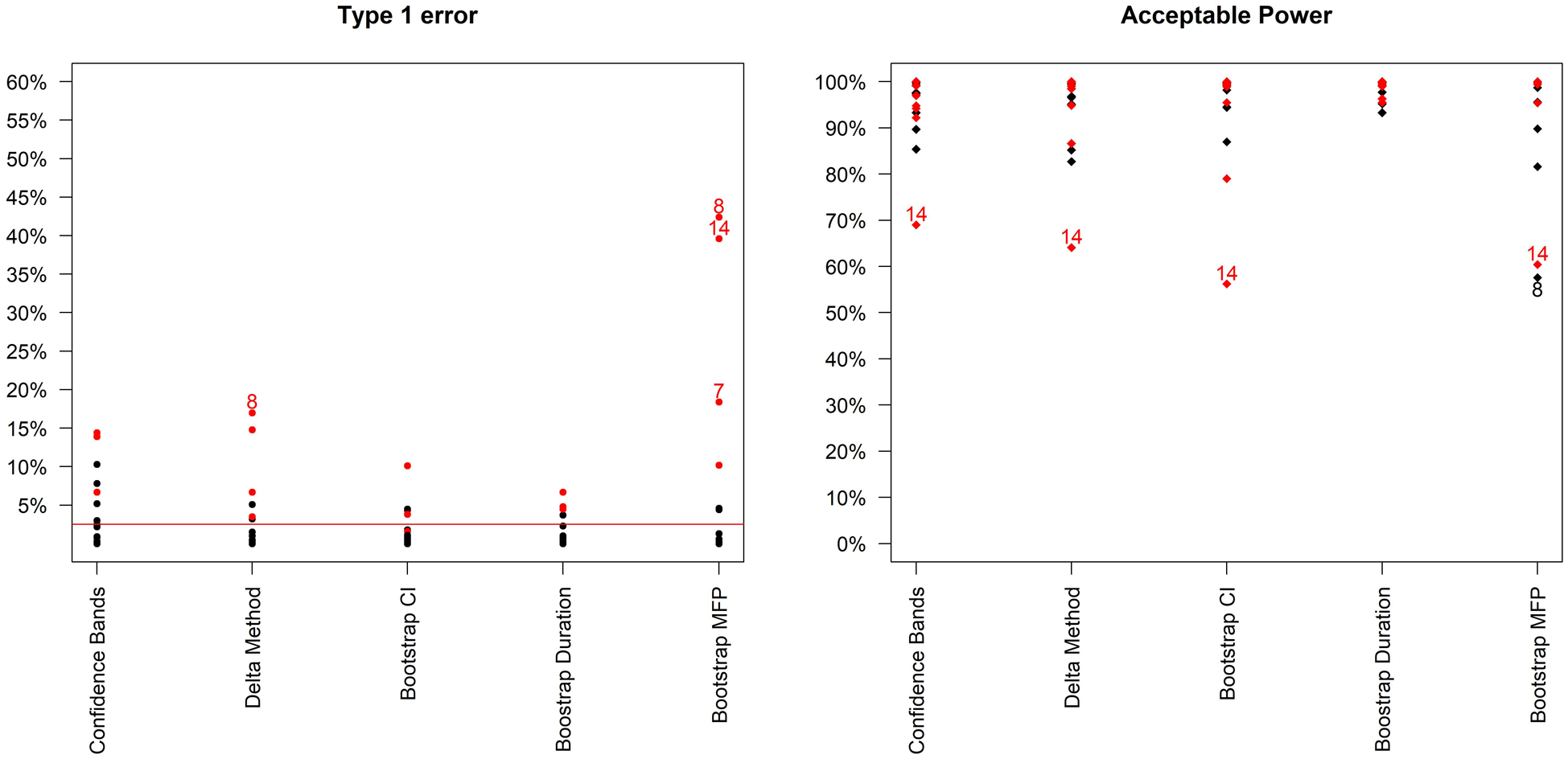

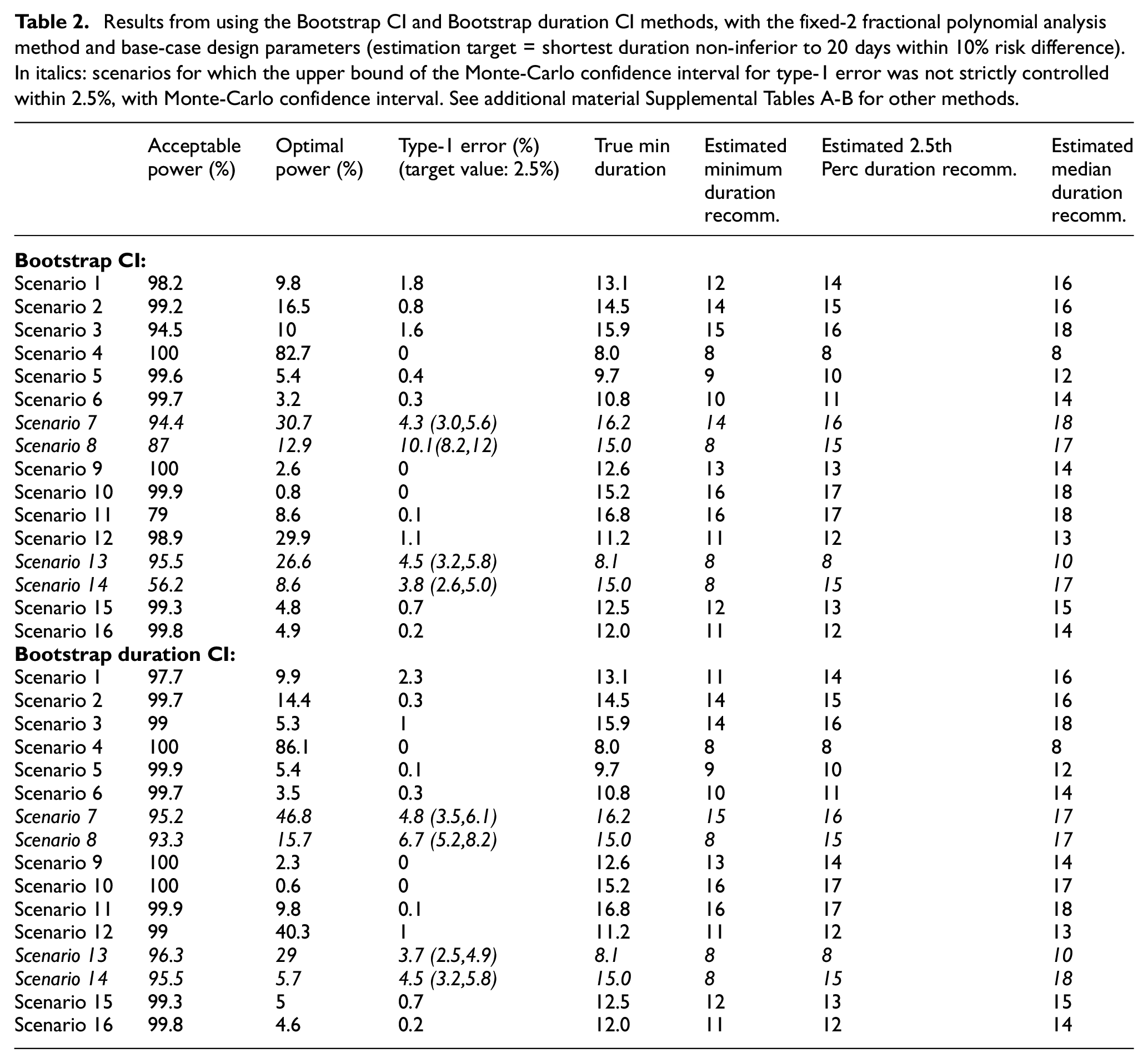

Figure 2 compares type-1 error and acceptable power across the different methods. It is immediately clear that type-1 error is not adequately controlled under certain scenarios. These scenarios are mainly those where the curvature at the optimal duration is positive, that is, those for which steepness of the curve is increasing at the optimal duration. These are arguably not the most likely scenarios in our settings, as we expect to be investigating part of the duration-response curve where the curve is asymptotic; nevertheless, it is preferable to use bootstrap methods that provide better inference by taking into account model selection variability. Differences between the two bootstrap methods are less marked (Table 2, and Supplemental Figure b in additional online material), although type-1 error is generally slightly lower using the bootstrap duration CI method.

Type-1 error and acceptable power (probability of recommending any sufficiently effective shorter duration) of the 5 analysis methods across the 16 simulation scenarios. Bootstrap MFP uses the Bootstrap duration CI method, but using the standard fractional polynomial approach as the analysis method (as in R package mfp). Scenarios leading to type 1 error > 15% or Acceptable Power < 70% are indexed. In addition, scenarios where the curvature is positive at the optimal duration are in red.
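The two quantities plotted in this figure can be computed from the durations recommended across simulated trials. The sketch below assumes that a type-1 error means recommending a duration shorter than the true minimum acceptable one, and takes acceptable power as the proportion of trials recommending an acceptable duration shorter than control, following the caption. The function name and the example values are hypothetical.

```python
def performance_measures(recommended, true_minimum, control):
    """Summarise simulated recommendations into two proportions.

    Assumed reading: a type-1 error is a recommendation shorter than the true
    minimum acceptable duration; acceptable power counts recommendations that
    are acceptable and shorter than the control duration.
    """
    n = len(recommended)
    type1_error = sum(d < true_minimum for d in recommended) / n
    acceptable_power = sum(true_minimum <= d < control for d in recommended) / n
    return type1_error, acceptable_power


# Ten hypothetical simulated trials; true minimum 12 days, control 20 days.
recommended = [12, 14, 10, 20, 14, 12, 16, 20, 12, 14]
type1_error, acceptable_power = performance_measures(recommended, 12, 20)
print(type1_error, acceptable_power)
```

Trials that recommend the control duration itself count towards neither quantity, which is why the two proportions need not sum to one.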

Results from using the Bootstrap CI and Bootstrap duration CI methods, with the fixed-2 fractional polynomial analysis method and base-case design parameters (estimation target = shortest duration non-inferior to 20 days within 10% risk difference). In italics: scenarios for which the upper bound of the Monte-Carlo confidence interval for type-1 error was not strictly controlled within 2.5%, with Monte-Carlo confidence interval. See additional material Supplemental Tables A-B for other methods.

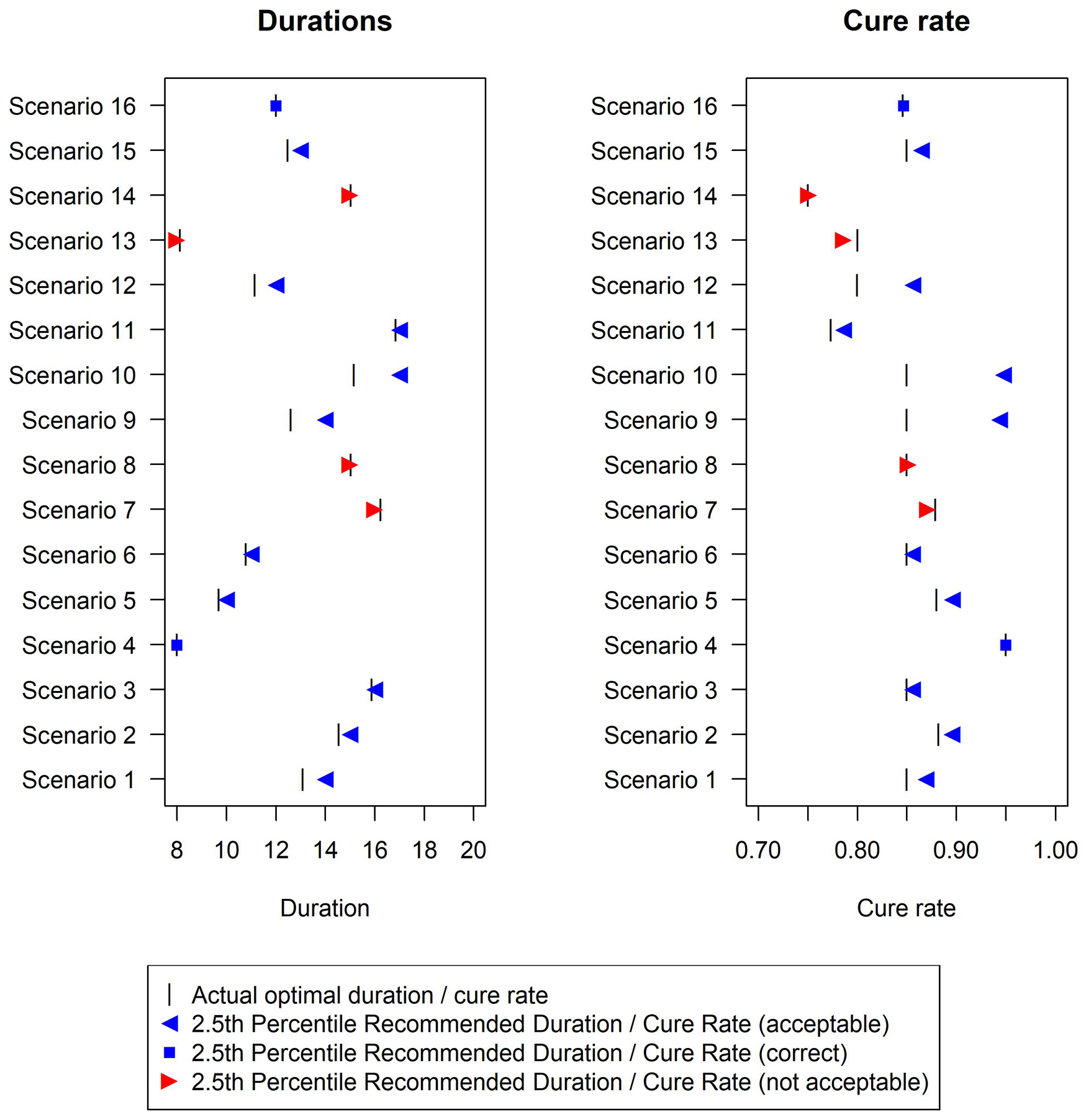

In terms of the analysis model, the fixed-2 fractional polynomial method, which is used in all analysis methods except for the last reported method in Figure 2 (and Supplemental Table E in additional material), is preferable with regard to type-1 error. This is because it selects the best fitting model, while standard fractional polynomials seek a parsimonious model. Results were similarly less satisfactory when standard fractional polynomials were used with the bootstrap CI method (results not shown). There are only a few scenarios under which the two bootstrap methods fail to control the type-1 error within 2.5%, namely scenarios 7, 8, 13 and 14 (Figure 3). However, this figure shows that the difference between the estimated minimum duration and the actual minimum acceptable duration is small (≤1 day) in these scenarios and that the 2.5th percentiles of recommended durations (and associated cure rate) are very close to the optimal duration (and corresponding cure rate).
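Because each type-1 error is estimated from a finite number of simulated trials, judging whether it is controlled within 2.5% involves Monte Carlo uncertainty. The sketch below uses a normal-approximation interval for a proportion; the paper does not state which interval was used, so this choice is an assumption, as are the function name and the example count of 20 errors in 1000 simulations.

```python
import math


def monte_carlo_interval(n_events, n_sims, z=1.96):
    """Normal-approximation interval for a proportion estimated by simulation."""
    p = n_events / n_sims
    se = math.sqrt(p * (1 - p) / n_sims)
    return p, max(0.0, p - z * se), min(1.0, p + z * se)


# Hypothetical scenario: 20 type-1 errors observed in 1000 simulated trials.
estimate, lower, upper = monte_carlo_interval(n_events=20, n_sims=1000)
strictly_controlled = upper <= 0.025
print(f"{estimate:.3f} ({lower:.4f}, {upper:.4f}) controlled={strictly_controlled}")
```

In this made-up case the point estimate is below 2.5% but the upper bound is not, so the scenario would not count as strictly controlled.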

Duration recommended and associated cure rate across the 16 simulation scenarios using the Bootstrap duration CI method, with base-case design parameters (estimation target = shortest duration non-inferior to 20 days within 10% risk difference). The vertical bar indicates the true minimum effective duration.

In terms of power, differences are not as pronounced, and all methods achieve very good acceptable power (>90%) under most scenarios. Of note, the simulated sample size (

The only scenario where optimal power exceeds 80% is Scenario 4 (Table 2, Supplemental Tables A-D in additional material), that is, constant cure rate at every duration. In this scenario, optimal power can be broadly interpreted as the power of a non-inferiority trial of

Finally, conclusions are similar using

Different estimation targets

Results (Supplemental Tables D-G and Figure c in the additional material) show reasonable performance for the first three alternative estimation targets. For the maximum gradient target, type-1 errors are large, and further analysis refinements would be necessary to use this.

Analysis example

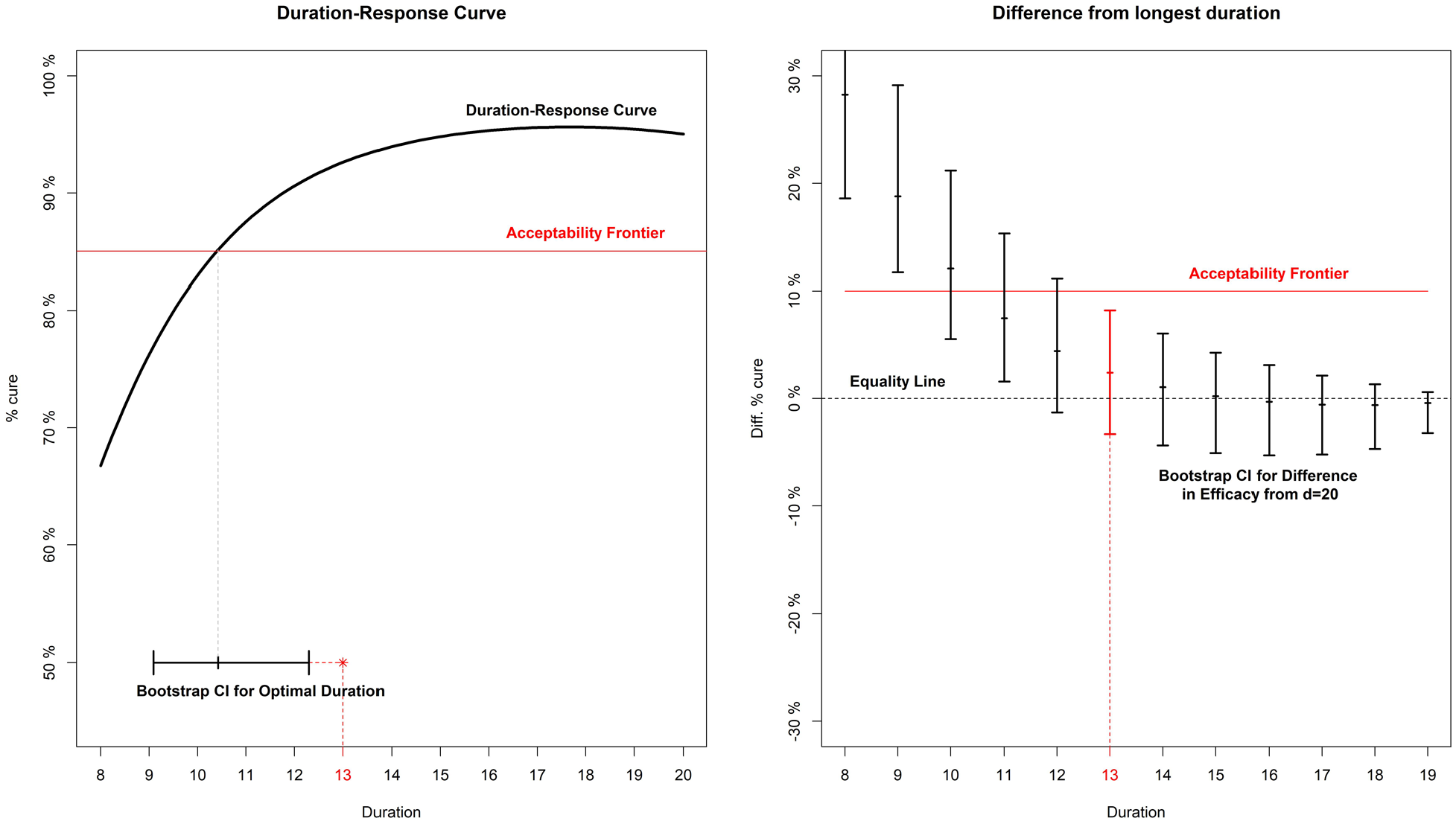

Given the simulation results, here we sketch our favoured three-step approach to analysing a DURATIONS trial. First, an acceptability frontier should be defined, answering the question ‘what would the non-inferiority margin be for a trial comparing the longest duration to each shorter one?’. In this example, we assume a reasonable non-inferiority margin is 10% cure rate difference compared to the control duration, as in the base-case scenario of our simulations (Figure 4). Second, we run our fixed-2 fractional polynomial algorithm to estimate the duration-response curve (black solid line in Figure 4). Third, we use the bootstrap (BCa method) 11 to find the confidence interval either around our estimated optimal duration

Analysis example for a hypothetical trial. On the left panel, the duration-response curve is estimated and then a bootstrap CI is built around the point where it crosses the acceptability frontier. On the right panel, bootstrap CIs are built around the difference in efficacy (cure rate) between each arm and the longest (d = 20).
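The left-panel analysis can be sketched in a few lines under strong simplifying assumptions. This is not the authors' code: a least-squares curve that is linear in log-duration stands in for the fixed-2 fractional polynomial model, and a percentile bootstrap interval stands in for the BCa interval. The simulated data, the seed and all function names are hypothetical.

```python
import math
import random
import statistics

CONTROL = 20   # longest duration (days), as in the base case
MARGIN = 0.10  # step 1: frontier = cure rate at control minus 10%


def fit_log_curve(durations, outcomes):
    """Step 2 (stand-in): least-squares fit of cure = a + b * log(duration)."""
    n = len(durations)
    z = [math.log(d) for d in durations]
    z_mean, y_mean = sum(z) / n, sum(outcomes) / n
    szz = sum((zi - z_mean) ** 2 for zi in z)
    if szz < 1e-12:  # a single duration in the sample: return a flat curve
        return y_mean, 0.0
    slope = sum((zi - z_mean) * (yi - y_mean) for zi, yi in zip(z, outcomes)) / szz
    return y_mean - slope * z_mean, slope


def crossing_duration(slope, shortest):
    """Duration where the fitted curve meets the frontier, kept in the trial range."""
    if slope <= 0:  # curve never drops by the margin: shortest arm is acceptable
        return float(shortest)
    d_star = CONTROL * math.exp(-MARGIN / slope)
    return min(float(CONTROL), max(float(shortest), d_star))


def bootstrap_interval(durations, outcomes, n_boot, rng):
    """Step 3 (stand-in): percentile interval for the crossing duration."""
    n, shortest = len(durations), min(durations)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        _, slope = fit_log_curve([durations[i] for i in idx], [outcomes[i] for i in idx])
        estimates.append(crossing_duration(slope, shortest))
    cuts = statistics.quantiles(estimates, n=40)  # cut points at 2.5%, ..., 97.5%
    return cuts[0], cuts[-1]


# Hypothetical trial data: 500 patients, 7 arms, made-up true cure curve.
rng = random.Random(7)
arms = [8, 10, 12, 14, 16, 18, 20]
durations = [arms[i % len(arms)] for i in range(500)]
outcomes = [1 if rng.random() < 0.55 + 0.13 * math.log(d) else 0 for d in durations]

_, slope = fit_log_curve(durations, outcomes)
point = crossing_duration(slope, min(arms))
lower, upper = bootstrap_interval(durations, outcomes, n_boot=500, rng=rng)
print(f"estimated crossing: {point:.1f} days, 95% interval ({lower:.1f}, {upper:.1f})")
```

With this log-linear stand-in the crossing point has a closed form, which keeps the sketch short; a more flexible curve would need a numerical root search at this step.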

Discussion

In this article, we have compared different strategies for drawing inference from a duration-response curve estimated using a DURATIONS randomised trial design. We defined quantities analogous to type-1 error and power in this setting and found that a method based on bootstrap re-sampling, used to estimate the duration associated with a specific cure rate difference from control, has good inferential properties when combined with a fixed-2 fractional polynomial analysis method. This is therefore our recommended approach.

One issue with the standard non-inferiority design for identifying optimal treatment duration is the potential for so-called ‘bio-creep’, that is, the erosion of efficacy from sequentially testing shorter durations for non-inferiority, iteratively updating the control duration to one previously shown to be non-inferior. 12 One advantage of the DURATIONS design is that it avoids this problem, as long as all the durations are evaluated in the same trial. Another advantage is its resilience; in a standard non-inferiority trial, whenever a single design parameter turns out to have been badly misjudged, the whole trial can quickly lose power or interpretability. By contrast, the DURATIONS design has been developed to be flexible enough to maintain good properties against a wide range of duration-response curves.

Extensions

Design and analysis of randomised trials often intersect, so an analysis decision (how to analyse and draw inference from the observed data) also has design implications (how to best design a trial that we aim to analyse in a particular way). Future work will therefore investigate how to design a DURATIONS randomised trial that we aim to analyse with the methods presented here, particularly considering the key challenge that the error rates depend on the true duration-response curve, which is unknown at the point of design.

We focused on binary outcomes, but similar methods could be easily used for continuous and survival outcomes, with the only additional complication of having to derive a suitable estimation target. When developing the target based on the acceptability frontier, we assumed that this frontier was subjectively drawn by the analyst, based on assumptions about the trade-offs between shortening duration and loss of treatment efficacy. An alternative could be to derive the acceptability frontier using available data on the secondary advantages of shorter durations. For example, if the goal was to design a DURATIONS trial in Hepatitis C, the acceptability frontier could be built using data on costs.

Although the methods presented here were motivated by trials whose aim was to optimise treatment duration in large Phase III-type trials, the same methods could be used to optimise treatment dose in smaller Phase II-type trials. There is a large literature on methods for these smaller dose-finding trials, and some of the methods introduced in those settings could be adapted to our design. For example, several papers have investigated dose selection using gatekeeping strategies. 7,13,14 One important difference is that in our application, there is generally already an accepted maximum duration,

Future work could include investigating the effect of including additional covariates in the fractional polynomial model, for example age or sex, if there was evidence that the optimal duration might vary depending on these factors. It could also investigate using an adaptive design, to allow for closure of poorly performing arms (i.e. those at the lowest durations). 4

For tuberculosis and related settings, where the optimal duration might be investigated for a new drug or new regimen, it is important to investigate the best way to include a formal comparison with an independent control treatment of fixed duration, for example with the standard 6-month tuberculosis treatment course with rifampicin, isoniazid, pyrazinamide and ethambutol.

Conclusion

We recently proposed the DURATIONS randomised trial design as an alternative to the standard two-arm non-inferiority design when the goal is to optimise treatment duration. In this article, we have investigated the operating characteristics of various methods of drawing inference from the duration-response curve and found that a method based on bootstrap to estimate a duration associated with a specific cure rate has good properties and is an appealing choice. Using this analysis method, DURATIONS randomised trials could help identify better treatment durations in an optimal way across many illnesses. 4

Supplemental Material

Supplemental material, Quartagno_Additional_Material__-_2nd_revision-1 for The DURATIONS randomised trial design: Estimation targets, analysis methods and operating characteristics by Matteo Quartagno, James R Carpenter, A Sarah Walker, Michelle Clements and Mahesh KB Parmar in Clinical Trials

Footnotes

Code

The code used for the simulations will be made available on the GitHub page of the first author.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Medical Research Council [MC_UU_12023/29]. ASW is an NIHR Senior Investigator. The views expressed are those of the author(s) and not necessarily those of the NHS, the NIHR, or the Department of Health.

Supplemental material

Supplemental material for this article is available online.

References
