Abstract

Background:

Experimental treatments pass through various stages of development. If a treatment passes through early-phase experiments, the investigators may want to assess it in a late-phase randomised controlled trial. An efficient way to do this is to add it as a new research arm to an ongoing trial while the existing research arms continue, in a so-called multi-arm platform trial. The familywise type I error rate is often a key quantity of interest in any multi-arm platform trial. We set out to clarify how it should be calculated when new arms are added to a trial some time after it has started.

Methods:

We show how the familywise type I error rate, any-pair and all-pairs powers can be calculated when a new arm is added to a platform trial. We extend the Dunnett probability and derive analytical formulae for the correlation between the test statistics of the existing pairwise comparison and that of the newly added arm. We also verify our analytical derivation via simulations.

Results:

Our results indicate that the familywise type I error rate depends on the allocation ratio and on the amount of control arm information shared between the pairwise comparisons (i.e. individuals for continuous and binary outcomes, and primary outcome events for time-to-event outcomes). The familywise type I error rate is driven more by the number of pairwise comparisons and the corresponding (pairwise) type I error rates than by the timing of the addition of the new arms. The familywise type I error rate can be estimated using Šidák’s correction if the correlation between the test statistics of pairwise comparisons is less than 0.30.

Conclusions:

The findings we present in this article can be used to design trials with pre-planned deferred arms or to add new pairwise comparisons within an ongoing platform trial where control of the pairwise error rate or familywise type I error rate (for a subset of pairwise comparisons) is required.

Introduction

Many recent developments in clinical trials are aimed at speeding up research by making better use of resources. Phase III clinical trials can take several years to complete in many disease areas, requiring considerable resources. During this time, a promising new treatment which needs to be tested may emerge. The practical advantages of incorporating such a new experimental arm into an existing trial protocol have been clearly described before,1–4 not least because it obviates the often lengthy process of initiating and launching a new trial which may compete for patients with the existing one. One trial using this approach is the STAMPEDE trial 5 in men with high-risk prostate cancer. STAMPEDE is a multi-arm multi-stage (MAMS) platform trial that was initiated with one common control arm and five experimental arms assessed over four stages. Five new experimental arms have been added since its conception 1 – see the next section for further design details. This was done within the paradigm of a ‘platform’ that has a single master protocol in which multiple treatments are evaluated over time. It offers flexible features such as early stopping of accrual to treatments for lack-of-benefit or adding new research treatments to be tested during the course of a trial. There might also be scenarios when, at the design stage of a new trial, another experimental arm is planned to be added after the start of the trial, that is, a planned addition. An example of this scenario is the RAMPART trial in renal cancer – see “Results” section and online Supplemental Appendix for design details. In some platform designs, however, the addition of the new experimental arm would be intended but not specifically planned at the start of the platform, that is, it is unplanned and arises opportunistically at a later stage. In other words, in the planned scenario, the addition of a new research arm at a later stage is clearly foreseen at the design stage, whereas in the unplanned scenario the opportunity or need to assess a further comparison becomes apparent during the trial.

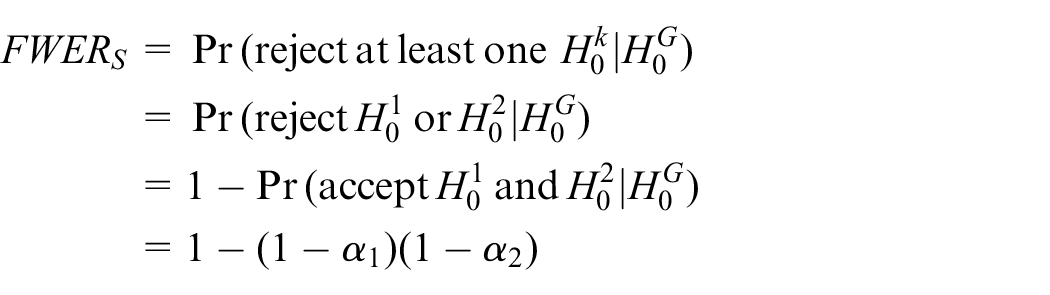

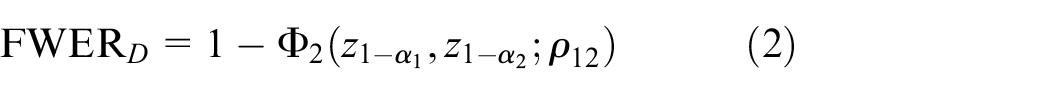

The type I error rate is one of the key quantities in the design of any clinical trial. Two measures of type I error in a multi-arm trial are the pairwise type I error rate (PWER) and familywise type I error rate (FWER). The PWER is the probability of incorrectly rejecting the null hypothesis for the primary outcome of a particular experimental arm at the end of the trial, regardless of other experimental arms in the trial. The FWER is the probability of incorrectly rejecting the null hypothesis for the primary outcome for at least one of the experimental arms from a set of comparisons in a multi-arm trial. It gives the type I error rate for a set of pairwise comparisons of the experimental arms with the control arm. In trials with multiple experimental arms, the maximum possible FWER often needs to be calculated and known – see the review by Wason et al. 6 for details. In some multi-arm trials, this maximum value needs to be controlled at a predefined level. This is called a strongly controlled FWER as it covers all eventualities, that is, all possible hypotheses. 7 Dunnett 8 developed an analytical formula to calculate the FWER in multi-arm trials when all the pairwise comparisons of experimental arms against the control arm start and conclude at the same time. However, it has been unclear how to calculate the FWER when new experimental arms are added during the course of a trial.
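As a rough numerical companion to these definitions, the Python sketch below computes the FWER for K pairwise comparisons under the simplifying assumption that the test statistics are independent, together with the Bonferroni upper bound. The function names and the independence assumption are ours, introduced only for illustration; they are not the article's method for comparisons that share control arm patients.

```python
def fwer_independent(pwer: float, k: int) -> float:
    """FWER for k independent comparisons, each tested at the same one-sided PWER.

    Assumption (illustrative): the k test statistics are mutually independent.
    """
    return 1.0 - (1.0 - pwer) ** k


def fwer_bonferroni_bound(pwer: float, k: int) -> float:
    """Bonferroni upper bound on the FWER; valid for any correlation structure."""
    return min(1.0, k * pwer)


if __name__ == "__main__":
    # Show how the FWER grows with the number of comparisons at PWER = 0.025.
    for k in range(1, 6):
        print(k, round(fwer_independent(0.025, k), 5), fwer_bonferroni_bound(0.025, k))
```

The independent-case value always sits at or below the Bonferroni bound, and the gap between the two widens slowly as K grows.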

The purpose of this article is threefold. First, we describe how the FWER, disjunctive (any-pair) and conjunctive (all-pairs) powers – see “Methods” section for their definitions – can be calculated when a new experimental arm is added during the course of an existing trial with continuous, binary, and time-to-event outcomes. Second, we describe how the FWER can be strongly controlled at a prespecified level for a set of pairwise comparisons in both planned (i.e. the added arm is planned at the design stage) and unplanned (e.g. platform designs) scenarios. Third, we explain how the decision to control the PWER or the FWER in a particular design involves a subtle balancing of both practical and statistical considerations. 9 This article outlines these issues and provides guidance on whether to emphasise the PWER or the FWER in different design scenarios when adding a new experimental arm.

The structure of the article is as follows. In the next section, the design of the STAMPEDE platform trial is presented. In “Methods” section, we explain how the FWER, disjunctive, and conjunctive powers are computed when a new experimental arm is added to an ongoing trial. In “Results” section, we present the outcome of our simulations to verify our analytical derivation. We also show two applications in both planned (i.e. RAMPART trial in renal cancer) and platform design (i.e. STAMPEDE trial) settings. We then propose strategies that can be applied to strongly control the FWER when adding new experimental arms to an ongoing platform trial in scenarios where such a control is required. Finally, we discuss our findings.

An example: STAMPEDE trial

STAMPEDE 1 is a multi-arm multi-stage (MAMS) platform trial for men with prostate cancer at high risk of recurrence who are starting long-term androgen deprivation therapy. In a four-stage design, five experimental arms with treatment approaches previously shown to be safe were compared with a control arm regimen. In this trial, the primary analysis was carried out at the end of stage 4, with overall survival as the primary outcome. Stages 1 to 3 used an intermediate outcome measure of failure-free survival. As a result, the corresponding hypotheses at interim stages played a subsidiary role – that is, used for lack-of-benefit analysis on an intermediate outcome, not for making claims of efficacy. We, therefore, focus here on the primary hypotheses on overall survival at the final stage – for designs with both lack-of-benefit and efficacy stopping boundaries see the articles by Blenkinsop et al. 10 and Blenkinsop and Choodari-Oskooei. 11

Recruitment to the original arms began late in 2005 and was completed early in 2013. The design parameters for the primary outcome at the final stage were a (one-sided) pairwise significance level of 0.025, power of 0.90, and the target hazard ratio of 0.75 on overall survival which required 401 control arm deaths (i.e. events on overall survival). An allocation ratio of

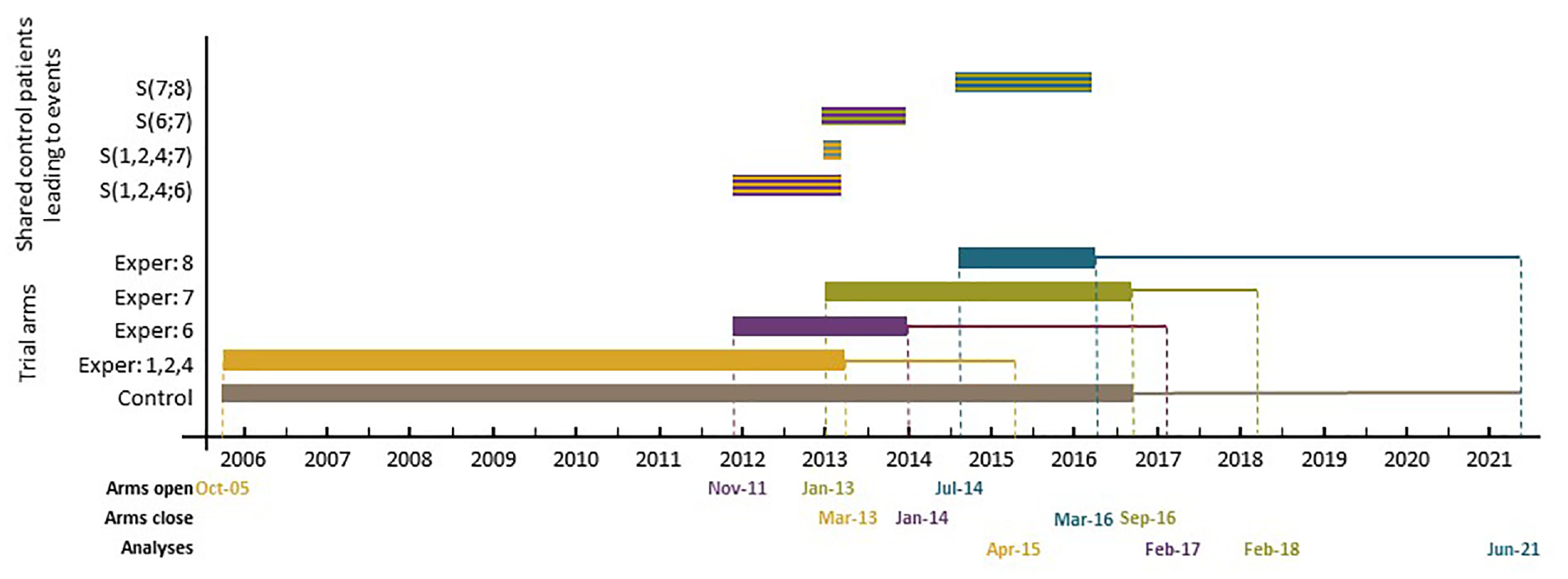

Since November 2011, five new experimental arms have been added to the original design. Figure 1 presents the timelines for different arms, including three of those added later. Note that patients allocated to a new experimental arm are only compared with patients randomised to the control arm contemporaneously, and recruitment to the new experimental arm(s) continues for as long as is required. Therefore, the analysis and reporting of the new comparisons will be later than for the original comparisons. Figure 1 also shows the recruitment periods when the pairwise comparisons of the newly added experimental arms with the control overlap with each other as well as with those of the original comparisons – see the top section of Figure 1.

Figure 1. Schematic representation of the control and experimental arm timelines in the STAMPEDE trial.

Methods

In this section, we first present the formulae for the correlation of the two test statistics when one of the comparisons is added later in trials with continuous, binary, and survival outcomes. We then describe how Dunnett’s test can be extended to compute the FWER, as well as conjunctive and disjunctive powers when a new arm is added mid-course of a two-arm trial.

Type I error rates when adding a new arm

In a two-arm trial, the primary comparison is between the control group (C) and the experimental treatment (E). The parameter

The efficient score statistic for

In practice, the progress of a trial can be assessed in terms of ‘information time’ t because it measures how far through the trial we are. 12 In the case of continuous and binary outcomes, t is defined as the total number of individuals accrued so far divided by the total sample size. In survival outcomes, it is defined as the total number of events that have occurred so far divided by the total number of events required by the planned end of the trial. 12

In all cases

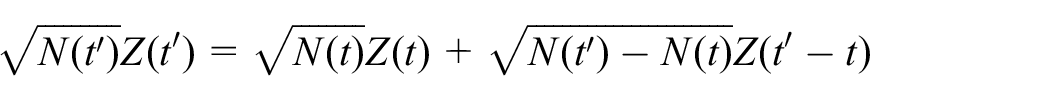

Furthermore, the Z-test statistic can be expressed in terms of the efficient score statistic S and Fisher’s information as

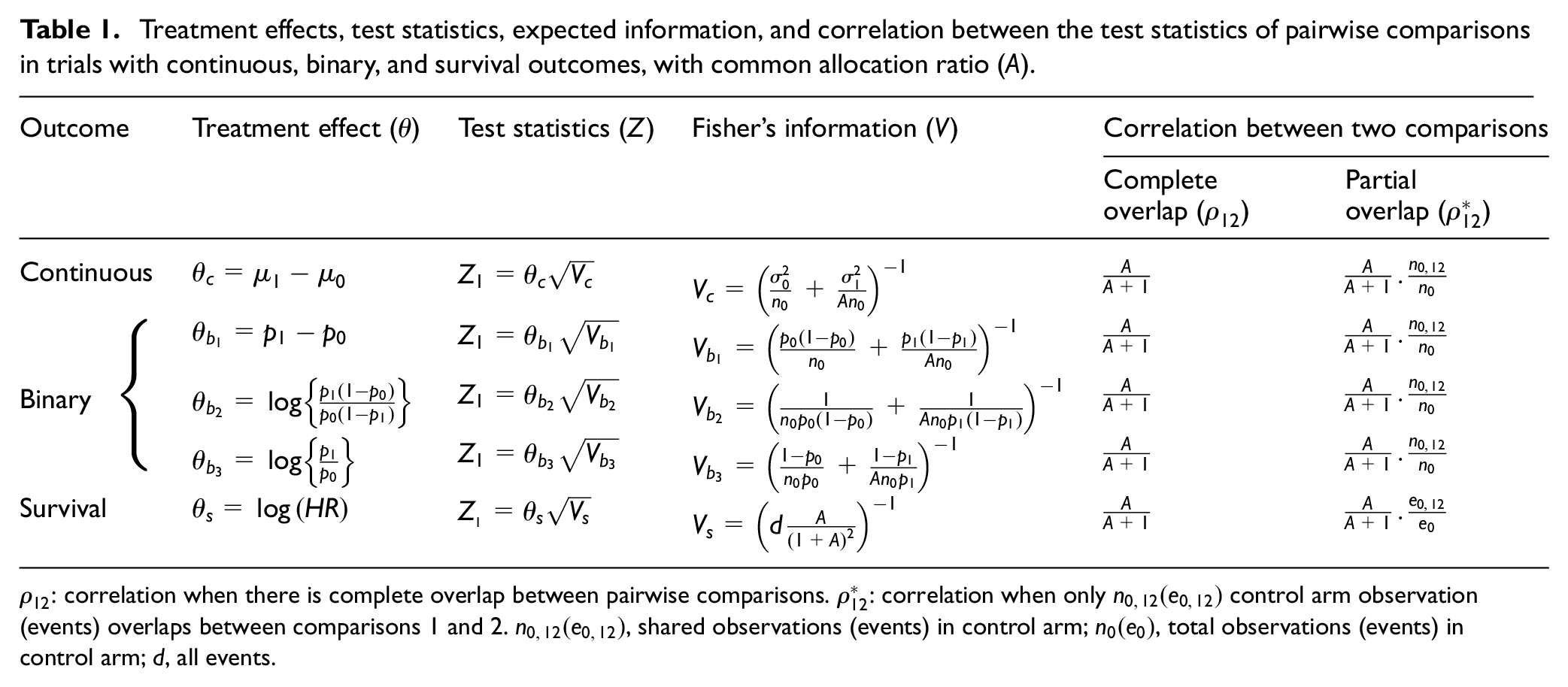

Table 1. Treatment effects, test statistics, expected information, and correlation between the test statistics of pairwise comparisons in trials with continuous, binary, and survival outcomes, with common allocation ratio (A).

If a different experimental arm,

When

where subscript S stands for Šidák. If the control arm observations are shared between the two pairwise comparisons, one can replace the term

where

The formula for

where

Note that the factor

Our analytical derivation shows that equation (3) applies to both continuous and binary outcome measures. However, in survival outcomes, the ratio

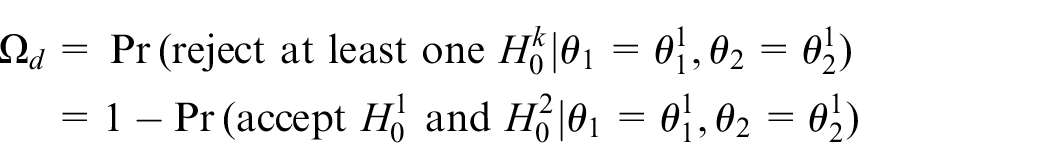

Power when adding a new arm

The power of a clinical trial is the probability that under a particular target treatment effect

when the two comparisons,

If

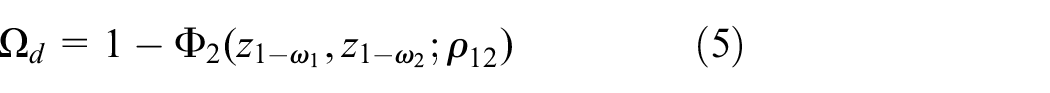

Conjunctive (all-pairs) power is the probability of showing a statistically significant effect under the targeted effects for all comparison pairs. When the two tests are independent, conjunctive power

Given the correlation

If a new experimental arm is added later on, the corresponding formula for

Results

In this section, we first show the results of our simulations to explore the validity of equation (3) to estimate

Simulation design

In our simulations, we considered a hypothetical three-arm trial with one control, C, and two experimental arms (

As in the STAMPEDE trial, the comparison set of patients for the deferred experimental arm

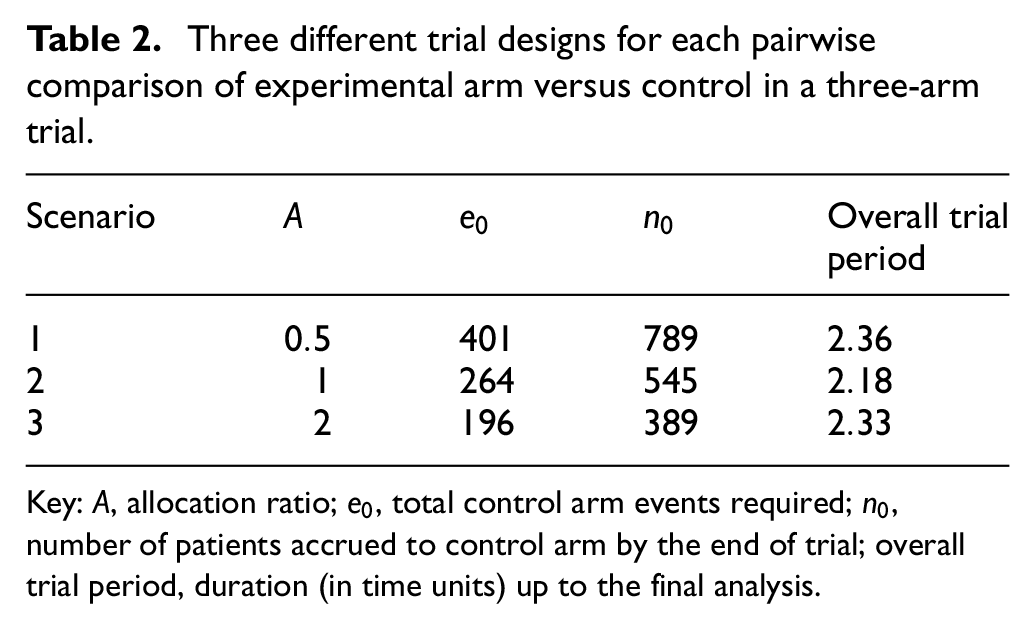

Three different trial designs for each pairwise comparison of experimental arm versus control in a three-arm trial.

Key: A, allocation ratio;

Finally, the main aim of our simulation study is to explore the impact of the timing of adding a new experimental arm on the correlation structure and the value of the FWER. For this reason, only one original comparison was included in our simulations. In the following sections and Discussion, we discuss how the FWER can be strongly controlled and address other relevant design issues, when more pairwise comparisons start at the beginning.

Simulation results

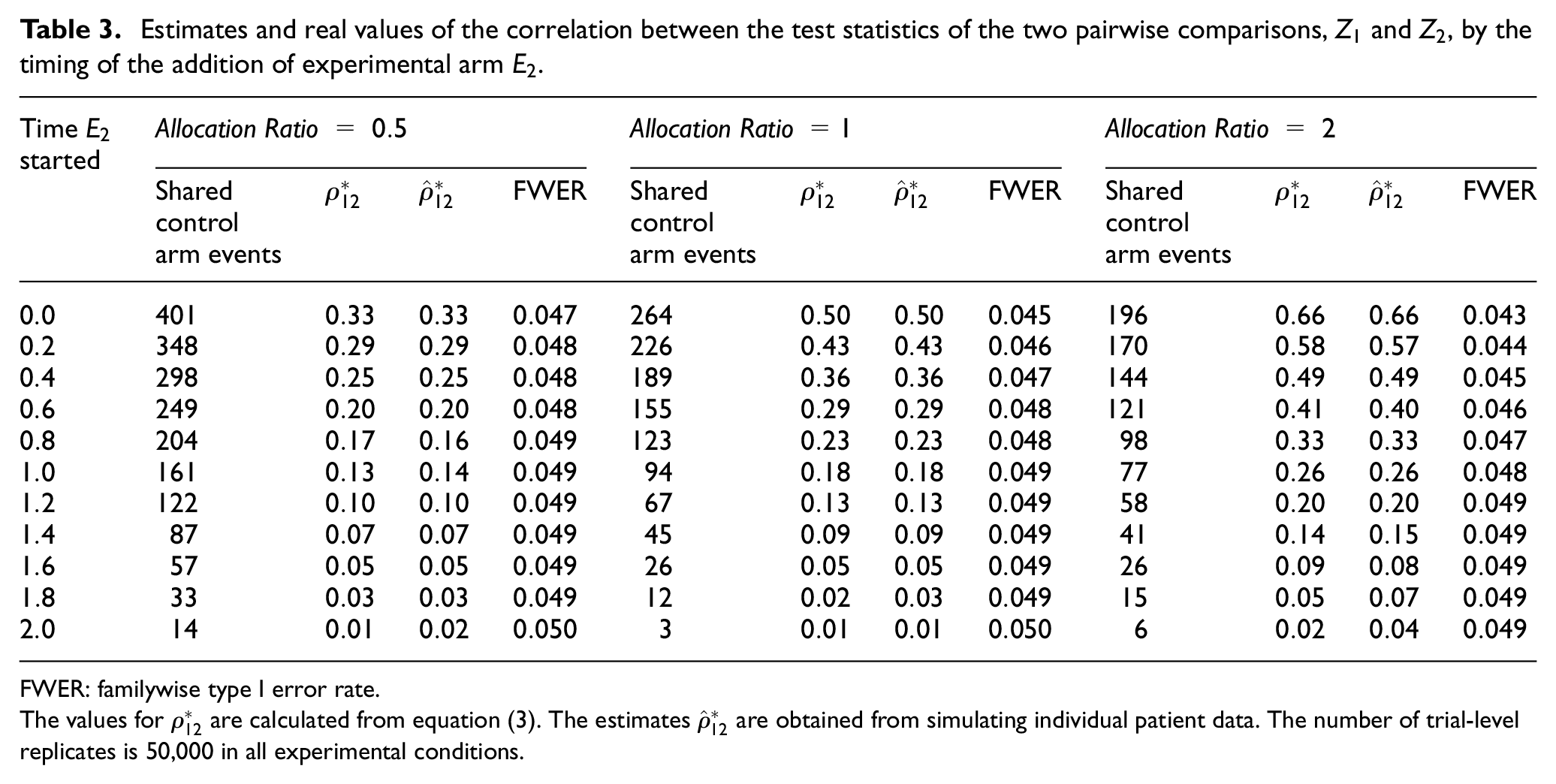

The results are summarised in Tables 3 and 4. Table 3 shows the values of the correlation between the test statistics of the two pairwise comparisons as computed from the corresponding equation for

Table 3. Estimates and real values of the correlation between the test statistics of the two pairwise comparisons,

FWER: familywise type I error rate.

The values for

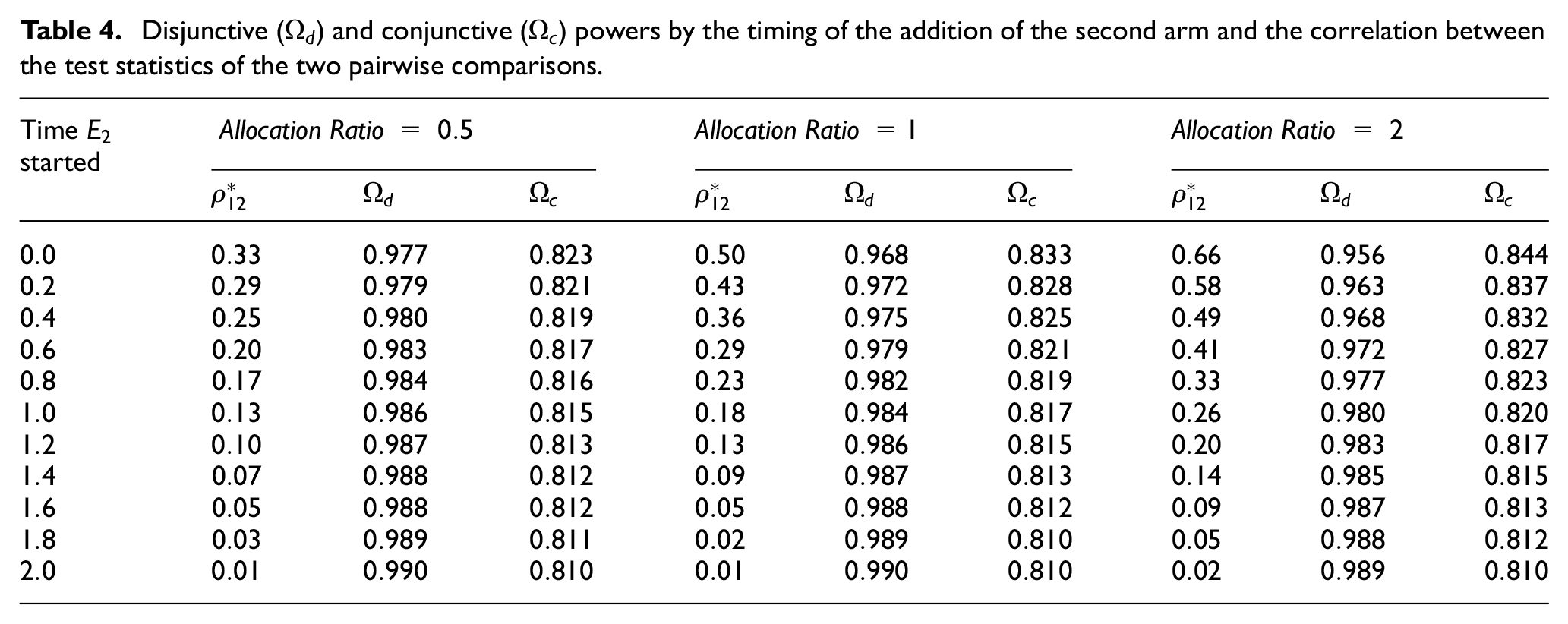

Disjunctive (

The results for each allocation ratio indicate that when

Furthermore, it is evident from our simulations that when more individuals are allocated to the control arm (i.e.

Finally, Table 4 presents the disjunctive and conjunctive powers in each scenario. The results indicate that the timing of the addition of the new arm has more impact on both types of power than on the FWER. Nonetheless, the impact is still relatively low, particularly when the allocation ratio is less than one. However, the degree of overlap affects the two types of power in opposite directions: conjunctive power decreases with smaller overlap, whereas disjunctive power increases.
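The opposite movement of the two powers can be illustrated with a small Monte Carlo sketch at the level of the test statistics. This is not the simulation design used in the article, which generated trial data. It assumes, for illustration only, that the two Z statistics are bivariate normal with unit variances and correlation `rho`, that each comparison has the same marginal power, and that the null is rejected for large Z. The function name `simulate_powers` and its defaults are hypothetical.

```python
import random
from math import sqrt
from statistics import NormalDist


def simulate_powers(alpha=0.025, power=0.90, rho=0.3, n_sims=200_000, seed=1):
    """Monte Carlo estimate of (disjunctive, conjunctive) power for two comparisons.

    Assumption: the Z statistics are bivariate normal with correlation rho and a
    common mean chosen so that each one-sided test at level alpha has the stated
    marginal power.
    """
    std = NormalDist()
    critical = std.inv_cdf(1.0 - alpha)
    mean = critical + std.inv_cdf(power)  # shift giving marginal power = `power`
    rng = random.Random(seed)
    any_reject = 0
    all_reject = 0
    for _ in range(n_sims):
        e1 = rng.gauss(0.0, 1.0)
        # Build a second standard normal error with correlation rho to e1.
        e2 = rho * e1 + sqrt(1.0 - rho * rho) * rng.gauss(0.0, 1.0)
        reject1 = mean + e1 > critical
        reject2 = mean + e2 > critical
        any_reject += reject1 or reject2
        all_reject += reject1 and reject2
    return any_reject / n_sims, all_reject / n_sims


if __name__ == "__main__":
    for rho in (0.0, 0.3, 0.5):
        disjunctive, conjunctive = simulate_powers(rho=rho)
        print(rho, round(disjunctive, 3), round(conjunctive, 3))
```

Under these assumptions, a lower correlation (less overlap) raises the any-pair estimate and lowers the all-pairs estimate, in line with the pattern described above.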

FWER of STAMPEDE when new arms were added

In this section, we calculate the correlation between the test statistics of different pairwise comparisons in STAMPEDE when new arms are added. The newly added therapies look to address different research questions than those of the original comparisons. When the first new experimental arm was added, STAMPEDE had only three experimental arms open to accrual because arms

To calculate the correlation between different test statistics, we needed to estimate (or predict) the shared control arm events of the corresponding pairwise comparisons – Section B of Supplemental Appendix explains this in detail. As the results in Table 1 in Supplemental Appendix show, only seven common control arm primary outcome events were expected to be shared between

Design application: RAMPART trial

Renal Adjuvant MultiPle Arm Randomised Trial (RAMPART) is an international phase III MAMS trial of adjuvant therapy in patients with resected primary renal cell carcinoma (RCC) at high or intermediate risk of relapse. The control arm (C), that is, active monitoring, and the first two experimental arms (

We carried out simulations to investigate the impact of the timing of adding the third experimental arm on the FWER. This was done at 2 years, 3 years, and 4 years into the two original comparisons. The simulation results confirmed our findings that the timing of the addition of

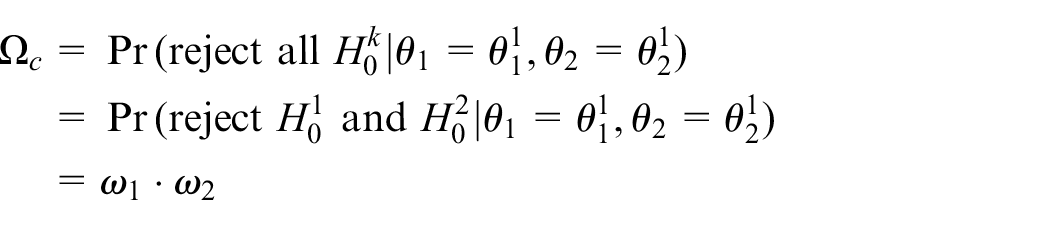

Strong control of FWER when required

Opinions differ as to whether the FWER needs to be strongly controlled in all multi-arm trials.9,6,18–21 In our view, there are cases, such as examining different doses of the same drug, where the control of the FWER might be necessary to avoid offering a specific therapy an unfair advantage of showing a beneficial effect. However, in many multi-arm trials where the research treatments in the existing and added comparisons are quite different from each other, we would argue that greater focus should be on controlling each pairwise error rate.9,22 To support this view, consider the following: if two distinct experimental treatments are compared to a current standard in independent trials, it is accepted that there is no requirement for multiple testing adjustment. 18 Therefore, it seems fallacious to impose an unfair penalty if these two hypotheses are instead assessed within the same protocol where both hypotheses are powered separately and appropriately. This is seen most clearly when the data remain entirely independent, for example, when the comparisons are non-overlapping with effectively separate control groups. Moreover, the statistical reasoning behind the multiplicity adjustment is to limit the possibility of chance as the cause of a significant finding. As Proschan and Waclawiw 19 point out, this becomes less compelling if each comparison answers a different scientific question. This seems to be an emerging consensus among the broader scientific community, as reflected in the 2014 review by Wason et al. 6 (see Figure 2 of that review).

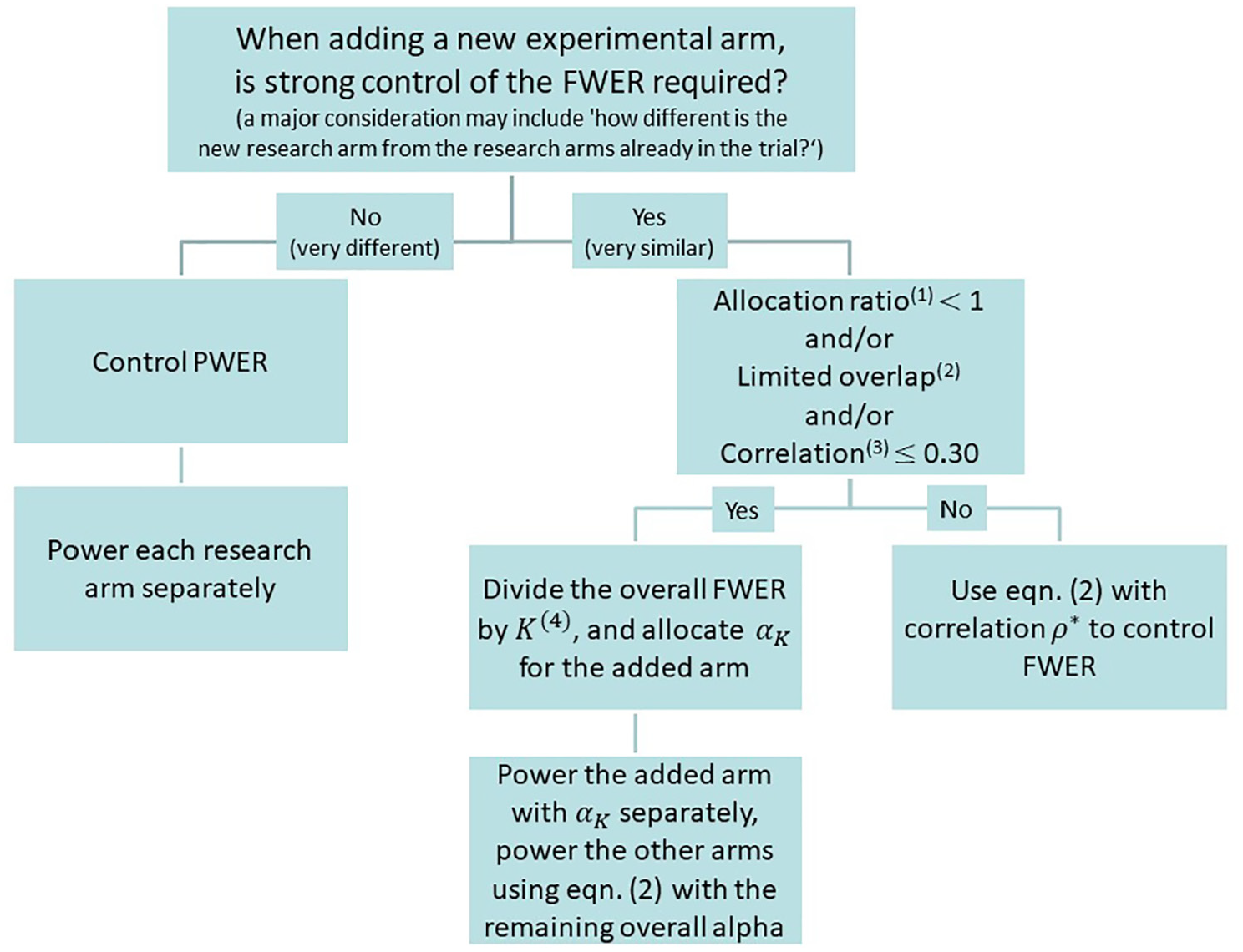

Figure 2. Strategies to control type I error rate when adding new experimental arms.

The timing of adding a new research arm can be considered to be a design parameter. Therefore, like any other design parameter, its prespecification is a prerequisite to the exact calculation of the overall type I error rate. The prespecification of the timing enables the exact calculation of the correlation structure, that is, from the formulae in Table 1, which is then used by the Dunnett method to compute the overall type I and II error rates.

However, the findings from our simulations and analytical derivations indicate that by assuming no overlap between the new research arm and that of the existing comparisons, we can relax the requirement for the prespecification of the timing with minimal impact on the FWER. The ‘no-overlap’ condition is when the FWER reaches its upper bound and is strongly controlled regardless of the timing of the addition of the new research arm. In the following, we describe how both the original comparisons and the added arm can be powered using this approach in both planned and unplanned scenarios.

Our results indicate that the timing of adding a new experimental arm to an ongoing multi-arm trial – where the allocation ratio is often one or less, that is, more patients are recruited to the control arm – is almost irrelevant in terms of changing the value of the FWER. Even in cases where there is an overlap (in terms of ‘information time’) of 60%, the impact on the increase of FWER can be negligible. The practical implication of this finding is that in cases where strong control of the FWER is required, one can simply divide the overall FWER by the total number of pairwise comparisons K, including the added arms, and take the worst-case scenario of complete independence and design the deferred arm with

Finally, we emphasise that the decision to control the PWER or the FWER (for a set of pairwise comparisons) depends on the type of research questions being posed and whether they are related in some way, for example, testing different doses or duration of the same therapy in which case the control of the FWER may be required. These are mainly practical considerations and should be determined on a case-by-case basis in the light of the rationale for the hypothesis being tested and the aims of the protocol for the trial. Once a decision has been made to strongly control (or not) the FWER, Figure 2 summarises our guidelines on how to power the added comparison to guarantee strong control of the FWER. We believe this is a logical and coherent way to assess the control of type I error in most scenarios.

Discussion

It is practically advantageous to add new experimental arms to an existing trial since it not only avoids the often lengthy process of initiating a new trial but also helps to prevent competing trials being conducted.1,2 It also speeds up the evaluation of newly emerging therapies and can reduce costs and numbers of patients required.3,4 In this article, we studied the familywise type I error rate and power when new experimental arms are added to an ongoing trial.

Our results show that under the design conditions, the correlation between the test statistics of pairwise comparisons is affected by the allocation ratio and by the amount of shared control arm information, that is, the number of shared observations in trials with continuous and binary outcomes and the number of shared primary outcome events in trials with survival outcomes. The correlation decreases if proportionately more individuals are allocated to the control arm. This correlation increases as the proportion of shared control arm information increases, and it reaches its maximum when the number of observations (in continuous and binary outcomes) or events (in survival outcomes) is the same in both pairwise comparisons. Our results also showed that the FWER is inversely related to the correlation between the pairwise test statistics: the higher the correlation, the lower the FWER.
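The inverse relationship between the correlation and the FWER can be sketched numerically for two comparisons. The code below is a minimal illustration, assuming the two Z statistics are standard bivariate normal under the global null with correlation `rho` and that each one-sided test rejects for large Z. The helper names `bvn_cdf` and `fwer_two_comparisons`, and the choice of Simpson's rule for the integral, are ours and not taken from the article.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()


def bvn_cdf(a: float, b: float, rho: float, n_steps: int = 2000) -> float:
    """P(Z1 <= a, Z2 <= b) for a standard bivariate normal with correlation rho.

    Integrates phi(x) * Phi((b - rho * x) / sqrt(1 - rho^2)) over x in [-8, a]
    with Simpson's rule; requires |rho| < 1 and an even n_steps.
    """
    lower = -8.0  # the normal density below -8 is negligible
    if a <= lower:
        return 0.0
    scale = sqrt(1.0 - rho * rho)
    step = (a - lower) / n_steps

    def integrand(x: float) -> float:
        return STD_NORMAL.pdf(x) * STD_NORMAL.cdf((b - rho * x) / scale)

    total = integrand(lower) + integrand(a)
    for i in range(1, n_steps):
        total += (4 if i % 2 else 2) * integrand(lower + i * step)
    return total * step / 3.0


def fwer_two_comparisons(alpha1: float, alpha2: float, rho: float) -> float:
    """FWER under the global null for two one-sided tests with correlated Z statistics."""
    c1 = STD_NORMAL.inv_cdf(1.0 - alpha1)
    c2 = STD_NORMAL.inv_cdf(1.0 - alpha2)
    # FWER = 1 - P(neither test rejects).
    return 1.0 - bvn_cdf(c1, c2, rho)


if __name__ == "__main__":
    for rho in (0.0, 0.1, 0.3, 0.5):
        print(rho, round(fwer_two_comparisons(0.025, 0.025, rho), 4))
```

At `rho = 0` the result reduces to the independent-case value 1 − (1 − α)², which is the largest FWER among the non-negative correlations considered here.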

We reiterate that in a platform protocol, the emphasis of the design should be on the control of the PWER if distinct research questions are posed in each pairwise comparison, particularly when there is little or no overlap between the comparisons. To support this, we would argue that the scientific community at large is increasingly judging the effects of treatments using meta-analysis rather than focusing on specific individual trial results. 23 For this purpose, the readers and reviewers are not concerned about the value of type I error for each trial or a set of such trials.

Another relevant question in a multi-arm platform protocol is what constitutes a family of pairwise comparisons. Even in multi-arm parallel group trials, as Miller 24 indicated, ‘There are no hard-and-fast rules for where the family lines should be drawn …’. In platform trials, this is even more complicated. The difficulty in specifying a family arises mainly due to the dynamic nature of a platform trial, that is, stopping of accrual to experimental treatments for lack-of-benefit and/or adding new treatments to be tested during the course of the trial. The definition of a family in this context involves a subtle balancing of both practical and statistical considerations. The practical and non-statistical considerations can be more complex in nature, hence the need for (case-by-case) assessment. However, we (and many others) believe the most important criterion is the relatedness of the research questions.6,19,22 One consideration that can help to decide the relatedness of the research questions is ‘how different is the target population for the added arm?’ Moreover, therapies that emerge over time are more likely to be distinct rather than related, for example, different drugs entirely rather than doses of the same therapy. For this reason, each hypothesis is more likely to inform a different claim of effectiveness of previously tested agents. An example is the STAMPEDE platform trial where distinct hypotheses were being tested in each of the new experimental arms, and these do not contribute towards the same claim of effectiveness for an individual drug. In this case, the chance of a false-positive outcome for either claim of effectiveness is not increased by the presence of the other hypothesis.

Although we have focused on single-stage designs, our approach can easily be extended to the multi-stage setting where the stopping boundaries are prespecified. As we have shown in RAMPART, if there are interim stages in each pairwise comparison, the correlation between the test statistics of different pairwise comparisons at interim stages also contributes to the overall correlation structure. Correlation formulae similar to those presented in Table 1 can be derived analytically (see Supplemental Appendix) to calculate the correlation structure at the interim stages. Our experience has shown that even in this case the correlation between the final stage test statistics principally drives the FWER. Our empirical investigation has indicated that even large changes in the correlation between the interim stage test statistics have minimal impact on the estimates of the FWER. Nonetheless, if researchers wish to have the flexibility of non-binding stopping guidelines, then the correlation structure can be estimated in the same manner as discussed in this article by considering the correlation between the final-stage test statistics only. In addition, in multi-stage designs, there is a chance that some of the original arms are stopped for lack-of-benefit. Even in this case, the FWER is strongly controlled at the prespecified level if the decision to add a new research arm is taken at the initial design stage, that is, planned scenario. However, in the unplanned scenario, the only way to control the FWER for the new set of pairwise comparisons is to suitably reduce the pairwise type I error rates for all the ongoing comparisons, that is, decrease their final significance level, due to the addition of the new research arm.

Furthermore, in our simulations, one experimental arm started with control at the beginning of the trial since the aim was to investigate the impact of the timing of adding new experimental arms on the correlation structure and the value of the FWER. In many scenarios such as RAMPART and STAMPEDE, more than one experimental arm starts at the same time, in which case there will be substantial overlap in information between the pairwise comparisons of these arms to control. If strong control of the FWER is required in this case, Dunnett’s correction (i.e. equation (2)) should be used to calculate the proportion of the type I error rate that is allocated to each of these comparisons, as we have done in the case of RAMPART.

Moreover, in some designs such as RAMPART, it is required to control the FWER at a prespecified level. In general, any unplanned adaptation would affect the FWER of a trial. This includes the unplanned addition of a new experimental arm. It will be possible to (strongly) control the FWER if the addition of new pairwise comparisons is planned at the design stage of an MAMS trial as we have shown in the RAMPART example. In this case, the introduction of a new hypothesis will be completely independent of the results of the existing treatments. In platform protocols in general, it becomes infeasible to control the FWER for all pairwise comparisons as new experimental treatments are added to the existing sets of pairwise comparisons.

In this article, we have investigated one statistical aspect of adding new experimental arms to a platform trial. The operational and trial conduct aspects also require careful consideration, some of which have already been addressed by Sydes et al. 1 In that article, Sydes et al. put forward a number of useful criteria that can be thought about when considering the rationale for adding any new experimental arm. For example, in the STAMPEDE trial, the decisions to add new research arms have been made independently of the accumulating data from the ongoing comparisons. In other words, the decisions to add new arms have been driven by the need to assess new treatment regimens rather than results from the ongoing comparisons. To achieve this, there should be a mechanism in place to ensure that the committee that makes the decision to add an arm is blind to the accumulating results from the ongoing comparisons. Both statistical and conduct aspects require careful examination to efficiently determine whether and when new experimental arms can be added to an existing platform trial.

Conclusion

The familywise type I error rate is mainly driven by the number of pairwise comparisons and the corresponding pairwise type I error rates. The timing of adding a new experimental arm to an existing platform protocol can have minimal, if any, impact on the FWER. The simple Bonferroni or Šidák correction can be used to approximate Dunnett’s correction in equation (2) if there is not a substantial overlap between the new comparison and those of the existing ones, or when the correlation between the test statistics of the new comparison and those of the existing comparisons is small, less than, say, 0.30.
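For a concrete sense of the two simple corrections mentioned here, the sketch below computes the per-comparison significance level implied by a target FWER and K pairwise comparisons. The function names are hypothetical, and the Šidák formula assumes independent test statistics, which is the worst case when correlations are non-negative. It does not implement Dunnett's correction.

```python
def bonferroni_alpha(target_fwer: float, k: int) -> float:
    """Per-comparison level from Bonferroni: split the target FWER equally over k tests."""
    return target_fwer / k


def sidak_alpha(target_fwer: float, k: int) -> float:
    """Per-comparison level from Sidak: exact for k independent test statistics."""
    return 1.0 - (1.0 - target_fwer) ** (1.0 / k)


if __name__ == "__main__":
    # Per-comparison levels for a one-sided target FWER of 0.025.
    for k in range(2, 6):
        print(k, round(bonferroni_alpha(0.025, k), 6), round(sidak_alpha(0.025, k), 6))
```

The Šidák level is never smaller than the Bonferroni level, but at small target values such as 0.025 the two are very close.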

Supplemental Material

sj-pdf-1-ctj-10.1177_1740774520904346 – Supplemental material for Adding new experimental arms to randomised clinical trials: Impact on error rates

Supplemental material, sj-pdf-1-ctj-10.1177_1740774520904346 for Adding new experimental arms to randomised clinical trials: Impact on error rates by Babak Choodari-Oskooei, Daniel J Bratton, Melissa R Gannon, Angela M Meade, Matthew R Sydes and Mahesh KB Parmar in Clinical Trials

Footnotes

Acknowledgements

We are grateful to Professor Cyrus Mehta, Professor Patrick Royston, Professor Ian White, and Dr Tim Morris for their comments on the earlier version of this article. We also thank both the editor and reviewers for their helpful comments on the earlier version of this manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Medical Research Council (grant number MC_UU_12023/29).

Supplemental material

Supplemental material for this article is available online.
