Abstract

Background/aims

In multi-site clinical trials, where trial data and conduct are scrutinised centrally with pre-specified triggers for visits to sites, targeted monitoring may be an efficient way to prioritise on-site monitoring. This approach is widely used in academic trials, but has never been formally evaluated.

Methods

TEMPER assessed the ability of targeted monitoring, as used in three ongoing phase III randomised multi-site oncology trials, to distinguish sites at which higher and lower rates of protocol and/or Good Clinical Practice violations would be found during site visits. Using a prospective, matched-pair design, sites that had been prioritised for visits after having activated ‘triggers’ were matched with a control (‘untriggered’) site, which would not usually have been visited at that time. The paired sites were visited within 4 weeks of each other, and visit findings are recorded and categorised according to the seriousness of the deviation. The primary outcome measure was the proportion of sites with ≥1 ‘Major’ or ‘Critical’ finding not previously identified centrally. The study was powered to detect an absolute difference of ≥30% between triggered and untriggered visits. A sensitivity analysis, recommended by the study’s blinded endpoint review committee, excluded findings related to re-consent. Additional analyses assessed the prognostic value of individual triggers and data from pre-visit questionnaires completed by site and trials unit staff.

Results

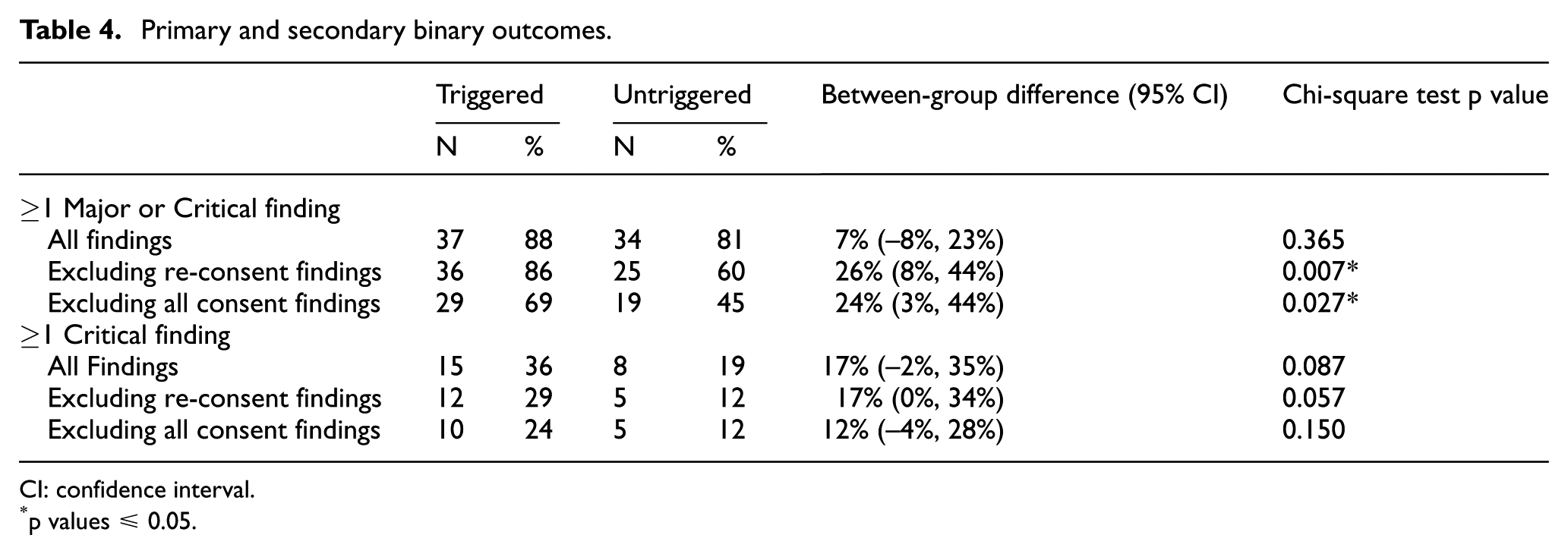

In total, 42 matched pairs of visits took place between 2013 and 2016. In the primary analysis, 88.1% of triggered visits had ≥1 new Major/Critical finding, compared to 81.0% of untriggered visits, an absolute difference of 7.1% (95% confidence interval −8.3%, +22.5%; p = 0.365). When re-consent findings were excluded, these figures reduced to 85.7% versus 59.5%, (difference = 26.2%, 95% confidence interval 8.0%, 44.4%; p = 0.007). Individual triggers had modest prognostic value but knowledge of the trial-related activities carried out by site staff may be useful.

Conclusion

Triggered monitoring approaches, as used in these trials, were not sufficiently discriminatory. The rate of Major and Critical findings was higher than anticipated, but the majority related to consent and re-consent with no indication of systemic problems that would impact trial-wide safety issues or integrity of the results in any of the three trials. Sensitivity analyses suggest triggered monitoring may be of potential use, but needs improvement and investigation of further central monitoring triggers is warranted. TEMPER highlights the need to question and evaluate methods in trial conduct, and should inform further developments in this area.

Keywords

Introduction

Clinical trial monitoring is defined by the International Conference on Harmonisation (ICH) as ‘The act of overseeing the progress of a clinical trial, and ensuring that it is conducted, recorded and reported in accordance with the protocol, Standard Operating Procedures, Good Clinical Practice (GCP), and the applicable regulatory requirements’, and aims to protect the rights and well-being of trial participants, while ensuring protocol compliance and data integrity. 1

Monitoring often relies on-site visits, an approach recommended in ICH GCP guidance: ‘In general there is a need for on-site monitoring …’ (section 5.18.3). 1 Through that guidance, and following high-profile data fraud cases, 2 on-site monitoring has become a standard means of ensuring GCP compliance since the 1990s, at least in industry-sponsored trials. Visit activities commonly include intensive document review, in particular, source data verification (SDV): the process of checking case report form data against source notes. While this may be done in a sample of patients, or on selected data items on all patients, many trials’ site visits aim to check 100% of trial data. 3 Intensive on-site monitoring has been highlighted as inefficient and associated with significant costs4–9 which are passed down to patients and healthcare systems as drug development expenses.2,4

A growing body of evidence shows that 100% SDV is of limited value.10–13 Trialists14,15 and regulators16–18 have expressed support for ‘risk-based monitoring’, recognising that not all clinical trials require the same approach to quality control and assurance. This is reflected in the update of ICH GCP E6. 19

One risk-based approach is ‘triggered’ or ‘targeted’ on-site monitoring. It was suggested as a possible option for trials of investigational medicinal products classed as low or medium risk by the Medicines and Healthcare products Regulatory Agency, Medical Research Council (MRC), and UK Department of Health. 16 An initial risk assessment determines the key risks resulting from the intervention and the design of the trial and strategies to minimise those risks are specified. If triggered monitoring is selected, over the course of the trial sites are prioritised for visits based on central monitoring ‘triggers’: predefined indicators such as number of protocol deviations, case report form return rates, uncommon patterns of serious adverse event reporting or subjective assessments of site performance. Such targeted monitoring is also mentioned as a possible approach in the update to ICH GCP E6. 19

Although triggered monitoring approaches are not uncommon 3 and have clear potential benefits in terms of resource-use, there is no empirical evidence to show how well they work. TEMPER was designed to provide such evidence.

Methods

Study design

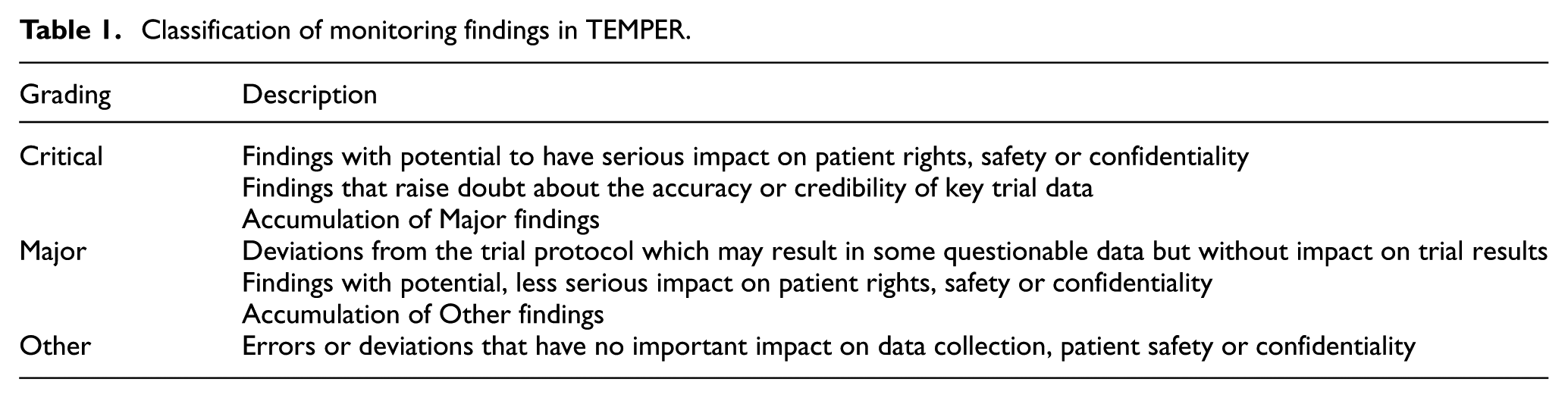

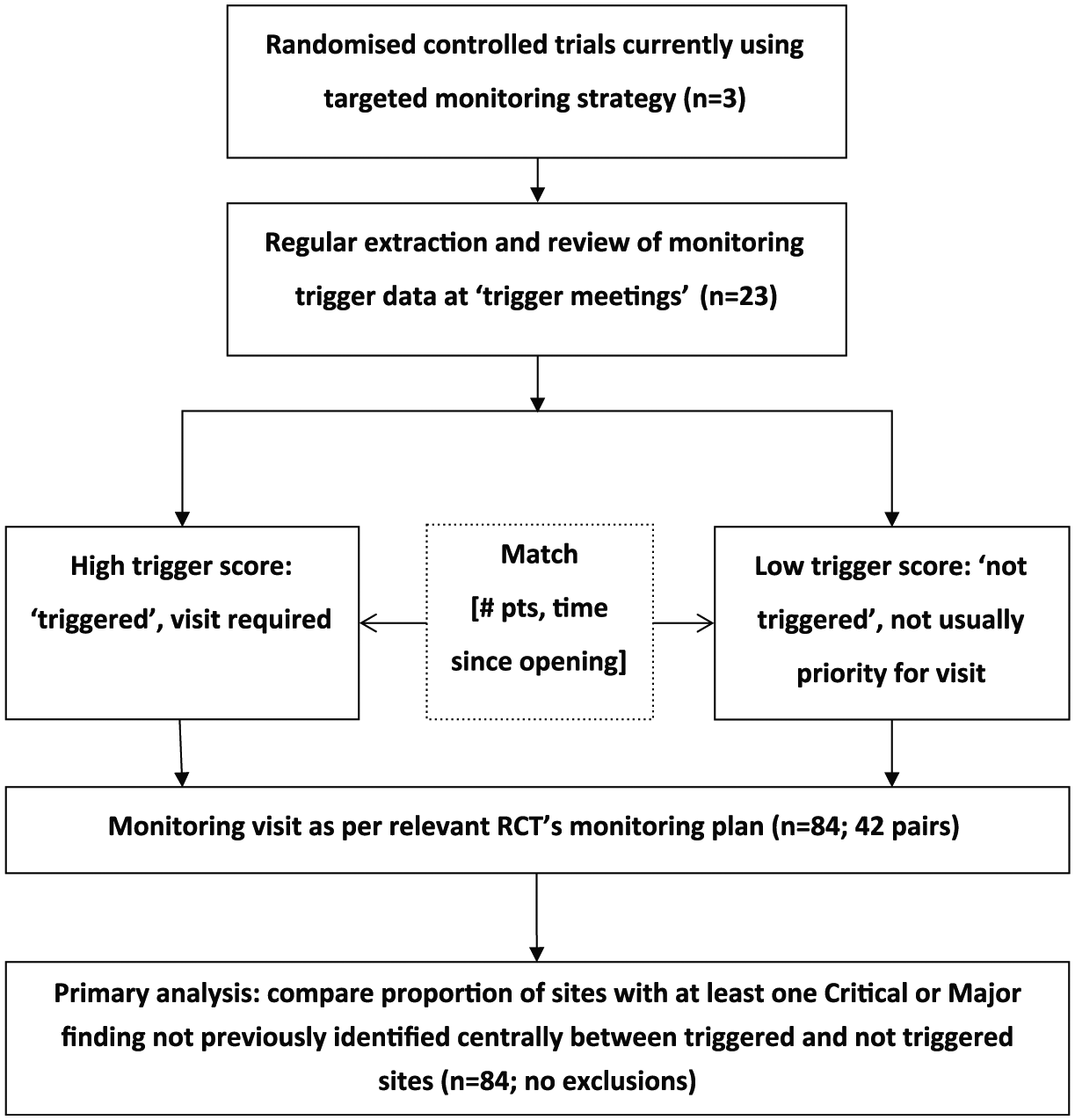

TEMPER is a prospective, matched-pair study assessing the value of triggered monitoring in distinguishing sites with important protocol or GCP compliance issues not identified centrally. Trials unit teams used triggers to identify sites to visit (‘triggered visit’). Each of these was matched with an ‘untriggered site’, and the paired sites were visited and monitored according to the trial’s monitoring plan. Site visit findings were categorised according to a standard classification based on a high-level summary (Table 1). We compared the proportion of triggered and untriggered visits with ≥1 Major/Critical finding not identified through central monitoring or previous visits. The study design is summarised in Figure 1. We developed a bespoke system, the TEMPER Management System (TEMPER-MS) to support implementation of the study. 20

Classification of monitoring findings in TEMPER.

TEMPER study design.

Ethics committee advice deemed no ethical review was required because the additional site visits were within the scope of each trial’s monitoring plan. To ensure visits were arranged and conducted as per normal practice, site staff were not explicitly informed about the TEMPER study or the reason for a monitoring visit.

Trial selection

Included trials were conducted and monitored by the MRC Clinical Trials Unit at University College London; sponsored by the UK MRC; employing a triggered monitoring strategy; investigational medicinal products risk category B (‘somewhat higher risk than standard medical care’) according to MRC/Department of Health/Medicines and Healthcare products Regulatory Agency risk classification. 16 Trials also needed to have started recruitment before 2012 and plan follow-up to continue until after 2014.

Triggers

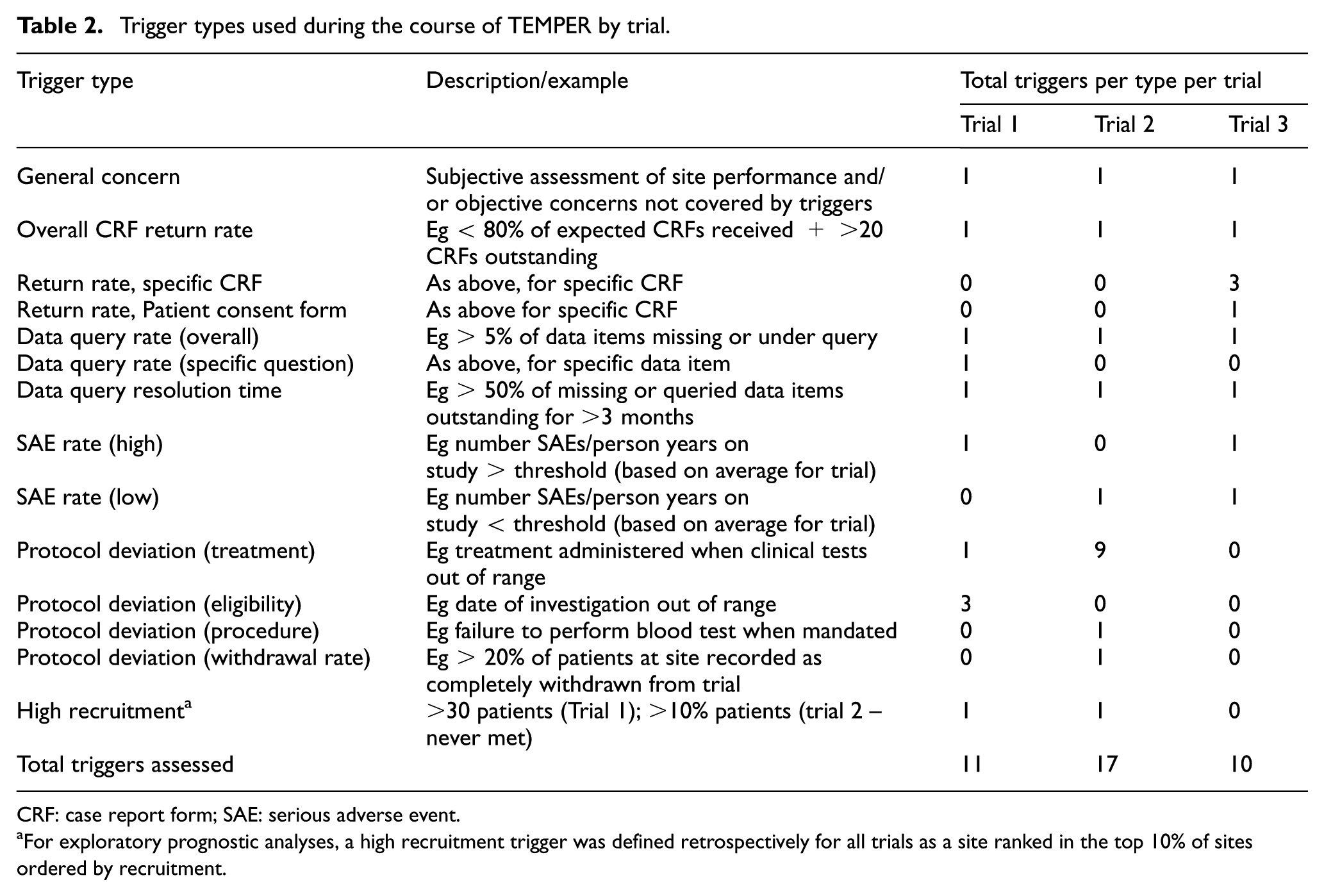

Triggers were based on those in use in the selected trials, with some additional quantification where thresholds of concern had not previously been defined. The triggers were mainly quantitative although subjective ‘general concerns’ could also be added to each site’s overall trigger score in response to, for example, worrying contact with a site or other more objective concerns not captured by the trial’s triggers. As risks and monitoring needs changed over time, some new triggers were added and/or thresholds modified (e.g. one trial demanded a higher threshold for data return ahead of an interim analysis). Table 2 summarises and exemplifies the trigger types used by trial at the completion of TEMPER.

Trigger types used during the course of TEMPER by trial.

CRF: case report form; SAE: serious adverse event.

For exploratory prognostic analyses, a high recruitment trigger was defined retrospectively for all trials as a site ranked in the top 10% of sites ordered by recruitment.

Site selection

We scheduled regular ‘trigger meetings’ (3–6 monthly or more frequently if required) with trials unit teams to review trigger data. Sites’ trigger scores were calculated by the TEMPER-MS and reviewed by the trial teams to decide which sites to visit. Chosen sites usually had the highest total trigger scores, but general concerns sometimes led to other sites being prioritised. All trigger meeting discussions were documented.

To replicate real-life prioritisation by resource-limited trial teams, we asked teams to distinguish between sites that would definitely be visited in normal practice (‘triggered-and-usually-visited’); and those, usually with lower trigger scores, considered lower priority for a visit at that time (‘triggered-but-not-usually-visited’); both are grouped as ‘triggered visits’ for the primary analysis.

The TEMPER-MS matching algorithm proposed ‘untriggered’ sites to visit, minimising differences in (1) number of patients and (2) time since first patient randomised, while maximising differences in trigger score (see Appendix in Supplementary Material and Diaz-Montana et al. 20 ). The closest match was accepted, unless there were clearly documented reasons not to. For example, an untriggered site that had been visited very recently outwith TEMPER was replaced with the next closest match.

Site visits

To maximise similarity between triggered and untriggered monitoring visits, they were all conducted according to the trial’s monitoring plan with the same planned checks at all visits in addition to follow-up of any specific concerns raised by the trials unit team. These were broadly similar across the trials in the study: monitoring usually included SDV on a sample of patients and review of consent forms, pharmacy documents and facilities, and Investigator Site Files.

We aimed to conduct all visits within 3 months after the trigger meeting, with paired visits as close together as possible, and no more than 28 days apart. The triggered visit was planned before its untriggered match to help ensure that any changes to monitoring visit approach implemented by the trial team at the time of the triggered visit could be reflected in the paired visit. All monitors performed the same roles at site visits. Triggered visits were attended by TEMPER-specific and trial-specific monitors, untriggered visits only by TEMPER monitors. The same GCP and monitoring training was undertaken both by the trial team members attending visits and the monitors; the latter also received trial-specific training. A TEMPER-specific monitoring visit report ensured consistent reporting. Reports were written and followed-up according to each trial’s monitoring plan and trials unit procedures.

Data collection, finding classification and endpoint definition

Findings were classified as ‘Critical’, ‘Major’ or ‘Other’ (see Table 1), with their final grade taking account of any relevant response from the site to the monitoring report. All Critical and Major findings were further categorised as new or ‘already known prior to the monitoring visit’ (e.g. through central monitoring or self-reporting by the site). The latter were excluded from the primary outcome, but included in the monitoring report to allow follow-up to resolution by the trial team as required. The protocol provided detailed guidance on appropriate gradings (see Online Supplementary Material). This was updated to incorporate new findings as they arose. Selected findings (related to consent and missed serious adverse events in particular), if repeated to a predefined level, could be ‘upgraded’ from ‘Other’ to ‘Major’ or from ‘Major’ to ‘Critical’. In these cases, one additional finding of the higher grade was added to the total findings for that visit.

Outcome measures

The primary outcome measure was the proportion of sites with ≥1 Major or Critical finding not already identified through central monitoring or a previous visit (‘new’ findings). Secondary outcomes were number of Major and Critical findings, proportion of sites with ≥ 1 Critical finding, number of Critical findings and category of Major/Critical findings.

Sample size

Sample size calculations, based on review of previous trials unit monitoring reports from trials using triggered monitoring, assumed ∼70% of triggered visits would produce ≥1 new Major/Critical finding. To detect an absolute reduction of ≥30% (from 70% to 40%) in untriggered sites, with 80% power and two-sided significance level of 5%, required ∼84 site visits in 42 matched pairs. We sought balanced numbers of visits across trials and required ≥50% of each trial’s triggered visits to be ‘triggered and usually visited’. Ten additional, unmatched visits were made to high recruiting sites not otherwise selected for visits, to allow further assessment of ‘high recruitment’ as a predictor of findings in secondary analyses.

Analysis

The primary analysis was a two-group comparison of the proportion of sites with ≥1 new Major/Critical finding in the triggered versus untriggered groups. Analyses of total numbers of Major and Critical findings used one-sample t-tests of the within-pair differences. Prior to the first analysis, the TEMPER Endpoint Review Committee recommended a sensitivity analysis to exclude all findings related to re-consent, as these typically communicated minor changes in side-effect profile that could have been communicated without requiring re-consent. A second, exploratory analysis, excluded

In secondary analyses, using all 94 visits, and with additional information of potential prognostic value obtained from questionnaires completed by the Trials unit and site staff prior to the monitoring visits (see Online Supplementary Material), the ability of individual triggers and site characteristics to predict on-site findings was assessed by comparing the proportion of visits with the outcome of interest (Eg ≥ 1 Major/Critical finding) at sites where a trigger had/had not fired. This utilised chi-square tests (with trend for ordered categories) or Fisher’s exact test as appropriate for univariate analyses and logistic regression for multivariate analyses.

Consistency

To reduce intra- and inter-observer bias, in addition to Monitor training and the use of the categorisation system, a Consistency Monitoring Group, comprising trials unit staff from the participating trials’ teams, discussed suitable gradings for findings. The Endpoint Review Committee comprised four experienced trialists from the trials unit with no direct link to the trials, and reviewed, for all visit reports and blind to whether the visit was triggered or untriggered, all Major and Critical findings and a selection of Other findings. They also performed cross-visit reviews of similar sorts of findings to ensure consistency of grading. The categorisation appendix and grading of relevant findings from previous visits were updated if required following Consistency Monitoring Group or Endpoint Review Committee discussions.

Results

Three trials were included; all randomised, multicentre (>100 sites) cancer treatment trials with a time-to-event outcome measure (recurrence-free or overall survival), planned accrual of >1000 patients and paper-based data collection.

Site selection and matching

In total, 23 trigger meetings and 84 paired monitoring visits took place between 2013 and 2016 (Figure 1). Triggered and untriggered sites had mean trigger scores of 4.0 (range of 2–6) and 0.8 (range of 0–3), respectively. The matching algorithm gave mean within-pair differences (triggered–untriggered) of -1.4 months (70.1 vs 71.5) in time since first randomisation and +8.5 (49.9 vs 41.4) in patients randomised.

Visit conduct

Three visits were >1 week outside the 3-month visit window. Five untriggered visits were >28 days after their triggered match, the longest gap being 4 months; the continued suitability of the untriggered match as a control was confirmed at the next trigger meeting. One untriggered visit was before its triggered match because of a short-notice postponement.

The median (interquartile range) number of trials unit staff attending triggered and untriggered visits was 3 (2–3) and 2 (2–2), respectively (Wilcoxon p < 0.01). Visit conduct within pairs was similar in most respects: full Investigator Site File checks were done at 25/42 triggered and 27/42 untriggered visits (p = 0.65), pharmacy facility checks at 25 and 29, respectively (p = 0.36), while the median (interquartile range) number of patients undergoing SDV was 4 (3–5) and 4 (3–5), respectively (paired t-test p = 0.08). However, more consent forms were checked at triggered (median (interquartile range): 44 (27–77)) than untriggered visits (35 (18–70)) (paired t-test p = 0.01).

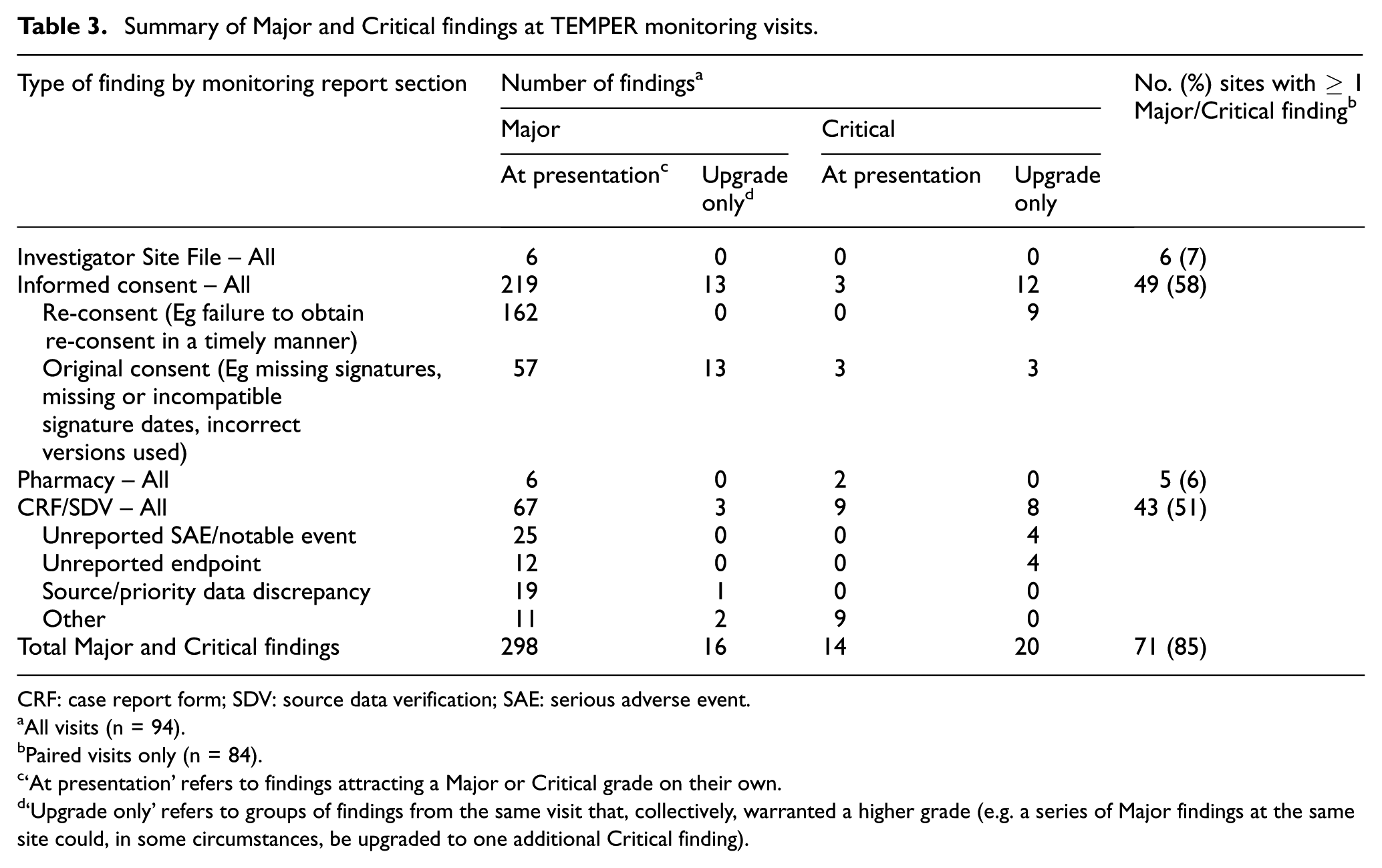

Primary outcome: Major/Critical findings

Table 3 summarises all Major and Critical findings, and Table 4 summarises the primary outcome; 88.1% of triggered visits had ≥1 new Major/Critical finding, compared to 81.0% of untriggered visits, an absolute difference of 7.1% (95% confidence interval (CI) –8.3%, +22.5%; p = 0.365). When re-consent findings were excluded, these figures reduced to 85.7% versus 59.5% (difference = 26.2% (95% CI 8.0%, 44.4%; p = 0.007)); while excluding

Summary of Major and Critical findings at TEMPER monitoring visits.

CRF: case report form; SDV: source data verification; SAE: serious adverse event.

All visits (n = 94).

Paired visits only (n = 84).

‘At presentation’ refers to findings attracting a Major or Critical grade on their own.

‘Upgrade only’ refers to groups of findings from the same visit that, collectively, warranted a higher grade (e.g. a series of Major findings at the same site could, in some circumstances, be upgraded to one additional Critical finding).

Primary and secondary binary outcomes.

CI: confidence interval.

p values ≤ 0.05.

Secondary outcomes

Critical findings (Online Supplementary Material Table S2) were almost solely from consent form and source data reviews. The majority (59%) were upgrades because of a cumulative number of Major findings, and the remainder were graded Critical in their own right. The proportion of visits with Critical findings was approximately halved in untriggered visits, but these differences were of borderline statistical significance (Table 4).

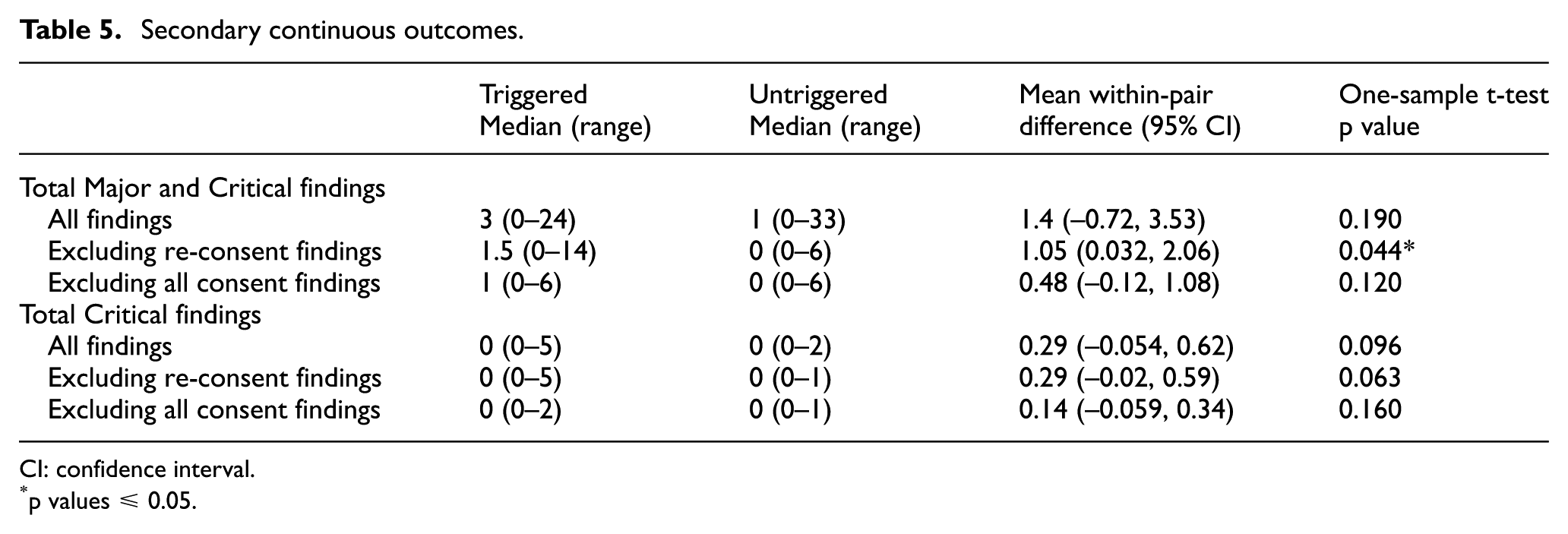

The median number of new Major and Critical findings (Table 5) was three at triggered visits and one at untriggered visits; the mean within-pair difference was 1.40 (95% CI –0.72, 3.53; p = 0.19) for all findings, 1.05 (95% CI 0.032, 2.06; p = 0.044) excluding re-consent findings and 0.48 (95% CI –0.12, 1.08; p = 0.12) excluding all consent findings (when the median number of findings was 1 and 0, respectively). The median number of new Critical findings was zero at all visits.

Secondary continuous outcomes.

CI: confidence interval.

p values ≤ 0.05.

Prognostic value of individual triggers

The ability of specific triggers to predict the presence of Major and/or Critical findings at the site visit was assessed across all outcomes (Online Supplementary Material Tables S3 and S4). While the finding rates tended to be higher when the trigger had been fired at the time of site selection, only three triggers showed even a modest association with outcome (p < 0.05 for at least one outcome, no adjustment for multiple testing). These were ‘data query resolution time’, ‘protocol deviation’ and ‘general concern’. Multivariate analyses were carried out for each outcome measure, but resulted in univariate models only, namely, the trigger with the strongest association with that outcome measure in the univariate analysis.

High-recruiting sites were defined as the top 10% of trial sites ordered by recruitment at the time of the site visit. The prognostic value of high recruitment on outcomes was investigated excluding all consent findings, as the number of consent forms checked was directly related to number of patients. We found no evidence of higher finding rates at these sites.

Other site characteristics

Trials unit teams completed 90/94 pre-visit questionnaires. There was no clear evidence of a linear relationship between the trial team ratings and the presence of Major or Critical findings, including or excluding consent findings (data not shown).

Pre-visit site questionnaires were provided by 76/94 sites. There was no evidence of a linear association between the chance of ≥1 Major/Critical finding and the number of active trials either per site or per staff member. There was, however, evidence that the greater the number of different trial roles undertaken by the Research Nurse, the lower the probability of Major/Critical findings. To a lesser extent, the reverse was true for the principal investigator (see Online Supplementary Material Table S5).

Discussion

We have shown that triggered monitoring, as used in these trials, did not satisfactorily distinguish sites with higher and lower levels of concerning on-site monitoring findings. The pre-specified primary comparison showed no significant difference between triggered and untriggered visits in the proportion with ≥1 Major/Critical finding not previously identified centrally. However, over 70% of on-site findings related to issues in recording informed consent, and 70% of these to re-consent; the pre-specified sensitivity analysis excluding re-consent findings demonstrated a clear difference in event rate. There was some heterogeneity between trials in the primary comparison, but much greater consistency in the sensitivity and secondary analyses. In addition, there was some evidence that the trigger process used could identify sites at increased risk of serious concern: around twice as many triggered visits had ≥1 Critical finding, in the primary and sensitivity analyses. Thus, we would suggest that triggered monitoring has promise, but clearly needs refinement.

The categorisation framework we used is, we believe, similar to those applied by regulators to the same findings. However, these typically identify a finding of importance in relation to an individual patient, when it is only by accumulation that these are likely to have serious impact on the trial as a whole. Risk-based monitoring is not looking for perfection in trial data or conduct, but to detect errors that really matter. We found no visit findings that raised serious issues that would apply across sites, involved serious trial-wide safety issues, or suggested any biases across trial arms which would impact credibility of the trials’ results.

The prevalence of sites with Major and Critical findings was higher than expected, echoing the experience of others. 23 However, the great majority of our findings, like others’, 24 related to documenting the consent process, for example, ensuring that correct versions are used, and signatures and dates are present and consistent with the timing of randomisation. The ‘quality by design’ concept 25 states the first course of action should be preventive; informed consent form templates used by academic clinical trials units should, therefore, be reviewed to see if their design can be improved and completion errors reduced. Timely central monitoring of consent forms with adequate anonymisation 22 may mitigate the effects of many consent form completion errors, particularly if trial treatment timelines mean that full consent forms – or at least selected items – can be reviewed before randomisation.

Re-consent was usually provoked by updates to drug safety information, of which participants (at least those still on treatment) should be aware. This can be a lengthy process and therefore difficult to monitor centrally. When re-consent is explicitly required, better central monitoring methods are possible, perhaps using site logs with lists of expected visit dates. However, although regulatory guidance is clear that participants must be informed about

Beyond consent processes, the majority of other findings were identified from SDV activities. A growing body of evidence suggests intensive SDV is often of little benefit to randomised controlled trials, with any discrepancies found having minimal impact on the robustness of trial conclusions.11,12,26 SDV for a sample of participants may be sufficient to detect

We carried out exploratory analyses of the prognostic value of individual triggers to see if visits to sites at which a specific trigger had fired were substantially more likely to find Major or Critical findings than visits to sites at which this trigger was not fired. The sample size was sufficient to detect an absolute difference of approximately 30% in finding rates. Some triggers, including high or low serious adverse event rates, were rarely met so their prognostic value could not be assessed. Three triggers were of potential, though still at best modest value, given the multiple outcome measures assessed: the speed of data query resolution, protocol deviations and ‘general concern’. These triggers were not wholly independent, and it was not possible to combine them in a way that improved finding rate discrimination more than our triggered/not triggered visit categorisation. We note that high recruitment and poor case report form return rates, although commonly used as triggers 3 were not of clear prognostic value.

Analysis of site staffing and workload suggested that the fewer trial responsibilities held by the research nurse, the higher the chance of a Major/Critical finding, with a trend to the converse (mainly when findings relating to consent are excluded), in relation to the principal investigator. These findings suggest that, while an insufficiently supported and possibly overstretched investigator may impact adversely on trial conduct, an experienced, capable local Research Nurse, able to take responsibility for many elements of trial conduct, is key.

Ultimately, the sensitivity and specificity of triggered monitoring depends on the selection of triggers. We found Major and Critical findings at untriggered visits, suggesting it remains necessary to visit these sites unless central monitoring techniques can be improved or the discriminatory value of triggers can increase. We used the trials’ existing triggers – quantified more precisely where needed to facilitate ranking of sites – without any prior assessment of their potential value. The search for more discriminatory triggers should encompass work on Key Performance Indicators 29 and Central Statistical Monitoring. 30 Subjective assessments may be of value, but are perhaps more prone to inconsistency, particularly when staff turnover is high, and therefore, harder to generalise. We might also optimise current triggers, for example, with better (non-dichotomous) treatment of continuous variables or greater incorporation of temporal trends.

Planning, conducting and follow-up on monitoring visits is time-consuming and therefore costly,2,8,9 so maximising cost-benefit is key. We did not routinely use triggers to guide the content of site visits which was perhaps not optimal. Refined triggers could target specific activities, for example, data quality issues could provoke SDV visits and general concerns could provoke additional training. Prospective study of trigger-defined visits is warranted.

Central monitoring enables review of information across sites and time without the time constraints of a site visit. Maximising these strengths would free more time at visits for targeted SDV and activities best done in-person, for example, process review, building rapport or training.

We acknowledge several limitations. TEMPER was conducted in only three trials of similar type although we see no reason to doubt its applicability to other trials. The trials unit staff present at triggered and untriggered visits were not blind to visit type. TEMPER monitors were at all visits, but trial team staff were only required to attend triggered visits. However, the additional staff at triggered visits often included new trial staff attending for training purposes and the planned activities were the same at all visits. The only notable difference in completed activity within pairs was the number of consent forms checked, which was higher in the triggered visits compared to the untriggered visits. While this could have increased the chance of findings at triggered visits, this appears not to have been the case, the difference in finding rates being greater when consent findings were excluded. Observation bias due to lack of blinding of monitoring staff was mitigated by consistent training on the trials and monitoring methods, the use of a common finding grading system and independent review of all Major and Critical findings which was blind to visit type.

The sample size was modest, but nonetheless adequately powered to detect the minimal differences in visit finding rates necessary to support the triggered monitoring strategy employed in these trials. TEMPER assessed the value of pre-existing triggers, rather than first exploring the best triggers. Evidence to support triggered monitoring comes largely from our sensitivity and exploratory analyses, although these were pre-planned, and recommended by an independent committee, which was blind to visit type.

Research into trial conduct rarely has the rigour we demand of clinical trials. The motivation to study this area comprises (1) the need to monitor trials effectively, minimising risk to patients’ rights and safety and protecting data integrity and (2) the need to do so in a cost-effective manner, noting that monitoring activities are a major component of trial conduct costs at the coordinating centre. TEMPER is one of the few studies to address monitoring strategies in a prospective manner,23,31,32 and the first, we believe, to specifically evaluate triggered monitoring. Its results should help challenge and guide the future use of triggered monitoring.

Supplemental Material

793379_supp_mat – Supplemental material for Triggered or routine site monitoring visits for randomised controlled trials: results of TEMPER, a prospective, matched-pair study

Supplemental material, 793379_supp_mat for Triggered or routine site monitoring visits for randomised controlled trials: results of TEMPER, a prospective, matched-pair study by Sally P Stenning, William J Cragg, Nicola Joffe, Carlos Diaz-Montana, Rahela Choudhury, Matthew R Sydes and Sarah Meredith in Clinical Trials

Supplemental Material

793379_supp_mat_2 – Supplemental material for Triggered or routine site monitoring visits for randomised controlled trials: results of TEMPER, a prospective, matched-pair study

Supplemental material, 793379_supp_mat_2 for Triggered or routine site monitoring visits for randomised controlled trials: results of TEMPER, a prospective, matched-pair study by Sally P Stenning, William J Cragg, Nicola Joffe, Carlos Diaz-Montana, Rahela Choudhury, Matthew R Sydes and Sarah Meredith in Clinical Trials

Supplemental Material

793379_supp_mat_3 – Supplemental material for Triggered or routine site monitoring visits for randomised controlled trials: results of TEMPER, a prospective, matched-pair study

Supplemental material, 793379_supp_mat_3 for Triggered or routine site monitoring visits for randomised controlled trials: results of TEMPER, a prospective, matched-pair study by Sally P Stenning, William J Cragg, Nicola Joffe, Carlos Diaz-Montana, Rahela Choudhury, Matthew R Sydes and Sarah Meredith in Clinical Trials

Supplemental Material

793379_supp_mat_4 – Supplemental material for Triggered or routine site monitoring visits for randomised controlled trials: results of TEMPER, a prospective, matched-pair study

Supplemental material, 793379_supp_mat_4 for Triggered or routine site monitoring visits for randomised controlled trials: results of TEMPER, a prospective, matched-pair study by Sally P Stenning, William J Cragg, Nicola Joffe, Carlos Diaz-Montana, Rahela Choudhury, Matthew R Sydes and Sarah Meredith in Clinical Trials

Supplemental Material

793379_supp_mat_5 – Supplemental material for Triggered or routine site monitoring visits for randomised controlled trials: results of TEMPER, a prospective, matched-pair study

Supplemental material, 793379_supp_mat_5 for Triggered or routine site monitoring visits for randomised controlled trials: results of TEMPER, a prospective, matched-pair study by Sally P Stenning, William J Cragg, Nicola Joffe, Carlos Diaz-Montana, Rahela Choudhury, Matthew R Sydes and Sarah Meredith in Clinical Trials

Footnotes

Acknowledgements

The authors acknowledge the teams working on the trials that took part in TEMPER, the Endpoint Review Committee and Consistency Monitoring Group, previous TEMPER Study Monitors and study team members, Matt Nankivell for conducting the independent validation analyses, staff at sites who participated in TEMPER study visits and Sharon Love and Director of MRC Clinical Trials Unit at University College London, Mahesh Parmar, for review of the final manuscript. The results were presented at the Joint 2017 International Clinical Trials and Methodology Conference (ICTMC) and Society for Clinical Trials (SCT) meeting, Liverpool, UK, May 2017.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

This work was supported by the Cancer Research UK (grant C1495/A13305 from the Population Research Committee); additional support was provided by the Medical Research Council (MC_EX_UU_G0800814) and the MRC London Hub for Trial Methodology Research (MC_UU_12023/24).

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.