Abstract

Strawberries and similar fruits are easily damaged during picking. To reduce the fruit damage rate and improve the accuracy and efficiency of picking robots, this article proposes a training method for night-picking robots that borrows the edge feature detection and motion capture techniques used in badminton motion capture systems. A badminton motion capture system can analyze game video in real time and, through motion capture, obtain the accuracy rate and technical characteristics of the movements of excellent players. The purpose of this article is to apply such a high-precision motion capture vision control system to the design of the vision control system of a robot picking at night, so as to improve the observation and recognition accuracy of the robot and thereby the degree of automation of the operation. The reliability of the picking robot vision system was tested. Taking night picking as an example, image processing was performed on the edge features of the fruits to be picked. The results show that smoothing and enhancement allow the edge features of fruit images to be extracted successfully. The target recognition rate and the positioning ability of the vision system were then tested using the edge features. The results showed that both the target recognition accuracy and the positioning accuracy of the vision system were well above 91%, which satisfies the demand for high-precision automated picking.

Keywords

Introduction

Although China’s fruit industry has made great achievements, the characteristics and development trends of its citrus industry show that it still faces urgent problems and new challenges. Fruit picking is the most time-consuming and labor-intensive link in the entire production process, accounting for roughly 33% to 50% of it. 1,2 When a picking robot picks automatically, a large number of fruits become independent positioning targets, so a powerful vision system is required to complete the work. Following the principle of the badminton motion capture system, the vision system of the picking robot can also use feature capture technology to locate the fruit target and thus operate autonomously. 3,4

Since the 1970s, motion capture has been an important method of photographic image analysis in biomechanics research, and it has been increasingly applied in animation production, robot control, human–computer interactive games, sports training, and other fields. 5,6 As a result, a growing number of research institutions and companies have discovered the value of human motion capture, bringing the technology from the laboratory to the market and diversifying the options available. Motion capture systems may be mechanical, ultrasonic, electromagnetic, optical, or inertial. Each technology has its advantages, but whichever is chosen, users are subject to certain limitations. 7 Picking robots generally work in an open natural environment, which greatly increases the difficulty of visual recognition and positioning. Several questions also remain open: how to design signal processing algorithms that filter the noise out of human movement signals before recognition, how to extract effective features that distinguish the data of different behavior patterns, and how to design classification algorithms that are both efficient and robust. These problems are the important and difficult points in research on human behavior recognition based on motion capture sensors. Human behavior analysis and recognition is a direction well worth studying, but there is little related research. 8,9

In the past 10 years, local image features have been widely used in robot vision positioning. To assess the similarity of images, one strategy for exploiting these features is to compare the raw descriptors extracted from the current image with the descriptors stored in the local model. Francisco M Campos describes the subsequent step in this process, in which combination functions must be used to aggregate the results and assign a score to each location. Within the framework of a multi-classifier system, Campos compared the performance of several candidate combiners in visual positioning tasks. For this evaluation, he chose the most popular methods among the non-trainable combiners, the sum rule and the product rule. The potential of these combiners can be better understood through a discriminant analysis involving the algebraic rules and two extensions of these methods (threshold and weighted modification). In addition, the voting method previously used for robot visual positioning was evaluated. 4 TaeKoo Kang proposed a new visual tracking framework and verified its advantages in mobile robot experiments. While a mobile robot is moving, the image sequence generated by its vision system is not static but slides and vibrates, which blurs the image. Kang therefore proposed a robust visual tracking framework based on the AdaBoost detection method, which uses appearance-based detection to solve the robustness problem of target tracking under blurred conditions. The framework consists of three parts: feature extraction with blur distortion error compensation using improved discrete Gaussian-Hermite moments; target detection based on appearance and feature judgment; and target tracking using a finite impulse response filter. To verify the performance of the framework, a mobile robot visual tracking experiment was carried out. 10
When building an environment map, visual SLAM uses only images as external information to estimate the position of the robot. SLAM is a basic prerequisite for autonomous robots. The problem is considered solved when laser or sonar sensors are used to construct two-dimensional maps of small static environments. However, many problems remain in dynamic, large-scale, and complex environments, and the use of vision as the basic external sensor is a new research field. There is still much room for improvement in the application of computer vision to feature detection, feature description, feature matching, image recognition, and recovery. Qiang gives an accessible overview of the latest visual SLAM techniques. A multi-robot system has many advantages over a single robot: it can improve the accuracy of the SLAM system and adapt better to dynamic and complex environments. 11

This article presents a training method for high-precision picking robots based on standard-motion edge detection in images and an optimal posture capture algorithm. The method draws on the theory and technology of detecting the best posture from the edges of standard movements in competitive badminton, and it can effectively capture the edges of the best-posture standard movements of the robot during picking. To further improve the edge detection of standard movements, the Sobel, Roberts, LoG, and Canny operators, among others, were analyzed and compared. The LoG operator, which gave higher accuracy, was finally selected to detect the edges of the standard movements and the best posture in the image. The robot capture schemes were verified through simulation experiments using two experimental methods. The results show that both capture schemes help the robot capture the standard action of the best picking posture in high-precision picking operations, and that they can also be used to train the best-posture algorithm of the robot. This can effectively reduce the fruit damage rate during picking and provides a reference for the design and application of modern high-precision picking robots.

Robot vision image detection method

Human behavior capture analysis theory

The spatial posture of each joint can be obtained from the position and state signals of each important joint of the captured subject, as sensed by the sensors (for example, motion acceleration, azimuth angle, and tilt angle). This scheme has the advantages of not being restricted by line of sight or blocked by objects, a large activity range, and simple application; its disadvantage is a large cumulative error. In addition, the posture accuracy of a motion sensor-based scheme is limited by the manufacturing quality, installation, sampling frequency, and attitude estimation algorithm of the sensors. Its attitude characteristics are shown in Table 1.

Table 1. Spatial attitude eigenvalues.

Image detection technology based on LoG algorithm

(1) LoG algorithm

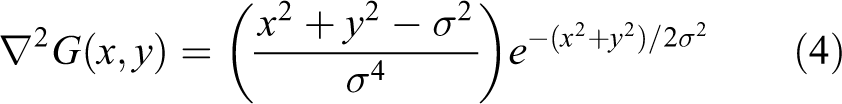

In the experimental part of this article, the LoG algorithm is mainly applied. The LoG operator is an improved algorithm based on the Laplacian operator. The Laplacian operator is a second-order differential operator, which is sensitive to noise. The LoG edge detection algorithm firstly uses Gaussian filter to filter the image noise, and then uses Laplacian operator to detect the edge. The two-dimensional Gaussian smooth function G(x, y) with normal distribution can be expressed as
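The equation itself is not reproduced in this text. For reference, a zero-mean isotropic two-dimensional Gaussian is conventionally written as below; this is the standard textbook form and is assumed, not confirmed, to match the equation the authors give here.

```latex
G(x, y) = \frac{1}{2\pi\sigma^{2}} \exp\!\left(-\frac{x^{2} + y^{2}}{2\sigma^{2}}\right)
```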

In the above equation, σ is the standard deviation, also called the scale, whose size determines the degree of smoothing. By convolving G(x, y) with the original image f(x, y), a smoothed image g(x, y) is obtained, whose expression is

The smoothed image g(x, y) is then processed with the Laplacian operator to detect edges, which reduces the interference of part of the noise. Convolving the original image with the Gaussian function and then taking the Laplacian of the result is equivalent to first taking the Laplacian of the Gaussian function and then convolving it with the original image, namely
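The equations for the smoothing step and the equivalence are missing from this text. In the notation used above they can be written as follows. The closed form in the last line is derived here for the standard Gaussian G(x, y) = exp(-(x² + y²)/(2σ²)) / (2πσ²), which is an assumption about the normalization the authors use.

```latex
g(x, y) = G(x, y) * f(x, y)

\nabla^{2} g(x, y) = \nabla^{2}\big[G(x, y) * f(x, y)\big] = \big[\nabla^{2} G(x, y)\big] * f(x, y)

\nabla^{2} G(x, y) = \frac{x^{2} + y^{2} - 2\sigma^{2}}{2\pi\sigma^{6}} \exp\!\left(-\frac{x^{2} + y^{2}}{2\sigma^{2}}\right)
```

The practical consequence is that the LoG kernel can be computed once for a given σ and applied to the image in a single convolution.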

The degree of smoothing of the Gaussian filter determines the precision of LoG edge detection. The size of the Gaussian smoothing filter is not adaptive; it depends on the scale σ. When σ is small, more edge points are obtained, but noise suppression is insufficient. When σ is large, the noise is smoothed out, but edges are widened and detail is lost. Suppose the one-dimensional Gaussian function is g(x, σ), and the noisy signal f(x) is filtered by g(x, σ) to obtain s(x, σ), which is expressed as

When the scale is 0.5, 1, 1.5, different filtering effects are obtained. After f(x) is filtered by g(x, σ), when σ = 0.5, the waveform can show the step position very accurately, but the noise cannot be suppressed. When σ = 1, 1.5, the noise is well smoothed, but the step position becomes wider and the step is slightly skewed.
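The trade-off between noise suppression and scale can be illustrated with a short sketch. It assumes s(x, σ) is the convolution of f(x) with a sampled, normalized Gaussian. The synthetic step signal, the noise level, the kernel radius of 3σ, and the border handling are all illustrative choices, not the setup used in the article.

```python
import math
import random

def gaussian_kernel(sigma, radius=None):
    """Sampled 1-D Gaussian g(x, sigma), normalized so the weights sum to 1."""
    if radius is None:
        radius = max(1, math.ceil(3 * sigma))  # assumed truncation at 3 sigma
    weights = [math.exp(-(x * x) / (2 * sigma * sigma))
               for x in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def smooth(signal, sigma):
    """Discrete s(x, sigma) = f(x) * g(x, sigma); border samples are replicated."""
    kernel = gaussian_kernel(sigma)
    radius = len(kernel) // 2
    n = len(signal)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - radius, 0), n - 1)
            acc += w * signal[j]
        out.append(acc)
    return out

def noisy_step(n=4000, noise=0.1, seed=0):
    """Hypothetical test signal: a unit step at n/2 plus Gaussian noise."""
    rng = random.Random(seed)
    return [(1.0 if i >= n // 2 else 0.0) + rng.gauss(0.0, noise)
            for i in range(n)]

def flat_region_std(values, stop=1900):
    """Residual noise, measured on the flat part well before the step."""
    part = values[:stop]
    mean = sum(part) / len(part)
    return math.sqrt(sum((v - mean) ** 2 for v in part) / len(part))

f = noisy_step()
# Residual noise after filtering at each scale discussed in the text.
residual = {sigma: flat_region_std(smooth(f, sigma)) for sigma in (0.5, 1.0, 1.5)}
for sigma, value in residual.items():
    print(f"sigma={sigma}: residual noise std = {value:.4f}")
```

The residual noise falls as σ grows, while the wider kernel spreads the step over more samples.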

The known 3 × 3 Gaussian template can be modified according to equation (5) to obtain an improved Gaussian filter. The position weighting coefficients of the pixels with the smallest, largest, and second largest gray values in the template are set to 0. The remaining weighting coefficients are summed, and the normalization coefficient k of the template is taken as the reciprocal of that sum, which finally gives g(i, j). During filtering with g(i, j), the weighting coefficients therefore change with the noise gray values in the template. For example, when the weighting coefficients w2, w8, and w9 in g(i, j) correspond to the pixels with the minimum, maximum, and second largest gray values in the template, then g(i, j) is
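Equation (5) and the resulting template are not reproduced in this text, so the following is only one reading of the description: start from the common 3 × 3 Gaussian template (1, 2, 1; 2, 4, 2; 1, 2, 1), zero the weights at the positions of the minimum, maximum, and second largest gray values, and renormalize by the sum of the remaining weights. The base template and the normalization are assumptions.

```python
# Common 3x3 Gaussian template, row-major, i.e. w1..w9 (assumed base template).
GAUSS_3X3 = [1, 2, 1,
             2, 4, 2,
             1, 2, 1]

def plain_gauss_pixel(window):
    """Ordinary 3x3 Gaussian response for a row-major window of 9 gray values."""
    return sum(w * v for w, v in zip(GAUSS_3X3, window)) / sum(GAUSS_3X3)

def adaptive_gauss_pixel(window):
    """Gaussian response with the extreme pixels excluded.

    The weights at the positions of the smallest, largest, and second
    largest gray values are set to 0 and the rest are renormalized.
    """
    order = sorted(range(9), key=lambda i: window[i])
    dropped = {order[0], order[-1], order[-2]}
    weights = [0 if i in dropped else w for i, w in enumerate(GAUSS_3X3)]
    weight_sum = sum(weights)  # at least 8, because at most 4 + 2 + 2 is dropped
    return sum(w * v for w, v in zip(weights, window)) / weight_sum

# Hypothetical window matching the example in the text: w2 is the minimum,
# w8 the maximum, and w9 the second largest gray value.
window = [100,   0, 100,
          100, 100, 100,
          100, 255, 250]
print(plain_gauss_pixel(window))     # pulled away from 100 by the outliers
print(adaptive_gauss_pixel(window))  # outliers ignored
```

Because the dropped positions depend on the gray values in each window, the effective template changes from pixel to pixel, which matches the description of weights that vary with the noise.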

(2) Application of robot visual image detection technology

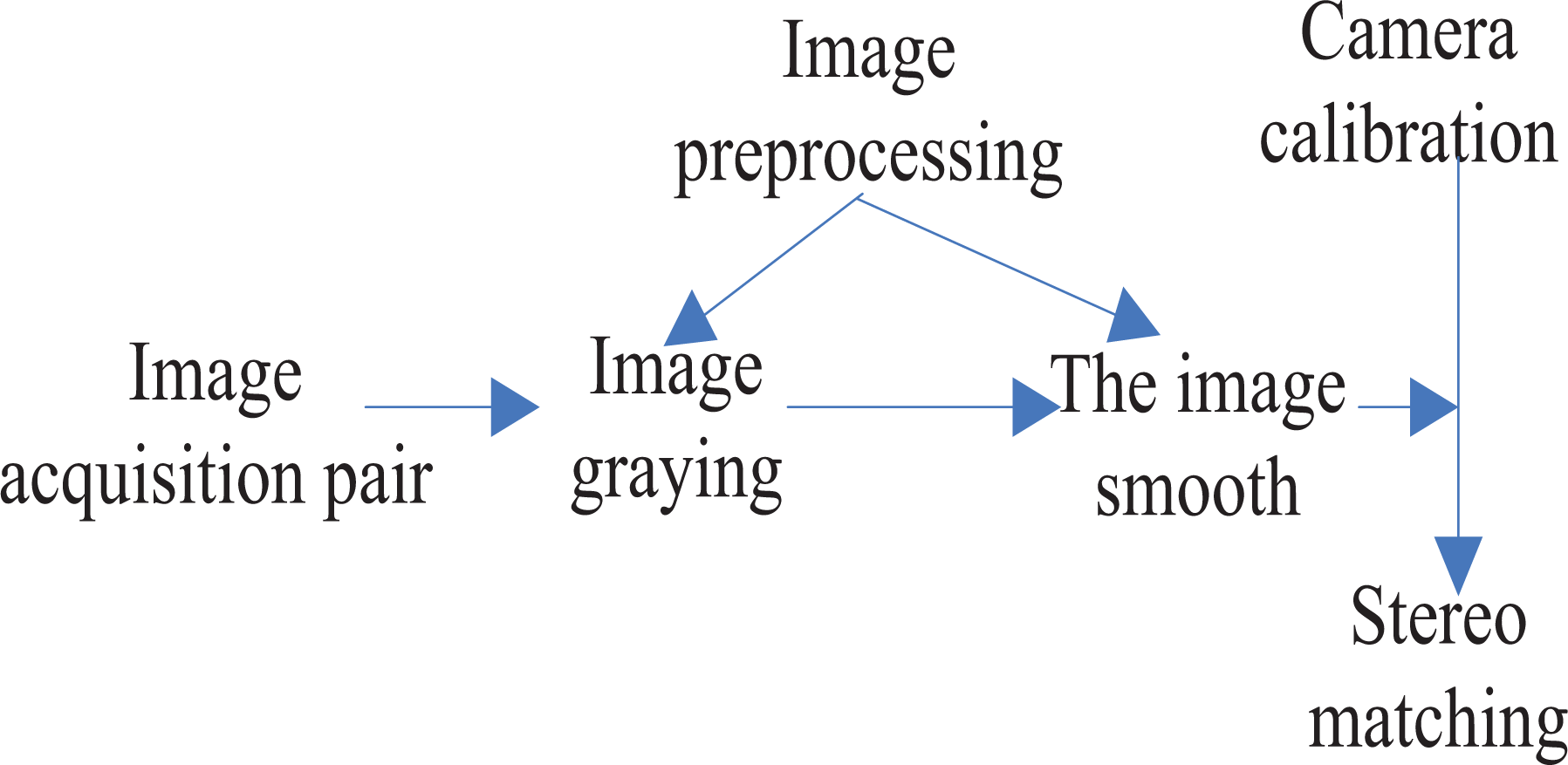

Generally, when lens distortion is taken into account, the camera model is defined as a nonlinear model; otherwise it is a linear model. The linear model is a simplification based on the ideal pinhole model. As shown in Figure 1, image processing includes image acquisition, image preprocessing (grayscale conversion and smoothing), camera calibration, and stereo matching.

Figure 1. Operation flow of image processing.

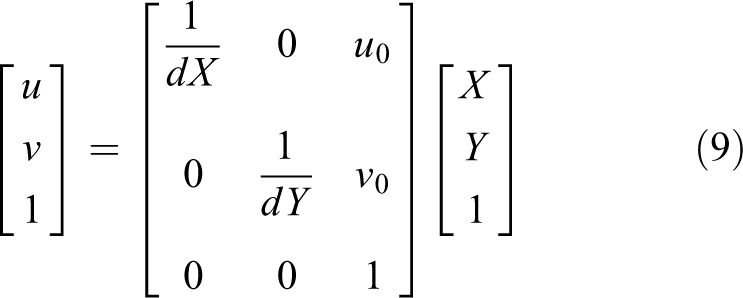

The expression of any pixel point in the u-v coordinate system is:

The relationship between the two coordinate systems can be expressed as

The corresponding formula between camera coordinates and image coordinates is
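The equations for these three relations are not reproduced in this text. Under the ideal pinhole model they are conventionally x = f·Xc/Zc and y = f·Yc/Zc for the camera-to-image step, and u = x/dx + u0 and v = y/dy + v0 for the image-to-pixel step. The sketch below uses these standard forms. The symbol names (f for focal length, dx and dy for pixel size, u0 and v0 for the principal point) and the example values are assumptions, not values from the article.

```python
def camera_to_image(xc, yc, zc, f):
    """Project a camera-frame point onto the image plane (ideal pinhole model)."""
    if zc <= 0:
        raise ValueError("the point must lie in front of the camera (zc > 0)")
    return f * xc / zc, f * yc / zc

def image_to_pixel(x, y, dx, dy, u0, v0):
    """Convert image-plane coordinates (physical units) to u-v pixel coordinates."""
    return x / dx + u0, y / dy + v0

# Hypothetical numbers: 8 mm lens, 0.01 mm square pixels, principal point
# at (320, 240), and a point 1 m in front of the camera (all lengths in mm).
x, y = camera_to_image(100.0, -50.0, 1000.0, f=8.0)
u, v = image_to_pixel(x, y, dx=0.01, dy=0.01, u0=320.0, v0=240.0)
print(round(u, 6), round(v, 6))
```

Keeping the two steps separate mirrors the text: the first depends only on the focal length, the second only on the sensor geometry.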

1) Nonlinear camera model

The linear model is an ideal imaging model whose parameters can be obtained by solving linear equations. In practice, a real lens introduces distortion, so the image coordinates must be corrected as follows

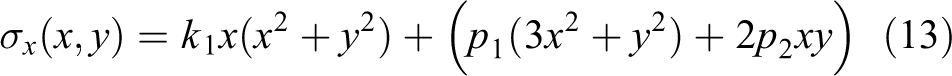

The first term is called radial distortion, and k1 and k2 are the radial distortion factors. Radial distortion is the deviation of an image point from its theoretical coordinates along the radial direction. When image points are shifted radially inward, the points at the outer edge of the image are drawn together and the imaging scale is reduced; otherwise, the imaging scale is increased.
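The distortion equation is missing from this text. A widely used two-coefficient radial model scales each ideal point by 1 + k1·r² + k2·r⁴, where r is the distance from the distortion center. The sketch below assumes that form and normalized coordinates centered on the distortion center; it may differ from the exact expression the authors use.

```python
import math

def radial_distort(x, y, k1, k2):
    """Apply two-coefficient radial distortion to an ideal image point.

    (x, y) are coordinates relative to the distortion center (assumed).
    """
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * scale, y * scale

def radius(point):
    """Distance of a point from the distortion center."""
    return math.hypot(point[0], point[1])

ideal = (0.6, 0.8)                                # hypothetical point with r = 1
inward = radial_distort(*ideal, k1=-0.1, k2=0.0)  # negative k1 pulls points inward
outward = radial_distort(*ideal, k1=0.1, k2=0.0)  # positive k1 pushes points outward
print(radius(inward), radius(ideal), radius(outward))
```

With a negative k1 the outer points move toward the center and the imaging scale shrinks, which is the inward case described above; a positive k1 gives the opposite effect.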

2) Typical camera calibration method

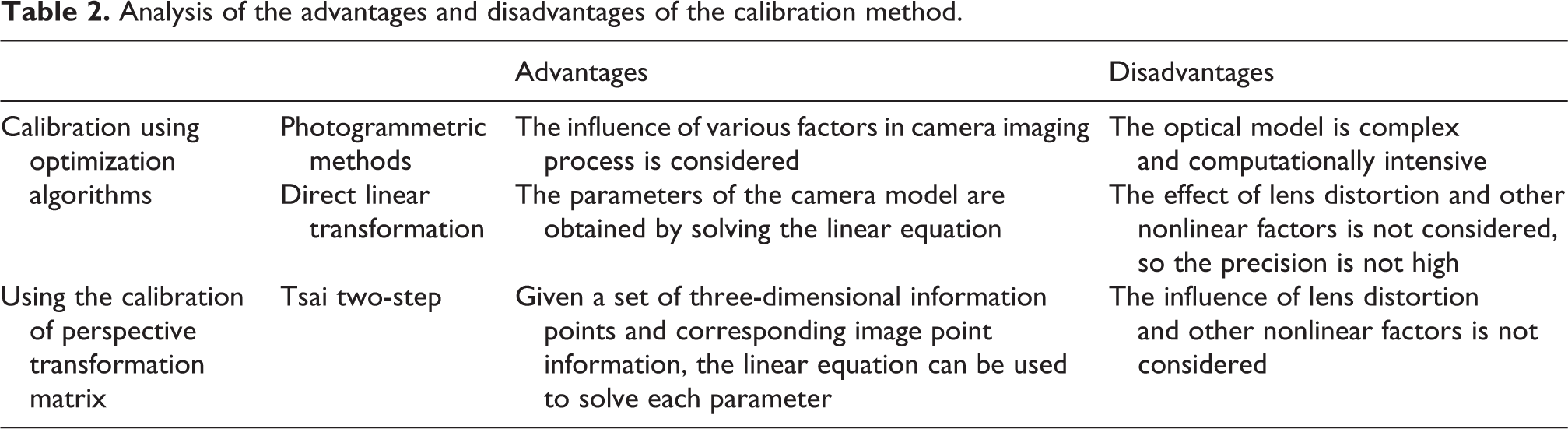

Camera calibration is the key link by which a stereo vision system obtains 3D coordinates from 2D images. In terms of algorithm, traditional camera calibration methods fall into several categories, each suited to a particular environment, as shown in Table 2.

Table 2. Advantages and disadvantages of the calibration methods.

3) Image feature extraction algorithm

Edge detection is an important algorithm for image feature extraction. Through the comparison of algorithms, the appropriate algorithm is selected for image processing, and its flow is shown in Figure 2.

Figure 2. Robot target edge detection and recognition process.

Badminton motion capture

The goal here is human–computer interaction by capturing the hand movements of the badminton player: the interaction with the computer is realized through changes in the three-dimensional coordinates of specific marker points on the hand, so the task belongs to human–computer interaction within artificial intelligence and virtual reality. Artificial intelligence is a branch of computer science that studies and develops theories, methods, technologies, and application systems for simulating, extending, and expanding human intelligence. Research in this area includes robots, language recognition, human–computer interaction, virtual reality, pattern recognition, intelligent sensor networks, natural language processing, expert systems, and so on. Here, human–computer interaction technology refers to technology that realizes the interaction between human and computer through computer input and output devices.

(1) Establishment of imaging model and coordinate system

Visual tracking restores the pose of the target object by analyzing the image sequence. Behavior recognition identifies the specific behavior of the target object by extracting changes in its position and posture features (such as area, number, length, and position). To obtain the posture changes of the target through computer vision, the transformation between the different coordinate systems must first be established through the imaging model.

To determine the relationship between the coordinate systems, the pinhole imaging model is first selected to establish them. The complete set includes the marker coordinate system Om - XmYmZm (hand marker points), the camera coordinate system Oc - XcYcZc, and the ideal screen coordinate system of the camera Ou - XuYu. Coordinates in the marker coordinate system and the camera coordinate system are denoted (xm, ym, zm) and (xc, yc, zc), respectively. The transformation between the marker coordinate system and the camera coordinate system is shown in equation (15)

where V (3 × 3) is the orthonormal rotation matrix, W is the translation vector, and Tcm is the transformation matrix, which contains three rotation components and one translation component. Perspective projection is a single-plane projection obtained by projecting the object onto the projection surface with the central projection method, and it is close to the actual visual effect. It matches visual experience: objects close to the viewpoint appear large, objects far from it appear small, and objects at the extreme distance shrink to a vanishing point.
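The marker-to-camera transformation can be sketched as a rigid transform, (xc, yc, zc) = V·(xm, ym, zm) + W, with Tcm as the equivalent 4 × 4 homogeneous matrix. The equation itself is missing from this text, so this standard form is an assumption, and the rotation and translation values below are purely illustrative.

```python
def marker_to_camera(point_m, V, W):
    """Map a marker-frame point to the camera frame: p_c = V * p_m + W."""
    return tuple(
        sum(V[r][c] * point_m[c] for c in range(3)) + W[r]
        for r in range(3)
    )

def homogeneous_matrix(V, W):
    """Assemble the 4x4 matrix Tcm = [[V, W], [0, 0, 0, 1]]."""
    return [list(V[r]) + [W[r]] for r in range(3)] + [[0, 0, 0, 1]]

# Hypothetical pose: the marker frame is rotated 90 degrees about the
# camera z axis and sits 500 units in front of the camera.
V = [[0, -1, 0],
     [1,  0, 0],
     [0,  0, 1]]
W = [0, 0, 500]

print(marker_to_camera((10, 0, 0), V, W))
print(homogeneous_matrix(V, W))
```

The homogeneous form is convenient because successive transformations can be chained by matrix multiplication.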

The relationship between a point (xc , yc , zc ) in the camera coordinate system and its projection (xu , yu ) in the ideal screen coordinate system is as follows

where λ is the scale factor and the perspective projection matrix C holds the internal parameters of the camera, which can be obtained by camera calibration. There are two points to note here. First, because the camera has imaging distortion, a point (xu, yu) in the ideal screen coordinate system is calculated from its corresponding point (xd, yd) in the actual screen coordinate system by formulas (15–16), where (xo, yo) is the position of the optical distortion center, s is the scaling factor, and f is the distortion factor. Second, points in the image are measured in pixels, whereas after conversion to the camera or marker coordinate system the unit of measurement is a standard physical unit of length.

Experiments of badminton motion capture

Badminton visual capture experiment background

A multimedia database motion capture system has many advantages in badminton training and teaching. Using real-time motion capture of the athletes’ action features, and by analyzing and processing the athletes’ route maps and video frames, the system obtains the scoring techniques and actions of excellent athletes in games. 12,13 This way of learning frees athletes from regional restrictions. The database system can record the athletes’ route map features, captured video frames, and action features in real time, and compile the corresponding technique and action statistics from them, thereby providing real-time multimedia data support for badminton techniques and actions. 14,15 When a picking robot works autonomously, one of its most important functions is to capture and locate the target fruit on its own, which relies on a powerful visual capture system. 16 Following the working principle of the badminton motion feature capture system, the visual capture system of the picking robot can also adopt feature capture technology to locate the fruit target independently and thus better realize autonomous operation. 17,18

To solve the problem that fruit is easily damaged during picking, the motion edge detection and feature capture methods used in badminton competition are introduced into the motion training of the picking robot, so that the robot reaches the optimal posture during operation. 19,20 To verify the feasibility of the scheme, the edge detection method based on the LoG operator was applied to images of the motion training process of the picking robot, and the optimal motion posture was obtained. Statistical analysis of the picking failure rate before and after training shows that the scheme can significantly reduce the picking failure rate and improve picking accuracy, providing an effective design method for picking robot research. 21

Experimental design of robot image experiment

The badminton motion capture system can capture the game video frames in real time and collect the technical motion data from the motion features, so as to obtain the multimedia data support of badminton technical motion. When the picking robot works autonomously, its most important link is to locate the fruit target autonomously, which needs to rely on the powerful visual system of the robot. 22 According to the principle of badminton motion capture system, the vision system of picking robot can also use feature capture technology to locate the fruit target, so as to realize autonomous operation.

Truemotion is an inertial motion capture system. Like other motion capture devices, it consists of three main components. The sensors sense external signals and collect data. The signal collection and transmission equipment gathers the signals from the various sensors and transmits them to the PC over wireless Bluetooth or a USB interface. The signal processing equipment comprises computer hardware (such as a PC) and software; the software is the Truemotion data processing software, which performs the initial processing of the collected signals. Truemotion combines navigation and angle positioning. The signals acquired by the sensors are processed in real time by the signal processing equipment to calculate the relative offset of each joint of the body, and the system supports secondary development, which allows human motion data to be acquired and reconstructed in 3D. Figures 3, 4, and 5 show the system interface, the experimental site, and the hardware and software equipment with the experimental process, respectively. 23

Figure 3. Badminton motion capture system.

Figure 4. Picking robot image recognition experimental platform.

Figure 5. Experimental hardware and software equipment and operation process.

To verify the feasibility of applying the badminton motion capture multimedia database scheme to the vision detection system of the picking robot, the scheme was tested experimentally on the robot. First, the image processing of the vision system was tested (see Figure 6). 24,25

Figure 6. Picking robot test scene.

To verify the adaptability of the system to a complex picking environment, the night operation environment was selected as the research object. Because of the poor light at night, the collected images often contain more noise. The images were therefore first filtered and enhanced, and the results are shown in Figure 7. 26,27

Figure 7. Enhanced image.

The recognition accuracy and positioning accuracy for the images were obtained through testing. The results of five tests were compiled and are shown in Table 3. 28,29 According to the data in the table, both the recognition accuracy and the positioning accuracy of the image recognition system of the picking robot exceed 95%. The motion capture image recognition system designed in this research can therefore quickly and accurately locate, capture, and recognize motion, and effectively calculate the action frequency, providing an effective reference for the picking action of the robot. The test results show that the visual detection system designed in this article identifies and locates fruit in images with high accuracy and meets the demand of high-precision picking, which verifies the feasibility of the scheme.

Table 3. Picking performance test of picking robot.

Discussion

Analysis of visual image recognition technology of picking robot based on LoG algorithm

In badminton motion capture, the captured object is usually a video sequence, that is, a set of images acquired over time by the game camera. Sampling at a fixed time interval gives the change of the image with time; unless there is a special requirement, the interval between two successive pictures is constant. Dynamic image capture therefore provides richer information than a static image. In image acquisition, edge detection is the most important link. The main goal of an edge detection algorithm is to reveal the information implicit in the image by finding where the gradient changes most. By optimizing the gradient value and suppressing noise, as shown in Figure 8, the effective edge position can be found. Among the many edge detection operators, the LoG algorithm was chosen. In practice, the LoG edge detection algorithm first filters the image and then detects the maximum gradient value to determine the position of the edges.

Figure 8. Edge detection ratios under the LoG algorithm.

To detect image edges, the maximum gradient at the edges must be obtained. A threshold is set; points above the threshold are kept and all points below it are set to 0, which effectively identifies the image edges and provides data support for acquiring fruit target information. The test values are shown in Figure 9. Based on the experimental data above, and in order to analyze the relationship between the baseline distance of the vision system, the camera angle, the depth distance, and the distance error, a surface can be fitted to these discrete points mathematically. Interpolation requires the interpolating polynomial to pass through every known node, which makes it prone to oscillation: although there is no error at the nodes, the error away from the nodes increases. Polynomial fitting instead seeks a simple function that does not have to pass through the known points but approximates the original function as well as possible overall.
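The thresholding step described above can be sketched in a few lines. The gradient map and the threshold below are hypothetical values, and the treatment of points exactly equal to the threshold (set to 0 here) is an assumption, since the text only covers points above and below it.

```python
def threshold_edges(gradient, threshold):
    """Keep gradient magnitudes strictly above the threshold; zero the rest."""
    return [[g if g > threshold else 0 for g in row] for row in gradient]

def edge_fraction(edge_map):
    """Share of pixels that survive thresholding."""
    pixels = [g for row in edge_map for g in row]
    return sum(1 for g in pixels if g != 0) / len(pixels)

# Hypothetical 2x2 gradient-magnitude map.
gradient = [[0.2, 0.9],
            [0.5, 0.1]]
edges = threshold_edges(gradient, threshold=0.5)
print(edges, edge_fraction(edges))
```

Raising the threshold removes weak responses caused by noise at the cost of losing faint edges, so its value has to be tuned to the image conditions.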

Figure 9. Statistical results of multiple test sets.

Because of external influences, the experimental data may contain unstable points with large errors. Such points strongly affect the experimental curve and, if the curve is used as a law, lead directly to deviations in later experiments. Therefore, after comparative analysis, the surface obtained by polynomial fitting was finally selected. Analysis of the apple coordinate errors shows that the error of the fruit position in the x and y directions is within the allowable range, but the error in z is too large, so another experimental platform was built. This article mainly discusses the relationship between the position of the camera and the z value. Polynomial fitting is applied to the large amount of data obtained to compensate the values. The experiment also shows that, depending on the camera placement, different fitted surfaces can be used for compensation. This has guiding significance for apple picking robots.
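As a minimal one-dimensional sketch of fitting rather than interpolating, the code below computes a least-squares polynomial from the normal equations. The article fits a surface over several variables and does not state its fitting procedure, so the single-variable form, the solver, and the sample data are all assumptions for illustration.

```python
def polyfit_least_squares(xs, ys, degree):
    """Coefficients c[0..degree] minimizing sum((p(x) - y)^2)."""
    n = degree + 1
    # Normal equations A c = b, with A[i][j] = sum(x^(i+j)) and b[i] = sum(y * x^i).
    A = [[sum(x ** (i + j) for x in xs) for j in range(n)] for i in range(n)]
    b = [sum(y * x ** i for x, y in zip(xs, ys)) for i in range(n)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = A[row][col] / A[col][col]
            for k in range(col, n):
                A[row][k] -= factor * A[col][k]
            b[row] -= factor * b[col]
    # Back substitution.
    coeffs = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(A[i][j] * coeffs[j] for j in range(i + 1, n))
        coeffs[i] = (b[i] - tail) / A[i][i]
    return coeffs

def polyval(coeffs, x):
    """Evaluate c[0] + c[1] x + c[2] x^2 + ..."""
    return sum(c * x ** i for i, c in enumerate(coeffs))

# Hypothetical data: an exact quadratic trend 1 + 2x + 0.5x^2.
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [1.0 + 2.0 * x + 0.5 * x * x for x in xs]
coeffs = polyfit_least_squares(xs, ys, degree=2)
print([round(c, 6) for c in coeffs])
```

In a compensation setting, the fitted polynomial would be evaluated at a measured position and the predicted error subtracted from the raw z value; a low degree keeps the curve from chasing the unstable points mentioned above.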

Combined analysis of motion capture and visual image detection of picking robot

To quantify and evaluate the recognition results for fall behavior, recall and precision are used: recall is the proportion of all positive samples that are correctly identified, while precision is the proportion of the samples labeled positive by the classifier that are truly positive. Similarly, the true positive (TP) and false positive (FP) counts can be obtained for each of the seven types of behavior, such as sitting and walking, and precision is used to represent the recognition rate of each behavior, as shown in Figure 9.
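The two measures can be written directly from the counts. TP and FP are named in the text; the false negative count FN is added here because recall needs it. The sample counts are hypothetical and are not results from the article.

```python
def precision(tp, fp):
    """Share of samples labeled positive that are truly positive: TP / (TP + FP)."""
    return tp / (tp + fp) if (tp + fp) else 0.0

def recall(tp, fn):
    """Share of all positive samples that are correctly found: TP / (TP + FN)."""
    return tp / (tp + fn) if (tp + fn) else 0.0

# Hypothetical counts for one behavior class.
tp, fp, fn = 90, 10, 30
print(precision(tp, fp), recall(tp, fn))
```

Reporting both values matters because a classifier can reach high precision simply by labeling few samples as positive, which lowers recall.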

As shown in Figure 10, the average recognition rate reached 95.85%, and the recognition rate of every action is higher than in the experiments reported in the literature. Besides its high accuracy, the main innovation of the system is the adoption of the longest common subsequence (LCSS) as the kernel function of the support vector machine for processing time series signals, which gives better robustness and a higher recognition rate when classifying time series information and demonstrates the feasibility of the scheme.
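The LCSS measure for real-valued time series can be sketched with the usual dynamic program, in which two samples match when they differ by at most a tolerance eps. The article does not say how the LCSS score is turned into a kernel value, so the normalization by the shorter sequence length and the tolerance used here are assumptions.

```python
def lcss_length(a, b, eps):
    """Length of the longest common subsequence of two numeric series.

    Two samples are treated as equal when |a_i - b_j| <= eps.
    """
    m, n = len(a), len(b)
    table = [[0] * (n + 1) for _ in range(m + 1)]
    for i in range(1, m + 1):
        for j in range(1, n + 1):
            if abs(a[i - 1] - b[j - 1]) <= eps:
                table[i][j] = table[i - 1][j - 1] + 1
            else:
                table[i][j] = max(table[i - 1][j], table[i][j - 1])
    return table[m][n]

def lcss_similarity(a, b, eps):
    """LCSS length normalized to [0, 1] by the shorter series (assumed form)."""
    if not a or not b:
        return 0.0
    return lcss_length(a, b, eps) / min(len(a), len(b))

# Hypothetical sensor traces: one outlier sample in the second series.
s1 = [1.0, 2.0, 3.0, 4.0]
s2 = [1.0, 2.0, 9.0, 4.0]
print(lcss_similarity(s1, s2, eps=0.1))
```

Because unmatched samples are skipped rather than penalized, the measure tolerates isolated outliers, which is consistent with the robustness the text attributes to it. A similarity of this kind is not guaranteed to be a valid positive semidefinite kernel, so using it inside a support vector machine is a design decision that needs checking in practice.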

Figure 10. Recognition rate of each data set.

Conclusions

At present, research in China on human motion capture and behavior recognition based on sensors is still at the development stage, but thanks to the rapid development of chip technology and computer science, sensor-based research on human behavior is attracting more and more researchers. With the advent of the information age and the spread of computers, mobile phones, and other multimedia terminals, multimedia has brought great convenience to sports teaching and athlete training. The best physical education results can be achieved only by using advanced technical means to reform teaching. A multimedia database system provides students with more vivid learning materials and increases their interest in learning; an advanced multimedia system can analyze multiple videos in real time and extract the standard and extreme actions of elite athletes as material for teaching and training. The badminton motion capture system has a strong image feature extraction ability, which can be used in the design of the vision system of a picking robot to improve the target recognition ability of the system and its level of automation.

After consulting a large body of literature on human motion capture and behavior recognition and comparing the common human motion capture schemes, the motion capture scheme based on computer image detection was found to offer low cost, simple operation, and accurate data. It was therefore used for data collection, to ensure that the research rests on reliable data. Edge detection is an important algorithm for image feature extraction. In motion capture of badminton game video, the edge detection algorithm can express the key movements clearly. Applied to the motion training of a picking robot, it can extract the characteristics of the optimal motion range of the robot and provide the basis for motion training.

In this article, image detection and programmed positioning allow the robot to reach the best picking state in the shortest time. To verify the feasibility of the scheme, the picking robot was tested: the edge detection method based on the LoG operator was applied to images of the motion training process of the robot, and the optimal motion posture was obtained. Statistical analysis of the picking failure rate before and after training shows that the scheme clearly reduces the picking failure rate, improves picking accuracy, and provides an efficient design method for picking robot research.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.