Abstract

Because light is absorbed and scattered as it travels through water, underwater images suffer from serious degradation such as color deviation, low contrast, and blurry details. Traditional algorithms have limitations when handling images with varying degrees of blur and color deviation. To address these problems, this article proposes a new approach for single underwater image enhancement based on fusion technology. First, the original image is preprocessed by the white balance algorithm and the dark channel prior dehazing technique, respectively, which yields two input images through color correction and contrast enhancement. Then, the enhanced image is obtained with a multiscale fusion strategy based on weight maps constructed by combining the features of global contrast, local contrast, saliency, and exposedness. Qualitative results show that the proposed approach significantly removes haze, corrects color deviation, and preserves image naturalness. Quantitatively, a test on 400 underwater images shows that the proposed approach produces a lower average mean square error and a higher average peak signal-to-noise ratio than the compared method. Moreover, the enhanced results obtain the highest average underwater image quality measure among the compared methods, illustrating that our approach achieves superior performance on images with different levels of distortion and haze.

Introduction

In recent years, underwater images have been widely used in marine energy exploration, marine environment protection, marine military applications, and other fields. 1 However, when light propagates in water, the water medium absorbs it and suspended particles scatter it, as shown in Figure 1. The absorption effect causes color distortion in underwater images; the scattering effect causes low contrast and blur. 2 Therefore, underwater images present defects such as color deviation, low contrast, and blurry details. Such degraded images have a huge impact on subsequent feature extraction and object recognition. As a result, improving the clarity of underwater images has gradually become a research hotspot. The existing methods to improve the visibility of underwater images can be divided into image restoration methods (IRMs) and image enhancement methods (IEMs). 3

Figure 1. Schematic of underwater optical imaging.

The IRM is based on the underwater image degradation model, and the image is restored by inversely solving the underwater imaging model. A multitude of methods have emerged for underwater image restoration based on the dark channel prior (DCP), which was initially proposed by He et al. 4 for image dehazing. However, directly applying the DCP algorithm to underwater images does not provide a good enhancement effect, because the dark channel value obtained by the minimization operation is likely to be the red channel component, which leads to a dark image after restoration. Recently, some improved algorithms have been proposed. Chiang and Chen 5 combined the wavelength compensation method with the DCP algorithm to enhance the underwater image; Han and Chen 6 adopted the combination of the DCP algorithm and color correction. Besides, Nascimento et al. 7 proposed the underwater dark channel prior algorithm, an improved version of the DCP algorithm, which can accurately calculate the transmission map. Galdran et al. 8 proposed a DCP algorithm based on the red channel (R-DCP), which is considered to be an improvement of the DCP method. In their research, colors associated with short wavelengths were restored, which helps recover the lost contrast.

The abovementioned IRMs improve the quality of underwater images to a certain extent. However, these physics-based methods require substantial computing resources and long execution times. Moreover, they are usually suitable only for image processing in specific scenes. Thus, the scope of their practical applications is limited.

IEMs can directly improve the visibility of underwater images without knowledge of the underwater optical model or any physical characteristics. IEMs mainly fall into three categories 9 : underwater image enhancement algorithms based on the histogram, on Retinex, and on fusion technology. Among the histogram-based algorithms, the best-performing one is the contrast limited adaptive histogram equalization (CLAHE) method proposed by Zuiderveld, 10 which has been validated to be effective for enhancing the contrast of underwater images. Among the Retinex-based algorithms, the best-performing one is the multiscale Retinex with color restoration (MSRCR) proposed by Jobson et al., 11,12 which has been verified to effectively correct the color cast of color-distorted images.

However, the main deficiency of the CLAHE and MSRCR methods is that neither can achieve both color cast correction and contrast enhancement. To further improve the image quality, multistep methods that fuse different algorithms are beginning to attract attention. These multistep methods based on fusion technology can achieve better results than any single technique in solving the main problems of underwater images, such as color deviation, low contrast, nonuniform illumination, noise, and blurry details. Li et al. 2 proposed a multistep fusion method based on the principles of minimum information loss and the histogram distribution prior to remove the blur of underwater images. Ancuti et al. 13 proposed a fusion-based approach (FB) to enhance the visibility of underwater images and videos without focusing on specific conditions. This method obtains two input images by applying white balance (WB) and bilateral filtering to the original image. A Gaussian pyramid and a Laplacian pyramid are then used to fuse the two input images according to their weights. However, there is a partial reddish effect in the enhanced image processed by the FB method. Moreover, the FB method does not consider the underwater image degradation process, and it cannot achieve uniform enhancement.

Therefore, an improved approach for eliminating the local reddish effect and achieving uniform enhancement for underwater images is proposed in this article. In our fusion framework, the first input is the same as in the FB method, whereas the second input is obtained by applying the DCP algorithm to the color-corrected version of the original image. This input is designed to reduce degradation due to particle scattering. Then multiple features of the two input images are extracted as the weight maps for fusion. Ultimately, a high-quality image with vivid color and fine details is acquired by fusing the two input images with a multiscale fusion strategy. The advantage of the proposed approach is that it replaces the CLAHE algorithm used for the second input of the FB method with the DCP algorithm, which takes the underwater image degradation process into account. As a result, it can effectively eliminate the partial reddish effect introduced by the FB algorithm and has a wide range of applications.

The remainder of this article is arranged as follows. In the second section, the proposed approach is introduced in detail. In the third section, results and discussion are illustrated to demonstrate the superior performance of the proposed approach. In the fourth section, the conclusions are presented.

The proposed approach

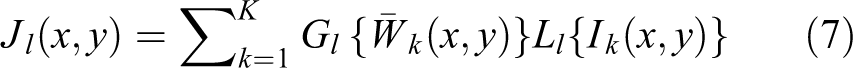

The flow chart of the proposed approach is shown in Figure 2. The proposed approach is composed of three parts: designing the input images, calculating the weights of the input images, and multiscale fusion.

Figure 2. Flow chart of the proposed approach implementation.

First, the first input image (input 1) is obtained by applying the WB algorithm to the original image to correct its color, and the second input image (input 2) is obtained by applying the DCP algorithm to input 1 to reduce the degradation due to particle scattering. Then the global contrast weight, local contrast weight, saliency weight, and exposedness weight of input 1 and input 2 are calculated, respectively, and the four weights of each image are combined and normalized to obtain the normalized weights $\bar{W}_1$ and $\bar{W}_2$. Finally, input 1 and input 2 are fused according to the normalized weights $\bar{W}_1$ and $\bar{W}_2$. To avoid undesirable halos in the output image caused by abrupt edge transitions, a multiscale fusion strategy is adopted.

Processing algorithm for input images

In our fusion strategy, a well-designed input image is the key to obtaining a high-quality output image. As shown in Figure 2, the first derived input image (input 1) processed by the WB algorithm is obtained to correct the color deviation of the original image, while the second (input 2) processed by the DCP dehazing algorithm is computed to enhance contrast and sharpness of input 1.

WB algorithm of input 1

In our experiments, input 1 was first obtained by applying a simple and efficient WB operation to the original image. The simple WB algorithm based on the shades of grey 14 with a gain factor is computationally efficient. The parameter settings are the same as in reference. 13

In this algorithm, the first input image $I_{\text{out}}$, obtained by color correction, is estimated from the original image $I$ and the value $\mu$

where

This WB method derives the first input of the fusion process from the original underwater image efficiently. However, the WB method is insufficient for the amelioration of visibility. To obtain a better-enhanced image, the second input of the fusion process is defined to enhance the contrast of the underwater image.
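As a rough illustration of the shades-of-grey idea behind input 1, the sketch below estimates the illuminant of each channel with a Minkowski mean and rescales the channels so that the estimate becomes neutral grey. It is a minimal sketch under stated assumptions, not the article's exact formula: the function name `shades_of_grey_wb`, the norm order `p = 6`, and the clipping to [0, 1] are illustrative choices, and the gain-factor settings of reference 13 are not reproduced here.

```python
def shades_of_grey_wb(image, p=6):
    """White-balance an RGB image given as rows of (r, g, b) floats in [0, 1].

    Assumption: the illuminant of each channel is estimated by the Minkowski
    p-norm mean of that channel (p = 6 is an illustrative choice).
    """
    n = sum(len(row) for row in image)
    illum = []
    for c in range(3):
        s = sum(px[c] ** p for row in image for px in row)
        illum.append((s / n) ** (1.0 / p))
    grey = sum(illum) / 3.0
    # Per-channel gain that maps the estimated illuminant to neutral grey.
    gains = [grey / max(e, 1e-6) for e in illum]
    return [
        [tuple(min(1.0, px[c] * gains[c]) for c in range(3)) for px in row]
        for row in image
    ]


# A uniformly bluish patch becomes neutral grey after correction.
bluish = [[(0.2, 0.4, 0.8)] * 3 for _ in range(2)]
balanced = shades_of_grey_wb(bluish)
```

On the uniformly bluish patch, all three channels end up equal, which is the behavior expected from a color-cast correction step; on real images the cast is reduced rather than removed exactly.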

DCP dehazing algorithm of input 2

The DCP algorithm is the other major processing step; it aims to enhance the contrast of the color-corrected image by removing the haze caused by volume scattering. To achieve an optimal contrast level, input 2 was obtained by applying the DCP dehazing algorithm initially proposed by He et al. 4 for image dehazing. The implementation steps of the dehazing algorithm 4,15 in this study are as follows:

(1) Underwater hazy image model

The model describing a hazy underwater image is

$$I(x) = J(x)\,t(x) + A\,\bigl(1 - t(x)\bigr) \tag{2}$$

where $I(x)$ is the original image, $J(x)$ is the enhanced image, and $t(x)$ is a transmission map describing the portion of the light that reaches the camera. $x = (x, y)^{T}$ denotes the pixel coordinate vector in the image, where $x$ and $y$ are the pixel coordinate values. $A = (r, g, b)^{T}$ is the estimated background color of the water body, a vector whose components $r$, $g$, and $b$ are the values of the three channels of the image.

(2) Acquisition of the transmission map

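The transmission map of the DCP dehazing algorithm is obtained by transforming equation (2) into

$$t(x) = 1 - \min_{y \in \Omega(x)} \left( \min_{c} \frac{I^{c}(y)}{A^{c}} \right) \tag{3}$$

where $c$ denotes the three RGB channels of the image, $A^{c}$ represents the value of the estimated color $A$ under channel $c$, and $\Omega(x)$ is a local patch centered at $x$.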

(3) Restore the image

The image restoration part is the same as in the traditional DCP algorithm. The calculation formula is

$$J(x) = \frac{I(x) - A}{\max\bigl(t(x),\, t_0\bigr)} + A \tag{4}$$

where $t_0$ is a lower bound that prevents the transmittance from becoming too small, which would over-brighten the enhanced image. As with the parameter settings of the DCP dehazing algorithm in air, tests verified that the best result is obtained when the DCP method removes 30–50% of the haze.

The DCP dehazing algorithm derives the second input of the fusion process from the color-corrected version. Because it takes the underwater image degradation process into account, it can effectively eliminate the partial reddish effect and enhance the contrast of the underwater image.
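The three DCP steps described in this subsection can be sketched on a tiny image as follows. This is a minimal sketch, assuming the standard dark channel formulation: the function names, the patch `radius`, the default `t0 = 0.1`, and the haze-retention factor `omega` (borrowed from the original DCP dehazing work, where a value below 1 removes only part of the haze) are illustrative assumptions, and the background color `A` is passed in rather than estimated.

```python
def dark_channel(ratio, radius=1):
    """Minimum over the colour channels and over a (2*radius+1)^2 patch."""
    h, w = len(ratio), len(ratio[0])
    pix_min = [[min(px) for px in row] for row in ratio]
    out = []
    for y in range(h):
        ys = range(max(0, y - radius), min(h, y + radius + 1))
        row = []
        for x in range(w):
            xs = range(max(0, x - radius), min(w, x + radius + 1))
            row.append(min(pix_min[j][i] for j in ys for i in xs))
        out.append(row)
    return out


def estimate_transmission(image, A, omega=1.0, radius=1):
    """t(x) = 1 - omega * dark_channel(I / A); omega is an assumed parameter."""
    ratio = [[tuple(px[c] / A[c] for c in range(3)) for px in row] for row in image]
    dark = dark_channel(ratio, radius)
    return [[1.0 - omega * d for d in row] for row in dark]


def recover(image, A, t, t0=0.1):
    """J(x) = (I(x) - A) / max(t(x), t0) + A, applied per channel."""
    return [
        [
            tuple((px[c] - A[c]) / max(t[y][x], t0) + A[c] for c in range(3))
            for x, px in enumerate(row)
        ]
        for y, row in enumerate(image)
    ]


# Synthetic check: haze a known scene colour, then undo it.
A = (0.2, 0.6, 0.8)          # assumed background colour of the water body
J_true = (0.0, 0.5, 0.3)     # scene colour with one dark channel
t_true = 0.6
hazy_px = tuple(J_true[c] * t_true + A[c] * (1.0 - t_true) for c in range(3))
hazy = [[hazy_px] * 4 for _ in range(3)]
t_est = estimate_transmission(hazy, A)
restored = recover(hazy, A, t_est)
```

In this synthetic case the scene has a channel equal to zero, so the dark channel prior holds exactly and the estimated transmission matches the true one. Real underwater scenes satisfy the prior only approximately.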

Weight calculation of input images

To further improve the quality of underwater image restoration, this article extracts the feature information of the two input images to define the fusion weight maps, that is, the global contrast weight map ($W_G$), 16 the local contrast weight map ($W_L$), 13 the saliency weight map ($W_S$), 17 and the exposedness weight map ($W_E$). The calculation results of these weight maps are shown in Figure 2.
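The article does not give formulas for the four weight maps. As one hedged example, the exposedness weight in fusion-based enhancement is commonly modeled as a Gaussian of the distance between a pixel's luminance and the mid-level value 0.5, with a standard deviation of 0.25. The sketch below assumes that form; the helper names and the use of the channel mean as luminance are illustrative simplifications.

```python
import math


def luminance(pixel):
    """Simplified luminance: the mean of the three channels (an assumption)."""
    r, g, b = pixel
    return (r + g + b) / 3.0


def exposedness_weight(image, sigma=0.25):
    """Gaussian weight that favours pixels whose luminance is close to 0.5."""
    return [
        [
            math.exp(-((luminance(px) - 0.5) ** 2) / (2.0 * sigma ** 2))
            for px in row
        ]
        for row in image
    ]


# Mid-grey pixels get the largest weight; black and white get equal, small weights.
patch = [[(0.5, 0.5, 0.5), (0.0, 0.0, 0.0), (1.0, 1.0, 1.0)]]
w_e = exposedness_weight(patch)
```

Well-exposed pixels therefore contribute more to the fused result than under- or over-exposed ones.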

Multiscale fusion technology

To generate consistent results, the four weight maps of each of the input images $I_1$ and $I_2$ are extracted. First, the four weight maps of the input image $I_1$ are summed to obtain its weight map $W_1$. Similarly, the weight map $W_2$ of the input image $I_2$ is obtained. Then $W_1$ and $W_2$ are normalized to obtain the corresponding normalized weight maps

$$\bar{W}_k = \frac{W_k}{\sum_{i=1}^{2} W_i}$$

where $k$ is the serial number of the input images and $\bar{W}_k$ is the normalized weight map of input $k$.

The enhanced image $J(x, y)$ is obtained by fusing the defined inputs with the weight measures at every pixel location $(x, y)$

$$J(x, y) = \sum_{k=1}^{2} \bar{W}_k(x, y)\, I_k(x, y)$$

where $I_k$ symbolizes the input that is weighted by the normalized weight map $\bar{W}_k$.
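The normalization and the per-pixel (single-scale) fusion described above can be sketched as follows. This is a minimal sketch on single-channel images stored as nested lists; the function names and the small constant `eps`, which guards against division by zero, are illustrative assumptions.

```python
def normalize_weights(weights, eps=1e-12):
    """Scale K weight maps so that they sum to 1 at every pixel."""
    h, w = len(weights[0]), len(weights[0][0])
    total = [
        [sum(wk[y][x] for wk in weights) + eps for x in range(w)]
        for y in range(h)
    ]
    return [
        [[wk[y][x] / total[y][x] for x in range(w)] for y in range(h)]
        for wk in weights
    ]


def fuse(inputs, norm_weights):
    """Weighted per-pixel sum of the inputs."""
    h, w = len(inputs[0]), len(inputs[0][0])
    return [
        [
            sum(nw[y][x] * img[y][x] for img, nw in zip(inputs, norm_weights))
            for x in range(w)
        ]
        for y in range(h)
    ]


# Two one-row inputs with their summed (un-normalized) weight maps.
I1, I2 = [[0.2, 0.4]], [[0.6, 0.8]]
W1, W2 = [[1.0, 3.0]], [[1.0, 1.0]]
nw1, nw2 = normalize_weights([W1, W2])
fused = fuse([I1, I2], [nw1, nw2])
```

Where the two weights are equal, the output is the plain average of the inputs; where one weight dominates, the output moves toward that input.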

To avoid undesirable halos in the output image during single-scale fusion, this article uses multiscale fusion technology. The basis is the Laplacian pyramid originally proposed by Burt and Adelson, 18 which has now become a mature image fusion technology. The specific methods are shown in reference. 13 Multiscale fusion calculation is as follows

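$$J_l(x, y) = \sum_{k=1}^{2} G_l\{\bar{W}_k(x, y)\}\, L_l\{I_k(x, y)\}$$

where $l$ denotes the pyramid level (five levels are used), $L\{I\}$ represents the Laplacian version of the input $I$, and $G\{\bar{W}\}$ represents the Gaussian version of the normalized weight map $\bar{W}$.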
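To show the mechanics of multiscale fusion in a compact form, the sketch below works on one-dimensional signals instead of images: each input is decomposed into a Laplacian pyramid, each normalized weight into a Gaussian pyramid, the levels are multiplied and summed over the inputs, and the fused pyramid is collapsed. The `[1, 2, 1]/4` blur kernel, the linear-interpolation upsampling, the three levels, and all function names are illustrative assumptions rather than the settings used in the article.

```python
def down(sig):
    """Blur with a [1, 2, 1]/4 kernel (edges replicated), keep every other sample."""
    n = len(sig)
    blurred = [
        (sig[max(i - 1, 0)] + 2.0 * sig[i] + sig[min(i + 1, n - 1)]) / 4.0
        for i in range(n)
    ]
    return blurred[::2]


def up(sig, size):
    """Upsample to `size` samples by linear interpolation between neighbours."""
    out = []
    for i in range(size):
        j = i // 2
        if i % 2 == 0 or j + 1 >= len(sig):
            out.append(sig[j])
        else:
            out.append((sig[j] + sig[j + 1]) / 2.0)
    return out


def gaussian_pyramid(sig, levels):
    pyr = [list(sig)]
    for _ in range(levels - 1):
        pyr.append(down(pyr[-1]))
    return pyr


def laplacian_pyramid(sig, levels):
    g = gaussian_pyramid(sig, levels)
    lap = [
        [a - b for a, b in zip(g[l], up(g[l + 1], len(g[l])))]
        for l in range(levels - 1)
    ]
    lap.append(g[-1])  # the coarsest level keeps the low-pass residual
    return lap


def multiscale_fuse(inputs, norm_weights, levels=3):
    fused = None
    for sig, w in zip(inputs, norm_weights):
        lap = laplacian_pyramid(sig, levels)
        gw = gaussian_pyramid(w, levels)
        contrib = [[a * b for a, b in zip(gl, ll)] for gl, ll in zip(gw, lap)]
        if fused is None:
            fused = contrib
        else:
            fused = [[a + b for a, b in zip(f, c)] for f, c in zip(fused, contrib)]
    # Collapse the fused pyramid from the coarsest level to the finest.
    out = fused[-1]
    for l in range(levels - 2, -1, -1):
        out = [a + b for a, b in zip(fused[l], up(out, len(fused[l])))]
    return out


a = [0.1, 0.4, 0.3, 0.9, 0.5, 0.2, 0.7, 0.6]
b = [0.8, 0.6, 0.2, 0.1, 0.3, 0.9, 0.4, 0.5]
only_a = multiscale_fuse([a, b], [[1.0] * 8, [0.0] * 8])
halfway = multiscale_fuse([a, b], [[0.5] * 8, [0.5] * 8])
```

With constant weights the pyramid fusion reduces to the single-scale result (the first input alone, or the plain average), which is a useful sanity check. The benefit of the multiscale form appears when the weight maps vary sharply, because the Gaussian smoothing of the weights at each level suppresses halos.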

Results and discussion

In this section, experimental results are presented to assess the performance of the proposed approach. Qualitative results, quantitative results, and applications are reported in turn. Four established underwater image processing methods are compared with the proposed approach, namely CLAHE, 10 DCP, 4 MSRCR, 12 and FB. 13 Most of the images used for the experiments come from the FB data set, 13 the U45 data set, 19 and the real-world underwater image enhancement (RUIE) data set. 20 All experiments in this article were implemented in Matlab R2018b on the same CPU (Intel i7-7700, 3.60 GHz) and share the parameter settings specified in the corresponding equations.

Qualitative results

In the qualitative assessment, eight original underwater images are displayed, and the results produced by the different methods are compared with those of the proposed approach. The images for the qualitative evaluation come from reference. 13 The eight original images were divided into two groups. The first group has different degrees of color distortion, as shown in Figure 3 (image 1–image 4), and the second group has different degrees of blur, as shown in Figure 4 (image 5–image 8).

Figure 3. Comparison of different underwater image processing methods for color distortion image. (a) Original images, (b) CLAHE, (c) DCP, (d) MSRCR, (e) FB, and (f) the proposed approach. CLAHE: contrast limited adaptive histogram equalization; DCP: dark channel prior; MSRCR: multiscale Retinex with color restoration; FB: fusion-based approach.

Figure 4. Comparison of different underwater image processing methods for hazy image. (a) Original images, (b) CLAHE, (c) DCP, (d) MSRCR, (e) FB, and (f) the proposed approach. CLAHE: contrast limited adaptive histogram equalization; DCP: dark channel prior; MSRCR: multiscale Retinex with color restoration; FB: fusion-based approach.

Figure 3(a) to (f) compares the different underwater image processing methods on color-distorted images. Figure 3(b) shows the results of CLAHE. Although the CLAHE algorithm enhances the contrast of the image, the color cast remains in the processed image. As shown in Figure 3(c), because DCP lacks color compensation, a color cast also remains in the processed image; the overall color is bluish, and the method is ineffective for images with various color distortions. MSRCR, shown in Figure 3(d), has many manually adjusted parameters. A given set of parameters can restore some color-distorted images, but when it is applied to other color-distorted images, improper color compensation and gain make the image appear grayish-white, with many halos at the edges. In Figure 3(e), the FB method performs well on hazy images. However, this method can introduce a local reddish effect in processed images. As shown in Figure 3(f), underwater images enhanced by the proposed approach show neither under-enhancement nor over-enhancement, and their colors look natural in comparison with the results of the abovementioned methods.

Figure 4(a) to (f) compares the different underwater image processing methods on hazy images. Figure 4(b) presents the results of the CLAHE algorithm for different images. For slightly hazy images, the contrast was improved and the images were clear. However, for severely hazy images, some local areas were too bright or too dark. As shown in Figure 4(c), the DCP method improved the image contrast of the second group of underwater images, but as the backscattering intensified, the algorithm gradually failed. Figure 4(d) shows the results of the MSRCR algorithm. The MSRCR enhancement algorithm can improve contrast and restore color. However, it suffers from noise amplification in local regions, which may lead to serious color mottling. As shown in Figure 4(e), the FB algorithm performs excellently on severely hazy images, but for slightly hazy images, it may lead to excessive enhancement and an overbright image. In Figure 4(f), the proposed approach exhibits superior performance for images with either slight or severe backscatter. For example, the backscattering of the eighth image (image 8) is the most serious. After processing with the proposed approach, the cylindrical outline behind the diver is visible, and the blue–green occlusion is better removed.

Quantitative results

In the quantitative evaluation, the proposed approach is compared with the other approaches on a small sample test of 30 images from the U45 data set 19 (Figure 1A(a) in Appendix 1) and a large sample test of 400 images from the RUIE data set 20 and the Internet. The 400 images are divided into four groups, that is, G1: bluish images, G2: greenish images, G3: blue-and-greenish images, and G4: random images. A nonreference underwater image quality measure (UIQM) 21 is employed to quantify the results of the five methods, considering that high-quality images share universal features: genuine colors, high contrast, and fine sharpness. The default settings for UIQM are used in this study. A higher UIQM value indicates that the image performs better in terms of color, contrast, and sharpness.

Table 1 lists the UIQM values for the 30 images from the U45 data set. 19 Due to page limitations, the processed results of the 30 images are shown in Figure 1A(b) to (f) in Appendix 1. The maximum value in each row of the tables is marked in bold and represents the best result among the compared methods. Note that the DCP method is excluded from this comparison because its poor qualitative results (Figure 1A(c) in Appendix 1) are inconsistent with its comparatively good quantitative scores. Table 1 reveals that the proposed approach gives the highest UIQM value for 12 of the 30 tested images. Although the proposed approach does not perform best on every image, it achieves the highest average UIQM value, which demonstrates that it exceeds the compared methods in terms of UIQM and achieves better contrast and sharpness in the processed images.

Table 1. Quantitative results in terms of UIQM.

Bold font represents the maximum value of underwater image quality measure (UIQM) among the four processing methods (CLAHE, MSRCR, FB, Proposed approach).

CLAHE: contrast limited adaptive histogram equalization; DCP: dark channel prior; MSRCR: multiscale Retinex with color restoration; FB: fusion-based approach; UIQM: underwater image quality measure.

Table 2 presents the average UIQM values for the 400 underwater images. 20 As presented in Table 2, the proposed approach also performs outstandingly on the 400 natural images, obtaining the highest average UIQM value. Moreover, the average UIQM value of each group of images for the proposed approach is greater than that of the FB method, indicating that the proposed approach performs better than the FB method. The CLAHE and DCP methods also score well on these images. However, the processed images in Figure 1A(b) and (c) in Appendix 1 show that the results of the CLAHE and DCP methods are not impressive because of severe color distortion; for the DCP algorithm in particular, the processed image is almost the same as the original image. In conclusion, compared with the three other methods, the proposed approach performs well in terms of UIQM, which objectively demonstrates that it can effectively enhance the contrast and details of degraded underwater images.

Table 2. Average values of UIQM for 400 underwater images.

CLAHE: contrast limited adaptive histogram equalization; DCP: dark channel prior; MSRCR: multiscale Retinex with color restoration; FB: fusion-based approach; UIQM: underwater image quality measure.

To comprehensively evaluate the performance of the proposed method, we compare the FB algorithm and its improved version (the proposed approach) using traditional image evaluation standards, that is, mean square error (MSE), peak signal-to-noise ratio (PSNR), and processing time. Table 3 presents the MSE, PSNR, and processing time of the FB algorithm and the proposed approach on 400 natural underwater images. 20 The lower average MSE values and higher average PSNR values indicate that the proposed approach introduces less noise and retains more valuable image information than the FB method. Moreover, the processing time of the proposed approach is almost the same as that of the FB method. Compared with the FB method, our approach requires less computing time for low-resolution images (300 × 400) and slightly more time for high-resolution images (600 × 800 and 1200 × 1600), which illustrates that our approach has certain advantages in processing low-resolution images. Overall, the results objectively demonstrate the superiority of the proposed approach over the FB method for underwater image enhancement.
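For reference, MSE and PSNR can be computed as in the sketch below. This is a minimal sketch for single-channel images stored as nested lists with an assumed peak value of 255; the article does not state which image serves as the reference, so `ref` here simply stands for whatever reference image is chosen.

```python
import math


def mse(ref, test):
    """Mean of the squared pixel differences between two equally sized images."""
    diffs = [
        (a - b) ** 2
        for ref_row, test_row in zip(ref, test)
        for a, b in zip(ref_row, test_row)
    ]
    return sum(diffs) / len(diffs)


def psnr(ref, test, peak=255.0):
    """Peak signal-to-noise ratio in decibels; infinite for identical images."""
    err = mse(ref, test)
    if err == 0:
        return float("inf")
    return 10.0 * math.log10(peak ** 2 / err)


ref_img = [[0, 0], [0, 0]]
test_img = [[10, 10], [10, 10]]
err = mse(ref_img, test_img)      # 100.0
quality = psnr(ref_img, test_img)  # about 28.13 dB
```

Because PSNR is a decreasing function of MSE for a fixed peak value, a lower MSE always corresponds to a higher PSNR on the same image pair.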

Table 3. MSE, PSNR, and processing time values of 400 underwater images.

MSE: mean square error; FB: fusion-based approach; PSNR: peak signal-to-noise ratio.

Applications

The goal of image enhancement is to provide high-quality images for further applications, such as feature matching, edge detection, and target recognition. Aiming to verify the utility of the proposed approach, an application test is carried out. The original implementation of speeded-up robust features (SURF) 22 is applied exactly in the same way in six cases, namely the original image, CLAHE, DCP, MSRCR, FB, and the proposed approach.

Figure 5 presents the number of correctly matched SURF feature points. The numbers of matches are 2 for the original images, 15 for images processed by CLAHE, 9 for images processed by DCP, 5 for images processed by MSRCR, 19 for images processed by FB, and 21 for images processed by the proposed approach. The results demonstrate that the proposed approach significantly increases the number of matched pairs of keypoints by enhancing the global contrast and the local sharpness of underwater images.

Figure 5. Application tests. (a) Original images, (b) CLAHE [10], (c) DCP [4], (d) MSRCR [12], (e) FB [13], and (f) the proposed approach. CLAHE: contrast limited adaptive histogram equalization; DCP: dark channel prior; MSRCR: multiscale Retinex with color restoration; FB: fusion-based approach.

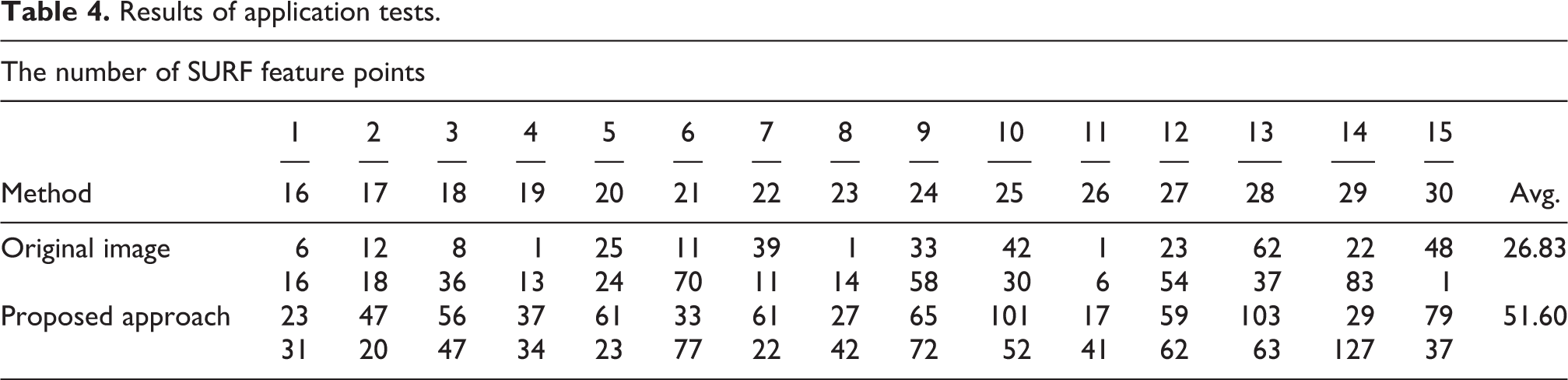

To further verify the utility of the proposed method, comparative experiments before and after image processing were performed. We employed the SURF operator to compute feature points for each processed image and its affine-transformed counterpart, and then matched the feature points between the pair of images. Table 4 lists the number of correctly matched SURF feature points for the 30 images from the U45 data set. 19 The average number of correct SURF matches for images processed by our method is far larger than that for the original images. The high scores achieved by the proposed approach can be attributed to the pleasing color and clear visibility of the enhanced images. Therefore, the application tests further illustrate the effectiveness and practicality of the proposed approach in feature extraction.

Table 4. Results of application tests.

Conclusion

In this article, an improved approach for eliminating the local reddish effect and reducing image noise is proposed. The approach first applies the WB and DCP dehazing techniques to the original image, respectively, which yields two input images through color correction and contrast enhancement. The restored image is then obtained with a multiscale fusion strategy based on weight maps constructed by combining the features of global contrast, local contrast, saliency, and exposedness.

The results show that images enhanced by the proposed approach have vivid color, improved contrast, and a natural appearance. The qualitative results show that the proposed approach achieves the goal of correcting the color cast and removing the haze of underwater images. The quantitative results demonstrate that the proposed approach also maintains excellent performance on images with different levels of distortion and haze, achieving the highest average UIQM value among the compared methods. Moreover, the lower average MSE values and higher average PSNR values indicate that the proposed approach introduces less noise and retains more valuable image information than the FB method. Consequently, the experimental results demonstrate the effectiveness of the proposed approach in underwater image enhancement.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by the Scientific and Technological Development Project of Shandong Province (Nos. 2019GGX104013 and 2019GGX101063) and a Project of Shandong Province Higher Educational Youth Innovation Science and Technology Program (No. 2020KJN002).