Abstract

Fire is a fierce disaster, and smoke is the early signal of fire. Since such features as chrominance, texture, and shape of smoke are very special, a lot of methods based on these features have been developed. But these static characteristics vary widely, so there are some exceptions leading to low detection accuracy. On the other side, the motion of smoke is much more discriminating than the aforementioned features, so a time-domain neural network is proposed to extract its dynamic characteristics. This smoke recognition network has these advantages:(1) extract the spatiotemporal with the 3D filters which work on dynamic and static characteristics synchronously; (2) high accuracy, 87.31% samples being classified rightly, which is the state of the art even in a chaotic environments, and the fuzzy objects for other methods, such as haze, fog, and climbing cars, are distinguished distinctly; (3) high sensitiveness, smoke being detected averagely at the 23rd frame, which is also the state of the art, which is meaningful to alarm early fire as soon as possible; and (4) it is not been based on any hypothesis, which guarantee the method compatible. Finally, a new metric, the difference between the first frame in which smoke is detected and the first frame in which smoke happens, is proposed to compare the algorithms sensitivity in videos. The experiments confirm that the dynamic characteristics are more discriminating than the aforementioned static characteristics, and smoke recognition network is a good tool to extract compound feature.

Keywords

Introduction

About 6–7 million times of fires happen every year, which cause huge economic losses. About 0.2% of gross domestic product of the Global are destroyed and 1 billion people are killed by fire. 1 This is only the direct loss, and the indirect loss is about five times of the direct loss. So, it is very important to detect fire and provide an alarm as early as possible. It is well-known that smoke always happens before flame and smoke is difficult to be sheltered. Therefore, it is a good idea to detect smoke rather than flame.

Many methods are developed to detect smoke based on the chrominance, 2,3 texture, 4 –7 shape, 7,8 frequency, 9 transparency, and so on. 10 But smoke chrominance is widely varied according to fire material, and its varying range is too wide to cover all samples. The common smoke chrominance are black, white, gray, and pink. Smoke also has many types of textures, which is related to frequency. The frequency of heaven smoke is easy to obtain, but this computing is difficult for the one of thin smoke because the frequency of the latter is flooded by chaotic environments. So, the methods based on chrominance, texture, frequency, and transparency are difficult to differentiate smoke from other similar objects. If smoke is thin or less, which usually happens at the early stage of fire, the classifying accuracies would be more worse. Haze and fog are usually mistaken as smoke. That is also the reason why these traditional methods are not enough sensitive.

Smoke is always rising, so it is a good idea to make use of motion. Some methods have been developed based on this characteristic, 7,10 –14 and these works are concluded by Luo et al. 15 Smoke motion is very different from other objects, but there is still some confusing objects, such as waving flags, climbing vehicles on mountain, moving light, and so on. 11 Though the appearances of flag, vehicle, and light are completely different from the one of smoke, their motion characteristics are similar. These exceptions usually happen when smoke is assumed to move vertically up. If this hypothesis is not assumed and the moving direction is random, there will be more exceptions. Though it is true that smoke always goes upwardly, there is a implicit assumption that an axis of the camera frame should be perpendicular to the horizon. In most cases it is met, but it is a hypothesis with some exceptions.

Though dynamic characteristics of smoke is different from many others, it is difficult to describe and extract this motion information by hand. That is why there is not many researches to detect smoke by dynamic characteristics. Deep learning is a good tool to extract features, so this article proposes a deep neural network to detect smoke. This network focuses on time-domain features of consecutive images. Though there is no such benchmark as ImageNet, COCO to evaluate the proposed method, a contrasting experiment is still conducted based on several small data sets, such as visor, 16 fesb, 17 yfn, 18 and VisiFire. 19 The experiments validate that: (1) dynamic characteristics is extraordinarily discriminating with better scores even based on these extern data sets and (2) smoke recognition network is a good tool to extract compound feature. The pipeline of the proposed network is illustrated in Figure 1. The probabilities of smoke, cloud, fire, flag, fog and haze, vehicle, and others of a typical sample are [99.88 0 0 0 0.12 0 0], respectively, and the maximum, 99.88%, shows that the image includes smoke.

The pipeline of the proposed network. The probabilities of smoke, cloud, fire, flag, fog and haze, vehicle, and others of the typical image are [99.88 0 0 0 0.12 0 0], respectively, and the maximum, 99.88%, is corresponding to smoke. The network extracts the dynamic characteristics and distinguishes smoke from cloud, fire, waving flags, fog and haze, vehicle lighting at night, and climbing vehicles, which usually deceive many others methods. (a) The proposed network. (b) A typical sample.

The rest of this article is organized as four sections. In the second section, we describe a brief state of the art in smoke dynamic characteristics. The third section presents the details of our proposed method. The fourth section highlights some experiments with comparison with other methods. Finally, the fifth section provides a conclusion.

Relevant work

Such static characteristics as chrominance, 2,3 texture, 4 –6 shape, 7 frequency, 9 and transparency 19 are adopted to detect smoke, but the wide-ranging varieties of these characteristics make the recognition difficult. 15 Even some of these characteristics are synthesized with machine learning methods. 4,8,19 –23,24 Based on surface wave and Markov tree model to extract smoke textures, Ye et al. adopted support vector machine (SVM) to judge whether there is any smoke. 4 After synthesizing the edge blurring, the gradual changing of smoke energy and the gradual changing of smoke color, Lee et al. also adopted SVM to make decisions. 20 After spectral features are extracted, Li et al. 25 classified smoke, cloud, and underlying surface by a back-propagation neural network. Yuan et al. 8 extracted Haar-like features and statistical features, and made decisions by AdaBoost.

Dynamic characteristics, such as moving speed, 11 moving direction, 12 moving trail, 26 and the changing of smoke contour during moving, 7 which usually are accompanied with tracking algorithms, 27 –29 have been already adopted to detect smoke. Yu et al. 11 adopted optical flow to trace smoke. Lu et al. 13 adopted the spatiotemporal histogram to calculate the speed and acceleration information of smoke and judged whether it is smoke. Yuan 26 adopted a new frame to collect the moving areas and created an accumulative motion model to estimate the motion orientation of smoke. Calderara et al. 14 detected scope of moving smoke through energy variation. Tian et al. 6 adopted NRLBP (no repeated LBP algorithm) to extract smoke features, recorded the continual changes in time domain, and made the final decision by SVM. These works have been proved that dynamic characteristics is discriminating, but the distinguishing ability is not very outstanding because the dynamic characteristics are extracted into some hand crafted features. And there are some hypotheses underlying. The commonly used hypothesis is that camera should be fixed with one axis being vertical, so smoke rises upward. It is helpful to improve the accuracy but the improvement is limited. It is still difficult to be applied in surveillance scenario.

Since both static and dynamic characteristic are helpful, some synthesized method are proposed. Calderara et al. 30 used Bayesian model combined texture and color features of moving objects to detect smoke. But the result is still worrying. The confusing objects, such as cloud, fog, and haze, cannot be rightly distinguished. The reason might be that smoke are too various, and hand crafted features are not robust enough to include all diversification. So, there is still not an automatic product which could recognize smoke for other objects.

Deep learning is a new branch of machine learning with the capability to automatically extract features by a series of convolutional layers. 31 Convolutional neural network (CNN) has been proved to be good enough to extract static features from images with an outstanding discriminatory power, equaling or exceeding human level. 32,33 Sheng et al. extracted smoke static features by CNN and dynamic characteristic by recurrent neural network to recognize smoke. 34 But the 2D convolution kernel could not collect the temporal information between the consecutive images. Fortunately, Tran et al. 35 has proposed a 3D convolution (C3D) network that included 3D convolution kernel and 3D pooling layer for action recognition, which could automatically extract the temporal and spatial features and achieve an accuracy of 85.2% on UCF-101 data set. Inspired by this network, a simplified network is proposed to extract dynamic characteristics, which achieved an accuracy of 98.07% in visor data set, exceeding the best score of today, 85.20%. 32

The proposed method

The network structure

The 3D ConvNet is very suitable for the spatiotemporal signals. 35 Compared with 2D ConvNet, 3D ConvNet can better model temporal information through 3D convolution and 3D pooling. In 3D ConvNets, convolution and pooling operations are performed spatially and spatially. While in 2D ConvNets, they are only performed spatially. The input of our network is 16 consecutive images, and the kernels of all convolution and pooling layers are 3D, defined by depth, length, and width. For examples, the size of all convolutional kernels are 3 × 3 × 3, which has been proved to be better than 7 × 7 × 7 and 5 × 5 × 5 in the smoke task, and the one of all pooling kernels is 2 × 2 × 2. 35 The convolutional layers can find various localized features, and output different features. The pool layers condense the large amount features from the convolutional layers into small vectors.

Our network has 13 layers, as shown in Figure 2. The network includes five convolution layers, and the channels of the first to fifth convolutional layers are 64, 128, 256, 256, and 256, respectively. The strides of all convolution layers are: (1) 5 max pool layers are included and their strides are 2. (2) After these layers, there are two fully connected layers, each of which has 1024 hidden neural units. A softmax layer follows the last fully connected layers, which converts video clip with size of 16 × 3 × 112 × 112 into a 7 × 1 output during a transitional 512 × 1 × 4 × 4 result. The output is a probability array with seven elements, which represent the probabilities of smoke, cloud, fire, flag, fog and haze, vehicle, and others, respectively. The position with the maximum value in the array is the most possible category. For example, the result [

Our network has eight major layers, including five convolutional and five pool layers, two fully connected layers and a softmax layer. The size of all convolutional kernels are 3 × 3 × 3, and the one of all pooling kernels is 2 × 2 × 2.

The loss function is defined to drive a backpropagation function to update the network parameters. It has two parts, one being the difference between the probability of the right classification

where

The sample data set

The public data sets, such as UCF-101 and Sports-1M, lack smoke samples. So, 108 videos is selected from YouTube and four videos from VisiFire as a smoke data set. Each video is randomly cut into video clips at different parts with size of 3 × 112 × 112 and 16 consecutive frames and be assigned with the corresponding labels. The positive subset includes samples from various scenes, such as indoor, outdoor, urban, forest, grassland, open areas, and so on, and from various perspectives. The negative subset includes samples without smoke, and the samples with some confusing objects are specifically appended, such as cloud, fire, waving flags, waving leaves, fog and haze, vehicle lighting at night, climbing vehicles, and others. Both the positive subset and the negative subset include samples of distant view and close view. The samples of the data set is shown in Table 1 and six typical clips are shown in Figure 3.

The sample data set.

Examples of the positive and negative subsets. Every sample are illustrated with four frames. (a) Six samples of the positive subset include farm, indoor, forest, house, and ground. (b) Six samples of the negative subset include fire, cloud, flag, vehicle lighting, and waving leaves. The perspectives of these samples are various, including overlook, side looking, and looking down.

The training process

The hardware platform is configured with Nvidia GTX1080 and Intel i7-6700k. The relevant parameters of the training process are listed in Table 2.

The hyper parameters.

The dynamic characteristics revealed by deep neural network

Our network costs about 18 min 16 s every epoch, and achieves the best accuracy 90.10%. The loss values and the test accuracies are evolving along the training epochs, as shown in Figure 4.

The loss values and the test accuracy during the training process. The downward green curve is the loss values and the upward blue curve is the test accuracy. The horizontal axis is the training epochs. The left vertical axis is loss values and the right is accuracies.

To demonstrate the dynamic characteristics revealed by deep neural network, four typical channels of the 1st, the 2nd, the 3rd, and the 4th convolution layers are shown in Figure 5, corresponding to the 3rd column, the 4th column, the 5th column, and the 6th column. Only the strongest responses of every convolutional layer are listed. For example, the 8th channel is the strongest response of the 1st convolution layer, which is shown in the 3rd column. The 3rd column, the response of the 1st convolutional layer look like optical flow, and the ones of the 4th convolution layer look like motion vectors of smoke. The higher the level of the convolution layer, the more abstract the meaning, and the more difficult to understand its physical meaning. So, only some responses of the first four convolutional layer are shown. It is clear that the network has learned to find the changing areas and provides dynamic characteristics, which means that the network could effectively extract temporal and spatial feature information. Of course, the sizes of the 3rd column, the 4th column, the 5th column, and the 6th column should be a half of the preceding one because the sizes of the pooling kernels are 2 × 2 × 2, but be enlarged as the same size in Figure 5.

A part of feature maps of the proposed network. Every row is a sample of feature maps. The first row is a smoke sample and the second row is a cloud sample. (a) and (b) the frames of a video clip with the size of 112 × 112. (c) The responses of the 8th convolutional channel of the 1st convolutional layer with the size 112 × 112. (d) The responses of the 23rd channel of the 2nd convolutional layer with the size 56 × 56. (e) The responses of the 37th channel of the 3rd convolutional layer with the size 28 × 28. (f) The responses of the 45th channel of the 4th convolutional layer with the size 14 × 14. The sizes of (d) to (f) are a half of the preceding one.

Experiments

Two experiments are conducted to validate the network. The first one is to measure how good the network judges whether there is smoke by classifying accuracy. Two tests are executed to demonstrate the accuracy of the proposed network, one based on our data set and the other one based on four external data sets. The second experiment is to measure how sensitive the network could be, and a new metric is proposed. The proposed network finds smoke averagely at the 23rd frame, which is more sensitive than other algorithms.

The classifying accuracy

The proposed network recognizes smoke, fire, fog and haze, cloud, vehicle, flag, and others on our data set with accuracy 78.00%, 84.06%, 99.43%, 100.00%, 95.00%, 95.00%, and 91.00%, respectively. Besides, the average classifying accuracy is 87.31%. The classifying accuracy is shown in Figure 6.

The classifying accuracy. The rows represent the Top-1 accuracy of each category. The accuracy of smoke is 78.00%. The gray dotted line is the average accuracy, 87.31%.

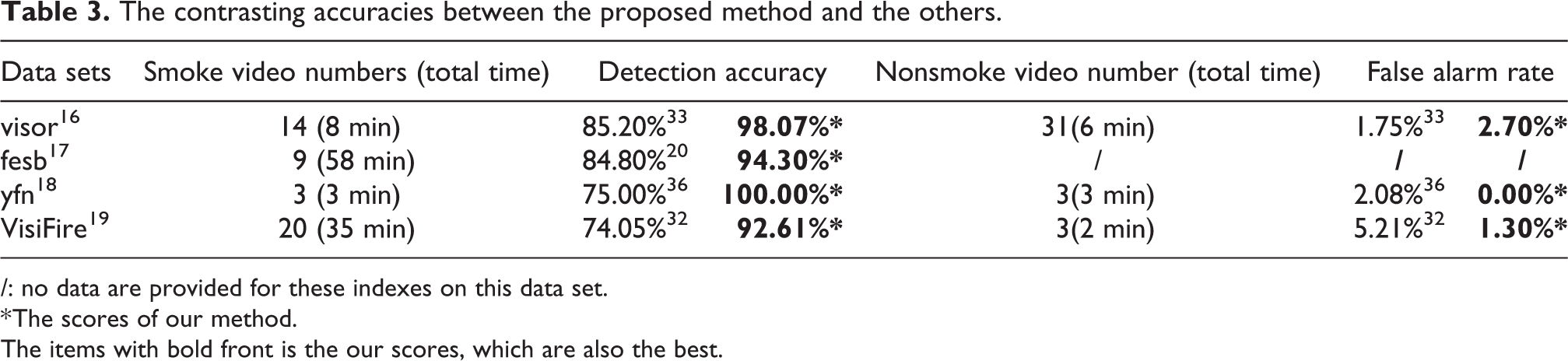

To compare the proposed method with other methods, a contrasting experiment is executed. To be fair, our data set for training and preceding testing is abandoned and four external famous data sets, which are visor, 16 fesb, 17 yfn, 18 and VisiFire, 19 are selected to conduct the experiment. As shown in Table 3, our smoke detecting rates achieve 98.07%, 94.30%, 100%, and 92.61% on visor, fesb, yfn, and VisiFire, respectively, and the corresponding ones are 85.20% contributed by Sebastien et al. 33 on visor, 84.80% contributed by Lee et al. 20 on fesb, 75.00% contributed by Li et al. 36 on yfn, and 74.05% contributed by Zhang et al. 32 on VisiFire. Our false alarm rates achieve 2.70% on visor, 0.00% on yfn, and 1.30% on VisiFire, and the corresponding ones are 1.75%, 33 2.08%, 36 and 5.21%, 32 respectively. It is obvious that the proposed method is much better than 2D CNN network 32 and traditional method 36 with the best scores in all metrics.

The contrasting accuracies between the proposed method and the others.

/: no data are provided for these indexes on this data set.

* The scores of our method.

The items with bold front is the our scores, which are also the best.

Due to the coexistence of smoke and fire, the images whose predicted label are fire with Top-1 hit, usually include smoke also. The reason might be that fire is more obvious than smoke when both are visible. So, this brings down the classifying accuracy of smoke. So, the recall of smoke is provided to show how good the network is in recognizing smoke. The mean recall is 82.40%, which is shown in Figure 7.

The recall of smoke. The purple points represent the precisions in corresponding recall. The mean precision is 82.40%.

The sensitiveness

The sensitiveness is the serial number difference between the frame which are recognized as smoke and the frame in which smoke has occurred. The sensitiveness is very important to alarm early fire as soon as possible. The test is executed on the four external data sets. Our network finds smoke at the 25th, 16th, and 32nd frame. The mean sensitiveness of the proposed method is 23, which is based on the four external data sets. The corresponding ones are 150 30 on visor, 88 9 on yfn, 99 8 on VisiFire, and 91 11 on VisiFire. The sensitiveness is shown in Table 4.

The contrasting sensitiveness between the proposed method and the others.

/: no data are provided for these indexes on this data set.

The mean sensitiveness is provided to contrast these methods. The mean sensitiveness of the proposed network 8,9,11,30 are 23, 88, 91, 99, and 150, respectively. This testifies that the proposed network is more sensitive than the AdaBoost method 8 and the compound method, 30 which is shown in Figure 8. This is very meaningful for fire alarm.

The contrasting sensitiveness between the proposed method and the others.

The test speed

The test speed, a round of a forward pass, by the proposed network is 51.8 fps in our hardware platform, an Intel i7-6700k CPU with a Nvidia GTX1080 GPU. It is enough for surveillance applications.

The test samples

Some typical scenes, such as indoor, outdoor, urban areas, forest, grassland, and open areas, are tested in this experiment. The samples with various perspectives are included, such as from overlook, looking up, aerial view, and looking horizontally. Thin and heavy smoke, even with various colors are included. Twenty five samples are shown in Figure 9. The 1st and the 2nd are outdoor and indoor samples, the 3rd is the smoke in a forest, and the 5th happens in a factory. The smoke of the former four samples are white, and the one of the 5th sample is black. In fact, smoke with different appearances, shown in samples from the 1st to the 10th, and the 11th to the 15th, are recognized rightly. The 12th, the 13th, and the 14th samples are identified as fire with a small probability of smoke. In fact, there is some tiny smoke. The 15th sample is classified as smoke with a small probability of fire. The 18th and the 20th samples are recognized as fog and haze and cloud, respectively with high confidence, though they look like smoke and some human cannot classify rightly. The objects with similar appearance are identified clearly. The 21st and 22nd samples are moving vehicle lights from far to close. The 23rd sample is a waving flag. The objects with similar motions, for examples, waving flags, climbing vehicles on mountain, and moving light, are identified clearly.

The testing samples. The title of each subplot is the predicted label with the corresponding probability. For fire, which usually coexists with smoke, two labels and corresponding two probabilities are listed. For these case, the maximum one is usually corresponding to fire and the second one is corresponding to smoke.

This experiment illustrates that the method is generally used without any hypothesis and can accurately distinguish smoke with similar appearance objects and similar motion objects.

Conclusion

The traditional methods based on static features cannot well distinguish smoke from many other similar objects. The traditional methods based on dynamic features could not extract enough temporal features from videos. The features of these methods are usually hand crafted, which are not enough to capture the smoke characteristics. So, multiple model features, including static features such as chrominance, texture, shape, and dynamic features such as rising up, flickering, have to be integrated to improve accuracy. On the one hand, some hypotheses, such as color, rising vertically to sky, and so on, underlie these methods, but restrict their application fields. On the other hand, such methods are not sensitive to thin smoke.

Consequently, the proposed method has been validated that dynamic characteristics is discriminating enough to classify smoke, and the discriminating capability is even better than the one of the compound methods which integrate static and dynamic features. This also proves that neural network is outstanding to extract compound features in contrast to the hand crafted methods.

Because the fire videos which happened from the embryonic stages to the violent stages are rare, all samples on hand are collected into this set. If exact samples are provided, the network would be more useful.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Natural Science Foundation of Zhejiang Province under Grant LQ19F020005, the Project of Science and Technology Plans of Wenzhou City under Grant 2018ZG021, the Natural Science Foundation of China under Grants 61672442, the Joint Funds of 5th Round of Health and Education Research Program of Fujian Province (No. 2019-WJ-41), the Joint Funds of Scientific and Technological Innovation Program of Fujian Province (No. 2017Y9059)) and Natural Science Foundation of Fujian Province under Grants 2018J01574.