Abstract

Owing to the motion constraints of the 4-R(2-SS) parallel robot, it is difficult to accurately calculate the translation component of hand–eye calibration with the existing model solving method. In addition, camera calibration error, robot motion error, and invalid calibration motion poses make fast and accurate online hand–eye calibration difficult to achieve. Therefore, we propose a hand–eye calibration method with motion error compensation and vertical-component correction for the 4-R(2-SS) parallel robot by improving the existing eye-to-hand model and solving method. First, the single-camera eye-to-hand model is improved, and the robot motion error in the improved model is compensated, to reduce the influence of camera calibration error and robot motion error on model accuracy. Second, the vertical component of hand–eye calibration is corrected based on the vertical constraint between the calibration plate and the end effector of the parallel robot, so that the pose and motion error in calibration of the 4-R(2-SS) parallel robot can be calculated accurately. Third, the nontrivial solution constraint of the eye-to-hand model is constructed and used to remove invalid calibration motion poses and to plan the calibration motion. Finally, the proposed method was verified by experiments with a fruit sorting system based on the 4-R(2-SS) parallel robot. Compared with random motion and with the existing model and solving method, the average time of online calibration based on planned motion decreases by 29.773 s, and the average error of calibration based on the improved model and solving method decreases by 151.293. The proposed method can effectively improve the accuracy and efficiency of hand–eye calibration of the 4-R(2-SS) parallel robot and thereby enable accurate and fast grasping.

Introduction

Robot-based automatic sorting of fruits is of great significance to the automated, large-scale, and accurate development of agricultural production and agricultural product processing. 1–3 During the automatic sorting of fruits, accurate and reliable calculation of the grasping pose is the precondition for accurate, fast, and nondestructive grasping control of the robot. 4 Currently, the main methods of grasping pose calculation include infrared image analysis, spectrum analysis, and machine vision. Compared with the other methods, machine vision has the advantages of being noncontact, adaptable, and cost-effective, so it is more suitable for grasping pose calculation of fruits. 5 However, grasping pose calculation based on machine vision poses a challenging problem: online hand–eye calibration with high accuracy and efficiency. 6–8 In addition, the parallel robot, which features strong rigidity, stable structure, and high precision, places greater demands on the accuracy and efficiency of online hand–eye calibration. 9,10 Currently, hand–eye systems are divided into eye-to-hand and eye-in-hand systems according to the pose relationship between the camera and the end effector of the robot. In an eye-to-hand system, the camera is fixed outside the robot. 11 The camera pose in the world coordinate system remains unchanged, and the pose relationship between the camera and the end effector changes with the robot motion. 12 In an eye-in-hand system, the camera is fixed on the end effector and moves with the robot. 13,14 The pose relationship between the camera and the end effector remains unchanged. The eye-in-hand system can move the camera close to the object to acquire a clear image, but it is difficult to ensure that the object appears in the field of view of the camera. In addition, camera shake during motion and image smear caused by acceleration during image acquisition would affect the accuracy of calibration. The eye-to-hand system with a stationary camera has the advantages of high detection accuracy and good stability, and is therefore more suitable for the fruit sorting system of a parallel robot with limited work space. 15

The main methods of hand–eye calibration include the measuring method, the active motion calibration method, the self-calibration method, and the calibration method based on a calibration object. Because the measuring method needs the aid of high-accuracy measuring equipment, such as an optical coordinate measurement machine, 16 its cost is high and its process is complicated. The active motion calibration method places strict requirements on the motion accuracy of the vision system, so it is not suitable for an eye-to-hand system with a stationary camera. 17 In addition, active motion calibration is difficult to realize because the robot motion control and trajectory calculation are difficult to achieve accurately and efficiently. 18,19 The self-calibration method uses only the camera parameters and the constraints of the robot to realize hand–eye calibration, which makes the calculation complex and the accuracy difficult to improve. 20 The calibration method based on a calibration object establishes the relationship between the camera and the robot through the calibration object to reduce the difficulty of pose calculation. It can effectively improve the accuracy and speed of hand–eye calibration. 21,22 Therefore, this article uses the calibration method based on a calibration object to calibrate the eye-to-hand system.

Currently, the solving methods for the hand–eye model mainly include the homogeneous transformation method, 23,24 the matrix rearrangement method, 25 the method based on matrix vectorization and Kronecker product, 26 the dual quaternion method, 27 and the Lie group method. 28 In the homogeneous transformation method, the rotation matrix is expressed as a rotation by an angle around an axis and is obtained by calculating the unit vector of the rotation axis and the rotation angle. In the matrix rearrangement method, the rotation matrix is obtained by rearranging matrix elements to transform the implicit rotation equation of the hand–eye model into an explicit equation. However, the obtained rotation matrix is not an orthogonal matrix, and solving the translation vector from a nonorthogonal rotation matrix leads to a greater translation error. The dual quaternion method uses dual quaternions to represent motion in space. The Lie group method adopts Lie group theory to transform the minimization problem into a least-squares fitting problem when solving equations based on multiple sets of observations, which yields a simple and clear solution. The method based on matrix vectorization and Kronecker product transforms the hand–eye model equations into explicit equations that are easy to solve. Compared with the other methods, it can calculate the rotation matrix and translation vector of the hand–eye model simultaneously, which reduces the transfer error. It has the advantages of good robustness, high accuracy, and high calculation speed, and is therefore more suitable for the online hand–eye calibration and grasping pose calculation of the 4-R(2-SS) parallel robot based on machine vision.
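The remark above about nonorthogonal rotation matrices can be illustrated with a small numerical sketch. It is not taken from the article: the SVD-based projection onto the nearest rotation matrix is a standard technique swapped in for illustration, and the function names `nearest_rotation` and `orthogonality_error`, the noise level, and the test rotation are all assumptions.

```python
import numpy as np

def nearest_rotation(M):
    """Project a 3x3 matrix onto the closest rotation matrix (Frobenius norm)."""
    U, _, Vt = np.linalg.svd(M)
    # Flip the last singular direction if needed so that det(R) = +1.
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(U @ Vt))])
    return U @ D @ Vt

def orthogonality_error(R):
    """Frobenius norm of R^T R - I; zero for an exactly orthogonal matrix."""
    return np.linalg.norm(R.T @ R - np.eye(3))

# Hypothetical example: a 30 degree rotation about z, perturbed by small noise
# to mimic a rotation estimate that is no longer orthogonal.
theta = np.deg2rad(30.0)
R_true = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta),  np.cos(theta), 0.0],
                   [0.0,            0.0,           1.0]])
rng = np.random.default_rng(0)
R_noisy = R_true + 0.01 * rng.standard_normal((3, 3))
R_fixed = nearest_rotation(R_noisy)

print("orthogonality error before:", orthogonality_error(R_noisy))
print("orthogonality error after: ", orthogonality_error(R_fixed))
```

Re-orthogonalizing in this way restores a valid rotation, but it does not by itself undo the error already propagated into a translation vector solved from the nonorthogonal estimate.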

The existing solving methods for hand–eye model require the robot to perform multidirectional rotation and large-scale translation during calibration. For robots with degree of freedom (DOF) constraint, it is difficult to meet this requirement. Therefore, some scholars proposed a calibration method for the robots with DOF constraint by limiting the rotation angle of camera to nonzero during calibration. However, for the robots with rotational constraint, it is difficult to calculate the

Therefore, for the 4-R(2-SS) parallel robot with 4-DOF and rotational constraint, it is difficult to accurately calculate the eye-to-hand model parameters with existing hand–eye calibration methods. In addition, the motion error of the robot, the calibration error of the camera, and invalid calibration motion poses of the robot make it difficult to achieve fast and accurate online hand–eye calibration and grasping pose calculation of the 4-R(2-SS) parallel robot. 33 In this article, an online hand–eye calibration method with motion error compensation and vertical-component correction for the 4-R(2-SS) parallel robot is proposed and applied to grasping pose calculation. An improved eye-to-hand model of stereovision with motion error compensation is proposed to reduce the influence of camera calibration error and robot motion error on the accuracy of the eye-to-hand model. The existing model solving method based on matrix vectorization and Kronecker product is improved to accurately calculate the pose and motion error in hand–eye calibration of the rotationally constrained 4-R(2-SS) parallel robot. A calibration motion planning method based on the nontrivial solution constraint of the eye-to-hand model is proposed to improve the accuracy and efficiency of online hand–eye calibration and thereby enable accurate and fast grasping by the parallel robot.

Materials and methods

Owing to the motion constraints of the 4-R(2-SS) parallel robot, it is difficult to accurately calculate the translation component of hand–eye calibration with the existing model solving method. In addition, camera calibration error, robot motion error, and invalid calibration motion poses make fast and accurate online hand–eye calibration difficult to achieve. Therefore, we propose a hand–eye calibration method with motion error compensation and vertical-component correction for the 4-R(2-SS) parallel robot by improving the existing eye-to-hand model and solving method.

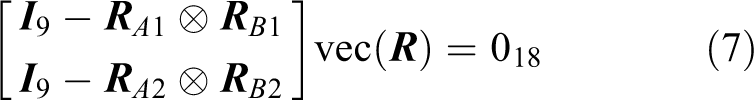

Model solving method based on matrix vectorization and Kronecker product and limitation analysis

The model solving method based on matrix vectorization and Kronecker product transforms the hand–eye model equations into explicit equations. For calculating the rotation matrix and translation vector in hand–eye model, it is necessary for the robot to move with some specific requirements.
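As a rough illustration of how vectorization and the Kronecker product turn a hand–eye equation into an explicit linear system, the sketch below solves the classical form A X = X B. This is an assumption: the article's own eye-to-hand equations are not reproduced in this text, so the exact model may differ. With column-major vectorization, the rotation part R_A R_X = R_X R_B becomes (I ⊗ R_A − R_B^T ⊗ I) vec(R_X) = 0, and the translation part R_A t_X + t_A = R_X t_B + t_X becomes (t_B^T ⊗ I) vec(R_X) + (I − R_A) t_X = t_A. The helper names `rot`, `make_T`, and `solve_ax_xb` and all numerical values are hypothetical.

```python
import numpy as np

def rot(axis, angle):
    """Rotation matrix from an axis and an angle (Rodrigues' formula)."""
    k = np.asarray(axis, dtype=float)
    k = k / np.linalg.norm(k)
    K = np.array([[0.0, -k[2], k[1]],
                  [k[2], 0.0, -k[0]],
                  [-k[1], k[0], 0.0]])
    return np.eye(3) + np.sin(angle) * K + (1.0 - np.cos(angle)) * (K @ K)

def make_T(R, t):
    """Assemble a 4x4 homogeneous pose matrix from R (3x3) and t (3,)."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T

def solve_ax_xb(As, Bs):
    """Solve A_i X = X_ B_i for X, with unknowns [vec(R_X); t_X] stacked linearly."""
    I3 = np.eye(3)
    rows, rhs = [], []
    for A, B in zip(As, Bs):
        RA, tA = A[:3, :3], A[:3, 3]
        RB, tB = B[:3, :3], B[:3, 3]
        # Rotation block: (I kron R_A - R_B^T kron I) vec(R_X) = 0
        rows.append(np.hstack([np.kron(I3, RA) - np.kron(RB.T, I3),
                               np.zeros((9, 3))]))
        rhs.append(np.zeros(9))
        # Translation block: (t_B^T kron I) vec(R_X) + (I - R_A) t_X = t_A
        rows.append(np.hstack([np.kron(tB.reshape(1, 3), I3), I3 - RA]))
        rhs.append(tA)
    sol, *_ = np.linalg.lstsq(np.vstack(rows), np.concatenate(rhs), rcond=None)
    RX = sol[:9].reshape(3, 3, order="F")  # undo column-major vectorization
    return make_T(RX, sol[9:])

# Synthetic, noise-free data: three motions with non-parallel rotation axes.
X_true = make_T(rot([1, 2, 3], 0.7), [0.10, -0.20, 0.30])
Bs = [make_T(rot([1, 0, 0], 0.5), [0.2, 0.0, 0.1]),
      make_T(rot([0, 1, 0], -0.8), [0.0, 0.3, -0.1]),
      make_T(rot([0, 0, 1], 1.1), [-0.1, 0.1, 0.2])]
As = [X_true @ B @ np.linalg.inv(X_true) for B in Bs]
X_est = solve_ax_xb(As, Bs)
print(np.round(X_est, 6))
```

The example deliberately uses rotations about three different axes. With noisy data the recovered rotation block is generally not exactly orthogonal and would need re-orthogonalization, and with rotations about a single axis the system loses rank, which is the situation the following subsections address.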

Model solving method based on matrix vectorization and Kronecker product

To reduce the influence of measuring error of calibration plate pose

The sizes of rotation matrices

Therefore, the hand–eye model

Then:

The rotation and translation parts of equation (4) are transformed respectively. The rotation matrices

The

The

Limitation analysis of model solving method based on matrix vectorization and Kronecker product for 4-R(2-SS) parallel robot

If the end effector does not rotate, in which

Then:

Therefore, three equations can be obtained at each motion moment of end effector. For the nine unknowns of the rotation matrix

The

The

where

To make equation (14) have nontrivial solution, the matrix

Improved eye-to-hand model and model solving method

The eye-to-hand model of single camera is improved and the robot motion error in improved model is compensated to reduce the influence of camera calibration error and robot motion error on model accuracy. The vertical-component of hand–eye calibration is corrected based on vertical constraint between calibration plate and end effector in parallel robot to calculate the pose and the motion error in calibration of 4-R(2-SS) parallel robot accurately.
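Why a vertical-component correction is needed can be seen from a small rank check. The sketch assumes the classical translation equation (R_A − I) t_X = R_X t_B − t_A and an end effector that rotates only about one axis, taken here as z; both are assumptions rather than the article's exact formulation. Under these assumptions the stacked coefficient matrix of t_X has rank 2, and its null direction is the rotation axis, so the component of t_X along that axis cannot be determined from the motions alone.

```python
import numpy as np

def rot_z(angle):
    """Rotation matrix for a rotation by `angle` (radians) about the z axis."""
    c, s = np.cos(angle), np.sin(angle)
    return np.array([[c, -s, 0.0],
                     [s,  c, 0.0],
                     [0.0, 0.0, 1.0]])

# Hypothetical calibration motions: rotations about z only.
angles = np.deg2rad([10.0, 25.0, -40.0, 60.0])

# Stack the coefficient of t_X from (R_A - I) t_X = R_X t_B - t_A.
C = np.vstack([rot_z(a) - np.eye(3) for a in angles])

rank = np.linalg.matrix_rank(C)
_, _, Vt = np.linalg.svd(C)
null_dir = Vt[-1]  # direction of t_X that the equations cannot constrain

print("rank of stacked coefficient matrix:", rank)
print("unobservable direction:", np.round(null_dir, 6))
```

Adding more motions of the same kind does not raise the rank, which is why an extra geometric constraint is required to fix the remaining component.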

Improved eye-to-hand model of stereovision with motion error compensation

(1)

where

(2)

Although the calibration plate is fixed on the top of the end effector and the motion of calibration plate is consistent with that of the end effector in the eye-to-hand system of parallel robot, the pose matrix and differential motion matrix of calibration plate and end effector are different in the different coordinate systems. Therefore, the hand–eye model

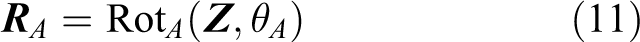

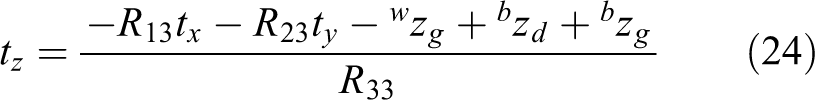

Improved solving method with vertical-component correction for the eye-to-hand model

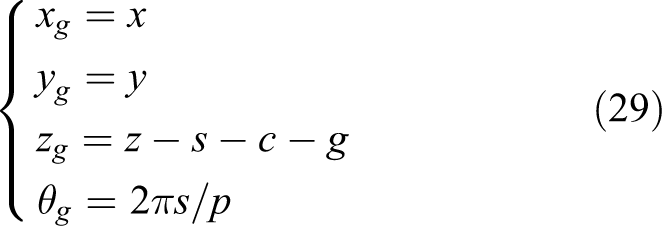

Because of the motion constraint of the 4-R(2-SS) parallel robot with 4-DOF, it is difficult to calculate the

Due to the structural stability and motion constraint of the 4-R(2-SS) parallel robot with 4-DOF, the

Figure 1. Coordinate diagram in eye-to-hand system of 4-R(2-SS) parallel robot.

According to the orthogonality of rotation matrix in the pose matrix

The

The vertical component

Then, according to equations (20) and (21), the

Thus, the vertical component of pose matrix X obtained by the existing model solving method based on matrix vectorization and Kronecker product is corrected by calculated

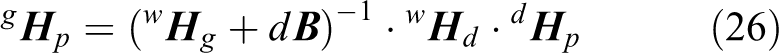

Hand–eye calibration and grasping pose calculation based on improved eye-to-hand model and model solving method for 4-R(2-SS) parallel robot

The nontrivial solution constraint of eye-to-hand model is constructed and adopted to remove invalid calibration motion poses and plan calibration motion. The pose transformation matrix of end effector from current pose to optimal grasping pose in

Fruit sorting system based on 4-R(2-SS) parallel robot

The 4-R(2-SS) parallel robot with 4-DOF for automatic sorting of fruits is shown in Figure 2. The robot body comprises a parallel mechanism consisting of 4-R(2-SS) side chains with the same kinematic structure (R denotes a revolute joint and S denotes a spherical joint) and an end effector, which is a clamping mechanism. It can realize three-dimensional translation and one-dimensional rotation around

Figure 2. 4-R(2-SS) parallel robot with 4-DOF for automatic sorting of fruits. 1: parallel mechanism; 2: calibration plate; 3: clamping mechanism; 4: Kinect camera; and 5: fruit. DOF: degree of freedom.

Motion planning and hand–eye calibration of 4-R(2-SS) parallel robot based on nontrivial solution constraint of eye-to-hand model

(1) (a) The pose transformation matrix (b) The rotation angle (c) The pose transformation matrix

For the constraint (a), if

If Necessity: If Sufficiency: If the

(2)

Then the end effector performs small-scale rotation and large-scale translation randomly near the ideal hand–eye calibration positions. The random motion poses of the end effector are chosen according to the three constraints proposed in this article. As shown in Figure 3, the motion poses of the end effector that satisfy the nontrivial solution constraint are adopted to construct the improved eye-to-hand model of stereovision with motion error compensation. The improved solving method with vertical-component correction is adopted to solve the eye-to-hand model. Accurate and fast hand–eye calibration of the 4-R(2-SS) parallel robot with 4-DOF is thus realized based on the motion planning.

Figure 3. Hand–eye calibration for 4-R(2-SS) parallel robot with 4-DOF based on the motion planning. DOF: degree of freedom.

Grasping pose calculation of 4-R(2-SS) parallel robot with 4-DOF

To achieve accurate and stable grasping of fruit, the end effector of parallel robot needs to move to the position of fruit and grasp the fruit with an optimal grasping pose in the fruit sorting system based on 4-R(2-SS) parallel robot with 4-DOF. Assuming that the optimal grasping pose is

The

The current pose

where

Figure 4. Structure of 4-R(2-SS) parallel robot with 4-DOF. DOF: degree of freedom.

Then, according to equation (27), the pose

where the
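The general structure of a grasping pose calculation in an eye-to-hand system can be sketched with homogeneous transforms. This is a generic illustration under stated assumptions, not the article's equations: it assumes the hand–eye result gives the camera pose in the robot base frame, and the frame names `T_base_cam`, `T_cam_obj`, and `T_base_ee`, the helper `pose_z`, and all numbers are hypothetical.

```python
import numpy as np

def pose_z(angle_deg, t):
    """Hypothetical pose: rotation about z plus a 3D translation."""
    a = np.deg2rad(angle_deg)
    T = np.eye(4)
    T[:3, :3] = [[np.cos(a), -np.sin(a), 0.0],
                 [np.sin(a),  np.cos(a), 0.0],
                 [0.0, 0.0, 1.0]]
    T[:3, 3] = t
    return T

def grasp_motion(T_base_cam, T_cam_obj, T_base_ee):
    """Relative motion that takes the end effector from its current pose
    to the grasping pose observed by the camera."""
    T_base_obj = T_base_cam @ T_cam_obj          # grasp pose in base frame
    return np.linalg.inv(T_base_ee) @ T_base_obj  # motion in end-effector frame

T_base_cam = pose_z(90.0, [0.50, 0.00, 1.20])   # assumed hand-eye result
T_cam_obj = pose_z(15.0, [0.05, -0.10, 0.90])   # assumed vision measurement
T_base_ee = pose_z(0.0, [0.40, 0.10, 0.60])     # assumed current pose

delta = grasp_motion(T_base_cam, T_cam_obj, T_base_ee)
print(np.round(delta, 4))
```

Because the hand–eye matrix multiplies every vision measurement, any error in it, including an error in its vertical translation component, carries over directly into the computed grasping pose.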

Results and discussion

The proposed method was verified by experiments with a self-developed novel fruit sorting system based on 4-R(2-SS) parallel robot with 4-DOF. The hardware of experiment included the calibration plate with

Comparison of online hand–eye calibration according to random motion and planned motion based on nontrivial solution constraint of eye-to-hand model

The end effector, with the calibration plate fixed to it, performs random motion and planned motion based on the nontrivial solution constraint of the eye-to-hand model in the field of view of the Kinect camera, respectively. At each calibration position, the calibration plate images are acquired by the color camera and the infrared camera of the Kinect camera, respectively. According to equation (27), the pose of the end effector at each calibration position is calculated and transformed into a pose matrix. The online calibration then proceeds as follows:

(a) The end effector randomly moves to 15 calibration positions for calibration data acquisition.

(b) The hand–eye model equations are constructed to solve the pose matrix X.

(c) If there is no solution to the model equations, the end effector moves again and processes (a) and (b) are repeated. Otherwise, the online hand–eye calibration is completed.

The planned motion based on nontrivial solution constraint of eye-to-hand model is shown in Figure 5. The orange line represents the motion path of end effector. The blue dots represent the ideal calibration positions. The boxes represent the actual calibration positions after the random motion of end effector near the ideal calibration positions. The cylinder represents the work space for fruit sorting in 4-R(2-SS) parallel robot.

Figure 5. Planned motion based on nontrivial solution constraint of eye-to-hand model.

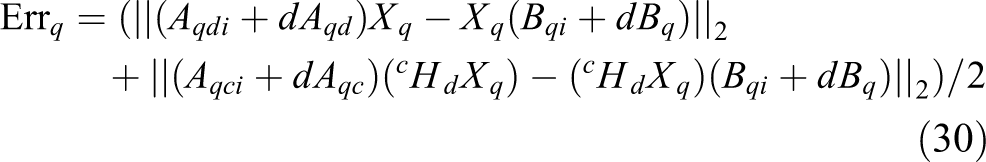

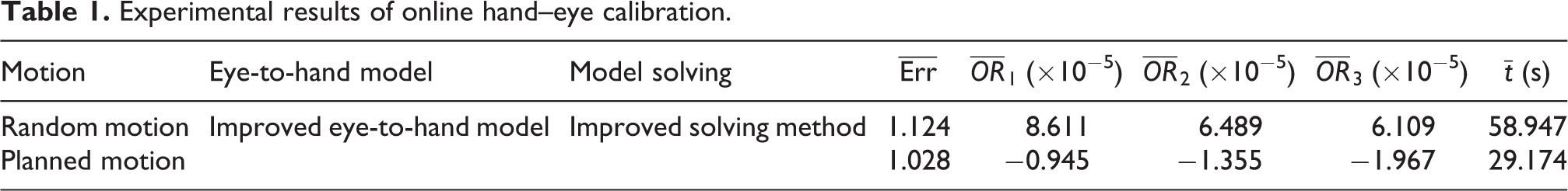

The improved eye-to-hand model of stereovision with motion error compensation and the improved solving method with vertical-component correction for the eye-to-hand model are applied to the experiments of online hand–eye calibration based on random motion and planned motion. The average error

Table 1. Experimental results of online hand–eye calibration.

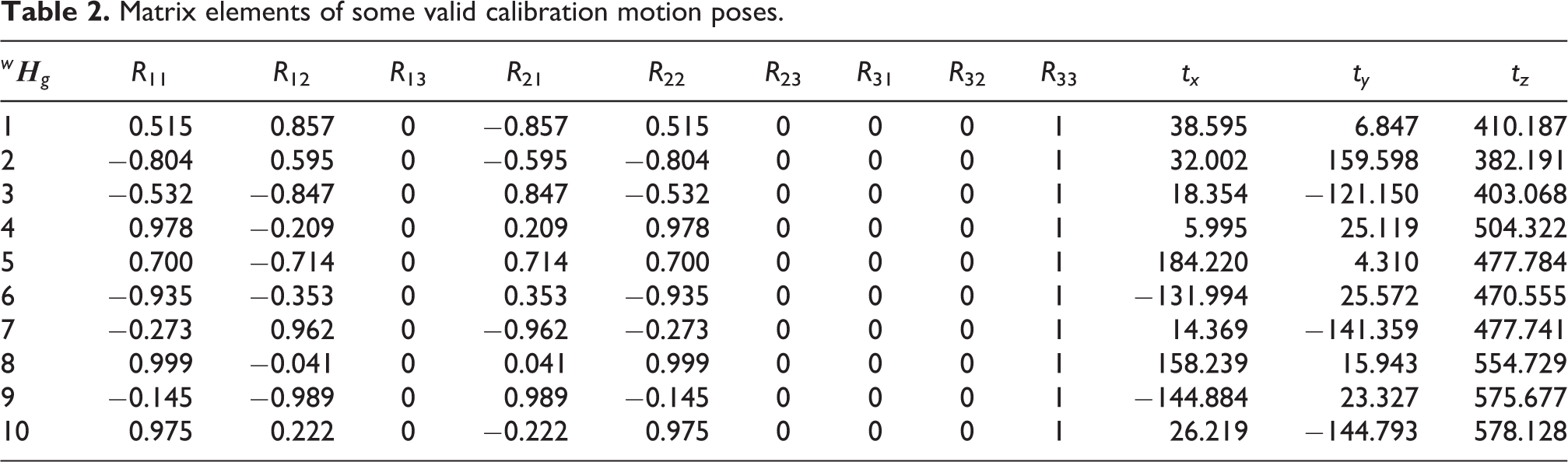

The obtained rotation matrix

From Table 1, we can see that compared with the random motion, the average error and average time of online hand–eye calibration based on planned motion decrease by 0.096 and 29.773 s, respectively. The planned motion based on nontrivial solution constraint of eye-to-hand model has the advantages that the motion distribution in the work space is uniform, and the invalid calibration motion poses can be removed in advance. Therefore, the planned motion can improve the calibration accuracy and reduce the calibration time, so as to lay the foundation for accurate and fast grasping of parallel robot.

Comparison of hand–eye calibration based on existing and improved methods

The end effector fixed with calibration plate does planned motion based on nontrivial solution constraint of eye-to-hand model in the field of view of Kinect camera. At each calibration position, the calibration plate images are acquired by color camera and infrared camera in Kinect camera, respectively, as shown in Figure 6. According to equation (27), the pose of end effector at each calibration position is calculated and transformed into pose matrix

Figure 6. Calibration plate images. (a) Color images and (b) infrared images.

Table 2. Matrix elements of some valid calibration motion poses.

Based on the acquired multigroup calibration data, the existing eye-to-hand model, the improved eye-to-hand model of stereovision with motion error compensation, the model solving method based on matrix vectorization and Kronecker product, and the improved solving method with vertical-component correction are applied to hand–eye calibration experiments of the 4-R(2-SS) parallel robot with 4-DOF, respectively. The average error

Table 3. Experimental results of hand–eye calibration.

From Table 3, we can see that compared with the existing eye-to-hand model, the average errors of hand–eye calibration according to the improved eye-to-hand model combined with existing and improved solving methods decrease by 0.002 and 0.108, respectively. The orthogonality of the obtained rotation matrix

Comparison of grasping pose calculation according to existing and improved hand–eye calibration methods

To verify the advantage of the hand–eye calibration method with motion error compensation and vertical-component correction for the 4-R(2-SS) parallel robot when it is applied to grasping pose calculation, the existing and improved hand–eye calibration methods are applied to experiments of grasping cluster fruit, respectively. First, images of the cluster fruit are acquired by the Kinect camera, as shown in Figure 7. The optimal grasping pose

Figure 7. Cluster fruit regions in color images.

Then, the hand–eye calibration results according to the existing eye-to-hand model, the improved eye-to-hand model of stereovision with motion error compensation, the model solving method based on matrix vectorization and Kronecker product, and the improved solving method with vertical-component correction are applied to grasping pose calculation, respectively. According to the obtained

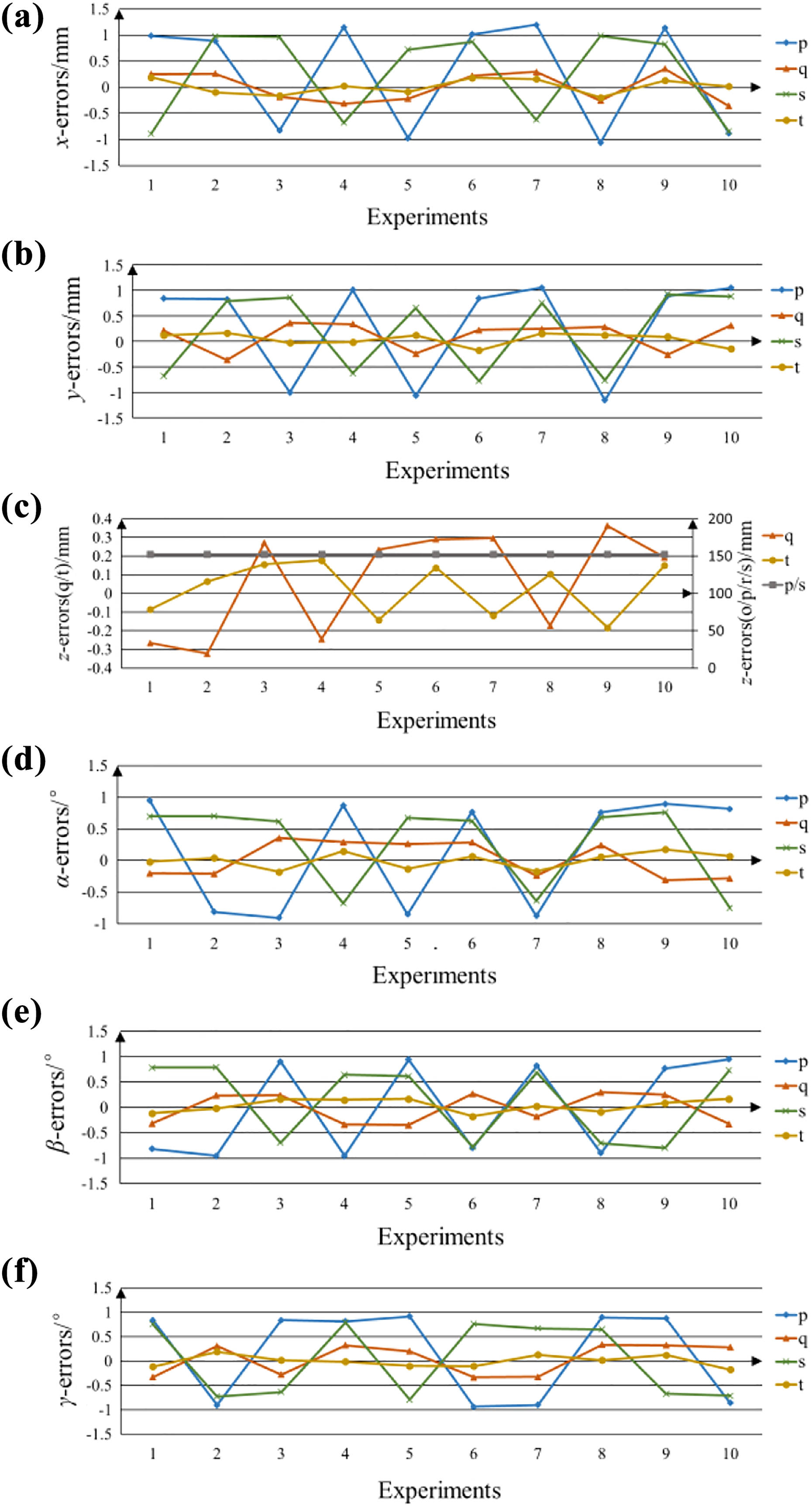

Figure 8. The errors of grasping pose calculation based on hand–eye calibration results. (a)

From Figure 8, we can see that compared with existing eye-to-hand model and model solving methods, the average errors of

Conclusions

Because of the 4-DOF motion constraint of the robot, the motion error of the robot, the calibration error of the camera, and the invalid calibration motion poses of the robot, it is difficult to improve the accuracy and efficiency of online hand–eye calibration of the 4-R(2-SS) parallel robot. Therefore, an online hand–eye calibration method with motion error compensation and vertical-component correction for the 4-R(2-SS) parallel robot is proposed and applied to grasping pose calculation in this article. The following conclusions can be drawn:

(1) An improved eye-to-hand model of stereovision with motion error compensation is proposed to reduce the influence of camera calibration error and robot motion error on the accuracy of the eye-to-hand model of the 4-R(2-SS) parallel robot.

(2) The vertical component of the eye-to-hand model parameters is corrected based on the vertical constraint between the calibration plate and the end effector. The existing model solving method based on matrix vectorization and Kronecker product is thereby improved so that the eye-to-hand model parameters can be calculated accurately despite the rotational constraint of the 4-R(2-SS) parallel robot with 4-DOF.

(3) The nontrivial solution constraint of the eye-to-hand model is constructed. A calibration motion planning method based on this constraint is proposed to improve the accuracy and efficiency of online hand–eye calibration.

(4) The proposed method was verified by experiments with a self-developed novel fruit sorting system based on the 4-R(2-SS) parallel robot with 4-DOF. Experimental results demonstrate that the improved eye-to-hand model and solving method and the proposed calibration motion planning method can effectively improve the accuracy and efficiency of hand–eye calibration of the 4-R(2-SS) parallel robot and thereby enable accurate and fast grasping by the parallel robot.

In future work, we will explore how to further improve the accuracy and efficiency of online hand–eye calibration of the 4-R(2-SS) parallel robot so that it can be applied to more complex environments. We will also study how to improve the reliability of online hand–eye calibration to lay the foundation for accurate and fast grasping based on machine vision and parallel robots.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Natural Science Foundation of China (grant no. 51375210); the Postgraduate Research and Practice Innovation Program of Jiangsu Province (grant no. KYCX17_1780); the Zhenjiang Municipal Key Research and Development Program (grant no. GZ2018004); and the Priority Academic Program Development of Jiangsu Higher Education Institutions.