Abstract

Service robots are built and developed for various applications to support humans as companions, caretakers, or domestic helpers. As the number of elderly people grows, service robots will be in increasing demand. In particular, one of the main tasks performed by elderly people, and others, is the complex task of cleaning. Therefore, cleaning tasks, such as sweeping floors, washing dishes, and wiping windows, have been developed for the domestic environment using service robots or robot manipulators with several control approaches. This article focuses primarily on the control methodology used for cleaning tasks. Specifically, it discusses classical control and learning-based control methods. The classical control approaches, which consist of position control, force control, and impedance control, are commonly used for cleaning purposes in a highly controlled environment. However, classical control methods do not generalize to cluttered environments, so learning-based control methods could be an alternative solution. Learning-based control methods for cleaning tasks encompass three approaches: learning from demonstration (LfD), supervised learning (SL), and reinforcement learning (RL). Each of these approaches has its own capabilities for generalizing cleaning tasks to a new environment. For example, LfD, which many research groups have used for cleaning tasks, can generate complex cleaning trajectories based on human demonstration. SL can support the prediction of dirt areas and cleaning motions using large data sets. Finally, RL lets the robot learn cleaning actions by interacting with the new environment by itself. In this context, this article aims to provide a general overview of robotic cleaning tasks based on different types of control methods using manipulators. It also suggests future directions for cleaning tasks based on the evaluation of the control approaches.


Introduction

The development of robots has had a profound scientific and social influence for several decades. Today's robots show considerable potential in domestic tasks such as cleaning, tidying up, or doing the laundry. Cleaning tasks, in particular, are the most frequent household chores in human environments according to the analysis of Cakmak and Takayama. 1 If service robots could perform cleaning tasks in an effective and efficient way, this development would have a noteworthy impact on our daily lives.

The development of the robotic cleaning task is significant for the home environment because the percentage of older people in the world’s population is growing. According to current statistics, the number of people over age 65 is predicted to triple between 2000 and 2050. As a result, in 2050, the ratio of working-age people to the number of seniors age 65 and above is expected to decrease. Also, the number of 65+ people was approximately 17.5% of the total population in 2012 and is expected to increase to 30% by 2050. Therefore, if service robots could clean the human environment, elderly or physically handicapped people would be able to stay longer in their own homes and be less dependent on caretakers.

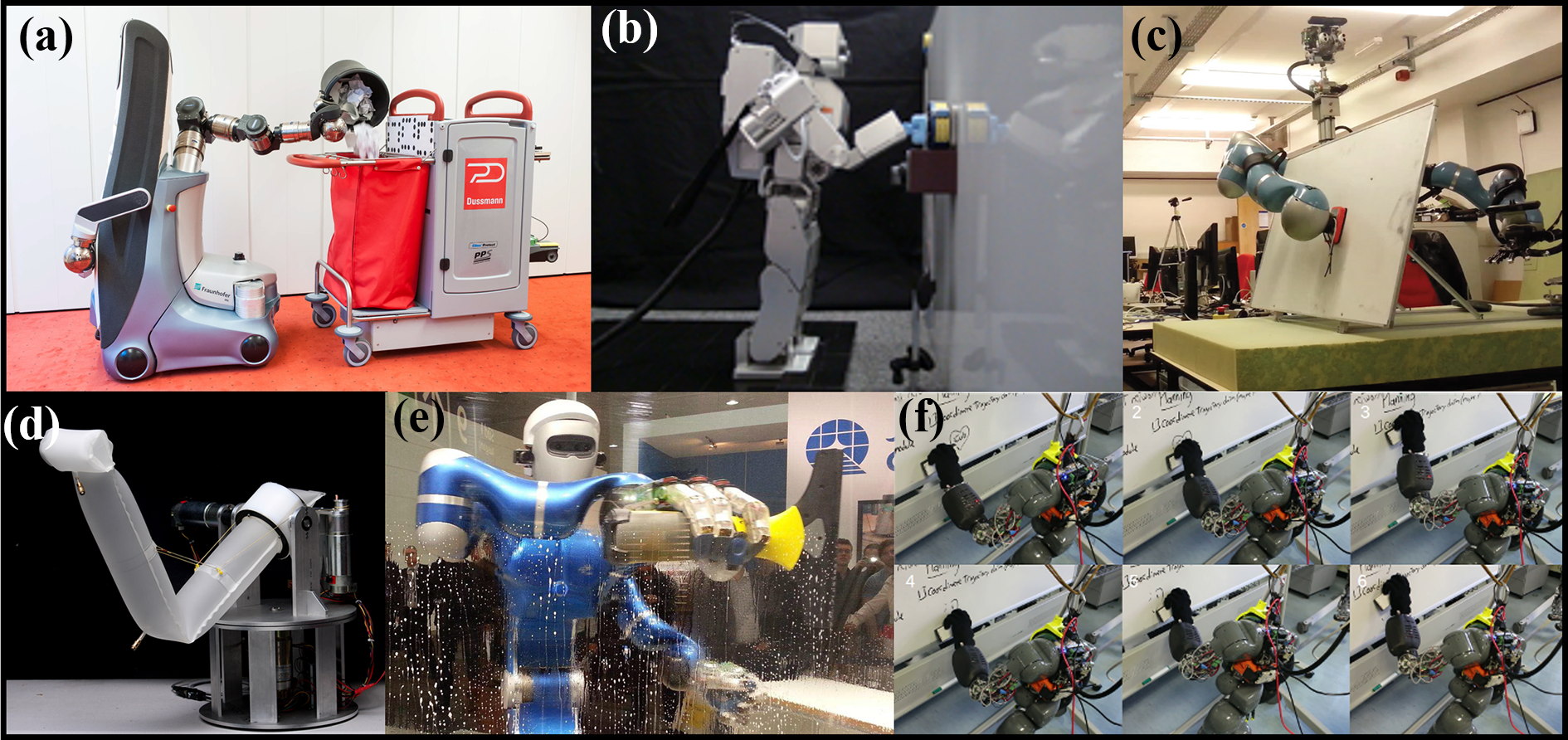

In the market, different types of commercial service robots are available for different purposes, including floor, pool, solar panel, lawn, and windows (Figure 1(a) to (e)). 2 –16,18 –29,35 A popular cleaning robot is iRobot, which has sold for use in more than 10 million homes worldwide. 11 Siemens has developed a navigation system for floor cleaning robots and extensively tested it in several chain store supermarkets. 36 However, these robots can perform specific tasks and cannot be used for multipurpose cleaning tasks of households.

In fact, floor cleaning robots are useful and save people time, but their cleaning strategies are not implemented in a very effective way. Early floor cleaning robots operated with random movement, with no guarantee that the robot would visit every area or corner of the domestic environment. Current floor cleaning robots work in a smarter way to clean an entire floor surface, but some dirt still remains in a few spots. Moreover, these robots are developed only for cleaning floors at home. Their workspace is constrained to two-dimensional flat surfaces, so they cannot clean furniture or other non-flat surfaces.

Figure 1(f) shows examples of future robots that can be used for multipurpose cleaning and are inspired by the whole human body or the human hand. These robots are highly dexterous and anthropomorphic, and they can handle cluttered environments. 37 For example, such robots have been demonstrated performing floor cleaning, dish washing, kitchen table cleaning, and so on. 30 –34 This is a fundamental step in the development of a modern cleaning robot and could be used for developing more advanced service robots that handle complete household cleaning tasks from kitchen to living room, wash room to corridor, table to windows, indoor to outdoor, and so on.

In the actual environment, there are many different types of cleaning tasks. Leidner et al. classified how humans clean using tools in various situations. 38 The classified cleaning motions are natural for humans, but cleaning robots can have difficulty reproducing them. Therefore, many research groups have developed cleaning tasks using robot manipulators, because a manipulator can not only mimic human movement but also has the dexterity to clean various objects such as windows, tables, sofas, and other furniture. However, robotic cleaning with a manipulator is complex. For example, wiping, sweeping, scrubbing, and tidying up, which humans perform with natural cleaning motions, are difficult to implement on a manipulator because of the complex control strategy required. The cleaning strategy should consider at least two basic components: collision-free trajectory planning and perception to identify the dirt.

To overcome the challenges of using robot manipulators, a variety of control approaches for robotic cleaning tasks have been researched. In this article, we present a comprehensive summary of control architectures that are used for cleaning purposes. We categorize the architectures into two main approaches: classical control and learning-based control. Within classical control, we consider three methods: position control (PC), force control (FC), and impedance control (IC). Within learning-based control, we consider three strategies: learning from demonstration (LfD), supervised learning (SL), and reinforcement learning (RL). Additionally, this article focuses on control approaches for robotic cleaning tasks that are demonstrated in actual (real-time) and virtual (simulation) environments.

This article presents an overview of control strategies for cleaning robots using manipulators. It includes the methodology of paper selection, a classification of the main cleaning tasks, a detailed description of the classical and learning-based control approaches, and some final discussions and conclusions.

Methodology: Search strategy and selection criteria

For the literature review of cleaning robots, we considered a set of parameters, including different paper databases, paper selection, keyword selection, and so on. We searched electronic databases from April 2018 to May 2018 using IEEE Xplore, PubMed, Google Scholar, ScienceDirect, Scopus, and Web of Science to identify articles concerning aspects of modeling, recognizing, interpreting, and implementing cleaning tasks in service robotics applications. Specifically, the terms and keywords used for the literature search in each database were robotic cleaning tasks, service robot, sweeping, cleaning, wiping, and scrubbing.

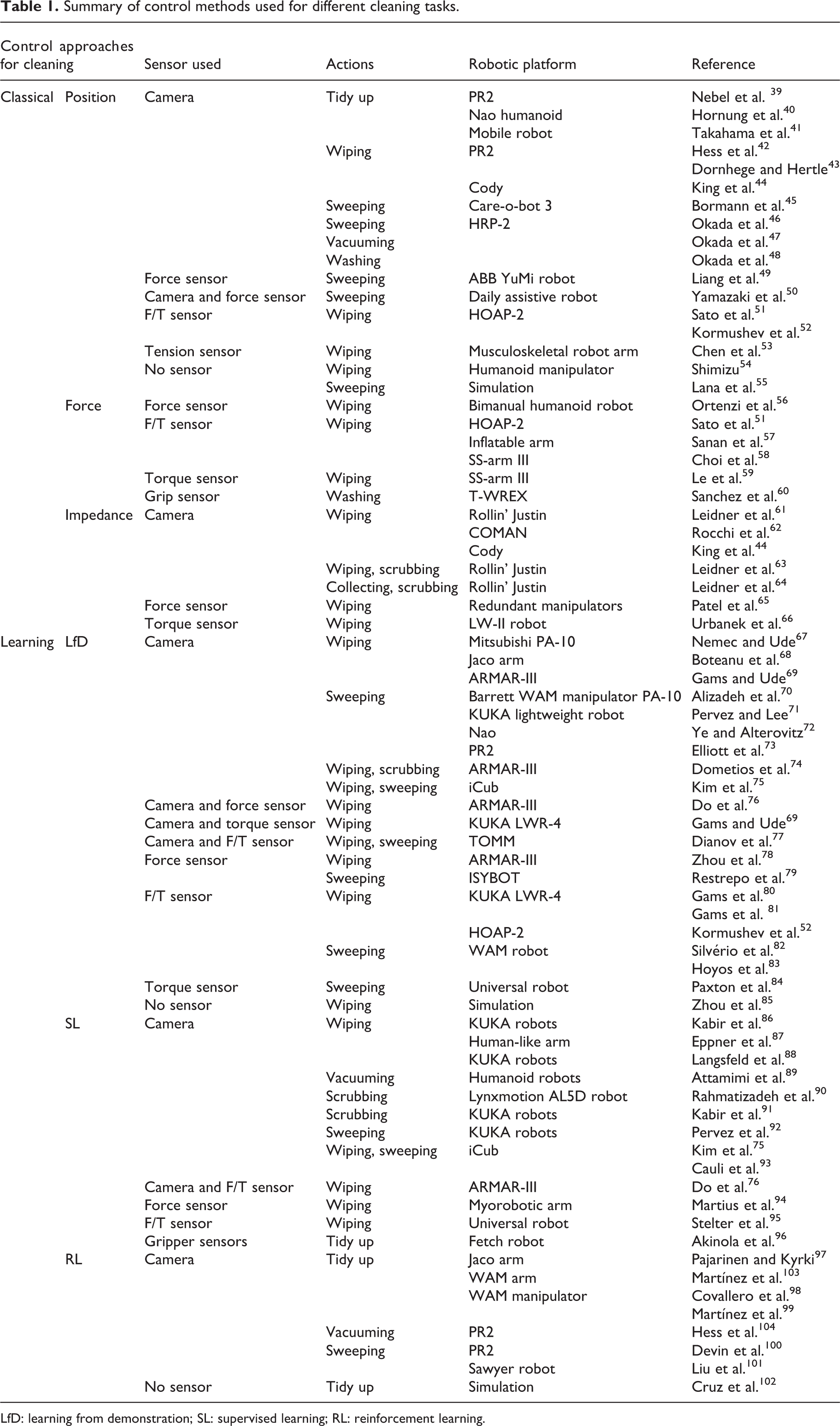

After collecting the papers, we sorted them based on cleaning task classification and the types of control strategies used to perform cleaning tasks. All data extracted from the papers are reported in Table 1: control strategy, performed tasks, types of sensors used, and types of robotic platform. Concerning selection criteria, the results were sorted for each database with a maximum of 100 results for each combination of keywords. During the screening procedure, items were excluded if they were an abstract, a duplicate, a book chapter, not written in English, or not fully accessible. Moreover, the references were fully assessed during the evaluation procedure, and papers were excluded if they did not appear appropriate for this review after reading the title and abstract.

Summary of control methods used for different cleaning tasks.

LfD: learning from demonstration; SL: supervised learning; RL: reinforcement learning.

Particularly, some papers were discarded based on two criteria: (1) if the paper did not include words that were strongly related to the purpose of this review, that is, “sweeping,” “washing,” “vacuuming,” “scrubbing,” “tidy up,” “cleaning,” and “wiping” and their derivatives or synonyms; and (2) if the paper did not include control strategies for cleaning tasks. The time period of the literature survey was from January 2004 to May 2018. After an intensive procedure for paper selection, 71 papers were adopted for review (Figure 2).

Paper selection methodology from different databases.

Classification of cleaning tasks

In the domestic environment, most people perform household chores such as doing the dishes, cleaning washrooms, and doing the laundry every day. As described in the work of Cakmak and Takayama, 1 49.8% of all chores are cleaning tasks, and the tasks are described with several verbs in the actual environment. The common verbs for cleaning tasks include wiping, sweeping, washing, tidying up, vacuuming, and scrubbing. As people grow up, they naturally learn how to clean household objects and their own place. However, in terms of robotic operation, it is difficult to define the cleaning actions and the complex manipulation they involve. Therefore, Leidner et al. 38 classified cleaning tasks based on the principle of wiping a surface using a tool, which broadly applies to most cleaning tasks. Additionally, the authors defined three basic terms for the process: tool, medium, and surface. They described the medium as particles or liquid, such as dust, located between the tool and the surface. Based on that paper, we classify cleaning tasks into a total of six robotic motions, described in Figure 3.

*Wiping: The motion of removing a substance from a surface by hand. For example, removing dirt from a surface or table can be considered as skimming (see Figure 3(a)).

*Sweeping: The motion of collecting dust lying on the floor. The dust is then accumulated in a specific place, which corresponds to collecting (see Figure 3(b)).

*Scrubbing: The action of rubbing something hard with a tool. The action is repeated several times to remove the dirt by exerting force (see Figure 3(c)).

*Vacuuming: The motion of sucking up dust and dirt from floors or other surfaces using an air pump. Unlike the other motions, the action is unrelated to the direction of the tool motion (see Figure 3(d)).

*Washing: The action of washing requires two different actions, emitting and scrubbing, where emitting corresponds to ejecting water onto the surface and scrubbing to removing the dirt using tools (see Figure 3(e)).

*Tidy up: The action of organizing and arranging a messy room. For example, the robot repeatedly picks up objects and places them in a specific area or box to tidy up the room (see Figure 3(f)).

In this work, we categorized control methods that are used for cleaning tasks. Based on our selected papers, we divided these control methods into two basic types: classical and learning-based. We also subcategorized them by considering the sensor used, robotic platform, and performance. The detailed categorization is tabulated in Table 1.

Control approach for cleaning tasks

In this section, we discuss classical control and learning-based control for cleaning tasks in detail. Classical control is used for predefined cleaning tasks, relying on different feedback from the sensors but without learning or adapting to the environment. In contrast, learning-based control is used to teach the robot from human demonstrations, self-exploration, or prerecorded data sets to clean the environment. After learning, the robot can generalize cleaning tasks to an unfamiliar environment.

Classical control approach

Position control

PC was an important method in the early stage of cleaning tasks for showing that robot movement can achieve continuous and repetitive cleaning motion. In this approach, robot kinematics, especially inverse kinematics, plays an important role. For example, Yamazaki et al. 50 describe a Jacobian-based inverse kinematics method for controlling upper body motion. The authors used the singularity robust inverse method, 105 which has a good track record in stability and helps to avoid self-collision by using the redundant degrees of freedom (DoFs) of the upper body during cleaning experiments. Okada and colleagues 46 –48 also generated body posture and handling constraint information using a whole-body inverse kinematics technique. In this way, the whole-body posture sequence is used for sweeping motion. Liang et al. developed task-space kinematic control for dual-arm PC for cleaning. 49 To generate a sweeping motion, both arms rotate using full dual PC in a closed-loop system. Another approach, using analytical and algebraic methods for the inverse kinematics of a manipulator, was developed for wiping tables and mirrors. 54,55 The analytical approach focuses on solving and decoupling the inverse kinematics about a spherical wrist of the manipulator with a generalized unconstrained orientation. The algebraic approach uses a manipulation task model, which describes poses, linear and angular velocities, forces, and moments for generating cleaning actions, and was implemented only in simulation.

To generate trajectories for cleaning tasks, motion planning algorithms are needed. Takahama et al. suggested performing manipulation for tidying books in a room using a dual arm. 41 The manipulation requires a sequence of motions that confirm the position and plan the manipulator’s motion. Hess et al. proposed novel coverage path planning for robotic manipulators that can clean arbitrary 3-D surfaces. 42 Normal coverage path planning returns suboptimal paths with respect to the joint space. Therefore, the authors suggested a generalization of the traveling salesman problem approach, which transforms the surface into a graph that defines a set of clusters over nodes. Dornhege and colleagues discussed integrated task and motion planning for wiping tasks using the PR2 robot. 39,40,43 Their approach combines classical symbolic planning with geometric reasoning in the temporal fast downward/modules planner with semantic attachments. The planner is able to deal with unexpected events, such as execution failures, but not in the current state. Then, if the planner cannot find the goal, it monitors the robot movements and replans if necessary.
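As an illustration of the Jacobian-based, singularity-robust inverse kinematics mentioned in the position control discussion above, the following is a minimal sketch of damped least-squares inverse kinematics for a planar two-link arm. It is not the implementation of any cited work: the link lengths, damping factor, gain, and all function names are assumptions chosen for illustration.

```python
import math

L1, L2 = 0.30, 0.25  # link lengths in metres (assumed values)

def forward_kinematics(q):
    """End-effector position (x, y) of a planar two-link arm."""
    x = L1 * math.cos(q[0]) + L2 * math.cos(q[0] + q[1])
    y = L1 * math.sin(q[0]) + L2 * math.sin(q[0] + q[1])
    return x, y

def jacobian(q):
    """2x2 Jacobian of the end-effector position w.r.t. the joint angles."""
    s1, c1 = math.sin(q[0]), math.cos(q[0])
    s12, c12 = math.sin(q[0] + q[1]), math.cos(q[0] + q[1])
    return [[-L1 * s1 - L2 * s12, -L2 * s12],
            [L1 * c1 + L2 * c12, L2 * c12]]

def dls_step(q, target, damping=0.05, gain=0.5):
    """One damped least-squares update: dq = J^T (J J^T + lambda^2 I)^-1 e."""
    x, y = forward_kinematics(q)
    ex, ey = gain * (target[0] - x), gain * (target[1] - y)
    J = jacobian(q)
    # A = J J^T + lambda^2 I is symmetric positive definite, so det > 0
    a = J[0][0] ** 2 + J[0][1] ** 2 + damping ** 2
    b = J[0][0] * J[1][0] + J[0][1] * J[1][1]
    d = J[1][0] ** 2 + J[1][1] ** 2 + damping ** 2
    det = a * d - b * b
    vx = (d * ex - b * ey) / det   # v = A^-1 e
    vy = (-b * ex + a * ey) / det
    dq0 = J[0][0] * vx + J[1][0] * vy  # dq = J^T v
    dq1 = J[0][1] * vx + J[1][1] * vy
    return [q[0] + dq0, q[1] + dq1]

def solve_ik(target, q0=(0.3, 0.5), iterations=200):
    """Iterate damped least-squares steps from an initial joint guess."""
    q = list(q0)
    for _ in range(iterations):
        q = dls_step(q, target)
    return q

q_solution = solve_ik((0.3, 0.3))
print(q_solution, forward_kinematics(q_solution))
```

The damping term keeps the matrix being inverted well conditioned near a stretched-arm singularity, at the cost of slightly slower convergence. A wiping trajectory could be tracked by calling `solve_ik` on successive waypoints and reusing the previous solution as `q0`.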

Chitta et al. used MoveIt framework 106 on Care-o-bot 3 to clean a trash bin using PC with self-collisions avoidance 45 (Figure 4(a)).

Cleaning robots using classical control approaches: (a) cleaning a trash bin using Care-O-bot 3 (position control), 45 (b) cleaning a vertical surface using humanoid robot HOAP-2 (position control and force control), 51 (c) wiping a whiteboard using bimanual humanoid robot (force control), 56 (d) wiping motions using inflatable manipulator (force control), 57 (e) wiping a window using Rollin’ Justin (impedance control), 63 and (f) erasing a whiteboard using COMAN (impedance control). 62

Another study was conducted for hospital cleaning purposes: The author used the PC to control two joints in the wrist of a Cody robot for cleaning the bed of a patient. 44 This control method enables the robot manipulator to adapt to the surface of the patient’s arm using position feedback from joint encoder. Also, in the bilateral control system, PC is used for the pneumatic artificial muscle’s arm to generate flexion motions without time delays for wiping pink ink. 53 Besides, this application is developed using proportional derivative (PD) controller for the movement of arm joints to clean a vertical surface using a humanoid robot in the work of Sato et al. and Kormushev et al. 51,52

PC is mainly used for predefined tasks in the cleaning environment. However, if the robot faces uncertainty from the environment, the control method cannot easily adjust to the situation. In particular, without force and torque feedback, the robot manipulator could crash or be damaged during cleaning.

Force control

In the FC architecture, sensors measure the forces applied by the environment. Therefore, FC, which considers the interaction between the device and the environment, plays an important role in cleaning tasks. Sanchez et al. used nonlinear FC and passive counter-balancing to produce a wide range of forces for washing using a pneumatic robot. 60 Sato et al. proposed a force tracking controller 51 (Figure 4(b)). The controller is able to operate in torque control mode and can generate varying trajectories even if small tracking errors occur. The authors used an ankle force controller, which calculated the hand force using a foot pressure sensor with the zero moment point 107 and center of mass 108 for cleaning a whiteboard. Choi et al. 58 and Le et al. 59 proposed an algorithm to estimate the external force exerted on the end-effector of a robot manipulator using information from joint torque sensors. The algorithm is used for wiping a table in combination with time delay estimation, which lets the robot manipulator adapt to the external force as an unknown input. Moreover, FC is used to solve the problem of constrained motion for cleaning actions. Ortenzi et al. suggested operational space dynamics for wiping a whiteboard 56 (Figure 4(c)). This approach is used to minimize joint torque and increase stability while the robot is in contact with the environment. An inflatable manipulator consisting of McKibben actuators has also been used to wipe a surface. The manipulator adapts to and makes contact with the surface and cleans it using its reaction forces 57 (Figure 4(d)). FC is one of the most useful control approaches for interacting with the environment, and robots can adapt their cleaning motion to uneven surfaces based on force data. However, the control method is still not useful where the forces are continuously changing, because the robot fails to generate a cleaning trajectory. Therefore, this method is also limited to highly controlled environments.
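The basic idea of regulating contact force from force measurements can be sketched with a one-dimensional example. This is a simplified illustration rather than any of the controllers cited above: the surface is modeled as a linear spring, the tool position is driven by the force error (an integral-type explicit force controller), and the stiffness, gain, and time step are assumed values.

```python
def simulate_force_control(f_desired=5.0, k_env=1000.0, k_f=0.02,
                           dt=0.01, steps=300):
    """Regulate the normal force of a tool pressed against a spring-like surface.

    f_desired: target contact force in N
    k_env:     assumed surface stiffness in N/m (surface located at x = 0)
    k_f:       force-error-to-velocity gain in m/(N s), assumed
    """
    x = -0.01  # tool starts 1 cm above the surface
    history = []
    for _ in range(steps):
        # Simulated force sensor: zero in free space, spring force in contact
        f_measured = k_env * max(0.0, x)
        # Move the tool along the surface normal in proportion to the force error
        x += dt * k_f * (f_desired - f_measured)
        history.append(f_measured)
    return x, history

final_x, forces = simulate_force_control()
print(final_x, forces[-1])
```

In contact, the force error shrinks by the factor `1 - k_env * k_f * dt` at every step, so the loop is only stable when that product stays below 2. This dependence on the (usually unknown) surface stiffness is one reason pure force control is hard to tune when contact conditions keep changing.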

Impedance control

IC could be the most suitable approach for cleaning tasks 109 because it allows dynamic interaction (both force and motion) between the robot and the environment. The IC architecture is quite different from FC and PC. In the case of PC, only the end-effector position is considered using kinematics; in the case of FC, only the static force is employed; and in the case of IC, both position and force are considered. Impedance is defined as the ratio of force output to motion input, that is, the force that results from a motion input. We found several robots that use IC algorithms for different cleaning purposes, including the work of Urbanek et al., who used Cartesian IC for a table wiping scenario. 66 Cartesian impedance is modeled as a mass-spring-damper system in the Cartesian directions of the tool center point to create a compliant behavior for the robotic end-effector.
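The mass-spring-damper behavior described above can be illustrated with a one-dimensional simulation. This is only a sketch under stated assumptions, not the Cartesian impedance controller of any cited work: the end-effector is a point mass, the wall is a stiff linear spring at x = 0, and all parameter values are chosen for illustration.

```python
def simulate_impedance(x_desired=0.05, stiffness=200.0, damping=60.0,
                       mass=1.0, wall_stiffness=5000.0,
                       dt=0.0005, duration=2.0):
    """Point-mass end-effector under a virtual spring-damper pulling it to x_desired.

    The desired position lies 5 cm behind a wall at x = 0, so the controller
    ends up pressing on the wall with a force set by the virtual stiffness.
    """
    x, v = -0.02, 0.0  # start 2 cm in front of the wall, at rest
    for _ in range(int(duration / dt)):
        # Impedance law: virtual spring toward x_desired plus virtual damper
        f_control = stiffness * (x_desired - x) - damping * v
        # Environment: the wall pushes back only while it is penetrated
        f_wall = -wall_stiffness * max(0.0, x)
        a = (f_control + f_wall) / mass
        v += a * dt  # semi-implicit Euler integration
        x += v * dt
    contact_force = wall_stiffness * max(0.0, x)
    return x, contact_force

x_final, force = simulate_impedance()
print(x_final, force)
```

At rest, the virtual spring balances the wall, so the contact force equals `stiffness * (x_desired - x)`. Lowering `stiffness` makes the end-effector more compliant and reduces the pressing force without changing the commanded trajectory, which is the property that makes this scheme attractive for wiping.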

With Cartesian IC, a robot with multiple DoFs can be operated robustly. This approach was extended to a compliant whole-body IC framework for interacting with the environment using the Rollin’ Justin robot. 61 The control is used to move the arm with Cartesian tool motion, which is distributed based on high particle density areas according to rapidly exploring random trees and kernel density estimation (KDE). The same authors used an identical control approach and developed a generic method to parameterize whole-body controllers for compliant manipulation tasks with different contacts 63,64 (Figure 4(e)). They implemented a hybrid reasoning mechanism for task parameterization and integrated symbolic transitions to improve cleaning actions. As a result, the robot could demonstrate not only scrubbing a mug but also collecting particles of dust with a sponge. In addition, augmented hybrid IC was used with an outer–inner loop controller to wipe a surface using seven-DoF redundant robot arms. 65 The outer-loop controller applies force feedback to control the end-effector, and the inner-loop controller computes the proper joint torque using joint-space inverse dynamics with an error reference controller. Rocchi et al. introduced a novel whole-body control library (OpenSoT), which is combined with joint IC to create a framework that can effectively generate complex whole-body motion behaviors for humanoids 62 (Figure 4(f)). The framework uses a compliant inverse kinematics control scheme for erasing a whiteboard using COMAN. The control scheme consists of a PD controller that generates joint torque for the robot manipulator, and the current robot joint state is fed back to the PD controller. Furthermore, King et al. developed another implementation of IC, equilibrium point control (EPC), to clean a human on a bed. 44 EPC is a form of IC inspired by the equilibrium point hypothesis and is used for all arm motions. Using EPC, the motion of the robot’s arm is commanded by adjusting the position of a Cartesian-space equilibrium point over time.

IC is one of the most suitable and safe approaches to control robots. Due to its adaptability to the environment, controlling force output versus motion, it modifies the trajectory in a continuous way. However, if the robot faces a new environment, the trajectory adaptation-based control can be a problem concerning generalization of the cleaning motion.

Learning-based control approach

Cleaning is one of the most complex and diverse tasks for a robot due to its high variability, as well as the highly unstructured and cluttered environment. To deal with these problems, researchers suggested that robots could be taught to learn. This learning-based approach could allow robots to manage variability, which the classical approach could not handle. Several works have examined the field of robot learning, particularly for cleaning. Here, we consider robotic control through the learning approach in three categories: (1) LfD, (2) SL, and (3) RL.

Learning from demonstration

Robot LfD is a control method in which the robot performs new tasks autonomously. It can be derived from observations of a human’s demonstration rather than manually programming for the robot’s desired behavior. The control approach is used to increase robotic capabilities to adapt and generalize robotic cleaning tasks to the new environment. LfD is developed with several methods: dynamic movement primitives (DMPs), Gaussian mixture model (GMM), Gaussian mixture regression (GMR), Hidden Markov model (HMM), and so on. 66,70

DMPs are units of action that are formalized and encoded as a stable dynamic system. 110 A DMP is a method to teach a robot skills from a task demonstration and reproduce the task in a new environment. Paxton et al. proposed an approach for LfD with noisy human demonstration to generalize the sweeping task 84 (Figure 5(a)). The method uses GMM/GMR to reproduce a nominal path by mimicking human behavior. Then, the DMP model and inverse optimal control are incorporated with a reward function for generating the necessary path in a new situation. The reward function consists of a covariance matrix R and environmental features captured during the demonstration. A Gaussian function with covariance matrix R can be learned from the features of the demonstration data and helps to reproduce new sweeping trajectories with different positions of obstacles in a new environment. Moreover, Kormushev et al. developed an integrated approach for a whiteboard cleaning task using upper body kinesthetic teaching 52 (Figure 6(a)). During the demonstration phase, the position, velocity, and acceleration of the end-effector are recorded in the robot’s frame of reference using forward kinematics. Then, in the learning phase, a DMP is encoded to extract variation and correlation information across the demonstration data, and a set of virtual attractors is used to reach a goal. The set of attractors is learned by weighted least-squares regression. As a result, the robot is able to imitate human movements and reproduce the learned trajectories for cleaning the whiteboard.
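To make the idea of encoding a demonstration as a stable dynamic system more concrete, the following is a minimal sketch of a one-dimensional discrete DMP in its common textbook form. It is not the formulation of any specific cited paper: the gains, the basis-width heuristic, the synthetic minimum-jerk "demonstration", and all function names are assumptions for illustration.

```python
import math

ALPHA_Z, BETA_Z, ALPHA_X = 25.0, 6.25, 4.0  # commonly used gains (assumed)

def basis(x, n):
    """Gaussian basis activations over the phase variable x in (0, 1]."""
    centers = [math.exp(-ALPHA_X * i / (n - 1)) for i in range(n)]
    widths = [n ** 1.5 / c / ALPHA_X for c in centers]  # heuristic (assumed)
    return [math.exp(-h * (x - c) ** 2) for c, h in zip(centers, widths)]

def learn_weights(demo, dt, n=20):
    """Fit forcing-term weights to a demo of (y, yd, ydd) samples."""
    tau = dt * (len(demo) - 1)
    y0, g = demo[0][0], demo[-1][0]
    num, den = [0.0] * n, [1e-10] * n
    for k, (y, yd, ydd) in enumerate(demo):
        x = math.exp(-ALPHA_X * k * dt / tau)
        # Forcing term that would make the system reproduce the demo exactly
        f_target = tau ** 2 * ydd - ALPHA_Z * (BETA_Z * (g - y) - tau * yd)
        s = x * (g - y0)
        for i, psi in enumerate(basis(x, n)):
            num[i] += psi * s * f_target  # locally weighted regression
            den[i] += psi * s * s
    return [a / b for a, b in zip(num, den)], tau

def rollout(weights, tau, y0, g, dt, duration):
    """Integrate the DMP toward goal g and return the generated positions."""
    y, z, x, path = y0, 0.0, 1.0, [y0]
    for _ in range(int(round(duration / dt))):
        psi = basis(x, len(weights))
        f = (sum(p * w for p, w in zip(psi, weights))
             / (sum(psi) + 1e-10) * x * (g - y0))
        z += dt * (ALPHA_Z * (BETA_Z * (g - y) - z) + f) / tau
        y += dt * z / tau
        x += dt * (-ALPHA_X * x) / tau  # phase decays, so f vanishes over time
        path.append(y)
    return path

def min_jerk_demo(dt=0.001, duration=1.0):
    """Synthetic stand-in for a recorded demonstration from 0 to 1."""
    samples = []
    for k in range(int(round(duration / dt)) + 1):
        s = k * dt / duration
        samples.append((10 * s**3 - 15 * s**4 + 6 * s**5,
                        (30 * s**2 - 60 * s**3 + 30 * s**4) / duration,
                        (60 * s - 180 * s**2 + 120 * s**3) / duration**2))
    return samples

weights, tau = learn_weights(min_jerk_demo(), dt=0.001)
new_path = rollout(weights, tau, y0=0.0, g=2.0, dt=0.001, duration=2.0)
print(new_path[-1])
```

Because the forcing term is scaled by the phase variable, it fades out and the spring-damper part pulls the motion to whatever goal is supplied. This is what allows a single demonstrated stroke to be replayed toward a different end point, as in the example where the goal is moved from 1.0 to 2.0.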

Cleaning robots using learning-based control approaches: (a) sweeping a table using UR5 (LfD), 84 (b) wiping surfaces using ARMAR-III (LfD), 81 (c) wiping a table using ARMAR-III (SL), 76 (d) wiping and sweeping a table using iCub (LfD with SL 75 ), (e) wiping a glass using KUKA robots (AILC with RL), 111 and (f) sweeping papers using WAM robots (LfD). 82 LfD: learning from demonstration; SL: supervised learning; AILC: adaptive iterative learning control; RL: reinforcement learning.

For implementing consecutive movement primitives for wiping actions with DMPs, second-order and third-order DMPs are mostly used. 67 In particular, the third-order DMP is used to generate a new trajectory by modifying the Gaussian kernel functions. It can also generate sequences of discrete motions and has been evaluated on the task of wiping a table. In addition, it is applied to the online coaching of robots with human–robot interaction. 69 The coaching method consists of visual feedback, stiff force feedback, and compliant force feedback, and it is used for periodic DMPs with online adaptation of weights. The weights of a periodic DMP can be learned via incremental locally weighted regression. 112 This is also employed with force feedback for wiping tilted surfaces using a trajectory generated by the robot 80,81 (Figure 5(b)). The same authors also proposed a similar approach with DMPs learned from visual feedback using a two-layered imitation system, which consists of a canonical dynamical system and output dynamical systems. 113 This control method uses decoupled periodic movement learning and force profile learning to adapt the wiping movement.

Ernesti and colleagues proposed a periodic DMP combined with a rhythmic motion for wiping a table 76,114 (Figure 5(c)). The rhythmic DMP is needed to encode transient and periodic movement for using a two-dimensional canonical system. The system explains the current phase of the periodic motion and the distance of the current state from the periodic pattern. Therefore, the system can reproduce multiple paths corresponding to the original trajectory, even though the robot starts from different starting points. Another approach of the DMP is coordinate change DMP (CC-DMP), which explicitly represents the relationship between leader and follower in multi-agent collaborative tasks. 78,85 CC-DMP captures the variance in the leader’s motion in the simulation, while the coupling term learns specific variations for force adaptation by the current situation and the environment. For example, in a wiping system, CC-DMP is used to encode the relationship between a wiping movement and a moving wiping surface, while the coupling term adapts to the roughness, which is different but relatively static for a variable surface. As a result, the robot generates a desired constant pressure on the surface. The extension of CC-DMP is represented with a vision-based controller to adopt the reference path of a robot’s end-effector to allow wiping of a dynamic whiteboard. 74 In addition, a task-parameterized DMP (TP-DMP) approach is exploited with adaptive motion encoding to a sweeping task based on a few demonstrations. 71 The authors extracted the task parameter (e.g. trash point) and applied expectation-maximization (EM) algorithm to a mixture of GMMs for TP-DMP. In addition, Urbanek et al. 66 presented wiping a surface using primitive movement by generating rhythmic patterns using a sum of weighted Gauss kernels for mapping desired movement. The rhythmic robot arm movements are generated using Cartesian IC.

GMM provides a suitable and compact way to encode a set of human demonstrations of a specific task or skill. Therefore, many researchers use GMM to solve complex tasks. 115 –117 However, GMM is not able to perform all tasks, so Levine et al. 118 proposed task-parameterized GMM (TP-GMM), which is a technique for permitting generalizing of trajectories from demonstrated trajectories and task parameters (frames). This method is helpful for developing cleaning tasks. Silvério et al. developed a learning bimanual end-effector pose for the bimanual sweeping task 82 (Figure 5(f)). They combined TP-GMM and quaternion-based dynamic systems to learn full end-effector poses of a bimanual robotic manipulator. TP-GMM is used to encode the demonstration for learning multiple frames and adapt orientation control of the two end-effectors. The system allows a desired impedance for the reproduction, and trajectories are generated by GMR learned with TP-GMM. In addition, a similar approach of Silvério et al. 82 without a dynamic system was used with partially observable task parameters for sweeping task. 70 In this method, during the kinesthetic teaching as the human demonstration, dustpan frame, dust pieces, and robot frame as task parameters are recorded. To generalize the task, TP-GMM is computed using EM algorithm. The learned TP-GMM parameter can reproduce trajectories using GMR with the lack of information of task parameters. Using the same approach, another study by Alizadeh et al. 70 was also applied to the work of Kim et al. 75 (Figure 5(d)) for wiping markers and sweeping lentils. It is an extension of TP-GMM with an incremental learning skill. 83 With this method, first, a set of trajectories is generated using a new model from TP-GMM with task parameters. Then, new task parameters are added to the model. Finally, the model parameters are updated by EM algorithm with the information of the new trajectories for sweeping task.
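The step in which trajectories are generated by GMR from a learned mixture model can be sketched as follows. This example shows plain GMR on a hand-specified two-dimensional GMM over (time, position), not the task-parameterized variant and not the pipeline of any cited work. The mixture parameters are made-up values; in practice they would be fitted to demonstrations with the EM algorithm.

```python
import math

def gmr(t, priors, means, covs):
    """Conditional mean E[y | t] for a 2-D Gaussian mixture over (t, y).

    priors: mixing weights, one per component
    means:  (mu_t, mu_y) per component
    covs:   ((s_tt, s_ty), (s_yt, s_yy)) per component
    """
    weights, cond_means = [], []
    for prior, mean, cov in zip(priors, means, covs):
        mu_t, mu_y = mean
        s_tt, s_ty = cov[0]
        # Responsibility of this component for the query input t
        density = (math.exp(-0.5 * (t - mu_t) ** 2 / s_tt)
                   / math.sqrt(2.0 * math.pi * s_tt))
        weights.append(prior * density)
        # Linear regression of y on t inside this component
        cond_means.append(mu_y + s_ty / s_tt * (t - mu_t))
    total = sum(weights)
    return sum(w * m for w, m in zip(weights, cond_means)) / total

# Hypothetical two-component model of a stroke moving from y = 0 to y = 1
priors = [0.5, 0.5]
means = [(0.25, 0.0), (0.75, 1.0)]
covs = [((0.02, 0.0), (0.0, 0.01)), ((0.02, 0.0), (0.0, 0.01))]

trajectory = [gmr(k / 10.0, priors, means, covs) for k in range(11)]
print(trajectory)
```

Each component contributes a local linear prediction, and the components are blended by how well they explain the query time, which gives a smooth reference trajectory. Task-parameterized versions additionally express the components in several task frames before this regression step.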

In addition, Elliott et al. developed a training surface approach for extracting cleaning actions from a single cleaning demonstration. 73 The cleaning pattern is extracted from the trajectory of the original demonstration, in which the robot is continuously in contact with the surface using a tool. Ye and Alterovitz presented demonstration-guided motion planning (DGMP), where the paths are based on sampling-based motion planning methods. 72 In the DGMP learning phase, human demonstrations are encoded into a learned cost metric, which guides a cleaning motion planner. Therefore, the robot can perform a table cleaning task in a new environment by planning motions guided by the cost metric. Another work with a similar method was proposed by Restrepo et al., 79 where the authors implemented virtual guides using virtual mechanisms learned by demonstration. Users modify the guides iteratively through physical interaction, using a scaled FC to escape the active guide while following a sweeping trajectory.

Furthermore, in the work of Boteanu et al., 68 a hierarchical task network is used to train primitive tasks for wiping surfaces based on user teaching. During execution of the cleaning task, the robot could face two types of failures: symbolic and execution. If a symbolic failure occurs, the robot automatically searches for a way to complete the task, whereas it requests a new user demonstration when it faces an execution failure. Dianov et al. also proposed a technique capable of extracting abstract symbolic task specifications from human demonstrations and a new framework with abstract graphing and ontology for plate cleaning. 77 The abstract graph represents the key structure of the learned tasks, and the ontology contains the contextual information of the extracted task structures. The task graph learning approach is used to learn both the abstract graph and the ontology. Moreover, Lee and Ott proposed an approach for the kinesthetic coaching of motion primitives for a window wiping motion using an impedance controller. 119 To develop the approach, the authors used a modified HMM representation combined with a Gaussian regression for online incremental kinesthetic learning to generate the continuous trajectory. While LfD has been successfully used to mimic human behavior and generate robotic cleaning motions, it still has a limited ability to adapt its cleaning to new environments.

Supervised learning

SL is the machine learning task of mapping an input to an output based on a labeled set of training examples. It is typically used for classification or regression, so that a model learned from the given data can be applied to unseen situations. Representative SL methods include the artificial neural network (ANN), Gaussian process regression (GPR), and support vector machines (SVMs).

For SL, the convolutional neural network (CNN) is one of the most popular approaches to controlling robots in different fields of application, such as cleaning, manipulation, grasping, and locomotion. 75,118,120,121 Rahmatizadeh et al. proposed a multitask learning architecture for cleaning a small object using a CNN. 90 They collected the data from human demonstration, and each image is used as an input. The network consists of a CNN and long short-term memory (LSTM) to operate the robot autonomously. The CNN also acts as a task selector, and the LSTM generates the robot joint commands that drive the robot to clean the object using a towel. Kim et al. and Cauli et al. also developed an architecture for wiping stains and sweeping lentils using a CNN 75,93 (Figures 5(b) and 6(b)). To collect the data for training the CNN, they used kinesthetic teaching, moving the iCub robot arm. They modified the output of an AlexNet 122 model to obtain the position and orientation of the end-effector. The system was able to implicitly distinguish the two different types of dirt and perform the correct cleaning movement. In the work of Kim et al., 75 there is a strong assumption that the robot and table must be located in fixed poses decided before training. To relax this assumption and make the system platform independent, Cauli et al. 93 applied a geometric transformation from the robot camera plane to a canonical bird's-eye-view virtual camera plane and augmented the acquired data set by adding a Perlin noise 123 background to the images.

In addition, Pervez et al. proposed a deep-DMP architecture for sweeping tasks. 92 In this method, the CNN is organized into three stages: feature extraction, soft-argmax (fully connected layer for acquiring task parameters), and the forcing term. Then, DMP was used to generate the planner movement for the robot manipulator. Moreover, a task-level hierarchical system was developed to clean up the table. 96 The authors used a deep learning, CNN-based shape completion method for detecting novel objects given a partial view. The system uses GraspIt 124 to search for possible grasps and generates the arm trajectory using MoveIt. 106

Additionally, Martius et al. developed an ANN with differential extrinsic synaptic plasticity for wiping a table using a tendon-driven soft robot arm. 94 The network takes sensor values (force data) as input and produces robot arm actions as output. To train the network, they used the hyperbolic tangent (tanh) as the activation function; the network then generates wiping motion patterns. Stelter et al. 95 also used force measurement data for wiping with multidimensional time-series shapelets. To learn detection and classification of force measurements, they collected force measurements and a list of hand-labeled contacts as multidimensional time series. Best match distance is used to extract the features of the data, and binary classifiers are used to classify the best shapelets via Gaussian KDE. Attamimi et al. developed a visual recognition system for tidying up the rubbish on the table. 89 For the classification of the object materials, SVM classifiers were trained on multiple feature vectors using the object database. Also, they implemented ungraspable object detection based on GMM given the feature vector I(H, S, LQ), which consists of the table’s color (H and S) and the near-infrared (NIR) reflection intensity LQ. In addition, support vector regression (SVR) is applied to generate an internal model between perception, action, and effect from nonlinear data for wiping movements 76 (Figure 5(c)). The authors suggest a learning cycle between object properties and action parameters for the cleaning task and build the model from sensor data resulting from wiping experiments. Langsfeld et al. developed cleaning of deformable objects using a bimanual robot. 88,126 With this method, force and deflection data from the tool are collected to estimate the object stiffness between the grasper and the grasped object. For detection of the stain, a k-means classifier is used to group the pixels of the image into three clusters: clean, dirty, and background. Also, GPR is used to predict planner parameters, which are the normal forces applied to the surface while going over the stain region with the robotic cleaning tool. The same authors applied an identical approach to different tasks such as cleaning an object with a scrubbing motion. 86,91 Eppner et al. generalized a whiteboard cleaning task using imitation learning. 87 To perform the imitation learning, the user first demonstrates the cleaning task so that the probability distributions over joint configurations and positions of the human arm can be estimated. Then, they estimate the cleaning actions that maximize the joint probability distribution represented by a trained dynamic Bayesian network (DBN).
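
The pixel-clustering step mentioned above can be illustrated with a minimal sketch. It is not the implementation of Langsfeld et al.; it assumes scalar grayscale intensities, a deterministic initialization, and a hypothetical mapping of dark, mid, and bright intensities to the three clusters. The function name `kmeans_1d` and the sample values are invented for illustration.

```python
def kmeans_1d(values, k=3, iters=20):
    """Lloyd's k-means on scalar intensities; returns (centers, labels)."""
    ordered = sorted(values)
    # Deterministic initialization (an assumption): spread centers over the sorted data.
    centers = [ordered[i * (len(ordered) - 1) // (k - 1)] for i in range(k)]
    labels = [0] * len(values)
    for _ in range(iters):
        # Assignment step: each pixel goes to its nearest center.
        labels = [min(range(k), key=lambda c: abs(v - centers[c])) for v in values]
        # Update step: each center moves to the mean of its members.
        for c in range(k):
            members = [v for v, lab in zip(values, labels) if lab == c]
            if members:
                centers[c] = sum(members) / len(members)
    return centers, labels


# Hypothetical grayscale intensities in [0, 1], three visually distinct groups.
pixels = [0.05, 0.10, 0.08, 0.50, 0.55, 0.52, 0.90, 0.95, 0.92]
centers, labels = kmeans_1d(pixels)
```

In a real system the clustering would run on color features of every image pixel, and deciding which cluster corresponds to clean, dirty, or background would need an additional rule or labeled reference.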

SL shows the possibility of generalizing cleaning tasks to new environments. However, a model trained on the collected data does not adapt easily to a new situation (for example, a totally different position, shape, or illumination of the environment), because the robot has never seen such data during training. Therefore, more time is needed to acquire further data so that the robot can adjust to the new conditions.

Reinforcement learning

RL is an area of machine learning inspired by behavioral psychology, in which an agent takes actions in an environment to find an optimal policy for performing tasks using a reward function. Representative RL methods include Q-learning, state-action-reward-state-action (SARSA), the deep Q network, and the asynchronous actor-critic algorithm.

Typically, a Markov decision process (MDP) is used to implement RL. It consists of a five-tuple <S,A,T,R,α>, where S is the set of possible robot states, A is the set of robot actions, T is the transition function, R is the reward function, and α ∈ [0,1] is the discount factor. The MDP formulation allows RL to use dynamic programming techniques, and it can be defined for robot cleaning behavior based on the robot state and the environment. Hess et al. developed a state transition model to wipe a table efficiently. 104 The authors proposed a model using an MDP, which consists of a set of table states, a set of cleaning actions, a transition function, and a reward function. The transition function is modeled by observing the outcomes of the robot’s actions to generate paths for cleaning table surfaces. The best action is selected with a reward function based on the table and robot state obtained from camera images. The MDP is also used for fully observable problems with uncertainty for clearing objects on the table. 99 To solve the problem using an MDP, the authors used a symbolic representation, which consists of actions, preconditions, and outcomes (success probability) with a set of noisy indeterministic deictic (NID) rules. 125 With this information, a model of the clearing task is learned, and the robot repeatedly chooses actions (putPlateOn, putCupOn, and putForkOn) to maximize the reward according to the current policy. To speed up learning, the authors used the relational exploration with demonstrations 126 algorithm, which integrates active teaching demonstration. In addition, the same authors developed a method to sweep lentils using the same approach. 103 However, they employed the probabilistic relational action-sampling in DBNs planning algorithm, 127 which is a model-based planner for action planning sequences with NID rules. For the learning phase, they used learning heuristics, which obtain a model of the rules with reduced computation time. Pajarinen and Kyrki presented a partially observable MDP (POMDP) for robot manipulation such as cleaning dishes in the dishwasher. 97 The POMDP is used to evaluate different action choices by optimizing a reward function under a probabilistic model of an uncertain world.
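
To make the five-tuple <S,A,T,R,α> concrete, the following is a minimal sketch of value iteration (a dynamic programming technique) on a toy table-wiping MDP. It does not reproduce any of the cited models: the state (number of dirty patches left), the two actions, their success probabilities, and the rewards are all illustrative assumptions.

```python
# Toy table-wiping MDP <S, A, T, R, alpha>; every number here is an assumption.
ALPHA = 0.95                      # discount factor (alpha in the text)
STATES = [0, 1, 2, 3]             # S: dirty patches left; 0 is the terminal state
ACTIONS = {                       # A: action -> (success probability, reward)
    "wipe_light": (0.6, -1.0),
    "wipe_firm": (0.9, -2.0),
}


def q_value(V, s, a):
    """Expected return of action a in state s: T and R are encoded in ACTIONS."""
    p, r = ACTIONS[a]
    # With probability p one patch is cleaned, otherwise the state is unchanged.
    return r + ALPHA * (p * V[s - 1] + (1 - p) * V[s])


def value_iteration(tol=1e-10):
    V = {s: 0.0 for s in STATES}
    while True:
        delta = 0.0
        for s in STATES[1:]:      # the terminal state keeps value 0
            best = max(q_value(V, s, a) for a in ACTIONS)
            delta = max(delta, abs(best - V[s]))
            V[s] = best
        if delta < tol:
            break
    policy = {s: max(ACTIONS, key=lambda a: q_value(V, s, a)) for s in STATES[1:]}
    return V, policy


V, policy = value_iteration()
```

With these assumed numbers, the cheaper but less reliable action is preferred when one patch remains, since its expected cost (1/0.62 ≈ 1.61) is lower than that of the firm wipe (2/0.905 ≈ 2.21).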

For solving more complex tasks, deep RL can be used for cleaning tasks. For example, the deep network approach by Google DeepMind, 128 in which RL covers the whole process from observation to action, could have several applications. Devin et al. developed an object-level attentional mechanism to acquire useful visual representations in the context of policy learning for sweeping oranges 100 (Figure 6(c)). To develop the mechanism, they proposed a two-level hierarchy of attention (high level, low level) over scenes for policy learning. The high level provides meta-attention, which indicates objects in the scene regardless of the task. The meta-attention consists of a semantic component, which identifies the object with a feature vector, and a position component, which locates the object on the table from a camera image. The low-level component, which is called task-specific attention, finds the objects that are relevant to the task being performed. It helps the robot choose objects for the given task using a trained CNN. For a generalization of the task, the methods use both deep RL methods and trajectory-centric RL algorithms such as PI2 129 or REPS. 130 Moreover, Liu et al. proposed an imitation-from-observation algorithm, which is based on learning a context translation model from raw video for sweeping tasks. 101 The algorithm learns to translate a demonstration across differences in viewpoint. The conversion between viewpoints helps to collect a feature representation for training the model. The method uses deep RL to optimize sweeping actions that follow the demonstration translated into the target context.

In addition, the interactive RL approach for the domestic task of cleaning a table was studied by Cruz et al. 102 They used contextual affordances, which capture relations between state, objects, action, and effects, to avoid failed states. With this method, an agent is first trained in simulation using classic RL to serve as an external trainer. Then, the trainer delivers all possible cleaning actions to a second robot trained with interactive RL. For the interactive RL approach, they implemented the on-policy method SARSA to update every state-action value for cleaning a table. Moreover, Covallero et al. proposed manipulation planning, which merges manipulation skills and planning to acquire the sequences of actions for table clearing. 98 They used a mixture of symbolic and geometric restrictions to reduce computational costs and find the best sequence of clearing actions given a cost function. Furthermore, Nemec et al. combined various adaptive iterative learning control (AILC) methods with RL for wiping glass using a bimanual KUKA LWR arm 111 (Figure 5(e)). AILC is used to obtain the desired force with an adaptation of the feedback in the current iteration loop. Then, probabilistic policy improvement RL algorithms 129 can scale to complex learning systems and minimize the number of tuning parameters.
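
The on-policy SARSA update mentioned above can be sketched as follows. This is a minimal tabular example under stated assumptions, not the setup of Cruz et al.: the environment is a hypothetical one-dimensional strip of five cells with a stain at the last cell, and the rewards, learning rate, discount, and exploration rate are invented for illustration.

```python
import random

N_CELLS = 5                  # hypothetical 1-D table strip; the stain is at the last cell
ACTIONS = ("left", "right")
GOAL = N_CELLS - 1


def step(state, action):
    """Deterministic toy dynamics: returns (next_state, reward, done)."""
    nxt = max(0, state - 1) if action == "left" else state + 1
    if nxt == GOAL:
        return nxt, 10.0, True   # reaching the stain ends the episode
    return nxt, -1.0, False      # every other move costs one unit


def choose(Q, state, eps, rng):
    """Epsilon-greedy action selection."""
    if rng.random() < eps:
        return rng.choice(ACTIONS)
    return max(ACTIONS, key=lambda a: Q[(state, a)])


def sarsa(episodes=2000, lr=0.1, gamma=0.9, eps=0.1, max_steps=200, seed=0):
    rng = random.Random(seed)
    Q = {(s, a): 0.0 for s in range(N_CELLS) for a in ACTIONS}
    for _ in range(episodes):
        s, a = 0, choose(Q, 0, eps, rng)
        for _ in range(max_steps):
            s2, r, done = step(s, a)
            a2 = choose(Q, s2, eps, rng)
            # On-policy target: uses the action a2 actually selected in s2.
            target = r if done else r + gamma * Q[(s2, a2)]
            Q[(s, a)] += lr * (target - Q[(s, a)])
            if done:
                break
            s, a = s2, a2
    return Q


Q = sarsa()
```

Because the target uses the action that the exploring policy actually takes next, the learned values reflect the exploratory behavior itself, which is what distinguishes SARSA from off-policy Q-learning.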

As the capability of computers has grown, the use of RL for cleaning tasks has expanded. In particular, deep RL can be applied not only to cleaning tasks but also to other applications such as assembling toys and hanging clothes. Nevertheless, to reduce the completion time of cleaning tasks with the learning approach, robots still need a human teacher in order to converge to the goal rapidly.

Classical control can be applied to cleaning tasks in a highly controlled environment with low computation time. In contrast, the learning-based control approach can generalize the task to a new environment, but at the cost of high computation time. Therefore, when developing a cleaning application, we can consider combining the two control approaches appropriately, based on the tasks and environments, so that the robot achieves better performance than it would with only one control approach.

Discussion and conclusion

The objective of this survey article is to summarize the work related to control approaches for cleaning robots. As we discussed in the introduction, the use of service robots will expand tremendously in the near future due to the rise in the elderly population around the world. In this work, we primarily focus on cleaning tasks and the control approach using robotic manipulators or human-like robots. However, current cleaning robots dedicated to specific applications cannot handle the variability of the domestic environment. 131,132 Specifically, these robots are designed to perform a specific task, such as cleaning a floor or window. They do not have the capability to accomplish a combination of tasks. 133 In fact, the tasks they perform are simple and do not require human intervention. To undertake more complex tasks such as dish washing, washroom cleaning, kitchen cleaning, sweeping, and wiping, more human-like robots that can mimic human movements and are more adaptable to task variability are needed. In conclusion, a universal domestic cleaning robot requires at least a robotic manipulator with more than four DoF and an anthropomorphic hand or three-point gripper, or a humanoid robot.

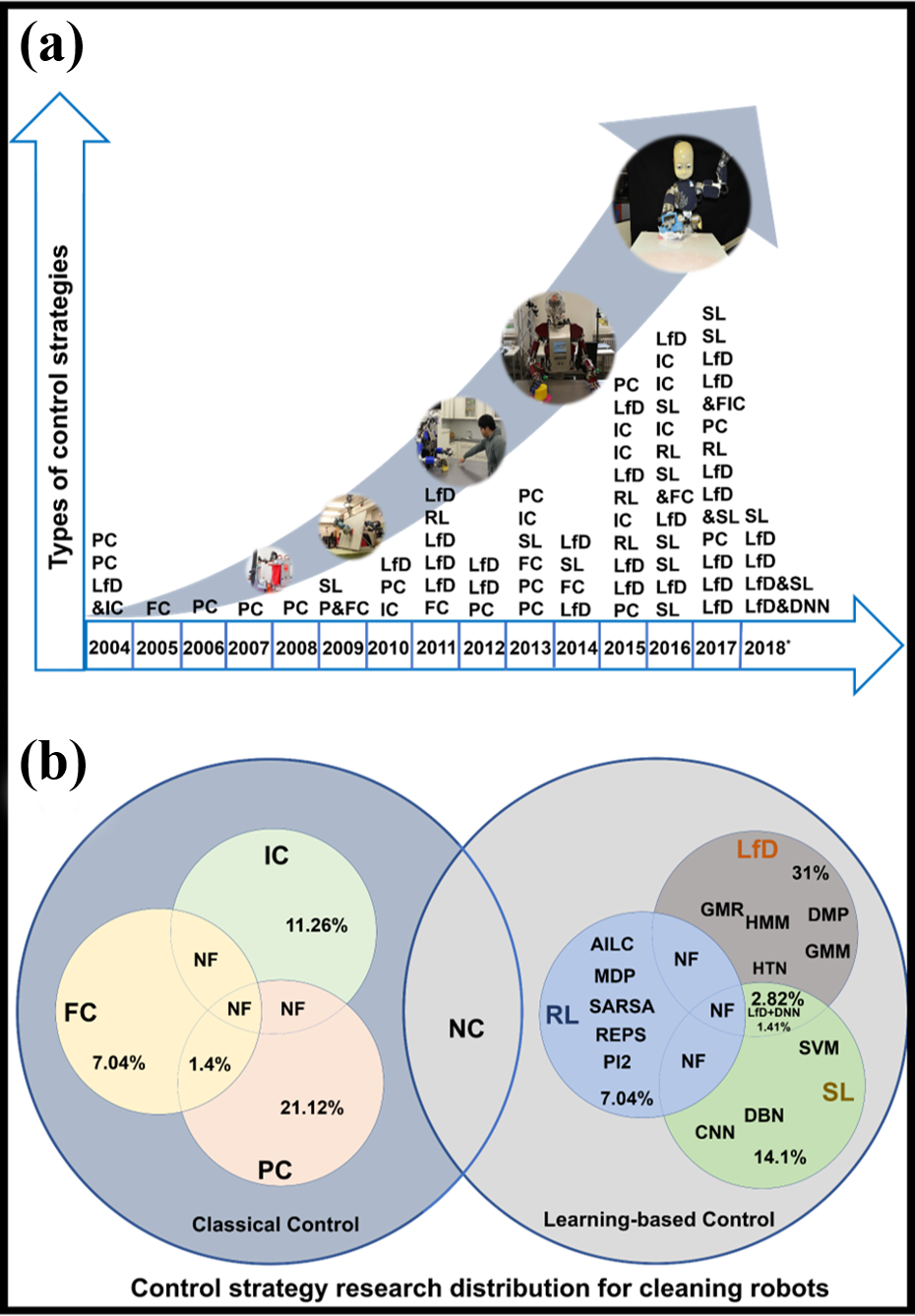

Apart from the design architecture of the robot, one of the most challenging factors is determining how to control the robots to accomplish domestic cleaning tasks. For example, to clean a washroom basin or wash dishes, we need robots that can see the object, detect the dirt, understand the object material type, generate the cleaning movement, detect whether the object has been cleaned, and put the object back in a clean place. Such tasks are still far beyond the current state of the art. Researchers continue to develop more sophisticated control strategies to solve these challenges. Here, our work mainly seeks to assess the latest control strategies used for cleaning robots and categorize them into classical control and learning-based approaches (see Table 1). Moreover, our categorization suggests that the sensors used for cleaning are generally of two types, camera or F/T sensor, or a combination of these. In addition, the robotic platform that is used for different cleaning tasks is important. For example, the KUKA robot can perform cleaning, scrubbing, and sweeping using the camera, and wiping using the F/T sensor, whereas for humanoid robots such as Nao or iCub, systems that rely only on camera inputs are more common. The detailed description is provided in Table 1. Based on our survey, we observed that these control strategies have been developed for cleaning purposes since 2004; the related work is reported in Figure 7(a).

Figure 7. Survey report on control methods for cleaning robots: (a) detailed year-to-year report and the trend on control methods used for different cleaning tasks; (b) Venn diagram for different control approaches, algorithms, and their share in the cleaning robotic field. PC: position control; FC: force control; IC: impedance control; LfD: learning from demonstration; SL: supervised learning; AILC: adaptive iterative learning control; RL: reinforcement learning; MDP: Markov decision process; SARSA: state-action-reward-state-action; DMPs: dynamic movement primitives; GMM: Gaussian mixture model; GMR: Gaussian mixture regression; HMM: hidden Markov model; DBN: dynamic Bayesian network; SVM: support vector machine; CNN: convolutional neural network.

This article covers the majority of work related to classical control used for cleaning and sorts it into PC, FC, and IC. Of that work, we observed that most robots are controlled using PC (21% of all research on cleaning; see Figure 7(b)). An obvious reason for this could be its well-established models and simplicity. Technically speaking, PC is one of the basic methods to control the robot, using proportional (P), proportional-integral (PI), or proportional-integral-derivative (PID) control. 134,135 These control methods can be easily implemented using positional feedback in any robotic manipulator with trajectory planning algorithms. PC can be used easily for cleaning dirt, but only for fixed-trajectory tasks that do not require information such as force feedback on the object. 51 Although PC is suitable for repetitive tasks, it cannot handle delicate objects such as glass and plates because it can easily break them. 51,56 Similarly, FC provides force feedback along a fixed trajectory, but the robot cannot perform any undefined tasks. 51,59 FC for cleaning also poses a risk of breaking delicate objects, as with PC. For example, the robot can perceive the delicacy of the object but cannot control the motion according to the object stiffness. FC accounts for only 7% of the cleaning robotics research (see Figure 7(b)). 57,58,59,64
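
As an illustration of position control with positional feedback, the following is a minimal sketch of a discrete PID loop. It assumes a single velocity-commanded joint modeled as a pure integrator; the gains, time step, and target are hypothetical values chosen for this example rather than taken from any cited work.

```python
class PID:
    """Discrete PID controller acting on a position error."""

    def __init__(self, kp, ki, kd, dt):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.integral = 0.0
        self.prev_error = None

    def update(self, target, measured):
        error = target - measured
        self.integral += error * self.dt
        # No derivative action on the first sample, to avoid a start-up kick.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / self.dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def track(target=0.3, steps=1000, dt=0.01):
    """Drive a velocity-commanded joint (assumed model) to a fixed target position."""
    pid = PID(kp=4.0, ki=4.0, kd=0.05, dt=dt)   # hypothetical gains
    position = 0.0
    for _ in range(steps):
        velocity_cmd = pid.update(target, position)
        position += velocity_cmd * dt           # integrator plant: x' = u
    return position


final_position = track()
```

The loop uses only the measured position, which is why such a controller follows a fixed trajectory well but has no notion of the force it exerts on a fragile object.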

Apart from the FC and PC methods, IC has also been applied to different cleaning tasks, accounting for 11.3% of the body of robotics research on manipulator-based cleaning. IC is one of the most advanced methods in the classical approach to robot control. It can handle very delicate and fragile objects, which makes it more suitable for cleaning. 64 IC regulates the dynamic relationship between force input and motion output and allows the robot to produce variable-stiffness movement using joint torque. 61,63 This variability enables the use of cleaning robots for multipurpose applications such as cleaning dishes, windows, tables, and basins. However, performing complex motions based on a trajectory is still challenging for IC. When trajectory planning from perception is considered, all classical control approaches become computationally demanding and offer little flexibility for handling clutter.
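
The relation between force and motion that IC regulates can be sketched with a one-dimensional spring-damper law. This is a textbook-style illustration under stated assumptions, not a controller from the cited works: the surface is modeled as a linear spring, and the stiffness and damping values are hypothetical.

```python
def impedance_force(stiffness, damping, x_des, x, v_des=0.0, v=0.0):
    """Commanded force of a 1-D spring-damper impedance law."""
    return stiffness * (x_des - x) + damping * (v_des - v)


def steady_contact_force(stiffness, env_stiffness, x_des):
    """Static contact force when the desired position lies x_des metres
    behind a surface located at x = 0 (surface modeled as a linear spring)."""
    # Force balance at rest: stiffness * (x_des - x) = env_stiffness * x
    x = stiffness * x_des / (stiffness + env_stiffness)
    return env_stiffness * x


# Same 1 cm penetration command, two hypothetical controller stiffness values (N/m).
soft = steady_contact_force(stiffness=100.0, env_stiffness=10000.0, x_des=0.01)
stiff = steady_contact_force(stiffness=5000.0, env_stiffness=10000.0, x_des=0.01)
```

Under these assumptions the compliant setting presses on the surface with roughly 1 N, while the stiff setting presses with about 33 N, which illustrates why a variable-stiffness controller is attractive for fragile objects such as plates.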

Another approach that is widely exploited for cleaning purposes is learning, which can be regarded as an implementation of artificial intelligence in robots to perform complex cleaning tasks. 100 This approach accounts for 52% of the research on cleaning tasks, compared with 39% for the classical approach (see Figure 7(b)). Based on our results, we observed a sudden surge in learning-based research from 2015. We found through our work that the learning-based approach is much simpler to implement for the generation of complex trajectories. 75,92 Based on our literature survey, we considered the three basic learning control approaches: RL, SL, and LfD.

The learning-based control approach, LfD, is generally used to generate new cleaning trajectories from human demonstration. 136 It accounts for 31% of the cleaning robotics work. As discussed in the “Learning-based control approach” section, we have examined LfD and its different types of models such as DMP, GMM, and GMR. Each of these models carries some advantages over the others: DMP uses differential equations to create a smooth trajectory and is used to model each demonstrated trajectory. However, DMPs have limited flexibility in new environments and can reproduce noise from the demonstration. 137 To overcome these issues, the CC-DMP and TP-DMP approaches were developed for wiping and sweeping tasks. 71,74 Another LfD approach, GMM/GMR, is used to compute an average trajectory from a set of human demonstrations and then reproduce new trajectories for cleaning tasks. However, GMM is not able to generate all cleaning trajectories. 138 Therefore, TP-GMM was developed to generalize cleaning trajectories with task parameters. This LfD approach is beneficial for mimicking and generating cleaning motions similar to human behavior using robot manipulators 138 and is used for different cleaning tasks. However, the perception and detection system for cleaning a new environment remains a weak point and a challenge for the TP-GMM approach. 70
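
The differential equations behind a DMP can be sketched in a few lines. The example below integrates a commonly used one-dimensional discrete DMP form (a critically damped spring-damper driven by a decaying forcing term) with Euler steps. It is a simplified illustration: the single weight `w` is a hypothetical stand-in for the basis-function weights that would normally be learned from a demonstration, and all constants are assumptions.

```python
def dmp_rollout(y0, g, w=50.0, tau=1.0, dt=0.001, steps=3000,
                alpha_z=25.0, alpha_x=4.0):
    """Euler integration of a 1-D discrete DMP from start y0 toward goal g."""
    beta_z = alpha_z / 4.0            # critically damped transformation system
    y, z, x = y0, 0.0, 1.0            # position, scaled velocity, phase variable
    path = [y]
    for _ in range(steps):
        # Forcing term: one hypothetical weight instead of learned basis functions.
        forcing = w * x * (g - y0)
        dz = (alpha_z * (beta_z * (g - y) - z) + forcing) / tau
        dy = z / tau
        dx = -alpha_x * x / tau       # canonical system: the phase decays to zero
        z += dz * dt
        y += dy * dt
        x += dx * dt
        path.append(y)
    return path


path = dmp_rollout(y0=0.0, g=1.0)
```

Because the forcing term vanishes as the phase variable decays, the trajectory settles at the goal regardless of the weight, which is the property that makes the shape of a demonstrated wiping stroke reusable for a new goal position.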

To address the weak points of LfD, SL approaches have been applied. SL is used to predict dirt regions or robot cleaning motions based on a network learned from a set of labeled training examples. The ANN is the basic idea of SL and is used to predict robot cleaning actions based on input data. 139 To combine image processing with the ANN, the CNN was developed to predict cleaning motions based on image data examples. Also, LSTM is used together with a CNN to generate the robot joint commands for cleaning. A DBN, which estimates the maximized joint probability distribution, is used to estimate cleaning actions. For regression problems, GPR, which is modeled with a nonparametric Bayesian approach, is used to predict tool parameters for cleaning deformable objects, and SVR, which can build a model based on data, generates wiping motions. SL usually first generates a model trained with a given data set. Then, based on that model, SL can predict a dirt area or cleaning motion. A strong point of SL is that it can cope with disturbance and noise in the trained environment, because data augmentation techniques 123 are used to increase the performance of cleaning tasks. However, the problem with SL is that the cleaning performance depends on the given data. For example, if the robot performs the cleaning tasks in a totally new environment, the robot cannot succeed at the tasks. 75 Therefore, RL is used to train the robot to learn cleaning motions and interact with the new environment. To develop this approach, an MDP is commonly used to define the robot state, actions, environment, and so on. Also, the reward plays an important role in achieving a goal. The original RL is difficult to apply to various applications because the action and state spaces are not easy to design for complex tasks. 104

To overcome the shortcomings of the individual learning methods, researchers have proposed different solutions that combine the best features of each (RL, LfD, and SL). For example, deep RL, which combines the features of SL and RL and has been used together with trajectory-centric algorithms such as PI2 and REPS, 100 was developed to carry out the entire process from observation to cleaning action using a deep network. RL is a good control approach because the robot (agent) can handle its own tasks without human intervention. 104,140 However, after learning, the robot sometimes shows strange movements that a human would never make, 140 and RL is also computationally expensive.

Because individual learning-based control approaches still have issues, approaches that combine two methods have been developed for cleaning tasks. In particular, LfD combined with SL can increase the performance of the perception system and reproduce reasonable cleaning trajectories. Furthermore, D-DMP 92 and GMM combined with CNN 75 have been developed for wiping and sweeping movements.

After discussing several control aspects for cleaning, we observed a paradigm shift in control strategies for cleaning tasks from classical to learning-based approaches. In practice, classical control strategies are increasingly combined with artificial intelligence techniques to address effectiveness and robustness in control design.

However, a huge amount of research work is still required to develop an efficient and universal control method for cleaning tasks. Because the domestic environment is complex, variable, and unstructured, a highly efficient design and control strategy is required. In the authors’ view, learning could be the most promising solution once its flaws are removed. Specifically, we see many possibilities that are emerging or still need to emerge. For example, LfD is the most utilized learning-based control approach for cleaning due to its ease in generating complex and versatile trajectories, and it can handle the unstructured environment with different tasks. Moreover, dynamic movements, deep learning, and LfD together could advance performance significantly. Of course, many challenges remain. They should be addressed in a timely manner to bring highly intelligent robots into the household to help people as soon as possible.

We would also like to note that the development of smart human-like robots requires attention to mechanical design. We observed that the field of service robotics has not yet considered bio-inspired design to arrive at the most optimized solutions. Current service robots are mainly based on traditional robotic design using hard and rigid components. 141,142 Natural organisms, however, are not completely hard; their design is mostly compliant, hybrid (hard and soft), or completely soft. Here, we would like to propose that soft robotics technologies could help to solve several domestic cleaning challenges. Soft robots offer several attractive features, such as stiffness similar to that of real tissue, inherent compliance, adaptability, and conformability. 143–146 These features could be well suited to cleaning with ease of control. Moreover, with classical robots, we have not yet considered the safety issue in the domestic environment, which we believe will be crucial to address in the near future. Here, the authors would like to propose that a highly compliant robotic manipulator, soft arm, or modular soft arm, 147,148 with reconfigurable grippers 149 and dynamic deep-learning-based control, could be the most promising solution for future robotics. Moreover, to improve the generalization of cleaning tasks, transfer learning 150 can be useful. In principle, transfer learning makes it possible to transfer a learned model to a different robot platform. In this sense, transfer learning provides opportunities to build a general model for cleaning tasks that achieves the same task performance on different robotic platforms in the future.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Ministero dell’Istruzione, dell’Università e della Ricerca (Grant/Award Number: ‘SI-Robotics’).