Abstract

Natural-language-facilitated human–robot cooperation refers to using natural language to facilitate interactive information sharing and task execution between robots and humans under a common goal. Recently, research on natural-language-facilitated human–robot cooperation has received increasing attention. Typical scenarios include robotic daily assistance, robotic health caregiving, intelligent manufacturing, autonomous navigation, and robotic social companionship. However, a thorough review that reveals the latest methodologies for using natural language to facilitate human–robot cooperation is missing. In this review, we comprehensively investigate natural-language-facilitated human–robot cooperation methodologies by summarizing the research in three aspects: natural language instruction understanding, natural-language-based execution plan generation, and knowledge-world mapping. We also analyze in depth the theoretical methods, applications, and model advantages and disadvantages. Based on our paper review and perspective, future directions of natural-language-facilitated human–robot cooperation research are discussed.

Keywords

Introduction

Attracted by the naturalness of natural language (NL) communication among humans, intelligent robots have started to understand NL in order to develop intuitive human–robot cooperation in various tasks. 1,2 Natural-language-facilitated human–robot cooperation (NLC) has received increasing attention in human-involved robotics research over the recent decade. By using NL, human intelligence in high-level task planning and robot physical capabilities in low-level task execution, such as force, 3 precision, 4 and speed, 2 are combined to perform intuitive cooperation. 5,6 For example, in furniture assembly, natural cooperation is challenging because a human has limited precision and speed when holding a drill, while a robot lacks an understanding of the assembly sequence. By giving robots NL instructions, such as “drill holes, then clean surface, last install screws,” a human’s high-level plan is combined with the robot’s low-level executions, such as “grasping drillers and brush” and “motion planning in drilling, cleaning, and installing,” to achieve natural cooperation. 4

Currently, typical communication manners in human–robot cooperation include tactile indications, such as contact location 7 and force strength, 8 and visual indications, such as body pose 9 and motion. 10 Compared with these methods, using NL to conduct intuitive NLC has several advantages. First, NL makes human–robot cooperation natural. With the traditional methods mentioned above, humans need to be trained to use certain actions or poses to make themselves understandable to a robot. 11,12 In NLC, by contrast, even nonexpert users without prior training can use verbal conversations to instruct robots. 13 Second, NL transfers human commands efficiently. Traditional communication methods using visual or motion indications require the design of informative patterns, such as “‘lift hand’ means ‘stop’” and “‘horizontal hand movements’ means ‘follow,’” for delivering human commands. 14 Existing languages, such as English, Chinese, and German, already have standard linguistic structures, which contain abundant informative expressions to serve as patterns. 15 NL-based methods therefore do not need specifically designed informative patterns for various NL commands, making human–robot cooperation efficient. Lastly, since NL instructions are delivered orally rather than through physical involvement, human hands are set free to perform more important executions. Typical areas using NLC are shown in Figure 1.

Figure 1. Promising areas using NLC. (a) Daily robotic assistance using NL. 16 A robot categorized daily objects with human NL instructions. (b) Autonomous manufacturing using NL. 17 An industrial robot welded parts under a human’s oral instructions. (c) Robotic navigation using NL. 18 A quadcopter navigated in indoor environments with a human’s oral guidance. (d) Social companionship. 19 A pet dog plays ball with a human through socialized verbal communication. NLC: natural-language-facilitated human–robot cooperation; NL: natural language.

Advancements in natural language processing (NLP) support an accurate understanding of the task in NLC, and advancements in robots’ physical capabilities support increasingly improved task execution. With supporting techniques from both NLP and robot execution, NLC has developed from low-cognition-level symbol-matching control, such as using “yes/no” to control robotic arms, to high-cognition-level task understanding, such as identifying a plan from the description “go straight and turn left at the second cross.”

NLC research is regularly published in international journals, such as IJRR, 20 TRO, 21 AI, 22 and KBS, 23 and at international conferences, such as ICRA, 24 IROS, 25 and AAAI. 26 By using the keywords “NLP, human, robot, cooperation, speech, dialog, natural language,” about 1400 papers were retrieved from Google Scholar; 27 of these, about 570 papers focused on NL-facilitated human–robot cooperation. The publication trend is shown in Figure 2, where the increasing significance of NLC is reflected by steadily increasing publication numbers.

Figure 2. The annual number of NLC-related publications since the year 2000 according to our paper review. Over the past 18 years, the number of NLC publications has steadily increased and has reached a record-high level, suggesting that NLC research is encouraged by progress in related fields such as robotics and NLP. NLC: natural-language-facilitated human–robot cooperation; NL: natural language.

In contrast to the existing review papers on human–robot cooperation using communication manners such as gesture and pose, 28,29 action and motion, 30 and tactile sensing, 31 a review paper on human–robot cooperation using NL communication is lacking. Therefore, given the great potential of NL for facilitating human–robot cooperation and the increasing attention that NLC receives, this review summarizes the state-of-the-art NLC methodologies across a wide range of domains, revealing current research progress and signposting future NLC research. Our novelty is that we summarize NLC research in three aspects: NL instruction understanding, NL-based execution plan generation, and knowledge-world mapping. Each aspect is comprehensively analyzed in terms of research progress, method advantages, and limitations. The organization of this article is shown in Figure 3.

Figure 3. Organization of this review paper. This review systematically summarizes methodologies for using NL to facilitate human–robot cooperation. Three main research areas are introduced: NL instruction understanding, NL-based execution plan generation, and knowledge-world mapping. For each area, typical models, application scenarios, model comparisons, and open problems are summarized.

Framework of NLC realization

Realizing NLC is challenging for the following reasons. First, human NL is abstract and ambiguous. It is hard to understand humans accurately during task assignments, which impedes natural communication between a robot and a human. Second, NL-instructed plans are implicit. It is difficult to reason out appropriate execution plans from human NL instructions for effective human–robot cooperation. Third, NL-instructed knowledge is incomplete and can be inconsistent with the real world. It is difficult to map enough theoretical knowledge into the real world to support successful NLC. To solve these problems and achieve effective and natural NLC, three main types of research have been conducted.

NL instruction understanding: To accurately understand assignments during NLC, research on NL instruction understanding builds semantic models for extracting cooperation-related knowledge from human NL instructions.

NL-based execution plan generation: To reason out a robot’s execution plans from human NL instructions, research on NL-based execution plan generation creates various reasoning mechanisms for identifying human requests and formulating robot execution strategies.

Knowledge-world mapping: To map NL-instructed theoretical knowledge to real-world situations for practical cooperation, research on knowledge-world mapping recommends missing knowledge and corrects real-world-inconsistent knowledge, so that NLC can be realized in various real-world environments.

NL instruction understanding

NL instruction understanding enables a robot to receive human-assigned tasks, identify human-preferred execution procedures, and understand the surrounding environment from abstract and ambiguous human NL instructions during NLC. By improving the robot’s understanding of the human, the accuracy and naturalness of NLC are improved. To intuitively understand human NL expressions with environment awareness, two types of semantic analysis models have been developed: literal models and interpreted models. Both types extract cooperation-related information that humans indicate explicitly or implicitly. The difference between them is the information source. Literal models extract information only from human NL instructions, while interpreted models also extract information from the human’s surrounding environment. With literal models, the robot understands tasks merely by following human NL instructions, while with interpreted models, the robot understands tasks by also reasoning about the practical, cooperation-related environment conditions, thereby becoming situation aware.

From the model construction perspective, to analyze meanings of human NL instructions in NLC, literal models mainly use literal linguistic features, such as words, Part-of-Speech (PoS) tags, word dependencies, word references, and sentence syntax structures, shown in Figure 4; interpreted models mainly use interpreted linguistic features, such as temporal and spatial relations, object categories, object physical properties, object functional roles, action usages, and task execution methods, as shown in Figure 5. Literal linguistic features were directly extracted from human NL instructions, while interpreted linguistic features were indirectly inferred from common sense based on NL expressions.

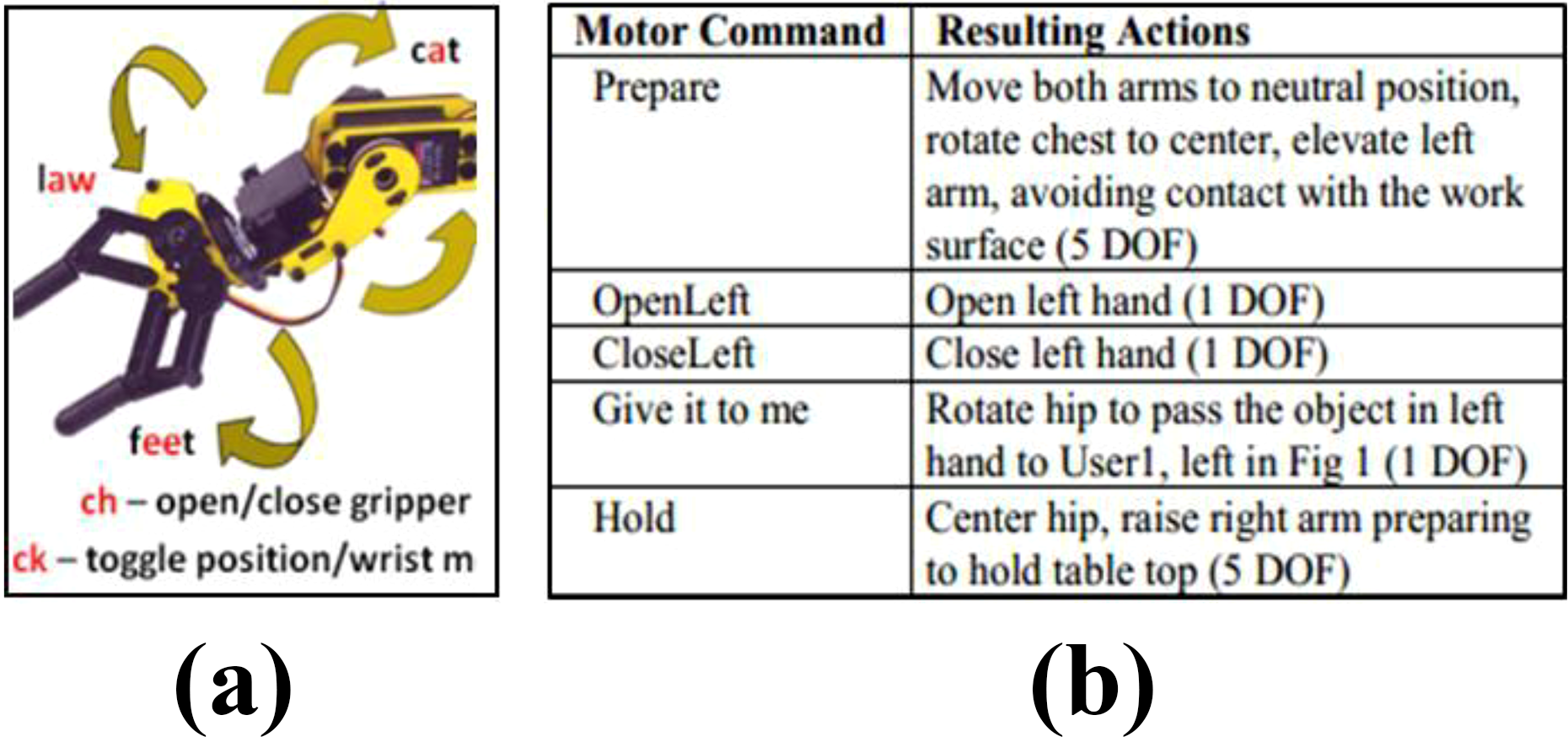

Figure 4. Typical literal models for NL instruction understanding. (a) The grammar model of House et al. 32 The robotic arm’s motion was controlled by predefined vowels, such as “aw, ee, ch,” in human speech. (b) The association model of Dominey et al. 33 NL expressions, such as “OpenLeft,” were interpreted as specific parameters, such as “open left hand for 1 DOF,” for robotic arms. NL: natural language; DOF: degree of freedom.

Figure 5. A typical interpreted model for NL instruction understanding. 34 Robot memory, real-world states, and human NL instructions were integrated to instruct robot executions. NL: natural language.

Literal models

According to how literal linguistic features are involved, literal models are categorized into the following types. (1) Grammar model: Literal linguistic feature patterns, such as “action + destination,” are manually defined. (2) Association model: Literal linguistic features are mutually associated with commonsense knowledge.

Grammar models

To initially identify key cooperation-related information, such as goals, tool usages, and action sequences, from human NL instructions, grammar patterns are defined to build grammar models. Grammar patterns refer to keyword combinations, PoS tag combinations, and keyword-PoS tag combinations. 35,36 With these grammar models, robot behaviors are triggered by the grammar patterns that appear in human NL instructions. Some grammar patterns explored execution logic. For example, verbs and nouns were combined to describe a type of action, such as V(go) + NN(Hallway) and V(grasp) + NN(cup). 37–39 Some grammar patterns explored temporal relations, such as the if–then relation “if door open, then turn right” and the step-1-to-step-2 relation “go → grasp.” 40,41 Some grammar patterns explored spatial relations, such as the IN relation “cup IN room” and the CloseTo relation “cup CloseTo plate.” 42,43 The rationale of the grammar model is that sentences with similar meanings have similar syntactic structures, so the similarity of NL meanings is calculated by evaluating the similarity of syntactic structures.
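As a rough illustration of the V + NN grammar pattern described above, the following minimal Python sketch matches verb-noun pairs in an instruction. The tiny lexicon, the tag names, and the function names are assumptions made for this example only; they are not taken from any of the cited systems, which rely on their own taggers and pattern sets.

```python
# Hypothetical toy lexicon: word -> part-of-speech tag (an assumption for illustration).
LEXICON = {
    "go": "V", "grasp": "V", "turn": "V",
    "hallway": "NN", "cup": "NN", "door": "NN",
    "to": "TO", "the": "DT",
}

# Hand-defined grammar pattern: a verb followed, possibly after other words, by a noun.
PATTERN = ("V", "NN")


def tag(instruction):
    """Tag each known word with its PoS; unknown words are tagged 'UNK'."""
    return [(w, LEXICON.get(w, "UNK")) for w in instruction.lower().split()]


def match_action_object(instruction):
    """Return the (verb, noun) pairs that satisfy the V + NN pattern."""
    pairs = []
    verb = None
    for word, pos in tag(instruction):
        if pos == PATTERN[0]:
            verb = word                      # remember the most recent verb
        elif pos == PATTERN[1] and verb is not None:
            pairs.append((verb, word))       # pattern completed
            verb = None
    return pairs


print(match_action_object("go to the hallway"))
print(match_action_object("grasp the cup"))
```

An instruction that contains no listed verb or noun yields an empty list, which reflects the limitation of grammar models noted later in this section: every pattern that should trigger a behavior has to be listed in advance.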

Association models

To understand abstract and implicit NL execution commands during cooperation, association models were developed by associating different literal linguistic features with one another to extract new semantic meanings. Essentially, the association model exploits existing knowledge by creating high-level abstract knowledge from low-level detailed knowledge. One typical association model is the probabilistic association model. Informative literal linguistic features in NL instructions are correlated with other informative keywords by using probability likelihoods computed from human communications. Typical works are as follows. Learning from previous human execution experiences: Cooperation-needed actions are inferred based on the mentioned tasks, locations, and their probabilistic associations. 44 Learning from daily common sense: Quantitative dynamic spatial relations, such as “away from, between,…,” have been associated with their corresponding NL expressions based on their probabilistic relations; 45 general terms, such as “beverage,” are specified to “juice” according to cooperation types and task–object probabilistic relations. 46

With this probabilistic association model, the uncertainty in NL expressions is modeled, which disambiguates NL instructions and improves a robot’s adaptation to different human users with various NL expressions. Another typical association model is the empirical association model. High-level abstract literal linguistic features, such as ambiguous words and uncertain NL phrases, are empirically specified by low-level detailed literal features, such as action usages, sensor values, and tool usages. The rationale is that general knowledge can be recommended to disambiguate ambiguous NL instructions in specific situations. Compared with probabilistic association models, which use objective probabilistic calculation, empirical association models use subjective empirical association.
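The probabilistic association idea above, in which a general term such as “beverage” is specified to “juice” through task–object probabilistic relations, can be sketched as follows. This is a minimal sketch under stated assumptions: the observation list, the category table, and the function name `specify` are hypothetical, and the conditional probability is estimated by simple counting rather than by the methods of the cited works.

```python
from collections import Counter

# Hypothetical (task, object) co-occurrences gathered from past interactions.
OBSERVATIONS = [
    ("breakfast", "juice"), ("breakfast", "juice"), ("breakfast", "coffee"),
    ("party", "soda"), ("party", "soda"), ("party", "juice"),
]

# Hypothetical category membership used to expand a general term.
CATEGORY = {"beverage": ["juice", "coffee", "soda"]}


def specify(general_term, task):
    """Pick the category member with the highest estimated p(object | task)."""
    counts = Counter(obj for t, obj in OBSERVATIONS if t == task)
    total = sum(counts.values())
    if total == 0:
        return None, 0.0                     # no experience with this task
    best = max(CATEGORY[general_term], key=lambda o: counts[o])
    return best, counts[best] / total


print(specify("beverage", "breakfast"))
print(specify("beverage", "party"))
```

With these made-up counts, the same general term resolves to different objects for different tasks, which is the disambiguating behavior the paragraph attributes to probabilistic association models.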

Typical usages include the following types.

By defining sensor value ranges for ambiguous NL descriptions, such as “slowly, frequently, heavy,” ambiguous execution-related NL expressions were quantitatively interpreted, making ambiguous NL expressions sensor-perceivable. 41,47

By integrating key aspects, such as execution preconditions, action sequences, human preferences, tool usages, and location information, into abstract NL expressions, such as “drill a hole,” human-instructed high-level plans were specified into detailed robot-executable plans, such as “clean the surface” or “install a screw.” 4,36,39,42,48

By using discrete fuzzy statuses, such as “close, far, cold, warm,” to divide continuous sensor data ranges, unlimited objective sensor values were “translated” into limited subjective human feelings, such as “close to the robot, day is hot,” supporting a human-centered task understanding. 49,50

By combining human factors, such as the “human’s visual scope,” with linguistic features, such as the keyword “wrench” in human NL instructions, the empirical association model became environmental-context sensitive, enabling a robot to understand a human NL instruction such as “deliver him a wrench” from the human’s perspective: “the human-desired wrench is actually the human-visible wrench.” 51–53 The advantage of using association models in NLC is that the robot’s cognition level is improved by means of mutual knowledge compensation. With an association model, a robot can explore unfamiliar environments by exploiting its existing knowledge.
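The empirical mapping between continuous sensor values and discrete statuses such as “close, far, cold, warm” can be sketched as a simple lookup over value ranges. The thresholds, sensor names, and function name below are assumptions chosen for illustration, and the sketch uses crisp boundaries as a simplification; a fuzzy formulation would assign graded memberships instead.

```python
# Hypothetical upper bounds per status; real ranges would be set empirically.
STATUSES = {
    "distance_m": [(1.0, "close"), (float("inf"), "far")],
    "temperature_c": [(15.0, "cold"), (float("inf"), "warm")],
}


def to_status(sensor, value):
    """Translate a continuous sensor reading into a discrete, human-style status."""
    for upper, label in STATUSES[sensor]:
        if value < upper:
            return label
    raise ValueError("no status covers this reading")


print(to_status("distance_m", 0.4))
print(to_status("temperature_c", 22.0))
```

The table can also be read in the opposite direction: an NL word such as “close” selects a sensor range, which is how ambiguous descriptions become sensor-perceivable in the usages listed above.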

Interpreted models

Human requests are usually situated, which means that human NL expressions come with default environmental preconditions, such as “cup is dirty, a driller is missing, robot is far from a human.” Human NL instructions are closely correlated with situation-related information, such as human tactile indication (tactile modality), human hand/body pose (vision modality and motion dynamics modality), and environmental conditions (environment sensor modality).

To accurately understand human NL instructions, interpreted models were developed to integrate information from multiple modalities instead of merely from the NL modality. The rationale behind interpreted models is that a human depends on the surrounding environment, so a better understanding of the human needs to be environmentally context aware. With multimodality models, information from different modalities related to the human, the robot, and their surrounding environment is aligned to establish semantic correlations. 54–56

For multimodality models that use NL instructions and human-related features to understand human NL instructions, typical features beyond the linguistic features considered in single-modality models include the following: individual identity detected by radio-frequency identification (RFID) sensors, 57 touch events detected by tactile sensors, 58 facial expressions (joy, sadness), 59 hand poses detected by computer vision systems, 60 and human head orientations detected by motion tracking systems. 61 Supported by rich information from multiple modalities, typical problems tackled for NLC include complex-instruction understanding, 62 human-like cooperation, 61 and human social behavior understanding and mimicking. 50

For multimodality models using environment and robot-related features to understand human NL instructions in NLC, typical features also include the following: spatial object-robot relations indicated by human hand directions, 62 temporal robot-speech-and-head-orientation dependencies measured by computer vision systems, 61 object visual cues detected by cameras, 63,64 and robot sensorimotor behaviors monitored by both motion systems and computer vision systems. 34 Supported by rich information from these features, typical problems tackled in NLC include real-time communication, context-sensitive cooperation (sensor-speech alignment), machine-executable task plan generation, and implicit human request interpretation. Typical algorithms used for constructing multimodality models include the hidden Markov model (HMM) for modeling hidden probabilistic relations among interpreted linguistic features, 63,65 the Bayesian network (BN) for modeling probabilistic transitions among task-execution steps, 66–68 and first-order logic for modeling semantic constraints among interpreted linguistic features. 69,70 These algorithms integrate different modalities with appropriate contribution distributions and extract contributive feature patterns among modalities. Multimodality models have three potential advantages in understanding human NL instructions. First, by exploring multimodal information sources, rich information can be extracted for accurate NL instruction understanding. Second, information in one modality can be compensated by information learned from other modalities for better NL disambiguation. Third, the consistency of multimodal information enables mutual confirmation among knowledge from multiple modalities, so that reliable NL command understanding can be achieved.

Supported by these advantages, multimodality models have the potential to understand complex plans and various users and to perform practical NL instruction understanding in real-world NLC situations.
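One simple way to picture how information in one modality compensates for ambiguity in another is a weighted fusion of per-modality scores. The sketch below is only an assumption-laden illustration: the scores, the weights, the candidate names, and the log-linear fusion rule are all hypothetical and are not the specific algorithms (HMM, BN, first-order logic) used in the cited works.

```python
import math

# Hypothetical per-modality scores for the instruction "hand me the wrench"
# when two wrenches are on the table.
SCORES = {
    "speech":  {"wrench_A": 0.5, "wrench_B": 0.5},   # words alone are ambiguous
    "gesture": {"wrench_A": 0.8, "wrench_B": 0.2},   # pointing favors A
    "vision":  {"wrench_A": 0.6, "wrench_B": 0.4},   # A lies in the human's view
}

# Hypothetical contribution weight of each modality.
WEIGHTS = {"speech": 1.0, "gesture": 1.0, "vision": 0.5}


def fuse(scores, weights):
    """Weighted log-linear fusion of modality scores, normalized to sum to 1."""
    candidates = list(next(iter(scores.values())))
    log_score = {
        c: sum(weights[m] * math.log(scores[m][c]) for m in scores)
        for c in candidates
    }
    z = sum(math.exp(v) for v in log_score.values())
    return {c: math.exp(v) / z for c, v in log_score.items()}


fused = fuse(SCORES, WEIGHTS)
print(max(fused, key=fused.get))
```

Here the speech modality cannot separate the two candidates, while gesture and vision break the tie. Choosing the weights corresponds to the contribution-distribution problem discussed under open problems.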

Model comparison

Literal models, which use basic linguistic features taken directly from human NL instructions, provide a shallow, literal-level understanding. Interpreted models, which use multimodality features interpreted from human NL instructions, provide a comprehensive, connotation-level understanding. Each has unique advantages and is therefore suitable for different application scenarios. Literal models are good at scenarios with simple procedures and clear work assignments, such as robot arm control and robot pose control. Interpreted models are good at scenarios that involve daily common sense, human cognitive logic, and rich domain information, such as object-physical-property-assisted object searching, intuitive machine-executable plan generation, and vision–verbal–motion-supported object delivery. From literal models to interpreted models, robots have become more closely integrated with humans both physically and mentally. This integration enables a robot to accurately understand both human requests and practical environments, improving the effectiveness and naturalness of NLC.

Open problems

Although robots using grammar models have an initial capability of understanding human NL instructions during cooperation, the drawback is that the feature correlations needed for understanding must be exhaustively listed, and it is difficult to summarize all the grammar rules that are likely to be encountered. Compared with grammar models, association models give a robot more cooperation-related knowledge by exploiting associations among literal features. Even though the association model can interpret abstract linguistic features into detailed execution plans, it still suffers from incorrect associations. These open problems decrease the accuracy of NL instruction understanding and, in turn, decrease robot adaptability.

Although interpreted models are capable of comprehensively understanding human NL instructions by considering practical environment conditions, several problems remain. First, it is difficult to combine different types of modalities, such as motion, speech, and visual cues, in an appropriate manner that reveals the practical contribution distribution of the different modalities. Second, it is difficult to extract contributive features for describing both the distinctive and the common aspects of one modality in understanding NL instructions. Third, the overfitting problem still exists when using multimodality information to understand NL instructions. Moreover, NL instruction understanding based on different modalities can be mutually conflicting, which hinders the practical implementation of multimodality models. Model details are presented in Table 1.

Summary of NL instruction understanding methods.

NL: natural language.

NL-based execution plan generation

With task knowledge extracted through NL instruction understanding, it is critical to use this knowledge to plan robot executions in NLC. Models for NL-based execution plan generation (“generation models” for short) are developed to formulate robot execution plans, theoretically supporting robots in cooperating with humans in appropriate manners. In these models, previously learned piecemeal knowledge is organized with different algorithm structures. Different algorithms give the models different cooperation manners under various human–robot cooperation scenarios. For example, dynamic models supported by HMM enable real-time NL understanding and execution, while static models supported by naive Bayes (NB) enable the exploration of spatial human–robot relations. During plan generation, correlations among NLC-related knowledge, such as execution steps, step transitions, actions, tools, and locations, as well as their temporal, spatial, and logic relations, are defined. Regarding reasoning mechanisms, generation models have three main types. Probabilistic models: To give robots an associative cooperation capability, in which a likely plan is inferred and appropriate tools and actions are recommended, probabilistic models were developed based on probabilistic dependencies, as shown in Figure 6. Logic models: To give robots a logical reasoning capability, in which the internal logic among execution procedures is followed, logic models were developed based on ontology and first-order logic, as shown in Figure 7. Cognitive models: To give robots a cognitive thinking capability, in which plans are intuitively made and adjusted, cognitive models were developed based on weighted logic, as shown in Figure 8.

Figure 6. Typical probabilistic models. (a) Takano’s 71 HMM model, in which the potential execution sequences of an NLC task are modeled by hidden Markov states. (b) Salvi et al.’s 72 naive Bayes model, in which the observations “object size, object shape” and their conditional correlations, such as “size-big, shape-ball,…,” are combined to form joint-probability correlations, such as “object-size-shape,….” NLC: natural-language-facilitated human–robot cooperation; NL: natural language.

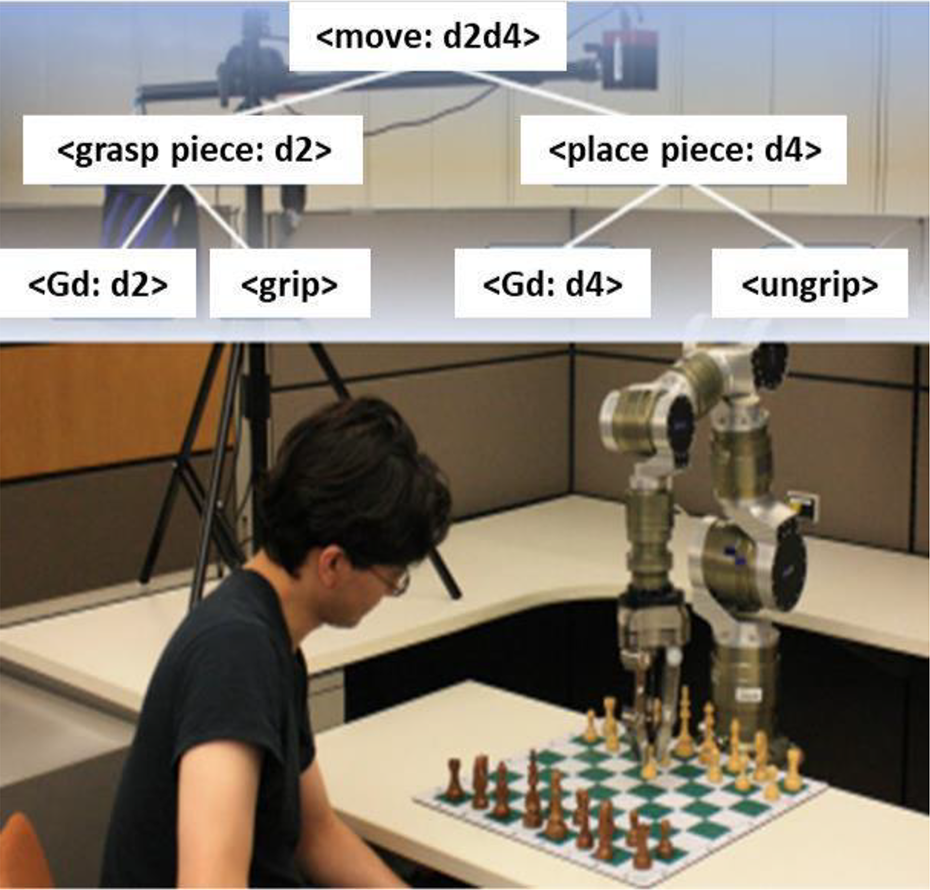

Figure 7. In the study by Dantam and Stilman, 38 hard logic relations, such as “move = (grasp, place),…,” were defined to control robot motion in playing chess with a human.

Figure 8. In the cognitive model, 73 a human’s cognitive process in decision-making was simulated by execution logic formulas with different influence weights, so that important formulas with larger weights could be emphasized and trivial formulas with smaller weights could be ignored. With this soft-logic approach, flexible cooperation between a human and a robot could be conducted.

Probabilistic model

Joint-probabilistic BN methods

To enable a robot to plan cooperation based on various observations, joint-probabilistic BN methods were developed. By using a single joint probability $p(x, y)$, a robot can use the probabilistic association between a human NL instruction $y$, such as “move,” and one execution parameter $x$, such as the object “ball,” to plan a simple cooperation such as the object placement “move ball.” 72 Typical joint-probability associations in NLC include activity-object associations, such as “drink-cup,” 51 activity-environment associations, such as “drink-hot day,” 74 and action-sensor associations. 75 During the generation of cooperation strategies, a single joint-probabilistic BN association is used as independent evidence to describe one semantic aspect of a task. When multiple joint-probabilistic associations $\prod(\cdot,\cdot)$ are used, interpreted linguistic features of an NLC task are collected from various NL descriptions and sensor data to describe relatively complex plans. Typical methods using multiple joint-probability associations include the Viterbi algorithm, 75 the NB algorithm, 74 and the Markov random field. 76 With these algorithms, the most complete plan described in human NL instructions is selected as the human-desired plan. The problems tackled with multi-joint-probabilistic BN models are as follows: modeling plans by extracting linguistic features, such as NL instruction patterns; 76,77 enriching cooperation details by aligning multiple types of sensor data, such as speech meaning, task execution statuses, and robot or human motion status; 39 making flexible plans by specifying verbally described tasks with appropriate execution details, such as execution actions and effects; 78,79 intuitively cooperating with a human by integrating current NL descriptions with previous execution experiences; 80 and accurately searching for tools by associating theoretical knowledge, such as tool identities, with practical real-world evidence, such as tools’ colors and placement locations. 81

One common characteristic of probabilistic models, such as NB, is that dependencies among task features are simplified so that the features are treated as fully or partially independent. 74 In practical situations, when a set of observations is made, the evidence involved in cooperation, such as speech, object, context, and action, is usually not mutually independent. 82 For task plan representation, this simplification brings both negative effects, such as undermining the plan representation accuracy, and positive effects, such as preventing overfitting in the plan-representation process. The common problem of multi-joint-probabilistic BN models is that temporal associations are ignored, limiting the implementation of real-time NLC.
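The independence simplification of NB described above can be made concrete with a small sketch that combines several pieces of evidence, such as “cup” and “hot day,” into a posterior over candidate plans. The plan names, priors, and likelihood values are hypothetical numbers chosen for illustration, not figures from the cited studies.

```python
import math

# Hypothetical priors p(plan) and likelihoods p(feature | plan).
PRIOR = {"serve_drink": 0.5, "clean_table": 0.5}
LIKELIHOOD = {
    "serve_drink": {"cup": 0.7, "hot_day": 0.6, "brush": 0.05},
    "clean_table": {"cup": 0.2, "hot_day": 0.3, "brush": 0.8},
}


def posterior(features):
    """Naive Bayes: p(plan | features) is proportional to
    p(plan) times the product of p(feature | plan), with features
    treated as conditionally independent given the plan."""
    log_joint = {
        plan: math.log(PRIOR[plan])
        + sum(math.log(LIKELIHOOD[plan][f]) for f in features)
        for plan in PRIOR
    }
    z = sum(math.exp(v) for v in log_joint.values())
    return {plan: math.exp(v) / z for plan, v in log_joint.items()}


print(posterior(["cup", "hot_day"]))
```

Because each feature contributes an independent factor, the model needs only one small likelihood table per plan, which is the overfitting-related benefit noted above; the cost is that correlated evidence is counted as if it were independent.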

DBN methods

To enable temporal knowledge association for real-time cooperation planning, the dynamic Bayesian network (DBN) was developed. With DBN, temporal dependencies $p(x_t \mid x_{t-1})$ are propagated between the current NLC-related requests $x_t$ and the previous object usages $x_{t-1}$. 83 Given that the final format of DBN is the joint probabilistic form $p(y, x_t, x_{t-1}, \ldots)$, DBN is still a joint-probabilistic model. A widely used DBN algorithm in NLC is the HMM algorithm, 84 which uses a Markov chain assumption to explore the hidden influence of previous task-related features on the current NLC status. The rationale of HMM in NLC is that human-desired executions, such as going to a position, grasping a tool, and lifting a robot hand, are decided by the previous cooperation status ($x_{t-1}$), such as the action sequence, and by the current cooperation status. These statuses include environmental conditions, task execution progress, and human NL instructions, as well as the working statuses of the human and the robot. HMM uses both observation probabilities (absolute probability $p(x)$) and transition probabilities (conditional probability $p(y \mid x)$) for modeling associations $p(x, y)$ among NLC-related knowledge. 71,84 With HMMs, the problems tackled mainly include real-time task assignment, 85 dynamic human-centered cooperation adjustment, 71,86 and accurate tool delivery by simultaneously fusing multi-view data, such as NL instructions, shoulder coordinates, shoulder–elbow 3-D angle data, and hand poses. 84,87 Limited by the Markov assumption, HMM is only capable of modeling shallow-level hidden correlations among NLC-related knowledge. Moreover, given that hidden states need to be explored for HMM modeling, a large amount of training data is needed, limiting HMM implementations in unstructured scenarios with limited training data availability.
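To illustrate how an HMM links a sequence of NL words to hidden execution steps, the following minimal sketch decodes the most likely step sequence with the Viterbi algorithm. The states, the observation vocabulary, and all probability values are assumptions made for this example; in the cited systems these parameters are learned from data.

```python
# Hypothetical hidden execution steps and model parameters.
STATES = ["go", "grasp", "lift"]
START = {"go": 0.8, "grasp": 0.1, "lift": 0.1}
TRANS = {  # p(next step | current step)
    "go":    {"go": 0.2, "grasp": 0.7, "lift": 0.1},
    "grasp": {"go": 0.1, "grasp": 0.2, "lift": 0.7},
    "lift":  {"go": 0.3, "grasp": 0.3, "lift": 0.4},
}
EMIT = {  # p(observed NL word | step)
    "go":    {"move": 0.7, "take": 0.2, "raise": 0.1},
    "grasp": {"move": 0.1, "take": 0.8, "raise": 0.1},
    "lift":  {"move": 0.1, "take": 0.1, "raise": 0.8},
}


def viterbi(observations):
    """Return the most likely hidden step sequence and its joint probability."""
    # best[s] = (probability of the best path ending in state s, that path)
    best = {s: (START[s] * EMIT[s][observations[0]], [s]) for s in STATES}
    for obs in observations[1:]:
        step = {}
        for s in STATES:
            prev = max(STATES, key=lambda p: best[p][0] * TRANS[p][s])
            prob = best[prev][0] * TRANS[prev][s] * EMIT[s][obs]
            step[s] = (prob, best[prev][1] + [s])
        best = step
    prob, path = max(best.values(), key=lambda item: item[0])
    return path, prob


print(viterbi(["move", "take", "raise"]))
```

Each step depends only on the immediately preceding one, which is the Markov assumption that the paragraph identifies as limiting HMMs to shallow-level hidden correlations.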

Logic model

To support a robot with rational logical reasoning about cooperation strategies, rather than merely conducting exhaustive probabilistic inference from various NL-indicated evidence, logic models were developed. Logic models teach robots to use inviolable (hard) logic formulas to describe complex execution procedures that include multiple actions and statuses. These inviolable formulas are usually first-order logic formulas, such as “in possible worlds a kitchen is a region (∀w∀x(kitchen(w, x) → region(w, x))).” 88

The rationale behind logic models in NLC is that an NLC task is decomposed into sequential logic formulas, and the task is accomplished by satisfying them. In a logic model, the formulas are equally important, with no difference in their contribution to execution success. Logic relations, including tool usages, action sequences, and locations, are defined in the structure. Typical tackled problems include the following: autonomous robot navigation by using logic navigation sequences, such as going to a location “hallway” and then going to a new location “rest room”; 66,70 environment uncertainty modeling by summarizing potential executions, such as “ground atoms (Boolean random variables) eats (Dominik, Cereals), uses (Dominik, Bowl), eats (Michael, Cereals) and uses (Michael, Bowl)”; 89 robot action control by defining action-usage logic, such as “move (grasp piece(location, grip), place piece(location, ungrip))”; 66,90–92 autonomous failure analysis by looking up first-order logic representations to detect missing knowledge, such as “tool brush, action: sweep”; 4,92 and NL-based robot programming by using a grammar language, such as point(object, arm-side), lookAt(object), and rotate(rot-dir, arm-side). 69

The drawback of logic models in modeling NLC tasks is that the logic relations defined in the model are hard constraints. If one logic formula is violated during practical execution, the whole logic structure becomes inapplicable, and the task execution fails. This drawback limits the models’ implementation scope and reduces a robot’s environment adaptability. Moreover, hard constraints are defined without differentiation, ignoring the relative importance of executions. Execution flexibility is therefore undermined, because critical executions cannot be emphasized and trivial executions cannot be ignored when NLC plan modifications are necessary.
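The hard-constraint behavior described above can be sketched as a set of rules that must all hold before an action is allowed. The rule names, the world-state keys, and the chess-like placement scenario are hypothetical and serve only to show that a single violated formula blocks the whole plan.

```python
# Hypothetical hard rules: each maps a name to a predicate over the world state.
RULES = {
    "piece_is_grasped": lambda w: w["gripper"] == "closed",
    "target_is_free": lambda w: not w["target_occupied"],
    "arm_at_target": lambda w: w["arm_location"] == w["target"],
}


def can_place(world):
    """Return (ok, violated_rules); the action is allowed only if every rule holds."""
    violated = [name for name, rule in RULES.items() if not rule(world)]
    return len(violated) == 0, violated


ready = {"gripper": "closed", "target_occupied": False,
         "arm_location": "e4", "target": "e4"}
blocked = dict(ready, target_occupied=True)

print(can_place(ready))
print(can_place(blocked))
```

The list of violated rules also hints at how failure analysis can look up which piece of knowledge is missing, while the all-or-nothing result shows why such models adapt poorly when conditions change.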

Cognitive model

Neuroscience research 93 and psychology research 94 have shown that human cognitive planning is not a sensorimotor transformation but goal-based cognitive thinking. That is, cognitive thinking about cooperation does not rely on specific objects or specific executions; it relies only on goal realization. Based on this theory, another category of generation models is summarized as the cognitive model. Human-like robot cognitive planning in NLC is reflected in flexibly changing execution plans (different procedures), adjusting execution orders (same procedures, different orders), removing some less important execution steps (same procedures, fewer steps), and adding more critical execution procedures (similar procedures, similar orders).

Cognitive models

To develop human-like robot cognitive planning for robust NLC, cognitive models are developed by using soft logic, which is defined by both logic formulas and their weighted importance. A typical cognitive model is the Markov logic network (MLN). An MLN represents an NLC task in a form such as “0.3Drill(1) ^ 0.3TransitionFeasible(1, 2) ^ 0.3Clean(2) ⇒ 0.9Task(1, 2),” 4 imitating the human cognition process in task planning.

In this model, single execution steps and step transitions are defined by logic formulas, which can be grounded into different logic formulas by substituting real-world conditions. With this cognitive model, a flexible execution plan can be generated by omitting non-contributing and weakly contributing logic formulas and retaining strongly contributing ones. Unlike the hard constraints in logic models, the constraints (logic formulas) in an MLN are soft: when human NL instructions are only partially obeyed by a robot, the task can still be successfully executed. Typical tackled problems include using MLN to generate a flexible machine-executable plan from human NL instructions for autonomous industrial task execution, 73 and NL-based cooperation in uncertain environments by using MLN to meet constraints from both the robot’s knowledge availability (human-NL-instructed knowledge) and the real world’s knowledge requirements (practical situation conditions). 89,95

The advantage of using cognitive models in NLC is that soft logic is relatively close to the human cognitive process reflected in human NL instructions during cooperation. It helps a robot cooperate intuitively in unfamiliar situations by modifying or replacing plan details, such as tool or action usages, which improves the robot’s cognition level and enhances its environment adaptability. The major drawback is that MLN still differs from human cognitive processes, which consider logic conditions at a deeper level to enable plan modification, new plan making, and failure analysis. Logic parameters for analyzing real-world conditions are still insufficient to imitate logic relations in the human mind, thereby limiting robots’ performance in adapting to users and environments.
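The role of soft constraints can be sketched in a few lines of Python. This sketch assumes the standard log-linear form of an MLN, in which the unnormalized score of a candidate is the exponential of the summed weights of the formulas it satisfies. The weights reuse the illustrative 0.3 values from the example above, the candidate plans are hypothetical, and normalization is done only over the listed candidates rather than over all possible worlds.

```python
import math
from typing import Dict, List, Tuple

# (formula name, weight): weights are illustrative, not learned values.
WEIGHTED_FORMULAS: List[Tuple[str, float]] = [
    ("Drill(1)", 0.3),
    ("TransitionFeasible(1,2)", 0.3),
    ("Clean(2)", 0.3),
]


def score(satisfied: Dict[str, bool]) -> float:
    """Unnormalized score: exp of the summed weights of satisfied formulas."""
    total = sum(w for name, w in WEIGHTED_FORMULAS if satisfied.get(name, False))
    return math.exp(total)


def rank_plans(plans: Dict[str, Dict[str, bool]]) -> List[Tuple[str, float]]:
    """Normalize scores over the candidate plans and sort best first."""
    raw = {label: score(sat) for label, sat in plans.items()}
    z = sum(raw.values())
    return sorted(((label, s / z) for label, s in raw.items()),
                  key=lambda item: item[1], reverse=True)


candidates = {
    "full": {"Drill(1)": True, "TransitionFeasible(1,2)": True, "Clean(2)": True},
    "skip_clean": {"Drill(1)": True, "TransitionFeasible(1,2)": True},
    "drill_only": {"Drill(1)": True},
}

for label, prob in rank_plans(candidates):
    print(f"{label}: {prob:.3f}")
```

A plan that violates a formula receives a lower score but never a zero score, so it remains a usable fallback. This is the contrast with the hard constraints of logic models.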

Model comparison

The probabilistic model usually works in an end-to-end manner, reasoning cooperation strategies directly from observations and ignoring internal correlations among execution procedures. A logic model works in a step-by-step manner, in which ontology correlations and temporal or spatial correlations among execution procedures are explored, enabling process reasoning for intuitive planning. The cognitive model also works in a step-by-step manner. In addition to logic correlations, it explores the relative influences of execution procedures, enabling flexible plan adjustment. The probabilistic model suits scenarios with rich evidence and a single objective goal, such as tool delivery and navigation path selection. The logic model suits scenarios with either poor evidence or multiple objective goals, such as assembly planning and cup grasping planning. The cognitive model suits scenarios with rich or poor evidence and multiple subjective goals, such as human emotion-guided social interaction and human preference-based object assembly.

Open problems

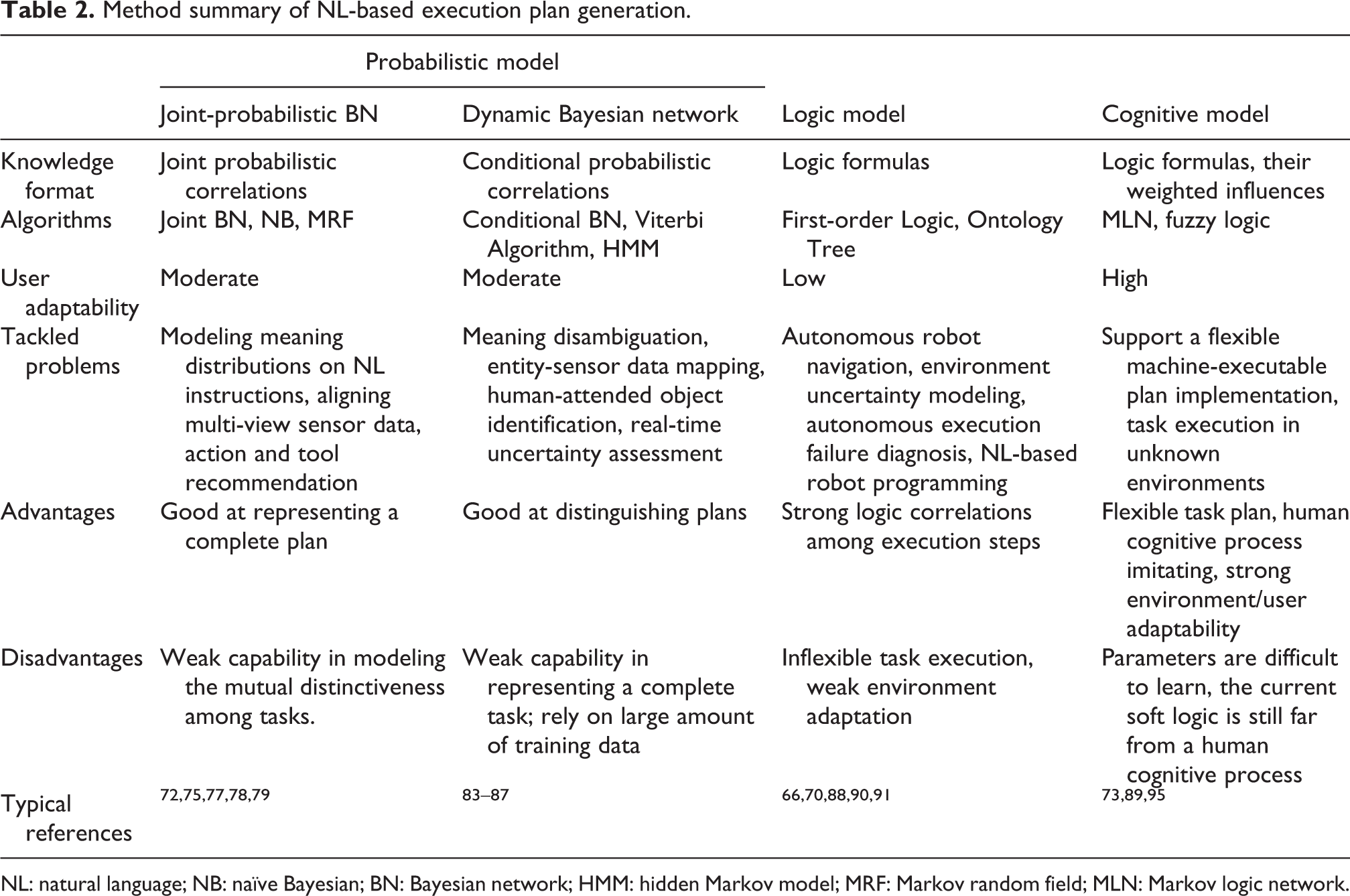

Probabilistic models lack exploration of indirect human cognitive processes in NLC, limiting the naturalness of robotic executions. Logic models are inflexible and incapable of simulating a human’s intuitive planning in real-world environments. The cognitive model is close to a human’s cognitive process in simulating flexible decision-making. However, cognitive models still suffer from two types of shortcomings. The first is that a simulated cognitive process is still not a true cognitive process, because the fundamental theory of cognitive process modeling lacks sufficient support for human-like task execution. 89 The second is the difficulty of cognitive model learning: different individuals have different cognitive processes, making it difficult to learn a general reasoning model. Model details are presented in Table 2.

Method summary of NL-based execution plan generation.

NL: natural language; NB: naïve Bayesian; BN: Bayesian network; HMM: hidden Markov model; MRF: Markov random field; MLN: Markov logic network.

Knowledge-world mapping

Once a robot understands NL and has execution plans, it is critical for it to use this knowledge in practical cooperation scenarios. Knowledge-world mapping methods are developed to enable intuitive human–robot cooperation in real-world situations. The general process of knowledge-world mapping is shown in Figure 9. Considering the different implementation problems, knowledge-world mapping methods include two main types: theoretical knowledge grounding and knowledge gap filling. Theoretical knowledge grounding methods accurately map learned knowledge items, such as objects and spatial or temporal logic relations, onto the corresponding objects and relations in real-world scenarios. Gap filling methods detect and recommend both missing knowledge, which is needed in real-world situations but is not covered by theoretical execution plans, and real-world inconsistent knowledge, which is provided by a human but has no counterpart in the practical real-world scenario.

Typical methods for theoretical knowledge grounding. In (a), Takano and Nakamura’s 11 predefined motions, such as “avoiding, bowing, carrying,…,” were directly associated with their corresponding symbolic words. In (b), Hemachandra et al.’s 96 special features, such as “kitchen location, lab locations,…,” were considered to identify human-desired paths.

Theoretical knowledge grounding

To accurately map theoretical knowledge to practical things, knowledge grounding methods are developed. In these methods, a knowledge item is defined by properties, such as visual properties “object color and shape” captured by RGB cameras, motion properties “action speed” captured by motion tracking systems, and execution properties “tool usage and location” captured by RFID. Different from the direct symbol mapping method, which has an element-mapping manner, the general property mapping method has a structural mapping manner. The rationale behind these methods is that a knowledge item can be successfully grounded into the real world by mapping its properties. The properties were collected by using methods such as “semantic similarity measurement,” 97 which can establish correlations between an object and its corresponding properties. One typical mapping method that uses general property mapping is the semantic map. 96,98

Theoretical indoor entities such as rooms and objects are identified by meaningful real-world properties, such as location, color, point cloud, spatial relation “parallel,” and neighbor entities, constructing a semantic map with both objective locations and semantic interpretations “wall, ceiling, wall, floor.” Visual properties in the real world are usually detected by RGBD cameras, while spatial relations are detected by laser sensors and motion tracking systems. By identifying these properties in the real world, an indoor entity is identified, enabling accurate robotic navigation in real-world NLC. Other typical mapping methods include the following.

Object searching by using NL instructions (detected by microphones) as well as visual properties such as object color, size, and shape (detected by motion tracking systems and cameras). 99

Executing NL-instructed motion plans, such as “pick up the tire pallet,” by focusing on realizing actions “drive, insert, raise, drive, set.” 100

Identifying human-desired cooperation places, such as “lounge, lab, conference room,” by checking spatial-semantic distributions of landmarks, such as “hallway, gym,…” 101

With these mapping methods, knowledge can be mapped into the real world in a flexible manner, in which only part of an item’s properties needs to be matched to ground a theoretical item into a real-world thing. This improves a robot’s adaptability toward users and environments. The limitation is that these mapping methods still rely on predefinitions to give a robot knowledge, reducing the intuitiveness of human–robot cooperation.
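The idea that only part of an item’s properties must match can be sketched as follows. The property names, the scene contents, and the 0.6 threshold are all assumptions made for illustration; the reviewed systems use richer perception and similarity measures.

```python
from typing import Dict, Optional, Tuple

Properties = Dict[str, str]


def match_ratio(item: Properties, observed: Properties) -> float:
    """Fraction of the item's defined properties that the observation confirms."""
    if not item:
        return 0.0
    hits = sum(1 for key, value in item.items() if observed.get(key) == value)
    return hits / len(item)


def ground(item: Properties,
           scene: Dict[str, Properties],
           threshold: float = 0.6) -> Optional[Tuple[str, float]]:
    """Return the best-matching scene object if it clears the threshold."""
    best: Optional[Tuple[str, float]] = None
    for object_id, observed in scene.items():
        ratio = match_ratio(item, observed)
        if best is None or ratio > best[1]:
            best = (object_id, ratio)
    if best is not None and best[1] >= threshold:
        return best
    return None


# Theoretical item as described in an instruction (hypothetical values).
cup = {"color": "red", "shape": "cylinder", "location": "table"}

# Perceived scene objects (hypothetical values).
scene = {
    "obj_1": {"color": "red", "shape": "cylinder", "location": "shelf"},
    "obj_2": {"color": "blue", "shape": "box", "location": "table"},
}

print(ground(cup, scene))
```

Here `obj_1` is grounded as the cup even though its location differs, because two of the three properties match. Raising the threshold makes grounding stricter and can leave the item ungrounded.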

Knowledge gap filling

A theoretical execution plan defines an ideal real-world situation. Because practical situations contain unpredicted aspects, even when all defined knowledge has been accurately mapped into the real world, it remains challenging to ensure the success of NLC by providing all the knowledge a practical situation needs. In real-world situations especially, human users and environment conditions vary, giving rise to knowledge gaps: knowledge that is required by the real-world situation but is missing from a robot’s knowledge database.

To ensure the success of a robot’s execution, knowledge gap filling methods are developed to fill in these knowledge gaps. There are three main types of knowledge gaps: (1) environment gaps, which are constraints such as tool availability and space or location limitations imposed by unfamiliar environments 102 ; (2) robot gaps, which are constraints such as a robot’s physical structure strength, capable actions, and operation precision 103 ; (3) user gaps, which are missing information caused by abstract, ambiguous, or incomplete human NL instructions. 104,105 Filling these knowledge gaps enhances a robot’s capability to adapt to dynamic environments and various tasks or users. Knowledge gap filling is challenging in that it is difficult to make a robot aware of its knowledge shortage in specific situations, and it is difficult to make a robot understand how missing knowledge should be compensated for successful task executions.

The first step of gap filling is gap detection. Gap detection methods mainly include the following.

Hierarchical knowledge structure checking, which detects knowledge gaps by checking real-world-available knowledge from top-level goals to low-level NLC execution parameters defined in a hierarchical knowledge structure. 41,103

Knowledge-applicability assessment, which detects knowledge gaps by checking the similarities between theoretical scenarios and real-world scenarios. 34,103

Performance-triggered knowledge gap estimation, which detects knowledge gaps by considering the final execution performances. 105,106

Hierarchical knowledge structure checking has the rationale that if desired knowledge defined in a knowledge structure is missing in real-world situations, then knowledge gaps exist. Knowledge-applicability assessment has the rationale that if the NLC situation is not similar to the previously trained situations, then knowledge gaps exist. Performance-triggered knowledge gap estimation has the rationale that if the final NLC performance of a robot is not acceptable, then knowledge gaps exist. In this detection stage, the execution plan provides the reasoning mechanisms, while the real world provides practical things such as objects, locations, and human identities, and relations such as spatial and temporal relations, which are detected by perception systems.
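Of the three detection strategies, hierarchical knowledge structure checking is the most direct to sketch. The task tree, the item labels, and the perceived set below are hypothetical; the sketch only shows the top-down walk from a goal to the leaf items that must exist in the real world.

```python
from typing import Dict, List, Set

# Hypothetical hierarchy: each node lists the knowledge items it requires.
TASK_TREE: Dict[str, List[str]] = {
    "assemble_table": ["drill_holes", "install_screws"],
    "drill_holes": ["tool:drill", "location:table_leg"],
    "install_screws": ["tool:screwdriver", "part:screw"],
}


def detect_gaps(goal: str, available: Set[str]) -> List[str]:
    """Walk from the goal down to leaf items and collect the missing ones."""
    gaps: List[str] = []
    stack = [goal]
    while stack:
        node = stack.pop()
        children = TASK_TREE.get(node)
        if children is None:
            # Leaf item: it must be available in the real world.
            if node not in available:
                gaps.append(node)
        else:
            # Push in reverse so children are visited in their listed order.
            stack.extend(reversed(children))
    return gaps


perceived = {"tool:drill", "part:screw", "location:table_leg"}
print(detect_gaps("assemble_table", perceived))  # ['tool:screwdriver']
```

The returned list is what a subsequent gap filling step would try to resolve.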

The second step of gap filling is the filling itself. Gap filling methods mainly include the following.

Using existing alternative knowledge such as “brush” in the robot knowledge base to replace inappropriate knowledge such as “vacuum cleaner” in NLC tasks such as “clean a surface.” 4,106

Using general commonsense knowledge “drilling action needs driller” in a robot database to satisfy the need for a specific type of knowledge such as “tool for drilling a hole in the install a screw task.” 103,106

Asking for knowledge input from human users by proactively asking questions such as “where is the table leg.” 105,107,108

Autonomously learning from the Internet for recognizing human daily intentions, such as “drink water, wash dishware.” 107,108

In the gap filling stage, the execution plan describes the needed knowledge items, while the real world provides practical objects as well as robot performance monitoring.
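The first three filling strategies listed above can be chained as a simple fallback, sketched below. The two small knowledge tables and the `ask_human` callback are hypothetical stand-ins for a robot knowledge base and a dialogue interface; learning from the Internet is left out because it depends on external resources.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical knowledge bases, for illustration only.
ALTERNATIVES: Dict[str, List[str]] = {"vacuum cleaner": ["brush", "cloth"]}
COMMONSENSE: Dict[str, str] = {"drilling": "driller"}


def fill_tool_gap(action: str,
                  requested: Optional[str],
                  available: List[str],
                  ask_human: Callable[[str], Optional[str]]) -> Optional[str]:
    """Try substitution, then commonsense lookup, then a question to the user."""
    if requested in available:
        return requested
    # 1) Replace an unavailable tool with a known alternative.
    for alt in ALTERNATIVES.get(requested or "", []):
        if alt in available:
            return alt
    # 2) Fall back to general commonsense about the action.
    default = COMMONSENSE.get(action)
    if default in available:
        return default
    # 3) Ask the human as a last resort.
    return ask_human(f"Which tool should I use for {action}?")


print(fill_tool_gap("cleaning", "vacuum cleaner", ["brush"], lambda q: None))  # brush
print(fill_tool_gap("drilling", None, ["driller"], lambda q: None))            # driller
```

Ordering the strategies from cheapest to most intrusive is a design choice of this sketch: the human is asked only when the robot’s own knowledge cannot close the gap.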

Model comparison

Knowledge grounding and knowledge gap filling are two critical steps for a successful mapping between NL-instructed theoretical knowledge and real-world cooperation situations. For knowledge grounding models, the objective is to strictly map NL-instructed objects and logic relations onto real-world conditions. This is a necessary step for all NLC application scenarios, such as human-like action learning and indoor and outdoor cooperative navigation. For knowledge gap filling models, the objective is to detect and repair missing or incorrect knowledge in human NL instructions. This is necessary only when human NL instructions cannot ensure successful NLC under the given real-world conditions. Typical scenarios include daily assistance such as serving a drink, where information such as the correct types of “drink” and “vessel” and the default places for drink delivery is missing, and cooperative surface processing, where execution procedures are incorrect and tools are missing.

Open problems

A typical problem of theoretical knowledge grounding is the non-executable-instruction problem. Human NL-instructed knowledge is usually (1) ambiguous, in that NL-mentioned objects are too vague to be identified in the real world; (2) abstract, in that high-level cooperation strategies are difficult to interpret into low-level execution details; (3) information-incomplete, in that important cooperation information such as tool usages, action selections, and working locations is partially omitted; and (4) real-world inconsistent, in that human NL-instructed knowledge is not available in the real world. These problems limit the practical execution of human NL-instructed plans. One type of cause includes intrinsic NL characteristics, such as omitting, referring, and simplifying, as well as human speaking habits, such as different sentence organizations and phrase usages. Another type of cause is the lack of environment understanding. For example, if object-related information such as availability, location, and distance to a robot or human is ignored, it is difficult for a robot to infer which object a human user needs. 51

For knowledge gap filling, when a robot queries knowledge from either a human or open knowledge databases such as OpenCyc, 109 scalability is limited. For a specific user or a specific open knowledge database, the available content is insufficient to satisfy general knowledge needs across various NLC executions. The time and labor costs are also high, further limiting knowledge support for NLC. Model details are presented in Table 3.

Summary of knowledge-world mapping methods.

Discussion

DL for better command understanding

Nowadays, NLP is undergoing a deep learning (DL) revolution to create sophisticated models for semantic analysis. Potential benefits of using advanced DL models for NLC include the following.

Word embedding methods add semantic correlations, such as “cats and dogs are animals,” to otherwise unrelated words, such as “cat, dog.” 110 In future NLC research, embedding methods could be used to introduce extra task-specific meanings, such as general common sense “drilling needs the tool driller” and safety rules “stay away from hot surface and sharp tools,” endowing robots with better command understanding and awareness of environment limitations and human requirements.

Sequence-to-sequence language models, such as those based on long short-term memory (LSTM), sequentially output meaning based on continuously input text. 109,111 In future NLC research, sequence-to-sequence models could enable robots to follow real-time instructions for timely executions and modifications, by aligning temporal verbal instructions with task-related knowledge, such as “action sequence and location assignments.”

Attention-based NL understanding models, such as the recurrent neural network (RNN) encoder–decoder, emphasize relatively important words by increasing the weights of important expressions. 112 For example, in translating the sentence “that does not mean that we want to bring an end to subsidization,” the keyword “subsidization,” which carries key information, is emphasized. 113 In future NLC research, attention models could help a robot focus on human-desired executions by analyzing verbal attention.
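The weighting idea behind attention can be shown with a small, self-contained sketch. It assumes the common formulation in which relevance scores are turned into weights with a softmax and then used to average word vectors. The scores and two-dimensional vectors below are made up for illustration; in a trained model both would be learned.

```python
import math
from typing import List


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of relevance scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(scores: List[float], values: List[List[float]]) -> List[float]:
    """Weighted sum of word vectors, using softmax weights."""
    weights = softmax(scores)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]


words = ["bring", "the", "red", "cup"]
scores = [1.0, 0.1, 2.0, 3.0]  # made-up relevance scores
vectors = [[1.0, 0.0], [0.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # made-up embeddings

weights = softmax(scores)
top_word = words[weights.index(max(weights))]
context = attend(scores, vectors)

print(top_word)  # cup
print([round(w, 3) for w in weights])
```

The word with the highest score dominates the resulting context vector, which is how such a model could make a robot focus on the instructed object rather than on function words.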

Cost reduction for knowledge learning

Cost reduction in knowledge collection is critical for intuitive NLC. On the one hand, a large amount of reliable knowledge is needed to understand human NL instructions, represent tasks, and fill in knowledge gaps. On the other hand, time, economic cost, and labor investments need to be reduced. To solve this problem, two trends in developing knowledge-scaling-up methods have recently appeared: existing-knowledge exploitation and new-knowledge exploration. In existing-knowledge exploitation, existing knowledge is interpreted and abstracted into general knowledge, thereby increasing knowledge interchangeability. Knowledge for specific situations, such as “use cup and spoon for preparing coffee,” could be used for general situations, such as “preparing drink.” 74 In new-knowledge exploration, new knowledge is collected by proactively asking humans and by autonomously retrieving it from the World Wide Web, 114 books, 115 operation logs, 116 and videos. 117

NLC system personalization

When a robot cooperates with a specific human for a long time, personalization of the robot becomes critical. Personalization means not only defining individualized knowledge so that a robot can adapt to a specific user, but also designing a knowledge-individualization method so that a robot can autonomously adapt to different users. 102 Therefore, one future research direction in NLC is to develop knowledge-personalization methods that consider both execution preferences and social norms, supporting long-term NLC personalization.

Safety consideration in NLC system design

When robots are deployed in human-involved environments, it is necessary to follow safety standards 118,119 to detail and minimize safety hazards before actual implementation. The objective of enforcing safety standards in NLC system design is to protect humans’ physical safety and psychological comfort. Given the unique aspects of NLC systems in communication and interaction, the safety considerations typically fall into three types. First, verbally instructed actions that endanger human safety should be rejected or carefully assessed by the robotic system. 120 This requires NLC systems to have safety-related common sense for understanding and predicting safety issues. Second, verbal abuse, whether from a human to a robot or from a robot to a human, should be avoided, because verbal abuse makes a human psychologically uncomfortable and ultimately affects the performance of NLC systems. 121,122 Ensuring psychological comfort in NLC systems requires research on human behaviors and human–machine trust. Third, it is necessary to enforce rigorous risk assessment and controlled safety verification before releasing NLC systems into the market, to make sure they are safe and helpful without causing safety issues for humans. 123

Conclusion

This article reviewed state-of-the-art methodologies for realizing NLC. With in-depth analysis of application scenarios, method rationales, method formulations, and current challenges, research on using NL to push forward the limits of human–robot cooperation was summarized from a high-level perspective. This review mainly categorized a typical NLC process into three steps: NL instruction understanding, NL-based execution plan generation, and knowledge-world mapping. With these three steps, a robot can communicate with a human, reason about human NL instructions, and practically provide human-desired cooperation according to those instructions.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.