Abstract

In this article, the cognitive vision module of an autonomous flying robot is studied. The problem of the scene understanding by the robot, which flies on the high altitude, is analyzed. In such conditions, the examined scene can be regarded as two-dimensional. It is assumed that the robot operates in the urban-type environment. The scene representation is stored in the neighborhood graph that collects data about the objects locations, shapes, and their spatial relations. The fragments of the scene are understood by the robot in the context of neighborhoods of the objects. It is shown that such information can be effectively used for recognition of the object, while many objects of similar shape exist in the scene. In the proposed recognition process, not only the information about the shape of the object is utilized but also the spatial relations with other objects in its close neighborhood are examined.

Introduction

Autonomous mobile robots have to be equipped with a system of sensors connected with a cognitive module that processes the signals obtained from the sensors. In such a context robot, vision systems have been studied for years—the research by Bonin-Font et al. 1 and Tadeusiewicz 2 can be put as examples. Such systems have special meaning for autonomous robots, including unmanned aerial vehicles (UAVs). In an unknown environment, cognitive abilities such as the object recognition, 3,4 the scene analysis, 5 –9 and its understanding are indispensable (see the work done by Bielecka et al. 10,11 and Tadeusiewicz 12,13 in the context of medical image understanding). Therefore, systems of pattern recognition and scene analysis based on vision systems have been studied intensively in robotics in the context of scene understanding and the positioning of a robot. 14 –18

Most of the approaches presented in these studies have specific specializations which force utilization of different algorithms and techniques. Comparing the method presented in this article to those discussed in the studies by Siagan and Itti 17 and Sinopoli et al. 18 , it can be used to model not only the overall shape of the scene or its salient features but to create, store, and process the models of specific artifacts located in the examined scene—like buildings, cars, and so on. Modeling and keeping the information about the shapes of objects discovered in the scene creates the possibilities for precise close-to-object navigation, essential for inspection tasks. The advantage over most of the methods described in the literature by Filliat and Mayer 14 Yershova et al., 15 and Muratet et al. 16 is the utilization of graph data structure to store all the information about the scene that is collected by the UAV during the operation. Such approach has various benefits. For example, the scene model can be represented in human readable way for further analysis as well as for easy analysis with graph algorithms (like subgraph search presented in this article used in localization algorithm).

In this article, the module of two-dimensional scene analysis, which can be applied for use by a UAV, is proposed. The presented studies are a continuation of the investigations described in the studies by Bielecki et al. 19 –21 In particular, the detailed description of the state of the art and motivations can be found in the study by Bielecki et al. 20

The problem formulation

Let us recall briefly the specification of the problem formulation—see the study by Bielecki et al. 20 for details.

Let us consider a UAV that operates in an urban environment. The analysis of the mission scene—localization of a specific building and studying the properties of the scene—is one of the agent’s tasks. Let us assume that the agent’s memory contains the map, which is given a priori. Furthermore, let the robot be equipped with the mobile camera that is able to point to the ground in order to take the pictures of the surface above which it operates. The map that the robot carries in memory presents the buildings extracted from the background. By referring to the given two-dimensional map and the two-dimensional image from its sensors, the agent compares the extracted shapes of the buildings and orients itself in the environment. Such task is fundamental for a UAV in order to conduct safe and robust navigation.

22

The method of the objects recognition has to be rotation and scale invariant as the pictures of the ground are taken from different altitudes and various directions of the robot’s flight. Then, the robot descends in order to explore the environment directly using a camera or other types of sensors, like a single-point directional rangefinder or an light detection and ranging (LIDAR) system. The close exploration of the environment allows the agent to create its three-dimensional representation. As a result, the desired object can be located, and the robot gets better understanding of the surrounding area. Thus, the studies can be divided in the following stages: Creation of a single, two-dimensional object representation based on the camera image taken from high altitude. Creation of the overall two-dimensional scene representation based on the camera image. This step allows the robot to recognize the recorded scene as a fragment of the arbitrarily given map. The single desired object, marked on the map, should be recognized. Obtaining a three-dimensional representation of the scene fragments based on the calculated two-dimensional scene representation. The two-dimensional representation allows the agent to locate the buildings. When their localization is known, the robot can investigate individual objects in order to create their three-dimensional representation. Understanding the three-dimensional scene.

The second point from the list is the topic of this article. While such scenario can also be related to the search for landing position which is widely studied, 23,24 the investigation presented in this article focuses more on universal method of shape recognition. It allows to find the particular shape among multiple similar shapes in the scene. This is accomplished by incorporating idea of spatial relationship between objects into the recognition process.

In the article by Bielecki et al., 20 the authors focused on single object analysis, and the two-dimensional scene analysis was addressed only initially. This article is a continuation of this research. Here, the problem of scene analysis is described in detail.

Algorithm

The scene analysis in this article is aimed to develop a model of area that enables the robot to, in later steps, conduct search of selected object in real environment. It is important to note that when coordinates are mentioned in terms of object or scene representation, the authors mean the Euclidean coordinates of object with respect to the frame of image taken as an input for the algorithms. It means that point (0,0) of the Euclidean space is in the bottom left corner of the input image.

The following scenario is presented to give the notion of how the proposed algorithms work and what is their sample application: The flying robot’s task is to find the building in real environment. A bitmap picture representing the scene photographed from high altitude is used as a reference information. The image is not taken by a robot itself, but it is provided in the form of previously prepared data and is being used by the robot during investigation of the area. As the preprocessing methods are outside of the scope of this article, it is assumed that input data is already a prepreprocessed image. It consists of a group of well-separated, contrasting objects (buildings). Provided bitmap image is vectorized to obtain vector data representing objects shapes. Representation of single object is a sequence of points on Euclidean plane, highlighting the corners of buildings. The algorithm of neighborhood graph (later addressed also by During robot’s operation, it takes several pictures of the area beneath. Each picture is vectorized, and neighborhood graph is calculated for each of the pictures. The graphs carry information about the relation between objects in fragments of the investigated area. These pictures and graphs will be referenced later by Syntactic algorithm is used for searching marked object in currently examined graph. Once the object is found, its neighborhood is compared with the neighborhood of If not all

It may also happen that marked object has a duplicate in the

Vectorization

Vectorization algorithm was described in detail by Bielecki et al.

19

–21

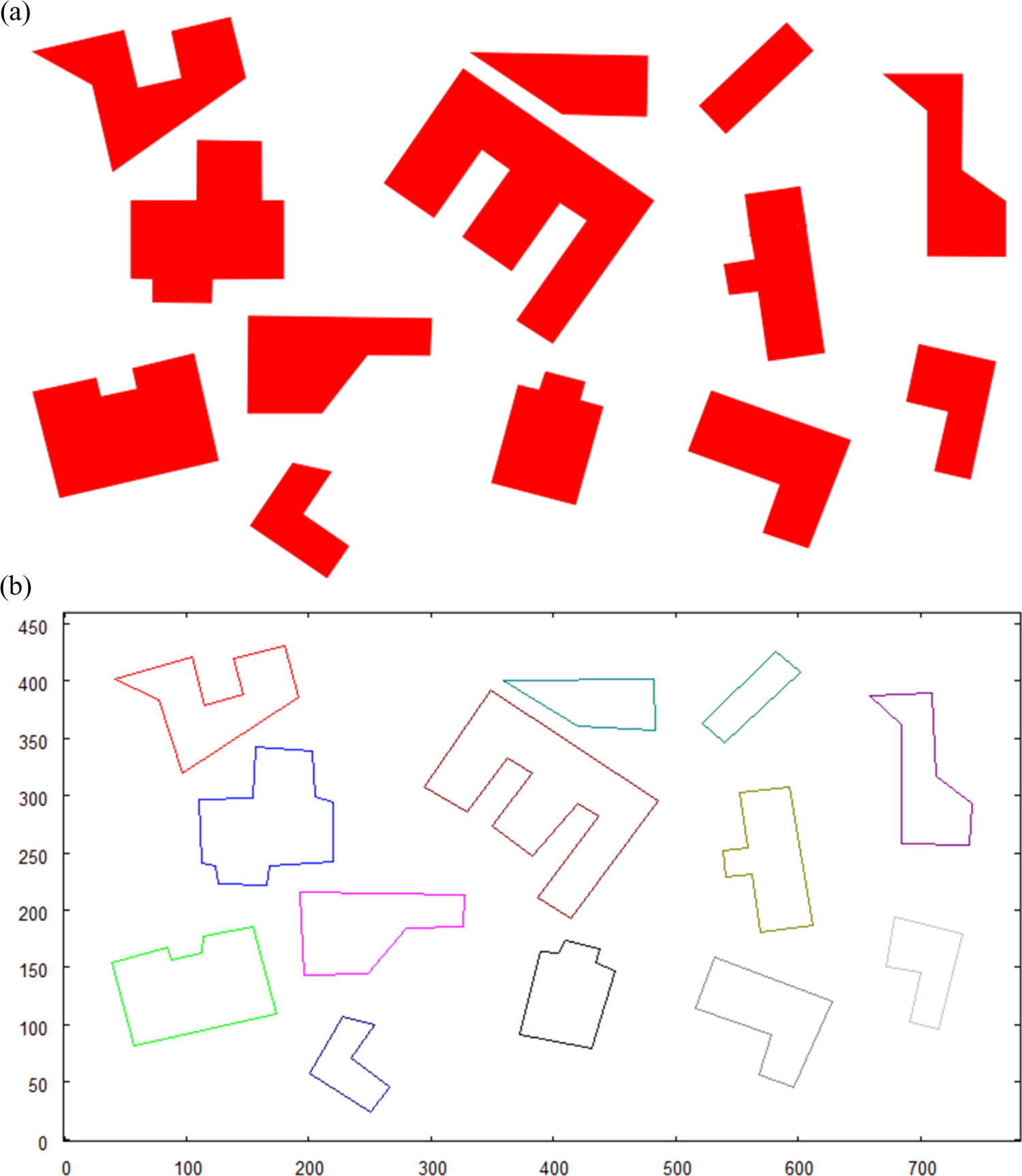

This algorithm allows to obtain a memory-efficient vector representation from preprocessed picture. Input for this method is a bitmap picture with distinctive shapes, representing objects (buildings) that will be subject for later examination. Such picture can be a result of preprocessing executed on image from the robot’s camera during the investigation of a given area. The result of the algorithm consists of a set of objects’ vector representations. Each vector representation is a sequence of points in Euclidean space, located in the border of the shapes. When extracting objects (buildings) from the bitmap, it is necessary to obtain accurate representation of their shapes from the input picture. The desired, accurate representation is the one, where points in the sequence represent coordinates of the corners of extracted object. This is essential for proper recognition of shapes taken from both

(a) Input for vectorization; (b) vectorization result. Coordinates calculated with respect to the bottom left corner of the picture.

Neighborhood graph construction

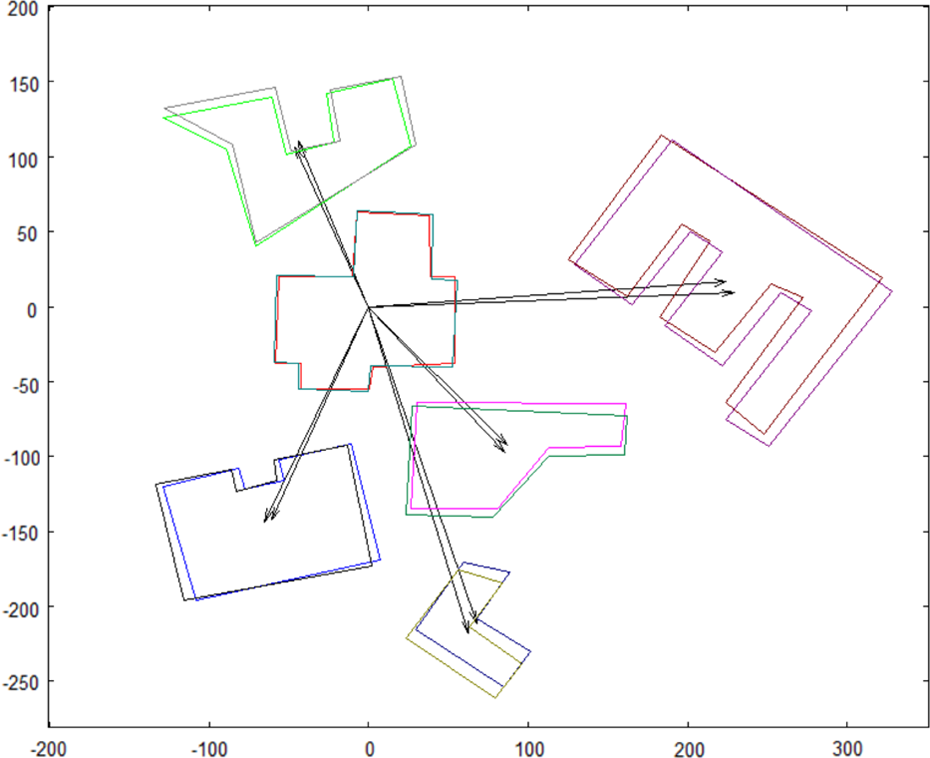

Neighborhood graph is a data structure designed to store the scene representation. It collects data about objects locations (coordinates of their centroids), shapes (their vector representation), and their spatial context. The context is defined by the list of objects that are in close neighborhood. The input for graph construction algorithm consists of a set of vectorized objects. For each object in the set, a list of buildings located in close neighborhood are found in this set. It is accomplished in a following manner for currently processed object The distance is measured from the centroid of the object The object A range The set of objects is searched again. Each object having at least one corner coordinate lying within the range

The method of finding the closest neighbors is illustrated in Figure 2(a). The final graph structure is presented in Figure 2(b).

(a) Neighborhood graph construction with one object as an example; (b) result graph.

For the purpose of further calculations (like searching the graph), the centroid of each object is stored in data structure along with the representation of each original object and those in the closest neighborhood list. A part of the neighborhood graph data structure (see Figure 3) collects spatial context of object

Neighborhood graph structure part.

Search method

Based on the neighborhood graph and the notion of spatial context of an object, the search algorithm was designed. It is aimed to search an object, selected from one graph, among the objects from the second graph. In our proposed scenario, one object ( First part of this method is the search of the object selected from When the object The marked object from The object found in The object The final step is to check if the corresponding objects from the closest neighborhood of

Representation used for syntactic algorithm of object matching. Example of match found between two objects.

Marked object from

Results

The following tests were executed to cover different situations mentioned in the scenario from the beginning of the previous section.

Incomplete match

The first test was executed for input picture that does not show complete area with

(a) Visualized graph structure with the object to be searched—highlighted with the thick lines; (b) graph constructed from input picture where marked object is searched.

(a) Two translated objects, marked and found, with their neighborhood. Partial match found in searched graph. Note that one neighbor object from reference graph was not found. (b) Input graph with recognized objects.

Complete match

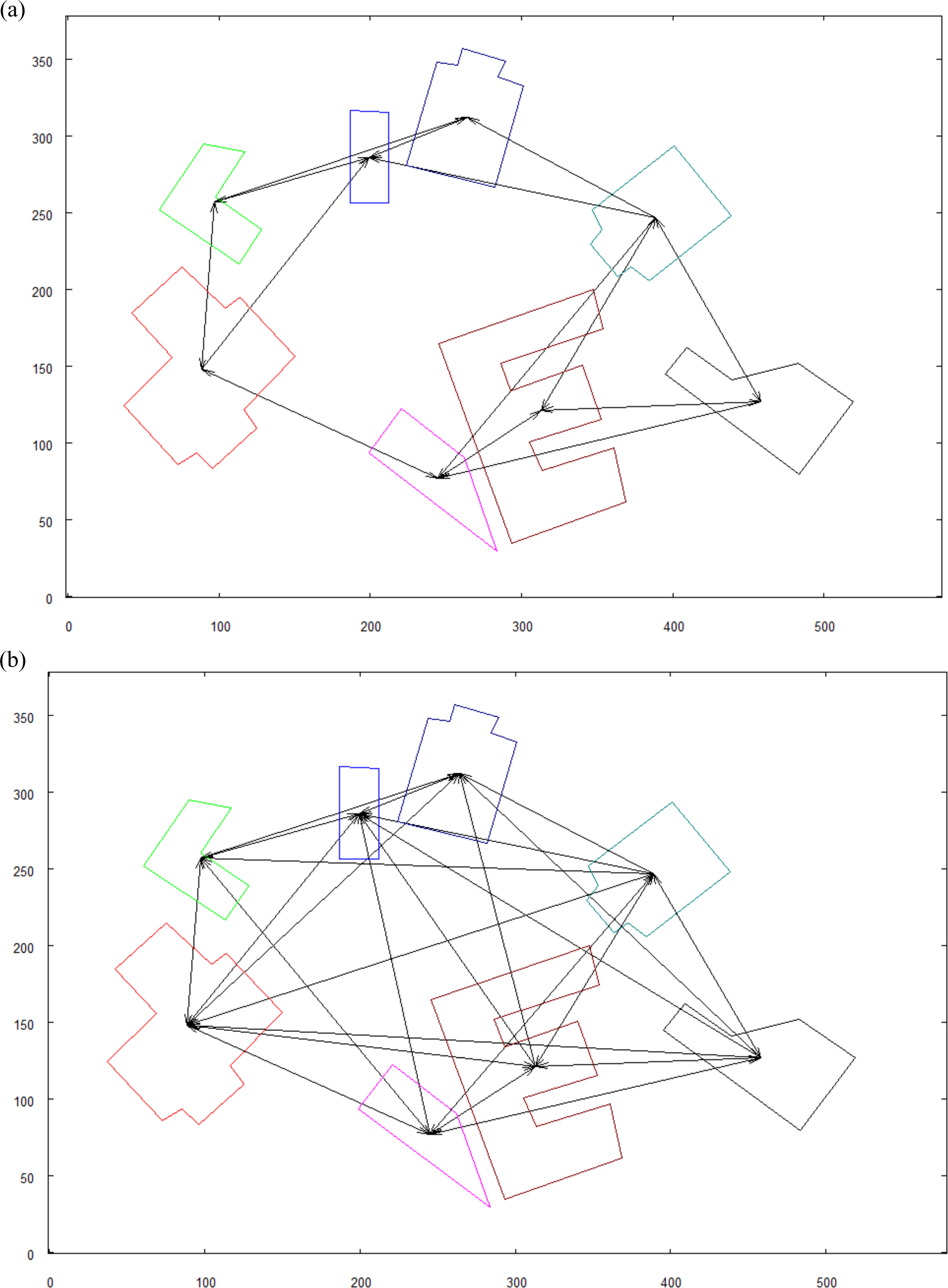

In order to increase the confidence of the matching which was found by the robot, a bigger picture is used. It would be the best if the complete spatial context of the searched object were recognized. Such case is shown in Figures 9 and 10. Note that the reference map is the same as in Figure 7(a), only the input image is changed to one that covers wider area. Factors calculated in this test—

Graph constructed from input picture where marked object is searched.

(a) Two translated objects, marked and found, with their neighborhood. Complete match found—each object from the neighborhood has its corresponding object found in the input picture; (b) input graph with the recognized objects.

Duplicate of marked object

In Figure 11, there is a marked object that shares the same spatial context with other object in graph. To make spatial context unambiguous, the graph is extended in the manner where “neighbor of my neighbor is my neighbor.” The results are shown in Figure 12. It can be observed that the graph became much more complex, and the spatial context for each object is more unique. The input graph is also extended. The graph before and after extension can be seen in Figure 13. Factors calculated in this test—

Reference graph with the marked object (signed with “M”) that has a duplicate with the same spatial context.

Extended reference graph. The marked object signed with “M.”

(a) Graph of the input picture before extension; (b) result of the extension of neighborhood in “neighbor of my neighbor is my neighbor” manner.

The marked object was recognized in the input graph. The full matching has not been found because the input image covered smaller area than the range of all neighbors of the marked object. The results are shown in Figure 14.

(a) Two translated objects, marked and found, with their neighborhood. The partial match found, indicated by the group of objects having a pair and three not paired; (b) input graph with the recognized objects.

Discussion

In section “Algorithm,” in suggested scenario, it was stated that precheck is executed on the neighborhood graph to check if

Concluding remarks

In this article, the graph-based approach to a scene representation and recognition was presented. In the previous investigations,

19,20

the authors presented the approach to two-dimensional shapes recognition. Recognition of a group of objects was studied, as well. Those studies, however, were not focused on a scene representation and understanding. In the current study, the neighborhood graph was introduced that constitutes the data structure aimed to provide an informative scene representation for the autonomous robot. The proposed data structure holds spatial information about the scene where close objects are related to each other. The nodes of the graph collect data about the scene objects, their shapes, and locations. The neighborhood graph also constitutes a base for a recognition of the objects with high level of certainty. The object from one graph is searched among objects in another graph with their close neighborhood taken into account. The recognition process is based on comparing not only the shape of the searched object but also its spatial relations with those objects that lay close to it. The experiments showed that different levels of certainty of the searching can be achieved when the robot obtains various images of the investigated scene. The desired level of certainty will differ from one application to another. When the robot is required to find the object in the scene with complete confidence, it has to collect a wider image of the scene. In such image, the searched object should be visible with the complete set of close objects from

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.