Abstract

Ensemble registration is concerned with a group of images that need to be registered simultaneously. It is challenging but important for many image analysis tasks such as vehicle detection and medical image fusion. To solve this problem effectively, a novel coarse-to-fine scheme for groupwise image registration is proposed. First, in the coarse registration step, unregistered images are divided into a reference image set and a float image set. The images of the two sets are registered based on segmented region matching. The coarse registration results are used as an initial solution for the next step. Then, in the fine registration step, a Gaussian mixture model with a local template is used to model the joint intensity of the coarse-registered images. Meanwhile, a minimum message length criterion-based method is employed to determine the unknown number of mixing components. Based on this mixture model, a maximum likelihood framework is used to register a group of images. To evaluate its performance, the proposed approach is compared with several representative groupwise registration approaches on different image data sets. The experimental results show that the proposed approach has improved performance compared to conventional approaches.

Introduction

Image registration aims to geometrically align two or more images of the same scene taken at different times, from different perspectives, and/or by different imaging devices. 1 It is a crucial step in many image analysis tasks, including image fusion, change detection, and image super-resolution. 2 In many situations, scene information is acquired from different sources. For example, vehicle images in a road traffic system are acquired with different sensors or under different illumination directions. In medical imaging, the underlying patient anatomy can be imaged by different acquisition techniques such as magnetic resonance (MR), computed tomography, positron emission tomography, and ultrasound (US). Such images are difficult to register because their intensities cannot be compared directly. This kind of registration problem is referred to as multisensor registration.

A large number of methods have been proposed in the literature to deal with the image registration problem. These methods broadly fall into two categories: feature-based and intensity-based methods. Feature-based methods extract salient features from two images and then find a geometrical transformation to match the two sets of features. 3–5 The preferred features include corners, line intersections, edge lines, contours, and closed-boundary regions. These features are manually or automatically detected and represented by control points. 1 The advantage of feature-based methods is that they are fast and robust to noise and large geometric distortions. However, if the distorted images come from different modalities, the same scene can differ greatly in intensity and morphology across images. In this case, feature-based methods alone cannot produce accurate registration results.

To address this deficiency, several researchers have developed a two-step registration scheme. 6,7 First, a coarse result is produced by a feature-based method. This result is used as an initial solution for the next step. Then, an optimal result is produced by an intensity-based method. Intensity-based methods define similarity measures directly on the joint intensity distribution of two images and treat registration as an optimization process that minimizes or maximizes these measures. In methods of this kind, the correlation coefficient 8 and mutual information 9 are commonly used similarity measures. Although these measures can be employed to fine-tune the parameters obtained in the coarse registration step, most of them are designed only for pairwise registration problems.

Groupwise registration methods aim to register more than two images. Compared with pairwise registration methods, these registration methods use joint information of the entire group of images to estimate the correspondences between each pair of images. The groupwise registration approach proposed by Woods et al. 10 constructed a global cost function by adding sums of squared intensity differences between each image pair and then minimized this cost function to estimate the transformation of each image. Lorenzen et al. 11 proposed a domain-specific approach to simultaneously align a group of brain MR images. This approach used a human brain atlas to classify tissue and then registered the tissue classification images. However, these approaches only focus on registering monotone images.

Some approaches are designed to measure the dispersion in a joint intensity scatter plot (JISP) for multisensor image registration. Neemuchwala et al. 12 used the length of a minimum-length spanning tree to quantify the dispersion in the JISP. An iterative scheme proposed by Guimond et al. 13 is applied to correct the intensity differences between images. Leventon and Grimson 14 presented a maximum likelihood (ML) registration approach that specified the correct JISP from previously registered images. Recently, Orchard and Jonchery 15 presented a clustering approach for multisensor groupwise registration. Their approach modeled the distribution of points in the space of joint intensity based on a Gaussian mixture model (GMM) and then estimated the model parameters using an expectation maximization (EM) algorithm. Špiclin et al. 16 proposed a treecode registration approach for registering a group of multisensor images. Their approach estimated the joint density function through an efficient hierarchical subdivision of the joint intensity space. Zhu et al. 17 used an infinite GMM (IGMM), which can determine a proper number of mixing components, to model the joint intensity distribution of the unregistered images and designed a variational Bayesian method to estimate motion parameters. While effective for groupwise registration, the mixture model used in this approach assumes that each intensity vector is independent of its neighbors and does not take spatial dependencies into account. This drawback reduces registration accuracy.

To alleviate this limitation, many approaches have been proposed to incorporate spatial information into the image model. Some approaches impose spatial constraints on the image pixel labels. In these approaches, the Markov random field (MRF) 18 is a commonly used model. Recently, the hidden MRF (HMRF) model has been proposed. 19,20 It is a stochastic process, generated by an MRF, whose state sequence cannot be observed directly but can be observed indirectly through a field of observations. However, the HMRF model is computationally demanding. Instead of imposing the MRF-based constraint on the pixel labels, some other approaches directly impose spatial constraints on contextual mixing proportions and take into account the spatial correlation of pixels. 21–23 However, in these approaches, the prior distribution is different for each pixel and depends on the neighbors of the pixel. This limitation causes them to lose global cluster information. In addition, this kind of approach cannot determine the number of mixing components. Thus, it is not very suitable for groupwise registration.

Based on the aforementioned considerations, a novel coarse-to-fine scheme for groupwise image registration is proposed in this article. It comprises a coarse registration process and a fine registration process. The major contributions of this article are as follows: (1) A simple and valid method is introduced to match segmented regions of the reference and float image sets. (2) An effective registration approach based on region feature matching is presented to eliminate the initial displacements of unregistered images; its result is used as an initial solution for the fine registration process. (3) A modified GMM with a local template is proposed to model the joint intensity of the coarse-registered images. The weights of the template are computed based on both the spatial distance and the intensity difference between the central intensity vector and its neighbors. (4) An ML approach with a minimum message length (MML) criterion is employed to infer the fine-tuning registration parameters.

The proposed approach combines the advantages of both feature-based and intensity-based methods. Its performance is evaluated on different multisensor image data sets, and the results show that it has improved performance compared to other groupwise registration approaches.

The rest of this article is organized as follows. A brief introduction of the proposed approach is presented in section “Methodology.” The experiment settings that include evaluation method and experimental data are introduced in section “Experiment.” The experimental results are reported and analyzed in section “Results.” Conclusions are drawn in the last section.

Methodology

Overview of the approach

The main steps of the proposed approach are shown in Figure 1. It starts with an initialization process, after which the initialized images are registered by the coarse registration method. The coarse registration results serve as a good initial solution and are then refined by the fine registration method, which yields the aligned images.

Main steps of the proposed method for coarse-to-fine groupwise registration.

Coarse registration

Region feature extraction

For feature-based image registration methods, feature extraction is critical for the success of feature matching and image registration. Our approach segments images and takes the segmented regions as the features used for image registration. In the past decades, fuzzy segmentation methods have been widely used for data clustering and image segmentation. 24,25 One of the most popular methods is the well-known fuzzy c-means (FCM) algorithm. As a soft segmentation method, FCM is able to retain more of the original image information than hard segmentation methods. In this article, we use the FCM algorithm with spatial constraints to partition a given image into different regions. 26 Each segmented region, which is denoted reg, has three parameters: λ, ec, and fc. λ is the angle between the x-axis and the major axis of the ellipse that has the same second moments as reg. ec and fc are the x- and y-coordinates of the center of reg.
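The three region parameters can be illustrated with a short sketch. It assumes the region is given as a binary mask (a list of rows), takes the center to be the centroid, and measures the angle in radians in image coordinates (y pointing down); the function name `region_descriptor` is hypothetical and not from the article.

```python
import math

def region_descriptor(mask):
    """Return (lam, ec, fc) for a binary mask given as a list of rows.

    lam is the major-axis angle (radians) of the ellipse with the same
    second central moments as the region; (ec, fc) is the centroid.
    """
    pts = [(x, y) for y, row in enumerate(mask) for x, v in enumerate(row) if v]
    n = len(pts)
    if n == 0:
        raise ValueError("empty region")
    ec = sum(x for x, _ in pts) / n
    fc = sum(y for _, y in pts) / n
    # Second central moments of the pixel coordinates.
    mu20 = sum((x - ec) ** 2 for x, _ in pts) / n
    mu02 = sum((y - fc) ** 2 for _, y in pts) / n
    mu11 = sum((x - ec) * (y - fc) for x, y in pts) / n
    # Orientation of the principal axis of the moment matrix.
    lam = 0.5 * math.atan2(2.0 * mu11, mu20 - mu02)
    return lam, ec, fc
```

Note that an orientation obtained this way is only defined up to a half turn, so a region and its 180-degree rotation share the same λ.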

Region feature matching

A simple approach is used to match segmented regions. It is based on the idea that if two regions are similar in morphology, the area of their nonoverlapping region is small when they are aligned. Given two segmented regions reg1 and reg2, our approach first calculates the transform parameters between them. The method for calculating the transform parameters is introduced in the next section. The transformation function applied to reg2 is represented by g, and the transformed region is denoted g(reg2). Then, we calculate the difference between reg1 and reg2 as follows

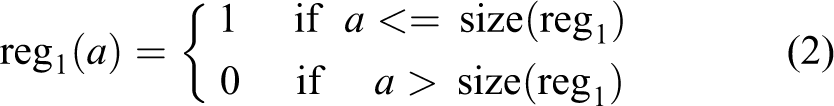

where reg1(a) refers to the a th pixel location of reg1. It is calculated as follows

where size(reg1) denotes the number of pixels in reg1. s reg is computed as

A small AD(reg1, reg2) value indicates that reg1 and reg2 are similar to each other.
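The nonoverlap idea can be sketched as follows. The normalization here (nonoverlapping area divided by the total pixel count of both regions) is an assumption made for illustration and is not necessarily the one used in AD(reg1, reg2); regions are represented as collections of (x, y) pixel coordinates, and `nonoverlap_ratio` is a hypothetical name.

```python
def nonoverlap_ratio(reg1, reg2_aligned):
    """Fraction of pixels that belong to exactly one of the two regions.

    reg1 and reg2_aligned are iterables of (x, y) pixel coordinates;
    reg2_aligned is assumed to be already transformed, i.e. g(reg2).
    Returns 0.0 for identical regions and 1.0 for disjoint ones.
    """
    a, b = set(reg1), set(reg2_aligned)
    if not a and not b:
        raise ValueError("both regions are empty")
    # Symmetric difference = nonoverlapping area (assumed normalization).
    return len(a ^ b) / (len(a) + len(b))
```

With this convention, candidate matches for a reference region could be ranked by increasing ratio, with the smallest value taken as the best match.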

Image registration based on region matching

Assume that D source images will be registered. Let

where ro is rotation angle; te and tf are translations along x- and y-axes, respectively. These coarse registration parameters are computed as follows

After coarse registration, the rotation and translation displacements of the images are roughly eliminated. These registration results are used as an initial solution for the subsequent fine registration process.
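One plausible way to obtain ro, te, and tf from a pair of matched regions is sketched below. It assumes that the rotation is the difference of the region orientations λ and that the translation maps the rotated float-region center onto the reference-region center; this is an illustrative assumption, not a reproduction of the article's formula, and both function names are hypothetical.

```python
import math

def coarse_rigid_params(ref, flt):
    """Estimate (ro, te, tf) from two region descriptors (lam, ec, fc).

    Assumed convention: a point p of the float image maps to
    R(ro) * p + (te, tf), with rotation about the origin, in radians.
    """
    lam1, ec1, fc1 = ref
    lam2, ec2, fc2 = flt
    ro = lam1 - lam2
    c, s = math.cos(ro), math.sin(ro)
    # Choose the translation so the float centroid lands on the reference centroid.
    te = ec1 - (c * ec2 - s * fc2)
    tf = fc1 - (s * ec2 + c * fc2)
    return ro, te, tf

def apply_rigid(params, x, y):
    """Apply the rigid transform (ro, te, tf) to the point (x, y)."""
    ro, te, tf = params
    c, s = math.cos(ro), math.sin(ro)
    return c * x - s * y + te, s * x + c * y + tf
```

Because the orientation of a region is ambiguous by a half turn, a practical implementation would likely test both ro and ro + π and keep the one with the smaller region difference.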

Image registration based on segmented region.

Segmented region match.

Fine registration

Problem formulation

An image group consists of D images. Thus, the location of each pixel x is associated with D intensity values and is represented by a joint intensity vector Ix. 15

Ensemble registration seeks a set of motion parameters for these images, where the motion parameters describe the transforms applied to them. In this article, we focus on the problem of rigid registration. If each image has L motion parameters, the total number of motion parameters is LD. Let θ be the set of motion parameters and the joint intensity vector of transformed images is denoted by

where X is the number of pixels. We use a GMM with a local template to model the joint intensity distribution. 23

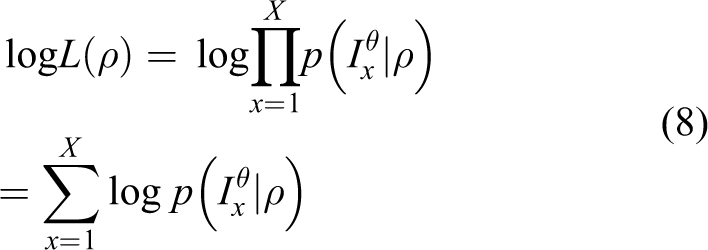

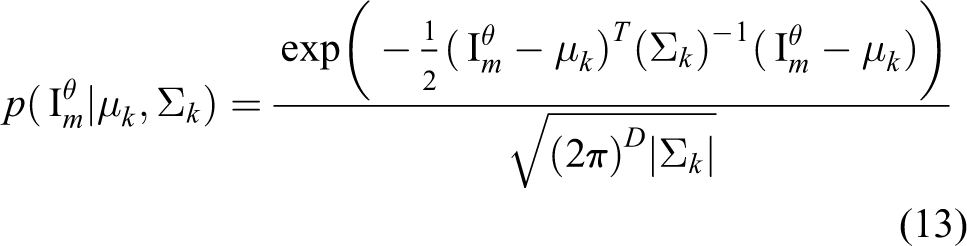

For each pixel location x, the likelihood of joint intensity vector

where K is the number of mixture components, πk represents the prior probability of

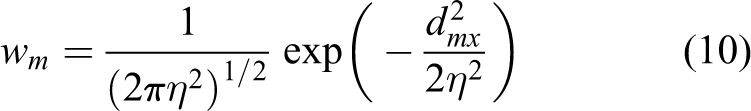

where dmx is calculated as

where sdmx and idmx are the spatial distance and intensity difference between intensity vector

The function

where μk and Σk are the mean and covariance matrix of the k th component distribution, respectively.
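A local template whose weights depend on both spatial distance and intensity difference can be sketched as below. The exponential, bilateral-style combination with standard deviation η is an assumption chosen for illustration; the article's exact expression for dmx may differ. The normalizing total plays the role that Rx appears to play in the text, and `template_weights` is a hypothetical name.

```python
import math

def template_weights(patch, eta=1.0):
    """Normalized weights for a square patch of joint intensity vectors.

    patch[j][i] is the intensity vector (a tuple) at row j, column i;
    the central entry is the pixel x being modeled. Weights are returned
    in row-major order and sum to 1.
    """
    r = len(patch) // 2
    center = patch[r][r]
    weights = []
    for j, row in enumerate(patch):
        for i, vec in enumerate(row):
            sd = (i - r) ** 2 + (j - r) ** 2                      # squared spatial distance
            idf = sum((a - b) ** 2 for a, b in zip(vec, center))  # squared intensity difference
            # Assumed combination: neighbors that are close and similar weigh more.
            weights.append(math.exp(-(sd + idf) / (2.0 * eta ** 2)))
    total = sum(weights)  # assumed to correspond to the normalizer Rx
    return [w / total for w in weights]
```

Under this choice a neighbor across an intensity edge contributes little to the likelihood at x, which is the usual motivation for combining the two distances.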

In the above GMM, the number of components K, which affects how well the mixture model fits the data, needs to be known in advance. To overcome this difficulty, we adopt the approach presented in the study by Figueiredo and Jain, 28 which is based on the MML criterion, and obtain the following cost function

where W is the number of parameters specifying each component. It is

Parameter learning

The EM update procedure is used to estimate the parameters of the proposed mixture model. In the E-step, the expectation of the complete data log likelihood Q is calculated. Let zxk be a hidden random variable that indicates the membership of

From equation (15), we can see that quantity V cannot be directly calculated. Because wm/Rx satisfies wm/Rx ≥ 0 and

The posterior probability of hidden variable zxk is computed as follows

where i denotes the i th iteration. Given the result (equation (17)), the expectation of the complete data log likelihood Q is calculated as
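The posterior probability of zxk described above has the familiar mixture-model form. The sketch below shows it for a plain GMM, assuming diagonal covariances and omitting the local template weighting for brevity, so it is a simplified stand-in rather than the article's exact E-step; all names are hypothetical.

```python
import math

def diag_gaussian(vec, mean, var):
    """Density of a Gaussian with diagonal covariance (assumed for simplicity)."""
    log_p = 0.0
    for v, m, s2 in zip(vec, mean, var):
        log_p += -0.5 * (math.log(2.0 * math.pi * s2) + (v - m) ** 2 / s2)
    return math.exp(log_p)

def responsibilities(vec, priors, means, variances):
    """Posterior probability of each component for one joint intensity vector."""
    joint = [p * diag_gaussian(vec, m, v)
             for p, m, v in zip(priors, means, variances)]
    total = sum(joint)
    if total == 0.0:
        raise ValueError("vector has zero density under every component")
    return [j / total for j in joint]
```

A production implementation would normally work with log-densities throughout to avoid underflow when a vector lies far from every component.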

In the M-step, the revised parameters are determined by minimizing function (18). First of all, the parameter πk is updated as

The objective function in equation (19) does not make sense if we allow any of the πk values to be zero. To overcome this problem, only components with nonzero πk contribute to the log likelihood. Thus, the complete data log likelihood Q is

where Kz denotes the number of non-zero probability components. For the k th mixing component, of which πk is greater than 0, we update its parameters μk and Σk as follows
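The M-step can be sketched under two stated assumptions: the prior update follows the component-annihilation rule of Figueiredo and Jain, in which the summed posteriors of each component are reduced by W/2 and clipped at zero, and the covariances are diagonal. The local template weighting is again omitted, and `m_step` is a hypothetical name.

```python
def m_step(vectors, resp, W):
    """Update priors, means, and diagonal variances from posteriors.

    vectors[x] is the joint intensity vector at pixel x, resp[x][k] the
    posterior of component k at that pixel, and W the number of
    parameters per component. Annihilated components get None entries.
    """
    K, dim = len(resp[0]), len(vectors[0])
    sums = [sum(r[k] for r in resp) for k in range(K)]
    # MML-style update: weakly supported components drop to zero weight.
    trimmed = [max(0.0, s - W / 2.0) for s in sums]
    total = sum(trimmed)
    if total == 0.0:
        raise ValueError("every component was annihilated")
    priors = [t / total for t in trimmed]
    means, variances = [], []
    for k in range(K):
        if priors[k] == 0.0:
            means.append(None)
            variances.append(None)
            continue
        mean = [sum(r[k] * v[d] for r, v in zip(resp, vectors)) / sums[k]
                for d in range(dim)]
        var = [max(1e-6,  # small floor (an assumption) to avoid degenerate variances
                   sum(r[k] * (v[d] - mean[d]) ** 2
                       for r, v in zip(resp, vectors)) / sums[k])
               for d in range(dim)]
        means.append(mean)
        variances.append(var)
    return priors, means, variances
```

Starting from a deliberately large K, such as the initial value of 10 used later in the article, repeated application of this rule lets unsupported components vanish, so the surviving count Kz is determined by the data.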

To update the motion parameters θ, we optimize Q with respect to θ by setting its gradient to zero

and have

In order to find motion parameters θ that satisfy the above equation, we introduce a small motion increment

Equation (25) can also be expressed by the simple form as given below

The optimal motion increment is obtained by solving these equations for

Groupwise registration using GMM with local template.

The pseudocode of the proposed approach is outlined in algorithm 3. A multiresolution framework is employed to decompose each source image into several levels. Each group of images is registered first at the lowest-resolution level and then successively at higher-resolution levels. Motion parameters obtained at one level are used as an initial guess for the next level. In general, the scales of the multiresolution framework are 20%, 50%, and 100%. In each test, the number of motion parameters per image is 3 (ro, te, and tf). Thus, when four images are registered simultaneously (D = 4), the total number of motion parameters LD is 12. The initial number of mixing components K is 10. The size of the local template is 5 × 5 and the standard deviation η is set to 1.
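The hand-off of motion parameters between resolution levels can be sketched as follows for a single image. The sketch assumes that translations are measured in pixels of the current level, so they must be rescaled when moving to the next level while the rotation is unchanged; `register_at_scale` is a hypothetical callback standing in for the registration at one level.

```python
def rescale_params(params, from_scale, to_scale):
    """Carry (ro, te, tf) from one resolution level to another."""
    ro, te, tf = params
    ratio = to_scale / from_scale
    # Rotation is scale invariant; pixel translations grow with resolution.
    return ro, te * ratio, tf * ratio

def coarse_to_fine(register_at_scale, scales=(0.2, 0.5, 1.0)):
    """Run a registration callback over increasing scales.

    register_at_scale(scale, initial_params) must return refined
    (ro, te, tf) expressed in pixels of that scale.
    """
    params = (0.0, 0.0, 0.0)
    prev = scales[0]
    for scale in scales:
        params = rescale_params(params, prev, scale)
        params = register_at_scale(scale, params)
        prev = scale
    return params
```

In the proposed scheme the starting point would presumably be the coarse registration result rather than zero displacement; the zero initial guess here only keeps the sketch short.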

Experiment

In this section, we perform experiments to evaluate the performance of the proposed method. Five other registration methods are selected for comparison. Two of them are feature-based registration methods: the Scale Invariant Feature Transform-based registration method (SIFTR) 7 and the segmented region-based method, Segmented Region Registration (SRR). The other three are intensity-based groupwise registration methods: the GMM-based method, Groupwise Registration (GR), 15 the IGMM-based method, Dirichlet Process Registration (DPR), 17 and the method with spatial constraints, Spatial Intensity Constraint Groupwise Registration (SICGR). Our method is named SRR-SICGR because it combines the SRR method and the SICGR method.

A criterion similar to the one in the work of Orchard and Mann 15 is used to compare the performance of the registration methods. It compares the estimated transformation to the gold standard transformation by computing the average pixel displacement (APD). Therefore, a small APD indicates a good registration and vice versa. We consider a registration successful if its average registration error is less than three pixels.
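The APD criterion can be sketched as the mean distance between where the estimated and the gold standard transforms send each pixel. The sketch assumes rigid transforms (ro, te, tf) with rotation about the origin and angles in radians, and averages over a caller-supplied pixel list such as the ROI; these conventions and the function names are assumptions for illustration.

```python
import math

def rigid(params, x, y):
    """Apply a rigid transform (ro, te, tf) to the point (x, y)."""
    ro, te, tf = params
    c, s = math.cos(ro), math.sin(ro)
    return c * x - s * y + te, s * x + c * y + tf

def average_pixel_displacement(est, gold, pixels):
    """Mean Euclidean distance between estimated and gold positions."""
    total = 0.0
    for x, y in pixels:
        ex, ey = rigid(est, x, y)
        gx, gy = rigid(gold, x, y)
        total += math.hypot(ex - gx, ey - gy)
    return total / len(pixels)

def is_success(est, gold, pixels, threshold=3.0):
    """Success rule stated in the text: error below three pixels."""
    return average_pixel_displacement(est, gold, pixels) < threshold
```

Because a rotation error displaces distant pixels more than nearby ones, the value depends on which pixels are included, which is one reason to evaluate it over a fixed ROI.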

Four publicly available image data sets are used to evaluate the performance of the different registration methods. The source images of each data set are initialized by applying known displacements. Then, the registration methods are run on the resulting trial image data sets. Details about the image data sets are outlined as follows.

Vehicle image

A vehicle image, which is shown in Figure 2, is used to test the different registration methods. The region of interest (ROI), which is outlined in Figure 2, is used for performing registration. Four displacement ranges are used to generate the initial random rigid displacements of the image. They are [−10, 10], [−15, 15], [−20, 20], and [−25, 25] (pixels or degrees), respectively. In each displacement range, 50 trial groups are generated and each trial group consists of four images. Among the four images of a trial group, one is the reference image (denoted image (a)) and the other three unregistered images (denoted images (b) to (d)) are generated by applying random displacements to the reference image.
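The trial-group construction can be sketched as below. Uniform sampling within each range and the fixed seed are assumptions made for reproducibility of the illustration; the text only states that the displacements are random. `make_trial_groups` is a hypothetical name.

```python
import random

def make_trial_groups(tpr, n_groups=50, n_images=4, seed=0):
    """Sample rigid displacements (ro, te, tf) within [-tpr, tpr].

    Each group starts with the undisplaced reference image (a), followed
    by n_images - 1 randomly displaced copies. Rotation is in degrees
    and translations are in pixels, matching the stated ranges.
    """
    rng = random.Random(seed)
    groups = []
    for _ in range(n_groups):
        group = [(0.0, 0.0, 0.0)]  # reference image: no displacement
        for _ in range(n_images - 1):
            group.append(tuple(rng.uniform(-tpr, tpr) for _ in range(3)))
        groups.append(group)
    return groups
```

Calling it once per range, for example with 10, 15, 20, and 25, would yield the four sets of 50 trial groups described above.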

Vehicle image. The ROI is outlined in image. ROI: region of interest.

Variable illumination

Five face images obtained from the Extended Yale Face Database B 30 are used to demonstrate the performance of the different methods. These images, which are shown in Figure 3, are illuminated from different angles ranging from far left to far right. The ROI, which is outlined in Figure 3(a), is used for performing registration. Four displacement ranges, referred to as transform parameter ranges (TPRs), are used for sampling translation and rotation parameters. They are [−10, 10], [−15, 15], [−20, 20], and [−25, 25] (pixels or degrees), respectively. In each displacement range, 50 trial groups are generated.

Face images. The ROI is outlined in image (a). ROI: region of interest.

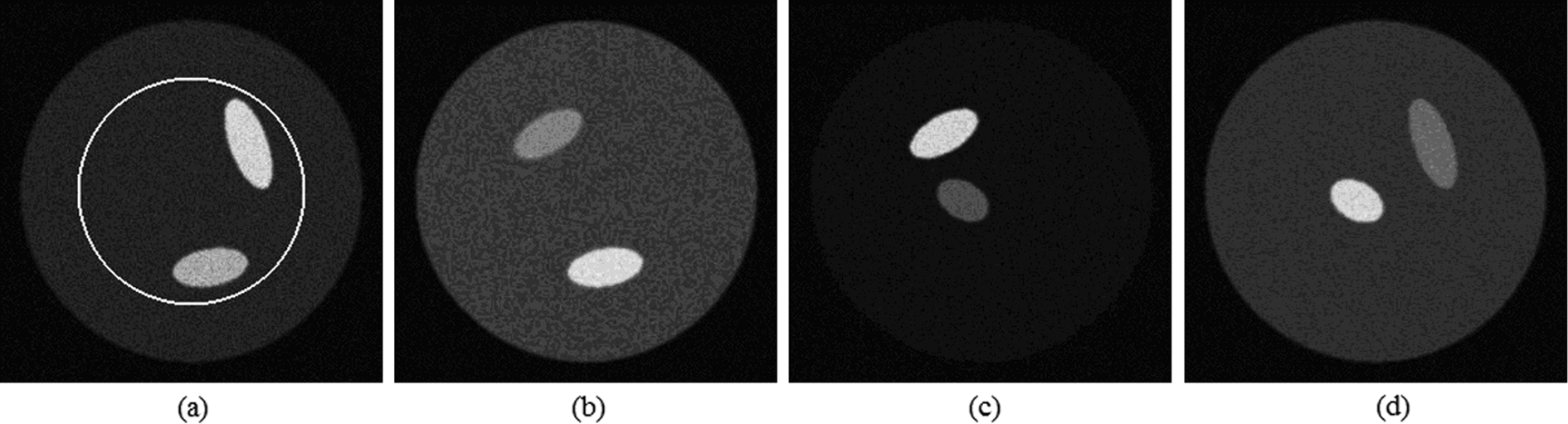

Disjoint content

An image group of the multisensor phantom is shown in Figure 4. The information in these images is complementary. The ROI, which is used for registration, is outlined in Figure 4(a). Four displacement ranges ([−10, 10], [−15, 15], [−20, 20], and [−25, 25]) are used for sampling rigid displacements. Fifty trial groups are generated using random translation and rotation in each displacement range.

Images from phantom data set. The ROI is outlined in image (a). ROI: region of interest.

Medical images

We also test these methods on a group of medical images, which is shown in Figure 5. This group consists of three MR images (T1 weighted, T2 weighted, and Proton-Density weighted). The ROI, which is shown in (a) of Figure 5, is used for performing registration. Four displacement ranges are used for sampling rigid registration parameters. They are [−10, 10], [−15, 15], [−20, 20], and [−25, 25] (pixels or degrees), respectively. In each displacement range, 50 trial groups are generated.

Multisensor medical images. The ROI is outlined in image (a). ROI: region of interest.

Results

Vehicle image

The results for the rigid-body registration of the vehicle image are shown in Tables 1 and 2. Table 1 shows pair registration success rates for the data set and Table 2 shows mean registration errors for image pairs. TPR refers to the displacement range. From the results, we can see that when TPR is [−15, 15], only the SRR method does not successfully register all image pairs. The GR, the SICGR, and the SRR-SICGR methods have lower registration errors than other methods. When TPR is [−20, 20], the SIFTR and the DPR methods successfully register all image pairs. Among them, the DPR method has the lowest mean error. As the displacement range is expanded, the success rates of intensity-based methods decrease rapidly. In contrast, the success rates of feature-based methods show no obvious change. The SIFTR method has the highest registration accuracy. The accuracy of the SRR-SICGR method is slightly lower than that of the SIFTR method.

Pair registration success rates of different methods for vehicle images within different displacement ranges.

Note: Italics indicate highest pair success rates in each row.

Mean registration errors of different methods for vehicle images within different displacement ranges.

Note: Italics indicate lowest mean registration errors in each row.

All methods are implemented in MATLAB R2012a, running on a Windows machine with a Core (TM) 2 Duo 1.83 GHz CPU. Table 3 shows the average times of different methods to register a group of images. As seen, the SIFTR method spends less time than other methods, while the SRR-SICGR method requires the most time.

Average computation times of different methods for vehicle images.

Note: Italics indicate the minimum total time.

Variable illumination

Tables 4 and 5 show the registration results for the variable illumination experiment. As seen, feature-based methods have lower success rates than intensity-based methods when TPR is [−10, 10]. The SIFTR method has the lowest success rate and highest mean error. This shows that it has difficulty registering images whose intensities are uncorrelated. The SICGR method has lower mean error than other methods. When TPR is expanded to [−15, 15], the GR, the DPR, and the SRR-SICGR methods have the highest success rates. Among them, the SRR-SICGR method has the lowest mean error. As the TPR is expanded, the success rates and registration accuracies of intensity-based methods decrease somewhat. The SRR-SICGR method has the highest success rate and lowest mean error because it employs a coarse-to-fine registration scheme.

Pair registration success rates of different methods for face images within different displacement ranges.

Note: Italics indicate highest pair success rates in each row.

Mean registration errors of different methods for face images within different displacement ranges.

Note: Italics indicate lowest mean registration errors in each row.

To evaluate the registration performance of the proposed method in the presence of noise, we compare it with the GR method and the DPR method. We set TPR = [−10, 10]. Each image of the phantom data set is corrupted with Gaussian (G) noise and salt-and-pepper (SP) noise. The variance of G noise and the density of SP noise vary from 0 to 0.05. Figure 6 plots the registration results under different noise levels. From the results, we can see that all these methods have higher registration errors as noise increases. Under the same noise level, our method performs best among the three methods.

Registration results of three methods for face images within different noise levels.

Disjoint content

Tables 6 and 7 show the registration results for the disjoint content experiment. According to the results, the SIFTR method fails to register all image pairs. When TPR is [−10, 10], intensity-based methods successfully register all image pairs. Among them, the DPR method has the lowest mean registration error. As the displacement range is expanded, the SRR and the SRR-SICGR methods have higher success rates than other methods. In addition, the SRR-SICGR method has the highest registration accuracy compared with other methods.

Pair registration success rates of different methods for biological images within different displacement ranges.

Note: Italics indicate highest pair success rates in each row.

Mean registration errors of different methods for biological images within different displacement ranges.

Note: Italics indicate lowest mean registration errors in each row.

Figures 7 and 8 show the clustering results of the GR, the DPR, and the SRR-SICGR methods. There are five different clusters in the JISP of the phantom data set: four small ellipses and a large embedding circle. When TPR = [−10, 10], all three methods can converge to the correct solution. In addition, the DPR and the SRR-SICGR methods successfully estimate the number of components. When TPR = [−25, 25], the GR and the DPR methods cannot get correct clustering results because of large geometric distortions. In their clustering results, some components are forced to stretch to model multiple clusters. Thus, the performances of these two methods are not better than that of the SRR-SICGR method.

The clustering results of three methods when TPR = [−10, 10]. (a) The clustering result of the GR method. (b) The clustering result of the DPR method. (c) The clustering result of the SRR-SICGR method.

The clustering results of three methods when TPR = [−25, 25]. (a) The clustering result of the GR method. (b) The clustering result of the DPR method. (c) The clustering result of the SRR-SICGR method.

Medical images

The registration results for medical images are shown in Tables 8 and 9. When TPR is [−10, 10], the success rate of the SIFTR method is lower than that of other methods. The GR and the SRR-SICGR methods have the lowest mean errors. When TPR changes from [−15, 15] to [−20, 20], the SICGR method has the highest success rate and lowest mean error. As the TPR is further expanded, the SRR-SICGR method performs better than other methods in both registration success rate and registration accuracy.

Pair registration success rates of different methods for medical images within different displacement ranges.

Note: Italics indicate highest pair success rates in each row.

Mean registration errors of different methods for medical images within different displacement ranges.

Note: Italics indicate lowest mean registration errors in each row.

Conclusion

In this article, a novel coarse-to-fine approach is proposed for groupwise image registration. In the coarse registration step, our approach divides unregistered images into a reference image set and a float image set. The images of the two sets are registered based on segmented region matching. The coarse registration results are used as an initial solution for the next step. In the fine registration step, our approach uses a GMM with a local template to model the joint intensity of the coarse-registered images. The weights of the template are calculated based on both the spatial distance and the intensity difference between the central intensity vector and its adjacent vectors. Based on this GMM, an ML framework is constructed and an MML criterion-based method is employed to determine the unknown number of mixing components. The registration problem is solved by estimating the relevant parameters. Compared with previous groupwise registration approaches, the proposed approach combines the advantages of both feature-based and intensity-based methods. Different multisensor image data sets are used to evaluate the performance of the proposed approach, and the registration results support its competitiveness and robustness.

Footnotes

Author’s Notes

Yinghao Li is also affiliated with College of Software and Applied Science and Technology, Zhengzhou University, Zhengzhou, China.

Acknowledgements

The completion of this research was made possible thanks to the following organizations for providing the data: the Retrospective Image Registration Evaluation project, Landsat, and the Yale Face Database.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is jointly supported by Scientific and Technological Research Program of Chongqing Municipal Education Commission—China (grant no. KJ130516, YJG133005), Open Fund of State Key Laboratory of Remote Sensing Science—China (grant no. OFSLRSS201419), the Research Funds of Chongqing Science and Technology Commission—China (grant no. cstc2013jcyjA40042), and the National Natural Science Foundation of China—China (grant nos 61301033, 61403349, and 61309013).