Abstract

In system development, integration is crucial—especially in domains like robotics, where the complexity of the applications makes it a particularly challenging task. The rapid growth in the sector can lead to the obsolescence of existing tools and procedures. Although other technologies have emerged in scientific research, they face limited acceptance within the robotics community due to high entry barriers and a lack of evaluative studies, with real use cases, demonstrating their effectiveness. In this effort, we have used

Introduction

Model-driven development (MDD) has proven its efficiency in the integration of systems in different domains. Several comparative studies have shown that MDD significantly reduces development time, with some experiments 1 even concluding that a model-based approach could be executed in only 11% of the time required by code-centric methods. However, in robotics software development, there is a prevailing preference for a hand-written approach. To demonstrate how MDD can be used in a code-centric domain, 2 such as robotics, by reusing existing code we have created a set of tools, called

This paper focuses on the evaluation of

To complement the studies, we also conducted a qualitative study, interviewing experts to establish the usability and ease of use degree of the developed tools.

This paper is structured as follows. It first reviews related work on empirical and qualitative evaluations of software solutions, providing a foundation for our study. The next section outlines the background necessary to contextualize this work. Then, the core of the publication focuses on the quantitative and qualitative evaluations, respectively. Finally, it concludes by summarizing our findings.

Related work

Empirical MDD software evaluation studies

As an example of the comparison between MDD and traditional software development, there are studies based on the Palladio Editor 3 that show MDD’s benefits in improving design accuracy and system analysis; on the other hand, limitations such as tooling constraints and a steep learning curve are evident. The findings of a survey among practitioners evaluating MDD’s impact 4 indicate that MDD increases productivity and maintainability while reducing errors, particularly in complex systems. The key metrics are development speed and quality, though initial setup complexity and costs are highlighted as barriers. Practitioners recommended MDD primarily for large-scale projects, where its advantages are more apparent. A comparative case study 5 points out that traditional software development processes are faster in the initial progress, while MDD provides better scalability and maintainability. For this empirical study, the considered metrics include time to delivery, scalability, and defect rates. It concluded that MDD offered more sustainable benefits for long-term projects. Another empirical effort 6 analyzed MDD in four industrial case studies with a focus on usability, compatibility, and performance, using as metrics adoption rates, ease of integration, and the impact on development time and quality. The study concludes that domain-specific contexts significantly influence platform effectiveness. Together, these studies highlight that while MDD may require higher initial investments, it often outperforms traditional development in long-term scalability, maintainability, and quality, particularly in complex, large-scale projects. The domain must also be taken into account and, unfortunately for robotics, there is no previous work evaluating the impact of an MDD solution.

Methodologies for qualitative evaluations

The goal question metric (GQM) 7 is an approach to measure software quality. It begins by defining clear, goal-oriented objectives, breaking these goals down into specific questions, and then developing metrics to evaluate the results. This structured inquiry ensures that the evaluation is focused and aligned with the overarching objectives of the software engineering approach, covering a broad range of factors such as performance, reliability, maintainability, and usability. In addition, the System Usability Scale (SUS) 8 is a standardized, widely recognized tool used to measure the usability of a system, providing a quick and reliable way to assess user satisfaction and usability through a simple 10-item questionnaire. The 10 items alternate between positively and negatively worded statements, and the participant scores each one on a 5-point Likert scale. Similarly, the Post-Study System Usability Questionnaire (PSSUQ) 9 focuses on three core dimensions: system usefulness, information quality, and interface quality. Participants complete the PSSUQ after interacting with a system, rating their experience on a 7-point Likert scale.

Background: The RosTooling

The ROS ecosystem encompasses an array of libraries and packages catering to functionalities, such as perception, planning, control, and simulation. Supporting programing languages, like C++ and Python, ROS boasts an active community contributing to its extensive range of resources.

ROS has a widespread impact on the robotics community. 10 With a federative development model, i.e., new packages can be added to the ecosystem without modifying the core, ROS allows easy sharing of components across developers’ teams.

The target of this evaluation,

From a technical perspective,

The main novelty of

Developer perspective

The big picture of the

Diagram representing the

Code generator extensions: Simplest and most useful, these add new file generators to the compiler.

Validation extensions: Add new compiler validation rules via plugins.

Extraction and conversion extensions: Provide reusable mechanisms (like a Python API and model-to-model techniques with ECORE) to generate and map models.

Metamodels and DSLs extensions: Allow adding and referencing new metamodels and DSLs.

User perspective

From the user’s perspective, there are two key aspects of the

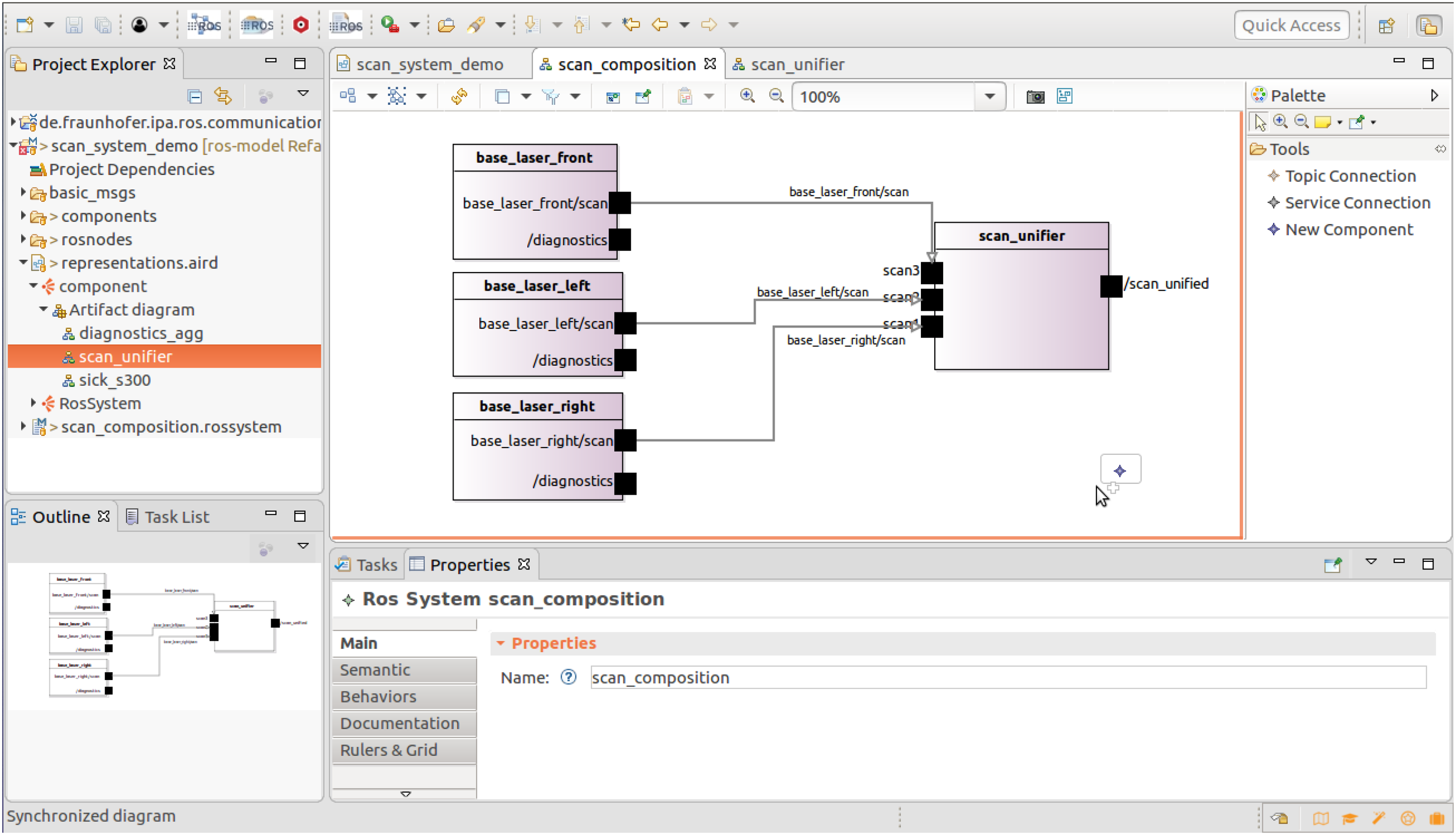

Screenshot from the

Limitations

The main limitations of this kind of approach, and specifically of the

Ambiguity and lack of structure. ROS lacks well-defined architectural abstractions like components, modules, or subsystems, which makes it hard to define meaningful, reusable models. Also, the bottom-up modeling approach results in complex, hard-to-read system models that require specialized graphical tooling to manage.

Limitations of model extraction technologies. Because of the high variability in ROS coding styles, static code analysis leads to incomplete model extraction (66% coverage). Runtime extraction is also unreliable: it cannot capture all the needed data (e.g., service calls, file-level parameters), so it may be risky or incomplete.

Inconsistency between design-time and runtime information due to dynamic naming and the set of interfaces.

Also, it must be taken into account that every bottom-up MDD approach presents several inherent limitations. This type of approach typically exhibits weak or missing support for version control systems, which complicates collaborative development and model evolution. Additionally, there is a steep learning curve for developers, especially within the ROS community, which is accustomed to rapid prototyping using familiar programing languages. The introduction of new tools can thus raise the entry barrier and slow down adoption.

Quantitative evaluation

We conducted 14 experiments on real robots (Tables 1 and 2). In all case studies, the objective was the integration of complete robotic applications from existing ROS packages, starting from the description of a collection of user stories to fulfill.

List of user stories for the manipulation use case, the number of subsystems (S), the total number of components (C), and parameters (P).

List of user stories for the mobile assistant robot use case, the number of subsystems (S), the total number of components (C) and parameters (P).

For the cases presented here and as a comparative study, the design and implementation phases of the system were performed by using

The experiments were carried out by people outside of the

Study design

The following four evaluation criteria 15,16 and their respective metrics were used for the evaluation.

Development time

Its corresponding metric is the total hours actively working on development tasks. Hours are categorized by development phase (design, implementation, testing, and deployment) to reveal the phase-specific impacts of each approach. The phases are defined as:

Design: The system architecture and detailed component designs are defined, including software choices and interface design. In our study, the set of components to be integrated is already available for both approaches: for the traditional approach, as the list of repositories that contain the code of the different components; for modeling, as models obtained automatically thanks to the extraction tools.

Implementation: Involves building and integrating the components of the system by developing any necessary software. For the MDD solution, thanks to code generators, this is partly done automatically.

Testing: The system is tested to ensure that it meets its requirements and functions as expected. Especially important for our experiments is the integration testing of the complete system. Testing in our experiments also includes the time spent debugging and fixing issues, so that we can compare the advantages of one approach over the other.

Deployment: The system is installed and made available for use by its intended users.

Additionally, time is recorded for each user story individually. All the data have been collected by calendar working day in a detailed Excel sheet.

Code generation efficiency

Code generation efficiency measures how effectively a development approach produces functional code with minimal resources, an important factor in evaluating MDD methods. 17 Frameworks that require less code to describe a system are more efficient than those needing extensive custom code.

The metric in this case is the Lines of Code (LOC). Although fewer lines can improve maintainability, LOC alone does not reflect code quality or functionality; this metric focuses on the quantity of code generated, not its quality. A tool named cloc is used to count the LOC. It supports a wide range of programing languages and is therefore able to detect blank lines and commented-out lines. In the evaluation, we only considered the lines that contain actual code.
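The distinction between blank, comment, and code lines can be illustrated with a minimal Python sketch. This is a deliberate simplification for languages that use a single-line comment prefix (such as Python or YAML); it is not cloc's algorithm, and the function name and sample file content are hypothetical.

```python
def count_code_lines(text: str, comment_prefix: str = "#") -> dict:
    """Classify each line as blank, comment, or code.

    Simplified illustration only: handles full-line comments with a
    single prefix and ignores block comments and inline comments.
    """
    counts = {"blank": 0, "comment": 0, "code": 0}
    for line in text.splitlines():
        stripped = line.strip()
        if not stripped:
            counts["blank"] += 1
        elif stripped.startswith(comment_prefix):
            counts["comment"] += 1
        else:
            counts["code"] += 1
    return counts


# Hypothetical YAML-like configuration file used only as sample input.
sample = """\
# launch configuration (hypothetical example)
robot:
  name: demo_arm

  # joint limits
  max_velocity: 1.0
"""

counts = count_code_lines(sample)
print(counts)
```

Only the `code` entry would correspond to the figure used in the evaluation, since blank and commented-out lines are excluded.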

Error traceability

Error traceability refers to the ability to identify, trace, and link errors or defects in the software back to their root cause.

To measure the error traceability, the selected metric is the resolution time as the average time to fix a runtime error. 18 Once a system is operational and a misbehavior is detected, error traceability refers to the ability to identify the source of the error. To support this process, a standardized form is used to document each error. This form categorizes errors into four main types: misspellings, incorrect system behavior, misconfigured parameters, and interface mismatches. Additionally, the time taken to resolve each error is recorded. This approach enables tracking of the effort required to address issues in terms of the working time invested.
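As a rough illustration of how such error forms could be aggregated, the following Python sketch groups records by the four categories named above and reports the total and average resolution time. The record layout and all values are hypothetical and are not data from the study.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical error records: (category, minutes to resolve).
# The values are illustrative only and do not come from the experiments.
ERROR_LOG = [
    ("misspelling", 5),
    ("misspelling", 10),
    ("interface mismatch", 20),
    ("misconfigured parameter", 15),
    ("incorrect system behavior", 30),
    ("interface mismatch", 40),
]


def resolution_time_by_category(records):
    """Return {category: (total minutes, mean minutes)}."""
    grouped = defaultdict(list)
    for category, minutes in records:
        grouped[category].append(minutes)
    return {cat: (sum(vals), mean(vals)) for cat, vals in grouped.items()}


summary = resolution_time_by_category(ERROR_LOG)
for category, (total, average) in sorted(summary.items()):
    print(f"{category}: total={total} min, mean={average:.1f} min")
```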

Portability

Portability is the ability to run the same piece of software on different hardware.

To measure portability, we analyze the relationship between the cost or effort of porting software and rewriting individual components. 19

Where

In our case, Equation 1 sums the number of lines for all user stories, showing the average portability value among them. The process definition

Use cases

Manipulation use case

For implementation, two different robotic cells were used, as shown in Figure 3. Both cells contain a robotic arm with a gripper mounted at the flange position. In addition, a RealSense 3D camera is mounted to perform perception tasks. They differ only in the robot arm manipulator, which comes from different vendors: a Universal Robot UR5e in one cell and a Pilz PRBT in the other.

Picture of both robot cells together performing the same task. Universal Robot UR5e on the left side and the Pilz PRBT on the right side.

In this study, we tackle the topic of portability and how the use of models can streamline the process. Selecting manipulation as a use case is also challenging, since it requires a high degree of configuration and parameterization. To collect enough data to draw a conclusion, we defined different user stories, ranging from simple to complex.

In total, we developed four different user stories (full description of the user stories attached as supplemental material), all of which were built for the two target hardware platforms, the Universal Robot UR5e arm and the Pilz PRBT, which means a total of eight different application designs. In all cases, the robot low-level drivers, together with the path planning software, are implemented as a subsystem that is reused among the different cases. To obtain the models corresponding to the component drivers, all of them already available on ROS 2, static code analysis tools were used as a reverse engineering method. Concretely, we made use of the HAROS framework. 14

This framework involves extracting information from the source code without executing it. Thanks to it, from code written in C++ or Python, we are able to automatically extract its related model compatible with the

For the UR5e robot, the subsystem is composed of 18 components, and for the PRBT, of 17; in both cases, this subsystem covers the low-level functionalities. Table 1 details the number of extra components added for every application and the definition of the parameters.

Figure 4 shows a simplified view of the implementation of the user story MANI-02 as it is sketched by the RosTooling.

Screenshot from the visual interface of the RosTooling showing a simplified overview of the system for the MANI-02 user story for the Universal Robot UR5e.

Extract from the ROS System DSL for the MANI-02 with the Universal Robot UR5e robot arm.

Listing 1 shows an extract from the ROSSYSTEM model, the design of the system developed using the

Mobile assistant robot use case

For this study, we used the Care-O-bot 4, 20 a robot that features an advanced and completely modular hardware setup, as shown in Figure 5. Its core is an omnidirectional mobile base that includes three powerful laser scanners. The torso and head are outfitted with multiple sensors, integrating a total of five RGB-D cameras. This robot enables, among others, navigation, perception, manipulation, and human-robot interaction functionalities. For the experiment, two setups were used: the real robot, which runs on ROS 1, and a simulation environment created in ROS 2.

Care-O-bot setup used for the experiments. The real hardware robot on the left side, and the simulation environment on the right.

With this study, we addressed several topics: first, the interoperability between middlewares; second, a design based on an existing and deployed system, which is a typical scenario in robotics, since many mobile platforms can be bought with a pre-defined installation; and lastly, the complexity of this type of robot, which enables the development of a large number of functionalities.

For this scenario, we consider two variants, the real robot in ROS 1 with bridges to ROS 2 software and the simulation in ROS 2. By defining three different user stories (Full description of the user stories attached as supplemental material), we developed six different application variations. All six were developed by using the two methods, the traditional approach, and the

The first step to using the

Extract from the ROSSYSTEM model for the MOBI-02 user story where the real robot Care-O-bot 4-25 is integrated as subsystem.

The ROSSYSTEM model extract in Listing 2 shows a part of the design with the

Collected data

Development time

Based on Figure 6, the key findings are: (1) modeling tools make design the most time-intensive phase, (2) code generation significantly reduces implementation time, cutting it from 93 hours to 40 hours (a 57% reduction), and (3) testing and debugging time is also reduced, from 29.75 to 17.75 hours (a 40.3% reduction). Overall, modeling tools shift the development focus from implementation to design. Design in this case means the formal description of the system architecture. Starting from the component models, the developer describes, either with a graphical interface or with modeling languages (DSLs), the configurable components that form the system and how they interact with each other. In our study, this means that the design consists of the creation of ROSSYSTEM files such as the examples in Listing 1 and Listing 2. Naturally, as users become more experienced with the tooling, it becomes faster and more intuitive to use, and more reusable model patterns are built.
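The reported percentages follow directly from the hour totals quoted above. A minimal Python sketch of the arithmetic (the helper name `percent_reduction` is ours, introduced only for illustration):

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, in percent."""
    if before <= 0:
        raise ValueError("'before' must be positive")
    return (before - after) / before * 100.0


# Hour totals as quoted in the text (traditional vs. model-based).
implementation = percent_reduction(93, 40)
testing = percent_reduction(29.75, 17.75)

print(f"implementation: {implementation:.1f}% less time")
print(f"testing and debugging: {testing:.1f}% less time")
```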

Total work hours taking into account all the user stories for both use cases and splitting them by development steps.

Additionally, using

Lastly, for the manipulation case,

Work hours data by splitting the number of hours required to port an application to a new hardware, the target of the manipulation use case.

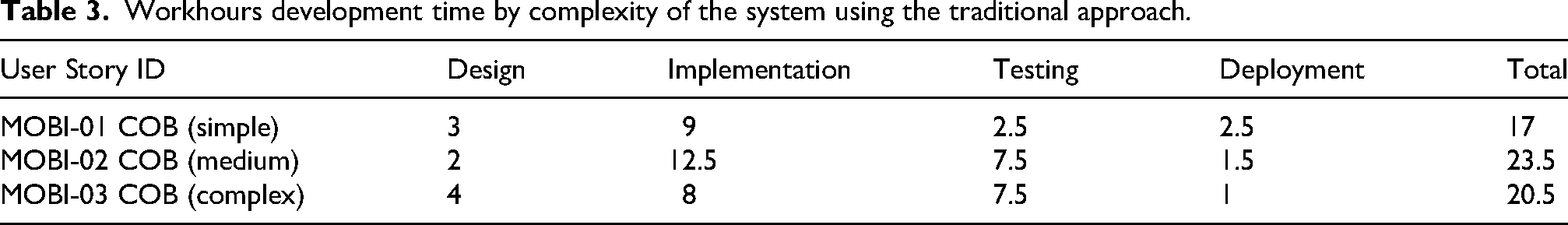

Tables 3 and 4 illustrate the development effort, measured in hours, required for various use cases across different development approaches. In the case of a simple use case, the effort is slightly higher when using modeling techniques (18 hours) compared to traditional development (17 hours). The creation of models and the associated entry barriers of the technology do not justify their use for integrating simple components. However, this scenario changes for medium-complexity systems, where the modeling approach,

Difference of the work hours metric system complexity for the mobile assistant robot use case.

Workhours development time by complexity of the system using the traditional approach.

Workhours development time by complexity of the system using the

Lines of code

Figure 9 shows the total LOC written manually, adding the data from both use cases and all the implemented user stories. With the ROSSYSTEM models and the generators, the manually written code is reduced from 5,616 LOC to 1,776 LOC, i.e., to about 31% of the original number of LOC. Also, by including subsystems in the models, it is reduced from 1,283 LOC to 290 LOC.

Total amount of lines of code manually written by both approaches by taking into account all the use cases and user stories.

In total, 6,854 lines must be written manually with the traditional approach, including Python code and build, manifest, and YAML configuration files. With the tooling, only the ROSSYSTEM model files must be written, all in YAML format, for a total of 2,066 lines, a reduction of 69.86%.

Runtime error-fixing time

Figure 10 shows the total time, in minutes, spent fixing errors across all the studied user stories.

Time counted in minutes to fix the errors found during the testing step.

The most evident reductions are seen in misspelling issues, from 30 to 10 minutes, and in mismatched interfaces, from 134 to 40 minutes. This is because the models include spelling checkers and cross-validation of the properties at design time.

Regarding the system performance, there is no difference between the two approaches, since it depends on the hardware and the quality of the components used.

In the case of fixing errors of values given to the parameters, we see that with the

Portability

Table 5 presents the development costs, measured in LOC, for creating a new user story from scratch compared to porting components from a previous development. In the mobile platform use case, the traditional approach requires 1,299 LOC to develop a new user story. By porting components from a similar application, this number decreases to 283 LOC. In contrast, the MDD approach requires 531 LOC for a new user story, which can be reduced to 76 LOC when reusing components. For the manipulation use case, which emphasizes portability, the traditional approach entails developing 1,859 LOC for a new application. Porting components reduces this to 759 LOC. Using the

Comparison of the lines of code (LOC) written to implement a new user story from scratch versus porting parts of the code for both approaches.

By using the equation presented previously, for the manipulation use case (Figure 11), the score with the

Portability score for both use cases.

For the case of the mobile assistant robot, by evaluating the portability between the simulation and the real robot environment, the resulting value by using the

In terms of portability, the use of models performs well. If the design of the system is modular, porting modules from one application to another using models is very efficient, as the system model can reuse complete modules from other applications.
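To make the relationship between porting cost and redevelopment cost concrete, the following Python sketch applies a commonly used degree-of-portability form, 1 - (porting cost / redevelopment cost). This form is an assumption on our side and may differ from the exact definition of Equation 1; the function name is hypothetical. The inputs are the LOC figures quoted in the text for Table 5, and the manipulation MDD case is omitted because its figures are not available here. The printed values are therefore illustrative and are not the scores reported in Figure 11.

```python
def degree_of_portability(port_cost: float, redevelop_cost: float) -> float:
    """Assumed form: 1 - (cost of porting / cost of redeveloping).

    Values close to 1 mean that porting is much cheaper than rewriting;
    values at or below 0 mean that porting brings no benefit.
    """
    if redevelop_cost <= 0:
        raise ValueError("redevelopment cost must be positive")
    return 1.0 - port_cost / redevelop_cost


# (LOC from scratch, LOC when porting), as quoted in the text.
LOC = {
    ("mobile assistant", "traditional"): (1299, 283),
    ("mobile assistant", "MDD"): (531, 76),
    ("manipulation", "traditional"): (1859, 759),
}

scores = {
    key: degree_of_portability(ported, scratch)
    for key, (scratch, ported) in LOC.items()
}
for (use_case, approach), score in scores.items():
    print(f"{use_case} / {approach}: {score:.3f}")
```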

Observations

MDD is characterized as a design-centric approach. As expected, design therefore gains importance in our case study: as shown in Figure 6, it is the step that requires the most time, whereas in code-centric development implementation is the step that requires the most effort. As in every MDD approach, however, a better design reduces the time spent in the remaining steps; in particular, testing time and the time to fix errors are reduced, as shown in Figure 10. Measures that could improve the efficiency of the design step include a more intuitive graphical interface or an extended catalog of components and subsystems, which would reduce the time needed to analyze the elements available for composition.

Two factors increase the time in the development of systems: on the one hand, the learning of a new tool and a new language; on the other hand, the need for a catalog, i.e., a set of models of components that can be imported and composed to create systems, which is essential for this type of solution. This can be clearly seen in Figure 8, where the

In code generation efficiency, it is evident that the use of the models brings a clear advantage (Figure 9). It reduces the amount of manually written code, which is also error-prone. It must also be noted that with the modeling solution, the user only has to learn one specific language or format, while the manually created code involves the ability to develop code in various languages.

This code generation efficiency also delivers good results in terms of portability, as shown in Figure 11. This is a very notable advantage for modular robots or serial production.

Threats to validity

In empirical evaluations, construct, internal, external, and conclusion validity are critical considerations, as established by scientific studies. 21

Construct validity concerns arise from the flexibility in operationalizing evaluation criteria, such as code generation efficiency and portability, which could be measured in terms of time but would still support the conclusion that The development was carried out by people not involved in the development of In both cases, the developers were fully dedicated to the experiments, free from the distraction of parallel tasks. The order in which the approach was performed first (with If there is a development effort is used for both, then the time is added to both approaches.

Testing on physical hardware further aimed to emulate real-world conditions. Finally, conclusion validity, which addresses risks of type I (false-positive) or type II (false-negative) errors, was safeguarded by using multiple metrics to inform a reliable statement in the observations.

Qualitative evaluation

For this study, we encouraged people who are not involved in the development of the tool to experiment and use it.

We created material on how to test all relevant features and conducted hands-on experiments with two different groups of developers. In both cases, the target was professionals working on research projects related to the development of software for robotic applications.

Both groups were given a brief theoretical introduction to model-driven programing. Then participants had two hours to experiment and carry out different examples and tutorials. Finally, the attendees filled out a survey, the results of which are detailed here.

Design

We combined GQM and SUS approaches. GQM and SUS cover broad and deep aspects of software evaluation. GQM’s structured approach ensures that all relevant aspects of the software engineering process are considered, while SUS provides detailed usability data that can highlight specific areas for improvement.

The goals we aim to cover with our study are:

G1: Identify the benefits of using RosTooling during the development of robotic software systems.

G2: Identify where RosTooling is most valuable in the development and lifecycle of a system.

G3: Evaluate the usefulness of ROS modeling. Analyzing the usefulness of a software tool ensures that it effectively meets user needs and justifies the investment of time and resources in its adoption or development.

G4: Evaluate the ease of use of models and the

G5: Evaluate the acceptance of model-based techniques over ROS existing code. Analyzing the acceptance of a software tool by a user group is crucial to determine whether it will be adopted in practice and integrated into users’ workflows.

For clarity and consistency, the questions (Q1–Q34) (Questionnaire attached as supplemental material) have been organized into different sections:

S1 (Q1-Q4) Profiling questions

S2 (Q5-Q7) Models evaluation

S3 (Q8-Q14) Ease of use

S4 (Q15-Q25) Usability

S5 (Q26-Q29) Comparative evaluation with existing methods

S6 (Q30-Q31) Future usage intentions

S7 (Q32-Q34) Open feedback

The questions can be mapped to the goals in the following way:

G1: Q22, Q23, Q24, Q26, Q27, Q28, Q29

G2: Q21, Q22, Q23, Q25

G3: Q15-Q25, Q26-Q29

G4: Q8, Q9, Q10, Q11, Q12

G5: Q26-Q29, Q30-Q31

To simplify data collection and keep the data manageable, all the questions, excluding the profiling and open feedback ones, are answered on a 5-point Likert scale, where “1” means strongly disagree and “5” means strongly agree.

To complete the GQM method, the last aspect is the definition of the metrics:

M1: General average of all the questions, i.e., all the answers Q5-Q31.

M2: Average of all the questions in S2, evaluation of the models.

M3: Average of all the questions in S3, ease of use.

M4: Average of all the questions in S4, usability.

M5: Average of all the questions in S5, comparison with traditional methods.

M6: Average of all the questions in S6, future use intentions.

M7: Average of all the questions related to G1.

M8: Average of all the questions related to G2.

M9: Average of all the questions related to G3.

M10: Average of all the questions related to G4.

M11: Average of all the questions related to G5.

M12: Score formula from the SUS method, applied to its related questions.
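The section-based averages can be sketched in a few lines of Python. The question ranges below are the ones listed for the sections on models evaluation (Q5-Q7) through future usage intentions (Q30-Q31); the response layout, the function names, and the two respondents are hypothetical and only illustrate the computation.

```python
from statistics import mean

# Question numbers per questionnaire section, as listed in the text.
SECTIONS = {
    "models evaluation": range(5, 8),      # Q5-Q7
    "ease of use": range(8, 15),           # Q8-Q14
    "usability": range(15, 26),            # Q15-Q25
    "comparison": range(26, 30),           # Q26-Q29
    "future usage": range(30, 32),         # Q30-Q31
}


def section_averages(responses):
    """Average Likert score per section over all respondents.

    `responses` is a list of dicts mapping question number -> score (1-5).
    """
    result = {}
    for name, questions in SECTIONS.items():
        scores = [r[q] for r in responses for q in questions if q in r]
        result[name] = mean(scores) if scores else None
    return result


def overall_average(responses):
    """General average over Q5-Q31 with equal weight per answer."""
    scores = [r[q] for r in responses for q in range(5, 32) if q in r]
    return mean(scores)


# Two hypothetical respondents (not data from the survey).
respondent_a = {q: 4 for q in range(5, 32)}
respondent_b = {q: (3 if q <= 14 else 5) for q in range(5, 32)}
responses = [respondent_a, respondent_b]

averages = section_averages(responses)
overall = overall_average(responses)
print(averages)
print(round(overall, 2))
```

The goal-based averages would follow the same pattern, with the question lists taken from the goal-to-question mapping instead of the sections.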

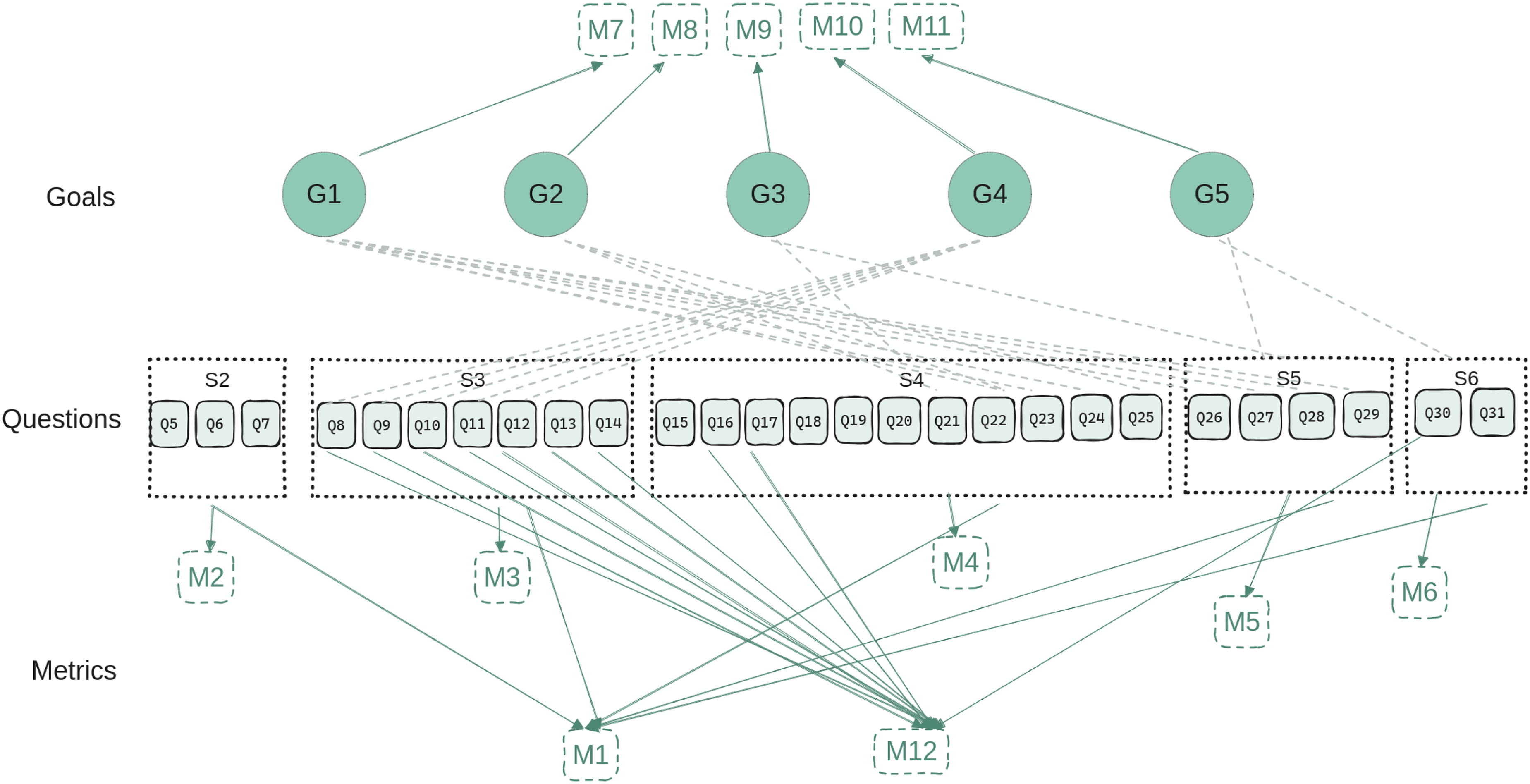

As a representation of how they are related to each other, Figure 12 shows the mapping between the goals, the questions, and the metrics.

Design of the goal question metric (GQM) methods. The diagram shows the relations between the selected goals, questions and metrics.

Collected data

With this experiment, we collected a total of 15 responses. For the analysis of the data, all the answers are divided into the profiles ROS experts (people with more than five years of experience with ROS), system integrators (SIs), and architecture designers (AD).

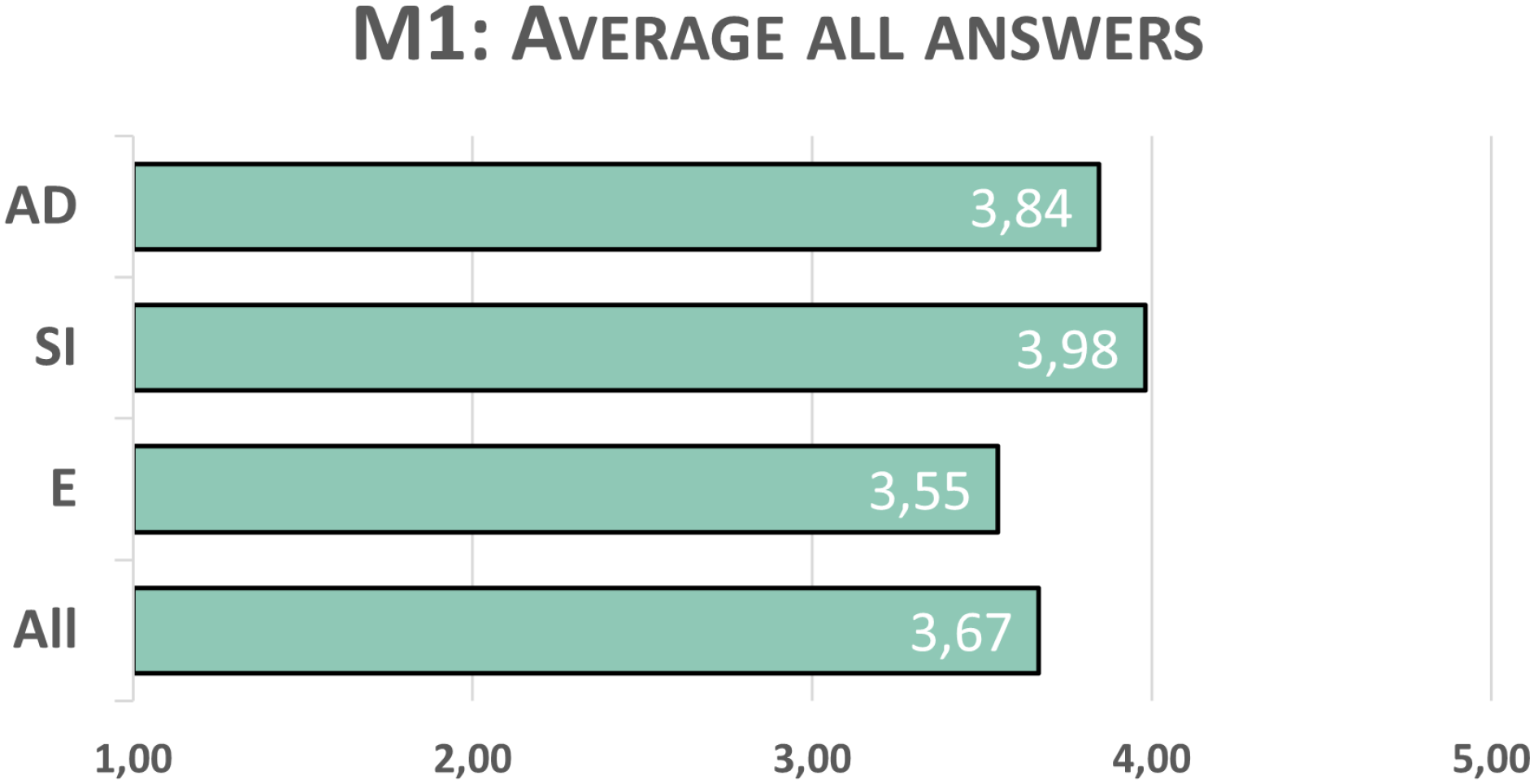

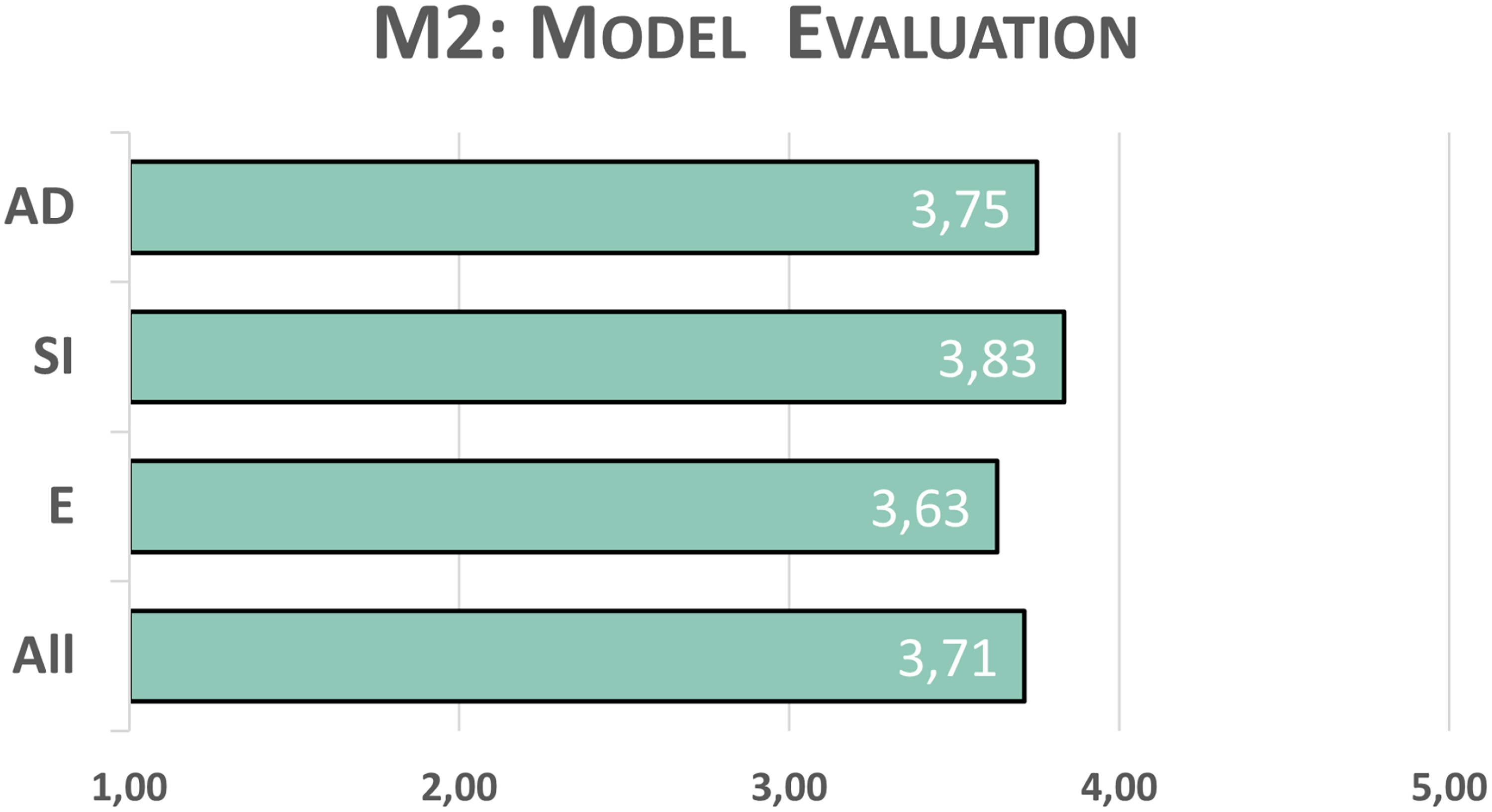

Considering all the data obtained and giving all the questions of the survey the same weight, a broad overview of the data obtained is presented in Figure 13.

Results obtained for the M1, i.e., considering the answers for all the questions, and all with the same weight.

In general terms, we can see that the profile with the highest degree of satisfaction with the solution we provide is the system integrators (SIs). This certainly makes sense, since they are the target audience of the

Next are the AD. This profile is very interesting because they are the people who really understand the need to make a well-documented design from the beginning of the development process.

For the evaluation of the models, we take into account factors like model completeness, which measures how well models capture the entire system, model consistency, to check for coherence, preventing misunderstandings and errors in the development process, and model clarity, to assess readability.

Using a 1–5 scale, 1 means that models are unclear, difficult to interpret, and fail to accurately or precisely represent the structure and behavior of a ROS system. Important properties and nuances may be missing or misrepresented. 5 implies that the models are clear, well-structured, and easy to interpret. They accurately and precisely capture the key properties of ROS systems.

In this aspect, the results are very similar for all the profiles, between 3.63 and 3.83, as shown in Figure 14. The responses highlight the clarity of the models and their accuracy in capturing the properties. The lowest rating is found for the accuracy in capturing nuances of the software.

Results obtained for the M2. Answers related to the completeness, consistency, and clarity of the models.

The next two metrics evaluate the ease of use and the usability of the technical solution. For the evaluation of ease of use, we have two metrics,

On the 1–5 scale, a score of 1 means that the tooling is very difficult to use, with a steep learning curve and high complexity. Users need assistance, find it cumbersome or unmanageable, and lack confidence when using it. The tool’s interface and workflow are unintuitive, making even basic tasks frustrating and inefficient. A score of 5 means that the tooling is very easy to use and has an intuitive design. Users feel confident and independent. The tooling is well-organized, manageable, and does not overwhelm users with unnecessary complexity or excessive learning requirements.

Looking at the comparison in Figure 15, it is clear that the ROS experts are the ones who give the lowest score for ease of use, we obtain here the most negative metric of our whole survey (3.33 for

Comparison of M3 and M10, ease of use considering learnability and without it, and of M4 and M9, usability in terms of the typical aspects of software engineering tools and taking into account its benefits on top of robot operating system (ROS).

For the usability scale, from 1 to 5, 1 means that the tooling negatively affects development as it restricts how the developer wants to work, feels hard to integrate, or lacks coherence. Features may feel disconnected or unhelpful, offering little value during software design or coding. Conversely, 5 means it integrates smoothly, fits the developer workflow, and adds clear value. Features are consistent, intuitive, and helpful for design, implementation, and collaboration.

In Figure 15, we observe that, in general, and most evidently in the case of ROS experts, usability is scored higher than ease of use. For SIs, the average is slightly higher when usability is considered relative to the current process in ROS (3.99 vs. 3.96), while the trend for the rest of the profiles is the opposite. In either case, the differences are not very significant.

The

Results obtained for the M6, i.e., the future use intentions.

With the

The models can be better understood compared to manually written packages (4/5 for all, and 4.8/5 for SIs).

The communication between developers is reduced by using models (3.73/5 for all, and 4.33/5 for SIs).

The system software is better documented than typical manually written launch packages (3.72/5 for all, and 3.8/5 for SIs).

When configuring the system, the amount of changes inside the code is reduced (3.54/5 for all, and 3.8/5 for SIs).

The code generator makes implementation and deployment easier (3.4/5 for all, and 3.83/5 for SIs).

The amount of system configuration is reduced compared to manual development (3.27/5 for all, and 3.8/5 for SIs).

The approach reduces the validation and testing efforts (3.26/5 for all, and 4.16/5 for SIs).

The next metric,

Answers related to the M8 metric, the relation between the

AD: architecture designers; SI: system integrators.

Figure 17 shows the average values for

Results obtained for M11, the acceptance from the robot operating system (ROS) community.

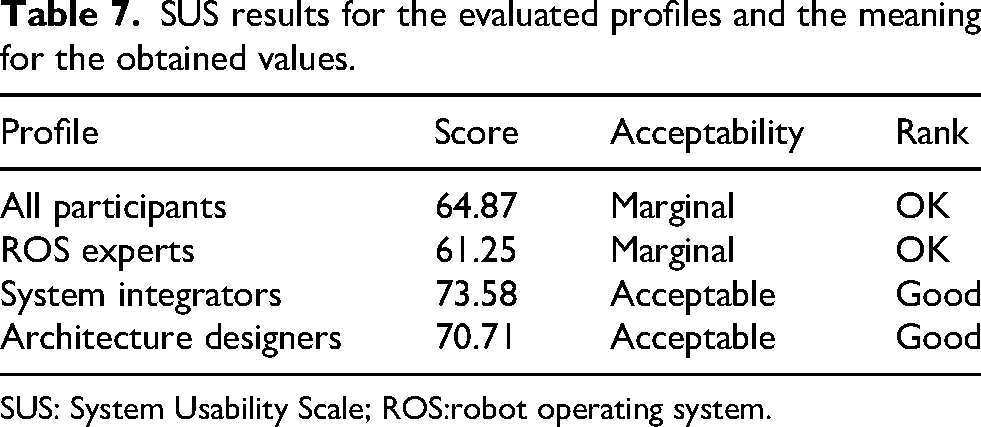

Lastly, we calculate the SUS score by applying its standardized equation. 8

The result is a number between 0 and 100. A score between 50 and 70 indicates that the acceptance of the surveyed solution is marginal, and a score over 70 is considered acceptable. Table 7 shows the resulting scores for the

SUS results for the evaluated profiles and the meaning for the obtained values.

SUS: System Usability Scale; ROS: robot operating system.
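The SUS computation and the acceptance bands described above can be sketched as follows. This is a minimal illustration that assumes the standard ten-item SUS questionnaire answered on a 1 to 5 scale, where odd-numbered items are positively worded and even-numbered items are negatively worded. The function names and the label used for scores below 50 are assumptions made for this example and are not taken from the study.

```python
from statistics import mean


def sus_score(responses):
    """Return the SUS score (0-100) for one respondent's ten answers (1-5 scale)."""
    if len(responses) != 10:
        raise ValueError("SUS expects exactly 10 item responses")
    if any(answer < 1 or answer > 5 for answer in responses):
        raise ValueError("each response must be between 1 and 5")
    total = 0
    for item_number, answer in enumerate(responses, start=1):
        if item_number % 2 == 1:
            # Odd items are positively worded: contribution is (answer - 1).
            total += answer - 1
        else:
            # Even items are negatively worded: contribution is (5 - answer).
            total += 5 - answer
    # The summed contributions (0-40) are scaled to the 0-100 range.
    return total * 2.5


def mean_sus(all_responses):
    """Average the per-respondent SUS scores of one profile group."""
    return mean(sus_score(responses) for responses in all_responses)


def acceptance_band(score):
    """Map a SUS score to the bands mentioned in the text."""
    if score > 70:
        return "acceptable"
    if score >= 50:
        return "marginal"
    # The text does not name this band; the label is an assumption.
    return "below marginal"


if __name__ == "__main__":
    neutral = [3] * 10  # a respondent who answers "neutral" everywhere
    print(sus_score(neutral), acceptance_band(sus_score(neutral)))
    print(acceptance_band(73.58))  # the SI score reported in the text
```

Because every item contributes between 0 and 4 points, a fully neutral respondent lands exactly on the lower edge of the marginal band, which is one reason scores near 50 are hard to interpret.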

Observations

G1: Identify the benefits of using RosTooling during the development of robotic software systems. (M7)

The three highest-rated options (see M7 results) relate to understandability, the reduction of the gap between the different members of the team, and documentation. All three points relate to process optimization.

Understandability and improved communication between the members of the team ensure a more efficient workflow and foster collaboration. Effective documentation serves as a reference that standardizes processes and provides consistency, which reduces the time spent on clarifications and retraining and also eases the maintenance of the system.

The lower scores are assigned to the items related to technical tools that reduce the programmer’s effort. It is well known that the ROS community, used to programming code by hand, generally tends not to appreciate code generators. Therefore, the answers are not a big surprise. Moreover, everything related to technical tools is evaluated in the previous section, the quantitative evaluation.

Nevertheless, the SIs rate very positively the validation performed during the design of the composition of the systems.
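The ranking behind these observations can be reproduced from the M7 averages reported earlier. The sketch below is only an illustration: the dictionary keys are shorthand labels invented here for the seven M7 statements, and the values are the reported averages for all participants and for SIs.

```python
# Shorthand label (an assumption of this example) -> (average for all, average for SIs),
# copied from the reported M7 results.
M7_SCORES = {
    "understandability": (4.00, 4.80),
    "communication": (3.73, 4.33),
    "documentation": (3.72, 3.80),
    "fewer code changes": (3.54, 3.80),
    "code generation": (3.40, 3.83),
    "less configuration": (3.27, 3.80),
    "validation and testing": (3.26, 4.16),
}


def top_rated(scores, n=3):
    """Return the n statements with the highest average over all participants."""
    return sorted(scores, key=lambda label: scores[label][0], reverse=True)[:n]


def largest_si_gap(scores):
    """Return the statement where SIs score furthest above the overall average."""
    return max(scores, key=lambda label: scores[label][1] - scores[label][0])


if __name__ == "__main__":
    print(top_rated(M7_SCORES))
    print(largest_si_gap(M7_SCORES))
```

With these values, the three top-rated statements are understandability, communication, and documentation, and the statement with the largest difference between SIs and all participants is the reduction of validation and testing efforts.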

G2: Identify where RosTooling is most valuable in the development and lifecycle of a system. (M8)

The obtained answers (see Table 6) clearly show the value of using models, and a modeling-based tool, for the design of the system. Modeling, thanks to its visual representation, improves the overview of the system, its analysis, and its documentation. It also improves the consistency of the assets, by providing a blueprint to be followed by the developers, and, to some extent, the reliability of the composition, by checking the validity of the connections and interfaces.

G3: Evaluate the usefulness of ROS modeling. (M9)

The fact that the SIs gave a fairly high usability score (3.99/5, see M9 in Figure 15), which is also reflected in the SUS scale (73.58/100, see Table 7), indicates that the current development of the

G4: Evaluate the ease of use of models and RosTooling. (M10)

In this aspect we must make a disclaimer,

G5: Evaluate the acceptance of model-based techniques over ROS existing code. (M11)

Regarding acceptance (Figure 17), we see that the use of models during the design step is widely accepted, both as an artifact that improves the understanding between the members of the team and as documentation of the system.

Acceptance is lower for the implementation. This also fits the data divided by profile: integrators and architects give more importance to the design and therefore see that the use of models adds value to their work.

In ROS, the implementation is mostly done by hand. The experts, used to hand-crafted code, do not favor code generators because they add rigidity to the implementation, which is the root of the lower acceptance.

Threats to validity

To address the threats to validity in our survey, we assess construct, internal, and external validity following standard classification guidelines. 21 For construct validity, we utilized the GQM methodology to ensure questions directly mapped to evaluation goals, acknowledging that our metrics relied on averaging responses. To enhance goal alignment, questions were crafted clearly and concisely, and we analyzed combinations of metrics to better capture goal-related aspects. Internal validity was maintained by limiting introductory materials to theoretical overviews, allowing participants to independently explore the tools and benefits without external influence. Although the 5-point Likert scale tends toward neutral responses, we adopted it as part of the SUS scale, widely validated in terms of comprehension and response rate. For external validity, recognizing that the solution targets robotics practitioners, we conducted the experiments within European robotics project teams, drawing participants from both academia and industry. Participants brought diverse profiles and expertise, representing fields such as drone, service, logistics, and industrial robotics, as well as developers of application-agnostic architectures.

Conclusion

Based on the observations from our studies, we can conclude that the proposed MDD solution, the

The tool also facilitates collaboration and standardization through its modeling approach. Visual models improve team communication, helping bridge the gap between members with varying expertise. They also serve as comprehensive documentation artifacts, streamlining processes and easing maintenance. However, the usability of

Adoption of

It is worth noting that conducting the same experiment using additional robotic system development tools would be beneficial to enable broader comparisons beyond just

In summary, MDD solutions demonstrate significant potential to enhance robotic software development through better design practices, improved collaboration, and increased portability. To fully realize their benefits, future efforts should focus on improving usability, expanding model libraries, and promoting cultural shifts within the ROS community to embrace model-based techniques.

Supplemental Material

sj-pdf-1-arx-10.1177_17298806251363648 - Supplemental material for Evaluation of a model-driven approach for the integration of robot operating system-based complex robot systems

Supplemental material, sj-pdf-1-arx-10.1177_17298806251363648 for Evaluation of a model-driven approach for the integration of robot operating system-based complex robot systems by Nadia Hammoudeh García, Yuzhang Chen, David Lieb and Andreas Wortmann in International Journal of Advanced Robotic Systems

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This article is supported by the CORESENSE project with funding from the European Union’s Horizon Europe research and innovation programme (Grant Agreement No. 101070254). The authors of the University of Stuttgart were partly funded by the Ministry of Science, Research and Arts of the Federal State of Baden-Württemberg within the Innovations Campus Future Mobility (ICM).

Declaration of conflicting interest

The authors declare that there are no conflicts of interest regarding the publication of this manuscript.

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request. 22

Supplemental material

Supplemental material for this article is available online.

References
