Abstract

Mobile robots often follow humans in warehouse environments or indoor office spaces. Such a robot requires a control system that follows humans stably without colliding with them. A human-following system consists of target detection, target tracking, and control. Conventional 2D laser-based human-following systems use 2D planar laser data to detect human legs through machine learning methods such as support vector data description and random forest. However, in crowded or cluttered environments, 2D LiDAR data lacks the features needed to distinguish human legs from obstacles, which leads to false-positive detections. Recent studies using 3D LiDAR have mounted sensors at an elevated height to measure the overall shape of a person and extract features. On typical mobile robots, however, the sensor is mounted low on the platform because of sensor vibration, so these approaches require additional sensors and therefore add cost. This study proposes a 3D laser-based human leg detection and tracking framework to improve the robustness of human following for autonomous mobile robots. Our method detects human legs using a LiDAR sensor attached at a low position, without additional sensors. With a deep learning-based human leg detector built on the Point-Voxel-RCNN model, the proposed 3D human leg tracking system can help robots robustly follow humans in cluttered and crowded environments. Additionally, we demonstrate the robustness of our method in a practical cluttered environment by comparing its performance with that of a conventional human leg detection and following system.

Introduction

Autonomous mobile robots are used in diverse fields and have a wide range of applications. Many industries leverage human–robot interaction, and robots contribute significantly to increasing work productivity or supplementing human capabilities.1–4 Many sensors and techniques can help robots recognize objects and people around them. The most commonly used sensors are vision and laser sensors, for example, cameras and light detection and ranging (LiDAR). Recently, Miao and Liu 5 and Gelfert 6 proposed detecting people using only cameras. However, vision sensors are sensitive to environmental conditions such as changes in lighting: low light and motion blur in dynamic environments degrade image quality. Laser sensors such as LiDAR are less accessible than vision sensors; however, they measure distances accurately and, unlike cameras, remain robust across a wide range of environmental conditions.

Many studies have explored how human-following robots can detect and track people using various sensors.7–9 Yuan et al. 7 constructed a tracking system that integrates information from vision sensors and laser sensors based on the particle filter. This system enables a mobile robot to follow a target human. Wu et al. 8 designed a module that enables a mobile robot to successfully follow specific objects, such as other robots or humans, using an ultrahigh-frequency radio frequency identification (RFID) device. However, RFID devices have the inconvenience of requiring special labels to be attached to the objects to be followed. Many studies have detected human legs by assuming certain shapes in order to recognize humans. However, human legs observed by 2D laser sensors are hard to classify because their 2D shape is similar to that of obstacles such as chairs. Xavier et al. 10 assumed the shape of human legs to be an arc of a circle and calculated geometric features, such as the radius and the inscribed angle of the circle, for detection. Studies11–15 analyzed not only the geometric shape of the legs but also other information within the leg data to classify human legs. These studies comprised two main steps to classify human legs. Chung et al. 11 computed the width, depth, and girth of the leg data and learned the distribution of these features using support vector data description. 16 Li et al. 12 defined the spatial relationship of geometric information and learned different types of features using AdaBoost 17 to improve system performance by reducing the false-positive detection rate in unavoidable situations such as occlusion. Leigh et al. 13 used geometric information in laser clusters to train random forest (RF) classifiers that separate human leg clusters from non-human leg clusters. Wang et al. 14 proposed an adaptive-switch decision tree design that improves on the standard RF by targeting the false-positive detections that arise under various noise conditions. The authors achieved this by differentiating between noise-sensitive features and non-noise-sensitive features. Despite the many studies using 2D LiDAR, 2D LiDAR data has limitations for detecting human legs: it lacks features that distinguish human legs from obstacles. In human-following systems, insufficient detector performance leads to unreliable tracking.

Deep learning-based object detection methods using 3D laser data can be broadly classified into two categories: single-stage detectors and two-stage detectors. Single-stage detectors calculate the class and location of an object simultaneously and have a computational time low enough to ensure real-time operation.18,19 Unlike single-stage detectors, two-stage detectors20,21 require two steps to detect objects. First, they generate regions of interest (RoIs) through a region proposal network to indicate locations in the input data with a high probability of object presence. In the second step, class prediction and box regression are performed using the generated RoIs.

Object detection methods using 3D laser point cloud data can be further classified into point-based and grid-based methods. Conventional deep learning frameworks are well suited to ordered input data such as 2D images or 3D voxels. Qi et al. 22 proposed a novel deep learning framework for object classification and segmentation that takes unordered 3D raw point cloud data as input. While point-based methods encode spatial information from 3D raw point cloud data and detect objects using the PointNet framework,22,23 grid-based methods use data preprocessing to convert 3D raw point cloud data into ordered data, such as 3D voxels or a 2D bird's-eye view (BEV) feature map, for conventional deep learning frameworks. Shi et al. 21 proposed the Point-Voxel RCNN (PV-RCNN), a framework that integrates grid-based and point-based methods and showed state-of-the-art detection accuracy in autonomous driving environments. The PV-RCNN model 21 has been used to detect the shape of 3D human legs from 3D raw laser data with the aim of developing a system that enables a human-following robot to robustly track people. However, because of the heavy computational burden of the PV-RCNN model, it is challenging to use for real-time detection.

With the increase in access to 3D laser sensors, many studies are targeting object detection using 3D laser data.24–26 Chen et al. 26 use a support vector machine to detect humans from 3D laser sensor data. However, in typical mobile robots, the sensors are placed at a low position due to vibration problems. Since the method described by Chen et al. 26 requires point data from both the body and the legs, additional sensors must be installed at a higher position, which adds cost. Jia and Leibe 27 proposed Person-MinkUNet, a 3D person detection network based on the Minkowski Engine and the U-Net architecture that applies submanifold sparse convolution. Yan et al. 28 introduced an online learning system for human classification by mobile robots using 3D LiDAR sensors, requiring minimal labeled data and leveraging real-time clustering and automated sample generation. The RPEA network 29 features a Residual Path architecture to retain spatial information and an efficient Channel Attention module to suppress noise in 3D point clouds, improving detection accuracy. However, recent studies28,29 have focused on identifying people using their overall body shape. On a typical mobile robot, the LiDAR sensor is generally mounted at a height between 0.35 and 0.45 m from the ground. To interact, people and mobile robots need to be within 2 m of each other. At ranges within 2 m, the LiDAR typically measures only the person's legs. In this study, we propose a 3D human leg tracking system using the adaptive search space PV-RCNN model. The real-time performance of the PV-RCNN model was improved by reducing its computational time using a target pose-based search space adjustment method. We also adopted open-loop control to enable the mobile robot to follow the target in real time. Our main contributions are summarized as follows.

We used the PV-RCNN model to learn the 3D human leg shape and improved the human leg detection performance of the human leg tracking system for mobile robots. To reduce the computational cost of the PV-RCNN deep learning model and improve its real-time detection capability, we implemented a target pose-based search space.

3D human leg tracking system using the adaptive search space PV-RCNN model

This study proposes a human leg detection and tracking system using PV-RCNN. Figure 1 shows a flowchart of the proposed human tracking system, which consists of three major processes: (a) the detector, (b) the tracker, and (c) the motion controller of the mobile robot. The detector uses a PV-RCNN-based deep learning model, pre-trained on collected human leg data, to detect human legs in the input data. The detections are then passed to the tracker, where they undergo a series of tracking processes that ultimately update the tracking data. After the target to be tracked is identified, the velocity of the mobile robot is calculated from the positional information to control the robot and ensure that it safely follows the target.

3D human leg tracking system framework.

3D human leg detector

The PV-RCNN model is limited by an inference time that is too slow for real-time object detection. To address this, we implemented a preprocessing step before the raw point cloud enters PV-RCNN. This preprocessing step reduces the computational burden while ensuring robustness, thereby enabling real-time object detection.

Figure 2 shows a flowchart of the preprocessing of 3D raw point cloud data to reduce the inference time of the PV-RCNN model when detecting human legs. The initial search space is defined by setting a boundary in front of the robot. When a target human is detected, the boundary is reset around the location of this target. If detection fails, the search space expands back to the initial boundary to re-identify the target human. By configuring the adaptive search space, we reduced the amount of raw point cloud data that required computation, thereby improving real-time detection using the PV-RCNN model. Furthermore, setting the search space around the detected target enabled robust tracking.

3D point cloud preprocessing flow chart.

Figure 3 illustrates an example of the search space. The left image represents the search space when no human is present, and the right image demonstrates the reduction of the search space when a target human is detected. Since the robot follows the person in front of it, the initial search space is defined as a square relative to the robot's forward direction. When a person is detected, the search space is adjusted to a circular shape based on the center position coordinates of the person calculated by the network. The circular search space is smaller than the square search space, resulting in lower computational costs. PV-RCNN accepts 3D laser data as input, which is then voxelized. Using 3D sparse convolution layers, the downsampled voxels are transformed into a 2D BEV feature map. Thereafter, a 3D RoI is determined and then refined using keypoints, and a bounding box is ultimately output.

Example of search space. (a) Front view search space. (b) Target pose-based search space.
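The switching between the front-view and target pose-based search spaces described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the class name `AdaptiveSearchSpace`, the region sizes, and the robot-frame convention (x pointing forward) are all assumptions introduced here.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in the robot frame, x pointing forward (assumed)


@dataclass
class AdaptiveSearchSpace:
    # All sizes are illustrative assumptions, not values reported in the paper.
    front_length: float = 4.0   # side length of the square region ahead of the robot (m)
    target_radius: float = 1.0  # radius of the circular region around the target (m)
    target_xy: Optional[Tuple[float, float]] = None  # last detected target center, if any

    def update(self, detection_xy: Optional[Tuple[float, float]]) -> None:
        """Center on the target after a detection; revert to the initial region on failure."""
        self.target_xy = detection_xy

    def contains(self, p: Point) -> bool:
        x, y, _ = p
        if self.target_xy is None:
            # Initial search space: square in front of the robot.
            half = self.front_length / 2.0
            return 0.0 <= x <= self.front_length and -half <= y <= half
        # Target pose-based search space: circle around the last detection.
        tx, ty = self.target_xy
        return math.hypot(x - tx, y - ty) <= self.target_radius

    def crop(self, cloud: List[Point]) -> List[Point]:
        """Keep only the points that would be passed on to the detector."""
        return [p for p in cloud if self.contains(p)]
```

In use, `crop` would be applied to each incoming scan before inference, and `update` would be called with the detected target center (or `None` when detection fails), so that fewer points reach the detector while the target is being tracked.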

Figure 4 depicts a simplified illustration of the process by which the PV-RCNN model detects human legs from 3D laser data. The mobile robot can recognize humans using the designed detector, which collected preprocessed data

PV-RCNN framework. 19

The

Tracking of multiple human legs

The proposed 3D laser-based human leg tracking system was designed using a simple and basic tracker. While the performance of the tracker is important for the overall performance of the human tracking system, it is the performance of the detector, whose output updates the tracked data, that determines the robustness of the entire system. Therefore, by using the highly accurate PV-RCNN model, we were able to design a robust human tracking system using only a basic tracker. The tracker combines a Kalman filter for predicting the future state of the tracking data and a Global Nearest Neighbor (GNN) data association method for solving the data association problem between the detected data and tracked data.
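As an illustration of the prediction step, a constant-velocity Kalman filter for a single coordinate can be sketched as below. The motion model, the noise variances `q` and `r`, and the class name `AxisKalman` are assumptions made for this sketch; the state model actually used by the tracker is not specified in the text above.

```python
from dataclasses import dataclass


@dataclass
class AxisKalman:
    """Constant-velocity Kalman filter for one axis; state is [position, velocity]."""
    pos: float
    vel: float = 0.0
    # Covariance entries; the initial values are illustrative assumptions.
    p00: float = 1.0
    p01: float = 0.0
    p10: float = 0.0
    p11: float = 1.0
    q: float = 0.01  # assumed acceleration noise variance
    r: float = 0.01  # assumed measurement noise variance

    def predict(self, dt: float) -> None:
        # x <- F x with F = [[1, dt], [0, 1]]
        self.pos += dt * self.vel
        # P <- F P F^T + Q, with Q from a white-noise acceleration model
        p00 = self.p00 + dt * (self.p10 + self.p01) + dt * dt * self.p11
        p01 = self.p01 + dt * self.p11
        p10 = self.p10 + dt * self.p11
        p11 = self.p11
        self.p00 = p00 + self.q * dt ** 4 / 4.0
        self.p01 = p01 + self.q * dt ** 3 / 2.0
        self.p10 = p10 + self.q * dt ** 3 / 2.0
        self.p11 = p11 + self.q * dt ** 2

    def update(self, z: float) -> None:
        # Only the position is measured, so H = [1, 0].
        innovation = z - self.pos
        s = self.p00 + self.r
        k0, k1 = self.p00 / s, self.p10 / s
        self.pos += k0 * innovation
        self.vel += k1 * innovation
        # P <- (I - K H) P, computed from the pre-update covariance
        p00, p01 = self.p00, self.p01
        self.p00 = (1.0 - k0) * p00
        self.p01 = (1.0 - k0) * p01
        self.p10 = self.p10 - k1 * p00
        self.p11 = self.p11 - k1 * p01
```

A planar track would hold one such filter per axis, calling `predict` at every timestep and `update` only when a detection has been associated with the track.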

The GNN method is used to perform the data association process between detected data and tracked data in a 2D human leg tracking system. 13
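A minimal sketch of GNN data association is given below. It searches all assignments exhaustively, which is adequate only for a handful of tracks; the Euclidean cost, the gate distance, and the function name `gnn_associate` are assumptions for illustration and are not taken from the paper or from Leigh et al. 13

```python
import itertools
import math
from typing import Dict, List, Optional, Tuple

XY = Tuple[float, float]


def gnn_associate(tracks: List[XY], detections: List[XY], gate: float = 0.5) -> Dict[int, int]:
    """Return {track_index: detection_index} minimizing the total distance.

    A pair farther apart than `gate` is never matched. Leaving a track
    unmatched costs `gate`, so any in-gate match is preferred over no match.
    """
    n_tracks = len(tracks)
    # Pad with None slots so that every track may remain unmatched.
    slots: List[Optional[int]] = list(range(len(detections))) + [None] * n_tracks
    best_cost, best_assignment = math.inf, {}
    for perm in itertools.permutations(slots, n_tracks):
        cost, assignment = 0.0, {}
        for ti, di in enumerate(perm):
            if di is None:
                cost += gate
                continue
            d = math.dist(tracks[ti], detections[di])
            if d > gate:
                cost = math.inf  # assignment violates the gate; discard it
                break
            cost += d
            assignment[ti] = di
        if cost < best_cost:
            best_cost, best_assignment = cost, assignment
    return best_assignment
```

Unlike a greedy nearest-neighbor match, this chooses the assignment with the lowest total cost over all tracks at once. For larger problems, an assignment solver such as the Hungarian algorithm would replace the exhaustive search.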

At the current timestep

For a mobile robot to robustly follow a human, it is essential to have management tasks that create and remove tracking data using detection data. Approaches such as tracking all detection data as humans or continuously tracking and maintaining tracking data that has not been detected for a certain period can compromise the robustness of the system, as we cannot always be confident that the output data from the detector is reliable. Therefore, to ensure the robustness of the tracking system, if detection occurs

To match the tracking data at timestep

Motion control of a mobile robot for target-following

To safely and robustly follow a target, a mobile robot must define a safe distance

Posture error between the mobile robot and the target human from the target posture.

The velocity

Experiments and results

The proposed deep learning-based 3D human leg tracking system addresses the performance degradation of 2D data-based human leg tracking systems caused by increased false-positive detections in cluttered environments. To evaluate the system, we conducted human tracking experiments in both clear and cluttered environments. We compared the leg detection performance of our proposed method with that of the method of Leigh et al. 13 That method builds on the leg detector package of the Robot Operating System (ROS) 30 and trains an RF classifier on the target features to detect the target; it reduces the false-positive rate compared with the ROS leg detector package. 30 We used a Velodyne VLP-16 3D LiDAR to obtain 3D point cloud data, which was processed on a laptop to conduct the human leg tracking and robot following experiments. Figure 6 shows the mobile robot equipped with the 3D LiDAR and laptop. The laptop had an Intel Core i7-7700HQ CPU and an Nvidia GeForce GTX 1070 GPU.

Mobile robot with 3D LiDAR.

Evaluation metrics of human leg detection

Precision and recall, widely used as evaluation metrics for detection performance, were computed to evaluate the performance of the detector. Precision indicates how accurate the detection results are, and recall indicates how well the detector finds actual objects without omitting any. Equations (4) and (5) were used to calculate precision and recall, respectively, from the numbers of true-positive (TP), false-positive (FP), and false-negative (FN) detections.
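For reference, the standard definitions of the two metrics, precision = TP / (TP + FP) and recall = TP / (TP + FN), can be computed as in the short sketch below. The function name and the zero-division convention are assumptions for illustration.

```python
from typing import Tuple


def precision_recall(tp: int, fp: int, fn: int) -> Tuple[float, float]:
    """Return (precision, recall) from detection counts.

    Returns 0.0 for a metric whose denominator is zero (an assumed convention).
    """
    precision = tp / (tp + fp) if (tp + fp) > 0 else 0.0
    recall = tp / (tp + fn) if (tp + fn) > 0 else 0.0
    return precision, recall
```

For example, with hypothetical counts of 8 true positives, 2 false positives, and 2 false negatives, both precision and recall equal 0.8.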

Experimental results of leg tracking in a clear environment

Figure 7 shows the scenario in which the robot followed the target human in a clear environment. The image on the left shows the actual robot and human. The image in the middle shows the detection of human legs using the method of Leigh et al., 13 and the one on the right shows the proposed system detecting human legs from raw point cloud data.

Results of human leg detection with different methods in a clear environment. (a) Real-world environment. (b) Tracking results. 13 (c) Our tracking results.

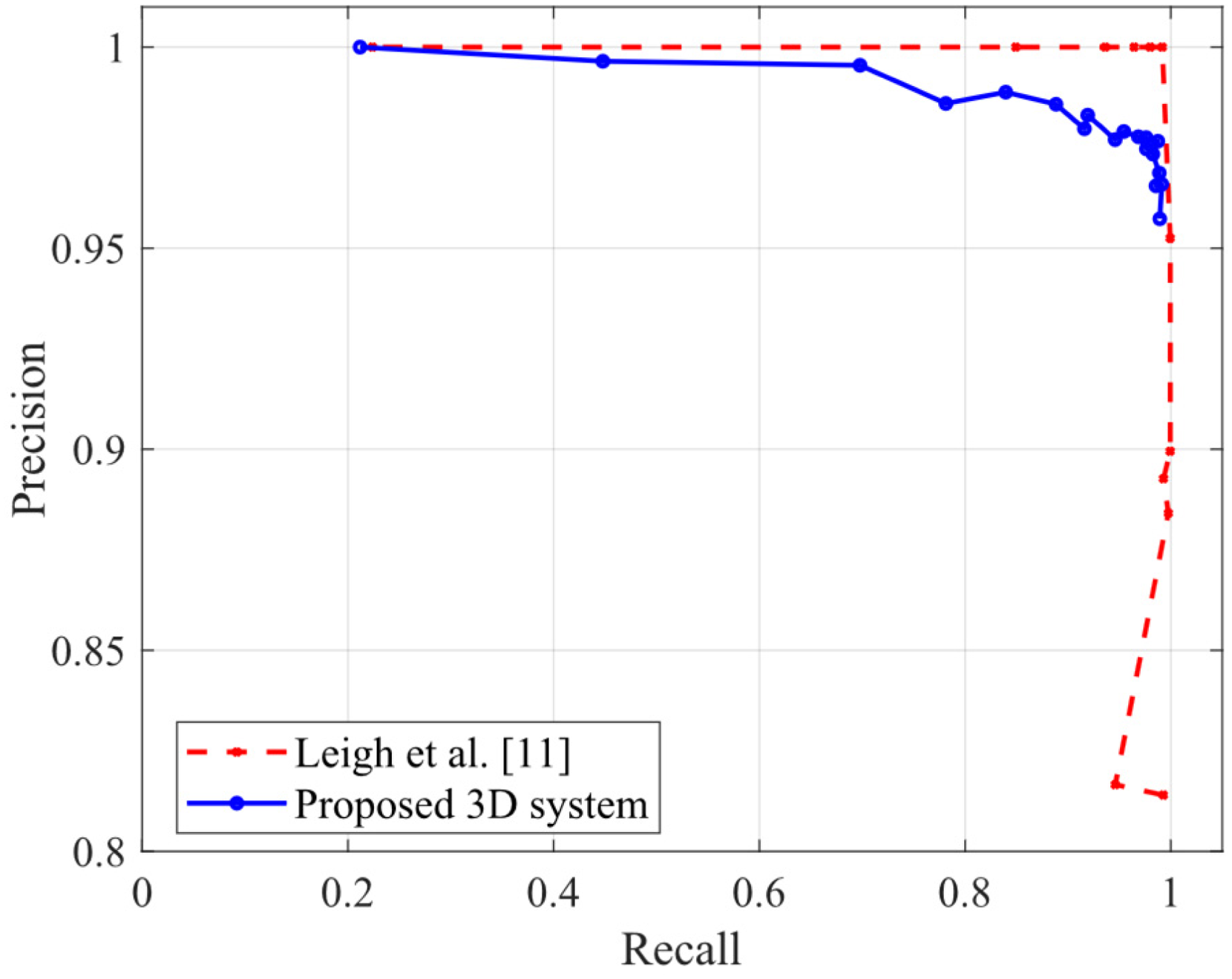

Figure 8 shows the precision–recall curves of the two detectors, obtained by varying the detection threshold. In this figure, the solid line (labeled “Proposed 3D system”) represents our proposed PV-RCNN-based 3D human leg tracking method, and the dotted line (labeled “Leigh et al. 13 ”) represents the 2D laser-based human leg tracking method of Leigh et al. 13 Because a detector can trade precision for recall by changing its threshold, both metrics must be analyzed together when evaluating a detection system.

Precision–recall curves of different methods in a clear environment.

Table 1 shows that both systems achieved a precision of over 98% and a recall close to 98% when detecting humans. Moreover, even when the proposed 3D system misses a detection, it detects the target again in the next loop and does not fail to follow it. Thus, both systems performed satisfactorily and reliably when following a target human in clear environments.

Comparison of best detection performance in a clear environment.

Experimental results of leg tracking in a cluttered environment

Figure 9 shows the results of the performance evaluation of the tracking system's detection capabilities. An experiment was conducted in which the mobile robot navigated a complex office environment containing many obstacles that resemble human legs, such as chairs and table legs. The image on the left shows the actual robot and human. The image in the middle shows the detection of human legs by the method of Leigh et al., 13 and the one on the right shows the proposed system detecting human legs from 3D raw point cloud data.

Results of human leg detection with different methods in a cluttered environment. (a) Real-world environment. (b) Tracking results with false-positive detection from Leigh et al. 13 (c) Tracking results of the proposed system.

Figure 10 shows the precision–recall curves of different methods in a cluttered environment. The average precision (AP) value is used to compare the performance of detection systems quantitatively. The area under the precision–recall curve represents the AP value, with a larger area indicating better system performance.

Precision–recall curves of different methods in a cluttered environment.
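The area under a sampled precision–recall curve can be approximated as in the sketch below. Trapezoidal integration is an assumption made here; the paper does not state which integration or interpolation rule was used to obtain the AP value, and the sample points in the usage note are hypothetical.

```python
from typing import List, Tuple


def average_precision(points: List[Tuple[float, float]]) -> float:
    """Approximate the area under a precision-recall curve.

    `points` holds (recall, precision) pairs, one per detection threshold.
    The area is integrated over recall with the trapezoidal rule.
    """
    pts = sorted(points)  # order the samples by increasing recall
    area = 0.0
    for (r0, p0), (r1, p1) in zip(pts, pts[1:]):
        area += (r1 - r0) * (p0 + p1) / 2.0
    return area
```

As a hypothetical example, the three samples (0.0, 1.0), (0.5, 1.0), and (1.0, 0.5) give an area of 0.5 × 1.0 + 0.5 × 0.75 = 0.875.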

As seen in Table 2, the 2D laser-based system 13 exhibited an increase in the false-positive detection rate, thus resulting in a decrease in the precision of the detector to 43.95%; in contrast, the proposed system showed a recall of 77.61% and precision of 81.99%, indicating superior performance compared to the 2D laser-based system.

Comparison of best detection performance in cluttered environment.

In a clear environment, both the method of Leigh et al. 13 and the proposed 3D system performed well in target tracking. However, in a cluttered environment, the proposed 3D system outperformed the method of Leigh et al. 13 This indicates that our proposed 3D system is more useful in real office environments.

Table 3 shows a comparison of inference time using the preprocessed data based on adaptive search space. When detecting human legs, PV-RCNN took 0.222 s; in contrast, our proposed system that used the target pose-based search space took 0.205 s. The comparison shows that our proposed system reduced the inference time by approximately 8% compared to the conventional PV-RCNN.

Comparison of inference time.
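The reported reduction can be checked from the two inference times given above. The sketch below also converts each time into the detection rate it implies; the variable and function names are introduced here for illustration only.

```python
def relative_reduction(baseline_s: float, improved_s: float) -> float:
    """Fraction by which `improved_s` is shorter than `baseline_s`."""
    return (baseline_s - improved_s) / baseline_s


baseline_s = 0.222  # PV-RCNN inference time reported in the text (s)
adaptive_s = 0.205  # inference time with the target pose-based search space (s)

reduction = relative_reduction(baseline_s, adaptive_s)
rate_baseline_hz = 1.0 / baseline_s  # detections per second implied by the baseline
rate_adaptive_hz = 1.0 / adaptive_s  # detections per second implied by the adaptive variant

print(f"reduction: {reduction:.1%}")
print(f"rates: {rate_baseline_hz:.2f} Hz vs {rate_adaptive_hz:.2f} Hz")
```

The reduction is 0.017 / 0.222, or about 7.7%, which is consistent with the approximately 8% stated above.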

Conclusion

In this study, we propose a 3D human leg tracking system that uses a PV-RCNN-based 3D object detection method to detect 3D human legs and enhance the detection performance of the human leg detector in cluttered environments. The human leg tracking system enables a more robust human-following capability for mobile robots. A significant issue with 2D laser sensor-based human tracking systems is that, in complex environments where many objects closely resemble leg shapes, the probability of false detections increases, which degrades tracking performance. To address this issue, we replaced the 2D laser sensor-based detector with a 3D laser sensor-based detector that uses the PV-RCNN model, a deep learning-based 3D object detection framework, to learn the shape of human legs and detect them from 3D laser data. Through experiments conducted in cluttered environments such as offices with many obstacles that resemble leg shapes, we confirmed a reduction in the false-positive detection rate of the human leg detector and an improvement in the performance of the tracking system. The proposed human tracking system will help to solve problems such as mobile robots losing the target human and to improve the overall human-following performance of mobile robots. In the future, we will work on a network that uses not only 3D LiDAR but also RGBD camera sensors to fuse point cloud data and image data for more robust target tracking.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.