Abstract

Visual–tactile fusion information plays a crucial role in robotic object classification. The fusion modules of existing visual–tactile fusion models directly concatenate visual and tactile features at the feature layer; however, the contributions of visual and tactile features to classification differ from object to object. Moreover, direct concatenation may overlook the features that are most useful for classification, and it also increases computational cost and reduces classification efficiency. To use object feature information more effectively and further improve the efficiency and accuracy of robotic object classification, this article proposes a visual–tactile fusion object classification method based on adaptive feature weighting. First, a lightweight feature extraction module extracts the visual and tactile features of each object. Then, the two feature vectors are input into an adaptive weighted fusion module. Finally, the fused feature vector is passed to a fully connected layer for classification, yielding the category and physical attributes of each object. Extensive experiments are performed on the public Penn Haptic Adjective Corpus 2 dataset and the newly developed Visual-Haptic Adjective Corpus 52 dataset. On Penn Haptic Adjective Corpus 2, our method achieves an area under the curve of 0.9750, which is 1.92 percentage points higher than the best value obtained by existing state-of-the-art methods, and it achieves the shortest training and inference times among the compared visual–tactile fusion methods. On the new Visual-Haptic Adjective Corpus 52 dataset, our method achieves an area under the curve of 0.9827 and an accuracy of 0.9850, with an inference time of 1.559 s per image. These results demonstrate the effectiveness of the proposed method.

Introduction

Intelligent robots can perform specific tasks by simulating human behaviors, emotions, and thoughts according to preset procedures. Compared with traditional robots, intelligent robots have better perception and decision-making performance. 1 A crucial research direction in the field of visual–tactile fusion is to perceive objects through visual and tactile sensors on robotic arms and classify them accurately. Features such as the shape and color of objects are recognized by visual sensors, while features such as texture, roughness, and hardness are identified by tactile sensors. 2 Visual and tactile features cooperate with one another and play a crucial role in the task of accurate object classification.

Humans rely on visual and tactile perception to interact with the physical world, 3 and the same is true for robots. However, when a robot relies on a single perception mode (visual or tactile) for object recognition, it struggles to distinguish objects with similar appearances or attributes. Combining visual and tactile data is an effective way to address this deficiency: it not only overcomes the limitations of single-mode recognition but also provides more diverse, complementary information, thus greatly improving the robot's ability to identify objects.

Visual–tactile fusion has played an increasingly important role in object recognition, 4 object grasping, 5 physical attribute adjective classification, 6 object clustering, 7 and cross-modal matching. 8 However, most existing visual–tactile fusion methods directly concatenate visual and tactile feature vectors, so the feature information of the two modalities is not fully utilized, which limits both the accuracy and the speed of object classification.

To improve the speed and accuracy of robotic object classification, this article proposes a visual–tactile fusion object classification method based on adaptive feature weighting, which addresses the feature redundancy, and the resulting loss of classification speed and accuracy, caused by directly concatenating visual and tactile feature vectors. Experiments comparing our method with other methods on public and newly developed datasets demonstrate that it makes effective use of feature information and improves the speed and accuracy of object classification. In practical applications, this research could improve the working speed and accuracy of service and collaborative robots and, in turn, the experience of their users.

The main contributions of this article are as follows:

(1) A visual–tactile fusion object classification method based on adaptive feature weighting is proposed. In this method, a channel attention mechanism adaptively recalibrates the feature response of each channel, so that the calibrated features emphasize important information while suppressing background, noise, and other minor information. In comparisons with other methods on public and newly developed datasets, the proposed method makes the features more discriminative, reduces feature redundancy, and significantly improves the speed and accuracy of classification.

(2) The Visual-Haptic Adjective Corpus (VHAC) dataset of Zhang et al. 9 is expanded and improved into the VHAC-52 visual–tactile adjective dataset. This dataset contains visual and tactile data of 52 common objects and 14 adjectives describing the objects’ visual and tactile attributes. Compared with the Penn Haptic Adjective Corpus 2 (PHAC-2) and VHAC datasets, it provides richer and more comprehensive information about the objects, which allows more accurate object recognition and more thorough model training and testing.

(3) The preprocessing of the tactile signals is changed: unlike in the VHAC and PHAC-2 datasets, each tactile signal in the VHAC-52 dataset contains two complete grasping processes. The experimental results demonstrate that this processing method improves the model’s ability to capture subtle changes in the tactile signals and thus improves classification accuracy.

Related works

Multimodal learning

Visual–tactile fusion perception is a common form of multimodal fusion, and multimodal fusion is an important part of machine learning. 10 Multimodal fusion studies how best to use data from different modalities to improve recognition accuracy, and it plays an important role in coping with data degradation such as external noise and sensor errors. Because visual and tactile perception differ in many respects, such as sensor working principle, data structure, and measurement range, making full use of their respective advantages during feature fusion is a challenging problem. With the continued success of machine learning algorithms in various fusion tasks, multimodal fusion has recently been studied by many groups.

Bednarek et al. 11 found that fusing multimodal data could maintain the robustness of robot perception in the face of various disturbances. Ramachandram and Taylor 12 used multimodal data to provide complementary information for each modality and noted that a deep multimodal learning model could compensate for data or modalities lost during inference. Zhang et al. 13 used multimodal learning to describe different object features from various perspectives. Lahat et al. 14 found that each modality in multimodal learning contributes to the overall result and that these gains cannot be deduced or calculated from any single modality. In summary, multimodal fusion methods not only compensate for the limited information available to single-modal methods but also effectively improve model performance.

Visual–tactile fusion perception

Object recognition

Gao et al. 15 constructed a visual–tactile deep neural network model to classify the physical attributes of object surfaces and showed that it outperformed single-modal models on the public PHAC-2 dataset. 16 To improve object classification precision, Liu et al. 17 designed a joint group kernel sparse coding method; their experiments showed that it significantly improved classification precision and addressed the weak pairing between visual and tactile data samples. Luo et al. 18 proposed a deep maximum covariance analysis method for object texture recognition based on visual–tactile fusion perception. To identify new objects in daily tasks without training on new data, Abderrahmane et al. 19 proposed a visuo-tactile zero-shot learning algorithm that identifies new objects from their physical attributes by learning from visual and tactile data collected from known objects.

3D object recognition

Tahoun et al. 20 proposed a novel multimodal (visual and tactile) semisupervised generative model for reconstructing the shapes of complete 3D objects. Wang et al. 21 perceived the shapes of 3D objects by combining visual and tactile signals with prior knowledge of object shapes.

Object grasping

Calandra et al. 22 proposed an end-to-end action-conditional model for regrasping, and their results showed that a regrasping strategy based on visual–tactile fusion sensing greatly improved the grasping success rate and reduced the force required to grasp the object. Cui et al. 23 proposed a visual–tactile fusion deep neural network model based on 3D convolution to judge the grasping states of many types of deformable objects. To enable more stable grasp planning, Watkins-Valls et al. 24 proposed a 3D convolutional neural network that uses visual–tactile fusion data to infer the complete geometric shapes of objects. Guo et al. 25 proposed a hybrid deep model combining visual and tactile data for detecting robotic grasps and used tactile data to evaluate grasp stability.

Object clustering

Zhang et al. 26 proposed a deep auto-encoder-like nonnegative matrix factorization framework for visual and tactile fusion object clustering. Moreover, they used a graph regularizer and modality-level consensus regularizer to reduce the disparity between the visual and tactile data. In addition, Zhang et al. 27 proposed a generative partial visual and tactile fusion framework for object clustering that effectively solved the problem of signal loss caused by occlusion, noise, and sensor errors during data collection.

Cross-modal matching

Zhang et al. 28 proposed a partial visual and tactile fused framework that used modality-specific encoders and a modality gap mitigated network to narrow the large gap and mine complementary information between visual and tactile data. Dong et al. 29 proposed a new lifelong visual and tactile learning model that used visual–tactile fusion information to capture differentiated material properties and narrow the clear differences between heterogeneous feature distributions. The experimental results proved that the model could fully explore potential correlations within and across modalities. Liu et al. 30 designed a dictionary learning model that simultaneously learned weakly paired visual and tactile modes in the projection subspace and a latent common dictionary, thus solving the problem of visual and tactile cross-modal matching.

The above studies demonstrate the importance of visual–tactile fusion in various robot manipulation tasks. However, previous deep learning-based visual–tactile fusion methods simply concatenate visual and tactile features at the feature layer, which does not make full use of the feature information of each modality.

The preprocessing mode of the tactile signals

In the PHAC-2 16 and VHAC 9 datasets, each tactile signal includes only one complete grasping process; however, when analyzing tactile signals, the model needs to compare the tactile information of consecutive grasping processes. Therefore, to make it easier for the model to capture subtle changes in the tactile signals, we change the segmentation of the tactile signals in the VHAC-52 dataset so that every two grasping processes form one independent tactile signal.

Proposed model

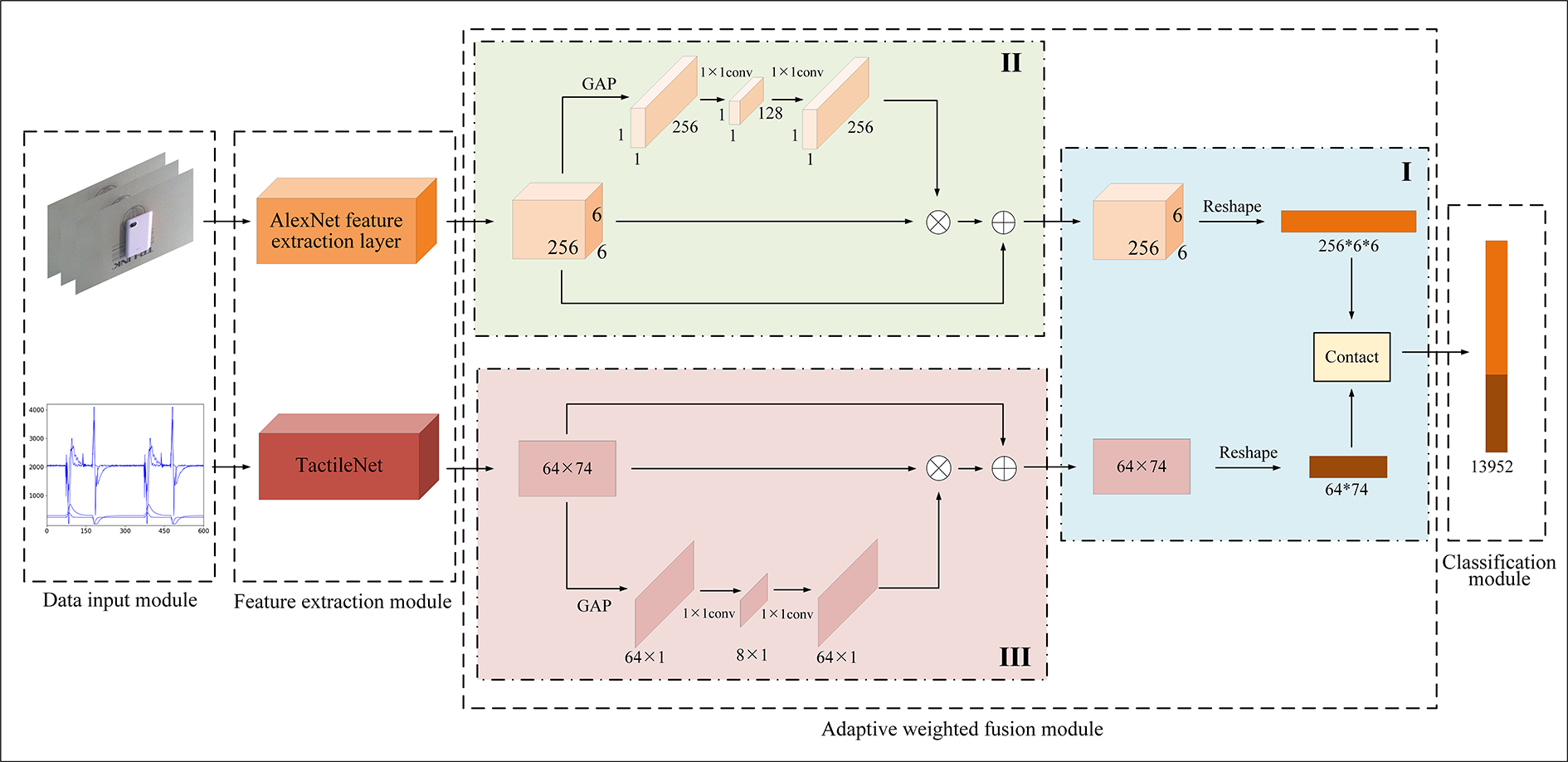

Figure 1 shows the overall structure of the lightweight visual–tactile fusion network model proposed in this article. The model includes four modules: a data input module, a feature extraction module, an adaptive weighted fusion module, and a classification module. Features are extracted from the visual images by the AlexNet 31 feature extraction layers and from the tactile signals by the TactileNet network model, and are then input into the adaptive weighted fusion module for feature recalibration and fusion. The adaptive weighted fusion module is composed of two parallel channel attention networks and a feature fusion network, and it readjusts the weight of each channel of the visual and tactile feature vectors. Finally, the fused feature vector is input into the classification module, and a fully connected layer outputs the classification result.

The overall structure diagram of the lightweight visual–tactile fusion network model.

Data input module

The data input module consists of the visual images and tactile signals of each object. The visual image is an RGB image stored as a three-dimensional matrix of size 224 × 224 × 3 after preprocessing. The tactile signal is a multichannel one-dimensional time series of size 600 × 4 after preprocessing. Each visual image is paired with one tactile signal, and each pair forms one input to the model.
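As a minimal sketch of this pairing, assuming the preprocessed images and tactile signals are already available as tensors and that the i-th image is paired with the i-th signal (the exact pairing rule is an assumption), a PyTorch `Dataset` could look like the following. The class name `VisuoTactilePairs` is hypothetical. Because PyTorch's `Conv1d` expects channels first, each 600 × 4 signal is transposed to 4 × 600.

```python
import torch
from torch.utils.data import Dataset


class VisuoTactilePairs(Dataset):
    """Hypothetical paired dataset: item i is (image_i, signal_i, labels_i)."""

    def __init__(self, images: torch.Tensor, signals: torch.Tensor,
                 labels: torch.Tensor) -> None:
        # images: (N, 3, 224, 224); signals: (N, 600, 4); labels: (N, num_labels)
        if not (len(images) == len(signals) == len(labels)):
            raise ValueError("every image must be paired with exactly one tactile signal")
        self.images, self.signals, self.labels = images, signals, labels

    def __len__(self) -> int:
        return len(self.images)

    def __getitem__(self, i: int):
        # Transpose the 600x4 signal to 4x600 (channels first) for Conv1d.
        return self.images[i], self.signals[i].T, self.labels[i]


if __name__ == "__main__":
    ds = VisuoTactilePairs(torch.zeros(4, 3, 224, 224),
                           torch.zeros(4, 600, 4), torch.zeros(4, 14))
    image, signal, labels = ds[0]
    print(image.shape, signal.shape, labels.shape)
```

Wrapping it in `torch.utils.data.DataLoader(dataset, batch_size=10, shuffle=True)` would match the batch size of 10 reported in the experimental settings.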

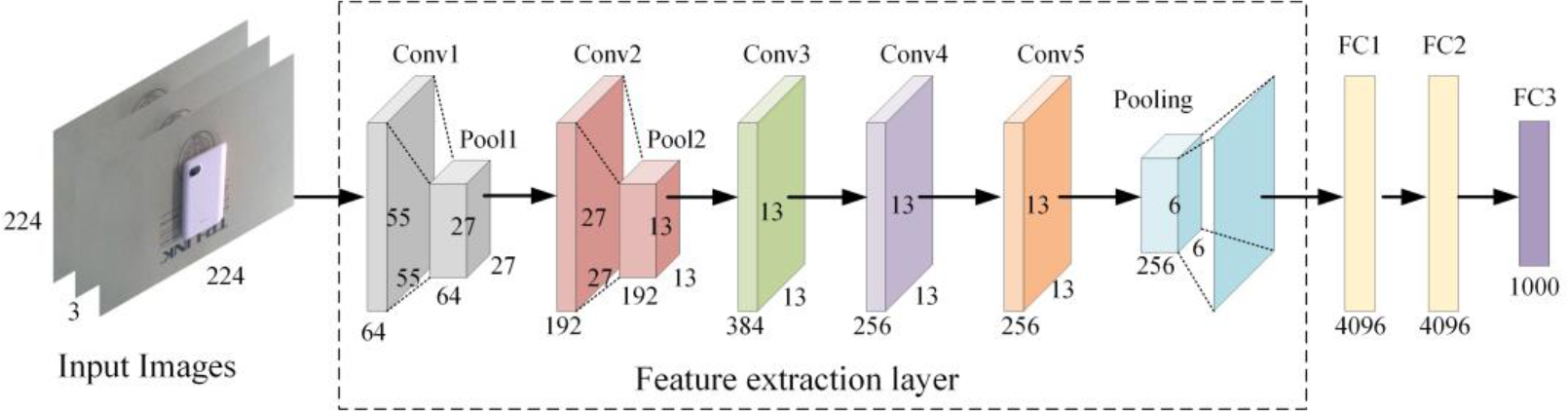

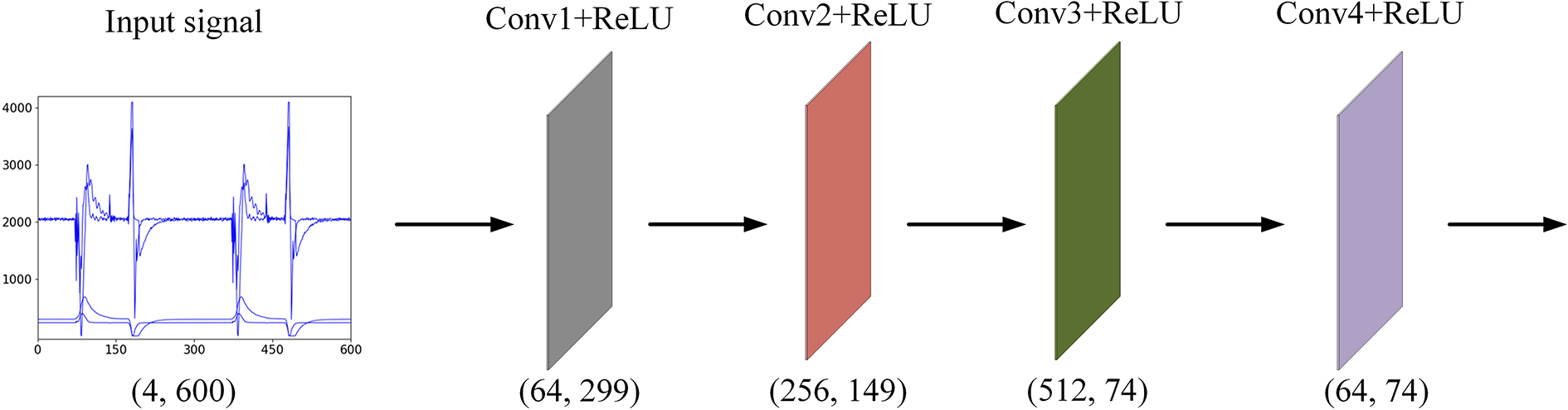

Feature extraction module

The feature extraction module consists of the AlexNet feature extraction layers and a four-layer one-dimensional convolutional neural network (TactileNet); its outputs are the visual and tactile feature vectors. Figure 2 shows the structure of the AlexNet network model, with its feature extraction layers in the dotted box. Figure 3 shows the structure of the TactileNet network model.

Structure diagram of the AlexNet network model.

Structure diagram of the TactileNet network model.

A rectified linear unit (ReLU) activation function follows each convolutional module of TactileNet to add nonlinearity between the layers of the network. The four-layer one-dimensional convolutional neural network first increases and then decreases the channel dimension of the tactile features, thus achieving cross-channel interaction among the tactile features. Moreover, the 1 × 1 convolution in the last layer effectively decreases the number of parameters of the tactile model and improves the operating speed of the whole network.
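The two extractors can be sketched in PyTorch as follows. The visual part reproduces the convolutional layer layout of torchvision's AlexNet, which maps a 224 × 224 × 3 image to the 6 × 6 × 256 feature map used by the fusion module. The tactile part is a hypothetical `TactileNet`: its channel widths (4, 32, 128, 128, 64), kernel sizes, and strides are assumptions chosen only so that a 600 × 4 signal yields the 64 × 74 tactile feature map used by the fusion module; the actual configuration is the one shown in Figure 3.

```python
import torch
from torch import nn


def alexnet_features() -> nn.Sequential:
    """Convolutional part of AlexNet (same layout as torchvision's
    alexnet().features): (B, 3, 224, 224) -> (B, 256, 6, 6)."""
    return nn.Sequential(
        nn.Conv2d(3, 64, kernel_size=11, stride=4, padding=2),
        nn.ReLU(inplace=True),
        nn.MaxPool2d(kernel_size=3, stride=2),
        nn.Conv2d(64, 192, kernel_size=5, padding=2),
        nn.ReLU(inplace=True),
        nn.MaxPool2d(kernel_size=3, stride=2),
        nn.Conv2d(192, 384, kernel_size=3, padding=1),
        nn.ReLU(inplace=True),
        nn.Conv2d(384, 256, kernel_size=3, padding=1),
        nn.ReLU(inplace=True),
        nn.Conv2d(256, 256, kernel_size=3, padding=1),
        nn.ReLU(inplace=True),
        nn.MaxPool2d(kernel_size=3, stride=2),
    )


class TactileNet(nn.Module):
    """Hypothetical four-layer 1D CNN. Channels are first widened
    (4 -> 32 -> 128 -> 128) and then narrowed to 64 by a final 1x1 conv.
    Kernel sizes and strides are illustrative: 600 -> 299 -> 149 -> 74."""

    def __init__(self, in_channels: int = 4) -> None:
        super().__init__()
        self.layers = nn.Sequential(
            nn.Conv1d(in_channels, 32, kernel_size=3, stride=2),
            nn.ReLU(inplace=True),
            nn.Conv1d(32, 128, kernel_size=3, stride=2),
            nn.ReLU(inplace=True),
            nn.Conv1d(128, 128, kernel_size=3, stride=2),
            nn.ReLU(inplace=True),
            nn.Conv1d(128, 64, kernel_size=1),  # 1x1 conv keeps the parameter count low
            nn.ReLU(inplace=True),
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # x: (B, 4, 600); a (B, 600, 4) signal must be transposed first.
        return self.layers(x)


if __name__ == "__main__":
    images = torch.randn(2, 3, 224, 224)
    signals = torch.randn(2, 600, 4).transpose(1, 2)  # -> (2, 4, 600)
    print(alexnet_features()(images).shape)  # torch.Size([2, 256, 6, 6])
    print(TactileNet()(signals).shape)       # torch.Size([2, 64, 74])
```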

Adaptive weighted fusion module

To extract more discriminative features from the visual and tactile feature vectors, an adaptive weighted fusion module is used to recalibrate the features. The adaptive weighted fusion module is mainly composed of the channel attention mechanism. The channel attention mechanism can select and enhance the feature information that is more critical to the current target task and suppress the irrelevant feature information at the same time, thereby achieving adaptive weighted feature processing.

As shown in Figure 1, the adaptive weighted fusion module contains two network branches with similar architectures: the upper branch is the visual channel attention branch, which performs adaptive weighting of the three-dimensional visual feature vectors, and the lower branch is the tactile channel attention branch, which performs adaptive weighting of the two-dimensional tactile feature vectors. At the end of the module, the adaptively weighted visual and tactile feature vectors are concatenated (concat) to obtain the adaptively weighted visual–tactile fusion feature vector.
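A minimal PyTorch sketch of this two-branch module follows, under stated assumptions. Each branch is written as SE-style channel attention: global average pooling, a reduce/expand bottleneck controlled by a channel factor r, and per-channel rescaling. The sigmoid gate, the ReLU inside the bottleneck, and treating the "superposition" stage as an optional residual addition are assumptions, not details confirmed by the text. Because a 1 × 1 convolution applied to a 1 × 1 channel descriptor is equivalent to a fully connected layer, `nn.Linear` is used. The class names, the default factors `r1=16` and `r2=4`, the single linear classifier, and `num_labels=14` are illustrative choices, not the values used in the article.

```python
import torch
from torch import nn


class ChannelAttention(nn.Module):
    """SE-style attention over dim 1 for (B, C, H, W) or (B, C, L) inputs."""

    def __init__(self, channels: int, r: int, residual: bool = False) -> None:
        super().__init__()
        hidden = max(1, channels // r)
        # Processing stage: reduce C -> C/r, then expand back to C.
        self.excite = nn.Sequential(
            nn.Linear(channels, hidden),
            nn.ReLU(inplace=True),   # assumed nonlinearity
            nn.Linear(hidden, channels),
            nn.Sigmoid(),            # assumed gate: coefficients in (0, 1)
        )
        self.residual = residual     # assumed reading of "superposition"

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        spatial = tuple(range(2, x.dim()))
        descriptor = x.mean(dim=spatial)         # global average pooling -> (B, C)
        coeffs = self.excite(descriptor)         # channel coefficients (B, C)
        coeffs = coeffs.reshape(*coeffs.shape, *([1] * len(spatial)))
        weighted = x * coeffs                    # weighting stage
        return weighted + x if self.residual else weighted


class AdaptiveWeightedFusion(nn.Module):
    """Visual (256x6x6) and tactile (64x74) attention branches, concat, classifier."""

    def __init__(self, num_labels: int, r1: int = 16, r2: int = 4,
                 visual_attention: bool = True, tactile_attention: bool = True) -> None:
        super().__init__()
        self.visual_att = ChannelAttention(256, r1) if visual_attention else nn.Identity()
        self.tactile_att = ChannelAttention(64, r2) if tactile_attention else nn.Identity()
        self.classifier = nn.Linear(256 * 6 * 6 + 64 * 74, num_labels)

    def forward(self, visual: torch.Tensor, tactile: torch.Tensor) -> torch.Tensor:
        v = self.visual_att(visual).flatten(1)    # (B, 9216)
        t = self.tactile_att(tactile).flatten(1)  # (B, 4736)
        fused = torch.cat([v, t], dim=1)           # concat fusion -> (B, 13952)
        return self.classifier(fused)              # raw logits


if __name__ == "__main__":
    model = AdaptiveWeightedFusion(num_labels=14)
    logits = model(torch.randn(2, 256, 6, 6), torch.randn(2, 64, 74))
    targets = torch.randint(0, 2, (2, 14)).float()
    loss = nn.MultiLabelSoftMarginLoss()(logits, targets)
    loss.backward()
    print(logits.shape, float(loss))
```

Setting `visual_attention` or `tactile_attention` to `False` replaces that branch with an identity, which mirrors the ablation variants compared later (as we read them, II is the visual branch and III the tactile branch).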

The channel attention mechanism can be divided into three stages: the channel information processing stage, channel information weighting stage, and channel information superposition stage. The specific implementation processes are as follows.

During the channel information processing stage, the global average pooling layer reduces the 6 × 6 × 256 visual feature vector to a 1 × 1 × 256 channel descriptor. Then, 1 × 1 convolutions first reduce and then restore the dimension of the channel descriptor, yielding the channel coefficient matrix. This reduction followed by expansion improves the expressive ability of the model, allowing it to better fit complex correlations between channels. In this process,

The tactile feature vectors are processed similarly to the visual feature vectors. First, during the channel information processing stage, a 64 × 74 tactile feature vector is reduced to a 64 × 1 channel descriptor by the global average pooling layer. Then, the dimension of the channel descriptor is decreased and then increased by 1 × 1 convolution, yielding the channel coefficient matrix. In this process,

Classification module

The input to the classification module is the visual–tactile fusion feature vector, and the outputs are the classes of the objects and their physical attribute adjectives, whose classification performance is evaluated by the accuracy (ACC) and the area under the curve (AUC), respectively. The loss function is the multilabel classification loss MultiLabelSoftMarginLoss, given in Formula (1), where x is the vector of predicted scores output by the network model, y is the vector of ground-truth labels, and C is the number of classes, with $y_i \in \{0, 1\}$:

$$\ell(x, y) = -\frac{1}{C} \sum_{i=1}^{C} \left[ y_i \log\frac{1}{1 + e^{-x_i}} + (1 - y_i) \log\frac{e^{-x_i}}{1 + e^{-x_i}} \right] \tag{1}$$

Experiments and analysis

Dataset

Chu et al. 16 produced a tactile adjective dataset PHAC-2, which contains visual and tactile data of 53 common household objects and 24 tactile adjectives to describe each object.

In terms of the visual data, the PHAC-2 dataset contains eight images of each object taken from different angles. In terms of the tactile data, four exploratory procedures (squeezing, holding, slow sliding, and fast sliding) are performed on each object, and five types of signals are collected by the BioTac 32 tactile sensors installed on the fingertips of the PR2 robot: low-frequency fluid pressure (PDC), high-frequency fluid vibration (PAC), core temperature (TDC), core temperature change (TAC), and the impedances of 19 electrodes (E1–E19). Each object is described by one to seven tactile adjectives. Visual images of some objects in the PHAC-2 dataset are shown in Figure 4.

Visual images of some objects in the PHAC-2 dataset. PHAC-2: Penn Haptic Adjective Corpus 2.

An analysis of the PHAC-2 dataset reveals some shortcomings. In terms of visual data, there are only eight images of each object, so only a few images can be allocated to the training and testing sets; this not only leads to insufficient model training but also makes the test results more dependent on chance. Additionally, the PHAC-2 dataset contains only tactile attribute adjectives, whereas the visual attributes of objects also play an important role in accurate object classification. The advantage of visual–tactile fusion can be fully demonstrated only when both tactile and visual attributes are considered.

Thus, we develop VHAC-52, a visual–tactile adjective dataset that contains visual and tactile data of 52 common objects and 14 visual and tactile adjectives describing them. The original VHAC dataset includes the visual and tactile data of 22 objects and 26 visual and tactile adjectives; VHAC-52 expands the number of objects to 52 and redefines the visual and tactile attribute adjectives. The visual images of the 52 objects in the VHAC-52 dataset are shown in Figure 5.

Visual images of 52 objects in the VHAC-52 dataset. VHAC-52: Visual-Haptic Adjective Corpus 52.

The data collection process for the VHAC-52 dataset is similar to that for the VHAC dataset. For visual data collection, the 30 newly added objects are placed in turn on a robot experimental platform, and their images are captured by a RealSense D435i (Intel Corporation) camera mounted on the wrist of a Kinova robotic arm (Kinova Corporation). With the force control of the arm enabled, 20 images of each object are collected from different spatial angles by moving the arm to various positions and flipping, translating, and rotating the object. For tactile data collection, two types of signals, low-frequency fluid pressure (PDC) and high-frequency fluid vibration (PAC), are collected for each object by a NumaTac (SynTouch Corporation) tactile sensor installed on the fingertips of the Kinova robotic arm. A total of 40 grasping operations are performed on each object, and each grasping operation includes three exploratory procedures: clamping, holding, and opening. Figure 6 shows the robotic arm collecting visual images (left) and tactile signals (right) of a power bank.

The robotic arm is collecting the visual and tactile data of the power bank.

Because the PHAC-2 dataset does not contain visual attribute adjectives, an algorithm trained on it cannot classify objects by their visual attributes. To address this problem, Zhang et al. 9 added adjectives related to the visual aspects of objects, such as the colors black, yellow, white, and red, to the label set of the VHAC dataset. In this article, the adjective labels of the VHAC-52 dataset are simplified and unified, and 14 attribute adjectives related to the visual and tactile aspects of objects are redefined. Figure 7 shows the 14 physical attribute adjectives and the number of objects each describes in the VHAC-52 dataset.

Fourteen physical attribute adjectives and the number of objects they describe in the VHAC-52 dataset. VHAC-52: Visual-Haptic Adjective Corpus 52.

Data preprocessing

Tactile signal

This article adopts a tactile signal preprocessing approach similar to that used for the PHAC-2 dataset. Because the NumaTac tactile sensor samples PAC signals at 2200 Hz and PDC signals at 100 Hz, the PAC signals are downsampled to 100 Hz for convenience, to match the sampling frequency of the PDC signals.
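One simple way to perform this 2200 Hz to 100 Hz conversion is to average non-overlapping blocks of 22 samples, sketched below with NumPy. The block-averaging choice and the function name `downsample_pac` are assumptions; the text does not say how the downsampling is done, and a filtered decimator (for example, SciPy's `scipy.signal.decimate`) would be a common alternative.

```python
import numpy as np

PAC_RATE_HZ = 2200
PDC_RATE_HZ = 100


def downsample_pac(pac: np.ndarray, src_hz: int = PAC_RATE_HZ,
                   dst_hz: int = PDC_RATE_HZ) -> np.ndarray:
    """Downsample a 1D PAC trace by averaging non-overlapping blocks.

    Block averaging acts as a crude low-pass filter before decimation
    (an assumed choice). Any incomplete trailing block is dropped.
    """
    if src_hz % dst_hz:
        raise ValueError("source rate must be an integer multiple of the target rate")
    factor = src_hz // dst_hz          # 2200 / 100 = 22
    n_blocks = len(pac) // factor
    return pac[: n_blocks * factor].reshape(n_blocks, factor).mean(axis=1)


if __name__ == "__main__":
    pac = np.random.randn(2200 * 3)    # 3 s of synthetic PAC data
    print(downsample_pac(pac).shape)   # (300,)
```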

The tactile data collected by the robotic arm are segmented in two ways: first, each of the 40 grasping processes forms an independent tactile signal (the one-step signal, 300 data points long); second, every two of the 40 grasping processes form an independent tactile signal (the two-step signal, 600 data points long). The segmented tactile signals are then saved as “.csv” files.
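The two segmentations can be sketched with NumPy, assuming the recording of one object has already been cut into 40 grasps of 300 samples each and stored as an array of shape (40, 300, channels); a one-step signal is then simply one entry of this array. We assume the two-step signals join consecutive, non-overlapping pairs of grasps (grasp 1 with 2, grasp 3 with 4, and so on), which gives 20 two-step signals per object; the text does not state whether the pairs overlap. The function name `two_step_signals` and the 4-channel layout are hypothetical.

```python
import numpy as np


def two_step_signals(grasps: np.ndarray) -> np.ndarray:
    """Join consecutive, non-overlapping pairs of grasps along time.

    grasps: (n, T, C) -> (n // 2, 2 * T, C). An odd trailing grasp is dropped.
    Row-major reshaping places grasp 2k first and grasp 2k + 1 second.
    """
    n, t, c = grasps.shape
    n_pairs = n // 2
    return grasps[: 2 * n_pairs].reshape(n_pairs, 2 * t, c)


if __name__ == "__main__":
    grasps = np.random.randn(40, 300, 4)   # 40 grasps, 300 samples at 100 Hz
    one_step = grasps                      # 40 signals of shape (300, 4)
    two_step = two_step_signals(grasps)
    print(two_step.shape)                  # (20, 600, 4)
    # Each signal could then be written out, e.g. with
    # np.savetxt("signal_000.csv", two_step[0], delimiter=",")
```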

Visual signal

The acquisition of visual images is affected by factors such as ambient brightness, light intensity, and camera resolution. Thus, to improve image quality and discernibility and the reliability of feature extraction, matching, and recognition, the visual images are preprocessed. Image preprocessing includes resizing, cropping, flipping, and color enhancement.

The specific steps are as follows. First, the original images in the training set are resized to 300 × 300, and a 224 × 224 region is cropped from the center. Second, so that the model can recognize the object from different angles, the cropped images are randomly flipped with a probability of 50%. Finally, to diversify the images and minimize the influence of irrelevant factors on the model, the brightness, contrast, saturation, and hue of the images are randomly varied within ±30% of the original values. For the images in the testing set, the original images are resized to 300 × 300, and a 224 × 224 region is cropped from the center.

Experimental settings

The hardware and software configurations of the server used in this article are shown in Table 1. The graphics processing unit (GPU) version of the PyTorch deep learning framework is installed on the Ubuntu 18.04.4 Linux operating system.

Hardware and software configurations of the server.

Note: CUDA: compute unified device architecture.

In this article, the number of epochs is set to 150, the training batch size is set to 10, and the learning rate is set to

To assess model performance fully, the PHAC-2 and VHAC-52 datasets are each divided into training and testing sets at a ratio of 8:2. The training set is used to train the model, and the testing set is used to evaluate its performance. The ACC and AUC metrics are used to evaluate the classification performance for objects and physical attribute adjectives, respectively. The ACC is the ratio of the number of correctly classified objects, $N_{\text{correct}}$, to the total number of objects, $N_{\text{total}}$, as shown in Formula (2); the higher the ACC value, the higher the object classification accuracy.

$$\mathrm{ACC} = \frac{N_{\text{correct}}}{N_{\text{total}}} \tag{2}$$

The AUC is the area under the receiver operating characteristic (ROC) curve; the larger the AUC value, the better the classification performance for the physical attribute adjectives.
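The two metrics can be sketched in NumPy as follows. `binary_auc` uses the rank-sum (Mann–Whitney) identity, under which the AUC equals the probability that a randomly chosen positive sample scores higher than a randomly chosen negative one, with ties counted as one half. How the per-adjective AUCs are aggregated is not stated in the text; the micro-averaged variant below, which pools all (sample, adjective) pairs, is an assumption, as are the function names.

```python
import numpy as np


def accuracy(pred_labels: np.ndarray, true_labels: np.ndarray) -> float:
    """ACC = correctly classified samples / all samples (Formula (2))."""
    return float(np.mean(pred_labels == true_labels))


def binary_auc(scores: np.ndarray, targets: np.ndarray) -> float:
    """ROC AUC via the Mann-Whitney identity; ties count as one half."""
    pos = scores[targets == 1]
    neg = scores[targets == 0]
    if len(pos) == 0 or len(neg) == 0:
        raise ValueError("AUC needs at least one positive and one negative sample")
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return float((greater + 0.5 * ties) / (len(pos) * len(neg)))


def micro_auc(score_matrix: np.ndarray, label_matrix: np.ndarray) -> float:
    """Micro-averaged AUC over all (sample, adjective) pairs (assumed scheme)."""
    return binary_auc(score_matrix.ravel(), label_matrix.ravel())


if __name__ == "__main__":
    print(accuracy(np.array([0, 2, 1, 1]), np.array([0, 2, 2, 1])))             # 0.75
    print(binary_auc(np.array([0.9, 0.4, 0.6, 0.2]), np.array([1, 1, 0, 0])))  # 0.75
```

The pairwise comparison uses memory proportional to the number of positives times the number of negatives, which is acceptable at this dataset size; for binary labels, scikit-learn's `roc_auc_score` returns the same value.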

Analysis of experimental results

In this work, extensive comparison and ablation experiments are performed on the VHAC-52 and PHAC-2 datasets. To reduce the influence of randomness, all reported results are averages over three runs. Because the VHAC-52 dataset is larger than PHAC-2 and thus allows more thorough model training and testing, most experiments in this article are carried out on VHAC-52 to assess model performance more accurately.

Selection of the channel factor r

The channel factor r plays a crucial role in the channel attention mechanism. An appropriate channel factor can effectively reduce model complexity and improve the accuracy of the results. Therefore, we conduct experiments with different values of the channel factor r1 of the visual channel attention and the channel factor r2 of the tactile channel attention on the VHAC-52 dataset. As shown in Figures 8 and 9, when

Comparison of the AUC and ACC values for different visual channel factors r1 on the VHAC-52 dataset. AUC: area under the curve; ACC: accuracy; VHAC-52: Visual-Haptic Adjective Corpus 52.

Comparison of the AUC and ACC values for different tactile channel factors r2 on the VHAC-52 dataset. AUC: area under the curve; ACC: accuracy; VHAC-52: Visual-Haptic Adjective Corpus 52.
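To make the complexity argument concrete, the sketch below counts the trainable parameters of one reduce/expand bottleneck as a function of r, assuming it is built from two fully connected (equivalently, 1 × 1 convolutional) layers with biases, C → C/r → C. C = 256 and C = 64 are the visual and tactile channel counts given earlier; the function name is hypothetical.

```python
def attention_params(channels: int, r: int) -> int:
    """Weights plus biases of a C -> C/r -> C bottleneck with bias terms."""
    hidden = channels // r
    reduce_layer = channels * hidden + hidden   # C x C/r weights + C/r biases
    expand_layer = hidden * channels + channels # C/r x C weights + C biases
    return reduce_layer + expand_layer


if __name__ == "__main__":
    for r in (1, 2, 4, 8, 16):
        print(f"r={r:2d}  visual (C=256): {attention_params(256, r):6d}  "
              f"tactile (C=64): {attention_params(64, r):5d}")
```

The count falls roughly in proportion to 1/r (for C = 256, from 131,584 parameters at r = 1 to 8,464 at r = 16), which is the trade-off against representational capacity that the r experiments explore.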

Comparison of two kinds of tactile signals

In this article, the two kinds of tactile signals are compared on the VHAC-52 dataset. Figures 10 and 11 lead to the following conclusions: (1) the AUC and ACC values of the two-step signal are higher than those of the one-step signal; (2) the overall loss of the two-step signal is lower than that of the one-step signal. We attribute these results to the fact that each two-step signal contains two grasping processes, which makes it easier for the model to capture subtle changes in the tactile signals. Thus, when experimental conditions permit, it is preferable to group multiple grasping processes into one tactile signal, because the model then responds more readily to subtle changes in the tactile signals and can better classify adjectives and objects.

Comparison of the AUC and ACC values of the two kinds of tactile signals on the VHAC-52 dataset. AUC: area under the curve; ACC: accuracy; VHAC-52: Visual-Haptic Adjective Corpus 52.

Comparison of the loss of the two kinds of tactile signals on the VHAC-52 dataset. VHAC-52: Visual-Haptic Adjective Corpus 52.

Comparison of different fusion methods

Concat and ADD are two classic feature fusion modes: ADD fuses features by element-wise addition of feature maps, whereas concat fuses them by concatenation along the channel dimension. To select the more efficient fusion method, we conducted a set of comparison experiments on the VHAC-52 dataset. As shown in Table 2, compared with ADD, concat offers advantages in object and adjective classification accuracy, training time, and inference time. Therefore, this article uses concat for feature fusion.

Comparison of different fusion methods on the VHAC-52 dataset.

VHAC-52: Visual-Haptic Adjective Corpus 52; ACC: accuracy; AUC: area under the curve.
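The shape difference between the two fusion modes can be illustrated with NumPy, using the flattened sizes of the visual (256 × 6 × 6 = 9216) and tactile (64 × 74 = 4736) features. Concat simply joins the vectors, whereas ADD requires both inputs to have the same shape; here random matrices stand in for learned projections to a common length of 256. These projections are hypothetical, since the text does not describe how the shapes are aligned for ADD.

```python
import numpy as np

rng = np.random.default_rng(0)
visual = rng.standard_normal(9216)    # flattened 256x6x6 visual features
tactile = rng.standard_normal(4736)   # flattened 64x74 tactile features

# Concat: join along the feature axis; the lengths simply add up.
fused_concat = np.concatenate([visual, tactile])
print(fused_concat.shape)  # (13952,)

# ADD: element-wise sum, which needs identical shapes. Random matrices stand
# in for hypothetical learned projections to a common length d.
d = 256
proj_v = rng.standard_normal((d, visual.size)) / np.sqrt(visual.size)
proj_t = rng.standard_normal((d, tactile.size)) / np.sqrt(tactile.size)
fused_add = proj_v @ visual + proj_t @ tactile
print(fused_add.shape)  # (256,)
```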

Ablation experiments

To prove the effectiveness and necessity of adaptive weighted processing of both visual and tactile feature vectors, on the VHAC-52 dataset, method I (direct fusion of visual and tactile feature vectors at the feature level), method I + II (fusion of tactile feature vectors and visual feature vectors calibrated by the channel attention mechanism at the feature level), method I + III (fusion of visual feature vectors and tactile feature vectors calibrated by the channel attention mechanism at the feature level) and method I + II + III (i.e. adaptive weighted fusion) are compared in this subsection.

As shown in Table 3, compared with method I, our method (method I + II + III) improves the ACC by 4.00% and the AUC by 3.26%. Compared with method I + II, method I + II + III improves the ACC by 2.50% and the AUC by 1.60%. Compared with method I + III, method I + II + III improves the ACC by 2.00% and the AUC by 0.75%. Moreover, compared with method I, the inference time of method I + II + III is reduced to 15.59s. Compared with methods I + II and I + III, method I + II + III also has advantages in training time and inference time.

The results of ablation experiments on the VHAC-52 dataset.a

VHAC-52: Visual-Haptic Adjective Corpus 52; ACC: accuracy; AUC: area under the curve.

a I, II, and III are marked in Figure 1.

Furthermore, as shown in Figure 12, the loss value of method I + II + III is lower than the loss values of the other methods under the same conditions.

Comparison of the loss of ablation experiments on the VHAC-52 dataset. VHAC-52: Visual-Haptic Adjective Corpus 52.

Comparison with the existing state-of-the-art methods

To demonstrate the superiority of our method, we compare it on the public PHAC-2 dataset with four single-modality (SM) methods and five visual–tactile fused sensing (VTFS) methods. As presented in Table 4, our method achieves an AUC of 0.9750, which is 1.92 percentage points higher than the highest AUC (0.9558) obtained by existing state-of-the-art methods, and it matches the highest ACC obtained by those methods. In addition, compared with the five VTFS-based methods, our method achieves the shortest training and inference times. Although the four SM-based methods require less training time, their classification performance is significantly worse than that of our method. This combination of efficiency and accuracy suggests that our model offers stable and strong classification performance for real-world applications.

Comparison with the existing state-of-the-art methods on the PHAC-2 dataset.

PHAC-2: Penn Haptic Adjective Corpus 2; SM: single modality; VTFS: visual–tactile fused sensing; ACC: accuracy; AUC: area under the curve.

SM-based methods

VisionNet 33 performs classification by training the convolutional neural network AlexNet. TouchNet 33 performs classification by training an adjusted AlexNet. The purpose of this adjustment is to match AlexNet with the tactile signals in the dataset. VisualNet 6 performs classification by training a modified AlexNet, specifically, by replacing the last three fully connected layers in AlexNet with three 1 × 1 convolutional layers. HapticNet 6 performs classification by training multilevel convolutional layers, max-pooling layers, and 1 × 1 convolutional layers.

VTFS-based methods

DLTU 15 performs classification by training a visual–tactile fusion deep neural network. DLSMC 6 performs classification by fusing visual and tactile features randomly sampled K times and reducing the dimensionality of the fused features with a 1 × 1 convolutional layer. LGR 22 performs classification by training deep residual networks. VTFSA 35 performs classification by further refining and extracting task-relevant visual–tactile fusion (VTF) features, that is, by incorporating a self-attention (SA) mechanism into the VTF features. VTS 34 performs classification by fusing visual and tactile data processed by deep neural networks and applying multitask learning.

Conclusion

To improve the efficiency and accuracy of object classification, we propose a visual–tactile fusion object classification method based on adaptive feature weighting. First, the adaptive weighted fusion module adjusts the feature responses of the visual and tactile feature channels to redistribute their weights and extract more discriminative features. Second, the segmentation of the tactile signals is changed so that the model can capture subtle changes in the tactile signals more easily. The experimental results demonstrate that these changes improve the model's ability to understand physical attribute adjectives and recognize objects. Furthermore, a richer and more diverse visual–tactile adjective dataset is developed to allow more thorough training and more reliable testing of the model. In future work, more diverse objects will be collected to further enrich the dataset, and the proposed network model will be further simplified.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by the Project of Shandong Provincial Major Scientific and Technological Innovation under Grants 2019JZZY010444 and 2019TSLH0315, the Project of 20 Policies to Facilitate Scientific Research at Jinan Colleges under Grant 2019GXRC063, the Natural Science Foundation of Shandong Province of China under Grant ZR2020MF138, and the National College Students Innovation and Entrepreneurship Training Program at Qilu University of Technology under Grant 202110431006.