Abstract

Search and rescue robots have gained significant attention in recent years, as they assist firefighters during rescue missions. Their ability to move autonomously or under remote control, and to detect victims in unknown, smoke-filled environments with intelligent sensor technology, makes them a growing technology in fire engineering. Since sensor systems are a key component of mobile robots, there is a demand for intelligent robot vision, especially for human detection in fire smoke environments. This article presents an overview of sensor technologies and their algorithms for human detection in regular and smoky environments. These sensor technologies are categorized into single sensor and multi-sensor systems. Novel sensor approaches are driven by artificial intelligence, 3D mapping and multi-sensor fusion. The article contributes to future research directions in algorithms and applications and helps decision-makers in fire engineering gain knowledge of trends, novel applications and challenges in this field of research.

Keywords

Introduction

The increasing number of catastrophes and numerous apartment fires in recent years have caused a tremendous loss of human life (19,178 fire deaths worldwide in 2019 1). As a result, search and rescue robots have gained significant attention, as they assist firefighters during search and rescue missions. 2 In particular, novel sensor approaches on these mobile robots support firefighters in detecting humans in hazardous environments. This is because firefighters are, on the one hand, exposed to unstable building structures and, on the other hand, suffer from cognitive fatigue during long search and rescue missions, which reduces the efficiency of time-critical human detection in smoky indoor environments. 3

Teixeira et al. 4 classify sensor systems into single sensor and multi-sensor (more than one sensor) systems. The intrinsic physical traits of a human that can be measured are shape, emissivity, scent and internal motion. The shape of a human is detectable with visual cameras or range finding sensors, whereas the emissivity of a human can be detected with thermal cameras. Scent is detectable with chemical sensors, and radar sensors are suitable for detecting internal motion, for example, heartbeat or respiration. 4

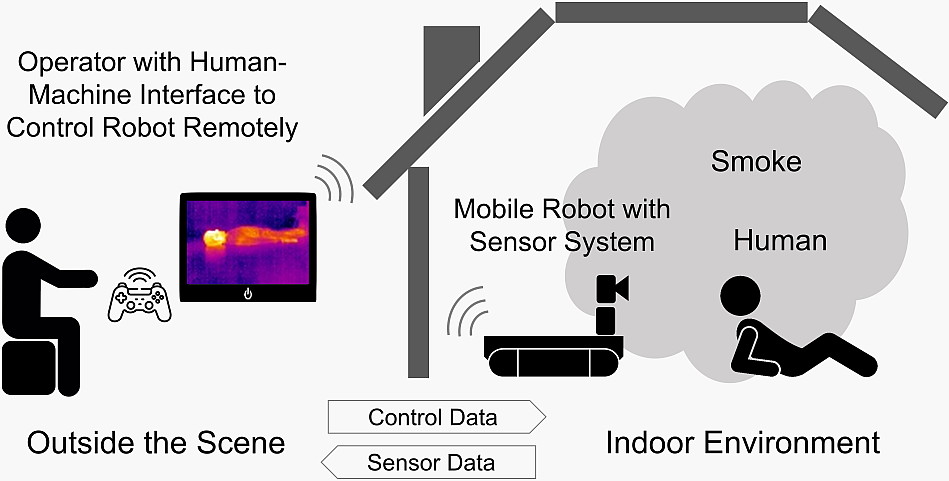

Figure 1 illustrates in more detail the human detection approach during search and rescue missions, as defined by the author of this work.

Human detection approach during search and rescue missions.

The sensor system (single or multi-sensor) is mounted on a mobile robot that moves either autonomously or under remote control by an operator outside the scene. The sensors detect humans in a regular or smoky indoor environment. The data are sent to a human–machine interface, which enables the operator to better assess whether a human is present in the indoor environment.

The article is structured as follows. First, single sensor approaches for human detection in regular and smoky environments, mainly vision and range finding sensors, are presented. The following section describes multi-sensor approaches for human detection and their application in smoky environments. Afterwards, a brief overview of the reliability of communication between the operator and the mobile robot in smoke-affected areas is given. Finally, the last section provides a conclusion and future research directions.

Single sensor approaches

This section gives an overview of various single sensor approaches in regular and smoky environments. This includes imaging and distance measuring sensors, as well as tactile and chemical sensors.

Thermal camera

Thermal cameras are commonly used to image physiological conditions of the skin surface. For clinical diagnosis or monitoring, Yoon et al. 5 examine the temperature of regions of the forearms; the objective of their work 5 is to automatically segment regions of the forearms in an infrared image and locate regions of interest. Herry et al. 6 also describe ways to automatically segment and register hands and arms in infrared images, based on mathematical morphological operations.

Human detection with a thermal camera during search and rescue missions is introduced by Königs and Schulz. 7 The authors 7 find that a simple threshold approach detects high-temperature blobs indoors and outdoors. They also report that the results degrade on warmer days and indoors under unusual circumstances, for example, in environments contaminated by fire smoke. Sharma et al. 8 describe two algorithms for detecting humans at night: one based on black-body radiation theory (hot spot detection) and one based on background subtraction. The average accuracy is 69% for the first algorithm and 79% for the second. The authors 8 note that the results depend on background conditions, for example, illumination or brightness. By comparison, another work 9 proposes an algorithm for human detection based on thermal pixel values with an accuracy of up to 90%.

An alternative approach for detecting humans in infrared images is introduced by Setjo et al. 10 The authors 10 apply a Haar classifier to detect the presence of humans in infrared images. The results show that the greater the distance between the object and the camera, the less accurate the detection, and that persons not facing the camera are also difficult to detect. The approach can detect two or more persons in the infrared image in the following arrangements: overlapping each other, one in front of another or side by side. False detections (e.g. an infrared shadow formed by reflecting glass) occur when an object with person-like attributes is sensed. Cerutti et al. 11 conduct an exploratory study on human detection in outdoor scenes using an 8 × 8 pixel thermal camera and report an accuracy of 97%.

Ivašić-Kos et al. 12 present findings of human detection on a custom data set of infrared images with the YOLOv3 convolutional neural network (CNN). Experimental tests show an improved performance for the trained model (30% average precision) compared to the original model (7% average precision). Perdana et al. 13 also use a CNN (MobileNet) to detect humans in infrared images taken from an unmanned aerial vehicle (UAV), reaching an average precision of 82.49%. Further, Hoshino et al. 14 examine human detection in infrared images taken from a UAV using a trained single shot detector (SSD) CNN. Jaradat and Valles 15 propose a human detection approach with a CNN trained on thermal images and achieve an average precision of 96.3%. Cruz Ulloa et al. 16 report an average precision of 85% using a YOLOv3 CNN based on thermal images. With a YOLOv4 CNN trained on thermal images, Tsai et al. 17 achieve an average precision of greater than 95% for the standing, sitting, lying and squatting postures of humans.
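The simple threshold approach reported by Königs and Schulz 7 can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function name `detect_hot_blobs`, the 30 °C threshold and the minimum blob size are hypothetical, and the input is assumed to be a radiometrically calibrated frame given as a 2D list of temperatures in °C. Hot pixels are grouped into 4-connected regions and reported as bounding boxes.

```python
from collections import deque


def detect_hot_blobs(temps, threshold_c=30.0, min_pixels=3):
    """Return bounding boxes (row_min, col_min, row_max, col_max) of
    4-connected regions whose pixels are hotter than threshold_c.

    temps: 2D list of temperatures in degrees Celsius (assumed calibrated).
    threshold_c and min_pixels are hypothetical tuning parameters.
    """
    rows, cols = len(temps), len(temps[0])
    seen = [[False] * cols for _ in range(rows)]
    boxes = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or temps[r][c] <= threshold_c:
                continue
            # Breadth-first flood fill over the hot region containing (r, c).
            queue = deque([(r, c)])
            seen[r][c] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx] and temps[ny][nx] > threshold_c):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            # Discard tiny blobs, which are more likely noise than a person.
            if len(pixels) >= min_pixels:
                ys = [p[0] for p in pixels]
                xs = [p[1] for p in pixels]
                boxes.append((min(ys), min(xs), max(ys), max(xs)))
    return boxes


frame = [
    [20, 20, 20, 20, 20],
    [20, 34, 35, 20, 20],
    [20, 34, 36, 20, 31],
    [20, 20, 20, 20, 20],
]
print(detect_hot_blobs(frame))  # [(1, 1, 2, 2)]; the isolated 31 °C pixel is discarded
```

The fixed threshold is the weak point of this scheme: on warm days or in hot smoke, background pixels also exceed it, which matches the degradation reported for such conditions.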

In two works, Starr and Lattimer 18,19 evaluate thermal cameras and discuss their capabilities for distance mapping and navigation in environments contaminated by fire smoke. The authors 18,19 point out that thermal cameras outperform optical cameras in thick smoke and in light smoke with high temperature. However, thermal images are noisier, although image processing can reduce the noise. Kim et al. 20 focus on a method to find hot spots in a fire smoke contaminated environment. The authors 20 analyse the statistical characteristics of infrared images and fuse the data with Bayesian estimation (calculation of fire sections). The method is then validated in large-scale fire tests under several temperature and smoke conditions. In a smoky indoor environment, the approach accurately locates hot spots in the infrared image.

Kapusta and Beeson 21 provide an approach for human detection under challenging visibility conditions. Using a 3D thermal camera, the authors 21 reach an accuracy of up to 90% at 20 fps in a smoky environment. Krišto et al. 22 use a thermal camera (320 × 240 pixels) and the YOLO CNN to detect humans in a foggy environment; the trained model achieves an average precision of 97.85% in human detection.

Optical camera

Prior work on human detection with an optical camera is mainly in the field of UAVs. 14,23–26 In addition, Liu et al. 27,28 propose, in two works, a novel approach to automatically detect humans in real time with an optical camera; experimental tests show that the system is suitable for regular environments. Xiao et al. 29 present a new human detection method using an online learning classifier, which achieves higher detection rates than 3D human detection approaches. Ghidary et al. 30 introduce another method of human detection in indoor environments. The work 30 determines the 3D position of the face and head with the depth-from-focus method, and the authors calculate the position of the person in the scene from the position of the camera and its pan and tilt angles. The approach measures with an error of less than 0.1 m in a distance range from 0.9 m to 3.4 m. Myrzin et al. 31 present a recent human detection approach that combines rotation invariant histogram of oriented gradients (RIHOG) features, binarized normed gradients processing and skin segmentation. For evaluation, RGB body images are used. The quadratic support vector machine model achieves an average precision of 90.4% in human detection, demonstrating the performance of RIHOG features for human detection.
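The last step of the approach by Ghidary et al. 30, converting a measured distance and the camera's pan and tilt angles into a position in the scene, is basic trigonometry. The following is a sketch under stated assumptions, not the authors' code: the face is centred in the image, the distance is measured along the optical axis (e.g. by depth from focus), the world frame has z pointing up, pan is measured counter-clockwise from the x-axis and tilt upward from the horizontal plane. The function name `head_position` is hypothetical.

```python
import math


def head_position(camera_xyz, pan_rad, tilt_rad, distance_m):
    """Estimate the 3D position of a face centred in the camera image.

    Assumes z points up, pan is measured counter-clockwise from the x-axis,
    tilt is measured upward from the horizontal, and distance_m lies along
    the optical axis.
    """
    cx, cy, cz = camera_xyz
    horizontal = distance_m * math.cos(tilt_rad)  # projection onto the floor plane
    return (
        cx + horizontal * math.cos(pan_rad),
        cy + horizontal * math.sin(pan_rad),
        cz + distance_m * math.sin(tilt_rad),
    )


# Camera 1.2 m above the floor, panned 90° to the left, tilted 10° up, face 2.0 m away.
x, y, z = head_position((0.0, 0.0, 1.2), math.radians(90), math.radians(10), 2.0)
print(round(x, 3), round(y, 3), round(z, 3))
```

Because the distance enters every coordinate, any error in the depth-from-focus estimate propagates directly into the reported position.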

A further study 19 evaluates the performance of visual cameras operating in the visible spectrum. The work 19 uses an edge detection method that computes the change in value between neighbouring pixels and compares each of these differences to a reference value. The experiments show that the image is substantially degraded by smoke when the visibility is about 4 m. Below a visibility of about 1 m, the method is unable to locate any features. Tests in light smoke with high temperature show no influence on the identified edges.
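The edge-based evaluation used in the study 19 can be sketched as follows: compute the intensity difference between neighbouring pixels, compare it with a reference value and count the edge pixels. To imitate smoke, the sketch blends the image towards a uniform smoke brightness using the standard haze model I = t·J + (1 − t)·A. The transmission values, the reference value of 30, the smoke brightness of 200 and the function names are assumptions for illustration, not parameters of the cited experiments.

```python
def edge_count(gray, threshold=30):
    """Count pixels whose intensity differs from their right or lower
    neighbour by more than threshold (a hypothetical reference value)."""
    rows, cols = len(gray), len(gray[0])
    count = 0
    for r in range(rows):
        for c in range(cols):
            right = abs(gray[r][c] - gray[r][c + 1]) if c + 1 < cols else 0
            down = abs(gray[r][c] - gray[r + 1][c]) if r + 1 < rows else 0
            if max(right, down) > threshold:
                count += 1
    return count


def add_smoke(gray, transmission, airlight=200):
    """Toy haze model: blend each pixel towards a uniform smoke brightness.
    Lower transmission means denser smoke and lower contrast."""
    return [[transmission * p + (1 - transmission) * airlight for p in row]
            for row in gray]


clear = [[0, 0, 100, 100] for _ in range(3)]
print(edge_count(clear))                  # 3
print(edge_count(add_smoke(clear, 0.5)))  # 3 (contrast halved, still above the reference)
print(edge_count(add_smoke(clear, 0.2)))  # 0 (contrast too low, no features found)
```

The sketch shows why a fixed reference value eventually finds nothing: smoke scales every intensity difference by the transmission, so below some visibility no difference exceeds the reference.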

Radar

Nanzer and Rogers 32 introduce a novel approach for human detection in cluttered environments using passive millimetre wave radar. The method is based on a Bayesian classification formulation and shows promising results in classifying humans and objects. Chang et al. 33 demonstrate an algorithm for human detection using an ultra-wideband impulse-based monostatic radar. Stephan and Santra 34 present a radar-based framework for precise human detection in indoor environments. In another work, Chen 35 discusses radar backscattering from a human and how to analyse the radar backscattering from a subject of interest, illustrated by an example of the backscattering from human arm and leg motions. Cui and Dahnoun 36 present a framework for person detection with a millimetre wave radar. The authors 36 report a sensitivity of over 90% in indoor environments and note that the accuracy can be improved with two radars. Bandala et al. 37 use an ultra-wideband radar to detect the presence of persons based on their respiratory movement and report an accuracy of 97.28%.
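Respiration-based detection, as used by Bandala et al. 37, typically looks for a periodic chest displacement in the slow-time radar signal. The following sketch is an assumption rather than the authors' algorithm: it removes the static reflection, computes a discrete Fourier transform in plain Python and returns the strongest frequency in a breathing band of 0.1 to 0.7 Hz (a hypothetical choice). Extracting the displacement signal from raw radar frames is assumed to have been done already.

```python
import math


def respiration_rate_bpm(signal, fs_hz, band_hz=(0.1, 0.7)):
    """Estimate the breathing rate from a slow-time radar signal.

    signal: chest displacement (or phase) samples at one range bin.
    Returns breaths per minute of the strongest spectral peak in band_hz,
    or None if the signal contains no motion at all.
    """
    n = len(signal)
    mean = sum(signal) / n
    x = [s - mean for s in signal]  # remove the static (DC) reflection
    best_freq, best_power = None, 0.0
    for k in range(1, n // 2):
        freq = k * fs_hz / n
        if not band_hz[0] <= freq <= band_hz[1]:
            continue
        # Real and imaginary parts of the k-th DFT coefficient.
        re = sum(x[t] * math.cos(2 * math.pi * k * t / n) for t in range(n))
        im = -sum(x[t] * math.sin(2 * math.pi * k * t / n) for t in range(n))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power
    return None if best_freq is None else best_freq * 60.0


fs = 10.0  # slow-time sampling rate in Hz (assumed)
chest = [0.005 * math.sin(2 * math.pi * 0.25 * t / fs) for t in range(600)]  # 60 s
print(respiration_rate_bpm(chest, fs))  # 15.0
```

A practical detector would additionally compare the peak power with a noise threshold before declaring that a person is present; that step is omitted here.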

Yamauchi 38 demonstrates how ultra-wideband radar can detect objects in hazardous environments, showing that, unlike vision or range finding systems, ultra-wideband radar can penetrate bad weather (e.g. fog) and still detect objects. Yamauchi 38 also develops algorithms that process radar data to reduce ground reflections. In the research work 'Evaluation of Navigation Sensors in Fire Smoke Environments', Starr and Lattimer 19 examine a radar (26 GHz) to quantify the performance of this range finding technology. The measured distance changes only slightly (6% with outliers) in thick smoke as well as in light smoke with high temperature. Mandischer et al. 39 introduce a strategy for navigating in low-visibility environments with radar and an efficient radar filtering process. The process consists of three steps and enables consistent noise reduction.

Sakai and Aoki 40 focus on fire rescue operations in which thick smoke blocks the view of firefighters. The authors 40 use a millimetre wave radar to build a 3D map from the reflection data and then apply a 3D grouping and labelling process for human detection. An experiment verifies the detection of humans and objects in a smoky environment.
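A 3D grouping and labelling step such as the one used by Sakai and Aoki 40 can be illustrated by quantising reflection points into voxels and labelling 26-connected groups of occupied voxels. This is a generic connected-component sketch, not the authors' method; the voxel size of 0.1 m and the function name `label_reflections` are hypothetical.

```python
import math
from collections import deque


def label_reflections(points, voxel_m=0.1):
    """Group 3D reflection points into clusters of 26-connected voxels.

    points: iterable of (x, y, z) coordinates in metres from a radar 3D map.
    Returns a list of clusters, each a list of occupied voxel indices.
    voxel_m is a hypothetical grid resolution.
    """
    occupied = {tuple(math.floor(v / voxel_m) for v in p) for p in points}
    labelled = set()
    clusters = []
    for start in sorted(occupied):
        if start in labelled:
            continue
        labelled.add(start)
        queue, cluster = deque([start]), []
        while queue:
            vx, vy, vz = queue.popleft()
            cluster.append((vx, vy, vz))
            # Visit all 26 neighbours (the 3 x 3 x 3 cube minus the voxel itself).
            for dx in (-1, 0, 1):
                for dy in (-1, 0, 1):
                    for dz in (-1, 0, 1):
                        nb = (vx + dx, vy + dy, vz + dz)
                        if nb in occupied and nb not in labelled:
                            labelled.add(nb)
                            queue.append(nb)
        clusters.append(cluster)
    return clusters


points = [(0.05, 0.05, 0.05), (0.05, 0.05, 0.15), (0.05, 0.05, 0.25),  # vertical column
          (1.05, 1.05, 0.05)]                                         # separate object
clusters = label_reflections(points)
print(sorted(len(c) for c in clusters))  # [1, 3]
```

A subsequent classification step could, for example, compare the vertical extent of each cluster with human dimensions; such rules are not specified in the source.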

LIDAR

Taipalus and Ahtiainen 41 introduce a novel algorithm for human detection with a 2D light detection and ranging (LIDAR) system. The LIDAR scans at knee height and receives echoes from human legs. The authors 41 cluster the reflections and classify them using a catalogue of predefined characteristics. If two clusters meet the defined requirements (both classified as legs and close together), a human object is created.

Experimental tests demonstrate that the algorithm can detect humans in regular indoor environments. Lucian et al. 42 also present an approach for human detection by counting pairs of legs and achieve a detection rate of 96.8%. Another work 43 addresses human detection with a 3D LIDAR system mounted on a UAV. Based on a CNN, the authors 43 propose an approach that evaluates point clouds from the 3D LIDAR system. The algorithm consists of three elements: data pre-processing, data post-processing and person classification. Yan et al. 44 introduce an enhanced learning framework for human detection with 3D LIDAR. Experiments show that the human classifier created by the authors 44 outperforms other solutions in terms of accuracy.
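The leg-pair idea behind Taipalus and Ahtiainen 41 (and the leg counting of Lucian et al. 42) can be sketched in three steps: split a knee-height 2D scan into clusters at range jumps, keep clusters whose width matches a leg, and pair neighbouring leg clusters that are close together. The thresholds below (0.1 m jump, 0.05 to 0.25 m leg width, 0.5 m pair gap) and the function name are hypothetical, not the catalogue of characteristics used in the cited work.

```python
import math


def detect_leg_pairs(scan, angle_min, angle_inc, jump_m=0.1,
                     leg_width=(0.05, 0.25), max_pair_gap=0.5):
    """Find people as pairs of leg-sized clusters in a 2D LIDAR scan.

    scan: list of ranges in metres, one per beam (knee height assumed).
    Returns the (x, y) midpoints between paired leg clusters.
    All thresholds are hypothetical.
    """
    pts = [(r * math.cos(angle_min + i * angle_inc),
            r * math.sin(angle_min + i * angle_inc))
           for i, r in enumerate(scan) if math.isfinite(r)]
    # 1. Start a new cluster wherever consecutive points jump apart.
    clusters = []
    for p in pts:
        if clusters and math.dist(clusters[-1][-1], p) <= jump_m:
            clusters[-1].append(p)
        else:
            clusters.append([p])
    # 2. Keep clusters whose end-to-end width matches a leg; store centroids.
    legs = []
    for c in clusters:
        if leg_width[0] <= math.dist(c[0], c[-1]) <= leg_width[1]:
            legs.append((sum(p[0] for p in c) / len(c),
                         sum(p[1] for p in c) / len(c)))
    # 3. Pair neighbouring leg candidates that are close together.
    people, i = [], 0
    while i < len(legs) - 1:
        a, b = legs[i], legs[i + 1]
        if math.dist(a, b) <= max_pair_gap:
            people.append(((a[0] + b[0]) / 2, (a[1] + b[1]) / 2))
            i += 2
        else:
            i += 1
    return people


# Synthetic scan: wall at 4 m with two legs at 2 m in front of it.
scan = [4.0] * 30 + [2.0] * 6 + [4.0] * 10 + [2.0] * 6 + [4.0] * 30
people = detect_leg_pairs(scan, angle_min=-0.41, angle_inc=0.01)
print(len(people))  # 1
```

The pairing step is what separates a person from a single table or chair leg of similar width; a real system would also track candidates over time.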

In an experiment, Yamauchi 38 shows that LIDAR cannot penetrate thick smoke for object detection. A further work 19 evaluates two LIDAR systems (single-echo and multi-echo) operating at 905 nm. The results show that when smoke reduces the visibility to 4 m, the distance measurement is affected. When smoke reduces the visibility to around 1 m, a LIDAR system returns the distance to the bottom of the smoke layer and cannot detect the object's upper edges. In light smoke with high temperature, a LIDAR system returns results comparable to those in regular environments. The outcomes show that LIDAR is not capable of accurate range finding in thick smoke. Similarly, Pascoal et al. 45 review various LIDAR systems. In regular environments (adequate visibility), LIDAR systems have high accuracy and linearity, particularly after warming up. In thick smoke, LIDAR provides erroneous or saturated outputs.

Sonar

Blumrosen et al. 46 present a wideband sonar system for human detection in indoor environments. The system can also classify different human activities. An alternative method for face detection using sonar is presented by Miao et al. 47 The authors 47 use different configurations of transmitter–receiver pairs and demonstrate that the system achieves a detection rate of up to 99%.

Another work 19 evaluates sonar systems at 42 and 50 kHz. In thick smoke or light smoke with high temperature, the sonar systems show an error of 10%–20%.
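Sonar ranging converts the round-trip echo time into distance using the speed of sound, which itself depends on air temperature. The sketch below uses the ideal-gas approximation c ≈ 331.3·√(1 + T/273.15) m/s for dry air and ignores smoke composition; it only illustrates how a sensor that assumes room temperature would misjudge range in hot gas. Attributing part of the reported 10%–20% error to this effect is an assumption made here, not a finding of the cited work 19, and the function names are hypothetical.

```python
import math


def speed_of_sound_mps(air_temp_c):
    """Approximate speed of sound in dry air (ideal-gas model)."""
    return 331.3 * math.sqrt(1.0 + air_temp_c / 273.15)


def sonar_range_m(echo_time_s, assumed_temp_c=20.0):
    """Range from a round-trip ultrasonic echo: half the travelled path."""
    return speed_of_sound_mps(assumed_temp_c) * echo_time_s / 2.0


# A target 2.0 m away in 100 °C gas produces this echo time...
true_time = 2 * 2.0 / speed_of_sound_mps(100.0)
# ...which a sensor that assumes 20 °C air converts into a shorter range.
estimate = sonar_range_m(true_time, assumed_temp_c=20.0)
print(f"estimated {estimate:.2f} m, error {100 * (2.0 - estimate) / 2.0:.1f}%")
```

Under these assumptions the range is underestimated by roughly 11%, so temperature compensation would be a prerequisite for using sonar near a fire.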

Tactile sensor

Omata and Terunuma 48 develop a tactile sensor with a piezoelectric element for human detection and demonstrate excellent performance. Like human touch, the sensor distinguishes differences in the hardness of soft tissue. Moromugi et al. 49 also develop a tactile sensor to estimate the hardness of human soft tissue. The sensor can measure hardness at any pressing force and automatically removes unwanted effects caused by variations in the pressing force. It achieves a standard deviation of 0.447 under fluctuating pressing force, compared to 0.7 for a conventional durometer measurement under controlled pressing force. Two further works 50,51 also examine tactile sensors and successfully demonstrate the proposed detection principles in evaluation experiments. By reducing the contact force and optimizing the layout of the reference plane, the authors 50,51 suppress the hardness error to a Shore A hardness of 4 (contact force below 1 N). This result relates to the ability to detect the hardness of fat.

Chemical sensor

Güntner et al. 52 present a compact and orthogonal sensor array for detecting the skin- and breath-emitted metabolic tracers ammonia, acetone, CO2 and isoprene. It contains three nanostructured metal-oxide sensors, each customized at the nanoscale for selective and sensitive tracer detection, together with commercial humidity and CO2 sensors. The sensor array rapidly detects ammonia, isoprene and acetone with high precision. Kamal et al. 53 introduce another human detection approach based on various ambient gas parameters. The authors 53 perform experiments under controlled conditions and accomplish the task with several machine learning algorithms (RandomForest, Bagging, J48 and IBk), achieving an accuracy of up to 95%.
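IBk, one of the algorithms used by Kamal et al. 53, is the Weka name for a k-nearest-neighbour classifier. The following sketch shows the underlying idea in plain Python with min-max scaled features. The feature set (CO2 in ppm, relative humidity in %, temperature in °C), the training values, k = 3 and the function name are entirely hypothetical and are not data from the cited study.

```python
import math
from collections import Counter


def knn_predict(train_x, train_y, query, k=3):
    """Classify query by majority vote of its k nearest training samples,
    using Euclidean distance on min-max scaled features."""
    n_feat = len(query)
    lo = [min(row[j] for row in train_x) for j in range(n_feat)]
    hi = [max(row[j] for row in train_x) for j in range(n_feat)]

    def scale(row):
        # Scale each feature to [0, 1] so that ppm values do not dominate.
        return [(row[j] - lo[j]) / (hi[j] - lo[j]) if hi[j] > lo[j] else 0.0
                for j in range(n_feat)]

    q = scale(query)
    dists = sorted((math.dist(scale(row), q), label)
                   for row, label in zip(train_x, train_y))
    votes = Counter(label for _, label in dists[:k])
    return votes.most_common(1)[0][0]


# Hypothetical readings: (CO2 ppm, relative humidity %, temperature °C).
train_x = [(420, 40, 21), (430, 41, 21), (415, 39, 20),   # room empty
           (900, 55, 23), (950, 58, 24), (870, 53, 23)]   # person present
train_y = ["empty"] * 3 + ["occupied"] * 3
print(knn_predict(train_x, train_y, (880, 54, 23)))  # occupied
```

Scaling matters here because, without it, the CO2 values in ppm would dominate the distance and the humidity and temperature features would be ignored.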

Table 1 summarizes the prior work of single sensor approaches reviewed in this section.

Overview of single sensor approaches.

– no prior work; × not possible.

Table 1 shows that single sensor approaches are mainly examined in regular environments. Comparing the number of prior works in regular and smoky environments shows where the research focus lies.

Overall, the most suitable single sensor approach for human detection in regular environments is a thermal camera. A thermal camera offers the advantage that the human temperature can be clearly distinguished from the background temperature. Although the results show that the accuracy of human detection decreases with distance from the human, this approach offers an accuracy of up to 90% at 13 m. Current trends point towards deep learning approaches for automatically detecting humans in various environments.

An optical camera is another promising approach for human detection in regular environments. The latest approaches examine automatic real-time human detection using online learning classifiers. To date, prior work does not characterize the effects of real-world factors on system performance, such as cluttered background conditions.

Compared with radar and sonar, LIDAR is the most accurate single range finding approach for human detection in regular environments. Experiments show that the shape of a human can be detected with this approach, with an accuracy of greater than 90%. However, this range finding approach has several disadvantages. For instance, an ideal human detection system should be able to distinguish humans from objects with similar shapes and surface textures in the same scene.

Radar is the most accurate single range finding approach for human detection in smoky environments. Experiments show that radar provides less than 6% change in distance in thick smoke as well as in light smoke with high temperature. However, the approach has the disadvantage that no off-the-shelf radar systems are available.

In smoky environments, thermal cameras are the most promising single sensor approach for detecting hot objects. Various experiments show that thermal cameras detect hot spots in thick smoke. This type of sensor outperforms optical camera systems in thick smoke and in light smoke with high temperature. However, the approach has several disadvantages: thermal cameras generate more noise, and they cannot distinguish between objects and humans in the same temperature range.

Multi-sensor approaches

This section gives an overview of multi-sensor approaches in regular and smoky environments. This includes the multi-sensor fusion of imaging and distance measuring sensors.

Radar and LIDAR

A novel work 54 presents a fusion approach using radar and LIDAR, aimed specifically at human detection. The approach comprises object detection, human detection and occluded depth generation. The multi-sensor system detects humans by merging human features from LIDAR with the Doppler radar distribution. Another work 55 presents a sensor fusion scheme for detecting partially occluded humans.

A further work 56 investigates techniques for fusing sensor data from LIDAR and ultra-wideband radar, in which data from multiple sensors are blended into a single representation. The smoke detection algorithm uses LIDAR to check whether the robot is surrounded by smoke. If so, the radar-based 'obstacle avoidance behaviour' is activated; if not, the higher-performance LIDAR algorithm is used. Compared to a single sensor approach, this approach provides robustness to environmental unpredictability. Further works 57–59 examine the fusion of radar and LIDAR data in more detail. The first work 57 describes a fusion strategy that reduces the negative influence of thick smoke on LIDAR by introducing a high-bandwidth 2D radar. The authors 57 also introduce a LIDAR–radar ratio, which correlates with the amount of aerosols in the surroundings. The second work 58 introduces two ways of merging LIDAR and radar data to achieve simultaneous localization and mapping in low-visibility environments. The third work 59 focuses on optimizing the quality of the map. In addition, the authors 59 model the concentration of aerosols with combined radar and LIDAR data, using a finite model.
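The switching logic of the work 56 and the LIDAR–radar ratio of the work 57 can be combined into a small illustrative sketch. The cited works define their smoke check and ratio in their own way; here it is simply assumed that LIDAR and radar ranges are available for beams pointing in the same directions, that the median of their ratio serves as the smoke indicator and that a ratio below a hypothetical threshold of 0.8 means smoke. The function names are also hypothetical.

```python
from statistics import median


def lidar_radar_ratio(lidar_ranges, radar_ranges):
    """Median ratio of LIDAR to radar range for beams pointing the same way.

    In clear air both sensors hit the same obstacles (ratio close to 1);
    in smoke the LIDAR returns early from the aerosol cloud, so the ratio drops.
    """
    ratios = [lid / rad for lid, rad in zip(lidar_ranges, radar_ranges) if rad > 0]
    if not ratios:
        raise ValueError("need at least one valid radar range")
    return median(ratios)


def select_navigation_mode(lidar_ranges, radar_ranges, smoke_threshold=0.8):
    """Use radar-based obstacle avoidance when smoke is suspected, else LIDAR."""
    if lidar_radar_ratio(lidar_ranges, radar_ranges) < smoke_threshold:
        return "radar"
    return "lidar"


print(select_navigation_mode([3.0, 4.0, 5.0], [3.05, 3.95, 5.1]))  # lidar
print(select_navigation_mode([0.6, 0.5, 0.7], [3.0, 4.0, 5.0]))    # radar
```

Using the median rather than the mean keeps a single spurious radar or LIDAR return from flipping the mode.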

Majer et al. 60 present another multi-sensor system with ultra-wideband radar and LIDAR. In numerous experiments, the authors 60 demonstrate that the fusion approach not only improves performance but also efficiently overcomes sensing failures caused by thick smoke.

Thermal camera and optical camera

Han and Bhanu 61 propose a scheme to automatically find the correspondence between human silhouettes extracted from infrared and RGB images. The method achieves good results for human shape extraction and image registration and improves performance compared to non-fused sensors. In two works, Kang et al. 62,63 present a new method for human detection by merging RGB and infrared images. The authors 62,63 use a normalized cross-correlation of average histogram algorithm to analyse the relationship between infrared and RGB data. Experiments show that the approach detects multiple humans in real time. Correa et al. 64 present a robot that uses an infrared camera to detect humans and an optical camera to acquire a video of the scene. Scebba et al. 65 explore a method for the automatic detection of the human nose, based on facial landmark detection in merged infrared and RGB images. The authors 65 evaluate the detection rate and the precision of the algorithm on several subjects under challenging conditions. Experiments show good spatial accuracy (an average root-mean-square error of two pixels) and a high detection rate (up to 92%). Brunner et al. 66 find that optical cameras degrade the detection accuracy compared to thermal cameras. Gupta 67 uses infrared images for extracting local features and RGB images for skin detection. The skin detection is conducted with a 'non-parametric histogram-based trained skin pixel likelihood model'. Three feature descriptors (SIFT, SURF, HOG) are examined for human detection in infrared images. The results show that human detection in thermal images is more suitable than skin detection in RGB images. Dawdi et al. 68 present a human detection approach using shape template matching, in which the authors 68 blend RGB images into thermal images in real time, processed on board a UAV.
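Normalized cross-correlation, the measure at the core of the approach by Kang et al. 62,63, scores how linearly related two signals are independently of their offset and scale, which is useful because infrared and RGB intensities differ in absolute level. The sketch below computes zero-mean normalized cross-correlation for two equal-length sequences, for example, flattened image patches or intensity histograms. The 'average histogram' variant of the cited works is not reproduced, and the example histograms are hypothetical.

```python
import math


def normalized_cross_correlation(a, b):
    """Zero-mean normalized cross-correlation of two equal-length sequences
    (e.g. flattened image patches or histograms). Returns a value in [-1, 1],
    or 0.0 if either input is constant."""
    if len(a) != len(b) or not a:
        raise ValueError("inputs must be non-empty and of equal length")
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    da = [x - ma for x in a]
    db = [y - mb for y in b]
    num = sum(x * y for x, y in zip(da, db))
    den = math.sqrt(sum(x * x for x in da) * sum(y * y for y in db))
    return num / den if den > 0 else 0.0


thermal_hist = [2, 8, 15, 6, 1]
rgb_hist = [20, 80, 150, 60, 10]  # same shape, ten times the scale
print(round(normalized_cross_correlation(thermal_hist, rgb_hist), 6))  # 1.0
```

Because the mean is subtracted and the result is divided by both standard deviations, a uniform gain or brightness offset in one modality does not change the score.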

Thermal camera and LIDAR

Gleichauf et al. 69 present a triangular calibration approach for a thermal camera and a 2D LIDAR. The authors 69 assign each laser measurement within the field of view to a corresponding thermal pixel. The system is verified for distances of up to 10 m. Another work 70 proposes a specific application of a thermal camera and a LIDAR by mounting the sensors on a UAV.
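Assigning a laser measurement to a thermal pixel, as done by Gleichauf et al. 69, amounts to transforming the LIDAR point into the camera frame and projecting it with the camera model. The sketch below assumes a pinhole camera whose image row coincides with the scan plane, so only the column is computed; the extrinsic parameters (`yaw`, `tx`, `ty`), the intrinsics (`fx`, `cx`) and the function name are hypothetical, and the triangular calibration procedure that would estimate them is not reproduced.

```python
import math


def lidar_to_thermal_pixel(r, theta, yaw, tx, ty, fx, cx, width):
    """Map one 2D LIDAR return to a thermal image column.

    r, theta: range (m) and bearing (rad) in the LIDAR frame (x forward, y left).
    yaw, tx, ty: hypothetical pose of the camera in the LIDAR frame.
    fx, cx: horizontal focal length and principal point (pixels).
    Returns the column index, or None if the point is outside the field of view.
    """
    px, py = r * math.cos(theta), r * math.sin(theta)
    # Express the point in the camera frame: translate, then rotate by -yaw.
    dx, dy = px - tx, py - ty
    c, s = math.cos(yaw), math.sin(yaw)
    forward = c * dx + s * dy
    left = -s * dx + c * dy
    if forward <= 0:
        return None  # behind the camera
    # Pinhole projection: image x grows to the right, i.e. opposite to "left".
    col = int(round(fx * (-left) / forward + cx))
    return col if 0 <= col < width else None


print(lidar_to_thermal_pixel(2.0, 0.1, yaw=0.0, tx=0.0, ty=0.0,
                             fx=200.0, cx=160.0, width=320))  # 140
```

A full calibration would also account for the vertical offset between the scan plane and the image rows and for lens distortion; both are omitted here.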

Starr 71 develops a sensor fusion system with long-wavelength stereo infrared and a spinning LIDAR. The data are fused in a multi-resolution 3D voxel domain, and evidential theory is used to model free and occupied states. Furthermore, Starr 71 presents a heuristic approach to separate attenuated LIDAR signals from low-attenuation signals. Further works 72,73 present an approach combining LIDAR and stereo infrared for improved range finding in smoky environments. The approach allows range finding in regular and smoky environments, relying on the ability of thermal cameras to operate in smoke and on the accuracy of LIDAR in regular environments. The authors 73 combine the sensor data in a 3D multi-resolution voxel domain for free and occupied states. Experiments in an indoor scenario with light smoke, thick smoke and fire show that the system is suitable for regular and smoky environments. LIDAR does not provide any data in thick smoke.
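Evidential (Dempster–Shafer) theory, which Starr 71 uses to model free and occupied space, represents each sensor's belief about a voxel as masses on 'free', 'occupied' and 'unknown' (the whole frame). A minimal sketch of Dempster's rule of combination for a single voxel follows. The dictionary keys, the function name and the example masses for the thermal stereo and LIDAR readings are hypothetical and do not reproduce the cited sensor models.

```python
def combine_dempster(m1, m2):
    """Combine two mass functions over {free, occupied} with Dempster's rule.

    Each mass function is a dict with keys 'free', 'occ' and 'unknown'
    (mass on the whole frame); the three values sum to 1.
    """
    # Conflict: one source says free while the other says occupied.
    conflict = m1["free"] * m2["occ"] + m1["occ"] * m2["free"]
    if conflict >= 1.0:
        raise ValueError("total conflict: sources cannot be combined")
    norm = 1.0 - conflict
    return {
        "free": (m1["free"] * m2["free"] + m1["free"] * m2["unknown"]
                 + m1["unknown"] * m2["free"]) / norm,
        "occ": (m1["occ"] * m2["occ"] + m1["occ"] * m2["unknown"]
                + m1["unknown"] * m2["occ"]) / norm,
        "unknown": m1["unknown"] * m2["unknown"] / norm,
    }


thermal = {"free": 0.0, "occ": 0.6, "unknown": 0.4}  # stereo IR sees a surface
lidar = {"free": 0.2, "occ": 0.0, "unknown": 0.8}    # weak evidence in smoke
fused = combine_dempster(thermal, lidar)
print({k: round(v, 3) for k, v in fused.items()})
# {'free': 0.091, 'occ': 0.545, 'unknown': 0.364}
```

Unlike a single occupancy probability, the explicit 'unknown' mass lets a sensor that is blinded by smoke contribute little evidence instead of wrongly reporting free space.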

Thermal camera and radar

Davidson et al. 74 demonstrate the fusion of real data from a thermal camera and radar. The authors 74 describe a solution to detect objects in 3D, including signal processing of the subsystems and fusion. Quaranta et al. 75 propose an algorithm for the fusion of 2D radar and thermal camera data. Ulrich et al. 76 examine human detection based on radar and thermal imaging. Their multi-sensor fusion approach first defines bounding boxes of candidates for mirrored or real humans in thermal images. These boxes are then associated with radar targets and classified. Radar is used to distinguish mirrored from real humans, which is challenging with thermal cameras alone. The authors 76 demonstrate the performance of the approach with real measurements. Bao et al. 77 propose an algorithm for a radar and a thermal camera based on an interacting multiple model and an unscented Kalman filter, which reduces the radar radiation time.

Kim et al. 78 develop a multi-sensor system that fuses a stereo infrared camera with a frequency modulated continuous wave (FMCW) radar to detect targets in low visibility in real time. The stereo infrared camera provides 3D location information, while the radar provides accurate distance data. By matching the radar data with the 3D thermal image, the accuracy of the stereo infrared camera is improved. Fire tests with and without smoke demonstrate that fusion with the FMCW radar reduces the distance error of the stereo infrared camera.
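The two measurements combined by Kim et al. 78 follow standard relations: stereo depth Z = f·B/d from focal length f (pixels), baseline B and disparity d, and FMCW range R = c·f_b/(2S) from beat frequency f_b and chirp slope S = bandwidth/chirp duration. How the cited system merges them is not detailed here, so the sketch uses inverse-variance weighting as an assumed, generic fusion rule; all numbers and function names are hypothetical.

```python
C = 299_792_458.0  # speed of light in m/s


def stereo_depth_m(focal_px, baseline_m, disparity_px):
    """Pinhole stereo depth: Z = f * B / d."""
    return focal_px * baseline_m / disparity_px


def fmcw_range_m(beat_hz, bandwidth_hz, chirp_s):
    """FMCW range from the beat frequency: R = c * f_b / (2 * S), S = B / T."""
    slope = bandwidth_hz / chirp_s
    return C * beat_hz / (2.0 * slope)


def fuse(z1, var1, z2, var2):
    """Inverse-variance weighted estimate and its variance."""
    w1, w2 = 1.0 / var1, 1.0 / var2
    return (w1 * z1 + w2 * z2) / (w1 + w2), 1.0 / (w1 + w2)


z_ir = stereo_depth_m(focal_px=400.0, baseline_m=0.2, disparity_px=20.0)
r_radar = fmcw_range_m(beat_hz=25_350.0, bandwidth_hz=1e9, chirp_s=1e-3)
# Assumed uncertainties: 0.5 m for stereo IR, 0.1 m for radar (standard deviations).
fused, var = fuse(z_ir, 0.5 ** 2, r_radar, 0.1 ** 2)
print(round(z_ir, 2), round(r_radar, 2), round(fused, 2))
```

The fused estimate leans towards the more precise radar range, and its variance is smaller than that of either input. Stereo depth error also grows roughly with Z², which is one reason an accurate radar range is most valuable at larger distances.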

Optical camera and radar

Streubel et al. 79 introduce another novel approach that fuses stereo optical and radar data to significantly improve the tracking of humans in an indoor environment. The authors 79 report that the proposed fusion system achieves an accuracy of 90.3%, compared to 83.4% for the optical camera alone and 81.8% for the radar alone. Ivanovs et al. 80 explore a new sensor configuration for autonomous human detection and contact-free monitoring of respiration using a combination of an optical camera and an ultra-wideband radar. In the proposed approach, a CNN processes the camera data to detect humans in real time; once a human is detected, the radar is used to monitor respiration. Experiments with humans in different poses show that the suitable detection range is 4 m in regular environments.

Jiang et al. 81 present an object detection approach that fuses millimetre wave radar and visual data collected in a smoky environment. Experimental results demonstrate the feasibility of the algorithm.

Thermal camera, radar and LIDAR

Fritsche et al. 82 describe the merging of LIDAR, thermal and radar data for object detection in environments with minimal visibility. The authors 82 merge radar and LIDAR scans in a simultaneous localization and mapping (SLAM) approach that generates a map of the environment. Additionally, they create scans of heated obstacles by combining the merged radar and LIDAR data with infrared images.

Table 2 summarizes the prior work of multi-sensor approaches reviewed in this section.

Overview of multi-sensor approaches.

– no prior work.

Thermal cameras, in combination with an additional sensor, seem to be the most commonly used approach for human detection in regular environments. Results show a detection rate of up to 92% when a thermal camera is used to detect humans and an optical camera to acquire the scene. In smoky environments, stereo thermal cameras deliver 3D scene information, while radar provides accurate distance information within the field of view.

Comparing Table 2 with the single sensor approaches listed in Table 1 shows that current prior work on sensor fusion does not leverage the full potential of the respective sensor combinations. Human detection, especially in smoky environments, therefore needs closer attention and represents a gap in research.

Challenges in communication

In this section, a short overview of challenges in communication between the operator and the mobile robot is given.

Sheridan 83 reviews the status and challenges of human–robot interaction. Among other challenges, the author 83 highlights compensating for dropouts and delays in communication.

Tadokoro 84 presents challenges for future mobile robots and points out that wireless communication is one of them. In this context, the author 84 mentions stability, bandwidth and latency when transferring large quantities of data between the operator and the mobile robot.

Liu et al. 85 also identify the challenges in robot-assisted firefighting as well as future trends for efficient and smart operations. For instance, the authors 85 mention the challenge of providing precise real-time information for targeted decision-making.

Orita et al. 86 design a wireless communication device for use in smoky environments, in which multiple devices dropped along the path of the mobile robot form a wireless ad hoc network.

Conclusion and future work

This article provides a compact overview of sensors for search and rescue robots that assist firefighters in detecting humans. It covers single sensor and multi-sensor approaches for human detection in regular and smoky environments and presents prior work and novel algorithms. It also gives a brief overview of challenges in communication between the operator and the mobile robot.

In general, the number of methods for detecting humans during search and rescue missions in hazardous environments has increased in recent years, indicating a growing field of research. In particular, the latest trends towards deep learning approaches with thermal cameras and towards multi-sensor systems confirm the demand for rescue robots with intelligent sensors that can automatically detect humans in smoky indoor environments. Furthermore, an overview of sensors that detect humans under rubble in dusty, earthquake-affected areas would be of interest.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.