Abstract

Accurate identification of driving intention and reasonable control of driver behavior are seen as important means of reducing human-caused traffic accidents for intelligent vehicles. However, the influence of driving emotion on intention identification has received very little attention. To uncover the emotional impact on driving intention identification, the car-following condition was taken as an example, and multi-source, dynamic human–vehicle–environment data under different driving emotional states were obtained through emotion induction, actual driving, and virtual driving experiments. Feature extraction and dynamic identification models based on rough set theory and a back-propagation artificial neural network were built to recognize driving intentions. The results showed that driving intention identification differed across emotional modes, mainly in the complexity and accuracy of the intention feature vectors. The rationality and validity of the intention feature extraction and identification models were verified by actual driving experiments, virtual driving experiments, and interactive simulation experiments. The results can provide a theoretical basis for research on emotional intelligence in advanced vehicles, driving assistance systems, and unmanned vehicles.

Introduction

Traffic accidents constitute a global social and economic problem. Human factors play a more important role in traffic accidents than vehicle, road, and other factors.1,2 Consequently, research on driver behavior has attracted particular interest in the domain of traffic safety.3–6 Relevant research showed that 90% of rear-end collisions and 60% of frontal collisions could be avoided if the driver realized the danger and took effective measures 1 s in advance.7,8 Reasonable control of driving behavior is therefore an important means of reducing traffic accidents and improving traffic safety. Driving Safety Alerting (DSA), an important module of the Advanced Driver Assistance System (ADAS), is one of the effective techniques for preventing human-caused traffic accidents. 9 Accurate understanding of the driver’s intention is a key step for DSA. However, the internal microscopic mechanism of driving intention is mostly ignored in present alerting systems. Driving intention identification models are built on the assumption that the same intention is produced whenever drivers face the same traffic situation. In other words, all drivers are assumed to be in the most rational state and always to make the optimal behavioral decision. Obviously, this assumption is contrary to reality. A large body of research in the fields of consumption,10,11 medicine,12,13 education, 14 transportation,15–17 environmental protection, 18 criminology, 19 and dietary behavior 20 has indicated that affect is not a negligible factor in research on human behavior. In the field of transportation, A Hayley et al. 21 studied the relation between the individual dimensions of emotional intelligence and risky driving behavior.
The results revealed that “Risky Driving” was related to greater levels of Emotional Recognition and Expression and lesser Age, and that Negative Emotions, a sub-scale of the Dula Dangerous Driving Index, was significantly predicted by Emotional Control and Age. A mediation model incorporating Age, Emotional Control scores, and the Negative Emotions driving behavior score indicated a significant indirect effect of Age through Emotional Control. D Herrero-Fernández and S Fonseca-Baeza 22 found slight gender differences in drivers’ angry thoughts, with women scoring higher than men, and found that younger drivers generally had higher scores than older drivers. They also discovered several mediation effects of aggressive driving and risky driving on the relationship between aggressive thinking and crash-related events. S Oltedal and T Rundmo 23 argued that anxiety was significantly correlated with excitement-seeking and risky driving behavior, and that excitement-seeking was significantly correlated with risky driving behavior and collisions. L Precht et al. 24 pointed out that anger resulted in more frequent aggressive driving behaviors but did not increase driving error frequency; anger consequently creates danger through deliberate behaviors rather than through cognitive overload. TY Hu et al. 25 indicated that negative emotions while driving were associated with elevated risk perception, while positive ones were linked to lower risk perception. M Chan and A Singhal 26 demonstrated that emotions diverted drivers’ attention from the driving task to emotional stimuli, reducing the attention and information processing critical for driving performance. Intention is the psychological variable most closely related to real behavior 27 and is a highly accurate index for predicting human behavior. 28 In recent years, the influence of emotion on intention has also attracted attention.
In the consumption area, the regulating effect of emotion on consumption intention was studied, and new ideas were provided for formulating scientific and personalized emotional marketing strategies.29–32 In the field of medicine, the intention characteristics of specific patients under the effect of emotion were studied. 33 In group environment research, the influences of guilt, anger, and pride on group intention were studied. 34 C Ma et al. 35 analyzed the effect of aggressive driving behavior on driver injury severity by considering a comprehensive set of variables at highway-rail grade crossings in the United States. J Bernstein et al. 36 examined the factor structure of several self-report measures frequently used to assess driving-related behaviors and emotions. X Wang et al. 37 designed emotion induction experiments, actual driving experiments, and virtual driving experiments to obtain multi-source, dynamic human–vehicle–environment data under drivers’ different emotional states. The main influencing factors of typical driving emotions were extracted with factor analysis, and an emotion identification model was established based on fuzzy comprehensive evaluation and the Pleasure, Arousal, and Dominance (PAD) emotional model, so that the emotions of joy, anger, sadness, and fear could be identified online. L Precht et al. 38 studied the influence of anger on driving behavior based on naturalistic driving data. The results showed that although anger did not increase the frequency of driving mistakes, it led to more frequent acts of aggression, and that violent anger was often accompanied by more aggressive behavior than mild anger. In addition, the action mechanism of the emotion–intention chain was studied based on evolution theory. 24

As mentioned above, the influence of emotion on driving intention cannot be ignored in driving behavior research. To a certain extent, a driver’s intelligence is reflected in driving intention identification models, and a model that does not consider emotion cannot reflect how drivers actually make behavioral decisions. In view of this, feature extraction and dynamic identification models based on rough set theory (RST) and a back-propagation (BP) artificial neural network were built to recognize driving intentions, and the differences in driving intention identification under different emotional states were studied in depth.

Methodology

Feature extraction based on RST

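The essence of driving intention feature extraction is attribute reduction. According to RST, an information system can be represented in the standard form as the four-tuple $S = (U, A, V, f)$, where $U$ is the non-empty finite universe of objects, $A = C \cup D$ is the attribute set made up of the condition attributes $C$ and the decision attributes $D$, $V$ is the set of attribute values, and $f: U \times A \to V$ is the information function that assigns a value to each attribute of each object.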
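For reference, the quantities on which RST attribute reduction conventionally relies can be written as follows. This is the textbook formulation, not a reproduction of the equations used in this study; the symbols $U$ (universe of samples), $C$ (condition attributes), $D$ (decision attribute, here the driving intention), and $f$ (information function) are assumed notation.

```latex
\begin{gather}
% Indiscernibility relation induced by an attribute subset B \subseteq C
IND(B) = \{ (x, y) \in U \times U : f(x, a) = f(y, a) \ \ \forall a \in B \} \\
% Positive region: samples whose B-equivalence class [x]_B lies inside one decision class
POS_B(D) = \bigcup_{X \in U / IND(D)} \{ x \in U : [x]_B \subseteq X \} \\
% Dependency degree of D on B
\gamma_B(D) = \frac{|POS_B(D)|}{|U|} \\
% R \subseteq C is a reduct when it preserves the dependency and is minimal
\gamma_R(D) = \gamma_C(D), \qquad \gamma_{R \setminus \{a\}}(D) < \gamma_R(D) \ \ \forall a \in R
\end{gather}
```

Under this formulation, a feature vector of driving intention corresponds to a reduct $R$: the smallest set of driver, vehicle, and environment attributes that classifies intention as well as the full attribute set does.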

Identification model of driving intention based on BP neural network

A BP neural network is a multi-layer feed-forward network that can learn and store a large number of input–output mapping relationships. A neural network is a parallel, distributed structure for information processing. BP neural networks are widely used in traffic safety 39 and many other fields. 40 Generally, such a network is composed of neurons, each of which has only one output but can be connected to many other neurons. Each neuron input has multiple connection paths, and each connection path corresponds to a connection weight coefficient. The structure diagram of the BP neural network is shown in Figure 1, where

Structure of BP neural network.

A standard three-layer BP network was selected in this article. The feature vectors of driving intention under different emotional states, obtained from RST, were taken as the input layer. The three kinds of driving intention in the car-following condition were taken as the output layer. Empirical formulas and machine learning were used to determine the number of nodes in the hidden layer. The selected empirical formulas were

Data acquisition

Experiment material and equipment

Emotional stimulus materials used in this experiment mainly included International Affective Picture System (IAPS) and Chinese Affective Picture System (CAPS). IAPS is the authoritative emotional stimulus material in the world. CAPS is the emotional stimulus material that adapts to unique Chinese social and cultural background. The stimulus materials included visual stimulus materials (words and pictures), auditory stimulus materials (emotional music), and multi-channel stimulating materials (video). The experiment equipment included comprehensive experiment vehicle for road traffic (equipped with high precision positioning system, multi-function speed measuring instrument, microwave radar, laser radar, video capture system, etc.), high simulation vehicle virtual driving platform, and so on. A part of the emotional stimulus materials and experiment equipment are, respectively, shown in Figure 2.

Part of emotional stimulus materials and experiment equipment: (a) part of driving emotional visual stimulus materials, (b) emotional pictures materials of angry faces, (c) comprehensive experiment vehicle for road traffic, and (d) high simulation vehicle virtual driving platform.

Object drivers and experiment conditions

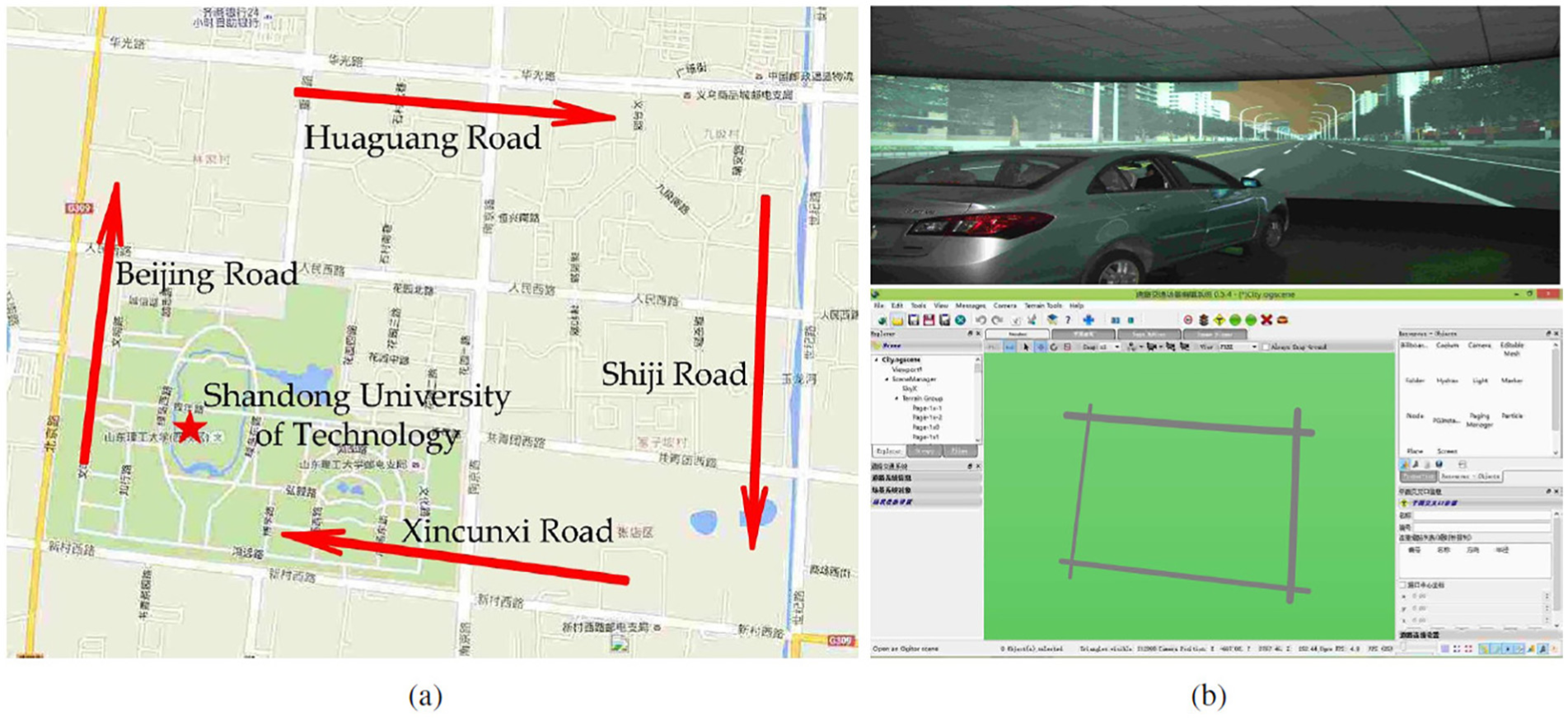

Sixty-two drivers, 33 male and 29 female, were selected to take part in the experiment. The object drivers were between 20 and 65 years old, with an average age of 39.84 years. Sections of Beijing Road, Huaguang Road, Shiji Road, and Xincunxi Road in Zhangdian, Zibo (see Figure 3) were selected as the route for the actual driving experiment, which was organized in off-peak periods under good weather and road conditions. The whole route is about 9.7 km long (speed limit 60 km/h). Two experiment vehicles were used to simulate the car-following process. One, as the front vehicle, ran normally along the experiment route. The other, as the following vehicle, was driven by an object driver and followed the front vehicle. This study involves both positive and negative driving emotions. For reasons of driving safety, the number and scale of the actual vehicle driving experiments we carried out were limited. Current driving data acquisition and experimental technology provides many other options for our research, among which the driving simulator is the most widely used.41,42 The driving experiments in this study therefore included both actual driving and virtual driving experiments. The actual driving data were closer to natural driving data, but the actual driving experiment was difficult to organize. The virtual driving experiment was simpler and made it easier to obtain large amounts of data, which effectively supplemented the actual driving data. In the real vehicle driving experiment, we also collected the basic attribute data of the road sections, including road type, road width, road slope, intersection drainage, signal phase, and so on. The scenario library of the driving simulator background program was built using a script tool.
The virtual road network was constructed (see Figure 3) in the virtual driving platform according to the basic road properties and traffic volume of the actual driving experiment route, and the car-following scene was generated within this virtual road scene. In this scenario, the front car drove automatically under different driving models set by the computer program, and the subject followed it. The virtual road and car-following scenes were displayed on a 180° circular screen, and the experimental subject completed the driving experiment in the simulated cockpit (see Figure 3).

Experiment route in actual driving and virtual driving experiments: (a) experiment route of actual driving and (b) virtual road network.

Physiological and psychological test

The basic information of object drivers, such as age and sex, was collected. Operation reaction time was obtained through physiological test, and the test method was elaborated in Wang and Zhang. 43 The driving propensity was determined through questionnaire, and the test method was shown in Zhang et al. 44 The driving propensity was divided into conservative type, cautious type, and risk-taking type. A revised version of Geneva Emotion Wheel was selected as classification basis of driving emotion.45,46 Sixteen kinds of driving emotions were included in the Emotion Wheel. 47 For the convenience of study, eight typical driving emotions, namely, anger, surprise, fear, anxiety, helplessness, contempt, relief, and pleasure were selected.

Induction and effect evaluation of driving emotion

Different emotions are generated in different environments. Anger is an intense and unpleasant emotion caused by thwarted desire or frustrated action. Surprise is the emotional experience of an organism faced with sudden or novel events. Fear is the emotional experience of feeling alarmed and strongly suppressed in the face of danger. Anxiety is an emotional response to a real challenge or potential threat, and a general human response to an uncontrollable incident or situation. Helplessness is the feeling of being unable to do anything. Contempt is the feeling of being better and more powerful than others (usually in spirit). Relief is the feeling that comes when something burdensome is removed or reduced. Pleasure is an excited, positive emotional experience that arises when an organism reaches an expected goal. Analyses by experts on human emotion showed that music, movies, and imagination were ideal methods for emotion induction, with a success rate of more than 75%.48,49 IAPS, CAPS, spiritual motivation, and material motivation were used to induce driving emotions. The induction process contained two steps. First, the IAPS and CAPS picture materials were displayed to the object drivers, and the background and expressed emotions of the pictures were described so that the drivers formed strong emotional memories and associations. Then, the movie materials in CAPS that can induce specific emotions were shown to the object drivers to deepen the induced emotion on the basis of this emotional memory. The object drivers started driving immediately after finishing these two steps. During driving, music that can induce the specific emotion was played to give the driving environment an emotional color. For more effective induction, diversified induction methods were used according to the characteristics of the different emotions.
When inducing pleasure, the object drivers were informed that they would receive prizes if they finished the experiment. To induce fear, pictures and videos of tragic accidents were displayed to the object drivers in addition to horror video materials. In the virtual driving experiment, scenes of traffic accidents, crowded traffic conditions, and dangerous lane changing were set to induce fear, anxiety, and anger. To induce surprise, interesting news such as novel Guinness records was told to the object drivers. To induce contempt, a driving competition scene was set on the simulator, implemented as follows. First, many driving routes were constructed in the simulated driving scene, and obstacles, such as construction areas, were set up along them. Second, five experiment organizers and one object driver were asked to drive a virtual vehicle along the experiment routes in sequence. Third, the driving competition was controlled through the simulator controller so that the object driver always reached the end of the experiment routes in the shortest time. Fourth, the results of the driving competition were told to the object driver, whose driving skill was then praised to produce a strong sense of superiority. Finally, the object driver’s emotional memory of the driving competition was aroused by voice guidance and psychological suggestion in the subsequent driving experiment. To induce helplessness in the virtual driving experiments, a traffic congestion scene was built on the driving simulator in which the object drivers could only drive slowly, and voice guidance and psychological suggestion were used together while they drove in the virtual scene. In the actual driving experiments, the emotional memory of helplessness was aroused by voice guidance and psychological suggestion.
To induce relief, the object drivers were guided by voice guidance and psychological suggestion to describe their own experiences, such as passing the driving skill test. Natural speech questioning, emotional self-report, and analysis of facial expressions and behavioral actions during driving were used together to assess the result of emotion induction. The facial expressions and behaviors of the object drivers during driving were recorded in real time with a video detection system. The emotional experiences and driving intentions of the object drivers were elicited through natural speech questioning, and the answers were recorded. After finishing the driving experiment, the object drivers watched the video playback and reported their emotional experiences and driving intentions at different moments of the video. The effectiveness and intensity of emotion induction were finally determined from the results of natural speech questioning, emotional self-report, and the analysis of facial expressions and behavioral actions.

Driving experiment

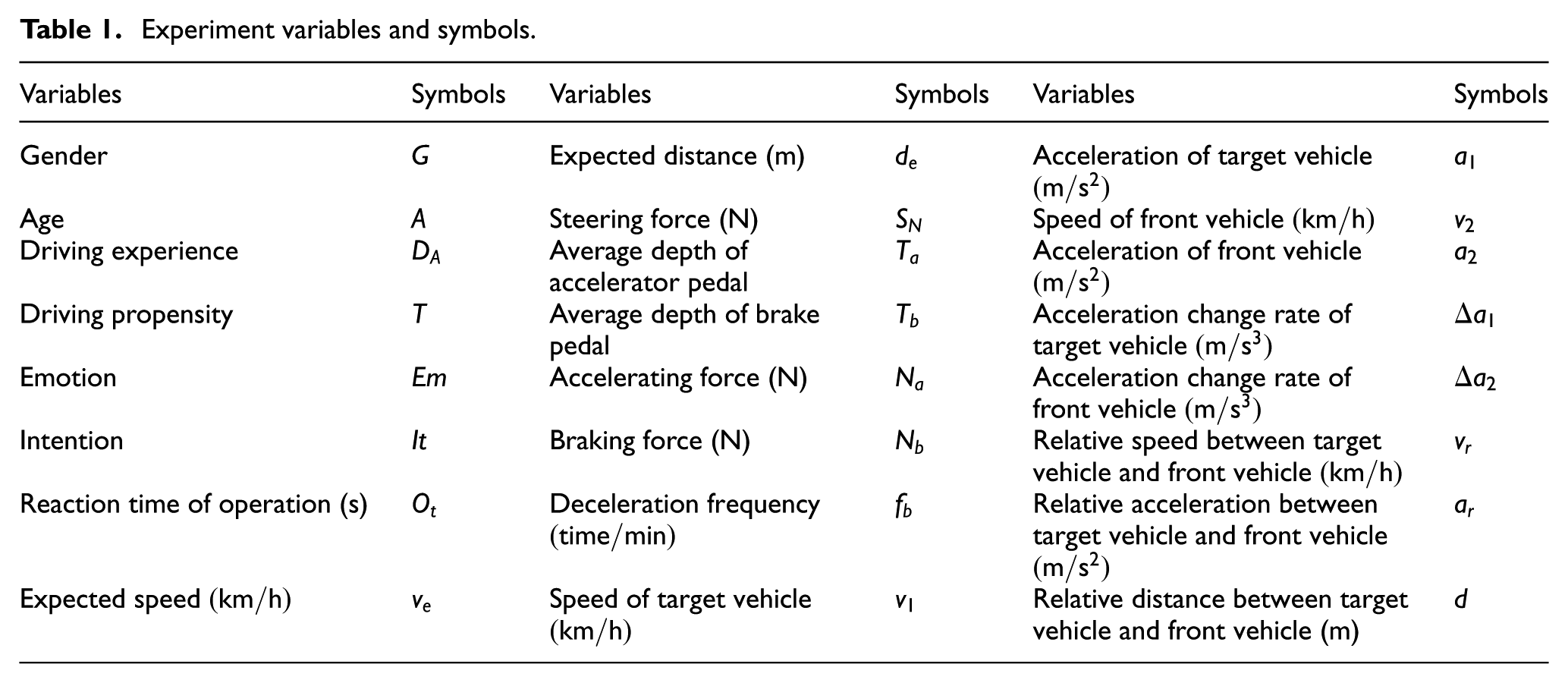

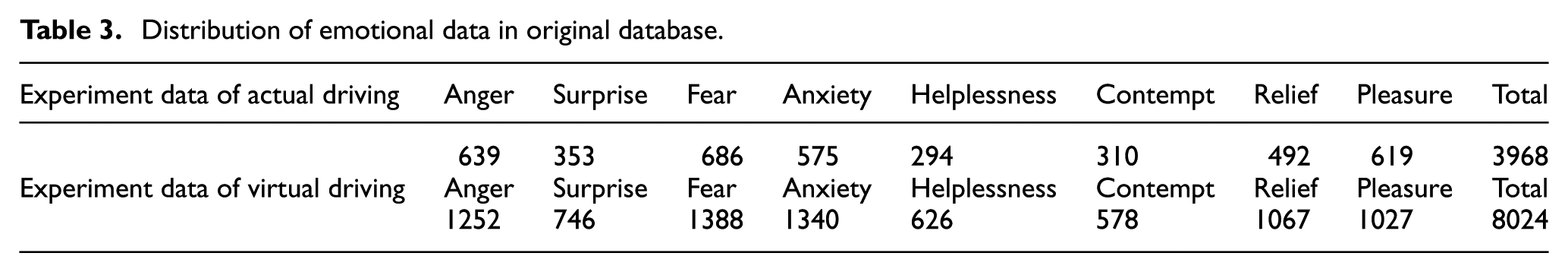

Before the driving experiment, the equipment was installed, connected, and debugged. Before emotion induction began, the object drivers listened to soothing music to bring their emotions to a calm state. The driving experiment began after the corresponding emotion was induced. The instruments collected and recorded data synchronously during driving. Each object driver’s expected speed and expected distance on the experiment route were collected after the driving experiment. Eight kinds of emotions were induced, and eight groups of data were obtained, in each experiment. Effective fragments of emotional induction were selected from the driving video files, and emotion–intention combined units were collected. Each data element corresponded to one emotion–intention combined unit and the associated driver, vehicle, and environment data. In total, 3968 and 8024 emotion–intention combined units were obtained from the actual and virtual driving experiments, respectively. The original database comprised the actual and virtual driving data. Owing to space limitations, only part of the data is listed; see Tables 1 and 2.

Experiment variables and symbols.

Part of the experiment data.

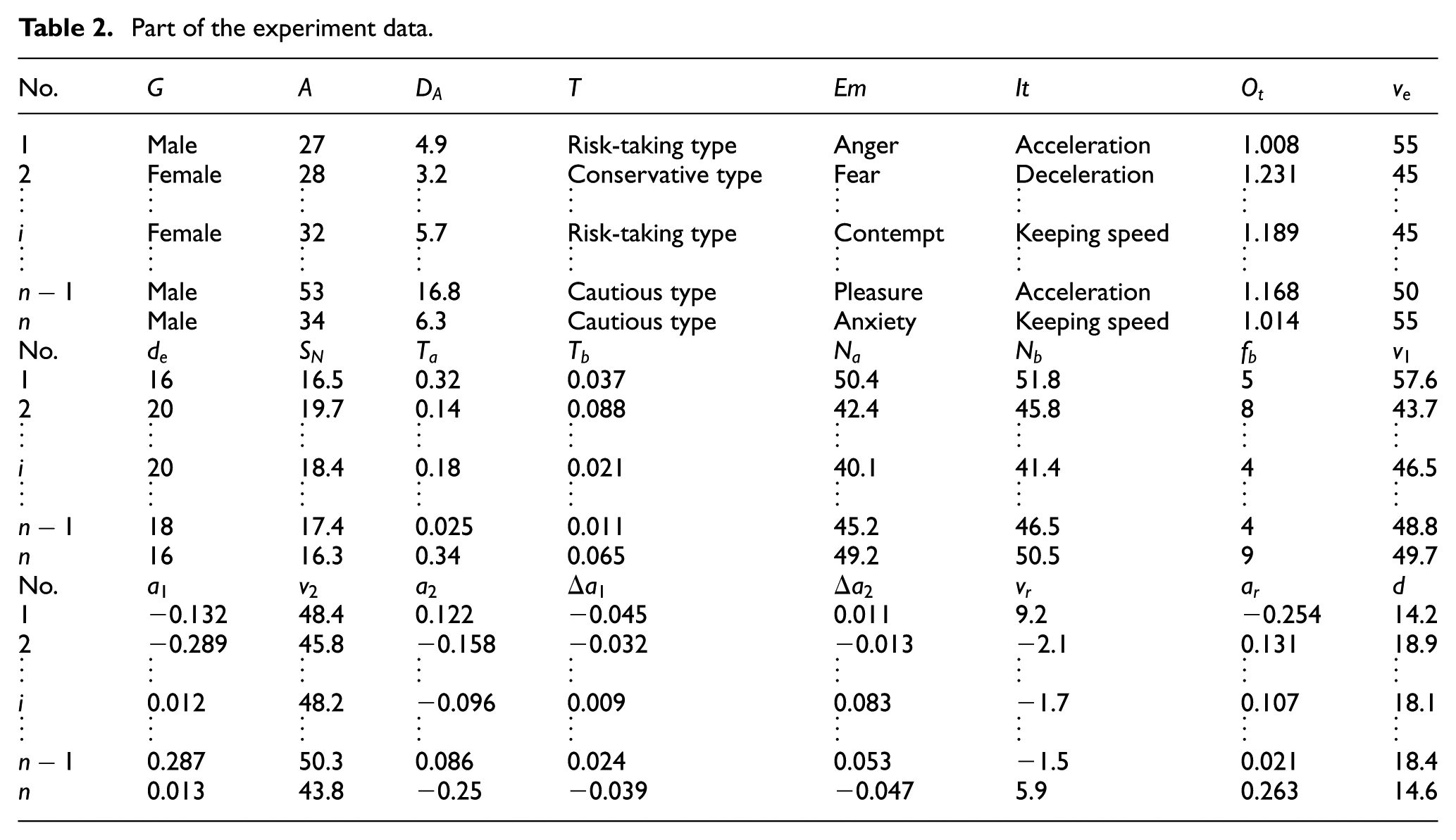

The sample sizes of the emotional data obtained from the driving experiments are shown in Table 3.

Distribution of emotional data in original database.

Result

Feature extraction

Among the obtained variables, the driver’s gender, driving propensity, intention, and emotion were attribute (categorical) variables, and the other variables were quantitative variables. The attribute variables were coded as dummy variables, and the quantitative variables were discretized. 50 The feature extraction algorithm based on classical RST is intuitive and accessible. However, it suffers from combinatorial explosion, with a high cost in search time and space. A greedy heuristic algorithm was therefore adopted to reduce the attributes of driving intention. Each kind of emotion–intention data was processed with the feature extraction algorithm. The final results of feature extraction are shown in Table 4.
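The coding step described above can be illustrated with a short sketch that discretizes a quantitative variable and dummy-codes an attribute variable. Equal-width binning, the number of bins, the helper names `equal_width_codes` and `dummy_code`, and the sample values are all assumptions made for illustration; the discretization method actually used follows the cited reference and is not specified in this section.

```python
import numpy as np


def equal_width_codes(values, n_bins):
    """Map each value to an integer bin code 0..n_bins-1 using equal-width bins.

    Equal-width binning is an assumed choice, not the method of the study.
    """
    values = np.asarray(values, dtype=float)
    # Interior bin edges only; values below the first edge get code 0.
    edges = np.linspace(values.min(), values.max(), n_bins + 1)[1:-1]
    return np.digitize(values, edges)


def dummy_code(labels):
    """One-hot code a categorical variable; returns (categories, 0/1 matrix)."""
    categories, inverse = np.unique(np.asarray(labels), return_inverse=True)
    return categories.tolist(), np.eye(len(categories), dtype=int)[inverse]


# Hypothetical following-vehicle speeds in km/h, split into three levels.
speed_codes = equal_width_codes([10, 20, 30, 40, 50, 60], n_bins=3)

# Driving propensity types named in the text, for four hypothetical drivers.
categories, propensity_matrix = dummy_code(
    ["conservative", "cautious", "risk-taking", "cautious"]
)
```

This sketch keeps one indicator column per category; a regression-style dummy coding would drop one column as the reference level.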

Feature vectors of driving intention under different emotional states.
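A greedy heuristic for attribute reduction can be sketched as below. This is a generic forward-selection reduct search driven by the rough-set dependency degree, offered under the assumption that it resembles the heuristic used in the study, whose exact form is not given here. The attribute names (`speed`, `gap`, `gender`), the toy decision table, and the function names are hypothetical.

```python
from collections import defaultdict


def partition(rows, attrs):
    """Group row indices into equivalence classes by their values on attrs."""
    blocks = defaultdict(list)
    for i, row in enumerate(rows):
        blocks[tuple(row[a] for a in attrs)].append(i)
    return list(blocks.values())


def dependency(rows, cond_attrs, decision):
    """Share of rows whose equivalence class maps to a single decision value."""
    consistent = 0
    for block in partition(rows, cond_attrs):
        if len({rows[i][decision] for i in block}) == 1:
            consistent += len(block)
    return consistent / len(rows)


def greedy_reduct(rows, cond_attrs, decision):
    """Add the attribute with the largest dependency gain until the
    dependency of the full condition attribute set is reached."""
    target = dependency(rows, cond_attrs, decision)
    reduct, remaining = [], list(cond_attrs)
    while dependency(rows, reduct, decision) < target:
        best = max(remaining, key=lambda a: dependency(rows, reduct + [a], decision))
        reduct.append(best)
        remaining.remove(best)
    return reduct


# Hypothetical, already discretized decision table for one emotional state.
rows = [
    {"speed": "high", "gap": "small", "gender": "m", "intention": "decelerate"},
    {"speed": "high", "gap": "large", "gender": "f", "intention": "keep"},
    {"speed": "low", "gap": "small", "gender": "m", "intention": "keep"},
    {"speed": "low", "gap": "large", "gender": "f", "intention": "accelerate"},
    {"speed": "high", "gap": "small", "gender": "f", "intention": "decelerate"},
    {"speed": "low", "gap": "large", "gender": "m", "intention": "accelerate"},
]
reduct = greedy_reduct(rows, ["speed", "gap", "gender"], "intention")
```

In this toy table, gender adds no discriminating power once speed and following gap are known, so it is left out of the reduct. Forward selection of this kind avoids enumerating all attribute subsets, at the cost of not guaranteeing a minimal reduct.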

Driving emotion has a significant effect on the process of cognition and decision-making, and the effect is mainly manifested in behavior choice and driving performance.

Under the anger state, the driver was excited and had a strong awareness of self-protection and of releasing pressure. The behavioral performance was radical: a high speed and a small following distance were often selected, and the acceleration fluctuated widely. Surprise is a transient emotion that easily shifts to other emotions. Under the surprise state, the driver’s consciousness was briefly unfocused and attention could not be concentrated, so behavior choices were unreasonable and the vehicle’s movement could not be perceived well. Under the fear state, a slow speed and a safe following distance were selected, and the driver wanted to get out of the current traffic situation as soon as possible. Under the anxiety state, the behavioral performance was radical, and the vehicle speed, acceleration, and following distance fluctuated continually within a certain range. Under the helplessness state, the vehicle speed and following distance could not be kept within a safe and reasonable range. The driver was timid, had a poor ability to control the traffic situation, and was easily affected by the external environment. Under the contempt state, the driver was full of superiority and had strong self-awareness. Relief and pleasure are two positive emotions. Under the relief and pleasure states, a reasonable speed and following distance were selected, and the fluctuation of acceleration was small. Compared with relief, pleasure is a more excited emotion, and the behavioral performance under the pleasure state was more radical. In addition, drivers’ behaviors under different emotional states were also influenced by gender, age, driving experience, and driving propensity. In terms of gender, the same emotion has different effects on male and female drivers. For example, male drivers are more likely to be angry, and fear has a more significant effect on female drivers.
In terms of age and driving experience, the ability to control emotions is enhanced as age and driving experience increase. Drivers with different driving propensities also perform differently. For example, the behavior of risk-taking drivers is still radical even in a positive emotional state, and the behavioral performance of conservative drivers is relatively mild even in an angry state.

Model calibration

The feature vectors of driving intention were taken as the input layer, and the corresponding intentions were taken as the output layer. The weights and thresholds were adjusted continually through network learning. The driving intention identification models under different emotional states were formed once the accuracy requirement of the total error function was satisfied. For the eight kinds of emotion–intention data, 80% of the sample data were selected randomly to constitute the learning database of the BP network. The numbers of selected samples for anger, surprise, fear, anxiety, helplessness, contempt, relief, and pleasure were 1512, 879, 1659, 1532, 736, 710, 1559, and 1317, respectively. For the training parameters, the error threshold was set to 1e-3 and the learning rate was set to 0.5. MATLAB was used for the network learning. In the end, all the errors converged to the threshold value. The error curves of network learning under different emotional states are shown in Figure 4.

Error curves of network learning under different emotional states.
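The calibration procedure can be illustrated with a minimal NumPy sketch of a three-layer BP network trained by batch gradient descent with the stated learning rate (0.5) and error threshold (1e-3). The study itself used MATLAB; the sigmoid activations, the hidden layer size of 5, the weight initialization, the epoch cap, and the synthetic two-feature data below are assumptions for illustration only.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def train_bp(X, Y, n_hidden, lr=0.5, err_threshold=1e-3, max_epochs=5000, seed=0):
    """Train an input-hidden-output network; stop when the error falls below
    err_threshold or after max_epochs. Returns (parameters, error history)."""
    rng = np.random.default_rng(seed)
    n_samples, n_in = X.shape
    n_out = Y.shape[1]
    W1 = rng.normal(0.0, 0.5, (n_in, n_hidden))
    b1 = np.zeros(n_hidden)
    W2 = rng.normal(0.0, 0.5, (n_hidden, n_out))
    b2 = np.zeros(n_out)
    history = []
    for _ in range(max_epochs):
        # Forward pass through the hidden and output layers.
        H = sigmoid(X @ W1 + b1)
        O = sigmoid(H @ W2 + b2)
        err = O - Y
        # Error function: half the squared error per sample, averaged over samples.
        loss = 0.5 * float(np.mean(np.sum(err ** 2, axis=1)))
        history.append(loss)
        if loss < err_threshold:
            break
        # Backward pass: propagate the output error to the hidden layer.
        delta_o = err * O * (1.0 - O)
        delta_h = (delta_o @ W2.T) * H * (1.0 - H)
        W2 -= lr * (H.T @ delta_o) / n_samples
        b2 -= lr * delta_o.mean(axis=0)
        W1 -= lr * (X.T @ delta_h) / n_samples
        b1 -= lr * delta_h.mean(axis=0)
    return (W1, b1, W2, b2), history


def predict(params, X):
    """Return the index of the most activated output node for each sample."""
    W1, b1, W2, b2 = params
    return np.argmax(sigmoid(sigmoid(X @ W1 + b1) @ W2 + b2), axis=1)


# Synthetic stand-in data: 3 intention classes, 2 normalized features each.
data_rng = np.random.default_rng(1)
centers = np.array([[0.2, 0.8], [0.5, 0.5], [0.8, 0.2]])
labels = np.repeat(np.arange(3), 20)
X = centers[labels] + data_rng.normal(0.0, 0.05, (60, 2))
Y = np.eye(3)[labels]  # one output node per intention

params, history = train_bp(X, Y, n_hidden=5)
predicted = predict(params, X)
```

Plotting `history` against the epoch index gives an error curve of the same kind as those in Figure 4. Whether the threshold is reached before the epoch cap depends on the data and on the initialization.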

Discussion

Actual driving verification

The test database of actual driving experiments was structured with the remaining emotional data. The test data were put into the BP neural network to verify the model. The distribution of actual driving test data and identification accuracy of driving intention under different emotional states are shown as Figure 5.

Result of actual driving verification: (a) distribution of actual driving test data and (b) identification accuracy of driving intention.

As can be seen from Figure 5, the identification accuracy of driving intention under the anger state was more than 90%. The identification accuracy under fear, anxiety, contempt, relief, and pleasure was more than 85%, and that under surprise and helplessness was more than 75%. It can be concluded that the intention identification model established in this article had a high accuracy. For comparison, RST and the BP network were also used to construct feature extraction and identification models of driving intention without taking driving emotion into account. A total of 3174 groups of data were randomly selected from the 3968 groups of actual driving data for model learning, and the remaining data were used to verify the model. For this identification model without driving emotion, the identification accuracy for acceleration, keeping speed, and deceleration was 77.14%, 73.21%, and 69.58%, respectively. Set against this baseline, the results showed that the feature extraction and dynamic identification models of driving intention proposed in this article were reasonable and effective.

Virtual driving verification

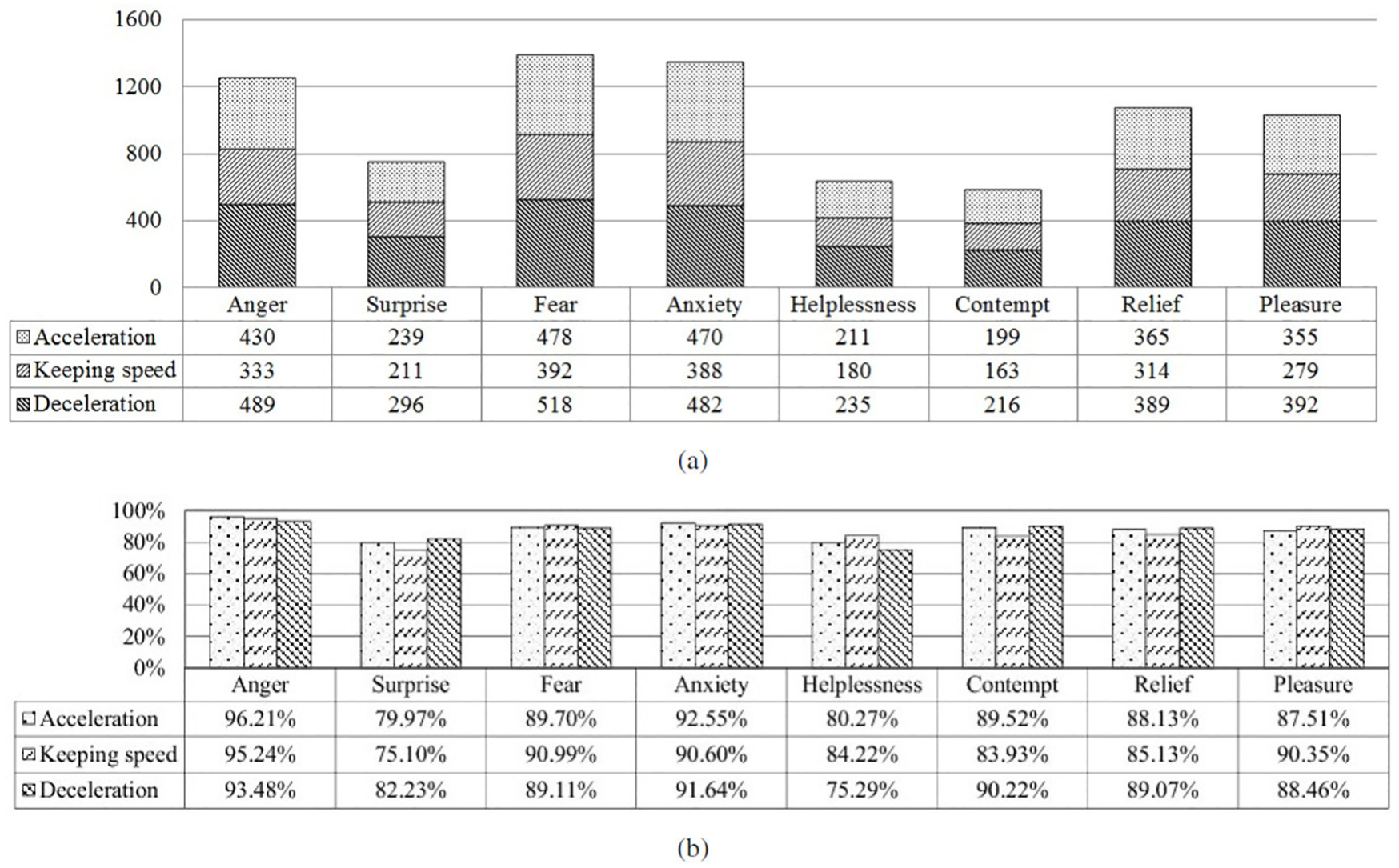

The virtual driving test data were input to the BP network to verify the model. The distribution of virtual driving test data and the identification accuracy of driving intention under different emotional states are shown as Figure 6.

Result of virtual driving verification: (a) distribution of virtual driving test data and (b) identification accuracy of driving intention.

As can be seen from Figure 6, the driving intention identification accuracy under anger and anxiety was more than 90%. The identification accuracy under fear, relief, and pleasure was more than 85%, and that under surprise, contempt, and helplessness was more than 75%. For comparison, RST and the BP neural network were also used to construct feature extraction and identification models of driving intention without taking driving emotion into account. A total of 6419 groups of data were randomly selected from the 8024 groups of virtual driving data for model learning, and the remaining data were used to verify the model. For this identification model without driving emotion, the identification accuracy for acceleration, keeping speed, and deceleration was 80.33%, 76.58%, and 70.36%, respectively. Set against this baseline, the results showed that the feature extraction and dynamic identification models of driving intention proposed in this article were reasonable and effective.
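The random learning/verification split and the per-intention accuracy reported in the two verification sections can be sketched as follows. The helper names, the random seed, the integer coding of intentions (0 for acceleration, 1 for keeping speed, 2 for deceleration), and the small label vectors are hypothetical; only the sample counts 3968 and 8024 and the 80% share come from the text.

```python
import numpy as np


def split_indices(n_samples, train_frac=0.8, seed=0):
    """Randomly split sample indices into learning and verification sets."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)
    n_train = int(round(train_frac * n_samples))
    return order[:n_train], order[n_train:]


def per_class_accuracy(y_true, y_pred):
    """Share of correctly identified samples within each true intention class."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    return {
        int(c): float(np.mean(y_pred[y_true == c] == c))
        for c in np.unique(y_true)
    }


# An 80% split reproduces the learning-set sizes stated in the text.
actual_train, actual_test = split_indices(3968)
virtual_train, virtual_test = split_indices(8024)

# Hypothetical true and identified intentions for six verification samples.
demo_accuracy = per_class_accuracy([0, 0, 1, 1, 2, 2], [0, 1, 1, 1, 2, 0])
```

Computing accuracy separately for each intention, as here, matches the way the baseline results are reported (one figure each for acceleration, keeping speed, and deceleration).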

Simulation verification

A car-following simulation system based on projection pursuit regression was used for simulation verification. Owing to space limitations, the calibration process of the model is not described in detail. The speed, acceleration, and following distance of the target vehicle were verified. The validation of one target vehicle is taken as an example, and the results are shown in Figure 7, where Simulation 1 is the result of the model that does not consider driving emotion and Simulation 2 is the result of the model that considers driving emotion.

Result of simulation verification: (a) simulation result of speed, (b) simulation result of following distance, and (c) simulation result of acceleration.

Simulation results showed that driving performance can be simulated more accurately with the model that takes driving emotion into account. The validity of the proposed model was thus indirectly verified.

RST is an effective tool for processing imprecise, inconsistent, and incomplete information. It has advantages such as strong applicability and no requirement for prior knowledge. A BP network learns and stores a large number of input–output mapping relationships without needing a mathematical equation that describes the relationship in advance. The models proposed in this article gave good recognition results. The results of this research showed that driving intention identification differed across emotional modes, mainly in the complexity and accuracy of the intention feature vectors. For example, the accuracy of intention identification could exceed 90% in anger mode with a five-dimensional feature vector, whereas it was difficult to reach 90% in helplessness mode even though the feature vector had six dimensions. The main reason is that driving behaviors differ under different emotional modes. These differences must be fully understood to realize accurate and efficient identification of driving intention, and the research approach that treats emotion and intention in isolation needs to be abandoned. Driving emotions are complex and diverse and have complex relationships with drivers’ psychological and physiological factors. On one hand, the choice and definition of driving emotions are a focus of further study. On the other hand, it should be pointed out that the multi-source, dynamic human–vehicle–environment data were measured in a specific environment, and the results were easily influenced by the driving environment. In addition, the selection of the experiment methods and subjects had limitations. The influence of the environment and subject selection should be reduced in further research.

Conclusion

On the basis of a correct understanding of the differences in driving behavior under different emotional states, feature extraction and dynamic identification models of driving intention adapted to multi-mode emotions were established based on RST and a BP neural network. The rationality and validity of the models were verified by actual driving, virtual driving, and interactive simulation experiments. The driving intentions of acceleration, keeping speed, and deceleration under the car-following condition were identified dynamically and accurately. A new idea for the accurate and efficient identification of driving intention was proposed, which can provide theoretical support for the coupling mechanism of emotion and driving intention. As a preliminary study on measuring the micro-psychological dynamics of automobile drivers, this study only examined the influence of driving emotion on intention in the car-following state and its identification method. As the vehicle cluster situation changes rapidly, the emotional influence mechanism of driving intention in multi-lane complex environments needs to be further studied. Only eight typical driving emotions were studied, whereas human emotions are complex and diverse. Considering the dynamic evolution of driving emotions and the multi-lane complex environment, the identification of more specific and subtle driver emotions needs to be further studied. In this article, the human–vehicle–environment multi-source data were measured in a specific environment, and the test results are easily affected by the driving environment. There is a certain difference between the virtual driving environment and actual road conditions, and the driver’s proficiency with the driving simulator also has a certain impact on the experimental results.
The selection of experimental methods and subjects is limited to a certain extent, and subsequent studies should take care to reduce the impact of the test environment and the selection of experimental subjects on the research results.

Footnotes

Handling Editor: Hai Xiang Lin

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by the Joint Laboratory for Internet of Vehicles, Ministry of Education—China Mobile Communications Corporation, the Science Fund of State Key Laboratory of Automotive Safety and Energy (under Project No. KF16232), the Natural Science Foundation of Shandong Province (Grant No. ZR2014FM027), and the National Natural Science Foundation of China (Grant No. 61074140).