Abstract

This study developed and implemented an efficient navigation control method for a mobile robot in unknown environments. The proposed method consists of a mode manager, a wall-following mode, and a towards-goal mode. An interval type-2 neural fuzzy controller optimized by dynamic group differential evolution is trained through reinforcement learning to obtain an adaptive wall-following controller. The wall-following performance of the robot is evaluated by a proposed fitness function. The mode manager switches to the proper mode according to the relation between the mobile robot and the environment, and an escape mechanism is added to prevent the robot from falling into a dead cycle. The experimental results of wall-following show that dynamic group differential evolution is superior to the other compared methods. In addition, the navigation control results show that the moving track of the proposed model is better than those of the other methods and that it successfully completes navigation control in unknown environments.

Introduction

Mobile robots have been widely used in recent years to help humans with tasks such as environmental exploration, object handling, and navigation.1–3 To accomplish these missions in a complex environment, the navigation technology of the mobile robot is a very important topic, and controller design becomes a major subject.

Mobile robots detect obstacles through their sensors to avoid collisions during navigation control. Therefore, obstacle avoidance is an essential element of the navigation mission. Recently, many novel designs4–6 for intelligent robot control have been developed to improve obstacle avoidance in robot navigation. For example, researchers have applied fuzzy logic control (FLC) and neural networks (NN) to robot navigation control. Al-Sahib and Ahmed 4 fed the information detected by the sensors directly into a designed fuzzy controller, which enabled the robot to perform obstacle avoidance successfully. Dutta 5 combined FLC, NN, and self-adaptive learning to adjust the parameters of a fuzzy neural network (FNN). Although the type-1 fuzzy models adopted in Al-Sahib and Ahmed 4 and Dutta 5 could complete the navigation control, their performance was not acceptable, and the uncertainty of the input data caused by environmental noise in real conditions affects the control. In recent years, the type-2 fuzzy system has been proposed to perform fuzzy inference and obtain better performance than the type-1 fuzzy system. Liang and Mendel 7 used the Karnik–Mendel algorithm to implement the type reduction of a type-2 fuzzy system. Kim and Chwa 8 used the type-2 neural fuzzy network (Type-2 FNN) to improve performance. However, the computation in Liang and Mendel 7 and Kim and Chwa 8 is more complex. This study uses an interval type-2 neural fuzzy network combined with the center of sets (COS) 9 to reduce computational complexity. Therefore, in this study, an efficient interval type-2 neural fuzzy controller is proposed for the navigation control of a mobile robot.

Training the parameters is the main problem in designing a type-1 or type-2 neural fuzzy network. Backpropagation (BP) training5,8 is commonly adopted to solve this problem. Because BP training uses the steepest descent approach to minimize the error function, the algorithm may converge to a local minimum very quickly and fail to find the global solution. This disadvantage leads to suboptimal performance, even with a favorable neural fuzzy network topology. Therefore, training techniques that can adjust the system parameters and find the global solution while optimizing the overall structure are required. Recently, many evolutionary algorithms10–14 have been used to adjust the parameters of NNs or FNNs. Evolutionary algorithms, which are also called biologically inspired computation, originated from the observation of natural phenomena. They simulate the biological behavior of certain creatures; examples include particle swarm optimization (PSO), 10 ant colony optimization (ACO), 11 differential evolution (DE), 12 and artificial bee colony (ABC). 13 DE has been commonly used to solve optimization problems in recent years. Its advantages are a simple structure, few setup parameters, and good optimization ability, but its disadvantages are unstable convergence and a tendency to fall into local optima. In this study, a novel algorithm, dynamic group differential evolution (DGDE), is proposed to overcome the drawbacks of traditional DE.

The aim of this study is to improve the navigation control of a mobile robot so that it successfully reaches the goal in an unknown environment, thereby increasing the exploration benefits. The proposed navigation control method consists of a mode manager, a wall-following (WF) mode, and a towards-goal (TG) mode. The mode manager is designed to enable the robot to switch between two behavior modes, (1) TG and (2) WF, according to the relation between the robot and the environment. An interval type-2 neural fuzzy controller (IT2NFC) with the DGDE algorithm is proposed to implement the WF control of the mobile robot. In the proposed DGDE, the concepts of grouping and modified mutation are used to increase solution stability and precision. In addition, a mechanism that tracks the distance between the robot and the goal is used to prevent the robot from falling into a dead cycle. Several evolutionary algorithms are implemented to compare and analyze the navigation performance using two evaluation indexes. One index is the total moving distance of the robot over the whole navigation. The other is the time required to complete the navigation.

Reinforcement learning of WF control

WF control of a mobile robot requires a good controller that maintains a proper distance between the robot and the wall and adapts to every kind of environment. This study developed an IT2NFC with the DGDE learning algorithm, and four evaluation methods are implemented to compare the robot’s WF performance.

Description of the mobile robot

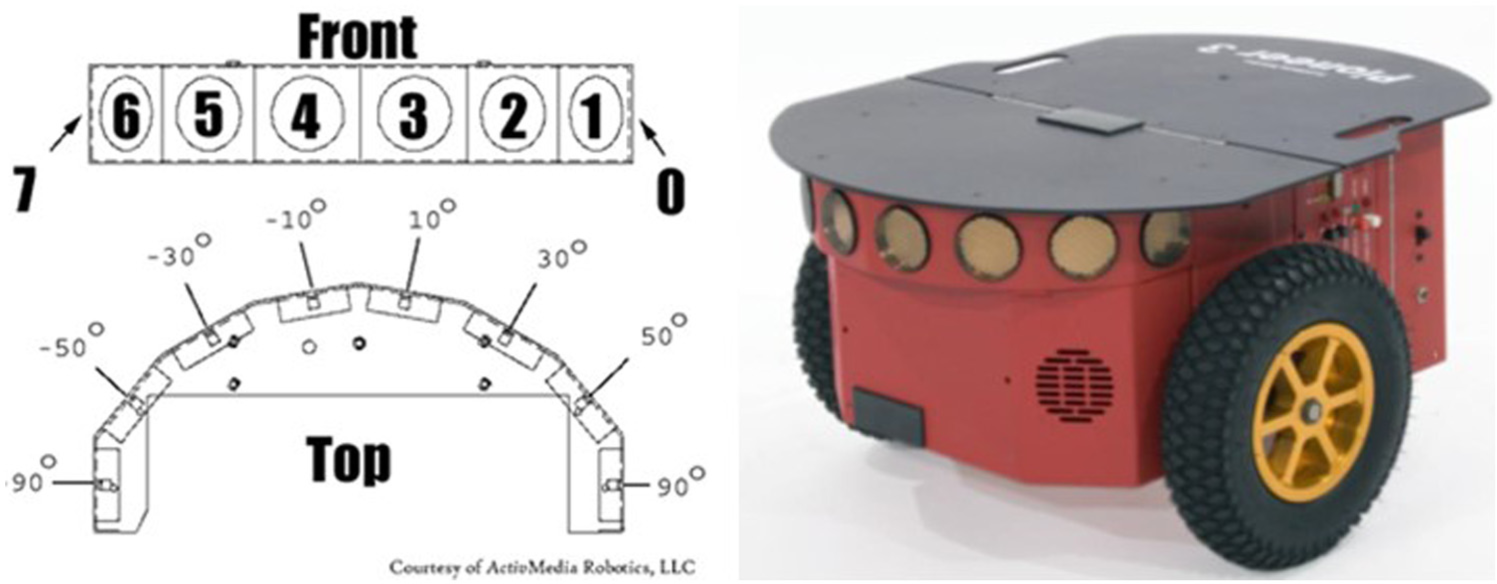

The mobile robot, Pioneer3-DX, is manufactured by Adept MobileRobots LLC, USA, and is shown in Figure 1. This robot features high load capacity, high endurance, and high scalability. Pioneer3-DX supports cross-platform libraries and software development kits, robot motion control, client–server models, and many bundled libraries that allow it to be applied in different areas or combined with various peripherals.

Pioneer3-DX mobile robot.

The Pioneer3-DX mobile robot has eight sonar sensors: one on each side and six on the front, separated by 20°. They provide object detection, distance measurement, obstacle avoidance, feature identification, positioning, and navigation. Only one sensor of the sonar array is fired at a time, and the distance-measuring frequency is set to 25 Hz, that is, one sensor fires every 40 ms. The measuring range is from 10 cm to 5 m. Some basic specifications of Pioneer3-DX are listed in Table 1.

Specification of Pioneer3-DX.

WF control using IT2NFC with DGDE learning algorithm

This study proposes IT2NFC with a DGDE learning algorithm to efficiently implement the WF control of a mobile robot.

The proposed interval Type-2 neural fuzzy controller

The overall framework and operation of the IT2NFC are described in this section; the proposed controller extends our previous research.15,16 Because the computational complexity of defuzzification in a general interval type-2 fuzzy system is high, the nonlinear combination output of a functional link neural network (FLNN) 17 is incorporated into the consequent of the corresponding fuzzy rule. Each rule can be expressed as

where

IT2NFC is a five-layer network structure and is shown in Figure 2.

Layer 1 (input layer): The input data are passed to the next layer; this layer only transmits information and performs no computation.

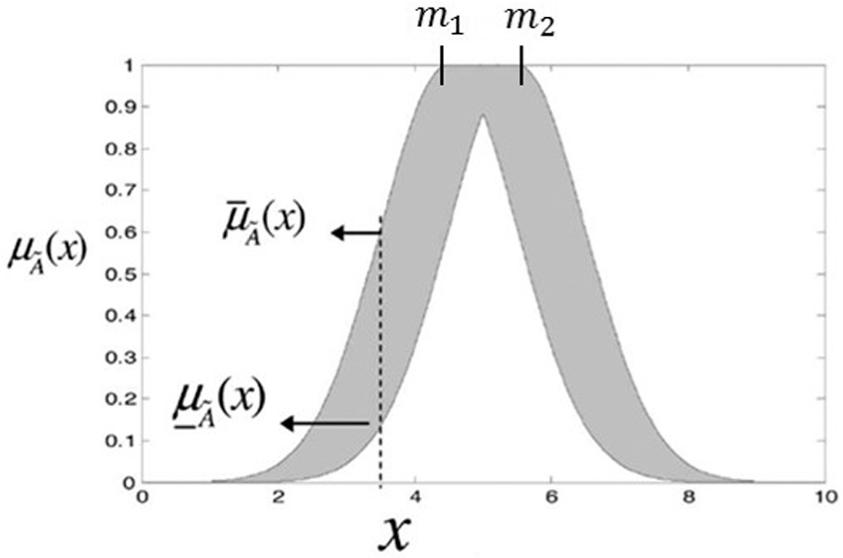

Layer 2 (membership function layer): This layer performs fuzzification, and every node is defined as an interval type-2 fuzzy set. As shown in Figure 3, each node uses a Gaussian membership function with an uncertain mean value,

where

and

Therefore, the output of the layer 2 can be represented as an interval

Framework of IT2NFC.

Uncertain mean value of interval type-2 fuzzy set.

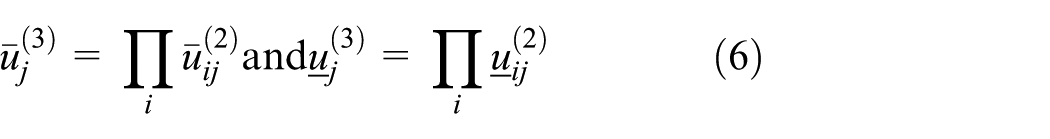

Layer 3 (firing layer): Each node in this layer is a rule node using product operation to obtain the firing strength,

where
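As a minimal sketch of Layers 2 and 3, the following Python code evaluates an interval type-2 Gaussian membership function with an uncertain mean and combines the resulting intervals with the product operation. It uses the standard textbook form of the upper and lower membership functions for a mean lying in `[m1, m2]` with a fixed width `sigma`; the function and parameter names are illustrative assumptions and are not taken from the paper's own equations.

```python
import math


def gaussian(x, mean, sigma):
    """Ordinary (type-1) Gaussian membership value."""
    return math.exp(-0.5 * ((x - mean) / sigma) ** 2)


def it2_membership(x, m1, m2, sigma):
    """Return (lower, upper) membership for a Gaussian whose mean lies in [m1, m2].

    Standard form for an uncertain mean: the upper bound is flat (1.0)
    between m1 and m2, and the lower bound is the smaller of the two
    Gaussians centered at m1 and m2.
    """
    if x < m1:
        upper = gaussian(x, m1, sigma)
    elif x > m2:
        upper = gaussian(x, m2, sigma)
    else:
        upper = 1.0
    if x <= (m1 + m2) / 2.0:
        lower = gaussian(x, m2, sigma)
    else:
        lower = gaussian(x, m1, sigma)
    return lower, upper


def firing_interval(inputs, antecedents):
    """Product of the membership intervals of one rule (Layer 3).

    `antecedents` holds one (m1, m2, sigma) tuple per input (assumed layout).
    """
    lower, upper = 1.0, 1.0
    for x, (m1, m2, sigma) in zip(inputs, antecedents):
        lo, up = it2_membership(x, m1, m2, sigma)
        lower *= lo
        upper *= up
    return lower, upper
```

Because every membership interval satisfies lower ≤ upper and all values are non-negative, the product keeps the firing interval ordered as well.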

Layer 4 (consequent layer): An interval type-2 fuzzy system produces an interval type-1 fuzzy set through type reduction and outputs a crisp value after defuzzification. Because traditional type-reduction methods, such as the iterative procedure of the Karnik–Mendel algorithm, 7 have high complexity, we adopt COS for lower complexity by

This method is built from the upper and lower bounds of the firing strength, and it simplifies the type-reduction process. The composition of the firing strength and the corresponding output are

and

where

where

Layer 5 (output layer): The type-reduced output of the previous layer located between

Differential evolution

Differential evolution is an evolutionary computation method developed by Storn and Price in 1997. The main processes of differential evolution (initialization, mutation, recombination, and selection) are similar to those of the genetic algorithm and are shown in Figure 4.

Flowchart of DE.

1. Initialization. The parameters of DE are set up, and the target vectors are randomly initialized in the solution space

where

2. Mutation. Three randomly selected individuals as

where

2D depiction of mutation.

3. Recombination. In this operation, every mutation vector performs crossover operation with corresponding target vector and then produces a new trial vector,

where

4. Selection. The fitness value determines whether the trial vector becomes the target vector of the next generation. If the fitness value of trial vector
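The four steps above can be sketched with the classic DE/rand/1/bin scheme. This is a minimal illustration rather than the implementation used in the experiments: it assumes that a larger fitness value is better (as in the WF fitness used later), and the function name, default settings, and the clamping of trial vectors to the search bounds are assumptions made for the example.

```python
import random


def de_rand_1_bin(fitness, dim, bounds, np_size=20, f=0.5, cr=0.9,
                  generations=100, seed=0):
    """Maximize `fitness` with classic DE/rand/1/bin (illustrative sketch)."""
    rng = random.Random(seed)
    low, high = bounds
    # 1. Initialization: random target vectors in the solution space.
    pop = [[rng.uniform(low, high) for _ in range(dim)] for _ in range(np_size)]
    fit = [fitness(x) for x in pop]
    for _ in range(generations):
        for i in range(np_size):
            # 2. Mutation: base vector plus a scaled difference of two others.
            r1, r2, r3 = rng.sample([k for k in range(np_size) if k != i], 3)
            mutant = [pop[r1][j] + f * (pop[r2][j] - pop[r3][j])
                      for j in range(dim)]
            # 3. Recombination: binomial crossover with the target vector;
            #    j_rand guarantees at least one gene comes from the mutant.
            j_rand = rng.randrange(dim)
            trial = [mutant[j] if (rng.random() < cr or j == j_rand)
                     else pop[i][j] for j in range(dim)]
            # Assumption: keep trial vectors inside the search bounds.
            trial = [min(max(v, low), high) for v in trial]
            # 4. Selection: the trial replaces the target if it is not worse.
            trial_fit = fitness(trial)
            if trial_fit >= fit[i]:
                pop[i], fit[i] = trial, trial_fit
    best = max(range(np_size), key=fit.__getitem__)
    return pop[best], fit[best]
```

For example, `de_rand_1_bin(lambda x: 1.0 / (1.0 + sum(v * v for v in x)), dim=3, bounds=(-5.0, 5.0))` searches for the maximum of a simple test function whose optimum is at the origin.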

Proposed DGDE

Because traditional DE converges quickly, it often falls into a local optimum. This study proposes a novel DGDE to improve the local searching ability of traditional DE. The details of DGDE are as follows:

Step 1: Initialization

The study proposed DGDE to adjust parameters in IT2NFC for the WF control. All the parameters in IT2NFC are coded as individual chromosomes including Gaussian mean,

Individual chromosome.

Step 2: Grouping

The chromosomes are sorted in descending order of fitness value, and every group number is initialized to 0 (see Figure 7).

Sorting the fitness values in descending order.

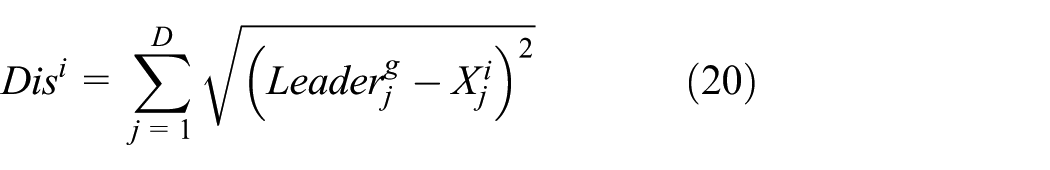

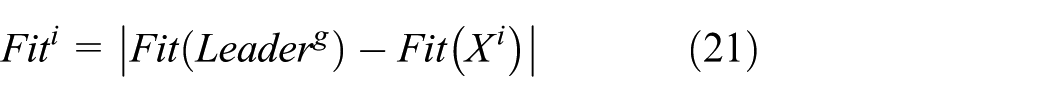

The best individual is set as the leader and is assigned the group number g. Then, taking the leader as the center, two thresholds, the average distance and the average fitness, are calculated. If an individual satisfies both thresholds, it is assigned to the gth group

where D means dimension, NP is the number of chromosomes,

The best chromosome is set as leader.

The distance,

If

Similar individuals are grouped into the same group.

If any ungrouped individual remains, the process returns to step 1. The remaining ungrouped individual with the best fitness value is defined as the new group leader, and steps 1 to 3 are repeated until all individuals are grouped (see Figure 10).

Continued grouping of the ungrouped individuals.
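The grouping loop can be sketched as follows. The threshold definitions used here are assumptions made for illustration, since the exact expressions are not reproduced in this text: the distance threshold is taken as the mean Euclidean distance from the current leader to the still-ungrouped individuals, and the fitness threshold as the mean absolute fitness difference to the leader. The function name `dynamic_grouping` is likewise hypothetical.

```python
import math


def dynamic_grouping(population, fitness):
    """Assign a group number (1, 2, ...) to every individual.

    Assumed thresholds (not taken from the paper's equations): an
    ungrouped individual joins the current leader's group when both its
    distance and its fitness difference to the leader are at most the
    respective averages over the currently ungrouped individuals.
    """
    np_size = len(population)
    # Sort indices by fitness in descending order; 0 means "ungrouped".
    order = sorted(range(np_size), key=lambda i: fitness[i], reverse=True)
    group = [0] * np_size
    leaders = []
    g = 0
    while True:
        ungrouped = [i for i in order if group[i] == 0]
        if not ungrouped:
            break
        g += 1
        leader = ungrouped[0]          # best remaining individual
        group[leader] = g
        leaders.append(leader)
        others = ungrouped[1:]
        if not others:
            break
        dist = {i: math.dist(population[i], population[leader]) for i in others}
        fdiff = {i: abs(fitness[leader] - fitness[i]) for i in others}
        avg_dist = sum(dist.values()) / len(others)
        avg_fdiff = sum(fdiff.values()) / len(others)
        for i in others:
            if dist[i] <= avg_dist and fdiff[i] <= avg_fdiff:
                group[i] = g
    return group, leaders
```

Each pass assigns at least the new leader, so the loop always terminates, and the number of groups adapts to how the population is spread out rather than being fixed in advance.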

Step 3: Mutation

Here, two novel mutation methods (called DGDE_M-1 and DGDE_M-2) are proposed in the DGDE

where

To prevent the algorithm from easily falling into a local optimum as conventional DE does, DGDE_M-1 uses a randomly selected leader as the base vector, and the directional vector efficiently increases the searching ability of the algorithm. In DGDE_M-2, the best individual is set as the base vector. A differential vector of two randomly selected individuals is added, so that the mutated vector lies around the best individual. If the position of the leader is better than that of the others, the differential vector between a random leader and a random individual can effectively improve the searching ability, which benefits the search of the solution space.

Steps 4 and 5 are recombination and selection, which are the same as in traditional DE. The flowchart of DGDE is shown in Figure 11.
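The difference between the two base-vector choices can be illustrated with a simplified sketch. It is not the paper's exact mutation equations: it only shows a randomly selected group leader (DGDE_M-1) or the best individual (DGDE_M-2) serving as the base vector, with a single scaled difference of two random individuals added in both cases. Any additional difference terms in the original formulas are omitted, and the function name and arguments are hypothetical.

```python
import random


def dgde_mutation(pop, fitness, leaders, f=0.5, variant="M-1", rng=random):
    """Build one mutant vector (simplified, assumption-based sketch).

    pop      : list of parameter vectors
    fitness  : fitness value per individual (larger is better)
    leaders  : indices of the group leaders found by the grouping step
    """
    np_size, dim = len(pop), len(pop[0])
    # Difference vector of two distinct randomly selected individuals.
    r1, r2 = rng.sample(range(np_size), 2)
    diff = [pop[r1][j] - pop[r2][j] for j in range(dim)]
    if variant == "M-1":
        # Base vector: a randomly selected group leader.
        base = pop[rng.choice(leaders)]
    elif variant == "M-2":
        # Base vector: the best individual of the whole population.
        base = pop[max(range(np_size), key=lambda i: fitness[i])]
    else:
        raise ValueError("variant must be 'M-1' or 'M-2'")
    return [base[j] + f * diff[j] for j in range(dim)]
```

Using a random leader spreads the search over several promising regions, whereas using the single best individual concentrates the mutants around the current best solution.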

Flowchart of DGDE.

Reinforcement learning is used to implement the WF mode of the mobile robot. This approach requires neither control rules designed by experts nor collected training data. Instead, a fitness function is defined to evaluate the performance of the mobile robot in the environment. The flowchart of the WF mode is depicted in Figure 12. There are four input signals (

Flowchart of wall-following mode.

To be adapted for every situation, the mobile robot is trained in a

Training environment.

For maintaining WF and avoiding collision in the training process, three stop conditions are designed:

The mobile robot collides with the wall if any sonar sensor distance,

The mobile robot leaves the wall if the sensing distance,

The total moving distance exceeds one cycle of the training environment, which guarantees that the mobile robot completes at least one cycle of the training environment.
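The three stop conditions can be sketched as a single check that is called at every control step. The threshold values below (`collision_dist`, `leave_dist`, `cycle_length`, all in meters) are hypothetical placeholders, because the actual thresholds are not given in this text.

```python
def check_stop(sonar_distances, wall_side_distance, total_distance,
               collision_dist=0.1, leave_dist=1.0, cycle_length=20.0):
    """Return the reason for stopping a training run, or None to continue.

    All threshold defaults are illustrative assumptions.
    """
    # Condition 1: any sonar reading is so small that a collision occurs.
    if min(sonar_distances) < collision_dist:
        return "collision"
    # Condition 2: the wall-side reading is so large that the wall is lost.
    if wall_side_distance > leave_dist:
        return "left_wall"
    # Condition 3: the robot has traveled more than one full cycle.
    if total_distance > cycle_length:
        return "cycle_completed"
    return None
```

Only the third outcome corresponds to a successful run; the first two end the evaluation of the current individual early.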

(a) Colliding with wall and (b) leaving wall.

The proposed DGDE is used to optimize the IT2NFC parameters. Each individual represents one IT2NFC solution and is used to control the mobile robot in the training environment. When the robot meets a stop condition, the fitness function is used to evaluate the WF performance of the robot in the training environment. Then, the next individual starts from the initial position, and this process repeats until the terminating condition of the algorithm is met.

The defined fitness function includes four sub-fitness functions, the moving distance (

Moving distance sub-fitness function (

While mobile robot’s moving distance is greater than

Distance to wall sub-fitness function (

Here,

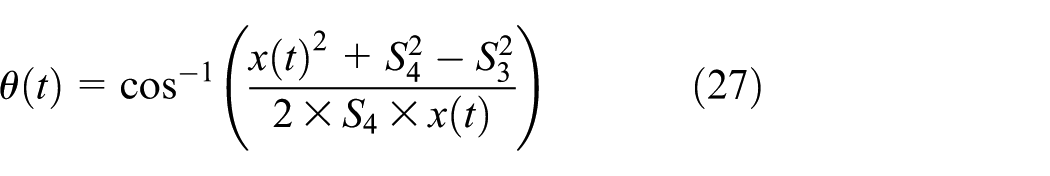

Angle to wall sub-fitness function (

where

In order to keep the mobile robot parallel to the wall, the average of

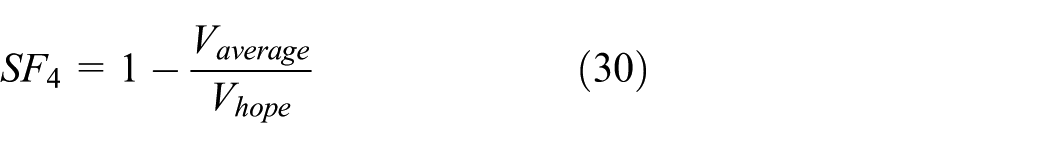

4. Moving speed sub-fitness function (

where

By summation of the four sub-fitness functions,

Here, the weighted coefficients are

Demonstration of (a)

Experimental results of WF control

This study implemented the two mutation methods, DGDE_M-1 and DGDE_M-2, and compared their efficiency and stability with those of other evolutionary algorithms. The initial parameters of DGDE are listed in Table 2. To observe the stability of each algorithm, the experiments were repeated ten times.

Initial parameters of DGDE.

The setup parameters are the number of chromosomes (NP), the crossover rate (CR), the mutation weighting factor (F), and the number of fuzzy rules (Rule). Since the best number of fuzzy rules is difficult to determine, 5, 6, and 7 rules are used in the experiments. The performance evaluation includes the best fitness value (Best), the worst fitness value (Worst), the average fitness value (Average), the standard deviation (STD), and the number of successful runs. Experimental results are shown in Table 3. Here, the number of successful runs is the number of runs in which the mobile robot completes one cycle of the training environment. Although a controller with fewer rules requires a smaller coding dimension, less computation time, and less memory, the experimental results show that its performance is worse. Thus, six fuzzy rules are adopted in this study.

Performance evaluation of different rules.

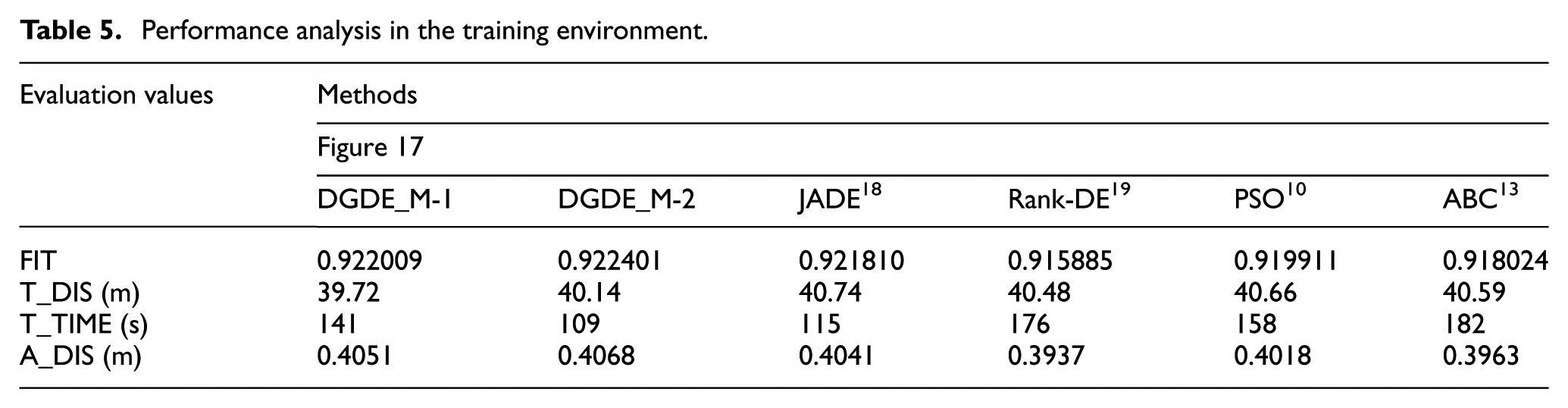

Different algorithms are applied in WF mode, and their performance is compared in Table 4. The experiments were repeated 10 times for each algorithm. The average fitness values over the 10 runs of the proposed DGDE_M-1 and DGDE_M-2 are 0.919452 and 0.919648, respectively. A large average fitness value and a small STD in Table 4 indicate that the mobile robot performs the WF mode better and with higher stability. In addition, the proposed DGDE_M-1 and DGDE_M-2 successfully perform the whole WF control in all 10 repeated experiments, whereas the other methods sometimes hit obstacles. According to Figure 16 and Table 4, the proposed DGDE achieves better WF performance, a shorter time to cycle the environment, and a lower STD than the other algorithms. Therefore, the proposed method also possesses higher stability. Several moving tracks of the mobile robot in the training environment are shown in Figure 17.

Wall-following performance of different algorithms.

Training fitness curves of wall-following control using different algorithms.

Moving track in training environment applied by different algorithms. (a) DGDE_M-1, (b) DGDE_M-2, (c) JADE, (d) Rank-DE, (e) PSO, and (f) ABC.

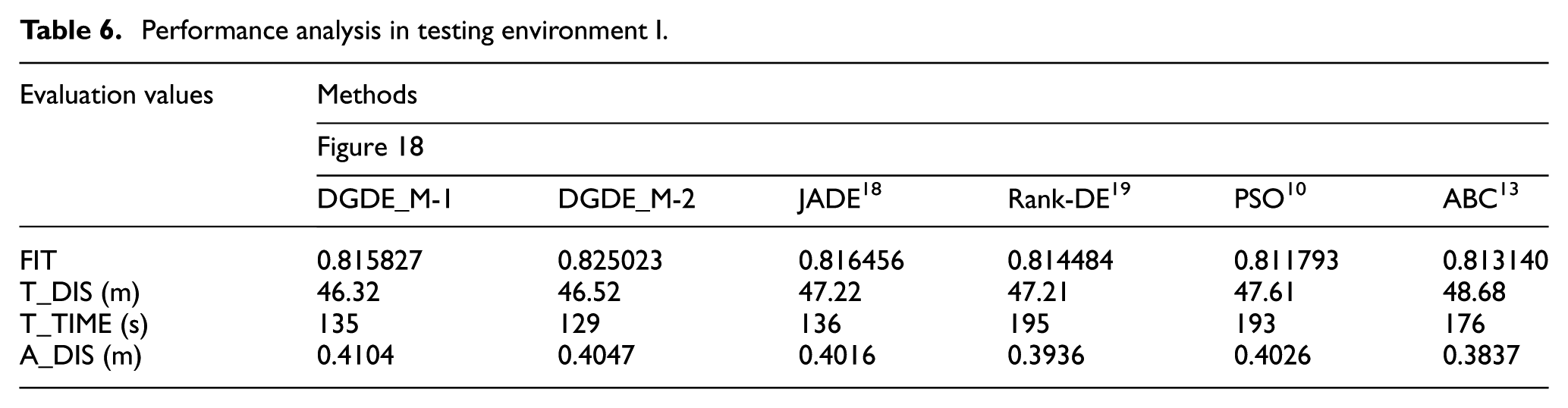

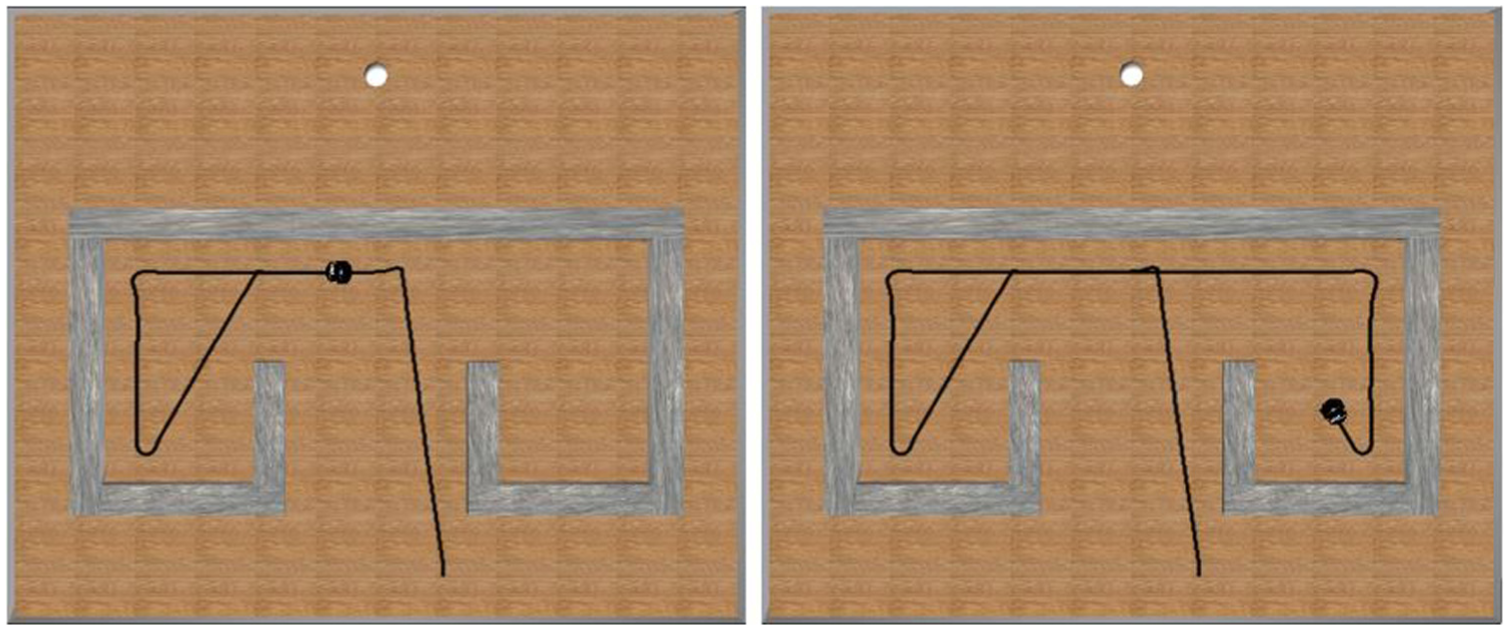

Two testing environments are set up to verify whether the different algorithms can successfully perform WF after training. The performance evaluation includes the defined fitness function in equation (31) (FIT), the total moving distance of the robot over the whole WF (T_DIS), the time required to complete the whole WF (T_TIME), and the average distance between the robot and the wall over the whole WF (A_DIS). The defined fitness function in equation (31) is used to evaluate the WF performance of a mobile robot. If a learning algorithm has converged, the robot does not suddenly move toward or away from the wall; that is, the total moving distance of the robot over the whole WF is reduced. If the time required to complete the whole WF is short, the moving speed of the mobile robot is fast. The proposed algorithms are compared with the other algorithms in Tables 5–7 and Figures 17–19, and the results show that the proposed algorithm is superior to the others.

Performance analysis in the training environment.

Performance analysis in testing environment I.

Performance analysis in testing environment II.

Moving track in testing environment I applied by different algorithms: (a) DGDE_M-1, (b) DGDE_M-2, (c) JADE, (d) Rank-DE, (e) PSO, and (f) ABC.

Moving track in testing environment II applied by different algorithms: (a) DGDE_M-1, (b) DGDE_M-2, (c) JADE, (d) Rank-DE, (e) PSO, and (f) ABC.

Navigation control

Recent research in mobile robot navigation has focused on known and unknown environments. Because only limited information is available in an unknown environment, a mobile robot is more likely to collide and fail its mission than in a known environment. Therefore, an effective navigation control method, the mode manager, is used to switch the behavior mode according to the relation between the robot and the environment. Two behavior modes, TG and WF, are used in the navigation control of the mobile robot. When the robot is close to obstacles, the mode manager switches to WF mode to avoid collision. Otherwise, the mode manager switches to TG mode to help the robot approach the goal as fast as possible. In this study, no fitness function is evaluated for TG mode, because TG mode takes the shortest path between the mobile robot and the goal in an unknown environment. The detailed flowchart of navigation control is shown in Figure 20, and the corresponding pseudo code is given in Appendix 1.

Flowchart of navigation control of mobile robot.

TG behavior

When a mobile robot navigates in an unknown environment, the goal position information is available. The mobile robot moves toward the goal by adjusting the moving direction according to the relative position between the robot and the goal. There is an angle,
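As a small illustration of how the moving direction can be adjusted from the relative position, the following sketch computes the signed angle between the robot heading and the direction to the goal. The coordinate convention (planar pose `(x, y, theta)` with `theta` in radians, counterclockwise positive) and the function name are assumptions for this example and are not taken from the paper.

```python
import math


def heading_error(robot_x, robot_y, robot_theta, goal_x, goal_y):
    """Signed angle (radians) the robot must turn to face the goal.

    Positive values mean the goal lies to the left of the current heading.
    """
    goal_bearing = math.atan2(goal_y - robot_y, goal_x - robot_x)
    error = goal_bearing - robot_theta
    # Wrap the result to [-pi, pi] so the robot takes the shorter turn.
    return math.atan2(math.sin(error), math.cos(error))
```

A TG controller can then steer proportionally to this error until it is close to zero, at which point the robot drives straight toward the goal.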

Mode manager

The mobile robot is divided into four areas,

Four sections of mobile robot.

The main purpose of TG mode is to make the robot approach the goal. The smallest distance between the robot and the goal is recorded during the navigation control. When the distance between the robot and the goal is longer than the recorded smallest distance during WF mode, the robot will fall into a dead cycle (see Figure 23). Therefore, a modified mode manager, shown in Figure 24, is proposed to escape the dead cycle. The mode manager computes the distance between the robot and the goal at each time step and records the smallest distance. If the current distance between the robot and the goal is smaller than the recorded smallest distance, the mode manager switches to TG mode. Therefore, when the mode manager is added to the navigation control, the mobile robot successfully escapes the dead cycle, as shown in Figure 25.
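The escape mechanism can be sketched as a small state machine. This is an illustrative reading of the description above, not the pseudo code of Appendix 1: the single `obstacle_threshold` stands in for the four-area obstacle test, and the extra requirement that the path be clear before leaving WF mode is an assumption added for the example.

```python
import math


class ModeManager:
    """Switch between towards-goal (TG) and wall-following (WF) modes."""

    def __init__(self, obstacle_threshold=0.5):
        # Hypothetical distance (m) below which an obstacle counts as close.
        self.obstacle_threshold = obstacle_threshold
        self.mode = "TG"
        self.min_goal_distance = math.inf

    def update(self, nearest_obstacle, goal_distance):
        """Update the mode from the current sensor and goal distances."""
        # Record the smallest robot-goal distance seen so far.
        improved = goal_distance < self.min_goal_distance
        if improved:
            self.min_goal_distance = goal_distance
        obstacle_close = nearest_obstacle < self.obstacle_threshold
        if self.mode == "TG":
            if obstacle_close:
                self.mode = "WF"
        elif improved and not obstacle_close:
            # Leave WF only when the robot is closer to the goal than ever
            # before, which prevents returning to an already visited spot.
            self.mode = "TG"
        return self.mode
```

Calling `update` once per time step yields the mode to execute next; a robot that follows a wall away from the goal stays in WF mode until it has made real progress toward the goal.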

Dead cycle.

Flowchart of mode manager.

Escaping dead cycle of mobile robot.

Experimental results of navigation control

Publicly recognized navigation experimental environments, shown in Figure 26, are used to demonstrate the strength of the proposed navigation control method. Several other evolutionary algorithms are implemented to compare and analyze the navigation performance using two evaluation indexes. One index is the total moving distance of the robot over the whole navigation. As the stability of the WF mode increases, the total moving distance decreases. The other index is the time required to complete the navigation, which reflects the overall moving speed. The total moving distance and the time required to complete the navigation are listed in Table 8. In Table 8, ABC 13 has a smaller total moving distance than our method but requires more time to complete the navigation. The proposed method is superior to the other methods in navigation control.

Navigation control results of the proposed method with mutation DGDE_M-1 (left figure) and DGDE_M-2 (right figure): (a) bug trap, (b) back_and_forth, B&F, (c) T, and (d) rooms_easy.

Performance analysis of different algorithms.

Conclusion

This study proposes an efficient control method to implement navigation control of a mobile robot. According to the relationship between the robot and the environment, a novel mode manager is used to switch between WF mode and TG mode. An interval type-2 neural fuzzy controller trained with the evolutionary algorithm is used in the mobile robot as an adaptive controller that requires neither rules designed by experts nor a collected training dataset. The proposed DGDE uses the group concept, which substantially increases the searching ability and convergence speed of DE. The experimental results show that the proposed method is superior to the other methods in WF mode and navigation control. The proposed model is successfully implemented as a navigation controller on the Pioneer3-DX mobile robot in an unknown environment.

Footnotes

Appendix 1

Pseudo code of navigation control

Handling Editor: Ling Zheng

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.