Abstract

To identify periodic harmonic irregularities on subway wheel surfaces, a novel data-driven method is proposed to address the challenges of nonlinear interference and low signal-to-noise ratio in vibration signals collected during operation. To avoid the influence of manual signal processing and feature engineering, this study introduces a three-stream feature fusion deep learning framework. Specifically, the preprocessed vibration signals are input into three parallel channels: (1) raw time-domain signals into a 1D convolutional neural network (1D-CNN), (2) continuous wavelet transform (CWT) time-frequency representations into a 2D convolutional neural network (2D-CNN), and (3) fast Fourier transform (FFT) spectra into a bidirectional gated recurrent unit (BiGRU) network. The features extracted by the three branches are then fused, and a multi-head self-attention mechanism is embedded at the tail of the network to enhance classification performance. The proposed method achieves an accuracy of 97.62% in recognizing wheel state degradation levels. To meet the need for multi-parameter diagnostics in engineering practice, a multi-task learning (MTL) framework based on hard parameter sharing is introduced by establishing task-specific branches at the back end of the aforementioned model. Experimental results show that the MTL framework maintains an average accuracy of 97.25%, which is comparable to or exceeds that of the single-task models.

Introduction

With the rapid development and acceleration of urban metro systems and high-speed railways in recent years, extensive research has been conducted on wheel state monitoring, especially in areas such as degradation mechanisms, dynamic responses, and fatigue strength effects. Despite these efforts, no fundamental solution has yet been proposed to address wheel state deterioration. Deterioration of wheel conditions will exacerbate impact interactions at the wheel-rail interface, amplifying wheel-rail forces and intensifying vibrations in wheelsets, axle boxes, rails, track components, and architectural structures, accompanied by increased noise levels.1–3 In actual railway operations, periodic or centralized re-profiling of wheels is typically adopted to restore surface integrity and roundness, which often results in substantial resource waste. Therefore, real-time monitoring and diagnosis of wheel status—followed by targeted maintenance—can significantly enhance operational safety and economic efficiency.

Early-stage approaches relied heavily on signal processing techniques, evolving from simple band-pass filtering and spectral analysis to more sophisticated signal decomposition methods and their improved variants, such as Variational Mode Decomposition (VMD) and Empirical Mode Decomposition (EMD). These methods were often coupled with signal reconstruction indicators and parameter optimization strategies.4–6 Subsequent developments have integrated machine learning and deep learning techniques into the diagnostic pipeline, where signal processing outputs are fed to classifiers such as Convolutional Neural Networks (CNNs), Support Vector Machines (SVM), and Back Propagation (BP) neural networks, among others.7–9 However, these approaches present several limitations. Signal preprocessing procedures rely heavily on domain-specific prior knowledge; shallow machine learning models struggle with the low signal-to-noise ratios (SNR) characteristic of real-world industrial data; and deep learning models often require manual feature selection and other human-driven processes, introducing additional uncertainty into the diagnostic results.

Currently, increasing attention has been given to modular neural network design.10–12 The fusion of multiple deep learning components has thus become a prominent strategy in data-driven fault diagnosis. For instance, combining CNNs with LSTM, BiGRU, and attention modules has been shown to effectively extract multi-scale features from raw signals, thereby enhancing recognition accuracy.13,14 The incorporation of attention mechanisms and Transformer-based models into residual or convolutional networks significantly improves robustness against strong noise interference.15,16 Furthermore, converting time series signals into Markov Transition Fields (MTF), followed by classification using ResNet or attention-based architectures, has demonstrated strong potential. 17 Techniques such as digital twins and knowledge distillation have also achieved high diagnostic accuracy, especially in small-sample and model optimization scenarios.18,19

Based on on-board vibration acceleration, vehicle condition monitoring and fault diagnosis systems have been deployed in many practical applications, and a wide range of algorithmic studies have been conducted. The application of on-board vibration acceleration to monitor wheel defects is also expected to become one of the key future directions.20–23 For instance, Shi et al. employed a CNN-based multi-sensor fusion approach, followed by a residual deep network, to identify wheel tread abrasions. 24 Jiang et al. proposed a structure-aware generalization network to improve the robustness of out-of-round (OOR) wheel diagnosis. 25 Liu et al. developed a locally constrained inference network to address the issue of small-sample limitations in wheelset defect diagnosis. 26 Ye et al. applied deep learning models to predict routine geometric parameters of metro vehicle wheelsets. 27 Moreover, Ye et al. constructed a deep learning model based on multi-channel feature fusion for OOR detection in high-speed train wheelsets. Their model incorporated envelope spectra, frequency spectra, and two-dimensional time-frequency images as inputs to 1D-CNN and 2D-CNN modules for feature extraction and classification. 28

In this study, vibration signals were collected during the actual operation of metro vehicles. Without considering variable operating conditions or small-sample scenarios at this stage, we focus on directly identifying wheel status under high-noise environments using a purely data-driven approach. A multi-channel feature fusion deep learning model is constructed, where the preprocessed signals are fed into three parallel channels (1D-CNN, 2D-CNN, and BiGRU), followed by feature fusion and an embedded attention mechanism to enhance learning performance. Compared with the CNN-BiGRU-AM model used in bearing fault diagnosis, the present study introduces a 1D-CNN to extract features from raw time-domain signals and a 2D-CNN to capture two-dimensional time–frequency features, thereby enabling a more comprehensive and in-depth exploration of the complementary information within vibration signals. To simultaneously diagnose radial runout and polygonal order, a multi-task learning (MTL) framework is further developed. This model architecture is well aligned with practical engineering requirements and improves both diagnostic accuracy and operational efficiency.

Theory

CNNs

A CNN is a feedforward neural network with a deep hierarchical structure, and it has become one of the most representative data-driven algorithms. Its basic components are as follows. 29

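(1) Convolutional layer: For a one-dimensional convolution, the operation is defined as:

$$y_t = \sum_{k=1}^{K} w_k \, x_{t-k+1}$$

For a two-dimensional input, the convolution operation is expressed as:

$$y_{ij} = \sum_{u=1}^{U} \sum_{v=1}^{V} w_{uv} \, x_{i-u+1,\, j-v+1}$$

For a signal sequence $x_1, x_2, \ldots$ (or a two-dimensional input with elements $x_{ij}$), $y_t$ and $y_{ij}$ denote the convolution outputs.

Where, $w_k$ are the weights of a filter of length $K$, and $w_{uv}$ are the weights of a filter of size $U \times V$.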

(2) Activation function: CNNs usually use the Rectified Linear Unit (ReLU) as the nonlinear activation function; the sigmoid and hyperbolic tangent (tanh) functions are also commonly used.

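(3) Pooling layer: Used to downsample feature representations within the receptive field. Common pooling strategies include maximum pooling and average pooling:

$$y_{m,n} = \max_{i \in R_{m,n}} x_i, \qquad y_{m,n} = \frac{1}{|R_{m,n}|} \sum_{i \in R_{m,n}} x_i$$

For each input feature map, $R_{m,n}$ denotes a pooling region, $x_i$ is the activation of each element within that region, and $y_{m,n}$ is the pooled output.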

Classic convolutional neural networks that adopt this architecture include LeNet-5, AlexNet, VGGNet, and GoogLeNet.
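The three building blocks listed above (convolution, activation, pooling) can be illustrated with a minimal NumPy sketch. The input values, the two-tap kernel, and the pooling size are hypothetical choices for illustration and are not parameters of the model in this study. Note that `np.convolve` flips the kernel (true convolution), whereas most deep learning frameworks implement the unflipped cross-correlation; for learned filters the two differ only by a reversal of the weights.

```python
import numpy as np

def conv1d(x, w):
    """Valid 1D convolution (kernel flipped), no padding."""
    return np.convolve(x, w, mode="valid")

def relu(z):
    """Rectified Linear Unit: negative activations are set to zero."""
    return np.maximum(z, 0.0)

def max_pool1d(z, size):
    """Non-overlapping max pooling; samples that do not fill a window are dropped."""
    n = (len(z) // size) * size
    return z[:n].reshape(-1, size).max(axis=1)

# Hypothetical toy input and 2-tap kernel (illustration only)
x = np.array([1.0, 2.0, 0.0, -1.0, 3.0, 1.0])
w = np.array([1.0, -1.0])

feature = max_pool1d(relu(conv1d(x, w)), size=2)
print(feature)
```

With this kernel the convolution reduces to a first difference of the input, so the pooled feature keeps only the largest positive jumps in each window.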

BiGRU

Due to the existence of gradient explosion and gradient vanishing problems in traditional recurrent neural networks (RNNs), advanced architectures such as the Long Short-Term Memory (LSTM) network 30 and the Gated Recurrent Unit (GRU) network 31 have been developed. The BiGRU is a widely adopted variant of GRU that enhances sequential modeling capability by incorporating both forward and backward temporal dependencies. Similar to the Bidirectional LSTM (BiLSTM), the BiGRU can capture contextual information from both past and future time steps, which is particularly advantageous for time series analysis, such as vibration signals from metro vehicle systems. Compared to BiLSTM, BiGRU offers a simpler structure with fewer parameters, while maintaining comparable performance in sequence prediction and classification tasks. This makes it highly suitable for real-time condition monitoring and resource-constrained deployment scenarios.

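The GRU network simplifies the LSTM structure by constructing an update gate. Additionally, unlike LSTM, GRU does not utilize an explicit memory cell. Instead, it introduces a direct linear dependency between the current hidden state $h_t$ and the previous hidden state $h_{t-1}$. In the standard formulation, with input $x_t$, update gate $z_t$, reset gate $r_t$, and candidate state $\tilde{h}_t$:

$$z_t = \sigma(W_z x_t + U_z h_{t-1} + b_z)$$

$$r_t = \sigma(W_r x_t + U_r h_{t-1} + b_r)$$

$$\tilde{h}_t = \tanh\big(W_h x_t + U_h (r_t \odot h_{t-1}) + b_h\big)$$

$$h_t = z_t \odot h_{t-1} + (1 - z_t) \odot \tilde{h}_t$$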

Inspired by the gating mechanisms in BiLSTM networks, 32 researchers have proposed the BiGRU model, which is composed of both a forward GRU and a backward GRU. The final output of BiGRU is determined by the combined states of these two parallel GRU layers. This bidirectional architecture enables the model to extract feature information that may otherwise be overlooked, thereby enhancing its ability to model contextual information within sequences.
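The bidirectional scheme described above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the network used in this study: the GRU update follows the common convention $h_t = z_t \odot h_{t-1} + (1 - z_t) \odot \tilde{h}_t$, bias terms are omitted for brevity, and the sequence length, input size, hidden size, and random weights are hypothetical.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def gru_forward(xs, params, hidden):
    """Run a GRU over xs (T, D) and return all hidden states (T, hidden)."""
    Wz, Uz, Wr, Ur, Wh, Uh = params
    h = np.zeros(hidden)
    states = []
    for x in xs:
        z = sigmoid(Wz @ x + Uz @ h)              # update gate
        r = sigmoid(Wr @ x + Ur @ h)              # reset gate
        h_cand = np.tanh(Wh @ x + Uh @ (r * h))   # candidate state
        h = z * h + (1.0 - z) * h_cand            # linear link to previous state
        states.append(h)
    return np.stack(states)

def bigru_forward(xs, fwd_params, bwd_params, hidden):
    """Concatenate forward states with time-aligned backward states."""
    fwd = gru_forward(xs, fwd_params, hidden)
    bwd = gru_forward(xs[::-1], bwd_params, hidden)[::-1]
    return np.concatenate([fwd, bwd], axis=1)

# Hypothetical sizes and random weights (illustration only)
rng = np.random.default_rng(0)
T, D, H = 5, 3, 4

def init_params():
    shapes = [(H, D), (H, H)] * 3   # Wz, Uz, Wr, Ur, Wh, Uh
    return [rng.normal(scale=0.5, size=s) for s in shapes]

fwd_p, bwd_p = init_params(), init_params()
xs = rng.normal(size=(T, D))
out = bigru_forward(xs, fwd_p, bwd_p, H)
print(out.shape)
```

Each output row holds the forward state (context from earlier steps) next to the backward state (context from later steps), which is why the output width is twice the hidden size.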

Attention mechanism

The attention mechanism has become a widely adopted information selection strategy in deep learning, enabling models to assign greater focus to specific regions or components of the input that are more relevant to the task at hand. 33 While attention mechanisms can be employed as standalone modules, they are more commonly integrated into neural networks or other machine learning models to enhance representation learning and performance.

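The core of the attention mechanism lies in the attention weights, which are computed by evaluating the correlation between the query and key vectors. The computation consists of the following two steps 34 :

Step 1: The attention distribution (attention weights) is computed as follows:

$$\alpha_n = \mathrm{softmax}\big(s(k_n, q)\big) = \frac{\exp\big(s(k_n, q)\big)}{\sum_{j=1}^{N} \exp\big(s(k_j, q)\big)}$$

Where, $q$ is the query vector, $k_n$ is the key vector of the $n$-th input, $\alpha_n$ is the corresponding attention weight, and $s(\cdot,\cdot)$ is a scoring function such as the scaled dot product $s(k, q) = k^{\top} q / \sqrt{D}$, with $D$ the vector dimension.

Step 2: An information selection mechanism is used to perform a weighted aggregation over the input values based on the attention weights. Taking the most widely used soft attention as an example, the output is calculated as:

$$\mathrm{att}\big((K, V), q\big) = \sum_{n=1}^{N} \alpha_n v_n$$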

Variants of the information selection mechanism

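The self-attention mechanism refers to the case where the query, key, and value all originate from the same source. When multiple queries Q are used to extract relevant information in parallel from the input, the mechanism is referred to as multi-head attention. In self-attention, the inputs $X$ are linearly projected to obtain the query, key, and value matrices, $Q = W_q X$, $K = W_k X$, and $V = W_v X$, and in the standard scaled dot-product form the output is $H = V\,\mathrm{softmax}\big(K^{\top} Q / \sqrt{D_k}\big)$, where the softmax is applied column-wise and $D_k$ is the dimension of the key vectors.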
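As a minimal sketch of multi-head self-attention, the NumPy code below uses scaled dot-product scoring and a row-vector convention (each row of `X` is one input element). The sizes (6 elements, 64 channels, 4 heads), the random projection matrices, and the output projection `Wo` are illustrative assumptions rather than the configuration of a specific library layer.

```python
import numpy as np

def softmax(a, axis=-1):
    a = a - a.max(axis=axis, keepdims=True)   # subtract max for numerical stability
    e = np.exp(a)
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_self_attention(X, Wq, Wk, Wv, Wo, num_heads):
    """X: (N, d_model). Returns the output (N, d_model) and weights (heads, N, N)."""
    N = X.shape[0]
    Q, K, V = X @ Wq, X @ Wk, X @ Wv           # same source for query, key, value
    d_head = Q.shape[1] // num_heads

    def split(M):
        # (N, d_model) -> (heads, N, d_head)
        return M.reshape(N, num_heads, d_head).transpose(1, 0, 2)

    Qh, Kh, Vh = split(Q), split(K), split(V)
    scores = Qh @ Kh.transpose(0, 2, 1) / np.sqrt(d_head)   # step 1: correlations
    weights = softmax(scores, axis=-1)                      # attention distribution
    heads = weights @ Vh                                    # step 2: weighted sum of values
    concat = heads.transpose(1, 0, 2).reshape(N, num_heads * d_head)
    return concat @ Wo, weights

# Hypothetical sizes and random projections (illustration only)
rng = np.random.default_rng(0)
N, d_model, num_heads = 6, 64, 4
X = rng.normal(size=(N, d_model))
Wq, Wk, Wv, Wo = (rng.normal(scale=0.1, size=(d_model, d_model)) for _ in range(4))
out, attn = multi_head_self_attention(X, Wq, Wk, Wv, Wo, num_heads)
print(out.shape, attn.shape)
```

Each head attends over the same inputs with its own slice of the projected channels, so different heads can emphasize different parts of the fused feature vector.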

Engineering-oriented problem

State of metro vehicle wheels

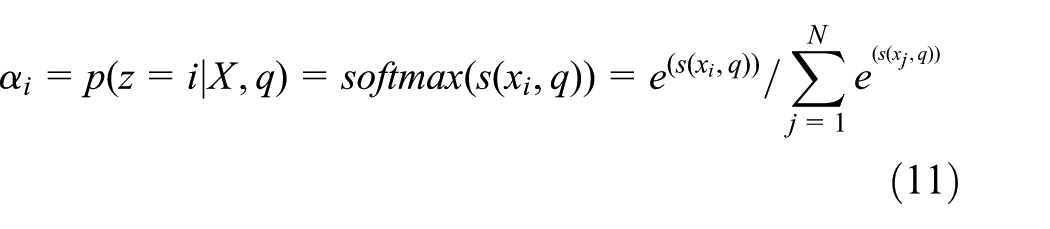

The wheelset of a metro vehicle serves as the sole mechanical interface between the bogie and the rail track. A schematic of the bogie structure is shown on the left side of Figure 2. Due to the inherent nature of the steel wheel–steel rail system, the wheel-rail interaction is characterized by harsh mechanical loads and complex contact conditions. During operation, the wheelset is subjected to a combination of complex dynamic behaviors, including creep and rolling contact, lateral and vertical vibrations, longitudinal hunting, and self-excited oscillations. These coupled motions can induce various forms of damage, such as rolling contact fatigue, tread spalling, flat spots, and polygonalization of wheels. Moreover, the combination of initial manufacturing defects and in-service damage accumulation may lead to the gradual evolution of localized deformations into periodic harmonic irregularities on the wheel tread surface after a period of operation. The major consequences of out-of-round wheels include: fatigue damage or fracture of the primary suspension coil springs and hangers; deterioration of operational stability indicators such as derailment coefficient, wheel load reduction rate, and critical speed for hunting instability; fatigue fracture of rail fastener clips; reduction of local rail strength; and significantly intensified vibrations and noise levels of elevated sections and station structures, which may lead to damage and cracking risks.35–37

The geometric state of metro vehicle wheels is typically characterized by two key indicators. The first indicator reflects the severity of wheel damage and OOR by measuring the radial runout—the larger the radial runout, the more severe the wheel deformation. The second indicator captures the number of polygonal lobes, which describes the number of periodic peaks on the wheel tread surface; a higher polygonal order implies a more severe OOR. If either of these two indicators exceeds the allowable limit, the wheelset is deemed to have reached an unacceptable level of polygonal wear or OOR.

In high-speed railway systems, wheel defects are typically characterized by high-order and low-amplitude polygonal wear, that is, a large number of polygonal lobes but small radial runout values. In contrast, metro vehicles are more commonly affected by low-order and high-amplitude wheel defects, where the polygonal order is relatively low, usually fewer than 12 lobes, with 5–9 lobes being the most frequent. In such cases, the radial runout value can exceed 0.2 mm rapidly, and in severe cases may reach 0.7 mm or even over 1 mm.

In this study, the two aforementioned indicators—radial runout and polygonal order—are used as the primary targets for wheel state recognition and diagnosis.

Monitoring and data acquisition of metro wheel state

The data used in this study were collected from a metro line in China that has been in continuous operation for over 5 years. The metro vehicles on this line are equipped with a vehicle condition monitoring and health management system, which primarily performs online monitoring of wheelset bearings, gearbox bearings, and motor bearings. Vibration-shock composite sensors are installed at the axle ends, gearbox, and traction motor. The acquired data are transmitted via a train-to-ground wireless communication system to the ground terminal, where diagnostic results are analyzed and displayed. The data are simultaneously transmitted to the central expert system for further learning and diagnosis. In this study, the axle-end vibration data are employed as wheel state data samples; as noted in the Introduction, the use of such on-board vibration data has also been a research focus in recent years. A simplified wheelset dynamics model was employed for sensor deployment, and the schematic of the wheel system and vibration-shock sensor installation is illustrated in Figure 1. Through several years of continuous monitoring and data collection, combined with mechanical dimensional measurements taken at corresponding time intervals, a dataset comprising vibration signals under various mechanical condition states was constructed.

Diagram of data collection.

When routine inspections detect surface defects on wheels or when increased operational noise is observed, a non-drop wheel lathe is employed to precisely measure the mechanical dimensions of the wheels. Simultaneously, the corresponding axle-end vibration data are collected. The vibration data samples are then categorized according to the measured mechanical dimensions. In this study, data were collected using sensors with a sampling frequency of 10 kHz. The test was conducted at an operating speed of 70 km/h: when the running speed remains stable at 70 km/h for more than 10 s, the vibration data from the most recent 10 s are taken as a valid sample. With a wheel diameter of approximately 825 mm, this speed corresponds to a wheel rotational frequency of approximately 7.5 Hz. To ensure that each data sample captures no fewer than three full wheel rotation cycles and that the signal length is a power of two, a sliding window approach was adopted to segment the data into samples. Each sample consists of 4096 data points, and adjacent samples share an overlap of 1024 points (i.e. one-quarter of the window length). The details of the data acquisition and classification are summarized in Table 1. For each operating condition (i.e. each level of the target indicator), 300 samples were selected, resulting in a total of 1200 samples across four classes per indicator. Before each calculation, the data are randomly shuffled using “randper” during the division into training and testing sets to realize cross-validation.
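The figures quoted above can be checked with a short worked example. The sketch below recomputes the wheel rotational frequency from the speed and diameter, confirms that a 4096-point window at 10 kHz spans slightly more than three wheel revolutions, and segments one 10 s record with the stated overlap. The function names and the placeholder record are hypothetical; only the numerical parameters come from the text.

```python
import math
import numpy as np

FS = 10_000               # sampling frequency in Hz
SPEED_KMH = 70.0          # operating speed
WHEEL_DIAMETER_M = 0.825  # approximate wheel diameter
WINDOW = 4096             # samples per window (a power of two)
OVERLAP = 1024            # samples shared by adjacent windows

def rotation_frequency(speed_kmh, diameter_m):
    """Wheel revolutions per second for pure rolling."""
    return (speed_kmh / 3.6) / (math.pi * diameter_m)

def sliding_windows(signal, window, overlap):
    """Split a 1D signal into overlapping windows, dropping the incomplete tail."""
    if len(signal) < window:
        raise ValueError("signal is shorter than one window")
    step = window - overlap
    count = (len(signal) - window) // step + 1
    return np.stack([signal[i * step : i * step + window] for i in range(count)])

f_rot = rotation_frequency(SPEED_KMH, WHEEL_DIAMETER_M)
rotations_per_window = WINDOW / FS * f_rot

# Placeholder 10 s record (sample indices instead of real vibration data)
record = np.arange(10 * FS, dtype=float)
segments = sliding_windows(record, WINDOW, OVERLAP)

print(round(f_rot, 2), round(rotations_per_window, 2), segments.shape)
```

Under these assumptions, one 10 s record yields 32 windows. As a general property of polygonal wear, an order-n polygon excites the wheel roughly n times per revolution, so orders up to 12 correspond to frequencies up to about 12 × 7.5 Hz = 90 Hz at this speed.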

Table of dataset partition.

Model design and experimental optimization

Feature fusion-based diagnostic model

This study employs a deep learning-based feature extraction strategy that directly operates on one-dimensional (1D) and two-dimensional (2D) signal representations. The acquired vibration samples are preprocessed to generate both 1D and 2D inputs, which are then used to design a multi-dimensional feature fusion model for wheel state diagnosis.

Preprocessing of 1D inputs

CNNs are known for their strong capability in feature extraction, while BiGRU exhibits powerful performance in learning temporal dependencies within sequential signals. In this study, three types of 1D inputs were preprocessed and separately fed into a 1D-CNN and a BiGRU network for comparative experiments. These inputs include (1) the raw time-domain vibration signal obtained via the sliding window segmentation method, (2) the fast Fourier transform (FFT) spectrum, and (3) the envelope spectrum. For the FFT spectrum and envelope spectrum, only the frequency band below 300 Hz is retained. The 1D-CNN architecture was configured with two basic convolutional modules containing 16 and 32 filters, respectively, each with a kernel size of 2 × 1.
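A minimal NumPy sketch of the two frequency-domain inputs is given below. It assumes a single-sided amplitude spectrum and an envelope obtained from the analytic signal (Hilbert transform computed via the FFT); the exact spectral scaling and envelope method used in this study are not specified, so these are illustrative choices. The amplitude-modulated test signal is synthetic.

```python
import numpy as np

FS = 10_000      # sampling frequency in Hz
F_MAX = 300.0    # only frequencies below this limit are retained

def fft_spectrum(x, fs, f_max):
    """Single-sided amplitude spectrum restricted to frequencies below f_max."""
    n = len(x)
    freqs = np.fft.rfftfreq(n, d=1.0 / fs)
    amp = np.abs(np.fft.rfft(x)) * 2.0 / n   # note: the DC bin is doubled as well
    keep = freqs < f_max
    return freqs[keep], amp[keep]

def analytic_signal(x):
    """Analytic signal via the FFT: keep DC, double positive, zero negative frequencies."""
    n = len(x)
    h = np.zeros(n)
    h[0] = 1.0
    if n % 2 == 0:
        h[n // 2] = 1.0
        h[1 : n // 2] = 2.0
    else:
        h[1 : (n + 1) // 2] = 2.0
    return np.fft.ifft(np.fft.fft(x) * h)

def envelope_spectrum(x, fs, f_max):
    """Spectrum of the mean-removed signal envelope, restricted to below f_max."""
    env = np.abs(analytic_signal(x))
    return fft_spectrum(env - env.mean(), fs, f_max)

# Synthetic test signal: a carrier near 1 kHz modulated at about 7.3 Hz
n = 4096
t = np.arange(n) / FS
f_mod, f_car = 3 * FS / n, 410 * FS / n     # bin-aligned to avoid leakage
x = (1.0 + 0.5 * np.cos(2 * np.pi * f_mod * t)) * np.cos(2 * np.pi * f_car * t)

f_fft, a_fft = fft_spectrum(x, FS, F_MAX)
f_env, a_env = envelope_spectrum(x, FS, F_MAX)
print(len(f_fft), f_env[np.argmax(a_env)])
```

With 4096 points at 10 kHz the frequency resolution is about 2.44 Hz, so the band below 300 Hz contains 123 spectral lines. In this synthetic case the modulation is invisible in the band-limited FFT spectrum but appears as the dominant line of the envelope spectrum.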

To compare the diagnostic effectiveness of 1D inputs, taking the radial runout classification task (as shown in Table 1) as an example, the average classification accuracies are presented as follows.

(1) Raw Time Domain: test accuracy of CNN is 77%, test accuracy of BiGRU is 30%;

(2) FFT Spectrum: test accuracy of CNN is 91.5%, test accuracy of BiGRU is 93.5%;

(3) Envelope Spectrum: test accuracy of CNN is 84%, test accuracy of BiGRU is 86%.

The training times of each model were approximately the same across the three preprocessing methods. The experimental results indicate that the CNN should be used when the raw time-domain signal is the input, whereas the BiGRU network should be used for frequency-domain inputs such as the FFT spectrum and envelope spectrum.

Preprocessing of 2D inputs

In this study, six widely used methods for converting 1D time series data into 2D image representations were employed to perform 2D preprocessing on the raw vibration signals. These methods include Continuous Wavelet Transform (CWT) time-frequency images, Short-Time Fourier Transform (STFT) time-frequency images, Markov Transition Field (MTF), Recurrence Plot (RP), FFT spectrum images, and envelope spectrum images. Based on the dominant frequency components related to wheel out-of-roundness, the time-frequency and spectral images were generated from the frequency range below 300 Hz to ensure relevant feature coverage. These 2D images were then used as inputs to a 2D-CNN for fault classification. The CNN architecture consisted of two basic convolutional modules containing 16 and 32 filters, respectively, each with a kernel size of 2 × 1.

Using the radial runout dataset presented in Table 1 as a case study, the test accuracies and preprocessing times are reported as follows.

(1) Continuous Wavelet Transform (CWT) Image: test accuracy is 93.5%, preprocessing time is 222 s;

(2) Short-Time Fourier Transform (STFT) Image: test accuracy is 88.5%, preprocessing time is 165 s;

(3) Markov Transition Field (MTF) Image: test accuracy is 57.5%, preprocessing time is 499 s;

(4) Recurrence Plot (RP): test accuracy is 85%, preprocessing time is 6058 s;

(5) FFT Spectrum Image: test accuracy is 93.5%, preprocessing time is 333 s;

(6) Envelope Spectrum Image: test accuracy is 82%, preprocessing time is 339 s.

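The training times were approximately the same for all inputs. The experimental results indicate that, for long time series, generating MTF and RP images is computationally expensive and inefficient. The CWT time-frequency image, which matched the highest test accuracy (93.5%) with a shorter preprocessing time than the FFT spectrum image (222 s versus 333 s), was therefore selected as the preferred input for the 2D-CNN model.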
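A likely contributor to the long preprocessing times of the RP and MTF images is that both map a length-N sequence to an N × N matrix before any resizing. The sketch below builds a simple thresholded recurrence plot without phase-space embedding; the threshold `eps`, the short sine test sequence, and the omission of embedding are simplifying assumptions, not the settings used in this study.

```python
import numpy as np

def recurrence_plot(x, eps):
    """Binary recurrence matrix: entry (i, j) is 1 when |x[i] - x[j]| <= eps."""
    dist = np.abs(x[:, None] - x[None, :])   # all pairwise distances, shape (N, N)
    return (dist <= eps).astype(np.uint8)

# Short hypothetical sequence (illustration only)
x = np.sin(np.linspace(0.0, 4.0 * np.pi, 256))
rp = recurrence_plot(x, eps=0.1)

# Number of matrix entries for one full 4096-point sample
full_size = 4096 ** 2
print(rp.shape, full_size)
```

For a 4096-point sample the matrix has about 16.8 million entries, compared with a time-frequency image whose size is set by the chosen number of scales and time steps.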

Three-channel feature fusion

Based on the preceding analyses, this study adopts a three-channel feature fusion strategy. Specifically, the raw time-domain signal is input into a 1D-CNN to extract low-level temporal features, the FFT spectrum is input into a BiGRU network to capture dependencies across frequency bands, and the CWT time-frequency image is input into a 2D-CNN to extract time-frequency features. The outputs from the fully connected layers (FCLs) of the three networks are flattened and concatenated to form a comprehensive fused feature representation. Taking the radial runout classification dataset (Table 1) as an example, the proposed fused model achieves an average recognition accuracy of 94.3%, which exceeds the classification performance of each individual network operating in isolation.

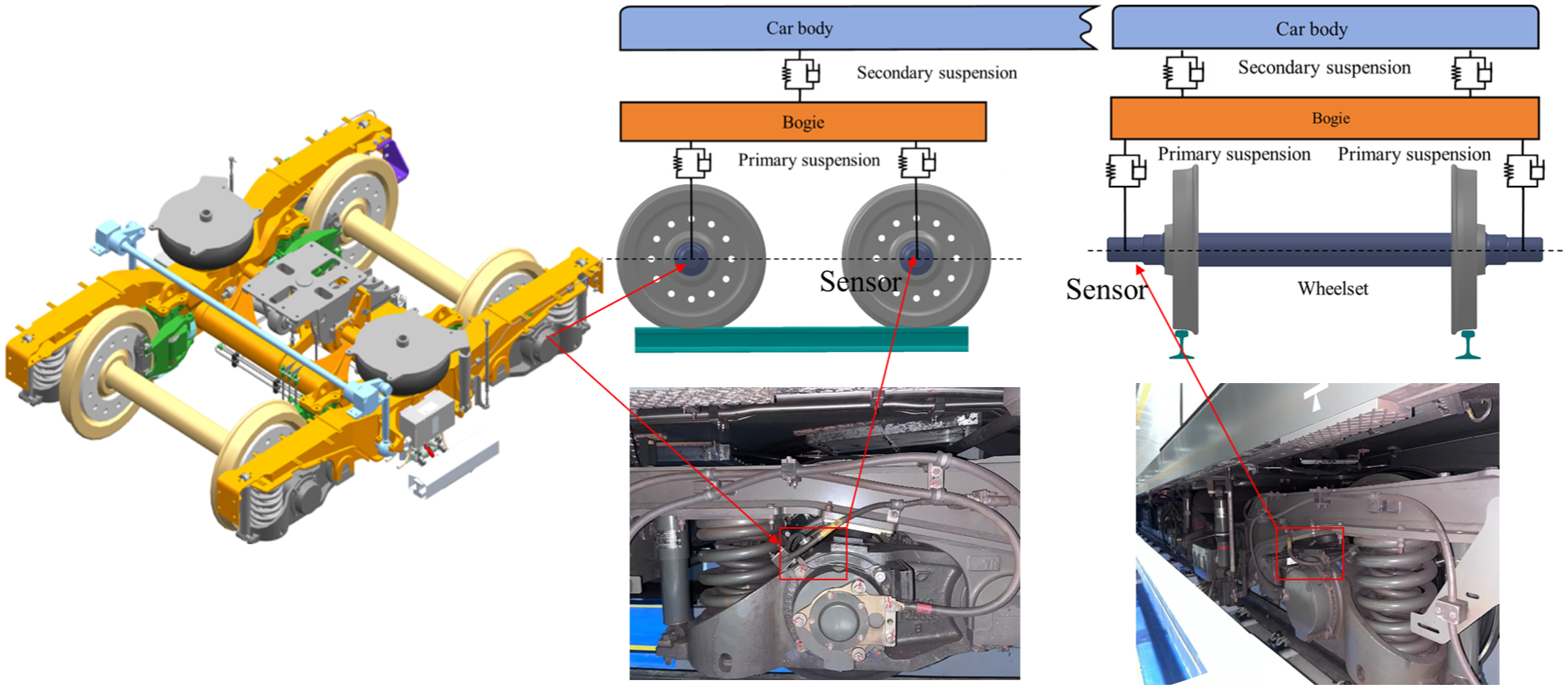

Convolutional and attention optimization

Considering that real-world operational data are characterized by a low signal-to-noise ratio, complex signal components, and weakly distinguishable time-frequency features, it is essential to first optimize the CNN backbone by increasing network depth. Inspired by the VGG architecture, this study deepens the CNN network without significantly increasing computational cost. A total of five convolutional modules were constructed. To further mitigate overfitting and reduce data volume, the model incorporates batch normalization layers, dropout layers, and global average pooling. Using the radial runout dataset from Table 1 with 1D time-domain signal inputs and 2D CWT time-frequency image inputs, trial calculations of the VGG network modules were performed, and multiple rounds of simplification and adjustment were conducted based on average accuracy. The number of convolution kernels, the number of convolutional layers, and other parameters were adjusted accordingly. The model configuration that achieved the highest accuracy is shown in Figure 2.

CNN depth optimization.

To further enhance classification accuracy, a multi-head self-attention mechanism was integrated into the back end of the three-channel feature fusion model. Specifically, four attention heads were employed, with 64 channels allocated for both the query and key vectors. The optimized network architecture is illustrated in Figure 3. Applying this improved model to the same radial runout dataset, the average recognition accuracy reached 97.62%. The testing accuracy and training time at each stage of the optimization process are as follows.

(1) Three-Channel Feature Fusion: 94.3%, training time is 158 s;

(2) Depth Enhancement of 1D-CNN & 2D-CNN: 96%, training time is 193 s;

(3) Addition of Self-Attention Mechanism: 97.3%, training time is 183 s;

(4) Addition of Multi-Head Self-Attention Module (2 heads): 97%, training time is 184 s;

(5) Addition of Multi-Head Self-Attention Module (4 heads): 97.62%, training time is 184 s;

(6) Addition of Multi-Head Self-Attention Module (8 heads): 96.5%, training time is 195 s.

Network model for wheel state recognition.

Metro wheel state recognition

Wheel state classification

The diagnostic model shown in Figure 3 was applied to the radial runout dataset described in Table 1, following the identification procedure outlined here. The resulting training accuracy and loss curves and the confusion matrix for the test set in the first trial are presented in Figure 4. The loss function reaches its minimum and stabilizes around the 20th training epoch, while the training accuracy attains 100% and remains consistent thereafter. The trends of the loss and accuracy curves indicate effective optimization and stable learning performance. Across five independent training trials, the training accuracy consistently reached 100%. The average accuracy on the test set was 97.62%.

Training curves and confusion matrix: (a) accuracy and loss curves and (b) confusion matrix of the test set.

Specifically, t-SNE was used for dimensionality reduction and visualization. Figure 5 shows the t-SNE visualizations of the model’s input, the outputs from the 2D-CNN, 1D-CNN, and BiGRU branches, as well as the final model output, together with the corresponding clustering quantitative metrics. The specific values of these metrics for each branch and the overall model are listed in Table 2. As shown in Figure 5 and Table 2, the proposed method enhances the intra-class compactness of samples belonging to the same class.

t-SNE visualization of model input and output: (a) input t-SNE of the overall model, (b) output t-SNE of 2DCNN branch, (c) output t-SNE of 1DCNN branch, (d) output t-SNE of BiGRU branch, (e) output t-SNE of the overall model, and (f) quantitative metrics of clustering.

t-SNE quantitative metrics of three branches and overall model.

Ablation and comparative experiments

To evaluate the feasibility and superiority of the proposed method, ablation experiments were conducted.

Four models were tested separately: the proposed model, the model without the 1D-CNN branch, the model without the BiGRU branch, and the model without the 2D-CNN branch. Each model was subjected to 10 diagnostic computations. Before each computation, the data samples were randomly shuffled to achieve cross-validation. A paired-sample t-test was conducted on the diagnostic accuracy results of the four models.

In addition to self-attention and multi-head self-attention mechanisms, another mainstream category comprises spatial attention and channel attention mechanisms. The Convolutional Block Attention Module (CBAM) integrates both spatial and channel attention; it was therefore used as a substitute for the multi-head self-attention mechanism in a comparative experiment. The input channel dimension for the CBAM was set to 512, with a reduction ratio of 16 for the squeeze operation.

Each configuration was evaluated over 10 independent runs, and the average classification accuracy and computation time for each model variant are summarized as follows.

(1) Proposed Model (1DCNN + BiGRU + 2DCNN + MH-Attention): accuracy 97.62%, training time 158 s;

(2) BiGRU + 2DCNN + Multi-Head Self-Attention: accuracy 96.25%, training time 122 s;

(3) 1DCNN + 2DCNN + Multi-Head Self-Attention: accuracy 95.95%, training time 121 s;

(4) 1DCNN + BiGRU + Multi-Head Self-Attention: accuracy 96.06%, training time 37 s.

(5) 1DCNN + BiGRU + 2DCNN + CBAM Module: accuracy 95.67%, training time 133 s.

The experimental results demonstrate that the proposed model achieved the highest test set accuracy among all configurations. The differences in average accuracy between the proposed model and the models without the 1D-CNN, BiGRU, and 2D-CNN branches were 1.37%, 1.67%, and 1.56%, respectively. The paired-sample t-tests against these three ablation models gave p-values of 0.0084, 0.145, and 0.163, respectively. As reported, only the first value falls below the 0.05 significance threshold, so the stated p-values support statistical significance for the removal of the 1D-CNN branch but not for the other two comparisons.
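For readers unfamiliar with the paired-sample t-test, the sketch below shows how the test statistic is formed from per-run accuracy differences. The two accuracy lists are hypothetical placeholders invented for illustration and are not the results of this study; the critical value 2.262 is the two-sided 5% point of the t distribution with 9 degrees of freedom, which applies to 10 paired runs.

```python
import math

def paired_t_statistic(a, b):
    """Return the paired t statistic and its degrees of freedom."""
    diffs = [x - y for x, y in zip(a, b)]
    n = len(diffs)
    mean = sum(diffs) / n
    var = sum((d - mean) ** 2 for d in diffs) / (n - 1)   # sample variance
    return mean / math.sqrt(var / n), n - 1

# HYPOTHETICAL per-run test accuracies in percent (placeholders, not measured data)
full_model = [97.5, 98.0, 97.0, 98.5, 97.5, 97.0, 98.0, 97.5, 97.0, 98.0]
ablated = [96.0, 96.5, 96.5, 97.0, 96.0, 95.5, 96.5, 96.5, 95.5, 96.5]

t_stat, dof = paired_t_statistic(full_model, ablated)

T_CRIT_DF9 = 2.262   # two-sided critical value at the 0.05 level for 9 degrees of freedom
significant = abs(t_stat) > T_CRIT_DF9
print(round(t_stat, 2), dof, significant)
```

Because the test works on paired differences, a small but consistent gap between two models can be significant, while a larger gap with high run-to-run variability may not be.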

When the multi-head self-attention mechanism was replaced with the CBAM module, the computation speed improved by 12.6%; however, the average classification accuracy dropped by approximately 2%.

Using the same evaluation protocol, the model was also applied to the wheel polygonal order dataset described in Table 1. The observed trends were consistent with those for radial runout classification. It is also noted that the average diagnostic accuracy for wheel polygonalization is generally 1%–2% lower than that for radial runout diagnosis.

Integrated wheel state recognition based on MTL

Joint representation of geometric wheel state indicators

In the dataset described in Table 1, each sample assigned to a specific level of radial runout or polygonal order may simultaneously contain multiple levels of the other indicator. Consequently, most existing studies and diagnostic models focus on only one of the two indicators, which not only reduces diagnostic efficiency but also limits the applicability of such approaches in real-world engineering scenarios. For example, the method based on the Local Reasoning Constraint Network proposed in Liu et al. 26 mainly focuses on diagnosing tread surface defect types under small-sample conditions and does not address multi-indicator diagnosis.

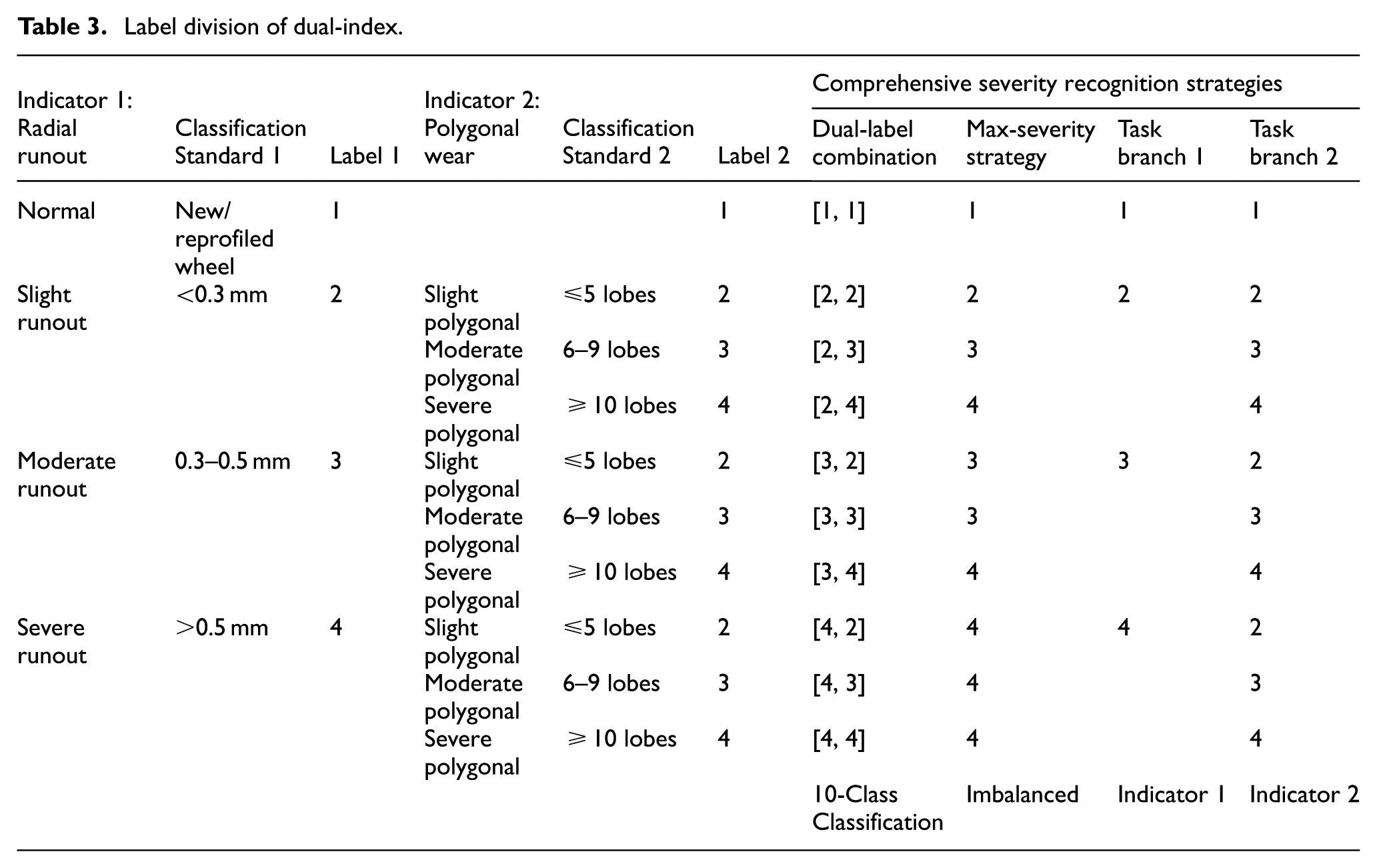

In response to this challenge, four labeling approaches for these two indicators are explored and summarized in Table 3: single-label, multi-label composite, maximum-value selection, and task-branching. Following the commonly used multi-label learning paradigm for compound fault diagnosis 38 would require separate samples for each single fault type as well as for composite faults. Such samples would typically need to be synthesized through theoretical modeling, which limits practicality. Alternatively, under a “maximum-severity selection” strategy, where the more severe indicator is used as the sole classification label, the dataset becomes severely imbalanced, and a high degree of feature redundancy persists across samples. Experimental results using this approach with the proposed model did not yield satisfactory accuracy.

Label division of dual-index.

In contrast, a promising solution lies in the deep learning paradigm of MTL, 39 which enables simultaneous training and inference across multiple diagnostic tasks. Unlike multi-label or single-task learning, MTL defines its total loss function as a weighted sum of the losses from each individual task. This property makes MTL particularly suitable for the simultaneous recognition of radial runout severity and polygonal order in metro wheel state monitoring.

MTL model

MTL can be implemented using either hard parameter sharing or soft parameter sharing strategies. In hard parameter sharing, a shared backbone network is constructed to extract generalized low-level features, while task-specific branches are added for each individual task. In contrast, soft parameter sharing 40 maintains separate model architectures for each task but allows selective parameter sharing between tasks.

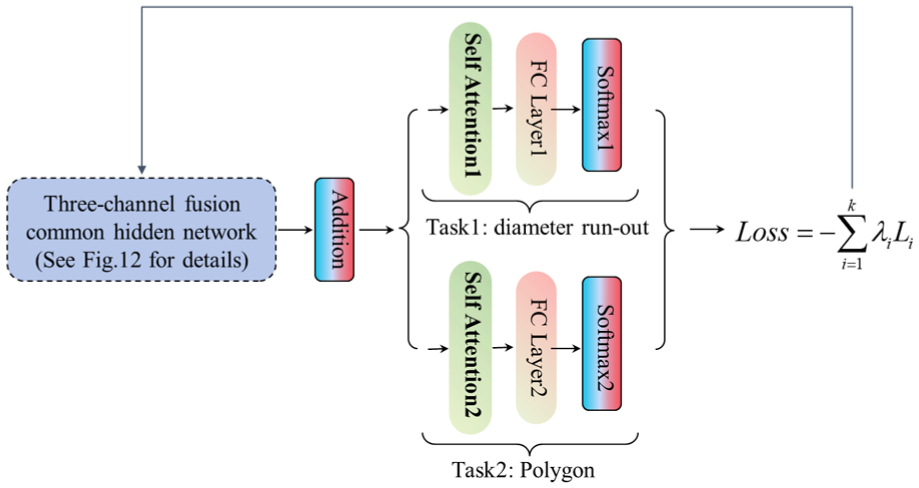

In this study, a hard parameter sharing approach was adopted for the MTL framework. Specifically, the three-channel feature fusion network with an integrated attention mechanism, as developed in this work, is employed as the shared backbone network. On top of this shared structure, two task-specific branches are constructed. The model is shown in Figure 6.

The multi-task learning model in this paper.

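The loss function for the MTL network proposed in this study adopts a weighted combination of cross-entropy losses from the two tasks. If the individual loss function of the $i$-th task is defined as $L_i$, the total loss is

$$L_{\mathrm{total}} = \sum_{i=1}^{2} w_i L_i$$

Where $w_i$ is the weight assigned to the loss of the $i$-th task.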
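The weighted combination of two cross-entropy losses on top of a shared representation can be sketched in NumPy as follows. The shared features, the two linear heads, the labels, and the equal weights are hypothetical placeholders; only the use of two tasks with four classes each and a weighted sum of cross-entropy losses follows the text.

```python
import numpy as np

def softmax(logits):
    shifted = logits - logits.max(axis=1, keepdims=True)   # numerical stability
    e = np.exp(shifted)
    return e / e.sum(axis=1, keepdims=True)

def cross_entropy(logits, labels):
    """Mean cross-entropy of integer class labels given raw logits."""
    probs = softmax(logits)
    picked = probs[np.arange(len(labels)), labels]
    return float(-np.mean(np.log(picked)))

def multitask_loss(logits_1, labels_1, logits_2, labels_2, w1, w2):
    """Weighted sum of the two task losses."""
    return w1 * cross_entropy(logits_1, labels_1) + w2 * cross_entropy(logits_2, labels_2)

# Hypothetical shared-backbone features and two task-specific linear heads
rng = np.random.default_rng(0)
shared = rng.normal(size=(8, 16))      # 8 samples, 16 fused features
head_runout = rng.normal(size=(16, 4))   # task 1: radial runout level (4 classes)
head_order = rng.normal(size=(16, 4))    # task 2: polygonal order level (4 classes)
y_runout = rng.integers(0, 4, size=8)
y_order = rng.integers(0, 4, size=8)

loss = multitask_loss(shared @ head_runout, y_runout,
                      shared @ head_order, y_order, w1=0.5, w2=0.5)
print(loss)
```

In a hard parameter sharing setup, the gradient of this single scalar flows through both heads into the shared backbone, so the weights control how strongly each task shapes the shared features.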

Integrated wheel state recognition

Adaptive loss weighting methods for MTL models have also been studied. However, since this paper involves only two tasks and the optimization range is relatively small, we adopted a manual weighting approach. Manual weighting with a step size of 0.1 can meet practical requirements. To further ensure accuracy, the step size was refined to 0.05 when approaching the average weight. The parameters

Comparison of the weighted loss curve.

Avg-accuracy of weighted loss.

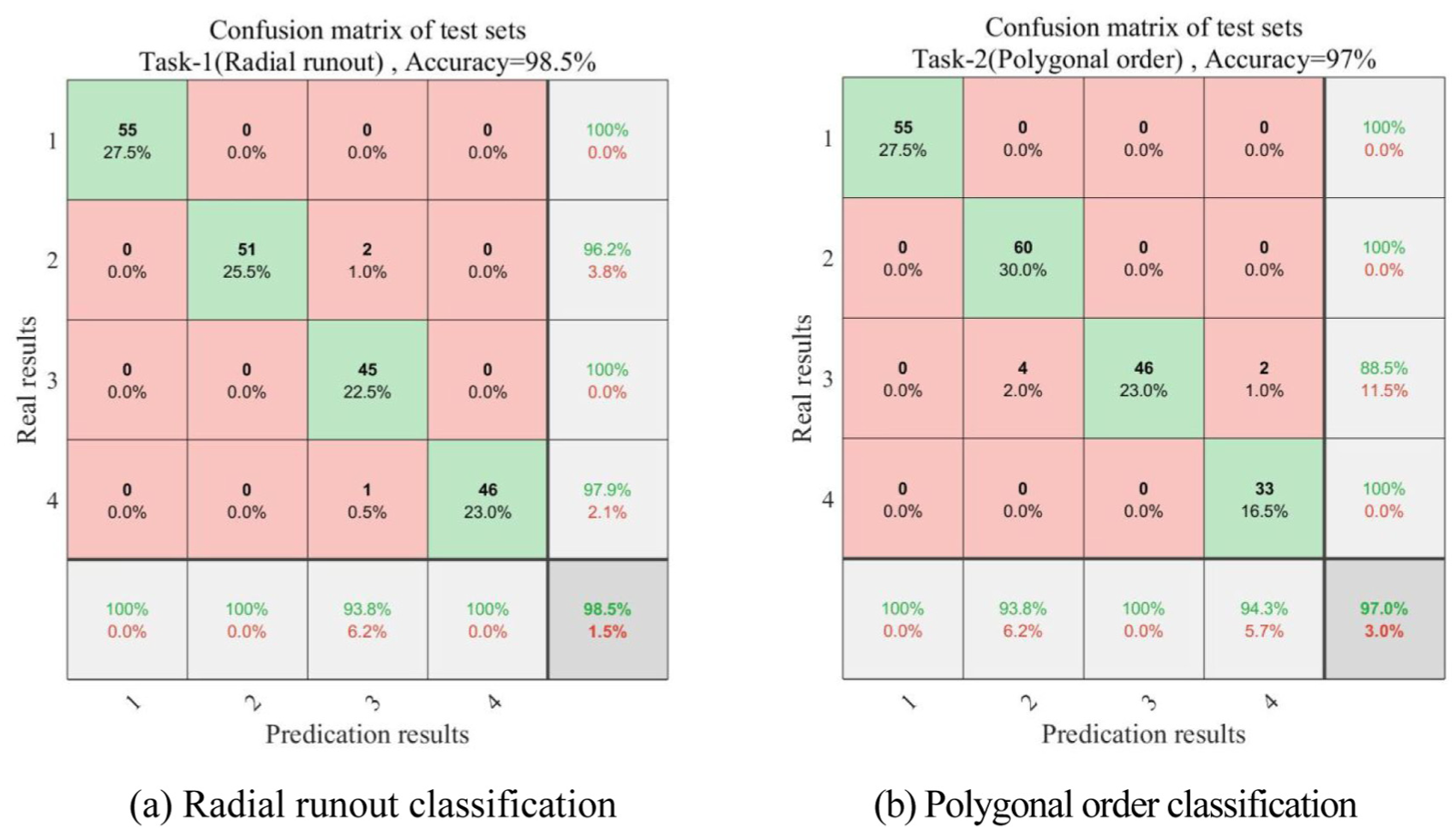

Based on the MTL model, the dual-task labels corresponding to the samples in Table 3 were evaluated. The resulting confusion matrices for Task 1 and Task 2 are shown in Figure 8(a) and (b), respectively. The multi-task framework shows no decline in accuracy.

Confusion matrix of the multi-task learning: (a) radial runout classification and (b) polygonal order classification.
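For readers reproducing this kind of evaluation, the following sketch shows how a confusion matrix and per-task accuracy can be computed, with the average accuracy taken as the mean of the two task accuracies. The label lists and class counts are toy values invented for illustration, and the averaging rule is an assumption about how the reported average is formed.

```python
def confusion_matrix(y_true, y_pred, n_classes):
    """Rows index the true class, columns the predicted class."""
    matrix = [[0] * n_classes for _ in range(n_classes)]
    for t, p in zip(y_true, y_pred):
        matrix[t][p] += 1
    return matrix

def accuracy(matrix):
    """Fraction of samples on the diagonal of a confusion matrix."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total

# Toy labels only; these are not the study's data.
runout_true = [0, 0, 1, 1, 2, 2]
runout_pred = [0, 0, 1, 2, 2, 2]
order_true = [0, 1, 2, 3, 3, 1]
order_pred = [0, 1, 2, 3, 3, 1]

cm_runout = confusion_matrix(runout_true, runout_pred, 3)
cm_order = confusion_matrix(order_true, order_pred, 4)
avg_accuracy = (accuracy(cm_runout) + accuracy(cm_order)) / 2
print(cm_runout, cm_order, avg_accuracy)
```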

Discussion and outlook

In integrating the proposed model with engineering applications and operation–maintenance management, we have identified several aspects that require further investigation and improvement, as summarized below:

(1) In future research, in addition to developing new fault diagnosis models and paradigms, efforts will be devoted to transferring diagnosis across different operating conditions—for example, diagnosing wheel defects under various running speeds on test lines and under complex service conditions on main lines. These studies aim to further enhance the practicality and robustness of wheel condition identification for metro vehicles.

(2) The present study has not conducted in-depth performance testing and optimization with respect to the computational power and memory constraints of actual on-board embedded devices, which represents one of the major limitations of this work. In practical engineering deployment, extensive further research on model simplification is needed. To improve the engineering applicability of the proposed model, future work should consider model lightweighting, pruning, and knowledge distillation techniques.

(3) Within the multi-task learning framework, further studies are needed on adaptive loss weighting strategies based on task difficulty to replace the current manual weighting approach, thereby improving model convergence efficiency and overall performance.

(4) According to statistics from the local operator of the metro line analyzed in this study, both during unscheduled inspections triggered by increased noise and during periodic inspections, manual re-measurement of mechanical dimensions is conducted to determine the degree of wheel polygonization or damage. Within one wheelset re-profiling cycle, this typically results in 4–5 train stoppages for manual inspection, counting both unscheduled and periodic inspections. If the proposed wheel state identification method were deployed in the vehicle condition monitoring and diagnostic system of this line, maintenance could be scheduled only when the degree of wheel polygonization reaches the limit. This would eliminate these intermediate operations, improve train utilization, reduce the frequency of maintenance, and extend the interval between periodic inspections.
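As one illustration of the adaptive loss weighting mentioned in item (3), the sketch below implements Dynamic Weight Averaging, a published scheme that raises the weight of a task whose loss is decreasing more slowly. It is named here only as a possible candidate, not as the method the authors intend to use. The loss values and the temperature of 2.0 are placeholders.

```python
import math

def dwa_weights(prev_losses, prev_prev_losses, temperature=2.0):
    """Dynamic Weight Averaging: slower-improving tasks receive larger weights.

    prev_losses and prev_prev_losses hold each task's average loss from the
    last two epochs. The returned weights sum to the number of tasks.
    """
    ratios = [a / b for a, b in zip(prev_losses, prev_prev_losses)]
    exps = [math.exp(r / temperature) for r in ratios]
    n_tasks = len(ratios)
    return [n_tasks * e / sum(exps) for e in exps]

# Placeholder epoch losses: task 1 improved quickly, task 2 slowly.
weights = dwa_weights(prev_losses=[0.5, 0.9], prev_prev_losses=[1.0, 1.0])
print(weights)
```

The loss ratio is only a proxy for task difficulty, so any such scheme would need to be validated against the manually tuned weights before replacing them.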

Conclusion

To address the challenge of periodic harmonic irregularities that develop on metro vehicle wheels after extended operation, and considering the low signal-to-noise ratio and strong nonlinearity of the vibration signals collected during operation, this study proposes a novel wheel state recognition model. The model integrates 1D convolution, 2D convolution, BiGRU, and a self-attention mechanism, eliminating the need for manual signal processing and handcrafted feature extraction. Additionally, an MTL framework is developed to simultaneously assess multiple wheel state indicators. The main conclusions are as follows:

(1) In the proposed three-channel feature fusion framework, the raw time-domain signal is input to a 1D-CNN to extract temporal features; the FFT spectrum is fed into a BiGRU to capture inter-frequency dependencies; and the CWT time–frequency image is processed by a 2D-CNN to extract time–frequency characteristics. The fusion of these three feature representations results in a high classification accuracy for wheel state recognition.

(2) Inspired by the VGG architecture, the depth of both the 1D-CNN and 2D-CNN branches was increased, and a multi-head self-attention mechanism was embedded after feature fusion. This optimization significantly improved model performance, achieving 97.62% accuracy in classifying the severity of radial runout, and 96.5% accuracy in identifying the range of polygonal orders. Compared to the integration of a CBAM attention module, the proposed attention-enhanced fusion model demonstrated superior accuracy for the target application.

(3) An MTL model was further developed for integrated wheel state recognition. The previously proposed fusion model was adopted as the shared backbone network, and two task-specific branches were constructed to independently classify radial runout severity and polygonal order. By applying a weighted loss strategy, the model was able to simultaneously diagnose both indicators from the same input sample, enabling a direct assessment of the overall severity of wheel polygonization. The average classification accuracy reached 97.25%, comparable to or exceeding that of single-task models. Moreover, task-related knowledge sharing in the MTL framework contributed to performance improvements in individual tasks, making the approach well-suited to practical engineering applications.

Footnotes

Handling Editor: Chenhui Liang

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: (1) Supported by the National Natural Science Foundation of China (Grant No. 52065030); Yunnan Major Science and Technology Program (No. 202102AC080002). (2) Supported by the National Key Research and Development Program of China (Grant No. 2018YFB1306103); Yunnan Major Science and Technology Program (No. 202002AC080001). (3) Supported by the National Natural Science Foundation of China (Grant No. 62263017).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.