Abstract

For the multi-variety, small-batch production mode that has become the main production mode in aerospace manufacturing, this paper proposes a quality prediction method for the rocket body structure manufacturing process based on an improved extreme learning machine (ELM). Because the input layer weights and hidden layer biases of ELM are selected randomly and are therefore not guaranteed to be optimal, particle swarm optimization (PSO) is combined with the gravitational search algorithm (GSA) to form a hybrid algorithm that optimizes these random parameters. Taking a weld in a rocket body structure as an example, a weld width prediction model based on the improved ELM is established and verified. The results show that, compared with the prediction models based on the standard ELM and on PSO-ELM, the model based on the improved ELM achieves higher prediction accuracy; its prediction deviation does not exceed 2.5%, so it can effectively predict the weld width.

Introduction

The aerospace manufacturing industry is an important part and application field of intelligent manufacturing, and an important embodiment of a country’s comprehensive national strength. A spacecraft is mainly composed of the rocket body structure, the power plant system, and the control system. Among them, the rocket body structure is the most critical structural component. It is typically large, lightweight, thin-walled, and complex. 1 It is an important determinant of the spacecraft’s carrying capacity and deployment capability. Manufacturing the rocket body structure is complex and involves many steps, mainly across four manufacturing processes: machining, welding, riveting, and assembly.

The rocket body structure is mainly composed of propellant tank, riveting cabin, fairing cap, and other parts. Among them, the propellant tank is used as a pressure vessel to store propellant. It is the largest structural component in the rocket body structure and the main bearing structure of the spacecraft. The riveting cabin is a cylindrical shell riveted by skin, truss, and partition frame, which is used to install equipment, sensors, instrument brackets, etc.

In recent years, with the development of the aerospace manufacturing industry, the multi-variety and small-batch production mode has gradually become the main production mode. “Multi-variety” mainly refers to the different production specifications and processes among similar products; “small-batch” mainly refers to the small output of each product, sometimes only dozens of units or even a single piece. 2 These changes place higher demands on quality prediction for the rocket body structure production process.

The basic principle of quality prediction is to establish a model that takes the key quality-influencing factors of the manufacturing process as input and the quality characteristic index as output, so that possible quality problems can be identified and corrected early, reducing the waste of production resources and raising the proportion of high-quality products. Zheng 3 proposed a welding distortion prediction method for large-scale thin-walled structures to handle geometric nonlinearity and non-uniform changes in material parameters during welding. The welds are classified into three types according to the structural characteristics of the propellant tank, and a three-dimensional stress mapping method is applied to predict the welding distortion of the whole tank. The numerical results are compared with experimental data to verify the effectiveness of the method. In recent years, research on quality prediction by domestic and foreign scholars has mainly focused on machine learning and intelligent optimization algorithms, and has made considerable progress.

Machine learning

Machine learning (ML) allows computers to learn continuously from known data, capture the intrinsic relationship between input data and output data, and then judge and predict unknown data. At present, commonly used machine learning methods include the support vector machine, the BP neural network, and the extreme learning machine.

Tao and Yuan 4 proposed a prediction model based on a DE-BP neural network, which combines principal component analysis with the differential evolution algorithm to improve the search accuracy and convergence speed of the BP neural network. Dong and Xu 5 proposed a product quality prediction model based on the dragonfly algorithm and the least squares support vector machine. The model uses the dragonfly algorithm to optimize the parameters of the support vector machine, and the feasibility of the method is verified by an example. Bai et al. 6 compared a prediction model based on deep learning with one based on shallow learning. The results show that the deep learning model predicts better.

In addition, Chen et al. 7 combined the random forest algorithm with the long short-term memory (LSTM) network to establish a combined prediction model, and compared it with a single model. The results show that the combined model predicts better. Xue et al. 8 improved the BP neural network by adding fuzzy parameters to the activation function. The results show that the improved BP neural network converges faster. Niu et al. 9 proposed a BP neural network prediction model based on variance and covariance. The model optimizes the initial weights and thresholds of the neural network through mind evolutionary computation, and then uses the bat algorithm to optimize the key parameters. The experimental results show that the improved model has higher prediction accuracy.

Furthermore, Mohammadi et al. 10 used extreme learning machine to predict the power density of wind farms and compared it with the artificial neural network model. The results show that the weight obtained by extreme learning machine has the minimum norm when the data fluctuates greatly. Prasad et al. 11 proposed a soil moisture prediction model based on improved extreme learning machine. The model uses data-driven methods to describe complex soil environments. The experimental results show that the method has high prediction accuracy. Aiming at the problem of network intrusion detection, Ahmad et al. 12 compared the three methods of extreme learning machine, support vector machine, and random forest. The results show that the detection accuracy of extreme learning machine is higher under the condition of complete data sampling.

However, many parameters in machine learning are selected randomly, and such parameters cannot be guaranteed to be optimal. For example, the randomly selected input layer weights and hidden layer biases of the extreme learning machine are not necessarily optimal, which can leave the network with non-optimal or unnecessary weights.

Intelligent optimization algorithm

The intelligent optimization algorithm originates from the idea of the survival of the fittest in nature. In simple terms, the intelligent optimization algorithm is an iterative process that randomly generates the initial solution, optimizes the feasible solution, measures the target solution, and finally finds the optimal solution. At present, the commonly used intelligent optimization algorithms mainly include ant colony algorithm, artificial bee colony algorithm, particle swarm optimization algorithm, gravitational search algorithm, etc.

Juang and Yeh 13 combined ant colony algorithm with artificial neural network to solve the problem that ant colony algorithm is easy to fall into local optimal solution. The experimental results show that the hybrid algorithm has fast convergence speed and strong global search ability. Gurcan and Dogan 14 proposed an artificial bee colony algorithm based on competitive adaptive search strategy. The algorithm determines the local search equation through the co-evolution framework during optimization. The experimental results show that the method can effectively improve the convergence speed. Gao et al. 15 proposed an improved artificial bee colony algorithm. The algorithm constructs three different strategy candidate libraries and two different neighborhood mechanisms. The experimental results show that the method can better obtain high-quality candidate individuals.

In addition, Sun 16 studied the optimization performance of random parameters in particle swarm optimization algorithm for high-dimensional optimization problems. The experimental results show that the more components of the same random vector, the better the optimization performance of the algorithm. Yang et al. 17 proposed a multi-objective particle swarm optimization algorithm based on fusion multi-strategy. The algorithm introduces the decomposition idea and multi-point mutation strategy. The experimental results show that the method enriches the diversity of the optimal solution set and effectively avoids the premature problem of the algorithm.

Furthermore, Shaw et al. 18 proposed a gravitational search algorithm based on opposition-based learning. The algorithm combines the opposition-based learning mechanism with the gravitational search algorithm to expand the search range of the population during evolution. The experimental results show that the method can avoid falling into local optima to a certain extent, but that it reduces the convergence speed in the later stage of population evolution. Li et al. 19 proposed an improved gravitational search algorithm, which combines a chaotic mechanism with the gravitational search algorithm and uses the randomness and ergodicity of chaotic motion to enhance the global search ability.

Although these intelligent optimization algorithms have been widely used in many optimization fields, they still have shortcomings. For example, particle updates in particle swarm optimization are strongly influenced by the current optimal solution, which can cause premature convergence; the gravitational search algorithm converges slowly, has low search efficiency, and tends to fall into local optimal solutions in the early stage of operation.

Therefore, aiming at the non-optimal problems caused by random selection of some parameters in machine learning, it is necessary to introduce appropriate optimization algorithms into machine learning to optimize its random parameters, so as to improve computational efficiency, enhance the accuracy of the algorithm, reduce resource consumption, and make the algorithm better adapt to various application scenarios.

A prediction method based on improved extreme learning machine

Extreme learning machine (ELM) is one of the methods of machine learning. Aiming at the non-optimal problems caused by random selection of input layer weights and hidden layer bias of the extreme learning machine, this paper proposes a prediction model based on the improved extreme learning machine. The model combines particle swarm optimization with gravitational search algorithm to form a hybrid algorithm, and then optimizes the input layer weight and hidden layer bias of extreme learning machine.

Extreme learning machine

Extreme learning machine is a type of single-hidden-layer feedforward neural network with the advantages of fast learning speed, short training time, and good generalization performance. 20 It overcomes shortcomings of traditional neural networks such as long learning time and a tendency to fall into local minima. The network structure of ELM is shown in Figure 1. The input layer weights and hidden layer biases are randomly generated during training, and the output layer weights are then calculated from the hidden layer output matrix. 21

Figure 1. ELM model.

X represents the input layer, Y represents the output layer, as shown in Formula (1) and Formula (2):

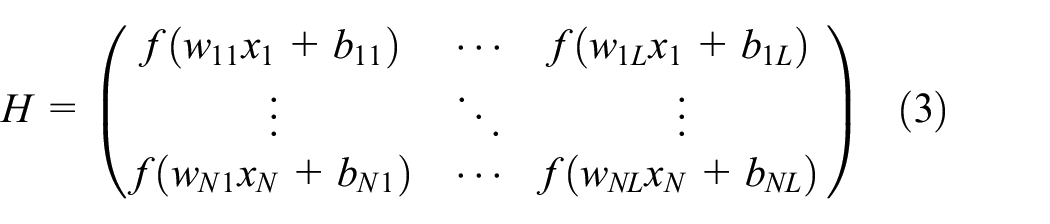

H represents the hidden layer, as shown in Formula (3):

Where f(wx + b) represents the activation function, w represents the weight of the input layer, and b represents the bias of neurons in the hidden layer.

β represents the weight of the output layer, as shown in Formula (4):

Where βij represents the weight between the i-th hidden layer neuron and the j-th output layer neuron.
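Formulas (3) and (4) are not reproduced in this text. As a hedged reconstruction, assuming K training samples, L hidden neurons, and M output neurons (the dimensions used for Formula (19)), the hidden layer output matrix and the output weight matrix would conventionally take the form below. The row and column layout is an assumption based on the standard ELM formulation and is not taken from the original formulas.

```latex
H =
\begin{bmatrix}
f(w_1 \cdot x_1 + b_1) & \cdots & f(w_L \cdot x_1 + b_L) \\
\vdots & \ddots & \vdots \\
f(w_1 \cdot x_K + b_1) & \cdots & f(w_L \cdot x_K + b_L)
\end{bmatrix}_{K \times L},
\qquad
\beta =
\begin{bmatrix}
\beta_{11} & \cdots & \beta_{1M} \\
\vdots & \ddots & \vdots \\
\beta_{L1} & \cdots & \beta_{LM}
\end{bmatrix}_{L \times M}
```

In this layout, row k of H holds the hidden layer response to sample x_k, and entry β_ij connects the i-th hidden neuron to the j-th output neuron, matching the definition above.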

However, the input layer weight and hidden layer bias of ELM are randomly selected and cannot be guaranteed to be optimal. Therefore, it is necessary to introduce appropriate optimization algorithms into ELM to optimize its random parameters.

Particle swarm-gravitational search hybrid algorithm

Aiming at the non-optimal problems caused by random selection of input layer weights and hidden layer bias of ELM, this paper uses a particle swarm-gravitational search hybrid algorithm to select the random parameters of ELM.

Particle swarm optimization

Particle swarm optimization (PSO) is an intelligent optimization algorithm that simulates the foraging behavior of bird flocks in nature. Particles in PSO have neither mass nor volume, and each particle has both self-cognition ability and social cognition ability. The process of PSO is shown in Figure 2. 22

Figure 2. PSO process.

In a D-dimensional search space, there is a community composed of N particles. The particle position and velocity of the community are shown in Formula (5) and Formula (6):

The position and velocity of the i-th particle in the community can be expressed by Formula (7) and Formula (8):

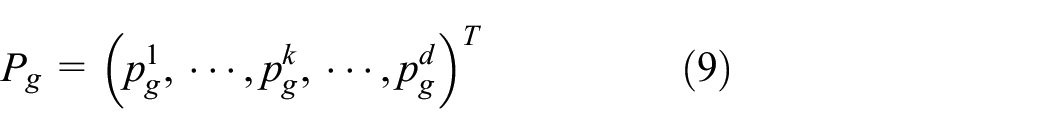

The best position of population history and the best position of individual history of particle i can be expressed by Formula (9) and Formula (10):

Gravitational search algorithm

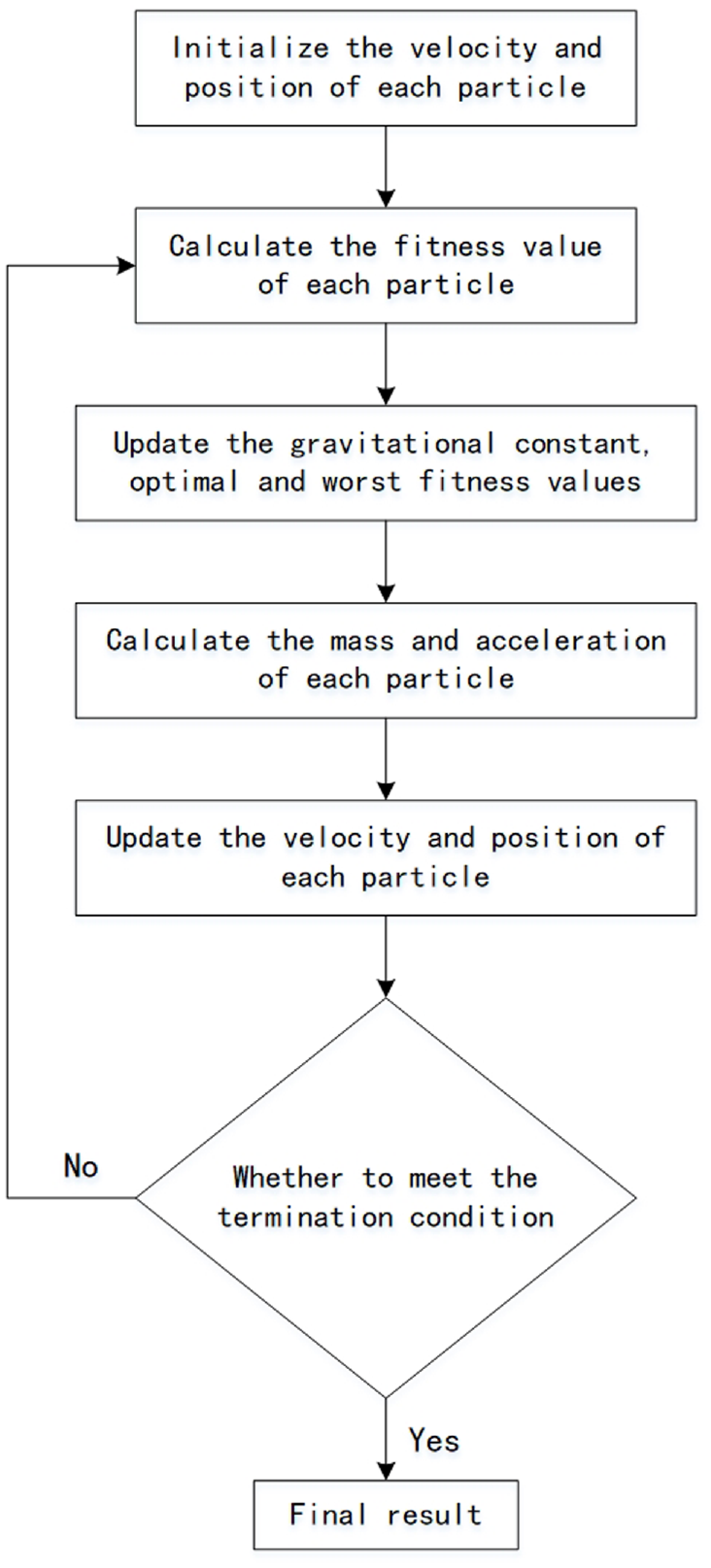

Gravitational search algorithm (GSA) is an intelligent optimization algorithm based on the law of universal gravitation. 23 It has the advantages of high computational efficiency, less parameter adjustment, and strong global convergence. Each particle in GSA is given an inertial mass. Under the action of gravity, particles with low inertial mass always move toward particles with high inertial mass. The motion process of the particles is the solution process of the optimization problem, and the algorithm flow is shown in Figure 3. 24

Figure 3. GSA process.

In a D-dimensional search space, there is a system composed of N particles. The particle position of the system is shown in Formula (11):

The position of the i-th particle in the system can be expressed by Formula (12):

Where

At time t, the force exerted by particle j on particle i at dimension k can be expressed by Formula (13):

Where M_pi(t) is the passive gravitational mass of particle i, M_aj(t) is the active gravitational mass of particle j, ε is a small constant, R_ij is the Euclidean distance between particle i and particle j, and G(t) is the gravitational constant. R_ij and G(t) can be expressed by Formula (14) and Formula (15):

Where G0 is the initial gravitational constant, α is the attenuation coefficient, iter is the current number of iterations, and maxiter is the maximum number of iterations.
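Formulas (13) to (15) are not reproduced in this text. The following minimal sketch assumes the forms usually given for GSA: an exponentially decaying G(t) = G0 · exp(−α · iter / maxiter), a Euclidean distance R_ij, and a pairwise force proportional to G(t) · M_pi · M_aj / (R_ij + ε). The function names and the default `eps` are illustrative and do not come from the paper.

```python
import math


def gravitational_constant(g0, alpha, iteration, max_iter):
    """Assumed Formula (15): G(t) = G0 * exp(-alpha * iter / maxiter)."""
    return g0 * math.exp(-alpha * iteration / max_iter)


def euclidean_distance(x_i, x_j):
    """Assumed Formula (14): R_ij, the Euclidean distance between two particles."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x_i, x_j)))


def pair_force(g, m_pi, m_aj, x_i, x_j, eps=1e-12):
    """Assumed Formula (13): force of particle j on particle i, per dimension."""
    r_ij = euclidean_distance(x_i, x_j)
    scale = g * m_pi * m_aj / (r_ij + eps)  # eps avoids division by zero
    return [scale * (b - a) for a, b in zip(x_i, x_j)]
```

Under this assumed decay, the gravitational constant shrinks as the iterations proceed, so early iterations favor exploration and later ones favor fine adjustment.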

At time t + 1, the velocity of particle i in the k-th dimension can be updated by Formula (16):

Where r_i is a random number in (0, 1).

PSO-GSA hybrid algorithm

PSO searches for the optimal solution through the memory of the particle’s own position and the sharing of the group position. Although PSO has high convergence speed and search efficiency, in the early stage of the algorithm, the particles are easily affected by the current optimal solution and fall into the local optimal solution, resulting in insufficient search accuracy.

GSA searches for the optimal solution through the continuous movement of particles under the action of gravity. Although GSA has strong information interaction and global search ability, it has slow convergence speed and low search efficiency, and it is easy to fall into local optimal solution in the early stage of the algorithm.

After the combination of PSO and GSA, the simulated gravitational mechanism of GSA helps to guide the particles in PSO to move to the global optimal solution and jump out of the local optimal solution.

Therefore, this paper combines the two algorithms to form a particle swarm-gravitational search hybrid algorithm, which not only combines the advantages of the two, but also makes up for each other’s shortcomings, so as to have better optimization ability.

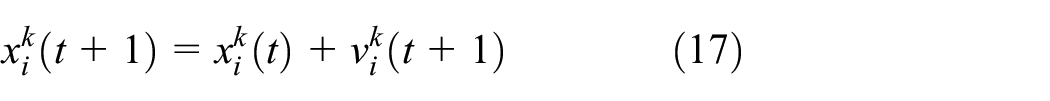

At time t + 1, the position and velocity of particle i in the k-th dimension can be updated by Formula (17) and Formula (18):
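Formulas (17) and (18) are not reproduced in this text. One commonly published form of the PSO-GSA update, given here as an assumption rather than as the authors' exact expressions, replaces the individual-best term of PSO with the GSA acceleration a_i^k(t). The symbols w, c_1', c_2' (inertia weight and acceleration coefficients), r_1, r_2 (random numbers in (0, 1)), and g_best (best position found so far) are notation chosen for this sketch.

```latex
\begin{aligned}
x_i^k(t+1) &= x_i^k(t) + v_i^k(t+1) \\
v_i^k(t+1) &= w\,v_i^k(t) + c_1'\,r_1\,a_i^k(t) + c_2'\,r_2\,\bigl(g_{best}^k - x_i^k(t)\bigr)
\end{aligned}
```

In this form the acceleration term carries the gravitational interaction among all particles, while the last term keeps the social pull of PSO toward the best solution found so far.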

Improved ELM

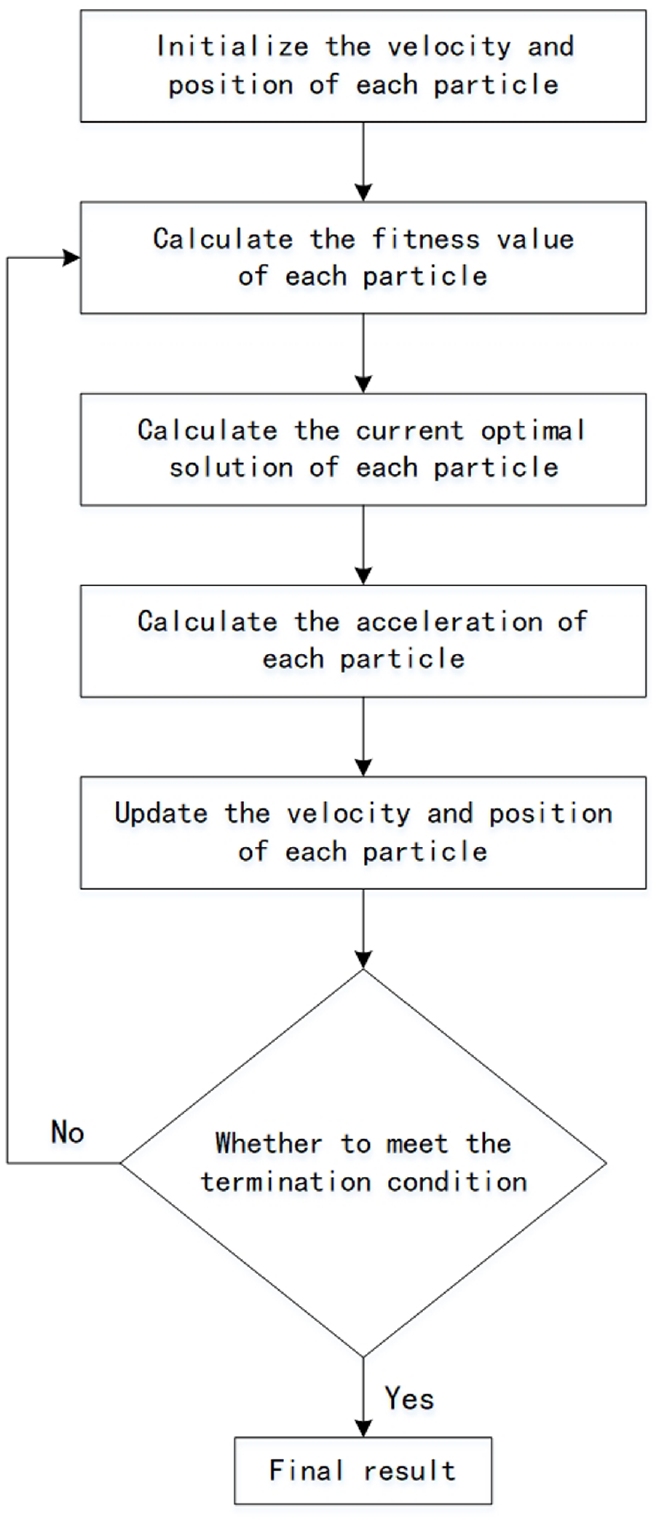

The improved ELM optimizes the input layer weight and hidden layer bias of ELM through the particle swarm-gravitational search hybrid algorithm. Therefore, the individuals in the search space are composed of a set of input layer weights and hidden layer bias. When the optimal parameters are found, the output layer weight of the neural network can be calculated by the hidden layer output matrix. The process of improved ELM is shown in Figure 4.

Figure 4. Improved ELM process.

The calculation steps of improved ELM are as follows:

Step 1: The initial population is randomly generated, where each particle is composed of the parameters shown in Formula (18), and is randomly initialized in the range of [−1, 1].

Step 2: Calculate the output layer weight of each particle by Formula (20), and use the root mean square error E_RMSE of the neural network output as the fitness function to calculate the fitness value of each particle.

According to ELM, for a set of K different sequence samples {(Xj,Yj)|j = 1, 2, …, K}, if the number of neurons in the input layer is N, the number of neurons in the hidden layer is L, and the number of neurons in the output layer is M, then the output layer Y can be expressed by Formula (19):

From this, the least-squares solution of β can be obtained, as shown in Formula (20):

Where H+ represents the Moore-Penrose generalized inverse matrix of H.
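The relationship Y = Hβ and its least-squares solution β = H+Y can be sketched in a few lines of NumPy. This is a minimal illustration, assuming a Sigmoid activation (as used in the case study) and samples stored row-wise; the function names are hypothetical and are not the authors' implementation.

```python
import numpy as np


def sigmoid(z):
    """Sigmoid activation used for the hidden layer."""
    return 1.0 / (1.0 + np.exp(-z))


def hidden_output(X, W, b):
    """Hidden layer output matrix H; X is (K, N), W is (N, L), b is (L,)."""
    return sigmoid(X @ W + b)


def fit_output_weights(X, Y, W, b):
    """Least-squares output weights: beta = pinv(H) @ Y, shape (L, M)."""
    H = hidden_output(X, W, b)
    return np.linalg.pinv(H) @ Y


def rmse(Y_true, Y_pred):
    """Root mean square error, used here as the fitness value of one particle."""
    return float(np.sqrt(np.mean((Y_true - Y_pred) ** 2)))
```

Given one particle's W and b, the fitness in Step 2 would then be `rmse(Y, hidden_output(X, W, b) @ fit_output_weights(X, Y, W, b))`.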

Step 3: Calculate the current optimal solution.

According to PSO, at time t + 1, the position and velocity of particle i in the k-th dimension can be updated by Formula (21) and Formula (22):

Where w is the inertia weight coefficient, c1 and c2 are the acceleration coefficients, and r1 and r2 are random numbers in (0, 1).
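Formulas (21) and (22) are not reproduced in this text. The sketch below assumes the standard PSO update, in which the new velocity combines the inertia term, the pull toward each particle's own best position, and the pull toward the population's best position. The default coefficient values and the function name are illustrative only.

```python
import numpy as np


def pso_step(x, v, pbest, gbest, w=0.7, c1=1.5, c2=1.5, rng=None):
    """One standard PSO update for all particles.

    x, v, pbest: arrays of shape (n_particles, dim); gbest: shape (dim,).
    """
    rng = np.random.default_rng() if rng is None else rng
    r1 = rng.random(x.shape)  # random numbers in [0, 1), one per component
    r2 = rng.random(x.shape)
    v_new = w * v + c1 * r1 * (pbest - x) + c2 * r2 * (gbest - x)
    x_new = x + v_new
    return x_new, v_new
```

When a particle already sits at both its individual best and the population best with zero velocity, it stops moving, which illustrates how PSO on its own can stall at a local optimum.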

Step 4: Calculate the acceleration of each particle by Formula (24).

According to GSA, at time t, the resultant gravitational force acting on particle i in the k-th dimension can be expressed by Formula (23):

Where r_j is a random number in (0, 1).

According to Newton’s second law, at time t, the acceleration of particle i in the k-th dimension can be expressed by Formula (24):

Where M_ii(t) is the inertial mass of particle i.

At time t, the gravitational mass and inertial mass of particle i can be expressed by Formula (25) and Formula (26):

Where fit_i(t) is the fitness value of particle i at time t, and best(t) and worst(t) are the best and worst fitness values among all particles at time t, which can be expressed by Formula (27) and Formula (28):
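The mass and acceleration formulas are not reproduced in this text. The sketch below assumes the usual GSA definitions for a minimization problem (here, minimizing RMSE): best(t) is the smallest fitness, worst(t) is the largest, each raw mass is (fit_i − worst) / (best − worst), and the masses are normalized to sum to one. It also assumes the gravitational and inertial masses of a particle are equal, so the particle's own mass cancels in the acceleration. Function names are hypothetical.

```python
import numpy as np


def gsa_masses(fitness):
    """Normalized masses for minimization: best = min fitness, worst = max."""
    fitness = np.asarray(fitness, dtype=float)
    best, worst = fitness.min(), fitness.max()
    if np.isclose(best, worst):  # all particles equally fit
        return np.full(fitness.shape, 1.0 / fitness.size)
    m = (fitness - worst) / (best - worst)  # 1 for the best, 0 for the worst
    return m / m.sum()


def gsa_acceleration(x, masses, g, rng, eps=1e-12):
    """Acceleration of each particle as a randomly weighted sum of pairwise pulls."""
    n = x.shape[0]
    accel = np.zeros_like(x)
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            r_ij = np.linalg.norm(x[i] - x[j])
            # The mass of particle i cancels when force is divided by inertia.
            accel[i] += rng.random() * g * masses[j] * (x[j] - x[i]) / (r_ij + eps)
    return accel
```

With these definitions, fitter particles are heavier and attract the others more strongly, while the least fit particle has zero mass and attracts nothing.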

Step 5: Update the position of each particle by Formula (17), and a new population is generated.

Step 6: The above optimization process is repeated until the maximum number of iterations is reached, so as to obtain the optimal solution of input layer weight and hidden layer bias.
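Steps 1 to 6 can be tied together in a compact sketch. It is an illustration under stated assumptions, not the authors' implementation: the velocity update uses a commonly published PSO-GSA form (inertia, GSA acceleration, pull toward the best solution so far), the gravitational constant decays exponentially, and all default values (`g0`, `alpha`, `w`, `c1`, `c2`, population size) are placeholders rather than the settings used in the paper.

```python
import numpy as np


def elm_rmse(params, X, Y, n_hidden):
    """Decode one particle into (W, b), solve beta by pseudoinverse, return RMSE."""
    n_in = X.shape[1]
    W = params[: n_in * n_hidden].reshape(n_in, n_hidden)
    b = params[n_in * n_hidden:]
    z = np.clip(X @ W + b, -50.0, 50.0)  # numerical guard for exp
    H = 1.0 / (1.0 + np.exp(-z))
    beta = np.linalg.pinv(H) @ Y
    return float(np.sqrt(np.mean((H @ beta - Y) ** 2)))


def psogsa_elm(X, Y, n_hidden=10, n_particles=20, max_iter=30,
               g0=1.0, alpha=20.0, w=0.7, c1=0.5, c2=1.5, seed=0):
    rng = np.random.default_rng(seed)
    dim = X.shape[1] * n_hidden + n_hidden
    # Step 1: random initial population in [-1, 1].
    x = rng.uniform(-1.0, 1.0, (n_particles, dim))
    v = np.zeros_like(x)
    gbest, gbest_fit, history = None, np.inf, []
    for it in range(max_iter):  # Step 6: repeat until max_iter
        # Step 2: fitness of each particle (RMSE of the resulting ELM).
        fit = np.array([elm_rmse(p, X, Y, n_hidden) for p in x])
        # Step 3: keep the best solution found so far.
        if fit.min() < gbest_fit:
            gbest_fit = float(fit.min())
            gbest = x[fit.argmin()].copy()
        history.append(gbest_fit)
        # Step 4: masses, gravitational constant, and accelerations.
        best, worst = fit.min(), fit.max()
        m = (np.ones(n_particles) if np.isclose(best, worst)
             else (fit - worst) / (best - worst))
        M = m / m.sum()
        g = g0 * np.exp(-alpha * it / max_iter)
        diff = x[None, :, :] - x[:, None, :]  # diff[i, j] = x_j - x_i
        dist = np.linalg.norm(diff, axis=2) + 1e-12
        coef = rng.random((n_particles, n_particles)) * g * M[None, :] / dist
        accel = (coef[:, :, None] * diff).sum(axis=1)
        # Step 5: assumed PSO-GSA velocity and position update.
        v = (w * v + c1 * rng.random(x.shape) * accel
             + c2 * rng.random(x.shape) * (gbest - x))
        x = x + v
    return gbest, gbest_fit, history
```

The returned `history` records the best fitness after each iteration and never increases, which gives a simple way to inspect convergence.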

Case study

In a rocket body structure, the barrel section of the secondary fuel tank is welded from four wall plates, with a total of four welds. In this paper, 500 sets of welding process data are randomly selected to establish the weld width prediction model based on improved ELM. Of these, 450 sets are randomly selected as the training set, and the remaining 50 sets are used as the test set to verify the validity of the model.
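A random 450/50 split of this kind could be produced as in the sketch below. It only generates index sets, because the welding data themselves are not included in this text; the seed and the function name are arbitrary choices for illustration.

```python
import numpy as np


def split_indices(n_samples=500, n_train=450, seed=0):
    """Randomly partition sample indices into a training set and a test set."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)  # shuffled indices 0..n_samples-1
    return order[:n_train], order[n_train:]
```

Fixing the seed makes the split reproducible, so the compared models can be trained and tested on exactly the same samples.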

The model takes 12 typical elements of the welding process as the input layer (welding current, welding speed, wire feeding speed, shielding gas flow, penetration, temperature, humidity, chamfer, blunt edge, clearance, step difference, misalignment) and the weld width as the output layer. The training samples of the weld width prediction model are shown in Table 1.

Table 1. Training samples of the weld width prediction model.

In this case, the parameters of the weld quality prediction model are set as follows: the number of input layer nodes is 12, the number of output layer nodes is 1, the hidden layer activation function is Sigmoid function, and the number of hidden layer nodes is 100.

To facilitate verification, this case also establishes two weld quality prediction models for comparison: standard ELM and PSO-ELM.

Figure 5 shows four curves of the weld width of 50 groups of test samples, which are the actual curve, the prediction curve based on the standard ELM, the prediction curve based on the PSO-ELM and the prediction curve based on improved ELM. It can be seen that compared with the prediction curve based on the standard ELM and the prediction curve based on the PSO-ELM, the prediction curve based on improved ELM fits the actual curve better.

Figure 5. Predicted and actual values of weld width.

Figure 6 shows the absolute error curve of weld width prediction for the test samples (absolute error = |predicted value - actual value|). It can be seen that the maximum absolute error of the prediction based on the standard ELM is 0.60 mm (Sample 21), and the minimum is 0.10 mm (Sample 12). The maximum absolute error of prediction based on PSO-ELM is 0.42 mm (Sample 21), and the minimum is 0.08 mm (Sample 32). The maximum absolute error of prediction based on improved ELM is 0.30 mm (Sample 11, Sample 21), and the minimum is 0.04 mm (Sample 40).

Figure 6. Absolute error of weld width prediction.

Figure 7 shows the relative error curve of weld width prediction for the test samples (relative error = absolute error / actual value). It can be seen that the maximum relative error of prediction based on the standard ELM is 3.73% (Sample 21), and the minimum is 0.69% (Sample 12). The maximum relative error of prediction based on PSO-ELM is 2.61% (Sample 21), and the minimum is 0.46% (Sample 32). The maximum relative error of prediction based on improved ELM is 2.03% (Sample 11), and the minimum is 0.24% (Sample 40).
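The two error measures used in Figures 6 and 7 can be written out directly. The sketch below is illustrative, and the numbers in the usage note are hypothetical values, not samples from the study.

```python
def absolute_error(predicted, actual):
    """Absolute error in the same unit as the measurement (mm for weld width)."""
    return abs(predicted - actual)


def relative_error_percent(predicted, actual):
    """Relative error as a percentage of the actual value."""
    return 100.0 * abs(predicted - actual) / abs(actual)
```

For a hypothetical actual width of 15.0 mm and a predicted width of 15.3 mm, the absolute error is about 0.3 mm and the relative error is about 2%.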

Figure 7. Relative error of weld width prediction.

The results show that compared with the prediction model based on the standard ELM and the prediction model based on PSO-ELM, the prediction model based on improved ELM has higher prediction accuracy, and its prediction deviation does not exceed 2.5%, which can effectively predict the weld width.

Conclusion

In the current multi-variety and small-batch production mode, this paper proposes a quality prediction method for rocket body structure manufacturing process based on improved ELM. The main research work and results are as follows:

(1) Because both PSO and GSA tend to fall into local optimal solutions, this paper combines the two algorithms into a particle swarm-gravitational search hybrid algorithm, which better balances search efficiency and search accuracy.

(2) Aiming at the non-optimal problems caused by random selection of input layer weights and hidden layer bias of ELM, this paper uses the particle swarm-gravitational search hybrid algorithm to select the random parameters of ELM.

(3) A weld in a rocket body structure is taken as an example to establish a prediction model based on improved ELM for the weld width, and the model is verified. The results show that compared with the prediction model based on the standard ELM and the prediction model based on PSO-ELM, the prediction model based on improved ELM has higher prediction accuracy, and its prediction deviation does not exceed 2.5%, which can effectively predict the weld width.

Footnotes

Handling Editor: Sharmili Pandian

Author contributions

Yao contributed about 70% of the research work of this manuscript, and was a major contributor in writing the manuscript. Liu contributed about 30% of the research work of this manuscript. All authors read and approved the final manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Availability of data and materials

The datasets used and/or analysed during the current study are available from the corresponding author on reasonable request.