Abstract

The performance of model predictive control strategies for hybrid electric vehicles (HEVs) depends heavily on the accuracy of future speed predictions. This paper proposes improved prediction models for deterministic model predictive control (DMPC) and stochastic model predictive control (SMPC). For DMPC, a neural network-based predictor is first introduced and taken as the benchmark predictor. A novel deterministic predictor that accounts for historical prediction errors is then proposed; it relies on the assumption that the offset between the prediction and the measurement at the current instant is a good estimate of the offset in the near future. Building on the proposed deterministic predictor, a stochastic predictor that considers the distribution of historical data at different locations is further proposed for SMPC. Simulation results show that, compared with the benchmark, the controller using the proposed deterministic prediction model improves fuel economy by 2.98%, and the controller using the proposed stochastic prediction model improves fuel economy by 4.5%.

Keywords

Introduction

In the next 50 years, the world’s population will grow from 6 to 10 billion, and the number of cars will increase from 700 million to 2.5 billion.1 If all these vehicles continue to be driven by internal combustion engines, the oil consumed, the exhaust gas emitted, and the resulting environmental pollution will be unimaginable.2 These factors force us to rapidly develop vehicles driven by alternative energy. It is against this background that new energy vehicles have attracted attention. Broadly speaking, new energy vehicles include electric vehicles (EVs), hybrid electric vehicles (HEVs), and fuel cell vehicles (FCEVs).3,4 However, technical bottlenecks remain at this stage, both for the conventional batteries used in electric vehicles and for the fuel cells used in fuel cell vehicles. Therefore, as a transitional solution, HEVs will occupy a growing market share and develop toward diversification and high performance. The performance of HEVs, in turn, depends on the control strategy, so an effective energy management strategy is crucial to exploit their energy-saving potential.

A large amount of research has been conducted on energy management strategies (EMS).5–7 The rule-based control strategy is the easiest and most widely used control strategy. As the design of this strategy is based entirely on expert experience, the best performance can usually be achieved only under specific road conditions, and the performance may drop significantly when the road conditions change.8 The dynamic programming (DP) algorithm based on Bellman’s optimality principle is widely used in the energy management of HEVs because it can obtain the theoretical global optimal solution.9 However, DP can obtain the global optimal solution only when the complete road conditions are known, which is impossible in practical applications. Moreover, the computational burden of DP grows exponentially with the number of variables, so it cannot be deployed in real-time applications. Despite these shortcomings, DP still has great practical value: it can be used as a benchmark to tune the parameters of other control strategies, or to quantify the gap between other control strategies and the optimal strategy.10,11 Another important class of control strategies is based on convex optimization, which has great value in real-time applications due to its short computation time. In DP, the model of the problem can be nonlinear, non-convex, and mixed-integer. Convex optimization, however, places strict requirements on the form of the problem: a general problem must first be approximated as a convex one, so only an approximately optimal solution can be obtained.12–14 Besides convex optimization, another commonly used real-time control strategy is the Equivalent Consumption Minimization Strategy (ECMS).15–17 The performance of ECMS depends strongly on the choice of the equivalent factor. A suitable equivalent factor can make the performance of the control strategy approach that of DP.18 However, in practical applications, the selection of the equivalent factor is still a difficult problem,19 and table-based strategies are still the most widely used. In addition, ECMS does not take future road conditions into account, which also limits its performance.

Another approach that is considered to have great application potential in the energy management of new energy vehicles is model predictive control (MPC).7,20,21 MPC can achieve a balance between performance and computational burden. Compared with ECMS, its performance is significantly improved, and its computational burden is much smaller than that of DP. Therefore, it is considered to be the most valuable control strategy for actual deployment.22–24 MPC has been widely studied by enterprises and scholars, and it has been applied to the energy management of many different types of new energy vehicles, such as FCEVs, HEVs, and EVs.25 Depending on whether the uncertainty of future driving information is taken into account, MPC can be further divided into two categories: deterministic MPC and stochastic MPC. Deterministic MPC requires a deterministic predictor for the exact trajectory of future vehicle speed or demand torque. Such prediction is usually difficult, and its performance can be guaranteed only over a short prediction horizon. After the predicted trajectory is obtained from the predictor, an optimization algorithm, such as DP, quadratic programming (QP), or a nonlinear programming algorithm, is used to solve for the optimal control strategy corresponding to the predicted trajectory. The first control command of this strategy is then taken as the optimal control action at the current moment, and the process is repeated in each MPC update cycle.26
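The receding-horizon procedure described above can be sketched in Python. This is a minimal illustration under stated assumptions, not the controller used in this paper: the function name `receding_horizon` and the callables `predict`, `solve`, `step`, and `measure` are hypothetical placeholders for the speed predictor, the optimizer (DP, QP, or a nonlinear solver), the plant model, and the measured disturbance.

```python
from typing import Callable, List, Sequence


def receding_horizon(
    x0: float,
    n_steps: int,
    horizon: int,
    predict: Callable[[int, int], Sequence[float]],
    solve: Callable[[float, Sequence[float]], Sequence[float]],
    step: Callable[[float, float, float], float],
    measure: Callable[[int], float],
) -> List[float]:
    """Run a generic deterministic MPC loop and return the applied controls.

    All callables are hypothetical placeholders supplied by the caller.
    """
    x = x0
    applied: List[float] = []
    for i in range(n_steps):
        forecast = predict(i, horizon)   # predicted trajectory over the horizon
        plan = solve(x, forecast)        # optimal control sequence for that forecast
        u = plan[0]                      # only the first command is applied
        applied.append(u)
        x = step(x, u, measure(i))       # the plant evolves with the actual disturbance
    return applied
```

Only `plan[0]` is ever applied; the rest of the plan is discarded and recomputed at the next update, which is what lets the controller absorb prediction errors.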

In stochastic MPC (SMPC), the purpose of the predictor is not to predict the exact trajectory of future driving information, but the probability distribution of vehicle speed or demand torque. The goal of the SMPC controller is to minimize the expectation of the cumulative cost function over the prediction horizon, which is usually solved by stochastic dynamic programming (SDP). The computational burden of SDP also grows exponentially with the number of variables, so the optimal control strategy is usually saved in the form of a table. In practical deployment, online computation is replaced by a table lookup.27 The performance of both DMPC and SMPC depends on the design of the controller and the accuracy of the prediction model.28 If the accuracy of the prediction model cannot be guaranteed, even the optimal controller may produce a poor control action, so a predictor that accurately reflects the changes of future driving information is the basis of a high-performance MPC. A variety of speed prediction models already exist for deterministic MPC, including exponential decay models,29 the autoregressive moving average (ARMA) method,30 neural network-based models, and Markov models.31 Some methods that use information gathered during driving to correct the prediction model in real time have also been proposed, such as using the prediction error of the previous instant to correct future predictions.32 One study proposes a novel robust coordinated decision-making technique based on robust multiagent reinforcement learning to coordinate the longitudinal and lateral driving decisions of an automated vehicle while ensuring policy robustness against observational uncertainties.33 Another strategy incorporates active disturbance rejection current compensation (ADRCC), taking a zero speed difference between the two ends of the half-shaft as the tracking control target and superimposing a compensating current on the original given current of the motor controller, to investigate the electromechanical coupling dynamics and vibration characteristics of the system under impact conditions.34 A further article aims to identify robust objective evaluation criteria for the nonlinear combined longitudinal and lateral dynamics of a vehicle.35 For stochastic MPC, most of the existing prediction models are based on Markov chains (MC). The prediction accuracy of MC-based predictors depends entirely on historical data and is usually valid only for specific road conditions. These prediction models also assume that the transition process of vehicle speed satisfies the Markov property, which is not necessarily true in actual driving. Apart from Markov chain-based predictors, there are few studies on other stochastic prediction models, and most studies on SMPC focus on the design of control strategies. However, the performance of SMPC is limited by the predictor, and more precise prediction models can further unlock the potential of the controller.

To further improve the performance of MPC, this paper proposes a novel deterministic speed predictor for DMPC and a stochastic speed predictor for SMPC. The main contributions are as follows: (1) An improved deterministic predictor is proposed, which takes into account the historical prediction error; (2) An improved stochastic predictor combining the deterministic predictor and a stochastic term is proposed, which takes into account the distributional randomness of historical data; (3) A comprehensive analysis is performed through simulations, and the application potential of the proposed predictors is verified.

The rest of this paper is structured as follows: Section “Problem formulation” introduces the mathematical model of the HEV and the driving scenario. Sections “Deterministic prediction and DMPC” and “Stochastic prediction and SMPC” present the DMPC and SMPC strategies, respectively. Section “Simulations and discussions” presents the simulation results and analysis, and Section “Conclusion” summarizes the main conclusions of this paper.

Problem formulation

In this section, the driving scenario is introduced and the mathematical model of the Parallel Hybrid Electric Vehicles (PHEVs) is established. Then, the optimal control problem is described and formulated in the MPC form.

Driving scenario

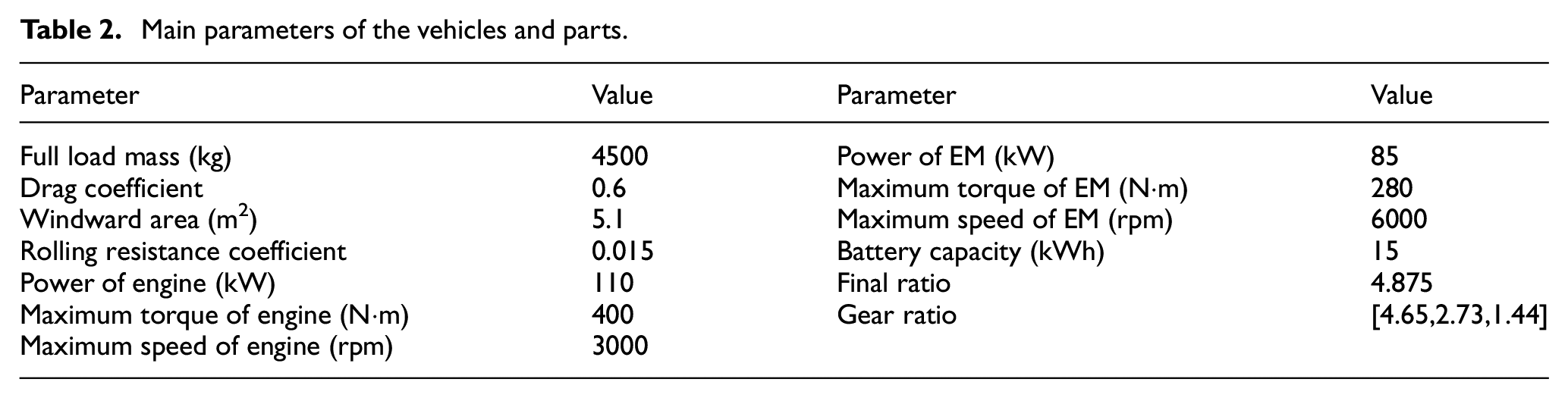

The research object of this paper is electric trucks driving a fixed route. A bus route in Xi’an, China, is taken as an example, as shown in Figure 1. Other information is uncertain: it depends on traffic conditions and can be regarded as stochastic. To capture this, speed profiles were obtained from 50 driving tests, as shown in Figure 2. The statistics of the daily route are shown in Table 1. The parameters of the vehicle and its parts are shown in Table 2. Since the route is fixed, historical data can be effectively utilized to design control strategies. MPC requires a prediction of future road information, and this prediction is currently divided into two types: deterministic prediction and stochastic prediction. This section describes the optimal control problem in discrete space for DMPC and SMPC, respectively. How to solve these problems is discussed in detail in sections “Deterministic prediction and DMPC” and “Stochastic prediction and SMPC.”

Figure 1. A driving route and the corresponding altitude in Xi’an, China. In the top plot, locations A and B denote the start and end positions, respectively. The bottom plot shows the altitude profile from A to B.

Figure 2. The speed profiles of historical driving tests.

Table 1. Statistical information of the fixed driving route.

Table 2. Main parameters of the vehicle and parts.

PHEV model

The structure of the PHEV is shown in Figure 3. It is composed of an engine, an electric machine (EM), a clutch (CL), and a gearbox.

Figure 3. Mechanical structure of the HEV powertrain. The engine, EM, and gearbox are connected in series on a shaft. The engine and EM are connected through the clutch CL.

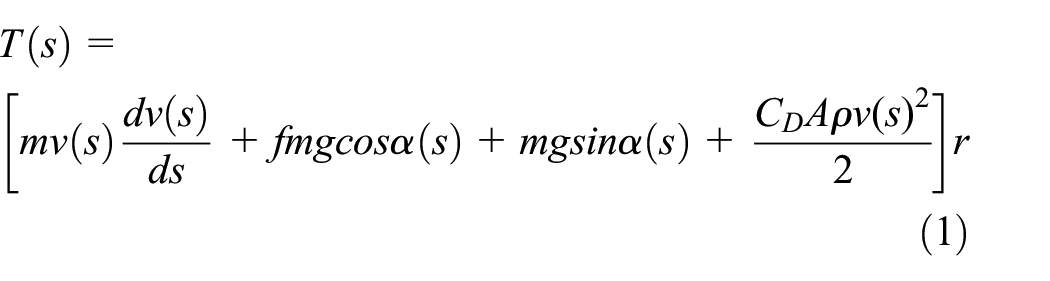

According to Newton’s laws, the longitudinal dynamics of the vehicle can be described as in Du et al.36

where T denotes the demand torque at the wheels; m denotes the mass of the vehicle; f is the rolling resistance coefficient; C D is the air resistance coefficient; A is the windward area; g is the acceleration of gravity; α is the slope; and v denotes the velocity of the vehicle.

Dynamic model (1) is built in the distance domain. To remove the nonlinearity, define

where, E is kinetic energy of a unit mass.

The derivative of equation (2) can be obtained by

So, the kinetic equation (1) can be rewritten as:
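The displayed equations are not reproduced in this text. As a hedged reconstruction only, assume that equation (1) takes the standard distance-domain form of the longitudinal dynamics, with wheel radius $r_w$ and air density $\rho$ (both symbols are assumptions that do not appear in the definitions above). The substitution then works out as follows:

```latex
\begin{align}
  m\,v\,\frac{\mathrm{d}v}{\mathrm{d}s}
    &= \frac{T}{r_w} - m g f \cos\alpha - m g \sin\alpha
       - \tfrac{1}{2}\,\rho\, C_D A\, v^{2}
    && \text{(assumed form of (1))} \\
  E &= \tfrac{1}{2}\,v^{2},
  \qquad
  \frac{\mathrm{d}E}{\mathrm{d}s} = v\,\frac{\mathrm{d}v}{\mathrm{d}s}
    && \text{(kinetic energy per unit mass)} \\
  m\,\frac{\mathrm{d}E}{\mathrm{d}s}
    &= \frac{T}{r_w} - m g f \cos\alpha - m g \sin\alpha
       - \rho\, C_D A\, E
    && \text{(linear in } E\text{)}
\end{align}
```

Under these assumptions the product $v\,\mathrm{d}v/\mathrm{d}s$ and the quadratic drag term $v^{2}$ both become linear in $E$, which is the sense in which the change of variable removes the nonlinearity.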

During driving, both the engine and the EM can provide driving force to the vehicle through the gearbox. When the CL is disengaged, the vehicle is fully driven by the EM. The state of the CL is represented by σ c .

where, 0 and 1 denote the clutch is disengaged and engaged, respectively.

Further, the corresponding control action is denoted by

So, the dynamics of the CL are expressed by

The operating state of the engine is assumed to be the same as that of the clutch, and the on/off process of the engine is ignored; that is, when the clutch is disengaged, the engine is off, and when the clutch is engaged, the engine is on. So, the torque of the engine can be described as

where $T_e$ and $T_m$ denote the output torque of the engine and EM, respectively; $f_d$ and $i_g(\mathrm{gear})$ denote the gear ratios of the differential and gearbox, respectively.

Further, the shifting process of the gearbox is modeled by

where,

When the clutch is disengaged, the speed of the engine is zero, and when the clutch is engaged, the speed of the engine is equal to that of EM, that is,

where, ω e and ω m denote the speed of the engine and EM, respectively.
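The clutch logic described above can be illustrated with a short Python sketch. It assumes a lossless driveline and a wheel torque of the form (σ_c·T_e + T_m)·i_g·f_d, which is consistent with the description but is not taken from the paper's equations; the function names are hypothetical.

```python
def wheel_torque(sigma_c: int, t_e: float, t_m: float, i_g: float, f_d: float) -> float:
    """Torque at the wheels (assumed lossless driveline).

    The engine contributes only when the clutch is engaged (sigma_c == 1).
    """
    if sigma_c not in (0, 1):
        raise ValueError("sigma_c must be 0 (disengaged) or 1 (engaged)")
    return (sigma_c * t_e + t_m) * i_g * f_d


def engine_speed(sigma_c: int, omega_m: float) -> float:
    """Engine speed: zero when disengaged, equal to the EM speed when engaged."""
    if sigma_c not in (0, 1):
        raise ValueError("sigma_c must be 0 (disengaged) or 1 (engaged)")
    return sigma_c * omega_m
```

With the clutch disengaged, `wheel_torque(0, 100.0, 50.0, 2.0, 4.0)` uses only the EM torque, and `engine_speed(0, 150.0)` is zero.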

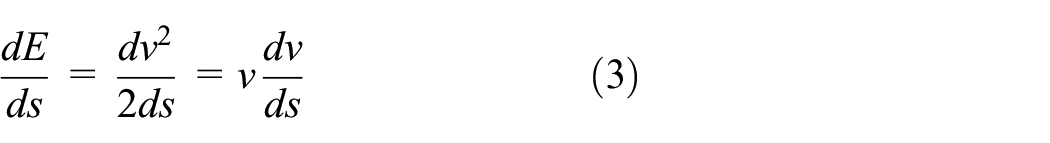

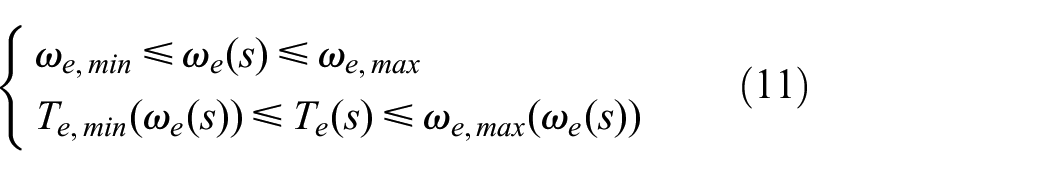

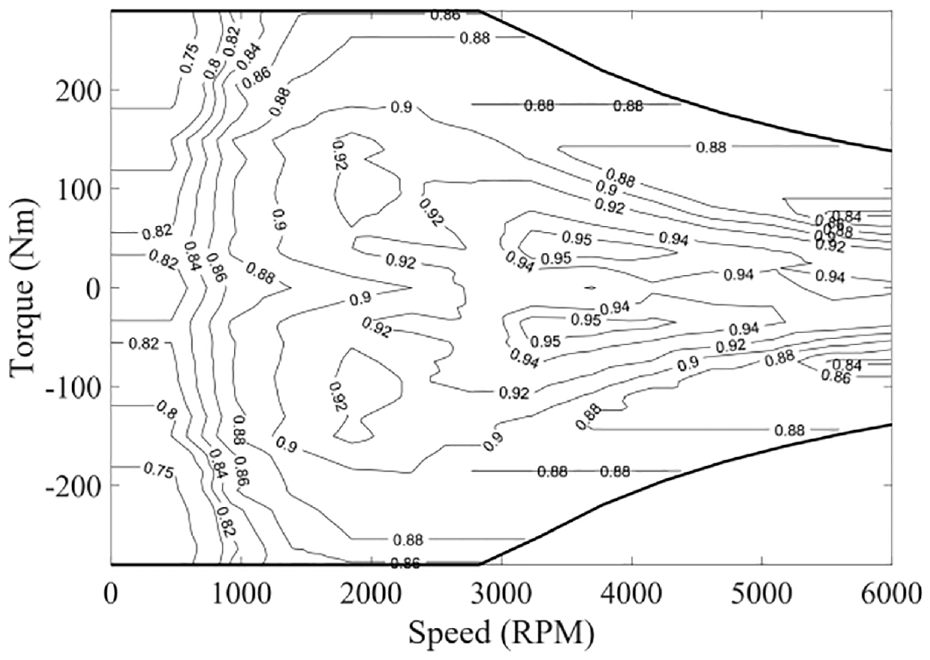

The PHEV adopts a diesel engine with a displacement of 2.5 L. The fuel consumption rate of the engine is denoted by m f (s), as a nonlinear function of the speed and torque, which is shown in Figure 4. The constraints of the engine are as follows:

Figure 4. Fuel consumption rate of the diesel engine versus torque and speed (unit: g/kWh).

The efficiency of EM is also modeled by a nonlinear function of speed and torque, which is shown in Figure 5. Define equation (2) to represent the power of EM, where P d denotes the dissipated power of EM.

Figure 5. Efficiency of the EM versus torque and speed.

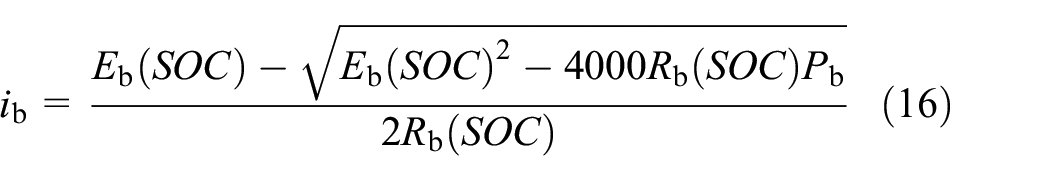

The battery model adopts the equivalent circuit model. The

where,

Battery charge and discharge power is

where,

Among them,

The EM is assumed to be the only electricity-consuming device in the vehicle, so Pbat = P m . The battery power and SOC are also subject to constraints.
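As an illustration of an equivalent circuit battery model, the sketch below uses the common internal-resistance form, in which the terminal power satisfies P = V_oc·I − R·I². This is an assumed textbook form, not necessarily the one used in this paper. The parameter names `v_oc`, `r_int`, and `capacity_as`, and the distance-domain update with step `delta_s`, are hypothetical.

```python
import math


def battery_current(p_bat: float, v_oc: float, r_int: float) -> float:
    """Battery current in A for terminal power p_bat in W (positive = discharge).

    Solves p_bat = v_oc * I - r_int * I**2 for the smaller root (assumed model).
    """
    disc = v_oc ** 2 - 4.0 * r_int * p_bat
    if disc < 0.0:
        raise ValueError("requested power exceeds what the battery can deliver")
    return (v_oc - math.sqrt(disc)) / (2.0 * r_int)


def soc_step(soc: float, p_bat: float, v_oc: float, r_int: float,
             capacity_as: float, v: float, delta_s: float) -> float:
    """Advance the SOC over one distance step delta_s (m) at speed v (m/s)."""
    if v <= 0.0:
        raise ValueError("speed must be positive in the distance domain")
    dt = delta_s / v  # time spent covering the distance step
    return soc - battery_current(p_bat, v_oc, r_int) * dt / capacity_as
```

For example, with `v_oc = 100.0` and `r_int = 0.1`, a demand of 990 W corresponds to a current of about 10 A, since 100·10 − 0.1·10² = 990.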

MPC problem description

In the DMPC problem, the gear of the gearbox, the demand torque, and the SOC of the battery are taken as the state variables; the torque of the EM, the state of the CL, and the shift action are taken as the control variables. In the SMPC problem, the kinetic energy is taken as the stochastic variable to account for the uncertainty of future traffic conditions. The state, control, and stochastic variables are ordered as

From a mathematical point of view, the MPC problem is to use a series of discrete control actions to optimize the performance indicators of vehicle driving within a certain time range. The performance indicators include fuel consumption and the number of gear shifts.

For DMPC, the cost function in a sampling interval can be described as

For SMPC, the cost function is

where, β1 is a positive weight factor, which is used to limit the frequent shifting of the vehicle; β2 is the penalty factor to prevent frequent start/stop of the engine; δ s denotes the discrete interval of distance.

The objective of DMPC is to minimize the total cost in prediction horizon, which is described as

where, i is the index over entire driving process; k denotes the index over the prediction horizon.

Considering the uncertainty of future vehicle speed, the goal of SMPC is to minimize the expected cost over the prediction horizon, which is described as

where,

Both SMPC and DMPC need to satisfy a series of constraints, which are detailed as

Deterministic prediction and DMPC

This section reports a DMPC scheme for the PHEV. First, two deterministic speed predictors are introduced. Then, the solution for DMPC is introduced.

Since the historical data are continuous, they need to be discretized before the predictive models are introduced. Without loss of generality, the set

ANN based predictor

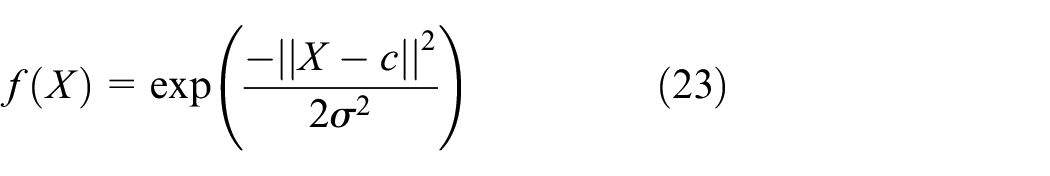

An artificial neural network (ANN) has a relatively strong nonlinear mapping ability and can capture the underlying patterns in data, so it has been widely used in driving information prediction. Here, a radial basis function (RBF) neural network is used to predict the future velocity. It consists of an input layer, a hidden layer, and an output layer. In this study, the Gaussian function is chosen to activate the neurons in the hidden layer, which is described as

where, c and σ denote the neural net center and the spread width, respectively.

The input of the RBF is the trajectory of historical data, and the output is the predicted trajectory, which can be described as

where $H_q$ denotes the dimension of the input vector; $H_p$ denotes the length of the prediction horizon;
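The forward pass of such an RBF network can be sketched in pure Python. The Gaussian form exp(−‖x − c‖² / (2σ²)) used here is one common convention and is an assumption, since the paper's exact expression is not reproduced in this text; the linear output layer with `weights` and `bias`, and the function names, are likewise hypothetical.

```python
import math
from typing import List, Sequence


def gaussian_rbf(x: Sequence[float], c: Sequence[float], sigma: float) -> float:
    """Gaussian activation of one hidden neuron with center c and spread sigma."""
    dist_sq = sum((xi - ci) ** 2 for xi, ci in zip(x, c))
    return math.exp(-dist_sq / (2.0 * sigma ** 2))


def rbf_predict(history: Sequence[float],
                centers: Sequence[Sequence[float]],
                sigma: float,
                weights: Sequence[Sequence[float]],
                bias: Sequence[float]) -> List[float]:
    """Map the last H_q measurements to H_p predicted values.

    centers has one row per hidden neuron (each of length H_q);
    weights has one row per output step (each of length n_hidden).
    """
    hidden = [gaussian_rbf(history, c, sigma) for c in centers]
    return [sum(w * h for w, h in zip(row, hidden)) + b
            for row, b in zip(weights, bias)]
```

In practice the centers, spread, and output weights would be fitted to the historical speed profiles; the sketch covers inference only.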

An improved ANN based predictor

The accuracy of most predictive models relies primarily on historical data. In practical applications, the prediction error over the recent past indicates the gap between the prediction model and the actual situation, and it can be used to reduce the error of future predictions. A reasonable assumption is that the offset between the prediction and the measurement at the current step is a good estimate of the offset in the near future. Based on this, an improved predictor is defined as

where,

The improved predictor is based on the assumption that the offset between the measurement and the prediction has an impact on future prediction and that the impact will decay over time. It is clear that λ describes how the difference between

An example of prediction process is shown in Figure 6 to show the difference between equations (24) and (25). It can be seen that the offset between the two predicted trajectories will become smaller and smaller, and eventually coincide. The rate of decay depends on the value λ. The predictive trajectory of the vehicle speed according to equation (25) is denoted as

Figure 6. An example of the predicted trajectories, where Δ denotes the offset between measurement and prediction at step i. The blue and red lines denote the predicted trajectories generated by equations (24) and (25), respectively. It can be seen that Δ gradually decays over the prediction horizon, and the two predicted trajectories gradually overlap.
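The idea of a decaying offset correction can be illustrated as follows. This minimal sketch assumes a geometric decay λ^k with 0 ≤ λ ≤ 1, which is only one possible decay law and may differ from equation (25); the function name `corrected_prediction` is hypothetical.

```python
from typing import List, Sequence


def corrected_prediction(base_prediction: Sequence[float],
                         offset: float,
                         lam: float) -> List[float]:
    """Add the current prediction offset to a base forecast with geometric decay.

    base_prediction: benchmark forecast for steps i+1, ..., i+H_p
    offset: measurement minus prediction at the current step i
    lam: decay factor in [0, 1] (assumed decay law: offset * lam**k)
    """
    if not 0.0 <= lam <= 1.0:
        raise ValueError("lam must lie in [0, 1]")
    return [v + offset * lam ** (k + 1) for k, v in enumerate(base_prediction)]
```

For instance, `corrected_prediction([10.0, 10.0, 10.0], 2.0, 0.5)` yields `[11.0, 10.5, 10.25]`: the correction halves at every step, so the corrected and benchmark trajectories converge.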

Dynamic programming for DMPC

The optimal control problem described in section “MPC problem description” is a nonlinear, mixed-integer optimization problem. Such problems are usually challenging to solve in limited time. However, since the main contribution of this study is the novel predictors, the computational burden of obtaining the optimal policy is not a concern here. In this case, dynamic programming (DP) is the best option for testing the performance of the predictors.

First, a cost-to-go function

where,

So, the task of the optimal control problem is to get the minimum cost described in equation (28) and the corresponding control action, which can be described as

where,

According to Bellman’s principle of optimality, equation (29) can be solved recursively

The cost at the end of the prediction horizon is assumed to be 0.
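The backward recursion with zero terminal cost can be sketched generically in Python. This is an illustrative finite-horizon DP over small discrete state and control sets, not the solver used in this paper; `stage_cost` and `transition` are hypothetical callables standing in for the cost function and the vehicle model.

```python
from typing import Callable, Dict, Hashable, List, Sequence, Tuple


def backward_dp(
    states: Sequence[Hashable],
    controls: Sequence[Hashable],
    horizon: int,
    stage_cost: Callable[[int, Hashable, Hashable], float],
    transition: Callable[[int, Hashable, Hashable], Hashable],
) -> Tuple[List[Dict], List[Dict]]:
    """Solve a finite-horizon problem by Bellman recursion.

    Returns (cost_to_go, policy); cost_to_go[k][x] is the minimum cost from
    state x at step k, and policy[k][x] is the minimizing control.
    """
    cost_to_go: List[Dict] = [dict() for _ in range(horizon + 1)]
    policy: List[Dict] = [dict() for _ in range(horizon)]
    for x in states:
        cost_to_go[horizon][x] = 0.0  # zero cost at the end of the horizon
    for k in range(horizon - 1, -1, -1):
        for x in states:
            best_u, best_cost = None, float("inf")
            for u in controls:
                x_next = transition(k, x, u)
                if x_next not in cost_to_go[k + 1]:
                    continue  # control leads outside the state grid: infeasible
                cost = stage_cost(k, x, u) + cost_to_go[k + 1][x_next]
                if cost < best_cost:
                    best_u, best_cost = u, cost
            cost_to_go[k][x] = best_cost
            policy[k][x] = best_u
    return cost_to_go, policy
```

In an MPC setting, only `policy[0]` evaluated at the current state is applied before the horizon is shifted and the recursion is repeated.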

Stochastic prediction and SMPC

The fundamental principle of SMPC is similar to that of DMPC. The main difference is that the strategy obtained by SMPC is optimal in a statistical sense. This section introduces a stochastic predictor and the solution method of SMPC.

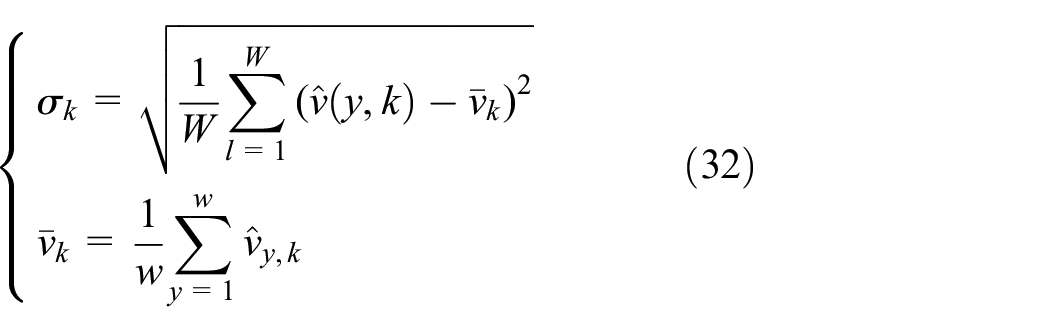

A novel stochastic predictor

A novel stochastic predictor is proposed based on the deterministic predictor in section “An improved ANN based predictor.” First, we need to model the uncertainty of the future, which is usually difficult. In this study, we add a random term to the prediction model using the distribution of historical data along the driving route. The improved predictor is defined as

where,

The motivation of equation (31) is to better utilize the velocity distribution law at different locations. The predictive result of traditional predictors only depends on the previous measurements, while the prediction result of equation (31) depends not only on the previous measurements, but also on the historical measurements of position k along the route.
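One way to picture a location-aware stochastic prediction is sketched below. It is not the paper's equation (31): it assumes that the candidate speeds form a symmetric grid of 2·n_p + 1 values around the deterministic prediction and that each candidate is weighted by a Gaussian fitted to the historical speeds recorded at the same route position. The function name and the parameters `n_p` and `delta_v` are hypothetical.

```python
import math
from statistics import mean, pstdev
from typing import List, Sequence, Tuple


def stochastic_prediction(v_det: float,
                          history_at_k: Sequence[float],
                          n_p: int,
                          delta_v: float) -> Tuple[List[float], List[float]]:
    """Return candidate speeds and their probabilities at one route position.

    v_det: deterministic prediction at this position
    history_at_k: speeds observed at this position in past driving tests
    """
    mu = mean(history_at_k)
    sigma = max(pstdev(history_at_k), 1e-6)  # guard against zero spread
    candidates = [v_det + j * delta_v for j in range(-n_p, n_p + 1)]
    weights = [math.exp(-((v - mu) ** 2) / (2.0 * sigma ** 2)) for v in candidates]
    total = sum(weights)
    if total == 0.0:
        # All candidates are far from the historical data: fall back to uniform.
        return candidates, [1.0 / len(candidates)] * len(candidates)
    return candidates, [w / total for w in weights]
```

Candidates that agree with what was historically observed at the same location receive more probability mass, which is how the location-specific distribution enters the prediction.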

Stochastic dynamic programming for SMPC

Stochastic dynamic programming is a general algorithm to solve SMPC problem. Unlike DMPC, the goal of SMPC is to minimize the expectation of the cumulative cost in the prediction horizon.

Define equation (33) to represent the expectation of the cumulative cost in the prediction horizon.

where,

The task of SMPC is to find the minimum of equation (33) and corresponding optimal policy

Considering the existence of the stochastic variable, the cost function at the next step can be regarded as conditional probability. For the stochastic predictor described in equation (31), the minimum expected cost function can be expressed as:

where $2N_p + 1$ is the number of available states of $v$. To unify the discrete space of $v$, let $2N_p + 1 = w$; $P_{kj}$ is the probability of transition from

The constant C normalizes the overall probability to 1.
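A single expected-cost backup of stochastic dynamic programming can be sketched as follows. The sketch assumes that the discrete outcomes of the stochastic variable and their normalized probabilities are already available; `stage_cost`, `transition`, and `next_cost` are hypothetical callables, not the paper's functions.

```python
from typing import Callable, Hashable, Optional, Sequence, Tuple


def expected_backup(
    x: Hashable,
    controls: Sequence[Hashable],
    outcomes: Sequence[float],
    probs: Sequence[float],
    stage_cost: Callable[[Hashable, Hashable, float], float],
    transition: Callable[[Hashable, Hashable, float], Hashable],
    next_cost: Callable[[Hashable], float],
) -> Tuple[Optional[Hashable], float]:
    """Return the control minimizing the expected cost from state x.

    The expectation is taken over the discrete outcomes with the given
    probabilities, which are assumed to sum to 1.
    """
    best_u, best_val = None, float("inf")
    for u in controls:
        val = 0.0
        for v, p in zip(outcomes, probs):
            val += p * (stage_cost(x, u, v) + next_cost(transition(x, u, v)))
        if val < best_val:
            best_u, best_val = u, val
    return best_u, best_val
```

Repeating this backup for every grid state and step produces the policy table that replaces online optimization at run time.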

Another difference between SMPC and DMPC is that online computation is not required. After the optimal policy

Simulations and discussions

A comprehensive comparative analysis of the controllers using the proposed predictors is conducted by simulations in this section. DP is employed as the benchmark in this study. For ease of description, these control strategies are named as follows.

DMPC: DMPC with ANN based predictor.

I-DMPC: DMPC with the improved deterministic predictor described in equation (25).

SMPC: SMPC with the stochastic predictor described in equation (31).

DP: Dynamic programming, which is used as the benchmark assuming that the profile of speed is completely known. It is a global optimization algorithm which can obtain the optimal policy.

Parameters

The simulations were performed on a personal computer (Intel i7-11800H at 2.3 GHz, 16 GB RAM) using MATLAB 2021a. Since the purpose of the simulations is to compare the performance of the predictors, the computational burden is not taken into account. A small quantization resolution is chosen for the continuous variables. The main parameters of the simulations are shown in Table 3.

Table 3. Parameters of simulations.

Results and discussions

Table 4 summarizes the results and the cost benefit of the controllers using the proposed predictors. Compared with DMPC, I-DMPC achieves a 2.98% lower cost and SMPC a 4.5% lower cost. This indicates that the improved predictors proposed in this paper can improve the performance of the controllers.

Table 4. Results of simulation.

One of the driving tests is chosen to compare the performance of the proposed predictors. The speed profile and the gear trajectories during driving are shown in Figure 7. The top plot shows the velocity along the route, and the remaining plots show the gear profiles of the different controllers. The gear trajectories of DMPC and I-DMPC show that, over short distances, I-DMPC shifts significantly less often than DMPC. For example, when the driving distance is between 10 and 12 km, the vehicle speed changes frequently and DMPC switches repeatedly between 3rd and 4th gear within a short distance, which increases clutch wear and reduces driving comfort. Although I-DMPC also shifts many times between 10 and 15 km, the distance between two consecutive shifts is longer than that of DMPC, and there is no frequent shifting within a short distance. When the driving distance is between 10 and 11 km, DP mostly works in 4th gear, while SMPC and I-DMPC switch between 2nd, 3rd, and 4th gear, and DMPC switches between 3rd and 4th gear. This shows that the controllers using the proposed predictors still have a certain gap compared with DP in low-speed and complex road conditions. In high-speed route sections (e.g., 15–25 km), SMPC and DP always work in 4th gear, while DMPC and I-DMPC switch between 3rd and 4th gear. This shows that the performance of SMPC at high speed is close to that of DP, whereas DMPC and I-DMPC still have a certain gap with DP at high speed.

Figure 7. The driving test and the corresponding simulation results. The top plot shows the velocity along the route. The remaining plots show the gear profiles of the different controllers. It can be seen that the shifting frequency of SMPC is lower than that of DMPC and I-DMPC. In high-speed conditions (15–25 km), SMPC always works in 4th gear, which is closest to DP.

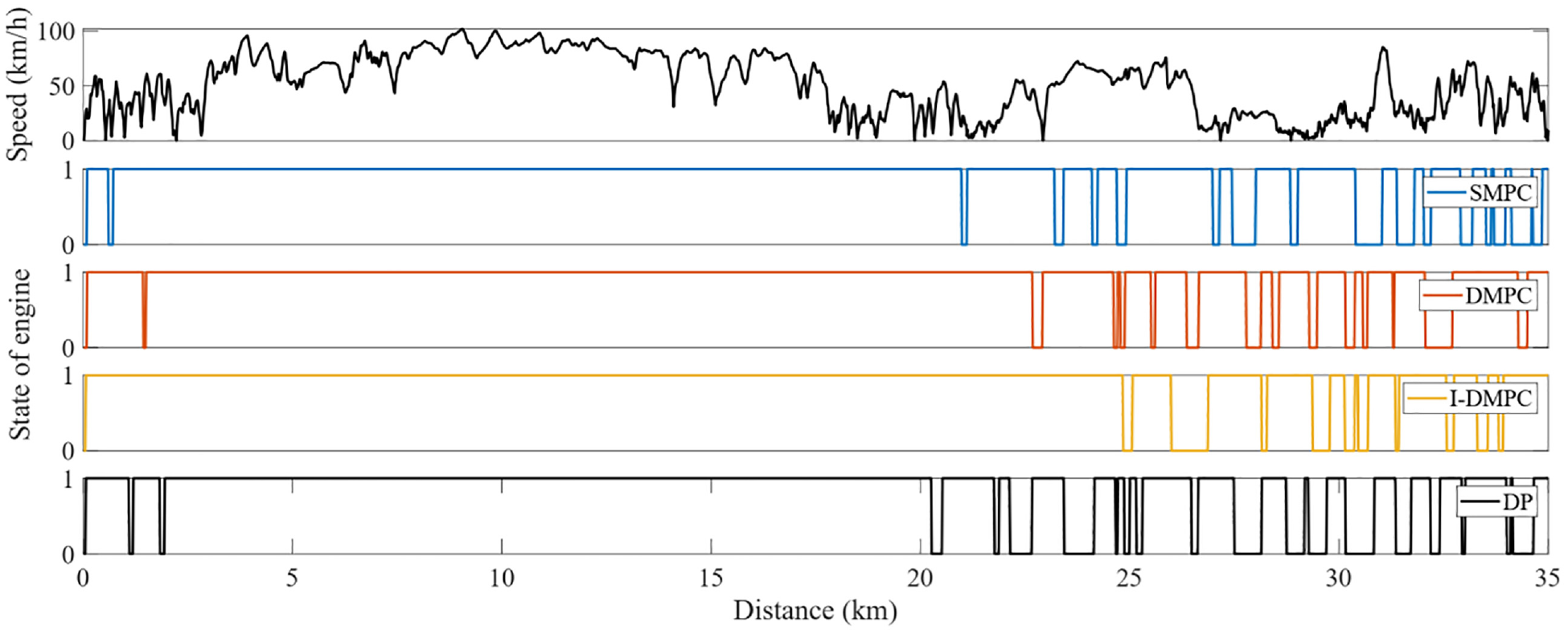

The engine state trajectories of the different controllers are shown in Figure 8. All four controllers prefer to keep the engine running under high-speed road conditions. This is because the engine operating points corresponding to high-speed road conditions usually lie in the high-efficiency range, and the performance of the controllers in high-speed road conditions is close to that of DP. Compared with SMPC and I-DMPC, DMPC switches the engine more often, but its fuel consumption is not reduced. This shows that the predictive model used by DMPC performs poorly, resulting in frequent engine start-stop switching without any reduction in fuel consumption. DP also switches the engine more often than SMPC and I-DMPC, yet its total cost is the lowest. Since DP represents the global optimal strategy, this shows that minimizing the number of engine start-stop switches conflicts with minimizing fuel consumption, and the two cannot be optimal at the same time. In practical applications, the penalty factor for engine start-stop switching should be chosen according to specific needs.

Figure 8. Trajectories of the engine state of different controllers. The top plot shows the velocity along the route. The remaining plots show the engine state profiles of the different controllers.

The SOC trajectories of the different controllers during the driving test are shown in Figure 9. The trajectory of SMPC is closest to that of DP. This shows that considering the distribution of historical vehicle speed at different locations can effectively improve the performance of the predictor. The SOC trajectory of DMPC is the highest over 0–20 km, which shows that the control strategies using the proposed prediction models are more inclined than DMPC to use battery energy for driving when electric power is sufficient, which saves fuel. The SOC trajectory of I-DMPC is the lowest over 0–10 km, which shows that I-DMPC is more inclined to use battery energy than DP and SMPC. However, the driving cost of I-DMPC is higher than that of DP and SMPC. This means that, from the perspective of the minimum cost over the entire route, the share of battery energy in the total energy should be kept within an appropriate range even when the battery charge is sufficient. Using too much or too little battery energy drives the control strategy away from the global optimum.

Figure 9. Trajectories of the SOC of different control strategies during the driving test. It can be seen that the trajectory of SMPC is closest to DP.

The engine operating points of the different controllers are shown in Figure 10. The operating points of SMPC are the closest to those of DP and are concentrated in the efficient area. The operating points of I-DMPC are also denser than those of DMPC. The engine under DMPC operates over a more dispersed area, which results in its poorer performance.

Figure 10. Operating points of the engine during the driving test. The operating points of DP are the densest. The operating points of SMPC are closest to those of DP. DMPC operates over the largest area, which results in a higher cost.

Conclusion

To improve the performance of MPC energy management strategies of PHEVs, this paper develops a deterministic predictor for DMPC and a stochastic predictor for SMPC. For DMPC, the neural network-based predictor is first introduced and taken as the benchmark predictor. A novel deterministic predictor considering historical prediction errors is proposed, which relies on the assumption that the offset between the prediction and measurement at current instant is a good estimate of the offset in the short future. Based on the proposed deterministic predictor, a stochastic predictor that considers the distribution law of historical data at different locations is further proposed.

Simulation results show that the controller using the proposed deterministic predictor improves fuel economy by 2.98% compared with the benchmark. The controller using the proposed stochastic predictor improves fuel economy by 4.5% compared with the benchmark.

The two proposed strategies can be used as a high-level controller that provides control commands to underlying controllers such as the electronic control units of the engine and the powertrain. Future work will focus on two aspects: (1) generalizing the proposed control strategies to the eco-driving problem on arbitrary routes; (2) implementing the proposed control strategies in a truck and testing their performance in real traffic.

Footnotes

Handling Editor: Chenhui Liang

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Natural Science Foundation of China (U1937203), State Key Laboratory for Mechanical Behavior of Materials (1991DA105206), Young Talent Fund of Association for Science and Technology in Shaanxi, China (20240432), and Shaanxi Province Science and Technology Activities for Overseas Students Selected Funding Project (2023-007).