Abstract

Micromanufacturing raises interesting, yet demanding and complex, issues regarding modeling and data handling. Although combining both seems to be the key to process optimization, preferably in real time, their coexistence is not straightforward, mainly because of interoperability issues and computational times. This work is a first step toward a roadmap for the design and implementation of digital twins, where the digital twin is expected to offer both process optimization and tacit knowledge aggregation, the latter supporting digital twin reusability. This involves the cooperation of different mathematical tools, an automated information manipulator and a human operator. To present such a roadmap and illustrate the choice of a workflow, a classification of potentially useful tools is also presented herein. A method to connect the tools through a software platform is then discussed, so that a digital twin can be implemented. Finally, numerical examples are given to show that information reduction is needed in order to minimize the proposed data manipulation effort (in terms of both processing and storage).

Introduction

One of the emerging tendencies in digital twins has been the integration of physics models, a highly useful tool for micromanufacturing in particular. For instance, a Molecular Dynamics approach 1 has recently been used to provide a digital twin for laser micro processing with theoretical knowledge, while the scope of that digital twin was mainly quality assessment. That work discussed issues regarding the exact use of the constituent modules of a platform for real-time decision making. As such, a digital twin, as an umbrella term,2,3 could operate seamlessly on an ensemble of technologies and techniques, including theoretical (physics based) models. In fact, this statement also captures the most important challenge in digital twin design and implementation, namely integration. This challenge becomes more demanding since the real-time character of optimization has been adopted 4 as a far more convenient concept in the case of manufacturing processes. As shown below, the integration difficulties grow further when the physics of microprocesses is taken into account. To this end, this work focuses on the possible ways in which theoretical, physics based models can be integrated within a single digital twin for micromanufacturing. It is stated at the outset that there are three ways of achieving such an integration: calibration of the digital twin, integration of fast models in the decision making loops and, finally, decision making during design. The latter two constitute the focus of current literature, as shown in the next section. Numerical examples are also given for specific cases of decision making.

Regarding microprocessing itself, micromanufacturing has evolved rapidly in the last few years, with works on micro molds for polymer processing, 5 micro-deposition 6 and hybrid micromanufacturing, 7 micro-patterned structures, 8 nanostructuring 9 and even two-photon polymerization. 10 These applications indicate the complexity introduced at the micro level. The variables, especially the manufacturing-related ones such as process parameters and performance indicators, form a highly diversified set of quantities that are measured, modeled and utilized in different ways. This difficulty is amplified by the nature of micromanufacturing, which involves many local phenomena that affect the macro-behavior of the part and/or the process. In particular, for the processes mentioned above, quantities related to process parameters or performance indicators involve at least three types of modeling variables: those directly implicated in manufacturing processes, such as quality; those that are physics based and directly affected by the micro level, such as deformation; and those that are indirectly implicated, such as temperature, which is affected by the microstructure in a complicated way but directly affects deformation and thus quality. In any case, the current work attempts to address the digital twin design problem holistically, regardless of the process itself.

Furthermore, it is not only the engineering aspect that is relevant, which inevitably involves all Cyber and Physical aspects (monitoring and manufacturing, respectively) of the configurations and the manufacturing process; modeling also raises questions on what the most effective method may be. At this point, it is important to define two different categories of micromanufacturing encountered in the aforementioned literature: (1) processing of micro parts and (2) processing of micro-features. Although they are quite similar, their coexistence may imply a different use of modeling. Thus, to unify the two, the (α, λ) categorization is introduced, where α and λ denote the feature and the part characteristic dimension, respectively, as shown in Figure 1. This consideration is an ansatz that is expected to accelerate the design of the data space for the digital twin and, consequently, facilitate the choice of its monitoring and modeling modules.

(α, λ) Categorization of parts (process modeling consideration).

Information manipulation within a digital twin is not only about modeling but also about real-time decision making; the need for a digital twin in microprocessing is similar to that in conventional manufacturing. However, the repercussions of its use differ for one reason: size effects,11,12 defined as the effects of micro-characteristics on the macro-behavior. Therefore, the functionalities of a digital twin in micromanufacturing could be categorized according to the twin's relation with space, time and aspect (s-t-a). The aspect dimension is defined rather arbitrarily; however, there are three main categories of operations, and consequently of functionalities, for process digital twins: design of a process, modeling and operation during manufacturing. This three-fold s-t-a character constitutes a first attempt to conceptualize digital twin design, while strongly interacting with concepts such as requirements 13 and definition, systems architecture, 14 zero-defect strategy, 15 and sustainability. 16 This is performed through a non-quantified localization of each of the aforementioned aspects with respect to the complexity involved; the three aspects are not directly comparable to each other. Even though design may refer to either the part or the process, this work focuses on a specific lifecycle stage, namely manufacturing operation. Thus, three distinct aspects are considered: design (referring to both part and manufacturing), modeling (which will also be integrated in a convenient form in a digital twin) and manufacturing. Information flows among these aspects, and each has different characteristics that can be classified in space and in time. For instance, manufacturability features extend in space beyond functionality, since the features of the part have to address specific functionalities. The same holds in time, since the time scales involved in manufacturing phenomena are expected to exceed the time scales related to functionalities.

Such a classification would have immediate repercussions on the choice of the digital twin modules as well as on the architecture itself, since the data (and variables) to be taken into consideration have specific spatiotemporal characteristics.

In any case, despite this first step toward comprehending manufacturing complexity, the communication between the involved modules can only be described as chaotic. The amount of information collected from each transaction exacerbates the complexity and makes fast data processing and fast models highly relevant. Thus, defining the framework under which such a digital twin can be implemented needs to be driven by a review of the capabilities of the involved technologies. This review is given in the next section, which mainly addresses modeling and communication issues. The rest of the document is structured as follows: Section 3 illustrates how the modules and their interactions can support decision making and, on the same principle, gives a template architecture as a vision for future workflows; the next section follows with examples of how information should be manipulated and used to reduce effort. The conclusions are given in the last section.

State of the art

Physics modeling

Michopoulos et al. 17 present a seminal classification of modeling for micromanufacturing, which the current work adopts and enriches in terms of presentation, especially toward generalizing the categories of (both physics and data) models.

Meanwhile, the use of physics in digital twins is dictated by specific approaches 18 ; for instance, theoretical models add to the general knowledge, because besides pretraining 19 they can be used in the loop if they run fast enough 1 as well as adaptively. 20 The diversity in modeling is considerable: for example, the models of micromechanical cutting 21 differ from those of micro electron beam welding 22 and of Laser Chemical Vapor Deposition (LCVD), 23 but may share some similarities with those of microforming. 24 The diversity of mechanisms, phenomena and models is evident in aggregative studies. 25 The computational time of the models can be reduced in various ways: besides a posteriori methods, which are applied after many solutions of the physics models have been obtained, there are a priori methods, such as expressing solutions a priori in terms of series or addressing the problem at the solver phase (indicative for micromanufacturing 26 or more generic cases27,28).
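As an illustration of the a posteriori route, the sketch below applies snapshot proper orthogonal decomposition (POD) followed by interpolation of the modal coefficients, one common non-intrusive reduced-order technique; it is not taken from the cited works. The function `full_model` is a hypothetical stand-in for an expensive physics solve, and the energy threshold and parameter range are assumptions.

```python
import numpy as np

def pod_basis(snapshots, energy=0.9999):
    """Leading POD modes of a snapshot matrix (one full solution per column)."""
    U, s, _ = np.linalg.svd(snapshots, full_matrices=False)
    # Keep the smallest number of modes capturing the requested energy fraction.
    cumulative = np.cumsum(s**2) / np.sum(s**2)
    r = int(np.searchsorted(cumulative, energy)) + 1
    return U[:, :r]

x = np.linspace(0.0, 1.0, 200)

def full_model(p):
    # Hypothetical "expensive" physics solution depending on one parameter p.
    return np.sin(np.pi * x) / (1.0 + p) + p * np.sin(3.0 * np.pi * x)

# Offline (a posteriori): solve many full models, then extract a reduced basis.
p_train = np.linspace(0.0, 2.0, 40)
snapshots = np.column_stack([full_model(p) for p in p_train])
Phi = pod_basis(snapshots)
coeff_train = Phi.T @ snapshots          # modal coefficients, shape (r, n_train)

def rom(p):
    # Online: interpolate each modal coefficient in p; no full solve is needed.
    c = np.array([np.interp(p, p_train, row) for row in coeff_train])
    return Phi @ c

p_test = 0.73
rel_err = np.linalg.norm(rom(p_test) - full_model(p_test)) / np.linalg.norm(full_model(p_test))
```

Because the hypothetical field lies in a two-dimensional subspace, two modes suffice here; for a real solver the retained rank would be set by the energy threshold and the snapshot set.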

Figure A1 of Appendix A presents an overview of the modules, including not only physics-based but also data driven models. Additional methods used in (micro)manufacturing applications have also implemented concepts from quantum mechanics 29 and discrete elements. 30

As mentioned above, including physics in digital twins may be essential, especially since physics models can be used to derive explanations from data. The intuition behind these mathematical tools is hard to match, given nearly 300 years of experience in their use. The geometric interpretation of tracks in phase space, 31 the energy dissipation or even the stability can be very useful toward understanding and optimizing the process. This is not only about building intuition or running a Human-in-the-Loop optimization; it is about explaining the behavior to some kind of intelligence, physical or artificial. Thus, theoretical models can be very useful for integrating explainable machine learning, 32 whose primitive form can be successful feature extraction.

Special reference is made to higher order theories, or equivalently effective theories. An introduction to these can be found in the literature, 33 but their application in micromanufacturing, due to their “importance” and “appropriateness,” can be traced back to the micromechanical theories of Mindlin,34,35 Cosserat 36 and others. These works are of great importance for the current study, as they introduce minimum information models: size effects are included while only a minimum number of modeling constants is added. This importance is highlighted further below in the manuscript through a numerical example. This modeling approach could be further expanded, motivated either by geometry 37 or by microstructure.38,39 Other types of modeling are hierarchical modeling, which uses apparent properties derived from models at a lower scale; 40 large-scale models, which use high performance computing; and simplified modeling, in which reduced order models or elaborated models are used. 41

Data driven models

There is also a family of models that do not rely on physics principles: data driven models. There seem to be two categories: the first is mostly based on non-relational data and uses Machine Learning techniques, while the second mostly refers to time series and concerns Auto-Regressive Moving-Average models with exogenous inputs (ARMAX). Machine Learning models are undoubtedly very popular for quality assessment, as in the use of Neural Networks for quality assessment in micromachining, 42 or for optimization, as in the particle swarm optimization of process parameters in laser micromachining. 43 Their use can be categorized into several main groups: classification, clustering, association rules, feature selection 44 and regression. ARMAX models, on the other hand, can predict the evolution of an output for a given input, and can be used for modeling and control. A characteristic example is the adaptive control of micro ultrasonic machining. 45
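To make the time-series family concrete, the sketch below identifies an ARX model, the special case of ARMAX without the moving-average noise term, by ordinary least squares on synthetic data. The model orders, the "true" coefficients and the noise level are assumptions chosen for illustration; a full ARMAX fit would additionally require an iterative prediction-error or extended least-squares method, omitted here.

```python
import numpy as np

def fit_arx(y, u, na, nb):
    """Least-squares fit of y[k] = sum_i a_i y[k-i] + sum_j b_j u[k-j] + e[k]."""
    n0 = max(na, nb)
    # Each regressor row holds [y[k-1..k-na], u[k-1..k-nb]].
    rows = [np.concatenate([y[k - na:k][::-1], u[k - nb:k][::-1]]) for k in range(n0, len(y))]
    theta, *_ = np.linalg.lstsq(np.array(rows), y[n0:], rcond=None)
    return theta[:na], theta[na:]

rng = np.random.default_rng(0)
N = 2000
u = rng.standard_normal(N)                  # exogenous input, e.g. a process parameter
a_true, b_true = np.array([1.2, -0.5]), np.array([0.8])   # assumed, stable system
y = np.zeros(N)
for k in range(2, N):
    y[k] = a_true @ y[k - 2:k][::-1] + b_true @ u[k - 1:k][::-1] + 0.01 * rng.standard_normal()

a_hat, b_hat = fit_arx(y, u, na=2, nb=1)
```

The identified coefficients can then be used for one-step-ahead prediction of the output for a given input sequence, which is the property exploited for modeling and control.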

It is worth noting that the value of the information provided by sensors decreases over time, often due to environmental conditions 46 or aging. So the models have to take into account different sensors, or even networks of sensors (i.e. the “convergecast” under the framework of IIoT 47 ). To this end, feature selection algorithms are also relevant; they may be based on information or on any other metric, such as Analysis of Variance (ANOVA). 48 This may increase complexity, as it couples the sensors with the data processing; the prediction, training and classification capabilities of algorithms49,50 are the other side of the coin. Relevant methods in this case are Markov chains51,52 and Fuzzy Sets 53 (without excluding an association to physics), while for stochastic nonstationary signals 54 a whole list of metrics is used.
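As a minimal illustration of ANOVA-based feature selection, the sketch below computes the one-way ANOVA F statistic of each sensor channel across quality classes and ranks the channels. The three synthetic sensors (informative, moderately informative, pure noise) and the class labels are assumptions made for the example.

```python
import numpy as np

def anova_f(X, labels):
    """One-way ANOVA F statistic of each column of X across the classes in labels."""
    classes = np.unique(labels)
    n, k = X.shape[0], len(classes)
    grand_mean = X.mean(axis=0)
    ss_between = sum((labels == c).sum() * (X[labels == c].mean(axis=0) - grand_mean) ** 2
                     for c in classes)
    ss_within = sum(((X[labels == c] - X[labels == c].mean(axis=0)) ** 2).sum(axis=0)
                    for c in classes)
    return (ss_between / (k - 1)) / (ss_within / (n - k))

rng = np.random.default_rng(1)
labels = np.repeat([0, 1, 2], 100)                  # e.g. three quality classes
X = np.column_stack([
    labels * 1.0 + 0.3 * rng.standard_normal(300),  # strongly informative sensor
    labels * 0.5 + 1.0 * rng.standard_normal(300),  # moderately informative sensor
    rng.standard_normal(300),                       # uninformative sensor
])
F = anova_f(X, labels)
ranking = np.argsort(F)[::-1]                       # best sensor first
```

A sensor whose F value drops over time (for instance through aging) would move down the ranking, which is one way to track the decreasing value of its information.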

This classification of tools that are useful for digital twins and automated decision making is complemented by other methods, indicatively the Taguchi methodology (impact study), 55 Tikhonov regularization for inverse physics models56,57 and for optimization techniques. 58 In the context of digital twins, empirical (also known as surrogate) models can be used for fast decision making. They are integrated either as substitutes for theoretical models or as plain data driven models estimated from measurements.
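The sketch below shows Tikhonov regularization on a hypothetical ill-posed inverse problem: recovering a profile from a Gaussian-blurred (diffusion-like) measurement with noise. The forward operator, noise level and regularization weight `lam` are assumptions; the point is only that the regularized solution stays bounded where the nearly unregularized least-squares solution amplifies noise.

```python
import numpy as np

def tikhonov(A, b, lam):
    """Minimise ||A x - b||^2 + lam^2 ||x||^2 through the normal equations."""
    n = A.shape[1]
    return np.linalg.solve(A.T @ A + lam**2 * np.eye(n), A.T @ b)

# Hypothetical forward model: row-normalised Gaussian blur on n grid points.
n = 100
t = np.linspace(0.0, 1.0, n)
A = np.exp(-((t[:, None] - t[None, :]) ** 2) / (2 * 0.03**2))
A /= A.sum(axis=1, keepdims=True)

x_true = np.sin(2 * np.pi * t) + (t > 0.5)           # profile with a step
rng = np.random.default_rng(2)
b = A @ x_true + 1e-3 * rng.standard_normal(n)       # noisy measurement

x_naive = np.linalg.lstsq(A, b, rcond=None)[0]       # (nearly) unregularised inverse
x_reg = tikhonov(A, b, lam=1e-2)

err_naive = np.linalg.norm(x_naive - x_true)
err_reg = np.linalg.norm(x_reg - x_true)
```

In practice `lam` would be tuned, for example with an L-curve or discrepancy principle; the value here is only illustrative.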

Cyber physical systems (CPS) issues

Besides the software modules mentioned above, hardware aspects also need to be considered, for instance sensors, controllers, processors and communication equipment. 59 They all affect the computational efficiency of the overall system (digital twin) in terms of quality assessment, control signal estimation, control signal enforcement and response to what-if scenarios. 1 In particular, the communications include, besides distributed or parallel systems, technologies such as the Internet of Things and Machine-to-Machine Communications (IoT-M2M), as well as the asynchronous and synchronous protocols used within a microcontroller based processing architecture. Additionally, in micromanufacturing applications, 60 the actuators themselves are coupled with regulators (implying controllers) and can control displacements on the order of microns. The synchronization of the macro and micro levels is often relevant, in terms of control and fine tuning. This increases the amount of information needed, not only because of control or inter-microprocess communications, 61 but also because of the microfeatures or microparts involved in Computer Aided Process Planning. 62 Visualization issues 63 have also been raised in the literature. All of these add to the complexity of the computational system, even requiring a cloud infrastructure in cases where local intelligence has been exhausted by the requirements of microprocessing, either in processing or in design. 64 The diversity of the phenomena, and hence of the sensors needed, 5 also contributes to the increase in complexity.

To conclude, both Process and Machine Control 65 require a large amount of aggregated data to achieve the desired outcome. This is not the end, however. Other families of applications engage micro manipulation, involving Microelectromechanical systems (MEMS), nanoscale assembly and genetic engineering, 66 where the hierarchy of sensor data results in highly complex schemas and architectures.

Toward a generic framework

Given the aforementioned variety of processing tools, one can devise a generic framework with which a digital twin could align. Namely, three basic agents are involved, each in a major activity:

Tools – data elaboration

These tools are integrated toward automated decision making. This term describes activities such as responding to what-if scenarios, performing diagnosis and prognosis, conducting optimization, estimating state/quality and designing control signals.

Processes – microprocessing

None of the functionalities mentioned above can be performed without monitoring and control equipment. Thus, communication protocols, such as those used in IoT, are needed here. Data storage is of course also required, but it is not considered in the current diagram (Figure 2 summarizes the framework).

The main three-agent framework based on PDI optimization. P: prediction; D: diagnosis; I: intuition.

Human operators – operating

Humans are kept within the loop. Interpretation of analytics, enforcement of strategy, evaluation of performance and decision making based on experience are functionalities for which a human has to intervene. This can be considered to be the social aspect of a digital twin.

Figure 2 illustrates the framework, bonding together the three modules that undertake the functionalities originating from the corresponding CPS entities: (i) the optimization module, based on prediction, diagnosis and intuition; (ii) interfaces for operating manufacturing entities, linking to other levels of decision making; and (iii) manufacturing itself. Considering this triplet can lead to a specific sequence of steps that helps build a digital twin, since a specific roadmap for designing and implementing a digital twin is still required. It would be good to take into account all the aspects related to conceptual modeling, 67 such as Application, Middleware, Networking and Object. The diagram of Figure 3 illustrates such a roadmap, indicating where the micro-level effect intervenes and adds complexity to data manipulation.

An iterative modular implementation roadmap for digital twin design and implementation in micromanufacturing. The micro level affects each of the stages.

The sequence depicted in Figure 3 utilizes the technologies described in the previous section. Six steps interact with each other iteratively and are used to build a dedicated digital twin. The first two steps concern selecting the appropriate technologies and techniques from the variety of modeling techniques listed indicatively in Section 2. The next two steps define workflows, allowing these technologies to exchange information during the design and operation phases of the digital twin. Finally, two extra steps help guarantee the performance and the sustainability of the digital twin. It is a fact that each one

More specifically, the first step is to list all the functionalities and capabilities (the pool) that are part of the case. Quality assessment (QA), Control (CT) and Knowledge Base (KB) are the functionalities; Physics models (PM) and Machine Learning (ML) are the modules that can be used; DSS refers to Decision Support Systems (for example, heuristics or the Analytic Hierarchy Process); and UH, standing for Uncertainty Handling, is an extra module that can be used. The Process Parameters and Key Performance Indicators (PPs and KPIs, respectively) also have to be defined.

The second step reduces complexity; physics models and ANOVA could be used to this end. Then sensorization is considered, followed by the definition of workflows, whose ultimate goal is to achieve information exchange among the tools selected in step 1 so that the functionalities are implemented. Finally, the system has to be trained, especially in the case of data driven models, and the performance and maintainability of the digital twin itself have to be taken into consideration.
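The iterative character of the six-step roadmap can be sketched as a simple loop in which each step either passes or sends the design back to an earlier step. This is a hypothetical encoding for illustration only: the step identifiers paraphrase the text, the pool uses the abbreviations QA, CT, KB, PM, ML, DSS and UH, and the check functions, PP/KPI names and sensor name are invented placeholders.

```python
from dataclasses import dataclass, field

# Step names paraphrase the six roadmap steps; everything else here is hypothetical.
STEPS = ("pool", "complexity_reduction", "sensorization",
         "workflows", "training", "performance_maintainability")

@dataclass
class DesignState:
    functionalities: set = field(default_factory=lambda: {"QA", "CT", "KB"})
    modules: set = field(default_factory=lambda: {"PM", "ML", "DSS", "UH"})
    pps: list = field(default_factory=list)
    kpis: list = field(default_factory=list)
    sensors: list = field(default_factory=list)

def run_roadmap(state, checks, max_visits=30):
    """Visit the steps in order; a check returns None when satisfied, or the
    name of an earlier step to revisit (the iterative character of the roadmap)."""
    log, i = [], 0
    while i < len(STEPS) and len(log) < max_visits:
        back_to = checks[STEPS[i]](state)
        log.append((STEPS[i], back_to is None))
        i = i + 1 if back_to is None else STEPS.index(back_to)
    return log

def define_pool(s):
    # Step 1: PPs and KPIs must be defined (hypothetical names).
    s.pps = s.pps or ["laser power", "scan speed"]
    s.kpis = s.kpis or ["feature depth"]
    return None

def choose_sensors(s):
    # Step 3: the first pass adds a sensor and sends the design back to step 2.
    if not s.sensors:
        s.sensors.append("displacement")
        return "complexity_reduction"
    return None

def accept(s):
    return None

checks = {"pool": define_pool, "complexity_reduction": accept,
          "sensorization": choose_sensors, "workflows": accept,
          "training": accept, "performance_maintainability": accept}
log = run_roadmap(DesignState(), checks)
```

Here the log records one return from sensorization to complexity reduction before all six steps are satisfied; in a real design, each check would wrap the corresponding modeling, ANOVA, sensor or training assessment.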

In each of the aforementioned stages, complexity is introduced by the micro character of the (α, λ) part. In fact, hierarchical modeling could be considered, 40 while the mechanical properties themselves vary considerably. 68 Ben-Amoz’s 69 work is an illustrative example of how additional properties may be needed in the presence of size effects. Extra phenomena also affect the complexity, 70 as do extra variables, 71 while coupling between phenomena may also occur.72,73

Adopting the CPS character of the entities encountered in this procedure demands the definition of the cognition and configuration layers. The first step corresponds one-to-one with the PDI optimization functionalities, and hence the techniques/tools chosen for integration have to be extremely well defined in terms of functionalities. The configuration aspect, on the other hand, is automatically addressed through the workflow definition (step 4 of the roadmap of Figure 3). Thus, only the communications remain to be taken into consideration. To this end, step 3 of the roadmap is also meant to address the networking and interfacing required for the digital twin to operate seamlessly. Extra techniques, such as embedding fingerprints, may be used for information reduction. 74 In any case, the machine requirements 75 have to be taken into consideration, while the Software as a Service (SaaS) 76 character of a digital twin is probably the most efficient approach for cases such as Cognitive Control. 77

The choice of the aforementioned PPs and KPIs is not straightforward. The overall framework, however, can be highly helpful toward this choice and, eventually, boost the modeling procedure. This can be further facilitated by integrating the concept of Key Variables. 78 This implies that the set of PPs and KPIs is expanded with the variables that affect the manufacturing attributes (cost, quality, time, energy efficiency, etc.), depending on the focus and level of detail of the modeling (or DT). Given the appropriate modeling technique (i.e. higher-order theories based on effective fields, or hierarchical modeling), the effort/efficiency ratio then differs depending on which key variables can be defined. Such an attempt is sketched in Section 4.2.

Paradigms of techniques integration

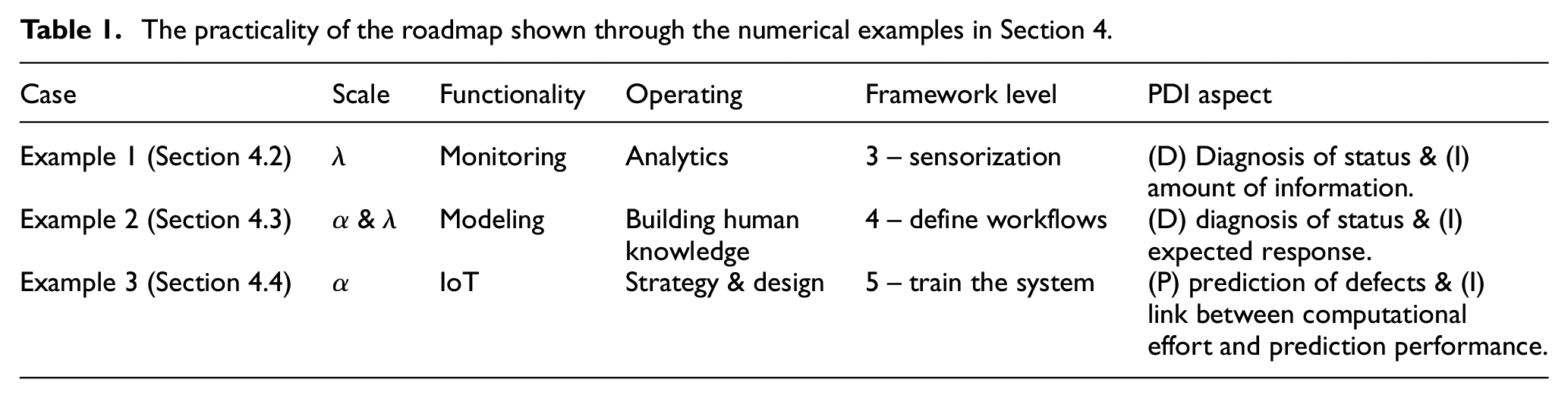

With the framework set, the following case studies have been elaborated through dedicated simulations. They illustrate how modeling techniques can be utilized for decision making within a digital twin. Table 1 summarizes the applicability area of these case studies within the presented framework.

The practicality of the roadmap, as shown through the numerical examples in Section 4.

Models adoption and experimental verification

The usability of the effective theories elaborated in the numerical examples of the next subsections cannot be presumed without experimental verification. To this end, their added value is demonstrated here. With respect to mechanical behavior, the experimental results of Afazov et al. 79 are adopted. There, the theoretically predicted cutting forces overestimate the experimental values in all cases, while attempts to utilize theories that integrate size effects aggravate this overestimation. However, in the context of Mindlin’s 34 approach with extra energy terms, different modeling techniques can be adopted for the constitutive equations. 80 In this work, this model is further extended to integrate the gradient of the strain itself, resulting in the heuristic formula of equation (1), where σ and ε are the stress and the strain, respectively, and the dotted terms relate to the strain rate.

Using the constants for copper, 80 ignoring the temperature term and adopting a conservative value g = 0.05 m,11,35 this formula yields lower values than the aforementioned theoretical models. More specifically, for a strain of 0.5 and a strain rate of 1.5 s⁻¹, the reduction is 2.5% with respect to the classical Johnson-Cook model, moving closer to the experimental value. Similar approaches can also be found in other works.81,82 The micro end milling process could also benefit from such theories. 83
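To make the comparison baseline concrete, the sketch below evaluates the classical Johnson-Cook flow stress without the thermal softening term, σ = (A + B εⁿ)(1 + C ln(ε̇/ε̇₀)), at the strain and strain rate quoted above, and applies the reported 2.5% reduction. The constants are assumed illustrative values for OFHC copper as commonly tabulated from Johnson and Cook (1985), with ε̇₀ = 1 s⁻¹; reference 80 may use different values, and the heuristic formula of equation (1) itself is not reproduced here.

```python
import math

def johnson_cook(strain, strain_rate, A, B, n, C, ref_rate=1.0):
    """Johnson-Cook flow stress with the thermal softening term ignored (T* = 0)."""
    return (A + B * strain**n) * (1.0 + C * math.log(strain_rate / ref_rate))

# Assumed OFHC copper constants (A, B in MPa); not necessarily those of reference 80.
sigma_jc = johnson_cook(0.5, 1.5, A=90.0, B=292.0, n=0.31, C=0.025)
# The 2.5 % reduction reported in the text for the size-effect formula.
sigma_reduced = sigma_jc * (1.0 - 0.025)
```

With these assumed constants the baseline is roughly 329 MPa, so a 2.5% reduction corresponds to about 8 MPa.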

The usability of such theories is also apparent in thermal phenomena. Besides the correction to the heat equation itself, 84 the melting front propagation is also prone to size effects, 85 resulting in various profiles. The evolution of the melt pool in terms of mean values could also be altered this way, turning the Stefan condition 86 into a new one, while thermoelastoplasticity can also change according to size effects. 87

Sensorization and sensor value

The Pi theorem19,88 may prove extremely useful when choosing sensors, as suggested by the literature. It can be used to eliminate excessive or redundant information related to the status of the part during microprocessing. The concept of information may be considered its statistical counterpart, while classification capability has to be taken into account, resulting in some sort of iterative design. A simple example from discrete physics illustrates the amount of information involved. Minimum information models are used here, without loss of generality, and a static case is considered to further simplify the illustration. Specifically, a 2D fourth order differential equation is considered. This paradigm is in line with the framework of the current work, since intuition is built through human-in-the-loop optimization of sensors, and at the same time both α and λ are used, because the digital twin in this case includes both parameter identification and defect detection.
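As a small illustration of the (Buckingham) Pi theorem, the sketch below counts the independent dimensionless groups of a hypothetical 1D higher-order bar described by a displacement u, a length L, a force F, a modulus E and an internal length g, and checks a candidate set of groups. The variable set is an assumption; the number of groups is the number of variables minus the rank of the dimension matrix.

```python
import numpy as np

# Dimension matrix: rows are base dimensions (M, L, T), columns the variables
# (u, L, F, E, g) of a hypothetical higher-order bar.
D = np.array([
    [0, 0, 1, 1, 0],     # mass
    [1, 1, 1, -1, 1],    # length
    [0, 0, -2, -2, 0],   # time
])
n_groups = D.shape[1] - np.linalg.matrix_rank(D)    # Pi theorem: n - rank

# Candidate groups as exponent vectors over (u, L, F, E, g); D @ k must vanish.
candidates = {
    "u/L": [1, -1, 0, 0, 0],
    "g/L": [0, -1, 0, 0, 1],          # the group that carries the size effect
    "F/(E L^2)": [0, -2, 1, -1, 0],
}
dimensionless = {name: not np.any(D @ np.array(k)) for name, k in candidates.items()}
```

Three groups suffice, and only g/L involves the internal length, which suggests that, in this assumed setting, a size effect becomes observable only when the sensors resolve a dependence on g/L, a ratio that links naturally to the (α, λ) categorization.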

In this example (Figure 4), the selection of sensors is dictated automatically by the physics, since:

Red nodes denote the inner state of the part and can be calculated from boundary nodes.

Green nodes can be read by sensors.

Orange nodes require an extra set of sensors.

Black nodes have only a local effect and can be disregarded for the time being.

White nodes are not relevant in this case.

Discrete physics model of a 2D phenomenon described by a higher order model. Different colors in nodes denote different stencil application and thus different potential information acquired by sensors.

A direct conclusion of this procedure is that micro-characteristics may double the number of sensors needed to obtain a full view of the status of a micro-related feature of a part in the (α, λ) sense.
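One way to see where the doubling comes from is to compare stencil reach. The sketch below builds the 5-point Laplacian (second-order operator) and, by applying it twice, the 13-point biharmonic stencil (fourth-order operator) on a uniform grid. The assumption that the fourth-order model of Figure 4 is biharmonic-like is made for illustration only: the fourth-order stencil reaches two nodes from its centre instead of one, so the band of nodes coupled to the boundary doubles in width.

```python
import numpy as np

def conv2_full(a, b):
    """Full 2D convolution of two small stencils (no external dependencies)."""
    out = np.zeros((a.shape[0] + b.shape[0] - 1, a.shape[1] + b.shape[1] - 1))
    for i in range(a.shape[0]):
        for j in range(a.shape[1]):
            out[i:i + b.shape[0], j:j + b.shape[1]] += a[i, j] * b
    return out

# 5-point Laplacian, unit grid spacing (second-order operator).
lap = np.array([[0, 1, 0],
                [1, -4, 1],
                [0, 1, 0]], dtype=float)
# Biharmonic stencil as the Laplacian applied twice (fourth-order operator).
bih = conv2_full(lap, lap)

def reach(stencil):
    """Largest grid distance (in nodes) from the centre touched by the stencil."""
    c = stencil.shape[0] // 2
    idx = np.argwhere(stencil != 0)
    return int(np.max(np.abs(idx - c)))
```

The biharmonic stencil has weight 20 at the centre, -8 on the four axial neighbours, 2 on the diagonals and 1 two nodes away along the axes.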

Numerical example for sensor value

To show what happens when the digital twin is used to identify part properties, a 1D model is used. This may be tricky, as in many cases, such as d’Alembert’s solution of the wave equation, the 1D solution is not indicative of reality. In this case, however, one dimension is sufficient to illustrate the point. The minimum information model is used, with one parameter for the macro-behavior and one to introduce size effects.

Two sensors are considered: one measuring the quantity of interest (i.e. displacement) and one measuring the second spatial derivative (which may correspond to stress or an induced electric field). Both sensors are positioned at the middle of the part, and their values are shown in the diagrams of Figure 5 as functions of the two aforementioned parameters.

Displacement (top) and stress (bottom) indicator dependence on micro and macro level part properties.

The equipotential curves in the two diagrams are also distinct. Since they are curves of different order, no error is expected in the classification (or regression) of the property.

It therefore seems reasonable to assume that two sensors suffice to identify the property.
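The identification argument can be reproduced with a sketch under stated assumptions. The source does not give its 1D equation, so a gradient-elasticity-type model E(u'' - g²u'''') + f = 0 is assumed, with a uniform load f and u = u'' = 0 at both ends, solved by a sine series. E is the macro parameter and g the size-effect parameter; both grids are hypothetical. The two mid-span sensors (displacement and second derivative) are computed, and (E, g) is recovered by a grid search.

```python
import numpy as np

def midpoint_sensors(E, g, f=1.0, length=1.0, n_terms=4000):
    """Mid-span displacement and second derivative u'' for the assumed model
    E (u'' - g^2 u'''') + f = 0 with u = u'' = 0 at both ends (sine series)."""
    k = np.arange(1, 2 * n_terms, 2)          # odd harmonics only (uniform load)
    a = k * np.pi / length
    f_k = 4.0 * f / (k * np.pi)               # sine-series coefficients of the load
    b_k = f_k / (E * (a**2 + g**2 * a**4))    # modal displacement amplitudes
    s = np.sin(k * np.pi / 2.0)               # mode shapes evaluated at x = L/2
    return np.sum(b_k * s), np.sum(-a**2 * b_k * s)

E_grid = np.linspace(1.0, 3.0, 21)            # hypothetical macro stiffness values
g_grid = np.linspace(0.0, 0.2, 21)            # hypothetical internal lengths (x length)
table = {(E, g): midpoint_sensors(E, g) for E in E_grid for g in g_grid}

def identify(reading):
    """Grid point whose two sensor values best match the reading (relative misfit)."""
    def misfit(pair):
        u, c = table[pair]
        return ((u - reading[0]) / reading[0]) ** 2 + ((c - reading[1]) / reading[1]) ** 2
    return min(table, key=misfit)

truth = (E_grid[7], g_grid[12])
estimate = identify(midpoint_sensors(*truth))
```

For g = 0 the model reduces to the classical bar, with mid-span values fL²/(8E) and -f/E. Both readings scale as 1/E, so their ratio depends on g alone; under this assumed model that is why two sensors can separate the macro and size-effect parameters.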

The second example considers defect detection, simulated as a spatially limited variation of the material parameters, placed asymmetrically on the part, with both variations occurring at the same point. The corresponding results are given in Figure 6.

Displacement (top) and stress (bottom) indicator dependence on micro and macro level defects.

In this case, the equipotential curves resemble each other. Note also that the sensor positions have been selected to maximize the variability of the measurements. It can safely be deduced that classifying the defect is quite complex, so more information is needed, derived from a dynamic response. Time-frequency representations12,89,90 could be used to this end; however, this is beyond the scope of the current work.

Physics modeling usability through case studies

Speeding up physics models, as mentioned earlier, is extremely interesting for supporting the exchange of information between physics and data driven models. In the opposite case, however, where a physics model is not (or cannot be) accelerated, a library of results (with supportive uncertainty generation) could be used instead. Its first use is pretraining, but it could also serve as a pool of solutions when an a posteriori Reduced Order Model (ROM) is considered.

Numerical example for information embedding in machine learning


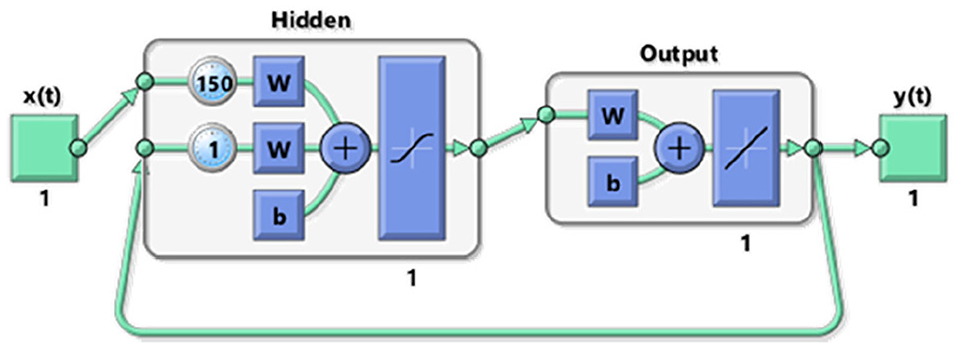

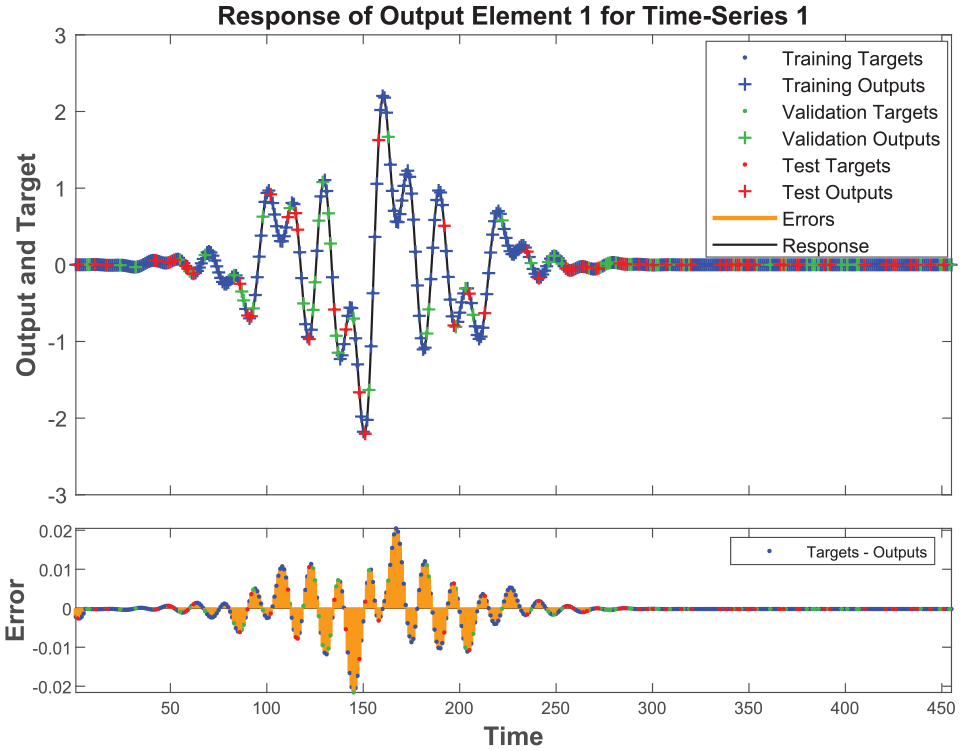

The intuitiveness of physics can nevertheless be embedded in data driven models. In the following example, dispersion is predicted through a machine learning system. To train the system, the signal w(t) of equation (2) has been utilized. The input is the signal itself, set as an initial condition with τ = 0. A recurrent Neural Network has then been used to capture the output, which is the same signal with (a) uniform delay and (b) dispersion. For these two cases, the structure of the neural networks is given in Figures 7 and 9, respectively, and their prediction performance can be seen in Figures 8 and 10.

Structure of the recurrent neural network identifying uniform dispersion pattern.

Error in the response of the recurrent neural network identifying uniform dispersion pattern.

The delay in the input is used to capture the group velocity in discrete time. However, to achieve an error below 1% in the second case, poles must be added to the system, reflecting the dispersive output (a different delay per frequency). Figures 7 and 8 represent the linear, dispersion-less propagation, while Figures 9 and 10 illustrate the dispersive propagation.
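Why a pole is needed can be seen from the group delay of the corresponding discrete-time filters. The sketch below is an illustration, not a reconstruction of the networks in Figures 7 and 9: it compares a pure delay z^-d, whose group delay is constant, with the same delay cascaded with a first-order allpass section (p + z^-1)/(1 + p z^-1), whose pole at z = -p makes the delay frequency dependent, i.e., dispersive. The delay d = 5 and pole parameter p = 0.5 are arbitrary choices.

```python
import numpy as np


def group_delay(b, a, omega):
    """Numerical group delay -d(phase)/d(omega) of H(z) = B(z)/A(z).

    b, a: coefficient lists in increasing powers of z^-1.
    """
    z_inv = np.exp(-1j * omega)
    num = sum(bk * z_inv**k for k, bk in enumerate(b))
    den = sum(ak * z_inv**k for k, ak in enumerate(a))
    phase = np.unwrap(np.angle(num / den))
    return -np.gradient(phase, omega, edge_order=2)


omega = np.linspace(0.01, np.pi - 0.01, 2001)

# (a) Pure delay of d samples: H(z) = z^-d, no poles.
d = 5
gd_delay = group_delay([0.0] * d + [1.0], [1.0], omega)

# (b) Same delay cascaded with a first-order allpass section
#     (p + z^-1) / (1 + p z^-1): one pole at z = -p.
p = 0.5
b_ap = np.convolve([0.0] * d + [1.0], [p, 1.0])
a_ap = [1.0, p]
gd_disp = group_delay(b_ap, a_ap, omega)

# Closed-form group delay of the cascade, for comparison.
gd_exact = d + (1 - p**2) / (1 + 2 * p * np.cos(omega) + p**2)

print(f"pure delay:  {gd_delay.min():.3f} .. {gd_delay.max():.3f} samples")
print(f"with a pole: {gd_disp.min():.3f} .. {gd_disp.max():.3f} samples")
```

The pure delay stays at 5 samples at every frequency, whereas the pole spreads the delay from about 5.3 samples at low frequency to about 8 samples near Nyquist.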

Structure of the recurrent neural network identifying irregular dispersion pattern.

Error in the response of the recurrent neural network identifying irregular dispersion pattern.
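The same effect can be reproduced with linear least-squares system identification, used here as a simplified stand-in for the recurrent networks: a model without poles (FIR) reproduces a pure delay exactly but not a dispersive response, while a single fed-back output term (one pole) makes the fit exact. The white-noise excitation, the allpass coefficient 0.5 and the 4-tap FIR length are assumptions of this sketch, not settings taken from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 2000
x = rng.standard_normal(n)  # white-noise excitation (assumed, not w(t))


def allpass(x, p):
    """First-order allpass y[k] = p*x[k] + x[k-1] - p*y[k-1] (pole at z = -p)."""
    y = np.zeros_like(x)
    for k in range(len(x)):
        x_prev = x[k - 1] if k > 0 else 0.0
        y_prev = y[k - 1] if k > 0 else 0.0
        y[k] = p * x[k] + x_prev - p * y_prev
    return y


def lagged(v, lags, start):
    """Matrix whose columns are v[k - lag] for k = start .. len(v)-1."""
    return np.column_stack([v[start - lag: len(v) - lag] for lag in lags])


def fit_error(y, regressors, start):
    """Least-squares fit of y[start:] and its relative residual norm."""
    target = y[start:]
    coef, *_ = np.linalg.lstsq(regressors, target, rcond=None)
    resid = target - regressors @ coef
    return coef, np.linalg.norm(resid) / np.linalg.norm(target)


start = 4
fir_regressors = lagged(x, range(4), start)  # taps x[k] .. x[k-3]

# Case (a): uniform delay of 2 samples, fitted without poles.
y_delay = np.concatenate(([0.0, 0.0], x[:-2]))
_, err_delay = fit_error(y_delay, fir_regressors, start)

# Case (b): dispersive output, first without poles (FIR) ...
y_disp = allpass(x, 0.5)
_, err_fir = fit_error(y_disp, fir_regressors, start)

# ... then with one pole: regressors x[k], x[k-1] and the fed-back y[k-1].
A_arx = np.column_stack([lagged(x, [0, 1], start), lagged(y_disp, [1], start)])
coef_arx, err_arx = fit_error(y_disp, A_arx, start)

print(f"delay, FIR (no poles):      relative error {err_delay:.1e}")
print(f"dispersion, FIR (no poles): relative error {err_fir:.3f}")
print(f"dispersion, one pole:       relative error {err_arx:.1e}, coef {coef_arx.round(3)}")
```

With these settings the pole-free model leaves roughly a 10% residual on the dispersive data, far above the 1% target, while the one-pole model recovers the coefficients (0.5, 1, -0.5) exactly.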

Exchange of data between physics and data models

The information manipulation throughout the workflow of a digital twin realization may be quite complex. Pretraining and calibration are only the first components of this manipulation. Other meta-functions include visualization of data through Augmented Reality (AR), intuition building and alarm management (KPIs), process control with constraints (for instance, laser power cannot be negative), quality assessment, and finally, aspects arising from implementation issues and protocols.
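As an illustration of process control with constraints, the sketch below uses projected gradient descent: after every update, the laser power setpoint is clipped back into the admissible interval [0, p_max], so a negative power can never be commanded. The linear power-to-temperature gain k, the step size and the limits are hypothetical values, not process data.

```python
def projected_power(t_ref, k=2.0, eta=0.1, p_max=50.0, p0=10.0, steps=200):
    """Projected gradient descent on the cost (k*p - t_ref)**2 subject to
    0 <= p <= p_max (laser power cannot be negative or exceed its limit).

    k is a hypothetical linear gain from laser power to temperature rise.
    """
    p = p0
    for _ in range(steps):
        grad = 2.0 * k * (k * p - t_ref)           # derivative of the quadratic cost
        p = min(max(p - eta * grad, 0.0), p_max)   # project onto [0, p_max]
    return p


print(projected_power(40.0))   # reachable target: converges to t_ref / k
print(projected_power(-10.0))  # unconstrained optimum is negative: held at 0
print(projected_power(200.0))  # target beyond the power limit: held at p_max
```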

In every case, therefore, the workflow should be defined based on the predetermined functionalities of the digital twin. Figure 11 presents two different workflows, (a) and (b).

Two different workflows in a platform acting as a digital twin: (a) simplistic version and (b) elaborated version. The contribution of the various modules (physics and empirical models) to the outcome is also shown.

Workflow (a) is much more straightforward, but its functionalities may be more restricted, and the information embedded from the various models does not form a holistic representation. In addition, the amount of information increases even for the minimum-information models, as shown in Table 2.

The number of constants as an information metric: its value depends on the modeling case.

Only linear mechanical behavior is discussed.
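To illustrate how the number of constants grows with the modeling case in linear elasticity, the sketch below counts the independent components of a general stiffness tensor C_ijkl by enumerating its symmetry classes, and lists the standard textbook counts for common material symmetries. These values are general results of linear elasticity and are not meant to reproduce the entries of Table 2.

```python
from itertools import product


def independent_stiffness_constants():
    """Count independent components of a 3D fourth-order stiffness tensor
    C_ijkl with minor symmetries (ij, kl) and major symmetry (ij <-> kl)."""
    classes = set()
    for i, j, k, l in product(range(3), repeat=4):
        pair1 = tuple(sorted((i, j)))  # minor symmetry: C_ijkl = C_jikl
        pair2 = tuple(sorted((k, l)))  # minor symmetry: C_ijkl = C_ijlk
        classes.add(tuple(sorted((pair1, pair2))))  # major symmetry
    return len(classes)


# Standard results of linear elasticity: material symmetry -> constants.
constants = {
    "isotropic": 2,
    "cubic": 3,
    "transversely isotropic": 5,
    "orthotropic": 9,
    "monoclinic": 13,
    "triclinic (fully anisotropic)": independent_stiffness_constants(),
}

for material, count in constants.items():
    print(f"{material:30s} {count:3d} constants")
```

Read as an information metric, the jump from 2 to 21 constants shows how quickly the data needed to calibrate even a linear model grows once anisotropy, common in micro parts, has to be represented.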

Numerical example for data aggregation

In this section, the need for information manipulation and data dimensionality reduction is demonstrated. To begin with, micro additive manufacturing is considered, assuming full utilization of the acquired data. To this end, a set of multispectral plenoptic cameras is assumed to cover the whole area of the part (Figure 12), with φ being the area where boundary conditions are applied and q the area where body forces are exerted (e.g., in Electron Beam Machining, EBM). In any case, the boundary nodes for the temperature are needed. The planes denoted (i) and (ii) lie along the focal planes of the cameras.

Indicative experimental setup for monitoring in micromanufacturing: φ is the area where boundary conditions are applied, q denotes the area with the respective body forces and (α,λ) are the micro-feature and micro-part characteristic dimensions, following the notation of Figure 1. Thermal cameras are considered within the scope of micro additive manufacturing.

The amount of data produced by one camera per second can be calculated using equation (3), which gives the data rate $R$ in bits per second:

$$R = N_{xy}^{2}\, N_z\, B\, D\, A\, f_s$$

where $N_{xy}$ is the number of pixels per unit length, $N_z$ the number of focal planes, $B$ the number of wavelength bands, $D$ the bit depth, $A$ the area of the part and $f_s$ the sampling rate.
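Equation (3) is straightforward to evaluate. The sketch below assumes the product form R = N_xy² N_z B D A f_s, treating N_xy as a linear pixel density so that N_xy² A pixels cover the part. With the conservative parameter values listed in the next paragraph it prints 8.44 GB/s, consistent with the figure of about 8 GB/s quoted there.

```python
def camera_data_rate(n_xy, n_z, bands, bit_depth, area, f_s):
    """Raw data rate of one camera, in bytes per second.

    n_xy:      pixels per micron (linear density, so n_xy**2 per square micron)
    n_z:       number of focal planes
    bands:     number of wavelength bands
    bit_depth: bits per measurement
    area:      part area in square microns
    f_s:       sampling rate in Hz
    """
    pixels_per_frame = n_xy**2 * area                   # spatial samples per frame
    bits_per_frame = pixels_per_frame * n_z * bands * bit_depth
    return bits_per_frame * f_s / 8                     # bits -> bytes


rate = camera_data_rate(n_xy=0.75, n_z=1, bands=6, bit_depth=8,
                        area=250_000, f_s=10_000)
print(f"{rate / 1e9:.2f} GB/s")
```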

Given the behavior of the material in Figure 13 and the relevant literature,91,92 the following conservative values are assumed for these parameters:

one focal plane

8 bits per measurement

0.75 points per micron, originating from the Gauss points needed to estimate the integral of the Gaussian laser profile in micro processing; as shown in Figure 13, this value does not depend on the wavelength used

six bands (red/green/blue and near-, mid- and short-wave infrared): three for part visualization and three for quality assessment and temperature estimation

a part area of 250,000 μm² and a sampling rate of 10 kHz.

Nonlinear dispersion in the heat equation (involving the real part of the wavelength).

Even with these conservative values, the total amount of data reaches about 8 GB/s, which implies a strong need for information reduction techniques. Such techniques could potentially take advantage of:

the smoothness of the profile of the temperature

the evolution of the thermal field and the fact that the field may be stationary away from the laser source.
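Both properties can be exploited in a few lines. In the first part of the sketch below, a smooth, hypothetical Gaussian temperature profile is stored through its lowest Fourier coefficients (a truncated FFT, one possible choice among many smooth bases); in the second, only the pixels whose temperature changed by more than a threshold between two frames are transmitted. The grid sizes, the 0.1 K threshold and the hot-spot location are assumptions for illustration, not measured data.

```python
import numpy as np

# (1) Smoothness: a Gaussian-like temperature profile needs few Fourier modes.
n = 1024
x = np.linspace(0.0, 1.0, n, endpoint=False)
profile = 300.0 + 1200.0 * np.exp(-0.5 * ((x - 0.5) / 0.05) ** 2)  # hypothetical, K

spectrum = np.fft.rfft(profile)
keep = 32                                   # retained complex coefficients
truncated = np.zeros_like(spectrum)
truncated[:keep] = spectrum[:keep]
recon = np.fft.irfft(truncated, n)
err_smooth = np.max(np.abs(recon - profile)) / np.max(profile)
ratio_smooth = n / (2 * keep)               # stored reals vs. original samples

# (2) Stationarity: away from the laser source, consecutive frames barely change.
rng = np.random.default_rng(1)
frame0 = np.full((64, 64), 300.0)
frame1 = frame0 + 1e-3 * rng.standard_normal(frame0.shape)  # sensor noise only
frame1[28:36, 28:36] += 50.0                # hypothetical hot spot under the source
changed = np.abs(frame1 - frame0) > 0.1     # update threshold in K (assumed)

print(f"Fourier truncation: {ratio_smooth:.0f}x fewer values, "
      f"max relative error {err_smooth:.1e}")
print(f"Frame differencing: {changed.sum()} of {frame0.size} pixels transmitted")
```

In a real setting the retained basis size and the update threshold would have to be tuned against the accuracy required by the downstream models.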

Conclusions

It is crucial for micromanufacturing automation to be able to use digital twins. However, besides the complexity of the scenarios involved, the micro level affects each one of the modules used and raises the complexity to unmanageable levels. It has been shown, though, that collaboration between the so-called black-box approaches and physics may solve the information manipulation problem. To this end, the tools have to be formally described in terms of possibilities and capabilities, so that existing mathematical tools can operate together and compose a digital platform that can act as a digital twin. In any case, empirical models appear able to handle uncertainty more seamlessly than theoretical models. The latter, however, can be used either as reference models93 or as knowledge extractors.19

More concretely, theoretical models can clearly be integrated in digital twin frameworks within the concept of decision making. The sensor-value example is closely related to the added value of models in the design phase, while the use of surrogate models (neural networks) that imitate the physics models, and thereby speed them up indirectly, is highly useful for integrating fast physics models. Thus, PDI optimization is feasible through this procedure, and the implementation steps of Figure 3 are readily implementable.

Also, as indicated in the numerical example concerning the exchange of data, the model adopted for the desired level of detail will help the designer optimize the design of the digital twin from a CPS point of view, by selecting the necessary and sufficient sensors.

Finally, the (α,λ) modeling of micromanufacturing seems highly useful, since it can be used to specify the data requirements and link them to explicit theoretical models through a dedicated elaboration.

Micromanufacturing-related decision making therefore seems feasible within the next few years, integrating metrology toward data models, real-time optimization techniques and intuition building, i.e., the so-called three-agent model involving PDI optimization.

With respect to future work, the steps of the implementation roadmap remain to be defined in more detail, taking into account the individualization of digital twins per process and per data acquisition/communication technique, since the variety of microprocesses demands fine-tuning of techniques and workflows. Nevertheless, the current form of the framework is hopefully a concrete step toward integrating theoretical models in real-time decision making and in decision making related to digital twin design.


Appendix A: Modelling classification

Handling Editor: Chenhui Liang

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was carried out within the framework of the EU project AVANGARD. This project has received funding from the European Union’s Horizon 2020 research and innovation program under grant agreement No 869986. The dissemination of results herein reflects only the authors’ view and the Commission is not responsible for any use that may be made of the information it contains.