Abstract

Generative Artificial Intelligence (AI) tools, particularly large language models (LLMs), are rapidly transforming qualitative data analysis by offering unprecedented speed and scale. However, this integration introduces significant challenges to methodological transparency due to the algorithmic opacity of these “black-box” systems and their hidden decision points. Traditional qualitative reporting guidelines predate generative AI and lack specific guidance for disclosing AI usage. This paper addresses this critical gap by introducing a novel heuristic framework that poses 20 questions across five key themes: The Research Team, Participant Interaction, Study Design, Data Practices, and Data Analysis, providing disclosure actions for each. This framework blends and augments existing frameworks, aligning with principles to guide researchers in meticulously documenting their AI-mediated analytic choices. Methodologically, the framework advances the field by requiring explicit reporting on: the roles of AI tools and the AI literacy of the human research team; AI’s involvement in participant communication, informed consent processes, and safeguards for sensitive demographic data; the alignment of AI tools with theoretical frameworks, their influence on sampling strategies, and discussions during IRB review; data storage, AI’s role in data creation (e.g., synthetic data, transcription, interview protocols), and its assistance in determining data saturation and participant checking; and the precise contributions of AI to coding and thematic categorization, alongside detailed documentation of iterative human-AI interactions and prompts used. By fostering rigorous audit trails and comprehensive documentation, the framework aims to maximize transparency, ensure methodological rigor, and uphold ethical standards in AI-augmented qualitative inquiry, thereby enhancing the credibility of findings.

Keywords

Introduction

In early 2024, Wachinger and colleagues asked ChatGPT, a generative artificial intelligence (AI) tool, to develop first-pass codes for a set of maternal-health interviews; the model produced serviceable themes in minutes, yet reviewers struggled to determine how those themes had been derived or whether hidden biases shaped the output. Such episodes now occur across disciplines, signalling a turning point for qualitative methodologists. Powerful large-language models (LLMs) used for analysis and increasingly embedded in computer-assisted qualitative data-analysis software (CAQDAS) promise unprecedented speed and scale, but they also introduce opaque, automated decision points that undercut the field’s longstanding commitment to methodological transparency (Morgan, 2023; Silver, 2023). While these affordances can free researchers to concentrate on interpretation and theory-building, the underlying algorithms operate as “black boxes,” obscuring the analytic pathways by which raw text becomes thematic insight (Lipton, 2018). As a result, peers, journal editors and ethics boards often cannot judge the credibility of AI-assisted findings or verify that participant data were handled in ethically defensible ways.

Existing qualitative reporting checklists, such as the highly-cited Consolidated Criteria for Reporting Qualitative Research (COREQ) (Tong et al., 2007) and the Standards for Reporting Qualitative Research (SRQR) (O’Brien et al., 2014), predate generative AI. Neither framework asks authors to disclose prompts, model parameters, or iterative human-AI interactions, leaving reviewers without clear criteria for evaluating AI-mediated studies. The absence of tailored guidance fuels inconsistent institutional-review-board (IRB) decisions and erodes trust in AI-mediated methods (Friesen et al., 2021; Makridis et al., 2023).

I begin to address that gap by first analyzing emerging uses of generative AI in the qualitative methods literature via a thematic literature review, and by synthesizing the methodological issues that such uses raise vis-à-vis transparency.

1

The following research questions motivated my analysis: • How does the opacity of generative AI affect interpretive processes in qualitative research? • What ethical and methodological tensions emerge when AI tools are incorporated into traditional qualitative workflows? • What heuristic strategies can researchers employ to ensure transparency and maintain the epistemological integrity of qualitative inquiry?

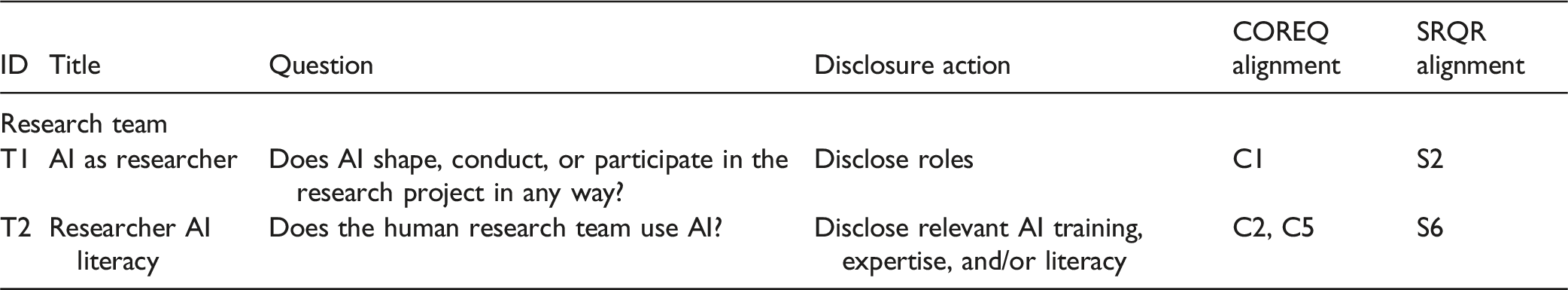

As a result of investigating these questions, I introduce “Transparently Reporting Operations when Using Transformative AI (TROUT-AI),” a novel matrix that poses 20 questions across five themes regarding the use of AI and provides disclosure actions to follow when the questions are answered in the affirmative. This matrix blends and augments the COREQ and SRQR frameworks.

To start, I sketch recent advances in generative AI relevant to qualitative work, deliberately omitting deeper technical history to keep the focus on methodological implications. The following section reviews transparency as a core value in qualitative traditions, and it is followed with a discussion on recurring problems related to establishing transparency when using generative AI in qualitative research. The final section introduces the TROUT-AI matrix and illustrates its application, and the conclusion notes actionable recommendations, limitations, and a focused agenda for future research.

Artificial Intelligence and Its Emerging Role in Qualitative Research

Foundational Components of AI

Early AI systems of the 1960s and 1970s operated primarily as rule-based mechanisms, relying on rigid sets of if-then statements meticulously crafted by human experts (McCarthy, 1959). While effective for handling well-defined tasks, these systems proved inadequate when faced with complex and variable inputs, particularly natural language processing (Jones, 1994; Khurana et al., 2023). By the 1980s and 1990s, researchers moved toward statistical and machine-learning approaches, shifting away from explicit rules in favor of models that could identify patterns in data (LeCun et al., 2015).

A transformative shift occurred with the introduction of encoder-decoder architectures and the attention mechanism, allowing models to dynamically prioritize different elements of an input sequence when generating an output (Bahdanau et al., 2016). Building upon this framework, transformer models emerged (Vaswani et al., 2017). These models demonstrated that self-attention mechanisms alone could efficiently capture global dependencies in language, eliminating the need for recurrent structures (Jones, 1994; Khurana et al., 2023). This breakthrough (along with others like learning approaches and computational breakthroughs) enabled the development of LLMs, trained on vast textual corpora to derive sophisticated, scalable statistical representations of language (see Kaur et al., 2024; Patil & Gudivada, 2024; Shoeybi et al., 2020).

Generative AI encompasses a diverse class of machine-learning models designed to generate novel content, including text, images, and audio based on learned patterns in existing data (Goodfellow et al., 2014). Generative AI is a broad category of AI designed to create new content, and LLMs are a foundational technology (Combrinck, 2024; Qiao et al., 2024). While they share generative AI’s fundamental objective of content creation, LLMs distinguish themselves through their reliance on transformer architectures and extensive training on large-scale textual corpora (Gamieldien et al., 2023). To engage with LLMs, systems employ conversational interfaces referred to as chatbots (Combrinck, 2024).

In their most basic form, chatbots function as dialogue management tools: they interpret user queries and generate contextually relevant responses in real time (Zhang et al., 2023). They excel in structured, task-oriented exchanges, for example, by answering frequently asked questions or handling customer support dialogues (Hill et al., 2015). Contemporary LLM-based chatbots (e.g., OpenAI’s ChatGPT) engage in more open-ended and dynamic conversations, producing responses that feel more natural and context-aware (Bender et al., 2021; Brown et al., 2020; Combrinck, 2024). While foundational generative AI and many contemporary chatbots are fundamentally reactive systems, designed to respond to user prompts within a conversational context, the frontier of AI research is developing more autonomous “agentic” systems capable of performing actions beyond simple text generation (Brown et al., 2020; Park et al., 2023; Sapkota et al., 2025).

Generative AI in Qualitative Research

Emerging Opportunities

AI has begun to transform qualitative research, a field traditionally characterized by interpretive and contextually rich analyses (Bijker et al., 2024; Gamieldien et al., 2023). Early qualitative research workflows relied on CAQDAS to facilitate organizational tasks such as transcript storage, document analysis, and hierarchical coding (Beekhuyzen & Bazeley, 2025). These systems introduced a degree of consistency and efficiency over fully manual approaches but remained largely dependent on user-driven coding and segment retrieval (Morgan, 2023). Over time, CAQDAS platforms like MAXQDA (2025), NVivo (2025), and ATLAS.ti (2025) began to integrate LLM-based services in familiar interfaces, enabling auto-coding, concept clustering and rapid excerpting, signaling an incremental shift toward automated analysis (Silver, 2023). But, advances in AI—particularly in LLMs— introduced new possibilities for qualitative research, supporting more nuanced tasks such as text summarization, sentiment analysis, automating coding, and facilitating theme identification from textual data.

In recent studies, LLMs like OpenAI’s ChatGPT have been employed to generate initial codes (Perkins & Roe, 2024), suggest thematic categories (Katz et al., 2024), and summarize qualitative datasets such as interview transcripts or open-ended survey responses (Yan et al., 2024; Zhang et al., 2023). A review of the qualitative research literature identified thematic analysis as a common application of AI (Gamieldien et al., 2023; Katz et al., 2024; Slotnick & Boeing, 2024; Wachinger et al., 2024), followed by uses in inductive/deductive coding (Bijker et al., 2024; Chen et al., 2024; Qiao et al., 2024; Siiman et al., 2023; Tai et al., 2023), and content analysis (Bijker et al., 2024). For example, several projects leveraged ChatGPT to perform first-pass coding of transcripts, which researchers then compared against human-generated codes (Pattyn, 2024; Prescott et al., 2024). This breadth of applications demonstrates that AI’s role in qualitative research is expanding beyond simple coding, as scholars test its utility across various analytical traditions.

Potential Benefits and Use Cases

AI-based tools introduce distinct advantages that can enhance and streamline qualitative research. Studies have documented that a primary advantage is increased efficiency in handling large volumes of qualitative data and accelerating the coding process (Dunivin, 2024; Jalali & Akhavan, 2024). By automating labor-intensive initial coding, AI may allow researchers to dedicate more time to higher-level interpretation, thereby streamlining the research process. Another reported benefit is the high degree of consistency and agreement between AI-generated codes and human analysts’ codes. In fact, five studies found that AI-derived themes overlapped closely with those identified by human researchers (Bijker et al., 2024; Gamieldien et al., 2023; Prescott et al., 2024). Such findings suggest that, when properly guided, generative models can reproduce or support human-like coding decisions with a notable level of reliability. Several studies documented improvements in accuracy or output quality when using AI with structured guidance. Chen et al. (2024) found that incorporating human coding processes into prompts improved results, while Combrinck (2024) showed that providing AI with frameworks and examples enhanced accuracy. Zhang et al. (2023) demonstrated that a structured prompt design framework improved outputs, and Qiao et al. (2024) achieved high coding accuracy through careful prompt configuration.

Beyond gains in efficiency and reliability, generative AI can enhance the analytic process through novel insight generation and supportive human-AI collaboration. Rather than conducting an exhaustive line-by-line review, analysts can leverage AI-driven insights while maintaining the critical role of human interpretation—challenging, refining, or discarding automated coding recommendations as needed (Yan et al., 2024). Arora et al. (2025), for example, describe a hybrid thematic analysis process in which GPT-4 was first used to generate a preliminary codebook, after which human analysts refined the codes and examined the data in depth, a similar finding to that of Katz et al. (2024) and Wachinger et al. (2024) who found AI tools generate themes closely matching original human-created themes. Such division of labor combines AI’s efficiency with human expertise, yielding a more robust analysis than either could achieve alone. The value of human-AI collaboration was supported by de Paoli’s (2023, pp. 19–20) finding that “there is no denying that the model can infer codes and themes and even identify patterns which researchers did not consider relevant and contribute to better qualitative analysis [….] the likely scenario is not one of the human analysts being replaced by AI analysts, but one of a Human-AI collaboration.”

Such human-AI collaboration not only accelerates analysis but can also improve rigor, as the human expert validates and builds on the AI’s contributions. But, continuous human oversight is key: at each stage of AI involvement, a researcher should validate the outputs. This could mean cross-checking a sample of AI-coded excerpts against manual coding to ensure no misinterpretation has occurred. Notably, all reviewed studies that achieved high-quality results kept a human “in the loop” to oversee AI contributions, and none advocated for an entirely hands-off AI approach. Several studies employed specific validation strategies. Bijker et al. (2024) used precision rates and intercoder agreement using Fleiss’s kappa, and Dunivin (2024) and Pattyn (2024), respectively, evaluated agreement between AI and human coders using Cohen’s kappa. High kappa values provide evidence that the AI’s categorizations align with human interpretations, whereas discrepancies flag areas for further review. In some cases, when AI outputs did not initially match well with human analysis, researchers used this feedback to refine their prompts or adjust the model parameters, iteratively improving alignment (Slotnick & Boeing, 2024; Zhang et al., 2023). Other researchers, like Pattyn (2024) compared outputs from multiple AI tools (ChatGPT 4 and Bard) with human coders, and Prescott et al. (2024) also used both ChatGPT and Bard to evaluate consistency with human-identified themes.

Researchers have found that crafting prompts with clear context and specific instructions dramatically improves the relevance and clarity of AI-generated codes or summaries. Rather than ad hoc queries, successful studies used structured prompt frameworks. For example, Zhang et al. (2023) developed a systematic prompt design protocol that significantly boosted ChatGPT’s coding performance. Key strategies, such as those demonstrated by Combrinck (2024), include tailoring prompts to the research context (often by providing the AI with background information or examples of coding), and using iterative refinement, which is to say repeatedly tweaking the prompt based on the AI’s output until the results align well with human judgment. Dunivin (2024) experimented with instructing the AI to take on a specific role (e.g., “You are a qualitative researcher analyzing interview transcripts…”), which helped guide the model’s approach and tone. Techniques like chain-of-thought prompting, where the AI is asked to explain its reasoning step-by-step, have been shown to yield more transparent and accurate theme identification as well (Dunivin, 2024). Mastery of prompt engineering thus emerges as a key skill. By clearly communicating the task and context to the model, researchers can substantially enhance the quality of AI assistance. The evidence to date suggests that generative AI, used judiciously, can both speed up qualitative analysis and enrich it with supportive pattern-finding.

Methodological Considerations and Challenges

A hallmark of qualitative research is its commitment to interpretive depth, moving beyond categorization to uncover the complex meanings participants bring to a study (Beekhuyzen & Bazeley, 2025). As AI-based tools become integrated into qualitative workflows, it is imperative that this interpretive ethos remains intact. While AI can expedite pattern recognition, an overreliance on automated outputs risks reducing rich, contextually embedded insights to surface-level themes (Morgan, 2023; Prescott et al., 2024). Researchers must critically interrogate AI-generated suggestions, assessing whether they authentically capture participants’ lived experiences and the nuanced dynamics under investigation. If a researcher were to accept AI-generated codes or themes at face value without careful review, important subtleties could be lost and compromise the integrity of the analysis. For instance, Prescott et al. (2024, p. 2) note that “qualitative analysis by human coders targets meanings and interpretations, whereas LLMs target structural and logical elements of language,” which can result in an inability of the latter to capture nuance and context (Jalali & Akhavan, 2024). This lack of nuance can lead to contextual misinterpretation. For example, a sarcasm or local idiom might be tagged literally by the AI, distorting the intended meaning. Perkins and Roe (2024, p. 393) remind us that, “while [generative AI tools] bring new benefits to research processes, it is the human researcher’s intuition, expertise, and interpretative skills that breathe life into data, transforming it into meaningful knowledge.” Carrying a similar degree of import, researchers must also be aware of the inherent biases built into the training data on which LLMs are built. Since they are heavily trained on human-generated data from the internet, they can inadvertently learn and perpetuate these existing biases, which may include those related to race, gender, sexuality, or historical and social inequities (Morgan, 2023; Slotnick & Boeing, 2024).

Researchers must remain reflexive in their practices by examining how generative AI and their assumptions, theoretical orientations, or technological choices shape the analytical process (Levitt et al., 2018; Woods et al., 2016). AI outputs must be critically evaluated and integrated with caution to avoid analytic bias or oversight, as current generative AI cannot autonomously handle the interpretive depth of qualitative analysis (Combrinck, 2024; Morgan, 2023; Perkins & Roe, 2024). This underscores the need for qualitative scholars to evaluate how AI-driven processes align—or conflict—with the epistemological foundations of their methodological traditions (Wachinger et al., 2024). Ultimately, the responsible integration of AI into qualitative research depends on transparency—ensuring clarity around how data are processed, how models are trained, and how algorithmic outputs intersect with human judgment. The following section delves deeper into this issue, offering practical strategies to uphold openness and maximize integrity throughout the research process.

Transparency

Transparency in Qualitative Research

Transparency has long been a defining principle of rigorous qualitative research. Given that qualitative methods rely on interpretive frameworks and context-sensitive analyses, researchers must articulate how they collect, analyze, and make sense of their data (Beekhuyzen & Bazeley, 2025). Transparency also involves critically examining and disclosing the researcher’s theoretical orientations, underlying assumptions, and potential biases (Finlay, 2002). This level of openness enables readers to follow the investigative process—from participant recruitment and data collection strategies to coding frameworks and thematic development—shedding light on the interpretive choices that shaped the study. By making these influences explicit, qualitative researchers enhance the trustworthiness of their work and invite deeper engagement with their interpretive claims. Transparent research also strengthens confidence in the validity of reported themes and patterns. This emphasis on methodological openness aligns with broader shifts in the social sciences toward reflexivity and accountability (Seale, 1999). Transparency is central to establishing the legitimacy and scholarly contribution of qualitative research.

Within qualitative traditions, transparency is commonly assessed through four interrelated dimensions of trustworthiness: credibility, confirmability, transferability, and dependability (Beekhuyzen & Bazeley, 2025; Lincoln & Guba, 1985). Each of these dimensions is strengthened when researchers clearly document their methodological choices, disclose the interpretive pathways that shape their findings, and articulate the epistemological commitments guiding their work. For example, demonstrating that thematic categories emerged organically from the data rather than being imposed arbitrarily requires a clear and detailed account of coding protocols, iterative refinements, and decision-making rationales. Credibility refers to the plausibility and trustworthiness of a study’s findings (Lincoln & Guba, 1985). A transparent analytic process ensures credibility by making explicit how themes emerge from data rather than being arbitrarily imposed. Researchers can enhance credibility by providing verbatim participant quotes, coding justifications, and clear accounts of iterative data analysis (Tong et al., 2012). Additionally, reflexivity, which is the practice of systematically documenting the researcher’s assumptions, positionality, and decision-making,further strengthens credibility by acknowledging potential biases and their influence on interpretation (Levitt et al., 2018).

How Transparency is Evaluated

Transparency in qualitative research is inherently complex due to the diverse epistemological traditions within the field, such as grounded theory and phenomenology, each with distinct expectations for methodological rigor (Levitt et al., 2018). Nevertheless, common principles guide transparency, emphasizing clarity in methodological reporting, analytic procedures, and data accessibility. Methodological transparency requires detailed reporting on data collection processes, including sampling rationale, study settings, interview protocols, and the guiding theoretical or epistemological frameworks (Hiles, 2008; Moravcsik, 2019). Researchers commonly achieve this by presenting codebooks, explicating theme derivation through data excerpts, and including verbatim participant quotations to demonstrate clear links between data and theoretical interpretations (Beekhuyzen & Bazeley, 2025; Creswell & Poth, 2024; Levitt et al., 2018). Additionally, structured memos or reflexive narratives illustrate how analytical categories evolve and how interpretive adjustments occur during research (Nowell et al., 2017). Such evidence of the data-to-theory linkage allows readers to evaluate the grounding of conclusions in the empirical material.

Documentation clarifies the handling of initial codes, treatment of outliers, and management of researcher biases. Frameworks like the SRQR explicitly support transparency by calling for reporting of credibility-enhancing techniques (e.g., member checking, peer debriefing, triangulation) and associated rationales (O’Brien et al., 2014). By keeping analytic logs or research journals, investigators make the evolution of their thinking visible, capturing how initial codes were refined, how outlier cases were handled, and how researcher perspectives were managed over time (Nowell et al., 2017). Such detailed documentation fosters critical engagement with the research process, reinforcing the interpretive integrity and contextual sensitivity essential to qualitative inquiry. The methods for establishing transparency in qualitative research have been tried and tested, but integrating generative AI into research challenges existing and accepted transparency practices.

Problems Establishing Transparent Research Augmented by Generative AI

“Black Box” Systems

Researchers are encountering new challenges demonstrating precisely how AI systems are influencing their analytical decisions. The “black box” nature of AI models and the inscrutability of their outputs is the key problem. While qualitative inquiry prioritizes interpretive depth and analytic transparency, LLMs operate using billions of parameters fine-tuned through gradient-based optimization (Brown et al., 2020). This optimization process, though highly effective at detecting patterns in vast datasets, produces outputs whose underlying logic is neither linear nor readily interpretable, introducing opacity into an otherwise carefully documented qualitative workflow (Lipton, 2018).

Where conventional qualitative methodologies rely on memoing, iterative coding refinements, and reflexive engagement with researcher positionality (see Charmaz, 2024; Corbin & Strauss, 2015), generative AI systems do not easily integrate into these processes. Even researchers skilled in navigating multiple epistemological standpoints may struggle to articulate why a machine-generated thematic cluster emerged in a particular form. While various “explainable AI” approaches offer partial insights—such as feature attributions or saliency maps—these techniques are not designed with qualitative research’s interpretive aims in mind (Rudin, 2019). As a result, standard auditing practices (e.g., thick description, multi-layered coding memos) cannot fully account for the ways AI-generated categories align with, diverge from, or potentially distort emergent themes.

When AI augments these processes, the precise moments where machine-generated insights intersect with researcher-driven decisions often become obscured. AI-assisted modifications, particularly those that autonomously revise code structures, can introduce decision points that remain hidden from view (Woods et al., 2016). “Human-in-the-loop” approaches, where AI-generated codes or thematic clusters undergo multiple rounds of human review and refinement, are important and necessary. But even though this integration can enhance coding efficiency and efficacy, it also adds layers of complexity. If this feedback loop could be documented fully, experienced scholars may struggle to reconstruct the chain of interpretive decisions because of AI’s baked-in opacity.

These challenges are further amplified when generative AI is embedded within commercial or proprietary software ecosystems. Researchers may gain access to automated coding and content analysis features without visibility into the model’s architecture or training corpus, and the biases embedded therein (Bender et al., 2021). The sheer scale and complexity of AI model parameters create formidable barriers to methodological transparency. For qualitative inquiry such opacity poses significant risks, as statistical pattern recognition does not inherently equate to interpretive validity.

Iterative workflows involving AI-generated suggestions introduce new uncertainties regarding the boundary between researcher subjectivity and algorithmic influence. As researchers adjust codes in response to machine-generated prompts, each iteration subtly recalibrates the AI’s recommendations, creating a feedback loop that obscures whether refined themes reflect the researcher’s conceptual insights or the model’s statistical biases. While qualitative scholars are accustomed to iterative coding, machine-guided re-codings lack the transparency of traditional researcher-led adjustments informed by emergent theory or respondent validation. In this sense, the opacity of AI threatens the integrity of reflexive documentation, making it more difficult to substantiate and defend the interpretive pathways that shape final themes.

Ethical and Privacy Obstacles

In qualitative research, ethical and privacy considerations have long been central due to the sensitive and often deeply personal nature of the data collected (see Ogden, 2008a, 2008b; Preissle, 2008). The integration of generative AI into qualitative workflows compounds these ethical challenges. A primary issue is the safeguarding of participant confidentiality by way of, among other things, the pseudonymization of participant data. AI-driven systems, particularly those reliant on cloud-based infrastructures, often require data to be transmitted, stored, and processed. As with all information technologies, generative AI systems and the underlying data sources that support them will be a target for cybersecurity risks (e.g., code injections and exploits, ransomware, insider threats, etc.), which could reveal participant data uploaded to a GPT system. A less obvious but equally consequential issue concerns information leakage. A researcher could upload a pseudonymized dataset for analysis. But, for example, consider the facets of ChatGPT’s training data, as reported by its parent organization, OpenAI (2025): (1) Information that is publicly available on the internet, (2) Information that [OpenAI] partner[s] with third parties to access, and (3) information that [OpenAI] users, human trainers, and researchers provide or generate.

Proprietary AI algorithms frequently lack transparency regarding data handling and model training procedures, making it difficult for researchers to fully assess how participant data are processed (Cheong, 2024). This opacity raises concerns about the potential for AI models to re-identify individuals or disclose sensitive information, particularly when trained on large, heterogeneous datasets that incorporate publicly available data without proper consent (see Lubarsky, 2017). Some reidentified data may be factual (e.g., Person X works at Organization Y), other improperly disclosed data—either factual or hallucinated–could cause reputational harm. Even though generative AI companies take measures to protect against the leakage of personally identifying information (PII) from their training datasets, research has already shown that those measures are not reliable (Nunez Duffourc et al., 2024). Using generative AI platforms may increase the risk of unauthorized access or unintended data exposure—ultimately undermining the confidentiality assurances that are foundational to qualitative research.

Informed consent, another cornerstone of ethical research, becomes increasingly complicated in AI-assisted studies. Participants typically expect their data to be handled with strict care and used only for explicitly stated purposes (Mozersky et al., 2020). However, generative AI often introduces intricate data processing techniques that are difficult to fully convey in lay terms. Researchers must ensure that participants not only understand that AI is being used but also grasp what this entails—such as potential secondary uses of their data, storage practices, and the risks associated with automated processing. A failure to communicate these dimensions effectively risks weakening the ethical foundation of informed consent, leaving participants unaware of how their contributions may be repurposed or analyzed beyond the immediate study.

Institutional Constraints: IRB and Regulatory Hurdles

A major challenge in integrating generative AI into qualitative research arises from institutional constraints, particularly those related to ethical oversight. Institutional review boards (IRBs) and data protection bodies have traditionally evaluated research based on well-established methodological frameworks, leaving a significant gap in assessing studies that incorporate emerging AI technologies (Wachinger et al., 2024). Many IRBs lack updated guidelines or standardized protocols to address the unique ethical concerns and risks posed by AI-augmented research (Knight et al., 2025). The absence of clear IRB guidelines on AI usage forces researchers to justify their methodological decisions and data-handling practices on a case-by-case basis. This lack of consistency can lead to prolonged review timelines and disparate decisions across institutions. Without standardized criteria for transparency in AI-assisted analysis. As a result, researchers navigating these emerging methodologies often face uncertainty and delays in securing approval, as regulatory processes struggle to adapt to the complexities of AI-driven analysis.

Augmenting Relevant Qualitative Research Reporting Frameworks

Existing Reporting Frameworks

At the micro level, the lack of transparency in an AI-informed qualitative research project fosters skepticism about the validity of the findings, the trustworthiness of the research, and the integrity of the researchers who produced them. At the macro level, insufficient transparency challenges the very foundation of qualitative inquiry. Without transparent reporting, the fundamental goal of capturing the complexity of human experience is compromised, reducing nuanced, interpretive insights to little more than algorithmic outputs. New ways of thinking about transparency in qualitative research are needed sooner rather than later, assuming that the presence of AI in research work is only going to increase.

Selecting and Augmenting COREQ and SRQR

The following sections attempt to meet new needs in qualitative research by augmenting two highly-cited reporting frameworks for qualitative research to address generative AI uses and transparency problems: 1) the Consolidated Criteria for Reporting Qualitative Research (COREQ) (Tong et al., 2007) and 2) the Standards for Reporting Qualitative Research (SRQR) (O’Brien et al., 2014). 2

COREQ is a 32-item checklist designed to enhance explicitness and comprehensiveness in the reporting of qualitative studies; it is listed in Appendix 1. The checklist addresses three domains: (1) research team and reflexivity, (2) study design, and (3) data analysis and reporting. The authors were motivated to create COREQ by a need to address insufficient reporting standards in qualitative research, with the aim of improving the rigor, credibility, and scientific validity of this category of science. These same motivations were shared by the SRQR authors. The SRQR checklist comprises 21 items across six categories covering key aspects of qualitative research reporting, which were derived from a systematic review and synthesis of existing guidelines, appraisal criteria, and reporting standards for qualitative research. SRQR is listed in Appendix 2.

While sharing the same motivations, the reporting frameworks achieve their goals through slightly different structures and foci. COREQ promotes transparency by detailing the specific information that researchers should explicitly report across the domains, whereas SRQR targets key elements in different sections of a qualitative research article. When considered in isolation, each framework provides useful guidance. But combined they allow one to consider major themes concerning rigorous and transparent qualitative research and how to apply guidelines in specific sections of a research article.

Transparently Reporting Operations when Using Transformative AI (TROUT-AI)

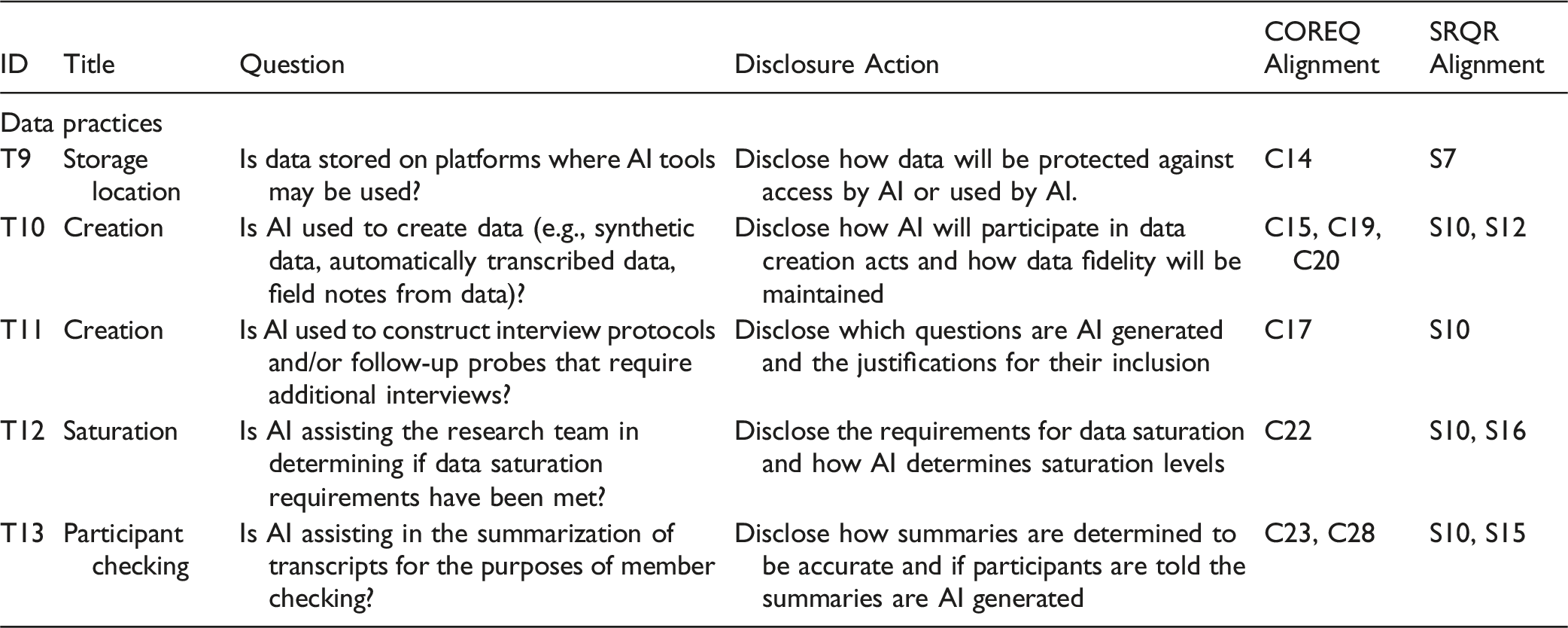

As the COREQ and SRQR frameworks were published well before the rise of generative AI (2007 and 2014, respectively), they do not address the emerging transparency problems discussed above. However, their targeted thematic and structure-specific guidelines provide enough guidance for what areas to address in qualitative research since AI’s transparency issues are widely known. To guide researchers through the process of maximizing transparency when conducting qualitative research augmented by the use of generative AI, I created a matrix that poses 20 questions across five themes regarding the use of AI and provides disclosure actions to follow when the questions are answered in the affirmative. Titled “Transparently Reporting Operations when Using Transformative AI (TROUT-AI),” TROUT-AI themes were developed after analyzing the COREQ and SRQR frameworks in relation to transparency needs. The questions are aligned with 25 of the 32 COREQ principles and 17 of the 21 SRQR principles. I provide TROUT-AI in full in Appendix 3 for reference. The following subsections address the five themes and describe the disclosure actions with recommendations. Alignment with TROUT-AI items are prefaced with a T#.

The Research Team

Team members must specifically address the expansion of traditional qualitative research teams to explicitly include generative AI tools, thus extending the scope of reflexivity to encompass the technological dimension of research practices. The Research Team section (T1, T2) argues for thorough documentation regarding the incorporation of AI. It requires human researchers to clearly document what tools they used and the roles of those tools throughout the research project (T1). Doing so emphasizes that qualitative research teams must now reflexively account for technological influences, not merely human ones.

The documentation should include the name of the tool and any relevant model information to maximize transparency. The documentation must comprehensively describe the tasks the AI performed, ranging from literature synthesis and text revision to data summarization and coding. For example, a researcher might detail how a ChatGPT model was used to generate preliminary codes from interview transcripts, specifying the prompts provided to the model and how its outputs influenced subsequent human interpretation.

Whenever possible, team members should be explicit about their competencies with the AI tools employed in the study (T2). While formal credentials and training may not be available, team members can disclose a description of their professional development pathways toward mastering important skills (e.g., prompt engineering) with a specific tool. Researchers should also acknowledge their understanding of AI limitations, including such things as hallucinations and managing biases. These reflections could exist in the methods section of an article, for instance, as an appendix, or linked to from a research work to a research repository of associated documentation (e.g., the Open Science Framework (also known as OSF)). Access to this documentation by readers should improve the trustworthiness of the research.

Participant Interaction

The Participant Interaction section (T3-T5) focuses explicitly on how generative AI tools interact with research participants within qualitative studies. The TROUT-AI guidelines require clear documentation if generative AI tools are used for initial participant communication or screening (T3). Researchers must transparently describe how AI is deployed in participant outreach, such as automated email or chatbot screening, and provide rationale for these choices. For example, if a chatbot initially screens participants based on predetermined criteria, researchers must disclose the specific prompts and decision rules used by the chatbot.

Participants’ awareness and understanding of AI usage in the research process are critical (T4). Researchers must explicitly detail how informed consent documents describe AI’s involvement, specifying models used, their purpose, and their implications for participants. For example, researchers must clarify if an LLM is employed to summarize participant responses and how such usage may affect their data privacy or interpretative accuracy.

Participant protection concerning AI’s handling of sensitive demographic data is also crucial (T5). Researchers must disclose the safeguards implemented to prevent a participant’s identity and demographic biases embedded within AI systems from harming consenting participants. For instance, if demographic variables are involved in an AI-driven data analysis, researchers must explain explicitly how the potential biases were mitigated through strategies like controlled prompt engineering, human oversight, or cross-validation with human-generated analyses.

Study Design

In the Study Design section, the focus here is on how generative AI is methodologically integrated into qualitative research (T6-T8). Researchers must explicitly outline the alignment of generative AI tools with their theoretical frameworks and chosen qualitative methodologies (T6). For instance, if a phenomenological study incorporates generative AI for initial thematic coding, researchers should meticulously describe how the AI complements human interpretive tasks without undermining the interpretive depth characteristic of phenomenology. Researchers must also disclose how these AI tools are selected and fine-tuned for their specific qualitative purposes, considering factors like model accuracy, interpretative biases, and capability to manage qualitative complexity.

The TROUT-AI guidelines necessitate documenting generative AI’s influence on sampling strategies (T7). Researchers must clearly describe the algorithms, prompts, or logic underpinning AI-assisted sampling decisions, detailing how the use of AI impacts representativeness and potential biases. For example, if an AI algorithm is employed to filter participants based on responses to open-ended questions, researchers should disclose the decision rules and the rationale behind AI-driven participant inclusion or exclusion criteria.

As with all qualitative research where human subjects are involved, researchers need to disclose their IRB processes, but they should go above and beyond what is typically required to discuss how AI was considered in the IRB application and approval procedures (T8). These disclosures should include how policy and ethical discussions concerning AI’s integration into the research project were discussed, with whom, and any outcomes. Being transparent about ethics and policy friction points likely has the positive effect of increasing trust in the research due to the research team’s open reflexivity. Further, such disclosures can educate other research teams about possible issues they might face when pursuing AI-augmented qualitative research.

Data Practices

TROUT-AI guidelines under the Data Practices section stress meticulous documentation of data handling (T9-T13). Researchers must explicitly detail the storage, management, and security practices associated with data, particularly when data are hosted on cloud-based platforms accessible to AI (T9). It is essential to clearly report the security protocols and compliance measures in place to ensure participant confidentiality and data integrity. For instance, if interview transcripts are uploaded to a cloud-based AI analysis platform, researchers must describe encryption measures, access control protocols, and data retention policies.

TROUT-AI mandates clear articulation of generative AI’s role in data creation processes, including the generation of synthetic datasets or automated transcriptions (T10). Researchers should document the authenticity and accuracy measures applied, such as validation processes comparing AI-generated data against original human-generated data, and clearly outline steps to rectify discrepancies. For instance, researchers might describe how automated transcriptions generated by AI are cross-checked against human transcriptions for accuracy and completeness. Any corrective steps (e.g., manual edits, tool retraining) should be logged and reported.

AI may be used to generate interview protocols or qualitatively-analyzable survey questions, which require research teams to disclose such practices (T11). Researchers could provide the exact prompts or exemplar outputs in an appendix, justify why AI-drafted items were retained or modified, and describe pilot testing procedures that ensured questions aligned with the study’s epistemological stance and goals. At the minimum, the use of AI for these tasks should be clearly justified.

When researchers enlist AI to help evaluate thematic saturation, they must disclose their practices (T12). This may include the quantitative or heuristic thresholds chosen, the rationale for those thresholds, and any human interventions in that process. Reporting should note disagreements between AI and human judgments and how they were resolved.

Finally in this section, participant checking—or the processes by which researchers confirm data and/or interpretations with research participants—needs to be considered for the ways AI might transform the outputs presented to participants (T13). Participants need to be able to see themselves in data created by the researchers, but if data is a direct result of AI (i.e., by transcribing or summarizing), then it may be that the data contains bias or other misrepresentations of the participant. Any data or documentation included in the participant checking process that has been influenced by AI should be transparently reported to participants.

Data Analysis

The final TROUT-AI section, Data Analysis, focuses on comprehensive transparency in documenting the specific contributions of generative AI tools to qualitative data analysis tasks (T14-T15). Researchers are required to explicitly detail the precise roles and extent of AI involvement, particularly concerning tasks such as coding and thematic categorization (T14). If AI-generated themes or codes form the initial basis for analysis, researchers must clearly delineate how AI-generated outputs were subsequently reviewed, refined, or modified by human analysts, ensuring methodological clarity. For example, if a researcher uses ChatGPT to generate initial thematic categories, the subsequent human validation steps and any adjustments made to these AI-derived categories should be explicitly reported.

TROUT-AI emphasizes detailed documentation of iterative interactions between researchers and AI tools, particularly regarding the refinement of codes and themes based on emerging insights from the data (T15). Researchers should provide comprehensive documentation of this iterative process, including the specific prompts provided to AI, AI responses, and subsequent researcher-driven adjustments. Detailed documentation should explicitly identify instances where AI recommendations were integrated, modified, or rejected and the rationale behind these decisions. This approach ensures transparency in how AI influences interpretive trajectories and final analytical outcomes.

Conclusion

As qualitative research increasingly integrates generative AI, addressing transparency becomes imperative to maintain the credibility and integrity of scholarly inquiry. This analysis underscores that although AI offers significant potential for enhancing qualitative research, its inherent opacity poses substantial methodological and ethical challenges. To mitigate these issues, I have proposed a heuristic framework designed to enhance transparency through rigorous audit trails, standardized reporting mechanisms, and dynamic documentation protocols. This structured approach provides clear guidance for researchers navigating AI integration, ensuring they preserve methodological rigor without compromising interpretive depth or ethical standards.

Future research should focus on empirically validating TROUT-AI. Studies should investigate how maintaining detailed audit trails and reflexive documentation influence the interpretive integrity and credibility of qualitative findings. Additionally, refining standardized protocols for AI integration, particularly regarding decision-point documentation in human-in-the-loop workflows, and establishing knowledge repositories for interdisciplinary best practices are critical tasks. Finally, future investigations should explore the ethical dimensions of AI integration in qualitative research. Specifically, studies could examine how transparency-enhancing measures mitigate risks related to participant privacy, informed consent, and bias amplification. By systematically linking transparency practices to ethical outcomes, the field can develop strategies that balance the transformative potential of AI with the core commitments of qualitative inquiry—ensuring that methodological integrity, ethical responsibility, and interpretive depth remain central to AI-augmented research. Only through implementation, testing, and refinement will TROUT-AI lead to improved transparency practices.

As it is the case with all research, TROUT-AI has its limitations. While the TROUT-AI framework provides significant advancements toward transparency, it cannot resolve the inherent “black box” nature of LLMs and related AI applications, and it provides no guidelines for doing so in ways that the explainable AI (e.g., Dwivedi et al., 2023) and critical algorithm studies (e.g., Beer, 2018) communities continue to pursue and provide. TROUT-AI does not attempt to address the normative question: should generative AI be used in qualitative research? This question is open to debate among methodologists. One can assume, however, that the research community will continue to adopt generative AI in its practices. So, it is critical that these conversations occur sooner rather than later, and that acceptable methods are clearly described so that researchers can rigorously use generative AI successfully and ethically.

Supplemental Material

Supplemental Material - Generative AI in Qualitative Research and Related Transparency Problems: A Novel Heuristic for Disclosing Uses of AI

Supplemental Material for Generative AI in Qualitative Research and Related Transparency Problems: A Novel Heuristic for Disclosing Uses of AI by Kyle M. L. Jones in International Journal of Qualitative Methods

Footnotes

Ethical Considerations

Institutional Review Board (IRB) approval was not required for this study.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author declares no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

AI Usage Disclaimer

This manuscript incorporates the use of generative AI tools—including ChatGPT, Google’s NotebookLM Plus, and Elicit—throughout its development. These tools assisted in literature synthesis, drafting and revising text, checking logical flow and organizational coherence, and identifying potential conceptual gaps. Multiple versions of ChatGPT were used at different stages. GPT o-series models (including versions with Deep Research capabilities) were used for their advanced and transparent reasoning and analysis, while GPT-4.5 was primarily employed for writing enhancement. NotebookLM was used for targeted literature analysis. Elicit was used for broad literature identification and analysis. NotebookLM Plus uses Google’s Gemini language model (version unknown) and Elicit’s AI model is not publicly disclosed. The author assumes full responsibility for the interpretation, accuracy, and final content of the manuscript, including any outputs derived from AI tools.

Supplemental Material

Supplemental material for this article is available online.

Notes

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.