Abstract

The integration of artificial intelligence (AI) into qualitative research is shifting the boundaries of knowledge production. Rather than a mere tool, AI is increasingly conceptualized as a co-researcher or research partner: an epistemic agent that contributes to the analysis and interpretation of data and to reflexivity. This article proposes an abductive model, called AbductivAI, for analyzing qualitative data, based on collaboration between humans and AI agents. It explores how AI functions as an analytical partner, reflexive collaborator, and participant in distributed cognition within the human-AI qualitative research workflow and data analysis approach. To build the model, we used an advanced prompting technique (Chain-of-Thought Prompting) and took as data the 323 abstracts submitted to the World Conference on Qualitative Research. Although these data were not originally intended for research, exploring them allowed us to test the AbductivAI model, showing that interactions between humans and AI agents can benefit qualitative data analysis if the human recognizes and defines their role as a reflexive agent responsible for the entire process.

Introduction

The number of studies examining Generative Artificial Intelligence (GenAI) in research contexts has grown rapidly in recent years. A key question arises: how can the analysis of qualitative, unstructured, and non-numerical data take advantage of these technological advances without losing the important insights that come from researchers’ interpretations and conclusions?

In contemporary qualitative studies, researcher bias is widely acknowledged as inherent. This bias can be addressed through established data validation and triangulation techniques. Concurrently, ethical considerations surrounding GenAI implementation have gained prominence, including discussions of bias mitigation, responsible AI usage, and algorithmic fairness. Integrating AI into social science methodologies represents more than technological augmentation—it signals a fundamental transformation in qualitative knowledge production. Traditional qualitative analysis has been conceptualized as an inherently human-centered endeavor, relying on subjective interpretation, contextual understanding, and reflexivity (Denzin & Lincoln, 2018). The rise of AI as a possible partner in research, instead of just a tool, raises important questions about how we understand information versus how we detect patterns (Floridi & Chiriatti, 2020), how it affects researchers’ self-awareness, and what guidelines we need for successful teamwork between humans and AI (Mesec, 2023).

This study proposes a collaborative coding protocol integrating traditional qualitative techniques with GenAI applications to mitigate the potential adverse effects of less rigorous technological implementation. Grounded in the principles of Human–AI collaboration, we introduce an Abductive Model that combines deductive and inductive reasoning through Chain-of-Thought Prompting for qualitative data interpretation. Crucially, abduction is not merely a synthesis of induction and deduction but rather a form of creative inference—an interpretive leap that generates plausible and deeper understanding (Reichertz, 2007; Tavory & Timmermans, 2014). The proposal also includes developing AI agents designed to collaborate with human researchers throughout the analytical process.

Rethinking AI’s Role in Qualitative Research

The literature review reveals that integrating AI into qualitative research has led to a critical reappraisal of both technological capabilities and human responsibilities. AI is no longer seen merely as a supportive tool but increasingly as an active partner in different stages of the research process—from data collection and transcription to coding, thematic analysis, and reporting (Lieder & Schäffer, 2024; Nguyen-Trung, 2024). This development invites researchers to rethink the role of AI not only as a technical tool but also as a methodological collaborator while reconsidering their roles as interpreters, ethical gatekeepers, and co-creators of knowledge. In this article, we synthesize recent literature to explore this dual transformation: how AI is reshaping research workflows and how researchers must adapt to maintain the integrity of qualitative inquiry.

AI in Qualitative Research: From Passive Tool to Active Collaborator

Current AI tools make significant contributions across the qualitative research lifecycle. They handle routine and time-consuming tasks such as transcribing interviews, standardizing raw data, performing various levels of coding, and even identifying emerging themes and patterns (Gil de Zúñiga et al., 2024; Wachinger et al., 2024). These functions go beyond simple automation—AI is increasingly used as a semi-autonomous analytical assistant that enhances precision and scalability (Morgan, 2023). Bryda and Sadowski (2024) propose a structured, bottom-up approach using generative and lexico-semantic models (e.g., ChatGPT, NLP techniques) to automate inductive coding and create dynamic codebooks. This allows researchers to focus on deeper interpretive and theoretical work. AI also supports sentiment and emotion detection, anomaly detection, and conceptual visualization (Ibrahim & Voyer, 2024; Jalali & Akhavan, 2024; H. Zhang et al., 2025), enriching the analytical toolkit available to qualitative researchers. By comparing themes with one another, AI can help researchers validate their findings and reveal hidden trends in large data sets. Some scholars suggest more advanced roles for AI, such as a reflexive collaborator or ‘devil’s advocate,’ challenging assumptions and encouraging critical reflection (Johnson et al., 2020; Johnson & Paulus, 2024; Sarofim, 2024; Thominet, Acosta, et al., 2024; Thominet, Amorim, et al., 2024). These contributions position AI not just as an assistant but as a team member or cognitive partner in the research process (Sidji et al., 2024).

The Researcher’s Role Change in the Age of AI

While the analytical scope of AI continues to expand, human researchers remain central to maintaining rigor, ethics, and reflexivity essential to qualitative research. The literature shows that researchers need to do more than just analyze data; they must also critically interpret AI-generated insights to verify that they are relevant and ethical. Hossain et al. (2024) emphasize that while AI can improve efficiency, researchers must remain vigilant to avoid over-reliance. They are responsible for validating AI outputs, establishing audit trails, and ensuring alignment between AI-generated codes and the original research questions (Lockwood, 2024). Similarly, Marshall and Naff (2024) caution against treating AI analysis as inherently authoritative, advocating instead for human oversight rooted in qualitative traditions. As AI tools are integrated into methodologies, researchers must balance their benefits against potential risks, such as the loss of interpretive depth or the perpetuation of algorithmic bias. This highlights the importance of AI literacy, critical engagement, and ethical sensitivity in the researcher’s role (Bhaskar et al., 2024; Gandhi et al., 2024; Paulus et al., 2025).

Tensions and Synergies: Navigating the Human-AI Relationship

The emerging partnership between humans and AI presents both synergies and tensions. On the one hand, AI improves scale, speed, and access—particularly valuable for under-resourced researchers. On the other hand, it introduces epistemological and ethical challenges that require human judgment and methodological integrity (Yang & Berdine, 2023). AI is versatile and adaptable to various qualitative methods, from grounded theory to discourse analysis and ethnography (Gul et al., 2024). However, its implementation depends on whether it is framed as a passive tool (used under strict human control) or an active agent contributing to meaning-making and theory-building (Burleigh & Wilson, 2024; Morgan, 2023). This distinction directly shapes how researchers engage with AI and interpret its contributions. As Williams (2024) notes, strategic implementation is key: researchers must make conscious decisions about when and how to use AI to support rather than replace human interpretation. Castelló and White (2023) similarly argue that AI can enrich theory building only if embedded in a critically aware research process.

Implications for Training and Research Practice

The integration of AI into qualitative research workflows requires ongoing training and professional development. Researchers need to acquire foundational skills in AI technologies to operate the tools effectively and evaluate their findings through a critical and ethical lens (Gandhi et al., 2024). This shift will also require institutional support and pedagogical reform. Future generations of researchers should be equipped to view AI not as a threat, but as a reflexive partner that necessitates thoughtful, contextualized interaction. As Ibrahim and Voyer (2024) point out, the adaptability of researchers is crucial to integrating new tools without compromising qualitative depth. It is crucial for researchers to assume responsibility for ensuring the transparent and ethical use of AI systems. Establishing audit trails, maintaining methodological alignment, and contextualizing AI outputs are essential to preserving qualitative inquiry’s human-centered essence (Lockwood, 2024; Marshall & Naff, 2024). The role of AI in qualitative research is being radically reimagined—from task automation to active collaboration. However, this transformation does not diminish the centrality of human insight. Instead, it reinforces the importance of critical interpretation, ethical responsibility, and methodological grounding. By viewing AI as a co-analyst or cognitive partner—rather than a replacement—researchers can harness its strengths while preserving the philosophical underpinnings of qualitative inquiry. The future of human-AI collaboration depends on technological advances and the researcher’s ability to engage reflexively, adapt intelligently, and maintain the interpretive richness that defines qualitative research.

AI as a Co-Researcher: a Theoretical Perspective

Actor-Network Theory (ANT) (Latour, 2005) and Sociomateriality (Orlikowski & Scott, 2008) are two different ways of thinking that provide useful views on how humans and AI interact during research. ANT has been used within qualitative research to examine interrelationships, interplays, and engagements between diverse entities (Landers et al., 2023; Shilon, 2023; Törrönen, 2022). The theory explores how entities such as humans and AI interact (Holton & Boyd, 2021) to form networks. It proposes that knowledge and reality emerge from these formed networks, where actors (i.e., entities that play a role in a process or outcome) continually negotiate roles and relationships. ANT fundamentally acknowledges all entities’ contributions to the collective construction of reality (Keiff, 2024). Its ontology is inherently relational, conceiving entities as constructed through interactions with others rather than predefined and stable.

Contemporary discussions of knowledge, particularly in Actor-Network Theory (ANT), view knowledge as arising from the diverse connections within networks (Birkbak, 2023; Cresswell et al., 2010). This reflects a move away from traditional conceptions of knowledge as the product of individual, rational cognition toward an understanding of knowledge as relational, distributed, and materially situated. It also echoes Callon’s position that scientific knowledge is co-constructed through networks, which challenges individual-centered epistemology (Callon, 1984). Within this context, epistemological inquiry focuses not solely on the human subject but on the dynamic configurations of actors, both human and non-human, that co-produce knowledge outcomes. Knowledge therefore does not come from isolated sources; it arises through the interaction of diverse actors within a network, which shape one another as they work together. These networks include researchers, tools, and algorithms, each playing a role in shaping epistemic outcomes. Agency is distributed across these networks, with both human and non-human actors, such as technologies (currently in the form of AI), influencing and reshaping research processes (Sayes, 2014). These actors participate actively in framing questions, structuring data, and producing meaning. This has profound implications for understanding knowledge production, as it suggests that epistemic authority does not reside exclusively with the human knower, since human work is supported by sophisticated technological means. Consequently, knowledge is dynamically generated through interactions with a broader array of agents, including AI, and the definition of a knowledge-producing entity broadens to include non-human actors in epistemic processes.

ANT thus provides an important framework for understanding the fluidity of research processes and the contemporary forces, such as AI, that shape epistemic outcomes. By viewing knowledge as emerging from interactions among different kinds of actors, this approach highlights how complex and distributed knowledge creation is in today’s world (Saleem & Raza, 2023).

Similar ideas about existence form the basis of sociomateriality theory, which highlights the close links and mutual shaping of social and material aspects. It recognizes intangible and physical elements in production processes (Smith, 2019; Sy et al., 2024). The social aspect includes human decision-making, methodological choices, and discourses surrounding AI’s role—such as its function in automating data collection and analysis processes. The material aspect refers to AI’s technological and infrastructural features, such as algorithms and training sets, influencing qualitative production. The ontology of sociomateriality is deeply entangled, and epistemologically, knowledge is produced through ongoing sociomaterial configurations. This focus shifts attention from isolated entities to the emergent nature of actors and their mutual influence. It also supports the conceptualization of AI as a co-creator in research, dependent on researchers allowing AI to act as a co-researcher—beyond being considered a mere tool. In this light, Scott and Orlikowski (2025) propose treating AI not as a “thing” but as a phenomenon in the making. Christou (2023) highlights AI’s potential in theory development within qualitative contexts.

Integrating ANT’s networked and relational prism with the performative lens of sociomateriality enables a more comprehensive understanding of AI’s role in research. This merged theoretical view positions AI not as a passive processor but as an active agent within research networks, shaping and being shaped by embedded practices. The convergence of these lenses captures networked interaction and deep entanglement. ANT illustrates how entities such as humans and AI act as dynamic actors in research networks, while sociomateriality frames AI’s inseparability from knowledge production. Together, they offer a nuanced, robust set of lenses to understand AI as a co-researcher by revealing its entangled, relational, and performative presence in research processes. As discussed above, this is achieved through ANT’s focus on how AI becomes part of research networks by shaping how tasks are performed, how decisions are made, and how knowledge flows between different actors. It illustrates that AI is not outside the research process but actively involved in it. At the same time, sociomateriality draws attention to how AI’s technical features—such as algorithms and interfaces—work together with human choices, methods, and evaluations. These two views show how AI affects research methods, knowledge production, and meaning-making, not just how researchers use it. Ultimately, this delivers a valuable pathway for generating new theoretical and practical insights into AI’s role as a co-researcher and co-producer of knowledge.

Collaborative Coding Protocol

The abductive model, named here AbductivAI, was created to promote collaboration between humans and AI agents and thereby improve the quality of qualitative data analysis. To test the proposal, we analyzed the abstracts submitted to the World Conference on Qualitative Research 2025 (title, abstract, keywords, field, and topics). In the book “Content Analysis Supported by Software”, Costa and Amado (2018) created two models (inductive/open and deductive/closed), based on ideas from Mayring (2014) and Amado (2017), to assist researchers in analyzing qualitative data and defining categories. Building on these two models, we propose the AbductivAI model (Figure 1), which combines them and integrates GenAI.

Figure 1. AbductivAI Model. Made with Draw.io

The model is structured in several iterations. For example, the reconfiguration of co-coding categories reveals the need for new categories (move from phase 4 to phase 2). At the end of coding, due to human disagreements, it is necessary to adjust inconsistencies that have been detected (move from phase 6 to phase 5). The results challenge the initial question, leading to a theoretical refinement (phase 8 to phase 1). These iterations enrich the process while allowing for more in-depth data analysis.
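The three return loops described above can be sketched as a small phase walker. This is only an illustration under stated assumptions: the phases are referred to by number because their names are not given in this passage, and `next_phase`, `run`, and the `revision_flags` argument are hypothetical names introduced for the example, not part of the AbductivAI model itself.

```python
from typing import Dict, List, Optional

# Return loops named in the text: 4 -> 2, 6 -> 5, 8 -> 1.
BACK_EDGES: Dict[int, int] = {4: 2, 6: 5, 8: 1}
LAST_PHASE = 8


def next_phase(current: int, needs_revision: bool) -> Optional[int]:
    """Return the phase to run next, or None when the analysis is finished."""
    if needs_revision and current in BACK_EDGES:
        return BACK_EDGES[current]
    if current == LAST_PHASE:
        return None
    return current + 1


def run(revision_flags: Dict[int, int], max_steps: int = 50) -> List[int]:
    """Walk the phases in order.

    revision_flags[p] is how many times phase p sends the work back
    (an assumption standing in for the researchers' judgment).
    max_steps guards against an endless loop.
    """
    remaining = dict(revision_flags)
    path: List[int] = []
    phase: Optional[int] = 1
    while phase is not None and len(path) < max_steps:
        path.append(phase)
        revise = remaining.get(phase, 0) > 0
        if revise:
            remaining[phase] -= 1
        phase = next_phase(phase, revise)
    return path


if __name__ == "__main__":
    # One round of category reconfiguration: phase 4 sends the work back to phase 2.
    print(run({4: 1}))
```

In practice the decision to loop back is a human judgment; the counter used here only makes the walk reproducible for illustration.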

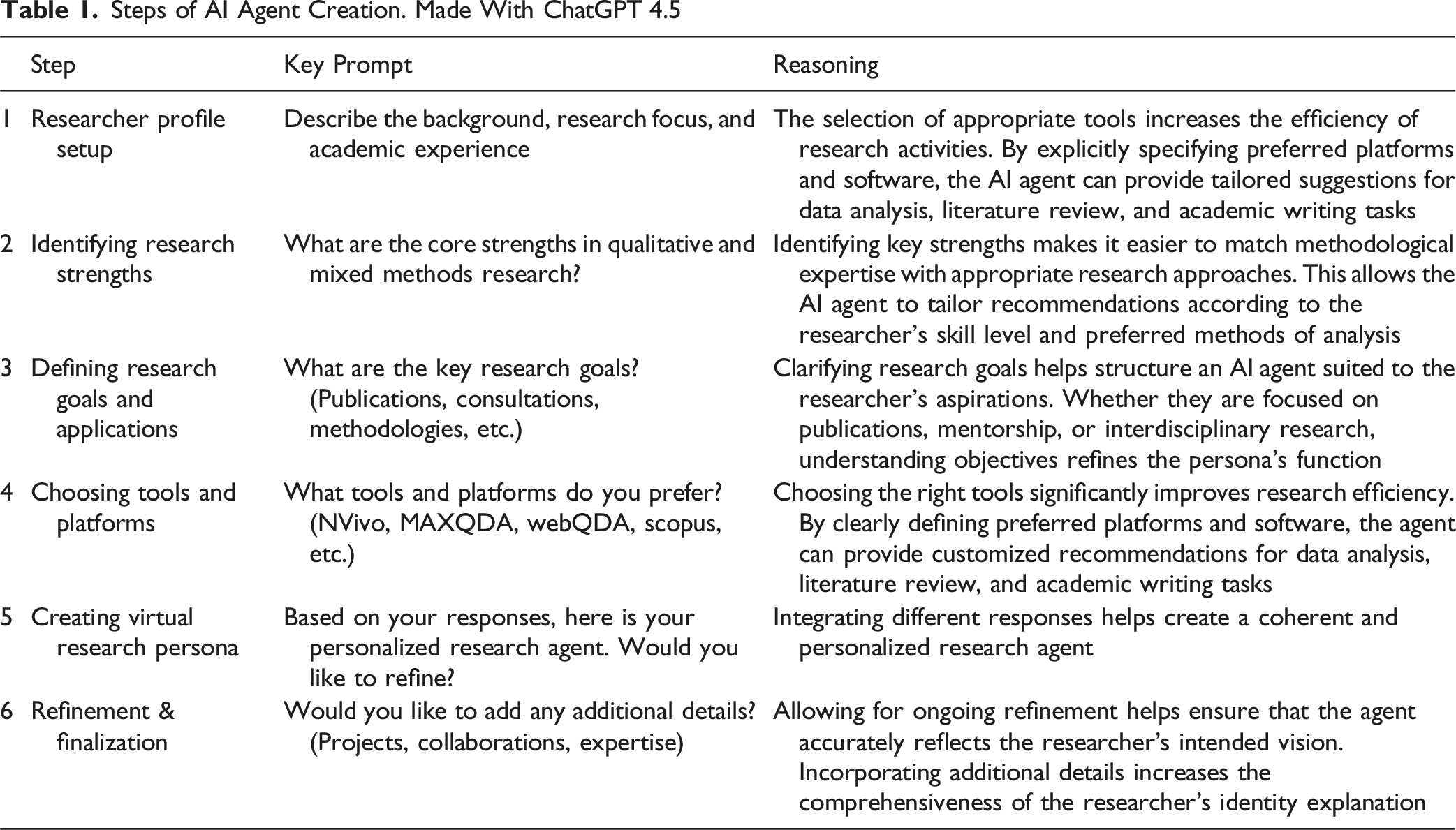

AI Agents

Steps of AI Agent Creation. Made With ChatGPT 4.5

AbductivAI Model

Procedures for Each Phase of the AbductivAI Model

Chain-of-Thought (CoT) Prompting in AbductivAI Model

From the description, the AbductivAI model may seem rather laborious because humans must still code all the data using traditional methods. The model can also be applied when there is only one human coder: for example, one agent acts as a second coder and another as an expert who validates the coding. In that case, the human must also verify the results produced by the expert agent.

Chain-of-Thought Prompting—Collaborative Coding Protocol

The growing integration of artificial intelligence into qualitative research offers promising new pathways for enhancing rigor, transparency, and scalability in qualitative data analysis. This proposal describes a Chain-of-Thought (CoT) Prompting protocol (Wei et al., 2022) (Table 3) that draws on the AbductivAI model, a structured framework that blends iterative category refinement, collaborative human–agent coding, and abductive reasoning. The proposal is intended for researchers working with textual datasets. The CoT protocol guides humans and AI agents through each analytical phase, from research question formulation to final interpretation.

Two AI agents and two human coders collaborate, moving through distinct phases that facilitate both inductive discovery (arising from the data) and deductive coding (based on theoretical frameworks). Initial codes are generated through theoretical background and exploratory questioning. As coding progresses, both humans and agents apply, revise, and expand this coding system, allowing for dynamic interpretation and validation.

This step-by-step CoT Prompting (Wei et al., 2022) ensures analytical consistency, encourages critical reflection, and leverages computational tools for deeper insight. Studies that involve a lot of qualitative data or combine different fields can greatly benefit from its ability to verify findings through cross-validation, reduce personal bias, and adapt to new ideas or categories.

The structure that follows corresponds to the eight phases of the AbductivAI model and can be adapted to CAQDAS packages, such as webQDA or NVivo, or implemented programmatically using Python. It is suited to researchers aiming to conduct transparent, collaborative, and theory-informed qualitative analysis with the support of generative AI.
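As a rough illustration of what a programmatic implementation might look like, the sketch below chains phase prompts so that each phase receives the human-approved outputs of the earlier ones. It is a minimal sketch, not the authors’ protocol: the functions `build_prompt` and `run_chain`, the prompt wording, and the `agent` and `review` callables are assumptions, and no real LLM API is called. Only the first and last phase labels come from the text; the intermediate instructions are placeholders.

```python
from typing import Callable, List, Tuple

History = List[Tuple[int, str]]


def build_prompt(phase_no: int, instruction: str, history: History) -> str:
    """Compose a prompt that carries forward the results of earlier phases."""
    lines = [f"You are assisting with phase {phase_no} of a qualitative analysis."]
    for n, output in history:
        lines.append(f"Result of phase {n}: {output}")
    lines.append(f"Task for phase {phase_no}: {instruction}")
    lines.append("Explain your reasoning step by step before giving the result.")
    return "\n".join(lines)


def run_chain(
    instructions: List[str],
    agent: Callable[[str], str],        # stand-in for a call to an AI agent
    review: Callable[[int, str], str],  # human check: returns the approved text
) -> History:
    """Run the phases in order; a human reviews every agent draft."""
    history: History = []
    for phase_no, instruction in enumerate(instructions, start=1):
        draft = agent(build_prompt(phase_no, instruction, history))
        history.append((phase_no, review(phase_no, draft)))
    return history


if __name__ == "__main__":
    # Placeholder instructions: only the first and last are named in the text.
    phases = (
        ["Formulate the research question."]
        + [f"(instruction for phase {n} goes here)" for n in range(2, 8)]
        + ["Produce the final interpretation."]
    )
    echo_agent = lambda prompt: f"draft based on {len(prompt.splitlines())} prompt lines"
    accept_all = lambda phase_no, draft: draft  # a real reviewer would edit or reject
    for phase_no, result in run_chain(phases, echo_agent, accept_all):
        print(phase_no, result)
```

Passing the `review` step as a separate callable keeps the human in the loop at every phase, in line with the model’s emphasis on the researcher as the responsible reflexive agent.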

Discussion

The accuracy, reliability, and validity of the data and findings are especially important in qualitative research. AI-generated insights result from analyzing data provided by researchers, drawing on the algorithms of large language models (LLMs) along with their training data and contextual understanding. To answer the research question and generate insights for qualitative research, the LLM processes large amounts of data, looking for patterns, themes, and categories, and summarizes them according to the requirements of the chosen qualitative research method. It is important to note that AI does not create entirely new knowledge on its own. The themes or categories it generates are based on what the LLM has already learned from the data it has been given. Thus, the validity of the insights it generates depends directly on the algorithm the LLM uses. Allemang and Sequeda (2024) show that using a knowledge graph improved LLM accuracy from 16% to 54%. This is a considerable gain, but it is still insufficient for AI to serve as a co-researcher. Researchers are constantly striving to improve the accuracy of knowledge generated by AI. Different techniques are used for this purpose, such as grouping all questions in the benchmark, evoking rules related to the body of the query domain, and many more (Allemang & Sequeda, 2024). Other authors have evaluated the performance of various LLMs. In most studies, researchers look at how well ChatGPT, Claude, Gemini, Llama3, and other popular models answer questions and handle different topics, focusing on how complex the questions are and how accurately the AI can respond (Aydın et al., 2025). The goal is to use a variety of prompt engineering techniques ranging from simple zero-shot (Wei et al., 2022), few-shot (Brown et al., 2020), and chain-of-thought prompting (Wei et al., 2022) to complex multimodal CoT (Zhang et al., 2023) or graph prompting (Liu et al., 2023).
However, even with the most sophisticated prompt engineering techniques and with AI trained (in pre- and post-training) on the largest and most diverse datasets possible, the reliability of AI-generated insights remains a challenge. In their detailed study of the ethical issues of generative AI and ways to address them, Huang et al. (2025) found that the trustworthiness and accuracy of AI-generated insights depend on more than data quality or prompt engineering methods. They also depend on the limitations of the algorithms (for example, some models are black boxes, making it hard to confirm whether the generated text is valid and reliable) and on rules and transparency, since current regulations do not always guarantee the reliability of AI text across the different situations in which qualitative data is used.

Ethical Considerations in AI-Assisted Qualitative Research

Ensuring ethical integrity is essential when integrating AI agents with qualitative research workflows. In the context of the AbductivAI-informed Chain-of-Thought (CoT) Prompting protocol, ethical practice is maintained through a combination of transparency, accountability, reflexivity, and collaborative validation. AI agents need to clearly record everything they do—such as coding, suggesting categories, or analyzing data—and make sure to separate their work from human choices, including keeping track of code versions and tagging history for easy reference (Al-kfairy et al., 2024). Abstracts or participant-generated content must be anonymized and processed in compliance with data protection principles, and AI agents must operate on pre-screened and ethically approved datasets (Davison et al., 2024). To avoid accidentally supporting existing biases or dominant ideas, human coders play an important role in interpreting the data, particularly during the stages of discovering new insights and making final judgments. The process requires continuous reflection on how human–agent interactions might shape the knowledge production process, with coders logging and discussing moments of disagreement, uncertainty, or revision (Costa, 2020).
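One way to keep agent actions separate from human choices, with code versions and tagging history, is an append-only audit log. The sketch below is an assumption about how such a record could be structured; the field names, the `AuditEntry` and `AuditTrail` classes, and the actor labels are hypothetical and are not taken from the protocol.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class AuditEntry:
    actor: str             # hypothetical label, e.g. "human:coder_1" or "agent:coder_A"
    actor_type: str        # "human" or "agent", so the two are never mixed up
    action: str            # e.g. "apply_code", "suggest_category", "revise_code"
    segment_id: str        # identifier of the anonymized text segment
    code: str              # the code or category involved
    codebook_version: int  # which version of the coding system was in force
    note: str = ""         # free text: disagreement, uncertainty, rationale
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class AuditTrail:
    """Append-only list of coding actions by humans and agents."""

    def __init__(self) -> None:
        self.entries: List[AuditEntry] = []

    def log(self, actor: str, actor_type: str, action: str, segment_id: str,
            code: str, codebook_version: int, note: str = "") -> AuditEntry:
        if actor_type not in ("human", "agent"):
            raise ValueError("actor_type must be 'human' or 'agent'")
        entry = AuditEntry(actor, actor_type, action, segment_id,
                           code, codebook_version, note)
        self.entries.append(entry)
        return entry

    def by_actor_type(self, actor_type: str) -> List[AuditEntry]:
        """Return only the human or only the agent entries."""
        return [e for e in self.entries if e.actor_type == actor_type]

    def to_jsonl(self) -> str:
        """Serialize the trail as one JSON object per line."""
        return "\n".join(json.dumps(asdict(e)) for e in self.entries)
```

A typical use would be to log an agent’s suggested code for a segment, then log the human’s revision of it under the next codebook version, with the `note` field recording the reason for the disagreement.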

Rigor

In qualitative and mixed methods research, rigor is particularly important because it signals the quality and reliability of a study in which much depends on the researcher’s interpretation (LaDonna et al., 2021; Schwandt et al., 2007). A well-conducted research study must have the potential to be replicated with a different data sample, in different contexts, or under different initial conditions (Johnson et al., 2020). In our study, AI, as a co-researcher, participated in all steps alongside the human researcher. Although AI models are constantly evolving, the codes generated by AI still cannot be blindly trusted. We concur with Christou’s (2023) perspective that human researchers must reflexively evaluate and validate AI-generated codes. We also agree with Mazeikiene and Kasperiuniene (2024) that artificial intelligence can only provide trustworthy qualitative coding if a researcher checks and confirms the AI’s subcategories and categories at each stage. We propose that human-AI collaboration must be an iterative process, with review and tracking of all steps by a human researcher. The AI’s work needs to be transparent: every step and detail must be shared (Hasija & Esper, 2022), and AI-created codes must be checked against those made by human coders (Sinha et al., 2024). Only after confirming all these points can AI and humans work together in social research.
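Checking AI-created codes against human codes can be made concrete with an agreement statistic. The text does not name one; the sketch below uses Cohen’s kappa as a common choice and assumes that each segment receives exactly one code from each coder. The function names and the example labels are illustrative only.

```python
from collections import Counter
from typing import List, Sequence


def cohen_kappa(coder_a: Sequence[str], coder_b: Sequence[str]) -> float:
    """Cohen's kappa for two coders who each assign one code per segment."""
    if len(coder_a) != len(coder_b) or len(coder_a) == 0:
        raise ValueError("both coders must code the same non-empty set of segments")
    n = len(coder_a)
    # Observed agreement: share of segments with identical codes.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Agreement expected by chance, from each coder's code frequencies.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used the same single code throughout
    return (observed - expected) / (1 - expected)


def disagreements(coder_a: Sequence[str], coder_b: Sequence[str]) -> List[int]:
    """Indices of segments the two coders labeled differently, for human review."""
    return [i for i, (a, b) in enumerate(zip(coder_a, coder_b)) if a != b]


if __name__ == "__main__":
    human = ["ethics", "ethics", "method", "method"]  # made-up labels
    agent = ["ethics", "ethics", "method", "ethics"]
    print(cohen_kappa(human, agent), disagreements(human, agent))
```

The list of disagreeing segments matters as much as the coefficient: it tells the human researcher which AI-generated codes to re-examine before accepting them.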

Conclusion: Towards Human-AI Transformative Paradigm Shift

AI’s integration as a co-researcher in qualitative studies represents a revolutionary shift in research paradigms, fundamentally transforming the creation and understanding of knowledge. This evolution extends far beyond enhancing existing methodologies: it represents a complete reconceptualization of qualitative inquiry itself. Examining the literature reveals AI’s trajectory from passive tool to active intellectual contributor. Actor-Network Theory provides the theoretical underpinning for this shift, positioning AI as an engaged participant that actively shapes research alongside human investigators, challenging traditional notions of research agency and expanding possibilities for knowledge creation.

The key part of this change is the rise of complementary intelligence, a teamwork approach in which AI’s ability to handle large amounts of data, spot trends, and create themes works together with humans’ understanding of context, moral decisions, and detailed interpretation. This partnership produces insights neither could generate independently, embodying true co-research. This collaboration is simultaneously reshaping qualitative methodologies. Approaches like “Reconstructive Social Research Prompting” explicitly incorporate AI as an interpretative partner, while new hybrid methodologies expand traditional boundaries. These innovations signal the birth of entirely new research paradigms rather than mere enhancements of existing approaches.

Power dynamics within research teams inevitably transform under this model. While humans maintain the final interpretative authority and ethical responsibility, AI increasingly shapes the direction of research by identifying patterns and providing analytical suggestions. This distributed research agency demands new frameworks for understanding collaborative knowledge production and accountability. The ethical dimensions of this relationship require deliberate attention. AI’s increased agency raises critical questions about transparency, representation, and responsibility that must be addressed through intentional ethical design and ongoing critical reflection. Specialized ethical frameworks for human-AI collaboration become essential for establishing trust in co-produced research outcomes.

Practically, implementing the co-researcher model requires sophisticated research designs with explicit plans for collaboration through iterative workflows, feedback mechanisms, and validation processes. These designs must strategically balance AI’s analytical capabilities with human contextual expertise, creating more complex but potentially more powerful research approaches. Success in this model depends on mutual preparation. Human researchers need comprehensive training in AI capabilities and limitations, while AI systems require specific fine-tuning for qualitative methodologies rather than relying on general-purpose models. This dual preparation establishes the foundation for effective collaboration.

Looking forward, the AI co-researcher concept opens critical directions for future inquiry: developing specialized AI systems for qualitative research, creating validation protocols for co-produced knowledge, exploring how different research traditions might engage with AI collaborators, and investigating how co-researcher dynamics might democratize research access. In simple terms, AI’s shift from being just a tool to a co-researcher marks a major change in how we do research. It creates teamwork that combines different types of intelligence to build knowledge together, while also raising important questions about research ethics, design, training, and how we understand knowledge, questions that will influence the future of qualitative research.

Footnotes

Author Contributions

All authors were personally and actively involved in the work that led to this article and take responsibility for its content.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The work of the first author is funded by national funds through FCT – Fundação para a Ciência e a Tecnologia, I.P., under the Scientific Employment Stimulus - Institutional Call - [CEECINST/00013/2021/CP2779/CT0011] and the CIDTFF.

Declaration of Conflicting Interests

The authors declare that there are no conflicts of interest regarding the research, authorship, and/or publication of this article.