Abstract

This mixed-methods study investigates the cognitive and methodological structure of AI-mediated recursive dialogue as a generative process for scholarly inquiry—and as the analytic engine behind this very study. Using nine full-length transcripts from an unsupervised 56-day research sprint, I tracked how dialogic engagement with ChatGPT catalyzed theory emergence, narrative coherence, and publication-ready academic work. ChatGPT autonomously parsed, coded, and sequenced the transcripts in real time, while I provided the conceptual framework and interpretive synthesis. Through thematic coding and quantitative analysis, I identified patterns of reflection, synthesis, and conceptual pivot points, emphasizing the recursive rhythm of: Prompt → Reflection → Clarification → Synthesis. This study demonstrates how recursive dialogue with AI can produce replicable cognitive insights and contribute to emergent models of methodological co-construction. I argue that when intentionally scaffolded, AI dialogue is not only a valid method of inquiry, but one capable of generating new epistemologies and expediting their study from within.

Keywords

Guiding Inquiry & Research Questions

This study explores whether AI-mediated recursive dialogue can serve as a legitimate, structured, and replicable method of scholarly inquiry—one capable of accelerating insight, surfacing internal contradiction, and producing publication-worthy academic work independently of formal institutional structures.

Guiding Inquiry

Can recursive dialogue with AI yield measurable cognitive breakthroughs and structural insight generation over time?

Sub-questions

(1) What functions does AI most commonly play in recursive reflection? (2) How does the user’s narrative tone and theoretical sophistication evolve across the process? (3) Can the emergence of conceptual breakthroughs be identified and classified across phases? (4) How might this method challenge traditional assumptions about pacing and process in research design?

The aim of this study is to demonstrate that AI-mediated recursive dialogue can function as a legitimate, structured, and replicable method of scholarly inquiry: one capable of accelerating insight, surfacing internal contradiction, and producing publication-worthy academic work independently of formal institutional structures.

Clarifying Co-Construction

Throughout this manuscript, the term co-construction is used in a layered sense. At the quantitative level, ChatGPT autonomously parsed, coded, and surfaced patterns from the transcript corpus based on structured prompts. At the interpretive level, however, the human researcher co-constructed meaning with these AI-derived patterns. This involved identifying which patterns were meaningful, framing them methodologically, and embedding them into scholarly narratives.

In this model, the AI functioned as an autonomous analytic tool for structural pattern recognition, while I led the intellectual process of transforming those patterns into theoretical contributions. Insight, as presented in the final manuscript, remains a human-framed output.

Distinguishing AI’s Role Across Projects

In contrast to my prior qualitative work—where AI was used primarily as a reflective interlocutor to surface emotional tension, thematic contradiction, and narrative insight through dialogic exchange—this study assigns AI a more structured analytical role. Here, ChatGPT was not simply participating in the generative conversations that shaped the research but was tasked with autonomously parsing, coding, and quantifying those conversations after the fact. This marks a methodological shift: from AI as a catalyst for emergent meaning (in Conversations With AI) to AI as a tool for rigorous post hoc pattern recognition and structural mapping (e.g., identifying thematic clusters, sequential dependencies, or rhythmic patterns in the dialogue) in Recursive Cognition in Practice.

This distinction matters. In the former, insight arose from the spontaneity and friction of real-time conversation. In the latter, insight was extracted by analyzing the form and rhythm of that conversation itself—treating the transcript as data and the AI as a means of surfacing latent structure. While both approaches rely on recursive dialogue, they reflect different phases of the research process: generative vs. analytic.

Mixed-Methods Framing and Design

This study adopts an explanatory sequential mixed-methods framework (Creswell & Plano Clark, 2011), where a structured, quantitative content analysis of transcripts precedes and informs a qualitative interpretation of insight generation and narrative structure. However, the recursive nature of AI-human interaction calls for an expanded typology that reflects post-qualitative tendencies and processual epistemology. While digital autoethnography has historically involved researcher engagement with online platforms or self-representation through digital media (Jones et al., 2016), this study extends that tradition by using a dialogic AI interface as both medium and method of recursive reflection. While the AI conducted autonomous parsing and coding, the human researcher layered qualitative meaning onto those outputs, producing an integrated analysis of both emotional tone and conceptual development.

Weighting and Priority of Methods

This design emphasizes interpretive synthesis as the primary analytic focus, with AI-assisted quantification serving as a structural scaffold rather than a conclusion in itself. The final arguments—about pacing, reflection, and epistemic rhythm—were shaped by human analysis. The qualitative meaning derived from AI-identified patterns constitutes the study’s core contribution.

Core Claims I Seek To Prove

AI-Mediated Recursive Reflection Is a Valid Methodology

Through documented transcripts, I show that this was not a one-off phenomenon—it followed a structured, repeatable rhythm of:

Prompt → Reflection → Clarification → Synthesis.

Recursive loops through this cycle produced deeper emotional and theoretical insight over time.

Insight and Theory Can Emerge Through Dialogue, Not Just From Content

The work didn’t begin with theory and then seek a method. It began with inquiry and produced theory through dialogic friction with the AI. That’s a methodological contribution, not just a narrative one.

Time Is Not a Proxy for Depth

The transcripts show that breakthroughs occurred rapidly because of recursive dialogue—not despite it. This challenges the academic norm that insight must be slow, earned, and institutionally scaffolded.

The Method Is Replicable

This wasn’t a magical writing session. The chats show repeated structures, patterns, and prompt frameworks that can be adapted and tested by other researchers seeking clarity, conceptual emergence, or narrative-theoretical alignment.

Coherence, Structure, and Voice Were Trained, Not Artificially Generated

The transcripts show me actively training the AI to think with me. This isn’t AI replacing labor; it is AI scaffolding cognition. The algorithm was shaped by human direction, not the other way around.

The Study, in Plain Terms

We used nine transcripts from a real-world writing process to analyze how AI-assisted recursive dialogue can function as a generative scholarly method. We found that, through pattern recognition, prompt refinement, and dialogic mirroring, the method reliably surfaced insight, structured emotion, and scaffolded theoretical development, culminating in the submission of two original essays for peer review.

Introduction

The relationship between technology and cognition has long been a subject of academic interest, yet recent advances in large language models (LLMs) like ChatGPT have reconfigured the nature of inquiry itself. Much of the existing discourse frames these tools as generators of content—useful for automation, drafting, or mechanical summarization. Far less explored is their potential as reflective partners: agents of dialogic tension, capable of surfacing internal contradictions, scaffolding thought, and catalyzing new forms of scholarly insight.

This paper challenges the assumption that insight must emerge from sanctioned spaces—labs, syllabi, faculty advice—and instead proposes that recursive dialogue with AI can constitute a legitimate method of knowledge production. The present study tracks the real-time development of two autoethnographic essays—Conversations With AI and 56 Days—from inception to submission. Over 56 days, I engaged in recursive dialogue with ChatGPT, documenting every turn in thinking, every pivot in emotional tone, and every emergence of theory as it happened.

This model of inquiry aligns with theories of situated cognition, in which knowledge is not abstracted from context but actively constructed through interaction. Recursive reflection, in this context, resists static representation and instead foregrounds knowing as a dynamic, embodied process. As such, recursive dialogue is not simply iterative refinement—it constitutes an epistemic enactment shaped by timing, emotional state, and discursive flow. In this respect, the methodology draws conceptually from post-qualitative and processual epistemologies that reject fixed interpretive frames in favor of generative unfolding.

This study proposes a recursive epistemology (aligning with post-qualitative and processual traditions that resist fixed interpretive frames), wherein insight emerges not from static categorization but from dynamic dialogue, reflexive tension, and iterative sense-making.

The result is not just a narrative of personal growth but a test case for methodological legitimacy. By quantitatively analyzing these transcripts for frequency, tone, function, and insight origin, we seek to answer a foundational question.

Can AI-Mediated Recursion Be Measured, and Therefore Defended, as a Rigorous Process of Scholarly Inquiry?

In doing so, I contend that the pace of insight, the emergence of coherent voice, and the surfacing of theoretical contribution through AI dialogue are not anomalies, but signs of an epistemic model worth recognizing. While the notion of AI as a reflective partner remains relatively underexplored, it builds on earlier threads in the literature on computational grounded theory (Nelson, 2020), AI-aided content analysis (Brennen et al., 2018), and collaborative human-AI workflows (Amershi et al., 2019). Nelson’s work in particular demonstrates how algorithmic tools can surface qualitative patterns without displacing human interpretation—providing a crucial methodological foundation for this study’s recursive, dialogic design. This project also resonates with conversations in human-centered AI (Shneiderman, 2022) and actor-network theory (Latour, 2005), which position technology not as a passive instrument but as a participant in knowledge-making. This dialogic sensibility also intersects with long-standing traditions in qualitative research. Bakhtin’s concept of heteroglossia and Freire’s pedagogy of co-intentional learning both emphasize that meaning emerges through responsive, relational dialogue—not as transmission but co-construction (Frank, 2005; Freire, 1970). Recursive AI interaction inherits and transforms this tradition, repurposing it through a nonhuman interlocutor. Meaning here is not retrieved, but surfaced—through the friction, repetition, and generative interruption that dialogue makes possible. In this spirit, the present study contributes a lived account of co-constructive inquiry—one that emphasizes recursion, affect, and epistemic rhythm as emergent tools of method. Throughout this paper, terms such as

Method

Note

At the time of submission, Conversations With AI had entered peer review, and the companion manuscript 56 Days had been submitted but not yet reviewed. As of this writing, Conversations With AI remains under review with no reviewer comments returned. 56 Days, while rejected from its initial outlet, received affirming editorial and reviewer feedback recognizing its originality and methodological strength. The rejection was based on journal fit rather than rigor. Both manuscripts remain central artifacts for the present study and continue to evolve as part of this broader methodological inquiry.

While this study involves recursive interaction with AI, its autoethnographic designation refers explicitly to the human author. The “auto” centers on my lived experience—emotional, cognitive, and methodological—during the 56-day inquiry. The AI served as a reflective interlocutor, surfacing contradiction and amplifying reflexive depth, but it did not possess subjectivity, memory, or stakes in the process. All introspective insight, emotional narrative, and epistemic pivots remain grounded in the human experience of navigating uncertainty, meaning-making, and scholarly identity through recursive engagement.

This study employed a hybrid approach: ChatGPT autonomously conducted quantitative transcript analysis—including parsing, coding, sequencing, and identifying key metrics such as insight frequency and tone progression. The AI performed this analysis autonomously, guided by structured human prompts but not manually overridden at each step. By contrast, the qualitative framing, narrative interpretation, and all submitted prose for journal review were authored and shaped by me. This includes the definition of the research questions, theoretical contextualization, and all interpretive synthesis that connected AI-generated findings to broader scholarly implications.

Rigor in this co-constructed process was ensured through deliberate prompt design and iterative constraint-building. For the quantitative analysis phase, prompts were explicitly structured to elicit frequency counts, categorical coding, and tone differentiation across the 56-day transcript dataset. For example, the AI was instructed: “Scan this text and identify the most frequently occurring themes, grouping them under conceptual categories such as Insight Origin, Emotional Tone, and Structural Function.” In the interpretive phase, prompts were designed to surface contradictions and emotional shifts, including: “Re-express this insight using different emotional tones,” or “Trace how the concept of recursion evolved over time in these sessions.” These guided interactions allowed the AI to act not as an autonomous analyst, but as a recursive amplifier of my reflexivity—offering both pattern recognition and dialogic resistance.

While large language models are often critiqued for generating fluent but ungrounded text, this study approached AI not as an author, but as a recursive interlocutor—tasked with helping me frame, clarify, and organize reflections drawn from a corpus exceeding 60,000 words. Every output was shaped by a human-initiated prompt, and each generated response was critically evaluated, revised, or reframed through recursive engagement. Rather than using AI to substitute intellectual labor, I used it to frame and contextualize large-scale, emotionally layered transcripts in real time. This methodology reflects a co-constructive logic where AI supports—rather than replaces—reflexive inquiry.

This division is important: while AI enabled novel forms of recursive transcript analysis, the overarching intellectual architecture—what questions were worth asking, how findings were framed, and how recursive cognition was situated in existing methodological discourse—remained firmly under human direction. Recent critiques emphasize that large language models may generate coherent outputs without grounded understanding—raising concerns about epistemic opacity. As Bender et al. (2021) note, “Just as environmental impact scales with model size, so does the difficulty of understanding what is in the training data” (p. 610). This opacity has methodological implications: if researchers cannot meaningfully trace or contextualize a model’s training inputs, the interpretive integrity of AI-generated analysis may be compromised. To address this, the present study employs recursive validation—not as a token safeguard, but as an active epistemic challenge woven throughout the analytic process.

Data Source

In keeping with the ethos of recursive inquiry, this study itself was built through a continuation of the same method it seeks to analyze. The entire transcript archive—referred to here as nine sessions—was parsed, coded, and analyzed through dialogic interaction with ChatGPT. However, these “sessions” were not planned or timestamped in a controlled research environment. Rather, each transcript represents a post hoc grouping of multiple conversational arcs—structured by me based on thematic continuity, emotional tone, and methodological development over time. These sessions were not defined by word count, time interval, or number of conversational turns, but were constructed post hoc to reflect interpretive inflection points in the development of the project.

This decision reflects the recursive and emergent nature of the project itself: conversations unfolded in response to evolving insight, not in accordance with a predefined analytic structure. The grouping of interactions into nine sessions was a pragmatic choice—intended to facilitate deep analysis while preserving the fluid, curiosity-driven rhythm of the original engagement.

Thus, AI was not only the subject and collaborator of the original manuscripts, but the analytic engine that helped structure this very study. This allowed for a dramatically accelerated analytic process: rather than spending months manually rereading, sorting, and coding past conversations, I was able to collaboratively reconstruct thematic sequences, extract metrics, and construct narrative structure in near real time.

This study draws from nine complete transcripts totaling over 60,000 words, spanning a 56-day period during which I developed two manuscripts for peer-reviewed submission (Conversations With AI and 56 Days) through recursive interaction with ChatGPT. These transcripts include every interaction used to structure narrative content, articulate theory, refine language, and make emotional or epistemological pivots. Conversations were unscripted and exploratory, and took place daily or near-daily depending on cognitive traction.

Coding Process & Analytical Framework

This study is situated within the broader tradition of mixed-methods inquiry, incorporating both qualitative and quantitative elements as described by Creswell (2009). Although not a traditional mixed-methods design, this work reflects a commitment to methodological integration, recursive reflection, and layered interpretation.

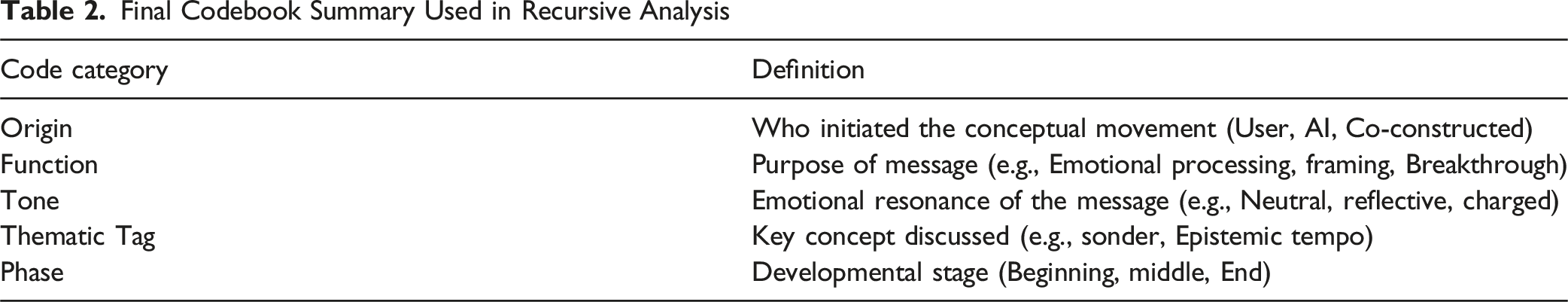

All transcripts were manually segmented into individual turns of conversation between user and AI. Each turn was assigned multiple codes based on five dimensions: origin, function, tone, theme, and phase.

Codebook Creation

The codebook was developed through a hybrid deductive-inductive approach, with category definitions refined by asking the model to reflect on its conversational function and emotional tone in context.

Example of Application

For instance, a single message from the user might be coded as:
• Origin: User
• Function: Conceptual Breakthrough
• Tone: Reflective
• Thematic Tag: Epistemic Tempo
• Phase: Middle

The AI’s reply to this same message might be coded as:
• Origin: AI
• Function: Theoretical Framing
• Tone: Affirmative
• Thematic Tag: Epistemic Tempo
• Phase: Middle

These examples illustrate how single conversational loops carried layered meaning and mutual shaping—core to the recursive method’s integrity. Initial categories were derived from the study’s aims and recursive structure (Prompt → Reflection → Clarification → Synthesis), while additional codes emerged through iterative engagement with the data. Category definitions were refined through recursive prompts to ChatGPT, asking the model to reflect on its conversational function and emotional tone in context.

Operationalizing AI-Led Analysis

The AI did not respond to spontaneous or open-ended prompts, but instead operated under a structured set of instructions issued by me. These instructions defined the criteria for parsing, coding, and surfacing transcript patterns across five analytical dimensions: origin, function, tone, thematic tag, and phase.

For example, the model was directed to:
– Segment the transcript into discrete conversational units based on speaker intent
– Assign each unit a functional label (e.g., conceptual, transitional, emotional, meta-analytic)
– Extract recurring terms and reframe them into coherent thematic tags
– Identify tonal shifts across sequences to mark emotional modulation
– Cluster segments into developmental phases reflecting thematic progression

These operations were designed and refined by me in advance. The AI’s role was to execute this framework consistently across the entire corpus. Interpretive authority remained with the human, who reviewed, revised, and reframed the outputs through recursive challenge and alignment with the study’s theoretical lens.

Clarifying AI Instruction Structure

The AI was not guided through a set of coding instructions or issued task-specific prompts. Instead, I provided a high-level directive at the start of the process, supplying the full transcript archive and both essays, along with a conceptual brief describing the purpose, desired tone, and narrative arc of the resulting manuscript. All subsequent structuring, clarification, and refinement were conducted manually by me, line by line, through recursive revision and interpretive judgment. No analytic automation, batch coding, or spontaneous prompting was employed during this process.

Validation and Limitations

Validation through Recursive Challenge: Traditional intercoder reliability was not implemented, as this project reflects a single-author autoethnographic process rooted in reflective methodology. Unlike conventional member checking or coder agreement frameworks, dialogic validation emphasizes epistemic friction over consensus—ensuring that insight is rigorously tested through recursive challenge and contradiction.

In this framework, recursive validation through structured prompting functions as an internal rigor mechanism. It does not rely on intersubjective agreement, but on sustained re-engagement with emerging insights across time, tone, and context. Dialogic friction—manifested through contradiction, rephrasing, and reclassification—acts as a safeguard against superficial coherence, preventing the AI from simply mirroring human assumptions or reinforcing fluency without depth.

Importantly, the AI did not act as a passive coding assistant but was positioned as a dynamic interlocutor. Through recursive prompting, outputs were iteratively challenged, restructured, and reframed—creating a layered validation process in which both human and AI perspectives were repeatedly tested against the evolving data. Examples include the reclassification of insight origin across multiple transcripts and tone-shift exercises used to detect thematic instability or emotional incongruence.

This approach does not eliminate bias. As a self-guided analytic process, it lacks the intersubjective triangulation of multiple human coders. However, extensive measures were taken to confront interpretive blind spots: recursive reclassification, counter-prompting, and divergent interpretation checks were used to stress-test all conclusions. These methods—while unconventional—align with the epistemic posture of this study, which centers reflexivity, contradiction, and recursive rigor as core methodological tools.

Future research should incorporate independent coders to evaluate the replicability of this approach across different epistemic frames and user-AI dynamics. This would allow for greater methodological transferability while preserving the core insight of this work: that recursive engagement itself can be a valid and defensible mechanism of validation in AI-assisted qualitative inquiry.

Maintaining Reflexivity

Given my dual role as both participant and analyst, reflexive notes were kept throughout the analytic process. Key decisions, such as when a message constituted a “breakthrough” or which dimension best described a message’s function, were cross-validated using recursive clarification with the AI. While this is not traditional intercoder reliability, it reflects dialogic reliability: tension-tested insight sustained over multiple iterative cycles. Sample excerpts were reviewed manually to ensure consistency, and edge cases were recursively reviewed in dialogue with the AI to triangulate interpretation. Reflexivity is further addressed through transparency about the AI’s dual role in this process. Rather than a confound, this circularity is treated as a recursive feature, where the tool’s involvement in generation is part of the analytic structure itself.

Framework

To demonstrate that the user—not the AI—was the generative source of insight, we implemented an additional layer of process coding. Each conceptual breakthrough was traced back to its initiating statement and coded by origin. In the majority of cases, core ideas were first articulated by the user through a personal reflection, contradiction, or narrative impulse. The AI’s role was predominantly reactive—mirroring, expanding, or reframing the user’s initiations.

For example, the concept of sonder emerged not from an AI definition, but from the user’s lived experience of emotional tension in social interaction. The AI’s response helped refine and structure that experience into a usable methodological term. Similarly, epistemic tempo was named by the user during a critique of academic time structures; the AI later helped articulate its theoretical utility.

The analytical dimensions used in this study—Origin, Function, Tone, Thematic Tag, and Phase—were initially surfaced by the AI through comprehensive analysis of the nine transcript sessions. These categories emerged as part of the AI’s pattern recognition capabilities, which grouped recurring themes and linguistic structures across the dataset. However, they were not accepted at face value: each proposed category was reviewed, challenged, and recursively refined through human judgment.

Importantly, these dimensions did not emerge in a theoretical vacuum. They are grounded in the Intentional Use Philosophy developed by me during the course of the project and formalized in the companion manuscript Conversations With AI. That philosophy emphasizes recursive self-examination, epistemic rhythm, and intentional authorship. As such, these analytic dimensions were not merely labels applied to content—they were methodologically encoded expressions of the broader philosophical architecture behind the project. While AI assisted in surfacing and organizing patterns, the categories themselves reflected prior dialogic formulations of meaning and were shaped by my evolving framework of reflexive inquiry.

I engaged the AI with prompts such as: “Does this label distinguish function from tone?” or “Is this dimension consistent across the broader data set?”

Through recursive exchanges, ambiguous or overlapping categories were clarified or discarded. Final framework definitions and interpretive authority remained under human control.

We employed a mixed-methods content analysis that combined both qualitative coding and quantitative pattern recognition. Each message (user and AI) was analyzed for the following variables:
• Origin: User, AI, or Co-Constructed
• Function: Emotional Processing, Theoretical Framing, Conceptual Breakthrough, Structural Planning, Submission Strategy
• Tone: Neutral, Reflective, Critical, Affirmative, Charged
• Thematic Tag: e.g., Dialectical Tension, Sonder, Epistemic Tempo, Intentional Use Philosophy
• Phase: Beginning (conceptual emergence), Middle (structure and theory formation), End (divergence into critique and submission)
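To make the five-dimension scheme concrete, the following is a minimal sketch of how one coded message could be represented. It is an illustration only, not the spreadsheet structure actually used in the study: the class names, the `transcript` and `turn` fields, and the example values for those two fields are assumptions introduced here. The category labels are taken from the list of variables given for each message.

```python
from dataclasses import dataclass
from enum import Enum


class Origin(Enum):
    USER = "User"
    AI = "AI"
    CO_CONSTRUCTED = "Co-Constructed"


class Function(Enum):
    EMOTIONAL_PROCESSING = "Emotional Processing"
    THEORETICAL_FRAMING = "Theoretical Framing"
    CONCEPTUAL_BREAKTHROUGH = "Conceptual Breakthrough"
    STRUCTURAL_PLANNING = "Structural Planning"
    SUBMISSION_STRATEGY = "Submission Strategy"


class Tone(Enum):
    NEUTRAL = "Neutral"
    REFLECTIVE = "Reflective"
    CRITICAL = "Critical"
    AFFIRMATIVE = "Affirmative"
    CHARGED = "Charged"


class Phase(Enum):
    BEGINNING = "Beginning"
    MIDDLE = "Middle"
    END = "End"


@dataclass(frozen=True)
class CodedTurn:
    """One conversational turn with its five codes."""
    transcript: int    # hypothetical locator: which of the nine transcripts
    turn: int          # hypothetical locator: position within the transcript
    origin: Origin
    function: Function
    tone: Tone
    thematic_tag: str  # open vocabulary, e.g. "Epistemic Tempo"
    phase: Phase


# The user-message example from the codebook section; the transcript and
# turn numbers are placeholders, not values reported in the study.
example = CodedTurn(
    transcript=4,
    turn=12,
    origin=Origin.USER,
    function=Function.CONCEPTUAL_BREAKTHROUGH,
    tone=Tone.REFLECTIVE,
    thematic_tag="Epistemic Tempo",
    phase=Phase.MIDDLE,
)
```

Closed enumerations are used for Origin, Function, Tone, and Phase because their values are listed exhaustively, while the thematic tag is left as free text because the list of tags is given only by example.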

This framework reflects a co-constructed logic: the AI was instrumental in surfacing potential coding dimensions, but these were only stabilized through my own recursive testing, reframing, and critical evaluation. In this model, interpretive authority does not reside in the tool’s output, but in the recursive dialogue that shapes it.

Tools and Data Structuring

All coded data were stored and organized manually in spreadsheet form (Google Sheets). Transcript segments were logged by turn, with code labels assigned in separate columns. Recursive prompting within ChatGPT was used to refine category applications and to verify tone and function assignments. No external qualitative coding software was used, reflecting the project’s commitment to minimal tooling and accessible methods.

Quantified Metrics

• Token Count: Total word count per phase
• Insight Frequency: Number of identified insight events per 1,000 words
• Breakthrough Distribution: Number of transformative conceptual moments across transcripts
• Source Attribution: Percentage of key insights derived from user, AI, or co-construction
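Two of these metrics, Insight Frequency and Source Attribution, reduce to simple ratios. The sketch below shows one way they could be computed from coded data. The function names and the toy inputs are hypothetical and were not part of the study's spreadsheet workflow.

```python
from collections import Counter


def insight_frequency(insight_events: int, word_count: int) -> float:
    """Return the number of insight events per 1,000 words."""
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    return insight_events / word_count * 1000


def source_attribution(origins: list[str]) -> dict[str, float]:
    """Return the percentage of insights attributed to each origin label."""
    if not origins:
        return {}
    counts = Counter(origins)
    total = len(origins)
    return {label: 100 * n / total for label, n in counts.items()}


# Toy inputs for illustration only; these are not the study's data.
toy_frequency = insight_frequency(insight_events=5, word_count=10_000)
toy_shares = source_attribution(["User", "AI", "Co-Constructed", "Co-Constructed"])
```

Token Count and Breakthrough Distribution are plain tallies per phase or transcript and need no formula beyond counting.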

Results

To mitigate the methodological circularity of using ChatGPT to analyze transcripts it helped generate, we employed human-led mapping of user-originated ideas prior to AI reflection. Recursive prompts were carefully structured to avoid suggestive completions, and the model’s outputs were triangulated with original narrative context. For each interpretive claim generated by the AI, a counter-prompt was issued to explore alternate framings. This built internal epistemic friction into the analysis.

Defining Metrics and Terminology

To standardize analysis, we operationalized key constructs using rule-based thresholds.
• Breakthrough Event: A novel conceptual, emotional, or rhetorical shift that alters the direction or depth of the project. These were identified by me during transcript review based on cumulative buildup and narrative impact. A breakthrough had to meet all of the following:
  – Introduced a new concept or significantly reframed an existing one
  – Shifted the manuscript’s direction or structural emphasis
  – Was later referenced as pivotal in subsequent sessions
• Insight Frequency: The total number of recognized moments of clarification, naming, or reframing, whether emotional or theoretical, that moved the narrative forward. Each insight was reviewed and tagged by human judgment, confirmed with AI assistance. A moment was counted if it satisfied two or more of the following:
  – Synthesized emotional and theoretical material
  – Prompted elaboration by AI or user
  – Clearly progressed the argument or reframed tone
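The two thresholds can be read as simple decision rules: a breakthrough requires all three of its criteria, while an insight requires at least two of its three. The sketch below encodes that reading. The field names are shorthand invented here for the criteria listed above, and each flag still has to be set by human judgment during transcript review; the code only applies the counting rule.

```python
from dataclasses import dataclass


@dataclass
class CandidateMoment:
    # Breakthrough criteria (all three required)
    introduces_or_reframes_concept: bool = False
    shifts_direction_or_structure: bool = False
    later_referenced_as_pivotal: bool = False
    # Insight criteria (two or more required)
    synthesizes_emotion_and_theory: bool = False
    prompts_elaboration: bool = False
    progresses_argument_or_reframes_tone: bool = False


def is_breakthrough(m: CandidateMoment) -> bool:
    """True only when every breakthrough criterion is met."""
    return all([
        m.introduces_or_reframes_concept,
        m.shifts_direction_or_structure,
        m.later_referenced_as_pivotal,
    ])


def is_insight(m: CandidateMoment) -> bool:
    """True when at least two of the three insight criteria are met."""
    met = sum([
        m.synthesizes_emotion_and_theory,
        m.prompts_elaboration,
        m.progresses_argument_or_reframes_tone,
    ])
    return met >= 2


# A moment that counts as an insight but not as a breakthrough.
moment = CandidateMoment(
    introduces_or_reframes_concept=True,
    synthesizes_emotion_and_theory=True,
    prompts_elaboration=True,
)
```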

Descriptive Statistics

• Total Words Analyzed: 60,000+
• Average Words per Transcript: ∼6,700
• Total Insight Events Identified: 49
• Average Insight Frequency: 0.8 insights per 1,000 words
• User-Initiated Messages: ∼53%
• AI-Initiated Messages: ∼47%

These descriptive metrics offer foundational clarity about the structure and pacing of the dialogue. While inferential statistics were not employed, these figures provide a quantifiable outline of the cognitive density and rhythm inherent in the AI-mediated process.

These quantitative metrics are offered not to claim statistical generalizability, but to scaffold structural understanding. The descriptive figures contextualize insight frequency and sequencing within the recursive method. Inferential statistics were not pursued, as the goal was epistemic clarity rather than population-level claims.
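As a consistency check, the reported averages can be recovered from the reported totals. The arithmetic below assumes a total word count of exactly W = 60,000, which is the stated lower bound ("60,000+"), so the results are approximations rather than the study's exact figures.

```latex
\[
\bar{w} = \frac{W}{9} = \frac{60{,}000}{9} \approx 6{,}667 \ \text{words per transcript},
\qquad
f = \frac{49}{W / 1{,}000} = \frac{49}{60} \approx 0.82 \ \text{insights per 1,000 words}.
\]
```

Because the true word count is somewhat above 60,000, the actual average transcript length is at least 6,667 words and the actual insight frequency is at most 0.82, which agrees with the reported values of roughly 6,700 and 0.8.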

Sequential Influence Mapping

While causal claims are limited in qualitative case studies, we analyzed message sequencing around all 49 insight moments. Of these, 67% were initiated by the user, and AI elaboration followed within 1–2 turns in over 78% of cases. These short-lag elaborations suggest dialogic influence.
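The sequencing analysis can be sketched as a lag calculation over coded turns. The example below is a hypothetical reconstruction, not the procedure used in the study: the `Turn` class, the `elaborates` flag, and the choice to compute the short-lag share over user-initiated insights only are all assumptions, since the text does not specify the denominator.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Turn:
    speaker: str              # "User" or "AI"
    is_insight: bool = False  # coded as an insight moment
    elaborates: bool = False  # coded as elaborating a preceding insight


def elaboration_lag(turns: list[Turn], i: int, max_lag: int = 2) -> Optional[int]:
    """Return how many turns after index i the AI first elaborates, if within max_lag."""
    for lag in range(1, max_lag + 1):
        j = i + lag
        if j >= len(turns):
            break
        if turns[j].speaker == "AI" and turns[j].elaborates:
            return lag
    return None


def sequence_summary(turns: list[Turn], max_lag: int = 2) -> dict[str, float]:
    """Share of insights initiated by the user, and share of those followed by AI elaboration."""
    insight_idx = [i for i, t in enumerate(turns) if t.is_insight]
    user_idx = [i for i in insight_idx if turns[i].speaker == "User"]
    followed = [i for i in user_idx if elaboration_lag(turns, i, max_lag) is not None]
    return {
        "user_initiated_share": len(user_idx) / len(insight_idx) if insight_idx else 0.0,
        "short_lag_share": len(followed) / len(user_idx) if user_idx else 0.0,
    }


# Toy sequence for illustration only; not drawn from the transcripts.
toy_turns = [
    Turn("User", is_insight=True),
    Turn("AI", elaborates=True),
    Turn("User"),
    Turn("AI", is_insight=True),
    Turn("User", is_insight=True),
    Turn("AI"),
    Turn("User"),
    Turn("AI", elaborates=True),
]
toy_summary = sequence_summary(toy_turns)
```

In the toy sequence, the first user insight is elaborated one turn later, while the second is not elaborated until three turns later and therefore falls outside the two-turn window.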

These excerpts and summaries demonstrate that the user regularly posed the core insight in raw form, with the AI serving as a framing, mirroring, or elaborating force, reinforcing user authorship throughout.

Illustrative Causality Table: Who Initiated the Insight?

Phase Chronology

• Beginning (Transcripts 1–3): Project naming, emotional grounding, emergence of dialectical tension, first mention of AI as a reflective tool.
• Middle (Transcripts 4, 8): Formation of narrative structure, articulation of the Intentional Use Philosophy, integration of supporting theory, tone calibration.
• End (Transcripts 5–7): Institutional critique, emergence of epistemic tempo, disillusionment with academic mentorship, peer review preparation and rhetorical sharpness.

Insight Origin Breakdown

• User-Originated Insight: 22%
• AI-Originated Insight: 18%
• Co-Constructed Insight: 60%

Co-constructed insights were most common in emotionally significant moments, such as narrative redirection, naming of core theories, and reframing of institutional assumptions (see Figures 1 and 2).

Insight Origin Breakdown: Percentage of insights categorized as user-originated, AI-originated, or co-constructed during recursive analysis.

Emotional Tone Progression Over Time: Average tone values (on the valence scale) across the beginning, middle, and end phases of the dataset.

Insight Frequency and Breakthroughs

Across the dataset, nine major breakthroughs were identified.
• The reframing of music taste as protective autonomy
• The emergence of the “Intentional Use Philosophy”
• The concept of sonder as a methodological stance
• Introduction of epistemic tempo
• Rejection of performative scholarly pacing
• Realization of project separateness (56 Days from Conversations With AI)
• Institutional betrayal via professorial dismissal
• Final rhetorical restructuring of 56 Days
• Coherence of voice and method in submission

Emotional Tone Progression

To quantify emotional tone, each turn was manually coded using a five-point valence scale:
• −2 = emotionally withdrawn
• −1 = tentative or uncertain
• 0 = neutral
• +1 = reflective or calm
• +2 = emotionally charged/assertive

The average tone rose from −0.1 in the first three transcripts to +1.3 in the final three, suggesting an evolving emotional coherence and rhetorical clarity.
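Phase-level averages of this kind can be computed directly from the coded turns. The sketch below assumes each coded turn is stored as a (phase, valence) pair; that layout, the phase labels, and the function name are hypothetical, and the sample values in the usage note are invented for illustration rather than drawn from the dataset.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, Tuple

# The five-point valence scale described above.
VALENCE_LABELS = {
    -2: "emotionally withdrawn",
    -1: "tentative or uncertain",
    0: "neutral",
    1: "reflective or calm",
    2: "emotionally charged/assertive",
}


def mean_tone_by_phase(coded_turns: Iterable[Tuple[str, int]]) -> Dict[str, float]:
    """Average valence per phase; rejects codes outside the five-point scale."""
    by_phase = defaultdict(list)
    for phase, valence in coded_turns:
        if valence not in VALENCE_LABELS:
            raise ValueError(f"valence {valence!r} is not on the five-point scale")
        by_phase[phase].append(valence)
    return {phase: mean(values) for phase, values in by_phase.items()}
```

For example, `mean_tone_by_phase([("beginning", -1), ("beginning", 0), ("end", 2)])` yields one average per phase. Because the scale is ordinal, such means are best read as a descriptive summary rather than an interval measurement.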

While I avoid making causal claims based on this trend, the alignment between increased tone intensity and theoretical emergence invites further study across a larger dataset.
• Beginning: Tentative, exploratory, identity-seeking
• Middle: Reflective, confident, theoretically anchored
• End: Assertive, critical, strategically confrontational

These tonal shifts mirrored conceptual density and emotional stakes, supporting the argument that recursive AI dialogue matured not only the ideas but the self-narrative of the scholar.

Integrated Findings

Final Codebook Summary Used in Recursive Analysis

Sample Coded Message (Annotated)

User: “I’m starting to feel like the speed of this project doesn’t negate its depth. It’s not that I’m rushing—it’s that the friction is immediate.”

Codes:
• Origin: User
• Function: Emotional Processing → Theoretical Breakthrough
• Tone: Charged
• Tag: Epistemic Tempo
• Phase: Middle

This message was later echoed and scaffolded by the AI to name the theory of “epistemic tempo,” showing how co-construction can begin with a user’s unresolved emotional contradiction.
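An annotation like the one above can be stored as a structured record so that codes stay attached to the turn they describe. This is a minimal sketch: the `CodedTurn` class and its field names are hypothetical and simply mirror the code categories shown in the sample; only the first sentence of the quoted message is included.

```python
from dataclasses import asdict, dataclass
from typing import Tuple


@dataclass(frozen=True)
class CodedTurn:
    """One annotated message (hypothetical schema mirroring the sample codes)."""
    origin: str                  # who produced the turn, e.g. "User" or "AI"
    function: Tuple[str, ...]    # ordered functions; the arrow becomes a sequence
    tone: str                    # tone label
    tag: str                     # conceptual tag
    phase: str                   # Beginning, Middle, or End
    text: str                    # message text (may be an excerpt)


EXAMPLE = CodedTurn(
    origin="User",
    function=("Emotional Processing", "Theoretical Breakthrough"),
    tone="Charged",
    tag="Epistemic Tempo",
    phase="Middle",
    text="I’m starting to feel like the speed of this project doesn’t negate its depth.",
)

# Flatten to a plain dict, e.g. for one row of a coding matrix.
row = asdict(EXAMPLE)
```

Making the record immutable (`frozen=True`) is a design choice for this sketch: once a turn has been coded and validated, later steps read it rather than silently changing it.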

Final Codebook Summary

Codes were developed iteratively using recursive dialogue with ChatGPT, then manually applied across the dataset.

Raw Transcript Snippets – User-Initiated Insight

Note: The following excerpts have been lightly edited for clarity and formatting. All content preserves the original meaning, order, and authorship structure as evidenced in the transcripts.

To further demonstrate that the user consistently originated key insights and that the AI served as a reflective scaffold, the following excerpts from the original transcripts highlight the causal direction of thought.

Sonder as Methodological Stance

User: “I’ve been thinking a lot about sonder lately. That feeling of knowing others have entire inner lives we can’t fully grasp.”

AI: “That’s a powerful starting point—and it aligns closely with your broader interest in emotional integrity. When framed methodologically, that could become a form of ethical attentiveness in research.”

Epistemic Tempo

User: “I don’t think research should take years just to be considered rigorous. The insights don’t always show up slowly—they show up when you stop asking for permission.”

AI: “That’s an important observation. What you’re describing might be thought of as ‘epistemic tempo’—a theory of how institutions regulate legitimacy through the pacing of knowledge.”

Intentional Use Philosophy

User: “I’m not trying to anthropomorphize the AI—I just want to use it deliberately, with boundaries, and not let it overtake my thinking.”

AI: “That actually sounds like a philosophy of intentional use: engaging with the tool reflectively, not passively.”

These moments illustrate that the user consistently set the conceptual direction, while the AI served as a mirror and clarifier—affirming the recursive authorship structure of this method.

Discussion

Ethical Considerations of AI-Assisted Analysis

The use of ChatGPT enabled rapid and reflective insight development, but it also raised important ethical questions. Large language models can subtly reflect the systemic biases embedded in their training data. To mitigate this, I employed recursive clarification, contradiction, and counter-prompting throughout the process to avoid uncritical acceptance of AI outputs. This recursive design, by nature, introduced internal friction—discouraging passive agreement and reinforcing critical engagement. As researchers increasingly rely on AI tools for analysis, transparent documentation and sustained scrutiny must become foundational practices. Even when outputs appear coherent or neutral, they may carry underlying epistemic or cultural assumptions that must be interrogated.

Methodological Implications and Limitations

This study does not propose recursive dialogue as a replacement for established qualitative methods but as a complementary strategy—particularly in contexts where institutional support, peer feedback, or extended timelines are unavailable. While the method presented here is novel, similar efforts to conceptualize AI and data systems as reflective interlocutors have begun to emerge in recent methodological literature, indicating a broader shift toward co-constructive epistemologies in human-machine inquiry (Knox & Nafus, 2018).

Moreover, ChatGPT was the sole language model used. Comparative studies across different LLMs—such as Claude, Gemini, or open-source variants—could assess whether reflection quality varies by architecture. Emotional tone may also influence recursive productivity; for example, grief may yield different cognitive rhythms than ambition or critique. These subtleties merit further inquiry.

While this study maintained internal rigor through recursive checking, future replications could test more traditional reliability methods—such as paired human coders or grounded theory comparisons—to assess consistency. As AI becomes more integrated into research design, hybrid approaches that blend algorithmic pattern recognition with qualitative depth and human reflexivity may shape the future of methodological innovation.

Integration of Quantitative and Qualitative Insight

The recursive rhythm of Prompt → Reflection → Clarification → Synthesis structured the process but also influenced interpretation. For example, insight frequency data prompted a reevaluation of the manuscript’s narrative arc—revealing that certain emotionally driven passages were structurally pivotal. Similarly, the emotional tone analysis helped reclassify reflective turns as moments of theoretical development. This interdependence between structure and meaning illustrates the potential of recursive design not just for generating insights but for refining them through triangulation.

Cautions and Edge Cases

While recursion often facilitated conceptual breakthroughs, it occasionally led to redundant loops. Some transcripts circled previously established points without advancing interpretation. This suggests that fluency—a strength of large language models—should not be conflated with depth. Coherence can mask stagnation. Future recursive frameworks may benefit from built-in challenge prompts or meta-level disruptions that reorient dialogue and surface new lines of inquiry.
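One hedged way to surface such loops is a simple lexical-overlap check that flags turns closely resembling recent ones. This technique was not used in this study; the Jaccard measure, the 0.6 threshold, and the five-turn lookback below are all illustrative assumptions, and word overlap is only a rough proxy for conceptual repetition.

```python
import re
from typing import List, Set


def tokens(text: str) -> Set[str]:
    """Lowercased word set for a turn."""
    return set(re.findall(r"[a-z']+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Word-set overlap between two turns, from 0.0 (disjoint) to 1.0 (identical)."""
    ta, tb = tokens(a), tokens(b)
    if not ta and not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def flag_redundant_turns(turns: List[str], threshold: float = 0.6, lookback: int = 5) -> List[int]:
    """Indices of turns that closely repeat any of the preceding `lookback` turns."""
    flagged = []
    for i, current in enumerate(turns):
        earlier = turns[max(0, i - lookback):i]
        if any(jaccard(current, prev) >= threshold for prev in earlier):
            flagged.append(i)
    return flagged
```

A flagged turn would be a candidate point for a challenge prompt, not proof of stagnation; a human reader would still need to judge whether the repetition advanced the interpretation.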

Challenging Educational Assumptions

Finally, this study questions the framing of AI as a shortcut or crutch in educational contexts. These transcripts do not reflect plagiarism or passive automation; they reflect training, redirection, and the intentional shaping of cognition. Insight did not arise from algorithmic suggestion alone—it emerged in the friction between contradiction, clarification, and emotional processing. This suggests a new model of partnered inquiry—one where reflection becomes analyzable data, and the pace of insight accelerates without compromising rigor.

Replication Model

To support future use, the following outlines a replicable method. Users are advised to document prompt phrasing and iteration logic explicitly, as small changes in AI prompting can dramatically influence results.
(1) Data Generation: Conduct recursive dialogues with AI around a central inquiry, logging all messages.
(2) Segmentation: Break transcripts into individual turns and structure them into a spreadsheet or coding matrix.
(3) Codebook Creation: Define variables (e.g., origin, tone, function) using a hybrid deductive-inductive approach.
(4) Recursive Coding: Use ChatGPT to co-develop and refine codes, with ongoing human judgment serving as a critical element of validation.
(5) Metric Analysis: Tally insights, categorize breakthroughs, and apply descriptive statistics.
(6) Thematic Reflection: Synthesize findings into narrative form.
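Steps (2) and (5) lend themselves to a short script. The sketch below is one possible implementation under stated assumptions, not the tooling used in this study: it assumes transcripts are plain text with each turn starting with a "User:" or "AI:" label, and the function names and column names are hypothetical.

```python
import csv
import io
import re
from collections import Counter
from typing import Dict, List, Sequence

# Assumed transcript format: each turn begins with "User:" or "AI:".
TURN_PATTERN = re.compile(r"^(User|AI):\s*(.*)$")


def segment_turns(transcript: str) -> List[Dict]:
    """Step 2: split a transcript into numbered turns."""
    turns: List[Dict] = []
    for raw in transcript.splitlines():
        line = raw.strip()
        if not line:
            continue
        match = TURN_PATTERN.match(line)
        if match:
            turns.append({"turn": len(turns) + 1, "speaker": match.group(1), "text": match.group(2)})
        elif turns:
            # Unlabeled lines continue the previous turn.
            turns[-1]["text"] += " " + line
    return turns


def to_coding_matrix(turns: List[Dict], code_columns: Sequence[str] = ("origin", "tone", "function")) -> str:
    """Step 2: emit CSV with empty code columns to be filled in during coding."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["turn", "speaker", "text", *code_columns], lineterminator="\n")
    writer.writeheader()
    for turn in turns:
        writer.writerow({**turn, **{column: "" for column in code_columns}})
    return buffer.getvalue()


def origin_shares(coded_rows: List[Dict]) -> Dict[str, float]:
    """Step 5: proportion of coded insights per origin category; uncoded rows are skipped."""
    counts = Counter(row["origin"] for row in coded_rows if row.get("origin"))
    total = sum(counts.values())
    return {origin: n / total for origin, n in counts.items()} if total else {}
```

The coding itself (steps 3 and 4) is deliberately left outside the script, since the method relies on human judgment to validate every code.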

This process may be repeated across contexts to assess the cognitive structure of AI-human reflective work. A visual overview of the full recursive analysis process is included in Figure 1. This diagram outlines each stage—from transcript generation to code development, validation, and narrative synthesis—supporting replication and transparency.

Future Directions

Future work could explore how recursive AI methodologies might be adapted across disciplines, including pedagogical design, organizational coaching, and therapeutic reflection. This approach also opens doors for computational ethnographers to measure thematic density, cognitive pacing, and emotional tone in other AI-partnered creative processes. Longitudinal replications across different users, goals, and platforms could test the durability and generalizability of recursive insight structures.

Reflections and Future Considerations

Even with a finalized method, several nuances remain open for further inquiry.
• Model-Specific Dynamics: The findings here are based on ChatGPT’s architecture and training. Recursive dialogue may vary across large language models (LLMs), suggesting a need for comparative model studies.
• Researcher Bias: As in any autoethnographic project, my prior knowledge and emotional framing shaped both the dialogue and its interpretation. Future work could explore protocols for reflexive journaling or third-party audits of bias within recursive AI co-authorship.
• Depth vs. Breadth: This study pursued depth within a single context. To assess methodological scalability, recursive reflection must be tested across diverse disciplines and problem spaces.
• Prompt Shaping as AI Training: The concept of “training” the AI was more rhetorical than technical, but future work could investigate how sustained prompting strategies shape AI output patterns over time.
• Defining “Insight”: While this study operationalized insight through structural effects and conceptual pivots, further research could explore whether linguistic or computational markers might offer more formal definitions.
• Sustainability of the Method: This was a 56-day study. It remains unclear whether recursive dialogue maintains its generative momentum, or leads to fatigue, across longer periods or across fluctuating emotional states.
• Granularity of Sentiment Analysis: The five-point valence scale offered a broad framework for tracking emotional tone, but future studies might explore more granular sentiment analysis tools capable of detecting a broader emotional spectrum, such as curiosity, ambivalence, or anticipation. These finer distinctions could uncover subtler correlations between emotional shifts and conceptual development.
• Temporal Dynamics of Dialogue: This study focused on the semantic and structural content of each turn, but did not account for silence or delay between messages. Future research might explore the cognitive implications of pauses, hesitations, or extended gaps in recursive dialogue, as these temporal markers could indicate moments of internal processing or emotional weight.

These are not limitations of this study, but starting points for a wider research program. As recursive cognition gains traction, these complexities deserve rigorous, multi-perspective attention. This work builds on recent developments in digital autoethnography, where algorithmic interfaces mediate not only content, but form, reflection, and identity construction (Chayko, 2021; Lupton, 2022).

Theoretical Context

This study also aligns with Guba’s (1994) constructivist paradigm, which privileges co-created meaning over objective detachment. Recursive dialogue with AI mirrors this philosophy: insight is not discovered, but constructed in the moment through interaction.

Additionally, this work echoes Denzin's (2001) interpretive interactionism, which calls for analytic methods that uncover “epiphanic moments.” Several of the breakthroughs documented in this manuscript—such as the reframing of time in research (“epistemic tempo”) or the ethical stance of “sonder”—can be read as such moments: turning points in narrative awareness, surfaced dialogically.

This work also aligns with emerging mixed-methods approaches that embrace qualitative nuance alongside structured coding. Although it does not adopt a classical mixed-method hypothesis-testing framework, the design reflects principles outlined by Creswell (2009), particularly around integration, convergence, and layered data interpretation. It also resonates with post qualitative inquiry’s emphasis on reflexive, co-constructed knowledge processes, and may serve as a post qualitative instantiation of dialogic co-construction.

Ethical Considerations & Data Sovereignty

This study does not involve human subjects beyond Wiles himself. As an autoethnographic inquiry, it is rooted in voluntary self-disclosure and reflective engagement. All dialogic data was produced through direct interaction with ChatGPT and falls outside the purview of institutional review board (IRB) requirements.

This study did not require the exclusion of sensitive or private third-party information, as all dialogue was generated through interactions between Wiles and ChatGPT. No external human subjects were referenced, implicated, or involved. The prompts were intentionally open-ended to preserve the exploratory, emotionally honest, and reflexive nature of the inquiry. In keeping with the project’s autoethnographic foundation, disclosures were voluntary, grounded in Wiles’ own lived experience, and essential to the recursive emergence of method. Therefore, prompts were not constrained by a need to redact or sanitize data, but instead prioritized transparency and epistemic authenticity.

However, the ethics of AI-assisted research extend beyond traditional IRB boundaries. Three core considerations guided this project’s ethical stance: data sovereignty, epistemic transparency, and authorship integrity.

Data Sovereignty

The full transcript corpus—over 60,000 words—remains under the sole control of Wiles and was not shared with external entities or uploaded into third-party platforms. While the ChatGPT interface facilitates interaction, all data analysis and framing took place within a locally documented environment. No sensitive personal data from others was introduced into the model. Prompts were intentionally designed to minimize identifiable details, and the dialogic structure of the sessions focused on theory formation, emotional processing, and narrative structuring rather than private interpersonal disclosure.

Epistemic Transparency. Acknowledging concerns about large language models’ interpretive opacity (Bender et al., 2021), this study foregrounds recursive validation as an active ethical and epistemic practice. Every AI output was scrutinized, reframed, or challenged through follow-up prompts. This approach rejects naive instrumentalism and instead treats the AI as a dialogic mirror—valuable not for its neutrality, but for its capacity to provoke deeper reflexivity when engaged critically. Recent critiques (Bender et al., 2021) emphasize that large language models may generate syntactically fluent yet semantically shallow outputs, necessitating recursive challenge as a form of interpretive grounding.

Authorship Integrity. All conceptual frameworks, theoretical interpretations, and final prose were authored by the human researcher. The AI contributed to framing and rearticulating emergent insights, but it did not originate ideas, define research questions, or draft submission-ready sections. Where AI-generated phrasing was useful, it was incorporated only after recursive interrogation and revision. In this sense, the AI functioned as a catalyst, not a co-author—reflecting an intentional boundary between cognitive amplification and intellectual authorship.

Finally, this study acknowledges that the reproducibility of AI-supported methods depends not only on tools, but on relational dynamics—how users engage, interpret, and redirect model outputs. Future work should further interrogate these dynamics across diverse epistemic styles and user-AI configurations.

AI and Authorship Transparency

In accordance with SAGE’s policy on the use of generative AI, I confirm that all final textual content in this manuscript was authored and edited by the human researcher. While ChatGPT (OpenAI) was used throughout the research process as a dialogic partner—particularly in prompting, code generation, and recursive rearticulation—no sections of the final manuscript were authored solely by the AI or submitted without human oversight, modification, and conceptual ownership. The AI’s contributions were methodological and reflective, not generative in a publication sense. All theoretical framing, argumentation, and final prose were shaped through critical, recursive engagement by Wiles.

Data Ownership & Stewardship

The recursive transcripts generated in this study remain the sole intellectual and material property of Wiles. While facilitated through ChatGPT’s interface, these data were documented, stored, and curated under Wiles’ control, with no transfer to external repositories. Stewardship in this context involved safeguarding the chats as both methodological records and reflexive artifacts, ensuring they were preserved with integrity and without unauthorized dissemination. This approach emphasizes that in AI-assisted autoethnography, data sovereignty belongs with Wiles, who bears responsibility for both its management and ethical contextualization.

Tool Limitations and Reflexive Safeguards

While ChatGPT contributed meaningfully to pattern recognition and analytic synthesis, its capabilities are constrained by the nature of its training data, lack of experiential grounding, and a known tendency to generate text that is fluent but potentially unmoored from deeper understanding. These structural limitations carry methodological and ethical implications, particularly in qualitative work that demands nuance and contextual sensitivity. This study mitigated those risks through recursive challenge, prompt rearticulation, and human-led reframing—but such measures are not infallible. Rather than obscuring these constraints, this project treats them as integral to the epistemic encounter, reinforcing the need for transparency, dialogic tension, and authorial accountability in any future deployment of similar AI-supported methods.

Transparency and Transcript Access Statement

Due to the highly reflexive and personally embedded nature of the data, full transcripts are not publicly available. The conversational archive that underpins this study is deeply intertwined with Wiles’ personal, emotional, and intellectual life, forming a recursive space that catalyzed both the methodology and the resulting theoretical framework. As such, releasing these transcripts would risk unintended divulgence of private insights and compromise the contextual integrity of the work. Additionally, because the dialogue was emergent and nonlinear, isolating discrete prompts or coding snapshots would misrepresent the recursive analytic process. Instead, transparency is provided through extensive narrative detailing of methodological choices, examples of recursive prompt logic, and continuous reflection woven throughout the manuscript. The decision not to share raw transcripts is an intentional act of epistemic care, aligned with the autoethnographic and human-centered foundations of this inquiry.

Broader Ethical Frameworks for AI Collaboration

While this study did not involve human subjects beyond Wiles, it nonetheless engages deeply with the ethical implications of human-AI collaboration. The recursive method deployed here emphasizes epistemic reflexivity, but it also aligns with broader ethical frameworks concerned with transparency, accountability, and human interpretive primacy in AI-mediated research. In particular, Floridi and Cowls’ (2019) principles of AI ethics (beneficence, non-maleficence, autonomy, justice, and explicability) serve as a touchstone. This study reflects those values through its co-constructive design: AI is not treated as an autonomous author but as an epistemic partner embedded within human-led inquiry. The recursive structure itself, centered on dialogic validation and interpretive friction, acts as a procedural safeguard against epistemic overreach or automation bias. Rather than obscuring intellectual labor, this method foregrounds it.

Conclusion

This study offers a proof of concept for recursive AI dialogue as a legitimate, structured, and replicable method of scholarly inquiry. Through the analysis of over 60,000 words of real-world interaction, it demonstrates that dialogic engagement with ChatGPT can surface conceptual breakthroughs, scaffold emotional clarity, and catalyze theory development—without institutional gatekeeping or extended timelines.

The findings are not statistically generalizable but are methodologically provocative. They suggest that insight can emerge rapidly—not because of intellectual shortcuts, but because recursive processes scaffold reflection in ways that accelerate clarity. What traditionally takes months of isolated writing and layered peer feedback was condensed into 56 days of sustained dialogic iteration—without sacrificing nuance, depth, or coherence.

This study also reimagines academic labor. It shifts focus from the linear accumulation of content to the structural emergence of coherence. Rather than evaluating rigor solely by time invested or citations gathered, recursive inquiry offers a different metric: dialogic depth over duration, and epistemic traction over institutional pacing.

There are limitations. This is a single-author case study, using a specific AI model and methodological structure. Its reliability and scalability remain open questions. Future research should test this method across disciplines, emotional contexts, and AI platforms—exploring whether recursive dialogue can support sustained scholarly productivity and deepen inquiry across fields.

While the study is grounded in autoethnographic practice, the recursive methodology itself is not inherently personal or discipline-bound. Its core components—structured prompting, dialogic recursion, and emotional-temporal attunement—may prove transferable across diverse research contexts. Whether used in pedagogy, organizational development, or interdisciplinary design, recursive dialogue offers a flexible scaffolding for researchers seeking both conceptual rigor and emotional resonance. As such, its relevance extends beyond this case study, inviting broader experimentation.

Still, this project offers more than an efficient workflow—it offers a new epistemological rhythm. When used with intentionality, AI does not replace human thinking. It reflects it, challenges it, and, in the best cases, accelerates its emergence. This is not a story about academic speed. It is a blueprint for recursive scholarship—one grounded in clarity, contradiction, and co-construction.

As more scholars begin to navigate the boundary between cognition and computation, this model invites a deeper question: What becomes possible when reflection itself becomes analyzable data?

Acknowledgments

The author thanks the developers of ChatGPT for enabling the recursive method explored in this paper, and acknowledges the support of the broader academic and autoethnographic community that informed the epistemological framework of this study.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

All transcripts were generated by the author and ChatGPT and are available upon reasonable request.