Abstract

During the 2020 worldwide lockdowns due to COVID-19, qualitative researchers were restricted to online communication (e.g., Zoom) to gather qualitative interview data. Since that time, qualitative researchers have increasingly transitioned from conducting interviews primarily in person to conducting interviews using online communication technologies such as Zoom, but little is known about how interview approaches may impact the interview experience or data quality. This study explores differences between face-to-face (FTF) and online (Zoom) interviewing approaches, data quality, and interviewee perceptions of rapport, empathy, and conversational involvement. Participants were assigned to an interview condition (FTF or Zoom) and randomly assigned to one of two qualitative interviewers. The interview topic was mental health communication. After the interview was completed, participants completed a post-interview survey measuring perceptions of interviewer empathy, rapport, and conversational involvement. The results revealed statistically significant differences between interview conditions and participants’ perceptions of empathy and rapport. Perceptions of rapport and empathy were rated highly for both interview approaches, but significantly higher in FTF when compared to Zoom for a subset of the sample. There were no statistical differences between the FTF and Zoom approaches when considering conversational involvement or data quality.

The way we communicate in our professional and personal lives has changed in recent years; online mediated communication technologies, such as the use of Zoom teleconferencing, are now commonplace in everyday interaction and are becoming a tool for many social scientists (Gibson, 2020; Seitz, 2016). While the use of asynchronous technology as a way of communicating online has been discussed for decades (Deakin & Wakefield, 2014), online technology in qualitative research, such as being able to see interview participants via synchronous videoconferencing, is a more recent advancement that the increased use of the internet and the COVID-19 Pandemic has accelerated. The increasing availability of online technologies provides new opportunities for qualitative researchers to recruit and interview participants and share research findings.

Face-to-face (FTF) interviewing, where both the interviewee and interviewer are co-present, has generally remained the accepted and preferred practice and is seen by most researchers as the “gold standard” of interviewing in qualitative research (Green & Thorogood, 2014; McCoyd & Kerson, 2006). Yet, while FTF interviews have been the mainstay of qualitative research, videoconferencing programs such as Zoom Videoconferencing (Zoom) may provide researchers with a cost-effective and convenient alternative to in-person interviews (Edwards & Holland, 2013).

While work such as Lobe et al. (2020, 2022), Dubé et al. (2023), and Oliffe et al. (2021) provides important insights into the benefits and challenges of both FTF qualitative interviews and online interviews, few studies have experimentally tested the assumption that they are experienced by participants similarly (Namey et al., 2021; Underhill & Olmsted, 2003). The aim of the current exploratory study is to examine differences between qualitative interviews conducted FTF and online via Zoom Videoconferencing technology on the following critical dimensions: data quality and interviewee perceptions of interviewer empathy, rapport, and conversational involvement. The following sections detail the literature comparing FTF and online interviewing, providing a rationale for the current study.

Background

COVID-19 and Shifting Interview Approaches

The COVID-19 pandemic accelerated the need to explore alternative data collection methods for qualitative research. Boland et al. (2022) described the COVID-19 pandemic as an unforeseen external threat that created a set of extraordinary barriers to qualitative researchers. When faced with abandoning their research or finding new interview methods, qualitative researchers swiftly turned to online approaches to interviewing. As the world waited for a vaccine and to be free from lockdown restrictions, virtual/online interviewing approaches (e.g., Skype and Zoom) became the connection technology of the time (Turk, 2020). This modality shift required researchers to ask if online interviewing could meet qualitative data objectives while retaining the same level of data quality (Boland et al., 2022; Namey et al., 2021; Renosa et al., 2021).

Currently, qualitative researchers find themselves free to return to conducting interviews in the traditional FTF gold standard approach. Yet, many researchers are reluctant to abandon the flexibility and convenience of online approaches and are keen to report their advantages for conducting qualitative interviews (Boland et al., 2022; Dubé et al., 2023; Namey et al., 2021; Renosa et al., 2021). Research into the use of online technologies as the primary method for qualitative data collection is still in its early stages. To date, most of the literature addressing online compared with FTF qualitative interviewing discusses the advantages and disadvantages of online technology through anecdotal lessons learned from researchers’ experiences (Corti & Fielding, 2016; Howlett, 2022; Janghorban et al., 2014; Lobe et al., 2020; Vindrola-Padros et al., 2020; Weller, 2017). In the following sections, we summarize this research detailing the primary strengths and weaknesses of online technologies for qualitative interviews.

Online Interviews: Advantages and Disadvantages

Research findings are mixed about the advantages and disadvantages of online compared with FTF qualitative interviews. The research literature points to the advantages of online qualitative interviewing, highlighting increased convenience, cost-effectiveness, and flexibility. Discussion of disadvantages includes concerns about technology, digital fraud, comfort with disclosure, and limited access to nonverbal cues.

Advantages

The advantages of online interviewing generally relate to convenience. When conducting qualitative interviews online, researchers may have expanded access to participants, since many people work from home and have access to technologies such as Zoom on their cell phones, and people can participate regardless of where they live (Saarijärvi & Bratt, 2021). Online interviews cut travel time to and from the interview location, reduce transport costs, allow researchers to interview more participants in a shorter amount of time and to work from home while conducting research, allow for more flexible scheduling, and reduce the impact of unpredictable circumstances, such as poor weather conditions, that may deter participants from meeting in the FTF context (Archibald et al., 2019; Gray et al., 2020; Jenner & Myers, 2019; Tomás & Bidet, 2024). Online interviews also simplify recruitment by eliminating the need for participant transportation and parking (Braun et al., 2017; Jenner & Myers, 2019).

Disadvantages

Overview of the Disadvantages of Online Interviews

There are several perceived disadvantages of online video interviewing, all of which have implications for data quality. The first is technology issues. Video interviewing requires reliable technology with a camera and microphone, and a stable internet connection. Technical problems such as dropped network connections, equipment difficulties, and an unstable connection can disrupt the interview flow. Some groups may be excluded because they do not feel comfortable with or have access to the technology required. Tomás and Bidet (2024) refer to this as digital exclusion. The profile of the targeted sample may determine the appropriateness of online interviewing. For example, impoverished individuals or the elderly may not have access to the internet or the hardware necessary to participate (Lobe et al., 2020). Data quality and security can be compromised when participants do not have equal access to reliable and secure internet or technology (Katz & Gonzalez, 2016; Kennedy et al., 2021).

The research literature also points to digital fraud as a possible disadvantage to conducting online interviews. Recent work suggests that data quality may also be compromised by fraudulent participation. “Imposter participants” may compromise online qualitative interviews, especially when monetary incentives exist (Mistry et al., 2024; Sharma et al., 2024; Wright et al., 2024). Imposter participants are individuals who provide false identities and experiences when participating in research (Chandler & Paolacci, 2017). For example, in a study of imposter participants (Chandler & Paolacci, 2017), a sample of more than 300 participants was split into two groups and screened through a survey for an online interview study. The control group participants reported their sexual orientation at the end of a survey. In contrast, the “blatant prescreening” group reported their sexual orientation at the beginning of the survey after being told explicitly that only lesbian, gay, or bisexual (LGB) people were eligible to participate. The findings revealed that while only 3.8% self-reported as LGB in the control condition, 45.3% of the participants in the blatant prescreening group identified as LGB (Chandler & Paolacci, 2017). In qualitative interviews, we want to interview participants who are “information rich” and who can speak to the topics of interest. The phenomenon of participant fraud undermines this objective. These practices need more research attention so that they can be understood more fully.

Another possible disadvantage of online interviews is discomfort with disclosure when discussing deeply personal or sensitive topics (Seitz, 2016). Namey et al. (2021) found that participants reported feeling less comfortable sharing information in online interviews than in FTF interviews. Comfort with disclosure is particularly important when the interview topic is highly personal or sensitive (Howlett, 2022; Self, 2021). However, the research is mixed on this topic. Some studies reveal that interviewees may feel more relaxed and are, therefore, more willing to disclose information in online interviews, which may have a positive impact on data richness (Oliffe et al., 2021; Tomás & Bidet, 2024). Jenner and Myers (2019) compared online and FTF interviews in two studies and found that both approaches generated similarly rich datasets. The authors concluded that the level of privacy of the interview setting, rather than the interview approach itself, made the most difference in influencing data quality (Jenner & Myers, 2019).

Another disadvantage of online interviewing is limited access to nonverbal cues during interview interactions. Online interviews do not allow the researcher and participant to be in each other’s physical presence and see each other entirely (Tomás & Bidet, 2024). For centuries, humans have obtained and processed information in FTF environments, not in front of electronic screens (Markham, 2009). Only a participant’s face and perhaps upper body tend to be visible in synchronous online interviews, with many nonverbal communication cues, such as hand and lower body movements, absent from the interview interaction. Also, in online interviews, the video image can be blurry and delayed, making it challenging to read nonverbal behaviors, such as eye contact, that may serve as critical interpersonal cues in the research interview (Caetano & Moran, 2021). These nonverbal cues provide valuable information during the interview, guiding interviewers and interviewees in interpreting messages. Indeed, research shows that it is common for behavior to be misinterpreted over video (Caetano & Moran, 2021), and feelings of unease and uncertainty can be masked due to the lack of visual body cues and gestures (Seitz, 2016).

While these advantages and disadvantages provide insight into the opportunities and challenges of online interviewing, more research is needed to understand differences in research outcomes when conducting FTF and online qualitative interviews. One theory that shows promise for explaining these differences is Media Richness Theory (Daft & Lengel, 1986).

Media Richness Theory

Media Richness Theory (MRT) is used in this study as a theoretical framework to better understand the patterns in the data across modalities. According to MRT, different communication modalities vary in their ability to convey verbal and nonverbal cues (Daft & Lengel, 1986). This theory posits that FTF is the richest modality, allowing for immediate feedback, accessing verbal and nonverbal cues, and allowing for personal interaction, while online modalities (e.g., Zoom) are considered less rich. MRT puts forth that richer modalities, such as FTF, are better suited for emotional, sensitive, and complex communication exchanges, while the online modalities are more appropriate for simple, familiar, and routine types of interactions (Daft & Lengel, 1986). If this is the case, modality has direct implications for meaningful qualitative interviewing, due to the centrality of interviewer-interviewee interaction in interviewing contexts.

Interviewer-Interviewee Interaction: Rapport, Empathy, and Conversational Involvement

Conceptual Definitions of Rapport, Empathy, and Conversational Involvement

Rapport

Chu and Gilligan (2014) argue that “the quality of the collected data from the interview depends, in part, on qualities of the researcher-participant relationship” (p. 4). Building a relationship with the interviewee is a key component of qualitative interviews (Gabbert et al., 2021) and understanding the development of rapport in the researcher-participant relationship is a priority for empirical researchers in the field (Pitts & Miller-Day, 2007). Rapport can be viewed as an interactional phenomenon in which interactants experience a sense of connection with one another based on mutual attention, coordination, and understanding (Pitts & Miller-Day, 2007). Watts (2008) argues that developing rapport is a challenging process that may be mutually constructed through each person’s willingness to look deeply into the world of the other.

Rapport Building in FTF and Online Interviewing

Rapport building commonly relies on a researcher’s ability to maintain an interpersonal connection with the participant, focusing on the participant’s words while simultaneously reading their emotional state. While this line of research is still in its infancy, some studies suggest a loss of personal connection and intimacy in online interviews when compared to FTF interviews (Seitz, 2016). Seitz (2016) argued that the lack of direct contact with the interview participant made it challenging to elicit richly detailed answers, especially on sensitive topics, suggesting that online modalities create a barrier to the development of rapport. Jiang (2020) notes that greater effort and concentration are required to process nonverbal cues in online interview contexts, and that silence in video calls may create anxiety among participants.

Lobe et al. (2022) and Seitz (2016) point out that a genuine interpersonal connection, like that found in in-person contexts, can be challenging, if not impossible, to establish online. Deakin and Wakefield (2014) pointed out that online interviews lack traditional FTF interview rituals, such as greeting each other, sharing coffee, shaking hands, or giving a hug goodbye, and suggested that the absence of these ritualistic interview behaviors can make rapport building difficult.

Alternatively, research confirms that introverts and people who are shy are more likely to be drawn to online interviews and prefer socializing online in that it allows them to feel more comfortable opening up in front of a screen rather than to a person (De Villiers et al., 2022; Seitz, 2016). Studies suggest this is because interacting face-to-face may make introverts feel less comfortable, less able to communicate their ideas, and less fully able to express their authentic selves to others (Orchard & Fullwood, 2010). In the end, findings are mixed when asking if interviewees’ perceptions of rapport are the same in FTF as in online qualitative interviews.

Empathy

Beyond cognitive understanding of another’s feelings, Heritage (2011) defines empathy as “an affective response that stems from the apprehension or comprehension of another’s emotional state or condition” (p. 161). Indeed, empathy “involves sharing the perceived emotion of another. . . ‘feeling with’ another” (Eisenberg & Strayer, 1990, p. 5). Empathic behavior has both verbal and non-verbal components, for example, the power of touch to calm anxiety or emotional distress. As these definitions suggest, establishing empathy in a qualitative interview requires a high degree of emotional involvement (Prior, 2018).

Empathy in FTF and Online Interviewing

Leake (2019) argues that empathy is necessary when conducting qualitative research on sensitive topics. Often, disclosure of sensitive issues in qualitative interviewing is not pre-planned or anticipated (Rubin & Rubin, 2011; Tracy, 2019). Studies have shown that when it comes to discussing emotional or traumatic experiences (e.g., loss of a loved one, a job, homelessness, or illness), online interviews appear inadequate for the task in that they lack the functionality to demonstrate an appropriate level of ethical care and empathy in a meaningful manner (Boland et al., 2022; Leake, 2019). In online environments, it may be difficult to see each other’s facial and nonverbal expressions, and this has direct implications for communicating empathy or concern (Caetano & Moran, 2021; Seitz, 2016). Hence, Seitz (2016) recommends that FTF interviewing may be more appropriate for qualitative interviewing about sensitive topics. However, Engward et al. (2022) suggest that online interviewing may just require more interviewer preparation to reduce barriers to empathy.

Conversational Involvement

In qualitative interviewing, conversational involvement is necessary for obtaining rich data (Rubin & Rubin, 2011). Rubin and Rubin (2011) argue that excellent qualitative interviewing is responsive and promotes conversational involvement from both the interviewer and the respondent. They describe responsive interviewing as an extended conversation of engagement that seeks a deep understanding of a phenomenon. They explain, “Depth is achieved by going after context; dealing with the complexity of multiple, overlapping, and sometimes conflicting themes; and paying attention to the specifics of meanings, situations, and history” (Rubin & Rubin, 2005, p. 35). Rubin and Rubin (2005) believe a relationship is developed between the interviewer and interviewee as they navigate the interview process, with both individuals being fully engaged and present in the conversation, which is vital to generating nuanced research results.

Manning (1992) suggests that involvement occurs when participants in an encounter display an appropriate level of engagement with and commitment to the social interaction. Potential hindrances to conversational involvement include self-consciousness, interaction consciousness, or a preoccupation with matters external to the encounter, all of which could be influenced by the interview approach (Manning, 1992). Indeed, talk is not only about the exchange of knowledge but also about affirming a relationship (Manning, 1992).

To our knowledge, no existing research examines or compares conversational involvement in FTF and online interviewing. Therefore, it is unclear how the interview approach (FTF or online) can shape what and how much a participant is willing to engage, divulge, and share about their lived experiences (Weller, 2017).

Current Study

Despite the many studies reflecting on the advantages and disadvantages of online interviewing as a reliable method for collecting qualitative data, few studies have compared participants’ experiences of the different interview approaches or the resultant data quality (Archibald et al., 2019; Howlett, 2022; Krouwel et al., 2019; Lobe et al., 2020, 2022; Vindrola-Padros et al., 2020; Weller, 2017). Are there differences between FTF and online qualitative interviews in terms of rapport, empathy, and conversational involvement, and does each generate the same data quality? Media Richness Theory suggests that FTF approaches are richer; however, do FTF interviews enhance rapport, empathy, and conversational involvement? This current study provides a quasi-experimental examination of these differences by posing the following hypothesis and research question. Hypothesis: Participants will report greater perceived interviewer empathy, rapport, and conversational involvement in face-to-face (FTF) interviews than in online (Zoom) interviews. RQ: Does data quality differ between FTF and Zoom interviewing modalities?

Methods

We conducted an exploratory quasi-experimental study to (1) compare interviewees’ perceptions of interviewer empathy, rapport, and conversational involvement across both FTF and online in-depth qualitative interviews and (2) assess differences in data quality across the two interview approaches. Mental health communication was selected as the interview topic because it has been identified as a sensitive topic that may require heightened sensitivity in interviewing (Dempsey et al., 2016; Silverio et al., 2022). The comparison of online and FTF interviewing approaches was the primary study; the qualitative investigation of mental health communication served as a secondary project and is not addressed in this report. Participants 18 and over were randomly assigned to face-to-face (FTF) or online (Zoom) interview conditions. Both conditions involved an in-depth interview about how the participant communicates about mental health with others. After the interview, the participant was independently emailed a link to an online survey assessing demographics and their experience of interview rapport, empathy, and conversational involvement.

Participants

After receiving approval from the university IRB (IRB-23-159), participants were recruited through two routes: (1) social media posts describing the study and requesting participants for a paid study ($15) and (2) a university recruitment database for course credit. The university database consisted of undergraduate students at a university in the southwestern United States. Interested individuals contacted the research team and then received a telephone call describing the study as “an investigation of mental health communication and interviewing.” The consent form was then reviewed and signed, and the interview was scheduled. The consent form identified two goals for the study: (1) to understand if FTF or Zoom interviews differ significantly and, if so, how and (2) to understand the messages exchanged among friends, family, and via social media about mental health. Additionally, participants were required to speak English. Participants assigned to the Zoom condition were also required to have a working computer with Zoom technology, an internet connection, and a working camera and microphone; they were instructed to conduct the interview on a computer rather than a phone and to select a private space with no distractions.

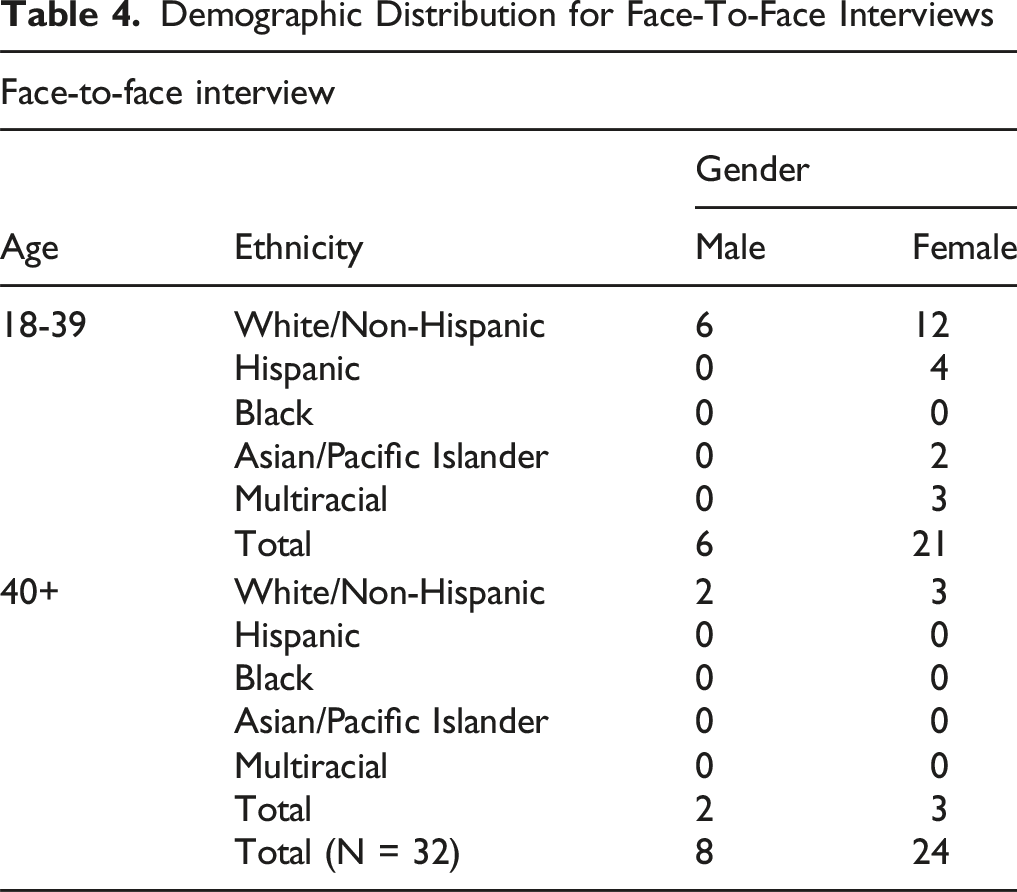

A total of 73 individuals participated in the study, of which 20 identified as male (27.4%) and 53 identified as female (72.6%). Participants were 18–46 years old (M = 22, SD = 3.57). Younger participants were classified as ages 18–39 (n = 55, 75%), and older participants were classified as ages 40 and older (n = 18, 25%). See Tables 3 and 4 for a breakdown of race, age, and gender in each interview condition. Participants included White/Caucasian (n = 45, 61.6%), Black/African American (n = 1, 1%), Hispanic/Latino (n = 11, 15%), Asian/Asian American/Asian Pacific Islander (n = 10, 13%), and Multiracial/Other (n = 6, 8%) participants. Reported education levels were less than high school (n = 1, 1%), high school graduate (n = 8, 11%), less than four years of college (n = 37, 50.7%), undergraduate degree (n = 19, 26%), and graduate degree (n = 7, 9%).

Demographic Distribution for Online Interviews

Demographic Distribution for Face-To-Face Interviews

Data Collection

Procedures

Experimental Conditions/Interview Modalities

We minimized differences across data collection modalities and interviewers in a few ways. First, the interview space was consistent across FTF and Zoom conditions with blank walls to avoid distractions, and the interview guide was the same for all interviews. All participants in the Zoom condition were instructed to join the call from a “private space with no visual or audio distractions.” Second, both interviewers were female qualitative researchers with similar physical characteristics (Caucasian, 30–40 years of age, blonde) and similar interviewing styles (see Pezalla et al., 2012). Each had 4–5 years of experience in FTF interviewing and 4 years of experience conducting online interviews, and both were trained in the current interview guide concurrently by the same experienced qualitative researcher.

Following the interview guide, interviewers reviewed the goals of the research study, then asked participants to define mental health, describe the last time they talked about their mental health with another person, with whom they typically discuss their mental health, what topics they discussed, their satisfaction with the mental health communication, messages they receive about mental health and illness from social media, interpersonal relationships, family relationships, social groups, media, cultural messages, and discuss how shame and stigma might affect their interpretations of received messages. As with any qualitative inquiry, the interviewer inductively probed questions to elicit detailed and nuanced information. Any interruptions (e.g., connectivity with Zoom or a physical interruption for a bathroom break with FTF) were to be noted by interviewers. No interruptions were reported. All interviewer notes were documented at the conclusion of the interview so as not to impede the flow of the conversation. The interviews were video- and audio-recorded and transcribed verbatim for qualitative analysis. Zoom provides an auto-transcribe function, and FTF interview videos were transcribed by Descript computer software. All transcripts were compared to the video recording and any errors were corrected. Interview length ranged from 15 to 45 min (M = 35.56) with no statistically significant differences in interview length across interviewers.

Study Measures

Measures of interviewer-interviewee interaction—rapport, empathy, and conversational involvement—and demographic variables were collected through an online survey administered immediately after the interview. Data quality was operationalized as respondents’ transcribed word count.

Demographics

The post-interview survey was administered to participants immediately after the in-depth interview. The survey assessed the following demographic information: age, gender, education, and race.

Rapport

Rapport refers to the nature of an interaction between two or more people when the interactants experience a sense of connection with one another based on mutual attention, coordination, and understanding (Pitts & Miller-Day, 2007). In this study, we measured rapport using the Rapport Questionnaire (Bernieri, 2014). The rapport measure is comprised of thirteen items on a 5-point Likert scale (α = .78), such as “The interview interaction was cooperative…” or “I felt connected to the interviewer…” (1 = not at all; 5 = almost the whole time) (M = 4.77, SD = .32).

Empathy

Empathy is conceptualized as the affective response of feeling attuned to another’s emotional state. Operationally, the CARE measure (Mercer et al., 2004) was used to assess perceptions of the interviewer’s empathy. The empathy measure is comprised of eight items on a 5-point Likert scale (α = .86), asking questions like, “How was the interviewer at making you feel at ease?” and “How was the interviewer at being attuned to your emotional state?” (1 = poor; 5 = excellent) (M = 4.79, SD = .31).

Conversational Involvement

This study conceptualized conversational involvement as the interviewee’s perception of the interviewer’s engagement and presence in the conversation. To measure this, we administered the Involvement subscale of the Relational Communication Scale (Hale et al., 2014). The conversational involvement measure was comprised of seven items on a 5-point Likert scale (α = .74), asking questions like, “The interviewer was highly involved in the conversation” and “The interviewer was fully engaged in the conversation” (1 = not at all; 5 = extremely) (M = 4.92, SD = .24).
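
The reliability coefficients (α) reported for the rapport, empathy, and conversational involvement measures are Cronbach's alpha values. As an illustration only, the following minimal Python sketch shows how such a coefficient can be computed from item-level responses. The function name `cronbach_alpha` and the demonstration responses are hypothetical and are not drawn from the study data or from the authors' analysis software.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(responses[0])  # number of items in the scale
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    # Sample variance of each item across respondents
    item_vars = [variance([r[i] for r in responses]) for i in range(k)]
    # Sample variance of each respondent's summed scale score
    total_var = variance([sum(r) for r in responses])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical 5-point Likert responses (4 respondents x 3 items), not study data
demo = [
    [5, 5, 4],
    [4, 4, 4],
    [5, 4, 5],
    [3, 3, 2],
]
alpha = cronbach_alpha(demo)
print(round(alpha, 3))
```

Higher values indicate that the items vary together, which is the sense in which the scales above are described as internally consistent.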

Data Quality

Although word count is not a direct measure of data quality, it has been widely used in comparative literature and is held to be strongly associated with data quality (Abrams et al., 2015). The number of words uttered by the participant reflects the amount of participant talk in an interview session. After transcribing each interview verbatim, we omitted any “warm-up” discourse between the interviewer and interviewee and calculated the number of words spoken by participants (excluding any words spoken by the interviewer) in each transcript after the first interview question was asked. The average word count across transcripts was then calculated within and across each interviewer and each interview condition.
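
The word-count procedure described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the transcript is assumed to be available as a list of (speaker, text) turns, the position of the first interview question is assumed to be marked by hand, and words are split on whitespace. The function name, speaker labels, and sample turns are hypothetical and do not come from the study transcripts.

```python
def participant_word_count(turns, first_question_index):
    """Count participant words in turns after the first interview question.

    turns: list of (speaker, text) tuples in spoken order.
    first_question_index: index of the turn containing the first interview
    question; everything up to and including it is treated as warm-up or
    interviewer talk and is excluded.
    """
    return sum(
        len(text.split())
        for speaker, text in turns[first_question_index + 1:]
        if speaker == "participant"
    )


# Hypothetical transcript excerpt, not study data
turns = [
    ("interviewer", "Thanks for coming in today."),           # warm-up
    ("participant", "Happy to be here."),                      # warm-up, excluded
    ("interviewer", "How would you define mental health?"),    # first question
    ("participant", "I think it is about emotional balance."),
    ("interviewer", "Can you say more?"),
    ("participant", "Mostly how I cope with stress."),
]
count = participant_word_count(turns, first_question_index=2)
print(count)
```

Averaging these per-transcript counts within each interviewer and each condition would then give the group means that are compared in the analysis.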

Covariates

To control for possible covariates, the following variables were measured. Participants’ comfort with videoconferencing technology was assessed with a single item on a 5-point Likert scale: “I would rate my comfort with using videoconferencing technology such as ZOOM as...” (1 = very uncomfortable; 5 = very comfortable). Additionally, shame surrounding mental health was assessed with three items on a 7-point Likert scale, asking questions like, “I would prefer my friends and family did not know if I received help for mental health problems” (1 = strongly disagree; 7 = strongly agree) (α = .86).

Data Analysis

To test our hypothesis, we first ran descriptive statistics to assess all measures’ reliability and calculate each study variable’s means and standard deviations. Then, we ran Pearson product-moment correlations among the dependent variables to examine associations. Before analyzing the interview approach, we conducted a one-way ANOVA to assess if there were significant differences between the two interviewers regardless of the interview condition. Finally, we conducted one-way ANOVAs to examine the effect of interview condition (FTF or online) on the three dependent variables.
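
For readers less familiar with the test, the one-way ANOVA compares the variation between condition means with the variation within conditions. The sketch below computes the F statistic and its degrees of freedom using only the Python standard library. It is an illustration rather than the authors' analysis code: the function name `one_way_anova` and the scores are hypothetical, and a p-value would additionally require the F distribution from a statistics package.

```python
from statistics import mean


def one_way_anova(*groups):
    """Return (F, df_between, df_within) for a one-way ANOVA."""
    all_scores = [x for g in groups for x in g]
    grand_mean = mean(all_scores)
    # Variation of the group means around the grand mean
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual scores around their own group mean
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical scale scores for two conditions, not study data
ftf_scores = [5, 4, 5, 4]
zoom_scores = [4, 3, 4, 3]
f_stat, df_b, df_w = one_way_anova(ftf_scores, zoom_scores)
print(f_stat, df_b, df_w)
```

With k groups and N participants in total, the between-groups degrees of freedom are k − 1 and the within-groups degrees of freedom are N − k.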

To address our research question regarding data quality, we conducted independent t-tests to examine the differences in data quality across interview conditions (FTF and Zoom) and between the two qualitative interviewers.
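
As a brief illustration of the independent-samples t-test, the sketch below computes a pooled-variance (Student's) t statistic with the Python standard library. Whether a pooled or unequal-variance form was used in the study is not stated, so the pooled form is an assumption here, and the function name and word counts are hypothetical. One useful property of the pooled form is that, when only two groups are compared, the squared t statistic equals the F statistic of a one-way ANOVA on the same data, so the two tests lead to the same conclusion.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t(a, b):
    """Return (t, df) for an independent-samples t-test with pooled variance."""
    n1, n2 = len(a), len(b)
    df = n1 + n2 - 2
    # Weighted average of the two sample variances
    pooled_var = ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / df
    t_stat = (mean(a) - mean(b)) / sqrt(pooled_var * (1 / n1 + 1 / n2))
    return t_stat, df


# Hypothetical participant word counts per transcript, not study data
ftf_words = [2000, 2200, 2100, 2300]
zoom_words = [1900, 2100, 2000, 2200]
t_stat, df = pooled_t(ftf_words, zoom_words)
print(round(t_stat, 3), df)
```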

Due to the exploratory nature of this study, we also conducted several additional post hoc analyses to understand possible considerations for future research. This included multivariate analyses and two-way ANOVA tests to understand how interview modality (FTF or Zoom) interacted with shame, age, ethnicity, and gender to influence rapport, empathy, and conversational involvement evaluations. Moreover, since we recruited fewer than 100 individuals, we conducted a post hoc sensitivity power analysis with ClinCalc, setting the alpha level to 0.05 (Quach et al., 2022).
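
To make the logic of a power analysis concrete, the following sketch approximates the power of a two-sided, two-group comparison using a normal approximation. It is not the ClinCalc procedure and is not intended to reproduce the study's power estimate: the function name `approx_power`, the standardized effect size `d`, and the group sizes are all hypothetical inputs chosen for illustration.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(d, n1, n2, alpha=0.05):
    """Approximate power of a two-sided two-sample test (normal approximation).

    d: standardized mean difference (Cohen's d), a hypothetical input.
    n1, n2: group sizes.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    # Expected size of the test statistic under the alternative hypothesis
    noncentrality = abs(d) * sqrt(n1 * n2 / (n1 + n2))
    # Probability of landing in either rejection region
    return z.cdf(noncentrality - z_crit) + z.cdf(-noncentrality - z_crit)


# Hypothetical scenario: medium effect with 64 participants per group
power_medium = approx_power(0.5, 64, 64)
print(round(power_medium, 3))
```

Holding alpha fixed, power rises with both the effect size and the group sizes, which is why a smaller sample can detect only larger differences at the conventional 80% level.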

Results

Dependent Variables across each Interviewer

Interview Approach and Rapport, Empathy, and Conversational Involvement

Means and Standard Deviations of Dependent Variables in Each Interview Condition

ANOVA analyses revealed significant differences between interview conditions for empathy (F (1,72) = 4.537, p = 0.037) and rapport (F (1,72) = 5.138, p = 0.026), but not for conversational involvement (F (1,72) = 3.141, p = 0.081).

Word count, our metric for data quality, was compared across interviewers and interview conditions. A one-way ANOVA revealed no differences in respondents’ word counts across interviewers (F (1,72) = 0.364, p = 0.548) or across FTF vs. Zoom interview conditions (F (1,72) = 0.543, p = 0.464).
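The Data Analysis section describes independent t-tests for data quality, while the results here are reported as one-way ANOVA F values. With only two groups the two tests are equivalent, because the squared pooled-variance t statistic equals F. The sketch below shows a word count per transcript and the pooled t statistic; the helper names and transcript snippets are hypothetical and far shorter than real interview transcripts.

```python
from math import sqrt
from statistics import mean, variance


def word_count(transcript):
    """Count whitespace-separated words in a respondent's transcript."""
    return len(transcript.split())


def pooled_t(a, b):
    """Independent-samples t statistic (equal variances assumed) and its df."""
    n1, n2 = len(a), len(b)
    pooled_var = ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / (n1 + n2 - 2)
    t = (mean(a) - mean(b)) / sqrt(pooled_var * (1 / n1 + 1 / n2))
    return t, n1 + n2 - 2


# Hypothetical respondent transcripts per condition (illustration only)
ftf = ["I talked to my sister first", "It was hard to bring up", "We spoke openly at home about it"]
zoom = ["I mostly kept it to myself", "My friends asked me about it often", "Not really"]

t, df = pooled_t([word_count(s) for s in ftf], [word_count(s) for s in zoom])
print(t, df, t ** 2)  # t ** 2 matches the one-way ANOVA F on the same two groups
```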

Post-Hoc Analyses

While 73 interviews can generate ample qualitative data, the number of participants we recruited for this study did not quite reach the power levels we hoped for. The post hoc sensitivity power analysis revealed 72.3% power (80% or more power is desired) for this exploratory study. While not optimal, the sample size was determined to be adequate for this study. This is further discussed in the study limitations.

Due to the exploratory nature of this study, we wanted to learn more about how covariates may have impacted rapport, empathy, and conversational involvement. We therefore conducted a series of post hoc analyses exploring the effects of interview approach on all three dependent variables while controlling for age, ethnicity, gender, and perceptions of shame associated with discussing mental health. A multivariate analysis revealed no statistically significant differences or interaction effects for empathy or conversational involvement when controlling for age, ethnicity, gender, and perceptions of shame. Significant effects of age, gender, and perceptions of shame emerged only for rapport.

Age & Rapport

Due to the exploratory nature of this study, we wanted to learn more about the significant effect of each interview approach on rapport, so we conducted a series of post hoc analyses exploring the effects of the interview approach on rapport while controlling for age as a continuous rather than a categorical variable.

A multivariate ANOVA was performed to analyze the effects of interview approach and age on rapport, revealing a statistically significant interaction effect (F (1,72) = 3.875, p = .008). Younger participants, 18–39 years old, reported significantly higher levels of rapport in the Zoom condition (M = 5.00, SD = 0.29) than in the FTF condition (M = 4.53, SD = 0.20). In comparison, older participants, 40 and older, reported a higher level of rapport in the FTF interview condition (M = 4.97, SD = .29) than in the Zoom condition (M = 4.86, SD = 0.20).

Gender & Rapport

A simple main effect analysis showed that gender had a statistically significant effect on rapport (F (1,72) = 4.156, p = .045). Females reported significantly higher scores on rapport (M = 4.826, SD = 0.042) than males (M = 4.660, SD = 0.070). The two-way ANOVA further showed a significant difference, with males in the FTF interview condition reporting significantly higher scores on rapport (M = 4.789, SD = 0.26) than males in the Zoom condition (M = 4.53, SD = 0.58).

A multivariate ANOVA further revealed a statistically significant interaction effect of interview approach, age, and gender on rapport (F (1,72) = 3.713, p = .031). The analysis further showed that males over 40 in the FTF condition reported significantly higher scores on rapport (M = 4.92, SD = 0.108) than males in the Zoom condition (M = 4.76, SD = 0.399).

Perceptions of Shame on Rapport

To control for perceptions of shame in discussing mental health-related issues and problems in our analysis, we conducted a post-hoc two-way ANOVA to assess the effects of interview approach and shame (associated with discussing mental health) on rapport. A simple main effect analysis showed that perceptions of shame did have a statistically significant effect on rapport (F (1,72) = 13.069, p < .001, partial η² = .509). Participants with low levels of shame (M = 4.878, SD = 0.217) reported significantly higher levels of rapport than participants with high levels of shame (M = 3.461, SD = 0.435). Further analysis revealed that participants in the FTF condition who had high levels of shame reported significantly higher rapport (M = 4.769, SD = 0.116) than participants in the Zoom condition (M = 3.462, SD = 0.164).

Discussion

In this study, we explored whether online qualitative interviewing yields the same interviewee experience as FTF qualitative interviewing. Specifically, this study assessed data quality and interviewees’ perceptions of rapport, empathy, and conversational involvement across online and FTF interviewing approaches. Our findings support the results of several previous comparative studies while adding rigor, novelty, and relevance to the ongoing debate about FTF and online qualitative interviews (Namey et al., 2021, 2022).

Interviewee Experience: Rapport, Empathy, and Conversational Involvement

Our findings reveal that participants in both FTF and Zoom conditions perceived high levels of rapport, empathy, and conversational involvement in their interviews. While high levels of each were reported, participants in FTF interviews reported higher levels of rapport and empathy than participants in online Zoom interviews. However, after accounting for age and gender, younger participants (18–39 years old) reported significantly higher levels of rapport in the Zoom condition than in the FTF condition, whereas male participants 40 and older perceived higher levels of rapport in the FTF condition. Ethnicity did not affect any of the dependent variables.

Increased rapport and empathy in FTF interviewing is consistent with some studies (Meijer et al., 2021), but as indicated in our earlier review of the literature, there is some disagreement in the literature with some research finding no differences (Archibald et al., 2019; Deakin & Wakefield, 2014; Tuttas, 2015). Perhaps participant age is central to understanding the interactional dynamics of qualitative interviewing. Moreover, to our knowledge, none of the previous research controlled the interview experience and interviewers in the structured manner of this current study, so more research is needed to fully understand interviewee experiences of rapport and empathy. This current study suggests that FTF qualitative interviewing may be the best interview approach when interviewing individuals over the age of 40 about sensitive topics and perhaps online interviewing may be sufficient for interviewing individuals under the age of 40.

While rapport and empathy were enhanced in the FTF condition, there were no differences across FTF and online interviewing for conversational involvement. There is scant literature addressing conversational involvement in qualitative interviewing, but research on this in interpersonal conversations (Burgoon et al., 1999) suggests that conversational involvement might also be enhanced using the FTF approach. Since the current study did not reveal differences across conditions, future research may want to explore this more fully and consider if these results were truly due to the interview approach or if the operationalization of conversational involvement in the study was deficient in capturing the kind of conversational involvement that occurs in qualitative interviews.

Regarding data quality, this study revealed no discernible differences between FTF and online interview approaches in participant word count. Much of the current literature examining data quality and qualitative interview approaches suggests that online interviews produce less information (number of words) than FTF interviews (Abrams et al., 2015; Synnot et al., 2014). However, our data do not confirm these general findings and instead reflect the findings of Namey et al. (2022), who found no differences across conditions. These disparate findings may be due to a lack of consistency in how data quality is operationalized. While word count is a proxy for data quality in some qualitative research, it may not be the optimal measure.

Interviewee Experience: Familiarity with Technology and Topic

People tend to be familiar with online communication platforms like Zoom. In this study, most participants reported familiarity with online videoconferencing technology and comfort with communicating online. Yet, based on the results of our post-hoc analysis of age and gender, it may be that individuals under the age of 40 are a bit more comfortable communicating from their own personal space via technology than communicating FTF in an unfamiliar environment. This finding is consistent with Jenner and Myers’ (2019) suggestion that privacy may be more important than the interview approach, especially when interviewing participants about sensitive topics. Perhaps younger participants perceive their own space as more private than an interview room elsewhere and, hence, more comfortable. More experimental studies are needed to examine this suggestion.

In addition to the familiarity with technology, the topic was also familiar to most of the participants in this study. We purposely selected individuals who were information-rich on the topic of mental health communication for this study, and these participants may have been more comfortable than the average person discussing mental health communication. Future research may benefit from examining if there are differences between FTF and online interviewing when participants are unfamiliar with the sensitive topic.

Rich & Lean Modalities

According to Media Richness Theory, “richer” modalities, like FTF interviewing, are more appropriate when communication exchanges have high degrees of uncertainty, while “leaner” modalities, like Zoom interviewing, are more appropriate for discussing familiar issues with low levels of uncertainty (Daft & Lengel, 1986). Given that most of the younger participants in this study were technology-friendly and comfortable discussing mental health issues, they may have experienced low degrees of uncertainty, allowing for more rapport in the “leaner” Zoom modality. In contrast, those participants who had higher levels of shame (about discussing mental health issues) experienced more rapport discussing sensitive issues in the “richer” FTF approach.

Limitations

There are limitations to the current study. The most significant limitation involves sampling. The open recruitment strategies used in this study allowed participants to self-select to participate based on experience with the topic of mental health communication, and this resulted in more females than males and a predominantly White sample. Recruiting equal numbers of males and females and a more ethnically diverse sample would help to enhance the generalizability of these findings. Moreover, both interviewers in this study were female. It is possible that findings pertaining to gender and rapport might differ if one interviewer had been male. This approach to recruiting also limits the sample size to those interested in and willing to participate in the study. It is possible that participants who were willing to participate in research, in general, are more comfortable with being interviewed for research purposes. Although 73 qualitative interviews were time-intensive and yielded substantial qualitative data, the sample size was sufficient but not optimal for quantifying and testing differences across interviewing conditions. Future research should also consider a true randomized experimental study of FTF and online qualitative interviewing approaches using larger sample sizes.

Future research may also want to consider replicating this study and collecting a more comprehensive set of demographics to test differences across groups. Also, future research could assess both the interviewer’s and respondent’s experiences of FTF and online interviewing to get a fuller, dyadic picture of the interview experience. Such research might even document the nonverbal cues that interviewers perceive in the different modalities as an indicator of data richness. Lastly, future research should consider how to best operationalize data quality in qualitative interviews.

Conclusion

In this post-COVID era, this study is one of the first to experimentally examine how FTF and online video interviews affect key qualitative interviewing interactional outcomes (rapport, empathy, and conversational involvement). In designing and conducting qualitative research, selecting FTF or online technology depends on a host of issues, including research objectives, sensitivity of the research topic, demographics of participants, cost of conducting research, access to the population, availability of online technology, and familiarity with technology use. For example, it may be appropriate to use a “leaner” modality, like Zoom interviews, for familiar topics with low sensitivity and a “richer” modality, like FTF interviews, for topics high in sensitivity and with individuals over 40. Given the exploratory nature of this study, further research is needed to better understand how modalities influence communication encounters, especially those concerning sensitive topics. Going forward, it is important for studies examining the differences across modalities to establish clear guidelines for measuring data quality, so results can be more easily compared and understood. This study provides additional evidence to consider when conducting FTF or online qualitative interviews.

Footnotes

Acknowledgments

We acknowledge the efforts of Daisy Thomas and Jane O’Connor in assisting with participant recruitment and scheduling and Dr. L Edward Day for editorial assistance.

Ethical Considerations

The Chapman University Ethics Review Committee approved our interviews (approval: IRB-23-159) on January 3, 2023.

Consent to Participate

Respondents reviewed and signed a written consent form before starting interviews.

Consent for Publication

Respondents provided written informed consent for findings to be published and made public.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets generated during and/or analyzed during the current study are available from the corresponding author on reasonable request.