Abstract

This article reimagines the integration of ethnography and survey research by demonstrating how ethnographic methods can fundamentally shape survey development by centring epistemological collaboration, rather than being relegated to the margins of mixed methods. Through a critical assessment of current literature, in which an overwhelmingly positivist framing limits ethnography’s potential, it argues for an approach that foregrounds ethnography, provocatively framed here as ‘ethnography first’. Drawing on a study of digital media use among unhoused people in Berlin, the article illustrates how an ethnographic approach woven throughout the research and writing process can yield insights that surpass ‘contextual framing’. In doing so, it offers a model for genuinely integrated mixed-methods research that makes use of the epistemological and ethical insights of ethnography.

Keywords

Introduction

Ethnographic and survey research sit, in many ways, as opposites on the spectrum of observational social scientific research methods. Ethnographic researchers often aim to gain a long-term, in-depth understanding of a particular community, wrestling with the nuances of the politics of representation along the way (Eriksen, 2015). Survey researchers, on the other hand, produce a snapshot view of the opinions of a particular group, mainly focusing on generalisability, validity, and reliability (Lee et al., 2012). The two methods are divided by fractious debates about the (im)possibilities of gaining ‘objective’ knowledge in the social sciences, reflected in the discourse around the fundamental differences between positivist and interpretivist research paradigms (Baškarada & Koronios, 2018). Yet, with the increasingly broad appeal of Mixed Methods Research (Doyle et al., 2016) and the accompanying growth of interest in ethnography outside of its traditional disciplinary boundaries (Jones & Watt, 2010), research attempting to combine the two methods is becoming more commonplace.

However, when one surveys the growing body of literature combining ethnographic and survey methods, a glaring question arises: Where exactly, in these studies, is the ethnography? For all the supposed interest in ethnography as a tool to supply detail about participants’ subjective experiences and act in tandem with the output of survey research to provide a more holistic view of the data, the resulting studies tend to be couched within the rhetorical conventions of positivist research, with a few ethnographic vignettes, usually short interview excerpts, thrown in for good measure. Ethnography – a method characterised by its commitment to holistic perspectives and nuance – becomes reduced, in these accounts, to mere anecdotes, confined to the background of the research (Baiocchi & Connor, 2008). Reorienting ethnography to a more central position can enhance the ethical grounding and policy relevance of social research, particularly when engaging vulnerable populations. This requires repositioning ethnography from a subordinate, supporting role to one of equal epistemological weight – a shift I provocatively refer to as ‘ethnography first’ in order to draw attention to the implicit primacy of quantitative framing in most research combining the two. In this model, ethnography is not simply used to inform or contextualise quantitative methods, but acts as the primary foundation from which methodological decisions are derived and is preserved in its depth and complexity throughout the research and its dissemination.

The aim of this article is twofold: first, to provide a critical overview of the literature on combining survey and ethnographic methodologies, demonstrating how ethnography is often relegated to a subordinate position; and second, to illustrate – through a counterexample – the potential of ethnography to profoundly inform and transform survey design by centring and epistemologically collaborating with the people one is researching with.

In doing so, I highlight how collaborative, reflexive engagement with ethnographic participants can generate deeper insights and foster more context-sensitive, ethically grounded research instruments. This approach ultimately challenges the prevailing norms of Mixed Methods studies by calling for a genuinely integrated practice that elevates the epistemological contributions of ethnography, rather than subsuming them under a positivist framework. Unlike typical approaches in Mixed Methods Research that frequently position ethnography as supplementary, the ‘ethnography first’ approach advocated in this article prioritizes participants’ epistemologies, fundamentally reshaping survey design rather than merely informing it. While this argument centres on ethnography and survey research as a specific combination, it may hold broader implications for the combination of qualitative and quantitative research methods.

Interpretive and Positivist Paradigms: Divergent Epistemological Paths

From the emergence of modern social science in nineteenth-century Europe, two broad orientations have deeply influenced how researchers conceptualise and study social phenomena: positivism, which seeks to emulate the methods of the natural sciences by striving for objectivity and reproducible findings; and interpretivism, which insists that human action must be understood in context, often through immersion in people’s lived experiences (Hasan, 2016; Yanow, 2014). This tension between positivist and interpretivist paradigms continues to underlie methodological considerations in the social sciences today, influencing whether researchers prioritize standardized and statistically generalizable data or whether they explore context-specific, reflexive insights about social life.

Positivism and Survey Research

The origins of positivism are often traced to developments in logical philosophy (Kincaid, 2023), emphasizing the emulation of natural science procedures – such as falsifiable hypotheses, operationalisation of concepts, and systematic data collection. One of the core methods that emerged from this paradigm is the survey, whose central logic is the operationalisation of concepts. Surveys are key to many strands of social inquiry, particularly when the goal is to representatively capture characteristics of particular populations (Glock & Bennett, 1967; Krosnick, 1999).

Within the positivist paradigm, survey research became “the most widely used tool of empirical investigation” (Glock & Bennett, 1967, p. IX). Following the work of early pioneers such as Durkheim, guidelines for the development and administration of representative surveys were established throughout the 20th century. Emulating the natural sciences, surveys were constructed by formulating falsifiable research hypotheses and testing them through a standardised instrument. Depending on the specific research questions, surveys might be descriptive, correlational, explanatory, or a combination of the three.

Interpretivism and Ethnography

Within the discipline of anthropology, methodological debates abound in relation to the differences between participant observation and ethnography, with the former usually seen as the central method of fieldwork and the latter as the actual depiction of insights gathered in such fieldwork (Hockey & Forsey, 2012). Yet, to facilitate comparison with survey research, I will be analytically conflating the two in this article.

As Clifford Geertz states, “[f]rom one point of view, that of the textbook, doing ethnography is establishing rapport, selecting informants, transcribing texts, taking genealogies, mapping fields, keeping a diary, and so on” (2014, p. 167). This methodological toolkit by itself does not prescribe an a priori commitment to interpretivism. In fact, ethnography was not always tied to an interpretive paradigm: until the mid-twentieth century, efforts were made to anchor the insights derived from participant observation within “the normative premises of science” (Wallerstein, 1996, p. 22). For example, structural functionalist and structuralist approaches sought to produce universal, law-like explanations grounded in systematic observation (Kuper, 1975).

However, by the 1960s, positivist attempts at doing ethnography had been all but displaced, as the discipline shifted toward an increased focus on ethnography’s interpretive affordances (Geertz, 1973), centring around the “epistemological stance that knowledge is necessarily subjective” (English & Nielsen, 2023, p. 10). This orientation produced a style of ethnographic writing that employs ‘thick description’ and rarely – if ever – poses clear research questions in the positivist sense. Reflexivity about the implications of knowledge production on – and with – Others resulted in a wholesale rejection of treating the research subject as an external reality held at arm’s length (Yanow, 2014, p. 105), and a focus on ethnographic research beyond objectivist attempts at representation (Clifford & Marcus, 1986; Ingold, 2011). In turn, objectivity itself became the subject of ethnographic interrogation (McDonald, 2017), with ethnography becoming rooted in “long-term and open-ended commitment, generous attentiveness, relational depth, and sensitivity to context” (Ingold, 2014, p. 384).

The Emergence of Mixed Methods Research

These paradigms did not remain static and bounded, however. Over the course of the 20th century, earlier trends of disciplinary divergence in the social sciences softened, and researchers from one discipline took up subject matter historically claimed by another, or used methodologies developed by other disciplines. Consequently, Mixed Methods Research (MMR), through integrating different research approaches in a pragmatic rather than dogmatic way (Denscombe, 2008), evolved as one solution to the methodological discontents of the “paradigm wars” (Giddings, 2006, p. 196).

It is in this context that an increasing interest in combining the affordances of ethnographic and survey research – in spite of the stark philosophical differences underpinning the two – has taken shape. Research on topics ranging from health seeking among elderly Hispanics in New York City (Freidenberg et al., 1993) to childbearing preferences in Nepal (Pearce, 2002) and rural-urban migration in India (Thachil, 2018) has sought to integrate ethnographic and survey research. This is in line with a wider trend towards the development of MMR, much of which sidelines debates about the epistemological and ontological incommensurabilities of methodologies (Ghiara, 2020; Kuhn, 2022) in favor of pragmatism (Hesse-Biber, 2015).

Reasons given by researchers for choosing to combine ethnographic and survey research are varied. Greene et al. (1989) outline five key rationales for mixed-method research: (1) triangulation, to corroborate findings across methods; (2) complementarity, to enhance and clarify results; (3) development, where one method informs the other’s design; (4) initiation, to uncover contradictions and new perspectives; and (5) expansion, to broaden the scope of inquiry. These rationales are not mutually exclusive.

Integrating Ethnographic and Survey Research: A Review of the Literature

I carried out a targeted scoping review of peer-reviewed studies that either (a) integrated ethnographic and survey methods in primary empirical work or (b) synthesised such studies on a specific topic. Searches were run in Web of Science, Scopus and Google Scholar using the Boolean strings “survey AND ethnography” OR “survey research AND ethnographic research”. Although this strategy is not exhaustive, it is purposely calibrated to retrieve scholarship in which the joint use of the two approaches is central rather than incidental.

The review showed that the most prominent rationale researchers gave for integrating the two methods fit into the category of development (Axinn et al., 1991; Crede & Borrego, 2013; McKeever et al., 2015; Nisbet & Goidel, 2007; Thachil, 2018). Their implementation of development was unidirectional across the board: ethnography was used to aid in the development or administration of a survey. The single text employing the logic of initiation followed the same direction, with ethnography being used to understand outliers and consequently adjust a statistical model (Pearce, 2002).

Within all of these studies, ethnography is to some extent subsumed within the positivist language and logic, and often employed as a tool to mitigate weaknesses of survey research according to said logic: external and ecological validity, construct validity, sampling vulnerable or ‘rare’ populations, measurement errors, etc. For example, Thachil (2018) underscores how ethnographic fieldwork can improve construct validity and aid in sampling a vulnerable population, while Nisbet and Goidel (2007) demonstrate how variables drawn from ethnographic studies might enhance survey instrument design. Ethnography here serves to strengthen the truth-claims developed through survey research, and is relegated to short vignettes, with some of the texts (McKeever et al., 2015; Nisbet & Goidel, 2007) not containing any actual ethnographic writing.

Researchers who emphasized triangulation (Freidenberg et al., 1993), complementarity (Adato, 2008; Gamoran & Berends, 1987), or expansion (Pope et al., 2011) highlighted the mutual benefits of combining ethnographic and survey research. By integrating these approaches, they aimed to corroborate, interpret, and critically examine each other’s findings, ultimately providing a more comprehensive and nuanced understanding. Pope et al. (2011) illustrate this by using both methods to explore different facets of computer decision support in health care, complementing extensive ethnographic vignettes and quotes with survey data to reveal potential convergences and contradictions. Even as these studies highlight the mutual benefits of combining ethnography and survey research, they do so mostly within the language and logic of positivism, with Adato (2008), for example, stressing benefits for internal and external validity.

The Subsumption of Ethnography Within the Logic of Positivism

The dominance of positivism in the studies combining ethnographic and survey research carries over into the very fabric of their texts, aligning with Johais and Leser’s (2024) assessment that positivism is as much a writing style as a philosophical construct, using a clearly demarcated writing structure (Background, Methodology, Results, Discussion) and a passivised writing style. Despite the widespread praise for ethnography in the studies surveyed above, ethnography itself is largely marginalized within many of their texts. This can be exemplified by Crede and Borrego (2013), who draw on ethnographic data to develop items for a survey examining graduate engineering student retention. Their approach is both innovative and valuable; however, the ethnographic aspect is primarily represented through brief quotes, such as “[working in my group I’ve] gained an international perspective, I’ve learned a few words in other languages” (Crede & Borrego, 2013, p. 69).

This deference to the logic and language of positivism in most of the texts combining ethnographic and survey research methods is shaped considerably by publication pressures, given that positivist research is dominant across the social sciences (Baškarada & Koronios, 2018; Breen & Darlaston-Jones, 2010; Grix, 2004; Steinmetz, 2005). Ultimately, positivist methodology entails making truth-claims on the basis of rigid ‘scientific’ procedures. Adhering to and describing those procedures through the lens of positivism’s obligatory rhetorical conventions is precisely what demarcates research papers as ‘high quality research’. In this framework, ethnography must become flattened into “ethnographic case study methods” (Nisbet & Goidel, 2007, p. 411) adding “contextual and cultural factors into research” (Axinn et al., 1991, p. 189) and serving to add ‘lower-level value’ (Baiocchi & Connor, 2008; Kincaid, 2023; Laitin, 1986). Giddings and Grant (2007) note that, in the context of MMR, this owes much to the fact that MMR mixes methods – doing tools – rather than methodologies – thinking tools – resulting in a subsumption of the ethnographic method within the methodological concerns of positivist research. The tendency to reduce ethnography to brief illustrative vignettes in existing Mixed Methods studies largely reflects institutional pressures and publication norms, which privilege quantifiable results and reinforce the subordinate positioning of qualitative, context-rich data.

This then raises the question of how – if at all – ethnography in its holistic sense can be integrated with survey research, or positivist and quantitative research more broadly. The answer I propose lies in stressing the potential for and commitment to epistemological collaboration inherent in ethnography. Rather than using an outdated understanding of ethnography as a way to integrate ‘emic’ perspectives into research and then defer to a positivist logic, I suggest following anthropologists Boyer and Marcus in stating that our focus ought to lie in generating knowledge combining ethnography with other research methods by “deferring to, absorbing, and being altered by found reflexive subjects – by risking collaborative encounters of uncertain outcomes” (2021, p. 13). This involves “integrat[ing] fully our subjects’ analytical acumen” (Holmes & Marcus, 2021, p. 14) and being attentive to the modes of knowledge production they consider meaningful – including survey research. If we understand ethnography as contributing to the already existing and emergent bodies of knowledge within the communities we study, it follows that adding quantitative forms of knowledge to this body can in many cases be desirable, in particular given its appeal to policymakers (Hasan, 2016) and the resulting promise of impact it holds for many communities.

Shifting – or at Least Reflecting – the Power-Dynamics of Knowledge Production

In taking seriously the reflexive insights of ethnography and the skepticism many unhoused people voiced about top-down data collection, the research project within which this particular research is situated sought to shift away from viewing participants as mere subjects of research. Instead, we embraced the ethos of co-creation and collaboration described by Holmes and Marcus (2021), recognising that knowledge production is never neutral but deeply interwoven with questions of power and politics (Scheper-Hughes, 1995). Much like participatory research traditions (Freire, 1978) – though falling short of actually being participatory – this approach reframes ‘data-gathering’ as a dialogic practice, where participants help shape research questions, instruments, and interpretations.

Integrating survey research as one possible mode of knowledge making into ethnographic research in this way required intensive engagement with our subjects throughout the entire process, including conversations on the appropriateness of different methodological tools, the construction and administration of these tools, as well as on the interpretation of their findings and consequent dissemination. It means starting with ethnography and following the field to understand the requirements levelled at modes of knowledge production that emerge from inside of it. These considerations do not, of course, change the often-stringent requirements of scientific publications.

When writing and submitting articles focused on the outcomes of my research to more positivist-leaning journals, while trying to retain substantive elements from both ethnographic and survey research, article length emerged as one key problem. Furthermore, these attempts at combining the results outside a methodology-focused paper were partly plagued by difficulties related to tonal shifts, and a worry that the truth-claims from ethnography might be evaluated differently than those from the survey research.

Consequently, authors seeking to publish individual papers that combine survey and ethnographic outcomes may be best advised to adhere to the logics of positivism – or at least muster substantial stamina in navigating the demands of combining the two. By contrast, a larger project with multiple outputs can more easily benefit from an expansive perspective. By starting with ethnography and allowing other forms of knowledge-making (such as survey instruments) to emerge from the field, researchers can more fully harness the transformative potential of ethnography. This also creates space to reflect on their methods in ways that lie outside the strict scope of positivist research.

As outlined in the introduction of this article, I want to put forward one counterexample to the current body of literature combining ethnographic and survey research, foregrounding a narrative, interpretive framework rather than a positivist one. While many survey researchers conduct small-scale pilots to refine their questionnaires before large-scale administration (Krosnick, 1999), this ethnographic approach entails a deeper, more sustained immersion in participants’ everyday contexts, with iterative learning that goes beyond merely fine-tuning questions. It involved spending months building relationships and gaining firsthand knowledge of the community through participant observation and informal conversations at every stage of the process. This also meant recognising ethnographic data as equally significant as survey data and capable of standing independently, not merely in support of a survey instrument.

Methods and Ethics

Our research employed a multi-method approach, combining ethnographic fieldwork with a survey instrument, to investigate digital inclusion among unhoused people in Berlin. The study was conducted in two phases: a multi-sited ethnography followed by a survey. The research was approved by the Ethics Committee of the Department of Psychology and Ergonomics at the Technical University of Berlin (Tracking Number: MH_02_20200430).

The ethnographic phase of our project spanned from January 2020 to February 2023 and involved multi-sited fieldwork in various non-governmental organisation (NGO) settings in Berlin that provide services to unhoused individuals. The goal of this phase was to gain a deep, contextualized understanding of the everyday experiences of unhoused people with digital media, the challenges they face, and the role of digital technologies in their lives. Data collection methods included participant observation, informal conversations, and in-depth interviews. Participants in the ethnographic phase were informed about the research aims and provided verbal consent to participate. In instances where I did not consult participants regarding their preference for pseudonymization, or where they explicitly requested it, their names have been pseudonymized and are marked with an asterisk when first mentioned.

The survey phase took place between April and May 2022 and involved administering a structured questionnaire to a convenience sample of unhoused individuals in five day-centres for unhoused people across Berlin. These centres were chosen based on their accessibility and willingness to participate in the study. Participants were provided with an informed consent sheet written in simple language, available in the same languages as the survey. Interviewers went through the consent form with each participant to ensure they understood the information and voluntarily agreed to participate.

Ethnographic Insights on Developing a Survey Instrument: Initial Fieldwork and Survey Development

On my first day working with Karuna, an NGO assisting, among other groups, unhoused people in Berlin, the chairman of Karuna’s board welcomed me to the team and swiftly introduced me to Ingo and Günter, who were working at the reception of the for-free-shop. Ingo was unhoused himself and had until recently been working as a street outreach worker; Günter had been unhoused when he was younger and had remained a fixture in the community in Berlin ever since. After I, somewhat timidly, had explained to them that I would start working at reception with them, with the goal of learning from them and the people frequenting the shop in order to then take over the task of distributing smartphones that had been donated to Karuna, their reaction was anything but timid: “That’s way overdue! I can’t wait to stop having to hand those things out!” Ingo said. As word had spread among the community that the shop was at times handing out smartphones, they had become overwhelmed with demand and were excited about the prospect of me taking the distribution off their hands. For me, keen to conduct ethnographic research and then on this basis survey research with unhoused people on their digital media usage, taking over the phone distribution seemed like an excellent jumping-off point.

Over the next few days, I developed a plan to hold a smartphone clinic twice a week in a separate room in the same building as the shop, each time handing out a maximum of 20 phones on a first-come, first-served basis. While initially fewer than a handful of people used the clinic each day, within a month the phone clinic system became overwhelmed with demand, with dozens of people queueing up hours before the clinic started, and I had to pivot to new strategies for the distribution.

Nevertheless, during the month the clinic ran, I handed out smartphones to 157 unhoused people, speaking with each of them about their experiences with digital media on the street and the issues they had encountered. While I stayed alert to the power dynamics inherent to this particular type of ethnographic access and the way it may have affected what people were and were not willing to tell me, the clinic without question proved an intensive crash course on the topic of digital media usage among unhoused people in Berlin. Many of the people making use of the clinic were glad to share their interests, questions, and grievances regarding digital media with me.

Overview of First Survey Draft.

To ensure the validity of the survey instrument, I operationalised the themes that emerged from my fieldwork using established constructs from the existing literature. I aimed to enhance construct validity by grounding the survey questions in validated measures, while adapting them to the specific context of unhoused individuals in Berlin as it emerged from the ethnographic fieldwork. For instance, personal variables such as age, gender, and housing status were included based on standard demographic questions and measures from North et al. (2004). I also incorporated questions on physical and mental health using previously validated instruments of self-assessment like those from Pérez-Zepeda et al. (2016) and Ahmad et al. (2014), looking to minimise the intrusiveness of these questions while maintaining reliability and validity.

In assessing digital skills and internet usage, I employed the Internet Skills Scale developed by van Deursen et al. (2016), selecting items with the highest factor loadings to maintain reliability and validity. Questions on internet attitudes were drawn from Joyce and Kirakowski (2015), focusing on constructs with strong confirmatory factor analysis results. Throughout the process of adapting the survey as a result of ethnographic engagement, I kept referring to the pertinent literature and adapting existing scales or instruments in accordance with the input of the people I was working with in order to yield both meaningful and reliable insights.
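The item-selection step described above can be illustrated with a minimal sketch. It assumes that each candidate item comes with a published factor loading and that a fixed number of items is kept per subscale. The subscale names, item identifiers, loadings, and the function `select_top_items` are hypothetical placeholders introduced for illustration; they are not values from van Deursen et al. (2016) or from this study.

```python
from collections import defaultdict

# Hypothetical (subscale, item_id, factor_loading) triples, for illustration
# only. These are NOT the published loadings of any real scale.
ITEM_LOADINGS = [
    ("subscale_a", "a1", 0.81),
    ("subscale_a", "a2", 0.64),
    ("subscale_a", "a3", 0.77),
    ("subscale_b", "b1", 0.58),
    ("subscale_b", "b2", 0.85),
    ("subscale_b", "b3", 0.72),
]


def select_top_items(item_loadings, k):
    """Return, for each subscale, the k item ids with the highest loadings."""
    by_subscale = defaultdict(list)
    for subscale, item_id, loading in item_loadings:
        by_subscale[subscale].append((loading, item_id))

    selected = {}
    for subscale, items in by_subscale.items():
        # Highest loading first; ties are broken by item id so the
        # result is deterministic.
        ranked = sorted(items, key=lambda pair: (-pair[0], pair[1]))
        selected[subscale] = [item_id for _, item_id in ranked[:k]]
    return selected


if __name__ == "__main__":
    # Keep the two strongest items per subscale to shorten the instrument.
    print(select_top_items(ITEM_LOADINGS, k=2))
```

A purely statistical shortlist of this kind is only a starting point: in the process described in this article, which items were ultimately kept, reworded, or dropped depended on the input of the people I was working with.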

Nacht der Solidarität: Questioning the Appropriateness of a Survey Instrument

By happenstance, our research project began shortly before a long-anticipated attempt to quantify homelessness in Berlin. Whereas many countries and cities across Europe and beyond have been conducting homelessness counts for some time, there had never been an official count of unhoused people in Berlin. Following the example of the Nuit de la Solidarité in Paris, Berlin’s government had funded a project called Nacht der Solidarität (Night of Solidarity, shortened to NdS), in which a large number of volunteers would systematically walk the streets of Berlin for one night and approach each unhoused person they encountered, counting them if consent was given and administering a short demographic survey. In addition, Berlin’s emergency shelters would count the number of people using their services that night, and the two numbers would be added up to – in theory – produce the first comprehensive set of statistics on homelessness in Berlin.

Initially, this seemed like a fortuitous coincidence for our project: a set of statistics of the population of unhoused people in Berlin could potentially help us to render our own survey representative of this population – which is generally difficult to achieve for surveys on unhoused people and marginalised populations more generally. Around a month before the NdS, which was scheduled for January 2020, I therefore attended an informational event on the project to gather more information. However, after the lead researcher for the NdS had finished her presentation, there were some strong negative reactions from the audience.

Members of an organization with the goal of representing the interests of unhoused people in Berlin vocally criticized the entire premise of the NdS: “We are not cattle to be counted!” exclaimed one man, who said he had been unhoused in Berlin for many years, immediately after the presentation. “Why don’t you count the numbers of empty flats in Berlin instead?” Other criticisms raised in the ensuing discussion included the potential dangers posed by untrained volunteers approaching people on the street and the potential uses of the data gathered through the NdS: “Don’t think for a second the police won’t use this to round us up!” In light of these reactions to the count and the associated survey, one question started looming large over our project: was a survey actually an appropriate research instrument at this point in time?

The literature on employing ethnography in combination with a survey eagerly stresses how ethnography is a useful tool in developing a survey that is more attuned to the specificities of the populations it seeks to understand; however, it does not address a much more fundamental question to which ethnographic field work might provide an answer: Does the population in question actually want to be surveyed? Surveys, after all, are a particular form of power-knowledge (Foucault, 2020), producing truth-claims whose ultimate consequences are often opaque to those being surveyed. Especially for vulnerable populations, this quantifying form of knowledge production comes with legitimate concerns and risks, as they have much experience of knowledge gathered about them subsequently being used in the service of further displacing or depriving them. It is this wariness of quantification that played a significant role in many unhoused people’s rejection of the NdS. One person I engaged on the issue during the phone clinic put it as follows: “You know, why do they want to know how many homeless people sleep in Charlottenburg [a Berlin borough]? What are they going to do with that data? Why do they need to know where I eat, sleep, and shit? It’s obvious what they want to do: They want to know where to send the police, and where they need to do more to squeeze us out of the city.”

In a recent manifesto on collaborative ethnography, anthropologists Holmes and Marcus provide pertinent insight into the question of the modes of knowledge-production emerging from collaborative ethnographic field work, describing them as “a deferral (not necessarily deference) to a subject’s modes of knowing” (2021, p. 28). In our particular context, the imperative became deferring not just to unhoused people’s modes of knowing, but also to the modes of knowledge-production by which they wanted to be known. Consequently, our first instinct after the overwhelmingly negative reaction to the NdS was that, perhaps, a survey was not an appropriate way of gathering knowledge for our project. Abandoning the survey would have been difficult given that it was part of the funding proposal for our project – exemplifying how the prevalent “audit culture” (Strathern, 2000) in the academy can constitute a significant hurdle for taking insights gathered through ethnographic field work forward in the context of a project. However, within our research team, we agreed that this would have to remain a real option if we wanted to take the epistemological concerns of our participants seriously. I decided that I would speak to the people I was working with to discuss whether they felt that a survey was an appropriate research tool, and if they did not think so, to abandon it.

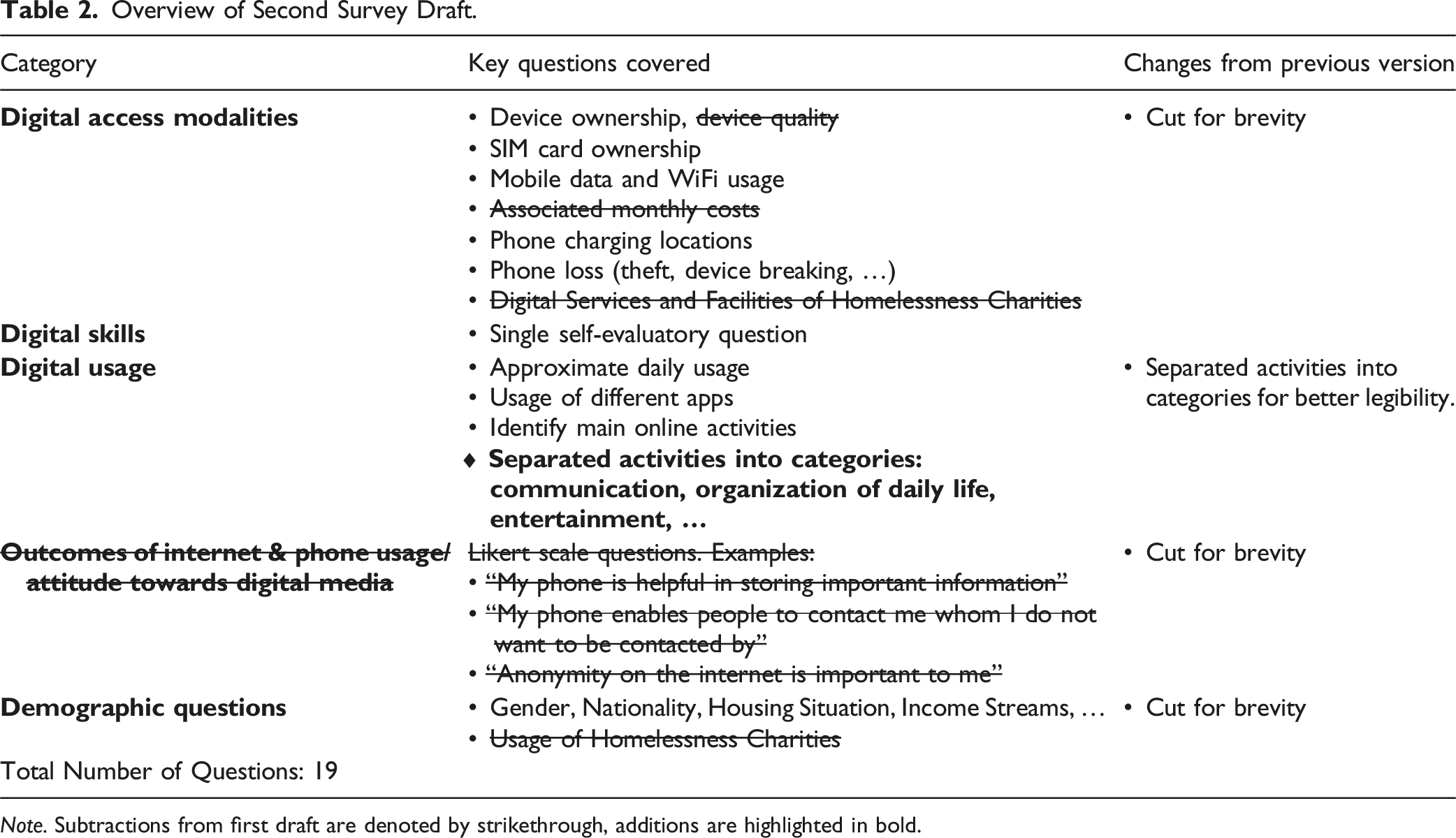

Developing a Second Survey Draft

Overview of Second Survey Draft.

Note. Subtractions from first draft are denoted by strikethrough, additions are highlighted in bold.

Trialling the Second Draft and Developing a Third Draft

When I spoke to people in the for-free-shop, what stood out to me was that only a few of them were aware of the debate around the NdS or had heard of the organisation which had called for its boycott.

Somewhat unexpectedly, however, none of the people I spoke to took this criticism of the NdS as a reason not to carry out our survey. Instead, they at times seemed surprised by my concerns: “Well, you just explained to me what you want to use this information for, and it makes a lot of sense. So why don’t you just explain it to people like that?”, one person asked. This seemed to be the consensus: If our team made it sufficiently clear why we were collecting this data and appeared genuine in positioning ourselves as advocates for unhoused people, the survey had the potential to be an important asset for unhoused people in Berlin. I suggested to them that our team was simultaneously collecting data in other ways – ethnography and interviews – that we would be able to use for advocacy purposes. Overwhelmingly, people said they did not believe Berlin’s government would respond to this kind of data collection: “They will want cold, hard numbers”, one person told me. What was central, the people I spoke to agreed, was that this data not be collected for data collection’s sake, but for the clear purpose of attempting to lobby for changes with and on behalf of unhoused people. In the terms of Holmes and Marcus (2021), the mode of knowledge people wanted us to defer to was that mode which had the clearest affordances for engaging with authorities.

What some of the people I spoke with did take issue with, however, was the premise of carrying out a short survey in order to keep the research instrument low-threshold. “If you’re going to do this, then you may as well do it properly rather than waste people’s time and not find out much”, one of them told me. After going through the shortened second draft of the survey, many of them felt it was surprisingly short and did not cover much ground. Many of them suggested questions they considered important: they proposed adding questions on the effects of Covid, focusing more on the challenges unhoused people experience in accessing digital media, and including people who had not used the internet in the last twelve months, for whom the survey, in its current draft, ended as soon as they said as much. Initially, we had been hesitant to ask more sensitive questions on topics such as alcohol or drug usage as we felt they might be too invasive. However, multiple people stated that this worry seemed unnecessary to them: “As long as you just leave it open to people whether they have to respond to them, it shouldn’t be a problem”, one person put it.

As I gathered more and more of their questions and modifications, I realised that including all (or even most) of them would make the survey longer than our research team had thought desirable. When I raised this with the next people trialling the survey, they agreed that a longer survey would be preferable – in an appropriate setting such as a day centre for unhoused people, where people spend significant amounts of time and are often looking for things to do – provided that respondents were fairly compensated for their time and effort. While there are opposing views on the reimbursement of participants in vulnerable groups (Fry et al., 2005), our research team decided that it would be unethical not to reimburse unhoused people for the time they donated to our project.

One particular challenge of conducting surveys with unhoused people in Germany is the high level of language diversity. During this trial phase, I therefore also asked participants which languages they thought the survey should be available in given their experiences with the community. After cross-checking the suggestions made by participants with employees at homelessness service provision sites, we settled on making the survey available in German, English, Polish, Russian, and Romanian. Translations into these languages were provided by a registered translation firm. Due to our limited translation budget, we also created auto-translated versions using Google Translate for Arabic, Turkish, Bulgarian, and French, which we asked to be corrected by native speakers who were not certified translators.

Overview of Third – and Final – Survey Draft.

Note. Subtractions from second draft are denoted by strikethrough, additions are highlighted in bold.

Survey Administration

Given the length of the survey, our research team decided in conjunction with some of the unhoused people we were working with that the most appropriate setting to administer it was in homelessness day centres. This would ensure a few things: there would be a separate, quiet room with chairs and tables – which would be impossible on the street; people would not be pressed for time or worried about securing a spot – as is often the case in night shelters; and the day centres were low-threshold with few access restrictions other than extreme intoxication, which would hypothetically allow almost anyone to take part in the survey. Still, our decision to administer the survey in homelessness day centres meant that unhoused people who do not use the day centre system – some of whom may be the most impoverished and socially excluded unhoused people – would by default be precluded from participation. While somewhat unhappy with this trade-off, we decided to stress in any forthcoming publications that future research on the topic in Germany should consider how to include people not using homelessness service access points.

Through our various contacts in the field, we got five day centres in Berlin to agree to let us carry out the survey on their premises. This involved sitting down with the social workers responsible for each centre and talking them through the survey and what we wanted to use it for. Some of the centres we contacted rejected our request, as they felt this would prove an additional stressor for their already understaffed service. Within 1.5 months, we administered 141 surveys, the results of which will be the topic of a forthcoming publication. We chose our target sample size of around 140 participants – with 141 being the final number at the end of our last day of surveying – to align with widely accepted statistical guidelines for exploratory research and surveys targeting heterogeneous populations (Bartlett et al., 2001), balancing the desire for a high number of participants with the constraints of surveying a ‘hard-to-reach’ population (Willis et al., 2014).

One of the unhoused people who had helped us with trialling the survey, Oliver, was keen to help us administer the survey as well. While Oliver did administer surveys himself, his involvement in this phase was particularly helpful because he was able to recruit participants in the centres through a snowball principle: he already knew some people, who then asked others, and so on. We had decided ahead of time that, given our lack of knowledge about the entirety of the population of unhoused people and given the limited number of possible participants, we would not attempt to recruit a ‘representative’ sample and would rather rely on what is termed a ‘convenience sample’, a practice widespread in homelessness research (Daly et al., 2010; Galperin et al., 2020; Sudman et al., 1988).

Before administering the survey, we provided a detailed explanation of how correlational surveys work, how our data would be used, and what we intended to use it for. We were also transparent that, once we published the data, we could not know how it would be interpreted by other actors but would do our best to contextualise it wherever we published it. This explanation was given in addition to the explanations necessitated by the informed consent form, which included information on a variety of rights retained by the participants. Participants then checked a box stating they had understood the explanation. The more detailed engagement with the data collection and interpretation process was received positively by a number of participants. We additionally explained to participants that they would receive 10 Euros as compensation for their time, and that they would receive this compensation regardless of how many questions they filled out or whether they finished the survey.

Survey administration was carried out by one of five trained interviewers using a touch-enabled laptop or tablet, with the option of a printout version. No participant opted for the printout version. The survey was available in German, English, Polish, Russian, and Romanian, as well as in auto-translated Turkish, Arabic, and French. Through our interviewers and the day centre staff, we had interpreters who could read the survey aloud in German, English, Polish, Russian, Turkish, and French. This was important as a small number of respondents struggled with their eyesight or had limited reading skills.

We decided within our research team to take ethnographic notes during the survey administration about the statements made by participants that went beyond answering the survey questions. We highlighted this to participants, simultaneously offering them the opportunity to opt out. These notes were revealing in many ways that the survey by itself was not. One particularly pertinent example of this was the case of the survey question “My phone allows me to tune out my surroundings and get a sense of privacy”, which respondents replied to using a Likert scale. This was included as the literature and our ethnographic research had suggested that many unhoused people use their phones to create some separation between them and the outside world within which they are located most of the time. However, this question was difficult to phrase. Through comparing our ethnographic field notes after the administration phase, we realised that many respondents seemed to have understood the question to mean “Do you feel you have privacy in spite of using a phone?”, relating to the privacy concerns that have been a central issue in the societal discourse around social media. We therefore decided to disregard responses to this question – which we likely would not have done had we not kept ethnographic notes. While most participants finished the survey within 20-30 minutes, some took significantly longer, partially calling into question the accessibility implications of our decision to extend the survey as we did on the advice of the participants co-designing the survey.

Discussion

The literature reviewed in this paper reveals how surveys and ethnography rarely achieve a balanced epistemological synthesis. In many studies, ethnography is relegated to a secondary role. These tendencies reflect broader institutional and publication pressures in the social sciences, where the scientific legitimacy of large-scale, quantifiable data can overshadow the contextual richness and reflexive depth that ethnography can offer (Giddings & Grant, 2007). Against this background, this paper has argued for placing ethnography, and consequently collaboration with participants, at the forefront of the research process, letting the priorities of participants shape not only how a survey is constructed but whether a survey should be conducted at all.

The empirical case of working closely with unhoused people in Berlin underscores how such an ‘ethnography first’ approach significantly diverges from established practices. Rather than merely refining wording or validating measurement instruments, the initial phone clinic and subsequent conversations allowed participants to shape the research from the bottom up and voice fundamental concerns: the mistrust many felt toward official counts or data-gathering, the need for a nuanced approach that respected the complexities of their everyday lives, and the surprising openness to a longer, more comprehensive survey – provided it genuinely served their interests.

The creation of scientific knowledge is not impartial. It is heavily impacted by the subjective decisions of researchers and shaped by the human and nonhuman relations within which they find themselves (Latour & Woolgar, 1986). Ethnography provides a critical perspective to examine and enhance this research process, serving as a counterpoint to the reductive tendencies of positivist approaches.

The example put forward in this paper aims to supplement the existing literature on combining ethnographic and survey research by taking ethnography as the research’s starting point and having other methods – in this case, a survey instrument – develop from the demands of the field. This example not only acknowledges the profound and intricate understanding gained from ethnographic observations but also illustrates how these observations may directly contribute to and improve the process of survey research, especially in research environments with vulnerable people, where including their understanding of what they see as the most important aspects of research about their communication is a crucial ethical imperative. Rather than narrowly using ethnography for one of the purposes set forth by Greene et al. (1989), this paper attempts to harness the possibilities of ethnography as broadly as possible. In fact, our ethnographic experience of the ‘Nacht der Solidarität’ led us to question the appropriateness of a survey instrument wholesale and, consequently, to check in with our participants about their opinions on this.

More broadly, although this paper focuses on integrating ethnography with survey research, its core ideas may resonate for a variety of combinations of qualitative and quantitative methods; an interrogation of this may warrant further exploration in future research. This ‘ethnography first’ strategy could similarly inform other research contexts, such as migration studies, public health interventions, or education, where participant engagement fundamentally shapes methodological and ethical validity.

A clear limitation of this approach, as already set out above, is that this is purely a reflexive, methods-focused paper for the purposes of demonstration. It does not include empirical results from the survey research and only results from the ethnographic research that pertain to the construction of the survey instrument. This is owed to the fact that this paper is seeking to constitute a methodological intervention, supplementing the literature on combining survey and ethnographic research. Yet, the core message – not displacing the insights of interpretivist approaches in favour of positivist ones solely due to the more widespread acceptance of the truth claims of the latter – holds for any paper combining the two approaches.

Conclusion

By developing an approach that foregrounds collaborative design and reciprocal engagement between researchers and participants, this study moves beyond the typical MMR ‘add-ethnography-and-stir’ formula. Instead, it demonstrates that ethnography can function as a theoretical catalyst, reconfiguring the underlying assumptions of quantitative tools and reshaping the conceptual frameworks applied to vulnerable populations – an approach provocatively termed ‘ethnography first’ in order to draw attention to the fact that it is the survey/quantitative framework that is in practice elevated above ethnography in most research combining the two.

In doing so, the article points toward a larger paradigm shift in which participants are not merely objects of study but active co-constructors of knowledge, thereby challenging the default hierarchy of positivist claims and charting new directions for an integrated, reflexive, and genuinely collaborative social research. Ultimately, by foregrounding collaborative ethnographic practice rather than relegating it to a subordinate methodological role, this study not only challenges prevailing positivist hierarchies in social science research. It also charts a clear path towards genuinely integrative, ethically responsible, and participant-centred inquiry, combining interpretivist and positivist methods in service of more nuanced research that is accountable to participants, attentive to the persuasive power of positivist truth claims, and capable of informing change on participants’ terms.

Footnotes

Acknowledgements

The author would like to acknowledge Prof. Maren Hartmann, who was the project leader for the associated research project, and Vera Klocke and Adrian Turan, who were members of this research project. Particular gratitude is extended to the unhoused research participants, and all the staff and volunteers at Karuna Umsonstladen and Gebewo Tagestreff Mitte.

Ethical Statement

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research for this paper was made possible through a grant from the German Research Foundation (Project number 403628254).

Declaration of Conflicting Interests

The author declares no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Research data will be available upon request.