Abstract

Member checking is an established technique in qualitative research that is intended to improve the quality and rigour of studies. However, recent debate about whether member checking achieves its intended purposes and the potential risk for unintended consequences creates uncertainty about its use. This generates confusion, particularly for those new to qualitative research. In 2021, Motulsky proposed five evaluation questions to guide researchers in decision-making about member checking. There have been no reports of the application of these questions in qualitative studies. By retrospectively applying these questions to a recently completed doctoral interpretive descriptive study on public involvement in health service design, we critically evaluate the usefulness of these questions in member checking decision-making. We address an important gap in understanding of how the evaluative questions might aid novice researchers in member checking decision-making, and we highlight the critical importance of member checking considerations in the study planning phase. Decisions about study design and whether to use member checking require careful consideration of risks and benefits. Novice researchers, in particular, should not rely on checklists and short textbook explanations to drive member checking decision-making. They should read widely and critically, use reflective praxis, and report and justify their member checking decision-making process.

Introduction

In this article, we add to the knowledge on decision-making about the incorporation of member checking in qualitative research. While authors have espoused the benefits of member checking in qualitative research, there are ongoing debates about its use (Motulsky, 2021). In summarising member checking debates, Motulsky (2021) provided five evaluative questions that could be considered by researchers to guide member checking decision-making. To this point, there have been no clear reports of the application of Motulsky’s (2021) questions in any qualitative studies. By drawing on our experiences from a recently completed and published doctoral study (Lloyd et al., 2023) on researchers’ experiences of and/or attitudes towards evaluating health service outcomes from public involvement in health service design, we critically evaluate and reflect on the usefulness of Motulsky’s (2021) questions for member checking decision-making.

Through this process, we highlight important issues for all qualitative researchers, particularly for novices engaged in research training: the importance of critical thinking, reflectivity and praxis, thorough planning, and consideration of risks and benefits for participants in all elements of research design and process.

Member Checking and Common Approaches

Member checking is commonly promoted as a process that improves the accuracy, validity, or credibility of results from qualitative research (Creswell & Clark, 2018; Creswell & Poth, 2018; Given, 2008). It is recommended in textbooks, guidelines, and quality checklists to improve research quality and validity (e.g., Tong et al., 2007). Two main approaches are based on whether raw or analysed data is used (Thomas, 2017). Transcript review involves participants editing, retracting, clarifying, or adding to the data following review of their transcript (Hagens et al., 2009). This review generally takes different forms, including direct editing of transcripts, written or verbal comments, or participation in a subsequent interview discussion about the transcript.

The second main approach is the review of analysed data, such as emerging themes, recommendations, draft reports, or diagrammatic elicitation (Sahakyan, 2023). This can be specific to an individual participant or the whole sample, with responses commonly taking the form of written feedback (e.g., Birt et al., 2016), subsequent individual interviews (e.g., Buchbinder, 2011; McKim, 2023; Urry et al., 2024), group interviews (e.g., Madill & Sullivan, 2018) or focus groups (e.g., Erdmann & Potthoff, 2023; Klinger, 2005).

Gupta (2024) suggests another informal approach to member checking, though this is less commonly described. During interviews, researchers can clarify their comprehension by repeating or paraphrasing data for participants to check for accuracy, simultaneously considering whether non-verbal responses are congruent and authentic.

In the seminal work of Cho and Trent (2006), member checking is promoted to support transactional or transformational validity, the interactive process between researcher/s and participants, which aims to enhance consensus and the accuracy of data. Those taking a positivist stance might view member checking as an opportunity to correct errors or misinterpretations (transactional validity) (Varpio et al., 2017). Researchers committed to a more collaborative and critical process that aims to promote social change may use member checking for transformational validity (Cho & Trent, 2006), which values the process of co-construction of data interpretations. Those informed by constructivist and critical epistemologies may strive to empower participants through this co-construction (Brear, 2019). Cho and Trent (2006) argue that transactional and transformational validity are not mutually exclusive. Rather, the value and form of member checking relate to the purpose of conducting it and to the epistemological orientation of the research.

Interest in Member Checking

Member checking has attracted international interest, with authors writing articles from a range of countries, including Australia (Brear, 2019; Doyle, 2007; Urry et al., 2024), Canada (Hagens et al., 2009), Finland (Iivari, 2018), Germany (Erdmann et al., 2022), Israel (Buchbinder, 2011; Goldblatt et al., 2011; Mero-Jaffe, 2011), New Zealand (Thomas, 2017), Norway (Slettebø, 2021), the United Kingdom (Birt et al., 2016; Harvey, 2015; Madill & Sullivan, 2018; Rowlands, 2021), and the United States of America (Candela, 2019; Carlson, 2010; DeCino & Waalkes, 2019; Harper & Cole, 2012; Koelsch, 2013; Varpio et al., 2017). These articles include case studies from diverse fields, such as education (Candela, 2019; Carlson, 2010; DeCino & Waalkes, 2019; Harvey, 2015; Mero-Jaffe, 2011; Sahakyan, 2023), health (Birt et al., 2016; Bloor, 1997; Brear, 2019; Buchbinder, 2011; Doyle, 2007; Erdmann et al., 2022; Goldblatt et al., 2011; Hagens et al., 2009; Urry et al., 2024), health education (Varpio et al., 2017), information systems (Iivari, 2018), psychology (Madill & Sullivan, 2018), and social science (Harper & Cole, 2012; Koelsch, 2013; Rowlands, 2021; Slettebø, 2021). Across these articles, authors provide significant critique about member checking, debating whether it achieves the intended purpose and the impact it has on the research and participants. Despite strong arguments that member checking does not automatically ensure study rigour (Madill & Sullivan, 2018; Thomas, 2017), it is embraced uncritically by many researchers.

Member Checking Debates

Ongoing debates about member checking (e.g., Birt et al., 2016; Carlson, 2010; Varpio et al., 2017) create confusion, particularly for novice researchers. The strongest critique of member checking came from Motulsky (2021), with a summary of key debates. Motulsky (2021) reinforced that member checking is not a proxy for valid, rigorous research, that it should not be part of a procedural checklist, and that failure to include it in the study design or reporting is not a threat to study validity.

Member Checking Implies Rigour

It has been argued that lack of informed, critical debate has created a de rigueur demand for member checking inclusion in research guidelines and by journal editors in published work (Motulsky, 2021; Varpio et al., 2017). As Thorne (2020) states in her critique of saturation, research reporting conventions should not be upheld without critical examination, particularly when the evidence that underpins them is not strong. This demand is upheld by the inclusion of member checking in research quality checklists, as this may indicate to authors, reviewers, and editors that member checking is an inherent indicator of rigour and can downplay the complexity of qualitative research and the appropriate use of member checking (Birt et al., 2016; Varpio et al., 2017).

Member Checking Enhances Credibility

Member checking has been debated from an epistemological and methodological perspective (Birt et al., 2016; Goldblatt et al., 2011; Varpio et al., 2017). Authors state that researchers must be clear on the purpose of member checking and how it aligns with their epistemological stance (Birt et al., 2016), particularly when the rationale for its use is based on validity. Critics of member checking state that validity is not assured through member checking (Goldblatt et al., 2011; Thomas, 2017) because not all participants in a study may choose to engage in the process, and that ensuring validity, credibility, and trustworthiness in qualitative research requires a multipronged approach.

The Impact of Member Checking on Research Findings

Authors (Birt et al., 2016; Brear, 2019; Varpio et al., 2017) argue that member checking is rarely reported with sufficient detail about the process, the number of respondents, or how interpretations of the data have changed as a result. Response rates when member checking is employed are often low, which is problematic for claims about credibility (Mero-Jaffe, 2011), particularly if the outcomes are not reflected, discussed, and reported. The limited reporting of the difference member checking has made to individual studies has led to speculation that the impact might be small or insignificant (Goldblatt et al., 2011; Thomas, 2017).

The impact of member checking on research findings is complicated by the methods employed to select and present data. In interviewee transcript review, the provision of transcribed data can reveal errors and confirm accuracy, but participants could merely be acquiescing to the researcher’s perceived expertise and authority (Birt et al., 2016; Buchbinder, 2011; Slettebø, 2021). Providing participants with analysed data and interpretations for scrutiny and discussion is suggested to enhance validity more than only providing raw data (Brear, 2019). However, this requires a critical level of engagement by participants, and a level of research training might be required to allow all participants to meaningfully contribute (Brear, 2019).

Issues can arise when dealing with participant responses, particularly when participants are reading analysed interpretations of the collective data and not only their personal views and stories (Morse, 2015). If participants contest the accuracy of the data or analysis, the researcher must decide how to proceed. Morse (2015) believes the researcher ‘outranks’ the participant as a judge of the analysis based on their theoretical and research knowledge and experience. However, some researchers may find dismissing participants’ views ethically challenging.

Member Checking Enables Participant Involvement and Empowerment

How member checking impacts the power balance within a study has long been debated (Buchbinder, 2011; Goldblatt et al., 2011; Mero-Jaffe, 2011; Thomas, 2017). Authors have warned that member checking can result in participant disempowerment “if they [participants] internalize negative representations of themselves in researchers’ analysis” (Brear, 2019, p. 2). Member checking can reinforce the position of power of the researcher if analysed data are presented because the researcher, rather than the participant, controls what and how data are presented (Madill & Sullivan, 2018). Participants may feel they have to agree with the researcher’s findings (Birt et al., 2016; Buchbinder, 2011) and may not receive proportionate recognition of this role and their research contribution. However, others claim member checking is a way researchers can foster reciprocity, demonstrating respect and value for participants and their views. In giving the participant some control over the data (Hagens et al., 2009; Mero-Jaffe, 2011), researchers potentially position the participant as a co-researcher (Thomas, 2017; Varpio et al., 2017), who (with the right conditions) can constructively challenge the researcher’s interpretations, resulting in robust knowledge exchange (Madill & Sullivan, 2018).

The Impact of Member Checking on Participants

Researchers must decide whether any potential gains from member checking outweigh the risk of unintended negative consequences for participants (Hagens et al., 2009). Impacts on participants are mixed and can be difficult to predict (Candela, 2019). Authors such as Harper and Cole (2012) describe member checking as having an effect similar to group therapy, in which participants found reading their own and other participants’ analysed data validating and normalising. This made them feel less alone because others had similar experiences and thoughts, and it offered them new suggestions for coping with a problem.

Conversely, participants have experienced discomfort or embarrassment from reading their spoken conversation transcribed verbatim (Birt et al., 2016; Hagens et al., 2009; Mero-Jaffe, 2011) and faced emotional turmoil by having to relive negative or traumatic experiences (Harper & Cole, 2012). The time and effort required of participants to engage in member checking can be significant (Birt et al., 2016; Goldblatt et al., 2011; Thomas, 2017) and may not be their priority or interest. There is also a small risk that transcripts or data are sent to the wrong participant (Hagens et al., 2009).

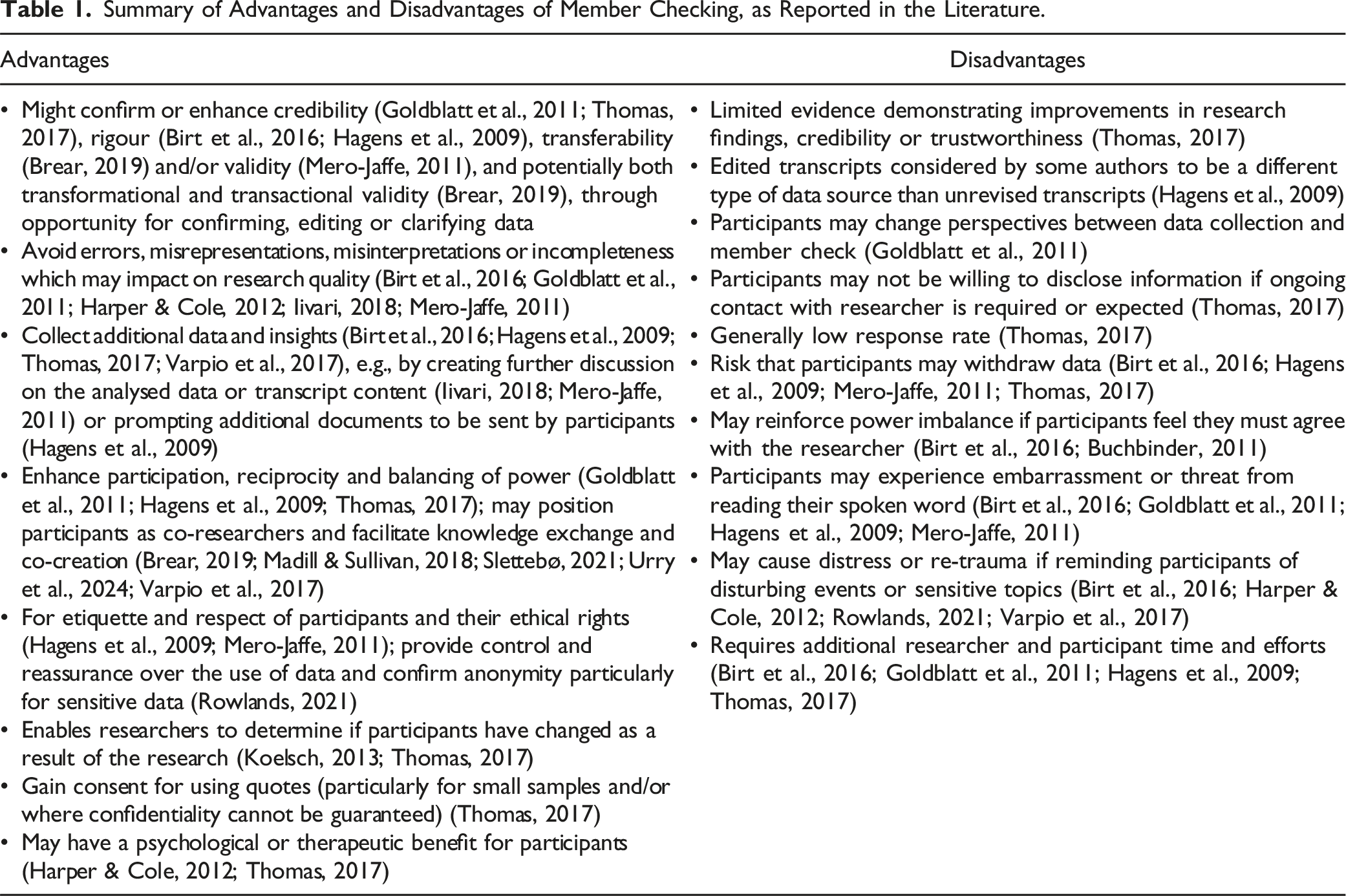

Summary of Advantages and Disadvantages of Member Checking, as Reported in the Literature.

Motulsky’s (2021) Evaluative Questions

In 2021, Motulsky stated, “Editors, peer reviewers, Institutional Review Board (IRB), dissertation advisors, and research supervisors may assume that threats to validity are not adequately addressed unless member checking is included in the research design” (p. 389). It was argued that despite contentious views on member checking, there remains an uncritical acceptance of member checking amongst those who rely on rule-following to produce sound research, particularly the neophyte researcher (Motulsky, 2021).

Motulsky’s (2021, p. 403) Evaluative Questions to Guide Member Checking Decision-Making.

In an extensive literature search, no reports were found detailing how the questions have been applied. Recent publications cite Motulsky (2021) to justify decisions to use member checking (e.g., Connelly et al., 2023; Matsuno et al., 2023; Ogueji et al., 2022), or not (e.g., Bolt et al., 2022; Jensen et al., 2023; Spann et al., 2023), without specific reference to the evaluative questions. In another example, the authors simply noted they “considered Motulsky’s (2021) suggestions regarding member checking” (Su et al., 2023, p. 380), without further detail about these considerations and how they influenced their decisions. This gap in knowledge provided the rationale for critically reviewing the utility of Motulsky’s (2021) questions for member checking, by retrospectively applying them to a recent doctoral study, where the member checking method of interviewee transcript review was used. A full description of this qualitative interpretive descriptive study on public involvement in health service design has been previously published (Lloyd et al., 2023).

Background

My Recent Doctoral Study

An interpretive descriptive qualitative study set within a pragmatist paradigm was completed that focused on the evaluation of health service outcomes from public involvement in health service design (Lloyd et al., 2023). In this study, participants were researchers who had published articles on this topic and were recruited purposively from our systematic review (Lloyd et al., 2023). Semi-structured interviews were conducted by NL via Zoom videoconferencing, using an interview guide. The interviews lasted between 34 and 70 minutes (mean of 47) and were audio-recorded and transcribed verbatim. Transcripts were emailed to participants for their review. Data were analysed using the framework method (Gale et al., 2013).

Member Checking and my Study

As a doctoral student, I had not previously interviewed participants for the purposes of research or designed a study of this nature. While planning the study, I read research methods textbooks and journal articles to determine how I could improve study rigour. I learned that interviewee transcript review might improve the quality and credibility of interview data and potentially add further depth and meaning (Birt et al., 2016; Hagens et al., 2009) so I chose to incorporate it in my study design.

During data collection, my verbal explanation of the member checking process, as part of an explanation about my study process, prompted one participant to engage in a respectful discussion on the relative merits and their personal opinion of member checking. They stated that they did not think it was worthwhile. They later emailed a reference to the study by Hagens et al. (2009), which they used as a rationale for not completing member checking in their research. I had already been reflecting on the value of member checking, and the robust discussion with an experienced researcher was thought-provoking. It prompted further reflections and reading on member checking and was the impetus to reflect more formally on my own member checking decision-making.

The Application of Motulsky’s (2021) Evaluative Questions

After reading Motulsky’s (2021) article on member checking we spent time in supervision discussing the merits of their evaluative questions to support member checking decision-making. A literature review failed to find any studies where the evaluative questions had been applied. While my study was complete, we believed there was value in retrospectively applying these questions and reflecting on my decision-making in light of them. We believed that this process would add value to the literature on member checking and would stimulate other researchers, particularly doctoral students, to critically examine their use of member checking and the decision-making involved. Our aim in retrospectively applying Motulsky’s (2021) member checking evaluation questions to our recently completed and published doctoral study (Lloyd et al., 2023) was to address a major gap in knowledge of the usefulness of these questions for member checking decision-making.

Findings - the Usefulness of the Questions

1. “How would member checking, if used at all, fit into the researcher’s aims and beliefs about research? Consider epistemology/research paradigm, epistemic privilege beliefs, research purpose and question(s). Would the goals include transactional or transformational validity or a holistic integration of both (Cho & Trent, 2006)?” (Motulsky, 2021, p. 403).

When I designed my study, I lacked the methodological knowledge to answer some of the evaluative questions. Motulsky’s (2021) first questions could serve as a prompt for researchers at a similar level of experience to research new terms and principles. They prompt researchers to take a stance about which type of validity (or both) is the goal. I only considered validity in my study from a transactional validity perspective (Cho & Trent, 2006), possibly owing to my clinical background and biomedical, positivist-oriented views of research amongst the health sciences. I chose the interviewee transcript review approach because I had read that it would improve the accuracy of data. However, I also thought it was important that participants had a copy of their data as authors have agreed that interviewee transcript review reinforces the ethical rights of participants (Hagens et al., 2009). I would be unlikely to change my approach to member checking based on Motulsky’s (2021) first evaluative question. In hindsight, the first questions may have forced reflection on the purpose of interviewee transcript review and the type of validity, but this would have required a greater depth of reading than I conducted when choosing to use this approach.

2. “What burdens or opportunities would member checking provide to the participants—is there the possibility of harm or re-traumatization? What are the possible ethical issues? Would the participants be amenable to the additional time and effort involved and can they be located? How would member checking be perceived by the participants? Does the researcher have the time and resources necessary?” (Motulsky, 2021, p. 403).

This question adeptly directs the researcher to consider the impact of member checking from the participant’s perspective and whether what is being asked of them is reasonable. This is critical for all approaches to member checking, but arguably more so for the approach using analysed data (either individual or across cases).

Of the 13 interview participants in my study (Lloyd et al., 2023), 11 responded to requests to review their interview transcript for member checking. Of these, nine participants agreed to the data being used as presented, with no edits made. Two participants made edits using the ‘track changes’ feature of Microsoft Word. The main type of edit involved corrections to grammar and punctuation, and removal of repetitions and redundancies of words and phrases. There were additions to clarify content, for example, inserting detail to clarify who was being spoken about when they had said “us” or “they”. One transcription error was corrected, where the participant noted they had helped set up ‘patient groups’, not ‘patient meetings’.

Hallett (2013) claims member checking discussions focus on the influence on the findings, and not on the participants. I had considered the imposition on participants when designing the study. I deemed interviewee transcript review as unlikely to cause harm because the participants were experienced researchers, all of whom held university qualifications, almost all of which included PhDs. They were familiar with the interview method and verbatim transcription, and this was a single interview on their experiences of research on the involvement of the public in health service design, a topic not expected to cause emotional discomfort. Study participants were well informed about interviewee transcript review in the study invitation and consent form and verbally during the interview. Transcripts were returned to participants quickly, and within the timeframe participants were told to expect them (two weeks), which may have increased our response rates.

It is worth noting that a couple of participants in my study, even as highly experienced researchers who were prepared for transcriptions, found it challenging to see their conversations transcribed verbatim. One noted it was somewhat uncomfortable to read what they had said (likening it to listening to one's recorded voice), and the other commented they had talked a lot and apologised for the lengthy transcription required.

Unsurprisingly, the discomfort participants can experience in reading their transcripts has been reported frequently (Birt et al., 2016; Goldblatt et al., 2011; Hagens et al., 2009; Mero-Jaffe, 2011). In hindsight, I should have sent transcripts in the same, edited format expected for any quotations used in study reporting.

The ability for participants to confirm or advise regarding their preferences for de-identification was critical in my study, given the participants were authors of studies included in a systematic review, and thus potentially identifiable. Other authors have described a similar rationale when using sensitive data (Rowlands, 2021). I felt the opportunity for participants to aid in de-identification and address any inaccuracies would be appreciated by participants, but I cannot confirm if this is true. In my study, there were a few comments about confidentiality and de-identification. One was a suggestion for an alternate de-identifier for their study context, one confirmed the “level of anonymity was alright”, and another commented that a participant’s response about the type of work they did “might be too identifying of me”. These data were not used. Further reading (Birt et al., 2016; Harper & Cole, 2012; Varpio et al., 2017) has emphasised the extent of harm or re-traumatization that can occur, and Motulsky’s (2021) evaluative questions may prevent a novice researcher from inadvertently creating the same issue.

3. “How exactly would member checking be conducted—when and in what way (transcript review, synthesis of findings, summary of themes, draft research report; through written feedback, interview or focus group, multiple collaboration interviews, creative or recursive alternatives)? How would the material from member checks be analyzed and included in the findings? How might they influence the findings?” (Motulsky, 2021, p. 403).

These questions again serve as a useful prompt for novice researchers designing a qualitative study, particularly given the multitude of approaches to member checking. Motulsky’s (2021) questions may be more useful for those doing interviewee transcript review because with this approach there is the potential to assume the responses might only be minimal edits or correction of inaccuracies; however, participants can retract data or provide a significant amount of new data. Some authors question whether edited transcripts should be considered “a different type of data source than the transcripts not revised by other interviewees” (Hagens et al., 2009, p. 50). Newer researchers may be unprepared for how they will analyse and include edited transcript data in the findings or fail to consider whether the process has influenced the findings, and Motulsky’s (2021) questions are a valuable trigger to do so. I have reflected on whether I should have considered sending participants analysed data. I undoubtedly would have been perturbed at having to share my first foray into data analysis and summarising of themes with the study participants, who were all much more experienced researchers than myself. However, I do wonder what rich discussion and findings may have been yielded had I considered this. I am undecided if the increased planning and analysis work would have been a worthwhile trade-off if I had the knowledge and confidence to do it.

4. “How would discrepant or challenging voices be handled relationally and reported or analysed in the study? How would rapport with participants and power dynamics be affected?” (Motulsky, 2021, p. 403).

These questions from Motulsky (2021) were not something I had greatly considered before conducting the study, and I wonder whether this may have been more relevant if I had chosen a different member checking technique. If anything, the power dynamics in my study were reversed (at least from my perspective) because the participants’ research qualifications and experience exceeded my own. However, this is not always the case. This awareness of their experience may have been a factor in my decision to use member checking because my initial reading led me to believe it was the ‘right’ thing to do. This is something Motulsky (2021, p. 392) directly observes when they describe “novice or early career researchers and doctoral students, who are eager to follow ‘the rules’”. In my study, I felt I had good rapport with the participants and this was potentially improved through member checking because of the opportunity for additional exchanges with participants. As in other studies (e.g., Hagens et al., 2009), an advantage of the interviewee transcript review process was that it resulted in participants providing further information and resources relevant to my study. Four participants subsequently emailed project reports, podcasts, published articles, and evaluation tools related to the study topic through a formal, pre-arranged process in which it was easy, ethical, and acceptable to make contact.

If researchers were unprepared or unaware of what their approach would be in scenarios where rapport or power balances have the potential to be challenging, Motulsky’s (2021) questions would be valuable. Given the reporting of member checking is often lacking in detail (Brear, 2019), the inclusion of the prompt to report potential power imbalances is particularly prudent.

5. “How would member checking be discussed in the research report? How would the member checking process impact the research analysis or interpretations?” (Motulsky, 2021, p. 403).

Motulsky’s (2021) Question 4 also includes a question about reporting, but Question 5 is broader in asking researchers to consider the impact of the whole process, rather than just the member checking material or discrepant or challenging voices. The open-ended question about discussing member checking differs from qualitative reporting checklists such as the COnsolidated criteria for REporting Qualitative research (COREQ) (Tong et al., 2007), where item 23 refers to a form of member checking but with a simple question: “Were transcripts returned to participants for comment and/or correction?” (p. 352). In my reporting (Lloyd et al., 2023), I provided a few sentences stating that some participants made comments and corrections, some provided suggestions to de-identify data, and no data were retracted. I made no further comment about how member checking impacted my analysis, though more detail would be required if analysed data or themes were sent to participants. It would be useful for novice researchers to see an example of high-quality reporting with transparent justification, which is more important than just ticking a box.

Discussion

Motulsky (2021) argues that including member checking in quality checklists can lead to the uncritical adoption of member checking and thus hinder critical thought, creativity, and reflexivity, all of which are required in high-quality research. However, it is possible that their evaluative questions, in guiding decision-making regarding member checking, may function as a checklist of sorts, with (less experienced) researchers being enticed to tick off their responses to each question and use the reference to justify their decisions. Based on this critical review and reflection, we recommend that the evaluative questions be used not as a checklist but as a reflective praxis tool and as guidance for strong methodological rationale and transparent reporting.

When the evaluative questions are used, researchers must understand their purpose. The article by Motulsky (2021) provides some necessary context and rationale for these considerations and is illuminated by the examples in the literature, the “cautionary tales” (Motulsky, 2021, p. 392) commonly written by doctoral students (e.g., Candela, 2019; Carlson, 2010; Hallett, 2013; Rowlands, 2021). The detailed accounts of study settings and participants with the researcher’s reflections on the merits and disadvantages of the approach are far more helpful in steering the research decision-making than short textbook statements informing the reader that member checking will validate the data and improve rigour (Creswell & Poth, 2018).

The challenge for researchers new to qualitative research is to know where to begin. Whether or not to member check is one of many methodological decisions. The Motulsky (2021) article is very well written, but it is dense and structured in a way that is difficult to navigate for those new to the topic. There are numerous ways the two main member checking approaches can be conducted, and seeing an array of options applied in different settings for different purposes is invaluable. Motulsky (2021) includes a section on altered techniques for member checking which may be helpful for researchers deciding on their approach, and there are other examples as well (e.g., Birt et al., 2016; Brear, 2019; Sahakyan, 2023). Erdmann and Potthoff (2023) recommend seven decision criteria based on research ethics principles, which novice researchers will find helpful in deciding which approach to use and how. The authors urge researchers to consider “(1) participants, (2) nature of the research subject and research results, (3) researcher(s), (4) relationship between participants, (5) relationship between participant(s) and researcher(s), (6) resources, and (7) methodological approach” (Erdmann & Potthoff, 2023, p. 5).

Making decisions about member checking forms only a part of the process of designing qualitative research. The decisions made in the study planning phase are critical in setting up for success, as “a flawed design leads to poor operation or failure” (Maxwell, 2008, p. 215). Research design involves theoretical, methodological, and ethical considerations relevant to the specific study (Given, 2008). Basing a decision on a short description of member checking in a textbook, or because it is included in a checklist or guideline, does not automatically add study rigour. It may also mean that decisions are made without sufficiently considering the purpose or risks. There are many decisions to be made during the study design phase, which is challenging for novice researchers who may hope to find a shortcut to the process and risk jumping straight to choosing the research question and study design without adequate critical reading and thinking. This stage of research is rather like going on a bear hunt 1 – you can’t go over or under it; you have to go through it. Reading widely and adopting critically reflective research practices are vital when making decisions about study design and producing high-quality research. Researchers must report adequate detail about the rationale for decisions about study design, including whether or not to employ member checking.

Conclusion

Whether or not to include member checking is one of many decisions to be made when designing a research study. We have reviewed the current debates regarding the risks and benefits of member checking and explored the usefulness of evaluative questions for decision-making when applied retrospectively to a doctoral study. Uncritical adoption of member checking should no longer go unchecked. When member checking is used, researchers should be clear on the purpose and method and how this aligns with their epistemological stance. Thorough planning about the method, including how participant responses and any arising issues will be dealt with and reported with transparency, is essential. Whether or not it is used, researchers should clearly articulate in the study reporting their rationale for why or why not, and if used, how it has impacted the findings and interpretations. While the primary intention of this article was to review and discuss decision-making regarding member checking, we have also raised issues related to the importance of critical thinking, reflectivity and praxis, thorough planning, and consideration of risks or benefits to participants in all elements of research design and process. These learnings are especially critical for novice qualitative researchers and important in rigorous doctoral research training.

Footnotes

Acknowledgements

The authors wish to thank the interview participants for their contributions to the study and for planting the seed for this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.