Abstract

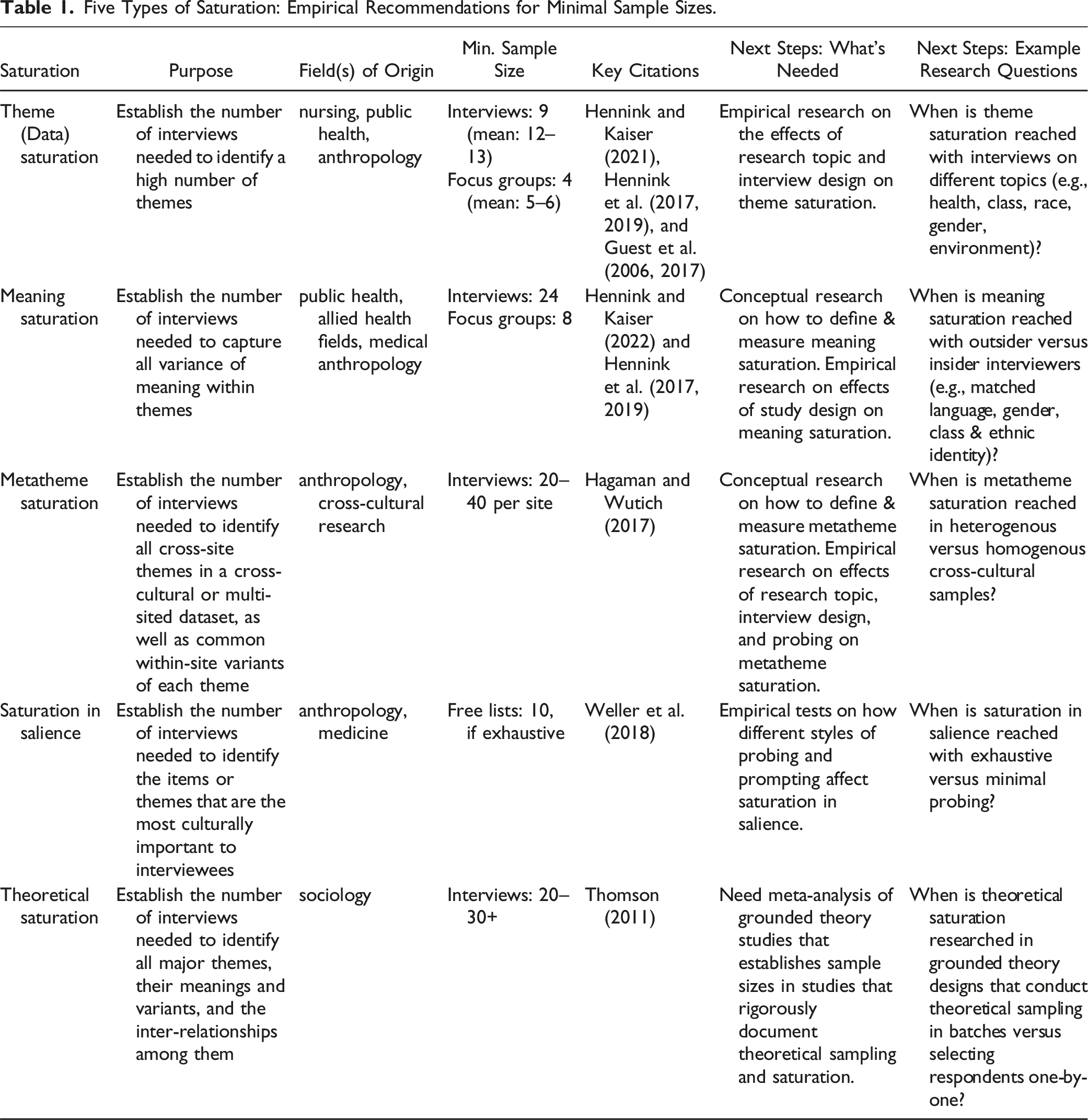

There has been a recent explosion of articles on minimum sample sizes needed for analyzing qualitative data. The purpose of this integrated review is to examine this literature for 10 types of qualitative data analysis (5 types of saturation and 5 common methods). Building on established reviews and expanding to new methods, our findings extract the following sample size guidelines: theme saturation (9 interviews; 4 focus groups), meaning saturation (24 interviews; 8 focus groups), theoretical saturation (20–30+ interviews), metatheme saturation (20–40 interviews per site), and saturation in salience (10 exhaustive free lists); two methods where power analysis determines sample size: classical content analysis (statistical power analysis) and qualitative content analysis (information power); and three methods with little or no sample size guidance: reflexive thematic analysis, schema analysis, and ethnography (current guidance indicates 50–81 data documents or 20–30 interviews may be adequate). Our review highlights areas in which the extant literature does not provide sufficient sample size guidance—not because it is epistemologically flawed, but because it is not yet comprehensive and nuanced enough. To address this, we conclude by proposing ways researchers can navigate and contribute to the complex literature on sample size estimates.

Keywords

Introduction

There has been a recent explosion of articles on minimum sample sizes needed for analyzing qualitative data. Now is an excellent time for taking stock of the empirical literature on sample sizes for qualitative data analysis—and to critically assess what future research is needed to advance knowledge of minimum sample sizes. In this integrative review, we examine the literature on minimum sample size estimation for 10 types of qualitative data analysis: 5 types of saturation and 5 common methods. To begin, we draw on the large and fast-growing literature on saturation. We then discuss qualitative data analysis methods for which sample size estimation is rarely discussed and poorly understood. In doing so, we generate a taxonomy, conceptual framework, and research agenda for understanding minimum sample size that includes and goes beyond the literature on saturation. Our overall goal is to assess what we know, reflect on the extent that it helps us move forward, and anticipate what we will need to know in the future.

Background

Guest and colleagues (2006) published an article on estimating the minimum number of interviews that researchers need for studies based on qualitative data. The main finding of that article was striking: theme saturation, defined as identifying around 90% of themes in a set of qualitative interviews, could be reached within 6–12 interviews, an important addition to the literature on sample size estimation for nonprobability samples. Advances in this field help researchers more accurately predict the sample sizes needed for research designs that use qualitative data, better forecast the time and cost of field research, and justify sample sizes in grant proposals.

Much of the work in this field prior to Guest et al.’s (2006) study focused on methods and parameters for estimating sample size

With the impact of the finding from Guest et al. (28,000+ citations as of 2024), the empirical approach to determining data saturation is now the most common one (e.g., Constantinou et al., 2017; Francis et al., 2010), as seen in Hennink and Kaiser’s (2022) influential systematic review of the saturation literature. Complementary approaches use statistical modeling or simulations to estimate the size of samples required to identify common and rare themes in purposive samples (Fugard & Potts, 2015; Galvin, 2015; Lowe et al., 2018; Tran et al., 2017; van Rijnsoever, 2017) or to find examples of behaviors that occur with unknown or rare frequency (Altmann, 1974; Babbage, 1835; Bernard & Killworth, 1993; Gross, 1984; Tippett, 1935). Meta-analyses of the peer-reviewed research on sample sizes for qualitative data are also widely cited in the sample size estimation literature (Carlsen & Glenton, 2011; Marshall et al., 2013; Mason, 2010; Sobal, 2001; Thomson, 2011).

As consensus around the empirical approach solidified, debates in the literature on data saturation and sample size estimation shifted, centering on what “saturation” means and how to study it (Aldiabat & Le Navenec, 2018; Daher, 2023; Daniel, 2019; Hennink et al., 2017; Leese et al., 2021; Marshall et al., 2013; Mason, 2010; Naeem et al., 2024; Rahimi, 2024; Saunders et al., 2018; Sebele-Mpofu, 2020). Independent lines of analysis arose around five saturation concepts:

Additionally, many methodologists have indicated concern about widespread misunderstanding and misuse of guidelines for minimal sample sizes (Baker & Edwards, 2017; Blaikie, 2018; Bowen, 2008; Braun & Clarke, 2021; LaDonna et al., 2021; Marshall et al., 2013; Mason, 2010; Morse, 1995; Naeem et al., 2024; O’Reilly & Parker, 2013; Sebele-Mpofu, 2020; Sim et al., 2018; Tight, 2023; Trotter, 2012; Vasileiou et al., 2018; Weller et al., 2018). Braun and Clarke (2021, p. 202) go so far as to argue that scholars should resist or reject neo-positivist-empiricist framings for data analysis (including the use of the saturation concept), and that it is inappropriate or impossible for qualitative researchers to make

Approach

In this integrative review, we discuss sample size guidelines for 10 types of qualitative data analysis: 5 types of saturation and 5 common methods. We intentionally selected these 10 types of analysis from very different traditions and epistemologies in research with qualitative data. In doing so, we add a novel taxonomy and conceptual framework for non-methodologists to help navigate the complex literature on sample size estimation. In addition, we provide guidance for methodologists on how they can move this literature forward, including areas where existing research on minimum sample size estimation is insufficient or nonexistent. For each approach we review, we discuss its use, the minimum sample size recommendations from empirical research, and next steps for scholars seeking to advance this literature.

We followed well-established methods for conducting integrative literature reviews (Cronin & George, 2023; Torraco, 2005; Whittemore & Knafl, 2005). Unlike systematic reviews, integrative reviews are designed to go beyond systematic searches to discover and integrate literatures that are not currently in conversation. We began with systematic searches of each of the 10 types of qualitative data analysis (“theme saturation,” “data saturation,” “metatheme saturation,” “saturation in salience,” “theoretical saturation,” “reflective thematic analysis,” “ethnography,” “schema analysis,” “qualitative content analysis,” “classical content analysis”) plus all possible variants of the search terms “saturation,” “sample size estimation,” and “minimum sample size.” We characterize the results by method, as some searches yielded hundreds of articles with well-established methods reviews while other method searches yielded one or no articles. There are known weaknesses to systematic review methods, and systematic review methods may not be appropriate for reviews that combine concepts (Haddaway et al., 2020; Uttley et al., 2023). To ensure nothing was missed, we corresponded with expert scholars and added to the relevant literature. The purpose of an integrative review is to generate a taxonomy, conceptual framework, and research agenda (Torraco, 2005), and our approach reflects this.

We present the results of our integrative review in several sections. First, we discuss the five main saturation methods, explaining how each estimate for minimum sample size fits into a qualitative research design. Next, we discuss five exemplars of qualitative analysis, chosen to illustrate a range of approaches to sample size estimation. We begin with three examples of qualitative methods that currently have little or no sample size guidance—and explain what can be done to address this. Finally, we introduce two methods where the sample size for collecting qualitative data can be determined based on statistical power analysis or information power. The overall goal, as shown in the tables and figure below, is to assess what we know, reflect on the extent that it helps us move different kinds of research designs forward, and anticipate what needs to be done to advance the field.

Sample Size Estimates for 5 Types of Saturation

Five Types of Saturation: Empirical Recommendations for Minimal Sample Sizes.

Theme (Data) Saturation

Theme (or data) saturation generally refers to identifying a high percentage of the themes that exist within a corpus of data. This concept was introduced initially as “information redundancy” (Lincoln & Guba, 1985), was advanced by Guest and colleagues (2006) as “data saturation,” and was widely adopted as “theme saturation” in later studies (Hennink & Kaiser, 2022). As such, theme saturation is the most commonly used saturation concept in the literature on estimating the minimum sample size for “qualitative research,” where that term is a euphemism for “statistically non-representative.”

Uses

Theme saturation is determined by identifying themes in a data set. If a researcher’s analytic goal is simply to produce a list of themes, then assessing theme saturation may be helpful. On a practical level, this kind of theme identification is very useful for codebook development or as an early step in a mixed-methods research design (e.g., to inform survey design). It is difficult, however, for a researcher to make a theoretical contribution based solely on such analyses because simply listing themes rarely provides enough information to advance a body of knowledge.

Minimum Sample Size

Nine interviews or four focus groups (Guest et al., 2006, 2017; Hennink & Kaiser, 2022), with the caveats that: (1) focus group size matters and (2) individual interviews often yield more data than focus groups (Fern, 1982; Schuster et al., 2023), especially for interviewers who are experienced in probing techniques (about which more below). Recent work suggests more interviews may be needed to reach saturation if the interviews are web-based, with estimates ranging from 30 to 67 web-based interviews (Squire et al., 2024). (But note: For developing surveys with cognitive interviewing, a minimum sample size of 55–75 is needed to identify the majority of high-impact survey problems [Blair & Conrad, 2011].)
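To make the percentage-based definition of theme saturation concrete, the following is a minimal sketch of how a researcher might check, retrospectively, the interview at which a chosen share of all identified themes had been observed. The function name `interviews_to_saturation`, the 0.9 default, and the toy data are our illustrative assumptions, not a procedure taken from the studies cited above. Note that the result depends on the order in which interviews were conducted and on the themes found in the full data set.

```python
def interviews_to_saturation(themes_per_interview, threshold=0.9):
    """Return the 1-based number of interviews at which the cumulative share
    of distinct themes first reaches `threshold`, or None if it never does.

    `themes_per_interview` is an ordered list of sets of theme labels.
    The denominator is the set of themes found across ALL interviews,
    so this is a retrospective check, not a prospective stopping rule.
    """
    all_themes = set().union(*themes_per_interview)
    seen = set()
    for count, themes in enumerate(themes_per_interview, start=1):
        seen |= set(themes)
        if len(seen) >= threshold * len(all_themes):
            return count
    return None


# Hypothetical coded data: six interviews, six distinct themes overall.
interviews = [
    {"cost", "access", "trust"},
    {"cost", "stigma"},
    {"access", "family"},
    {"trust", "cost"},
    {"distance"},
    {"cost", "family"},
]

print(interviews_to_saturation(interviews, threshold=0.8))  # 3
print(interviews_to_saturation(interviews, threshold=0.9))  # 5
```

In this toy example, raising the threshold from 80% to 90% moves the saturation point from the third to the fifth interview, which illustrates why the chosen threshold should be reported alongside any sample size estimate.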

Next Steps

Buckley’s (2022) paper proposes 10 easy-to-follow steps for theme saturation. And Mwita (2022) found that theme saturation can be affected by length (in minutes) of data collection, the use of mixed methods, and the relevance of respondents selected. Beyond this, there is value in understanding the range of sample sizes needed for theme saturation with different research topics (e.g., health, environment). For example, people are more forthcoming in focus groups on sensitive health issues, but less so for politically sensitive environmental or justice topics (Schuster et al., 2023). As well, bounded domains (“tell me about the routes you took when you evacuated from the flood”) may have fewer themes, while unbounded domains (“tell me about your experience in walking from Guatemala to the U.S. border”) may have many themes (Weller et al., 2018).

The effects of these observations on theme saturation are not yet well tested empirically. More research is also needed with different purposive sampling designs (e.g., typical vs. extreme cases) and different data elicitation strategies (i.e., beyond individual interviews and focus groups). For example, further empirical research is needed on the number of interviews necessary to reach saturation for web-based interviews, dyadic/couple interviews and third-party-present interviews (Boeije, 2004), family history and life history interviews (Nelson, 2010), stakeholder interviews (Beresford et al., 2020), Photovoice interviews (Wang & Burris, 1997), and arts-based elicitation (Bagnoli, 2009). Such research will make valuable incremental contributions.

Meaning Saturation

The concept of meaning saturation was introduced by Hennink et al. (2017) and refers to capturing all of the variance of meaning within a theme (Hennink et al., 2017, 2019). While theme saturation is about theme

Uses

Unlike theme saturation and theoretical saturation, meaning saturation aligns more clearly with commonly used approaches to descriptive theme analysis. As a result, meaning saturation is a useful and widely applicable saturation concept for thematic research with qualitative data.

Minimum Sample Size

24 interviews or eight focus groups (Hennink et al., 2017, 2019; Hennink & Kaiser, 2022).

Next Steps

Meaning saturation has not been the focus of as much methodological research as theme saturation and theoretical saturation. What’s needed next: more conceptual work on defining and measuring meaning saturation and more empirical research on the effects of probing and sampling strategy (such as homogeneous or maximum variability sampling) on meaning saturation. Because meaning saturation is likely the most useful saturation concept for theme analysis, this is where the most research is needed and where the greatest advances are to be made.

Metatheme Saturation

Metatheme analysis was introduced as a type of cross-cultural metaphor analysis (Josephides, 1991), and later developed as a mixed-methods approach that derives overarching themes from the analysis of qualitative data using quantitative semantic network analysis of words or codes (Barnett & Danowski, 1992; Nolan & Ryan, 2000; Schnegg & Bernard, 1996; Wutich et al., 2014). Recent research has established a rigorous qualitative approach to metatheme analysis, which uses qualitative comparisons of themes for identifying and comparing overarching themes from multi-sited or cross-cultural datasets (Beresford, 2021; du Bray et al., 2022; Schuster et al., 2022; SturtzSreetharan et al., 2021; Trainer et al., 2022; Wutich et al., 2021).

Uses

Metatheme saturation supports the identification of minimum sample sizes needed to create a list of the cross-site themes in a study, as well as to identify common within-site variants of each theme.

Minimum Sample Size

20–40 interviews per site. Hagaman and Wutich (2017) found that all metathemes could be identified once within 20 interviews in each site, but that it took as many as 39 interviews to reliably identify metathemes (ensuring that each metatheme occurred at least three times per site) in each of their four cross-cultural research sites.
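The difference between identifying each metatheme once and identifying it reliably (at least three times per site) can be sketched as a simple counting rule. The code below is an illustration under our own assumptions: the function name `interviews_until_repeated`, the `min_count` parameter, and the toy data are hypothetical, and the sketch is not the analysis procedure used by Hagaman and Wutich (2017).

```python
from collections import Counter


def interviews_until_repeated(codes_per_interview, metathemes, min_count=3):
    """Return the 1-based number of interviews (within one site) at which
    every metatheme has appeared in at least `min_count` interviews,
    or None if that point is never reached.
    """
    needed = set(metathemes)
    counts = Counter()
    for count, codes in enumerate(codes_per_interview, start=1):
        # Count each metatheme at most once per interview.
        counts.update(set(codes) & needed)
        if all(counts[m] >= min_count for m in needed):
            return count
    return None


# Hypothetical single-site data with three metathemes: A, B, and C.
site = [{"A", "B"}, {"A"}, {"B", "C"}, {"A", "C"}, {"B"}, {"C"}]

print(interviews_until_repeated(site, {"A", "B", "C"}, min_count=1))  # 3
print(interviews_until_repeated(site, {"A", "B", "C"}, min_count=3))  # 6
```

Running the same function separately for each site, and taking the largest result, gives a per-site estimate under whichever repetition criterion the researcher adopts.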

Next Steps

Empirical research is needed to examine how metatheme saturation varies depending on research topic, number of research sites, data elicitation, and homogeneity/heterogeneity of purposive samples. Hagaman and Wutich (2017) found metatheme saturation was reached in as few as 18 interviews in a more heterogeneous sample, but it took 35–39 interviews to reach metatheme saturation in more homogeneous samples. This finding regarding metatheme saturation in heterogeneous versus homogeneous samples needs replication.

Saturation in Salience

The concept of saturation in salience was introduced by Weller et al. (2018). It is commonly applied in the analysis of free-list data, a deceptively simple method. The analysis of free-list data was developed in cognitive psychology to examine how people hold on to, organize, and retrieve knowledge about things, like plants, animals, and occupations (Bousfield, 1953; Magaña & Norman, 1980) or about their own emotions (Yik & Chen, 2023). It was picked up by, and is a staple of, cognitive anthropology (Borgatti, 1994; Dengah et al., 2021; Romney & D’Andrade, 1964); it is now widely used in ethnobotany, consumer behavior, food science, and marketing.

Uses

Saturation in salience is used to identify the items or themes in a set of qualitative interviews that are the most culturally important to interviewees. Salience can be estimated for any set of qualitative data (e.g., illness narratives, migration histories, etc.), and can be used to identify the terms and topics needed to develop closed-ended survey questions in a mixed-method research design (Weller & Romney, 1988).

Minimum Sample Size

Ten free lists can produce 95% of salient items, if elicitation is exhaustive, with appropriate probing (Weller et al., 2018).
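For readers unfamiliar with how salience is scored in free-list data, the following sketch computes Smith's S, one commonly used salience index that weights each item by how early it appears in each respondent's list. We use it here only as an illustration. It is an assumption on our part, not necessarily the measure used by Weller et al. (2018), and the function name and toy data are hypothetical. The sketch assumes that no item is repeated within a single list.

```python
from collections import defaultdict


def smith_salience(free_lists):
    """Compute Smith's S for every item mentioned in a set of free lists.

    For each list of length L, an item at 1-based rank r contributes
    (L - r + 1) / L. Contributions are summed across lists and divided
    by the total number of lists, so unmentioned items contribute zero.
    """
    totals = defaultdict(float)
    for items in free_lists:
        length = len(items)
        for rank, item in enumerate(items, start=1):
            totals[item] += (length - rank + 1) / length
    n_lists = len(free_lists)
    return {item: total / n_lists for item, total in totals.items()}


# Hypothetical free lists from three respondents asked to name animals.
lists = [
    ["dog", "cat", "horse"],
    ["cat", "dog"],
    ["dog", "fish"],
]

scores = smith_salience(lists)
for item, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{item}: {score:.3f}")
```

Items mentioned early and by many respondents receive scores near 1, while items mentioned late or rarely receive scores near 0. Tracking when the set of high-scoring items stops changing as lists are added is one way to examine saturation in salience.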

Next Steps

More research is needed on how different styles and amounts of probing in various kinds of open-ended interviews (free lists, life histories, etc.) impact when saturation in salience is reached (Brewer, 2002; Tran et al., 2017). Weller et al. (2018) found that extensive probing can yield more themes with fewer interviews and, conversely, saturation can be reached quickly with superficial interviewing using fewer probes. This may lead researchers to conclude falsely that the superficial (small-

Theoretical Saturation

The concept of theoretical saturation originates in grounded theory and is the point when “no additional data” are found to develop the analytic categories’ properties (Glaser & Strauss, 2017 [1967], p. 61). In a grounded theory study, theoretical saturation is achieved through an iterative process of theoretical sampling (new respondents are selected to advance the development of a theory), data collection, coding, comparison, and model building until all concepts and linkages among them are described (Aldiabat & Le Navenec, 2018; Breckenridge & Jones, 2009; Charmaz, 2006; Glaser & Strauss, 2017 [1967]; Morse, 2004; Strauss & Corbin, 1990).

Uses

Theoretical saturation occurs when all of the themes identified are fully understood in all their variants, and inter-relations among themes are established. Theoretical saturation indicates a “stopping point” for sampling but can only be determined through an iterative process of data collection and analysis.

Minimum Sample Size

20–30+ interviews recommended. Theoretical sample size cannot be determined

Next Steps

Procedures for reaching theoretical saturation are extensively documented, though some grounded theorists have argued that sufficiently detailed documentation is lacking (Breckenridge & Jones, 2009; McCrae & Purssell, 2016). There are relatively few empirical studies that unpack the mechanics of reaching theoretical saturation and its implications for sample size adequacy (Butler et al., 2018; Kearney et al., 1995; Saunders et al., 2018; Tuckett, 2004). More empirical and comparative research is needed to determine how researcher expertise and research topic affect theoretical saturation (Morse, 2000; Thomson, 2011).

Sample Size Estimates for 5 Types of Qualitative Data Analysis

Five Types of Qualitative Data Analysis: Empirical Recommendations for Minimal Sample Sizes.

Reflexive Thematic Analysis

Reflexive thematic analysis is an iterative process to identify themes in data and reflexively interpret thematic meanings (Braun & Clarke, 2022a, 2022b). The approach stems from constructivism: It assumes that the world, as we perceive it, is a construction of human meaning and experiences that can never be objectively known. In other words, themes do not exist independently from the researcher who is identifying and interpreting them—and each researcher’s knowledge, biases, and positionality become central to their identification and interpretation of themes.

Uses

The analytic purpose of reflexive thematic analysis is to represent “situated and contextual interpretations of data” rather than to assume that inherent meaning can be discovered in a data set (Braun & Clarke, 2021).

Minimum Sample Size

None. Braun and Clarke (2022a) argue it is impossible to estimate sample size

Next Steps

Reflexive thematic analysis is a widely used and cited method, and many researchers are affected by the absence of techniques to estimate sample size and to assess sample quality and adequacy. This puts these researchers at a disadvantage in competitions for scientific grant funding and during peer review in scientific journals and academic institutions. The PRICE model (Naeem et al., 2024) is a good first step toward theorizing the role of saturation in thematic analysis. To move forward, meta-analysis of reflexive thematic analysis studies could establish the “stopping points” at which researchers deemed sample sizes to be adequate.

Ethnography

Ethnography is an umbrella term for the many methods used by social scientists to analyze and describe a culture (Ballestero & Winthereik, 2021; Ruth et al., 2022). While there is little consensus about what counts (or doesn’t count) as an ethnographic method, most writers on the subject agree on the importance of interviewing and participant-observation, a time-intensive data collection method in which researchers experience the phenomena under study. Here we follow Bernard (1994, 2017) in taking a big tent, mixed-methods approach to ethnography, which encompasses qualitative and quantitative approaches.

Uses

Ethnography is a research approach for analyzing and describing culture, defined as shared norms, knowledge, and behaviors. Different approaches focus on describing cultural components and areas of consensus or dissensus.

Minimum Sample Size

Guidance on sample sizes for studies based on ethnography comes from recent work by DiStefano and Yang (2024) on saturation in a mixed-methods ethnographic study with observations and interviews, and from 40 years of research on the cultural consensus model (Romney et al., 1986).

DiStefano and Yang (2024) found that it took 50 data documents (field notes from 33 observations and transcripts from 17 interviews) to identify 80% of the 2,273 themes present in their study. To identify 90% of the themes, it took 81 data documents (field notes from 55 observations and transcripts from 26 interviews).

The consensus model is based on the observation that the more people agree about the content of a topic, the fewer people you need to understand and describe that topic. So, to discover the names of the days of the week in English, a sample size of four respondents is enough, but for research on lesser known or more disputed cultural topics, a larger sample size would be necessary. Overall, the cultural consensus literature indicates that 20–30 systematic interviews on a single cultural topic should be sufficient to adequately understand and describe that topic (Weller, 2007; Weller & Romney, 1988). Importantly, the cultural consensus method applies to any set of structured questions, such as questionnaire surveys and other formal interviews. It does not apply to free-lists, pile-sorts, or unstructured interviewing.
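The intuition that higher agreement requires fewer respondents can be illustrated with the Spearman-Brown formula, which relates the reliability of a pooled set of responses to the number of respondents and their average pairwise agreement. The sketch below is an illustration under our own assumptions, not a reproduction of the calculations in the cultural consensus literature cited above. The function names and the input values (average agreement of 0.25 or 0.81, target reliability of 0.90) are hypothetical.

```python
from math import ceil


def pooled_reliability(n, avg_agreement):
    """Spearman-Brown reliability of responses pooled across n respondents,
    given the average pairwise agreement (correlation) between respondents."""
    return n * avg_agreement / (1 + (n - 1) * avg_agreement)


def respondents_needed(avg_agreement, target_reliability=0.90):
    """Smallest n whose pooled reliability reaches the target.

    Solves the Spearman-Brown formula for n. A tiny tolerance guards
    against floating-point noise when the exact answer is a whole number.
    """
    if not 0 < avg_agreement < 1 or not 0 < target_reliability < 1:
        raise ValueError("agreement and target must lie strictly between 0 and 1")
    exact = (target_reliability * (1 - avg_agreement)) / (
        avg_agreement * (1 - target_reliability)
    )
    return ceil(exact - 1e-9)


# Hypothetical inputs: a contested topic versus a widely agreed-upon topic.
print(respondents_needed(0.25))  # 27
print(respondents_needed(0.81))  # 3
```

Under these assumed values, a topic with low average agreement needs roughly nine times as many respondents as a topic with high agreement to reach the same pooled reliability.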

Next Steps

Naroll (1970) found that anthropologists who stayed in the field for at least a year were more likely to report on sensitive issues like witchcraft, sexuality, political feuds, and so forth, and that anthropologists who spoke the local language were more likely to report data about witchcraft than were those who didn’t. Empirical research is needed to confirm this finding on sensitive topics and to determine adequate sample sizes for a wider range of ethnographic methods—e.g., the number of key informants, unstructured interviews, or time in participant-observation necessary to describe a given cultural phenomenon. Meta-analysis would be a good first step for researchers looking to contribute to the literature on minimum sample sizes for ethnography.

Schema Analysis

Schema analysis is a method for identifying mental models, cognitive simplifications, or cultural scripts people use to understand the world (D’Andrade, 1991, 1995; Rice, 1980; Rumelhart, 1984; Schank & Abelson, 1977; Strauss & Quinn, 1997). This approach uses methods derived from anthropology, psychology, linguistics, and sociology. Quinn’s foundational work detailed the mechanics of schema analysis, which are designed to extract the underlying cultural rules for behavior, timing, order, and the like (Quinn, 1987, 1997, 2005a, 2005b, 2018).

Uses

Schema analysis is a research approach for identifying mental or cultural models.

Minimum Sample Size

There are no explicit guidelines, but Quinn (2005b, p. 2) noted that sample sizes in schema analysis tend to be modest, both in terms of interviews and discourse segments. D’Andrade (2005) and Dengah et al. (2021) argue that Weller’s work on sample sizes for the cultural consensus model (

Next Steps

Empirical research is needed to verify that sample sizes used for cultural consensus analysis are appropriate for schema analysis, given significant differences in the techniques used in interviewing and analysis. Meta-analysis could be a starting place, since most schema analysts report their sample sizes and sampling techniques.

Classical Content Analysis

Classical content analysis is an analytic tradition in which researchers systematically code qualitative data and analyze statistically the case-by-code matrix (Krippendorff, 2013). The method has a long history, going back to Wilcox’s (1900) work to determine the space (in column inches) devoted to violence in newspapers.

Uses

Classical content analysis is a systematic approach for coding and comparing qualitative data.

Minimum Sample Size

Unlike the other kinds of research reviewed here, many content analysts aim to generalize their findings to a population. This requires that analysis be done on data collected from a random sample or a complete census of a corpus of data (Krippendorff, 2013). For such samples, power analysis is the appropriate technique for determining minimum sample size. Weller’s (2015) accessible approach enables non-statisticians to calculate sample size using a power analysis.
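As an illustration of what a power analysis involves for coded data, the sketch below estimates the number of randomly sampled documents needed per group to detect a difference in how often a code appears in two groups. It uses a standard normal-approximation formula for comparing two independent proportions. This is our assumption for illustration and is not Weller’s (2015) procedure. The function name, the default significance level (0.05), the default power (0.80), and the example proportions are all hypothetical.

```python
from math import ceil
from statistics import NormalDist


def n_per_group_two_proportions(p1, p2, alpha=0.05, power=0.80):
    """Approximate sample size per group for a two-sided test of the
    difference between two independent proportions (normal approximation,
    unpooled variance).

    p1, p2: expected proportions of documents carrying the code in each group.
    """
    if p1 == p2:
        raise ValueError("p1 and p2 must differ to define an effect size")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value, two-sided
    z_beta = NormalDist().inv_cdf(power)           # quantile for desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p1 - p2) ** 2)


# Hypothetical example: a code expected in 50% of documents from one
# group of sources and 30% of documents from another.
print(n_per_group_two_proportions(0.50, 0.30))  # 91
```

Smaller expected differences between groups require substantially larger samples, which is why an estimate of the expected effect, for example from a pilot coding exercise, is needed before the calculation can be made.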

Next Steps

None. The literature provides sufficient guidance for scholars working in a classical content analysis tradition. This is largely settled science.

Qualitative Content Analysis

Originating in communications (Mayring, 2000, 2019, 2022), qualitative content analysis is a rule-based procedural approach with reliability checks. It was developed originally as a more interpretive and contextual approach to classical content analysis, though many variants have evolved (e.g., Hsieh & Shannon, 2005; Kuckartz, 2014).

Uses

Qualitative content analysis is a systematic approach to categorizing and interpreting themes and contexts in qualitative data.

Minimum Sample Size

Sample size for qualitative content analyses—including within-case and cross-case comparisons—can be calculated using an information power approach (Malterud et al., 2016, 2021). This is a set of guidelines for considering the breadth of research aims, size of the study population, availability of prior theory to help define the research, quality of data available, and the overall analysis strategy. Thus, larger samples are needed for broader aims, larger study populations, more exploratory projects, lower-quality data, and more cross-case designs. For example, a comparison of men and women would require double the sample size of a study of women or men alone.

Next Steps

The information power approach calls for a uniquely qualitative approach to power analysis (Onwuegbuzie & Leech, 2007), but there are not yet clear guidelines for how to calculate target samples. More conceptual and empirical work is needed.

Discussion and Conclusions

Our goal here has been to take stock of current research on minimum sample sizes for 10 types of qualitative data analysis: 5 types of saturation and 5 common methods. We find a trend toward over-reliance on theme saturation (see Figure 1) and inadequate attention to the literature on meaning saturation, metatheme saturation, theoretical saturation, and saturation in salience. We have also identified three general directions for continued research.

Figure 1. A flow chart to help researchers determine sample size estimates for iterative, multi-stage research designs with qualitative data.

First, we need more conceptual work to better define and operationalize theme saturation, meaning saturation, saturation in salience, and metatheme saturation, and more empirical research on all kinds of saturation. For example, on the conceptual level: What are the implications of defining saturation exhaustively versus prioritizing attention to the most salient, common, or repeated meanings? On the empirical level: What are the implications of setting theme saturation at 80% versus 90% of themes or metatheme saturation at 3 versus 5 repetitions of meaning?

Second, more meta-analysis is needed to amass empirical guidance for sample sizes used in theoretical saturation (for grounded theory), reflexive thematic analysis, ethnography, and schema analysis. While these methods have few or no techniques available to empirically test sample size adequacy, meta-analysis of existing studies with adequate sample sizes helps identify priorities for research.

Third, more work is needed on how different sample sizes are best suited for different types of data (e.g., free lists vs. narratives vs. field notes), different research questions, and different stages of analysis.

There is not a single answer to “how many interviews are enough?” … other than: “it depends” (Baker & Edwards, 2012). It depends on the number and prevalence of themes in the population, the percentage of total themes a researcher needs to identify, and the way respondents are selected (Lowe et al., 2018; van Rijnsoever, 2017; Weller et al., 2018). It depends on how much probing and prompting is done, and with what skill (Fern, 1982; Tran et al., 2017; Weller et al., 2018). And it depends on the type and quality of qualitative data being analyzed, the analytical goal of the research, and the epistemological assumptions of the researcher(s) (see Figure 1) (Morse, 1995, 2000). Empirical work that further clarifies these things will support the continued use of qualitative data in social science.

Footnotes

Acknowledgements

We thank our students (2022-2024) in the NSF Cultural Anthropology Methods Program, ASU's Institute for Social Science Research workshops on Qualitative Data Analysis: The Basics, Grounded Theory, and Content Analysis; ASU course ASB 500 (Qualitative Data Analysis); and SJSU course ANTH 234 (Advanced Research Methods: Qualitative Data Analysis) for their help piloting earlier versions of Figure 1 and workshopping these arguments.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded under the US National Science Foundation Cultural Anthropology Program grant (Award SBE-2017491) to the NSF Cultural Anthropology Methods Program and an NSF CAREER grant to Melissa Beresford (Award BCS-2143766).