Abstract

There are many different methods and approaches that can be applied to the evaluation of complex programmes. This paper describes the use of the Most Significant Change (MSC) participatory technique to monitor and evaluate programmatic effects. MSC is a form of monitoring because it occurs throughout the programme cycle and provides information to manage the programme. It is also a form of evaluation because it provides stories from which a programme's overall impact can be assessed. However, MSC, a participatory evaluation technique using qualitative approaches, is neglected by many researchers. We hope this study will convince relevant funders and evaluators of the value of the MSC technique and its application. This paper offers step-by-step guidelines on how to use the MSC technique when evaluating a large-scale intervention, covering the perspectives of different beneficiaries within a limited period. The MSC process involves purposively selecting beneficiaries and collecting their Most Significant (MS) stories, which are then systematically analysed by designated stakeholders and/or implementing partners and selected through internal vetting followed by an external process involving beneficiaries and stakeholders. The central question focuses on changes, captured in the form of stories: ‘Who did what?’, ‘When did the change occur?’, and ‘What was the process?’ Additionally, the technique seeks feedback explaining why a particular story was selected as MS and how the selection process was organised. It further attempts to verify the validity, significance, relevance, and sustainability of the changes brought by the programme, and their impact on marginalized or Gender Equality and Social Inclusion (GESI) groups. Finally, the technique verifies the MS story by triangulating comprehensive notes and recordings.

Keywords

Introduction

Most Significant Change (MSC) is a monitoring and evaluation technique that uses participatory qualitative approaches to assess programmatic outputs. MSC serves as a form of monitoring throughout the programme cycle, offering key information that senior staff need to manage the programme, and it contributes to evaluation by assessing impact and outcomes holistically (Limato et al., 2018). MSC is participatory: multiple stakeholders are involved in deciding which types of change to record and in systematically analysing the relevant data.

MSC involves collecting and selectively choosing stories that report changes experienced by participants. These stories focus on who did what, when, and why, and on the reasons why the event was significant, from qualitative and participatory perspectives (Serrat, 2017). Rick Davies introduced MSC in 1995 to address the challenges of monitoring and evaluating a complex participatory rural development project in Bangladesh (Willetts & Crawford, 2007). Since then, it has been used in several countries for monitoring and evaluation purposes (Waters, James & Darby, 2011). Unlike traditional evaluation methods, MSC does not rely on pre-defined, measurable indicators. Instead, it uses personal stories that illustrate the changes that have occurred in particular issues after the programme was implemented (Davies & Dart, 2005). Before implementing MSC, the project implementer defines ‘domains of change’ to categorise the changes expected from an intervention. These domains can be supplemented by an ‘open window’ domain that captures changes not fitting into any of the pre-defined categories. Because it emphasises personal stories rather than pre-defined indicators, MSC is often referred to as a ‘monitoring technique without indicators’.

Beyond the significance of individual events, the technique supports the monitoring and evaluation of project and programme performance by helping to shift work away from less valued directions and towards more valued ones. This includes clarifying what the intervention aims to achieve and how to achieve more of it. According to Davies and Dart (2005), MSC encourages organizational learning, provides a rich description of changes, and captures unexpected changes as well as indirect and process outcomes that indicator-based evaluations cannot measure. It is particularly suitable for programmes for which existing monitoring and evaluation tools may not provide sufficient data to understand project impacts. Additionally, the MSC technique is well suited to monitoring that also focuses on learning about particular behaviours, such as Social Behavioural Change (SBC). MSC is therefore an appropriate technique for researchers seeking to assess the effects of interventions on people's lives, particularly in the context of SBC. It also helps the project's implementing partners to improve their capacity to capture and analyse the impact of their work.

The programme evaluation process using the MSC technique formally begins with collecting the change stories reported by participants and transcribing and translating them. It continues with the systematic preparation of a story page for each collected story, internal vetting of the stories, and selection of the MSC story, and it ends with revising the MSC system. Throughout, MSC elicits stories from stakeholders about what most significant change has occurred as a result of an initiative and why participants think that (positive or negative) change occurred. By collecting stories describing the changes that intervention beneficiaries experience, the technique helps to explain how the intervention works, however complex it is, informing the evaluation and making subsequent interventions more relevant to the local context (Ørnemark, 2016). All stories are internally verified or shortlisted by stakeholders directly involved in the intervention, such as donor agencies and implementing partners, and only the shortlisted stories are presented to management, a selection committee, or an MSC selection workshop for external assessment to identify the MSC stories.

MSC is a flexible evaluation approach that can be modified from the initial design phase through to project implementation (Davies & Dart, 2005). The approach captures changes in both (a) ‘pre-defined change domains’ and (b) an ‘open window’ change domain for any other, perhaps unexpected, changes that appear after project implementation. There is no obligation to apply every step of MSC in full; the steps can be modified and contextualised if and when the programme requires. It is an innovative qualitative research approach that can be scaled down and adjusted to suit project needs. The MSC technique is also useful for large groups working in a short timeframe, as an alternative to having stories generated and selected by small, discrete groups of people. MSC can also be used in strategic planning to achieve a higher degree of ownership, which ultimately produces a plan that is more realistic and grounded than the average strategic plan. Likewise, MSC can be used as a participatory component of a summative evaluation involving an external evaluator, who considers the evidence and judges the extent to which the programme is worthwhile and how it could be improved. Ultimately, the MSC approach depends on evaluators using their best judgement, based to some degree on their values, to assess the merit and worth of a programme.

The MSC stories are analyzed and selected at the selection workshop by a panel of participants, including project implementers, policymakers, stakeholders, and other experts. The panel selects the stories that they consider to represent the most significant change and gives reasons why those stories were selected. After the MSC story has been selected, the approach requires feedback involving all stakeholders, such as project beneficiaries in the community, implementers, and policymakers; this feedback is crucial for verifying the story. At this stage, beneficiaries can broaden their view by reflecting on why project implementers and policymakers regard the story as MS. Feedback is needed to: (i) let beneficiaries know that their stories are read and valued by the stakeholders, (ii) communicate to them the reasons behind the panel's judgements, and (iii) facilitate change.

Willetts and Crawford (2007) identified numerous crucial lessons in a pilot evaluation of the MSC technique. They further reported that their application of MSC faced challenges at every stage of the monitoring and evaluation cycle, i.e. story/data collection, analysis, and the MSC story selection process. Currently, the approach is widely used by international development organisations (Dart & Davies, 2003), and it has been applied in sectors such as international development, healthcare, education, and community development (Davies & Dart, 2005). Davies and Dart further emphasised that the technique offers high returns and that there is a growing need to explore how it can be creatively combined with other monitoring and evaluation techniques and approaches to assess programmatic effects.

The MSC technique is rarely applied to evaluate programmes in Nepal, as suggested by its notable absence from peer-reviewed journals (Limato et al., 2018). The few organisations that have applied the MSC approach to assess programmatic effects in Nepal do not appear to have published their work academically. As Ohkubo et al. (2022) noted, practical examples of the MSC technique being used for ongoing monitoring and evaluation are currently limited worldwide. This knowledge gap calls for comprehensive exploration and illustration, not only in Nepal but globally. In response, our study seeks to fill this gap by providing detailed, tangible instances of the application of the MSC technique in ongoing monitoring and evaluation.

This study therefore provides comprehensive, step-by-step guidance on employing the MSC technique for monitoring and evaluation. The primary focus is on eliciting the perspectives of selected beneficiaries within a constrained timeframe, using a qualitative approach and emphasising the collection of success stories.

Research Methods

MSC is a participatory monitoring and evaluation approach that primarily uses qualitative methods to assess programmatic effects. Monitoring is an ongoing process of collecting information for programme management, focusing on activities and outputs during implementation. Evaluation, on the other hand, is a less frequent process that collects information to assess outcomes and impacts towards the end of the programme. Both monitoring and evaluation involve judgements about achievements, but evaluation takes a broader view of the entire programme and covers a longer period. Evaluation should be integral to each programme and planned from the beginning. MSC can help to track stories of change related to issues that are not easily quantifiable, such as ‘capacity strengthening’ or ‘gender equity’ (Institute of Development Studies, 2023).

Sampling Procedure

Like most qualitative research, the MSC technique uses purposive sampling. It samples selectively to collect stories about exceptional, particularly successful, circumstances rather than stories about the average condition of participants, because the evaluator aims to obtain the specific stories that represent the most significant change after the project has been implemented. Researchers therefore carefully select participants who hold unique and rich information about the study issue. The sample size is determined by the principle of data saturation: the point at which researchers judge that additional data will only repeat what is already there without adding new insights (Sharma et al., 2021). The sampling focuses on cases with rich, successful stories because they are special in some way; this is how the approach captures significant change (SC) stories, i.e. those from which the most can be learnt. It is advisable to select participants who are directly involved in the programme activities. In most cases, researchers purposively select participants/storytellers whom they think may have benefited from the intervention (Tonkin et al., 2021). Where external evaluators are involved, it is essential that they consult project implementers before selecting participants.
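The data saturation principle can be made explicit as a simple stopping rule. The sketch below is a minimal illustration and not part of the MSC technique itself: it assumes that each completed interview has already been coded into a set of themes, and the function name `saturation_point`, the example themes, and the default window of three consecutive interviews without a new theme are hypothetical choices.

```python
from __future__ import annotations


def saturation_point(coded_interviews: list[set[str]], window: int = 3) -> int | None:
    """Return how many interviews had been conducted when saturation was reached.

    Saturation is (hypothetically) defined here as `window` consecutive
    interviews that add no theme not already seen. Returns None if the
    rule is never met, i.e. more interviews may be needed.
    """
    seen: set[str] = set()
    runs_without_new = 0
    for count, themes in enumerate(coded_interviews, start=1):
        if themes - seen:
            runs_without_new = 0  # this interview contributed a new theme
        else:
            runs_without_new += 1
        seen |= themes
        if runs_without_new >= window:
            return count
    return None


interviews = [
    {"school attendance", "family income"},
    {"family income", "girls' mobility"},
    {"girls' mobility"},
    {"school attendance"},
    {"family income", "girls' mobility"},
]
print(saturation_point(interviews))  # 5
```

The window size is a judgement call: a larger window makes the rule more conservative, which mirrors the researcher's own decision that further data would only repeat what is already known.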

Steps of the Most Significant Change (MSC) Technique

Davies proposed ten steps for the MSC technique, but researchers and stakeholders are under no obligation to use all ten. Each MSC evaluation can be contextualised and modified as the project requires. Before elaborating on each step, it is worth noting that steps (4) collection of significant change stories, (5) story management process, (6) story development process, and (7) story selection process are fundamental, and the remaining steps are discretionary. Figure 1 gives an overview of what full implementation of the MSC technique looks like.

Figure 1. Most significant change (MSC) participatory monitoring and evaluation technique.

Step 1. Introducing MSC Technique and Fostering Interest

The first step generally involves introducing a range of stakeholders to the MSC technique and fostering interest in, and commitment to, using it to assess a programme's impact. Initially, people may be skeptical about the validity of the MSC approach and worried that it will cost too much time and money. Overcoming these doubts requires an enthusiastic researcher to champion the technique. Researchers may want to present stories from other evaluations and show examples of previous reports. Likewise, it is essential to make clear the purpose of the MSC technique, its process, and the role it will play in the organisations that will fund and implement the programme.

Step 2. Defining the Domains of Change

The second step is to define the ‘domains of change’, such as changes in the quality of people's lives, changes in people's participation in development activities, changes in the sustainability of people's organisations and activities (Reid & Reid, 2020), and any other changes that appear after programme implementation (Davies & Dart, 2005). Defining domains of change guides researchers on the kinds of change to look for when collecting stories, without their becoming too focused on one perspective. Domains also make it possible to track whether the project is making progress towards its stated objectives. Selbaraj (2021) suggested that three to five domains of change is good practice; however, each organisation can decide the number according to its requirements. The domains themselves vary with the nature and intensity of the project.
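Once the domains are agreed, every collected story has to be filed under one of them. The sketch below is a minimal illustration, assuming that each storyteller or story collector proposes a domain label for their story: any story whose label matches none of the pre-defined domains falls into the ‘open window’ domain (Davies & Dart, 2005) rather than being discarded, and a warning flags domain sets outside the three-to-five range suggested by Selbaraj (2021). The domain labels, story identifiers, and the function name `group_by_domain` are hypothetical.

```python
from __future__ import annotations

import warnings
from collections import defaultdict

OPEN_WINDOW = "open window"


def group_by_domain(
    stories: list[tuple[str, str]], predefined: list[str]
) -> dict[str, list[str]]:
    """Group (story_id, proposed_domain) pairs by domain of change.

    A story whose proposed domain is not one of the pre-defined domains is
    placed in the 'open window' domain instead of being discarded.
    """
    if not 3 <= len(predefined) <= 5:
        # Three to five domains is suggested good practice, not a hard rule.
        warnings.warn(f"{len(predefined)} domains defined; 3-5 is usually suggested")
    groups: dict[str, list[str]] = defaultdict(list)
    for story_id, domain in stories:
        groups[domain if domain in predefined else OPEN_WINDOW].append(story_id)
    return dict(groups)


domains = [
    "quality of people's lives",
    "participation in development activities",
    "sustainability of people's organisations and activities",
]
stories = [
    ("S01", "quality of people's lives"),
    ("S02", "change in local policy"),  # not a pre-defined domain
    ("S03", "participation in development activities"),
]
print(group_by_domain(stories, domains))
```

A warning rather than an error is used for the domain count because the range is a recommendation that organisations may depart from.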

Along with these, organisations can define an ‘open window’ domain that allows participants to report significant changes that do not fit into any of the pre-defined domains (Davies & Dart, 2005). The open window domain gives story collectors the flexibility to focus on what is relevant in their context. Domains of change may concern, for example, changes in the lives of individuals, in the sustainability of people's institutions, in organisational performance, or in whole communities or policy.

Step 3. Defining the Reporting Period

The third step is to decide how frequently to monitor changes in the pre-defined domains. Monitoring involves the periodic collection of information, but its frequency varies across programmes and organisations and depends partly on the nature of the project. The most common frequency has probably been three-monthly, coinciding with the quarterly reporting required by many organisations and funding bodies. Infrequent reporting (e.g. yearly) runs the risk of staff and project participants forgetting how the MSC process works and why it is being used, whereas with more frequent reporting, participants are likely to learn more quickly how best to use the process. Although an annual reporting cycle may be appropriate in certain contexts, it makes assessing progress towards project targets and outcomes slow.

Step 4. Collection of Significant Change Stories

The fourth step, the collection of SC stories, is the central part of MSC. Researchers ask participants open-ended questions, such as when the SC happened, how they judge the event, why they consider it a significant change, and whether it changed the quality of people's lives (Limato et al., 2018). In this step, researchers approach people who are directly involved in the programme, such as participants and field workers, to collect the dozens of SC stories prescribed by the project's implementing organisation or partner (Lennie, 2011).

It is considered good practice to collect stories in the local language. For smooth data collection, two researchers are assigned: one as moderator and the other as note-taker. Wherever possible, the researchers should use recording devices to capture the storytellers' own words verbatim, while the note-taker makes comprehensive handwritten notes during the interview and the moderator asks the questions.

Before data collection begins, obtaining written or oral consent from each participant, indicating that they are willing to participate voluntarily, is an important ethical requirement (Sharma & Adhikari, 2022a). Consent is required from adults (as legally defined in the country), and permission from parents or legal guardians is required for participants who are not deemed adults. If a guardian provides consent on someone else's behalf, that person should still be asked to give voluntary assent (Sharma et al., 2019, 2024). When seeking consent, permission, and assent, researchers need to explain the purpose of the study and the advantages and disadvantages of participating in it. It is better to hold the interview in a comfortable environment where participants feel free to narrate their change stories. The venue should offer privacy: the conversation may be visible to others (non-participants or third parties), but it should not be interrupted by them (Sharma & Adhikari, 2022b). In addition, researchers should avoid asking for unnecessary personal or sensitive information and seek only the essential, relevant information that the study requires.

Step 5. Data/Story Management Process

Initially, each collected story should be transcribed word for word, using the recorded interview and the comprehensive notes made by the note-taker. Ideally, transcription should be done daily in the field. Story management then requires translating the transcription into the language of the final study report. The translator should be proficient in the language in which the evaluation report will be written and have in-depth knowledge of the subject matter (Pitchforth & van Teijlingen, 2005). In this step, senior researchers triangulate the translations with the recorded interviews and notes to ensure internal validity. Each recording, set of notes, transcription, and translation needs to be kept safe and secure so that it can be used later to authenticate and verify the SC stories.

Step 6. Data/Story Development Process

The sixth step of the MSC technique is to develop one-page stories from all the (translated) stories. Following each interview, researchers should summarise the MSC story on a single page. Each story, drafted following the format in Figure 2, should have a beginning, a middle, and an end, plus an additional reasoning section (Lennie, 2011). The reasoning section explains why the storyteller believes the story represents a SC; stories are considered SC from the participant's perspective. The MSC technique does not prescribe specific domains of change. However, the domains used in most project monitoring and evaluation, as referred to above, are changes in the quality of lives, in participation in development activities, and in the sustainability of organisations and activities (Serrat, 2017). Besides the pre-defined domains, an open window domain can be used to capture unexpected changes resulting from project implementation.

Figure 2. Story development process.

Step 7. Internal Vetting and MSC Story Selection Workshop

Immediately after the researchers complete the story development process, project stakeholders and implementing partners begin an internal vetting (review) of all the one-page stories. The aim of internal vetting is to assess the content, redundancy, and presentation of the stories. In doing so, the evaluators (stakeholders, project implementers, and community members directly involved in the intervention) identify the stories that highlight changes in line with the programme objectives and bring them into a single assessment process. Approximately half of the stories are then shortlisted for external assessment in a story selection (dissemination) workshop (Limato et al., 2018). During internal vetting, it is important to select stories from various categories. Depending on the nature of the intervention, these categories may include changes in the community's perception of the study issues, reduced gender-discriminatory behaviour, changes in livelihoods, increased job opportunities, and so on.
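Internal vetting combines a presentation check on each one-page story with a shortlist that keeps roughly half of the stories while still covering every category. The sketch below is a minimal illustration under stated assumptions: each story page records a category and a preliminary vetting score agreed by the internal reviewers (both hypothetical fields), the completeness check uses the beginning/middle/end/reasoning format described for the story development step (Lennie, 2011), and the default fraction of 0.5 reflects the ‘approximately half’ reported by Limato et al. (2018). The class and function names are illustrative.

```python
from __future__ import annotations

import math
from collections import defaultdict
from dataclasses import dataclass

SECTIONS = ("beginning", "middle", "end", "reasoning")


@dataclass
class StoryPage:
    story_id: str
    category: str       # e.g. "livelihood", "gender discrimination"
    beginning: str      # situation before the change
    middle: str         # what happened: who did what, and when
    end: str            # situation after the change
    reasoning: str      # why the storyteller considers the change significant
    vetting_score: int  # hypothetical preliminary score from internal reviewers

    def missing_sections(self) -> list[str]:
        return [name for name in SECTIONS if not getattr(self, name).strip()]


def shortlist(pages: list[StoryPage], fraction: float = 0.5) -> list[str]:
    """Keep about `fraction` of the complete stories from every category."""
    by_category: dict[str, list[StoryPage]] = defaultdict(list)
    for page in pages:
        if not page.missing_sections():  # incomplete pages go back for revision
            by_category[page.category].append(page)
    selected: list[str] = []
    for category_pages in by_category.values():
        # Highest score first; story_id breaks ties reproducibly.
        category_pages.sort(key=lambda p: (-p.vetting_score, p.story_id))
        keep = max(1, math.ceil(len(category_pages) * fraction))
        selected.extend(p.story_id for p in category_pages[:keep])
    return selected


pages = [
    StoryPage("S01", "livelihood", "before", "events", "after", "why", 4),
    StoryPage("S02", "livelihood", "before", "events", "after", "why", 2),
    StoryPage("S03", "livelihood", "before", "events", "after", "why", 5),
    StoryPage("S04", "gender discrimination", "before", "events", "after", "why", 3),
    StoryPage("S05", "gender discrimination", "before", "events", "after", "", 5),
]
print(shortlist(pages))  # ['S03', 'S01', 'S04']
```

Keeping at least one story per category is the design choice that enforces the requirement to select stories from various categories; S05 is excluded here only because its reasoning section is empty.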

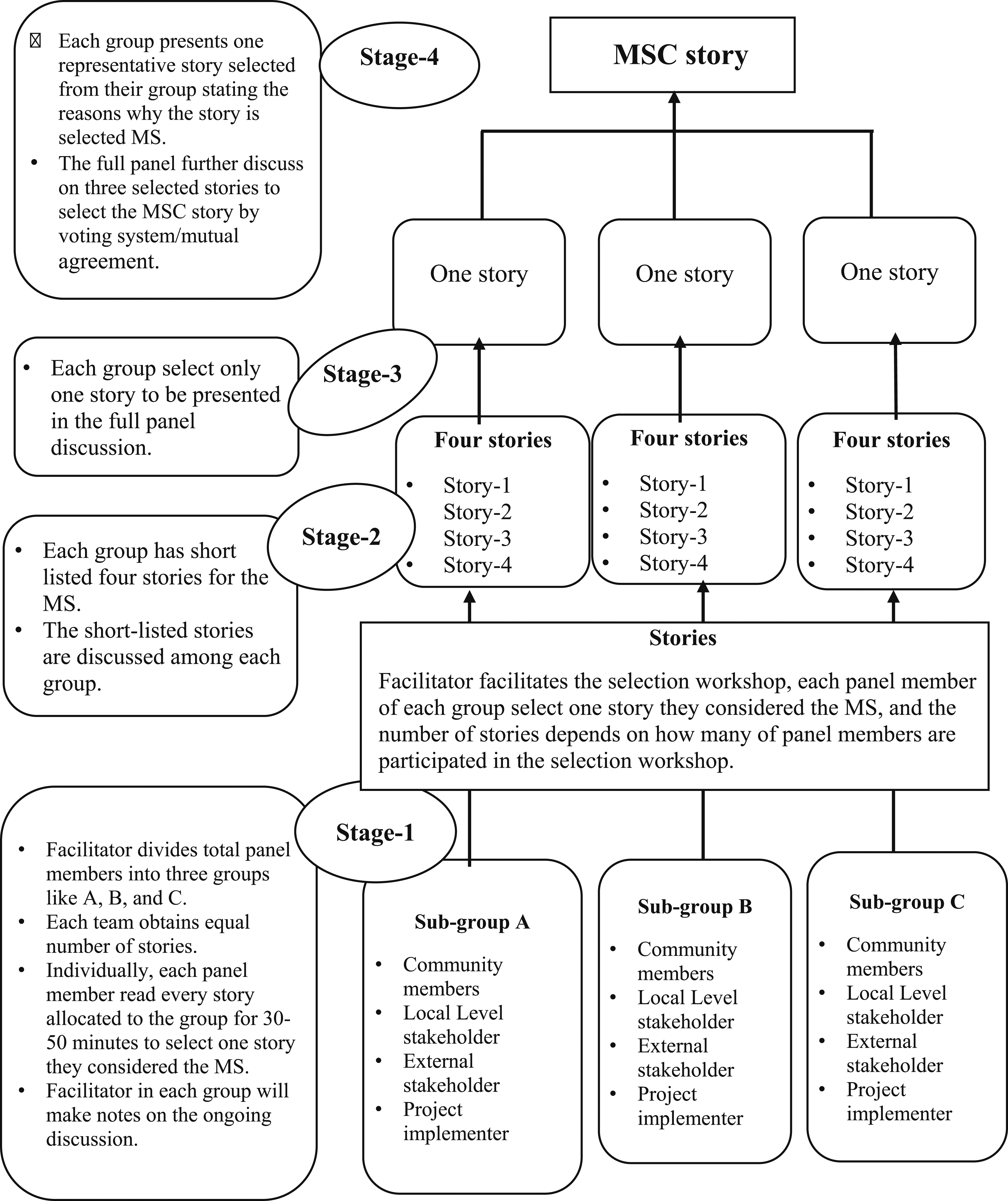

Next, a story selection workshop is held with project beneficiaries, community members, local and external stakeholders, project implementing partners, and senior researchers. These people are invited as members of a story selection panel to systematically review the stories that reflect SC against a set of prescribed criteria. All panel members therefore need to be briefed on the step-by-step MSC story selection process and on their roles and responsibilities before the workshop starts. Panel members are divided into sub-groups based on their expertise and involvement in the issues. When forming the sub-groups, evaluators need to pay attention to power and hierarchy so as to minimise the effect of power dynamics on group decision-making; for instance, each sub-group might consist of a community member, a local stakeholder, an external stakeholder, a project implementer, and other subject experts, so that it represents the workshop as a whole. Once each panel member has been briefed on their roles and responsibilities, the stories shortlisted during internal vetting are distributed equally among the sub-groups for external assessment.
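The workshop mechanics described here and in the scoring procedure that follows (mixed sub-groups, an equal share of shortlisted stories for each sub-group, and anonymous 1 to 5 scores combined within each sub-group) can be sketched as below. This is a minimal illustration with several assumptions: members are spread across sub-groups in round-robin order by role, stories are dealt out in the same way, and a sub-group "combines" scores by summing every member's scores across all criteria. The source does not specify the combination rule, and the role names, criterion keys, and function names are hypothetical.

```python
from __future__ import annotations

from collections import defaultdict

CRITERIA = ("validity", "significance", "sustainability", "gesi")


def form_subgroups(members_by_role: dict[str, list[str]], n_groups: int) -> list[list[str]]:
    """Spread members of each role across sub-groups so no group is dominated by one role."""
    groups: list[list[str]] = [[] for _ in range(n_groups)]
    slot = 0
    for members in members_by_role.values():
        for member in members:
            groups[slot % n_groups].append(member)
            slot += 1
    return groups


def distribute(story_ids: list[str], n_groups: int) -> list[list[str]]:
    """Deal shortlisted stories out to sub-groups as evenly as possible."""
    return [story_ids[g::n_groups] for g in range(n_groups)]


def combine_scores(score_sheets: list[dict[str, dict[str, int]]]) -> list[tuple[str, int]]:
    """Sum anonymous sheets ({story_id: {criterion: score}}) and rank the stories."""
    totals: dict[str, int] = defaultdict(int)
    for sheet in score_sheets:
        for story_id, scores in sheet.items():
            for criterion in CRITERIA:
                score = scores[criterion]
                if not 1 <= score <= 5:
                    raise ValueError(f"{story_id}/{criterion}: score {score} outside 1-5")
                totals[story_id] += score
    # Highest total first; equal totals are ordered by story_id only for
    # reproducibility, and the panel should still discuss any ties.
    return sorted(totals.items(), key=lambda item: (-item[1], item[0]))


roles = {
    "community": ["C1", "C2"],
    "implementer": ["P1", "P2"],
    "external stakeholder": ["E1", "E2"],
}
print(form_subgroups(roles, 2))  # [['C1', 'P1', 'E1'], ['C2', 'P2', 'E2']]
print(distribute(["S03", "S01", "S04", "S07", "S09"], 2))  # [['S03', 'S04', 'S09'], ['S01', 'S07']]
sheets = [  # two anonymous sheets from one sub-group
    {"S03": dict(validity=4, significance=4, sustainability=4, gesi=4),
     "S04": dict(validity=3, significance=3, sustainability=3, gesi=3)},
    {"S03": dict(validity=5, significance=5, sustainability=4, gesi=4),
     "S04": dict(validity=5, significance=5, sustainability=5, gesi=5)},
]
print(combine_scores(sheets))  # [('S03', 34), ('S04', 32)]
```

Summation weights all criteria equally; a panel that considers, say, GESI more important could weight it, but that would be a local design decision rather than part of MSC.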

Next, it is important to explain the story scoring criteria clearly. These include the validity of the change, the significance of the change, the sustainability of the change, and changes among vulnerable/marginalized or Gender Equality and Social Inclusion (GESI) groups, a criterion that considers the unequal power relations and inequalities experienced by individuals in terms of social identity, gender, location, (dis)ability, wealth, education, age, caste/ethnicity, race, and sexuality. Each participant in each sub-group thoroughly reviews every story distributed to the group and scores it on a scale from 1 (lowest) to 5 (highest) against the prescribed criteria. The scores are submitted anonymously to the sub-group, and the scores of all its members are combined to identify the highest-ranked stories. Each sub-group then shares its top stories with the full panel, stating why it selected these particular stories as MSC. In addition, each sub-group presents suggestions that are not mentioned in the stories but are nonetheless practised in their communities. After intensive discussion of all the shortlisted stories by the full panel (all workshop participants), one story, or the required number of stories, is selected. Where possible, it is better to select a representative story from each sub-group, taking the project's objectives and outcomes into account. Ideally, MSC recommends selecting just one story; however, the required number of MSC stories can vary depending on the project's nature and intensity (Figure 3).

Figure 3. Story selection process (source: Limato et al., 2018).

Step 8. Feedback on MSC Selection Process

After the MSC story selection has been finalised at the workshop, the next step is to provide feedback. Providing feedback on the MSC story selection process and on the stories that stakeholders selected is regarded as good practice. Feedback lets beneficiaries know that their stories were read and valued by the stakeholders. In the process, beneficiaries gain a greater understanding of the collective judgements made by the panel, which will hopefully help to facilitate change.

When providing feedback, it should be stated clearly which SC story was selected as MS and why, because this can expand or challenge participants' views of what is significant (Davies & Dart, 2005). It is then advisable to explain how the MSC selection process was organised. Feedback about the process helps participants assess the quality of the collective judgements made, and it demonstrates that others have read and engaged with the SC stories. Similarly, feedback about which story was selected, why it was selected, and how the selection was conducted can stimulate an ongoing dialogue within and between organisations about what constitutes significant change.

Step 9. Verification of the MSC Story

The ninth step is story verification, which can be done by triangulating the comprehensive notes and recordings. It is more practical to verify only the internally shortlisted stories, because verifying every collected story may take more time and effort than is feasible (Tonkin et al., 2021). As both Davies and Dart (2005) and Lennie (2011) suggest, the best verification method is to check the changes that have been selected as MS at all levels: the field level, the middle-management level, and the senior-management level. Ideally, the MSC story should be verified by senior participants. Knowing that stories will be verified makes researchers more careful when documenting SCs, which can improve their overall quality. The verification process can also give external stakeholders more confidence in the significance of the MSC findings. Verification of the MSC story may not be essential in every project, however, so whether and how to verify should be decided judiciously.

Step 10. Revising the System

Most organisations have a cyclic process of planning, implementation, review, and revision of the MSC technique. This is often referred to as the programme or planning cycle, and it varies between projects. Organisations applying the MSC technique to evaluate project impact face implications in particular areas: some financial, some related to knowledge dissemination, and some related to training and awareness. These areas can be adjusted between the introductory and implementation phases, depending on the community's needs and the project's objectives.

When revising the system, the most common changes or adaptations involve: (i) changes in the names of the domains of change; (ii) changes in the frequency of reporting; (iii) changes in the story development process; (iv) changes in the types of participants; and (v) changes in the structure of the meetings that select the MS stories (Davies & Dart, 2005). Which changes are made depends on the project's nature, intensity, and requirements, and on how well the organisation (i.e. its staff and managers) understands the MSC technique.
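Because the usual revisions touch a small set of design choices (domain names, reporting frequency, story development process, participant types, and selection meeting structure), it may help to record the MSC system's design as a versioned configuration so that each revision cycle leaves a trace. The sketch below is a minimal illustration; the `MSCDesign` fields, the example values, and the `revise` helper are hypothetical and not part of the MSC guidance.

```python
from __future__ import annotations

from dataclasses import dataclass, replace


@dataclass(frozen=True)
class MSCDesign:
    version: int
    domains: tuple[str, ...]
    reporting_interval_months: int  # e.g. 3 for quarterly reporting
    story_development: str          # how one-page stories are drafted
    participant_types: tuple[str, ...]
    selection_meeting: str          # structure of the story selection meeting


def revise(design: MSCDesign, **changes) -> MSCDesign:
    """Return a new design version; the previous version is left unchanged."""
    return replace(design, version=design.version + 1, **changes)


v1 = MSCDesign(
    version=1,
    domains=("quality of people's lives", "participation", "sustainability", "open window"),
    reporting_interval_months=3,
    story_development="researchers draft one-page stories after each interview",
    participant_types=("beneficiaries", "field workers"),
    selection_meeting="internal vetting, then a selection workshop in mixed sub-groups",
)
v2 = revise(
    v1,
    reporting_interval_months=6,
    participant_types=v1.participant_types + ("local stakeholders",),
)
history = [v1, v2]  # each review cycle appends the revised design
```

A frozen dataclass is used so that an earlier design cannot be edited in place, which keeps the revision history honest.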

Potential Application and Utility of the MSC Approach

The MSC approach has demonstrated its versatility and effectiveness across various domains, making it a valuable tool for monitoring and evaluating programme effectiveness. A distinctive characteristic is that it remains participatory from project implementation through to the final evaluation phase, so that people directly involved in a project can assess its effects in diverse sectors.

First, the MSC technique can be applied to evaluate programme effects in diverse sectors. The approach has found widespread application in international development, health education and promotion, education, Reducing Child, Early, and Forced Marriage (R-CEFM), and community development, showcasing its adaptability (Davies & Dart, 2005). Its emphasis on capturing qualitative, context-specific insights makes MSC a valuable methodology for assessing the impact of interventions in different fields. Secondly, the technique can capture the unintended consequences of an intervention. Most traditional evaluation techniques aim only to capture intended outcomes and may therefore overlook unintended ones. By collecting stories directly from participants, MSC allows for a nuanced understanding of both intended and unintended changes (Dart & Davies, 2003).

Thirdly, the technique empowers stakeholders by offering them meaningful participation in the programme. As a participatory approach, it aligns with principles of empowerment and inclusivity: by involving beneficiaries, community members, and other stakeholders in the process, MSC ensures that their voices shape the evaluation, fostering a sense of ownership and transparency (Dart & Davies, 2003). Fourthly, the MSC technique enhances the validity and transparency of the project's evaluation. In contrast to top-down evaluation approaches, it enhances the validity of findings by directly involving those who benefited from the programme. Transparency in the story selection process is maintained by choosing diverse panels, which contributes to the credibility of the evaluation.

Fifthly, MSC enhances learning opportunities and organisational development. The technique not only assesses programmatic impact but also encourages organisational learning: it provides a rich description of changes, captures unexpected outcomes, and promotes a continuous learning process within organisations (Davies & Dart, 2005). Its focus on learning, rather than on accountability alone, makes MSC particularly relevant for organisations wanting to improve their capabilities in capturing and analysing the impact of their work.

Sixthly, the MSC technique helps to address knowledge gaps. Despite its recognition and application in various contexts, there are gaps in knowledge about its use, especially in regions such as Nepal. This highlights the need to explore and document practical examples comprehensively to address the existing gaps in the literature (Ohkubo et al., 2022). Finally, MSC can be creatively combined with other techniques. As the approach continues to evolve, there is a growing need to explore how it can be combined with other evaluation techniques and approaches. Such exploration ensures that MSC remains a dynamic and adaptable tool capable of addressing the unique challenges posed by different programmes (Dart & Davies, 2005).

In summary, the MSC approach has potential applications beyond conventional evaluation methodologies. Its ability to capture qualitative, context-specific insights, involve stakeholders, and foster organisational learning places MSC in a central position as a valuable and flexible tool in programme monitoring and evaluation. Further documentation and research on its applications, especially in under-represented regions, can contribute to a more comprehensive understanding of its efficacy and potential.

Limitations and Bias in MSC

MSC can be seen as introducing bias, because it tends to focus on success stories rather than on failures. Bias can also occur in the selection of subject matter. To maintain a high level of transparency, significant change (SC) stories should be recorded and documented in such a way that the domains of change are not predetermined but remain open to how the programme's beneficiaries perceive them. Another criticism of the MSC selection process is that unpopular views might be silenced by the majority through a vote. To address this issue, researchers can conduct the vote before defining the domains, an approach that is more likely to identify and record less popular views than other monitoring and evaluation techniques. Moreover, as a qualitative technique, MSC may be of limited relevance where a quantitative assessment is required. Finally, there will always be potential bias in who is included in the MSC selection workshop, which may in turn bias the selection of stories.

Conclusion

MSC can be adapted at any stage, from the initial phase of a project through its implementation (Davies & Dart, 2005). MSC is increasingly used to assess development programmes involving multiple partners and stakeholder networks, and it stands out as an innovative, alternative methodology for monitoring and evaluating programmes that emphasises narrative-driven insights, participatory engagement, and a nuanced understanding of impact. Its continued application and refinement promise to contribute significantly to the field of programme evaluation, fostering learning, transparency, and the meaningful inclusion of diverse voices in the assessment of programmatic outcomes. The MSC approach has gained widespread recognition and application in various sectors and countries. Its strength lies in its departure from traditional evaluation methods: it eschews predefined, measurable indicators in favour of personal stories that encapsulate change.

The participatory nature of MSC ensures that programme stakeholders, including beneficiaries, actively contribute to the selection and analysis of stories, promoting inclusivity and transparency throughout the evaluation process. The ten-step process outlined by Davies and Dart (2003) provides a comprehensive framework for applying the MSC technique. From introducing the approach and defining domains of change to systematically selecting significant change stories and obtaining feedback, each step plays a crucial role in ensuring the integrity and reliability of the evaluation. The participatory story selection workshop, involving a diverse group of stakeholders, adds depth to the analysis by considering various perspectives and mitigating potential biases.

Although the MSC approach has proven its worth, its challenges and potential biases must be acknowledged. It has been applied in diverse fields, including international development, health care, education, health promotion, and community development, underscoring its versatility. However, documented examples of its use in evaluating programmes in Nepal are notably limited, emphasising the need for further exploration and illustration. This study contributes to filling this gap in the literature by providing a detailed guide to applying the MSC technique in ongoing monitoring and evaluation within large-scale projects, focusing on eliciting beneficiaries' perspectives through a qualitative approach. As the MSC approach continues to evolve, there is a growing need to explore its creative combination with other evaluation techniques and approaches. This adaptability ensures that the MSC approach remains a dynamic tool, capable of addressing the unique challenges posed by different programmes.

Acknowledgments

We would like to thank all previous researchers whose papers are cited in this study.

Author Contributions

MKS conceived this study and contributed to reviewing the literature, generating themes, developing the MSC steps, and writing the manuscript. SPK and EvT contributed equally to generating and finalising the themes and MSC steps, and to writing and editing the manuscript. All authors contributed to the final design of the study and provided relevant contributions to its academic content.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest concerning the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.