Abstract

In this paper, we outline our qualitative protocol for the largest independent mixed-method evaluation of the High Volume-Low Complexity Surgical Hubs programme in England – the MEASURE study. In addition to serving as a protocol paper, we outline the key methodological considerations and adaptations that are needed when designing big qualitative studies: complex (multi-site, multi-stakeholder), multi-method (e.g. interviews, observations, documents) qualitative research involving a large number of participants (n = 100+). This paper expands on our previous methodological work, where we used our experience of undertaking a big qualitative study, as part of a mixed-method evaluation of a national emergency care-based initiative, to outline the methodological considerations and uncertainties for designing and analysing “big” qualitative studies. In this paper, we put these considerations into practice by providing a transparent account of our qualitative study design. The methodological reflections that we present centre on the areas where we feel there is the most uncertainty for big qualitative research: study design, sampling (of case sites and stakeholders) and analysis. Underpinning this uncertainty are the broader challenges that this approach raises. Namely, striving for both breadth (national-level insights) and depth (local variation and context) challenges paradigmatic norms and expectations and forces either methodological innovation or the adaptation of existing qualitative methods. We hope this paper provides transparency and insight into an area of qualitative research which has, potentially due to a perception of “safety in numbers”, been inherently trusted and rarely scrutinised. Ultimately, we hope that, by providing a transparent account of our study design and the challenges we have faced, we continue to encourage discussion and innovation in this evolving area of qualitative research.

Background

Setting the Scene - The High-Volume, Low-Complexity Surgical Hubs Programme

Globally, health services are searching for ways to address rising demand. (Burn, 2021; England, 2022a; England, 2019, 2022) In England, the UK government’s decision to cancel the majority of elective surgery during the pandemic has created a backlog of patients awaiting routine surgical treatment. (England, 2022a; England, 2019) The High Volume, Low Complexity (HVLC) surgical hub programme has been introduced to directly tackle this demand as part of NHS England’s elective recovery programme. (England, 2022a; GIRFT, 2024) More specifically, the HVLC programme aims to reduce waiting lists for routine surgical treatment by ring-fencing staff and facilities in designated locations to drive efficiencies in the elective care pathway and treat low complexity elective patients. (GIRFT, 2024)

The HVLC programme originated as an orthopaedic-based initiative, but HVLC surgical hubs are now being implemented across six surgical specialties: ophthalmology, general surgery, trauma and orthopaedics (including spines), gynaecology, ear, nose and throat, and urology. There are currently over 90 fully operational surgical hubs in England, of which 31 have been accredited as part of the national accreditation programme. (GIRFT, 2024)

There is an urgent need to address waiting lists for elective surgical treatment in England and there is a strong political and financial commitment (£1.5 billion capital investment) in support of the programme. (GOV.UK., 2022) However, despite its widespread implementation, there have been no large-scale, independent evaluations of the HVLC surgical hubs policy published in peer-reviewed journals. We are aware of some smaller scale evaluations of the programme, which have focussed on evaluating the impact of a small number of hubs; some of these are not independent from those involved with the programme. (Barratt et al., 2022; Briggs et al., 2022) A recent rapid review of surgical hubs, commissioned for the Royal College of Surgeons of Edinburgh and Welsh Government, identified a limited amount of evidence (n = 12 studies). (Okolie et al., 2023) The majority of this evidence was generated during the COVID-19 pandemic and so focussed primarily on understanding the ability of hubs to reduce the transmission of COVID-19, rather than on elective recovery. The review also identified a lack of qualitative research in this area. (Okolie et al., 2023)

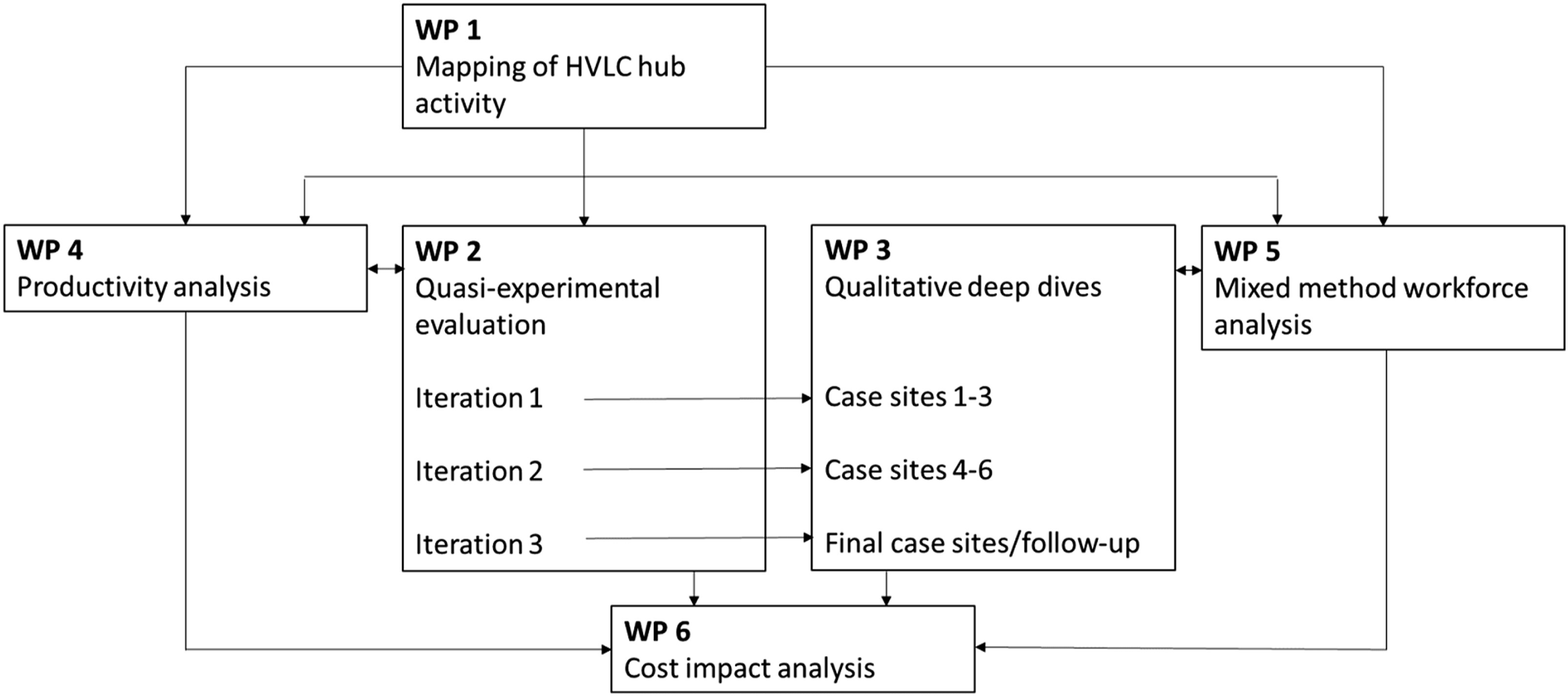

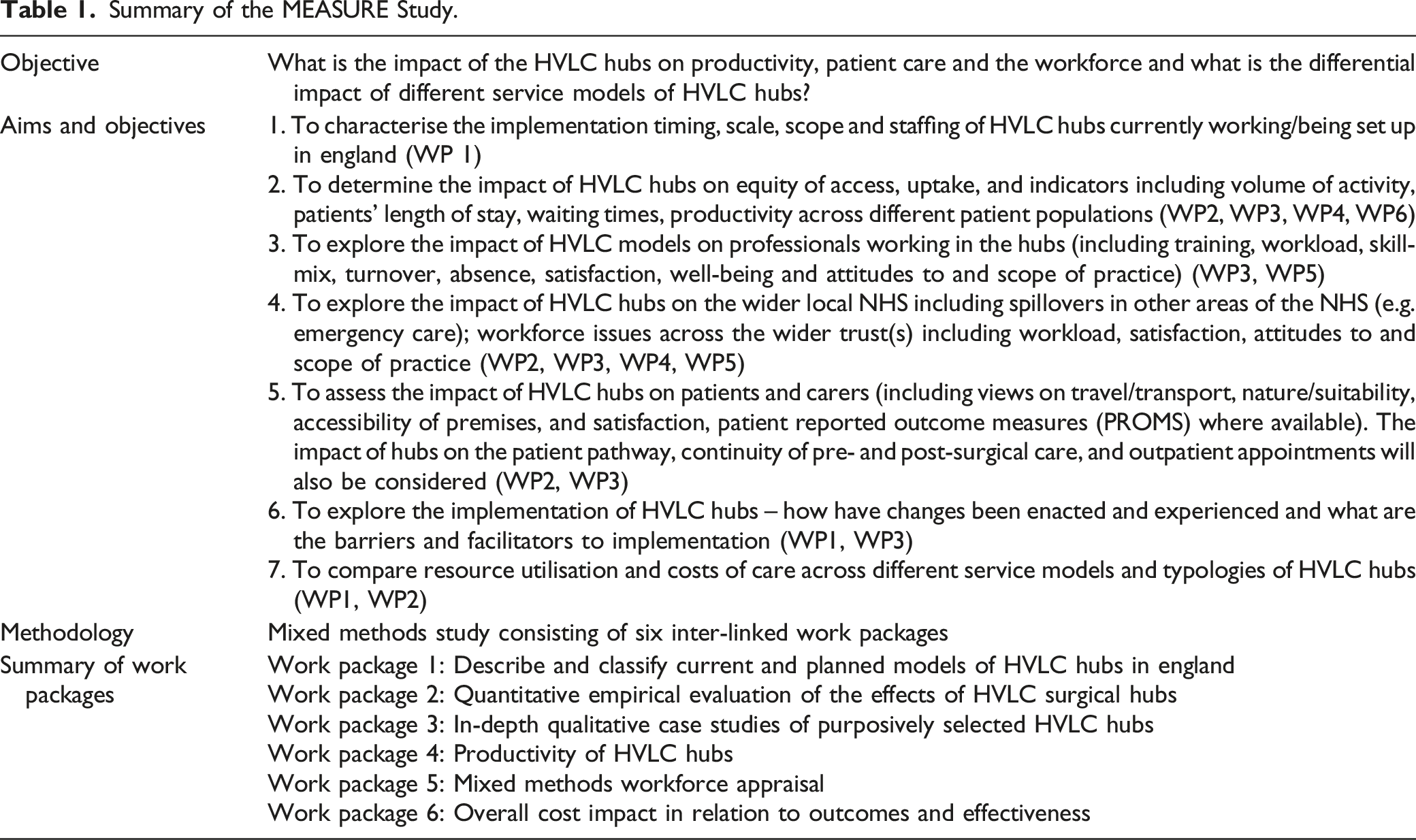

The Mixed Methods EvaluAtion of the high-volume, low-complexity Surgical hUb pRogramme (MEASURE) study was commissioned by the National Institute for Health and Care Research (NIHR), Health and Social Care Delivery Research (HSDR) Programme. The MEASURE study consists of six integrated work packages (Figure 1, Table 1) which are designed to explore the impact of the HVLC hubs on productivity, patient care and the workforce along with the differential impact of different HVLC hub service models.

Figure 1. Overview of the MEASURE study.

Table 1. Summary of the MEASURE Study.

Further details of the study can be found in Table 1 and a separate mixed methods protocol has been published elsewhere. (Scantlebury et al., 2024) In this protocol, we focus on Work Packages 3 and 5, where we will use in-depth qualitative case studies at purposively selected HVLC hubs to evaluate the implementation and impact of hubs on patients and carers, the workforce and healthcare service in England (aims and objectives 2–6, Table 1).

Explanation and Justification of Method

Working Within a Paradox – A Brief Overview of Designing Big Qualitative Studies

Despite the growing number of high-profile mixed methods studies that have included big (n = 100+), complex (longitudinal, multi-site and multi-method) qualitative data, our methodological understanding of how to design, conduct and analyse qualitative research “at scale” is limited. In a previous methodological insights paper, (Scantlebury & Adamson, 2022) we outlined our experience of undertaking a national mixed-methods evaluation of an emergency care initiative in England (General Practitioners working in or alongside emergency departments - the GPED study). (Scantlebury et al., 2022) GPED taught us that designing and conducting “big qualitative” studies (especially as part of wider national mixed methods evaluations) is fundamentally different to “usual” qualitative work. (Scantlebury & Adamson, 2022) This is largely because these studies challenge paradigmatic norms: they not only try to capture complexity and context-specific influences on implementation and impact, but must balance this against their wider aim of evaluating a national policy. As a result, long-established qualitative approaches cannot simply be applied to these studies, as their scale, complexity and overarching aims are at odds with the very reasons these qualitative approaches were created.

Sampling and analysis are two areas where paradigmatic norms are particularly challenged. For instance, there has been a long-standing debate amongst qualitative researchers as to “how much data is enough” and how to justify the use of smaller samples within a largely positivist research environment. However, in this context, the emphasis is on establishing the parameters for exploring breadth (i.e. how much data is too much), whilst retaining the need for depth (i.e. avoiding collecting so much data that only thin, descriptive analysis is feasible). To achieve this, we cannot assume that existing qualitative analysis approaches (e.g. thematic analysis) and “rules” for sampling can be straightforwardly applied to such large and complex data sets, as they were not designed for this purpose. (Scantlebury & Adamson, 2022)

Whilst this paper is primarily a protocol paper, we also see this as an opportunity to reflect on our current thinking around the design of large, complex qualitative studies and how this has influenced the design of the MEASURE study. In doing so, we provide the transparency and methodological description that is required, but currently lacking, in this area. Within our protocol we pay particular attention to the key areas where we feel adaptation and innovation of existing qualitative methods are most needed - study design, sampling and analysis. (Scantlebury & Adamson, 2022)

Design – Navigating the Unknown

In our previous paper, (Scantlebury & Adamson, 2022) we describe the inherent need for large, complex qualitative studies to be designed flexibly. This is particularly important for longitudinal evaluations of health policy, where there is a requirement for research to be responsive to what is often a turbulent policy landscape.

There has been substantial change to the HVLC hubs programme since the NIHR launched their commissioning brief in 2022, (NIHR, 2022) in terms of its growth (over 90 hubs are currently operational), its remit (broadening of scope to include some complex cases) and the introduction of a non-mandatory accreditation scheme. We anticipate that the policy will continue to evolve over the MEASURE study’s four-year lifecycle and that change is inevitable and crucial for the policy to be responsive to the wider challenges facing the NHS and other elective-recovery based policy directives (e.g. National Diagnostics programme, NHS Elective recovery programme) that are being introduced concurrently.

As methodologists, we felt a degree of trepidation at the grant application stage in proposing a flexible study design. We were concerned, perhaps more so within a mixed methods study, that this flexibility would be viewed as “vague” or as a methodological weakness. However, through our experience of the GPED study, we felt we had an opportunity to be transparent about the lack of methodological guidance in this area and to view it as a vehicle for methodological innovation. It is interesting to note that the panel commended the flexible approach to our qualitative study design and stakeholder-driven “lines of enquiry.”

Implementation Theory

Box 1. Justification for using CFIR (Damschroder et al., 2022) during MEASURE, text adapted from our grant application

The use of theory is relatively common in applied health research. However, there is a lack of detailed reflection, particularly within large mixed-methods studies, as to why researchers have chosen to use theory beyond generic statements of how it will “underpin” their study. There is also a growing number of theoretical frameworks available (Damschroder et al., 2009; Damschroder et al., 2022; Glasgow et al., 1999; Murray et al., 2010; Research., 2024), which have all been designed to enable “flexible application” and have considerable overlap in terms of their core aims and content. We therefore wish to reflect on why we have chosen to use the Consolidated Framework for Implementation Research (CFIR) and what we feel the benefits of doing so are to our research.

Our decision to use implementation theory during MEASURE was driven largely by the aims of our research, which qualitatively focusses not just on the impact, but also on the implementation, of HVLC surgical hubs. In our previous methodological paper, we describe the crucial role that Normalisation Process Theory (NPT) (Murray et al., 2010) played in our GPED analysis by providing a structure (through its core constructs) for integrating the key messages from our quantitative and qualitative data. In addition to providing a practical means for mixed methods data integration, we felt that NPT elevated our “story” by providing us with a way to understand and communicate the complexity and variation with which the GPED policy had been implemented (depth), whilst simultaneously providing a way of disseminating high-level messages (breadth). (Scantlebury & Adamson, 2022)

A core debate within our team when designing MEASURE was which implementation theory to choose - NPT, a theory that we had used successfully during GPED and so were confident with, or the newly updated (at the time of developing our grant application) CFIR addendum. (Damschroder et al., 2022) In addition to our familiarity with it, the appeal of NPT lay in its flexibility and the overlapping nature of its constructs. During GPED, we felt that, by not having rigid analytical limits, our analysis was elevated from the descriptive to the interpretative level - we did not feel that data had to be deductively placed within a particular domain, but that the theory could be used flexibly to aid our narrative.

CFIR is comparatively “formulaic” and proposes five core domains, which are broken down into constructs and sub-constructs. We envisage this will make the theory “easier” to apply when working as part of a large team, with a high volume of data. In contrast to GPED, where NPT was used primarily by a single researcher as a means of pulling the data together and aiding the final stages of interpretation, during MEASURE multiple researchers will use the CFIR to inform data collection and analysis from the start of the project. When debating the use of theory for larger projects, the practical application of the theory is an important consideration: given the project’s four-year duration and volume of data collection, we anticipate that multiple team members with varying levels of qualitative and implementation science experience will be involved in data collection and analysis.

Sampling/Recruitment

Selection of Qualitative Case-Sites

Box 2. Summary of our proposed qualitative study design adapted from our grant application

We found little guidance or critical reflection within the existing literature to guide us in our approach to qualitative case-site selection. In our experience, it is difficult to anticipate the number and types of sites that should be selected “up front” and doing so encourages a mentality of a “target number” to be reached. Instead, we strove to obtain qualitative case sites that could be used to complement national-level quantitative analyses, whilst maintaining sufficient depth and inclusion of deviant (positive and negative) cases surrounding implementation and impact.

To achieve this constant critique and reflection of what data is required throughout the data collection process, we would suggest adopting a “data-driven” approach to sampling, whereby sites are selected using quantitative and qualitative intelligence obtained throughout a project. However, adopting a purely iterative approach could be perceived as methodological uncertainty, and there is also a need to ensure that proposed work is adequately costed and resourced. In our grant application, we were deliberately candid in articulating this uncertainty and produced an informed guesstimate of the number of sites that we are likely to include, which we justified using a variety of methods and assurances that we outline below.

Dummy Table for Qualitative Sampling Frame.

We envisage that the focus of our final case site data collection (iteration three) will be on collecting data that builds on our existing analysis. For instance, we may wish to explore a hub which is known through our quantitative analysis and/or intelligence from NHS England to be substantively different (e.g. in terms of workforce or productivity) to those we have already explored. Our approach to case-site sampling will therefore become increasingly flexible and will involve constantly referring to our qualitative and quantitative data sets. In parallel, we will undertake regular stakeholder engagement (e.g. discussions with NHS England) to ensure our qualitative data is able to respond to and capture inevitable changes to the policy throughout the project’s duration. We are also mindful of other small-scale qualitative evaluations that are planned and/or may emerge throughout the duration of our project and will be responsive to this to avoid duplication of effort and research competition wherever possible. As a result, some hubs may feature in our analysis once, whilst others may be explored longitudinally across all three iterations. Equally, we may feel it is appropriate to conduct a larger number of “lighter touch” case site visits rather than undertaking a smaller sample in depth (see below).

Sampling and Data Collection during Case-Site Visits

During GPED, we proposed a fixed number of sites (n = 10 sites) and amount of data to be collected per site (n = 10–15 patient/carer interviews, 10–15 staff interviews, 12–16 hours of observation per week) in our protocol. (Morton et al., 2018) However, it is naïve to assume that sampling principles for “usual” qualitative research can be merely extrapolated to this context or that a “blanket amount” of data collection can be planned for in advance. Instead, the amount (i.e. volume) and type (i.e. stakeholder) of data is likely to vary by case site and stage in the project. For example, we expect that at the beginning of the MEASURE study, we will require a greater amount of data collection per site, as these initial sites will be crucial in helping us to gain familiarity with the policy and the inner workings of surgical hubs. Equally, towards the end of the project (i.e. by our third iteration), we anticipate that data collection will be “lighter touch” and may involve a smaller, more targeted number of interviews and observations to build on our understanding and explore any new developments or unique models. For example, we may wish to explore a smaller number of hubs en masse to help explain trends in our quantitative analysis pertaining to workforce or key performance and productivity measures.

A key consideration when designing large qualitative case studies is to anticipate a need for some sites to be analysed in depth (e.g. exemplar sites at the beginning of data collection when researchers are less familiar with the topic, or sites that are performing better or worse than others) whilst others may require smaller, ad-hoc data collection. Similarly, whilst longitudinal data collection is important, we felt that, given the variation in hubs (e.g. surgical specialties involved, accreditation status, workforce model, long-standing vs. new) and anticipated changes to the policy, we may want to combine longitudinal visits at selected sites with short, light-touch data collection (e.g. interviews with key informants) at sites which may be different in terms of their surgical hub, patient mix or performance. We have applied these principles to our methods for sampling and data collection, which are outlined below.

Our Approach to Data Collection and Sampling During Case Site Visits for MEASURE

Data collection at sites will consist of a combination of non-participant observational data, semi-structured qualitative interviews and documentary data. The researcher(s) will spend approximately 5–7 days at the purposively selected hub over a two-month period. Prior experience (Scantlebury & Adamson, 2022; Scantlebury et al., 2022) tells us that the amount of data that is required and the characteristics of the key stakeholders that should be included in our sampling are likely to vary greatly across locations. Therefore, data collection at each site will start with a familiarisation visit, followed by qualitative observations to gain an understanding of the case site in order to purposively select the key individuals at the site to approach for interview and further observation. At each site, approximately 12–15 hours of observation will take place over the duration of the visit, recorded in field notes. As an example, this may include team meetings. We estimate that approximately 10–15 interviews will be required per site and will include purposively sampled stakeholder groups: staff (anaesthetists, surgeons, nurses, hub administrators, local GIRFT co-ordinator(s), relevant service leaders e.g. operational managers and regional elective recovery leads as appropriate); and patients and/or their carers. Patient interviews will include participants with a broad range of characteristics following INCLUDE principles. (NIHR., 2020; Treweek et al., 2021) Relevant policy and procedural documents relating to each case site will also be collated.

Within-site recruitment of staff and patient participants will be discussed at the site initiation visit and will subsequently be facilitated by the site chief investigator (CI). The site CI will help to identify appropriate key informants to approach in the first instance and will disseminate study information to potential participants on site. This will be supplemented by opportunistic recruitment by researchers at site visits. Patient recruitment will be informed by local PPI representation.

All qualitative data collection will be informed by the tools available as part of the CFIR suite of resources, (Research., 2024) including topic guides and observation templates, which will be adapted for our specific purposes. Topic guides for staff will explore the implementation and impact of the HVLC hubs programme from the perspectives of the various participants, as well as the policy’s background and the prior expectations relating to any changes in service provision. The interviews will also capture how the hub provision fits within the wider local surgical provision and will cover workforce issues (providing the qualitative data for WP5), including experiences of staffing models, training requirements, communication, staff well-being, staff recruitment and sustainability. Patient interviews will capture their experience of being referred to the hub, the treatment within the hub and follow-up care, and their general views on service configuration and elective recovery. Patients will be provided with study materials whilst at the hub but, given the short length of stay that many patients will experience, it is anticipated that arrangements will be made to interview patients once they are at home and well enough to participate.

Data Analysis

Our previous methodological insights paper (Scantlebury & Adamson, 2022) provided a detailed overview of how we analysed our data during GPED. Whilst our plans for MEASURE are based on this approach, we are aware that this process is imperfect and, in the absence of methodological guidance or exemplars, was developed through trial and error. We feel strongly that, whilst it is important to have a broad analysis plan, there is a need for analytical innovation in this area, and so we will be actively searching for ways to refine and improve our approach to analysing the large and complex qualitative data that we will collect throughout the MEASURE study.

The principles of large qualitative analysis are fundamentally different from those of “usual” qualitative analysis and, in our experience, are what drive the need for analytical innovation. A core challenge of this type of analysis is to find a way to obtain the breadth of knowledge needed to answer questions pertaining to the impact and implementation of a national policy, whilst not sacrificing the need for an in-depth interpretive analysis. For example, during GPED we found that our data was full of contradictions within and across case sites, time-points and stakeholder groups and that portraying this complexity whilst not losing sight of the bigger picture was incredibly challenging. Equally important are the practical considerations for this type of analysis and how they shape the methods you choose. Namely, finding ways to distil vast amounts of qualitative data (e.g. 467 interviews and observations of 142 clinical encounters during GPED) into “manageable chunks” without over-simplifying data and/or losing its context is crucial. This is particularly challenging in the early stages of analysis, where it can be difficult to distinguish between useful tangents which warrant further exploration and “red herrings.”

One of the key differences in analysing large, complex qualitative data in contrast to “usual practice” is the importance of using multiple analysis methods and techniques. In our experience, applying one method (e.g. thematic analysis) to such a large and complex data set is a gross oversimplification of the process and would prioritise breadth and basic description over depth. Our plans, which we outline below, are therefore adapted from our experience of analysing data during the GPED study, which utilised multiple qualitative analysis methods and techniques - further reflections and details of our analysis during GPED are outlined elsewhere. (Scantlebury & Adamson, 2022; Scantlebury et al., 2022)

How Will we Analyse our Data During MEASURE?

All qualitative interviews across WP1 and WP3 will be audio recorded digitally and transcribed verbatim; other qualitative data will be in the form of field notes and documentation. We will utilise the pen-portrait approach, which was designed to deal with large quantities of multi-dimensional qualitative data. (Sheard & Marsh, 2019) Drawing on all of the data collection methods used, we will document a holistic descriptive account of each of the sites - a ‘pen-portrait’. This narrative description of each hub will be presented under the broad domains of the CFIR (hub characteristics, outer setting (wider context), inner setting (local context), characteristics of the individuals involved, and the process of implementation). As each pen portrait becomes available, we will offer to feed this analysis back to each of the case-sites.
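As a purely illustrative sketch, and not part of the MEASURE protocol or the published pen-portrait method, the structure described above could be represented as a simple template in which each site's narrative notes are filed under the five broad CFIR domains named in the text. The class name `PenPortrait`, the helper `side_by_side` and the site labels are hypothetical, and the sketch assumes Python 3.9 or later.

```python
from dataclasses import dataclass, field

# The five broad CFIR domains, worded as they are named in the text above.
CFIR_DOMAINS = (
    "hub characteristics",
    "outer setting",
    "inner setting",
    "characteristics of individuals",
    "process of implementation",
)


@dataclass
class PenPortrait:
    """Hypothetical container for one case site's descriptive account."""

    site_id: str  # illustrative label only, not a real hub identifier
    sections: dict[str, list[str]] = field(
        default_factory=lambda: {domain: [] for domain in CFIR_DOMAINS}
    )

    def add_note(self, domain: str, note: str) -> None:
        """File a narrative note (from any data source) under one domain."""
        if domain not in self.sections:
            raise ValueError(f"Unknown CFIR domain: {domain!r}")
        self.sections[domain].append(note)

    def empty_domains(self) -> list[str]:
        """List domains with no notes yet, to flag gaps in data collection."""
        return [d for d in CFIR_DOMAINS if not self.sections[d]]


def side_by_side(portraits: list[PenPortrait], domain: str) -> dict[str, list[str]]:
    """Collect the notes for one domain from every site for comparison."""
    return {p.site_id: list(p.sections[domain]) for p in portraits}
```

A template of this kind only organises material; the interpretive work of writing and comparing the narrative accounts remains with the researchers.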

At the end of the first iteration of the qualitative work - when three pen-portraits are available - the qualitative study team will discuss these early findings with our Virtual Study Advisory Group and patient representatives and together we will identify either hypotheses or ‘domains of influence’ (Scantlebury et al., 2021) that we will explore in more detail in further qualitative data collection/analysis and quantitative data analysis. Here we will compare and contrast each of the pen-portraits in order to map key “hub” features from the descriptive accounts across and between case-sites. This will use an interpretive approach (Sheard, 2022) using principles derived from Braun and Clarke’s reflexive thematic analysis (TA) (Braun et al., 2023) and will also incorporate the qualitative data produced in WP1 (see Table 1). This will allow us to explore key findings for the purposes of the main report according to the CFIR, and to undertake more thorough in-depth analysis of pertinent issues that have been identified as important from the initial qualitative analysis and input from stakeholders. Whilst it is not possible to state in advance all of the themes that will be developed, this will include an in-depth analysis of workforce issues (linked to WP5) (Hughes et al., 2020).

Ethics

The MEASURE study is registered through the research registry (researchregistry9364) and has been approved by East Midlands-Nottingham Research Ethics Committee (reference 23/EM/0231). All participants will be provided with information sheets prior to participation. Written and/or verbal consent will be requested from participants prior to interviews and observation. For non-participant observation, we will be shadowing key individuals working in HVLC hubs in blocks of up to 2 hours. For instance, we may shadow a staff member during their morning clinic, which would involve fleeting as well as longer periods of observation with multiple staff and patients. Written and/or verbal consent will be obtained from the main staff member being observed at the start of the observation period. We will not obtain written consent from patients featured within an observation period. Instead, staff will introduce the qualitative researcher to each patient and staff member they encounter and will obtain informal verbal consent for us to be present during their care. Should a patient be unhappy for their care to be observed, the observation period will be stopped and will only continue once the member of staff and (new) patient(s) provide their permission.

Rigour

We are methodologically opposed to the use of ‘quality’ checklists for qualitative research. Our project is a commissioned piece of research that was peer-reviewed at the grant application stage. It also has a study steering committee and virtual advisory group who, along with our patient advisory group, will be consulted regularly for feedback. We are conscious of the lack of methodological guidance in this area and the need for innovation, and so part of our process for testing the quality of our work is to publish articles such as this to encourage critical reflection and drive innovation and methodological discussion in this area.

Conclusion

MEASURE is the largest independent evaluation of the HVLC surgical hubs programme in England. As part of the MEASURE study, the qualitative work proposed here will be used to provide a comprehensive understanding of the implementation and impact of the HVLC programme, which is a national priority and a key part of the government’s plans to recover elective services in England. As a four-year project that is flexibly designed and “under-pinned” by theory, a key strength will be our ability to be adaptive to and considerate of any changes to the policy landscape and local context.

Methodologically, the MEASURE study provides an opportunity to deepen our understanding of how to design, conduct and analyse big and complex qualitative data. For our study to be a success, we anticipate the need for methodological innovation, particularly in our analysis where there is currently a lack of knowledge and transparency in how to undertake qualitative analysis at scale. We view this protocol not as a rigid blueprint, but as a “snapshot” of our current methodological thinking and hope that it will build on our recent methodological work by continuing to stimulate discussion and innovation in this area.

Footnotes

Acknowledgments

We would like to thank the wider “MEASURE” study team.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The authors received no direct funding for this manuscript. However, the MEASURE study was funded by the National Institute for Health Research (NIHR) Health Services and Delivery (HSDR) Programme (grant number 153387). The funders had no role in the design and conduct of the study, and the preparation, review or approval of the manuscript. The views expressed are those of the authors and not necessarily those of the NIHR or the Department of Health and Social Care.

Data Availability Statement

The data that support the findings of this study are available on request from the corresponding author. The data are not publicly available due to privacy or ethical restrictions.