Abstract

The nature of online social behaviour is largely changing, from communications taking place on open platforms to interactions occurring in hidden, group-based spaces or closed fora. Boccia Artieri (2017) has called this the new geography of unsearchable small conversations. This transformation challenges our current understanding and application of research ethics in qualitative digital sociology because, on one hand, we can no longer lean upon the approaches used by social data science to handle big and open data and, on the other, we cannot exclusively rely on established practices in qualitative sociology. Academic inquiry into these closed messaging threads and fora, however, remains of great societal importance, not least because of the rise of hate and extreme content in such spaces. With researchers now facing increased barriers when accessing social media (such as having to register an account, the inability to enter a group or chat without being invited, and so on), a rethinking of some of the long-standing cornerstones of ethical research is required for the digital age. The paper makes a methodological contribution to this field by delivering an assessment tool based on the methodology of the project Online Hateful Youth Sociality, which explores the use of memes to share hate on social media. The tool bridges the perspectives of digital sociology, criminology, and digital humanities with ethnographic real-world research to address the ethical issues of disclosure and intrusiveness, harm, the public or private nature of online spaces, and informed consent in the context of researching behaviour in small and hard-to-reach online groups. The assessment tool is presented in the form of an ethical decision flowchart that advocates for an approach that is not only situated but also structured. Ethical considerations are thus applied to the relevant issues depending on specific contexts but with clear, “step-by-step” assessment guidance.

Introduction: Overview of Study and Need for a New Assessment Tool

This paper proposes an assessment tool for conducting research ethically in the specific context of studying small or private group communications and interactions on social media using digital ethnographic methods. Its inception arose during the design of the methodology for the project Online Hateful Youth Sociality: Extreme Online Content (ExOC), undertaken at the Department of Sociology, University of Copenhagen, which focuses on memes as the carriers of hate. The need for ethical clarity and direction in handling data of this nature revealed notable gaps within the available guidance offered by ethical boards in the social sciences including sociology, social data science, and criminology. These gaps, in turn, were also visible in the methodologies of academic works that have embarked on research in similar contexts. Tuikka and colleagues have concluded that “not many researchers seem to be all that interested in – at least disclosing their – ethical practices relating to netnography” (Tuikka et al., 2017, p. 9). While their work relates to consumer research, the social sciences also boast a strong tradition of ethical research methods, and should be able to offer relevant and important perspectives on ethnographies of the internet. Still, some of the more complicated ethical choices to be made in relation to intrusiveness, public-private spaces, and informed consent within the increasingly more refracted internet and its smaller, private spaces remain to be addressed in detail. This is particularly notable in the context of studying group-based interactions on social media platforms that greatly vary both in their usage by young and potentially vulnerable users, and in their range of technical affordances. 
This paper thus specifically addresses questions as to when researchers can or should act according to the notion of least intrusiveness (including passive observations through the use of anonymous profiles), and when such an argument is to be waived and obtaining informed consent becomes a requirement. Currently, researchers are often left in a situation where guidance for such ethical choices remains unclear.

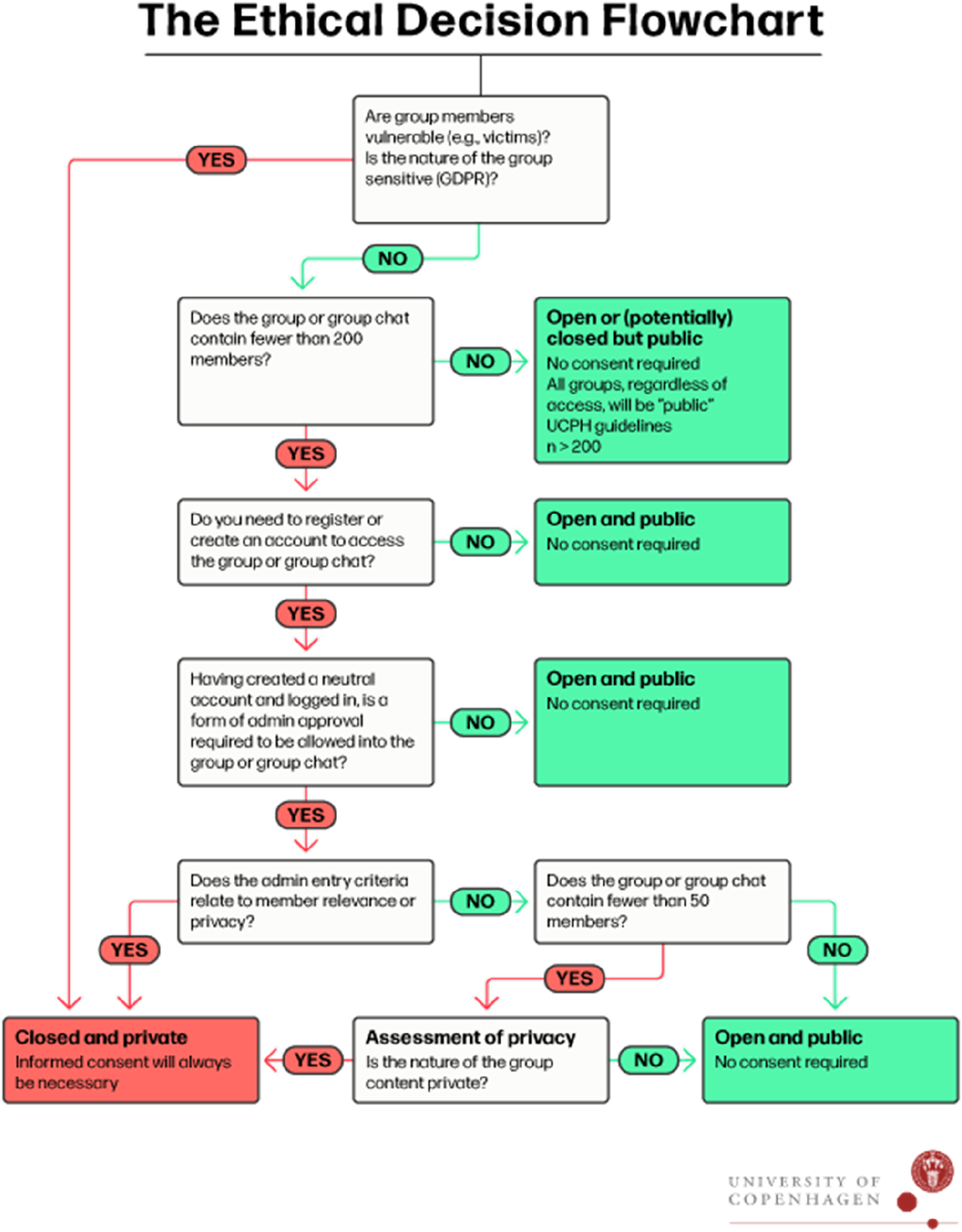

The paper starts with a presentation of the background and aims of ExOC, as these provide context for the development of this research tool. We then present and review the existing ethical guidelines in relation to our key questions and their application by other scholars in studies relating to whether online fora and groups should be considered public or private. The paper provides an assessment tool, visualised as a decision flowchart (Figure 1), which can be used in a situated, step-by-step manner to address issues relating to disclosure, intrusiveness, causing harm, and informed consent in the context of conducting ethnographies of the internet. It concludes by offering a discussion on the future direction of qualitative research in such uncharted online territories. The flowchart presents a step-by-step guide on how researchers can assess traditional situations within qualitative research that require ethical considerations and decisions to be made regarding vulnerability, intrusiveness, access, privacy, and informed consent. It offers concrete and practical guidance that scholars should follow depending on the social context, relevant literature, and the ethical sensitivities of their specific fields of research.

ExOC: Background and Aims

With the arrival and proliferation of social media, memes have become a ubiquitous part of everyday communication (Milner, 2016). A meme is an often humorous or satirical visual tool usually containing an image with superimposed text, which makes it easy to modify and to share (Shifman, 2013). The social relevance of and academic interest in memes are widely evidenced in research. For example, memes have been shown to be a regular feature of trolling behaviour (Phillips, 2015), understood to mean the deliberate act of provoking individuals or groups online in order to disrupt interactions (Coles & West, 2016), and of the recruitment of members into extreme communities (Askanius, 2021; Hine et al., 2017; Murthy & Sharma, 2019). Other studies have examined the use of memes within extreme communities themselves, identifying the role of memes as important group demarcations, for example, by displaying misogynist and racist intertextual references (Nissenbaum & Shifman, 2017; Tuters & Hagen, 2020). These findings partly resonate with existing research on memes in the context of humorous consumption (Askanius & Keller, 2021; Matamoros-Fernández et al., 2023; Schmid, 2023), online expressions (Zannettou et al., 2018), and feelings of social belonging (Kanai, 2016; Miltner, 2016) but with a twist of ‘toxic masculinity’, a common aspect of extreme online communities (Massanari, 2017; Sparby, 2017). Following this line of work, studies have started to investigate the spread of memes from ‘fringe’ web communities such as /pol/, Gab, and the subreddit The_Donald to mainstream media including X (previously Twitter) (Zannettou et al., 2018, 2020). As a result, the exposure of young people to hateful and extremist content online is on the rise (Costello et al., 2020; Munn, 2023).

However, research on young people’s consumption and sharing of extreme content online through memes is lacking, with the existing research focusing on the definitions of a meme (Rogers & Giorgi, 2023), its viral nature and its visual expressions (Andreasen, 2020; Barnes et al., 2021), or on measuring and tracing diffusion (via machine learning). As such, memes have often been identified as ‘isolated events’ within big open social media platforms (Zannettou et al., 2018, 2020). Such work has inevitably left the complexity of online communities and social meme-sharing practices as underexplored fields of research. A deeper understanding of these spaces and of the sharing of hateful memes across communities is thus also lacking. Further, the shutdown of API-based big data research (Venturini & Rogers, 2019), happening whilst society is moving towards more closed and hidden online group behaviour (Abidin, 2021), has made the study of memes within such communities even more relevant.

The aim of ExOC (University of Copenhagen, 2023) is to explain how and why young people produce and share hateful or extreme meme content online, with a particular focus on the communications and interactions that take place in smaller, hidden, closed, or hard-to-access social media groups or chats.

Netnography: From the ExOC Methodology to the Overall Assessment Tool

As in the case of many other research projects, netnography was chosen as the method for ExOC due to its ability to provide essential tools for navigating the landscape of small and hidden groups, both procedurally and ethically. It distinguishes itself from other available methods by its focus on both deep, immersive analysis and more structured forms of data collection. The concept of netnography was developed by Kozinets with the intention that the researcher would adopt an immersive approach within the communities under observation by making one’s presence known but, importantly, without intruding (Kozinets, 2020). In our application of the method, we have also used netnography to gain access to social environments where we could recruit participants for qualitative interviews. Both disclosure and intrusiveness are explored in this paper, and the traditional understanding of these concepts has been reconsidered in order to develop an ethical assessment tool that can speak to the specific context of researching closed groups on social media. While the term “netnography” is often associated with Kozinets’ specific approach, in ExOC, and within this paper, it encompasses non-participatory online ethnographies as well (some of the more participatory approaches are discussed in the key works by Hine (2008), Pink et al. (2016), and Beneito-Montagut (2011)). The non-participatory aspect and the structured approach for identifying observations enable the method to cater to both profound qualitative and quantitative analyses of data outcomes.

Netnography more broadly thus draws upon a range of methods within digital qualitative research. We identify the following four components of netnography as essential in the context of researching closed groups on social media. They are briefly described here from a strictly practical or functional perspective, while the related ethical considerations and implications are explored throughout the paper. The proposed tool will thus mostly be of relevance for studies that adopt broadly similar approaches within their methodologies.

• Gaining access: conducting searches using key words or trends (e.g., hashtags on TikTok or Instagram), identifying, and accessing relevant groups that align with a project’s research questions. With closed groups, in particular, it may be necessary to be granted access by a gatekeeper or administrator either by requesting to follow an account or to join a group or server. It may also be necessary to disclose one’s identity and intentions as a researcher when negotiating entry with the gatekeeper, depending on the affordances of the platform at hand.
• Data scraping: the collection of content from social media platforms or servers using software, code, algorithms, or data requests (i.e., any form of data collection that is asynchronous) or manually through screenshotting tools (i.e., synchronously).
• Observation: presence over time on a range of social media platforms and within multiple groups and messaging threads with synchronous notetaking.
• Qualitative interviews with individuals identified as relevant for the aims of the research, and recruited during the observations by sending friend or message requests and engaging in dialogue to negotiate participation.

Combined, these approaches constitute the central components of netnography, both in the methodology of ExOC and for the purposes of the present paper, which aims to establish novel overall guiding principles when researching the ‘new geography of unsearchable small conversations’ ethically (Boccia Artieri, 2017; Boccia Artieri et al., 2021).

Ethics in the Netnography of Small Groups: Background and Methods

The methodological considerations prior to embarking on ExOC inevitably inspired reflections on some of the long-established principles within sociological study, including intrusiveness and obtaining consent, and their relevance and application in a contemporary digital context. A review of the existing ethical guidelines was undertaken, with a particular focus on the principles for researching small groups online offered by the protocols of the American Sociological Association (ASA), the American Society of Criminology (ASC), the British Sociological Association (BSA), and the Association of Internet Researchers (AoIR). While all of these broadly cover the issues that traditionally arise when discussing harm, privacy, or informed consent, a lack of specific guidelines that pertain directly to online or digital research was noted. An important exception could be found in the BSA’s contributions, which include an annexe dedicated to digital research. Even then, however, the closest the available guidelines come to offering solutions to the ethical dilemmas that may arise in online research, understood to mean effective procedures that should be followed in precise circumstances, is to suggest the undertaking of a flexible, adaptable, and largely ‘dialogic’ approach. Associations including the BSA and the AoIR suggest a series of questions that researchers may want to ask themselves (and potentially discuss with participants) when certain issues arise, as opposed to concrete answers and applicable instructions in situations of evident ethical ambiguity. Ethical questions are in this way embedded into the process of the research; they are tightly interwoven with the aims of the specific research field (such as youth or social movement research, criminology, health etc.). 
However, while the suggested questions may constitute an important starting position, they leave the researcher with an arguably unstructured and far too broad range of choices in terms of how to proceed in practice. As such, there is currently no established practice relating to these ethical considerations, and what their implications may be, within the field of qualitative digital research. While this leaves a gap in the research literature, it may also call for qualitative researchers to embrace a more open science tradition and upload their methods and reflections into online repositories for studies.

We begin our review with the ASA (2018), which limits itself, in point 11.1(a) relating to the uncertainty of whether one should treat data as public or private, to stating that ‘Sociologists are aware that not all information on the internet is considered public, and must include informed consent procedures for research in restricted internet locations’. This continues in 11.1(d): ‘Sociologists do not typically need informed consent when using information from public internet sites. These might include much of the content on the internet, including blogs and social media sites. Whenever internet sites are not public, sociologists will receive permission from either the participants or the site managers before beginning the research’ (2018, p. 13).

Notably, no guidance is provided as to how a site can be identified as being public or private. The ASC (2016) does not refer to the digital at all, and thus does not address the public-private dilemma in contexts such as that of social media. In relation to harm or intrusiveness, it states that researchers ‘should obtain informed consent when the risks of research are greater than the risks of everyday life’ (2016, p. 4). Less vague and of greater help is the BSA’s (2017b) Statement of ethical practice, which offers a section on covert research that addresses issues including the public-private dichotomy and informed consent in general, rather than online specifically. As stated, however, their annexe (2017b) explores such principles more thoroughly, offering a key starting point for the present discussion.

With regards to intrusiveness, the BSA annexe unequivocally states that even the act of inviting a research subject to consider participating in a project could in itself cause harm. Citing the Council of American Survey Research Organisations’ (CASRO) social media guidelines, the annexe states that, in cases of direct interaction, online informed consent should always be obtained. Crucially, they add, however, that it is ‘unclear whether pure observation, where data is obtained without interaction with the participant, would fall under this remit, as no direct reference to this type of research is offered’ (2017b, p. 5). More generally, and most importantly, the document discusses the concept of ‘situational ethics’ in relation to digital sociology (2017b, p. 3). This is expanded on as follows: ‘Each research situation is unique and it will not be possible simply to apply a standard template in order to guarantee ethical practice. Rather, we should consider the situational ethics of digital research, taking very carefully into account the context and the implications of conducting this research rather than referring only to absolutes of right and wrong and to issues explicitly addressed in existing ethical guidelines’ (2017b, p. 11).

This general principle is intended to apply to all stages of the research process, from gaining access to an online group to the decision as to whether seeking the informed consent of its members is necessary or not. The AoIR similarly advocates for ‘guidelines rather than a code of practice’ to ensure the flexibility of online research and its adaptability to diverse contexts and to ‘continually changing technologies’ (2012, p. 5). It calls this a ‘process approach, which emphasizes the importance of addressing and resolving ethical issues as they arise in each stage of the project’ (2012, p. 12) and also describes it as ‘dialogic’, a concept subsequently expanded by the BSA, as noted above.

Indeed, the BSA concludes that ‘seemingly clear guidelines are rendered unstable in practice by the blurred distinction between the public and the private’ (2017, p. 6), and returns to the idea that dialogue between researchers and potential participants remains the key to avoiding ‘mismatches’ in perceptions of harm, intrusiveness, the need for informed consent, or the private or public nature of a particular online space. This is because ‘no official guidance regarding internet research ethics have been adopted at any national or international level’ (AoIR, 2012, p. 2). As noted, efforts have been made to raise awareness as to the questions researchers should be reflecting upon, but no board or organisation has been able to offer specific answers. This evident gap remains, and is rather surprising given that such a change in the research landscape resulting from the rise of information and communication technologies was not only foreseeable but in fact expected: ‘While the internet makes people’s interactions uniquely accessible for researchers and erases boundaries of time and distance, such research raises new issues in research ethics, particularly concerning informed consent and privacy of research subjects, as the borders between public and private spaces are sometimes blurred’ (Eysenbach & Till, 2001, p. 1103).

Studies exploring online group dynamics, ethical considerations, and methodological approaches are prevalent in both qualitative and field journals. While discussions as to the public or private nature of online spaces have been reflected upon, we have not identified studies that directly establish what can be considered a closed or an open group. Further, in studies primarily utilizing interview methods, there is often a lack of clarity regarding how access to online groups for recruitment was obtained. Informed consent for the interviews themselves is standard, but how access was gained to the groups from which participants were recruited is not always reported (see, e.g., Chiluwa, 2024).

In relation to more observational types of studies, Paechter (2013) delves into the ethical dimensions of qualitative research, particularly concerning the open and closed nature of online groups. She discusses her hybrid insider/outsider status and emphasizes the importance of respecting privacy boundaries and obtaining informed consent in online forums. Similarly, in Schulte-Römer and Gesing’s (2023) study, which examines the methodological challenges of event-based ethnography, ethical concerns regarding participant awareness and data collection practices are raised. Additionally, Kurtz et al. (2017) discuss blogs as ethnographic texts and grapple with the ethical implications of collecting personal data from online platforms. Gatson and Zweerink (2004) discuss the complexities of online ethnography, highlighting the challenges of building trust and navigating community dynamics without some form of participatory engagement. They disclose their researcher identity but do not discuss opt-out options for participants.

Several studies argue against observing without consent. Beneito-Montagut (2011) emphasizes the necessity of informed consent in online studies, advocating for transparent research practices despite the public nature of many social networks. Similarly, Costello et al. (2017) advocate for a more engaged, participatory approach in netnography, challenging the notion of least intrusive methods and underscoring the importance of obtaining consent when engaging with online communities. Masullo and Coppola (2023) argue in favour of applying a non-intrusive covert approach at the start of their netnography, before revealing study and researcher information at a later stage when moving on to interview members of the community. Kaufmann and Tzanetakis (2020) address access and informed consent in online studies of criminality and deviance, stressing researcher protection and cautioning against overly participatory approaches, a stance also echoed by Steinmetz (2012), who discusses anonymization post-data collection.

Scholarly attention has, therefore, been directed towards gaining an understanding of the public-private nature of online groups. Many researchers in qualitative studies are in fact moving towards fully disclosing their researcher roles and obtaining informed consent from participants, particularly when dealing with more private groups and when opting for more participatory as opposed to least-intrusive approaches. Independently of their position on this methodological spectrum, none of the studies reviewed have been able to provide specific details on how they addressed these questions and reached their conclusions. Additionally, it is evident that some studies perceive arguably similar groups to be either public or private without offering structured arguments to support these diametrically opposed stances. For example, Pruchniewska (2019) and Wellman (2021) perceived larger Facebook groups as open and Schlette et al. (2022) treated Telegram groups in a similar manner. On the other hand, Sipley (2022) considered Facebook groups on neighbourhood issues with approximately 200 members to be private. Furthermore, Zhu et al. (2022) regarded small WhatsApp groups used by young people as closed. Although the conclusions in these studies seem to align, this also highlights a need for greater transparency and clarity regarding researchers’ approaches to accessing and engaging with online communities, particularly in terms of ethical considerations of the public-private divide.

We conclude that, in the absence of precise guidelines for digital social science studies of group-based and hidden content, and given the difficulties faced by academic colleagues and even their lack of engagement as a result of this absence, the following key principles require the greatest attention and consideration in the context of conducting ethical research.

• The sensitivity or vulnerability of group members and whether the proposed research is intrusive and has the potential to cause harm
• The varying perceptions of and unclear boundaries between public and private spaces online
• Ensuring the anonymity and privacy of data subjects and the confidentiality of data
• Whether and, if so, how informed consent should be obtained
• When and how researcher protection should be organized and secured

The need for an assessment tool to direct contemporary research endeavours that focus on behaviour and interactions within small-group settings on social media platforms and the internet was thus identified.

Assessment of Ethical Criteria

Sensitivity of Group Members and Relevance of Research

One of the most established ethical principles in social science research is the emphasis on the sensitivity and vulnerability of the study population. This principle is universally acknowledged in ethical guidelines; as such, our approach begins here. Existing research considers numerous categories of potential participants to be vulnerable, including but not limited to children, the homeless, members of minority communities, those receiving various forms of medical treatment, or the elderly (e.g., de Groot et al., 2019; see Liamputtong (2007) for a more extensive list and relevant discussions). What makes such groups vulnerable could be their lack of ability ‘to make personal life choices … to maintain independence, and to self-determine’ (Moore & Miller, 1999, p. 1034) perhaps as a result of ‘physiological or psychological factors or status inequalities’ (Silva, 1995, p. 15). In the context of participating in research, this lack of independence means that ‘special safeguards to ensure that their welfare and rights are protected’ are often required (Moore & Miller, 1999, p. 1034). Obtaining informed consent is the primary example of such a safeguard. Individuals involved in the commission of outright illegal or perhaps deviant activities have also been considered vulnerable populations in the literature (Liamputtong, 2007). Notable examples include sex workers (Benoit et al., 2005, 2019) or drug users (Barratt & Maddox, 2016; Renzetti & Lee, 1993), and the notion generally applies to studies in which participation may be ‘incriminating’ and ‘reveal illegal behaviours’ (Dickson-Swift et al., 2008, p. 2).

While the concept remains consistent across all fields within the social sciences, certain nuances become more prominent when studying small, hidden, or closed groups, particularly in the context of researching online hate. As part of the proposed approach, we argue that sharers of hateful memes fall short of the thresholds mentioned above and as such, at least in principle, are neither sensitive nor vulnerable participants. With the exception of children or those under a certain legal age (see below), it is hard to contend that individuals sharing hateful content online are not able to make ‘personal life choices’, or that this particular aspect of their lives and identities is shaped by some form of ‘status inequality’. A line can be drawn here between individuals engaging in deviant behaviour out of choice, and those that are more closely identifiable as potential victims of such behaviour, namely the individuals or groups that are often the very subjects and targets of the hateful behaviour in question. This is undoubtedly the case with the memes and their sharers as investigated in ExOC. While we can thus dismiss the potential vulnerability of most meme-sharers, it is important to acknowledge that other group members within such closed communities could be victimized by the hate they are exposed to. This remained a key consideration when conducting observations and collecting data in the form of interactions that could have included the voices of potentially vulnerable research participants, not relating to the meme-sharers but to the wider membership of the groups. In cases where anyone, including meme-sharers themselves, was assessed as being potentially vulnerable, informed consent was always sought.

ExOC examines hate and its social interactions within such groups. While the content of hateful memes may affect individuals who have been victimized or shamed, the primary point of focus is the hateful behaviour itself. Further, some of the material shared in a hateful manner may include sensitive content. However, it is proposed that the societal relevance of the study justifies observation of this material (Doyle & Buckley, 2017).

Alan Bryman (2016) argues that the social relevance of research is an important consideration when it comes to ethics. He asserts the significance of conducting research that addresses pressing social issues and contributes to a societal understanding of these. However, he simultaneously cautions against prioritizing social relevance at the expense of ethical considerations. We argue, in line with Bryman, for researchers to carefully balance the pursuit of social relevance with ethical principles, ensuring that the benefits of the research outweigh any potential risks or harm to participants. Regarding closed and potentially private online groups, such considerations should thus closely align with the specific field of study. In a general cultural study of memes, there may not necessarily be social relevance for a non-intrusive covert study design like ours. However, in relation to hate-based meme behaviour, we still know very little about the deviance in production and the sharing behaviour among members of smaller groups. Here, and related to the fields of criminology and sociology, the societal relevance would arguably be higher.

Nevertheless, once the balance between the societal value of our research and any potential harm to participants is struck, we take precautions to ensure that any material shared for scientific purposes does not lead to further traumatization of the individuals depicted, who may be vulnerable. This is done by anonymising all data collected and, in cases where other factors in the decision-making process (such as group size or the nature of the topics discussed) led to the research team determining that being unintrusive was not ethically appropriate, the contributions of potentially vulnerable participants would not be collected at all. This is discussed further in the following section on intrusiveness and disclosure.

In relation to sensitivity and vulnerability, another important consideration is the age of the subjects under study. In existing research, the age range of 16–25 is defined as ‘youth’ (Bendit, 2006; MacDonald & Shildrick, 2013). In most cases, research ethics boards and guidelines recommend seeking parental or guardian consent for individuals under a certain age. Sixteen is the threshold in Denmark but a different age, often 18, may be relevant for universities in other countries. Within ExOC, researchers are responsible for assessing the content, form, and discussions within groups to determine whether there is a possibility that individuals under the age of 16 are involved. In cases where data appear to have been shared by individuals likely to be under the age of 16, parental consent is sought. If this cannot be obtained, participants who fall within this vulnerable category, and their individual contributions, are not included in the dataset.

Within ExOC specifically, this has meant actively seeking consent from such individuals, or from parents or guardians where relevant, by sending messages to the posters on the social media platform at hand. This was done in the same way that individuals were contacted more generally for interview recruitment. When consent was either refused or not obtained (e.g., in cases of no response or engagement), the captured interactions were instantly modified to remove the contributions made by those perceived to be under 16 years of age, keeping only the (still anonymised) interactions involving others from the group. The specific instructions of the ethical assessment tool require that informed consent is obtained for all vulnerable research participants. Of course, researchers wishing to utilise the tool to guide their own ethical decision-making in other projects should familiarise themselves with the literature on vulnerability within their specific fields and act accordingly. For example, if one is conducting research within so-called “paedophile hunter” fora online or on sex workers, it could be argued that the levels of vulnerability of potential participants in such spaces would likely be quite different to those of hateful meme-sharers. Similarly, the relevant legal requirements pertaining to age, which, as stated, may vary from one country to the next, should always be strictly adhered to.
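The consent rule described above can be illustrated with a short sketch. It is not the ExOC implementation; the `Contribution` fields and the `filter_thread` function are hypothetical names introduced here only for illustration. The sketch assumes that the researcher has already recorded, for each captured contribution, whether consent is required (e.g., the poster appears to be under the age threshold or is otherwise assessed as vulnerable) and whether it was actually given. Refusal and non-response are treated identically, as in the text.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Contribution:
    """One captured post or message (all field names are hypothetical)."""
    author: str              # anonymised author label, never a real handle
    text: str
    consent_required: bool   # researcher's assessment: likely minor or vulnerable
    consent_given: bool = False  # stays False on refusal or no response


def filter_thread(thread: List[Contribution]) -> List[Contribution]:
    """Drop contributions that need consent but have none; keep the rest."""
    return [c for c in thread if not c.consent_required or c.consent_given]


thread = [
    Contribution("user_a", "first post", consent_required=False),
    Contribution("user_b", "reply", consent_required=True),  # no response received
    Contribution("user_c", "another reply", consent_required=True, consent_given=True),
]

kept = filter_thread(thread)
print([c.author for c in kept])
```

Defaulting `consent_given` to `False` means that a missing record leads to exclusion rather than inclusion, which mirrors the cautious stance taken in the paragraph above.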

Lastly, as touched on, ExOC employs a process of anonymising content before storage, which ensures that all material remains non-personally identifiable. This is facilitated through a programme specifically designed for the observation process, which systematically redacts all personally sensitive material before securely saving the data on university drives. 1 This process is largely automated, but collected screenshots are checked by the research team and can be manually edited before they are saved, ensuring that any personally sensitive data that may have been missed by the programme are anonymised or removed. Tying the above concerns together, particular care is taken when using data shared by youths, including participants below the age of 16 for whom consent has been obtained, who could potentially be identifiable by others either within or outside a closed group as a result of the shared material. The main approach to minimise this risk is simply to refrain from including such material in its original form in outputs or publications. Visual content is thus only used internally for analysis, and textual data including quotes are never published verbatim but are presented descriptively following the approach recommended by Kozinets (2020) for data that are indexed and thus publicly searchable (e.g., interactions on Reddit) (see also Steinmetz (2012)). In any case, most examples from the ExOC dataset originate from non-indexed sources (e.g., Facebook or Discord), meaning that specific quotes will not be searchable on sites such as Google. Again, scholars undertaking research in other areas should reflect on these guidelines and apply them to their own specific field of inquiry.

Intrusiveness and Disclosure

While offline ethnographic methods have a long tradition of allowing research that does not disclose the identity of the investigators or the nature of the project itself, there is increasing evidence that researchers lurking covertly on online communities, understood to mean being present on a site without disclosure or engagement, could be perceived as intruders (Cera, 2023). In one example, the practice ‘resulted in a rippling sense of resentment and betrayal among those who find such things underhanded’ (Eysenbach & Till, 2001, p. 1104), an outcome that some researchers advocating more participatory approaches try to avoid by fully disclosing their names and by seeking informed consent (Boellstorff et al., 2012). However, it has also been proposed that ‘[t]he fluidity of belonging and participation in a chat app creates a situation [where] it is not really possible to inform every group participant about the ongoing observation’ (Barbosa & Milan, 2019, p. 50). Further, ‘the chat space does not lend itself well to traditional practices of researcher disclosure’ (Moon Sehat et al., 2021, p. 28). Relatedly, Sugiura and colleagues conclude that ‘the convention that all research participants should give full and free consent to participating in research [is], in the online context, neither possible nor necessary’ (2017, p. 195). A related, long-established principle in both offline and online ethnographies is in fact the avoidance of intrusiveness, both in terms of respecting the behaviours and (inter)actions of potential research participants without disruption, and because the disruption itself may influence the habitat that the researcher wishes to capture in its natural form (Goode, 2015). Bringing the two together, netnographic methods that aim to limit intrusiveness inevitably lean towards incomplete disclosure, or a necessary form of covertness.

In the work of Costello et al. (2017), non-participatory research is likened to lurking, while participatory research is described as engaged and highly visible, and may include the researcher setting up fora on a dedicated site for participants to contribute to. While Kozinets (2020) argues for a non-participatory approach, he also contends that such methods are not ethnographic unless the researcher is immersed in the culture under study. Importantly, however, he insists on researchers fully disclosing their presence in order to act ethically. Finn and Dillon (2007), on the other hand, argue in favour of a less intrusive approach, whereby researchers observe without making themselves known. In support of the latter position, ‘divulging [a researcher’s] presence would change the dynamics of the conversation and would likely result in further exclusions from the group’ (Moon Sehat et al., 2021, p. 20). Additionally, within criminological and sociological fieldwork, it has been argued that ‘investigators cannot, and probably should not, announce to everyone beforehand that they are willy-nilly engaged in a research project. … Such a disclosure would interrupt the flow of social interaction in a given setting, not to mention throw a roadblock into the path of ongoing investigation’ (Goode, 2015, p. 52, emphasis in original). Doyle and Buckley hypothesise that as long as ‘the researcher only acts as an observer, risk of harm to participants is negligible’, in a context in which ‘covert observation may be required to preserve the authenticity of interactions’ (2017, pp. 111–112). We find this to be especially pertinent in the context of researching the creation and sharing of hateful memes, and reactions of hidden communities to content of this nature.

In line with these considerations, therefore, the approach adopted for ExOC is that neither disclosure nor consent is to be made or sought by default once access to a group is gained, and that each would be determined based on situational factors. These would include the potential vulnerability of research participants (described above), the importance of disclosure versus the risk of intrusiveness, and the perceived privacy of small or closed groups (discussed in the next section). The decision flowchart in Figure 1 provides researchers with specific situational guidance relating to these ethical principles. The tool thus strikes a balance between the above views on intrusiveness and on the difficulties of gaining consent from everyone, on one hand, and the importance of maintaining the anonymity and safety of both participants and the research team, on the other. In practice, this entails protecting the identities of the netnographers, which may require the creation of “neutral” accounts with which to unobtrusively lurk. While this may explicitly violate the terms of service of some (e.g., Facebook) but not all (e.g., Snapchat) platforms, without an account of this nature many of the desired data would be otherwise unobtainable. Ethical guidelines within criminology and sociology ‘prohibit or discourage dissimulation’ (Goode, 2015, p. 52), concealment, or pretence relating to one’s character or intentions, but in cases where the benefits of the research outweigh the potential risks or the detriment that may be caused to participants, such concealment can be justified. Examples of research that relies on such considerations include Goffman (1961) failing to inform staff or inmates within an institution that he was conducting research, or Leo’s (2008) covert exploration of police interrogation techniques. ‘Without such a ruse, certain areas of potentially rewarding inquiry are forever closed off’ (Goode, 2015, p. 52).
Reflections within this sphere are offered in the previous section, where it was considered that balancing the societal value (Bryman) of research with the potential risk of harm faced by participants can lead to the decision that the former could outweigh the latter. The same can be said in a context where situational factors lead researchers to avoid disclosing their presence in order to collect otherwise unobtainable data.

Further, the specific methodology of ExOC recommends creating such neutral profiles only where this is necessary, that is, if the platforms in question require that an account is registered in order to log in and access certain features or content (see below). The profiles we created have pseudonyms and the researchers avoid active engagement in the interactions among group members when conducting observations of hateful behaviour. A researcher’s presence will only be revealed to individuals or to the wider group in certain cases, such as recruitment for interviews, where informed consent would naturally be sought. This additionally mitigates the risk of exploiting participants, ensuring that researcher presence is unobtrusive by adopting a non-participatory, lurking role. An additional, important reason for creating such neutral profiles is researcher protection. Even in instances when observations are limited to lurking without attempting to recruit participants – at which point the researcher role would be revealed either to the group or to individual posters – the possibility remains that group members sharing hateful content could identify and target colleagues within the research team, whose names and affiliations are searchable on Google. This may be unlikely where groups or chats contain thousands of members, but is far from unrealistic in cases where groups contain much smaller numbers, and when the arrival of new members becomes noticeable to those who keep a close eye on such things. A blank profile lacking affiliation and with a generic, unidentifiable pseudonym greatly reduces the risks of this happening.

Perceived Privacy and the Distinction Between Public and Private

Ethics boards within sociology and criminology lack precise advice as to how to assess when a group is public or private, and this is reflected in the studies included in our review above. Sugiura et al. have described the available guidance as ‘confusing and not fit for purpose’ (2017, p. 186), stating that ‘privacy in the context of online research is a problematic issue’ (2017, p. 194). Other scholars have followed the limited contribution of the ethics boards in noting that the context or the nature of a site or group could be indicators of whether researchers should consider said space as public or private. For instance, Åsa advocates an ‘increased focus on the type of relations that predominate a particular context’ (2010, p. 23), while Whiteman similarly suggests that the ‘research settings’ may contain ‘a distinction between explicit/implicit markers of privacy’ (2012, p. 47). We contend that these suggestions, as with the ‘dialogic’ approach, are unable to concretely respond to the identified gap.

At the University of Copenhagen (UCPH), home of the ExOC project, an established principle determines that a group ceases to be private once it exceeds 200 in terms of membership size. 2 This originates from Dunbar’s (1993) research calculating the size of an average person’s network as 150, understood to mean the number of people someone knows and is capable of having a stable relationship with. This number is based on the cognitive capacity and limit of the human brain, and was reached through a correlation between brain size and that of the average social group. Although validated to an extent in the context of social networks (Gonçalves et al., 2011), the concept has also been disputed. Testing by Lindenfors et al. (2021), for example, yielded vastly divergent ranges of numbers, leading the authors to conclude that limits to human group sizes cannot be calculated using this method. Similarly, anthropologists de Ruiter, Weston, and Lyon suggested that ‘kinship terminologies suggest that although neocortices are undoubtedly crucial to human behaviour, they cannot be given such primacy in explaining complex group composition, formation, or management’ (2011, p. 557). The key for the development of the assessment tool was thus to strike a balance between the existing literature, including Dunbar’s number, and our new understanding of the public versus private nature of the groups studied by ExOC.

Once concerns relating to potential participant vulnerability are addressed, the tool treats all groups with a size of 200 members and above as open and public (see also Burkell et al. (2014)). This is important because researchers are not required to obtain informed consent from those sharing data and participating in interactions in such groups, even though those interactions could potentially be private in nature. Based on the critical discussion of Dunbar’s study, we consider that choosing 150 or 200 as a fixed benchmark would be insufficient, and that additional assessments would be necessary to respond to the many barriers that researchers face when conducting digital research. The barriers themselves, outlined below, ultimately dictated the demarcation between public and private within the ExOC framework. This approach combines the Dunbar perspective on open groups with the key ethical consideration that the mere availability of data, and participants’ willingness to share them publicly, does not automatically grant researchers the right to record and use them freely (Eysenbach & Till, 2001).

Public and No Registration Required

If a site is public and does not require registration (e.g., Reddit), understood to mean that content is accessible to everyone even without an account, then according to Wilkinson and Thelwall (2011) researchers do not need to ask permission when collecting data for research purposes. User rights and site policies should, however, still be consulted. Metcalf and Crawford, on the other hand, contend that the assumption that ‘publicly available data poses minimal risk to human subjects’ (2016, p. 6) could in fact be harmful. In this context, it is useful from an ethical perspective to differentiate between the types of data being researched. Some online content could ‘be perceived as a form of cultural production’ (Bassett & O’Riordan, 2002, p. 235), thus further justifying its collection without asking for permission. Based on these considerations, we state that data produced within public forums that do not require registration can be considered to be publicly available. This is the case regardless of the number of participants, consistent with University of Copenhagen guidelines.

Potentially Public but Registration Required

For public sites that require registration or the creation of an account (e.g., Snapchat), once an account has been created and the researcher has logged in, it is important to consider whether the specific group one is attempting to access is private or locked. Examples of the latter include private Facebook groups or Discord servers, or conversations where data are available only to “friends” or to connections approved by an administrator. In such cases, it is less justifiable to treat the data within as public if the group consists of fewer than 200 members. It has been argued (BSA, 2016) that researchers should first contact the administrator of the page or group, if any, and share their research affiliation and the purpose of the study. One way of adhering to a site’s terms of service could include creating a visible post communicating the purpose of the research, describing the data collection and anonymisation processes, and explaining the methods and outlets for dissemination. Providing details of this nature can ‘establish credibility and build trust, though researchers should always be aware that some communities may still reject researcher involvement’ (Schuman et al., 2021, p. 384). Attempts to do this, however, have yielded responses described as ‘antagonistic’ (Sugiura et al., 2017, p. 190), leading to criticism of Kozinets’ position of always revealing one’s identity as ‘far too rigorous’ (Langer & Beckman, 2005, p. 195). It may be the case, therefore, that the only ways for the netnographer to access such information are (1) through known contacts that can act as gatekeepers, (2) by creating neutral profiles with which one can lurk, as above, and being granted access through these, or (3) by obtaining informed consent.

In this context, the assessment tool distinguishes between generic entry criteria that groups may utilise to ensure that individuals joining them have a relevance or connection of sorts to the group and, on the other hand, criteria that are merely designed to weed out spam accounts or bots. While the former can be seen as an attempt to lock the group to protect its privacy on an otherwise public forum, the latter is arguably less concerned with who specifically joins the group. As such, with regards to bot-style entry questions, it is proposed that methods (1) and (2) are adopted, depending on contacts and access, and that an assessment of the potentially private nature of the interactions taking place in these open and public fora is made.

In such cases, once access is gained, we advocate that the presence of the research team not be disclosed, regardless of the content, within groups containing 50 members or more. This approach is justified by the nature of the group’s entry criteria, which focus on preventing bots from entering rather than on actively monitoring and restricting who is allowed to access its members and content. In groups with fewer than 50 members, additional care relating to disclosure and obtaining informed consent is taken in line with the procedures discussed elsewhere (see, e.g., Sugiura et al., 2017). In general, the smaller the group and the more sensitive the research team perceives the nature of the content to be, the more it will be ethically necessary to treat the groups as private despite their openly public settings. Conversely, when the entry question is designed to ensure a connection between users requesting to join and the existing group members, that is, the assessment determines that there exists a certain relevance in terms of who is allowed into the group, the team will respect this wish for privacy by making its presence known to administrators and group members. This equates to identifying the given group as definitively private. Option (3), relating to the necessity of obtaining informed consent, will therefore apply.
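The entry-criteria step described in the two preceding paragraphs can be expressed as a short decision sketch. This is one possible reading of the prose rather than a transcription of Figure 1, which remains the authoritative version of the tool. It assumes that the earlier steps (vulnerability considerations, registration requirements, and the 200-member benchmark) have already been applied, and it does not model the perceived sensitivity of the content, which the text treats as a further situational factor. The names `EntryCriteria`, `Outcome`, and `assess_locked_group` are hypothetical.

```python
from enum import Enum


class EntryCriteria(Enum):
    BOT_SCREENING = "bot_screening"  # questions that only weed out spam or bots
    RELEVANCE = "relevance"          # questions that check a connection to the group


class Outcome(Enum):
    OBSERVE_WITHOUT_DISCLOSURE = "observe without disclosing researcher presence"
    ADDITIONAL_CARE = "additional care on disclosure and informed consent"
    DISCLOSE_AND_SEEK_CONSENT = "treat as private: disclose and seek informed consent"


# Threshold taken from the text: non-disclosure applies at 50 members or more.
NON_DISCLOSURE_MIN_MEMBERS = 50


def assess_locked_group(entry_criteria, member_count):
    """Return the suggested handling for a group with an entry question."""
    if member_count < 0:
        raise ValueError("member_count must be non-negative")
    if entry_criteria is EntryCriteria.RELEVANCE:
        # Relevance-based entry signals a wish for privacy: option (3) applies.
        return Outcome.DISCLOSE_AND_SEEK_CONSENT
    if member_count >= NON_DISCLOSURE_MIN_MEMBERS:
        return Outcome.OBSERVE_WITHOUT_DISCLOSURE
    return Outcome.ADDITIONAL_CARE


print(assess_locked_group(EntryCriteria.BOT_SCREENING, 120).name)
print(assess_locked_group(EntryCriteria.BOT_SCREENING, 30).name)
print(assess_locked_group(EntryCriteria.RELEVANCE, 500).name)
```

In this reading, relevance-based entry criteria take precedence over group size, since the text identifies such groups as definitively private. A fuller implementation would need to incorporate the case-by-case assessment of content sensitivity that the tool requires for smaller groups.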

Discussion

A significant gap persists in the ethical guidelines published by official bodies and ethics councils in the social sciences due to their inability or unwillingness to offer specific guidance on the treatment of data and participants within the realm of social media research. Moreover, a comprehensive, ethical discussion concerning group-based and hidden social media behaviour remains unaddressed. Our examination of existing ethical approaches pertaining to qualitative digital methods indicates that the field has not ‘advance[d] beyond describing ethical dilemmas encountered by researchers’ (Doyle & Buckley, 2017, p. 114), and is currently lacking a structured approach that can offer a concrete codification (Kozinets, 2020) of how to proceed in the important context of hateful behaviour on social media. Indeed, scholars have long called for ethical bodies to intervene, inviting the research community to ‘consider the situational, contextual and temporal aspects of [online research]’ during the wait for official policies that can address ‘the complexity and diversity of internet research spaces’ (Warrell & Jacobsen, 2014, p. 23).

To fill this gap, we propose a dedicated assessment tool that draws upon established ethical frameworks within sociology, criminology, and communication studies. Our proposed approach caters to the study of behaviour within smaller or hard-to-reach online groups. While the call for official guidance remains unanswered, the present tool intends to offer a specific and much-needed solution to the dilemma of determining whether an online space should be considered public or private, and the ethical implications of such a decision. The tool is presented visually (Doyle & Buckley, 2017) in the ethical decision flow chart in Figure 1. This offers a previously unavailable step-by-step guide with concrete and applicable solutions to the evergreen ethical dilemmas surrounding researcher intrusiveness, the public-private debate, and obtaining consent.

Our overarching methodological stance thus allows and embraces the adoption of multiple approaches within a structured framework that can be applied in a situated manner. This situated but structured approach implies that the researcher’s actions are context-sensitive, and that each determination is contingent upon the specific platform, its participants, the group’s size, and, where relevant, an in-depth, case-by-case assessment of the potentially private nature of the content of the group’s interactions, even when the data observed are publicly available. This situated approach reflects our commitment to a nuanced and adaptable ethical framework that remains responsive to the complexities and variations encountered within the landscape of online group behaviour. Of primary importance in our assessment tool is the use of neutral profiles, which can guarantee minimal intrusiveness and contemporaneous researcher protection, before the assessment of potential privacy determines whether there should be a requirement to reveal the researcher’s identity and seek informed consent from group members.

This approach remains consistent with broader ethical practices within qualitative research by recognizing that researchers may encounter unforeseen situations throughout their research journey that demand thoughtful ethical decision-making. It is imperative to acknowledge that ethical choices will unfold during the research process itself, extending beyond the initial project planning phase. Under this perspective, we view all ethical guidelines as valuable principles to aim for and as criteria that can and should guide research, rather than rigid rules that must be blindly followed in all circumstances, even when the researcher’s control is limited by the unpredictable nature of the observed world, whether physical or online.

Conducting qualitative research that is ethical in its strict adherence to the demands of institutional review boards or ethical committees, and simultaneously able to explore and reveal new data about society and interactions, has long been a balancing act for researchers in the fields of sociology, criminology, and related social sciences. There are countless examples of studies, both classic and recent, that may, technically speaking, be considered unethical (Bourgois & Schonberg, 2009; Goffman, 2014; Humphreys, 1970; Venkatesh, 2008), but whose contributions remain noteworthy. The reality is that some qualitative research, and especially research of an ethnographic nature requiring observations of behaviour or human interaction, ‘can only be conducted by ignoring informed consent’ (Goode, 2015, p. 52, emphasis in original). Informed consent, of course, is just one example of the standard requirements of ethical research that may, in fact, warrant additional reflection, rather than blind adherence at all times. Such research thus requires that considerations and decisions regarding ethics be continuously evaluated throughout the research process itself in the ad-hoc, structured, and situated manner described and presented. While this balancing act has long been accepted in the context of “real-world” research within criminology and sociology, this practical awareness has not been seamlessly transferred or applied to researching online behaviour.

Our situated, structured approach strikes a balance between making ethics solely a matter of an individual researcher’s personal ethical standards and relying too much on formal pre-study assessments by Institutional Review Boards. In this way, our recommended approach establishes a foundational pre-study standard while also providing a structured framework for determining when and how to apply more stringent ethical measures. Further, in the development of the assessment tool, our aim has also been to assist other colleagues who want to work in the field of digital social science. As identified by Nind et al. (2013), netnography is a field where any form of innovation in the chosen methods, especially in light of the insufficient guidance from ethical bodies and boards, places all risk on the researcher. This in itself requires a rebalancing of the expectations and duties of researchers and learned organisations, and evidences the need for developing clear, structured, and practically usable forms of guidance to rely and reflect on. It is important to emphasize that our framework for assessments cannot be based solely on selectively choosing arguments in order to achieve ethical sufficiency. Therefore, our ethical decision flowchart should not be applied to individual steps in isolation, but should be considered holistically from initial vulnerability considerations to the final decision concerning obtaining informed consent.

Footnotes

Acknowledgements

We extend our gratitude to colleagues at the Department of Sociology, University of Copenhagen, for their constructive feedback on this paper. Special thanks to the entire team at the Microsociology of Online Deviance Lab, which includes PhD students Jonatan Mizrahi-Werner and Kristoffer Magnus Bjerre Aagesen, as well as the group of student assistants who contributed during the developmental stages of this project. Additionally, we would like to express our appreciation to the researcher group at DISTANT, Social Data Science, University of Copenhagen, for their insightful comments and suggestions during the initial stages of our work. We also acknowledge the feedback of our colleagues at EuroCrim 2023 in Florence, Italy. Last but not least, we thank the editor and the reviewers, whose suggestions for improvement significantly contributed to the final version of this manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Independent Research Fund Denmark (Danmarks Frie Forskningsfond or DFF) with grant number 10.46540/2033-00098B.

Notes

Appendix

Further guidance provided by UCPH concerning the figure of 200.

I want to follow an online forum with +200 members, but it requires access?

• Forums that require admission or approval of participants must generally be regarded as closed. However, it must be based on a specific assessment where you can take into consideration:
  ◦ The purpose of the forum,
  ◦ The participants,
  ◦ The data, views etc. that are shared and
  ◦ Whether the participants have a reasonable expectation of confidentiality in the forum.
• The administration of the forum can also be involved in the specific assessment:
  ◦ Who administrates it,
  ◦ What the membership requirements are,
  ◦ Whether the administrator can exclude persons, erase data etc.
• The Terms of Use for the platform may also be of importance:
  ◦ How much access they have to forums,
  ◦ The extent to which they control content and dialogue, and
  ◦ Whether they can erase content and members or close the forum.
• For example, in a forum for persons with a specific diagnosis or a special interest, there may be an expectation of a confidential space even though there are 200 or more participants.