Abstract

Background

Electronic health systems contain large amounts of unstructured data (UD) which are often unanalyzed due to the time and costs involved. Unanalyzed data creates missed opportunities to improve health outcomes. Natural language processing (NLP) is the foundation of generative artificial intelligence (GAI), which is the basis for large language models, such as ChatGPT. NLP and GAI are machine learning methods that analyze large amounts of data in a short time at minimal cost. The ability of NLP to conduct qualitative analyses is increasing, yet the results can lack context and nuance in their findings, requiring human intervention.

Methods

Our study compared outcomes, time, and costs of a previously published qualitative study. Our approach partnered an NLP model and a qualitative researcher (NLP+). UD from behavioral health patients were analyzed using NLP and a Latent Dirichlet allocation to identify the topics using probability of word coherence scores. The topics were then analyzed by a qualitative researcher, translated into themes, and compared with the original findings.

Results

The NLP + method results aligned with the original, qualitative derived themes. Our model also identified two additional themes which were not originally detected. The NLP + method required 6 hours of labor, 3 minutes for transcription, and a transcription cost of $1.17. The original, qualitative researcher only method required more than 36 hours ($2,250) of time and $1,100 for transcription.

Conclusions

While natural language processing analyzes voluminous amounts of data in seconds, context and nuance in human language are regularly missed. Combining a qualitative researcher with NLP + could be deployed in many settings, reducing time and costs, and improving context. Until large language models are more prevalent, a human interaction can help translate the patient experience by contextualizing data rich in social determinant indicators which may otherwise go unanalyzed.

Background

Machine learning models trained on large amounts of data are the basis for generative artificial intelligence (GAI) which include large language models (LLMs), such as ChatGPT (Fayyad, 2023). In the US over the past year, ChatGPT and similar LLMs have added hundreds of millions of users more rapidly than any emerging technology in the past several decades. The methods behind LLMs are not publicly known, including the extent and use of human intervention and interaction, though human interaction is known to be present (Fayyad, 2023). LLMs are often augmented with human interaction because in many cases, the human aspect improves interpretation, contextualization, and increases translation of findings to the human world. LLMs are increasingly used in the medical and biotechnology fields for drug discovery and development, understanding gene expression, and identifying clinical diseases.

Natural language processing (NLP) is a branch of artificial intelligence which is the basis for LLMs (Abram et al., 2020; Clarke et al., 2020; Natural Language Processing, 2020). Large or small, deficits in NLP models are enhanced and made more relevant when human involvement is incorporated. Qualitative researchers are an ideal partner for NLP models, as the researchers are trained in the interpretive methods of UD, can provide the context required for the NLP to best perform, and NLP methods offer the benefits of speed and volume to the researcher. The overlap between NLP and qualitative research is not an area in which there is a substantial amount of peer reviewed, published research. A PubMed search in August 2023 identified 46 articles studying qualitative research and NLP methods together, a number reduced to 14 when including the comparison in healthcare, and 13 when including medical care.

LLMs are currently underutilized in the analysis and interpretation of unstructured data (UD). UD in healthcare records are collected either through narrative input or the use of open ended questions (Sonntag & Profitlich, 2019). UD often include information relevant to the health and wellbeing of patients which is not otherwise recorded. For example, a new patient history collects data pertaining to multiple aspects of a patient’s current health and often includes a biopsychosocial assessment which covers social and family history, behavioral and sexual health, travel history, housing, employment, home safety, interpersonal violence, and similar fields (Zhang et al., 2020). While these narratives are often transcribed and included in a medical record, their analyses and use may be limited.

Patient communications, such as a phone call or office visit, are often related to a medical problem; however, the communication may also include issues pertaining to housing, transportation, employment, which negatively impact their health outcomes from a social determinant perspective (Patterson et al., 2017). If these interactions are recorded as UD which is not analyzed, over time, these recurrent losses of information accumulate and can delay preventative measures, diagnosis, and treatment (Michel et al., 2014). In the healthcare space, behavioral health providers generate the most UD (Segal et al., 2022). Developing a method to analyze UD quickly and efficiently in healthcare is important for patient outcomes. While LLMs easily and quickly analyze UD, they lack the context of understanding, and may be boosted by human intervention and interaction (Sarker et al., 2019).

We tested a new method of analytics, by partnering a qualitative researcher with an NLP model and measured the differences with a project which used a qualitative researcher only. To test the method, we replicated a qualitative study on patients seeking behavioral health care (Abram & White, 2021). In the original study, a qualitative researcher performed all data collection, transcription, analyses, and interpretation. Determining the benefits of a mixed method approach could be instrumental in increasing the knowledge of behavioral health patients by analyzing unstructured data.

Methods

The institutional review boards determined this project was not human subjects research based on the use of anonymous data. Python 3.8.8 with Spyder 5.0.5 as the integrated development environment (IDE) was the programming tool (Python Programming Language, 2021; Spyder, 2021). Interview data were imported into Python from Microsoft Word and joined as a single file. Previously transcribed data from eight individuals in substance use recovery were analyzed. The qualitative methods and outcomes of the original study are reported elsewhere (Abram & White, 2021).

Data preprocessing used standard NLP techniques (Le Glaz et al., 2021). Data preprocessing standardizes and optimizes words for thematic interpretation. The following methods were used: lower casing of all words for standardization, word tokenization to counting frequencies (each word is a considered a unit of data), removal of all punctuation marks, removal of stop words (words which do not contribute meaning for themes, for instance, “a”, “the”, “huh”), and numeric digits were converted into words. The final step in pre-processing was word stemming. This is a process by which words are reduced to their root so that themes may be identified, for example the words “nurse”, “nursing”, and “nurses” become “nurs”. Stemming provides a more comprehensive topic identification, compared to singular or two-word sets (“ngrams”) (Le Glaz et al., 2021). After pre-processing, the data from all interviews created the corpus, which is the body of all tokens for analysis.

For analyses, latent Dirichlet allocation (LDA) was used as the statistical model. LDA is a probabilistic, unsupervised machine learning model often used in the analyses of text data (Blei et al., 2003). Briefly, LDA considers each token to be a finite number over some number of unknown topics. The topic probabilities represent the potential occurrence of the topic from the entire corpus. Each topic includes the ten words with the highest probability of use in that topic. These scores indicate the importance of each word for the specific theme and are considered the probability of importance of that word. Coherence scores, measured as coherence values, determine the number of topics and their relative fit into the model based on their closeness to one another. Coherence measures how closely related words are in the distribution and assigns them to a theme. Coherence values determine the optimal number of themes for an analysis and range from 0 - 1. The closer the themes, the higher the coherence score (Molik et al., 2021).

A qualitative researcher, not involved with nor with knowledge of the original study, analyzed and interpreted the topics into themes. The NLP + themes and the original study findings were compared by the researcher and the original study lead author separately.

Results

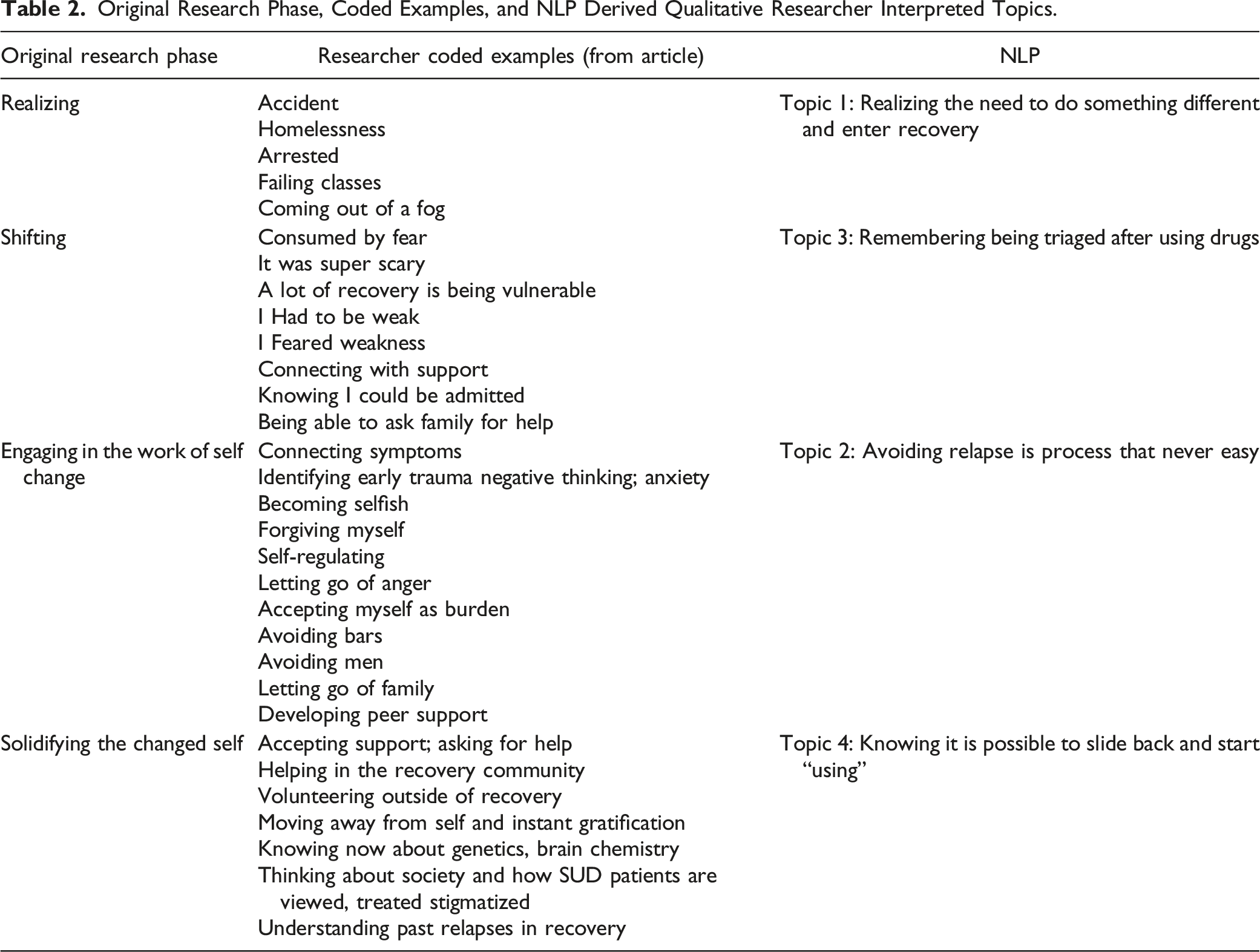

The LDA modeling technique selected an eight themed model as superior (coherence value = .81) presented in Figure 1. This visualization shows the distance between topics and words associated with each. Since this project was to compare the NLP approach and the original findings, and not conduct an associative analysis, we selected the top four themes from the output (coherence value = .78) to match the number of themes identified in the original study. Table 1 shows the NLP identified topics with the coherence scores, which is the attributable proportion of importance, for each word stem within each topic and the themes derived by the qualitative researcher. Table 2 shows the NLP derived themes side by side with the qualitative researcher derived themes from the original study. Intertopic (multidimensional scaling) distance map and topic relevance. NLP Identified Topics With Coherence Scores With Qualitative Researcher Interpretation. Original Research Phase, Coded Examples, and NLP Derived Qualitative Researcher Interpreted Topics.

The researcher interpreted findings derived from the NLP output are closely related to the original findings and interpretation (Abram & White, 2021). A time and cost comparisons between the two projects are as follows: the NLP assisted project required 2 hours of coding, generated themes within 60 seconds, and the qualitative research dedicated almost 4 hours to interpreting the themes. Based on the data file sizes of the transcripts, the time for automated transcription from voice to text would have taken 3 minutes and 15 seconds. The total time of the NLP assisted project was 6 hours and 5 minutes. The costs of automated transcription using Amazon Web Services would have been $1.17.

The original project, completed solely by a qualitative researcher, required a total of 36 hours, including 12 hours for data collection and 24 hours for analysis and interpretation. This does not include travel time to meet with participants, setup time, and debriefs. Transcription costs were ∼$1,100 and completed by a transcription service.

Discussion

There are significant amounts of unstructured data in the world which go unanalyzed. In healthcare, the analyses of UD could greatly improve patient health outcomes as well as reduce the cost of care (Kreimeyer et al., 2017). While many different NLP methods are used in healthcare and research settings, they are often narrowly focused to address a single clinical condition or aspect of patient care as medical care has highly specialized vocabulary and complicated semantics, where context is critically important (Gérardin et al., 2022). NLP augmented with a qualitative researcher could overcome these limitations by providing the necessary context and content understanding of a human with the speed and complexity of analysis provided by a computer, enhancing the original interpretations. Unlike commercially available LLMs, like ChatGPT, tailored NLP methods could be used without providing sensitive or confidential information to a third party. LLMs use the data input to improve their interpretations, therefore, any information provided to ChatGPT, becomes part of the corpus. This corpus would then include all the information provided and could introduce security or HIPAA violations. Another benefit of our method is that expanding a locally operated NLP model increases the amount of data which could be analyzed, reduce the time required for analyses, and save financial resources through avoiding high-cost human or proprietary software transcription. Additionally, incorporating or connecting automated transcription services to the model or with an institution and not offsite through a contractor reduces the potential for a data breach.

This project demonstrates that an NLP + qualitative researcher method can analyze UD and generate similar findings to those derived by a qualitative researcher alone. Another important aspect of this project was the resource savings, including ∼36 hours of researcher time ($2,250) and $1,100 for transcription, resulting in a $3,350 total cost savings. For projects with more data, these savings would increase.

NLP models perform better with more data, so while our findings were similar, with more data, it is probable that we would have had a stronger connection between the findings of our replication study with the original. There are limitations with our study, specifically that the sample size was eight patient interviews, however, with NLP, sample size is measured differently as it is enhanced by the volume of information provided to create a model. In this project, we used interviews with people in care who were participating in a research study. Ideally, treatment notes or other unstructured data would be used, but as a use case or replication study, we demonstrated that our findings align with previous peer reviewed research.

Our future work will obtain unstructured medical record data and use NLP to estimate the probabilities of potential diagnoses. We will then compare the NLP findings with the medical record, and an independent healthcare provider who will complete an assessment. Similar work in health care and clinical research is currently underway (Sarker et al., 2019). As with all methods of data interpretation, NLP is limited in contextual understanding and requires copious amounts of data to provide more specific context, meaning that a diagnosis using NLP alone could be missed. A healthcare provider would enable us to train the model by identifying areas of weakness and better interpreting its findings.

Conclusions

Generative AI and large language models are becoming the norm for analyzing large, complex data. As these tools require human intervention and interaction, qualitative researchers are natural partners to maximize context. The benefit to qualitative researchers is the significant cost and time savings as well as the be on the cutting edge of emerging technology which is revolutionizing many fields.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.