Abstract

Due to the increasing popularity of online qualitative interviewing methods, we provide a systematically organized evaluation of their advantages and disadvantages in comparison to traditional in-person interviews. In particular, we describe how individual interviews, dyadic interviews, and focus groups operate in both face-to-face and videoconferencing modes. This produces five different areas for comparison: logistics and budget, ethics, recruitment, research design, and interviewing and moderating. We end each section with a set of recommendations, and conclude with directions for future research in online interviewing.

Keywords

Introduction

Online research methods, also referred to as virtual methods (Hine, 2005; Joinson, 2005), internet research methods (Van Selm & Jankowski, 2006) or internet methodologies (Mann & Stewart, 2000), have been transforming qualitative research processes from the late 1980s onwards. Now, the COVID pandemic has forced many researchers to confront the issues involved in online interviewing within an unusually short time frame (e.g., Khan & MacEachen, 2022; Pocock et al., 2021). We believe that online interviewing will not be just a temporary necessity but might become widely available for a variety of purposes. This means that we will increasingly consider the traditional use of in-person interviews not so much as a “gold standard” but rather as a default option that has now been called into question. Consequently, our goal is to provide a systematic comparison of the practical considerations involved in choosing between in-person interview formats and their online equivalents. In doing so, we will primarily focus on the decisions that researchers need to make in undertaking online interviews, rather than providing a detailed review of the literature. (For such reviews, see Heiselberg & Stępinska, 2022; Keen et al., 2022; Khan & MacEachen, 2022; and Pocock et al., 2021.)

Pioneering authors in computer-mediated communication (CMC) like Annette Markham (1998), Claire O’ and Madge (2001, 2003), Mann and Stewart (2000), Nancy Baym (2000), and Christine Hine (2000) wrote extensively about the implications of collecting online qualitative data. Despite the ongoing efforts of qualitative researchers from various scientific fields to advance the use of the internet (e.g., Abrams et al., 2015; Archibald et al., 2019; Cater, 2011; Chen & Neo, 2019; Deakin & Wakefield, 2013; Lobe, 2017; Morgan & Lobe, 2011; Tuttas, 2015), CMC has not been extensively used for qualitative data collection.

The reasons for this slow process of adoption include the classic criticism of CMC: that it lacks the social context cues that play a crucial role in successful qualitative data collection. Social context cues involve aspects of the physical environment and nonverbal behaviors such as nodding approval or frowning (Sproull & Kiesler, 1986). Reduced social context information presumably makes the research setting impersonal and anonymous (Sproull & Kiesler, 1986), and the lack of visual cues in CMC weakens an online interaction relative to the richness of real-time, face-to-face interaction (Joinson, 2005: 22). Authors like Joinson (1999; 2001; Joinson & Paine, 2012), Rheingold (1993) and Walther (1995; 2013; Walther & Parks, 2002) have contributed experimental evidence that rejects this criticism and the view that the absence of nonverbal and visual cues restricts online interaction’s capability to exchange individuating information. For example, Walther’s study on relational communication (1995) provided some surprising results, showing higher immediacy/affection for CMC than for face-to-face communication.

The current pandemic has considerably changed the level of interest in online qualitative data collection. With social distancing and other physical restrictions in place in all corners of the world, it has been almost impossible to perform any kind of in-person interviewing. From March 2020 onwards, researchers have had to modify their in-person data collection plans in ways that accommodate online methods. The move to online qualitative data collection has accelerated, not only among those who are particularly interested in developing new methodological options, but also among researchers who deal with everyday, practical research problems. For experienced qualitative interviewers and beginners alike, online interviewing often presents a new set of challenges.

In the present article, we will offer an overview of three types of interviewing (individual interviews, dyadic interviews, and focus groups) for both in-person and video-based formats. Our comparisons are grounded in both the literature and the extensive personal experience that we have gained from collecting online qualitative data on a regular basis since 2005. We will thus emphasize the practical issues involved in choosing whether to collect data through in-person or online interviews. Note that we do not discuss data analysis issues because there are few differences between face-to-face and digital modes in how the data are analyzed, although differences in how they are collected may well influence what is available for analysis.

What Are Online Research Methods?

Simply put, online research methods facilitate “traditional” methods with the use of infrastructure provided by the internet and related digital technologies (Chen & Hinton, 1999: 2). There have already been wide-ranging modifications of traditional in-person interviews and focus groups so that they fit into online environments (Lobe, 2008), such as social media sites, emails, forums, bulletin boards, and more.

Video-based formats in CMC refer to applications (e.g., FaceTime, Facebook Video Chat, Skype) and platforms (e.g., Zoom, GoToMeeting, Webex, Adobe) that support full-motion video imaging with real-time audio. These formats can be demanding because participants need to have digital devices with a functional camera and microphone. Currently, desktop computers, laptops, tablets, smartphones and similar devices have these functionalities built in. Further, participants need to have at least some digital competence to be able to participate in the discussion. Tuttas (2015) and Lobe and Morgan (2021) provide extensive discussions of the features and criteria upon which to choose the most suitable videoconferencing application and platform in a given research situation. Video-based formats are the closest to in-person interaction, so despite taking place online, they still call for recording and transcription.

Three Types of Interviews

Individual Interviews

Online individual interviews aim to capture the spontaneity of traditional in-person interviews (Chen & Hinton, 1999), and thus are essentially an application of traditional interviews to the CMC environment. Like traditional in-depth interviewing, which Neuman (1994: 246) defined as “a short term secondary social interaction between two strangers with the explicit purpose of one person obtaining specific information from the other,” online interviewing seeks to establish digitally-mediated interaction to enter into the other person’s perspectives, which are “meaningful, knowledgeable and able to be made explicit” (Patton, 1990: 278). Individual interviewing has the characteristics of a “conversation with a purpose” (Burgess, 1984: 102).

Dyadic Interviews

Dyadic, or two-person, interviews lie on the continuum between individual interviews and focus groups. A 2013 article (Morgan et al., 2013) was one of the first to highlight the pros and cons of this data collection design by discussing three studies that used this method and how the method may have influenced results. Family research has for decades routinely used dyadic interviews to pair people with pre-existing relationships, such as married couples (Allan, 1980; Arskey, 1996; Eisikovits & Koren, 2010; Seale et al., 2008). Dyadic interviews can provide the “sharing and comparing” advantages of focus groups over individual interviews, while minimizing many of the difficulties associated with focus groups (such as the recruitment of 5–8 people for a specific day/time). Participants can engage with each other with the assistance of the moderator, building on shared experiences, while also revealing key differences in experiences. Data generated by this sharing and comparing process are especially useful for understanding how contextual factors influence thoughts, behavior and decision making. Dyadic interviews provide the depth and detail of individual interviews while also providing the interaction present in focus groups.

Focus Groups

Online focus groups are, like other forms of online interviewing, intended to capture the essence of in-person focus groups. We can define focus groups as a “research technique that collects data through group interaction on a topic determined by the researcher” (Morgan, 1997: 6). The term “focus” refers to the fact that a moderator intervenes to shape the discussion using a research-determined strategy. The key feature of focus group interviews is the explicit use of group interaction to produce data and insights, through the process of “sharing and comparing” mentioned above. The source of data is not just the answers to the researcher’s questions but also the comments made by the members of the group to each other. Thus, the interaction of each individual participant is influenced by the social context represented by the group. A successful focus group profits from good interaction among the members as they “develop an explanation or accomplish a task,” so it is important to stimulate the participants to “enter the discussion” rather than merely “answer the question” (Short, 2006: 108–109). A focus group can differ along numerous axes, including formality, degree of structure, familiarity of the participants with one another, and the involvement of the lead researchers (Short, 2006: 104).

Comparing In-Person and Online Interviewing

We discuss five sets of issues, addressing them in more-or-less the order that they occur during the research process: logistical and budgetary issues; ethical issues; recruitment issues; design issues; and interviewing and moderating issues.

For each set of issues, we emphasize the most important differences across mode and type of interview. Our aim is to inform prospective researchers about the main issues they would encounter when taking their research efforts online. Along with mapping the issues, we provide references for more details on specific issues where possible. In this article, we concentrate on the comparison between face-to-face and video because text-based modes have not evolved substantially over the past decade (see Lobe & Morgan, 2021), and because video interviewing has experienced rapid adoption. In addition, our emphasis is on systematic differences, not specific solutions, which are often quite context dependent (for suggestions about solutions in online individual interviewing, see Renosa et al., 2021, and for focus groups, see Daniels et al., 2019).

Comparing Logistical and Budgetary Issues

Technology issues

When participants need to use less familiar technology, this presents additional challenges to the research team (Lobe & Morgan, 2021). At a minimum, it requires assessing whether the participant truly does have the necessary technology and skills. In some cases, it will mean training participants to use the relevant software, even to the point of helping them download it. Without this kind of prior preparation, there may be time-consuming difficulties at the start of the interview, which can be particularly intrusive in focus groups when other participants are forced to wait while the moderator attempts to troubleshoot problems for one or two of the participants.

Related to these challenges to participation is a fundamental problem of potential selection bias due to digital divide issues (Van Dijk, 2020) in video-based interviewing. To the extent that older age, lower income, more rural location, and other factors determine the ability to participate, this will limit the available sample in ways that might have little connection to the substantive eligibility criteria for a study. Thus, any project that uses digital technology in general, and video conferencing in particular, must give careful attention to how this choice limits the available range of participants, and thus may bias the results. This potential drawback contrasts with the ability to foster and accelerate the participation of those who might be geographically less accessible, have time or other constraints, or simply prefer to participate online. Further, online participation might be preferable when researching digital technology use and digital social formations.

One further topic related to technology is the form in which the data are captured. An important new development is the availability of automatic captioning on some of the video-conferencing platforms (e.g., Zoom). By using artificial intelligence, these programs can convert the participants’ speech into text during the interview itself. Given the recency of this technology, we are not aware of any published accounts of its utility, but if it does prove to be as effective as it appears, it would be a major advantage over in-person interviewing.

Budgetary issues

There are two detailed studies on overall budgetary issues. First, Rupert et al. (2017) conducted focus groups using in-person and video-conferencing modes. In terms of overall cost, they found little difference between the in-person and video-based groups, because most of the expected expense-saving advantage for the video groups was offset by the need to buy cameras for several participants. Second, Namey et al. (2020) compared expenses for individual interviews and focus groups using online and in-person modes. For individual interviews, online video was notably more expensive due to platform costs. For focus groups, in-person groups were less expensive, again due to platform costs. However, an additional advantage for the online modes comes from eliminating travel for both interviewers and participants. Of course, this matters most when the desired interview sites are widely dispersed. As we will consider later under the heading of recruitment, this also opens up the range of available participants.

Differences by type of interview

With regard to technology barriers, there may well be a difference between online versions of individual interviews and online versions of dyadic interviews and focus groups. Individual interviews have a considerable advantage because they can be conducted and video-recorded via smart phones with widely used software such as FaceTime, Viber, Telegram, etc. This advantage could also carry over into budgetary matters by eliminating any potential platform costs. In contrast, it is particularly desirable to conduct online focus groups via devices with bigger screens, such as desktop and laptop computers. This increases the visibility of all participants, which can give the participants a sense of group formation and interaction.

Recommendations

First, for all types of interviews, we advise scheduling pre-sessions prior to the main interview in the form of short 10–15 minute meetings with all participants. These pre-session contacts can address technical issues and skills and even ethical considerations (e.g., explaining how to set up a virtual background to help ensure privacy). Second, for focus groups, we recommend having an assistant moderator available who can help with any technology and software issues that arise during the interviews. In general, we recommend thinking about how to use members of the research team as a resource to overcome potential technical difficulties.

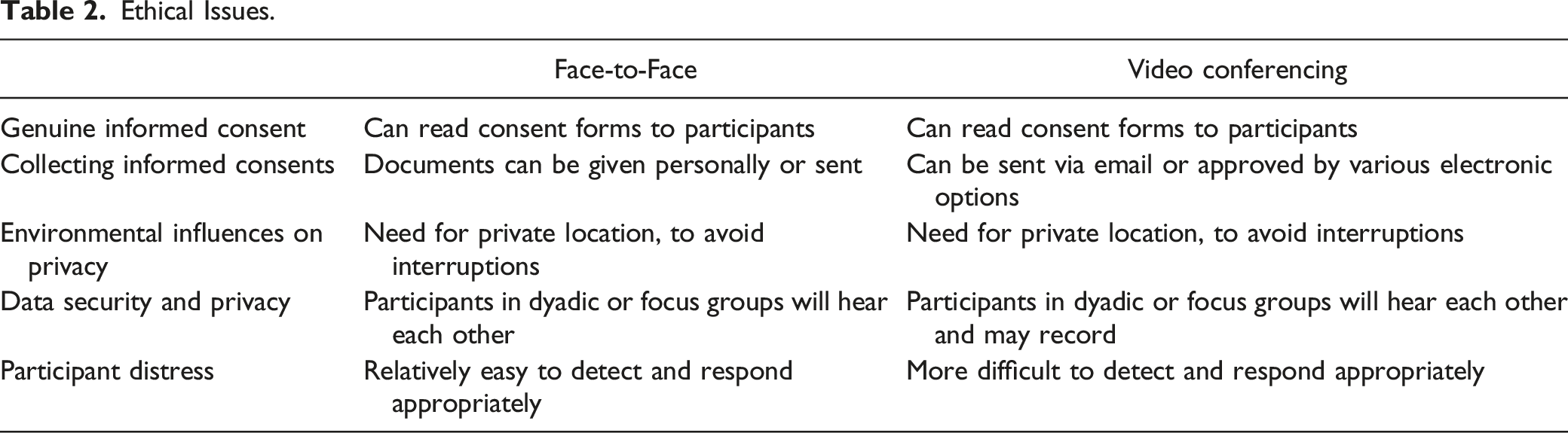

Comparing Ethical Issues

Informed consent issues

A different problem with informed consent that applies to all the online modes is how to collect the actual statements of consent. For in-person interviewing, these forms are distributed and gathered by the interviewer, but the indirect contact between the researcher and the participants in online interviewing can create considerable complications. One option is to send the consent forms to participants prior to the interview, but there may be an issue with getting signatures, which are necessary for ethical approval. In particular, people might be unfamiliar with either electronic signatures or printing and scanning forms before returning them. Another option is to include a link with the statement of informed consent, such that the participant’s visit to that link constitutes consent, or simply responding to the informed consent email with “I understand and sign.” Finally, verbal consent is another option, which can be obtained and recorded in video modes (Khan & Raby, 2020).

Interestingly, one potential counterpoint to these concerns about informed consent is the increased freedom to withdraw from participation in the online modes. In particular, face-to-face interviewing can create a high implicit demand to continue, once a contact has been made, while all of the online modes can be terminated with the click of a button.

Privacy issues

With regard to privacy, the first concern is environmental influences when others are present during the interview and can observe the data collection. This problem is most serious for video-based interviewing. In-person interviews generally involve a direct negotiation to select a private location. In contrast, when other people are nearby, they may see or overhear the exchanges that occur in video interviews. This issue can certainly occur in a home setting (Khan & Raby, 2020), but it can be particularly problematic when participants choose to conduct the interview in a public location (e.g., an individual interview by smart phone while in a coffee shop or restaurant).

One widely cited (e.g., Pocock et al., 2021) potential advantage for online interviews is that they may allow participants to take part at a time and in a place that is convenient or safe for them. However, another privacy-related issue involves the security of the data while they are being collected. In face-to-face interviews, the researcher is present and can warn against any effort to record or otherwise capture the data. In contrast, participants with sufficient technology skills can record video interviews without the interviewer’s knowledge. Even though video-conferencing software usually does not permit recording without the approval of the host (the interviewer or moderator), determined participants can find ways to record without being noticed.

Ethical concerns around participant distress

Participants’ emotions, and even distress, may be encountered by the researcher while in the field, especially when working with vulnerable populations and/or sensitive topics. Although it is possible to question whether online interviewing is an appropriate method for gathering data about sensitive topics, researchers who have worked in this area, including Thunberg and Arnell (2021), suggest that virtual interviews, when handled with care, can actually provide more agency to the participants in that they can end their participation abruptly and at any time. Ending participation is also possible during in-person interviews, but clicking a button will undoubtedly be easier for a respondent than excusing themselves from a face-to-face interview.

Although some commentators refer to self-awareness as a potential benefit to participants in qualitative research (Orb et al., 2001), the production of such insight may also be a source of stress. Carter et al. (2008) demonstrate that while participants largely find qualitative research to be “enjoyable, cathartic, and beneficial” (p.1267), difficult topics may lead to “reflexivity” (p.1270), challenging insights, and charged emotions. For example, Dyregrov and Dyregrov (2015) have found that painful discoveries can be made by marriage partners when learning of the other’s experience.

It is thus critical that the interviewer pay close attention to the strain that can occur during interviews and offer support. This can include allowing a quiet pause, offering comforting words, allowing participants the time they need to process their emotions, letting them know it is OK to express those emotions, and giving them the option of terminating the interview if that is what they feel is best for them. These approaches mirror those that an interviewer would take for in-person interviews. Investigators should include in their manual of operations guidance for interviewers on recognizing distress. Signs of distress can include both verbal and facial cues (Morse et al., 2003). When interviewing vulnerable populations or addressing disturbing topics, researchers should consult an expert in the field (for example, a child psychologist when the research topic is child abuse) about best practices for maintaining safety for the investigator, participant, and others (for example, children of the respondents who may be in the vicinity).

Also, at the end of the interview, regardless of whether signs of distress were observed, the interviewer can ask about the participant’s feelings. After allowing the participant an opportunity to express feelings raised by the interview, the interviewer can ask whether the respondent would like to discuss the issues further with an appropriate professional service provider. The staff member can then offer the respondent a range of service options in the local community. They can also offer to assist the respondent in locating appropriate services and provide enhanced referrals (e.g., by calling an organization and assisting in making an appointment).

Code of ethics for online interviewers

Numerous scholars have created a canon of work related to the ethics of online interviewing that amounts to a “code of ethics.” For example, the Association of Internet Researchers (https://aoir.org/) has been working to codify guidelines and offerings for the field in an effort to mitigate the ethical challenges of internet-based research. They have produced a series of free, downloadable reports such as Internet Research: Ethical Guidelines 3.0. Unfortunately, for our purposes, much of this work is devoted to issues involving capturing data from bulletin boards and other online communities. For issues related to video interviewing, it may be more helpful to examine the substantial body of literature on ethics in qualitative methods generally, and apply it as necessary to online work (Eide & Kahn, 2008; Goodwin et al., 2019; Morse et al., 2003, 2008; Orb et al., 2001; Pietila et al., 2019). Additionally, when preparing manuscripts, authors should bring a sense of transparency about their ethical considerations to the section of the manuscript that describes data collection methods.

Differences by type of interview

In general, the ethical issues described here apply equally to individual, dyadic, and focus group interviewing. The main exception is the potential violation of privacy and data security in video conferencing focus groups. In such groups, participants can see into the personal space of other participants (e.g., access to parts of their living space and family members via camera and screen). Another issue would arise if one person were to secretly record the session, and thus compromise the data security for the other participants. To protect privacy, participants should be instructed to choose a private and neutral part of their personal space for the interview. Another option is to use virtual or blurred backgrounds, readily available in the majority of applications and platforms. Finally, participants should be advised against any unauthorized recordings of the interaction, and this information can be included in the informed consent forms, so that the participant’s signature on the document confirms that they understand and agree with privacy and data protection protocols.

Recommendations

Ethical study implementation should reflect the target population’s needs, and this is especially important when gaining meaningful informed consent. Recommendations for achieving this goal (from Taljaard et al., 2018) include: (a) compiling a list of essential ethical issues via key informant interviews; (b) reviewing the literature for ethical considerations in similar studies and considering contact with other investigators who have field experience to draw on their expertise; (c) drawing on the views of international ethics committees (survey, personal contact); and (d) conducting interviews with field leaders about ethical considerations for study design and implementation. In addition, questions such as literacy and access to technology should also guide decisions about approaches to informed consent.

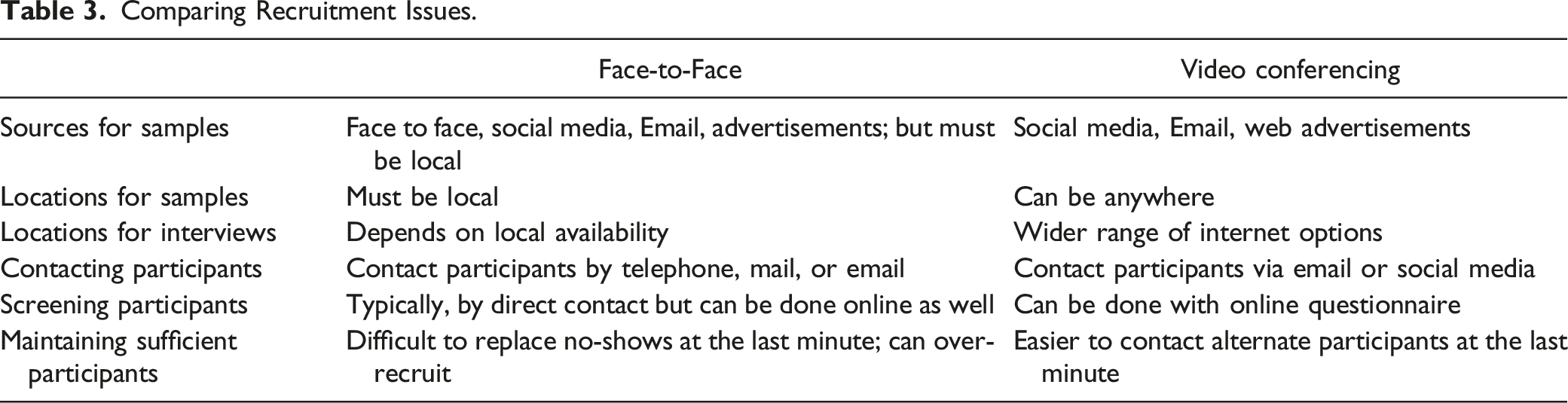

Comparing Recruitment Issues

Sources for participants

Maintaining sufficient participants

Another area where all the formats for online interviews show advantages is in replacing participants who do not show up for the interview. Because of the travel required for in-person interviews, the main solution for this problem is to over-recruit by inviting extra participants, who must then be turned away if more than enough people do show up. In contrast, scheduling additional “standby participants” and finding “last minute” solutions for “no-show” participants is much easier in the online modes.
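
The logic of over-recruiting can be made concrete with a simple calculation. The following Python sketch is illustrative only: it assumes that each invited person attends independently with the same probability, and the function names, the show-up rate, and the 90% target are hypothetical choices made for illustration, not figures drawn from any study.

```python
from math import comb


def prob_enough_show(invited: int, needed: int, show_rate: float) -> float:
    """Probability that at least `needed` of `invited` people attend.

    Assumption (hypothetical): each person attends independently with
    the same probability `show_rate`, i.e. a binomial model.
    """
    return sum(
        comb(invited, k) * show_rate**k * (1 - show_rate) ** (invited - k)
        for k in range(needed, invited + 1)
    )


def invitations_required(needed: int, show_rate: float, target: float = 0.9) -> int:
    """Smallest number of invitations for which the chance of seating
    `needed` participants reaches `target` under the model above."""
    if not 0 < show_rate <= 1 or not 0 < target < 1:
        raise ValueError("show_rate must be in (0, 1] and target in (0, 1)")
    invited = needed
    while prob_enough_show(invited, needed, show_rate) < target:
        invited += 1
    return invited
```

Under these assumptions, the gap between the number invited and the number needed is the group of extra participants who may have to be turned away in person, or who could instead be held as “standby participants” online.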

Differences by type of interview

Differences in recruiting are especially important with regard to focus groups, due to the larger number of participants needed in this method. Indeed, the importance of planning for and compensating for recruitment issues associated with face-to-face focus groups is a major topic in almost every textbook on this method (see Morgan, 1998 for an especially detailed discussion of this topic). Hence, the expanded options for recruitment in online focus groups are a notable advantage in comparison to the in-person mode for this method.

Recommendations

In many ways, this is the best-known area with regard to comparing in-person and online interviewing. As always, the key is to specify clear criteria with regard to credibility and to link these criteria to the research questions through purposive sampling. For online recruitment, the main recommendation is to pay careful attention to ways in which samples might be biased due to lack of access to technology or technical knowledge.

Comparing Design Issues

One particularly relevant topic-related issue is the participants’ level of engagement with the topic. To the extent that participants have a strong interest in the topic, this will make the interviewer’s job easier, and this is especially important in focus groups, where a mutual interest in the topic will facilitate interaction among the participants. Because low-engagement topics place a burden on the moderator to keep the interview going, they can create an advantage for in-person formats, due to the direct connection between the interviewer and the participant(s).

A different dimension concerns the nature of the topic itself. As always, when the goal is collecting in-depth personal data and understanding personal context, one would choose individual interviews, whereas for topics that benefit from group interactions via the sharing and comparing of experiences within a social context, one would choose focus groups.

With regard to less structured versus more structured interviews, the key concern is how much control the interviewer exercises over the interview. In less structured interviews, the questions being asked and the moderating strategy being used can vary from interview to interview, but when the questions and the moderating strategy need to stay the same across interviews, this requires a more structured approach.

Next, with regard to group composition in dyadic interviews and focus groups, online interview formats can have major advantages for recruitment. In particular, the online modes allow greater freedom with regard to finding participants and bringing them together digitally, so they provide better options for creating more specialized group composition. This can be a considerable advantage because the quality of the interaction in both dyadic interviews and focus groups often depends on the amount of “common ground” that participants share (2019). To the extent that the participants have a common background with regard to the topic, it will be easier for them to conduct their discussion. This means that online recruiting can make it possible to create a degree of either heterogeneity or homogeneity among the participants that may not be possible to achieve when there are limitations due to local recruitment.

Design issues concerning group size only appear in focus groups, but they can be crucial. The primary issue pertains to conducting relatively large groups of more than five or six participants. In-person focus groups allow the moderator to control a larger number of participants, while online groups are often limited to smaller sizes. Much of this difference arises from the complexity of managing the group dynamic when it is less possible for the moderator to use non-verbal behaviors and observe those behaviors among the participants. Once again, however, these differences are not the same across the online modes, as will be discussed below.

A final design dimension involves developing rapport, which we place in the design factors because of the extent to which it depends on how the questions are designed. Spradley (1979) first introduced the concept of rapport to the literature, and he emphasized that the participants’ willingness to answer questions hinges on the questions that are being asked. According to Spradley, developing rapport begins with asking a question that is both easy and interesting for the participant to answer. Following this with questions that build on the participant’s previous responses results in the kind of rapport that makes it possible to ask more sensitive questions. (For a further discussion of rapport in online interviewing, see Khan & MacEachen, 2022.)

Differences by type of interview

One of the biggest differences between the various types of interviews is the potential difficulty of conducting video-based focus groups when the participants have a low level of engagement with the topic. As we will discuss in the recommendations, creating active interaction between participants in video focus groups can be problematic due to the technology itself. This difficulty is especially relevant for low-engagement topics, but if participants share a stronger mutual interest in the topic, then this can help compensate for problems with group dynamics.

A different issue arises with regard to managing larger groups in videoconference focus groups. From a technological standpoint, such groups could include the same number of participants as in-person groups; however, in practice it is advisable to include fewer participants as this contributes to better interaction. As discussed below, the limited level of non-verbal cues makes it difficult to tell how the other participants are responding to what anyone says. This is coupled with problems in telling who is willing to speak next. In our experience, an optimal size is three to five participants (Lobe & Morgan, 2021). The difficulty with large groups is that increasing the number of participants leads to a reduction in the screen size allotted to each of them. This makes it difficult for the moderator and the participants to see each other, which limits the ability to read whatever non-verbal interactions are present. This factor is especially relevant to participants who log in using smart phones or tablets with a relatively small screen size.
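
The relationship between group size and the screen space available to each person can be sketched with a rough calculation. The Python example below is only an approximation under stated assumptions: it supposes a hypothetical near-square grid that splits the screen evenly among everyone on the call, which is not the documented layout of any particular platform, and the function name and pixel dimensions are illustrative.

```python
from math import ceil, sqrt


def tile_size(n_tiles: int, screen_w: float, screen_h: float) -> tuple[float, float]:
    """Approximate width and height of each video tile.

    Assumption (hypothetical): tiles are arranged in a near-square grid
    with ceil(sqrt(n)) columns, and the screen is divided evenly.
    Real platforms add margins, toolbars, and their own layout rules.
    """
    if n_tiles < 1:
        raise ValueError("n_tiles must be at least 1")
    cols = ceil(sqrt(n_tiles))
    rows = ceil(n_tiles / cols)
    return screen_w / cols, screen_h / rows


# Compare a small group and a larger one (moderator included in the count)
# on an illustrative 1920 x 1080 laptop display.
for people in (4, 9):
    width, height = tile_size(people, 1920, 1080)
    print(f"{people} tiles: about {width:.0f} x {height:.0f} pixels each")
```

Whatever the exact layout, the per-person area falls roughly in proportion to the number of people on screen, so the same group that is readable on a laptop may be very hard to read on a phone.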

Recommendations

We believe that online technology produces considerable differences in how to conduct the three different types of interviews. First, individual interviewing can be highly effective in an online mode because it matches everyday communication with smart phones via FaceTime and other connections that create face-to-face interaction. Second, we recommend using dyadic interviews in ways that mimic this same face-to-face interaction. This occurs when, in video-conferencing programs such as Zoom or Skype, the interviewer’s video is turned off and both participants use the “speaker view” option. With this arrangement, each participant communicates directly with the other, just as they would in a one-to-one connection.

In contrast, recommendations for focus groups are more problematic. One key suggestion is to explore which types of interview questions are most applicable to this mode of interviewing. At present, the assumption seems to be that traditional questioning routes and interviewer guides are adequate, but the kind of stiff interaction among participants in online focus groups calls this into question, and especially so in less-structured groups. We thus recommend greater experimentation with the wide range of available question strategies (Morgan, 2019), to investigate what works best for generating online group discussions.

Comparing interviewing and moderating issues

A final set of issues in this area concerns avoiding distractions and departures. The fact that in-person interviews require negotiation of a suitable location tends to minimize these issues, although it is generally wise to avoid scheduling individual interviews in lively settings such as restaurants. Preventing these problems is more difficult with online modes. In particular, when participants are in their own homes, they can be open to interruption in many ways, most notably by others who are present in the setting. Hence, online researchers have to anticipate these interruptions and build in strategies to overcome them.

One strategy is to provide the participants full flexibility in choosing the time and location most suitable for the interview. Another strategy is to make sure that the recruitment process asks each participant to acknowledge the importance of not suspending the interview once it has started. In addition, it is advisable to accept an interviewee’s request for a relatively short break (up to 20 minutes), because an attempt to negotiate a shorter break can be more time consuming. When the interviewee wants to take a longer break, however, there is a greater possibility that the interview will remain uncompleted, unless the researcher both emphasizes the importance of the interviewee’s contribution to the study and makes firm arrangements for re-contacting them.

Differences by type of interview

Non-verbal and para-verbal probing are more limited than often suggested in videoconferencing focus groups, especially in comparison to the richer variety of options in face-to-face focus groups. Part of the problem is due to the limited visibility of participants in larger groups or on devices with small screens, as noted earlier. Further, the physical format for videoconferencing, where each participant typically stares straight ahead in a square or rectangular array of faces, regardless of who is talking, makes it impossible for either the participants or the moderator to make eye contact with any one person. In particular, the other participants in a face-to-face group almost always show that they are paying attention by both looking toward the current speaker and providing non-verbal signals (e.g., smiles and head nodding) about the extent to which they agree with what is being said. In contrast, the participants in an online focus group each look forward at their own screen, so the current speaker often sees little more than a set of blank faces. This lack of affect can lead to a “flat” discussion where participants quite literally fail to react to each other. This lower level of non-verbal engagement in videoconferencing can translate into less spontaneous interaction, as the participants wait for the moderator to take action rather than doing so themselves.

Minimal eye contact with the participants also has a substantial impact on the moderator’s ability to make use of non-verbal communication. For example, in a face-to-face group the moderator can smile or nod at a potential speaker, or make an encouraging hand gesture, all of which are well-known ways to boost the likelihood of getting someone to participate (Morgan, 20x). Without this kind of non-verbal assistance, the moderator in an online group has to do more explicit probing to keep the discussion going (e.g., “Who else has something to add?” or “What are some things we haven’t heard yet?” and so on). This strategy is awkward, however, because it goes against the goal of producing a free-flowing conversation. Thus, attempting to solve one problem (low levels of participant input) by repeated probing creates another problem (too much direction by the moderator).

Recommendations

This is another area where our suggestions vary according to the type of interview. In particular, we believe that existing interviewing strategies are well-suited to both individual and dyadic interviews, but that moderating online focus groups can be problematic. At present, advice for how to adapt moderating techniques from in-person to online settings is virtually non-existent; instead, there once again seems to be an assumption that existing practices are sufficient. As we become increasingly aware of the limited interaction among participants in response to these traditional moderating procedures, we need to search for new alternatives. In particular, the loss of effective non-verbal communication between the moderator and the participants often produces more directive action by the moderator, but when this is less desirable (e.g., in less structured groups) we need to develop additional moderating techniques that are specific to online focus groups.

Discussion and Conclusions

One notable aspect of this article is that it is being written during the COVID-19 pandemic. Hence, it corresponds to a considerable upswing in the use of online interviewing, which currently reflects the greatly decreased access to in-person interviewing during this highly unusual event. How this trend will play out in the future is unknown, but when di Gregorio (2021) surveyed 346 qualitative researchers in the spring of 2020 and asked whether they felt that the increased use of online interviewing would continue, nearly two-thirds said “yes.” This suggests a possible future trend away from viewing online interviewing as a “second-best solution,” and toward a recognition of the competitive advantages that it can offer in the proper circumstances.

In considering what these “proper circumstances” are, our review has highlighted notable barriers and notable facilitators for online interviewing. On the one hand, online interviewing has an important limitation due to technology issues, as well as the potential for sample bias when participants do not have the necessary hardware, software, or skills. On the other hand, online interviewing has an important advantage with regard to recruitment issues and the expanded range of options it makes available by increasing geographical access. From this standpoint, both in-person and online interviewing need to be weighed in terms of their relative advantages and disadvantages.

Unfortunately, there has been little attention to formal techniques for comparing the content from different interview formats. The classic way to accomplish this is to code the separate interview formats, and then assess the similarity of the results. This approach has consistently shown very little difference in the set of codes that are present, no matter which mode is applied or which kind of interview is used (e.g., Namey et al., 2020, 2021). This is not the whole story, however, because online interviews uniformly produce a smaller number of total codes. In other words, they reproduce the full range of codes, but have fewer instances of any given code.

Another comparison of interview quality between online and in-person interviews involves their level of depth and detail, with substantial advantages for face-to-face modes. The most obvious criterion here is simply the number of words that are generated per interview, with online modes almost always producing shorter data sets. As a quality indicator, this can be combined with the previous discussion of coding to produce something amounting to “code density,” i.e., the number of words per code in an interview. Given that online interviews produce smaller numbers of total codes and fewer words, this unavoidably generates a disadvantage with regard to code density. Of course, these counting techniques produce information that is both limited and highly quantitative.

A different way to assess interview quality is through expert judgments and participant ratings, and this is an area that could definitely benefit from further development. In particular, it would help to have standardized tools that are more comprehensive than single scores for overall quality. Unfortunately, at this point, these tools have not been used frequently enough to provide any meaningful conclusions about the relative superiority of in-person or online interviews, and such ratings are still essentially quantitative.

From a more qualitative point of view, it would help to find new ways to assess quality in different types of interviews. One possible approach would be to have coders search interviews for high-impact quotations, such as would appear in the Results section of any article. This could help resolve the question of whether a given interview format produces an adequate level of depth and detail. If a set of interviews can produce the kinds of quotations that would support the research conclusions, then that would be enough to meet the needs for high-quality content.

Regardless of which paths we follow in improving our ability to assess interview content, the relatively low level of attention to this issue in interviewing makes this area a likely target for future research. Thus, as a final thought, we wish to emphasize the need to develop techniques that indicate which situations are uniquely well-suited to online interviewing. In particular, we need techniques that can tell us when the classic in-person interviewing strategies can be used directly or only moderately adapted, versus when we need to create fresh alternatives. If our prediction that online interviews will increase in popularity is accurate, then it is essential that we find ways to maximize their success.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethics

This article did not make any use of human subjects.