Abstract

Introduction

Online data collection methods can increase study accessibility and ease the burden of data collection for participants. Asynchronous Online Focus Groups are a promising method for data collection among healthcare professionals.

Methods

In this article, we describe the use of, and lessons learned from, conducting 19 Asynchronous Online Focus Groups across four research studies.

Results

We describe our experiences preparing for, recruiting for, and conducting Asynchronous Online Focus Groups. We highlight decision points around timeframe, eligibility, recruitment, participation, focus group assignment, moderation, and participant engagement. We found that removing geographic barriers was advantageous for collecting data, focus group attrition is a concern for asynchronous formats, and group assignment may affect data.

Conclusions

Asynchronous Online Focus Groups are a promising method for data collection among healthcare professionals. When conducting Asynchronous Online Focus Groups, researchers should consider the suitability of this data collection method and its unique implications for data quality.

Introduction

Health services researchers have much to gain from using online qualitative methods to understand the experiences of healthcare professionals (HCPs). Given the heavy workload and varied schedules of HCPs, in-person and synchronous qualitative data collection with health professionals can be challenging (VanGeest et al., 2007). The COVID-19 pandemic has highlighted the need for accessible online data collection methods. One promising method for reducing barriers to qualitative data collection participation among HCPs is the asynchronous online focus group (AOFG).

AOFGs are a data collection method where participants respond to questions in an online repository over a designated period (Zwaanswijk & van Dulmen, 2014). What distinguishes AOFGs from other online focus groups is their asynchronicity: participants can answer questions at any time within a pre-determined timeframe. Asynchronous and synchronous online focus groups are otherwise similar in being group-based, online, and geographically unrestricted for participants and researchers.

There is growing literature describing the experiences of implementing AOFGs among HCPs (Ferrante et al., 2016; K. L. Matthews et al., 2018; Tuttas, 2015; Wilkerson et al., 2014; Williams et al., 2012). AOFGs have been used to learn about professional views on end-of-life communication (Oosterveld-Vlug et al., 2017), online communities (Rolls et al., 2019), experiences of nurses seeking employment (Hancock et al., 2016), experiences implementing Medicaid policies (Gray et al., 2021), pharmacists’ role in opioid safety (Hartung et al., 2018), clinician perspectives on prescription drug monitoring programs (Hildebran et al., 2014), and virtual reality-based learning (Miller et al., 2018).

Advantages of AOFGs. Researchers have identified advantages that make AOFGs well-suited for data collection from HCPs. Participants in AOFGs highly value time flexibility and anonymity afforded by asynchronously conducted research and the ability to participate from home (Kenny, 2005; Reisner et al., 2018; Tates et al., 2009; Williams et al., 2012; Zwaanswijk & van Dulmen, 2014). Previous research has shown that HCPs are often too busy throughout the normal workday to participate in research (Sahin et al., 2014). Participants view the experience of participating in AOFGs favorably (Gordon et al., 2021) and appreciate the time to reflect when responding (Wilkerson et al., 2014; Williams et al., 2012; Wood et al., 2004). AOFGs also enable wide geographic reach by not requiring travel to a study location (Moore et al., 2015; Reisner et al., 2018; Rupert et al., 2017), which also may facilitate access for people with disabilities or people who are caregivers.

Participant anonymization can be beneficial for group-based data collection (Ayling & Mewse, 2009). Lack of anonymity within in-person focus groups may enable members of dominant social groups (for example, people who are white, male, cis-gendered, or able-bodied) to remain privileged within data collection. Moreover, individuals may feel pressure to acquiesce to group norms and expectations with an in-person focus group (Graffigna & Bosio, 2006; Sim, 1998). Within AOFGs, gender, race, ethnicity, age, disability status, and other characteristics are unknown. Participant anonymity within AOFGs is important when collecting data from HCPs, given that perceived power and hierarchy influence behavior among HCPs (Green et al., 2017; Janss et al., 2012).

The anonymity of professional licensure within groups during data collection may allow HCPs who are perceived to hold less prestigious credentials a similar level of comfort and access to group conversation as those with more prestigious credentials (Morrow et al., 2016; Williams et al., 2012). Hierarchy has implications for group behavior; research has shown that professional status in healthcare reduces the tendency of lower-ranking members to vocalize concerns in group settings (Morrow et al., 2016).

Anonymization and reduction in the perceived hierarchy may increase the likelihood that previously unheard voices are heard (Williams et al., 2012). The inclusion of voices of those who are typically marginalized strengthens research by increasing our understanding of often-neglected individuals who have been traditionally left out of research (Dresser, 1992; Shah & Kandula, 2020; Shavers-Hornaday et al., 1997; Woodley & Lockard, 2016). Within group-based data collection, mere recruitment into studies does not ensure equal participation, given the social realities of racism, sexism, and other discriminatory systems that are present in all group-based settings.

Although cost savings from online methods are debated (Davies et al., 2020; Rupert et al., 2017), studies using AOFGs may incur cost savings via reduced travel and transcription costs. Data in AOFGs is collected in transcript form, reducing costs associated with verbal-to-text transcription services and reducing the time waiting for transcribed data (Ferrante et al., 2016). Data is also entered directly by participants, eliminating transcription errors caused by poor audio quality. Another potential cost saving is the reduction of travel fees for the research team and travel reimbursements for participants.

Disadvantages of AOFGs. There are potential disadvantages to using AOFGs including a decreased amount of participant interaction, changes in data quality, and potential privacy loss.

In-person focus groups may enhance therapeutic value by creating a sense of community that leads to more participant interaction (Davies et al., 2020). Given the impersonality of the online environment, participant interaction and group cohesion may be diminished (Stewart & Williams, 2005). Research suggests that online focus groups are characterized by lower levels of relational satisfaction, interaction, and relationship building, relative to in-person focus groups (Davies et al., 2020; Graffigna & Bosio, 2006; Reisner et al., 2018). The group dynamic also may be dampened by decreased engagement and lack of immediacy of responses in an asynchronous environment (J. Matthews & Cramer, 2008), although concern about low engagement may be mitigated by the use of synchronous (or real-time) online focus groups, which foster higher levels of interaction than asynchronous online focus groups (Tuttas, 2015). Furthermore, studies suggest that homogeneity and heterogeneity of participants within groups must be balanced to help facilitate interaction and foster disagreement (Morgan, 1995).

Researchers have expressed concern that lower levels of participant interaction may diminish data quality. Cross-talk and interaction, or conversation between participants, have been considered integral to in-person group-based data collection given that they produce dissonance, divergent viewpoints, and thus, rich data (Barbour, 1995; Liamputtong, 2011). Changes in data quality may also stem from the absence of verbal communication in online focus groups (Moore et al., 2015) and the inability to observe and incorporate body language into data collection and analysis (Seitz, 2016). Comparative studies have shown that online text-based data collection tends to produce less contextual data but similar thematic content to in-person focus groups (Namey et al., 2020; Synnot et al., 2014; Woodyatt et al., 2016).

Finally, as with any online format or electronic data storage process, loss of privacy and data breaches are a concern, and close attention should be paid to security procedures, Institutional Review Board requirements, and HIPAA compliance (Lobe et al., 2020).

Given these advantages and disadvantages, AOFGs, and online data collection methods more broadly, should be considered a unique set of tools that require critical consideration and careful application (Graffigna & Bosio, 2006; Moore et al., 2015).

Methods

Characteristics of Studies Using Asynchronous Online Focus Groups.

Results

Preparing for an AOFG

Factors to consider before conducting AOFGs include timeframe, interview guide considerations, and focus group setup.

Timeframe

Given the asynchronicity of AOFGs, planning is needed to ensure AOFGs are properly monitored. Each AOFG conducted by the authors was open for one to three days. Participants were invited to log on and contribute responses within this timeframe. Typically, we split the focus group guide across multiple days. Participants would see a new set of questions each day but had the option to go back and answer the previous day's questions. For example, in one study we introduced half the interview guide at 6 a.m. on the first day and the second half of the interview guide at 6 a.m. on the second day. In this study, the AOFG was open for a full 48 hours, closing at 6 a.m. on the third day. In another study, the AOFGs were open for three days with four questions available to answer per day.

Since participants engage with the platform on their schedules, moderators may not be online when responses are submitted. Participants also may contribute a response to an item then log off the platform before a moderator can respond. As a result, we had reduced opportunities to prompt, probe, and redirect the conversation.

In our studies, we staffed the focus groups with at least one moderator during waking hours (6 a.m.–10 p.m.). Some participants contributed outside of these hours. Researchers should consider time zone differences if participants are spread across multiple time zones. One study was bi-coastal, extending the number of hours the AOFGs needed coverage by moderators. Across studies, we often saw an increase in activity in the evening hours (5–8 p.m.).

A typical AOFG may require at least one moderator for 12 hours/day to prompt and probe participants. For one bi-coastal study, our East Coast moderator covered the AOFGs starting at 6 a.m. EST, whereas the West Coast moderator covered the AOFGs in the evening hours until 10 p.m. PST, resulting in 15 hours/day of staff coverage. For an AOFG, “staff coverage” does not mean constant presence on the board but rather logging on and off multiple times per hour and availability if an issue arises.

Interview guide considerations

An additional consideration for asynchronous online data collection is that the item order is fixed. During in-person data collection, a focus group moderator may change the order of questions based on participants’ responses throughout the interview, an option that is unavailable in AOFGs. In the software used for these four studies, participants were able to see the question and all previous participants’ answers. This sometimes presented a challenge when a participant would answer a question in an unintended direction and subsequent participants would respond to previous responses rather than the original question. In an in-person moderator-led focus group, the moderator can intervene to redirect the conversation, but this redirection is more difficult in an asynchronous online format.

Focus group setup

A variety of online platforms exist for conducting AOFGs including QualBoard 20|20, Together, and Focus Vision, among others. Before conducting an AOFG, the interview guide will need to be entered into the AOFG software. We spent approximately 8 hours entering and testing the interview guide within the platform in one study. Numerous online platforms can integrate surveys into the AOFG format. This feature is helpful for mixed methods studies or for capturing demographic information among participants in qualitative studies. Some platforms also offer the ability to enter pictures and videos for participant response.

Finally, data collected on the internet is vulnerable to security breaches. Like other online data collection platforms, AOFG software may or may not be approvable by Institutional Review Boards. In our studies, we anonymized participants by assigning pseudonyms using species of trees, rocks, flowers, or herbs (e.g., maple, sapphire, sage). To protect anonymity and confidentiality, we entered the minimum possible identifiable information into the AOFG platform and discouraged participants from entering or sharing any personally identifying information in the AOFG public digital spaces. In one case, the moderator promptly removed identifying information entered by a participant.
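The pseudonym assignment described above could be scripted before participants are entered into the platform. The following is a minimal sketch, not the procedure the authors used: the function name `assign_pseudonyms`, the participant IDs, and every pool entry other than maple, sapphire, and sage are hypothetical and included only for illustration.

```python
import random

# Hypothetical pool. The article names only the categories (trees, rocks,
# flowers, herbs) and three examples (maple, sapphire, sage); the remaining
# entries are illustrative assumptions.
PSEUDONYM_POOL = [
    "maple", "sapphire", "sage", "cedar",
    "granite", "tulip", "basil", "birch",
]


def assign_pseudonyms(participant_ids, pool=PSEUDONYM_POOL, seed=None):
    """Map each participant ID to a unique pseudonym drawn at random."""
    ids = list(participant_ids)
    if len(set(ids)) != len(ids):
        raise ValueError("participant IDs must be unique")
    if len(ids) > len(pool):
        raise ValueError("pseudonym pool is too small for this group")
    rng = random.Random(seed)
    # random.sample draws without replacement, so no pseudonym repeats.
    names = rng.sample(pool, k=len(ids))
    return dict(zip(ids, names))
```

The resulting mapping would need to be stored outside the AOFG platform, consistent with entering the minimum possible identifiable information into the software.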

Recruiting for AOFGs

Since AOFGs are relatively new, researchers should be mindful of how AOFG novelty may influence study recruitment. Here we discuss our methods for determining eligibility and recruiting participants.

Determining eligibility

Recruitment for AOFGs may differ from traditional methods. In addition to standard study eligibility requirements, eligibility for participation in an AOFG will be determined by access to and proficiency in the software. Since AOFGs are dependent on technology, this may exclude some people from participation. This could exclude people with unreliable internet access, people who dislike using technology, or people with disabilities that affect their ability to communicate via computer or text-based methods. In all four studies mentioned in this article, we sought to collect data from HCPs who use a range of technology regularly in their professional lives. Thus, we felt that the study participants' technological knowledge and comfort were well-suited to AOFGs. Some users faced difficulty creating accounts within the software, but these issues were easily resolved by the research team.

Recruitment and participation rates

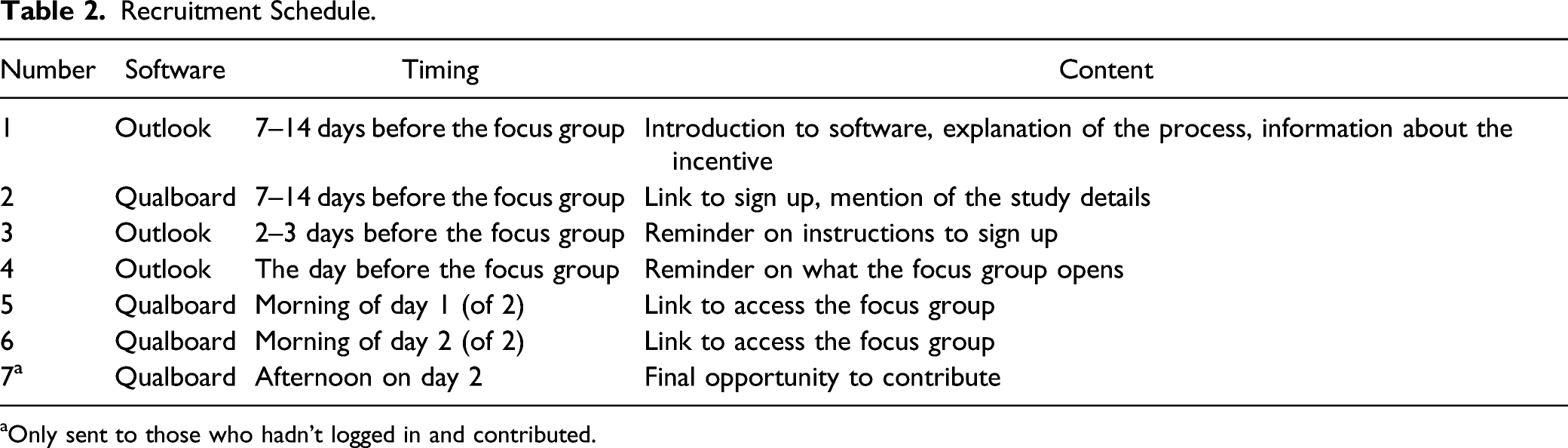

Recruitment Schedule.

a. Only sent to those who had not logged in and contributed.

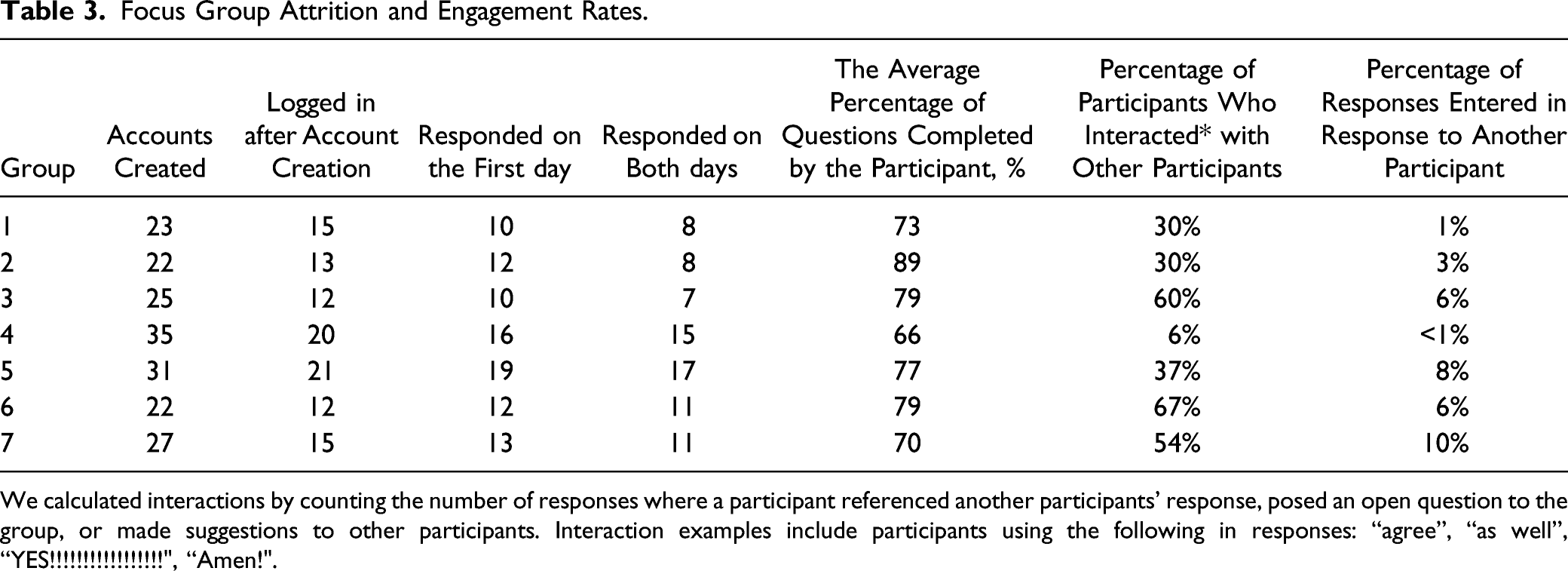

Focus Group Attrition and Engagement Rates.

We calculated interactions by counting the number of responses where a participant referenced another participant’s response, posed an open question to the group, or made suggestions to other participants. Interaction examples include participants using the following in responses: “agree”, “as well”, “YES!!!!!!!!!!!!!!!!!”, “Amen!”.
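As a rough illustration of the counting approach described above, the sketch below computes two per-group figures from manually coded responses: the share of responses coded as interactional and the share of participants who interacted at least once. The `Response` structure and the `interaction_summary` function are hypothetical, and the sketch assumes the participant denominator is everyone who posted at least one response, which the article does not specify.

```python
from dataclasses import dataclass


@dataclass
class Response:
    participant: str      # pseudonym of the poster
    is_interaction: bool  # coded True if the response references another
                          # response, poses an open question to the group,
                          # or makes a suggestion to other participants


def interaction_summary(responses):
    """Return interaction percentages for one focus group."""
    total = len(responses)
    if total == 0:
        return {"pct_interactional_responses": 0.0,
                "pct_participants_interacting": 0.0}
    interactional = [r for r in responses if r.is_interaction]
    # Assumption: denominator is participants with at least one response.
    participants = {r.participant for r in responses}
    interacting = {r.participant for r in interactional}
    return {
        "pct_interactional_responses": 100 * len(interactional) / total,
        "pct_participants_interacting": 100 * len(interacting) / len(participants),
    }
```

Coding each response as interactional or not remains a human judgment; the script only tallies those codes.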

Conducting AOFGs

Conducting AOFGs presents unique challenges and considerations including group assignment, oversight and moderation, and participant engagement.

Group assignment

In these four studies with HCPs, we sorted participants into groups by profession. In one study, we were eliciting opinions from a wide variety of HCPs, and we hypothesized that some groups would hold divergent views on the study content. In this case, some professionals voiced negative opinions of other types of professionals; thus, we were grateful to have separated groups by profession. Although there are advantages to asynchronicity, it decreases the likelihood of meaningful within-group interaction. In most AOFG software, participants can comment and respond to one another, but asynchronicity creates a more individualized experience. In all four studies detailed above, even though it was possible within the software, participant interaction was minimal. Table 3 illustrates that in one study, the interactional responses (out of all responses) ranged from 1% to 10% by AOFG. In the same study, the percentage of participants per group who interacted with one another varied widely from 6% to 67%.

Oversight and moderation

AOFGs offer flexibility in monitoring data collection among research team members. For a subset of our focus groups, the research teams chose to run two focus groups concurrently, each co-moderated by two researchers. This decision was made, in part, to reduce the data collection timeframe. For both studies, the two co-moderators took shifts monitoring each group. The co-moderators switched between AOFGs and were able to cross-check each other’s prompts and probes. One strength of the AOFG is the ability of other members of the research team to monitor the boards. In our studies, the principal investigators and co-investigators were permitted access to observe the boards, but not to engage with participants. The observers could monitor responses and insert notes, which were visible to the co-moderators but not to participants. As a result, each research team member was involved in data collection, allowing investigators to steer the conversation.

Participant engagement

In these studies, the research team reached out to participants throughout the AOFGs to encourage participation. In one study, the research team built and followed a reminder schedule, which was applied to all seven focus groups. Focus groups were open for 48 hours in this study. The team sent four emails to all participants who had signed up: 1) the night before the focus group started, 2) after Day 1 questions were posted on Day 1 (at 6 a.m.), 3) after Day 2 questions were posted on Day 2 (at 6 a.m.), and 4) at 5 p.m. on Day 2. Each email included a brief description of the study, a reminder of the incentive, and a link to the AOFG software (Table 2).
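A reminder schedule like the one described above can be derived from a single date, the time the Day 1 questions post. The sketch below is illustrative only: the function name `reminder_schedule` is hypothetical, the 7 p.m. send time for the night-before email is an assumption (the article gives no hour), and the Day 1 and Day 2 emails are placed at the 6 a.m. posting time although they may have gone out shortly afterward. Times are naive local datetimes, so time zone handling is left out.

```python
from datetime import datetime, timedelta


def reminder_schedule(day1_open, night_before_hour=19):
    """Return (label, send_time) pairs for a 48-hour focus group.

    day1_open: datetime at which Day 1 questions post (6 a.m. in the study).
    night_before_hour: assumed hour for the night-before email.
    """
    day2_open = day1_open + timedelta(days=1)
    night_before = (day1_open - timedelta(days=1)).replace(
        hour=night_before_hour, minute=0, second=0, microsecond=0
    )
    day2_evening = day2_open.replace(hour=17, minute=0, second=0, microsecond=0)
    return [
        ("Night before start", night_before),
        ("Day 1 questions posted", day1_open),
        ("Day 2 questions posted", day2_open),
        ("Day 2, 5 p.m.", day2_evening),
    ]


if __name__ == "__main__":
    # Example start date chosen arbitrarily for illustration.
    for label, when in reminder_schedule(datetime(2021, 3, 2, 6, 0)):
        print(f"{when:%a %Y-%m-%d %H:%M}  {label}")
```

Generating the schedule once and reusing it for every group is one way to apply the same reminder timing across focus groups.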

Given the asynchronous format of the AOFGs, it is possible and common for participants to not complete all questions in the interview guide. For example, across seven focus groups in one study, only 38% of participants responded to all items. In another study using three focus groups, over half (53%) responded to all items. We have also found that participants tended to log in on both days of the study or on neither day. In one study with 92 participants, 77 (84%) engaged with the study on both days. The intensity of participation and responsiveness to follow-up questions within focus groups varied. In one study with seven AOFGs, participants averaged 20 responses to the AOFG, ranging from 17 to 24 posted responses by AOFG. In this study, participants responded to an average of 80% of all follow-up questions, ranging from 60% to 90% by AOFG.
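Completion figures of this kind can be tallied from an export of which items each participant answered. The following sketch assumes a hypothetical data layout (a dictionary from pseudonym to the set of item numbers answered) and hypothetical function names; it is not tied to any particular AOFG platform's export format.

```python
def percent(part, whole):
    """Whole-number percentage, e.g. 77 of 92 participants."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return round(100 * part / whole)


def completion_rate(answered_by_participant, n_items):
    """Percentage of participants who responded to every item.

    answered_by_participant: dict mapping pseudonym -> set of item numbers
    the participant answered (hypothetical export layout).
    """
    complete = sum(
        1 for items in answered_by_participant.values() if len(items) >= n_items
    )
    return percent(complete, len(answered_by_participant))


if __name__ == "__main__":
    # The two-day engagement figure reported in the text: 77 of 92.
    print(percent(77, 92))
```

The same `percent` helper reproduces the reported two-day engagement figure: 77 of 92 participants rounds to 84%.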

Discussion

Recommendations.

Several considerations emerged throughout our use of AOFGs for data collection among HCPs. First, the removal of geography as a barrier to focus group participation is a well-established advantage of online data collection (Ferrante et al., 2016; Moore et al., 2015; J. M. Strickland et al., 2007). Our studies included geographically dispersed participants and research teams; thus, the removal of geography as a factor in data collection was essential.

Second, in our experience with AOFGs, focus group attrition happened at multiple points in the AOFG process. To ensure adequate focus group size, we overrecruited at every stage of recruitment. Attrition is a well-established barrier within traditional focus groups, and we agree with previous research, which recommends overrecruiting, using multiple reminders, and offering incentives (Morgan, 1995). Consistent with previous research (O. L. Strickland et al., 2003), participation within online methods varies widely. In our experience, engagement had to be carefully monitored and encouraged with email reminders and prompts to ensure participation. Studies on AOFGs recommend enhancing engagement by asking participants to post a brief introduction, regularly contacting participants throughout the study (Ferrante et al., 2016; Tuttas, 2015), and having moderators respond encouragingly to each comment (Gordon et al., 2021). Researchers have also used the flexibility enabled by online environments for creative data collection methods, including visual stimuli to engage participants dynamically, which resulted in contestation and increased engagement among participants (Moore et al., 2015).

Third, participant interaction across groups varied and likely influenced responses. Participants’ ability to read others’ responses and interact with them is what distinguishes AOFGs from online interviews. Within-group interactions varied across projects. Participants interacted by replying to other participants’ comments, “liking” each other’s comments, using emojis, and entering responses using all capitals and exclamation marks. In addition to explicit interaction, participants in all AOFGs were able to read and consider other participants’ responses or ignore them – the authors have no way of knowing the degree to which participants reflected on other participants’ comments. We believe that the extent to which participants considered previous responses likely varied; thus, participants were likely influenced by the responses of others, but we are unable to measure to what degree. Others have reported that interaction in AOFGs can vary widely, with some groups adopting a culture of interaction while others remain siloed – even within the same study using similar engagement processes (Gordon et al., 2021).

Participant interaction should be differentiated from engagement. AOFG engagement is determined by the percentage of questions answered, replies to moderators, and the length and richness of responses. Interaction, by contrast, was defined in this study as responses made to or referencing a previous response. Despite low interaction within one project in this study (shown in Table 3), the resulting study data was rich. Data richness, defined as data that reveal participants’ thoughts, feelings, intentions, actions, and the context and structures they are embedded within (Charmaz, 2003), is influenced by the interviewer, participant, and topic (Ogden & Cornwell, 2010). For these reasons, the authors believe that more interaction in the AOFGs in this study would have produced different – but not necessarily richer – data. For example, in one study, some participant interactions produced rich data (i.e., long, illustrative, comparative responses) while others did not (e.g., “I second this” and “+1”). While researchers have argued that interaction produces rich data via the surfacing of dissonant viewpoints (Liamputtong, 2011), data produced interactively is not inherently rich. For the projects in this study, our analysis focused on what was said by participants and not how data was produced or the level of interaction (Morgan, 2010). Thus, low levels of interaction were not a driving concern.

Qualitative methods researchers argue that more research is needed to develop methods to measure and analyze interaction and its impact on study data in online group-based data collection methods like AOFGs (Ferrante et al., 2016; Morgan, 2010; Rupert et al., 2017).

This article is limited in three ways. First, this article reflects our experience conducting AOFGs using only one type of software. Researchers using other software platforms may have different experiences. Second, this article specifically sought to explore using AOFGs among HCPs. Although we believe this article is instructive for researchers aiming to employ AOFGs to study HCPs, the applicability of our findings to other groups is limited. Third, we noted AOFG participation rates only among studies in which participants were already engaged. Thus, researchers recruiting for AOFGs among individuals not already engaged in a study will likely encounter lower response rates than those reported above.

Conclusion

AOFGs require special considerations in qualitative research and should be chosen based on the population, research questions, study resources, and content area. It is in the interest of researchers using AOFGs and other online methods to use them carefully and thoughtfully, and to view them as one of many ways of collecting data rather than as a direct replacement for in-person methods. Through these projects, we found that AOFGs are an effective and appropriate method of data collection that is well suited for collecting rich qualitative data from busy healthcare providers. These recommendations can be used as considerations and discussion points for studies using AOFGs for data collection among HCPs.

Footnotes

Acknowledgments

The authors would like to thank Gillian Leichtling for editorial contributions and support of this work.

Declaration of Conflicting Interest

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Dr. Livingston is employed as the Medical Director of Health Share of Oregon. This potential conflict of interest has been reviewed and managed by Oregon Health and Science University. Authors Kate LaForge, Dr. Mary Gray, Erin Stack, and Christi Hildebran have no conflicts of interest to disclose.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Institutes of Health, National Institute on Drug Abuse (1 R01 DA047323-01A1).