Abstract

In Australia, graduates of Master of Public Health (MPH) programs are expected to achieve a set of core competencies designed to ensure they will be culturally safe practitioners when working with Indigenous communities. This study reviewed a sample of MPH programs to determine the level of integration that has been achieved since these core competencies were developed. This article focuses on the innovative data analysis process used for the reviews. The reviews were undertaken by a national network of leading academics in Indigenous public health, from both Indigenous and non-Indigenous backgrounds. As each review team consisted of different members from the network, there was a need to ensure consistency in the data analysis process across all the reviews. The researchers chose to use the Leximancer V4 qualitative data analysis software tool to enhance the validity of the study outcomes. One limitation of this approach was that the Indigenous voice was underrepresented in the output from the software tool; hence, a manual thematic analysis was subsequently applied to the discussion threads to identify themes within the findings. By combining the conceptual and thematic analyses, the research team was able to bridge the gap created by the weaknesses of the two data analysis methods and incorporate both Indigenous and non-Indigenous worldviews into the interpretation of the findings, while maintaining consistency throughout the review process.

What Is Already Known?

Qualitative data analysis software programs have been designed to enable researchers to better manage large data sets. To date, the purpose for which the Leximancer software has predominantly been used in qualitative research is to draw out concepts from the text according to frequency, often limiting outputs to the key concepts that are already the focus of the research and underrepresenting voices of minority groups in the sample.

What This Paper Adds?

We therefore used the tool to explore the connectivity of the key words with less frequently occurring concepts and thematically analyzed the discussion threads generated within the software program. In so doing, the researchers successfully related both Indigenous knowledge systems and Western worldviews to the interpretation of the results, enhancing the reliability of the outcomes.

The National Curricula Review of Core Indigenous Public Health Competencies Integration into Master of Public Health (MPH) Programs was undertaken by members of the Public Health Indigenous Leadership in Education (PHILE) Network. This Network comprises a group of leading academics who conduct research into and teach Indigenous public health content, primarily in Australian MPH programs. Although the two project management staff from the leading universities are non-Indigenous, the members of PHILE, including the research team who undertook this work, are predominantly of Aboriginal and Torres Strait Islander or Māori descent. The reviews were conducted at eight universities around Australia (including the pilot) using a qualitative study design that incorporated a series of action research cycles, described in detail elsewhere (Lee, Coombe, & Robinson, 2015). The study was designed to identify the extent to which, and the ways in which, Australian Indigenous public health competencies (Genat, Robinson, & Parker, 2009) have been integrated into MPH program curricula, in order to identify models of best practice for integrating the competencies and how integration of the competencies could be improved. There is little existing published work concerning the theory or practice of integrating competencies in curriculum design, the section of our project we describe here.

Qualitative research is undertaken in natural or social settings in an attempt to make sense of phenomena through the meanings that the participants themselves bring (Denzin & Lincoln, 2011; Rossman & Rallis, 2003), in this case the multiple perspectives of people using the Indigenous public health core competencies in their teaching in MPH programs, within their own universities. This research did not seek to take the perspective of knowing what is best for integrating Indigenous health competencies into curriculum; rather, it sought to learn from the experiences of individuals integrating the competencies. One of the major challenges intrinsic to this project was the need to manage a number of differing philosophies, including Indigenous and Western knowledge systems and worldviews, objective and subjective interpretation of data, qualitative and quantitative research methodologies, and manual versus software-assisted coding.

Data analysis software programs for numerical data have been available for use since computers became widely available, and some years later, programs were developed to help organize text data (Gilbert, Jackson, & di Gregorio, 2014). These qualitative data analysis software (QDAS) programs have become increasingly sophisticated and can automatically generate output with little programming expertise on the part of the user or understanding of the process they are asking of the QDAS. While these are sometimes viewed as analysis packages, they do not generate output (results) without some programming by the user, who in turn needs to know something about the content of their data and how the data should be handled (Gilbert et al., 2014).

This article focuses on the method used to analyze the interview transcripts from the MPH reviews. The data generated from this study included 50 transcribed interviews. Due to the quantity of data, and because the researchers were not colocated and came from both quantitative and qualitative disciplines, we decided to use a QDAS package to assist in data organization and analysis, with electronic communication and data storage to assist in communication of results. The software package chosen was Leximancer, and this article outlines the rationale for this choice and the strengths and limitations of the package as it relates to the methods chosen for our study. Because Leximancer is a lesser known program that analyzes data differently from other products on the market, this article makes a valuable contribution to the literature on QDAS programs.

Incorporating Differing Knowledge Systems

The overall aim of our project was to examine models and levels of integration of Indigenous content within the context of curricula that are delivered through the mainstream education system. This study was therefore designed to acknowledge both Indigenous and Western knowledge systems.

Indigenous content necessarily needs to be informed by Indigenous knowledge, which encompasses the fundamental nature of Indigenous peoples: their culture, values, beliefs, and experiences (Denzin, Lincoln, & Smith, 2008; Nakata, 2007). Developing and evaluating Indigenous content in curricula therefore requires inclusion of Indigenous scholars, who understand Indigenous knowledge systems (Denzin et al., 2008; Morseu-Diop, 2008; Nakata, 2007). Even though the Indigenous researchers understand their Indigenous knowledge systems as they apply to them, for this study, the Indigenous and non-Indigenous researchers were at times not validating content that had been constructed by Indigenous scholars, but rather were critiquing content that had been developed within a Western knowledge construct to teach about Indigenous culture in a public health context, content potentially perpetuating colonialist ontologies and epistemologies (Fredericks, 2008; Nakata, 2007), due to the lack of scholarly publications by Indigenous academics in their specific fields of expertise. As Fredericks (2008, p. 27) argues, the role of the Indigenous researcher is “to speak back to the knowledges that have been formed around what is perceived as Indigenous positionings within Western worldviews.” This research therefore needed to provide an opportunity for the Indigenous researchers to decolonize and reposition Indigenous knowledge within the academy (Nakata, 2007; L. T. Smith, 2013).

Nevertheless, we also anticipated that the majority of participants in the study were likely to be non-Indigenous public health academics. It has been acknowledged that Indigenous scholars are currently underrepresented within academia, and while teaching of Indigenous health content should ideally be performed by Indigenous educators, there remains a need for a shared responsibility for this teaching (Behrendt, Larkin, Griew, & Kelly, 2012; Department of Health, 2014; Universities Australia, 2011). It was also acknowledged that many of the non-Indigenous academics would have been assigned responsibility for integrating content to support development of the required graduate competencies, particularly in institutions that had insufficient Indigenous academics to provide this teaching, and that they were generally well-intentioned in their efforts.

To ensure a culturally safe environment whereby both worldviews and contexts were respected, for both interviewers and those being interviewed, each review team therefore consisted of at least one Indigenous and one non-Indigenous researcher. Table 1 provides a summary of the teams undertaking each of the reviews according to their cultural backgrounds. In total, seven Indigenous and two non-Indigenous researchers have been involved in the study and subsequent data analysis and publication of the research.

Table 1. Team Members Undertaking Each of the Reviews.

This approach enabled the researchers to effectively embrace the perspectives and value the contributions of both Indigenous and non-Indigenous participants in the process, in a manner that has recently been conceptualized in Canada as two-eyed seeing (Iwama, Marshall, Marshall, & Bartlett, 2009). As Martin (2012, p. 24) explains: “Two-eyed seeing holds that there are diverse understandings of the world and that by acknowledging and respecting a diversity of perspectives (without perpetuating the dominance of one over another) we can build an understanding” that expands our existing knowledge. It acknowledges that both Indigenous and Western worldviews are important and that, when considered in combination, the different perspectives each offers can be respected and valued and can even provide insight where common ground occurs between the two knowledge systems. Solutions for addressing situations may then come from either or both, as they appear most beneficial to the circumstances.

By applying this approach in our research, we aimed to consider the circumstances of each institution from both perspectives to identify and acknowledge examples of best practice whether they were being implemented by Indigenous or non-Indigenous staff. Equally, we aimed to make recommendations for improving integration and decolonization of Indigenous health content based on the lens and worldview of the Indigenous researchers, while also being sympathetic to the challenges of delivering such curricula in the Western education system, often by well-intentioned non-Indigenous academics, with the aim that graduate outcomes would be improved.

Research Rigor

By the same token, our team brought both qualitative and quantitative research expertise and their respective lenses to the project. In her discussion about two-eyed seeing as a framework for Indigenous health research, Martin (2012) makes the argument that decolonizing research, or arguably in our case research that aims to decolonize translation of knowledge through curricula delivery, may include either qualitative or quantitative methods. She emphasizes that the critical interrogation of how methods are used is vital to a decolonizing approach. While our study was qualitative, in line with the two-eyed seeing approach, we considered the perspectives of researchers from both epistemologies in thinking about our study design.

Some qualitative researchers argue that diverse perspectives and conclusions are valuable (Patton, 2002) and that the artfulness of qualitative inquiry, which is grounded in finding meaning from human experiences, is counterintuitive to scientific rigor (Bochner, 2018). However, we acknowledged that the trustworthiness of qualitative research (Guba & Lincoln, 1989) is nevertheless an ongoing concern (Morse, 2015), particularly in relation to analysis (Nowell, Norris, White, & Moules, 2017). We aimed to ensure methods used within our study were rigorous or trustworthy. Especially from a quantitative viewpoint, a criticism of qualitative research is associated with coding decisions that are perceived to threaten reliability and validity (Jackson & Trochim, 2002; Krippendorff, 1980). Reliability is based on three components: stability is dependent on coders’ ability to replicate their coding of data at different times; reproducibility is reliant on consistent processes being followed irrespective of the time, location, and coders; and accuracy is achieved when human error and disagreements between researchers are reduced or eliminated (Jackson & Trochim, 2002; Krippendorff, 1980; Stemler, 2001). In our research, we needed to reduce the diversity of approaches across the research team to ensure programs were evaluated consistently.

Jackson and Trochim (2002) argue that reliability can be improved by applying statistical analysis to produce concept mapping, so that analysis is more data-driven and less dependent on researcher decisions about coding categories. The team therefore decided to use a concept mapping software tool, Leximancer, to develop the coding schemes for each review, thus removing the potential for human error and inconsistency and increasing the reliability of the analysis (Penn-Edwards, 2010). The initial sorting process was objective and not based on a priori researcher-selected concepts or a process of induction that is not necessarily representative of the diversity in respondent intentions (Jackson & Trochim, 2002), particularly given the differing worldviews we anticipated would be represented in the data and wanted to allow to emerge freely.

Following generation of results output, interpretation and explanation of meaning were required. From a quantitative perspective, research validity is vulnerable due to the reliance on interpretation of meaning by the researcher (Krippendorff, 1980; Miles & Huberman, 1994; Patton, 2002). This vulnerability can be addressed through a range of strategies, including triangulation by incorporating multiple data sources, methods, or investigators (Morse, 2015; Stemler, 2001). In our study, following the initial results output, we compared information collected during the interviews with questionnaire data and curriculum documents, included a minimum of two investigators in each review team, and utilized multiple analysis methods as detailed below.

Choice of Software

The use and availability of QDAS tools are growing. There are a number of programs available, from shareware available on the Internet (Davidson & di Gregorio, 2011) to complex programs such as NUD*IST and its stablemate NVivo (Gilbert et al., 2014). The way these programs handle data varies, but most involve being programmed to search for words or strings of text, which generates output enabling the researcher to return to the block of text where the text string (or other qualitative data such as a photograph or a line of music) is located. Leximancer is a recent addition to the QDAS stable, the use of which is increasing (Cretchley, Rooney, & Gallois, 2010b; Douglas, 2010; Sotiriadou, Brouwers, & Le, 2014).

Leximancer Functions

Commonly used QDAS programs such as NVivo require the researcher to engage more in the analytical process by administering the structure, style, and coding patterns from the outset (QSR International, 1999–2014; Sotiriadou et al., 2014), which it has been argued can create unnecessary bias (Cretchley et al., 2010b). In addition to the ability to manually code data, the Leximancer program provides an option to automatically generate an output without researcher manipulation (A. E. Smith, 2000, 2003; A. E. Smith & Humphreys, 2006; Sotiriadou et al., 2014). Hence, Leximancer suited this research, as there were different research teams involved in the collection and analysis for each of the reviews, so the automatic coding was preferable to increase reliability. It also meant that a priori coding categories were not required, allowing for different contextual factors within each review to be accommodated while ensuring the process for analysis was consistent.

The Leximancer software program was used primarily to identify discussion threads that could be drawn out of the text (A. E. Smith, 2005). However, we found that although the Leximancer program is a useful tool for content analysis, it was also necessary to conduct a manual thematic analysis to add depth to the interpretation of the results and to ensure that learnings key to the study were not missed by the software-assisted analysis. Furthermore, this approach enabled the team to balance a number of opposing paradigms within the construct of the study, in particular the incorporation of the differing worldviews of the researchers, and to ensure rigor and validity for the study outcomes.

The PHILE Network includes individual researchers with qualitative, quantitative, and mixed methods expertise. We therefore needed a form of analysis that could be used, understood, and considered appropriate by the whole team. The use of Leximancer’s algorithm program to sort and search the data and produce frequency tables (Cretchley, Gallois, Chenery, & Smith, 2010a; A. E. Smith, 2003; Sotiriadou et al., 2014) was a process understood by the quantitative researchers, and the visual representation of the concepts and concept clusters generated from the data provided a clear structure for the analysis that the whole team could follow.

The initial output generated by Leximancer comprises concept maps. Concepts generated by the software analysis consist of collections of key words that have traveled together throughout the text data (A. E. Smith, 2000, 2003). The visually brighter the concept names, the more frequently they are coded within the text. Leximancer output also includes tabulations of the frequencies and percentages of the links between concepts. The higher the connectivity, the warmer the color of the concept label (red and orange); as the connectivity percentage decreases, the colors become cooler (green and blue).
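To make the idea of concept frequencies and link percentages concrete, the following Python sketch counts how often concepts appear, alone and together, in a set of text segments. It is an illustration only and is not Leximancer's algorithm (for which see A. E. Smith & Humphreys, 2006). The function names `cooccurrence` and `link_percentage`, the whole-word matching, and the toy segments are all assumptions introduced here.

```python
from collections import Counter
from itertools import combinations


def cooccurrence(segments, concepts):
    """Count the segments containing each concept and each pair of concepts."""
    concept_counts = Counter()
    pair_counts = Counter()
    for segment in segments:
        # Concepts present in this segment (simple whole-word match, an assumption).
        present = sorted(set(segment.lower().split()) & set(concepts))
        concept_counts.update(present)
        # Pairs are stored in alphabetical order so (a, b) and (b, a) are one key.
        pair_counts.update(combinations(present, 2))
    return concept_counts, pair_counts


def link_percentage(pair_counts, concept_counts, a, b):
    """Percentage of the segments containing `a` that also contain `b`."""
    if concept_counts[a] == 0:
        return 0.0
    pair = tuple(sorted((a, b)))
    return 100.0 * pair_counts[pair] / concept_counts[a]


# Toy segments, invented for illustration only.
segments = [
    "indigenous health content",
    "indigenous health students",
    "public health course",
    "indigenous students",
]
concept_counts, pair_counts = cooccurrence(
    segments, {"indigenous", "health", "students"}
)
print(concept_counts.most_common())
print(link_percentage(pair_counts, concept_counts, "students", "indigenous"))
```

A table built this way ranks concepts purely by how often they occur, which is why a concept raised by only a few participants can sit low in the output even when it matters to the research question.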

The concept map can be developed either automatically or manually; both processes produce a visual representation of the concepts identified in the data (A. E. Smith, 2000, 2003; A. E. Smith & Humphreys, 2006). Automatic concept generation is achieved by uploading the data and pressing an autogeneration button, thereby producing an automated concept map (A. E. Smith, 2005). The manual approach involves programming the Leximancer software tool through the different stages to generate the concept map according to key words that relate to areas under examination (A. E. Smith, 2005).

Connectivity pathways can also be created by the researcher by clicking on a beginning and an end point concept to explore possible relationships between the chosen concepts. This manual application allows the researcher to produce queries (using participants’ spoken words from the interview transcripts) from the in-text data. In this way, the researcher can extract the data where the software has identified “relationships between concepts, and allow(s) structure(s) in the data to emerge based on co-occurrences of words, rather than imposing researcher bias in the form of preconceived thematic categories” (Jackson & Trochim, 2002, p. 310).
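The notion of a pathway between a chosen start concept and end concept can be pictured as a path through a graph whose nodes are concepts and whose edges are links between them. The sketch below finds the shortest such chain with a breadth-first search. How Leximancer itself selects a pathway is not described in this article, so the search strategy, the function name `pathway`, and the toy links are assumptions made purely for illustration.

```python
from collections import deque


def pathway(links, start, end):
    """Return the shortest chain of linked concepts from start to end, or None."""
    # Build an undirected adjacency map from (concept, concept) pairs.
    neighbours = {}
    for a, b in links:
        neighbours.setdefault(a, set()).add(b)
        neighbours.setdefault(b, set()).add(a)

    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == end:
            return path
        # Sorted so that the result is deterministic when several routes tie.
        for nxt in sorted(neighbours.get(path[-1], ())):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None  # the two concepts are not connected


# Toy links between concepts, invented for illustration only.
links = [
    ("Indigenous", "health"),
    ("health", "public"),
    ("public", "course"),
    ("course", "year"),
    ("Indigenous", "students"),
]
print(pathway(links, "Indigenous", "year"))
```

Once a chain of concepts has been identified, the text segments in which adjacent concepts co-occur are the natural place to look for the participants' own words.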

Traditional Uses of Leximancer

As Gilbert, Jackson, and di Gregorio (2014) point out, the use of any QDAS package needs to be capable of appropriately assisting in answering the research questions. To answer our research questions, we needed to explore how the various concepts in our data linked together, in what ways they linked, and how strong the links were. Leximancer is a package specifically built to assist in such an analysis.

Researcher use of Leximancer has changed over time. As shown in Table 2, the tool has historically been used to identify key concepts, explore ranking and strengths of identified concepts, and investigate relationships between concepts. Only recently, in a grounded theory study (Harwood, Gapp, & Stewart, 2015), has the program been used to create pathways that explore the connectivity between concepts. The exploration of connectivity pathways was similarly used in this research, as outlined below, but apparently much more extensively than in that study, which was seemingly limited to the exploration of one connectivity pathway between the two most dominant concepts.

Table 2. Examples of Studies Using Leximancer.

How We Used Leximancer in Our Project

The University of Melbourne Human Research Ethics Committee approved our project in 2010 (Approval #1034186.3), with the reviews occurring between 2011 and 2013. All participants were required to provide informed consent prior to participating. Data collection included face-to-face semistructured interviews and focus groups with MPH coordination and teaching staff, supplemented by two questionnaires: one for the MPH coordinator and the other for course/subject/unit coordinators. Curriculum documents were also collected as part of the process to confirm details of content within the curricula discussed during the interviews.

Most members of the review teams participated in a 2-day training program that included specialist Leximancer software training and a tailored workshop designed to familiarize the members with the data analysis process and conduct several practice exercises with different data sets. Each review team consisted of at least one member who had received this training to lead the data analysis component.

Conceptual Analysis

The process used to generate the Leximancer outputs in this project is outlined in Figure 1. Following transcription of the interviews, data were cleaned to remove insignificant words of sentiment (e.g., yeah, laughter) and to deidentify participants according to status of interviewees or facilitators; the cleaned data were then uploaded into the Leximancer software package. Similar words were combined (e.g., singular and plural, Aboriginal and Indigenous, jargon with lay terms) to prevent development of multiple concept seeds with the same meaning. Dialogue tags were applied to the speakers’ identification to prevent participants being generated as concepts. Comments from the facilitators were removed, so that their comments were not included in the concept seeding process. Running the software cycle then created a concept map.
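The cleaning steps described above (removing filler words, merging equivalent terms, and excluding facilitator comments) were carried out before and within Leximancer. As a rough illustration of what such preprocessing involves, the Python sketch below applies the same three steps to lines of the form `SPEAKER: utterance`. The line format, the `FACILITATOR` tag, the word lists, and the function name `clean_transcript` are hypothetical and are not the study's actual settings.

```python
import re

# Hypothetical word lists; the lists actually used in the study are not given here.
FILLERS = {"yeah", "laughter"}
SYNONYMS = {"aboriginal": "indigenous", "students": "student"}


def clean_transcript(lines, facilitator_tag="FACILITATOR"):
    """Drop facilitator turns, strip filler words, and merge equivalent terms.

    Each line is expected to look like 'SPEAKER: utterance'. Lines without a
    colon have no utterance part and are therefore skipped.
    """
    cleaned = []
    for line in lines:
        speaker, _, utterance = line.partition(":")
        if speaker.strip().upper() == facilitator_tag:
            continue  # facilitator comments are kept out of concept seeding
        words = re.findall(r"[a-z']+", utterance.lower())
        kept = [SYNONYMS.get(word, word) for word in words if word not in FILLERS]
        if kept:
            cleaned.append(" ".join(kept))
    return cleaned


# Toy transcript, invented for illustration only.
transcript = [
    "FACILITATOR: Tell me about the course",
    "PARTICIPANT: Yeah, Aboriginal students enrol",
]
print(clean_transcript(transcript))
```

Because the speaker label is split off before the text is tokenized, participant identifiers never reach the word list, which mirrors the purpose of the dialogue tags described above.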

Figure 1. Leximancer processing stages used in this study.

For the purpose of this article, and to demonstrate the method used for each of the individual reviews, the interview transcripts were collectively uploaded into the software package using the same process as undertaken to produce the seven previously published review reports. The software produced the list of concept clusters contained in the text in order of frequency, as shown in Table 3.

Table 3. Concept Clusters According to Level of Frequency.

All the concept maps from the reviews identified Indigenous and/or health as the most common concept cluster(s), which was anticipated given the focus of the research. How lesser concepts interrelated with the most frequent concepts was therefore of particular interest in this study, not merely the frequency of the other concepts. The next step was to examine the details of the connectivity threads that could be manually generated by linking the most frequent concepts. The resulting connectivity pathways were generated using the above table: Indigenous—health—different—work—people; Indigenous—health—public—look; Indigenous—students; Indigenous—health—public—subject; Indigenous—health—public—content; Indigenous—health—public—time; Indigenous—health—public—course—research—whole; and Indigenous—health—public—course—year.

Notably, the last two connectivity pathways, which follow through to the concepts of whole and year, absorb the connectivity pathway Indigenous—health—public—course. The concept map with the Indigenous—whole discussion thread is illustrated in Figure 2.
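The sense in which a longer pathway absorbs a shorter one can be expressed as a prefix check: a pathway is absorbed when another pathway begins with the same sequence of concepts and continues beyond it. The short Python sketch below applies that check to the eight pathways reported above. The list representation and the function name `absorbing_pathways` are assumptions made for illustration and are not part of the Leximancer output.

```python
# The eight connectivity pathways reported in the text, as lists of concepts.
PATHWAYS = [
    ["Indigenous", "health", "different", "work", "people"],
    ["Indigenous", "health", "public", "look"],
    ["Indigenous", "students"],
    ["Indigenous", "health", "public", "subject"],
    ["Indigenous", "health", "public", "content"],
    ["Indigenous", "health", "public", "time"],
    ["Indigenous", "health", "public", "course", "research", "whole"],
    ["Indigenous", "health", "public", "course", "year"],
]


def absorbing_pathways(shorter, pathways):
    """Return the pathways that begin with `shorter` and extend beyond it."""
    n = len(shorter)
    return [p for p in pathways if len(p) > n and p[:n] == shorter]


absorbed = ["Indigenous", "health", "public", "course"]
for path in absorbing_pathways(absorbed, PATHWAYS):
    print(" > ".join(path))
```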

Figure 2. Indigenous—whole connectivity pathway in concept map.

Using the connectivity pathway outputs from the software, the quotes from the transcripts related to each discussion thread were then extracted from each pathway query. For example, one of the quotes drawn from the Indigenous—students discussion thread describes the cohort at one of the universities as consisting of non-Indigenous students who have an interest in Indigenous public health.

I think one of the niches for us that works is that, although there’s not a lot of Indigenous students doing the MPH, the people who do the MPH come out with a solid foundation in public health and Aboriginal health…because it is already in the core units.

When you’re teaching topics, because of the nature of…

When we reaccredited for this…

Thematic Analysis

The output from the Leximancer analysis was subsequently analyzed manually to provide contextual interpretation for the results (Denzin & Lincoln, 2011) and apply the two-eyed seeing approach to this interpretation process. As this article is concerned with reporting the method used, rather than the results of the study, we have not conducted a thematic analysis of the collective results here. However, for each individual review, quotes extracted from the connectivity pathways were grouped by the researchers, according to subthemes. In this way, the researchers were able to use their insights into Indigenous public health education to generate an understanding of the computer-generated data. The quotes above were categorized in their respective review reports as follows.

Indigenous health practitioners, in a section that discussed features of the student cohort within the MPH program alongside additional subthemes of Indigenous students and student researchers;

Focus on social determinants, in a section that relates to the place and type of Indigenous content and discussion of the structural issues of Indigenous content within the program, together with the subthemes of Indigenous health as a core, integration of Indigenous health, exception for three specializations, resourcing for review of content, content links between subjects, and choice of topics; and

Integration process, in a section referring to recent changes to the curriculum and how the changes were achieved. The section also included the subthemes of competency integration, curriculum consolidation, and curriculum specialist.

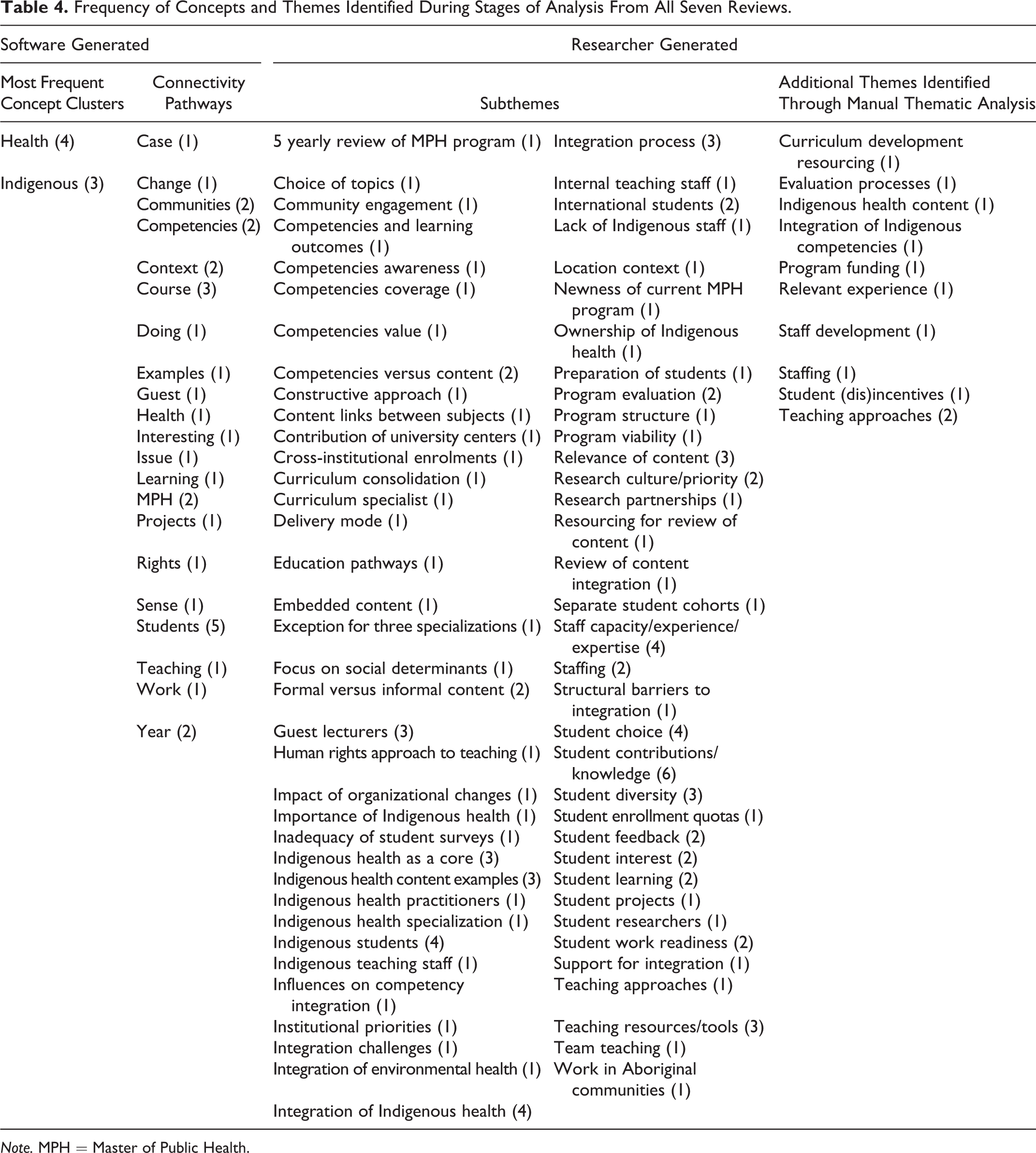

A manual review of the transcripts was also conducted to make sure that key learnings had not been missed by the computer-aided analysis. Table 4 provides a summary of the concept clusters and connectivity pathways generated during the conceptual analysis and the subthemes and additional themes identified by the researchers in the manual thematic analysis, according to the frequency with which they occur across the seven published review reports.

Table 4. Frequency of Concepts and Themes Identified During Stages of Analysis From All Seven Reviews.

Note. MPH = Master of Public Health.

Discussion

One of the key reasons for using computer-aided analysis was to ensure a reliable process was followed for each of the different reviews. This aim was certainly accomplished as each review report followed the same structure and achieved a consistent standard irrespective of the different teams generating the reports. All published review reports are available at http://www.phile.net.au/publications.

A common criticism of computer-aided analysis is its lack of context (Fielding & Lee, 2002), yet this was not our experience. The variation of concepts identified using Leximancer-developed connectivity pathways, summarized in Table 4, illustrates this. While some key concepts such as students, courses, MPH, and competencies were common across reviews, there were numerous concepts identified that were unique to each review, which was indicative of the varying local contexts informing the content of the reviews. The team agreed that the Leximancer output of research concepts was an accurate reflection of their individual interpretation/recollection of the review interviews, demonstrating the strength of the research process.

Similar to the experience of other researchers using Leximancer (Watson, Smith, & Watter, 2005), we also found that concepts were identified that the researchers may not have coded had the process been limited to a manual thematic analysis. The rights concept included in Table 4 is a case in point; the researchers on this particular review would not have coded rights as a theme when examining issues around curriculum integration, yet teaching based on a human rights approach was a key concept that emerged through the Leximancer analysis at one of the universities.

However, the Leximancer program was not without its limitations. As already outlined, there was still a need for the researchers to provide contextual interpretation of the data, evidenced by the number of subthemes generated from analysis of the pathway outputs. In some of the reviews, a limited number of themes were also missed by Leximancer and picked up by the researchers in the subsequent manual analysis. This highlighted for us the need for the researcher to engage with the data, irrespective of the assistance provided by, and benefits gained from, utilizing QDAS for data analysis. Arguably, the number of subthemes could have been reduced had an a priori coding scheme been used. For example, the focus on social, political, and cultural determinants and the human rights approach to teaching could have been included under the broader teaching approaches subtheme. However, this oversight in the iterative process only became evident in hindsight.

In particular, the Leximancer tool made it difficult to ensure that the Indigenous voice was heard. Given the two-eyed seeing approach utilized, which is predicated on neither worldview dominating, and the likely need to decolonize the Indigenous public health curricula content being examined in the research, this was particularly problematic. Contributing to this dynamic was the larger number of non-Indigenous interviewees, which potentially skewed the data based on the frequency of concepts discussed, preventing individual case reporting (Penn-Edwards, 2010). For example, in one review, there were 15 non-Indigenous participants and 1 Aboriginal participant, and there were some reviews with no Aboriginal and Torres Strait Islander participants. A computer program cannot rectify this kind of structural invisibility, which was therefore a barrier that the researchers needed to address through the subsequent thematic analysis. Being aware of this limitation within the Leximancer process, the researchers were able to identify the Indigenous worldview within the manual thematic analysis.

In the aforementioned review with the single Aboriginal participant, this interviewee was discussing broad themes such as engagement, participation, and creating change and describing a different way of working, the importance of collaborative relationships, complementary clinical practice, traditional (Aboriginal) medicine, and challenging the dominant Western mainstream constructs of health care and health service delivery, policy, power and control, and the international context. However, the Leximancer connectivity pathways linked a series of key words including health, unit, teaching, and change. The data generated within this connectivity pathway were drawn from comments made by a non-Indigenous participant, describing a perceived transferability of skills from working with culturally and linguistically diverse minority groups to the Aboriginal health arena. Although the Aboriginal interviewee was willing to acknowledge that there are transferable skills or techniques that would be beneficial for working in an Aboriginal community context, the examples outlined by the non-Indigenous participant are in no way appropriate as adequate work experience in an Indigenous health context or to create systematic change and increase positive outcomes for Aboriginal and Torres Strait Islander patients. This instance highlighted for us the risk that the views of the majority can essentially obstruct or potentially quash more informed minority voices when a large number of willing and keen, however naive, individuals are interviewed, if the researcher is not engaged in and checking the data generated by a QDAS.

Equally, this was an example of how the two-eyed seeing approach enabled us to engage with the data from both the Indigenous and Western worldviews to interpret the situation and make recommendations for curriculum changes accordingly. This was achieved through a team approach, whereby each review team sat together and applied the two-eyed seeing approach to the analysis, sharing their understandings and avoiding any ambiguity in the process of interpretation. Furthermore, as Indigenous ways of doing and knowing are often shared orally (Bessarab & Ng’andu, 2010), this approach enabled us to combine Indigenous ways of doing research with Western research methodologies, further strengthening the two-eyed seeing process (Martin, 2012) and contributing to decolonization of the research (L. T. Smith, 2013). An unanticipated benefit recognized through this study was the visual representation of the data by the Leximancer program. The outputs were useful in improving interpretation, given that our research teams consisted of individuals from diverse backgrounds and worldviews. The visual representation was particularly useful for decolonizing the research knowledge transfer process: recognizing that Indigenous peoples commonly share a visual learning style (Hughes, Williams, & More, 2004), the research team prefer to represent knowledge in visual forms.

A further weakness in the process was that the computer-aided analysis was limited to the interview transcripts. Curriculum documents were used for triangulation, to confirm details referred to in the interviews, and to confirm the location of Indigenous health content, but they were not analyzed using Leximancer. Supplementary analysis of these documents may have added richness to the research, but this was not considered at the time.

Another reason the Leximancer program was chosen for the initial stage of analysis was its potential applicability to researchers from different methodological paradigms. It was anticipated that the display of data through graphics and a frequency table would enable the team to consider the outputs through both a qualitative and a quantitative lens. This proved to be the case and is an important finding that contributes to the argument for using QDAS.

However, reflections on the process by members of the team who usually undertake quantitative data analysis indicated that the greatest strength of the program was its capacity to make sense of many hours of interview data, an advantage of computer-aided analysis (Jones, 2007). It was noted that without the Leximancer software, the researchers would not have achieved the same depth of analysis in such a timely manner. The advantage of using the software was the ability to commence the critical interpretation stage almost immediately and to write up the findings while the memory of the interviews was fresh.

Conclusion

Computer analysis is often criticized for being context-free (Fielding & Lee, 2002), and it even “potentially alienates the researcher from the data” (Ryan, 2009, p. 142). Furthermore, where QDAS programs produce frequency count output, results can be biased by overrepresented perspectives (Jackson & Trochim, 2002). However, when used appropriately, computer-assisted analysis has several benefits, including enhanced data management, shorter analysis time frames, and more rigorous coding, especially for managing large and multiple data sets (Jones, 2007), and it has the potential to bring increased transparency to the process (Thompson, 2002).

The key to computer-assisted analysis is therefore using it “not as a way to analyze the data, but rather as a way to organize and link it” (Ryan, 2009, p. 144). It is thus only “part of the research process” (Penn-Edwards, 2010, p. 264) that should be complemented by a second stage of human analysis for in-depth and rich interpretation (Ryan, 2009). Regardless of the type of data, researchers need to be able to analyze their data using a “describe, compare, explain” format.

This is precisely the approach we used in our research, utilizing the software program to perform initial coding of the interview transcripts, followed by a manual analysis of the data generated, to provide the contextual interpretation and also ensure that underrepresented views were still captured. This enabled us not only to bring trustworthiness to the process but also to apply a two-eyed seeing approach to interpretation of the data that valued and acknowledged the contributions of both the Indigenous and Western worldviews of all involved.

Acknowledgments

The authors gratefully acknowledge the contributions of members of the Public Health Indigenous Leadership in Education Network, staff from the universities who participated in the reviews, and the valuable advice received from anonymous reviewers in the substantial revision of this manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Australian Government, Department of Health.