Abstract

Background:

Process evaluations are essential to understanding the contextual, relational, organizational, and system factors of complex interventions. Until recently, however, guidance for developing process evaluations for randomized controlled trials (RCTs) has been fairly limited.

Method/Design:

A nested process evaluation (NPE) was designed and embedded across all stages of a stepped wedge cluster RCT called the CORE study. The aim of the CORE study is to test the effectiveness of an experience-based codesign methodology for improving psychosocial recovery outcomes for people living with severe mental illness (service users). Process evaluation data collection combines qualitative and quantitative methods with four aims: (1) to describe organizational characteristics, service models, policy contexts, and government reforms and examine the interaction of these with the intervention; (2) to understand how the codesign intervention works, the cluster variability in implementation, and if the intervention is or is not sustained in different settings; (3) to assist in the interpretation of the primary and secondary outcomes and determine if the causal assumptions underpinning the codesign interventions are accurate; and (4) to determine the impact of a purposefully designed engagement model on the broader study retention and knowledge transfer in the trial.

Discussion:

Process evaluations require prespecified study protocols, but finding a balance between their iterative nature and the structure offered by protocol development is an important step forward. Taking this step will advance the role of qualitative research within trials research and enable more focused data collection to occur at strategic points within studies.

What is already known?

There is widespread agreement on the importance of process evaluations for understanding the contextual, relational, organizational, and systems factors that explain why an intervention does or does not work. Criticisms remain, however, about nonsystematic approaches to data collection and the reporting of qualitative process evaluations as mere illustration. Recent guidance from the Medical Research Council (UK) calls for greater embedding of process evaluation data within the interpretation of outcomes.

What this paper adds?

This paper provides a description of a nested process evaluation design using mixed-methods to inform a cluster randomized controlled trial. It builds on current debates about the need to better systematize process evaluation data collection, analysis and reporting. The protocol details the design and analytical plan for the process evaluation and how the mixed-method data collection and analysis will answer the prespecified aims for the process evaluation.

Introduction

It is accepted wisdom that process evaluations are critical to understanding the implementation and effectiveness (or otherwise) of complex interventions, particularly where there is seldom a clear causal chain (Haynes et al., 2014; Moore et al., 2014). Evaluation data are critical to understanding the settings in which intervention implementation occurred; the required adaptations to interventions; the contextual features of organizations; and the dynamics, social systems, and relationships which may have influenced trial results. Until recently, there has been limited guidance on how to plan, design, analyze, and report process evaluations for cluster randomized controlled trials (CRCTs), individual randomized controlled trials (RCTs), and public health interventions more broadly (Aarestrup, Jørgensen, Due, & Krølner, 2014; Grant, Treweek, Dreischulte, Foy, & Guthrie, 2013; Saunders, Evans, & Joshi, 2005). A key challenge in developing systematic protocols for process evaluations is the need to fit with local contexts and be tailored to the trial, the intervention, and the outcomes being studied (Oakley et al., 2006). Thus, there is natural variability in what is offered.

Increasing efforts have been made to systematize the design, conduct, analysis, and reporting of process evaluations in health research (Mann et al., 2016; Moore et al., 2014). New guidance put forward by the United Kingdom Medical Research Council (MRC) outlines that process evaluations need to move beyond the “‘does it work’ focus, toward combining outcomes and process evaluation data” (Grant et al., 2013; Moore et al., 2014, p. 101). Oakley et al. propose that process evaluations of complex interventions are common, but data are often not collected systematically and are rarely used beyond illustration (Oakley et al., 2006). These new directions indicate a need for the development of process evaluation protocols to accompany traditional RCT study protocols and for equal weight to be given to the prespecification of aims and questions. A key challenge is how to find a balance between the fluidity that complexity and process so obviously warrant and the development of process evaluation aims, questions, and procedures in advance.

Aims and Objectives

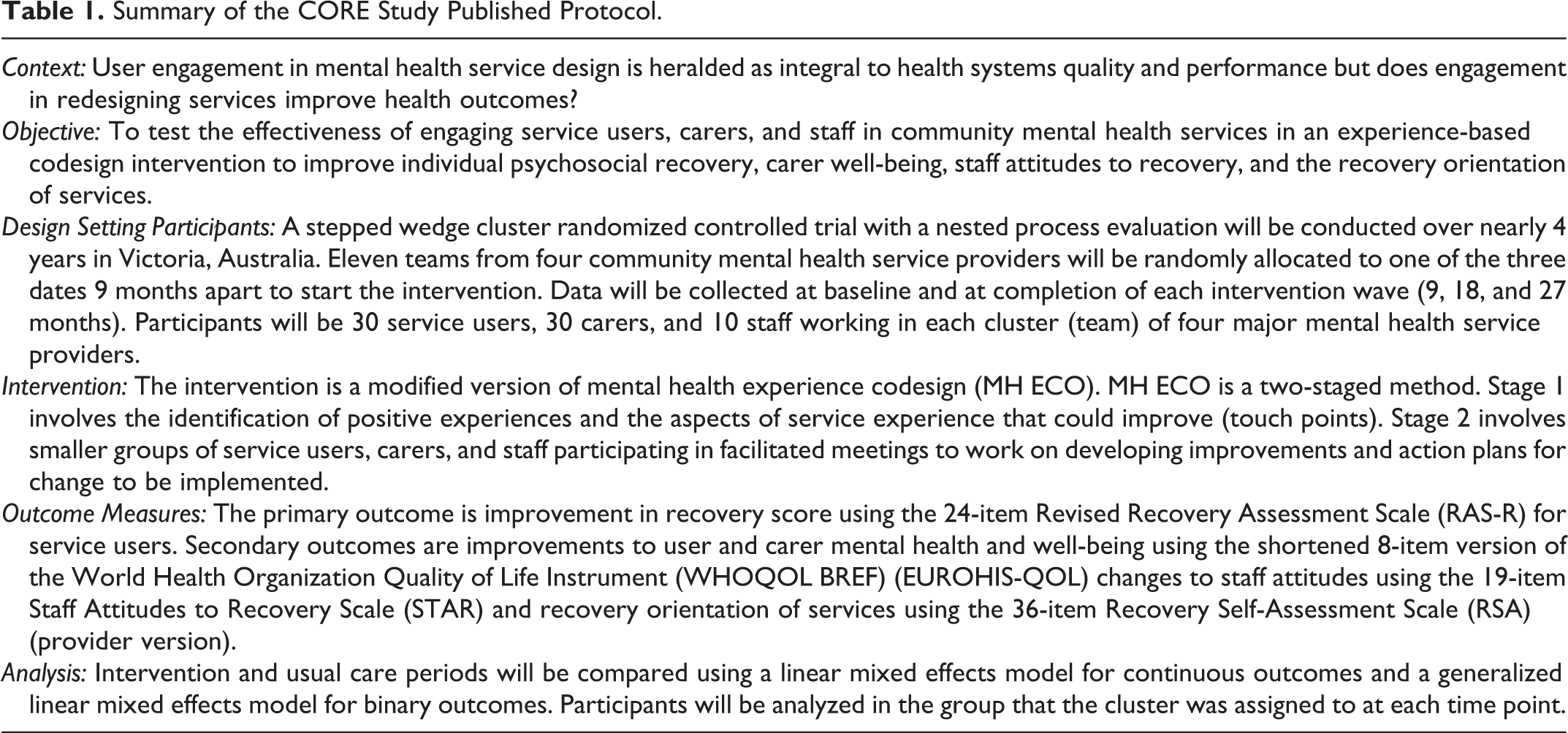

In this article, we present the nested process evaluation (NPE) protocol for the CORE study. CORE is a stepped wedge cluster randomized controlled trial (SWCRCT) of an experience-based codesign (EBCD) intervention for people affected by mental illnesses in the community mental health setting. The full trial protocol for the CORE study and in particular details of the stepped wedge design has been published elsewhere, and the summary details are provided in Table 1 (Palmer et al., 2015).

Table 1. Summary of the CORE Study Published Protocol.

EBCD is a quality improvement methodology that draws on participatory action research (PAR), narrative theory, learning theories, and design thinking (Bate & Robert, 2006, 2007; Donetto, Pierri, Tsianakas, & Robert, 2014; Robert, 2013). PAR methods are premised on a bottom-up approach to data collection and the inclusion of study participants as cocollaborators in the research process, while design thinking refers to the use of principles of good design to inform health-care systems development and improvements. Good design is about the functionality (fit for purpose performance), the safety (good engineering and reliability), and the usability (the interaction with the aesthetics) of a system or service (Bate & Robert, 2006). EBCD brings PAR and design thinking together with an emphasis on experience instead of instrumentality or procedures.

EBCD uses PAR principles to engage those who use services (in the case of CORE, we are referring to mental health services) in the identification of areas for change and codevelopment of solutions; the goal is a better health-care experience. Using a two-staged approach, EBCD involves information gathering about experiences of services using a narrative approach from those who are recipients and carers (referred to as service users herein) to identify the positive aspects of experiences and the areas for improvement (Bate & Robert, 2006, 2007; Fairhurst & Weavell, 2011; Robert, 2013). The second stage is a codesign process where staff and service users (and where possible carers) are engaged in a facilitated learning process to codesign changes based on the low (negative) experiences or areas to be improved identified in Stage 1. EBCD has been undertaken in a number of clinical settings such as head, neck, lung, and breast cancer services; intensive care units; a diabetic clinic; a renal service; fracture and stroke services; and more recently, inpatient and community settings for mental health (Iedema, Piper, Merrick, & Perrott, 2008; Locock et al., 2014a; Piper, Gray, Verma, Holmes, & Manning, 2012; Piper, Iedema, & Merrick, 2010; Robert, 2013). Some evaluation data do exist from these programs, but there have been no trials which have assessed whether EBCD results in improved health outcomes for participants (Donetto, Pierri, et al., 2014). Given the centrality of user involvement in planning, design, and evaluation of services in mental health policy nationally and internationally, establishing whether this involvement results in improved health outcomes is important (Allen, Radke, & Parks, 2010; National Consumer and Carer Forum (NCCF), 2004; Carman et al., 2013; COAG, 2012; DoH, 2014; DoHA, 2013; Fudge, Wolfe, & McKevitt, 2008; Mental Health Consumer Outcomes Task Force, 2012; New Zealand Ministry of Health, 2012; World Health Organization, 2013).
The CORE study is a world-first trial of EBCD methodology in community mental health services and will further examine the effect of service user engagement in redesigning services on psychosocial recovery (Palmer & The CORE Study Team, 2015).

The four aims of the NPE for the CORE study are to: (1) describe organizational characteristics, service models, policy contexts, and government reforms and examine the interaction of these with the intervention; (2) understand how the codesign intervention works, the cluster variability in implementation, and if the intervention is or is not sustained in different settings; (3) assist in the interpretation of the primary and secondary outcomes and determine if the causal assumptions underpinning codesign interventions are accurate; and (4) determine the impact of a purposefully designed engagement model on the broader study retention and knowledge transfer in the trial.

To achieve these aims, Grant et al.’s REAIM framework for evaluating CRCTs was used as a preliminary guide and adapted to formulate the key areas to evaluate (Grant et al., 2013). REAIM is the acronym for five evaluation components: reach, effectiveness, adoption, implementation, and maintenance. These components help to address questions for the dissemination, generalization, and translation of complex interventions into practice. In early work, a major emphasis of REAIM was on adherence to internal and external validity (Glasgow, Vogt, & Boles, 1999). The framework has since expanded beyond this commitment to intervention implementation and delivery to consider broader contextual factors.

Method/Design

The CORE Study Trial Design

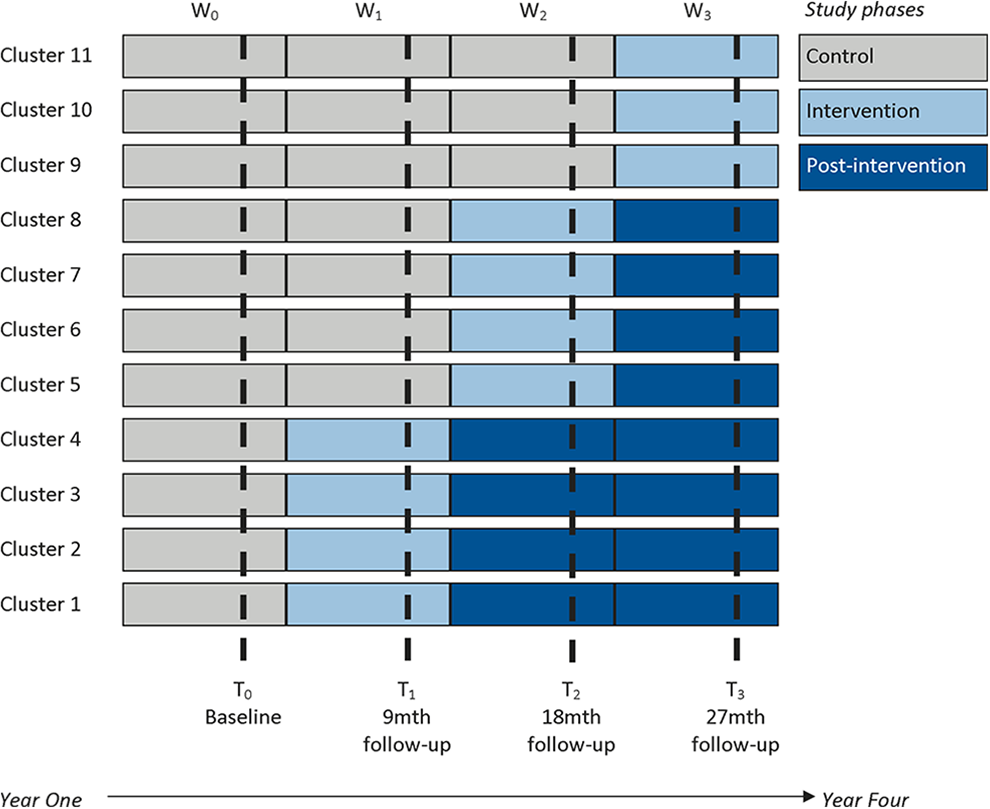

A SWCRCT design has been developed to determine the effectiveness of the EBCD intervention on individual psychosocial recovery outcomes for service users living with severe mental illness (see Figure 1 for an explanation of the design). The trial is overseen by a nine-member advisory and data monitoring committee (ADMC) with expertise across EBCD, psychosocial recovery, RCTs and complex interventions, statistics and health-care systems, and quality improvement and change. The ADMC meets twice a year and is responsible for ensuring trial integrity, safety of participants, and rigor of study design, and for overseeing the NPE for the study. Membership of the committee and the ADMC charter are available from the published study protocol supplementary files (Palmer et al., 2015).

Figure 1. A stepped wedge cluster randomized controlled trial in the community mental health setting. (Figure first published in http://bmjopen.bmj.com/content/5/3/e006688.full.pdf+html)

The EBCD intervention for the CORE study is a modified version of mental health experience codesign (MH ECO) and is explained below. In a stepped wedge CRCT, clusters receive an intervention in steps (or waves). Figure 1 shows the three 9-monthly intervention waves for the CORE study; clusters in waves that have not yet received the intervention act as controls in the stepped wedge design (Palmer et al., 2015).
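The stepped wedge logic described above can be sketched in a few lines of Python. This is an illustration only, not the trial's allocation procedure: the three waves and the 9-month step come from the text, while the rule that wave k counts as exposed from month 9 × k onward, and the function name `stepped_wedge_schedule`, are assumptions made for the example.

```python
# Illustrative sketch only: exposure status per wave at each measurement point.
STEP_MONTHS = 9   # interval between steps, from the text
N_WAVES = 3       # number of intervention waves, from the text


def stepped_wedge_schedule(n_waves=N_WAVES, step_months=STEP_MONTHS):
    """Return (time_points, schedule) for a simple stepped wedge layout.

    Assumption (not from the protocol): wave k counts as exposed from
    month k * step_months onward, and as control before that.
    """
    time_points = [step_months * i for i in range(n_waves + 1)]
    schedule = {}
    for wave in range(1, n_waves + 1):
        crossover = wave * step_months
        schedule[wave] = [
            "intervention" if month >= crossover else "control"
            for month in time_points
        ]
    return time_points, schedule


time_points, schedule = stepped_wedge_schedule()
for wave, statuses in schedule.items():
    print(f"wave {wave}:", dict(zip(time_points, statuses)))
```

Under these assumptions every wave starts as a control and all waves are exposed by the final time point, which is the defining feature of a stepped wedge layout.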

The advantages and limitations of using the stepped wedge design have been outlined in the published study protocol (Palmer et al., 2015). Given that the intervention is delivered into practice settings where the primary population will change because individuals are exited (discharged as recovered in the current service model) or change services, a mixed cohort with cross-sectional design was also selected. This mixed design allows for replenishment of the sample during the follow-up time points (9, 18, and 27 months) to accommodate an estimated 40% attrition rate for service users based on people leaving services and possible withdrawals from the study.
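As a rough worked example of the replenishment arithmetic, the sketch below estimates how many new participants would be needed to return a cluster sample to its target size. The 40% attrition estimate is from the text; the target size of 100, the function name `expected_replenishment`, and the reading of the rate as applying to a single follow-up interval are hypothetical assumptions for illustration.

```python
def expected_replenishment(target_n, attrition_rate=0.40):
    """Expected number of new recruits needed to restore the target sample.

    Assumption (not from the protocol): attrition_rate is applied once to
    the target sample for a single follow-up interval.
    """
    if not 0 <= attrition_rate <= 1:
        raise ValueError("attrition_rate must be between 0 and 1")
    retained = round(target_n * (1 - attrition_rate))
    return target_n - retained


# Hypothetical target of 100 service users in the sample.
print(expected_replenishment(100))
```

With a hypothetical target of 100 and the estimated 40% attrition, about 40 new participants would be needed at that follow-up.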

The Intervention—MH ECO

MH ECO was developed between 2006 and 2011 by the peak consumer (service user) representative agency in Victoria, Australia, the Victorian Mental Illness Awareness Council, and Tandem representing Victorian Mental Health Carers (formerly the Victorian Mental Health Carers Network) in partnership with the Victorian State Government Department of Health & Human Services. The method was piloted in adult public mental health specialist services and adult residential services within the psychiatric disability and rehabilitation support service with positive evaluation results (Fairhurst & Weavell, 2011; Goodrick & Bhagwandas, 2011). Figure 2 illustrates the modified version of MH ECO to be delivered in the CORE study as published in the study protocol (Palmer et al., 2015).

Figure 2. Flowchart of the modified mental health experience codesign intervention. (First published in http://bmjopen.bmj.com/content/5/3/e006688.full.pdf+html)

As previously noted in the published study protocol, the intervention has been shortened to accelerate the information gathering stage (data collected about service experiences), so that participant motivation does not wane and the overall quality improvement cycle is implemented within a 5–6 month time frame (Locock et al., 2014a). In addition, two collaboration group meetings are held instead of the three specified in the original methodology within the codesign phase. In MH ECO, the third collaboration meeting is used to identify any barriers and facilitators to the implementation of the action plans from the codesign meetings. We document this as part of our NPE.

As there was a need to specify parameters around the time for implementation in each service for consistency in the assessment of outcome measures, we decided on two formal collaboration group meetings with a 1-month implementation time frame to action the initiatives. In addition, evaluation data from previous EBCD projects highlight that the implementation of changes from action plans can take up to and beyond 12 months (Adams, Maben, & Robert, 2014; Goodrick & Bhagwandas, 2011; Iedema, Merrick, Piper, & Walsh, 2008; Iedema, Piper, et al., 2008; Piper et al., 2012, 2010). With this in mind, specification of an implementation time frame may also lead to more rapid implementation, which will be monitored within the NPE. The NPE data collection will note variations in the length of time it takes services to make changes, the barriers and facilitators to these changes, and any identifiable links between these issues and the kind of change implemented.

The NPE Design

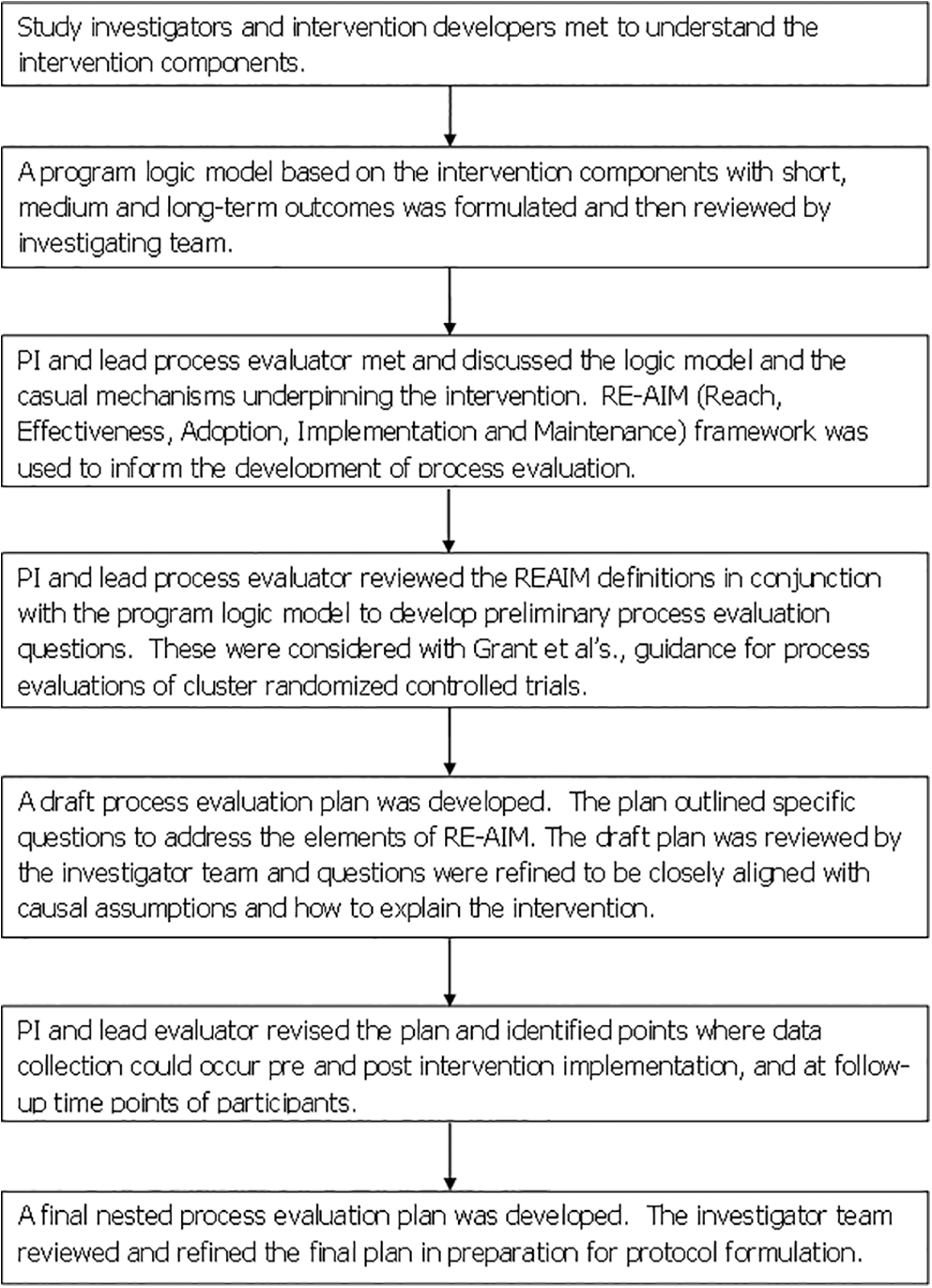

“Nested” refers to the process evaluation running parallel to the trial, with data collection nested within all stages from preimplementation, through implementation, to postimplementation. Figure 3 outlines the stages undertaken to develop the NPE protocol, the use of the UK MRC guidance, and the application of Saunders et al.’s steps in the evaluation planning process (Saunders et al., 2005).

Figure 3. Overview of the design of the nested process evaluation protocol.

As Figure 3 illustrates, the development of our NPE protocol design began with researchers (VP, KG) meeting with the intervention developers and facilitators (WW, RC) to discuss the components of the intervention, its active ingredients, and possible causal assumptions. In this meeting, the group drafted a program logic model with the anticipated short, medium (the proximal outcomes), and long-term outcomes (the distal outcomes) expected from the codesign intervention (Aarestrup et al., 2014). This program logic appears as an appendix and is already published as part of the trial study protocol (see Supplementary File 1). The definitions of REAIM were reviewed and the interpretation of these in the context of the codesign intervention developed (Glasgow et al., 1999). Following this, some preliminary evaluation questions were devised and considered with reference to the causal assumptions underpinning the intervention. Causal assumptions were identified by consideration of the intended outcomes of the intervention and a review of the existing evaluation literature and reports from previous EBCD studies conducted nationally and internationally (Adams et al., 2014; Donetto, Tsianakas, & Robert, 2014; Iedema, Piper, et al., 2008; Locock et al., 2014a, 2014b; Piper et al., 2012, 2010). Once questions were refined, the research team determined the information that would be needed to inform the evaluation and the methods and tools for data collection that could be used. The result of this appears in Table 2 which illustrates how REAIM dimensions have been interpreted for the CORE study NPE, the prespecified evaluation questions, and the information needed to answer the questions.

Table 2. The Nested Process Evaluation Framework for the CORE Study.

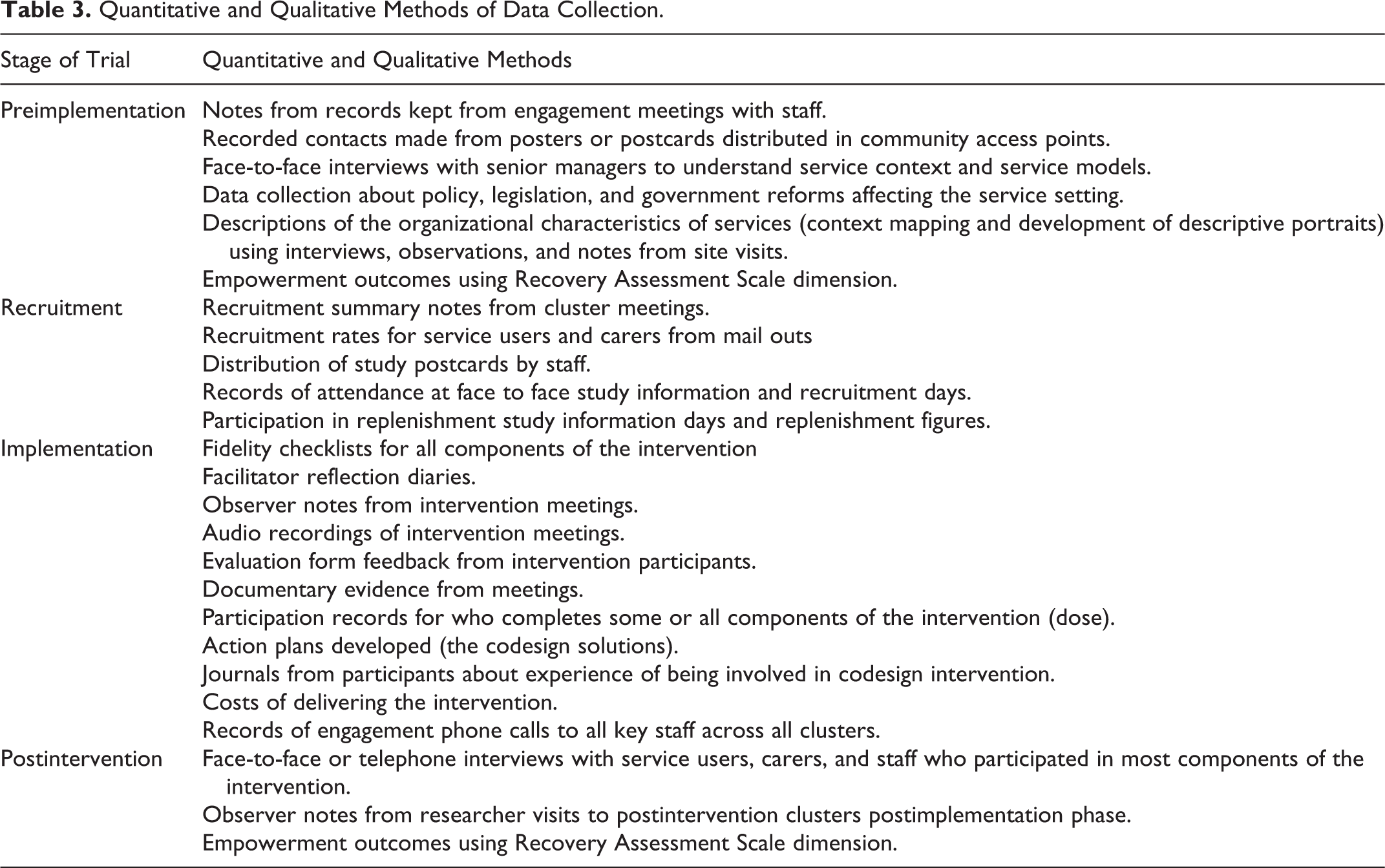

Data Collection

Table 3 details the quantitative and qualitative methods employed in the study to collect data for the NPE. All face-to-face postintervention interviews will be completed by a researcher who is independent of the investigator team.

Table 3. Quantitative and Qualitative Methods of Data Collection.

Data Analysis

Data for the NPE will be analyzed using what Guetterman et al. have termed “a convergent mixed method design.” Convergent methods involve quantitative and qualitative data collection and analysis at similar times, followed by integrated analysis (Guetterman, Fetters, & Creswell, 2015). Descriptive statistics will be used to present quantitative data about reach and the proportions of people within clusters who are recruited to the intervention. Logistic regression will be used to assess the impact of mediating factors and to analyze subgroups regarding effectiveness (Oakley et al., 2006). To explore the causal assumptions between codesign and empowerment, participants’ completed Recovery Assessment Scale–Revised (24 items; Corrigan, Salzer, Ralph, Sangster, & Keck, 2004) “hope and confidence” dimension scores (range 9–45) will be analyzed across all available time points. Hope and confidence are two constructs closely correlated with empowerment, and rather than create additional burden through further data collection for this already vulnerable cohort, we will use the data already being collected to explore this question (Herbert, Gagnon, Rennick, & O’Loughlin, 2009; Rogers, Chamberlin, Ellison, & Crean, 1997). These quantitative data will be analyzed alongside qualitative interview data collected from people who participated in the codesign intervention. The qualitative interview data will provide insights into questions around the adoption and implementation of the intervention and further indications of maintenance issues, including whether the outcomes put forward in the program logic were met. Interview data will be combined with reflective journals from facilitators and observer notes from the research assistants in attendance at intervention meetings. These will be analyzed to identify similarities and differences in perspectives about intervention meetings.
Descriptive portrait material developed for each cluster (service) will be used to inform analyses and interpretations. Analyses will identify if there are statistical or qualitative differences between the people who participated in the codesign process and those who experienced the effects of the intervention at a distance. Contextual, policy, and interview data will all be analyzed thematically, looking for patterns and themes shared within and across groups of participants and clusters that may or may not have affected reach, effectiveness, adoption, implementation, and maintenance.
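To make the quantitative strand concrete, the following Python sketch shows how “hope and confidence” dimension scores could be summed and summarized by time point. It is a minimal illustration, not the study's analysis code: the 9–45 range is from the text, whereas the assumption that the dimension comprises nine items each scored 1 to 5, the function names, and the example responses are all hypothetical.

```python
from statistics import mean

# Assumption: nine items scored 1-5, which yields the stated 9-45 range.
N_ITEMS = 9
ITEM_MIN, ITEM_MAX = 1, 5


def hope_confidence_score(items):
    """Sum one participant's item responses into a dimension score."""
    if len(items) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} item responses")
    if any(not ITEM_MIN <= value <= ITEM_MAX for value in items):
        raise ValueError("item responses must lie between 1 and 5")
    return sum(items)


def mean_score_by_time_point(responses):
    """Map each time point (months) to the mean dimension score.

    `responses` is a dict: month -> list of per-participant item lists.
    """
    return {
        month: mean(hope_confidence_score(items) for items in participants)
        for month, participants in responses.items()
    }


# Hypothetical responses for illustration only.
example = {0: [[3] * 9, [4] * 9], 9: [[5] * 9]}
print(mean_score_by_time_point(example))
```

Validating the item count and range before summing guards against partially completed questionnaires being scored as if they were complete.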

Data analysis will occur at the completion of each intervention wave and be conducted by a researcher independent of the investigator team to avoid bias and contamination in subsequent waves. A subgroup of the advisory and data monitoring committee (ADMC) interested in experience-based codesign and qualitative methods will review each wave of process evaluation data and identify pathways for analysis. At the completion of the intervention, all data from the individual clusters will be combined and analyzed together before the final outcomes analysis for the study is completed. The analysis will address the four aims for the evaluation.

Discussion/Conclusion

Finding a balance between the iterative nature of a complex intervention and the structure offered by protocol development is an important step toward ensuring that process evaluations take a central place in RCTs and CRCTs. Too often, process evaluations are conducted ad hoc and appear to be an afterthought in trials. They result in illustrative data collected unsystematically, without prespecified aims or a clear link to the broader aims of the trial. The advantage of prespecifying the aims and questions for process evaluations is greater efficiency in the use of resources and more targeted data collection, which ensures that meaningful analyses can be reported. The result is a NPE that is apparent across all stages of a trial.

Designing the CORE study process evaluation as nested has ensured that we can ask particular questions that shed light on and advance the body of evaluation work already completed in previous EBCD studies. We can examine closely some of the causal assumptions underpinning a codesign intervention to identify what works for whom, when, and in what circumstances. Grant et al.’s framework for CRCT process evaluations has proved beneficial for identification of the candidate elements, but there is still a need to emphasize the importance of focusing the NPE analyses on the contextual factors that may affect all aspects of the trial and outcomes, on the types of solutions developed, and on examining any links between solution types and maintenance (Adams et al., 2014). It is important to note also that REAIM is one set of candidate elements available for guiding the design of evaluations. Others include the realistic evaluation approach (Pawson & Tilley, 1997) and normalization process theory (Finch et al., 2013; May & Finch, 2009; May et al., 2009; McEvoy et al., 2014; Murray et al., 2010). In this regard, the evaluation of all complex interventions necessitates, we find, greater balance between protocol and process.

Trial Status

The CORE study is registered as a trial with the Australian and New Zealand Clinical Trials Registry, Trial ID: ACTRN12614000457640. Registration Title: The CORE Study: A SWCRCT to test a codesign technique to optimize psychosocial recovery outcomes for people affected by mental illness in the community mental health setting. Date Registered 01/05/2014; Start Date 30/06/2014.

Footnotes

Authors’ Note

Trial Registration: Australian and New Zealand Clinical Trials Registry ACTRN12614000457640.

Acknowledgments

In addition to the authors listed, the CORE study is dependent on the commitment provided by the Mental Health Community Support Services partners in the project and the staff, service users, and carers of these services. The research team acknowledges the ongoing work of the Victorian Mental Illness Awareness Council (VMIAC) and TANDEM representing Victorian mental health carers in their development of the original mental health experience-based codesign methodology (MH ECO). Ms. Konstancja Densley is responsible for data management. Statistical design and analysis is led by Dr. Patty Chondros. The research team acknowledges the guidance provided by the members of the advisory and data monitoring committee (Dr. Hilary Boyd, Professor John Carlin, Professor Judith Cook, Ms. Karen Fairhurst, Ms. Jane Gray, Dr. Lynn Maher, Professor Glenn Robert, Assistant Professor Rob Whitley, and Professor Sally Wyke).

Author Contributions

VP conceived the CORE study in conjunction with staff located in community mental health services in Victoria Australia and input from the named investigators for the project. VP, RC, WW, KG developed the program logic model which was reviewed by the named authors. VP, DP and KG developed the draft of the nested process evaluation framework which was reviewed by the named authors. VP led the writing of this manuscript with all named authors providing critical comments and revisions. All authors have read and approved the final version.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethics Approval

The University of Melbourne Human Research Ethics Committee (HREC No.: 1442617.10) has approved this study. The Federal Government Department of Health has approved the collection of Medicare Benefits Scheme (MBS) and Pharmaceutical Benefits Scheme (PBS) data, and the State Government of Victoria has approved the collection of hospital admission and triage data.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The CORE study is funded by the Mental Illness Research Fund and the Psychiatric Illness and Intellectual Disability Donations Trust Fund. The Mental Illness Research Fund aims to support collaborative research into mental illness that may lead to better treatment and recovery outcomes for Victorians with mental illness and their families and carers.