Abstract

In this article, the authors describe how they used a hybrid process of inductive and deductive thematic analysis to interpret raw data in a doctoral study on the role of performance feedback in the self-assessment of nursing practice. The methodological approach integrated data-driven codes with theory-driven ones based on the tenets of social phenomenology. The authors present a detailed exemplar of the staged process of data coding and identification of themes. This process demonstrates how analysis of the raw data from interview transcripts and organizational documents progressed toward the identification of overarching themes that captured the phenomenon of performance feedback as described by participants in the study.

Introduction

In the interpretive study reported in this article, we explored the phenomenon of performance feedback within nursing. The impetus for the research was the introduction in South Australia of a signed declaration of self-competence required for continuing registration as a nurse. The Nurses Board of South Australia recommended the use of performance feedback to inform a nurse's self-assessment of competence (NBSA, 2000). Performance feedback in this context is defined as information provided to employees about how well they are performing in their work role. It can involve formal and/or informal feedback processes, including written appraisals and verbal comments made as part of everyday work. However, a review of the literature found little research to support the utility of feedback in this context. The study reported here addressed this gap by exploring:

the sources and processes of performance feedback used by nursing clinicians to self-assess their level of competence, the criteria applied by nursing clinicians to classify feedback utility and credibility, and how the criteria were formulated and structured in interpretation.

The qualitative approach of the study was informed by the social phenomenology of Alfred Schutz (1899–1959), used as both a philosophical framework and a methodology. Data were gathered from nursing clinician focus groups, clinical manager focus groups, and organizational performance review policies and procedures. We analyzed the data using a combined technique of inductive and deductive thematic analysis. This article details that step-by-step process of analysis to demonstrate how rigor can be achieved using a hybrid approach to thematic analysis.

The philosophical framework of social phenomenology

Schutz's social phenomenology is a descriptive and interpretive theory of social action that explores subjective experience within the taken-for-granted, “commonsense” world of the daily life of individuals. Schutz's theory emphasizes the spatial and temporal aspects of experience and social relationships. Social phenomenology takes the view that people living in the world of daily life are able to ascribe meaning to a situation and then make judgments. It is the subjective meaning of experience that was the topic for interpretation in this study.

Schutz considered it paramount to safeguard the subjective point of view, so that the world of social reality would not be replaced by a fictional, nonexistent world constructed by the researcher. To this end, Schutz (1967) formulated a method for studying social action involving two senses of verstehen (interpretive understanding). The first order is the process by which people make sense of or interpret the phenomena of the everyday world. The second order of understanding involves generating “ideal types” through which to interpret and describe the phenomenon under investigation. In the study reported here, the method of analysis used the data-driven inductive approach of Boyatzis (1998) and the deductive a priori template of codes approach outlined by Crabtree and Miller (1999) to reach the second order of interpretive understanding.

Demonstrating rigor within the framework of social phenomenology

Schutz identified that his methodology of first- and second-order constructs needed to be grounded in the subjective meaning of human action. As a result, he proposed three essential postulates to be followed during the research process.

The postulate of logical consistency: The researcher must establish the highest degree of clarity of the conceptual framework and method applied, and these must follow the principles of formal logic. The postulate of subjective interpretation: The model must be grounded in the subjective meaning the action had for the “actor.” The postulate of adequacy: There must be consistency between the researcher's constructs and typifications and those found in common-sense experience. The model must be recognizable and understood by the “actors” within everyday life. (Schutz, 1973, pp. 43–44)

When Schutz began writing his theories in the 1930s, he was mindful of the “natural” versus “social” science debate about “valid” methods of research. Although this debate is now thought to be redundant (Crotty, 1998; Emden & Sandelowski, 1998; Patton, 2002), interpretive research still requires a trail of evidence throughout the research process to demonstrate credibility or trustworthiness (Koch, 1994). Rigor is described as demonstrating integrity and competence within a study (Aroni et al., 1999).

Schutz's first postulate of logical consistency is similar to the description by Horsfall, Byrne-Armstrong, and Higgs (2001) of rigor in qualitative research, which involves in-depth planning, careful attention to the phenomenon under study, and productive, useful results. Descriptions of theoretical rigor involve sound reasoning and argument and a choice of methods appropriate to the research problem (Higgs, 2001; Rice & Ezzy, 1999). The step-by-step process of analysis outlined in this article is one way of showing transparently how the researcher formulated the overarching themes from the initial participant data.

Schutz's second postulate of subjective interpretation is in line with preserving the participant's subjective point of view and acknowledging the context within which the phenomenon was studied (Horsfall et al., 2001; Leininger, 1994). Interpretive rigor requires the researcher to demonstrate clearly how interpretations of the data have been achieved and to illustrate findings with quotations from, or access to, the raw data (Rice & Ezzy, 1999). The participants' reflections, conveyed in their own words, strengthen the face validity and credibility of the research (Patton, 2002). The process of data analysis outlined in this article demonstrates how overarching themes are supported by excerpts from the raw data to ensure that data interpretation remains directly linked to the words of the participants.

Schutz's postulate of adequacy resonates with the process of member checks, a method sometimes used to validate a researcher's conclusions by seeking participants' responses to them (Cutcliffe & McKenna, 2002) or to confirm findings with primary informant sources (Leininger, 1994). Others have questioned whether members are the best judges of what is valid in the research process, because member checks can become the participant's response to a new phenomenon, namely the researcher's interpretations (Sandelowski, 2002). A limitation of the study reported here was that it included neither postanalysis member checks nor follow-up focus groups, as the participants were willing to attend only one interview. Instead, themes raised during each focus group were summarized at the end of the session so that participants could confirm or alter them, ensuring an accurate summary of the discussion. Presentations of the researcher's data interpretation at conferences and colloquiums also allowed audiences of nursing clinicians to comment further.

The postulate of adequacy can also be illustrated by Sandelowski's (1997) construct of direct application, in which the credibility of research is measured by the way practitioners use the knowledge it generates in their practice. Practitioners then become the critics of the research findings; that is, they understand, apply, and evaluate the findings (Sandelowski, 1997). Because this study was practice based, the aim was to produce relevant and useful findings, to evaluate current performance feedback processes, and to recommend improvements to them. To date, this has involved presentations to groups of nurses, the use of the thesis findings by educators across the state in workshops on performance feedback for both managers and clinical staff, and the publication of findings in peer-reviewed journals (Fereday & Muir-Cochrane, 2004, 2006).

Demonstrating rigor through a process of thematic analysis

Thematic analysis is a search for themes that emerge as being important to the description of the phenomenon (Daly, Kellehear, & Gliksman, 1997). The process involves the identification of themes through “careful reading and re-reading of the data” (Rice & Ezzy, 1999, p. 258). It is a form of pattern recognition within the data, where emerging themes become the categories for analysis.

The method of analysis chosen for this study was a hybrid approach to thematic analysis that incorporated both the data-driven inductive approach of Boyatzis (1998) and the deductive a priori template of codes approach outlined by Crabtree and Miller (1999). This approach complemented the research questions by making the tenets of social phenomenology integral to the process of deductive thematic analysis while allowing themes to emerge directly from the data through inductive coding.

The coding process involved recognizing (seeing) an important moment and encoding it (seeing it as something) prior to a process of interpretation (Boyatzis, 1998). A “good code” is one that captures the qualitative richness of the phenomenon (Boyatzis, 1998, p. 1). Encoding the information organizes the data to identify and develop themes from them. Boyatzis defined a theme as “a pattern in the information that at minimum describes and organises the possible observations and at maximum interprets aspects of the phenomenon” (p. 161).

In addition to the inductive approach of Boyatzis (1998), we analyzed the text in this study using a template approach, as outlined by Crabtree and Miller (1999). This involved applying a template, in the form of codes from a codebook, to organize the text for subsequent interpretation. When using a template, a researcher defines the template (or codebook) before commencing an in-depth analysis of the data. The codebook is sometimes based on a preliminary scanning of the text, but for this study, the template was developed a priori, based on the research question and the theoretical framework.

Following data collection from 10 focus groups and a document analysis of 16 policies or procedures, both the interview transcripts and the documents were entered into the QSR NVivo data management program, and a comprehensive process of data coding and identification of themes was undertaken. This process is described as a systematic, step-by-step process in the sections below.

Although presented as a linear, step-by-step procedure, the research analysis was an iterative and reflexive process. This interactivity, applied throughout the process of qualitative inquiry, is described by Tobin and Begley (2004) as the overarching principle of “goodness.” The data collection and analysis stages in this study were undertaken concurrently, and we reread the previous stages of the process before undertaking further analysis to ensure that the developing themes were grounded in the original data. The primary objective for data collection was to represent the subjective viewpoint of nurses who shared their experiences and perceptions of performance feedback during focus group discussions.

Stages of data coding

The chart shown in Figure 1 represents each stage of coding.

Diagrammatic representation of the stages undertaken to code the data (adapted from Boyatzis, 1998, and Crabtree and Miller, 1999).

Stage 1: Developing the code manual

The choice of a code manual for the study was important, because it served as a data management tool for organizing segments of similar or related text to assist in interpretation (Crabtree & Miller, 1999). The use of a template provided a clear trail of evidence for the credibility of the study.

For this study, the template was developed a priori, based on the research questions and the theoretical concepts of Schutz's (1967) social phenomenology. Six broad code categories formed the code manual (motives, social relationships, the meaning of social action, systems of relevance, ideal types, and “common sense”). The example throughout this article follows Schutz's concept of the social relationships between sources and recipients of feedback, distinguished as three types: We-relation, Thou-relation, and They-relation. This example was chosen because it clearly demonstrates how the fundamental process of communication between a source and recipient of feedback is underpinned by a more complex process of interpretation in relation to assessing feedback credibility.

For this study, codes were written with reference to Boyatzis (1998) and identified by

the code label or name, the definition of what the theme concerns, and a description of how to know when the theme occurs.

The codes relating to social relationships are shown in Table 1.

An example of codes developed a priori from the template of codes.
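The three-part structure of each code (label, definition, and indicator) can be sketched as a small data structure. The sketch below is a minimal Python illustration, assuming a codebook keyed by code label; the class `Code`, the function `build_codebook`, and the entry wording are hypothetical. The definitions loosely paraphrase Schutz's relationship types for illustration and do not reproduce the wording recorded in Table 1.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Code:
    """One codebook entry with the three elements described above."""
    label: str       # the code label or name
    definition: str  # what the theme concerns
    indicator: str   # how to know when the theme occurs


def build_codebook(entries):
    """Index codebook entries by label, rejecting duplicate labels."""
    codebook = {}
    for entry in entries:
        if entry.label in codebook:
            raise ValueError(f"duplicate code label: {entry.label}")
        codebook[entry.label] = entry
    return codebook


# Hypothetical entries: illustrative paraphrases, not the study's Table 1 text.
CODEBOOK = build_codebook([
    Code("We-relation",
         "A reciprocal, face-to-face relationship sharing time and space.",
         "Feedback from someone who works directly alongside the nurse."),
    Code("Thou-relation",
         "A one-sided orientation toward another person who is present.",
         "Feedback given in person by someone the nurse knows little about."),
    Code("They-relation",
         "A relationship with contemporaries known only through types.",
         "Feedback from a distant source, such as a policy or appraisal form."),
])

for label, code in CODEBOOK.items():
    print(f"{label}: {code.definition}")
```

Keying the codebook by label mirrors how each code is later matched to segments of text; rejecting duplicate labels is one simple way to keep the template unambiguous.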

Stage 2: Testing the reliability of the code

An essential step in the development of a useful framework for analysis is to determine the applicability of the code to the raw information (Boyatzis, 1998). Two performance appraisal documents from health care organizations were selected as test pieces. After I had coded the documents using the predefined codes, I invited my supervisor to code them as well. The results were compared, and no modifications to the predetermined code template were required.
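The study reports only that the two sets of coding results were compared. As one possible way to record such a comparison, the sketch below lists the segments on which two coders applied different codes and reports the share of segments on which their code sets were identical. Percent agreement is our assumption, not a measure used in the study, and the function `compare_coding` and the segment ids are hypothetical.

```python
def compare_coding(coder_a, coder_b):
    """Compare two coders' code assignments on the same text segments.

    coder_a, coder_b: dicts mapping a segment id to the set of code labels
    that coder applied to it. Only segments coded by both are compared.
    Returns (percent of segments with identical code sets, disagreements).
    """
    shared = sorted(coder_a.keys() & coder_b.keys())
    disagreements = {
        seg: (coder_a[seg], coder_b[seg])
        for seg in shared
        if coder_a[seg] != coder_b[seg]
    }
    if not shared:
        return 0.0, disagreements
    agreement = 100.0 * (len(shared) - len(disagreements)) / len(shared)
    return agreement, disagreements


# Hypothetical segment ids and codes from one test document.
researcher = {"appraisal-1:p1": {"They-relation"}, "appraisal-1:p2": {"We-relation"}}
supervisor = {"appraisal-1:p1": {"They-relation"}, "appraisal-1:p2": {"We-relation"}}
print(compare_coding(researcher, supervisor))  # (100.0, {})
```

Listing the disagreements, rather than only a percentage, keeps attention on the specific segments that would prompt a discussion about modifying the template.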

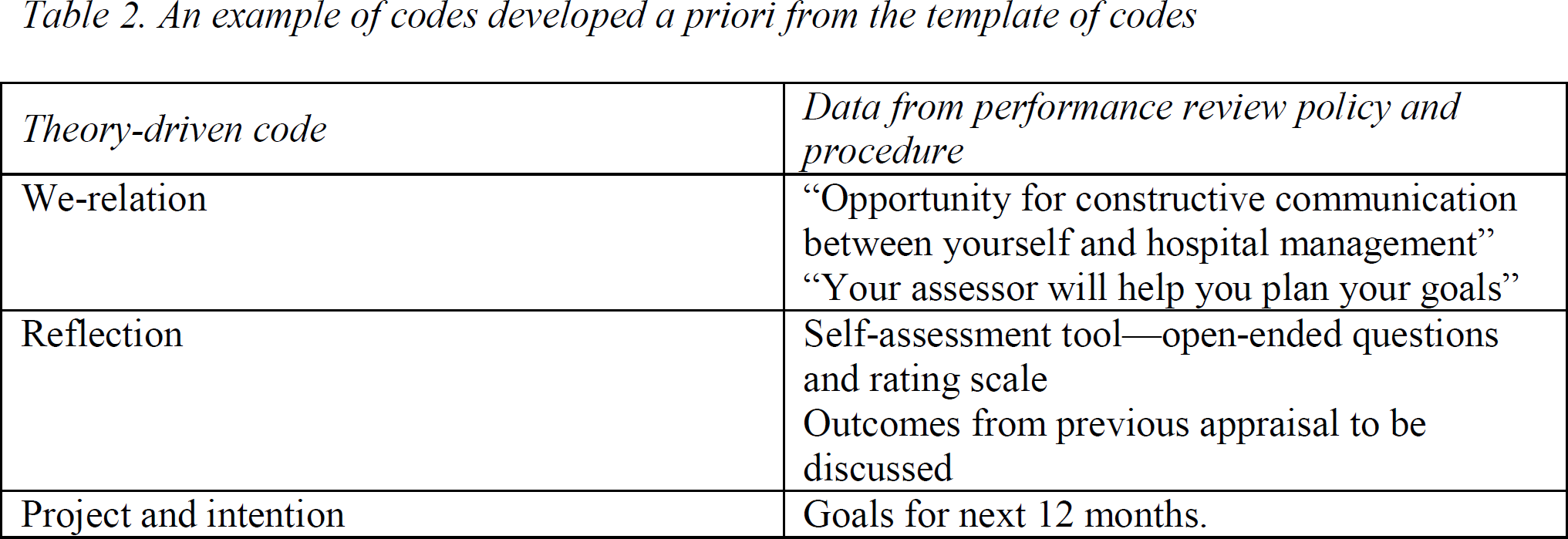

An example of applying the template of codes while examining a document is given in Table 2.

An example of codes developed a priori from the template of codes

Stage 3: Summarizing data and identifying initial themes

The process of paraphrasing or summarizing each piece of data enters information “into your unconscious, as well as consciously processing the information” (Boyatzis, 1998, p. 45). This process involves reading, listening to, and summarizing the raw data. We used this technique as a first step when analyzing each transcript of the focus groups.

We summarized the transcripts separately by outlining the key points made by participants (noting individual and group comments) in response to the questions asked by the facilitator. These key questions formed the framework for the semistructured interviews. For nursing clinicians, questions included the following:

When I talk about “feedback on your work performance”—do you have any immediate thoughts about this topic? Who do you receive performance feedback from? Do you personally seek out any feedback? Are any of these sources of feedback more important than others? Why? If you had to rate these sources according to the value they hold for you in relation to feedback on your own work performance how would you rate them? How do you use the feedback you receive to change any area of your work performance? When does feedback have the most impact on you—when do you really take notice? How do you keep evidence of feedback?

It is important to note that a content analysis was not the aim of the data analysis, and, consequently, a single comment was considered as important as those that were repeated or agreed on by others within the group. The summary for each focus group reflected the initial processing of the information by the researcher and provided the opportunity to sense and take note of potential themes in the raw data. In Table 3, we have provided an example from one focus group.

Summarizing the data from focus groups under trigger question headings. The source of data was Clinical Focus Group 4

Stage 4: Applying template of codes and additional coding

Using the template analytic technique (Crabtree & Miller, 1999), we applied the codes from the codebook to the text with the intent of identifying meaningful units of text. The transcripts and organizational documents had previously been entered as project documents into the NVivo computerized data management program. The codes developed for the manual were entered as nodes, and I coded the text by matching the codes with segments of data selected as representative of the code. The segments of text were then sorted, and a process of data retrieval organized the codes or clustered codes for each project document across all three sets of data (nursing clinicians, nursing managers, and organizational documents), as exemplified in Table 4.

Coding all three data sources by applying the codes from the codebook
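The sorting and retrieval step amounts to grouping coded segments first by code and then by data set. The sketch below assumes each coded segment records its data set, source document, text, and applied codes; `Segment`, `retrieve_by_code`, and the example segments are hypothetical and do not reproduce NVivo's node reports or the study's data.

```python
from collections import defaultdict
from typing import NamedTuple

# The three data sets named in the study.
DATA_SETS = ("nursing clinicians", "nursing managers", "organizational documents")


class Segment(NamedTuple):
    source: str        # one of DATA_SETS
    document: str      # project document the segment came from
    text: str          # the selected segment of text
    codes: frozenset   # code labels applied to the segment


def retrieve_by_code(segments):
    """Sort coded segments into code -> data set -> [(document, text), ...]."""
    report = defaultdict(lambda: defaultdict(list))
    for seg in segments:
        if seg.source not in DATA_SETS:
            raise ValueError(f"unknown data set: {seg.source}")
        for code in sorted(seg.codes):
            report[code][seg.source].append((seg.document, seg.text))
    return report


# Hypothetical segments, invented for illustration.
segments = [
    Segment("nursing clinicians", "Clinical Focus Group 4",
            "I listen to the people I work with every day.",
            frozenset({"We-relation"})),
    Segment("organizational documents", "Appraisal policy A",
            "Staff will receive written feedback annually.",
            frozenset({"They-relation"})),
]
for code, by_source in retrieve_by_code(segments).items():
    for source, hits in by_source.items():
        print(code, "|", source, "|", len(hits), "segment(s)")
```

Because a segment can carry several codes, it appears under each of them, which is what lets the same passage contribute to more than one cluster.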

Analysis of the text at this stage was guided, but not confined, by the preliminary codes. During the coding of transcripts, inductive codes were assigned to segments of data that described a new theme observed in the text (Boyatzis, 1998). These additional codes were either separate from the predetermined codes or expanded a code from the manual. In the following example, the concepts of trust and respect were initially coded as part of the We-relation. However, comments about trust and respect from other sources led to these concepts becoming a separate data-driven code, as shown in Table 5.

An example of data-driven codes with segments of text from all three data sets

Stage 5: Connecting the codes and identifying themes

Connecting codes is the process of discovering themes and patterns in the data (Crabtree & Miller, 1999). Table 6 illustrates the process of connecting the codes and identifying themes across the three sets of data, clustered under headings that relate directly to the research questions. At this stage, similarities and differences between the separate groups of data began to emerge, indicating areas of consensus in response to the research questions as well as areas of potential conflict. Themes within each data group also began to cluster, and differences were identified between the responses of groups with varying demographics; for example, less experienced and more experienced nursing clinicians expressed different views.

Connecting the codes and identifying themes using the research questions as headings: Criteria for credibility of performance feedback

Stage 6: Corroborating and legitimating coded themes

The final stage illustrates the process of further clustering the themes previously identified from the coded text. Corroborating is the term used to describe the process of confirming the findings (Crabtree & Miller, 1999, p. 170). Fabricating evidence can be a common problem in interpreting data (Crabtree & Miller, 1999); it is not an intentional process but the unconscious “seeing” of data that researchers expect to find.

At this stage, the previous stages were closely scrutinized to ensure that the clustered themes were representative of the initial data analysis and assigned codes. The interaction of text, codes, and themes in this study involved several iterations before the analysis proceeded to an interpretive phase in which the units were connected into an explanatory framework consistent with the text. Themes were then further clustered and were assigned succinct phrases to describe the meaning that underpinned the theme. Three overarching or core themes were identified that were felt to capture the phenomenon of performance feedback as described in the raw data. One of these themes was familiarity, which encompassed many of the subthemes, both data driven and from the tenets of social phenomenology (We-, Thou-, or They-relations) (Table 7).

Corroborating and legitimating coded themes to identify second-order theme
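Part of the corroboration in this stage was checking that the clustered themes remained representative of the initial analysis and assigned codes. One mechanical aid to that scrutiny, sketched below under our own assumptions, flags any code clustered under a theme that no coded segment supports. The function `untraceable_codes`, the placement of "trust and respect" under familiarity, and the counts are hypothetical; such a check cannot replace the interpretive rereading described above.

```python
def untraceable_codes(themes, coded_counts):
    """Find codes clustered under a theme that no coded segment supports.

    themes: dict mapping each overarching theme to the codes clustered under it.
    coded_counts: dict mapping each code to the number of segments coded with it.
    Returns a dict of theme -> unsupported codes (empty if every code is grounded).
    """
    gaps = {}
    for theme, codes in themes.items():
        missing = [code for code in codes if coded_counts.get(code, 0) == 0]
        if missing:
            gaps[theme] = missing
    return gaps


# Hypothetical grouping and made-up counts, for illustration only.
themes = {"familiarity": ["We-relation", "Thou-relation", "They-relation", "trust and respect"]}
counts = {"We-relation": 12, "Thou-relation": 3, "They-relation": 7, "trust and respect": 0}
print(untraceable_codes(themes, counts))  # {'familiarity': ['trust and respect']}
```

The check only tests that each code has at least one supporting segment; it says nothing about whether the theme interprets those segments faithfully, which remains a matter for the researcher's judgment.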

In summary, this process of analysis found that the credibility of the feedback message was linked to the level of familiarity shared by the nursing clinician and the source of feedback. In this study, a familiar source was a person who had frequent contact with the nurse, demonstrated an understanding of the situational context in which the feedback originated, and was respected for his or her clinical expertise and/or experiential knowledge. The findings indicated that the type of social relationship between the source and the recipient of performance feedback was a significant criterion for the credibility, and subsequent utility, of the feedback message. However, a high level of familiarity between the feedback source and the recipient was found to be potentially detrimental to the integrity of the feedback message and, in turn, to its utility for performance improvement.

Limitations of the study method

All studies have limitations. Because this was a doctoral study, the data were coded and themes identified by one person, and the analysis was then discussed with a supervisor. This process allowed for consistency in the method but did not provide multiple perspectives from people with differing expertise. In another study using this method, several individuals could code the data, with themes developed through discussion with other researchers, a panel of experts, and/or the participants themselves.

Conclusion

This article has illustrated the steps involved in the process of thematic analysis and describes an approach that demonstrates rigor within a qualitative research study. It outlines a detailed method of analysis using thematic coding that balances deductive coding (derived from the philosophical framework) and inductive coding (themes emerging from participants' discussions). Through this process, it was possible to show clearly how themes were generated from the raw data to uncover what performance feedback meant to these participants and how feedback can inform their self-assessment of competence in nursing. This careful description of the steps and processes used in data analysis can be replicated and can help other researchers demonstrate a high degree of clarity in the conceptual framework and method of analysis they apply.