Abstract

Background:

Communication between health care providers is becoming more intertwined with technology. During the pandemic, telehealth strategies grew exponentially. Remote viewing of imaging on a smartphone may offer efficient communication; however, the reliability of injury assessment when compared with traditional methods is not known. The purpose of this study was to evaluate intraobserver and interobserver reliability of distal radius fracture radiograph review for smartphone versus traditional Picture Archiving and Communication System (PACS).

Methods:

Eight evaluators (3 attending hand surgeons, 3 hand surgery fellows, 2 orthopedic residents) evaluated 26 distal radius fracture radiographs on 2 different viewers: smartphone or PACS. The reviewers were asked to record: (1) operative or nonoperative preference; (2) fracture classification (based on Fernandez and Jupiter); and (3) treatment strategy (volar plate, dorsal plate, pins, cast, bridge plate, or fragment-specific fixation). The percentage of intraobserver agreement was recorded for each observer. Reliability was calculated using Fleiss’ kappa coefficient for intraobserver and interobserver agreement and graded by strength of correlation.

Results:

Intraobserver agreement averaged 97% when deciding between operative and nonoperative treatment, 76% for classification, and 84% for treatment. Kappa scores were graded as “excellent” for operative decision and “substantial” for classification and treatment. Attendings and fellows generally had higher agreement than that of residents. Interobserver agreement was graded as “substantial” for all categories for both PACS and smartphone.

Conclusions:

Evaluation of radiographs on a smartphone for the purpose of treating distal radius fractures does not appear to be significantly different from an evaluation on traditional PACS.

Introduction

Among elderly US patients, distal radius fractures rank second in incidence only to hip fractures, with an estimated incidence of 643 000 per year and an estimated annual Medicare expenditure of $385 million to $535 million. 1 With a large aging US population, these fractures will only become more common, placing increased demand on providers for diagnosis, evaluation, and treatment decision-making. In orthopedic surgery, clinical decision-making almost always involves review of radiographs. Remote review of radiographs on mobile devices has been examined with promising results for tibial plateau fractures, 2 adult orthopedic polytrauma,3,4 pediatric orthopedic trauma, 5 hand trauma, 6 and ankle fractures. 7

Mobile devices in health care have become increasingly common and have been shown to improve overall workflow, efficiency, and communication, as well as interteam relationships and accessibility. 8 Smartphone messenger applications allow physicians to quickly and remotely review and discuss multimedia data such as radiographs, in contrast to traditional methods such as plain films on a light-box or Digital Imaging and Communications in Medicine (DICOM) images on a computer. Prior studies have demonstrated the diagnostic efficacy of mobile devices for emergency radiology,9,10 and more recent studies have shown physicians’ increasing use of teleconsultation with smartphone camera and messaging applications.2,11,12

Previous studies have reported moderate interobserver reliability of digital radiographs viewed on a mobile device for distal radius fracture AO classification and treatment choice, and fair interobserver reliability for DICOM viewers. 13 In this study, we additionally aimed to determine whether an individual observer could reliably assign the same classification and recommend the same treatment when viewing the radiographs on a smartphone versus a traditional Picture Archiving and Communication System (PACS). Thus, the purpose of this study was to compare intraobserver reliability for distal radius fracture classification, decision for operative treatment, and fixation strategy between radiographs viewed on a smartphone and on traditional PACS. Secondarily, we evaluated interobserver agreement between PACS and smartphone.

Methods

After institutional review board review, this study was given exemption status. Eight evaluators (3 attending hand surgeons, 3 hand surgery fellows, 2 orthopedic residents) evaluated radiographs of 26 distal radius fractures on 2 different viewers: PACS and smartphone. Deidentified radiographic images for this study were obtained from an institutional injury database. By consensus of 2 study authors, fractures with adequate anteroposterior and lateral digital radiographs were chosen to represent a distribution of distal radius fracture types. Authors involved in the selection of radiographic imaging did not participate as observers. Age, laterality, and sex of the patient were presented to observers along with the images. No other demographic information was provided. All radiographs consisted of 3 views (anteroposterior, lateral, and oblique), and initial injury radiographs were taken in the emergency department prior to surgery. A vignette example is shown in Figure 1.

Figure 1. Example of radiographic vignette.

All observers evaluated the 26 sets of images twice: once on their smartphone and once at a computer terminal. The image sets were shuffled into a different order at each station. Each observer was given a grading sheet to mark their decision to operate (operative or nonoperative treatment), fracture classification (based on Fernandez and Jupiter), 14 and fixation strategy (cast, pins, volar plate, dorsal plate, bridge plate, or fragment-specific fixation). The grading sheet also had a space for general comments and feedback. The Fernandez and Jupiter classification was available for reference, and a short tutorial on the classification system was provided prior to the experiment. 14 Participants were free to adjust the DICOM images, for example by changing the magnification or window contrast level. They were also able to zoom and manipulate images on the phone. The goal was to simulate the environment in which a surgeon would either sit at a computer terminal in the hospital or view the images on his or her mobile phone remotely.

Statistical Analysis

A sample size of 26 radiographic images and 8 observers was selected based on prior literature, which reported that 25 images would be necessary to detect a kappa of 0.50 with a power of 0.80 for 2 observers with 90% positive ratings in a dichotomous variable.13,15-17 For validation, a post hoc power analysis was conducted, showing that 26 radiographic images with 8 observers yielded a power of 92% to detect an intraclass correlation coefficient (ICC) of 0.75 under the alternative hypothesis against an ICC of 0.50 under the null hypothesis at the 5% level of significance. Interobserver and intraobserver reliability were calculated with a multirater kappa measure. 18 Calculated kappa values were interpreted per the guidelines of Landis and Koch 19 and compared using a 2-sample z test. Paired t test analysis was performed to assess differences in treatment decisions for radiograph vignettes between groups.
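As an illustration of how 2 kappa values might be compared with a 2-sample z test, the following Python sketch divides the difference between the kappas by the pooled standard error. The function name, the inputs, and the example values are hypothetical and are not taken from this study. The sketch also treats the 2 estimates as independent, which is a simplifying assumption when the same observers and radiographs contribute to both.

```python
import math


def kappa_z_test(kappa_a, se_a, kappa_b, se_b):
    """Two-sided z test for the difference between two kappa estimates.

    Assumes each kappa comes with its own standard error and that the
    two estimates are independent (a simplification for paired designs).
    """
    z = (kappa_a - kappa_b) / math.sqrt(se_a ** 2 + se_b ** 2)
    # Two-sided p value under the standard normal distribution.
    p = math.erfc(abs(z) / math.sqrt(2))
    return z, p


# Hypothetical inputs for illustration only (not results from this study).
z, p = kappa_z_test(0.70, 0.05, 0.66, 0.05)
print(f"z = {z:.3f}, p = {p:.3f}")
```

A large p value in such a comparison would indicate that the data do not show a difference between the 2 kappas; it would not by itself establish equivalence.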

Percent agreement was calculated as the number of cases with an identical response divided by the total number of cases. We additionally calculated the kappa coefficient to correct for agreement that would be expected by chance alone. For interobserver agreement, we compared agreement among all observers on smartphone and on PACS for decision to operate, classification, and fixation strategy.
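The 2 measures described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the analysis code used in this study: the function names and the toy responses are hypothetical, and the kappa shown is the standard Fleiss formulation, in which every case receives the same number of ratings.

```python
from collections import Counter


def percent_agreement(first, second):
    """Share of cases in which two sets of responses are identical."""
    matches = sum(a == b for a, b in zip(first, second))
    return matches / len(first)


def fleiss_kappa(ratings):
    """Fleiss' kappa for a list of cases.

    Each case is a list of category labels, one per rating, and every
    case must carry the same number of ratings (at least 2).
    """
    n_cases = len(ratings)
    n_ratings = len(ratings[0])
    category_totals = Counter()
    observed_sum = 0.0
    for case in ratings:
        counts = Counter(case)
        category_totals.update(counts)
        # Proportion of agreeing rating pairs within this case.
        agreeing = sum(c * c for c in counts.values()) - n_ratings
        observed_sum += agreeing / (n_ratings * (n_ratings - 1))
    observed = observed_sum / n_cases
    # Agreement expected by chance from the overall category proportions.
    expected = sum(
        (total / (n_cases * n_ratings)) ** 2
        for total in category_totals.values()
    )
    if expected == 1.0:
        raise ValueError("kappa is undefined when only one category is used")
    return (observed - expected) / (1.0 - expected)


# Hypothetical responses from one observer on 4 cases (illustration only).
smartphone = ["operative", "operative", "nonoperative", "operative"]
pacs = ["operative", "operative", "nonoperative", "nonoperative"]

pa = percent_agreement(smartphone, pacs)
kappa = fleiss_kappa([list(pair) for pair in zip(smartphone, pacs)])
print(f"PA = {pa:.2f}, kappa = {kappa:.3f}")
```

In this toy example, the 2 viewings agree on 3 of 4 cases, but kappa is considerably lower than the percent agreement because most responses fall into a single category, which makes chance agreement high.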

The strength of agreement of kappa coefficients was graded by the boundaries suggested by Landis and Koch. Values less than 0.00 indicate “poor” reliability; 0.00-0.20, “slight” reliability; 0.21-0.40, “fair” reliability; 0.41-0.60, “moderate” reliability; 0.61-0.80, “substantial” agreement; and 0.81-1.00, “excellent” or “almost perfect” agreement. 19
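These grading boundaries can be expressed as a small lookup, sketched here in Python. The function name is hypothetical, and treating each upper bound as inclusive (so that a value between 0.20 and 0.21 falls in the higher band) is an assumption, because the published boundaries are stated only to 2 decimal places.

```python
def grade_kappa(kappa):
    """Map a kappa coefficient to its Landis and Koch strength label."""
    if kappa < 0.0:
        return "poor"
    # Upper bounds are treated as inclusive (an assumption of this sketch).
    bands = [
        (0.20, "slight"),
        (0.40, "fair"),
        (0.60, "moderate"),
        (0.80, "substantial"),
    ]
    for upper, label in bands:
        if kappa <= upper:
            return label
    return "excellent"


# Hypothetical kappa values for illustration only.
for value in (-0.10, 0.47, 0.72, 0.85):
    print(value, grade_kappa(value))
```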

Results

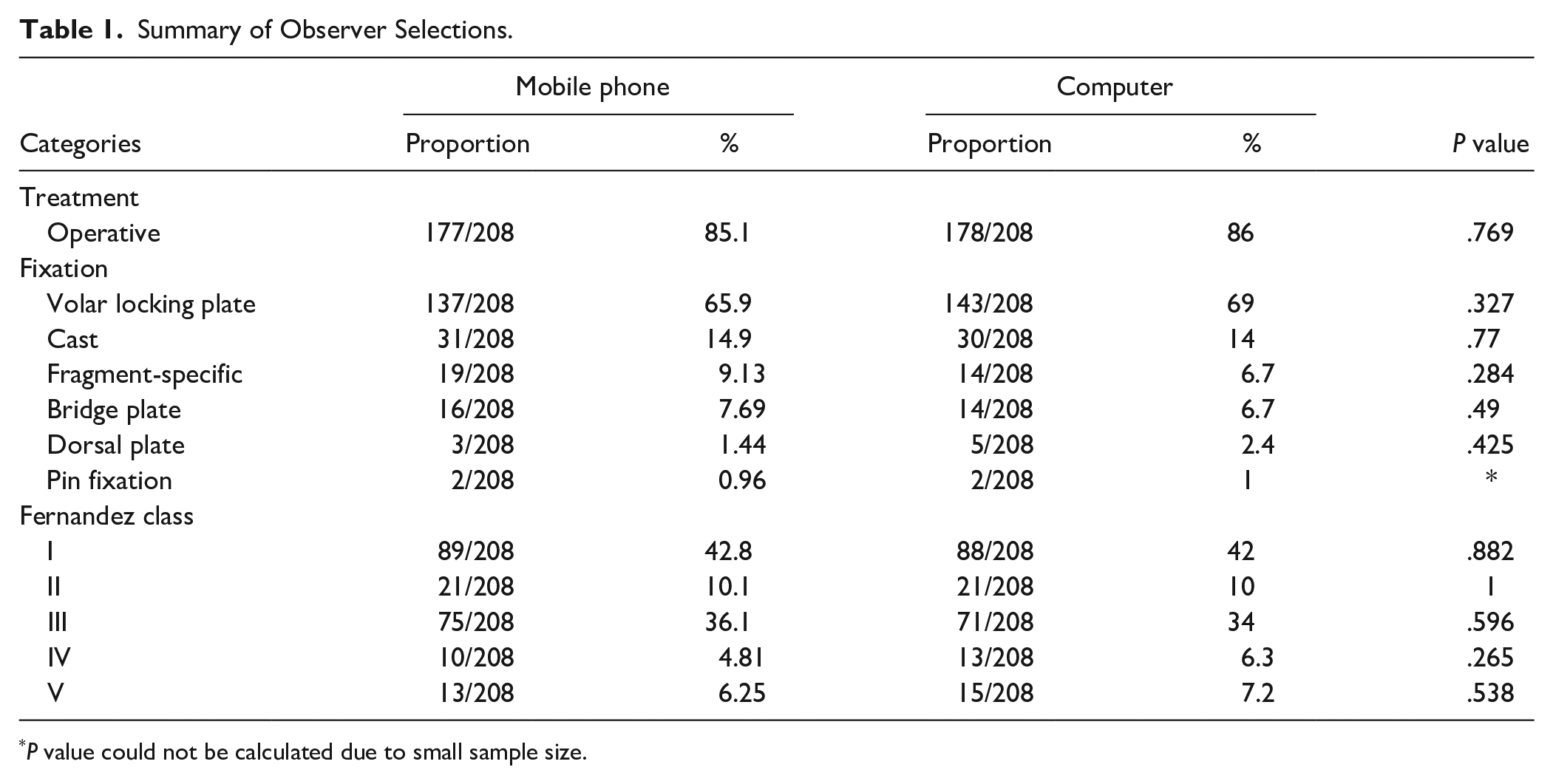

Table 1 demonstrates the overall distribution of observer selections for treatment, classification, and fixation strategy. Patients in the radiograph sample had a mean age of 62.46 (SD, 17.02; range, 29-95); 16 (61.5%) of 26 were female, and 17 (65.4%) of 26 fractures were right-sided. Overall, observers chose operative treatment in a high proportion of evaluations for both the mobile device (85%) and computer (86%) modalities. Volar locking plate fixation was the most common strategy chosen for both modalities (66% and 69%, respectively). The most common Fernandez classification was class I (43% and 42%, respectively), followed by class III (36% and 34%, respectively). We also reviewed the comments section, in which several observers remarked that their evaluation was “faster” on the mobile phone.

Table 1. Summary of Observer Selections.

P value could not be calculated due to small sample size.

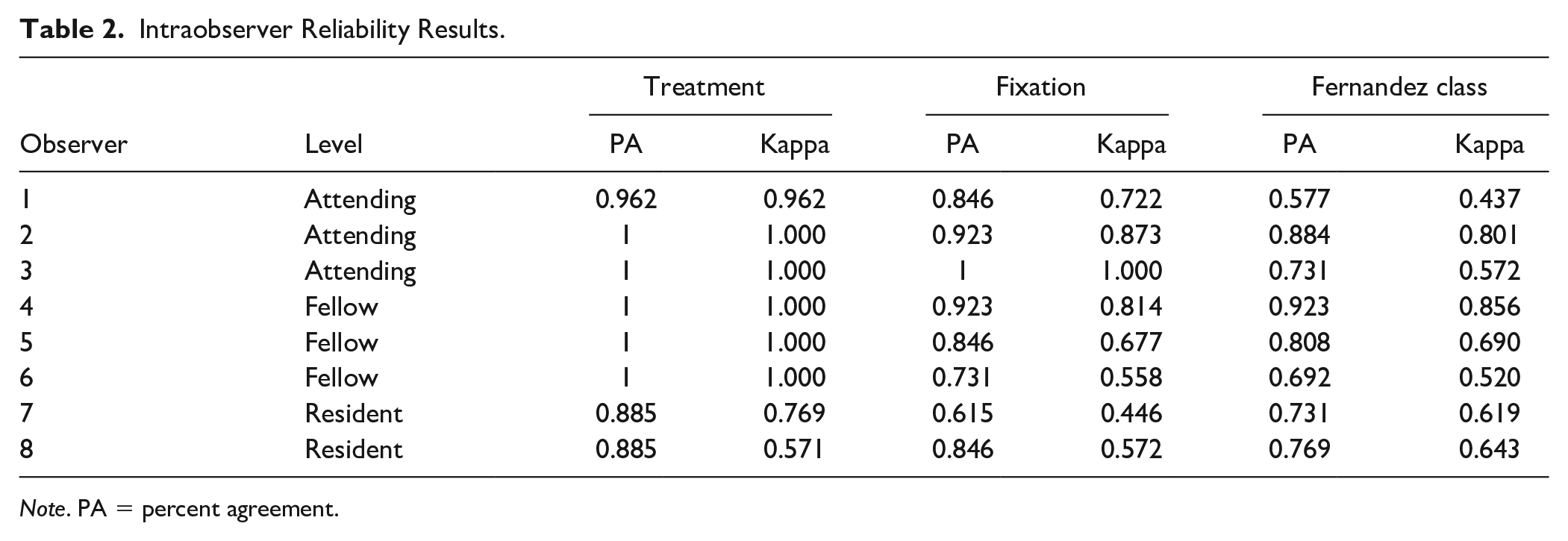

Table 2 demonstrates intraobserver results. For the decision to operate, percent agreement and reliability were high in all groups, with attendings and fellows recording nearly perfect scores in both categories. For fracture classification, 3 scored in the “moderate” range, 4 in the “substantial” range, and 2 in the “excellent” range. For fixation strategy, attendings scored in the “substantial” or “excellent” range, residents scored in the “moderate” range, and fellows scored in the “moderate,” “substantial,” and “excellent” ranges.

Table 2. Intraobserver Reliability Results.

Note. PA = percent agreement.

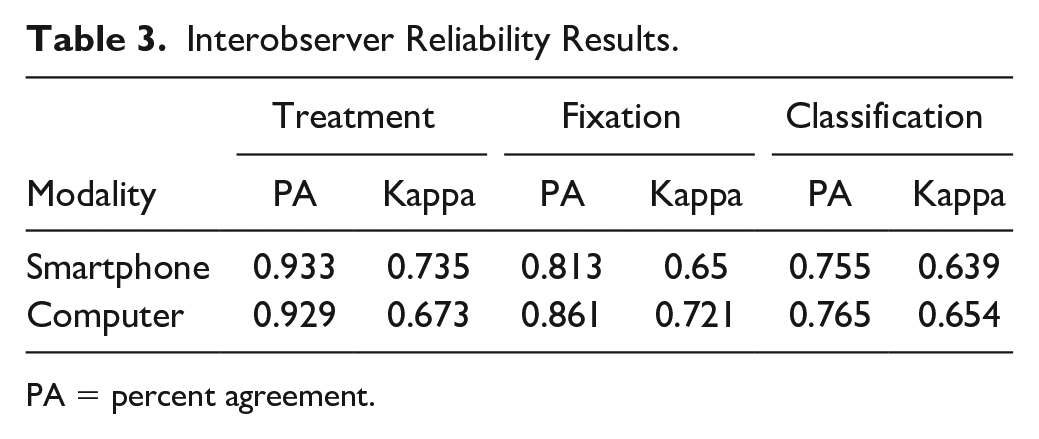

Table 3 demonstrates interobserver results. Kappa scores were not significantly different between smartphone and PACS for decision to operate, classification, and fixation strategy. All kappa scores were graded in the “substantial” range.

Table 3. Interobserver Reliability Results.

PA = percent agreement.

Discussion

During the COVID-19 pandemic, remote technology and telehealth services experienced an exponential increase. 20 Providers are increasingly using smaller and more mobile devices to obtain information. Because hand surgeons frequently rely on radiographs to make clinical decisions, we sought to investigate whether a decision based on a radiograph of a distal radius fracture differed when the radiograph was viewed on a smartphone versus at a computer station. We aimed to simulate the experience of the remote surgeon who receives a text message from another provider who is on site with the patient.

Our results demonstrated high intraobserver reliability for hand surgeons’ diagnosis and treatment of distal radius fractures when the decision is made from radiographs viewed via smartphone versus a traditional PACS viewer. For decision to operate, attendings and fellows had nearly perfect intraobserver agreement. These data suggest that viewing radiographs on a smartphone is comparable to viewing radiographs at a traditional computer station. Although not a primary goal of this study, we also noted that attendings and fellows tended to score higher than residents on decision to operate and classification. Attendings also scored higher than fellows and residents on fixation strategy.

Both findings suggest that experience contributes to consistency, as has been shown in other studies. For example, Mulders et al surveyed surgeons and residents on AO/Orthopaedic Trauma Association classification and treatment, and they found high consensus among attendings but only moderate consensus among residents on surgical indications. They also found higher confidence in treatment decisions among attendings, with a greater proportion of residents reporting that classification did not guide their treatment or prognosis. 21 Waljee et al similarly found that younger surgeons were more likely to choose open reduction and internal fixation for distal radius fracture versus external fixation or pinning. 22 Other studies on pediatric orthopedic surgery have found lower intraobserver reliability for classifications and treatment indications in surgeons with less training.23-25

We also noted a substantial interobserver agreement across all participants when comparing mobile phone with computer. Our reliability scores were somewhat higher than most reported studies on fracture classification and treatment. For example, Musikachart et al reported moderate interobserver reliability (k = 0.44-0.45) for surgeons asked to determine shaft-condylar and lateral capitello-humeral angles in children, used to assess acceptable reductions of supracondylar humerus fractures. 23 Foroohar et al 26 asked 16 orthopedic surgeons to classify proximal humerus fracture radiographs by the Neer Classification and found that all interobserver reliability values ranged from slight to moderate (k = 0.03-0.57). Turgut et al 27 asked 15 orthopedic surgeons to classify adult femoral neck fractures by Garden, Pauwels, and AO classifications, with average kappa values of 0.34, 0.24, and 0.43, respectively.

Possible explanations for these differences include a greater bias toward operative treatment among younger surgeons28,29 and an overall shift toward open management of distal radius fractures in surgical training, 28 either of which may have biased the observers to choose operative management with volar plates more often. We additionally had a high proportion of bending-type and compression-type fractures treated with volar plates. When selecting cases, we wanted to simulate a realistic experience as closely as possible. Bending-type and compression-type fractures treated with volar plates are more commonly encountered and are thus representative of a realistic distribution.28,30

We acknowledge several limitations of this study. First, participants had different mobile devices with different operating systems. We did not specifically evaluate the effect of these differences. Second, although several observers perceived their evaluation time was shorter on the phone, we did not record the time taken to evaluate each case. Third, we are aware that more than just age, sex, and pathoanatomy play a role in a surgeon’s decision to treat. However, a study by Neuhaus et al 29 showed that radiographic factors were most important in decision-making for distal radius fractures. Finally, an important limitation is that the power calculation was performed based on detecting a kappa of 0.50 as reported in other literature.13,15-17 Using 0.50 as a threshold still implies only “moderate” agreement; thus, there may still be clinically significant disagreement not detected in this study.

Conclusions

In conclusion, this study suggests equivalent and high intrarater and interrater reliability for treatment and diagnostic decision-making for distal radius fractures viewed on mobile devices and on traditional PACS. Telemedicine workflows should be compliant with the Health Insurance Portability and Accountability Act to protect patient privacy. Evaluation of radiographs on a smartphone for the purpose of treating distal radius fractures does not appear to be significantly different from an evaluation on traditional PACS.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethical Approval

This study was reviewed by the Institutional Review Board of Thomas Jefferson University and was given exemption status (Reference #21E.976).

Statement of Human and Animal Rights

All procedures followed were in accordance with the ethical standards of the responsible committee on human experimentation (institutional and national) and with the Helsinki Declaration of 1975, as revised in 2008.

Statement of Informed Consent

Informed consent was obtained from all individual participants for being included in the study.